DBMS Architecture Components and Query Optimization Techniques

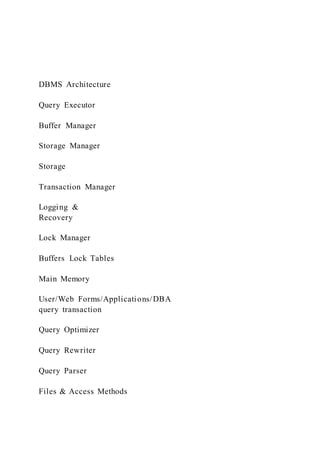

- 1. DBMS Architecture Query Executor Buffer Manager Storage Manager Storage Transaction Manager Logging & Recovery Lock Manager Buffers Lock Tables Main Memory User/Web Forms/Applications/DBA query transaction Query Optimizer Query Rewriter Query Parser Files & Access Methods

- 2. Past lectures Today’s lecture Many query plans to execute a SQL query 3 S UTR SR T U SR T U • Even more plans: multiple algorithms to execute each operation SR T U Sort-merge Sort-merge

- 3. hash join Table-scan index-scan Table-scanindex-scan • Compute the join of R(A,B) S(B,C) T(C,D) U(D,E) Explain command in PostgreSQL 4 SELECT C.STATE, SUM(O.NETAMOUNT), SUM(O.TOTALAMOUNT) FROM CUSTOMERS C JOIN CUST_HIST CH ON C.CUSTOMERID = CH.CUSTOMERID JOIN ORDERS O ON CH.ORDERID = O.ORDERID GROUP BY C.STATE Communication between operators: iterator model • Each physical operator implements three functions: – Open: initializes the data structures. – GetNext: returns the next tuple in the result. – Close: ends the operation and frees the resources.

- 4. • It enables pipelining • Other option: compute the result of the operator in full and store it in disk or memory: – inefficient. 5 Query optimization: picking the fastest plan • Optimal approach plan – enumerate each possible plan – measure its performance by running it – pick the fastest one – What’s wrong? • Rule-based optimization – Use a set of pre-defined rules to generate a fast plan • e.g., If there is an index over a table, use it for scan and join. 6 Review Definitions • Statistics on table R: – T(R): Number of tuples in R – B(R): Number of blocks in R • B(R) = T(R ) / block size – V(R,A): Number of distinct values of attribute A in R

- 5. 7 Plans to select tuples from R: σA=a(R) • We have an unclustered index on R • Plans: – (Unclustered) indexed-based scan – Table-scan (sequential access) • Statistics on R – B(R)=5000, T(R)=200,000 – V(R,A) = 2, one value appears in 95% of tuples. • Unclustered indexed scan vs. table-scan ? 8 Presenter Presentation Notes Unclustered indexed scan vs. table-scan ? table-scan is the winner! Query optimization methods • Rule-based optimizer fails – It uses static rules – The rules do not consider the distribution of the data. • Cost-based optimization – predict the cost of each plan

- 6. – search the plan space to find the fastest one – do it efficiently • Optimization itself should be fast! 9 Cost-based optimization • Plan space – which plans to consider? – it is time consuming to explore all alternatives. • Cost estimator – how to estimate the cost of each plan without executing it? – we would like to have accurate estimation • Search algorithm – how to search the plan space fast? – we would like to avoid checking inefficient plans 10 Space of query plans: basic operators • Selection – algorithms: sequential, index-based – Ordering • Projection – Ordering

- 7. • Join – algorithms: nested loop, sort-merge, hash – ordering 11 Reducing plan space • Multiple logical query plan for each SQL query Star(name,BD,bio), StarsIn(movie, sname, year, plot) SELECT movie,name FROM Stars, StarsIn WHERE Star.name = StarsIn.sname AND year = 1950 12 Generally Faster StarsIn Star StarsIn.sname = Star.name σ year=1950 StarsIn Star StarsIn.sname = Star.name year=1950 movie, name movie, name

- 8. Reducing plan space • Push selection down to reduce # of rows • Push projection down to reduce # of columns SELECT movie, name, BD FROM Stars, StarsIn WHERE Star.name = StarsIn.sname 13 StarsIn Star StarsIn.sname = Star.name movie, name, BD StarsIn Star StarsIn.sname = Star.name movie, name, BD movie, sname name,BD Less effective than pushing down selection. 14 • Queries with multiple joins may have exponentially many possible plans.

- 9. • System-R style considers only left-deep joins Reducing plan space SR T U SR T U T USR • Left-deep trees allow us to generate fully pipelined plans 15 • System R-style avoids plans with Cartesian products – Cartesian products are generally larger than joins. • Example: – Join of R(A,B), S(B,C), U(C,D) – (R ⋈ U) ⋈ S has a Cartesian product – Pick (R ⋈ S) ⋈ U instead • If cannot avoid Cartesian products, delay them. Reducing plan space

- 10. Components of a cost-based optimizer • Plan space – Which plans to consider? – Time-consuming to explore every possible plan. • Cost estimator – How to estimate the cost of a plan without executing it? – Accurate estimations. • Search algorithm – How to search the plan space fast? – Avoid checking inefficient plans. 16 17 • Our goal is to maximize relative accuracy – Compare plans, not to predict exact costs. • For each operator in the plan: – Find input size – Estimate its cost based on the input size • Example: sort-merge join of R ⋈ S is 3 B(R) + 3 B(S) – Estimate output size or selectivity • Selectivity: ratio of output to input Cost estimation

- 11. 18 • Why estimate output size? – Output of an operator = Input to the next one in the plan – Used to estimate of the cost of the next operator • Cost of a plan – Sum of the cost of its operators Cost estimation Cost estimation: Selinger Style • Input: stats on each table – T(R): Number of tuples in R – B(R): Number of blocks in R • B(R) = T(R ) / block size – V(R,A): Number of distinct values of attribute A in R • We assume that attributes and predicates are independence. • When no estimate available, use magic numbers. 19 Presenter Presentation Notes too much information to keep, use histogram

- 12. 20 Selectivity factors: selection • Point selection: S = σA=a(R) – T(S) ranges from 0 to T(R) – V(R,A) + 1 – consider its mean: F = 1 / V (R,A) • Range selection: S = σA>a(R) – F = (max(A) – a) / (max(A) – min(A)) – If not arithmetic inequality, use magic number • F = 1 / 3 • Range selection: S = σ b <A<a(R) – F = (a - b) / (max(A) – min(A)) – If not arithmetic, use magic number • F = 1 / 4 21 Examples: selection with multiple conditions • S = σA=1 AND B>10(R) – Given T(R) = 10,000, V(R,A) = 50 – No information on B – Multiply 1/V(R,A) for equality and 1/3 for inequality • T(S) = 10000 / (50 * 3) = 66 • S = σA=1 OR B>10(R)

- 13. – Sum of estimates of predicates minus their product • T(S) = 200 + 3333 – 66 = 3467 22 • Containment of values assumption – If V(S,A) <= V (R,A), then A-values in S are a subset of A- values in R • Let’s assume V(S,A) <= V (R,A) – Each tuple t in S joins x tuple(s) in R – consider its mean: x = T(R) / V (R,A) – T(R ⋈A S) = T (S) * T(R) / V(R,A) • General formula: T(R ⋈A S) = T(R) * T(S) / max(V(R,A), V(S,A)) Selectivity factors: join predicates Presenter Presentation Notes Typical join: A is a key in R and a foreign key in S 23 • What if we join over more than one attribute? T(R S) = T(R) T(S) / max(V(R,A),V(S,A) max(V(R,B),V(S,B))

- 14. A,B Selectivity factors: join predicates Components of a cost-based optimizer • Plan space – Which plans to consider? – Time-consuming to explore every possible plan. • Cost estimator – How to estimate the cost of a plan without executing it? – Accurate estimations. • Search algorithm – How to search the plan space fast? – Avoid checking inefficient plans. 24 Search the plan space • Baseline: exhaustive search – Enumerate all (left-deep) trees and compare their costs • Enormous space to search => time-consuming! 25

- 15. Plan search: System-R style • A.K.A: Selinger style optimization • Bottom-up – Start from the ground relation (in FROM) – Work up the tree to form a plan – Compute the cost of larger plans based on its sub-trees. • Dynamic programming – greedily remove sub-trees that are costly (useless) 26 27 • Step 1: For each {Ri}: – Plans({Ri}): algorithms to access Ri • Table-Scan, Index-Scan – Costs({Ri}): Costs of accessing to Ri • e.g., B(Ri) for table-scan on Ri – Plan({Ri}): access method with lowest cost – Cost({Ri}): lowest cost in Costs({Ri}) – Output-size ({Ri}): B(Ri) Dynamic programming algorithm 28 • Step 2: For each {Ri, Rj}: – Plans({Ri,Rj}): join algorithms to compute Ri ⋈ Rj

- 16. • E.g., nested-loop and sort-merge based algorithms – Costs({Ri,Rj}): function of size of Ri and Rj • #I/O access of the chosen join algorithm • E.g., 5 B(R) + 5 B(S) for sort-merge join – Plan({Ri,Rj}): the join algorithm with lowest cost – Cost({Ri,Rj}): lowest cost in Costs({Ri,Rj}) – Output-size ({Ri,Rj}): estimate of the size of join Dynamic programming algorithm 29 • Step i: For each S ⊆ {R1, …, Rn} of cardinality i: – for every S1 ,S2 s.t. S = S1 ∪ S2 compute C = cost(S1) + cost(S2) + cost(S1 ⋈ S2) – Cost(S) = the smallest C – Plan(S) = the plan with cost(S) – Output-size(S): estimate the size of S • Return Plan({R1, …, Rn}) Dynamic programming algorithm 30 • R ⋈ S ⋈ T ⋈ U on common attribute A • T(R)= 2000, T(S)=5000, T(T)=3000, T(U)=1000 • We assume that each block contains a single tuple.

- 17. – B(R)= 2000, B(S)=5000, B(T)=3000, B(U)=1000 • No index on relations – Table-scan to access each relation • The only join algorithm is sort-merge – Cost for R ⋈ S: 5 B(R) + 5 B(S) – There is enough memory for sort-merge joins. Example 31 • Relations: R, S, T, U • T(R)= 2000, T(S)=5000, T(T)=3000, T(U)=1000 • B(R)= 2000, B(S)=5000, B(T)=3000, B(U)=1000 • To simplify our example, we assume – V(R,A)=V(S,A)=V(T,A)=V(U,A)= 100 – T(R ⋈ S) = 0.01 * T(R) * T(S) • Same formula for joins of every pair of relations. Example 32 Query Output-Size Cost Plan R⋈ S

- 18. R⋈ T R⋈ U S⋈ T S⋈ U T⋈ U R⋈ S⋈ T R⋈S⋈U R⋈T⋈U S⋈T⋈U R⋈S⋈T⋈U 33 Query Output-Size Cost Plan R⋈S 100K 35K R⋈S R⋈T 60K 25K R⋈T R⋈U 20K 15K R⋈U S⋈T 150K 40K S⋈T S⋈U 50K 30K S⋈U

- 19. T⋈U 30K 20K T⋈U R⋈S⋈ T R⋈S⋈U R⋈T⋈U S⋈T⋈U R⋈S⋈T⋈U 34 Query Output-Size Cost Plan R⋈S 100K 35K R⋈S R⋈T 60K 25K R⋈T R⋈U 20K 15K R⋈U S⋈T 150K 40K S⋈T S⋈U 50K 30K S⋈U T⋈U 30K 20K T⋈U R⋈S⋈T 3M 170K S⋈ (R⋈T) R⋈S⋈U 1M 80K S⋈ (R⋈U) R⋈T⋈U 0.6M 70K T⋈ (R⋈U)

- 20. S⋈T⋈U 1.5M 105K S⋈ (T⋈U) R⋈S⋈T⋈U 35 Query Output-Size Cost Plan R⋈S 100K 35K R⋈S R⋈T 60K 25K R⋈T R⋈U 20K 15K R⋈U S⋈T 150K 40K S⋈T S⋈U 50K 30K S⋈U T⋈U 30K 20K T⋈U R⋈S⋈T 3M 170K S⋈ (R⋈T) R⋈S⋈U 1M 80K S⋈ (R⋈U) R⋈T⋈U 0.6M 70K T⋈ (R⋈U) S⋈T⋈U 1.5M 105K S⋈ (T⋈U) R⋈S⋈T⋈U 30M 1.35M S⋈ (T⋈ (R⋈U)) Interesting Orders • Useful sorted intermediate results

- 21. • Order By – E.g., Order By based on A for the result R⋈AS • Sorted order based on A is an interesting order • Multi-relation joins – E.g., T⋈A (R⋈AS) • Sorted output of R⋈AS helps fast join of T⋈A (R⋈AS). • Sorted order based on A is an interesting order • Grouping 36 Interesting Orders • Plans with sorted results save sorting time/cost. – E.g., sort-merge join for R⋈AS with Order By on A. – E.g., sort-merge join for R⋈AS for query T⋈A (R⋈AS). • The dynamic programming algorithm always keeps – Fastest overall plan – Fastest plan for each interesting order 37 Nested subqueries • Subqueries are optimized separately • Correlation: order of evaluation – uncorrelated queries

- 22. • nested subqueries do not reference outer subqueries • evaluate the most deeply nested subquery first – correlated queries: nested subqueries reference the outer subqueries Select name From employee X Where salary > (Select salary From employee Where employee_num = X.manager) 38 Nested subqueries • correlated queries: nested subqueries reference the outer subqueries Select name From employee X Where salary > (Select salary From employee Where employee_num = X.manager) • The nested subquery is evaluated once for each tuple in the outer query. • If there are small number of distinct values in the outer relation, it is worth sorting the tuples. – reduces the #evaluation of the nested query. 39

- 23. Optimization: all operations • Reduce plan space – Push down selections and projections – Choose good plans, discard bad ones • Base relations access – find all plans for accessing each base relations • Join ordering – Bottom-up dynamic programming algorithm – Consider only left-deep plans – Remove/ postpone Cartesian product – Keep the cheapest plan for unordered and each interesting order 40 Summary: optimization • Ideal goal – Map a declarative query to the most efficient plan • Plan space – Many semantically equivalent alternatives • Why important? – Difference between good/bad plans is order of magnitude • Conventional wisdom: – At least avoid bad plans

- 24. 41 State of the art • Academia: always a core database research topic – Optimizing for interactive querying – Optimizing for novel parallel frameworks • Industry: most optimizers use System-R style – They started with rule-based. • Oracle 7 and its prior versions used rule-based • Oracle 7 – 10: rule-based, and cost based • Oracle 10g (2003): cost-based 42 CS 540 �Database Management SystemsDBMS ArchitectureMany query plans to execute a SQL queryExplain command in PostgreSQLCommunication between operators: � iterator modelQuery optimization: picking the fastest planReview Definitions�Plans to select tuples from R: sA=a(R) �Query optimization methodsCost-based optimizationSpace of query plans: basic operatorsReducing plan spaceReducing plan spaceReducing plan spaceReducing plan spaceComponents of a cost-based optimizerCost estimationCost estimationCost estimation: Selinger Style Selectivity factors: selectionExamples: selection with multiple conditionsSelectivity factors: join predicatesSelectivity factors: join predicatesComponents of a cost-based optimizerSearch the plan spacePlan search: System-R styleDynamic programming algorithmDynamic programming algorithmDynamic programming algorithmExampleExampleSlide Number 32Slide Number 33Slide Number 34Slide Number 35Interesting

- 25. OrdersInteresting OrdersNested subqueriesNested subqueriesOptimization: all operationsSummary: optimizationState of the art 1 1: Query optimization (2 points) Consider the following relations: R(A, B, C, D, E) S(F, D) T(G, B, D, H) U(I, J, K) V(L, J, M) W(L, J, N) Suggest an optimized logical query plan for the above query and explain why your proposed plan may be faster than other possible plans. You should find the best guess(es) for the join order in your plan without knowing the statistics of the join attributes and base relations. SELECT B,C FROM R, S, T, U, V, W WHERE R.D = S.D and R.D = T.D

- 26. and R.B = T.B and U.J <= V.J and U.J = W.J and R.E <= 200 and W.N <= 100 Please note that your answer may not always be more efficient than other plans, but it should run faster than other plans for most input relations. 2: Query optimization (4 points) For the four relations in the following table, find the best join order according to the dynamic programming algorithm used in System-R. You should give the dynamic programming table entries for evaluating the join orders. The cost of each join is the number of I/O accesses the database system performs to execute the join. Assume that the database system uses the two-pass sort-merge join algorithm to perform the join operations. Each block contains 4 tuples and tuples of all relations have the same size. We are interested only in left-deep join trees. Note that you should use the System-R optimizer formula to compute the size of each join output. R(A,B,C) S(B,C) W(B,D) U(A,D) T(R)=4000 T(S)=3000 T(W)=2000 T(U)=1000 V(R,A) =100 V(R,B) =200 V(R,C) =100

- 27. V(S,B) =100 V(S,C) = 300 V(W,B) =100 V(W,D) =50 V(U,A) =100 V(U,D) =100 2 3: Query optimization (2 points) What is the time-complexity of the dynamic programming algorithm you used in question (3) of this assignment? You should explain your answer. 4: Serializability and 2PL (5 points) (a) Consider the following classes of schedules: serializable and

- 28. 2PL. For each of the following schedules, state which of the preceding classes it belongs to. If you cannot decide whether a schedule belongs in a certain class based on the listed actions, explain briefly. Also, for each 2PL schedule, identify whether a cascading rollback (abort) may happen. A cascading rollback will happen in a schedule if a given transaction aborts at some point in the schedule, and at least one other transaction must be aborted by the system to keep the database consistent. (4 points) The actions are listed in the order they are scheduled and prefixed with the transaction name. If a commit or abort is not shown, the schedule is incomplete; assume that abort or commit must follow all the listed actions. 1. T1:R(X), T2:R(Y), T3:W(X), T2:R(X), T1:R(Y) 2. T1:R(X), T1:R(Y), T1:W(X), T2:R(Y), T3:W(Y), T1:W(X), T2:R(Y) 3. T1:W(X), T2:R(X), T1:W(X) 4. T1:R(X), T2:W(X), T1:W(X), T3:R(X) (b) Consider a database DB with relations R1 and R2. The relation R1 contains tuples t1 and t2 and the relation R2 contains tuples t3, t4, and t5. Assume that the database DB, relations, and

- 29. tuples form a hierarchy of lockable database elements. Explain the sequence of lock requests and the response of the locking scheduler to the following schedule. You may assume all lock requests occur just before they are needed, and all unlocks occur at the end of the transaction, i.e., EOT. (1 point) • T1:R(t1), T2:W(t2), T2:R(t3), T1:W(t4) 5: Degrees of Consistency (4 points) (a) Consider the schedule shown in Table 1. What are the maximum degrees of consistency for T1 and T2 in this schedule? You must find the maximum degrees of consistency for T1 and T2 that makes this schedule possible. (2 points) (b) Consider a transaction that reads the information about a set of accounts from a CSV file and writes them in a database. What degree of consistency will you choose for this transaction? 3

- 30. T1 T2 0 start 1 read X 2 write X 3 start 4 read X 5 write X 6 Commit 7 read Y 8 write Y 9 Commit Table 1: Transaction schedule You should justify your answer. Next, consider another transaction in a banking system that reads the balances of all bank accounts in a branch and computes their average. What degree of consistency will you choose for this transaction? You should justify your answer. (2 points) 6: Serializability (6 points) Consider the following protocol for concurrency control. The database system assigns each transaction a unique and strictly increasingly id at the start of the transaction. For each data item, the database system also keeps the id of the last transaction that has modified the data item, called the transaction-id of the data item. Before a

- 31. transaction T wants to read or write on a data item A, the database system checks whether the transaction-id of A is greater than the id of T . If this is the case, the database system allows T to read/write A. Otherwise, the database system aborts and restarts T . (a) Does this protocol allow only serializable schedules for transactions? If not, you may suggest a change to the protocol so that all schedules permitted by this protocol are serializable. You should justify your answer. (3 points) (b) Propose a change to this protocol or the modified version you have designed for part (a) that increases its degree of concurrency, i.e., it allows more serializable schedules. (3 points) 1: Query optimization (2 points)2: Query optimization (4 points)3: Query optimization (2 points)4: Serializability and 2PL (5 points)5: Degrees of Consistency (4 points)6: Serializability (6 points)