Raw system logs processing with Hive


Processing system logs using Hive and analyzing specific data.

Report

Share

Report

Share

Recommended

Recommended

More Related Content

What's hot

What's hot (20)

Dangerous on ClickHouse in 30 minutes, by Robert Hodges, Altinity CEO

Dangerous on ClickHouse in 30 minutes, by Robert Hodges, Altinity CEO

ClickHouse Features for Advanced Users, by Aleksei Milovidov

ClickHouse Features for Advanced Users, by Aleksei Milovidov

Five Great Ways to Lose Data on Kubernetes - KubeCon EU 2020

Five Great Ways to Lose Data on Kubernetes - KubeCon EU 2020

ClickHouse materialized views - a secret weapon for high performance analytic...

ClickHouse materialized views - a secret weapon for high performance analytic...

Data warehouse or conventional database: Which is right for you?

Data warehouse or conventional database: Which is right for you?

New features in ProxySQL 2.0 (updated to 2.0.9) by Rene Cannao (ProxySQL)

New features in ProxySQL 2.0 (updated to 2.0.9) by Rene Cannao (ProxySQL)

Clickhouse Capacity Planning for OLAP Workloads, Mik Kocikowski of CloudFlare

Clickhouse Capacity Planning for OLAP Workloads, Mik Kocikowski of CloudFlare

ClickHouse Analytical DBMS. Introduction and usage, by Alexander Zaitsev

ClickHouse Analytical DBMS. Introduction and usage, by Alexander Zaitsev

A Practical Introduction to Handling Log Data in ClickHouse, by Robert Hodges...

A Practical Introduction to Handling Log Data in ClickHouse, by Robert Hodges...

InfluxDB IOx Tech Talks: The Impossible Dream: Easy-to-Use, Super Fast Softw...

InfluxDB IOx Tech Talks: The Impossible Dream: Easy-to-Use, Super Fast Softw...

Fun with click house window functions webinar slides 2021-08-19

Fun with click house window functions webinar slides 2021-08-19

Monitoring Your ISP Using InfluxDB Cloud and Raspberry Pi

Monitoring Your ISP Using InfluxDB Cloud and Raspberry Pi

ClickHouse Query Performance Tips and Tricks, by Robert Hodges, Altinity CEO

ClickHouse Query Performance Tips and Tricks, by Robert Hodges, Altinity CEO

Meet the Experts: Visualize Your Time-Stamped Data Using the React-Based Gira...

Meet the Experts: Visualize Your Time-Stamped Data Using the React-Based Gira...

ClickHouse tips and tricks. Webinar slides. By Robert Hodges, Altinity CEO

ClickHouse tips and tricks. Webinar slides. By Robert Hodges, Altinity CEO

Similar to Raw system logs processing with hive

Similar to Raw system logs processing with hive (20)

Checking clustering factor to detect row migration

Checking clustering factor to detect row migration

Database & Technology 1 _ Clancy Bufton _ Flashback Query - oracle total reca...

Database & Technology 1 _ Clancy Bufton _ Flashback Query - oracle total reca...

Oracle goldengate 11g schema replication from standby database

Oracle goldengate 11g schema replication from standby database

Recently uploaded

Saudi Arabia [ Abortion pills) Jeddah/riaydh/dammam/+966572737505☎️] cytotec tablets uses abortion pills 💊💊

How effective is the abortion pill? 💊💊 +966572737505) "Abortion pills in Jeddah" how to get cytotec tablets in Riyadh " Abortion pills in dammam*💊💊

The abortion pill is very effective. If you’re taking mifepristone and misoprostol, it depends on how far along the pregnancy is, and how many doses of medicine you take:💊💊 +966572737505) how to buy cytotec pills

At 8 weeks pregnant or less, it works about 94-98% of the time. +966572737505[ 💊💊💊

At 8-9 weeks pregnant, it works about 94-96% of the time. +966572737505)

At 9-10 weeks pregnant, it works about 91-93% of the time. +966572737505)💊💊

If you take an extra dose of misoprostol, it works about 99% of the time.

At 10-11 weeks pregnant, it works about 87% of the time. +966572737505)

If you take an extra dose of misoprostol, it works about 98% of the time.

In general, taking both mifepristone and+966572737505 misoprostol works a bit better than taking misoprostol only.

+966572737505

Taking misoprostol alone works to end the+966572737505 pregnancy about 85-95% of the time — depending on how far along the+966572737505 pregnancy is and how you take the medicine.

+966572737505

The abortion pill usually works, but if it doesn’t, you can take more medicine or have an in-clinic abortion.

+966572737505

When can I take the abortion pill?+966572737505

In general, you can have a medication abortion up to 77 days (11 weeks)+966572737505 after the first day of your last period. If it’s been 78 days or more since the first day of your last+966572737505 period, you can have an in-clinic abortion to end your pregnancy.+966572737505

Why do people choose the abortion pill?

Which kind of abortion you choose all depends on your personal+966572737505 preference and situation. With+966572737505 medication+966572737505 abortion, some people like that you don’t need to have a procedure in a doctor’s office. You can have your medication abortion on your own+966572737505 schedule, at home or in another comfortable place that you choose.+966572737505 You get to decide who you want to be with during your abortion, or you can go it alone. Because+966572737505 medication abortion is similar to a miscarriage, many people feel like it’s more “natural” and less invasive. And some+966572737505 people may not have an in-clinic abortion provider close by, so abortion pills are more available to+966572737505 them.

+966572737505

Your doctor, nurse, or health center staff can help you decide which kind of abortion is best for you.

+966572737505

More questions from patients:

Saudi Arabia+966572737505

CYTOTEC Misoprostol Tablets. Misoprostol is a medication that can prevent stomach ulcers if you also take NSAID medications. It reduces the amount of acid in your stomach, which protects your stomach lining. The brand name of this medication is Cytotec®.+966573737505)

Unwanted Kit is a combination of two mediciAbortion pills in Doha Qatar (+966572737505 ! Get Cytotec

Abortion pills in Doha Qatar (+966572737505 ! Get CytotecAbortion pills in Riyadh +966572737505 get cytotec

Ashok Vihar Call Girls in Delhi (–9953330565) Escort Service In Delhi NCR PROVIDE 100% REAL GIRLS ALL ARE GIRLS LOOKING MODELS AND RAM MODELS ALL GIRLS” INDIAN , RUSSIAN ,KASMARI ,PUNJABI HOT GIRLS AND MATURED HOUSE WIFE BOOKING ONLY DECENT GUYS AND GENTLEMAN NO FAKE PERSON FREE HOME SERVICE IN CALL FULL AC ROOM SERVICE IN SOUTH DELHI Ultimate Destination for finding a High Profile Independent Escorts in Delhi.Gurgaon.Noida..!.Like You Feel 100% Real Girl Friend Experience. We are High Class Delhi Escort Agency offering quality services with discretion. We only offer services to gentlemen people. We have lots of girls working with us like students, Russian, models, house wife, and much More We Provide Short Time and Full Night Service Call ☎☎+91–9953330565 ❤꧂ • In Call and Out Call Service in Delhi NCR • 3* 5* 7* Hotels Service in Delhi NCR • 24 Hours Available in Delhi NCR • Indian, Russian, Punjabi, Kashmiri Escorts • Real Models, College Girls, House Wife, Also Available • Short Time and Full Time Service Available • Hygienic Full AC Neat and Clean Rooms Avail. In Hotel 24 hours • Daily New Escorts Staff Available • Minimum to Maximum Range Available. Location;- Delhi, Gurgaon, NCR, Noida, and All Over in Delhi Hotel and Home Services HOTEL SERVICE AVAILABLE :-REDDISSON BLU,ITC WELCOM DWARKA,HOTEL-JW MERRIOTT,HOLIDAY INN MAHIPALPUR AIROCTY,CROWNE PLAZA OKHALA,EROSH NEHRU PLACE,SURYAA KALKAJI,CROWEN PLAZA ROHINI,SHERATON PAHARGANJ,THE AMBIENC,VIVANTA,SURAJKUND,ASHOKA CONTINENTAL , LEELA CHANKYAPURI,_ALL 3* 5* 7* STARTS HOTEL SERVICE BOOKING CALL Call WHATSAPP Call ☎+91–9953330565❤꧂ NIGHT SHORT TIME BOTH ARE AVAILABLE

Call Girls In Shalimar Bagh ( Delhi) 9953330565 Escorts Service

Call Girls In Shalimar Bagh ( Delhi) 9953330565 Escorts Service9953056974 Low Rate Call Girls In Saket, Delhi NCR

Recently uploaded (20)

Call Girls Bommasandra Just Call 👗 7737669865 👗 Top Class Call Girl Service B...

Call Girls Bommasandra Just Call 👗 7737669865 👗 Top Class Call Girl Service B...

Vip Mumbai Call Girls Thane West Call On 9920725232 With Body to body massage...

Vip Mumbai Call Girls Thane West Call On 9920725232 With Body to body massage...

VIP Model Call Girls Hinjewadi ( Pune ) Call ON 8005736733 Starting From 5K t...

VIP Model Call Girls Hinjewadi ( Pune ) Call ON 8005736733 Starting From 5K t...

Just Call Vip call girls Palakkad Escorts ☎️9352988975 Two shot with one girl...

Just Call Vip call girls Palakkad Escorts ☎️9352988975 Two shot with one girl...

Abortion pills in Doha Qatar (+966572737505 ! Get Cytotec

Abortion pills in Doha Qatar (+966572737505 ! Get Cytotec

➥🔝 7737669865 🔝▻ mahisagar Call-girls in Women Seeking Men 🔝mahisagar🔝 Esc...

➥🔝 7737669865 🔝▻ mahisagar Call-girls in Women Seeking Men 🔝mahisagar🔝 Esc...

Call Girls In Bellandur ☎ 7737669865 🥵 Book Your One night Stand

Call Girls In Bellandur ☎ 7737669865 🥵 Book Your One night Stand

Just Call Vip call girls roorkee Escorts ☎️9352988975 Two shot with one girl ...

Just Call Vip call girls roorkee Escorts ☎️9352988975 Two shot with one girl ...

Vip Mumbai Call Girls Marol Naka Call On 9920725232 With Body to body massage...

Vip Mumbai Call Girls Marol Naka Call On 9920725232 With Body to body massage...

Aspirational Block Program Block Syaldey District - Almora

Aspirational Block Program Block Syaldey District - Almora

Escorts Service Kumaraswamy Layout ☎ 7737669865☎ Book Your One night Stand (B...

Escorts Service Kumaraswamy Layout ☎ 7737669865☎ Book Your One night Stand (B...

Call Girls In Shalimar Bagh ( Delhi) 9953330565 Escorts Service

Call Girls In Shalimar Bagh ( Delhi) 9953330565 Escorts Service

Just Call Vip call girls Mysore Escorts ☎️9352988975 Two shot with one girl (...

Just Call Vip call girls Mysore Escorts ☎️9352988975 Two shot with one girl (...

Call Girls Bannerghatta Road Just Call 👗 7737669865 👗 Top Class Call Girl Ser...

Call Girls Bannerghatta Road Just Call 👗 7737669865 👗 Top Class Call Girl Ser...

Digital Advertising Lecture for Advanced Digital & Social Media Strategy at U...

Digital Advertising Lecture for Advanced Digital & Social Media Strategy at U...

➥🔝 7737669865 🔝▻ malwa Call-girls in Women Seeking Men 🔝malwa🔝 Escorts Ser...

➥🔝 7737669865 🔝▻ malwa Call-girls in Women Seeking Men 🔝malwa🔝 Escorts Ser...

Just Call Vip call girls kakinada Escorts ☎️9352988975 Two shot with one girl...

Just Call Vip call girls kakinada Escorts ☎️9352988975 Two shot with one girl...

5CL-ADBA,5cladba, Chinese supplier, safety is guaranteed

5CL-ADBA,5cladba, Chinese supplier, safety is guaranteed

Call Girls Indiranagar Just Call 👗 9155563397 👗 Top Class Call Girl Service B...

Call Girls Indiranagar Just Call 👗 9155563397 👗 Top Class Call Girl Service B...

Jual Obat Aborsi Surabaya ( Asli No.1 ) 085657271886 Obat Penggugur Kandungan...

Jual Obat Aborsi Surabaya ( Asli No.1 ) 085657271886 Obat Penggugur Kandungan...

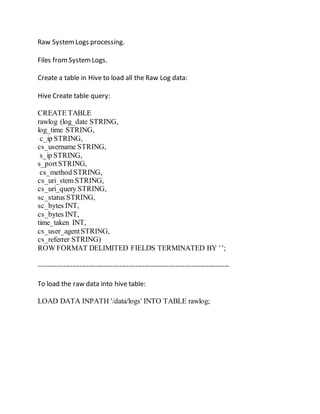

Raw system logs processing with hive

- 1. Raw system logs processing. Files from system logs. Create a table in Hive to load all the raw log data. Hive create table query: CREATE TABLE rawlog (log_date STRING, log_time STRING, c_ip STRING, cs_username STRING, s_ip STRING, s_port STRING, cs_method STRING, cs_uri_stem STRING, cs_uri_query STRING, sc_status STRING, sc_bytes INT, cs_bytes INT, time_taken INT, cs_user_agent STRING, cs_referrer STRING) ROW FORMAT DELIMITED FIELDS TERMINATED BY ' '; To load the raw data into the Hive table: LOAD DATA INPATH '/data/logs' INTO TABLE rawlog;

- 2. Check that the data loaded correctly: SELECT * FROM rawlog LIMIT 100;

- 3. Create an external table, cleanlog, to store data that does not contain NULL values: CREATE EXTERNAL TABLE cleanlog (log_date DATE, log_time STRING, c_ip STRING, cs_username STRING, s_ip STRING, s_port STRING, cs_method STRING, cs_uri_stem STRING, cs_uri_query STRING, sc_status STRING, sc_bytes INT, cs_bytes INT, time_taken INT, cs_user_agent STRING, cs_referrer STRING) ROW FORMAT DELIMITED FIELDS TERMINATED BY ' ' STORED AS TEXTFILE LOCATION '/data/cleanlog'; Insert data into the cleanlog table, skipping every row whose log_date starts with '#': INSERT INTO TABLE cleanlog SELECT * FROM rawlog WHERE SUBSTR(log_date, 1, 1) <> '#';
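One way to sanity-check the filter is to count how many raw rows it keeps and compare that with what landed in cleanlog. This is only a sketch: the column aliases header_rows, data_rows and clean_rows are made up for illustration, and it assumes cleanlog was empty before the INSERT ran.

```sql
-- Split the raw rows into '#' header lines and ordinary data lines.
SELECT
  SUM(CASE WHEN SUBSTR(log_date, 1, 1) = '#' THEN 1 ELSE 0 END) AS header_rows,
  SUM(CASE WHEN SUBSTR(log_date, 1, 1) <> '#' THEN 1 ELSE 0 END) AS data_rows
FROM rawlog;

-- Rows copied into the clean table; this should match data_rows above.
SELECT COUNT(*) AS clean_rows FROM cleanlog;
```

If clean_rows and data_rows differ, the INSERT was probably run more than once or against a different copy of the raw data.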

- 5. SELECT * FROM cleanlog LIMIT 100; The O/P will be a table with no NULL values. Creating a view for the cleanlog table: CREATE VIEW vDailySummary AS SELECT log_date, COUNT(*) AS requests, SUM(cs_bytes) AS inbound_bytes, SUM(sc_bytes) AS outbound_bytes FROM cleanlog GROUP BY log_date; SELECT * FROM vDailySummary ORDER BY log_date;

- 6. The O/P is ordered by log date and shows the sum of all sc_bytes and the sum of all cs_bytes. Now we partition the table, which helps performance when queries filter on the partition columns or when the job can be split effectively across multiple reducer nodes. CREATE EXTERNAL TABLE partitionedlog (log_day INT, log_time STRING, c_ip STRING, cs_username STRING, s_ip STRING, s_port STRING, cs_method STRING, cs_uri_stem STRING, cs_uri_query STRING, sc_status STRING, sc_bytes INT, cs_bytes INT, time_taken INT, cs_user_agent STRING, cs_referrer STRING) PARTITIONED BY (log_year INT, log_month INT) ROW FORMAT DELIMITED FIELDS TERMINATED BY ' ' STORED AS TEXTFILE LOCATION '/data/partitionedlog';

- 7. Before loading into the partitioned table, we need to enable dynamic partitioning: SET hive.exec.dynamic.partition = true; SET hive.exec.dynamic.partition.mode = nonstrict; INSERT INTO TABLE partitionedlog PARTITION (log_year, log_month) SELECT DAY(log_date), log_time, c_ip, cs_username, s_ip, s_port, cs_method, cs_uri_stem, cs_uri_query, sc_status, sc_bytes, cs_bytes, time_taken, cs_user_agent, cs_referrer, YEAR(log_date), MONTH(log_date) FROM rawlog WHERE SUBSTR(log_date, 1, 1) <> '#'; With the data partitioned this way, queries filtered on year and month only have to search a smaller volume of data to retrieve the results.
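After the dynamic-partition insert, it can help to confirm which partitions Hive actually created. The statements below are a small sketch using the standard SHOW PARTITIONS command; the year 2008 in the second statement is just an illustrative value.

```sql
-- List every partition of the table. Each entry has the form
-- log_year=<year>/log_month=<month>, which is also the name of the
-- subdirectory Hive writes under '/data/partitionedlog'.
SHOW PARTITIONS partitionedlog;

-- Restrict the listing to the months of a single year.
SHOW PARTITIONS partitionedlog PARTITION (log_year = 2008);
```

A query that filters on log_year and log_month only needs to read the matching subdirectories, which is where the smaller search volume comes from.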

- 8. For example, to look specifically at year 2008 and month 6: SELECT log_day, COUNT(*) AS page_hits FROM partitionedlog WHERE log_year = 2008 AND log_month = 6 GROUP BY log_day;
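For comparison, the same report can be written against the unpartitioned cleanlog table. This is a sketch rather than part of the original walkthrough; it assumes cleanlog and partitionedlog were both loaded from the same rawlog data, in which case the two queries should return the same rows.

```sql
-- Same daily page-hit count, but against the unpartitioned table.
-- Hive has to read every row here, because year and month are computed
-- from log_date instead of being stored as partition columns.
SELECT DAY(log_date) AS log_day, COUNT(*) AS page_hits
FROM cleanlog
WHERE YEAR(log_date) = 2008 AND MONTH(log_date) = 6
GROUP BY DAY(log_date);
```

Prefixing either query with EXPLAIN shows the plan Hive would run, which is one way to compare the two approaches.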