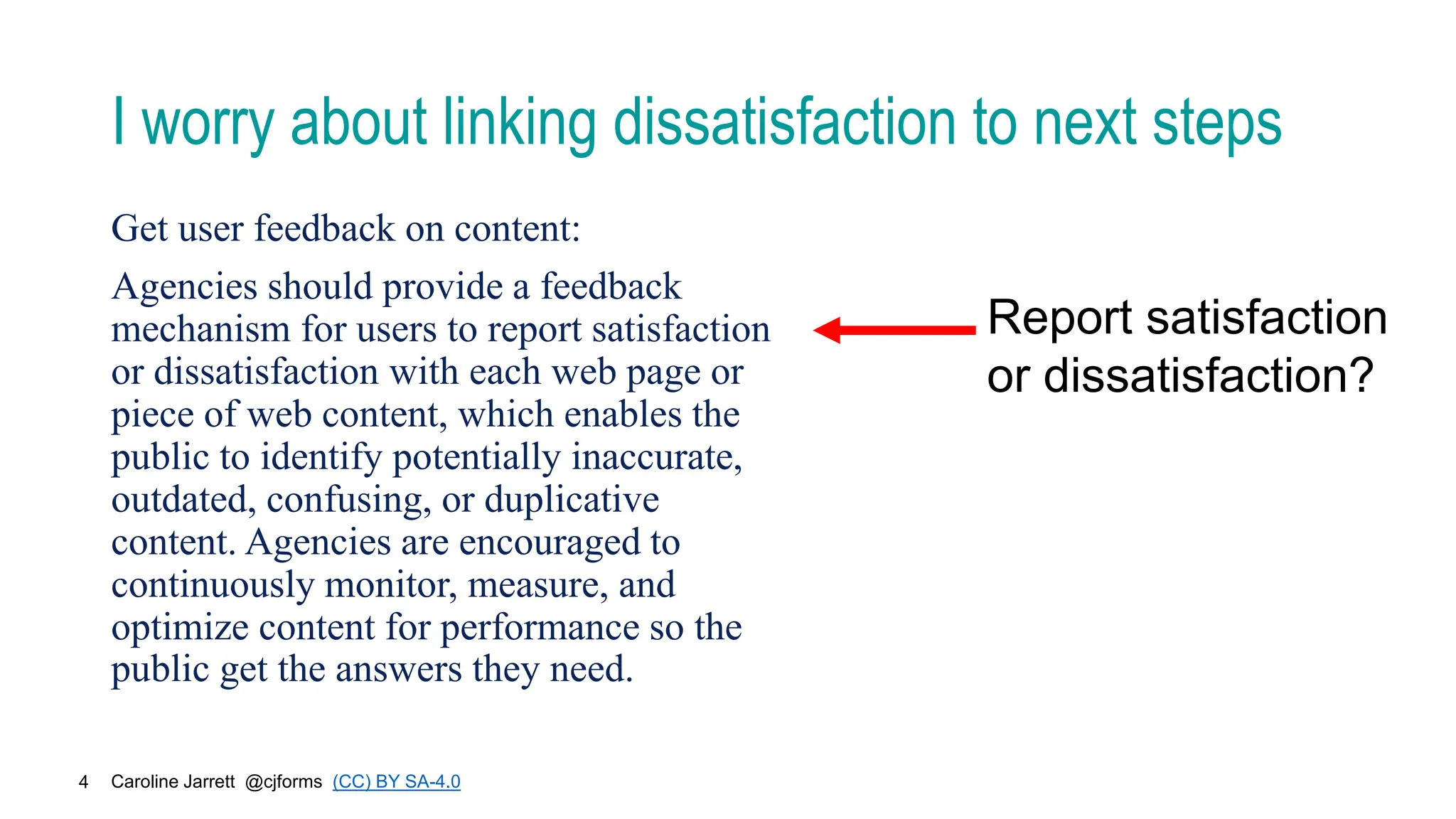

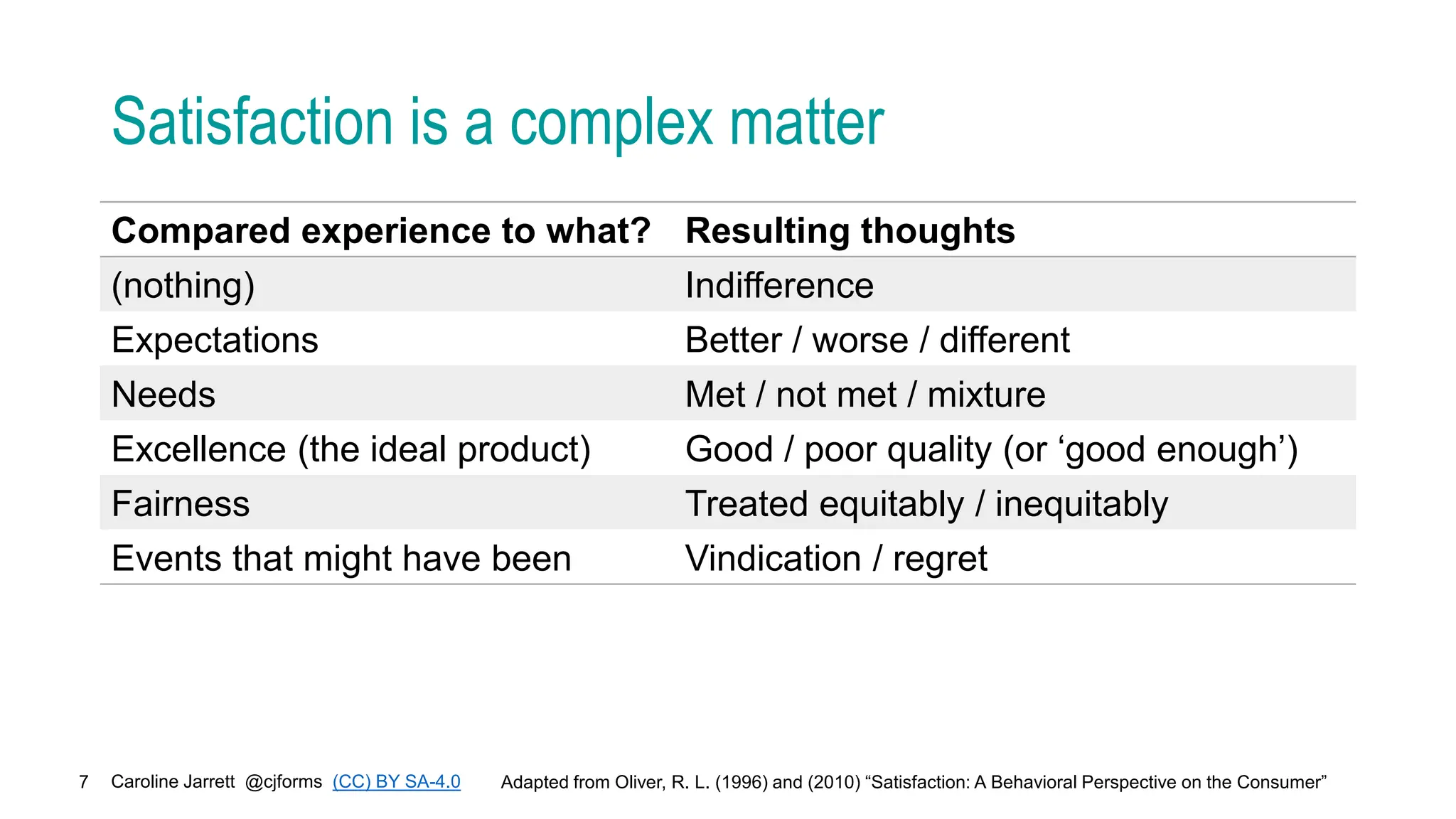

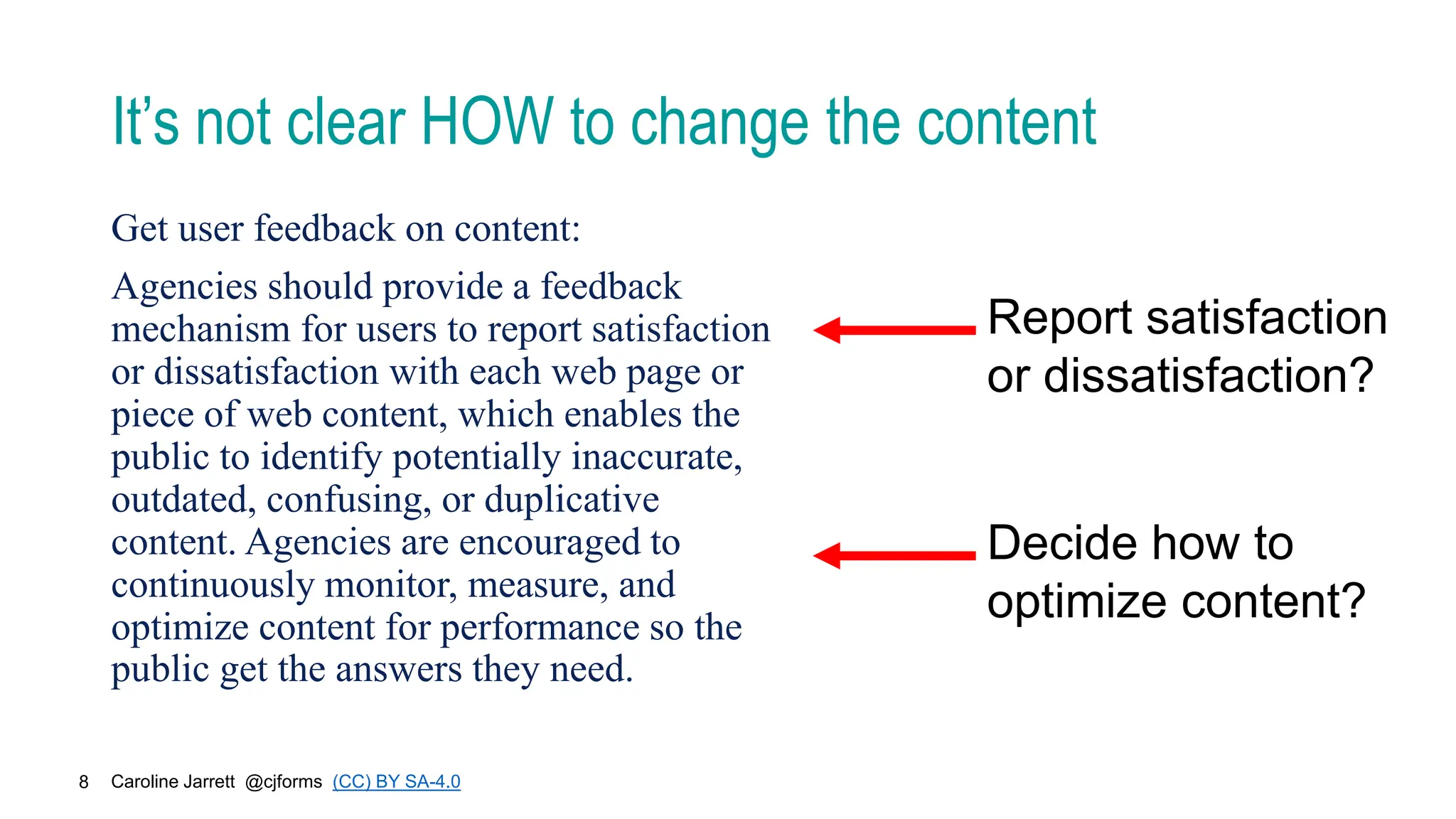

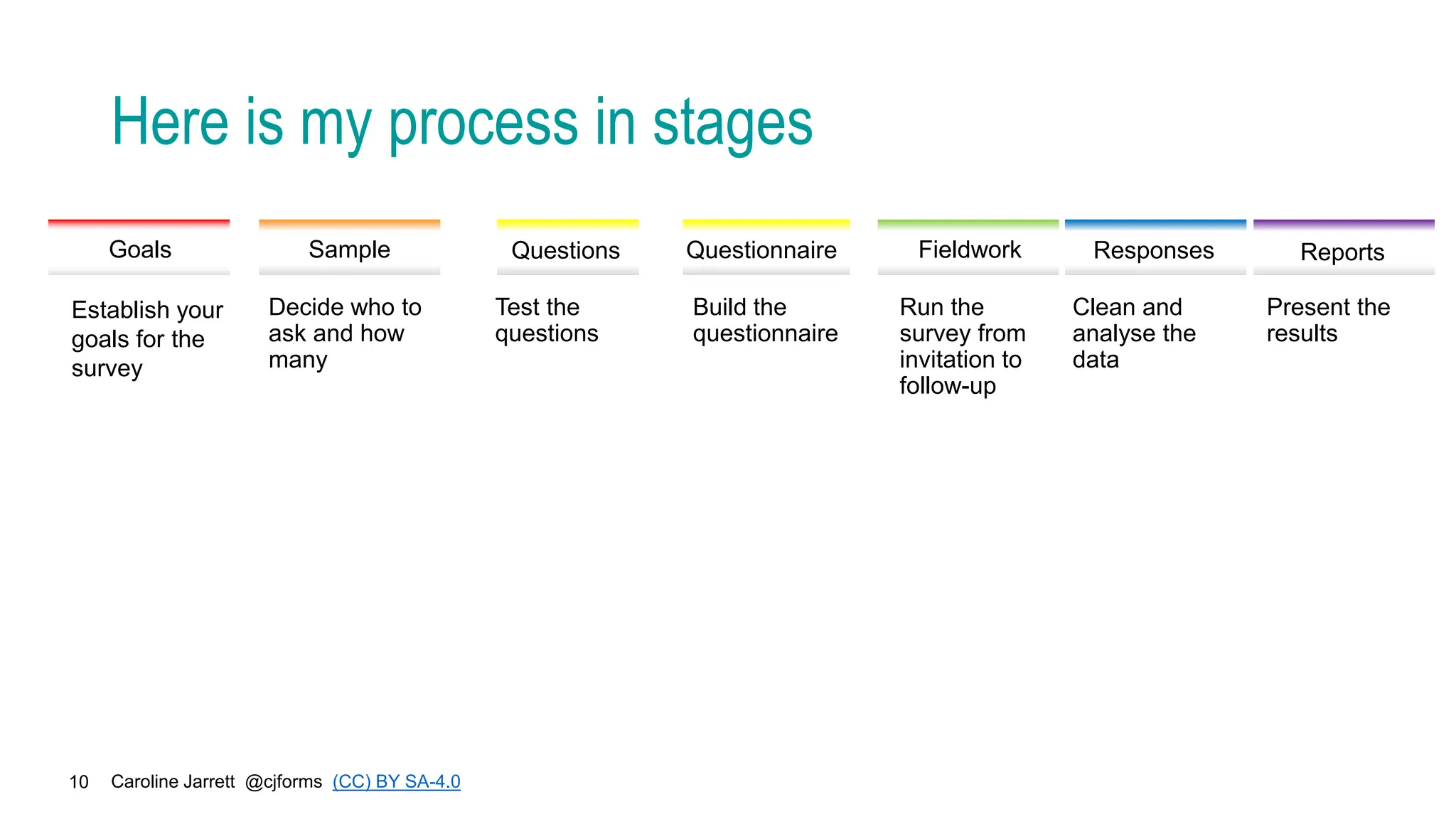

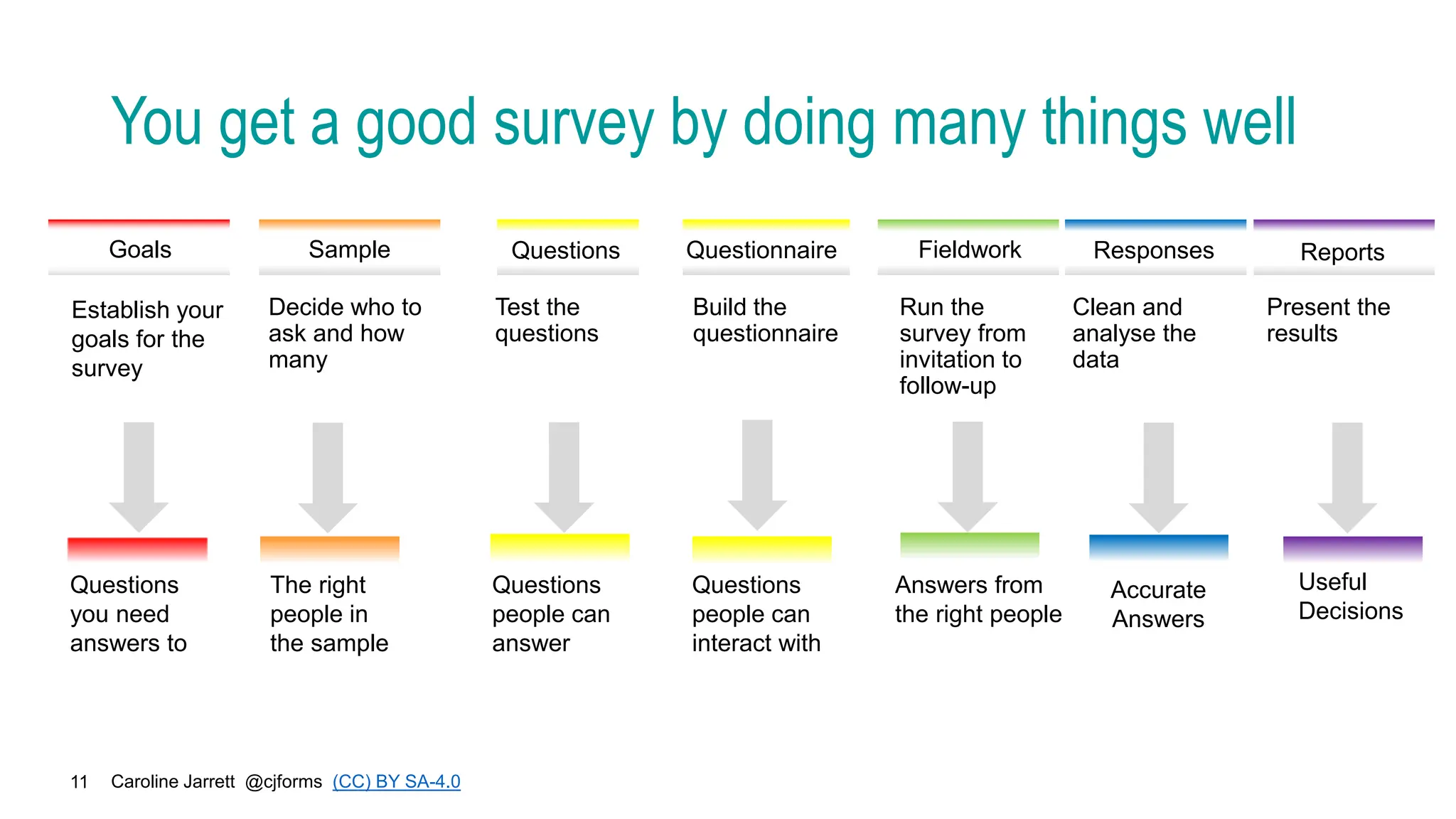

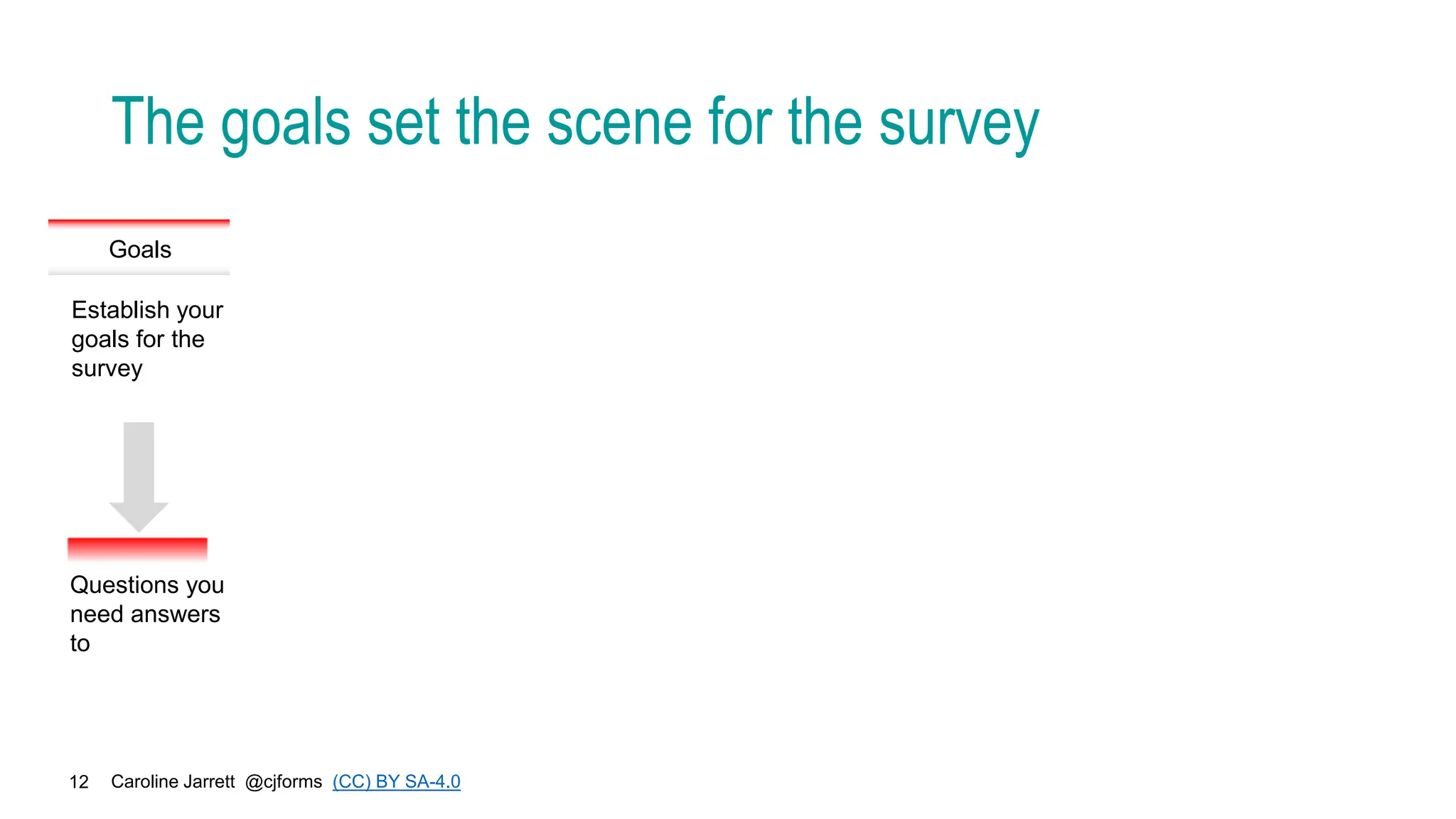

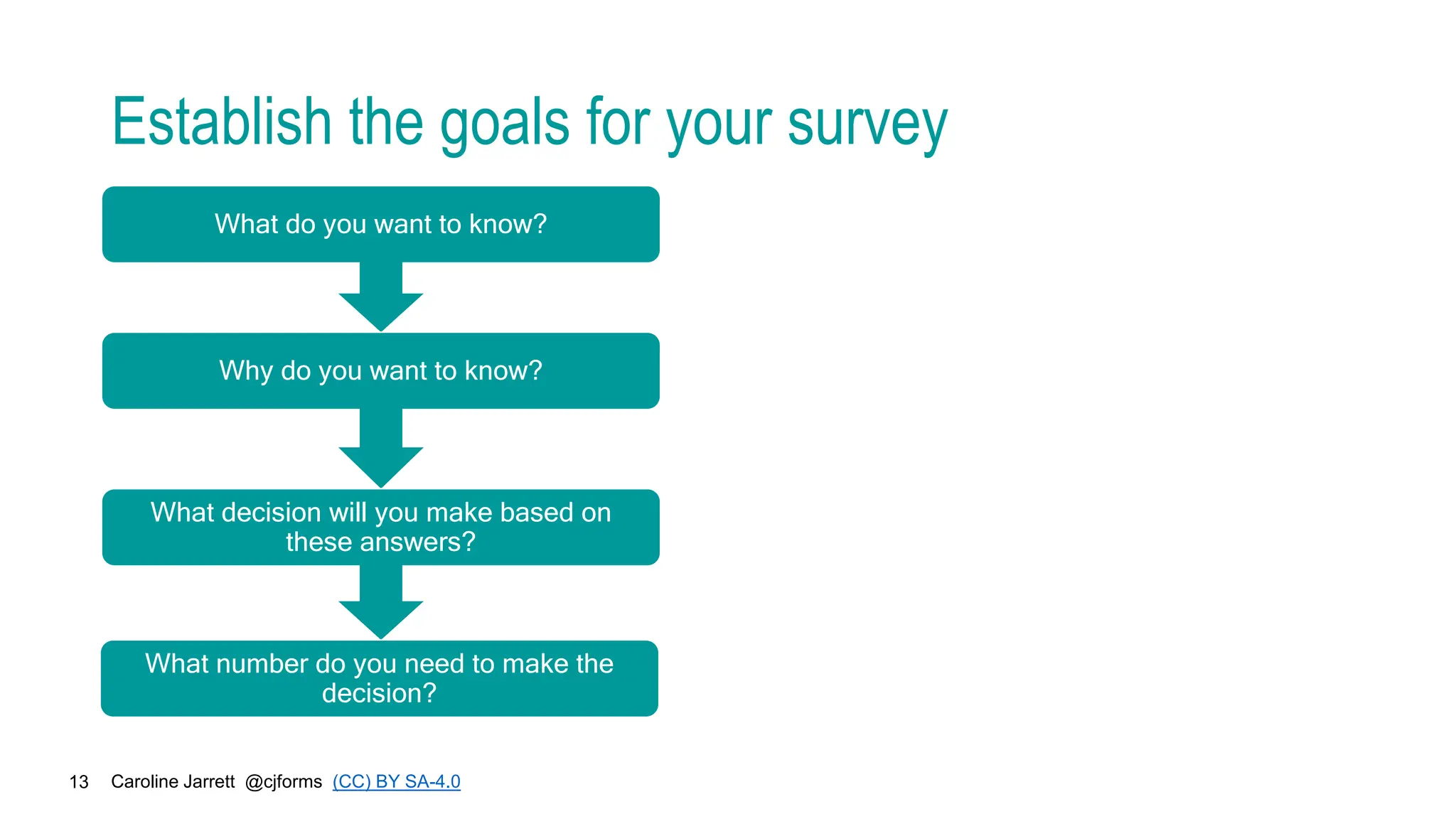

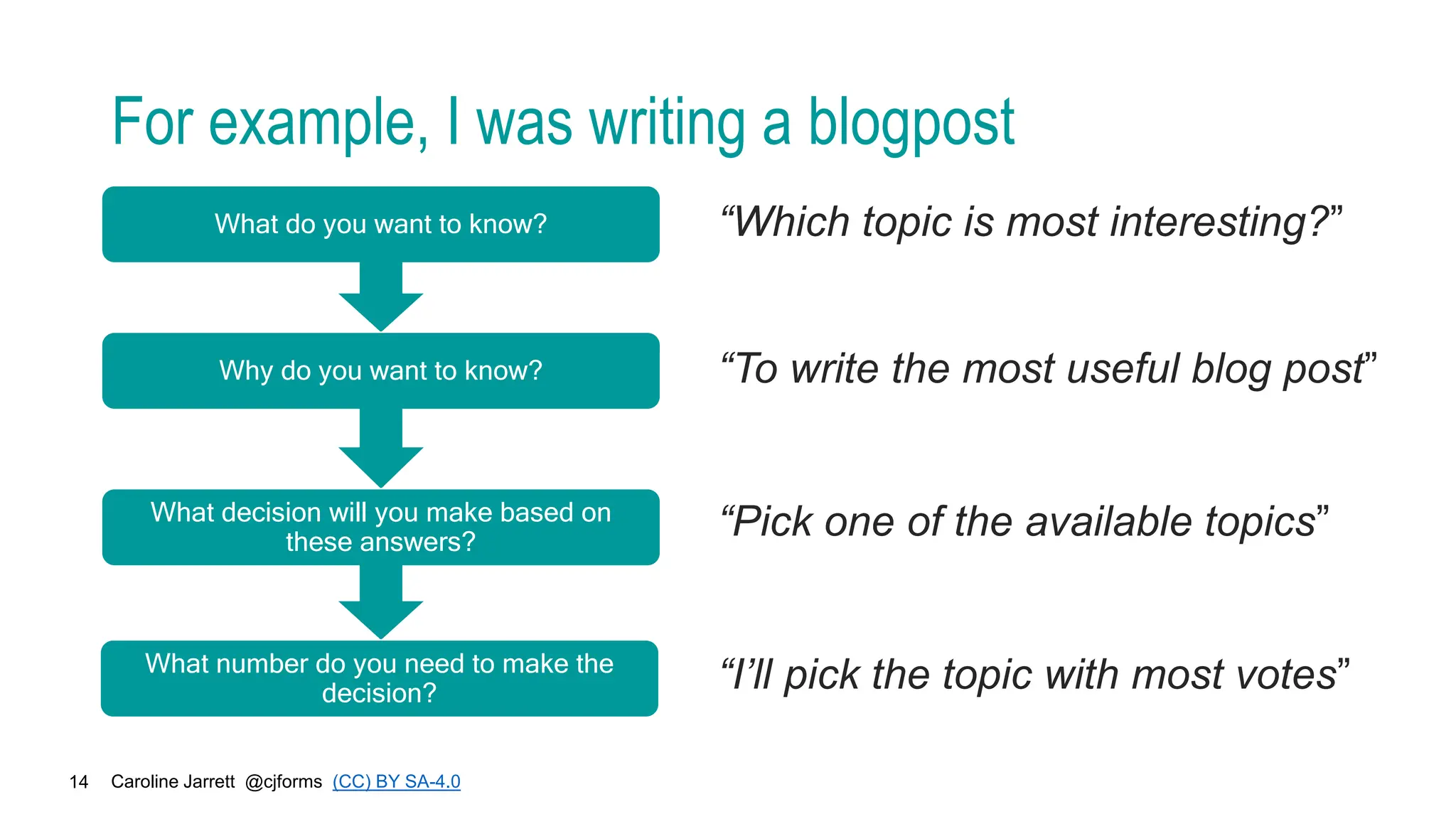

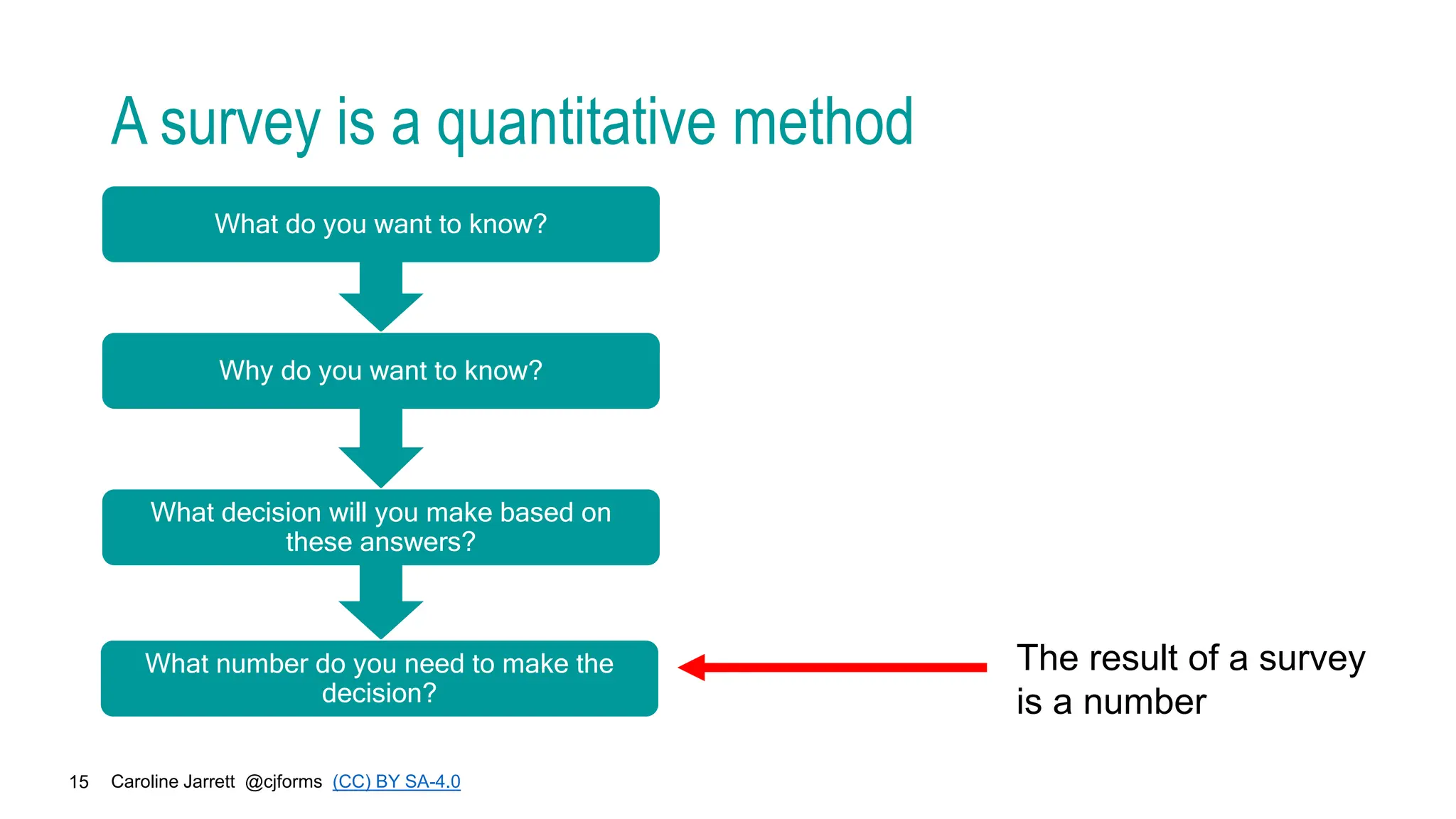

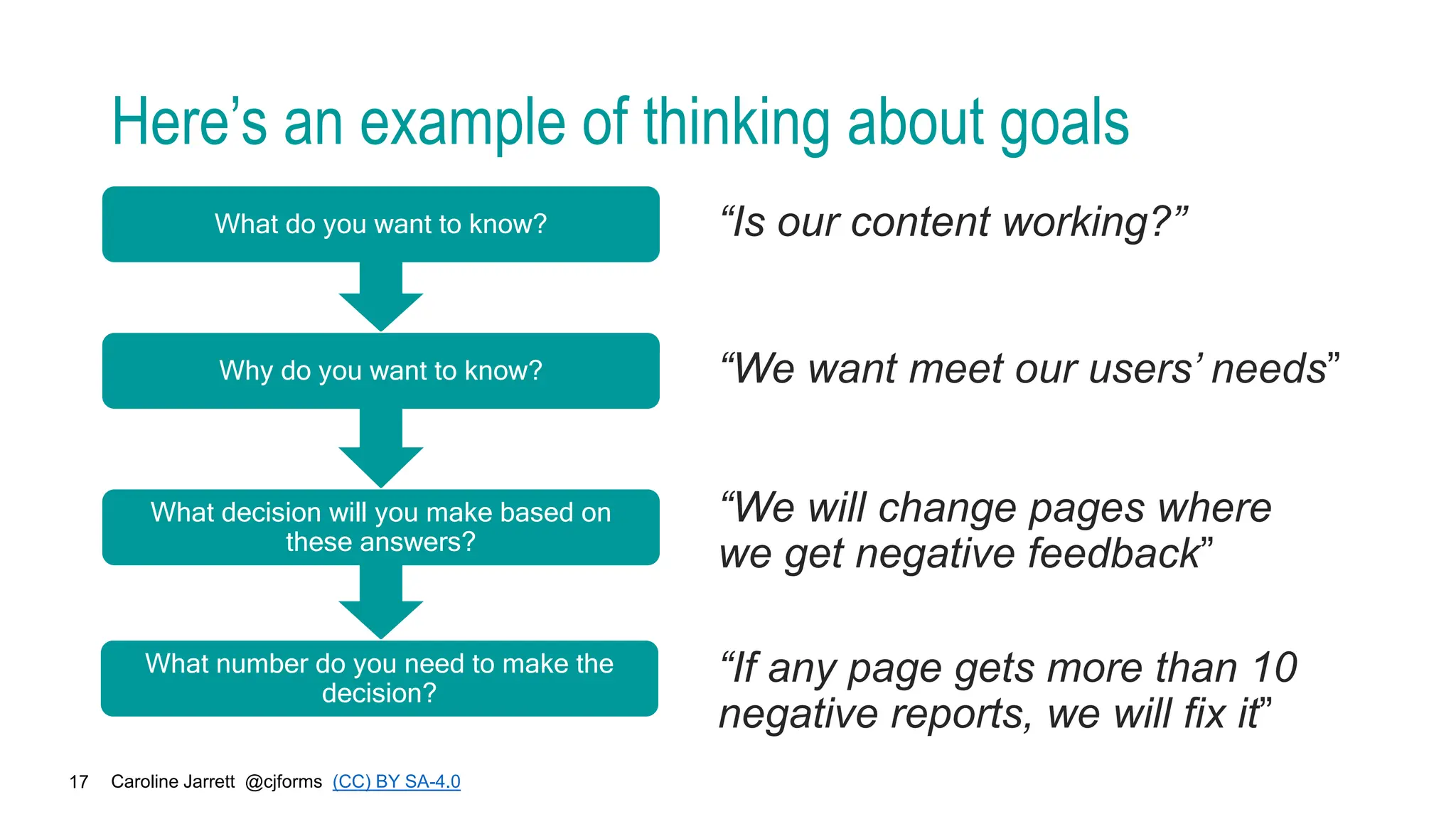

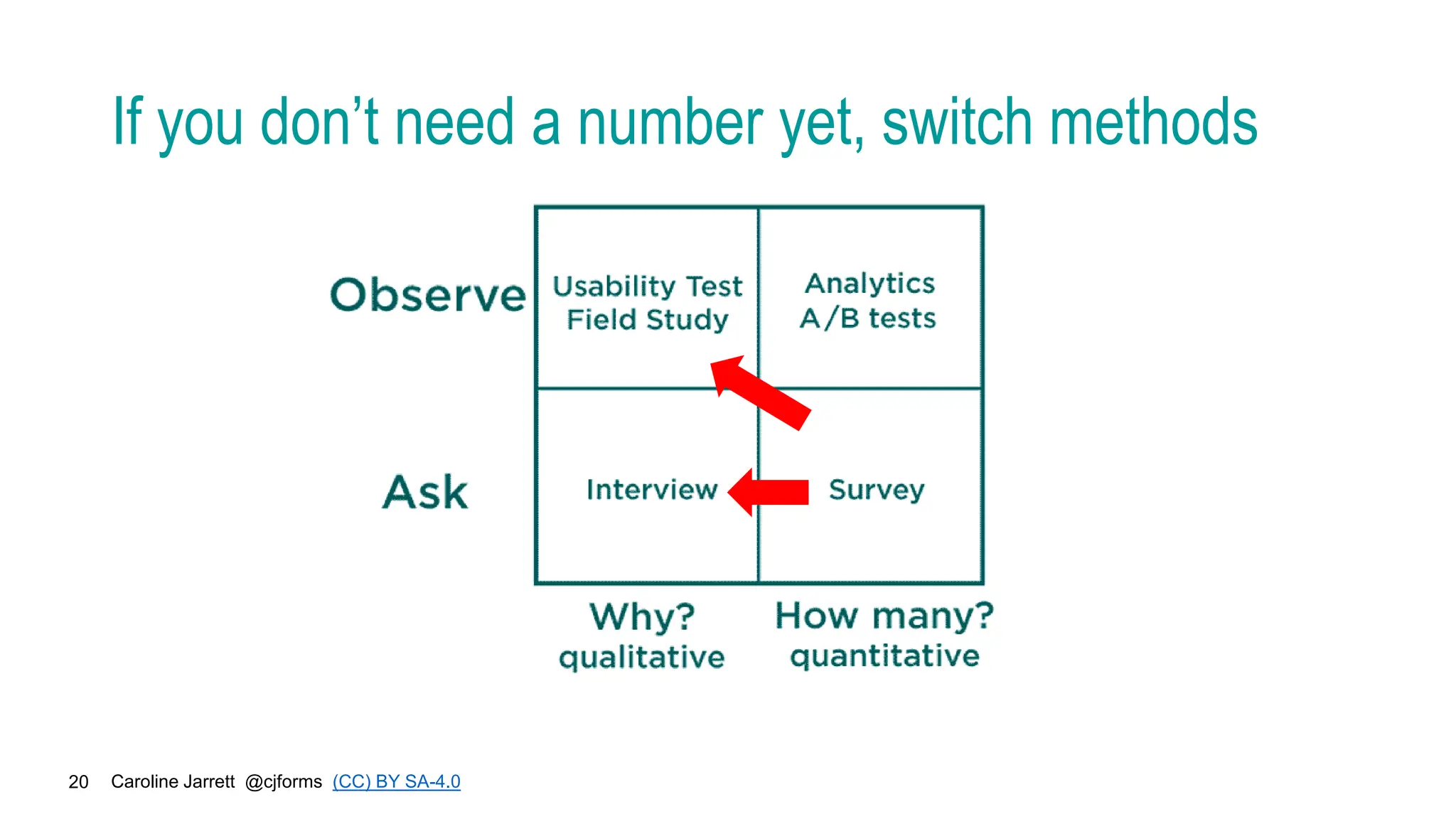

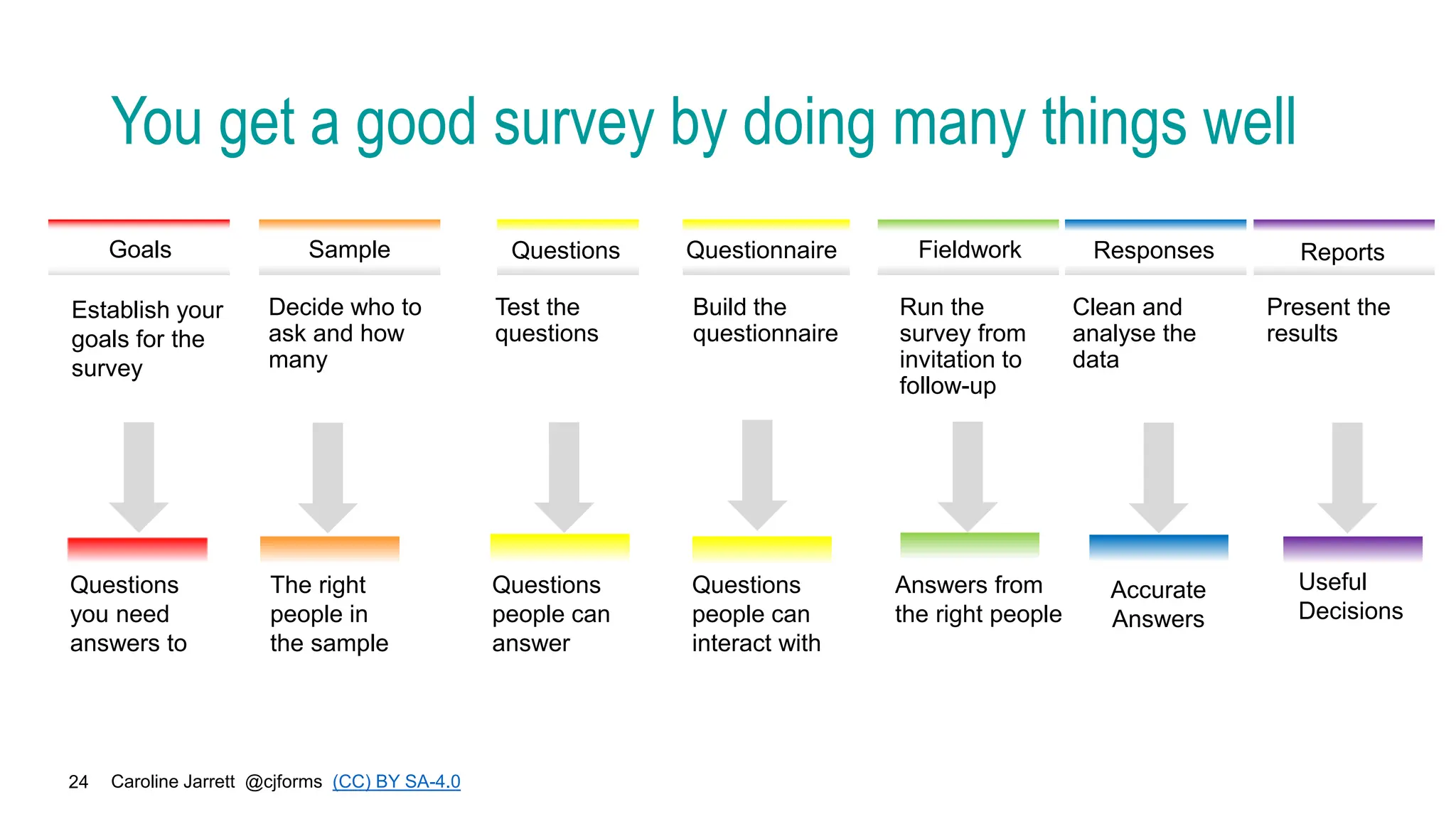

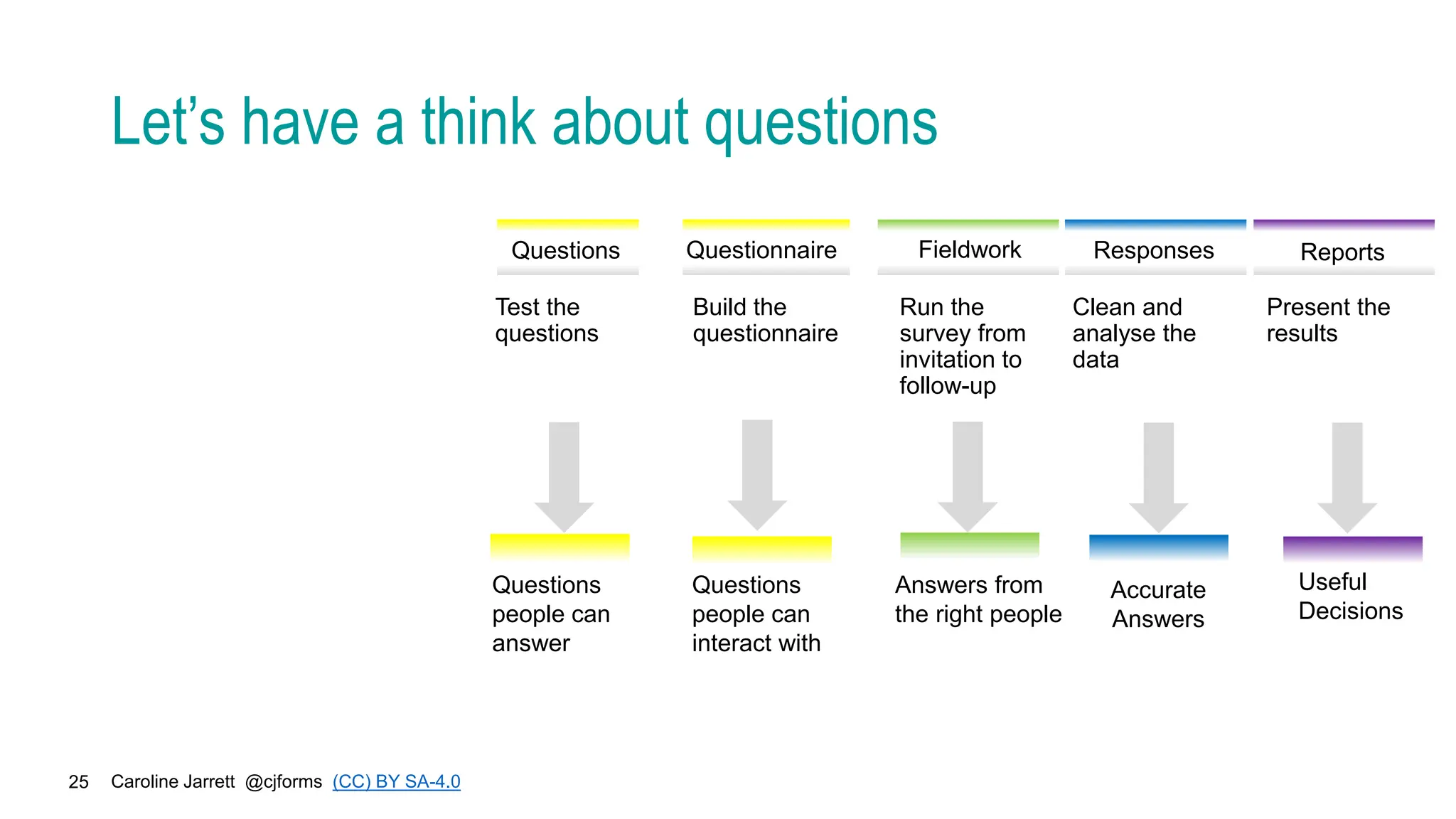

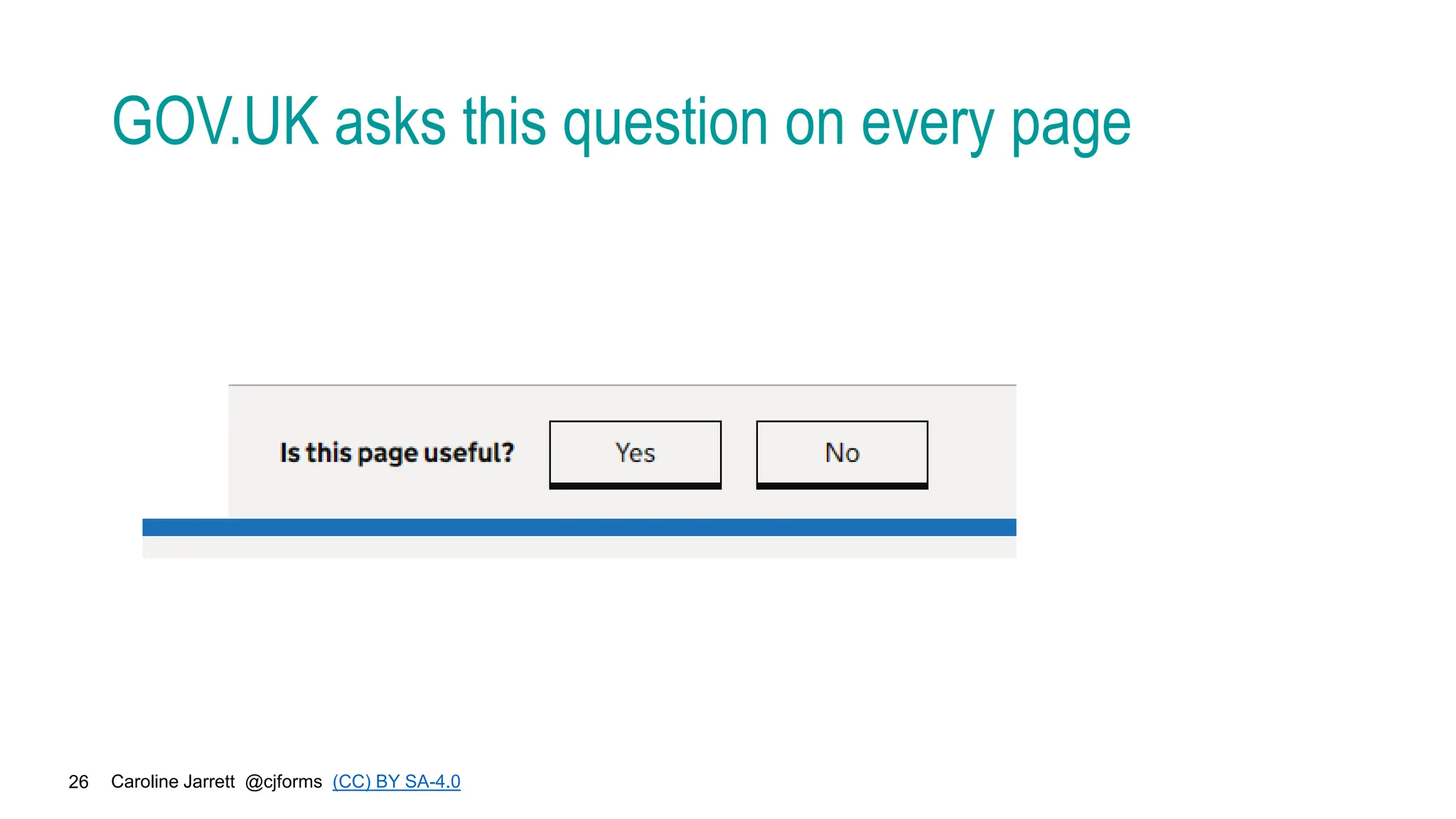

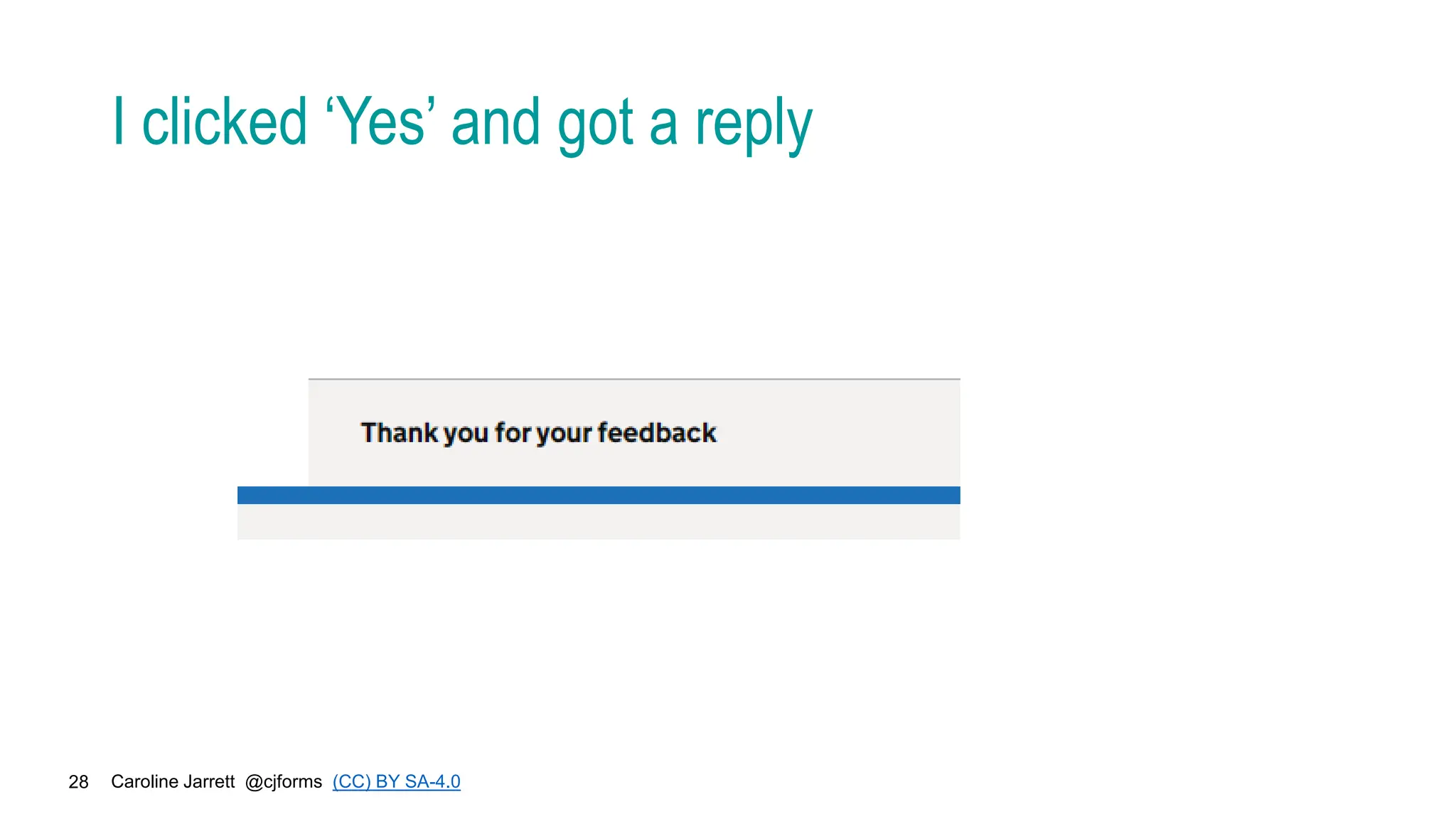

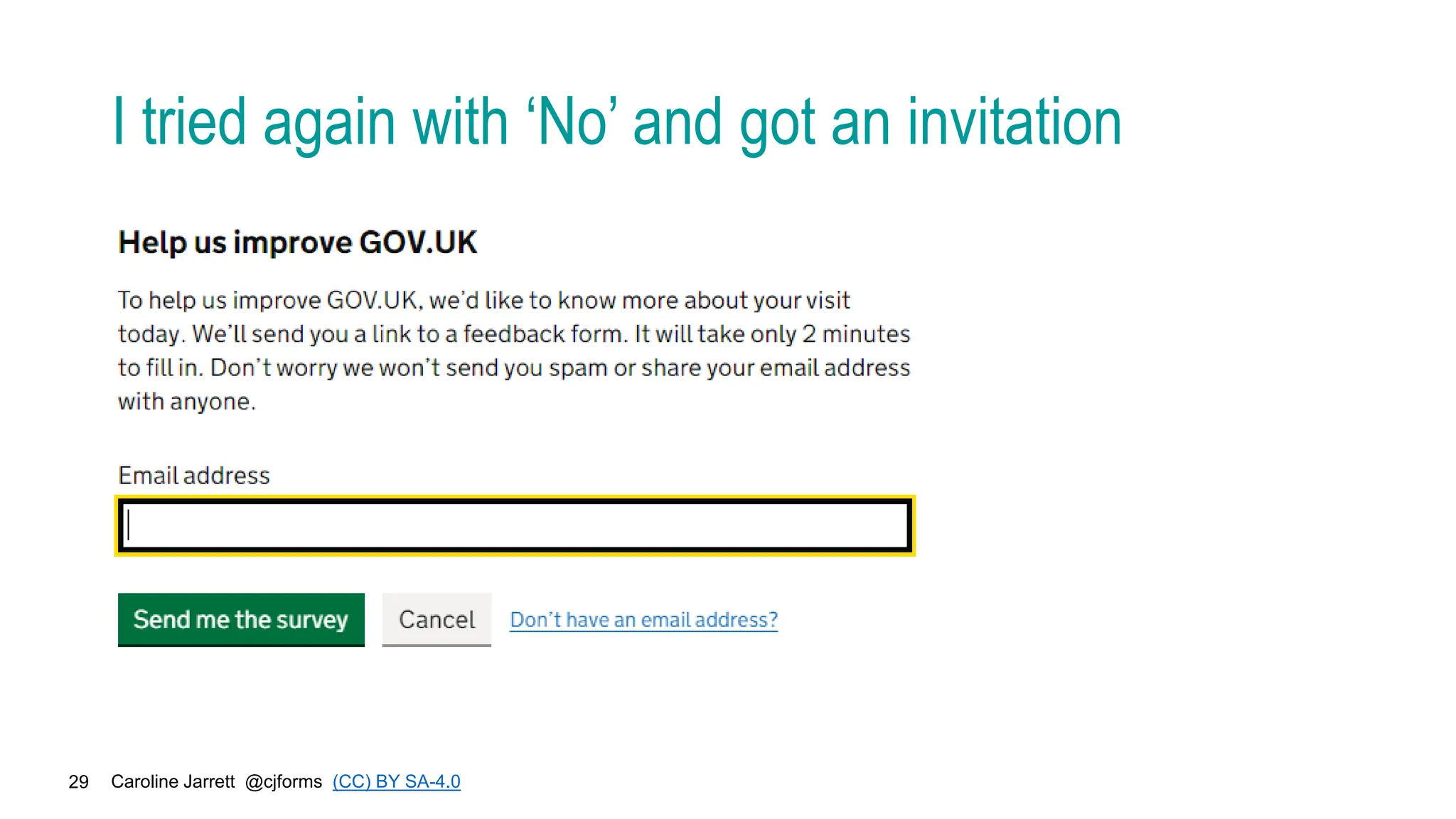

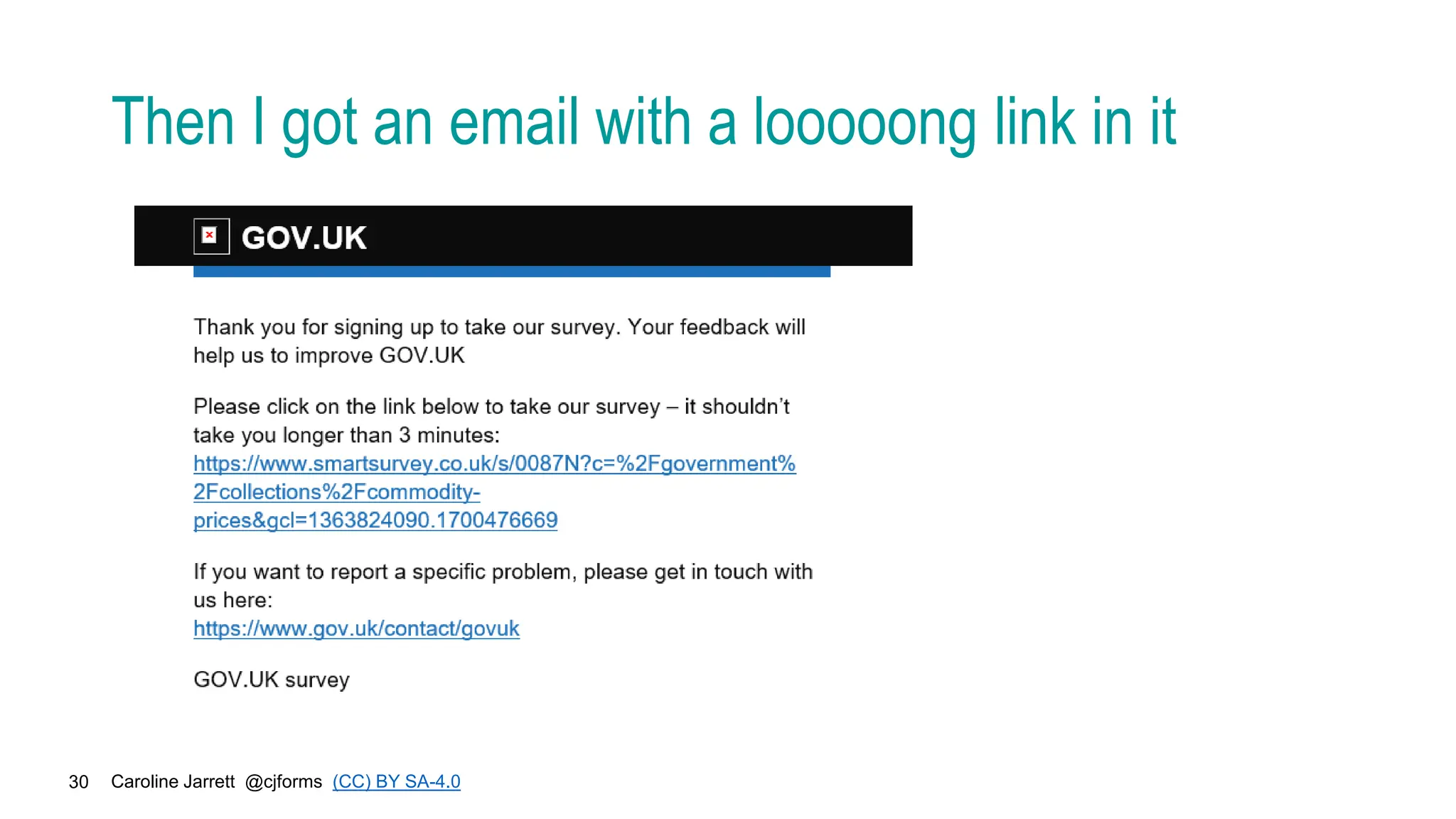

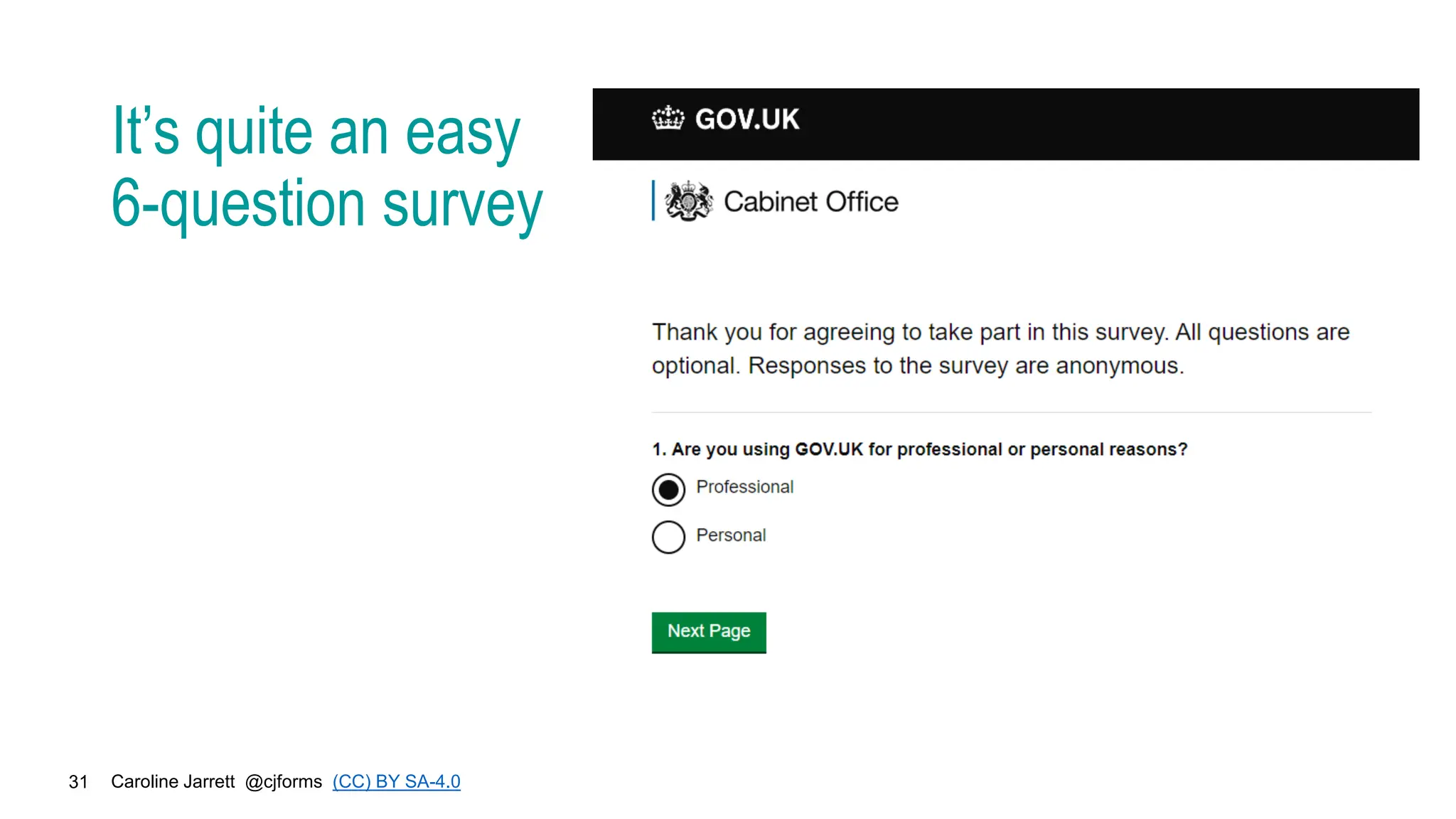

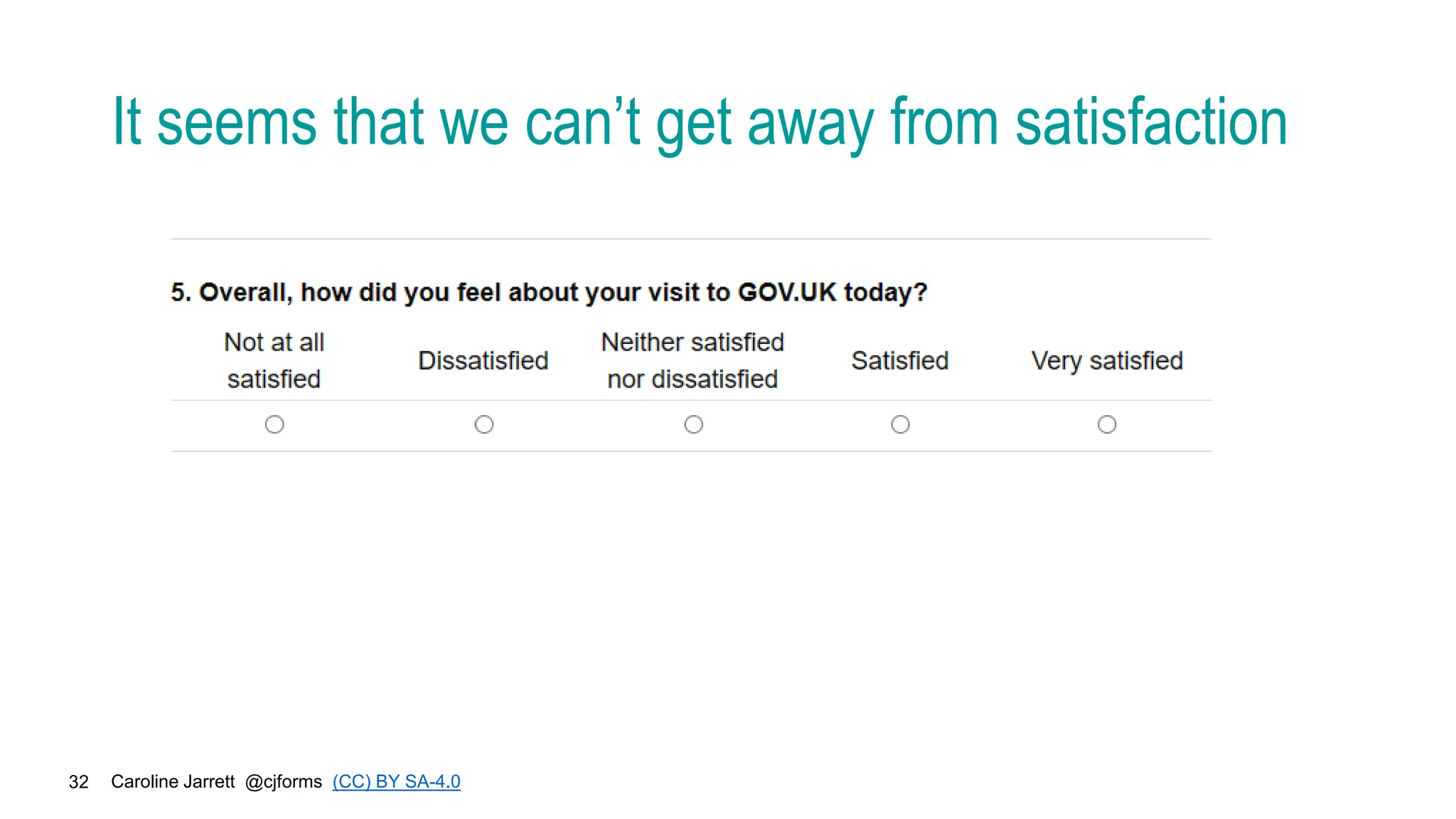

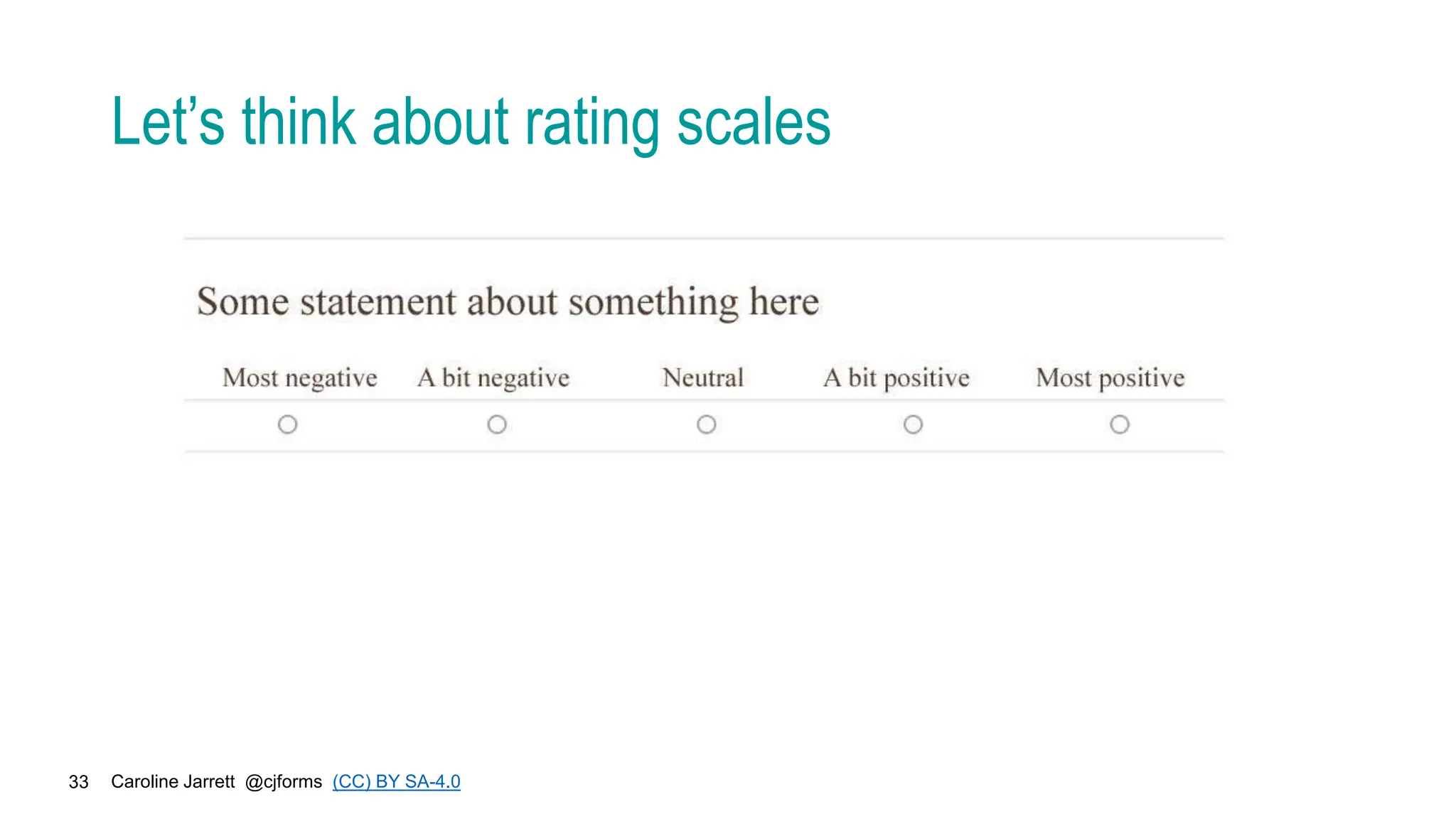

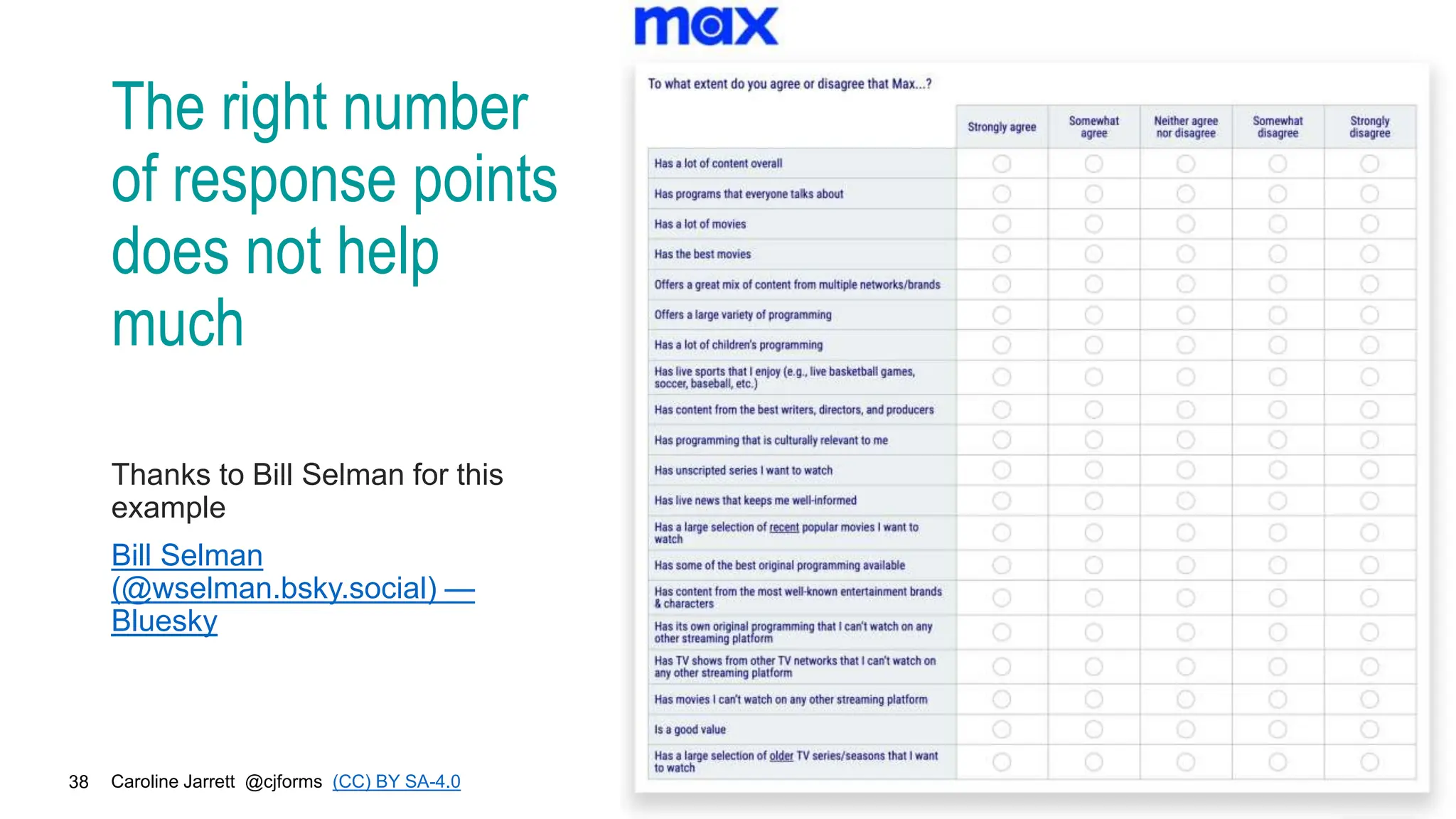

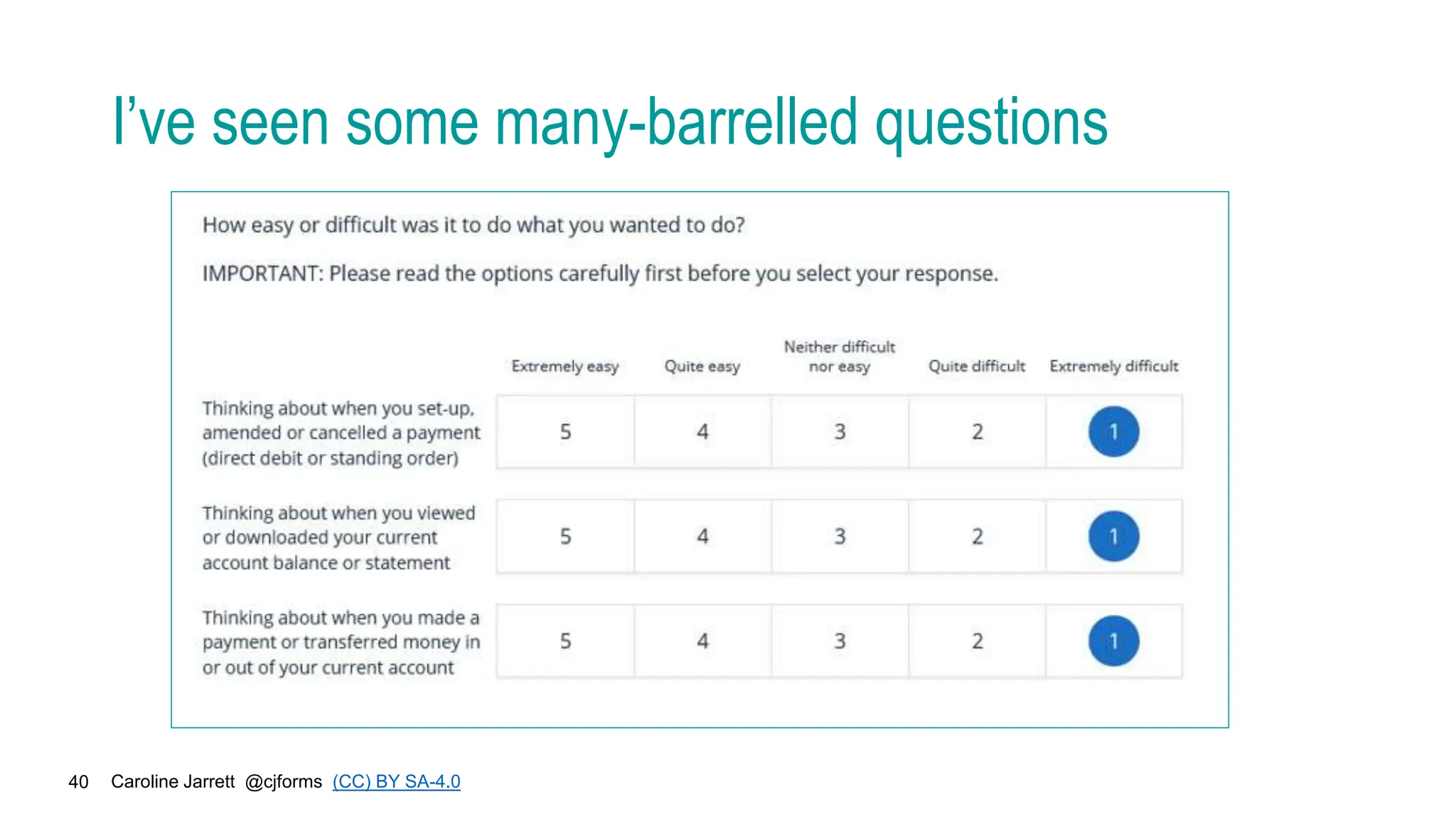

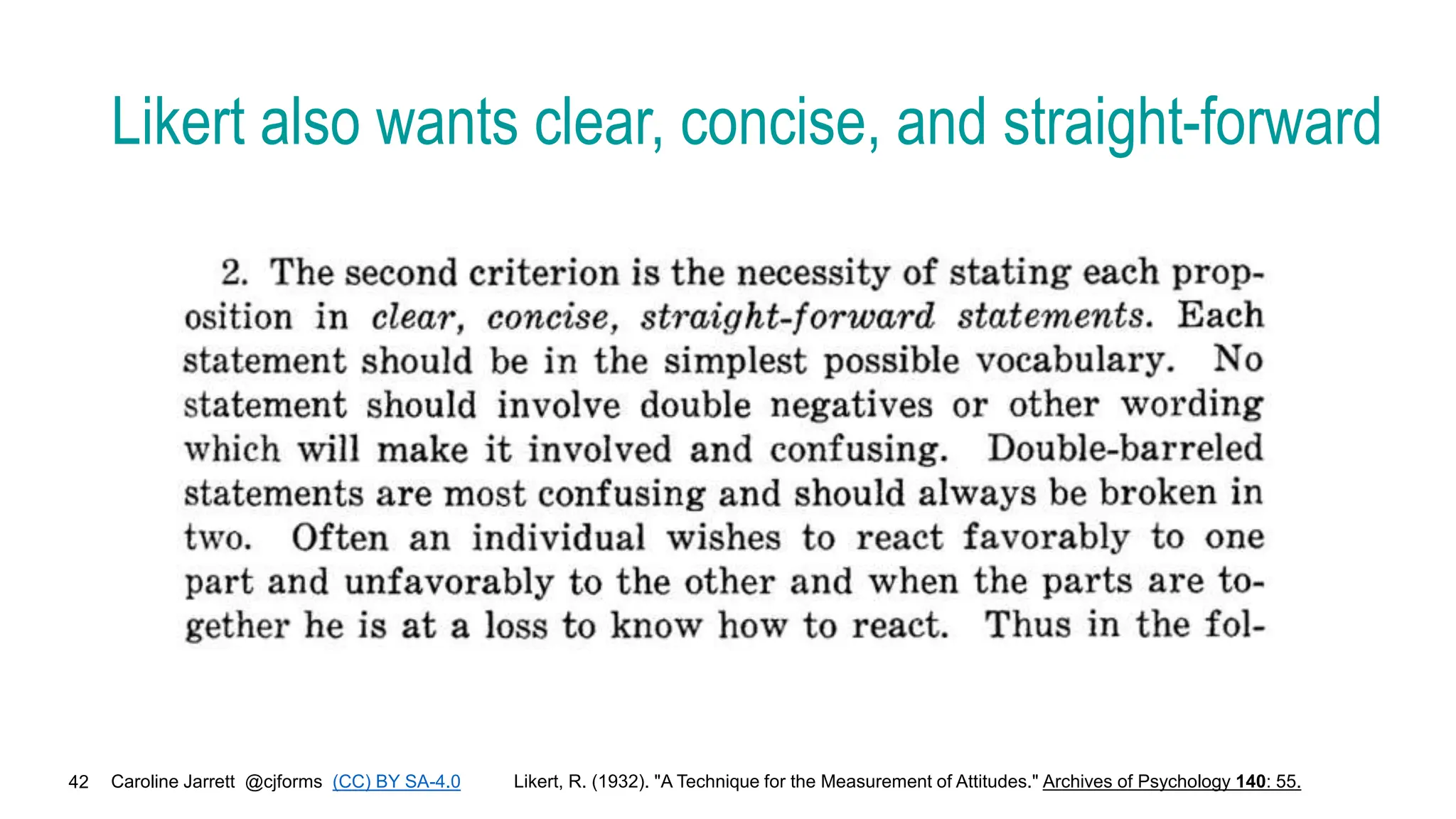

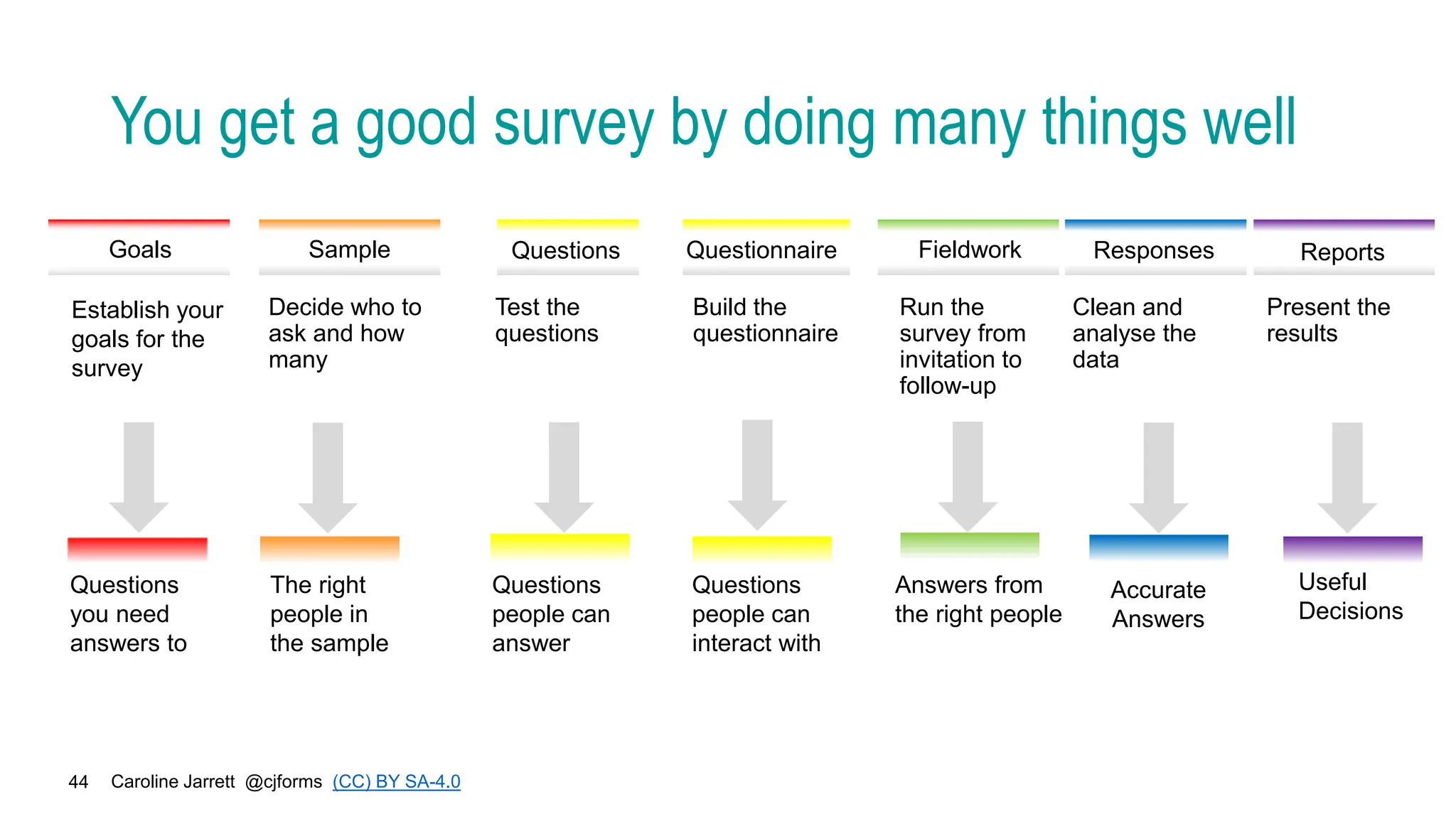

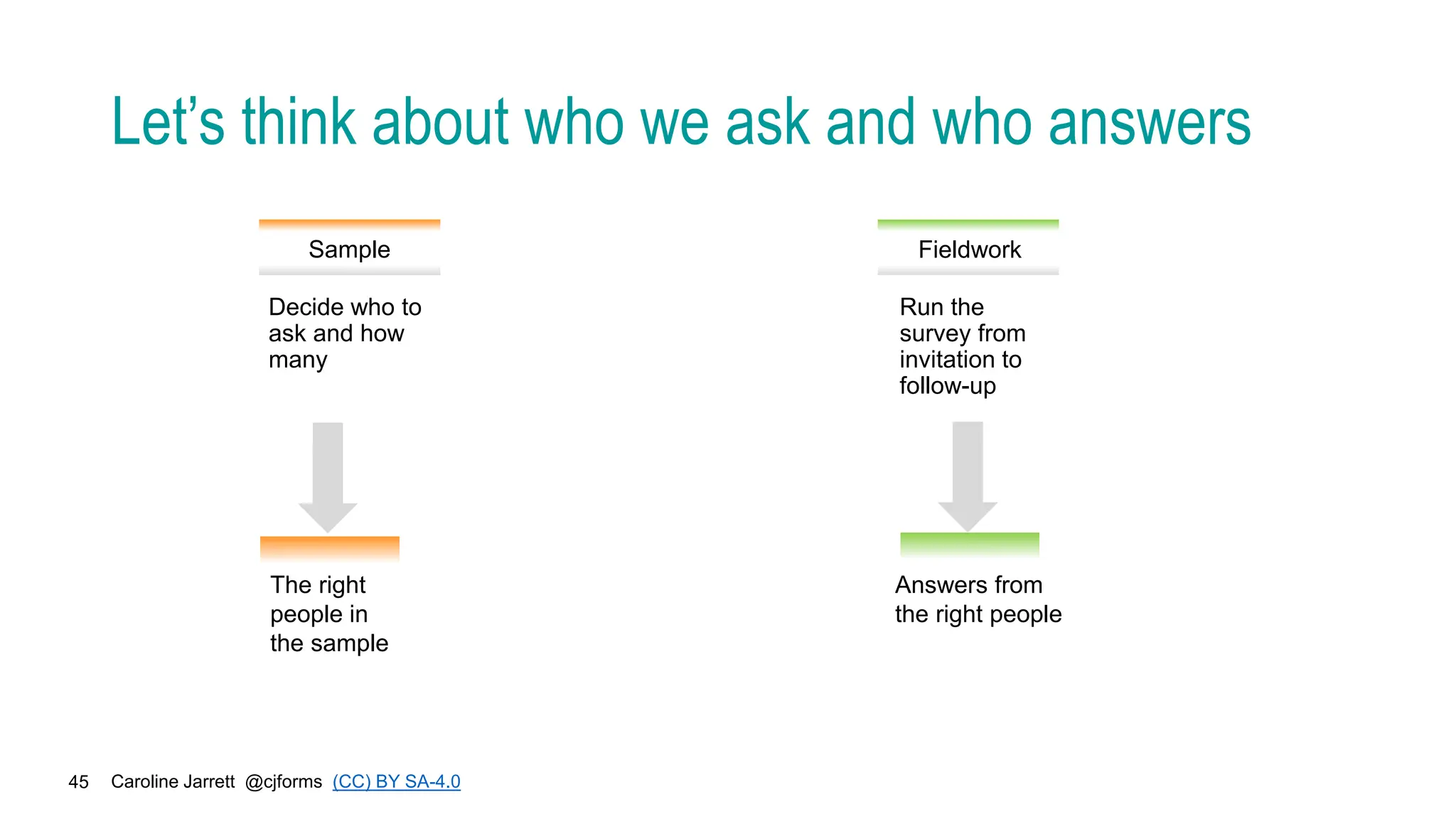

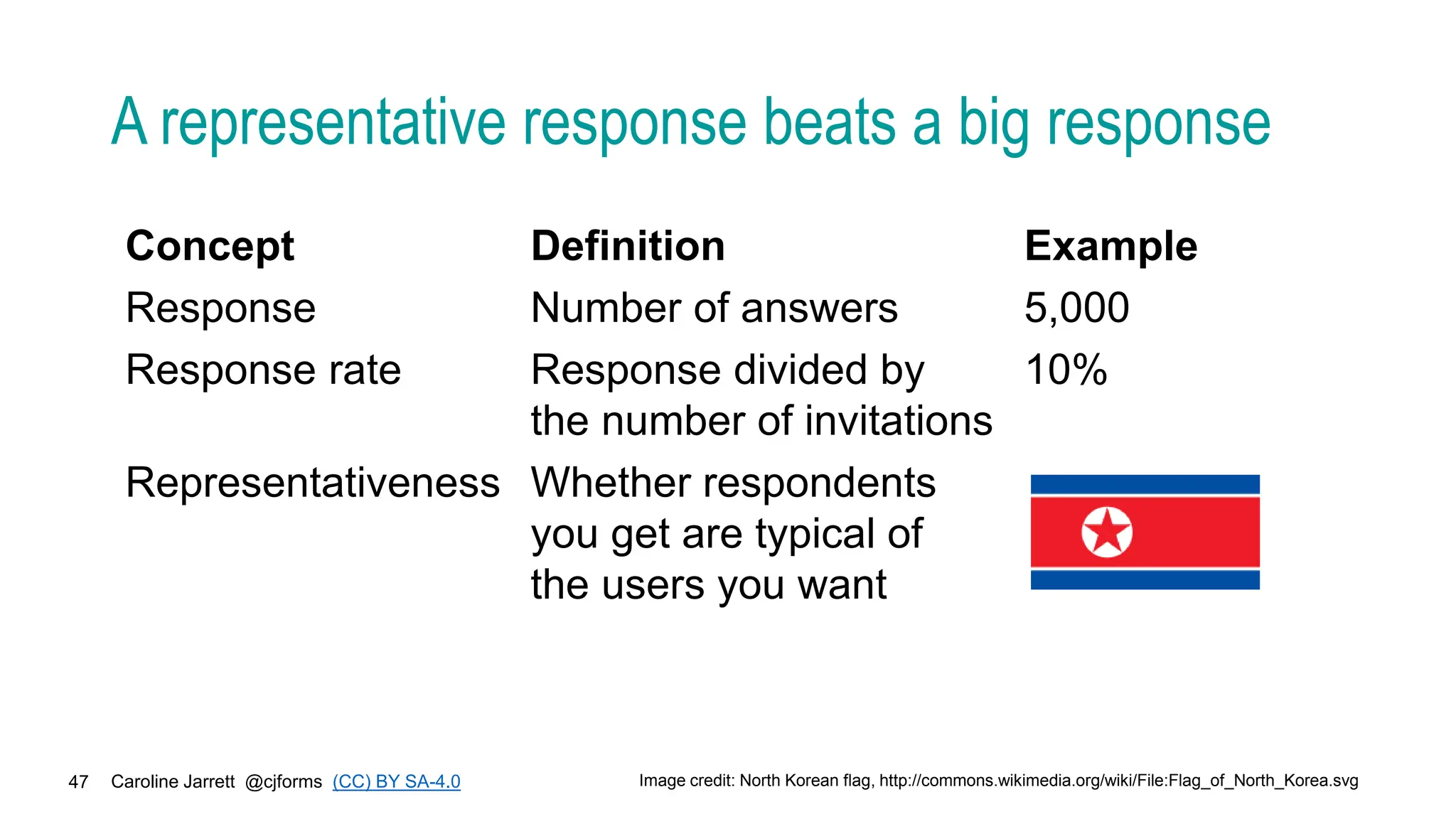

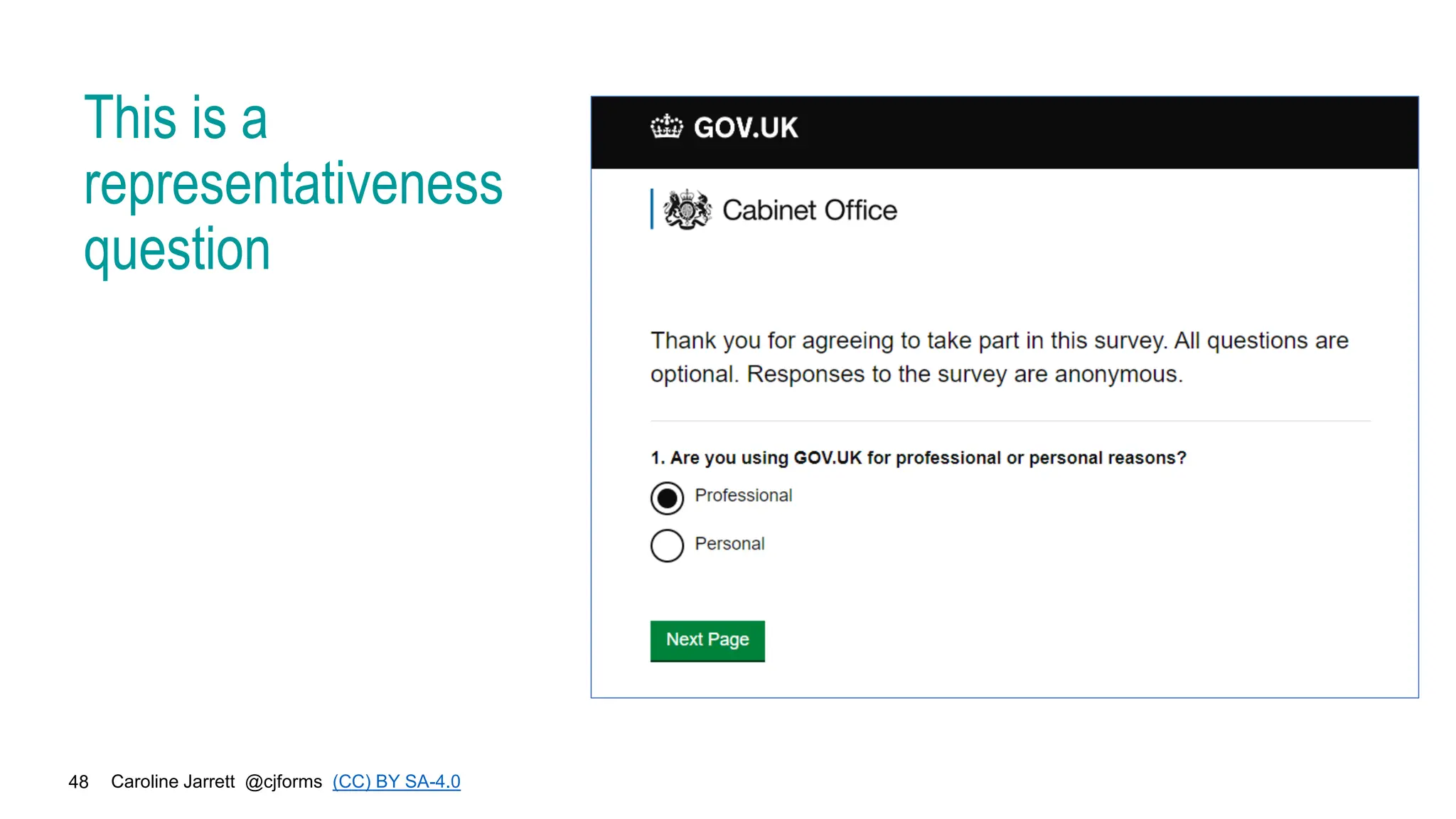

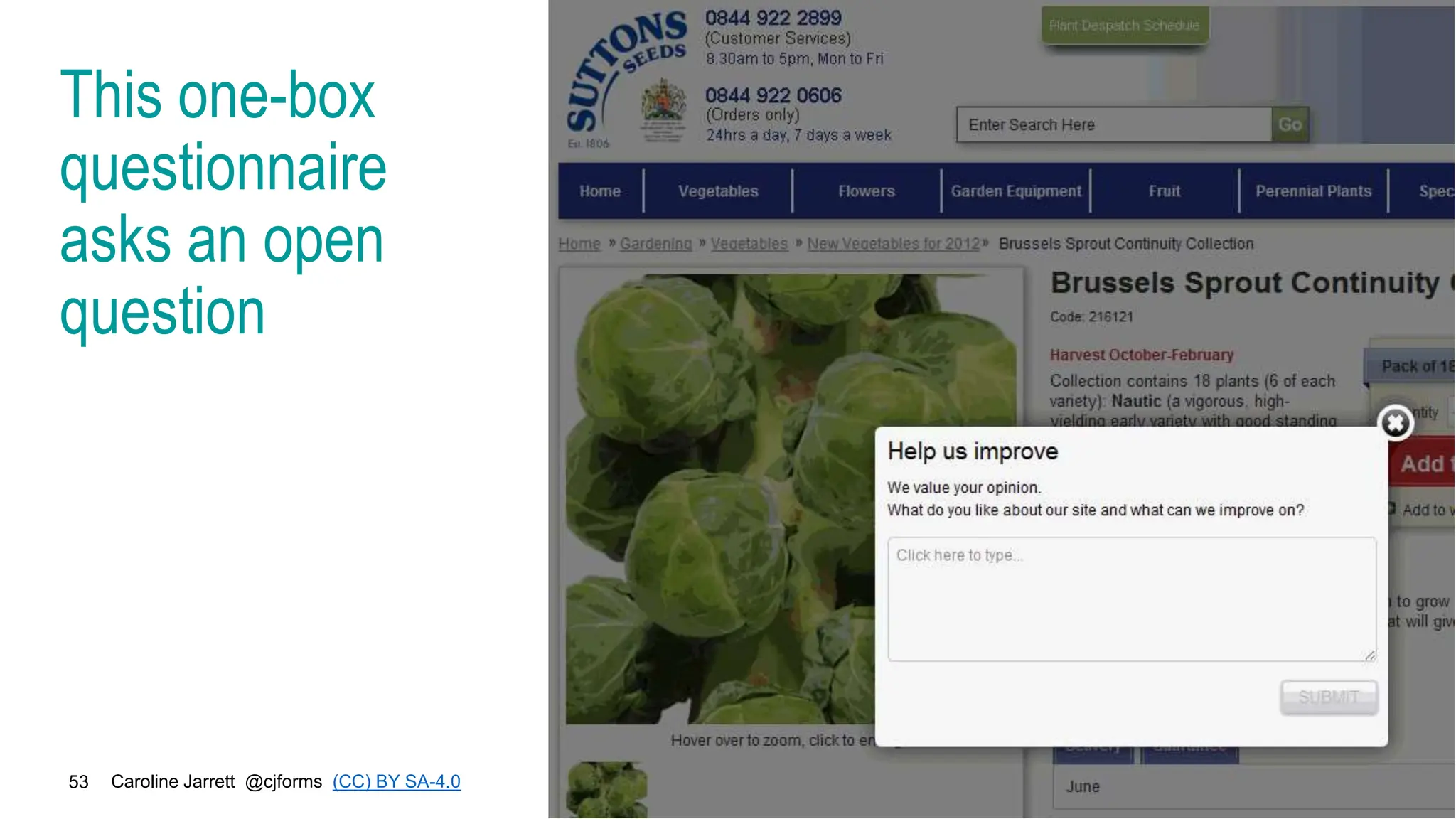

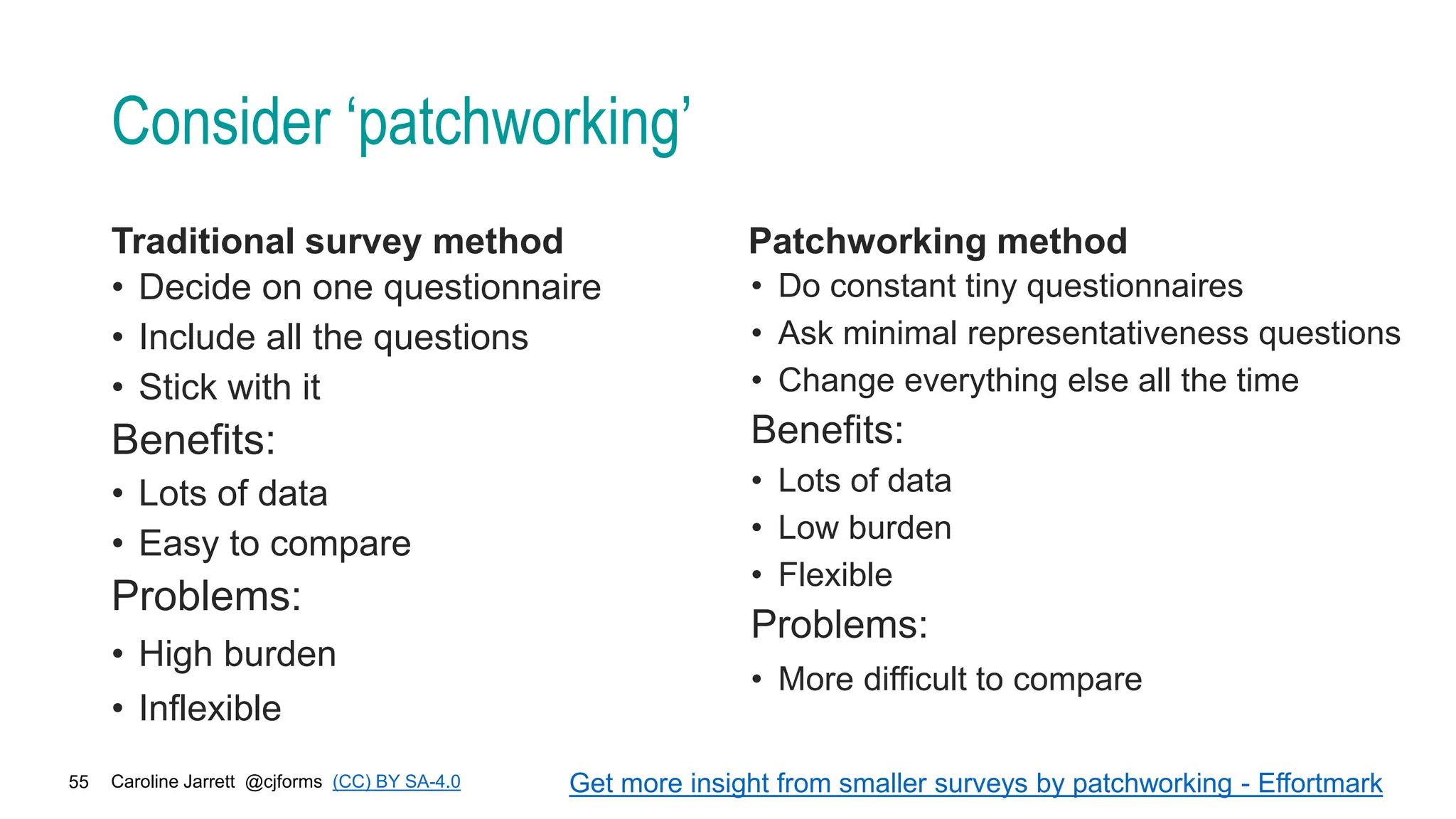

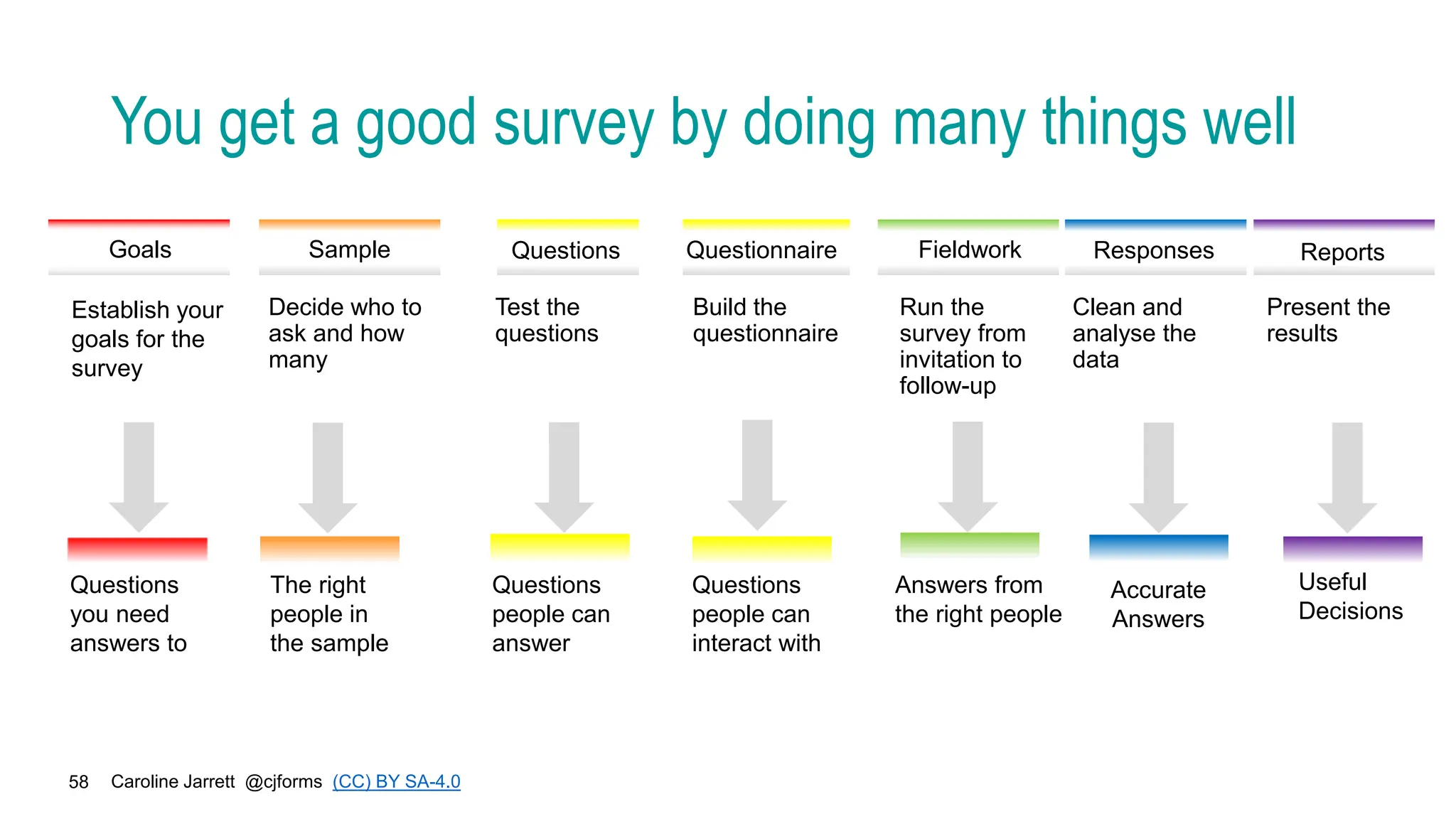

The document discusses the importance of surveys for gaining audience insights and emphasizes the need to establish clear goals, select the right participants, and craft effective questions that gather useful data. It highlights the role of feedback mechanisms in optimizing web content and the complexities of measuring user satisfaction. It also suggests taking an iterative approach to surveys and considering how representative the survey responses are.