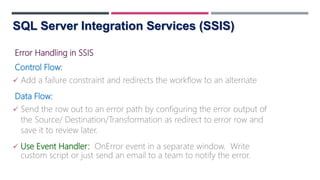

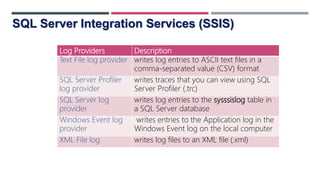

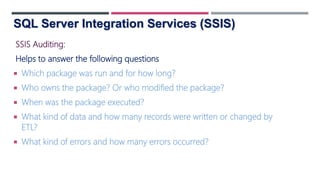

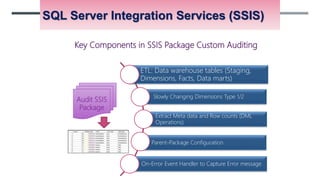

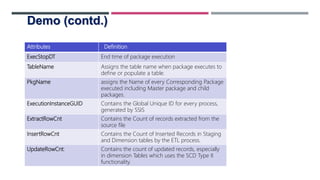

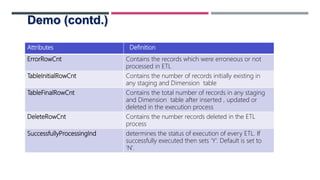

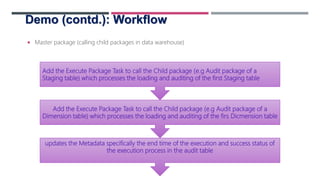

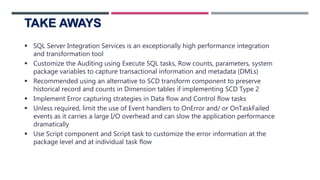

This document covers event handling, logging, and configuration files in SQL Server Integration Services (SSIS). It gives an overview of SSIS and describes how to handle errors in both the control flow and the data flow, then surveys the logging options SSIS offers and the event handlers available. It demonstrates how to set up auditing in an SSIS package by adding tasks to event handlers, capturing row counts, and storing metadata in variables, and notes some benefits of custom auditing over standard logging. Finally, it gives recommendations for optimizing long-running packages and lists the key components to include in a custom auditing package.