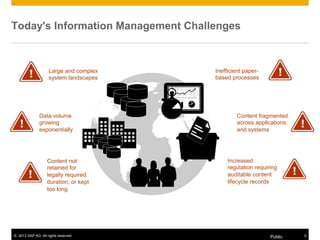

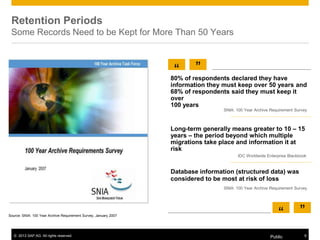

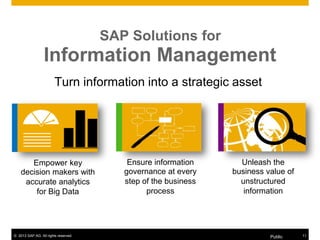

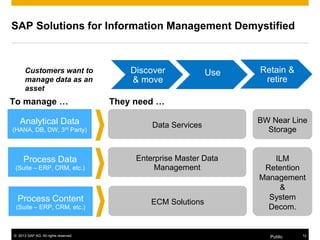

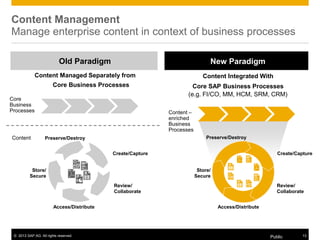

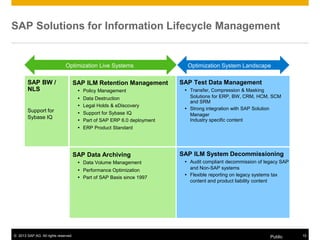

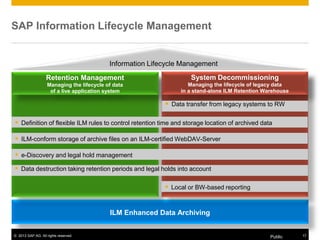

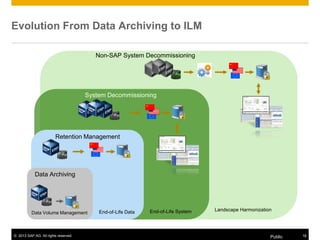

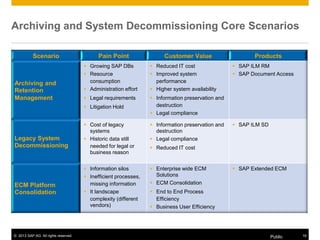

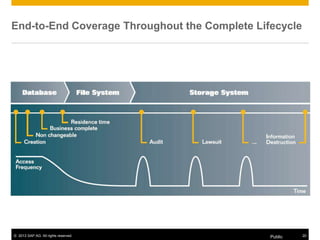

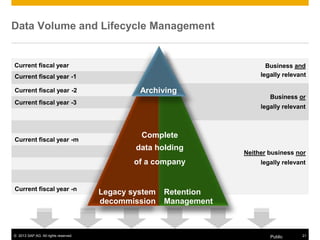

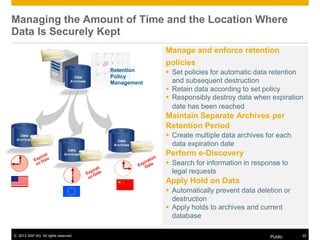

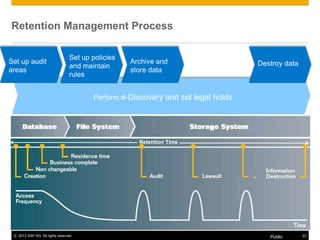

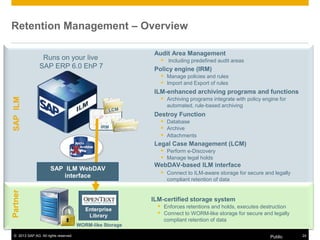

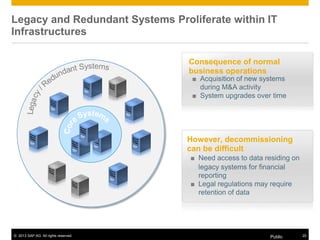

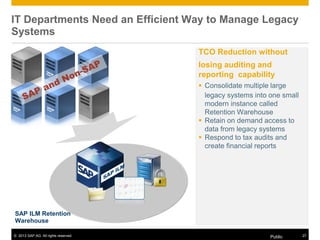

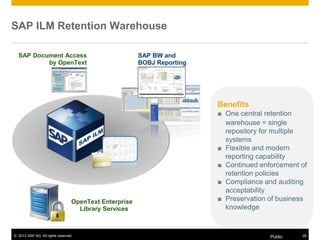

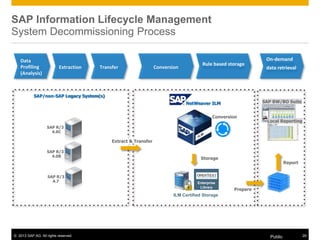

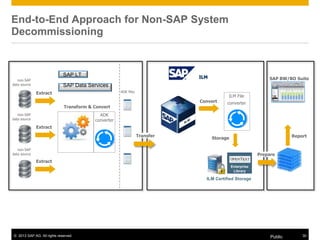

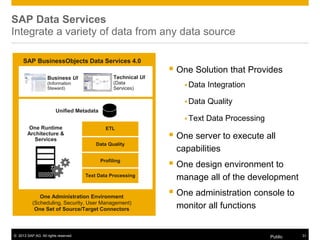

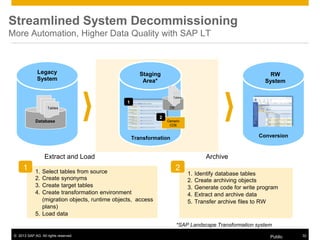

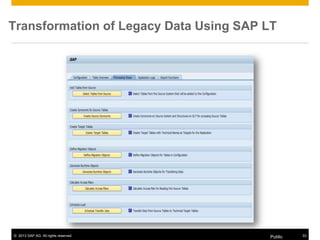

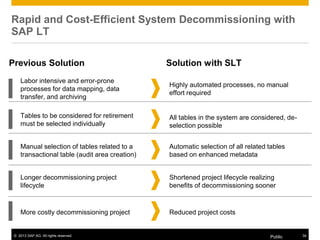

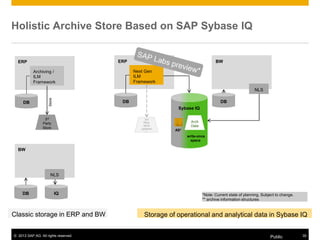

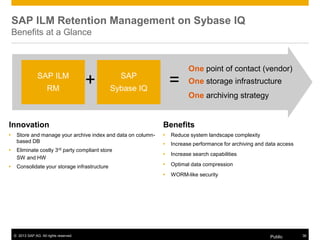

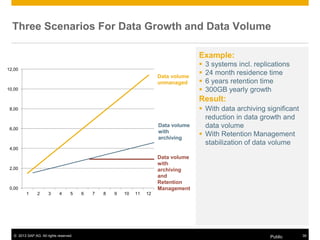

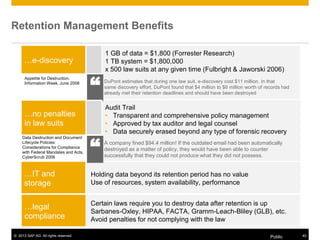

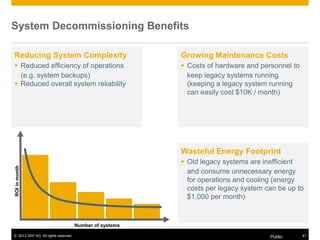

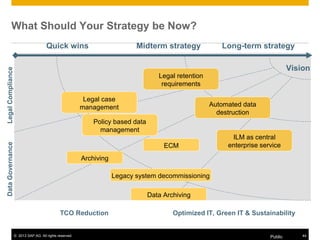

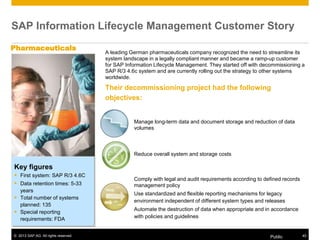

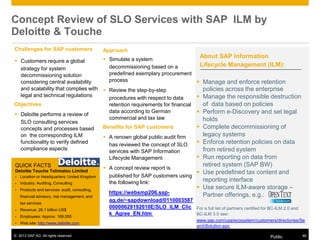

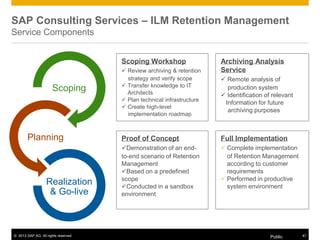

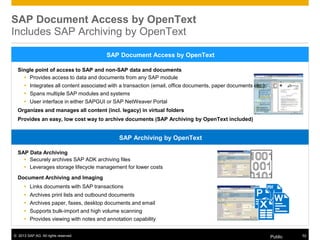

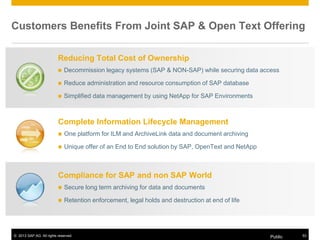

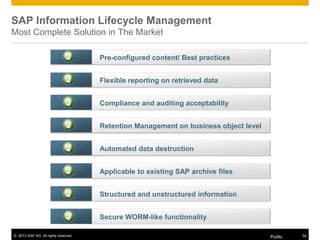

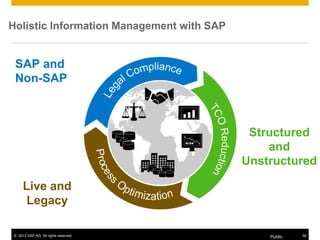

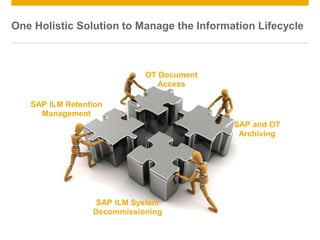

The document provides an overview of SAP solutions for Information Lifecycle Management (ILM), highlighting customer challenges such as growing data volumes, inefficient processes, and regulatory compliance needs. It details services such as data archiving, retention management, and system decommissioning, which aim to reduce costs and strengthen data governance. It also underscores the benefits of integrating content management with core business processes and of complying with legal retention requirements.