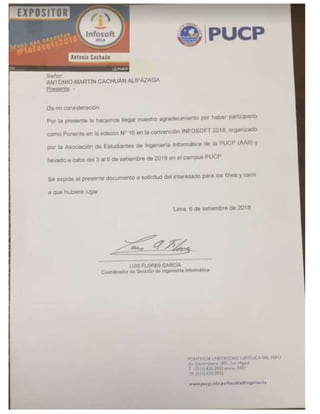

PUCP Diploma Infosoft 2018

•

0 likes•42 views

Speaker with the theme Integrating Machine Learning and Business Intelligence with Big Data

Report

Share

Report

Share

Download to read offline

Recommended

Databricks Apache Spark Developer Certification

Antonio Martin Cachuan Alipazaga was granted a Databricks Certified Developer - Apache Spark 2.x for Python certification on April 27, 2019. The certificate ID for this certification is 0000000031. This certification demonstrates proficiency in developing applications using Apache Spark 2.x with Python.

Cloudera Big Data Architecture Workshop

This document provides details about a Cloudera Big Data Architecture workshop held from December 11-13, 2018. The workshop was led by Antonio Cachuan and provided training on Cloudera's big data architecture and solutions over a three day period from the start date of December 11th through the end date of December 13th, 2018.

Gk ibm global knowledge_certificate

Antonio Martín Cachuán Alipázaga completed the KM204G course on IBM InfoSphere DataStage Essentials (version 11.5) on November 30, 2017. The document certifies that Antonio successfully finished the essentials training for IBM InfoSphere DataStage.

Importing Data in Python (Part 2) Course

This document is about a course titled "Importing Data in Python (Part 2)" by Antonio Martín Cachuán Alipázaga. The course number is 3,955,612 and focuses on techniques for importing and working with data in the Python programming language.

Recommended

Databricks Apache Spark Developer Certification

Antonio Martin Cachuan Alipazaga was granted a Databricks Certified Developer - Apache Spark 2.x for Python certification on April 27, 2019. The certificate ID for this certification is 0000000031. This certification demonstrates proficiency in developing applications using Apache Spark 2.x with Python.

Cloudera Big Data Architecture Workshop

This document provides details about a Cloudera Big Data Architecture workshop held from December 11-13, 2018. The workshop was led by Antonio Cachuan and provided training on Cloudera's big data architecture and solutions over a three day period from the start date of December 11th through the end date of December 13th, 2018.

Gk ibm global knowledge_certificate

Antonio Martín Cachuán Alipázaga completed the KM204G course on IBM InfoSphere DataStage Essentials (version 11.5) on November 30, 2017. The document certifies that Antonio successfully finished the essentials training for IBM InfoSphere DataStage.

Importing Data in Python (Part 2) Course

This document is about a course titled "Importing Data in Python (Part 2)" by Antonio Martín Cachuán Alipázaga. The course number is 3,955,612 and focuses on techniques for importing and working with data in the Python programming language.

Attended 4th Annual International Symposium on Information Management and Bi...

Attended the 4th Annual International Symposium on Information Management and Big Dat

Speaker SIMBig 17

Speaker of the 4th Annual International Symposium on Information Management and Big Data

Importing Data in Python (Part 1) Course

This document is about a Python course titled "Importing Data in Python (Part 1)" by Antonio Martín Cachuán Alipázaga. The course teaches students how to import different types of data into Python programs for analysis and manipulation. Students will learn the fundamentals of importing CSV files, JSON data, XML documents and more into Python.

Deep Learning in Python Course

Antonio Martín Cachuán Alipázaga has completed the Deep Learning in Python course. The course number is 2,997,280. The course title is Deep Learning in Python.

Intro to Python for Data Science Course

Antonio Martín Cachuán Alipázaga is taking an introductory Python for Data Science course. The course number is 2,338,974. The document provides Antonio's name and details about the Python course he is enrolled in.

Python Data Science Toolbox (Part 1) Course

Antonio Martín Cachuán Alipázaga completed the Python Data Science Toolbox (Part 1) course. The course teaches fundamental Python programming and data science tools and techniques. It provides a foundation for performing data analysis and visualization with Python.

Diploma in Applied Statistics

El documento es un diploma que otorga a Antonio Martín Cachuán Alipázagala diplomatura de Estudios en Estadística Aplicada de la Facultad de Ciencias e Ingeniería. Antonio completó satisfactoriamente los estudios entre agosto de 2016 y abril de 2017 con un total de 174 horas en cursos como Procedimientos Básicos Estadísticos, Técnicas de Predicción, Técnicas de Muestreo, Análisis Multivariado y Análisis de Datos Categóricos. El diploma fue firmado por

一比一原版(GWU,GW文凭证书)乔治·华盛顿大学毕业证如何办理

毕业原版【微信:176555708】【(GWU,GW毕业证书)乔治·华盛顿大学毕业证】【微信:176555708】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信176555708】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信176555708】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

原版一比一弗林德斯大学毕业证(Flinders毕业证书)如何办理

原版制作【微信:41543339】【弗林德斯大学毕业证(Flinders毕业证书)】【微信:41543339】《成绩单、外壳、雅思、offer、真实留信官方学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路)我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

Orchestrating the Future: Navigating Today's Data Workflow Challenges with Ai...

Navigating today's data landscape isn't just about managing workflows; it's about strategically propelling your business forward. Apache Airflow has stood out as the benchmark in this arena, driving data orchestration forward since its early days. As we dive into the complexities of our current data-rich environment, where the sheer volume of information and its timely, accurate processing are crucial for AI and ML applications, the role of Airflow has never been more critical.

In my journey as the Senior Engineering Director and a pivotal member of Apache Airflow's Project Management Committee (PMC), I've witnessed Airflow transform data handling, making agility and insight the norm in an ever-evolving digital space. At Astronomer, our collaboration with leading AI & ML teams worldwide has not only tested but also proven Airflow's mettle in delivering data reliably and efficiently—data that now powers not just insights but core business functions.

This session is a deep dive into the essence of Airflow's success. We'll trace its evolution from a budding project to the backbone of data orchestration it is today, constantly adapting to meet the next wave of data challenges, including those brought on by Generative AI. It's this forward-thinking adaptability that keeps Airflow at the forefront of innovation, ready for whatever comes next.

The ever-growing demands of AI and ML applications have ushered in an era where sophisticated data management isn't a luxury—it's a necessity. Airflow's innate flexibility and scalability are what makes it indispensable in managing the intricate workflows of today, especially those involving Large Language Models (LLMs).

This talk isn't just a rundown of Airflow's features; it's about harnessing these capabilities to turn your data workflows into a strategic asset. Together, we'll explore how Airflow remains at the cutting edge of data orchestration, ensuring your organization is not just keeping pace but setting the pace in a data-driven future.

Session in https://budapestdata.hu/2024/04/kaxil-naik-astronomer-io/ | https://dataml24.sessionize.com/session/667627

一比一原版兰加拉学院毕业证(Langara毕业证书)学历如何办理

原版办【微信号:BYZS866】【兰加拉学院毕业证(Langara毕业证书)】【微信号:BYZS866】《成绩单、外壳、雅思、offer、真实留信官方学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路)我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信号BYZS866】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信号BYZS866】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

原版制作(unimelb毕业证书)墨尔本大学毕业证Offer一模一样

学校原件一模一样【微信:741003700 】《(unimelb毕业证书)墨尔本大学毕业证》【微信:741003700 】学位证,留信认证(真实可查,永久存档)原件一模一样纸张工艺/offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原。

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

【主营项目】

一.毕业证【q微741003700】成绩单、使馆认证、教育部认证、雅思托福成绩单、学生卡等!

二.真实使馆公证(即留学回国人员证明,不成功不收费)

三.真实教育部学历学位认证(教育部存档!教育部留服网站永久可查)

四.办理各国各大学文凭(一对一专业服务,可全程监控跟踪进度)

如果您处于以下几种情况:

◇在校期间,因各种原因未能顺利毕业……拿不到官方毕业证【q/微741003700】

◇面对父母的压力,希望尽快拿到;

◇不清楚认证流程以及材料该如何准备;

◇回国时间很长,忘记办理;

◇回国马上就要找工作,办给用人单位看;

◇企事业单位必须要求办理的

◇需要报考公务员、购买免税车、落转户口

◇申请留学生创业基金

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

06-12-2024-BudapestDataForum-BuildingReal-timePipelineswithFLaNK AIM

06-12-2024-BudapestDataForum-BuildingReal-timePipelineswithFLaNK AIM

by

Timothy Spann

Principal Developer Advocate

https://budapestdata.hu/2024/en/

https://budapestml.hu/2024/en/

tim.spann@zilliz.com

https://www.linkedin.com/in/timothyspann/

https://x.com/paasdev

https://github.com/tspannhw

https://www.youtube.com/@flank-stack

milvus

vector database

gen ai

generative ai

deep learning

machine learning

apache nifi

apache pulsar

apache kafka

apache flink

一比一原版(UO毕业证)渥太华大学毕业证如何办理

UO毕业证录取书【微信95270640】购买(渥太华大学毕业证成绩单硕士学历)Q微信95270640代办UO学历认证留信网伪造渥太华大学学位证书精仿渥太华大学本科/硕士文凭证书补办渥太华大学 diplomaoffer,Transcript购买渥太华大学毕业证成绩单购买UO假毕业证学位证书购买伪造渥太华大学文凭证书学位证书,专业办理雅思、托福成绩单,学生ID卡,在读证明,海外各大学offer录取通知书,毕业证书,成绩单,文凭等材料:1:1完美还原毕业证、offer录取通知书、学生卡等各种在读或毕业材料的防伪工艺(包括 烫金、烫银、钢印、底纹、凹凸版、水印、防伪光标、热敏防伪、文字图案浮雕,激光镭射,紫外荧光,温感光标)学校原版上有的工艺我们一样不会少,不论是老版本还是最新版本,都能保证最高程度还原,力争完美以求让所有同学都能享受到完美的品质服务。

文凭办理流程:

1客户提供办理信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:微信95270640我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)。

7完成交易删除客户资料

高精端提供以下服务:

一:渥太华大学渥太华大学毕业证文凭证书全套材料从防伪到印刷水印底纹到钢印烫金

二:真实使馆认证(留学人员回国证明)使馆存档

三:真实教育部认证教育部存档教育部留服网站可查

四:留信认证留学生信息网站可查

五:与学校颁发的相关证件1:1纸质尺寸制定(定期向各大院校毕业生购买最新版本毕,业证成绩单保证您拿到的是鲁昂大学内部最新版本毕业证成绩单微信95270640)

A.为什么留学生需要操作留信认证?

留信认证全称全国留学生信息服务网认证,隶属于北京中科院。①留信认证门槛条件更低,费用更美丽,并且包过,完单周期短,效率高②留信认证虽然不能去国企,但是一般的公司都没有问题,因为国内很多公司连基本的留学生学历认证都不了解。这对于留学生来说,这就比自己光拿一个证书更有说服力,因为留学学历可以在留信网站上进行查询!

B.为什么我们提供的毕业证成绩单具有使用价值?

查询留服认证是国内鉴别留学生海外学历的唯一途径但认证只是个体行为不是所有留学生都操作所以没有办理认证的留学生的学历在国内也是查询不到的他们也仅仅只有一张文凭。所以这时候我们提供的和学校颁发的一模一样的毕业证成绩单就有了使用价值。只硕大的蛇皮袋手里拎着长铁钩正站在门口朝黑色的屋内张望不好坏人小偷山娃一怔却也灵机一动立马仰起头双手拢在嘴边朝楼上大喊:“爸爸爸——有人找——那人一听朝山娃尴尬地笑笑悻悻地走了山娃立马“嘭的一声将铁门锁死心却咚咚地乱跳当山娃跟父亲说起这事时父亲很吃惊抚摸着山娃的头说还好醒得及时要不家早被人掏空了到时连电视也没得看啰不过父亲还是夸山娃能临危不乱随机应变有胆有谋山娃笑笑说那都是书上学的看童话和小说时多

办(uts毕业证书)悉尼科技大学毕业证学历证书原版一模一样

原版一模一样【微信:741003700 】【(uts毕业证书)悉尼科技大学毕业证学历证书】【微信:741003700 】学位证,留信认证(真实可查,永久存档)offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原海外各大学 Bachelor Diploma degree, Master Degree Diploma

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

ViewShift: Hassle-free Dynamic Policy Enforcement for Every Data Lake

Dynamic policy enforcement is becoming an increasingly important topic in today’s world where data privacy and compliance is a top priority for companies, individuals, and regulators alike. In these slides, we discuss how LinkedIn implements a powerful dynamic policy enforcement engine, called ViewShift, and integrates it within its data lake. We show the query engine architecture and how catalog implementations can automatically route table resolutions to compliance-enforcing SQL views. Such views have a set of very interesting properties: (1) They are auto-generated from declarative data annotations. (2) They respect user-level consent and preferences (3) They are context-aware, encoding a different set of transformations for different use cases (4) They are portable; while the SQL logic is only implemented in one SQL dialect, it is accessible in all engines.

#SQL #Views #Privacy #Compliance #DataLake

一比一原版(UCSF文凭证书)旧金山分校毕业证如何办理

毕业原版【微信:176555708】【(UCSF毕业证书)旧金山分校毕业证】【微信:176555708】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信176555708】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信176555708】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

一比一原版(CU毕业证)卡尔顿大学毕业证如何办理

原件一模一样【微信:95270640】【卡尔顿大学毕业证CU学位证成绩单】【微信:95270640】(留信学历认证永久存档查询)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信:95270640】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信:95270640】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份【微信:95270640】

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

→ 【关于价格问题(保证一手价格)

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:可来公司面谈,可签订合同,会陪同客户一起到教育部认证窗口递交认证材料,客户在教育部官方认证查询网站查询到认证通过结果后付款,不成功不收费!

办理卡尔顿大学毕业证本科学位证成绩单CU学位证【微信:95270640 】外观非常精致,由特殊纸质材料制成,上面印有校徽、校名、毕业生姓名、专业等信息。

办理卡尔顿大学毕业证CU学位证本科学位证成绩单【微信:95270640 】格式相对统一,各专业都有相应的模板。通常包括以下部分:

校徽:象征着学校的荣誉和传承。

校名:学校英文全称

授予学位:本部分将注明获得的具体学位名称。

毕业生姓名:这是最重要的信息之一,标志着该证书是由特定人员获得的。

颁发日期:这是毕业正式生效的时间,也代表着毕业生学业的结束。

其他信息:根据不同的专业和学位,可能会有一些特定的信息或章节。

办理卡尔顿大学毕业证本科学位证成绩单CU学位证【微信:95270640 】价值很高,需要妥善保管。一般来说,应放置在安全、干燥、防潮的地方,避免长时间暴露在阳光下。如需使用,最好使用复印件而不是原件,以免丢失。

综上所述,办理卡尔顿大学毕业证本科学位证成绩单CU学位证【微信:95270640 】是证明身份和学历的高价值文件。外观简单庄重,格式统一,包括重要的个人信息和发布日期。对持有人来说,妥善保管是非常重要的。

一比一原版(Unimelb毕业证书)墨尔本大学毕业证如何办理

原版制作【微信:41543339】【(Unimelb毕业证书)墨尔本大学毕业证】【微信:41543339】《成绩单、外壳、雅思、offer、留信学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同进口机器一比一制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

一比一原版巴斯大学毕业证(Bath毕业证书)学历如何办理

原版办理【微信号:BYZS866】【巴斯大学毕业证(Bath毕业证书)】【微信号:BYZS866】《成绩单、外壳、雅思、offer、真实留信官方学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【关于学历材料质量】

我们承诺采用的是学校原版纸张(原版纸质、底色、纹路、)我们工厂拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有成品以及工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信号BYZS866】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信号BYZS866】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

More Related Content

More from Antonio Cachuan

Attended 4th Annual International Symposium on Information Management and Bi...

Attended the 4th Annual International Symposium on Information Management and Big Dat

Speaker SIMBig 17

Speaker of the 4th Annual International Symposium on Information Management and Big Data

Importing Data in Python (Part 1) Course

This document is about a Python course titled "Importing Data in Python (Part 1)" by Antonio Martín Cachuán Alipázaga. The course teaches students how to import different types of data into Python programs for analysis and manipulation. Students will learn the fundamentals of importing CSV files, JSON data, XML documents and more into Python.

Deep Learning in Python Course

Antonio Martín Cachuán Alipázaga has completed the Deep Learning in Python course. The course number is 2,997,280. The course title is Deep Learning in Python.

Intro to Python for Data Science Course

Antonio Martín Cachuán Alipázaga is taking an introductory Python for Data Science course. The course number is 2,338,974. The document provides Antonio's name and details about the Python course he is enrolled in.

Python Data Science Toolbox (Part 1) Course

Antonio Martín Cachuán Alipázaga completed the Python Data Science Toolbox (Part 1) course. The course teaches fundamental Python programming and data science tools and techniques. It provides a foundation for performing data analysis and visualization with Python.

Diploma in Applied Statistics

El documento es un diploma que otorga a Antonio Martín Cachuán Alipázagala diplomatura de Estudios en Estadística Aplicada de la Facultad de Ciencias e Ingeniería. Antonio completó satisfactoriamente los estudios entre agosto de 2016 y abril de 2017 con un total de 174 horas en cursos como Procedimientos Básicos Estadísticos, Técnicas de Predicción, Técnicas de Muestreo, Análisis Multivariado y Análisis de Datos Categóricos. El diploma fue firmado por

More from Antonio Cachuan (7)

Attended 4th Annual International Symposium on Information Management and Bi...

Attended 4th Annual International Symposium on Information Management and Bi...

Recently uploaded

一比一原版(GWU,GW文凭证书)乔治·华盛顿大学毕业证如何办理

毕业原版【微信:176555708】【(GWU,GW毕业证书)乔治·华盛顿大学毕业证】【微信:176555708】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信176555708】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信176555708】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

原版一比一弗林德斯大学毕业证(Flinders毕业证书)如何办理

原版制作【微信:41543339】【弗林德斯大学毕业证(Flinders毕业证书)】【微信:41543339】《成绩单、外壳、雅思、offer、真实留信官方学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路)我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

Orchestrating the Future: Navigating Today's Data Workflow Challenges with Ai...

Navigating today's data landscape isn't just about managing workflows; it's about strategically propelling your business forward. Apache Airflow has stood out as the benchmark in this arena, driving data orchestration forward since its early days. As we dive into the complexities of our current data-rich environment, where the sheer volume of information and its timely, accurate processing are crucial for AI and ML applications, the role of Airflow has never been more critical.

In my journey as the Senior Engineering Director and a pivotal member of Apache Airflow's Project Management Committee (PMC), I've witnessed Airflow transform data handling, making agility and insight the norm in an ever-evolving digital space. At Astronomer, our collaboration with leading AI & ML teams worldwide has not only tested but also proven Airflow's mettle in delivering data reliably and efficiently—data that now powers not just insights but core business functions.

This session is a deep dive into the essence of Airflow's success. We'll trace its evolution from a budding project to the backbone of data orchestration it is today, constantly adapting to meet the next wave of data challenges, including those brought on by Generative AI. It's this forward-thinking adaptability that keeps Airflow at the forefront of innovation, ready for whatever comes next.

The ever-growing demands of AI and ML applications have ushered in an era where sophisticated data management isn't a luxury—it's a necessity. Airflow's innate flexibility and scalability are what makes it indispensable in managing the intricate workflows of today, especially those involving Large Language Models (LLMs).

This talk isn't just a rundown of Airflow's features; it's about harnessing these capabilities to turn your data workflows into a strategic asset. Together, we'll explore how Airflow remains at the cutting edge of data orchestration, ensuring your organization is not just keeping pace but setting the pace in a data-driven future.

Session in https://budapestdata.hu/2024/04/kaxil-naik-astronomer-io/ | https://dataml24.sessionize.com/session/667627

一比一原版兰加拉学院毕业证(Langara毕业证书)学历如何办理

原版办【微信号:BYZS866】【兰加拉学院毕业证(Langara毕业证书)】【微信号:BYZS866】《成绩单、外壳、雅思、offer、真实留信官方学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路)我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信号BYZS866】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信号BYZS866】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

原版制作(unimelb毕业证书)墨尔本大学毕业证Offer一模一样

学校原件一模一样【微信:741003700 】《(unimelb毕业证书)墨尔本大学毕业证》【微信:741003700 】学位证,留信认证(真实可查,永久存档)原件一模一样纸张工艺/offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原。

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

【主营项目】

一.毕业证【q微741003700】成绩单、使馆认证、教育部认证、雅思托福成绩单、学生卡等!

二.真实使馆公证(即留学回国人员证明,不成功不收费)

三.真实教育部学历学位认证(教育部存档!教育部留服网站永久可查)

四.办理各国各大学文凭(一对一专业服务,可全程监控跟踪进度)

如果您处于以下几种情况:

◇在校期间,因各种原因未能顺利毕业……拿不到官方毕业证【q/微741003700】

◇面对父母的压力,希望尽快拿到;

◇不清楚认证流程以及材料该如何准备;

◇回国时间很长,忘记办理;

◇回国马上就要找工作,办给用人单位看;

◇企事业单位必须要求办理的

◇需要报考公务员、购买免税车、落转户口

◇申请留学生创业基金

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

06-12-2024-BudapestDataForum-BuildingReal-timePipelineswithFLaNK AIM

06-12-2024-BudapestDataForum-BuildingReal-timePipelineswithFLaNK AIM

by

Timothy Spann

Principal Developer Advocate

https://budapestdata.hu/2024/en/

https://budapestml.hu/2024/en/

tim.spann@zilliz.com

https://www.linkedin.com/in/timothyspann/

https://x.com/paasdev

https://github.com/tspannhw

https://www.youtube.com/@flank-stack

milvus

vector database

gen ai

generative ai

deep learning

machine learning

apache nifi

apache pulsar

apache kafka

apache flink

一比一原版(UO毕业证)渥太华大学毕业证如何办理

UO毕业证录取书【微信95270640】购买(渥太华大学毕业证成绩单硕士学历)Q微信95270640代办UO学历认证留信网伪造渥太华大学学位证书精仿渥太华大学本科/硕士文凭证书补办渥太华大学 diplomaoffer,Transcript购买渥太华大学毕业证成绩单购买UO假毕业证学位证书购买伪造渥太华大学文凭证书学位证书,专业办理雅思、托福成绩单,学生ID卡,在读证明,海外各大学offer录取通知书,毕业证书,成绩单,文凭等材料:1:1完美还原毕业证、offer录取通知书、学生卡等各种在读或毕业材料的防伪工艺(包括 烫金、烫银、钢印、底纹、凹凸版、水印、防伪光标、热敏防伪、文字图案浮雕,激光镭射,紫外荧光,温感光标)学校原版上有的工艺我们一样不会少,不论是老版本还是最新版本,都能保证最高程度还原,力争完美以求让所有同学都能享受到完美的品质服务。

文凭办理流程:

1客户提供办理信息:姓名生日专业学位毕业时间等(如信息不确定可以咨询顾问:微信95270640我们有专业老师帮你查询);

2开始安排制作毕业证成绩单电子图;

3毕业证成绩单电子版做好以后发送给您确认;

4毕业证成绩单电子版您确认信息无误之后安排制作成品;

5成品做好拍照或者视频给您确认;

6快递给客户(国内顺丰国外DHLUPS等快读邮寄)。

7完成交易删除客户资料

高精端提供以下服务:

一:渥太华大学渥太华大学毕业证文凭证书全套材料从防伪到印刷水印底纹到钢印烫金

二:真实使馆认证(留学人员回国证明)使馆存档

三:真实教育部认证教育部存档教育部留服网站可查

四:留信认证留学生信息网站可查

五:与学校颁发的相关证件1:1纸质尺寸制定(定期向各大院校毕业生购买最新版本毕,业证成绩单保证您拿到的是鲁昂大学内部最新版本毕业证成绩单微信95270640)

A.为什么留学生需要操作留信认证?

留信认证全称全国留学生信息服务网认证,隶属于北京中科院。①留信认证门槛条件更低,费用更美丽,并且包过,完单周期短,效率高②留信认证虽然不能去国企,但是一般的公司都没有问题,因为国内很多公司连基本的留学生学历认证都不了解。这对于留学生来说,这就比自己光拿一个证书更有说服力,因为留学学历可以在留信网站上进行查询!

B.为什么我们提供的毕业证成绩单具有使用价值?

查询留服认证是国内鉴别留学生海外学历的唯一途径但认证只是个体行为不是所有留学生都操作所以没有办理认证的留学生的学历在国内也是查询不到的他们也仅仅只有一张文凭。所以这时候我们提供的和学校颁发的一模一样的毕业证成绩单就有了使用价值。只硕大的蛇皮袋手里拎着长铁钩正站在门口朝黑色的屋内张望不好坏人小偷山娃一怔却也灵机一动立马仰起头双手拢在嘴边朝楼上大喊:“爸爸爸——有人找——那人一听朝山娃尴尬地笑笑悻悻地走了山娃立马“嘭的一声将铁门锁死心却咚咚地乱跳当山娃跟父亲说起这事时父亲很吃惊抚摸着山娃的头说还好醒得及时要不家早被人掏空了到时连电视也没得看啰不过父亲还是夸山娃能临危不乱随机应变有胆有谋山娃笑笑说那都是书上学的看童话和小说时多

办(uts毕业证书)悉尼科技大学毕业证学历证书原版一模一样

原版一模一样【微信:741003700 】【(uts毕业证书)悉尼科技大学毕业证学历证书】【微信:741003700 】学位证,留信认证(真实可查,永久存档)offer、雅思、外壳等材料/诚信可靠,可直接看成品样本,帮您解决无法毕业带来的各种难题!外壳,原版制作,诚信可靠,可直接看成品样本。行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备。十五年致力于帮助留学生解决难题,包您满意。

本公司拥有海外各大学样板无数,能完美还原海外各大学 Bachelor Diploma degree, Master Degree Diploma

1:1完美还原海外各大学毕业材料上的工艺:水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠。文字图案浮雕、激光镭射、紫外荧光、温感、复印防伪等防伪工艺。材料咨询办理、认证咨询办理请加学历顾问Q/微741003700

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

ViewShift: Hassle-free Dynamic Policy Enforcement for Every Data Lake

Dynamic policy enforcement is becoming an increasingly important topic in today’s world where data privacy and compliance is a top priority for companies, individuals, and regulators alike. In these slides, we discuss how LinkedIn implements a powerful dynamic policy enforcement engine, called ViewShift, and integrates it within its data lake. We show the query engine architecture and how catalog implementations can automatically route table resolutions to compliance-enforcing SQL views. Such views have a set of very interesting properties: (1) They are auto-generated from declarative data annotations. (2) They respect user-level consent and preferences (3) They are context-aware, encoding a different set of transformations for different use cases (4) They are portable; while the SQL logic is only implemented in one SQL dialect, it is accessible in all engines.

#SQL #Views #Privacy #Compliance #DataLake

一比一原版(UCSF文凭证书)旧金山分校毕业证如何办理

毕业原版【微信:176555708】【(UCSF毕业证书)旧金山分校毕业证】【微信:176555708】成绩单、外壳、offer、留信学历认证(永久存档真实可查)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信176555708】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信176555708】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

→ 【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

一比一原版(CU毕业证)卡尔顿大学毕业证如何办理

原件一模一样【微信:95270640】【卡尔顿大学毕业证CU学位证成绩单】【微信:95270640】(留信学历认证永久存档查询)采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信:95270640】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信:95270640】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份【微信:95270640】

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

→ 【关于价格问题(保证一手价格)

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:可来公司面谈,可签订合同,会陪同客户一起到教育部认证窗口递交认证材料,客户在教育部官方认证查询网站查询到认证通过结果后付款,不成功不收费!

办理卡尔顿大学毕业证本科学位证成绩单CU学位证【微信:95270640 】外观非常精致,由特殊纸质材料制成,上面印有校徽、校名、毕业生姓名、专业等信息。

办理卡尔顿大学毕业证CU学位证本科学位证成绩单【微信:95270640 】格式相对统一,各专业都有相应的模板。通常包括以下部分:

校徽:象征着学校的荣誉和传承。

校名:学校英文全称

授予学位:本部分将注明获得的具体学位名称。

毕业生姓名:这是最重要的信息之一,标志着该证书是由特定人员获得的。

颁发日期:这是毕业正式生效的时间,也代表着毕业生学业的结束。

其他信息:根据不同的专业和学位,可能会有一些特定的信息或章节。

办理卡尔顿大学毕业证本科学位证成绩单CU学位证【微信:95270640 】价值很高,需要妥善保管。一般来说,应放置在安全、干燥、防潮的地方,避免长时间暴露在阳光下。如需使用,最好使用复印件而不是原件,以免丢失。

综上所述,办理卡尔顿大学毕业证本科学位证成绩单CU学位证【微信:95270640 】是证明身份和学历的高价值文件。外观简单庄重,格式统一,包括重要的个人信息和发布日期。对持有人来说,妥善保管是非常重要的。

一比一原版(Unimelb毕业证书)墨尔本大学毕业证如何办理

原版制作【微信:41543339】【(Unimelb毕业证书)墨尔本大学毕业证】【微信:41543339】《成绩单、外壳、雅思、offer、留信学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路),我们拥有全套进口原装设备,特殊工艺都是采用不同进口机器一比一制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

一比一原版巴斯大学毕业证(Bath毕业证书)学历如何办理

原版办理【微信号:BYZS866】【巴斯大学毕业证(Bath毕业证书)】【微信号:BYZS866】《成绩单、外壳、雅思、offer、真实留信官方学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【关于学历材料质量】

我们承诺采用的是学校原版纸张(原版纸质、底色、纹路、)我们工厂拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有成品以及工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信号BYZS866】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信号BYZS866】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

原版一比一多伦多大学毕业证(UofT毕业证书)如何办理

原版制作【微信:41543339】【多伦多大学毕业证(UofT毕业证书)】【微信:41543339】《成绩单、外壳、雅思、offer、真实留信官方学历认证(永久存档/真实可查)》采用学校原版纸张、特殊工艺完全按照原版一比一制作(包括:隐形水印,阴影底纹,钢印LOGO烫金烫银,LOGO烫金烫银复合重叠,文字图案浮雕,激光镭射,紫外荧光,温感,复印防伪)行业标杆!精益求精,诚心合作,真诚制作!多年品质 ,按需精细制作,24小时接单,全套进口原装设备,十五年致力于帮助留学生解决难题,业务范围有加拿大、英国、澳洲、韩国、美国、新加坡,新西兰等学历材料,包您满意。

【我们承诺采用的是学校原版纸张(纸质、底色、纹路)我们拥有全套进口原装设备,特殊工艺都是采用不同机器制作,仿真度基本可以达到100%,所有工艺效果都可提前给客户展示,不满意可以根据客户要求进行调整,直到满意为止!】

【业务选择办理准则】

一、工作未确定,回国需先给父母、亲戚朋友看下文凭的情况,办理一份就读学校的毕业证【微信41543339】文凭即可

二、回国进私企、外企、自己做生意的情况,这些单位是不查询毕业证真伪的,而且国内没有渠道去查询国外文凭的真假,也不需要提供真实教育部认证。鉴于此,办理一份毕业证【微信41543339】即可

三、进国企,银行,事业单位,考公务员等等,这些单位是必需要提供真实教育部认证的,办理教育部认证所需资料众多且烦琐,所有材料您都必须提供原件,我们凭借丰富的经验,快捷的绿色通道帮您快速整合材料,让您少走弯路。

留信网认证的作用:

1:该专业认证可证明留学生真实身份

2:同时对留学生所学专业登记给予评定

3:国家专业人才认证中心颁发入库证书

4:这个认证书并且可以归档倒地方

5:凡事获得留信网入网的信息将会逐步更新到个人身份内,将在公安局网内查询个人身份证信息后,同步读取人才网入库信息

6:个人职称评审加20分

7:个人信誉贷款加10分

8:在国家人才网主办的国家网络招聘大会中纳入资料,供国家高端企业选择人才

留信网服务项目:

1、留学生专业人才库服务(留信分析)

2、国(境)学习人员提供就业推荐信服务

3、留学人员区块链存储服务

【关于价格问题(保证一手价格)】

我们所定的价格是非常合理的,而且我们现在做得单子大多数都是代理和回头客户介绍的所以一般现在有新的单子 我给客户的都是第一手的代理价格,因为我想坦诚对待大家 不想跟大家在价格方面浪费时间

对于老客户或者被老客户介绍过来的朋友,我们都会适当给一些优惠。

选择实体注册公司办理,更放心,更安全!我们的承诺:客户在留信官方认证查询网站查询到认证通过结果后付款,不成功不收费!

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Harness the power of AI-backed reports, benchmarking and data analysis to predict trends and detect anomalies in your marketing efforts.

Peter Caputa, CEO at Databox, reveals how you can discover the strategies and tools to increase your growth rate (and margins!).

From metrics to track to data habits to pick up, enhance your reporting for powerful insights to improve your B2B tech company's marketing.

- - -

This is the webinar recording from the June 2024 HubSpot User Group (HUG) for B2B Technology USA.

Watch the video recording at https://youtu.be/5vjwGfPN9lw

Sign up for future HUG events at https://events.hubspot.com/b2b-technology-usa/

Open Source Contributions to Postgres: The Basics POSETTE 2024

Postgres is the most advanced open-source database in the world and it's supported by a community, not a single company. So how does this work? How does code actually get into Postgres? I recently had a patch submitted and committed and I want to share what I learned in that process. I’ll give you an overview of Postgres versions and how the underlying project codebase functions. I’ll also show you the process for submitting a patch and getting that tested and committed.

Population Growth in Bataan: The effects of population growth around rural pl...

A population analysis specific to Bataan.

Recently uploaded (20)

Orchestrating the Future: Navigating Today's Data Workflow Challenges with Ai...

Orchestrating the Future: Navigating Today's Data Workflow Challenges with Ai...

06-12-2024-BudapestDataForum-BuildingReal-timePipelineswithFLaNK AIM

06-12-2024-BudapestDataForum-BuildingReal-timePipelineswithFLaNK AIM

ViewShift: Hassle-free Dynamic Policy Enforcement for Every Data Lake

ViewShift: Hassle-free Dynamic Policy Enforcement for Every Data Lake

A presentation that explain the Power BI Licensing

A presentation that explain the Power BI Licensing

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Predictably Improve Your B2B Tech Company's Performance by Leveraging Data

Open Source Contributions to Postgres: The Basics POSETTE 2024

Open Source Contributions to Postgres: The Basics POSETTE 2024

Population Growth in Bataan: The effects of population growth around rural pl...

Population Growth in Bataan: The effects of population growth around rural pl...