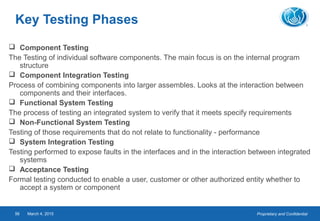

This document provides an overview of software testing concepts. It defines software testing, discusses the testing process, and covers related terminology. The key points are:

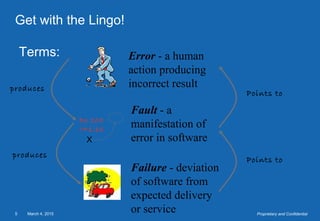

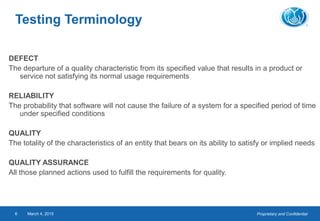

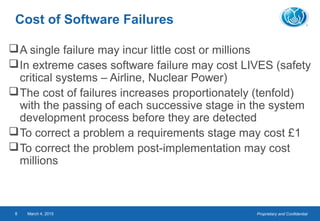

- Software testing is the process of executing a program to evaluate its quality and identify errors. It involves designing and running tests to verify that requirements are met.

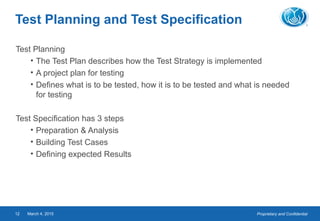

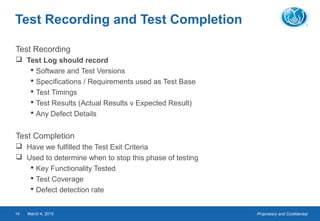

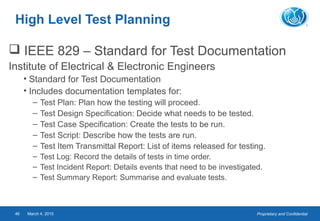

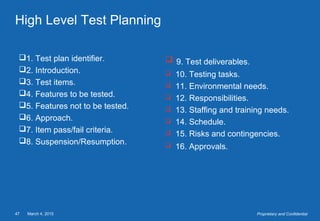

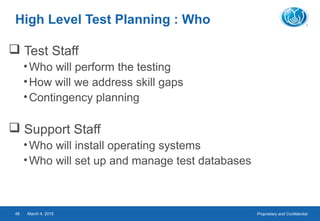

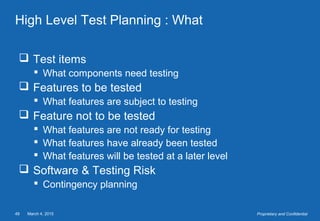

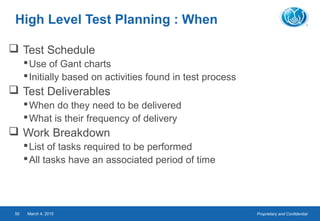

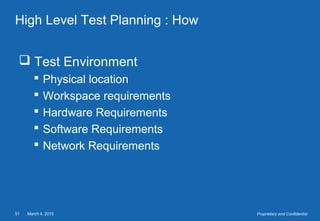

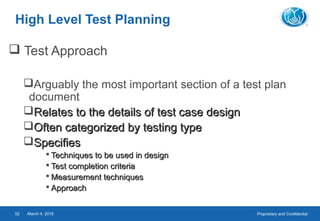

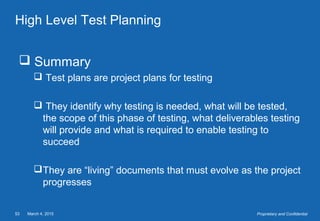

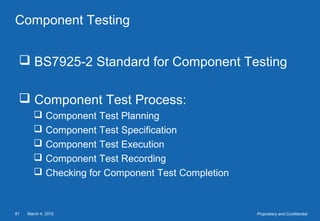

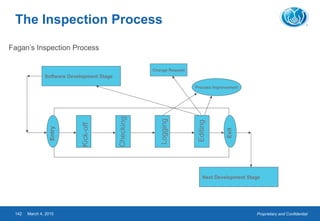

- The testing process includes planning, specification, execution, recording of results, and checking for completion. Regression testing, which re-runs existing tests after a change, is also important for catching unintended side effects.

- Defining expected results is crucial, because it gives testers a reference against which to evaluate actual outputs. Good communication and independence from development are also important aspects of testing.
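
The process steps and the role of expected results can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: `apply_discount` is a hypothetical function under test, and `CASES`, `run_cases`, and `is_complete` are invented names, not part of any testing framework or of the original document.

```python
def apply_discount(price, percent):
    """Hypothetical function under test: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (100 - percent) / 100, 2)


# Specification: each case pairs inputs with an expected result
# that is decided before the test is run.
CASES = [
    ((200.0, 10), 180.0),
    ((50.0, 0), 50.0),
    ((80.0, 100), 0.0),
]


def run_cases(func, cases):
    """Execution and recording: run each case and keep
    (inputs, expected, actual, passed) for later review."""
    results = []
    for args, expected in cases:
        actual = func(*args)
        results.append((args, expected, actual, actual == expected))
    return results


def is_complete(results, cases):
    """Completion check: every specified case was run and passed."""
    return len(results) == len(cases) and all(r[3] for r in results)


if __name__ == "__main__":
    results = run_cases(apply_discount, CASES)
    for args, expected, actual, passed in results:
        print(args, "expected", expected, "actual", actual,
              "PASS" if passed else "FAIL")
    print("complete:", is_complete(results, CASES))
```

Re-running the same `CASES` after every change to `apply_discount` is a simple form of regression testing: a change that alters any recorded output shows up as a failed case.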