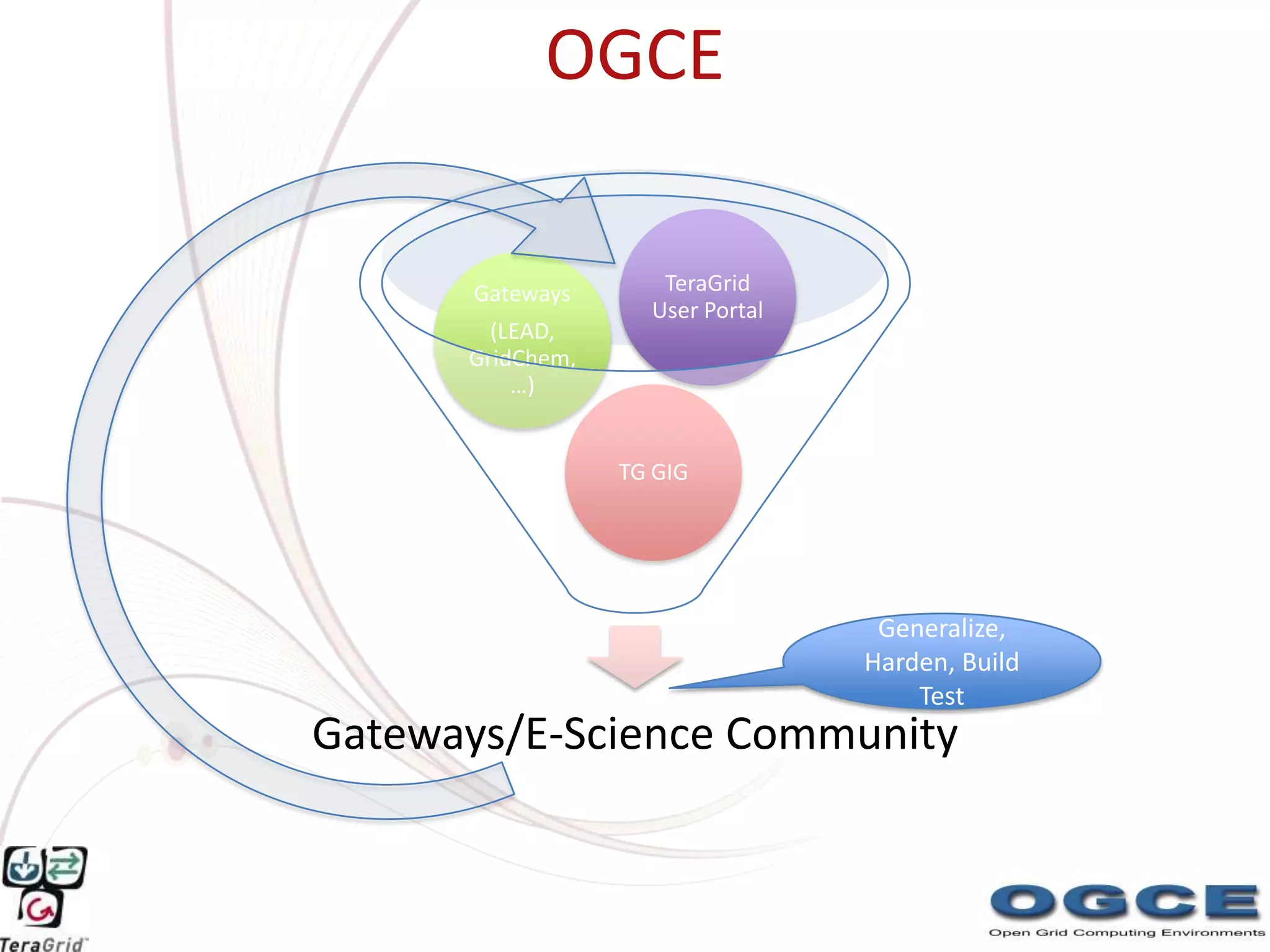

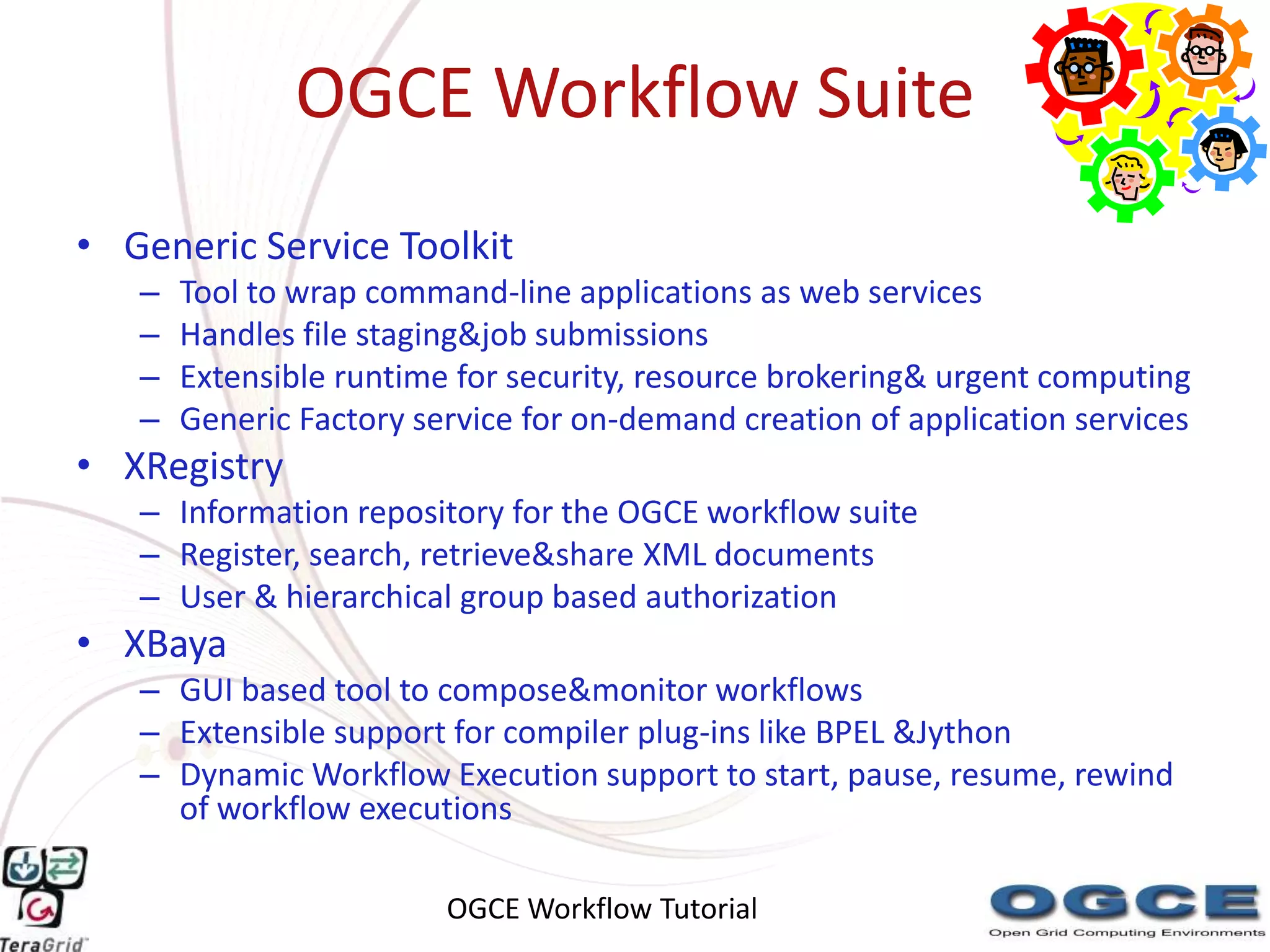

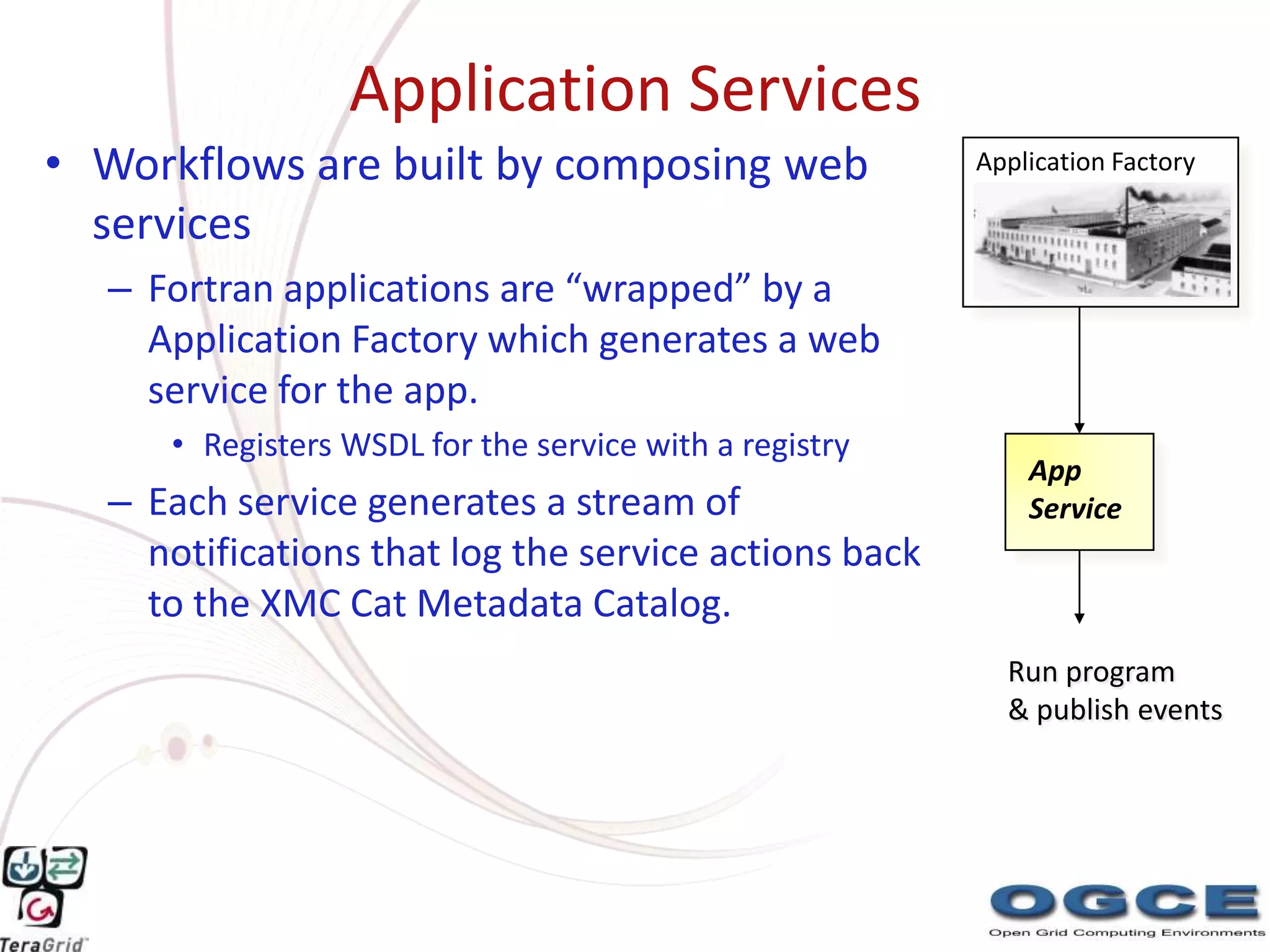

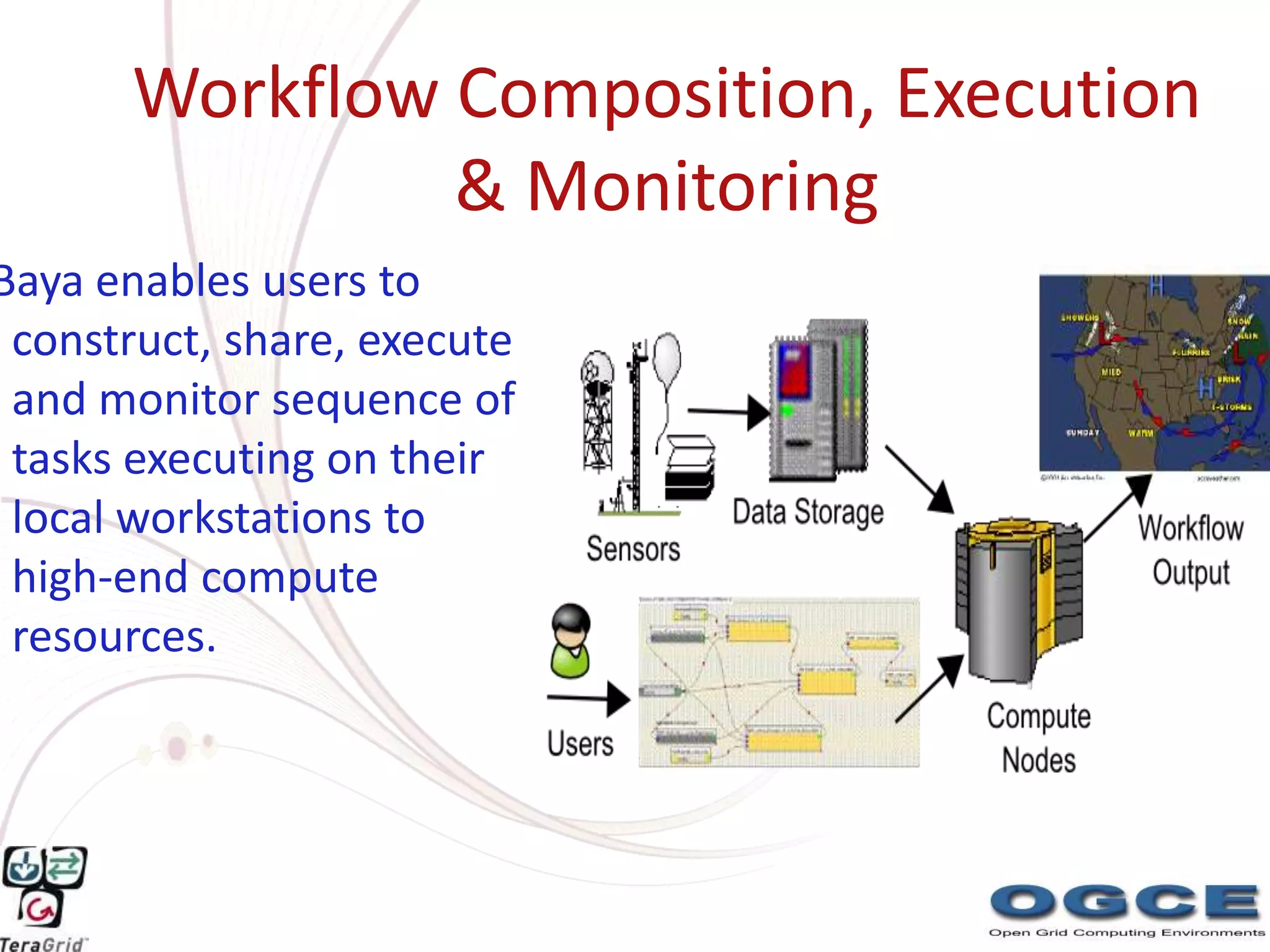

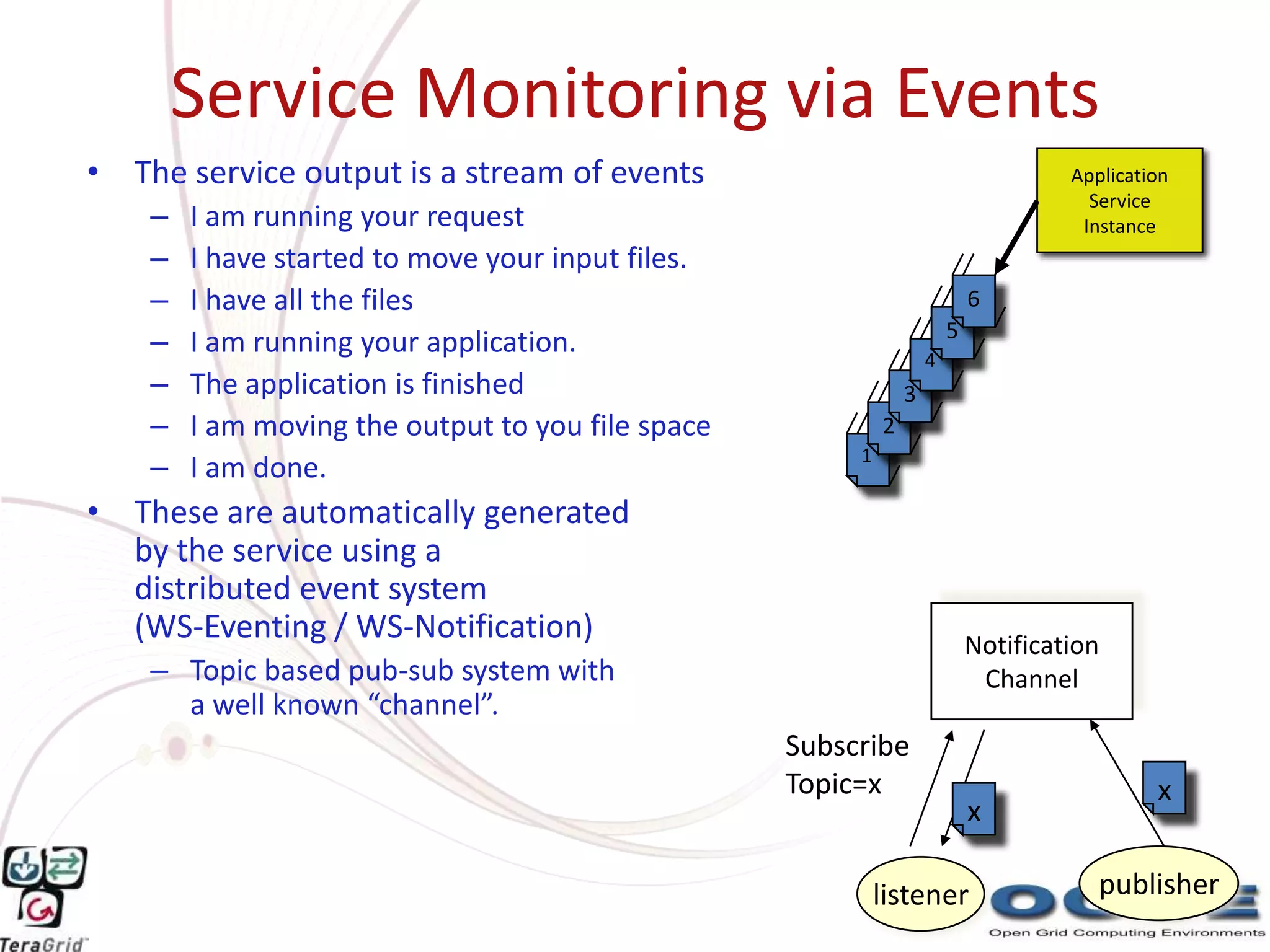

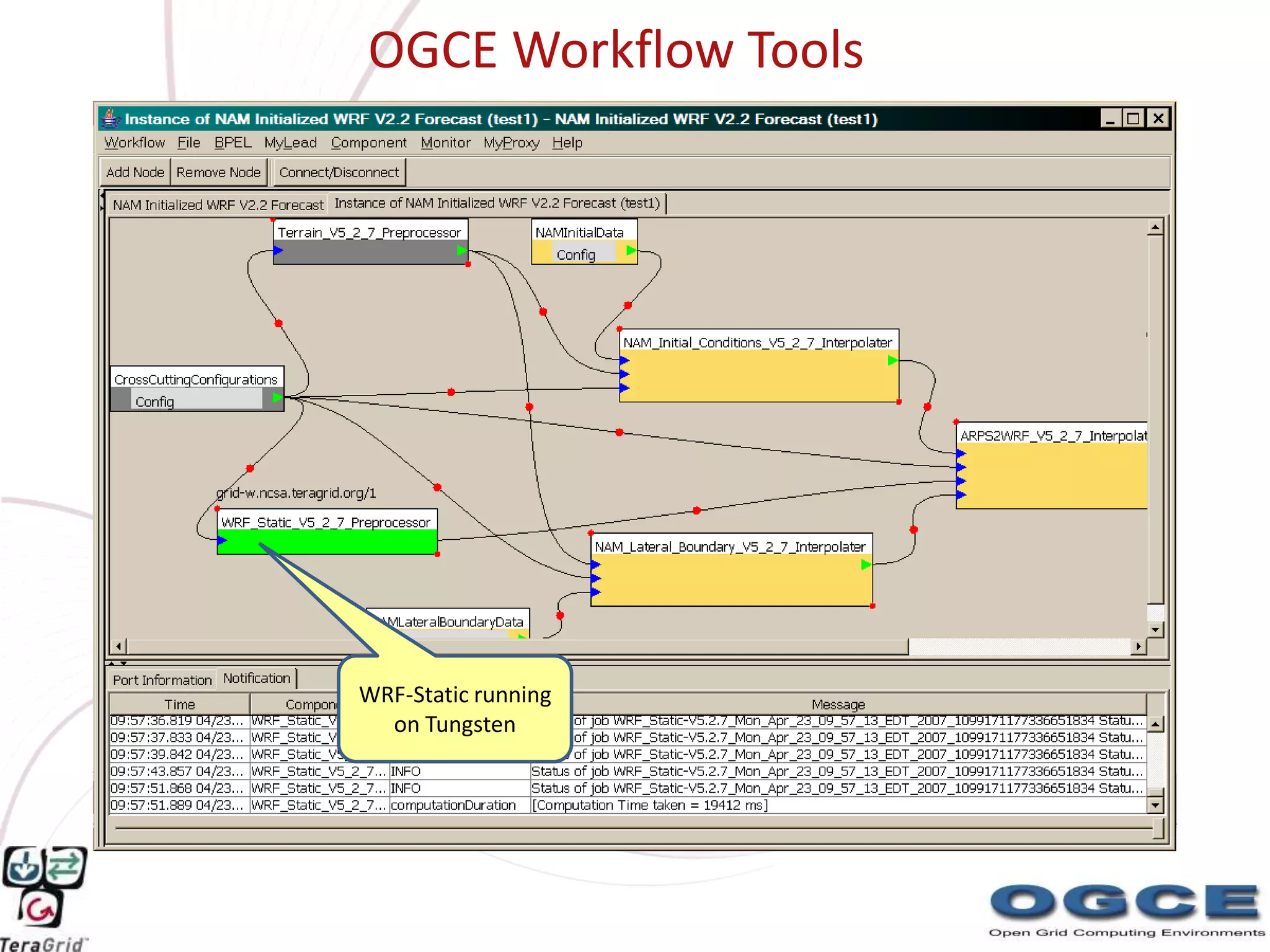

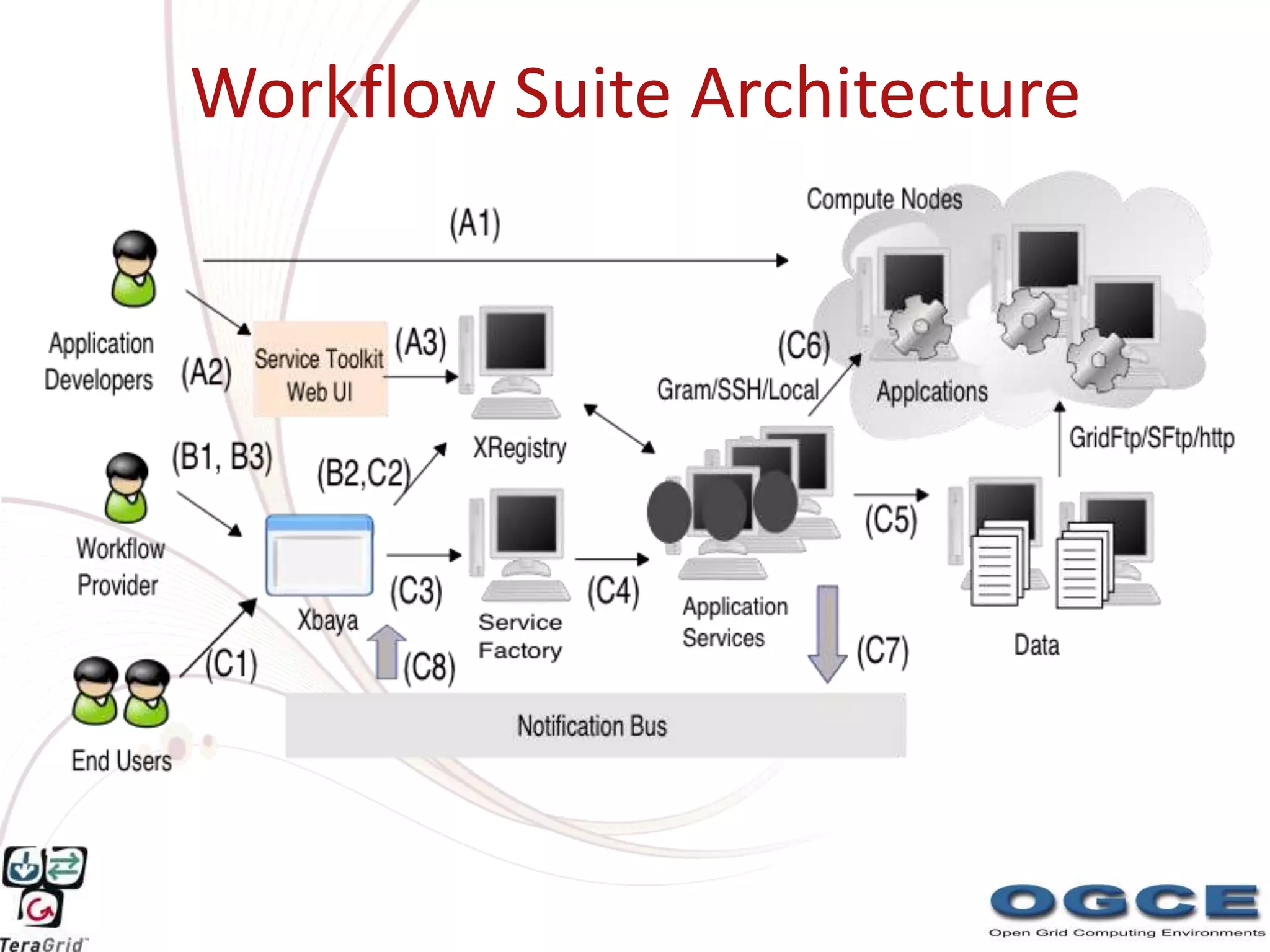

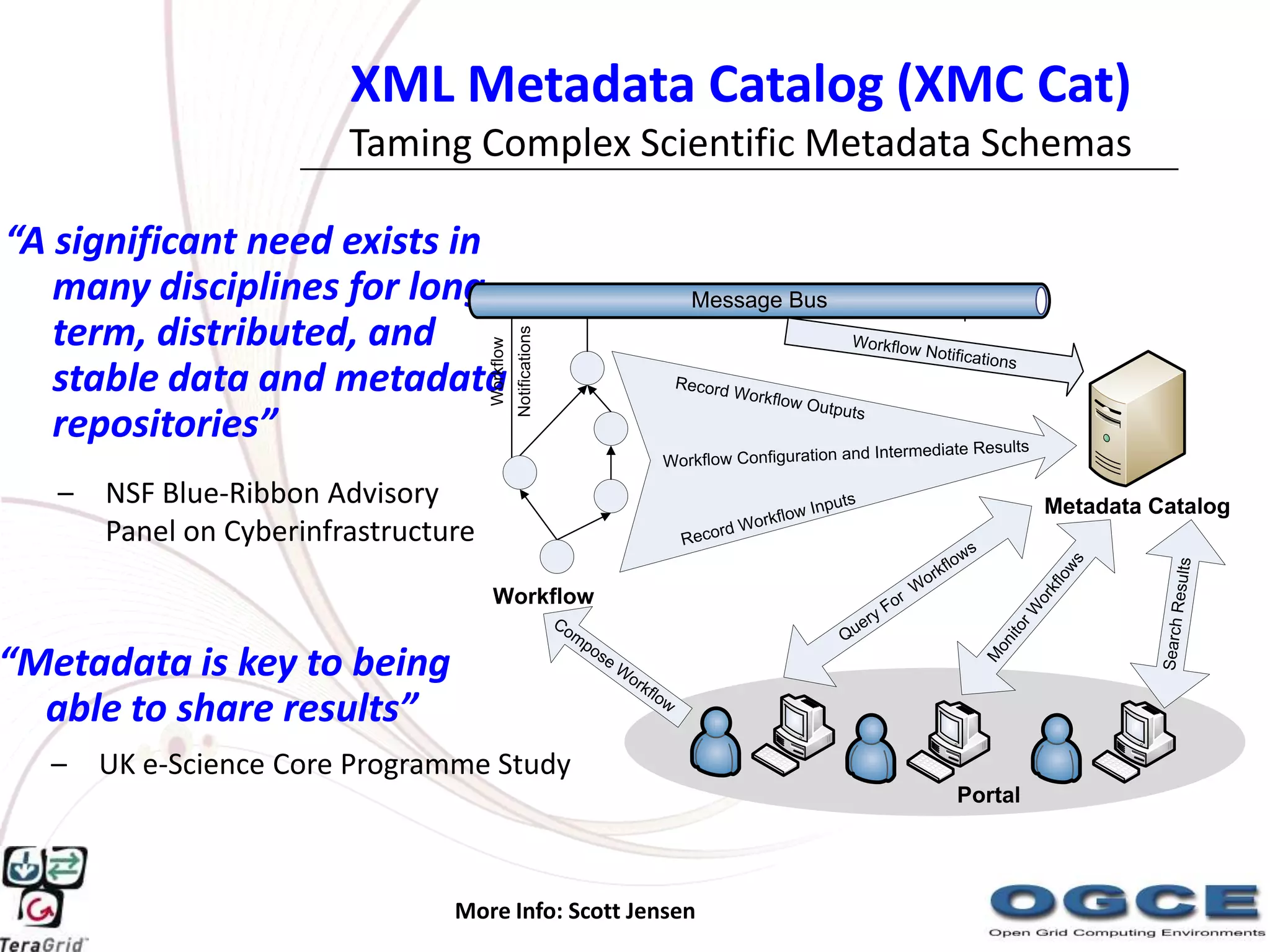

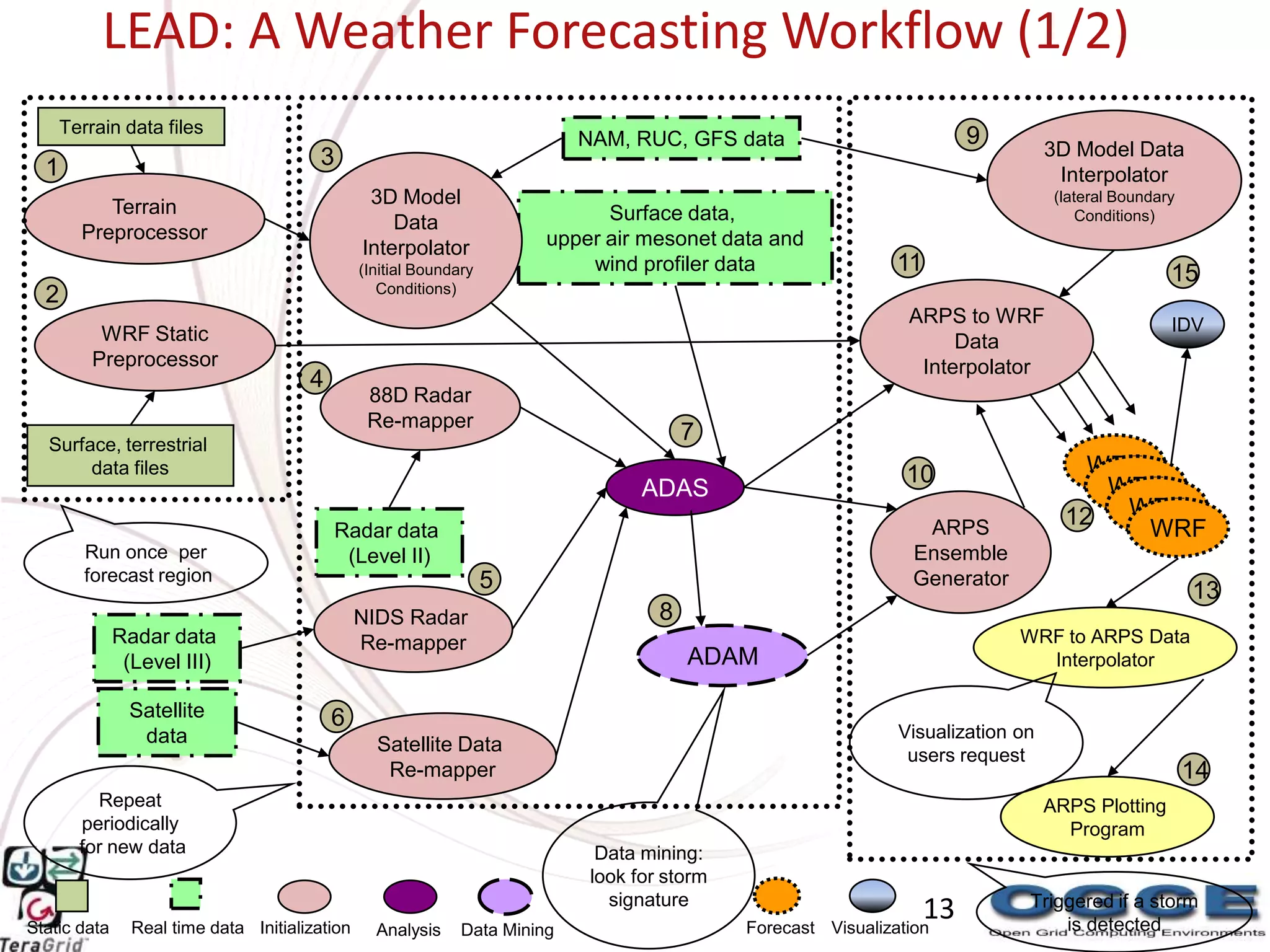

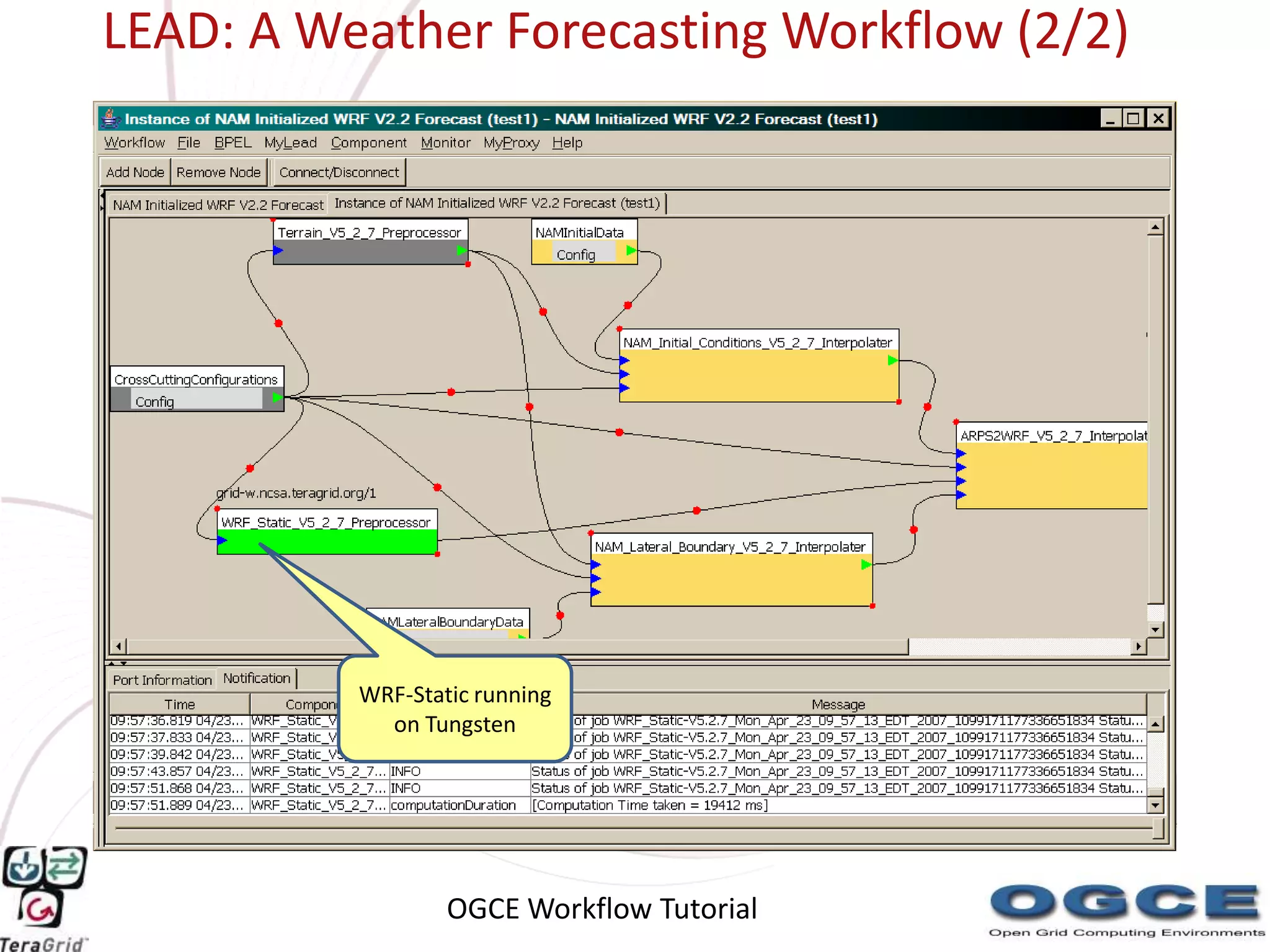

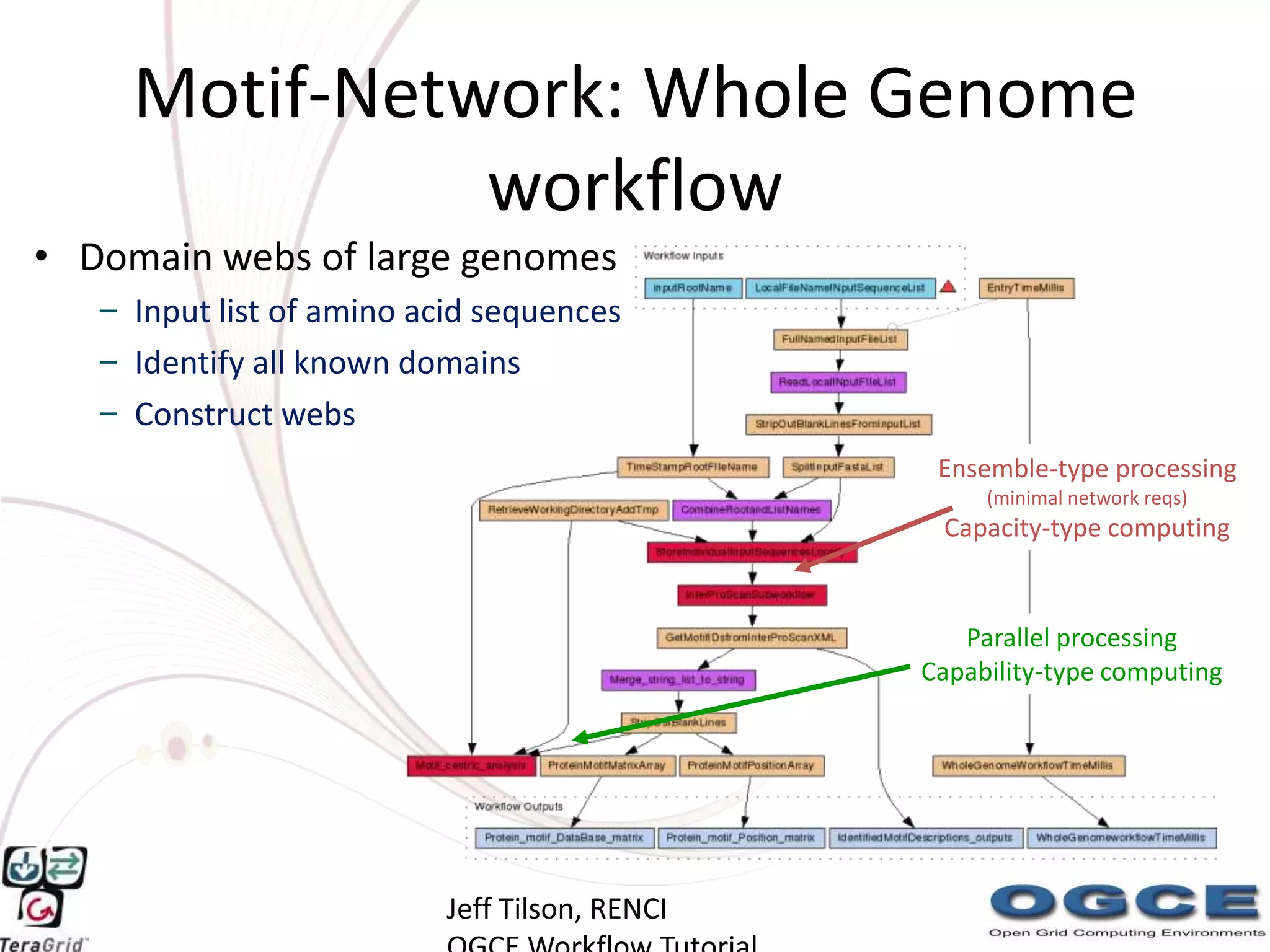

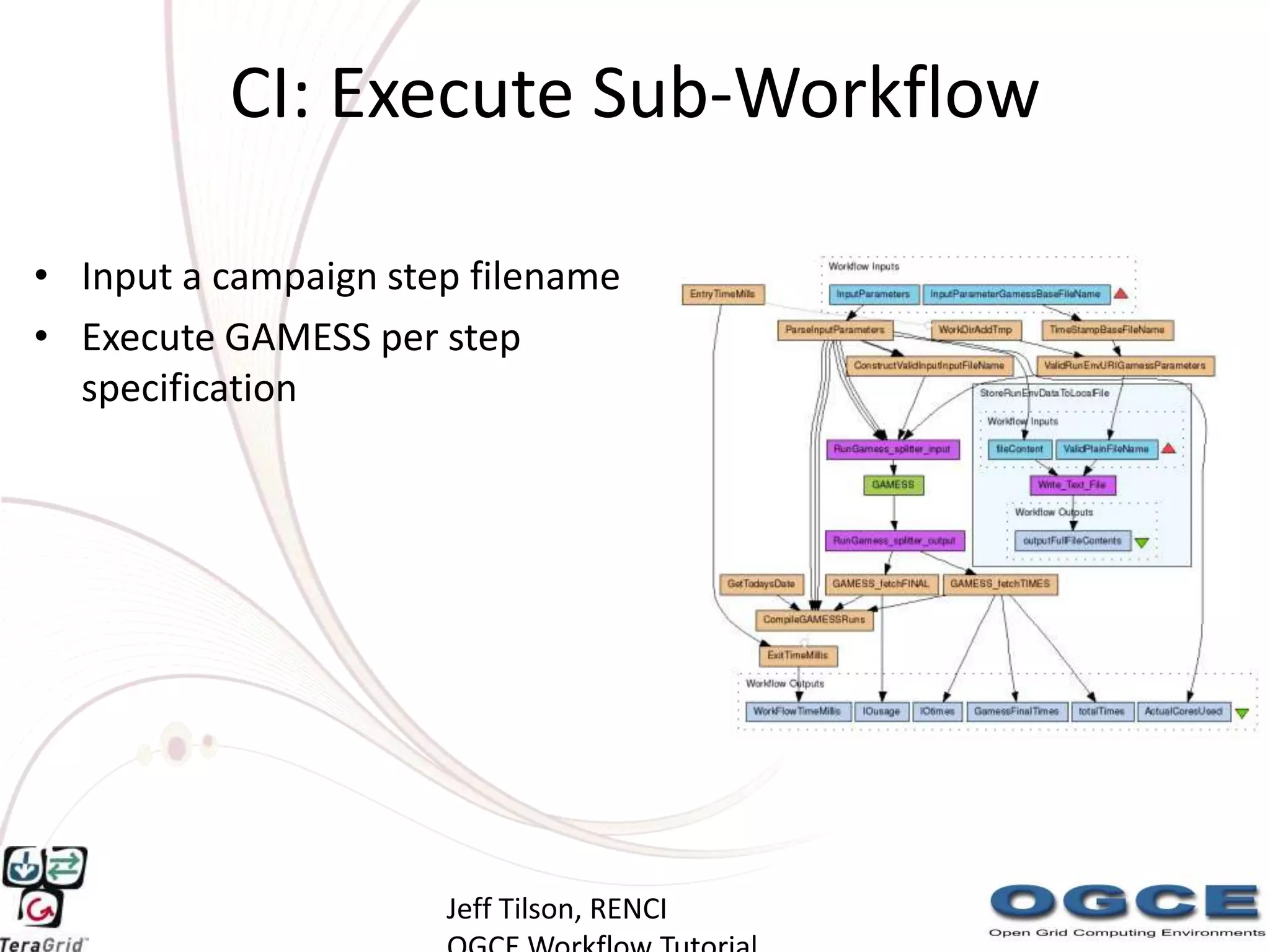

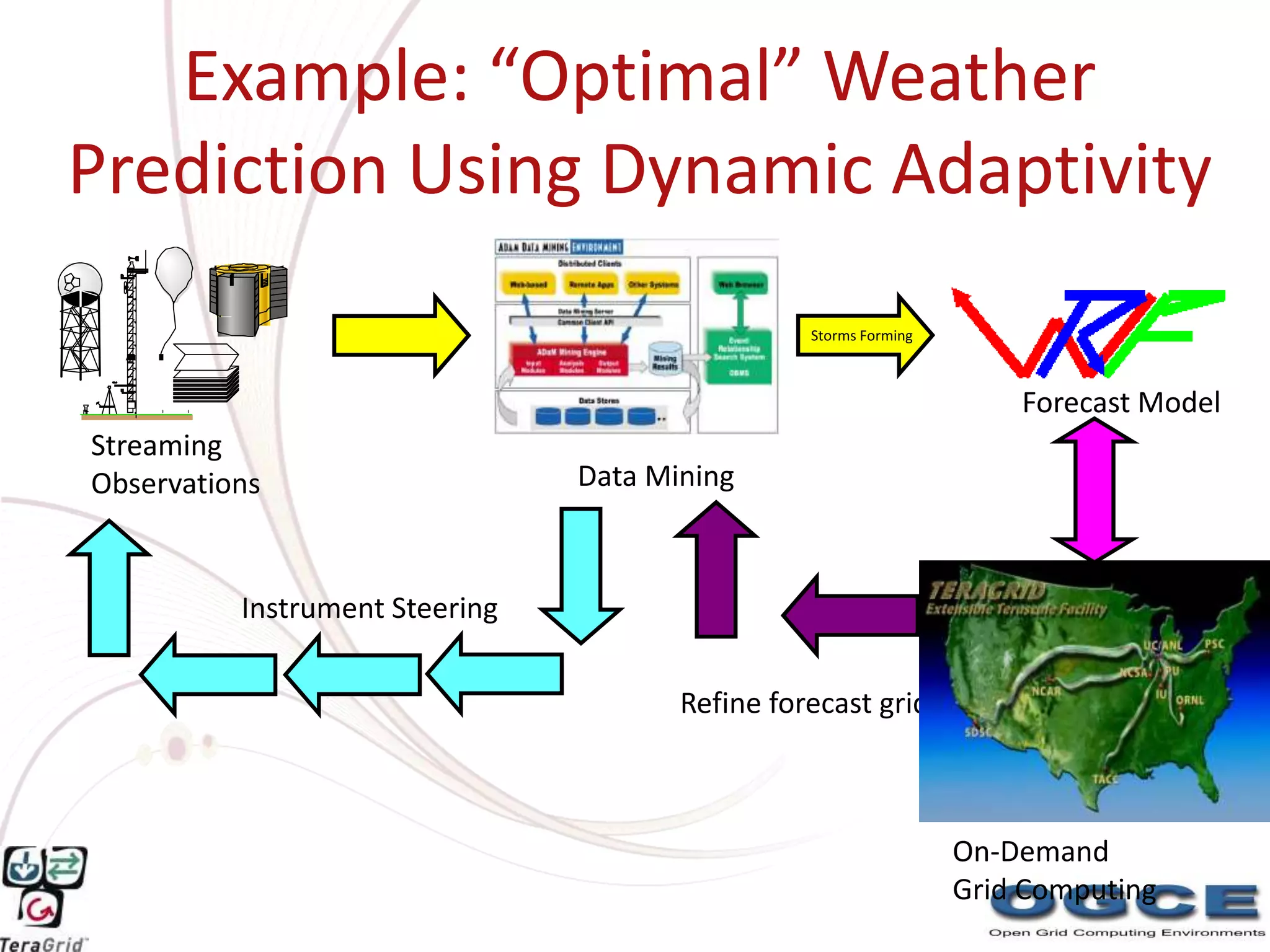

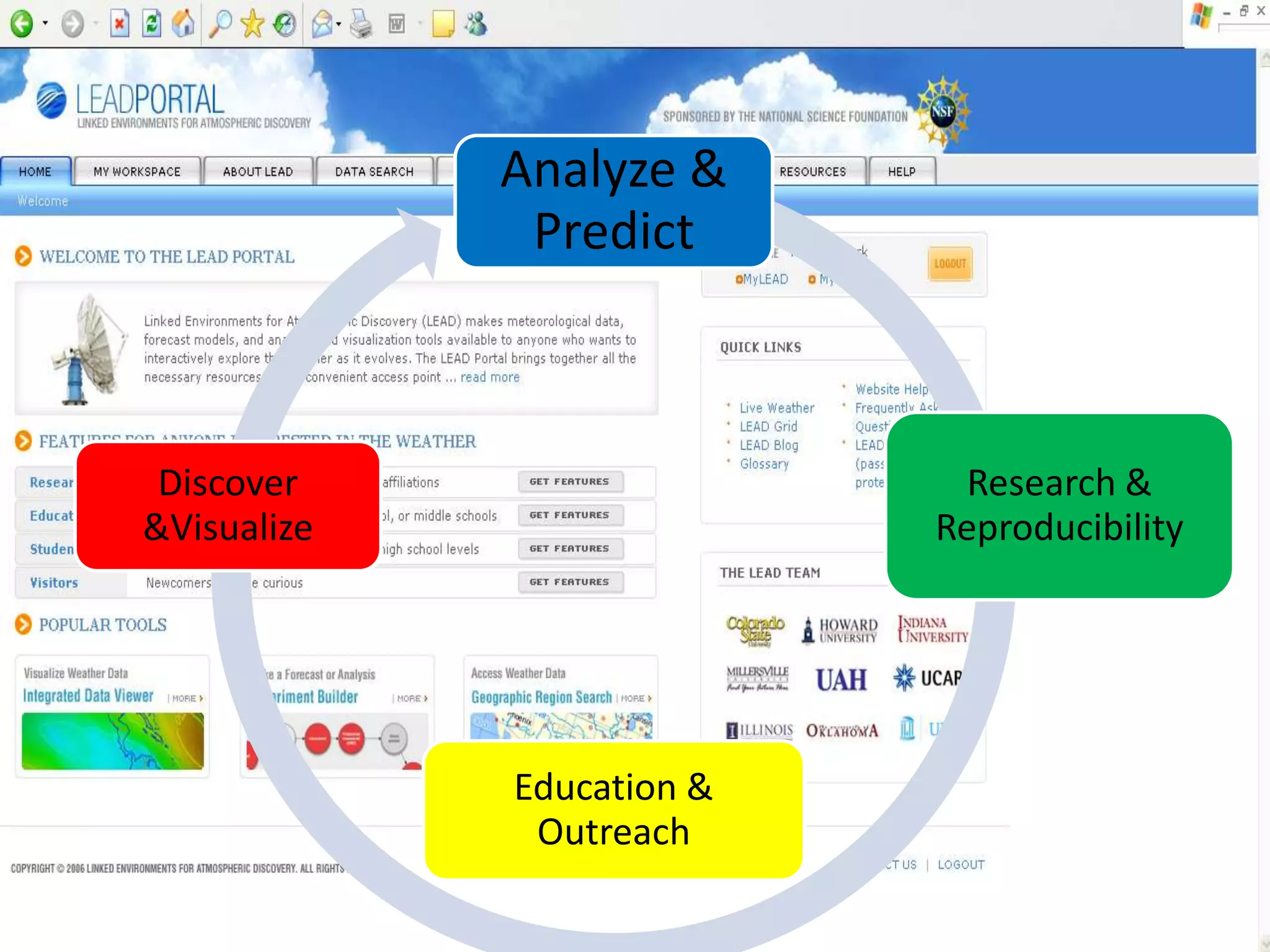

This document describes the OGCE WorkflowSuite, a set of tools for composing and executing scientific workflows. The suite has three main components: the Generic Service Toolkit, which wraps applications as web services; XRegistry, which is used for information sharing; and XBaya, which provides graphical workflow composition and monitoring. Workflows built with the suite can integrate various resources and can be made flexible, dynamic, and interoperable. The example applications discussed are weather forecasting, genome analysis, and computational evaluation.
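To make the division of labor among the three components more concrete, here is a minimal Python sketch of the general pattern: applications are exposed as callable services, the services are recorded in a shared registry, and a workflow is a dependency graph over those services that runs in dependency order. This is not the OGCE API. The `Registry` class, the `run_workflow` function, and the step names `fetch`, `sort`, and `summarize` are all hypothetical, and plain Python functions stand in for web services.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Callable, Dict, List


class Registry:
    """Hypothetical stand-in for a shared service registry (not XRegistry itself)."""

    def __init__(self) -> None:
        self._services: Dict[str, Callable[..., object]] = {}

    def register(self, name: str, func: Callable[..., object]) -> None:
        # Record a wrapped application under a name that workflows can look up.
        self._services[name] = func

    def lookup(self, name: str) -> Callable[..., object]:
        return self._services[name]


def run_workflow(registry: Registry, graph: Dict[str, List[str]]) -> Dict[str, object]:
    """Run each step after its dependencies.

    `graph` maps a step name to the list of steps whose outputs it consumes.
    """
    results: Dict[str, object] = {}
    for step in TopologicalSorter(graph).static_order():
        # Outputs of upstream steps become positional inputs of this step.
        inputs = [results[dep] for dep in graph.get(step, [])]
        results[step] = registry.lookup(step)(*inputs)
    return results


# Hypothetical "applications" wrapped as services.
registry = Registry()
registry.register("fetch", lambda: [3, 1, 2])
registry.register("sort", lambda data: sorted(data))
registry.register("summarize", lambda data: {"min": data[0], "max": data[-1]})

# A three-step linear workflow: fetch -> sort -> summarize.
workflow = {"fetch": [], "sort": ["fetch"], "summarize": ["sort"]}
results = run_workflow(registry, workflow)
```

Keeping the workflow as data (a graph of step names) rather than hard-coded calls is what would let a graphical composer build or modify it, and lets a monitor observe each step as it completes.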