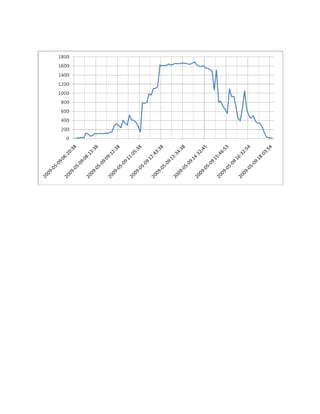

May 09 2009 Solar

Solar Energy Production for May 9, 2009 for Erik and Heather





