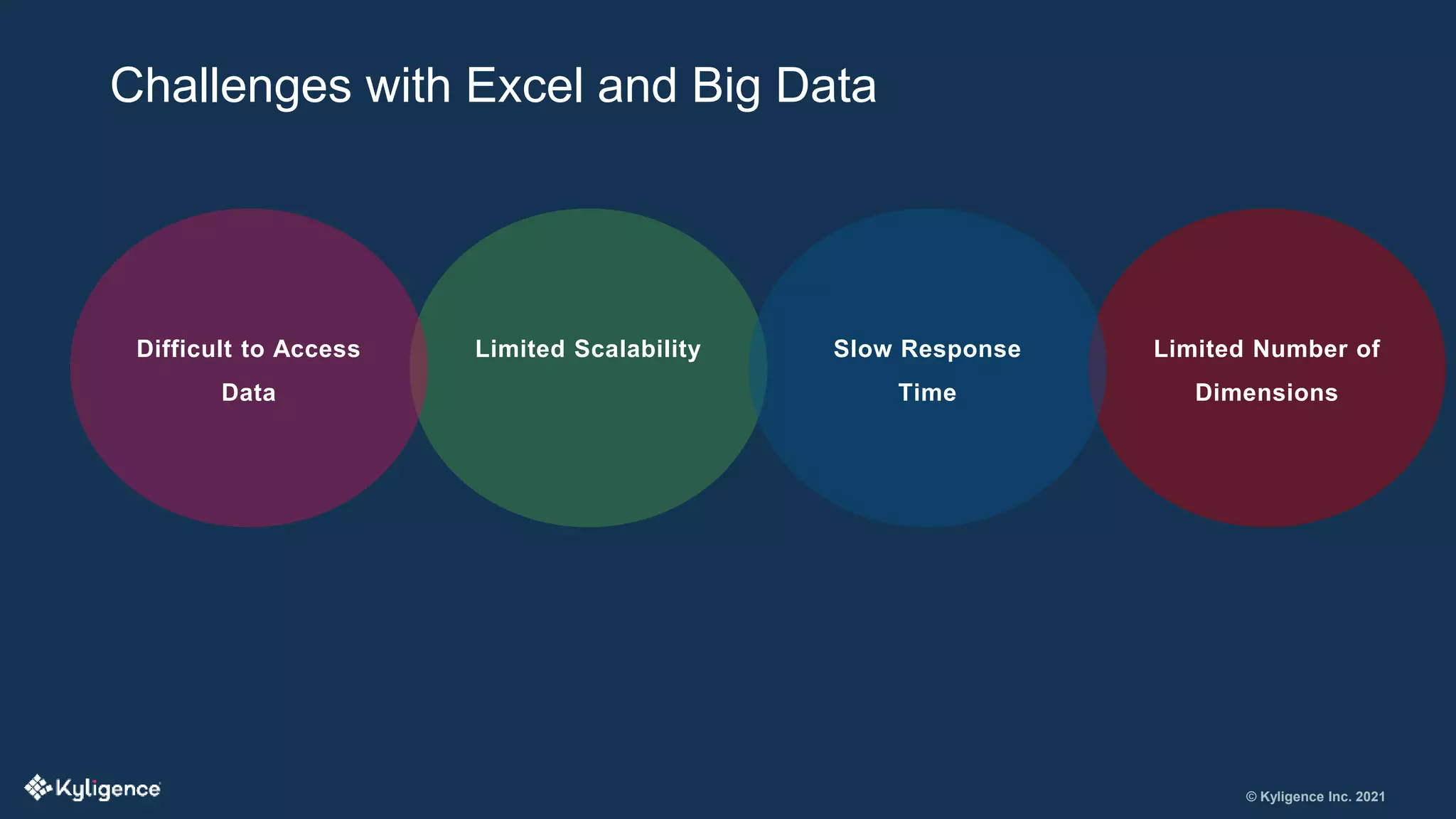

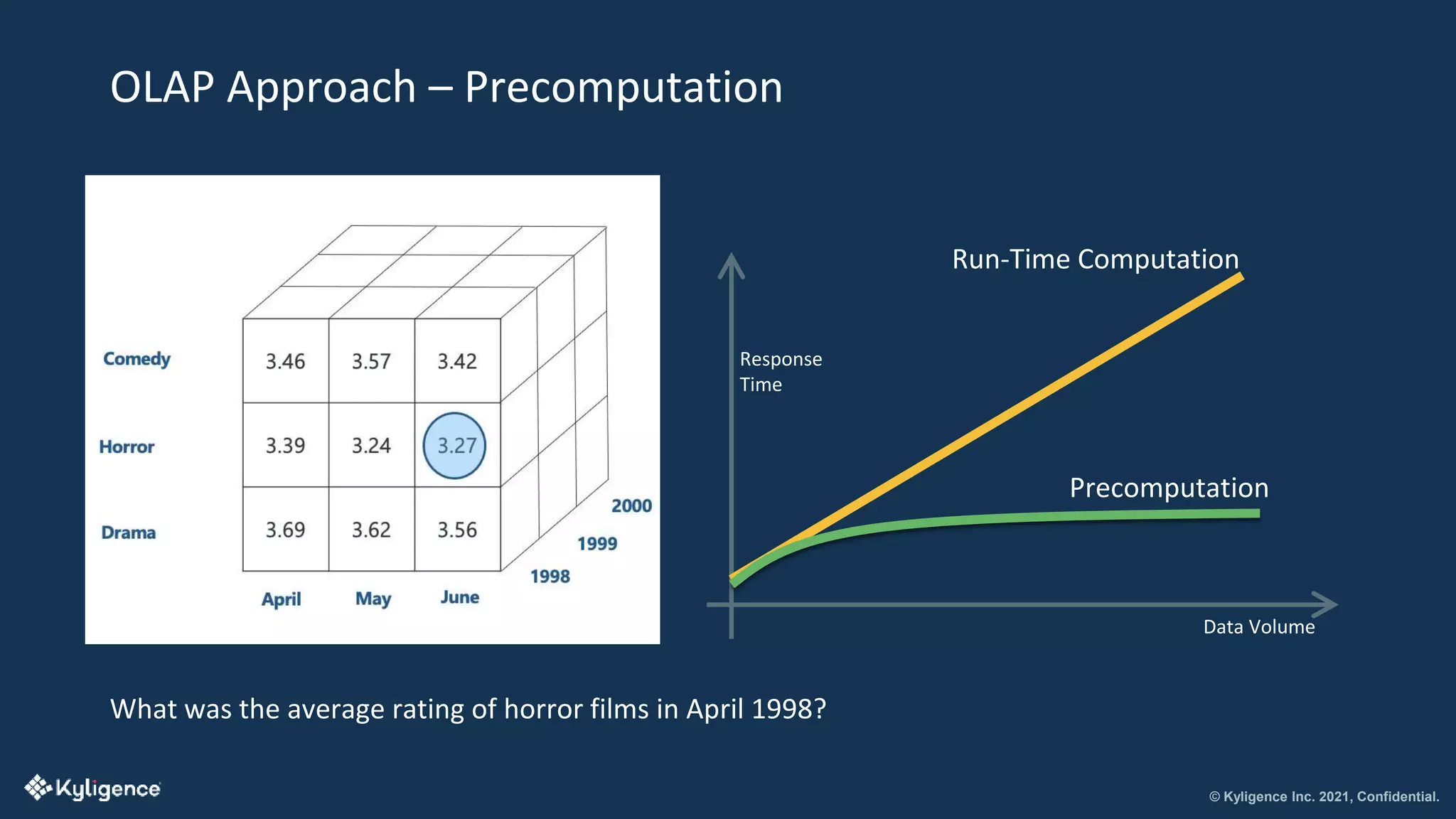

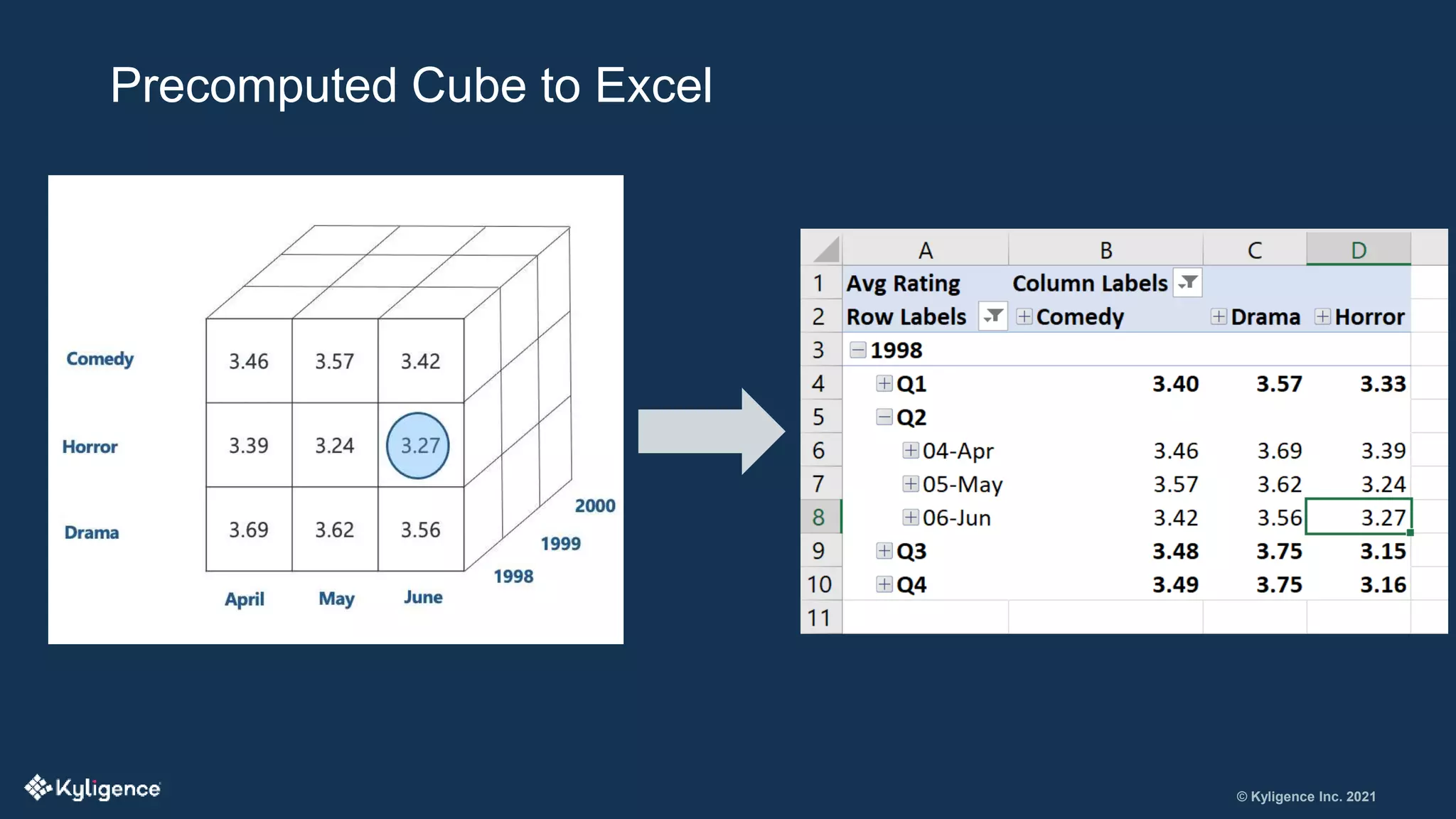

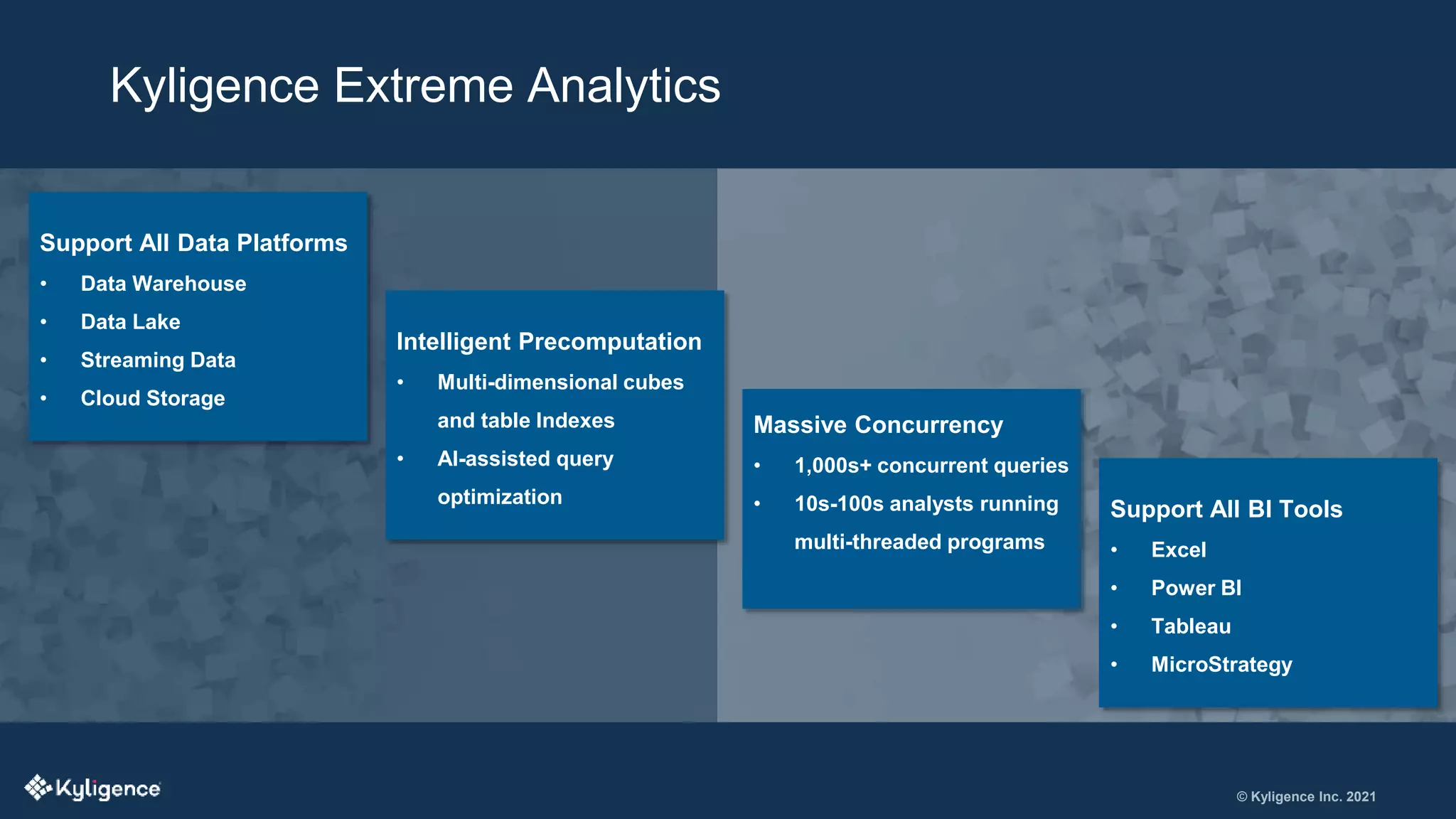

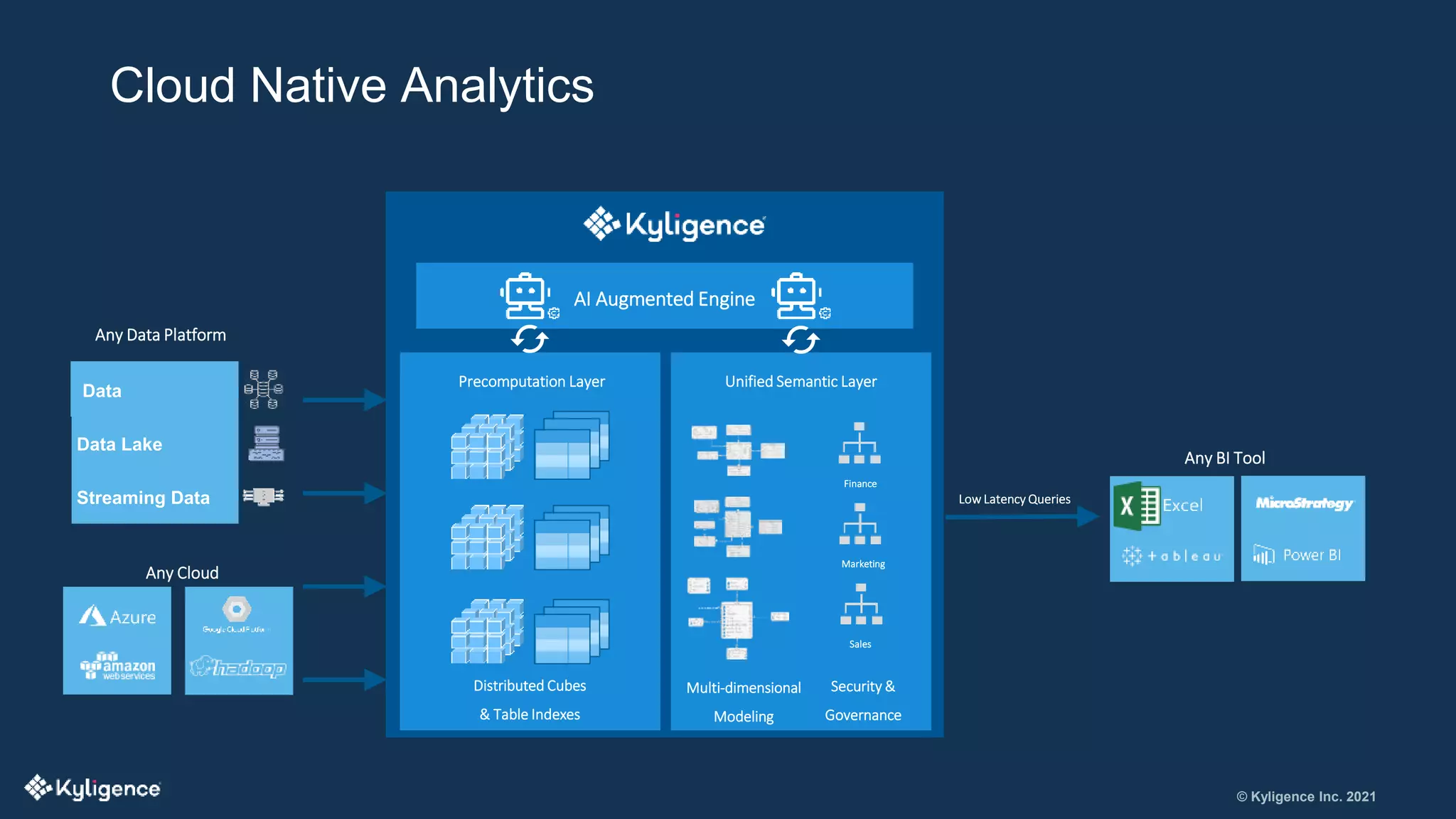

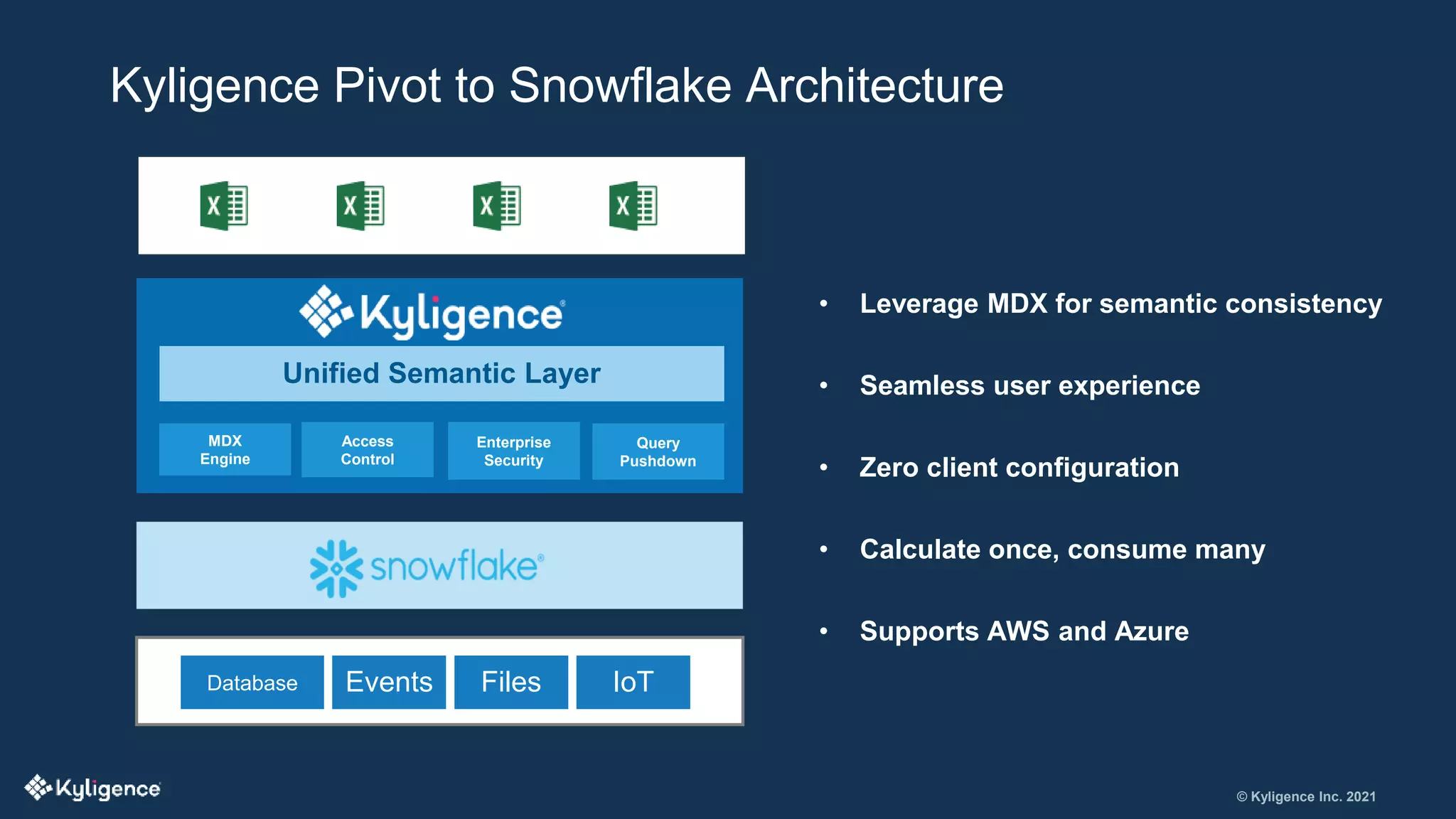

The document discusses the significance of Microsoft Excel in managing big data and highlights challenges such as limited dimensions and scalability. It introduces Kyligence as a solution that uses cloud-based analytics and OLAP to improve performance and data management. It also emphasizes Kyligence's mission to enhance interactive analytics and details its support for various data platforms and business intelligence tools.