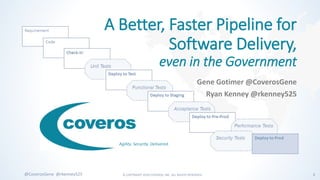

A better faster pipeline for software delivery, even in the government

- 1. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 1@CoverosGene @rkenney525 Agility. Security. Delivered. A Better, Faster Pipeline for Software Delivery, even in the Government Gene Gotimer @CoverosGene Ryan Kenney @rkenney525

- 2. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 2@CoverosGene @rkenney525 About Coveros • Coveros helps companies accelerate the delivery of secure, reliable software using agile methods • Services • Agile Transformations & Coaching • Agile Software Development • Agile Testing & Automation • DevOps and DevSecOps Implementations • Software Security Assurance & Testing • Agile, DevOps, Test Auto, Security Training • Open Source Products • SecureCI – Secure DevOps toolchain • Selenified – Agile test framework Areas of Expertise

- 3. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 3@CoverosGene @rkenney525 Selected Clients

- 4. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 4@CoverosGene @rkenney525 The Delivery Pipeline

- 5. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 5@CoverosGene @rkenney525 Delivery Pipeline Process of taking a code change from developers and getting it deployed into production or delivered to the customer • Stages along the way • Later stages lead • to higher confidence • closer to production

- 6. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 6@CoverosGene @rkenney525 Delivery Pipeline Do we have a viable candidate for production?

- 7. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 7@CoverosGene @rkenney525 Delivery Pipeline Requirement Code Check-in Unit Tests Deploy to Test Functional Tests Deploy to Staging Acceptance Tests Deploy to Pre-Prod Quality Gate Trigger Performance Tests Security Tests Deploy to Prod Rapid Feedback No surprises

- 8. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 8@CoverosGene @rkenney525 Goal is to Balance Early Rapid Feedback No Late Surprises

- 9. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 9@CoverosGene @rkenney525 Everything Can’t Be First Do just enough of each type of testing early in the pipeline to determine if further testing is justified.

- 10. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 10@CoverosGene @rkenney525 Defining Your Delivery Pipeline

- 11. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 11@CoverosGene @rkenney525 Value Stream Exercise • List out steps from developer to production • That is the delivery pipeline • whether manual or automated • Identify time for each task • work time • wait time • Take about 10 minutes • discussion with your neighbors is encouraged Task Wait Work

- 12. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 12@CoverosGene @rkenney525 Value Stream • Helps show • where bottlenecks are • what to automate • where time is being spent and/or wasted

- 13. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 13@CoverosGene @rkenney525 Pipeline Stages • Not hard-and-fast stages • Gradual change in focus minutes hours to daysbulk of the process

- 14. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 14@CoverosGene @rkenney525 Commit Stage Commit Stage Requirement Code Check-in Unit Tests Deploy to Test Functional Tests Deploy to Staging Acceptance Tests Deploy to Pre-Prod Performance Tests Security Tests Deploy to Prod Code-focused • Rapid feedback • CI cycle: 10 minutes maximum • Developers are waiting

- 15. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 15@CoverosGene @rkenney525 Acceptance Stage Acceptance Stage Requirement Code Check-in Unit Tests Deploy to Test Functional Tests Deploy to Staging Acceptance Tests Deploy to Pre-Prod Performance Tests Security Tests Deploy to Prod Quality-focused • Is this is a viable candidate for production?

- 16. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 16@CoverosGene @rkenney525 End Game End Game Requirement Code Check-in Unit Tests Deploy to Test Functional Tests Deploy to Staging Acceptance Tests Deploy to Pre-Prod Performance Tests Security Tests Deploy to Prod Delivery-focused • Steps that only get done when we are releasing • Does not begin until you are confident there will be no surprises

- 17. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 17@CoverosGene @rkenney525 Back to the Value Stream • Break into roughly three stages • won’t be hard-and-fast boundaries • some overlap is expected • look for wildly out-of-place tasks and consider reordering • Take 2 or 3 minutes Task Wait Work Code Focused Quality Focused End Game

- 18. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 18@CoverosGene @rkenney525 No Late Surprises

- 19. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 19@CoverosGene @rkenney525 Pipeline Steps Commit Stage • Compile • Unit tests • Static analysis Acceptance Stage • Functional tests • Regression tests • Acceptance tests • System integration • Security testing • Performance testing • Exploratory testing • Usability testing End Game • Security testing • Performance testing • Exploratory testing • Usability testing • Packaging • Printed documentation • Release announcement

- 20. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 20@CoverosGene @rkenney525 Pipeline Steps Commit Stage • Compile • Unit tests • Static analysis Acceptance Stage • Functional tests • Regression tests • Acceptance tests • System integration • Some security testing • Performance trend • Early exploratory testing • Basic usability testing End Game • Mandated security test • Full load and performance test • Continuing exploratory testing • Focus group usability testing • Packaging • Printed documentation • Release announcement Do just enough testing to determine if further testing is justified.

- 21. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 21@CoverosGene @rkenney525 Example: Performance Testing • Short JMeter test • On development system, no isolation • 10 concurrent users for 10,000 requests • Track the trend • Answers: “Are we getting slower or faster?” • Full load and performance test • Dedicated environment, no other traffic • Production-sized servers • 1,000 concurrent users for 4 hours • Answers: “What is the sustained capacity and throughput?”

- 22. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 22@CoverosGene @rkenney525 Example: Security Testing • Functional tests run through OWASP ZAP proxy • During early testing • Piggy-back on existing testing • Answers: “Do we have any XSS vulnerabilities?” • OpenVAS system scanning • Weekly in test environment • Looks for open network ports • Looks for software with CVEs • Answers: “Is Nessus likely to find anything?” • HP WebInspect application security scanning • By corporate security group • Looks for black-box web vulnerabilities • Answers: “Do we have any XSS vulnerabilities?” • Nessus system scanning • By corporate security group • Looks for open network ports • Looks for software with CVEs • Answers: “Is system compliant?”

- 23. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 23@CoverosGene @rkenney525 Advantages of Earlier Testing • Quicker feedback cycle • Easier to fix problems that are found • Developers still have context of changes • Less rework on defective product • Proactive response, not reactive

- 24. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 24@CoverosGene @rkenney525 Back to the Value Stream Again • When you find a problem, how much does it disrupt the pipeline? • time/effort expended on this build • time/effort expended on next build in flight • Does chaos ensue when problems are identified late in the pipeline? • surprise • commitment to release already made • will they ship it anyway? • Mark your highest risk late surprises with ↑ Task Wait Work Code Focused Quality Focused End Game

- 25. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 25@CoverosGene @rkenney525 Automation

- 26. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 26@CoverosGene @rkenney525 Automation isn’t Free • Committed Management • Budgeted Cost • time, effort, tools, training • Process • Dedicated Resources • not everyone can automate • learning curve • Realistic Expectations • 100% automated is unreasonable • benefits may not be immediately obvious

- 27. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 27@CoverosGene @rkenney525 Automated Deployment • Repeatable, reliable deployments • Test that through practice • Same deploy process everywhere • You will find more reasons to deploy

- 28. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 28@CoverosGene @rkenney525 Smoke Testing • After every deployment • Must be quick • Test the deployment, not the functionality • Focus on • basic signs of life • interfaces between systems • configuration settings

- 29. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 29@CoverosGene @rkenney525 Again with the Value Stream • If it can be automated, • what would it take to automate it? • would it remove bottlenecks? • could it be moved earlier? • would it reduce late surprises? • Where are the manual tests? • Strongly consider wait times • Mark manual with an M • Mark automated with A Task Wait Work Code Focused Quality Focused End Game

- 30. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 30@CoverosGene @rkenney525 Wrap Up

- 31. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 31@CoverosGene @rkenney525 #Coveros5 • Balance early rapid feedback with no late surprises • Do just enough of each type of testing early in the pipeline to determine if further testing is justified • Your pipeline should generally progress from code-focused to quality-focused to delivery-focused • Consider automation to help remove bottlenecks • Use value-stream mapping to help you understand your process

- 32. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 32@CoverosGene @rkenney525 Value Stream Back at Home • Per story/feature/task • Record each step as you do it • Record actual time • work time • wait time • Once a pattern emerges, make it visible • post it in the team room, online • keep it up-to-date • include it in retrospectives Task Wait Work

- 33. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 33@CoverosGene @rkenney525 Reading List • Learning to See: Value-Stream Mapping to Create Value and Eliminate MUDA, by Mike Rother and John Shook. ISBN-13: 978-0966784305 • The Principles of Product Development Flow: Second Generation Lean Product Development, by Donald G. Reinertsen. ISBN-13: 978-1935401001 • Implementing Lean Software Development: From Concept to Cash, by Mary and Tom Poppendieck. ISBN-13: 978-0321437389 • Toyota Kata: Managing People for Continuous Improvement, Adaptiveness, and Superior Results, by Mike Rother. ISBN-13: 978-0071635233 • The Art of Business Value, by Mark Schwartz. ISBN-13: 978-1942788041

- 34. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 34@CoverosGene @rkenney525 Upcoming Coveros Training Software Testing Week Atlanta December 3-7 • Selenium • Test Automation • Mobile Testing • DevOps • CTFL Testing Certification DevOps Week DC December 10-14 • Foundations of DevOps • DevOps Leadership • Docker and Kubernetes • Chef • Jenkins © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED.@CoverosGene @rkenney525 Use code COVGENE to save 20%

- 35. © COPYRIGHT 2018 COVEROS, INC. ALL RIGHTS RESERVED. 35@CoverosGene @rkenney525 Ryan Kenney ryan.kenney@coveros.com @rkenney525 Questions? Gene Gotimer gene.gotimer@coveros.com @CoverosGene

Editor's Notes

- Thanks for joining me today. My name is Gene Gotimer. I’m a senior architect with Coveros, and I’m going to talk about developing your delivery pipeline. Specifically, I’m going to talk about where to put different types of testing into your process to make sure your pipeline is efficient and effective. This is a workshop and involves audience participation, so I hope you’ll play along. I also hope this will be useful whether you are doing continuous delivery or not, whether you have a lot of automation or are doing things largely manually. Abstract The software delivery pipeline is the process of taking new or changed features from developers and getting them quickly delivered to the customers by getting the feature deployed into production. Testing within continuous delivery pipelines should be designed so the earliest tests are the quickest and easiest to run, giving developers the fastest feedback. Successive rounds of testing lead to increased confidence that the code is a viable candidate for production and that more expensive tests—be it time, effort, cost—are justified. Manual testing is performed toward the end of the pipeline, leaving computers to do as much work as possible before people get involved. Although it is tempting to arrange the delivery pipeline in phases (e.g., functional tests, then acceptance tests, then load and performance tests, then security tests), this can lead to serious problems progressing far down the pipeline before they are caught. Be prepared to discuss your pipeline, automated or not, and talk about what you think is slowing you down and what is keeping you up at night. In this interactive workshop, we will discuss how to arrange your tests so each round provides just enough testing to give you confidence that the next set of tests is worth the investment. We'll explore how to get the right types of testing into your pipeline at the right points so that you can determine quickly which builds are viable candidates for production. And we’ll explain some of the experiences we’ve had with clients, especially in the federal government, trying to build out delivery pipelines.

- 2

- 3

- Everyone that delivers code has a delivery pipeline. This is not only a Continuous Delivery thing or a DevOps thing. Doesn’t have to be automated. More automated is better, but we have a delivery pipeline anyway. Stages are not rigid phases, but rather at different points in the pipeline, in the SDLC, we will generally be more focused on certain outcomes than in other. We gradually shift our focus throughout the SDLC.

- The goal of the delivery pipeline is to build confidence that we have a viable candidate for production.

- We want to set up our pipeline so that the further you get through the pipeline, the more expensive the stage gates are to pass. Accordingly, the tests are generally harder to set up and usually take longer to run. That means feedback takes longer to get. Conversely, the closer to the front of the pipeline, the tests are quicker and easier and will be run far more often. And the feedback will be available that much quicker.

- Overall we want balance. Early on we’ll be willing to focus more on rapid feedback, later on we’ll need to be more sure that we haven’t missed anything. Defining a pipeline that works for you will involve managing and adjusting this balance.

- Jump to the punchline: this is what I generally think about in order to help me set that balance throughout the SDLC. If I wait until the end to do any type of testing, I am setting myself up so surprise. But everything can’t be done first.

- We do this exercise in our DevOps class, and I think it is a great way to start. Lots of different ways to do a value stream analysis. We’ll do a simple version that works for me. In fact, it is so simplified that we’ll drop most of the “value” piece and focus on the stream- the steps in the pipeline. Think about your current process. If you have many of them, pick one or make up a representative version. List out all the steps to take a feature from a user story or from the time a developer gets the task until it ends up in production. As you go through the steps, identify two times: the time spent working on completing the task and the average elapsed time spent waiting to do that work. Just rough estimates are fine. If it is something that happens every morning, avg. wait time is ½ day. If it happens every Friday, avg. wait time is ½ week.

- The goal of a value stream analysis is to find waste, which is anything that isn’t providing business value. Our goal is just to remind ourselves what our process really is. That will help show where the bottlenecks are, what we can or should automate. Thoughtworks suggests adding all of these steps to you CI engine, even if they are manual. Then you are reminded that a manual step has to take place to move to the next step.

- Code-focused: How are the developers doing? Are they using best practices? Is what they are building stable? Delivery-focused: What needs to happen to push this out the door? Last round of tests, compliance sign-off, packaging, documentation, announcements, marketing. Quality-focused: Everything in between those that helps us determine if the software is a viable candidate for production.

- Developer centric The automated build is critical. It has to happen so often that there is no doubt that it must be automated, no matter how easy it is to do manually. No questions asked– automate the build first. Develop automated unit tests next. Remember, we want to get a quick level of confidence that these changes represent a viable production candidate, that they have even a shot at going further, and that the time and effort of running further tests and checks is warranted. Code is checked in. That triggers an automated build, unit tests, static analysis, and packaging for deploy. If everything passes, deploy to test. If not, back to coding.

- A deploy to test triggers a smoke tests, integration tests, one or more rounds of functional tests, regression tests, possibly more deploys and smoke tests, and finally acceptance tests. The developers have not shifted modes – work on the commit stage is still going on. Since we got through the early quality gates, we are confident that running these next sets of tests is worth while even if they take more time. We can’t stop everything just to watch if the code gets through this next round of tests, but we know we are passed some of the stuff that would shut us down quick like compilation errors. The team is generally not waiting for this stage to pass before continuing work on other features, but will still make it a priority to resolve any problems that are found during this stage.

- We are confident we have a viable production candidate. This includes “packaging”, maybe announcements, marketing, documentation, other non-development-type stuff. Also, last round of tests, compliance sign-off. These tests might be more expensive: time, effort, manual inspection, monopolizing an environment for an extended time, could be outsourcing to cloud (e.g., LoadStorm, Sauce Labs) or bringing in specialists (e.g., security) so it could be actual money. But our goal is no surprises by this stage, so we should already have a reason to expect that these tests are going to succeed.

- Out-of-place would be a task that is only valuable if this is going to production, but done early in the acceptance stage. Or review static analysis results just before the end game. Or manual early. Or automated with automated evaluation late. (automated with manual eval late is fine) Think about what the goal of each task is and when it will be useful to act upon that information, or when it will no longer be useful.

- This is a pretty common way to look at these. But that last round of testing is a prime opportunity for surprise. And of course just saying they are in a different stage doesn’t actually change anything. We actually need to adjust the process some to help us reduce the chance of surprise.

- So we’ll do some security testing to make sure we feel good we will pass the one that is mandated for corporate compliance or regulation. We can watch our performance trends, either with a short test or maybe just watching how long the regression tests take to run, so that we are pretty sure we know how the full L&P test will turn out. We can start poking around with a quick once-over by a manual tester to give us a gut feel, and then let some more detailed testing continue while the other longer tests are going on.

- Maybe our earlier tests aren’t answering the same exact questions.

- Maybe we look at using more readily-available or easier-to-use tools.

- Shipping it anyway, especially when you never would have considered doing that if it had been found earlier.

- Not a cure-all, but necessary to get to continuous delivery

- Realistic Expectations 100% automated is an unreasonable goal May be unreachable May not be effective Automation benefits are seen after many cycles No obvious immediate payback Learning curve for automated testing is significant Record and playback has limited value Automation frameworks may not support your needs Not all testers/developers will be effective writing tests

- Practice by using it everywhere.

- But if we are doing deploys (automated or manual), there is no sense doing any other type of testing on the deployed system if we don’t know if the deployment was successful. How many times have you found all sorts of bugs, wondered how this code ever got out of development because it just doesn’t work, only to find out that a step in the deployment was left out or a configuration setting is wrong. You wasted all that time testing a defective product. Do just enough testing to be sure that further testing is justified. This is a great example.

- Cost to automate: effort, time, money, resources

- I hope everyone has some ideas on how they want to start changing or rearranging their process now.

- #Coveros5 is our company’s term for the 5 most important takeaways from a talk or blog post, and we send out tweets with that hashtag whenever we publish something.

- Also, consider doing an actual value-stream mapping exercise. We only touched the surface and didn’t get into the value part of the exercise. You’ll need everyone in the process (not just representatives) for a day or three to do it right.

- Software Testing Training Week • Atlanta December 3-7 • https://well.tc/DCAST-STWK A learning and networking event with five specialized courses for both novice and experienced test professionals, test and QA managers, developers, project and program managers, and more. Includes CTFL certification course for the new 2018 ISTQB syllabus. (Selenium, Test Automation, Mobile Testing, DevOps, CTFL testing certification) DevOps Week DC • Arlington/Rosslyn December 10-14 • https://well.tc/DCAST-DWK DevOps Week DC features a hands-on technical track for experienced test professionals, operations engineers, developers, and project managers, as well as a highly-interactive leadership track specifically designed for executives and organizational leaders. (DevOps Foundations, DevOps Leadership, Docker & Kubernetes, Chef, Jenkins) COVGENE - Save 20% Use code COVGENE to save 20% on all new DevOps Week and Software Testing Training Week registrations purchased through Nov. 30, 2018. This offer is only valid for new registrations and cannot be combined with other promotions.