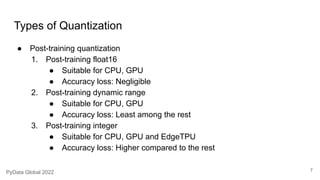

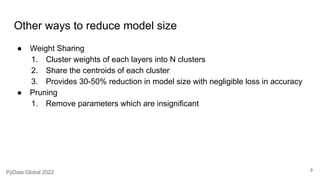

This document discusses deploying neural networks on microcontrollers. It notes that deploying on the edge is best for low latency, privacy, and network constraints. It describes training models at the edge using federated learning and inferencing at the edge. The document also discusses quantizing models to reduce size through techniques like post-training quantization, weight sharing, and pruning. It provides examples of different quantization methods and their effects on size and accuracy reduction. Benchmark results of Tiny MLPerf for edge devices are also referenced.

![Model quantization

What

Reduce the floating-point precision to store the model parameters from 32-bit to 16-bit or 8-bit

Why

● Reduce memory footprint

● Reduce memory usage

Drawbacks

● Reduced accuracy dependending on the type of quantization

● (Full integer quantization with int16 activations and int8 weights)[3]

● But how much size is reduced

● All most 4x reduction in models with primarily convolution layers[5]

5

PyData Global 2022](https://image.slidesharecdn.com/pydataglobal2022-thingsilearnedwhilerunningneuralnetworksonmicrocontroller-231208083855-b5e6f949/85/PyData-Global-2022-Things-I-learned-while-running-neural-networks-on-microcontroller-5-320.jpg)

![Benchmarks

1. Benchmark results of the recent Tiny MLPerf v0.7 [Link][Paper]

2. EdgeTPU benchmark result of different neural net architectures [Link]

10

PyData Global 2022](https://image.slidesharecdn.com/pydataglobal2022-thingsilearnedwhilerunningneuralnetworksonmicrocontroller-231208083855-b5e6f949/85/PyData-Global-2022-Things-I-learned-while-running-neural-networks-on-microcontroller-10-320.jpg)