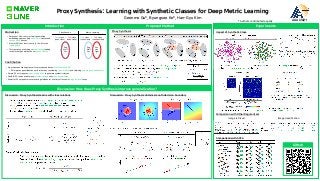

[AAAI2021] Proxy Synthesis: Learning with Synthetic Classes for Deep Metric Learning (poster)

This is the poster for the paper "Proxy Synthesis: Learning with Synthetic Classes for Deep Metric Learning", accepted at AAAI 2021. Written by Geonmo Gu*, Byungsoo Ko*, and Han-Gyu Kim (* authors contributed equally) @NAVER/LINE Vision.

- Arxiv: https://arxiv.org/abs/2103.15454
- Github: https://github.com/navervision/proxy-synthesis
- Presentation video: https://www.youtube.com/watch?v=v_KYo2Crbig

Proxy Synthesis: Learning with Synthetic Classes for Deep Metric Learning
Geonmo Gu*, Byungsoo Ko*, Han-Gyu Kim (* Authors contributed equally.)
AAAI2021

Introduction

Contribution
• We propose a novel regularizer for proxy-based losses: Proxy Synthesis (PS).
• PS improves generalization performance by considering class relations and obtaining a smooth decision boundary.
• Simple: PS only requires linear interpolation to generate synthetic classes.
• Flexible: PS can be used with any softmax variant and proxy-based loss.
• Powerful: PS outperforms existing methods for a variety of losses in image retrieval tasks.

Motivation
• Purpose of deep metric learning (DML): construct a well-generalized embedding space on both seen (train) classes and unseen (test) classes.
• Most DML loss functions try to fit the training data well.
• This can cause overfitting to seen classes, leading to a lack of generalization on unseen classes.

[Figure: Classification vs. Metric Learning. In classification, train class = test class (both seen classes). In metric learning, train class ≠ test class (seen vs. unseen classes). Example class labels in the figure: Dog, Wolf, Cat, …; Fox, Lion, Tiger, ….]

Remaining poster panels (figures and tables are not captured in this transcript):
• Proposed Method: Proxy Synthesis
• Experiments: Impact of Synthetic Class
• Experiments: Comparison with SOTA
• Experiments: Comparison with Other Regularizers (image retrieval, image classification)
• Discussion: How does Proxy Synthesis improve generalization?
• Discussion: Proxy Synthesis learns with class relations
• Discussion: Proxy Synthesis obtains smooth decision boundary
• Github
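The poster states that PS only requires linear interpolation to generate synthetic classes. The following is a minimal sketch of that idea in plain Python, not the authors' implementation. It assumes that a synthetic class is formed by interpolating two embeddings and their two class proxies with the same coefficient. The helper names (`lerp`, `l2_normalize`, `synthesize_class`), the L2 normalization step, the Beta-distributed coefficient, and the toy 2-D vectors are all illustrative assumptions that do not appear in the poster text.

```python
import math
import random


def lerp(a, b, lam):
    """Linearly interpolate two equal-length vectors: lam * a + (1 - lam) * b."""
    return [lam * x + (1.0 - lam) * y for x, y in zip(a, b)]


def l2_normalize(v):
    """Scale a vector to unit L2 norm (assumed step; leaves a zero vector unchanged)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm > 0 else list(v)


def synthesize_class(emb_i, proxy_i, emb_j, proxy_j, lam):
    """Build one synthetic (embedding, proxy) pair from two real classes.

    The same coefficient `lam` is applied to the embeddings and to the
    proxies, so the synthetic embedding stays paired with its synthetic proxy.
    """
    syn_emb = l2_normalize(lerp(emb_i, emb_j, lam))
    syn_proxy = l2_normalize(lerp(proxy_i, proxy_j, lam))
    return syn_emb, syn_proxy


# Toy 2-D embeddings and proxies for two hypothetical classes.
emb_dog, proxy_dog = [1.0, 0.0], [0.9, 0.1]
emb_wolf, proxy_wolf = [0.0, 1.0], [0.1, 0.9]

# Assumption: draw the coefficient from a Beta distribution, as in mixup-style methods.
rng = random.Random(0)
lam = rng.betavariate(0.4, 0.4)

syn_emb, syn_proxy = synthesize_class(emb_dog, proxy_dog, emb_wolf, proxy_wolf, lam)
print("lambda:", lam)
print("synthetic embedding:", syn_emb)
print("synthetic proxy:", syn_proxy)
```

In a proxy-based loss, such a synthetic pair would be appended to the real embeddings and proxies and treated as one additional class. How the pairs are selected and weighted in the actual method is described in the paper and the linked repository.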