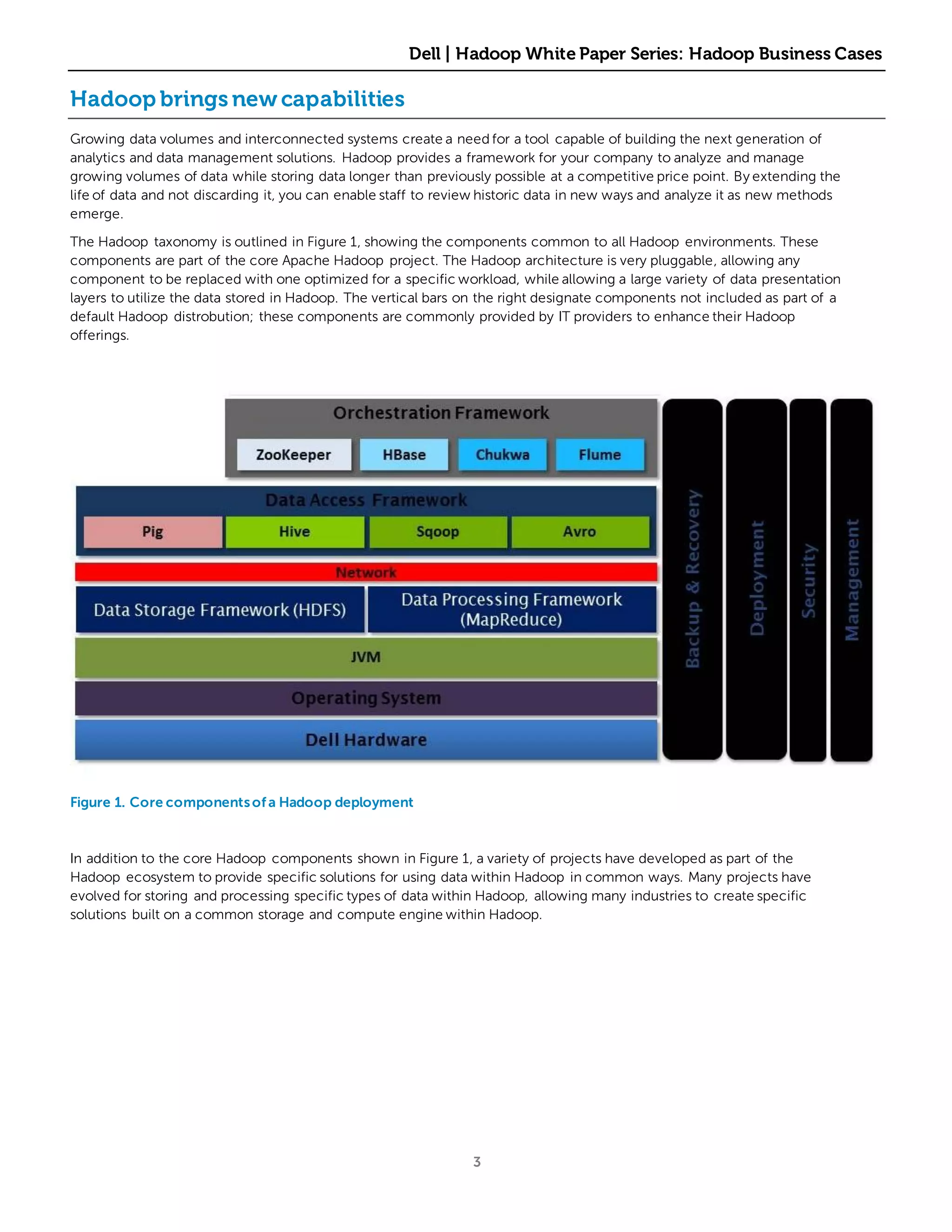

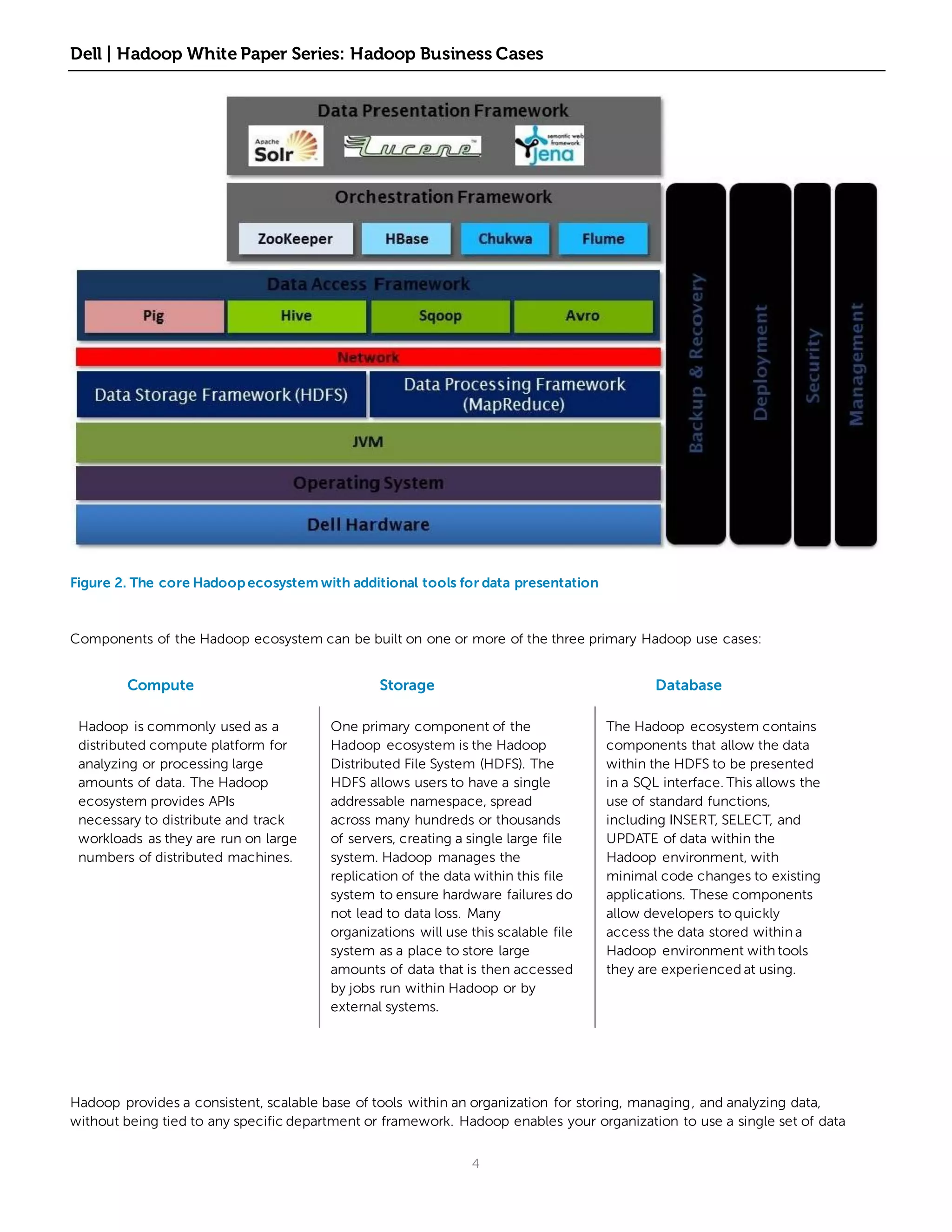

Hadoop provides a framework that lets companies analyze and manage growing volumes of data at a lower cost than traditional solutions. It also allows data to be retained for longer periods, which makes it possible to run new analyses on that data over time. Hadoop deployments typically begin as a small pilot within a single department and then expand as other departments recognize its value for analytics and for managing large datasets. The infrastructure tends to follow a similar path: clusters often start on virtual machines for testing and move to dedicated physical hardware as data volumes and performance requirements grow. Understanding this typical evolution can help companies plan and manage Hadoop's adoption and growth across their organization.