MongoDB DBA Certificate


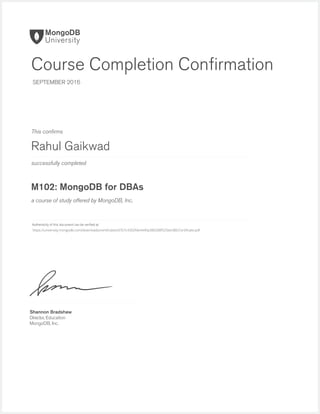

This document confirms that Rahul Gaikwad successfully completed the M102: MongoDB for DBAs course offered by MongoDB, Inc. in September 2016. The authenticity of the certificate can be verified by visiting the URL provided.

Recommended

MongoDB Course

This document confirms that Michael Khegai successfully completed the M102: MongoDB for DBAs course offered by MongoDB, Inc. in July 2016. The authenticity of the completion confirmation can be verified by accessing the URL provided, which contains a downloadable certificate. Shannon Bradshaw, Director of Education at MongoDB, Inc., issued the confirmation.

Certificate

This document confirms that Charles Daringer successfully completed the M101J: MongoDB for Java Developers course offered by MongoDB, Inc. in July 2016. Shannon Bradshaw, Director of Education at MongoDB, Inc., issued the course completion confirmation.

Certificate

This document confirms that Pete Watcharawit successfully completed the M101JS course on MongoDB for Node.js Developers offered by MongoDB, Inc. in July 2016. The authenticity of the certificate can be verified by visiting the URL provided.

Certificate

This document confirms that Alexander Burkovskiy successfully completed the M101J: MongoDB for Java Developers course offered by MongoDB, Inc. in May 2016. Shannon Bradshaw, Director of Education at MongoDB, Inc., signed the course completion confirmation.

Certificate

This document confirms that Roman Baumgaertner successfully completed the M101J: MongoDB for Java Developers course offered by MongoDB, Inc. in July 2016. Shannon Bradshaw, Director of Education at MongoDB, Inc., signed the course completion confirmation.

Certificate

This document confirms that Alonza Thompson successfully completed the M101JS course on MongoDB for Node.js Developers in May 2016. The authenticity of the completion confirmation can be verified by accessing the provided URL.

Hadoop_Fundamental

Hadoop Fundamentals I is a course taught by instructor Raul Chong on July 23, 2015. The course material was created by Rahul Gaikwad and provides an introduction to the fundamentals of Hadoop. The document lists the course title, creator, instructor, and date but does not provide any other details about the content or objectives of the course.

MongoDB Atlas Certificate

Rahul Gaikwad successfully completed the M123: Getting Started with MongoDB Atlas course offered by MongoDB, Inc. in September 2016. The authenticity of this course completion confirmation can be verified by visiting the URL provided, which contains Rahul's certificate.

Big SQL V4

Rahul Gaikwad presented on SQL Access for Hadoop (Big SQL v4) on April 9, 2016. Big SQL v4 provides SQL access to data stored in Hadoop for analytics and business intelligence. The presentation discussed how Big SQL allows users to query data in Hadoop using standard SQL.

Accessing Hadoop Data Using Hive

Hive provides an SQL-like interface (HiveQL) for querying and analyzing large datasets stored in Hadoop. Users familiar with SQL can write queries that Hive executes as MapReduce jobs, which supports data summarization, ad hoc querying, and analysis of data held in Hadoop clusters and data warehouses.

Big_Data_Fundamental

Rahul Gaikwad submitted a Big Data Fundamentals assignment to instructor Raul Chong on July 23, 2015.

Hadoop_Fundamental_2

Hadoop is a framework for the reliable, scalable, distributed storage and processing of large datasets across clusters of commodity hardware. It relies on simple programming models, most notably MapReduce.
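
To make the MapReduce programming model concrete, here is a minimal single-process Python sketch of the classic word-count job. It only simulates the map, shuffle, and reduce phases in memory; it does not use Hadoop's actual APIs, and the function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Mapper: emit (word, 1) for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle/sort: group all emitted values by key, as the framework would."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reducer: combine all values for one key into a single count."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    # Each input record is mapped independently; on a real cluster these
    # map tasks would run in parallel on different nodes.
    mapped = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle_phase(mapped)
    # Each key group is reduced independently (also parallelizable).
    return dict(reduce_phase(k, vs) for k, vs in grouped.items())


if __name__ == "__main__":
    docs = ["Hadoop stores data", "Hadoop processes data"]
    print(word_count(docs))
    # {'data': 2, 'hadoop': 2, 'processes': 1, 'stores': 1}
```

The point of the split is that mappers and reducers never share state, which is what lets the framework spread them across commodity machines.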

Using HBase for Real Time Access

HBase is an open-source, non-relational, distributed database, modeled after Google's Bigtable and written in Java, that provides real-time read/write access to large datasets stored on commodity hardware. In this document, dated April 9, 2016, Rahul Gaikwad discusses using HBase for real-time access to big data.
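
HBase, like Bigtable, addresses each value by row key, column (family:qualifier), and timestamp, keeps rows sorted by row key, and by default returns the newest version of a cell. The Python class below is a hypothetical, in-memory toy model of that data model only; it is not the HBase client API and ignores regions, distribution, and persistence.

```python
import bisect
from typing import Dict, List, Optional, Tuple


class ToyTable:
    """Hypothetical in-memory model of an HBase/Bigtable-style table."""

    def __init__(self, families: List[str]) -> None:
        self.families = set(families)
        # row key -> "family:qualifier" -> [(timestamp, value), ...], newest first
        self.rows: Dict[bytes, Dict[str, List[Tuple[int, bytes]]]] = {}
        # Row keys kept in lexicographic byte order, so range scans are cheap.
        self.sorted_keys: List[bytes] = []

    def put(self, row: bytes, column: str, value: bytes, ts: int) -> None:
        family = column.split(":", 1)[0]
        if family not in self.families:
            raise KeyError(f"unknown column family: {family}")
        if row not in self.rows:
            bisect.insort(self.sorted_keys, row)
            self.rows[row] = {}
        versions = self.rows[row].setdefault(column, [])
        # Writing the same timestamp again replaces that version.
        versions[:] = [tv for tv in versions if tv[0] != ts]
        versions.append((ts, value))
        versions.sort(key=lambda tv: tv[0], reverse=True)

    def get(self, row: bytes, column: str) -> Optional[bytes]:
        """Return the newest version of a cell, or None if it does not exist."""
        versions = self.rows.get(row, {}).get(column)
        return versions[0][1] if versions else None

    def scan(self, start: bytes, stop: bytes) -> List[bytes]:
        """Return row keys in the half-open range [start, stop), in sorted order."""
        lo = bisect.bisect_left(self.sorted_keys, start)
        hi = bisect.bisect_left(self.sorted_keys, stop)
        return self.sorted_keys[lo:hi]


if __name__ == "__main__":
    table = ToyTable(["info"])
    table.put(b"user#002", "info:name", b"Asha", ts=1)
    table.put(b"user#001", "info:name", b"Ravi", ts=1)
    table.put(b"user#001", "info:name", b"Ravi K", ts=2)
    print(table.get(b"user#001", "info:name"))  # b'Ravi K'
    print(table.scan(b"user#000", b"user#999"))  # [b'user#001', b'user#002']
```

Sorted row keys are why row-key design matters in HBase: a well-chosen key turns a lookup into a short range scan.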

Moving Data into Hadoop

Moving data into Hadoop can be done in several ways. Common methods include Sqoop, for transferring data between Hadoop and relational databases; Flume, for collecting log data and other streaming sources; and DistCp, for large-scale copying of data between Hadoop clusters or from local filesystems. The right method depends on the data source and the performance requirements.

Spark_Fundamental

Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Scala, Java, Python, and R, and an optimized engine that supports general computation graphs for data analysis. It also supports a wide range of use cases, including streaming, SQL, machine learning, and graph processing.

Oozie

Oozie is a workflow scheduler system for managing Hadoop jobs. It coordinates jobs such as Hive queries, Pig Latin scripts, and MapReduce jobs. Oozie workflows define the dependencies between jobs, allowing them to run sequentially or in parallel.
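
Oozie workflow jobs are directed acyclic graphs of actions, written in XML with fork/join nodes for parallel branches. As an illustration of the dependency idea only (not Oozie's API), the Python sketch below uses the standard library's `graphlib` (Python 3.9+) to group a hypothetical set of jobs into waves: jobs within a wave could run in parallel, while the waves run one after another.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set


def execution_waves(deps: Dict[str, Set[str]]) -> List[List[str]]:
    """Group jobs into waves; each job depends only on jobs in earlier waves."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the workflow has a cycle
    waves: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for deterministic output
        waves.append(ready)
        sorter.done(*ready)  # pretend every job in this wave succeeded
    return waves


if __name__ == "__main__":
    # Hypothetical workflow: job name -> set of jobs it must wait for.
    workflow = {
        "ingest": set(),
        "clean_hive": {"ingest"},
        "aggregate_pig": {"ingest"},
        "report_mapreduce": {"clean_hive", "aggregate_pig"},
    }
    for i, wave in enumerate(execution_waves(workflow), start=1):
        print(i, wave)
```

Here `clean_hive` and `aggregate_pig` land in the same wave, which corresponds to a fork/join pair in an Oozie workflow.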

MongoDB DBA Certificate

- 1. Course Completion Confirmation, SEPTEMBER 2016. This confirms that Rahul Gaikwad successfully completed M102: MongoDB for DBAs, a course of study offered by MongoDB, Inc. Shannon Bradshaw, Director, Education, MongoDB, Inc. Authenticity of this document can be verified at https://university.mongodb.com/downloads/certificates/d767c4302fde4440a380288f525be386/Certificate.pdf