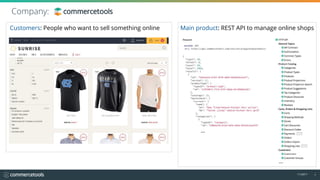

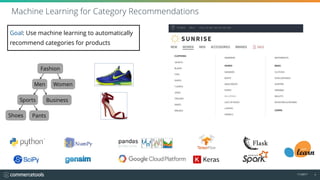

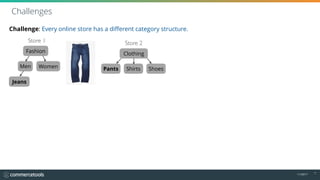

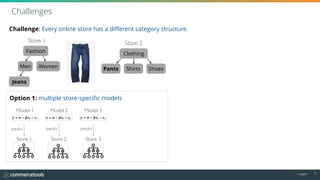

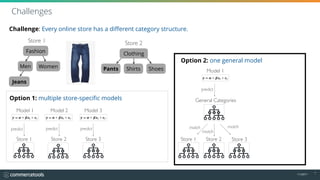

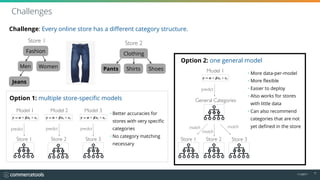

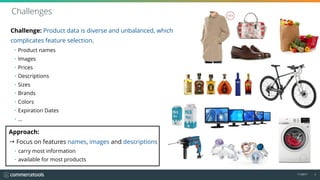

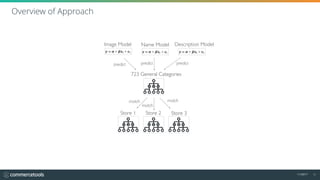

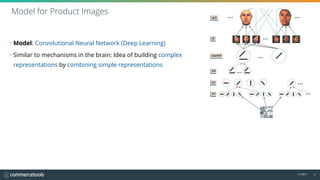

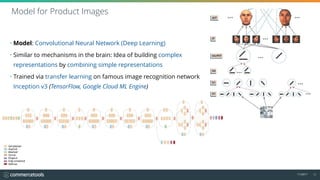

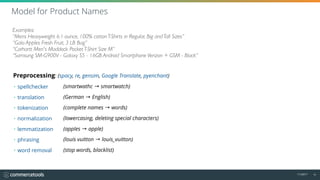

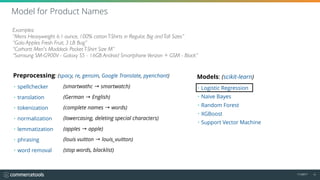

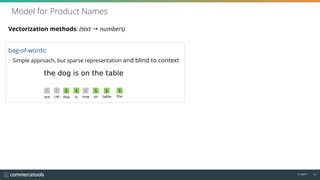

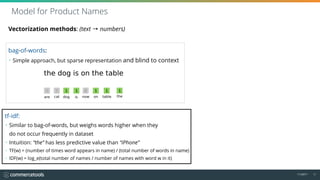

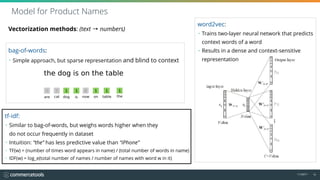

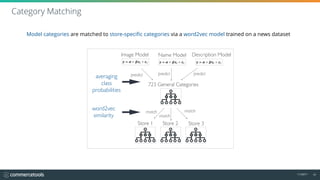

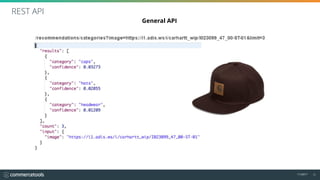

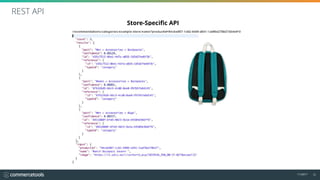

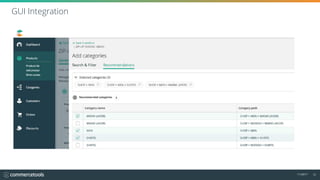

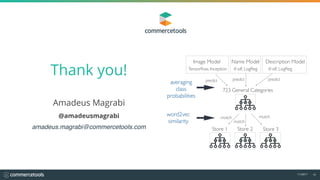

The document describes a machine learning approach for automatically recommending product categories in online shops. A central challenge is that every store uses its own category structure. The approach combines a convolutional neural network (CNN) with several preprocessing steps for product data, including text normalization and vectorization with TF-IDF and word2vec. The goal is to make categorization more accurate and more flexible, so that useful category recommendations are possible even for stores with little data of their own.
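The following is a minimal Python sketch of the preprocessing stage: it normalizes product titles and turns them into TF-IDF vectors. Several details are assumptions for illustration and do not come from the source. These include the normalization rules (lowercasing and keeping only runs of letters and digits), the TF-IDF variant (term count divided by title length, multiplied by log(N / df)), and the example titles. A production pipeline would more likely use a library implementation such as scikit-learn's `TfidfVectorizer`.

```python
import math
import re
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase a product title and split it into tokens of letters/digits.

    Assumed normalization rules for illustration: punctuation and
    underscores act as separators, and Unicode letters are kept.
    """
    return re.findall(r"[^\W_]+", text.lower())


def tf_idf(docs: list[list[str]]) -> list[dict[str, float]]:
    """Compute one sparse TF-IDF vector (term -> weight) per tokenized document.

    Assumed variant: tf = count / document length, idf = log(N / df).
    Terms that occur in every document therefore get weight 0.
    """
    n_docs = len(docs)
    # Document frequency: in how many documents each term appears.
    df = Counter()
    for tokens in docs:
        df.update(set(tokens))

    vectors = []
    for tokens in docs:
        counts = Counter(tokens)
        length = len(tokens) or 1  # avoid division by zero for empty titles
        vectors.append({
            term: (count / length) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return vectors


if __name__ == "__main__":
    # Hypothetical product titles, not taken from the source.
    titles = [
        "Running Shoes, Size 42",
        "Running Jacket - Waterproof",
        "Kitchen Knife, Stainless Steel",
    ]
    docs = [normalize(t) for t in titles]
    for title, vec in zip(titles, tf_idf(docs)):
        best = max(vec, key=vec.get)
        print(f"{title!r}: highest-weighted term = {best}")
```

Because "running" appears in two of the three titles, its IDF is lower than that of terms unique to one title, so it carries less weight when a title's vector is compared against category examples. Vectors like these, or word2vec embeddings, would then serve as input to the classifier.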