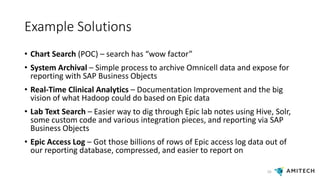

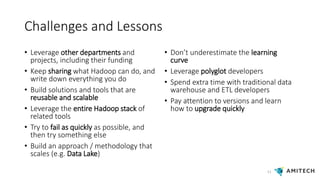

This document discusses building a Hadoop capability within an organization. It recommends creating a sense of urgency around opportunities that are not currently possible, building a guiding coalition of potential users and partners, and formalizing use cases. It also suggests enlisting an enthusiastic volunteer team, starting small to remove barriers, generating short-term wins, sustaining acceleration by communicating successes, and instituting Hadoop as the default platform. Example proofs of concept include search, archival, real-time analytics, and log analysis. Challenges discussed include leveraging other teams, documenting work, building reusable solutions, using the full Hadoop stack, failing quickly and learning from it, and building developer skills.