Computer Vision (CV) based Raspberry Pi robot vehicle

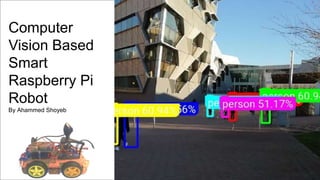

- 1. Computer Vision Based Smart Raspberry Pi Robot, by Ahammed Shoyeb

- 3. CV-Based Sensors
  - Provide richer feedback through image processing than reflection-based sensors
  - An image contains far more feedback information than a reflection reading
  - Cheaper to procure
  - Easy to maintain, owing to a minimal number of non-contact parts
  - Low power consumption

- 4. Aim of the Project: this project aims to develop an efficient, deployable, Computer Vision (CV) based autonomous mobile robot vehicle powered by a Raspberry Pi 4.

- 5. Project Objectives & Process Chronology (diagram): virtual robot simulation test bed; PID controller (raw code); PID controller commissioned for deployment

- 6. Process Chronology (diagram, hardware added): Raspberry Pi 4 with heatsink case; DRV84 dual-bridge motor controller; webcam

- 7–8. Process Chronology (diagram, vision tasks added): detection; recognition; global coordination; distance measurement
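The process chronology moves a PID controller from the virtual test bed to the deployed robot. The slides give no gains and no error definition, so what follows is a minimal sketch under stated assumptions: the error is taken to be the target's normalized horizontal offset from the image centre, the gains are placeholders to be tuned, and `wheel_speeds` is a hypothetical differential-drive mixer, not the author's code.

```python
class PID:
    """Textbook discrete PID controller (positional form)."""

    def __init__(self, kp: float, ki: float, kd: float) -> None:
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the very first sample

    def update(self, error: float, dt: float) -> float:
        """Return the control output for an error sampled dt seconds after the previous one."""
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def wheel_speeds(steering: float, base_speed: float = 0.5) -> tuple[float, float]:
    """Hypothetical mixer: a positive steering value speeds up the left side to turn right.

    Each wheel command is clamped to [-1, 1].
    """
    left = max(-1.0, min(1.0, base_speed + steering))
    right = max(-1.0, min(1.0, base_speed - steering))
    return left, right
```

In use, each camera frame would yield an error such as `(target_x - frame_width / 2) / frame_width`, which is fed to `PID.update` with the frame interval as `dt`; the output then goes to `wheel_speeds`. Keeping the same class in simulation and on the Raspberry Pi is what lets gains tuned on the test bed carry over to deployment.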

- 9. Background Theory: passive vision sensor-based guidance system (Lee and Hung 2013: 202)

- 10. Background Theory: Q-learning-assisted image-based visual servoing (IBVS) hybrid robotic guidance implementation (Wang and Silva 2009: 364)
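The slides do not describe Wang and Silva's Q-learning layer, but the IBVS part it builds on has a standard textbook form. As a reference sketch, let $\mathbf{s}$ be the measured image features, $\mathbf{s}^{*}$ their desired values, $\lambda > 0$ a gain, $\mathbf{v}_c = (v_x, v_y, v_z, \omega_x, \omega_y, \omega_z)$ the camera velocity, and $\widehat{\mathbf{L}}_{\mathbf{e}}^{+}$ the pseudo-inverse of an estimated interaction matrix. The classic control law, and the interaction matrix of one normalized image point $(x, y)$ at depth $Z$, are:

```latex
\begin{aligned}
\mathbf{e}(t) &= \mathbf{s}(t) - \mathbf{s}^{*}, \qquad
\dot{\mathbf{e}} = \mathbf{L}_{\mathbf{e}}\,\mathbf{v}_c, \\
\mathbf{v}_c &= -\lambda\,\widehat{\mathbf{L}}_{\mathbf{e}}^{+}\,\mathbf{e}
\quad\Longrightarrow\quad \dot{\mathbf{e}} \approx -\lambda\,\mathbf{e}, \\
\mathbf{L}_{\mathbf{x}} &=
\begin{bmatrix}
-1/Z & 0 & x/Z & xy & -(1 + x^{2}) & y \\
0 & -1/Z & y/Z & 1 + y^{2} & -xy & -x
\end{bmatrix}
\end{aligned}
```

The approximation $\dot{\mathbf{e}} \approx -\lambda\,\mathbf{e}$ holds only when $\mathbf{L}_{\mathbf{e}}\widehat{\mathbf{L}}_{\mathbf{e}}^{+}$ is close to the identity, so the error decays roughly exponentially. A wheeled robot can produce only some of the six velocities, so only the corresponding columns would be kept; which ones depends on how the webcam is mounted, which the slides do not state.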

- 11. Background Theory: edge detection and localization-based CV guidance system (Lin 2017)
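The slides do not say which edge detector Lin (2017) uses, so the following sketch shows generic Sobel edge detection, a standard technique, in pure Python. It is not Lin's method. `edge_columns` and its threshold are hypothetical additions showing how edge strength could flag where a wall boundary lies in the frame. On the robot itself, OpenCV's `cv2.Sobel` or `cv2.Canny` would normally replace the loops.

```python
import math

# Standard 3x3 Sobel kernels.
GX = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]   # responds to vertical edges (left/right change)
GY = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]   # responds to horizontal edges (top/bottom change)


def sobel_magnitude(img):
    """Gradient magnitude of a grayscale image given as a list of rows.

    Border pixels stay 0 because the 3x3 window does not fit there.
    """
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            gx = gy = 0.0
            for i in range(3):
                for j in range(3):
                    p = img[r + i - 1][c + j - 1]
                    gx += GX[i][j] * p
                    gy += GY[i][j] * p
            out[r][c] = math.hypot(gx, gy)
    return out


def edge_columns(magnitude, threshold):
    """Hypothetical helper: columns holding at least one pixel stronger than threshold."""
    width = len(magnitude[0])
    return [c for c in range(width) if any(row[c] > threshold for row in magnitude)]
```

For a 4 x 5 image whose left two columns are dark (0) and right three bright (100), `edge_columns(sobel_magnitude(img), 200)` returns `[1, 2]`, the two columns straddling the intensity step.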

- 12. Proposed Solution: CV-based guidance system structure

- 14. Proposed Hardware: Raspberry Pi 4B (4 GB)

- 15. Programming Interface: the Python 3 programming language was used with the Spyder 3 IDE.

- 17. Python 3 and V-REP Communication Interface

- 22. Identifying the Wall and Robot Localization (flowchart)
  - Start → find object coordinate → check object location:
    - Location > 1 and left → left pair of wheels clockwise
    - Location > 1 and right → right pair of wheels clockwise
    - Location > 1 and centre → both pairs of wheels clockwise
    - Location < 1 → left pair of wheels anti-clockwise
  - Program terminated → reset and end
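The flowchart's branches can be sketched as a small decision function. This is a reading of the diagram, not the author's code: the slide gives neither the unit of "Location" nor how left, centre, and right are decided. The `Side` enum, the hypothetical `side_of` classifier, and its `centre_band` parameter are all assumptions. The case `location == 1`, which the flowchart does not cover, is mapped to termination, which is also an assumption.

```python
from enum import Enum


class Side(Enum):
    LEFT = "left"
    CENTRE = "centre"
    RIGHT = "right"


def side_of(x, frame_width, centre_band=0.2):
    """Hypothetical: classify an object's horizontal pixel coordinate as left, centre or right.

    centre_band is the fraction of the frame width treated as 'centre'.
    """
    offset = x / frame_width - 0.5  # -0.5 at the left edge, +0.5 at the right edge
    if offset < -centre_band / 2:
        return Side.LEFT
    if offset > centre_band / 2:
        return Side.RIGHT
    return Side.CENTRE


def wheel_command(location, side):
    """Map the flowchart branches to the wheel actions named on the slide."""
    if location < 1:
        return "left pair anti-clockwise"
    if location > 1:
        if side is Side.LEFT:
            return "left pair clockwise"
        if side is Side.RIGHT:
            return "right pair clockwise"
        return "both pairs clockwise"
    # Assumption: location == 1 is not covered by the flowchart; treat it as "terminate".
    return "terminate"
```

For a 640-pixel-wide frame, an object at x = 100 with location 2.0 gives `wheel_command(2.0, side_of(100, 640))`, which returns `"left pair clockwise"`.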

- 23. Utilizing a CNN for Navigation: a Convolutional Neural Network (CNN) was used to classify each detected object as either a target or an obstacle. This classification guides the robot either to move forward toward a target or to navigate around an obstacle.
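The slides do not give the CNN's architecture, framework, or label order, so the network itself is not shown here. The sketch below covers only the step described on the slide: turning the classifier's output into a navigation decision. It assumes the network emits two raw scores (logits) in the order (target, obstacle). The confidence threshold and the `"hold"` fallback are hypothetical additions.

```python
import math

CLASSES = ("target", "obstacle")  # assumed order of the CNN's output units


def softmax(logits):
    """Numerically stable softmax: subtract the largest logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def navigation_action(logits, min_confidence=0.6):
    """Map the CNN's raw scores to a high-level navigation decision."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    if probs[best] < min_confidence:
        return "hold"  # hypothetical: too uncertain, wait for the next frame
    return "approach" if CLASSES[best] == "target" else "avoid"
```

With logits `[2.0, 0.0]` the target probability is about 0.88, so the result is `"approach"`. With `[0.1, 0.0]` neither class reaches 0.6, so the robot holds. The threshold keeps one ambiguous frame from flipping the robot between approaching and avoiding.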

- 24. Project Outcome
  - The guidance system can identify targets and navigate to them
  - It can identify obstacles (walls) and navigate around them
  - It can measure target and obstacle distances, and can therefore localize its own coordinates
  - It follows a red blob while keeping a distance of 1 foot
  - It navigates around walls
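The red-blob behaviour combines colour detection, distance measurement, and a distance set point. The slides do not describe how each step is done, so this is a minimal pure-Python sketch using common techniques under stated assumptions. The colour thresholds, the blob's real width, the camera focal length in pixels, and the gain are all hypothetical. The distance comes from the pinhole-camera similar-triangles relation, which is not necessarily the author's method. On the Raspberry Pi, OpenCV's `cv2.inRange` and `cv2.moments` would normally replace the pixel loops.

```python
ONE_FOOT_M = 0.3048  # 1 ft in metres (exact conversion)


def red_blob(image, r_min=150, gb_max=80):
    """Locate red pixels in an RGB image given as rows of (r, g, b) tuples.

    Returns (centroid_x, centroid_y, pixel_width), or None when no pixel passes
    the (hypothetical) colour threshold.
    """
    xs, ys = [], []
    for y, row in enumerate(image):
        for x, (r, g, b) in enumerate(row):
            if r >= r_min and g <= gb_max and b <= gb_max:
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    pixel_width = max(xs) - min(xs) + 1  # horizontal extent of the blob in pixels
    return sum(xs) / len(xs), sum(ys) / len(ys), pixel_width


def distance_from_width(pixel_width, real_width_m, focal_length_px):
    """Pinhole-camera estimate by similar triangles: Z = f * W / w."""
    return focal_length_px * real_width_m / pixel_width


def approach_speed(distance_m, setpoint_m=ONE_FOOT_M, gain=1.5, max_speed=1.0):
    """Proportional forward command: positive drives toward the blob, negative backs off."""
    command = gain * (distance_m - setpoint_m)
    return max(-max_speed, min(max_speed, command))
```

For example, a blob assumed to be 0.1 m wide that appears 50 pixels wide to a camera with an assumed 500-pixel focal length is estimated at 1.0 m away. `approach_speed(1.0)` is then positive, so the robot moves forward until the estimate reaches one foot. The blob's `centroid_x` can feed the steering side of the controller.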

- 25. Project Outcome

- 26. Project Timeline

- 27. Project Process Reflection
  - The field of advanced robotics was explored
  - Python's robotics capabilities were explored
  - Robotic hardware integration was practiced
  - A real-life entrepreneurship option was explored

- 28. Project Conclusion
  - The project aim and objectives have been accomplished.
  - A computer vision based robotic guidance system has been designed and deployed.
  - Its performance has been analyzed and documented.
  - Future work will make the operational parameters more precise and make the system deployable across multiple programming environments.

- 30. List of References
  - Bellis, M. (3 July 2019) The Definition of a Robot [online] available from <www.thoughtco.com/definition-of-a-robot-1992364> [11/10/2019]
  - Billingsley, J. and Brett, P. (eds.) (2015) Machine Vision and Mechatronics in Practice. 1st edn.
  - Chaumette, F. (2015) Potential Problems of Unstability and Divergence in Image-Based and Position-Based Visual Servoing.
  - Chesi, G. and Hung, Y. S. (2007) 'Global Path-Planning for Constrained and Optimal Visual Servoing'. IEEE Transactions on Robotics 23 (5), 1050–1060
  - Dastur, J. and Khawaja, A. (2010) Robotic Arm Actuation with 7 DOF using Haar Classifier Gesture Recognition.
  - Di Castro, M., Almagro, C. V., Lunghi, G., Marin, R., Ferre, M., and Masi, A. (2018) Tracking-Based Depth Estimation of Metallic Pieces for Robotic Guidance.