Discussion of BigBench: A Proposed Industry Standard Performance Benchmark for Big Data

This presentation was given by Meikel Poess at TPC TC'14 on September 5, 2014 in Hangzhou, China. Full paper and more information at: http://msrg.org/papers/TPCTC2014-Rabl

Abstract: Enterprises perceive a huge opportunity in mining information that can be found in big data. New storage systems and processing paradigms are allowing for ever larger data sets to be collected and analyzed. The high demand for data analytics and rapid development in technologies have led to a sizable ecosystem of big data processing systems. However, the lack of established, standardized benchmarks makes it difficult for users to choose the appropriate systems that suit their requirements. To address this problem, we have developed the BigBench benchmark specification. BigBench is the first end-to-end big data analytics benchmark suite. In this paper, we present the BigBench benchmark and analyze the workload from a technical as well as a business point of view. We characterize the queries in the workload along different dimensions, according to their functional characteristics, and also analyze their runtime behavior. Finally, we evaluate the suitability and relevance of the workload from the point of view of enterprise applications, and discuss potential extensions to the proposed specification in order to cover typical big data processing use cases.

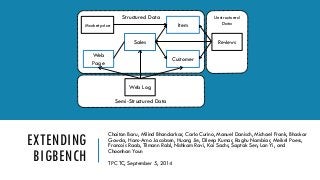

- 1. EXTENDING BIGBENCH
  - Chaitan Baru, Milind Bhandarkar, Carlo Curino, Manuel Danisch, Michael Frank, Bhaskar Gowda, Hans-Arno Jacobsen, Huang Jie, Dileep Kumar, Raghu Nambiar, Meikel Poess, Francois Raab, Tilmann Rabl, Nishkam Ravi, Kai Sachs, Saptak Sen, Lan Yi, and Choonhan Youn
  - TPC TC, September 5, 2014
  - Figure (data model diagram): Unstructured Data, Semi-Structured Data, Structured Data; entities Sales, Customer, Item, Marketprice, Web Page, Web Log, Reviews

- 2. THE BIGBENCH PROPOSAL
  - End-to-end benchmark
    - Application level
    - Based on a product retailer (TPC-DS)
    - Focused on parallel DBMS and MR engines
  - History
    - Launched at 1st WBDB, San Jose
    - Published at SIGMOD 2013
    - Spec in WBDB proceedings 2012 (queries & data set)
    - Full kit at WBDB 2014
  - Collaboration with industry & academia
    - First: Teradata, University of Toronto, Oracle, InfoSizing
    - Now: bankmark, CLDS, Cisco, Cloudera, Hortonworks, InfoSizing, Intel, Microsoft, MSRG, Oracle, Pivotal, SAP
  - 05.09.2014 EXTENDING BIGBENCH 2

- 3. DATA MODEL
  - Structured: TPC-DS + market prices
  - Semi-structured: website click-stream
  - Unstructured: customers' reviews
  - Figure (data model diagram): Unstructured Data, Semi-Structured Data, Structured Data; entities Sales, Customer, Item, Marketprice, Web Page, Web Log, Reviews; legend: Adapted TPC-DS, BigBench Specific

- 4. DATA MODEL: 3 Vs
  - Variety
    - Different schema parts
  - Volume
    - Based on scale factor
    - Similar to TPC-DS scaling, but continuous
    - Weblogs & product reviews also scaled
  - Velocity
    - Refresh for all data

- 5. WORKLOAD
  - Workload queries
    - 30 "queries"
    - Specified in English (sort of)
    - No required syntax (first implementation in Aster SQL-MR)
    - Kit implemented in Hive, Hadoop MR, Mahout, OpenNLP
  - Business functions (adapted from McKinsey)
    - Marketing: cross-selling, customer micro-segmentation, sentiment analysis, enhancing multichannel consumer experiences
    - Merchandising: assortment optimization, pricing optimization
    - Operations: performance transparency, product return analysis
    - Supply chain: inventory management
    - Reporting (customers and products)

- 6. WORKLOAD: TECHNICAL ASPECTS
  - Generic characteristics

  | Data Sources | #Queries | Percentage |
  | --- | --- | --- |
  | Structured | 18 | 60% |
  | Semi-structured | 7 | 23% |
  | Unstructured | 5 | 17% |

  | Analytic techniques | #Queries | Percentage |
  | --- | --- | --- |
  | Statistics analysis | 6 | 20% |
  | Data mining | 17 | 57% |
  | Reporting | 8 | 27% |

  - Hive implementation characteristics

  | Query Types | #Queries | Percentage |
  | --- | --- | --- |
  | Pure HiveQL | 14 | 46% |
  | Mahout | 5 | 17% |
  | OpenNLP | 5 | 17% |
  | Custom MR | 6 | 20% |

- 7. SQL-MR QUERY 1

- 8. HIVEQL QUERY 1

      SELECT pid1, pid2, COUNT(*) AS cnt
      FROM (
        FROM (
          FROM (
            -- One row per (ticket, item) for the selected categories and stores
            SELECT s.ss_ticket_number AS oid, s.ss_item_sk AS pid
            FROM store_sales s
            INNER JOIN item i ON s.ss_item_sk = i.i_item_sk
            WHERE i.i_category_id IN (1, 2, 3)
              AND s.ss_store_sk IN (10, 20, 33, 40, 50)
          ) q01_temp_join
          -- Identity map ('cat'); CLUSTER BY sends all rows of a ticket to one reducer
          MAP q01_temp_join.oid, q01_temp_join.pid
          USING 'cat'
          AS oid, pid
          CLUSTER BY oid
        ) q01_map_output
        -- External Java reducer turns each ticket's rows into (pid1, pid2) rows
        REDUCE q01_map_output.oid, q01_map_output.pid
        USING 'java -cp bigbenchqueriesmr.jar:hive-contrib.jar de.bankmark.bigbench.queries.q01.Red'
        AS (pid1 BIGINT, pid2 BIGINT)
      ) q01_temp_basket
      GROUP BY pid1, pid2
      -- Keep only pairs that occur at least 50 times
      HAVING COUNT(pid1) > 49
      ORDER BY pid1, cnt, pid2;
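To make the shape of Query 1 easier to follow, here is a minimal in-memory sketch in Python of what the MAP / REDUCE / GROUP BY pipeline appears to compute. The slide does not show the Java reducer, so the pairing logic is an assumption: the sketch assumes it emits each unordered pair of distinct items once per ticket, with `pid1 < pid2`. The function name `frequent_item_pairs` and its parameters are hypothetical and not part of the BigBench kit.

```python
from collections import Counter, defaultdict
from itertools import combinations


def frequent_item_pairs(rows, min_count=50):
    """Count item pairs bought on the same ticket.

    rows: iterable of (oid, pid) tuples, i.e. the output of the inner SELECT.
    Returns {(pid1, pid2): cnt} for pairs seen on at least min_count tickets.
    """
    # CLUSTER BY oid: collect the items of each ticket in one place.
    # Assumption: duplicate items on one ticket are counted once.
    baskets = defaultdict(set)
    for oid, pid in rows:
        baskets[oid].add(pid)

    # REDUCE step (assumed behaviour): emit every unordered item pair per ticket.
    counts = Counter()
    for items in baskets.values():
        for pid1, pid2 in combinations(sorted(items), 2):
            counts[(pid1, pid2)] += 1

    # GROUP BY pid1, pid2 HAVING COUNT(pid1) > 49 corresponds to cnt >= 50.
    return {pair: cnt for pair, cnt in counts.items() if cnt >= min_count}


if __name__ == "__main__":
    sample = [(1, 10), (1, 20), (1, 30), (2, 10), (2, 20), (3, 20), (3, 10)]
    print(frequent_item_pairs(sample, min_count=3))
```

With the small sample above and a threshold of 3, only the pair (10, 20) survives, because it is the only pair that appears on three tickets.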

- 9. SCALING
  - Continuous scaling model
    - Realistic
    - SF 1 ~ 1 GB
  - Different scaling speeds
    - Taken from TPC-DS
    - Static
    - Square root
    - Logarithmic
    - Linear
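The four scaling speeds named on the scaling slide can be illustrated with a small Python sketch. The slide only names the classes (static, square root, logarithmic, linear); the concrete formulas below, the base row counts, and the function name `scaled_rows` are assumptions chosen for illustration and are not taken from the BigBench or TPC-DS specifications.

```python
import math


def scaled_rows(base_rows, sf, model):
    """Return an illustrative row count for a table at scale factor sf.

    base_rows is the (hypothetical) row count at SF 1. sf may be any real
    number >= 1, which mirrors the idea of continuous scaling.
    """
    if sf < 1:
        raise ValueError("this sketch only covers sf >= 1")
    if model == "static":
        factor = 1.0                  # table size does not depend on sf
    elif model == "logarithmic":
        factor = 1.0 + math.log(sf)   # assumed form; equals 1 at SF 1
    elif model == "sqrt":
        factor = math.sqrt(sf)
    elif model == "linear":
        factor = float(sf)
    else:
        raise ValueError(f"unknown scaling model: {model}")
    return int(round(base_rows * factor))


if __name__ == "__main__":
    for model in ("static", "logarithmic", "sqrt", "linear"):
        print(model, scaled_rows(1000, 100, model))
```

All four models return the base row count at SF 1 and then diverge, which is why a mix of scaling speeds changes the relative table sizes as the scale factor grows.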

- 10. BENCHMARK PROCESS
  - Process (diagram): Data Generation, Loading, Power Test, Throughput Test I, Data Maintenance, Throughput Test II, Result
  - Adapted to batch systems
    - No trickle update
  - Measured processes
    - Loading
    - Power Test (single-user run)
    - Throughput Test I (multi-user run)
    - Data Maintenance
    - Throughput Test II (multi-user run)
  - Result
    - Additive metric
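The benchmark process can be sketched as a small Python driver that runs the phases in the order given on the slide and times only the measured ones. This is a hypothetical harness, not the BigBench kit's driver: the phase callables, the names `MEASURED_PHASES` and `run_benchmark`, and the injectable `clock` are all assumptions. The slide does not give the metric formula, so the sketch only records per-phase elapsed times.

```python
import time

# Measured phases, in the order listed on the slide.
MEASURED_PHASES = [
    "loading",
    "power_test",
    "throughput_test_1",
    "data_maintenance",
    "throughput_test_2",
]


def run_benchmark(phase_fns, clock=time.perf_counter):
    """Run data generation (untimed), then each measured phase in order.

    phase_fns maps a phase name to a zero-argument callable.
    Returns {phase_name: elapsed_time} for the measured phases.
    """
    # Data generation precedes the run but is not among the measured processes.
    phase_fns["data_generation"]()

    timings = {}
    for name in MEASURED_PHASES:
        start = clock()
        phase_fns[name]()
        timings[name] = clock() - start
    return timings


if __name__ == "__main__":
    noop = lambda: None
    fns = {name: noop for name in ["data_generation"] + MEASURED_PHASES}
    print(run_benchmark(fns))
```

Passing the clock as a parameter keeps the driver easy to test with a fake clock and makes it clear that only the five measured phases contribute timings.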

- 11. METRIC
  - Throughput metric
    - BigBench queries per hour
  - Number of queries run
    - 30*(2*S+1)
  - Measured times
  - Metric
  - Source: www.wikipedia.de
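The query count 30*(2*S+1) on the metric slide can be reproduced with a few lines of Python. The breakdown is an interpretation based on the benchmark process slide: one single-user power test over the 30 queries plus two throughput tests, each running S concurrent streams of 30 queries. The function name `queries_run` is hypothetical, and the sketch does not attempt to reconstruct the metric formula itself, which the transcript does not include.

```python
QUERIES_PER_STREAM = 30  # the workload has 30 queries


def queries_run(streams):
    """Total number of queries executed in one benchmark run with S streams."""
    if streams < 0:
        raise ValueError("streams must be non-negative")
    power_test = QUERIES_PER_STREAM                      # single-user run
    throughput_tests = 2 * streams * QUERIES_PER_STREAM  # Throughput Test I and II
    return power_test + throughput_tests


if __name__ == "__main__":
    for s in (1, 2, 4):
        print(s, queries_run(s))
```

For example, a run with two streams executes 30 + 2 * 2 * 30 = 150 queries.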

- 12. COMMUNITY FEEDBACK
  - Gathered from authors' companies, customers, other comments
  - Positive
    - Choice of TPC-DS as a basis
    - Not only SQL
    - Reference implementation
  - Common misunderstanding
    - Hive-only benchmark
    - Due to reference implementation
  - Feature requests
    - Graph analytics
    - Streaming
    - Interactive querying
    - Multi-tenancy
    - Fault-tolerance
  - Broader use case
    - Social elements, advertisement, etc.
  - Limited complexity
    - Keep it simple to run and implement

- 13. EXTENDING BIGBENCH
  - Better multi-tenancy
    - Different types of streams (OLTP vs OLAP)
  - More procedural workload
    - Complex, like page rank and indexing
    - Simple, like word count and sort
  - More metrics
    - Price and energy performance
    - Performance in failure cases
  - ETL workload
    - Avoid complete reload of data for refresh

- 14. TOWARDS AN INDUSTRY STANDARD
  - Collaboration with SPEC and TPC
  - Aiming for fast development cycles
  - Enterprise vs express benchmark (TPC)

  | Enterprise | Express |
  | --- | --- |
  | Specification | Kit |
  | Specific implementation | Kit evaluation |
  | Best optimization | System tuning (not kit) |
  | Complete audit | Self audit / peer review |
  | Price requirement | No pricing |
  | Full ACID testing | ACID self-assessment (no durability) |
  | Large variety of configuration | Focused on key components |
  | Substantial implementation cost | Limited cost, fast implementation |

  - Specification sections (open)
    - Preamble: high-level overview
    - Database design: overview of the schema and data
    - Workload scaling: how to scale the data and workload
    - Metric and execution rules: reported metrics and rules on how to run the benchmark
    - Pricing: reported price information
    - Full disclosure report: wording and format of the benchmark result report
    - Audit requirements: minimum audit requirements for an official result, self-auditing scripts and tools

- 15. BIGBENCH EXPERIMENTS
  - Tests on Cloudera CDH 5.0, Pivotal GPHD-3.0.1.0, IBM InfoSphere BigInsights
  - In progress: Spark, Impala, Stinger, …
  - 3 clusters (+)
    - 1 node: 2 x Xeon E5-2450 0 @ 2.10GHz, 64GB RAM, 2 x 2TB HDD
    - 6 nodes: 2 x Xeon E5-2680 v2 @ 2.80GHz, 128GB RAM, 12 x 2TB HDD
    - 546 nodes: 2 x Xeon X5670 @ 2.93GHz, 48GB RAM, 12 x 2TB HDD
  - Figure (runtime chart): time axis from 0:00:00 to 1:12:00; bars for DG, LOAD, and q1 to q30; callouts Q16 and Q01

- 16. QUESTIONS?
  - Try it: full kit online at https://github.com/intel-hadoop/Big-Bench
  - Contact: tilmann.rabl@bankmark.de