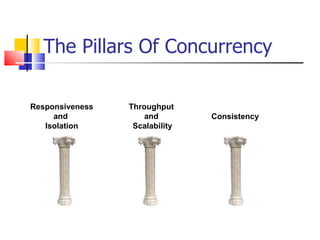

The Pillars Of Concurrency

The document discusses three pillars of concurrency: 1) Responsiveness and isolation, achieved by running work on background threads so that the main UI thread is never blocked. 2) Throughput and scalability, achieved by distributing work across multiple threads to keep all available CPU cores busy. 3) Consistency, achieved by controlling access to memory shared between threads to prevent race conditions and deadlocks, for example through immutable objects, message passing, and transactional memory.

- 1. The Pillars Of Concurrency: Responsiveness and Isolation; Throughput and Scalability; Consistency.

- 5. Why? The Free Lunch is Over. The number of transistors never stopped climbing. However, clock speed stopped somewhere near 3 GHz.

- 6. The Solution: Re-Enable the Free Lunch. Use the thread pool to execute your work asynchronously. Add a concurrency control mechanism that adjusts the number of work items thrown into the pool according to the workload and the machine architecture, so that the maximum number of cores is put to work with minimum contention. How many callbacks to put in the pool? How to separate the work?

- 7. The Future: Lock-Free Thread-Pool. The increasing number of work items and worker threads results in problematic contention on the pool. Instead of using a linked list, use the array-style, lock-free, GC-friendly ConcurrentQueue<T> class.

- 8. The Future: Work Stealing Queues. When work is queued from a non-pool thread, it goes into the global queue. Each worker thread in the pool has its own private work-stealing queue (WSQ). When work is queued from a pool worker thread, it goes into that thread's WSQ, which most of the time avoids all locking. A WSQ has two ends: it allows lock-free pushes and pops from one end (“private”) but requires synchronization at the other end (“public”). A worker thread is created or assigned to grab work from the global queue. A worker thread grabs work from its own WSQ in LIFO fashion, avoiding all locking. Worker threads steal work from other WSQs in FIFO fashion, which requires synchronization.
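
The questions on slide 6 (how many callbacks to put in the pool, how to separate the work) can be illustrated with a minimal Python sketch. This is not the .NET thread pool the slides discuss. It assumes that one worker per logical core is a reasonable starting point, and the helper names `split_into_chunks` and `parallel_sum_of_squares` are hypothetical. In CPython the global interpreter lock keeps pure-Python threads from running CPU-bound code in parallel, so the sketch shows the structure of the approach rather than a real speedup.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, chunk_count):
    """Split items into at most chunk_count contiguous, near-equal chunks."""
    chunk_count = max(1, min(chunk_count, len(items)))
    size, extra = divmod(len(items), chunk_count)
    chunks, start = [], 0
    for i in range(chunk_count):
        # The first `extra` chunks take one additional element each.
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def parallel_sum_of_squares(items, worker_count=None):
    """Queue one work item per worker instead of one per element."""
    # Assumption: one worker per logical core is a sensible default.
    worker_count = worker_count or os.cpu_count() or 1
    chunks = split_into_chunks(items, worker_count)
    with ThreadPoolExecutor(max_workers=worker_count) as pool:
        partial_sums = pool.map(lambda chunk: sum(x * x for x in chunk), chunks)
        return sum(partial_sums)
```

Queuing a few coarse chunks rather than one callback per element keeps the number of items contending for the pool's queue small, which is the trade-off the slide's concurrency control mechanism is meant to tune.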
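
The queue discipline on slide 8 can be sketched in a few lines of Python. This is an illustration of the ordering only, under stated assumptions: the class and function names are hypothetical, a single lock guards both ends of each queue (the lock-free private end described on the slide is not modeled), and the lookup order "own queue, then global queue, then steal" is an assumption, since the slide does not state the order.

```python
import threading
from collections import deque


class WorkStealingQueue:
    """Per-worker deque: the owner uses one end, thieves use the other."""

    def __init__(self):
        self._items = deque()
        # Simplification: one lock guards both ends. The slides describe the
        # private end as lock-free; that is not modeled here.
        self._lock = threading.Lock()

    def push_local(self, item):
        """Owner adds work at the private end."""
        with self._lock:
            self._items.append(item)

    def pop_local(self):
        """Owner takes the newest item (LIFO). Returns None if empty."""
        with self._lock:
            return self._items.pop() if self._items else None

    def steal(self):
        """Another worker takes the oldest item (FIFO). Returns None if empty."""
        with self._lock:
            return self._items.popleft() if self._items else None


def next_work_item(worker_id, local_queues, global_queue, global_lock):
    """Pick the next item for a worker (assumed order: own, global, steal)."""
    item = local_queues[worker_id].pop_local()
    if item is not None:
        return item
    with global_lock:
        if global_queue:
            return global_queue.popleft()
    for other_id, queue in enumerate(local_queues):
        if other_id != worker_id:
            item = queue.steal()
            if item is not None:
                return item
    return None
```

Taking the newest item locally tends to reuse data that is still warm in the owner's cache, while stealing the oldest item from the opposite end keeps thieves away from the end the owner is working on.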