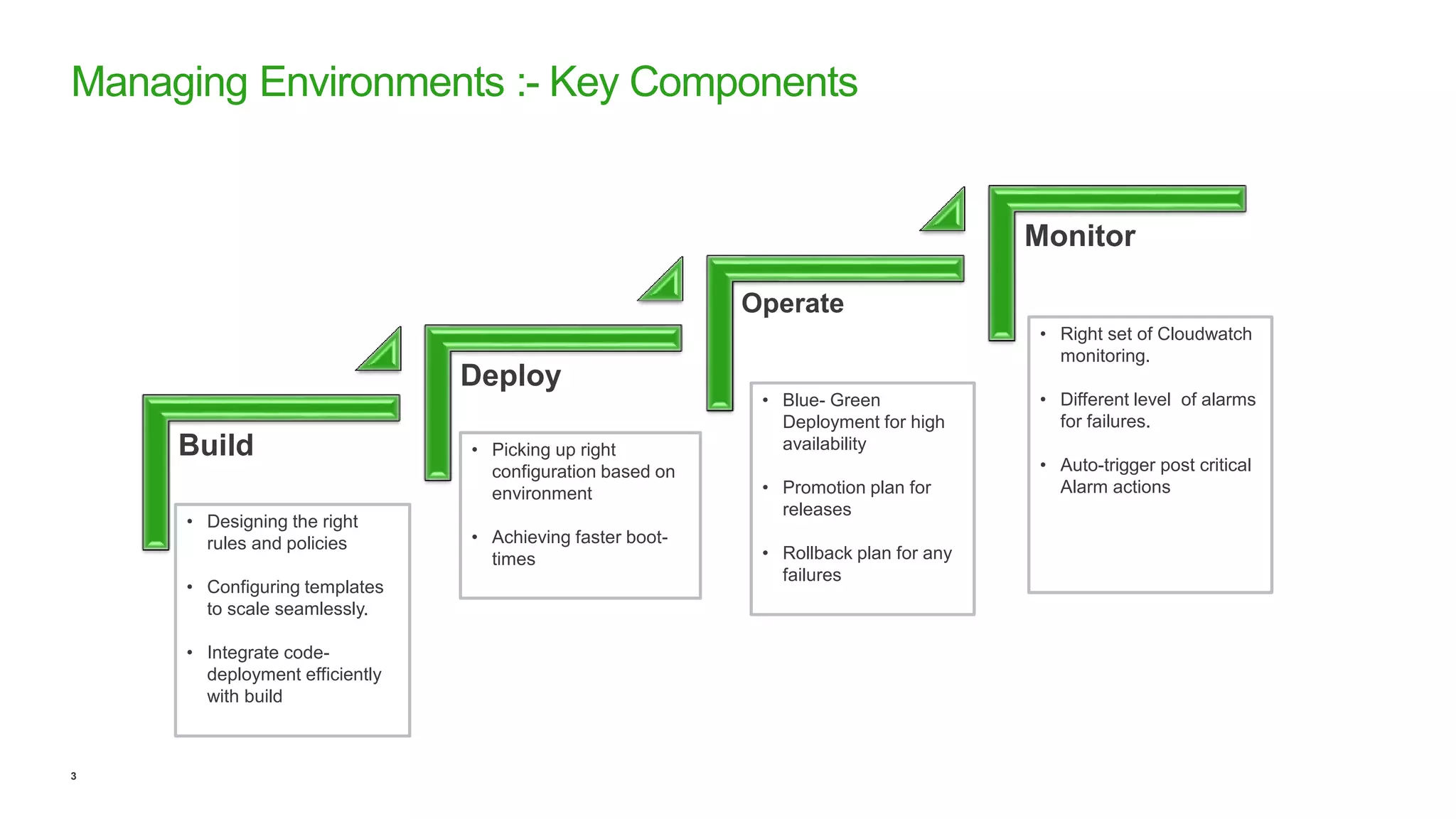

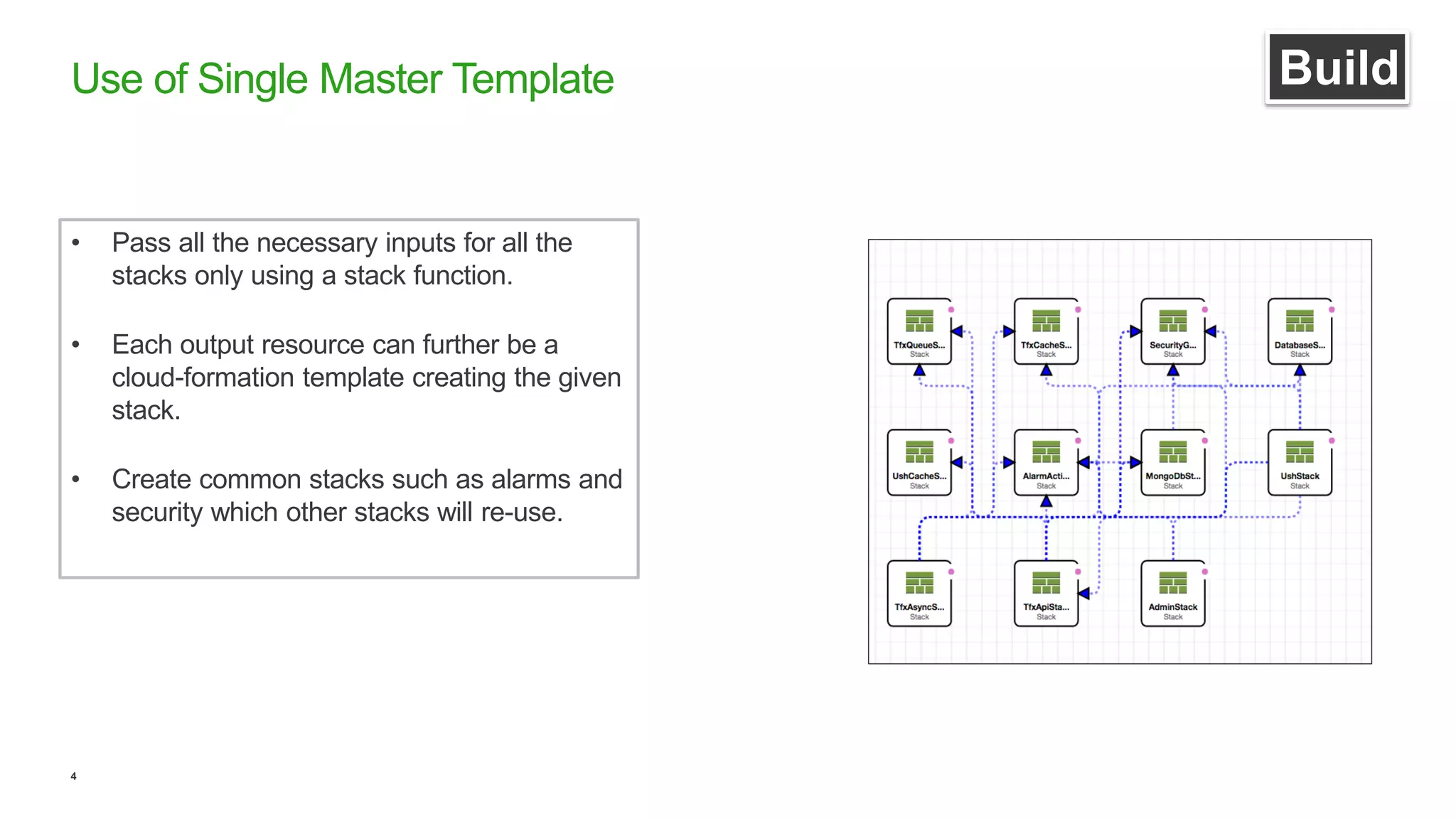

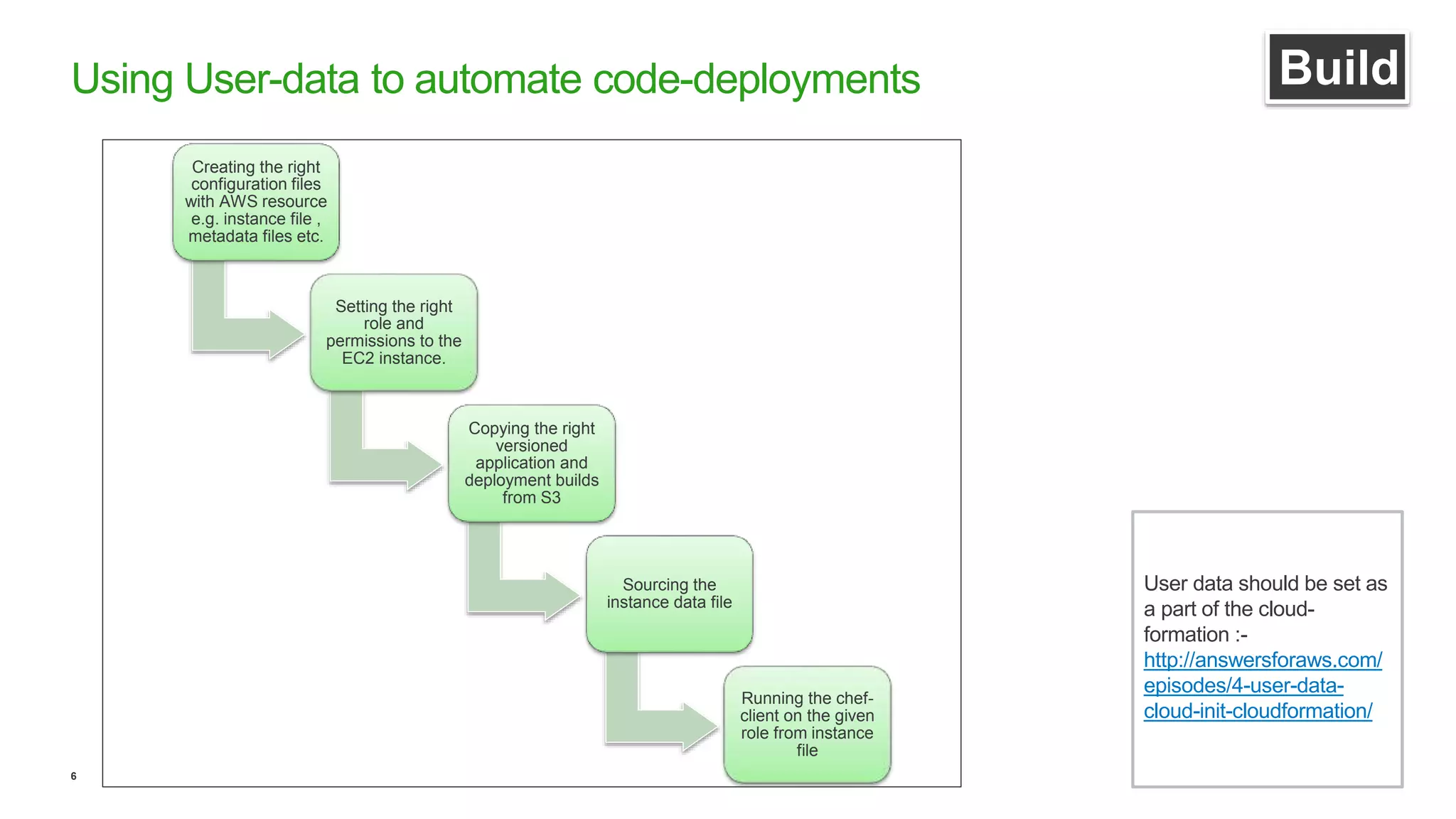

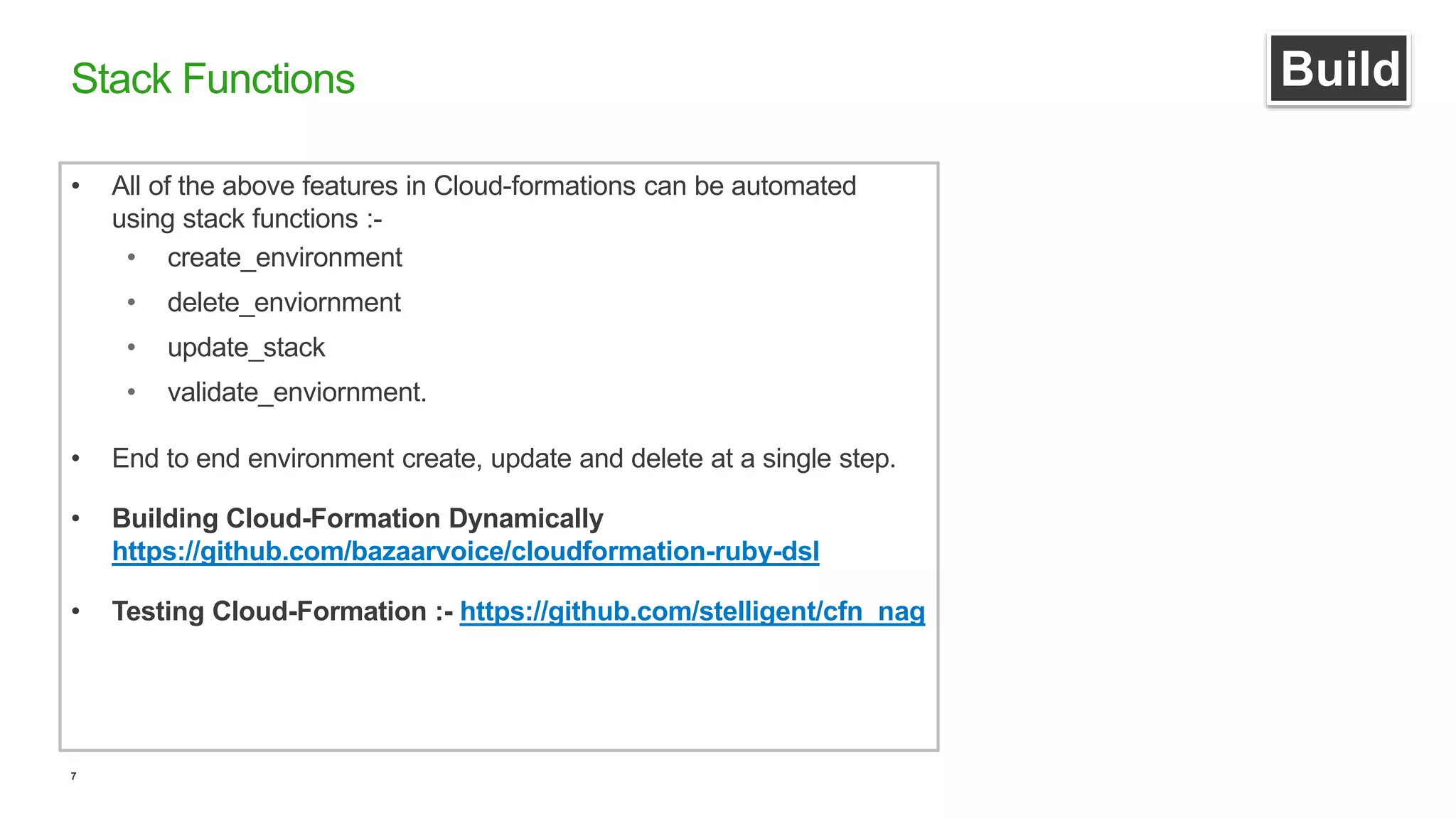

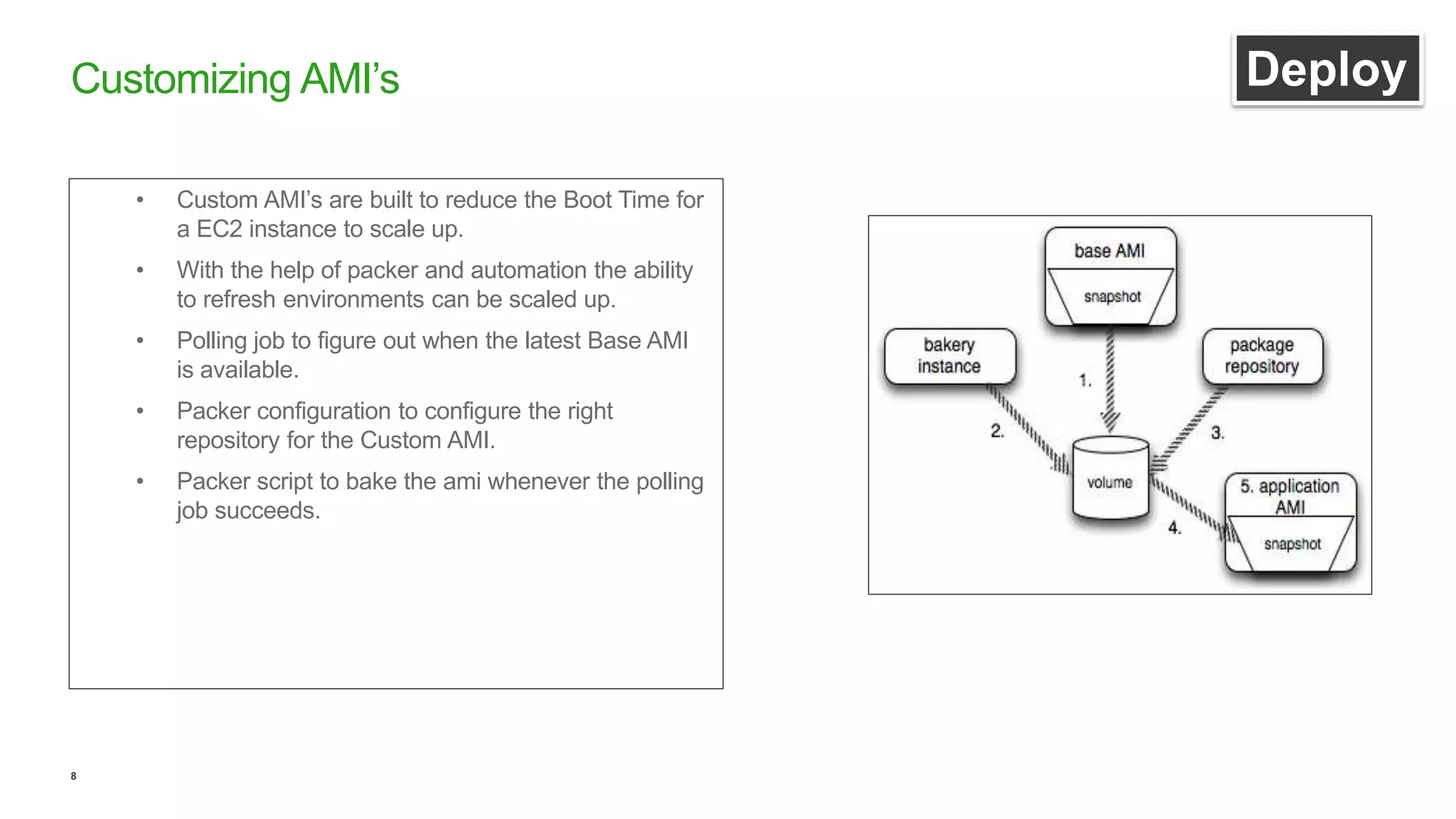

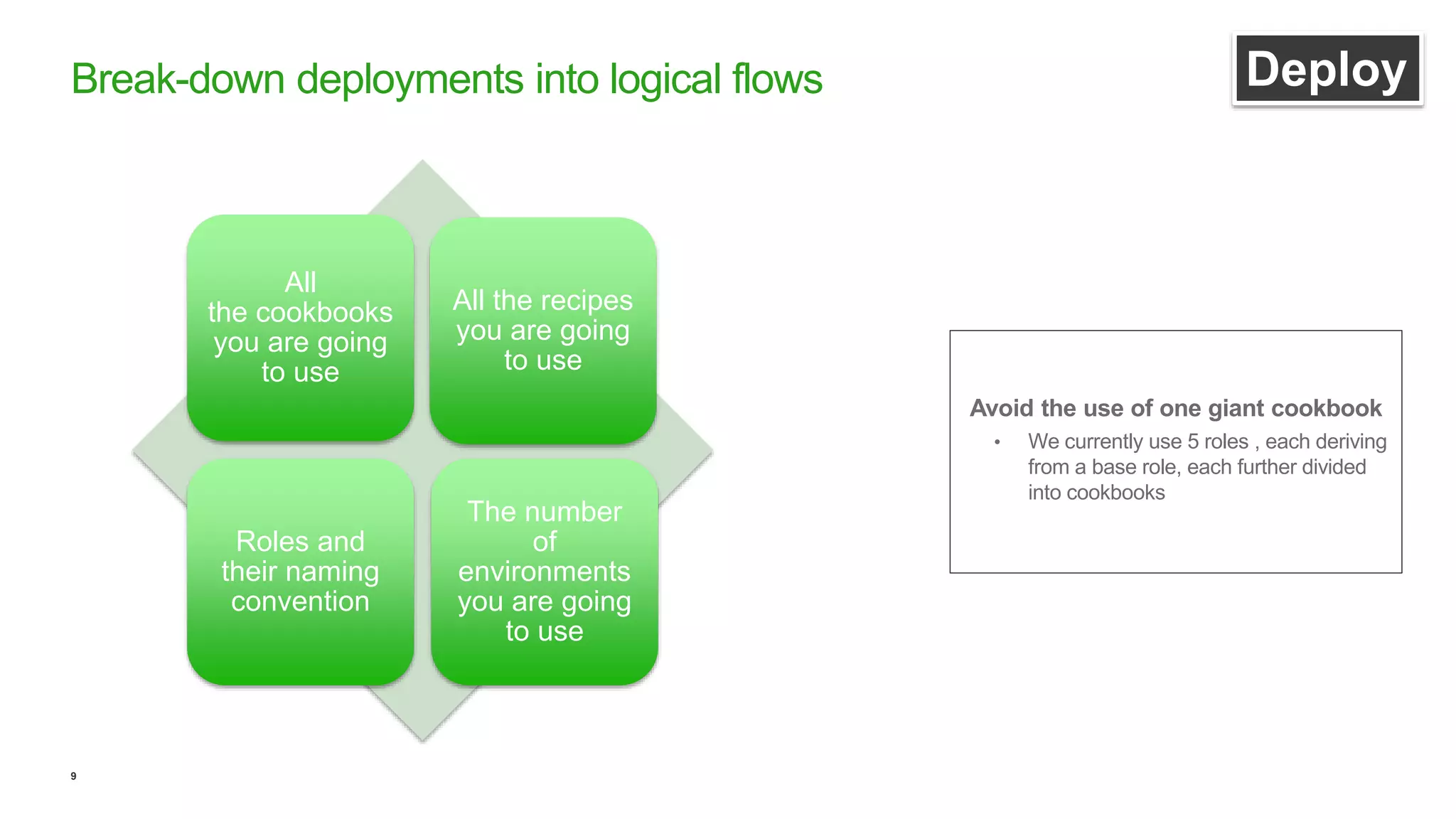

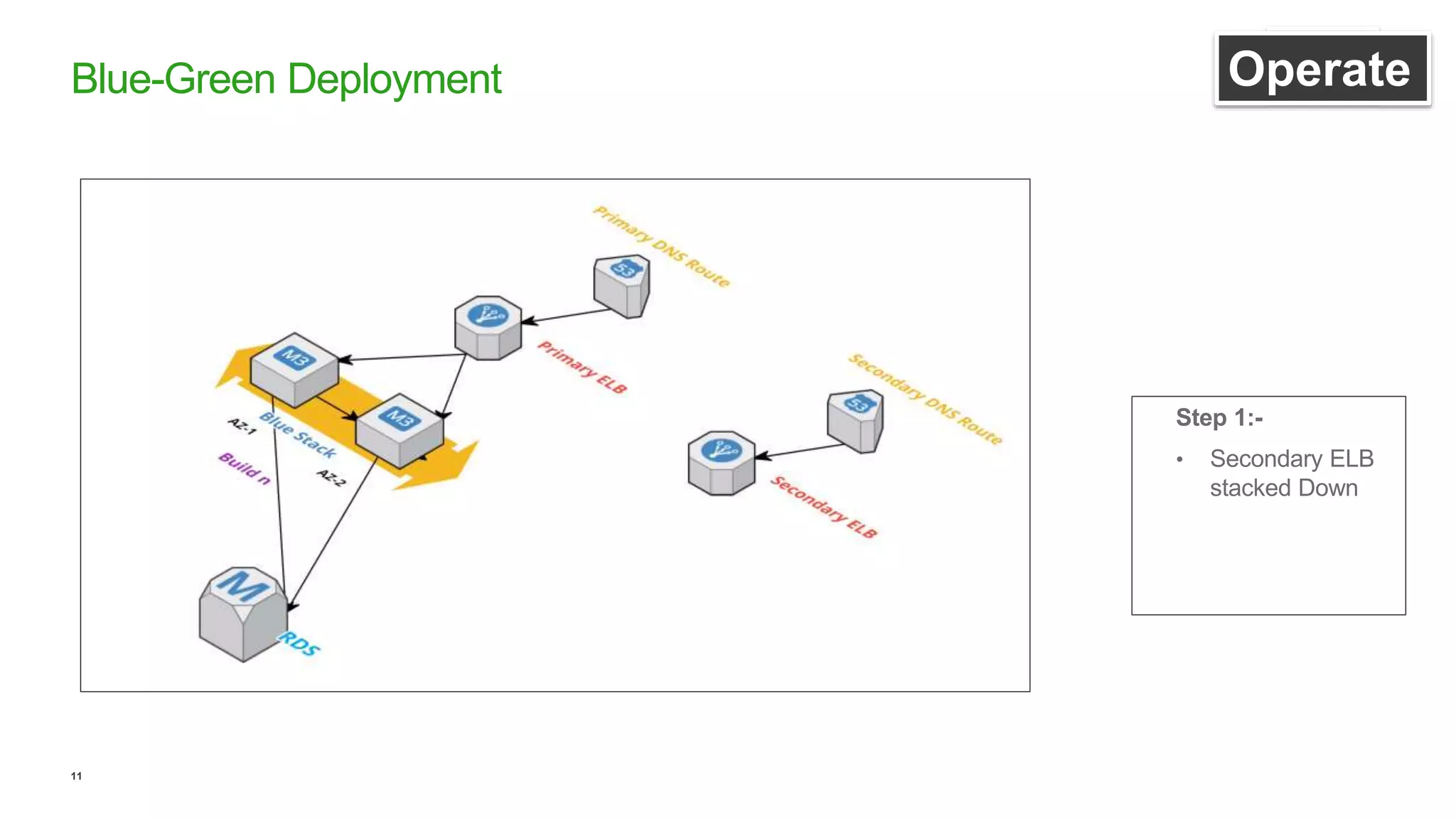

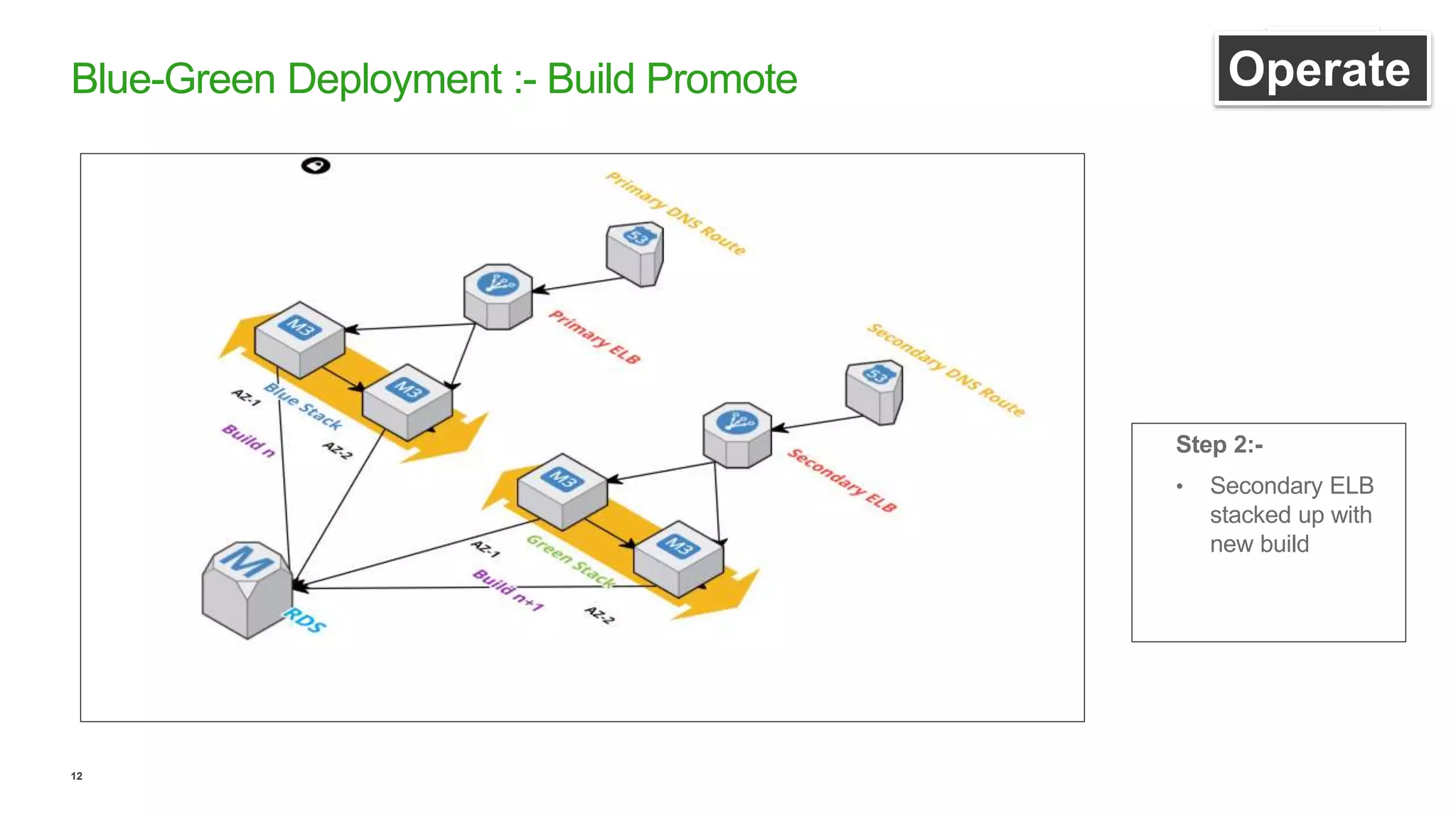

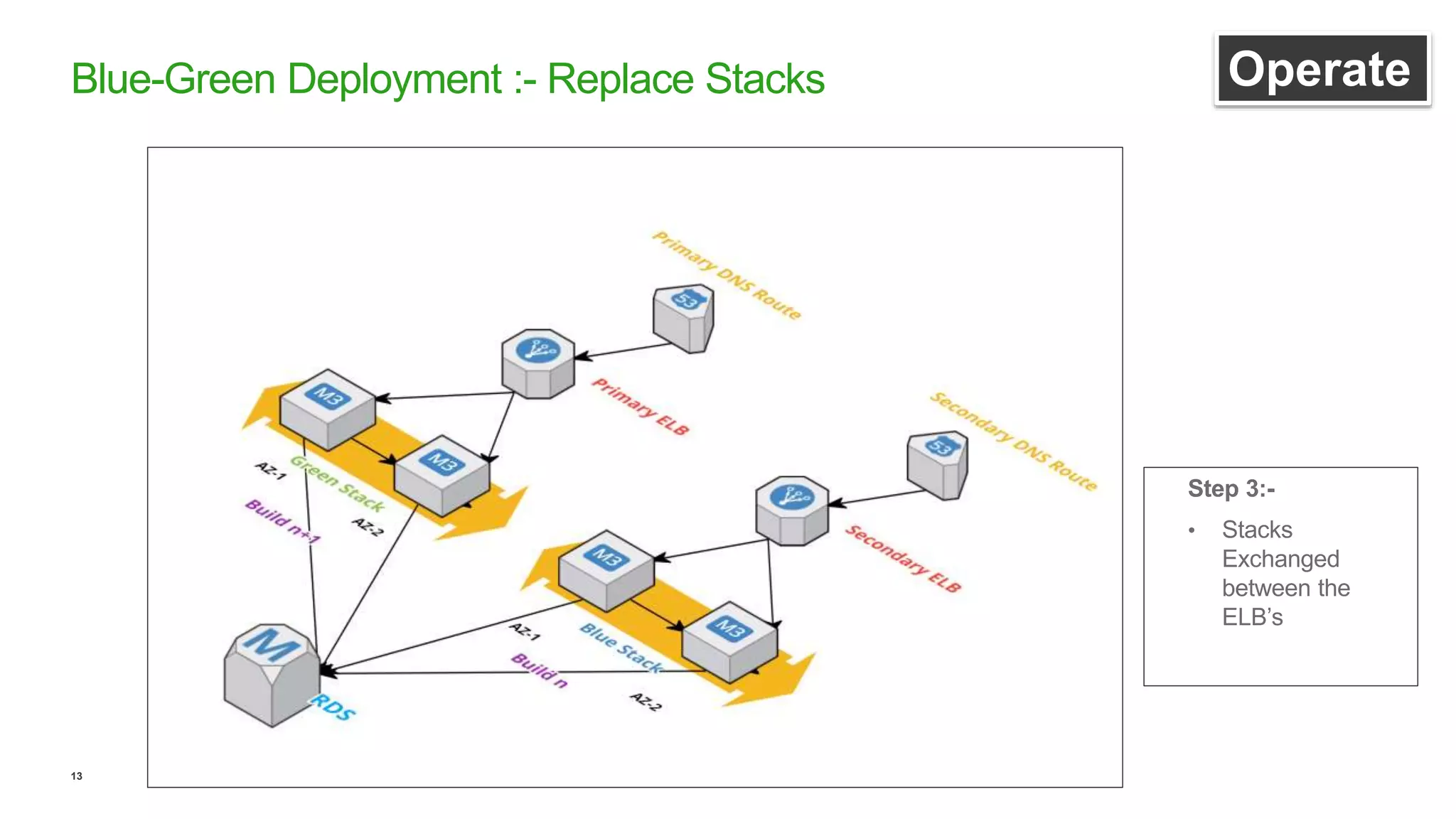

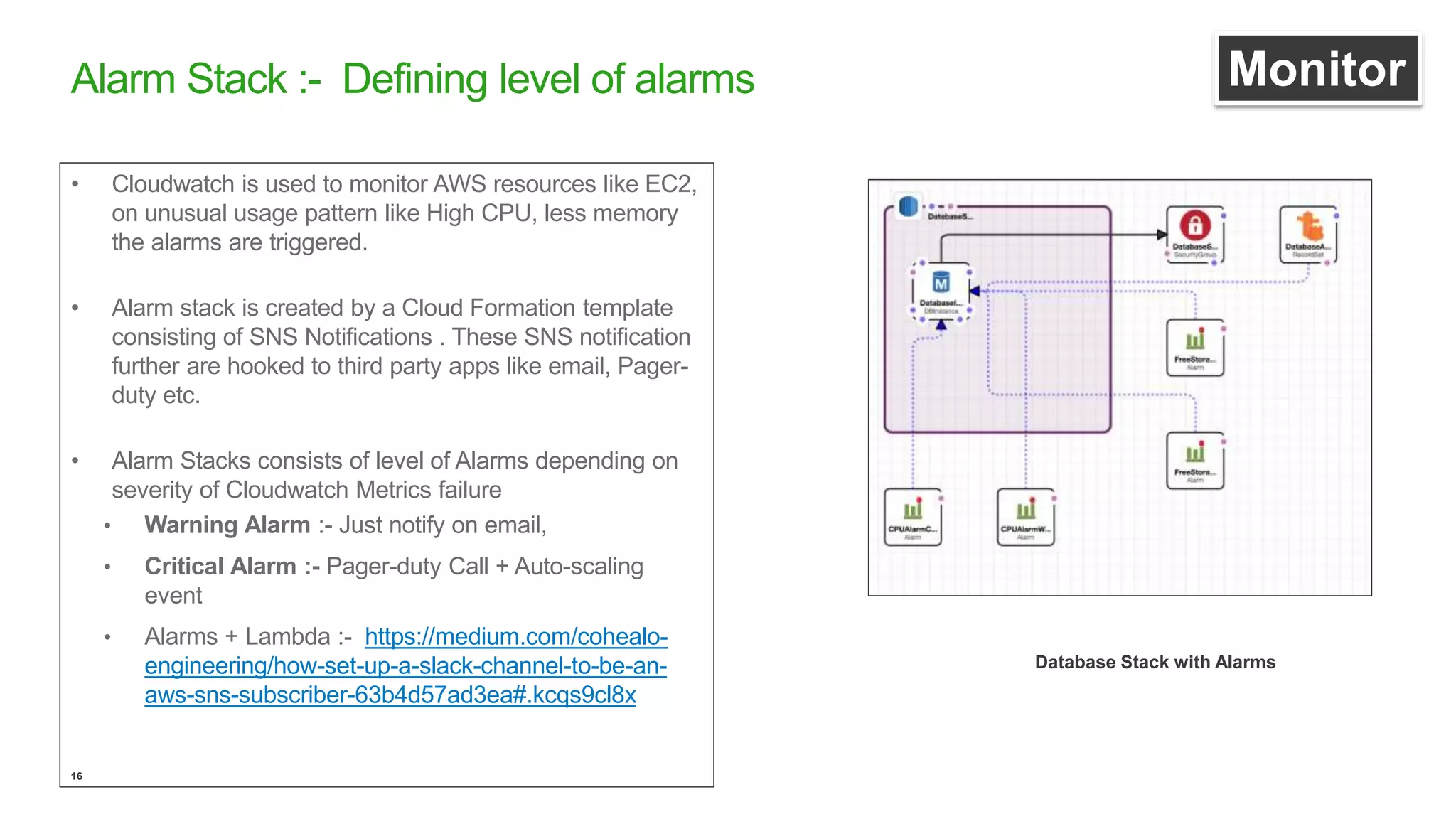

This document discusses efficient ways to manage environments in AWS using CloudFormation templates. It covers the key phases of the environment lifecycle: build, deploy, operate, and monitor. It explains how to use templates to configure environments, automate deployments with tools such as Chef, implement blue-green deployments, create alarm stacks that monitor resources, and scale infrastructure based on CloudWatch metrics. The overall aim is faster release cycles and more predictable, reliable management of dynamic AWS infrastructure.
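The alarm-stack and metric-based scaling ideas can be sketched as a small CloudFormation template. The sketch below builds it in Python and prints JSON. It assumes an Auto Scaling group already exists (created by a separate stack) and that its name is passed in as a parameter. The function name `build_alarm_stack`, the logical IDs `ScaleUpPolicy` and `HighCpuAlarm`, the 70% CPU threshold, and the 300-second period and cooldown are illustrative assumptions. They are not taken from the slides.

```python
import json


def build_alarm_stack(cpu_threshold: float = 70.0) -> dict:
    """Return a CloudFormation template (as a dict) for a hypothetical alarm stack.

    The stack attaches a CPU alarm to an existing Auto Scaling group and
    adds one instance when the alarm fires. The threshold, timings, and
    logical IDs are illustrative assumptions.
    """
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Illustrative alarm stack: scale out on high average CPU",
        "Parameters": {
            # Name of an Auto Scaling group created by a separate stack (assumed).
            "AutoScalingGroupName": {"Type": "String"},
        },
        "Resources": {
            # Simple scaling policy: add one instance, then wait 300 s.
            "ScaleUpPolicy": {
                "Type": "AWS::AutoScaling::ScalingPolicy",
                "Properties": {
                    "AutoScalingGroupName": {"Ref": "AutoScalingGroupName"},
                    "PolicyType": "SimpleScaling",
                    "AdjustmentType": "ChangeInCapacity",
                    "ScalingAdjustment": 1,
                    "Cooldown": "300",
                },
            },
            # Fires when average CPU across the group exceeds the threshold
            # for two consecutive 5-minute periods.
            "HighCpuAlarm": {
                "Type": "AWS::CloudWatch::Alarm",
                "Properties": {
                    "AlarmDescription": "Average CPU above threshold for 10 minutes",
                    "Namespace": "AWS/EC2",
                    "MetricName": "CPUUtilization",
                    "Dimensions": [
                        {
                            "Name": "AutoScalingGroupName",
                            "Value": {"Ref": "AutoScalingGroupName"},
                        },
                    ],
                    "Statistic": "Average",
                    "Period": 300,
                    "EvaluationPeriods": 2,
                    "Threshold": cpu_threshold,
                    "ComparisonOperator": "GreaterThanThreshold",
                    # Ref on a scaling policy resolves to the policy's ARN.
                    "AlarmActions": [{"Ref": "ScaleUpPolicy"}],
                },
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_alarm_stack(), indent=2))
```

Keeping the alarms in their own stack, parameterized by the group name, lets the same monitoring template be reused across environments. For example, the output could be saved to `alarm-stack.json` and deployed with `aws cloudformation deploy --template-file alarm-stack.json --stack-name web-alarms --parameter-overrides AutoScalingGroupName=web-asg`. Here the stack and group names are placeholders.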