Ad hoc evaluation of SDS along the project lifecycle in Orange

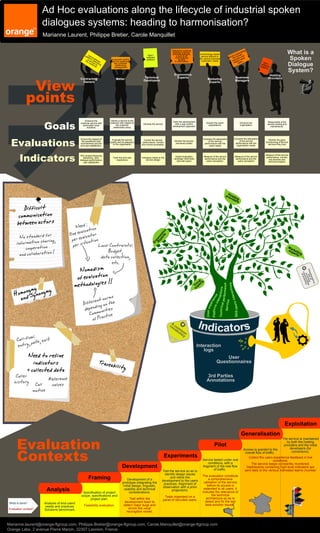

Ad hoc evaluations along the lifecycle of industrial spoken dialogue systems: heading to harmonisation?

Marianne Laurent, Philippe Bretier, Carole Manquillet

What is a Spoken Dialogue System?

- A hard/software platform.
- An interactive system implying a human-machine dialogue.
- A technology-based service automating human tasks.
- An individualised service tailored to customers' needs (QoS).
- Automated routing to access the various business information.
- A value-added service delivered to the customer.
- Customer relationship management.

Viewpoints: Contracting Owners, Marketing Managers, Business (Métier), Ergonomics Experts, Technical Experts, Developers, Hosting Providers.

Goals:
- Enhance the customer service with automated vocal solutions.
- Enhance the customer relationship.
- Deliver a service to the customer with respect to the organisation policy.
- Answer the users' expectations.
- Tailor the development with a user-centric approach.
- Develop the service.
- Be responsible for the service hosting and maintenance.

Evaluations:
- Ensure the respect of the predefined QoS commitments and end-user satisfaction.
- Evaluate the service quality and its adequacy to the organisation.
- Compare the adequacy of the service performance with the users' needs.
- Compare the adequacy of the service performance with the organisation's needs.
- Monitor the service and the perceived quality.
- Control the service performance, monitor and correct anomalies.
- Monitor the good functioning of the live services they host.

Indicators:
- ROI (project financing decisions), QoS, user satisfaction.
- Measure of the service performance and the users' perception.
- Track the end-user experience.
- Indicators linked to the dialogue performance and the service design.
- Expert evaluation campaign (field tests and real users).
- Measure of the service performance, monitoring of live services and technical support.

Difficult communication between actors:
- Different communities of practice (ergonomics, marketing, technical and vocal experts, hosting providers), with synonymy and homonymy in their vocabularies.
- Performance depends on the service, the platform, the SDS-user interactions and the user experience.
- Local constraints: budget, market, data collection, etc.
- Nomadism of evaluation methodologies!!

Need: one evaluation standard for information sharing, cooperation and collaboration!

[Figure: call-flow diagram with the percentage of calls per entry and exit path]

Need to refine indicators: traceability of collected data, caller history, call motive, reference values.

Evaluation contexts along the project lifecycle:

Analysis
- What is done? Analysis of end-users' needs and practices.
- Evaluation context? Feasibility evaluation. Solutions benchmark.

Framing
- What is done? Specification of project scope, specifications and project plan.

Development
- What is done? Development of a prototype integrating the initial design, linguistic, usability and technical considerations.
- Evaluation context? Test within the development team so as to detect major bugs and enrich the vocal recognition model.

Experiments
- What is done? Test the service so as to identify design issues and refine the development to the users' practices.
- Evaluation context? Alignment of observation with a priori projections. Tests organised on a panel of recruited users to detect and fix the last beta-solution issues.

Pilot
- What is done? Service tested under real conditions, with a fragment of the real flow of traffic.
- Evaluation context? Collect the users' experience feedback in live conditions. The evaluation constitutes a comprehensive validation of the service before its access is extended to all users. It includes the relevance of the technical architecture.

Generalisation
- What is done? Access is granted to the overall flow of traffic.
- Evaluation context? The service is constantly monitored.

Exploitation
- What is done? The service is maintained by both the hosting providers and the initial developers (for corrections).
- Evaluation context? Dashboards containing high-level indicators are sent daily to the various interested teams (number …

Orange Labs, 2 avenue Pierre Marzin, 22307 Lannion, France
Marianne.laurent@orange-ftgroup.com, Philippe.Bretier@orange-ftgroup.com, Carole.Manquillet@orange-ftgroup.com
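The poster mentions daily dashboards of high-level indicators in the exploitation phase, a call-flow view of entry and exit paths, and the need to refine indicators with call motives and reference values. The following is a minimal Python sketch of such a daily aggregation, not Orange's actual tooling. It assumes a hypothetical call-log record with `call_id`, `motive` and `exit_path` fields and an arbitrary 5-point tolerance against reference values; these names and values are illustrative assumptions.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class CallRecord:
    # Hypothetical call-log record; field names are illustrative assumptions.
    call_id: str
    motive: str      # call motive (why the customer called)
    exit_path: str   # where the call left the flow, e.g. "self-service", "transfer"


def exit_path_shares(calls):
    """Percentage of calls ending on each exit path, rounded to 0.1."""
    if not calls:
        return {}
    counts = Counter(c.exit_path for c in calls)
    total = len(calls)
    return {path: round(100 * n / total, 1) for path, n in counts.items()}


def flag_deviations(shares, reference, tolerance_pct=5.0):
    """Sorted exit paths whose share differs from its reference value by more
    than tolerance_pct percentage points (a missing path counts as 0%)."""
    return sorted(
        path for path, ref in reference.items()
        if abs(shares.get(path, 0.0) - ref) > tolerance_pct
    )


def daily_dashboard(calls, reference):
    """Aggregate a few high-level indicators for one day of traffic."""
    shares = exit_path_shares(calls)
    return {
        "number_of_calls": len(calls),
        "exit_path_shares_pct": shares,
        "calls_per_motive": dict(Counter(c.motive for c in calls)),
        "paths_off_reference": flag_deviations(shares, reference),
    }


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    sample = [
        CallRecord("c1", "billing", "self-service"),
        CallRecord("c2", "billing", "transfer"),
        CallRecord("c3", "technical", "self-service"),
        CallRecord("c4", "technical", "hang-up"),
    ]
    reference = {"self-service": 60.0, "transfer": 25.0, "hang-up": 15.0}
    print(daily_dashboard(sample, reference))
```

Comparing daily exit-path shares with reference values, rather than reading raw counts, is one way to surface drifts in a live service. The tolerance is a design choice that would need tuning per service.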