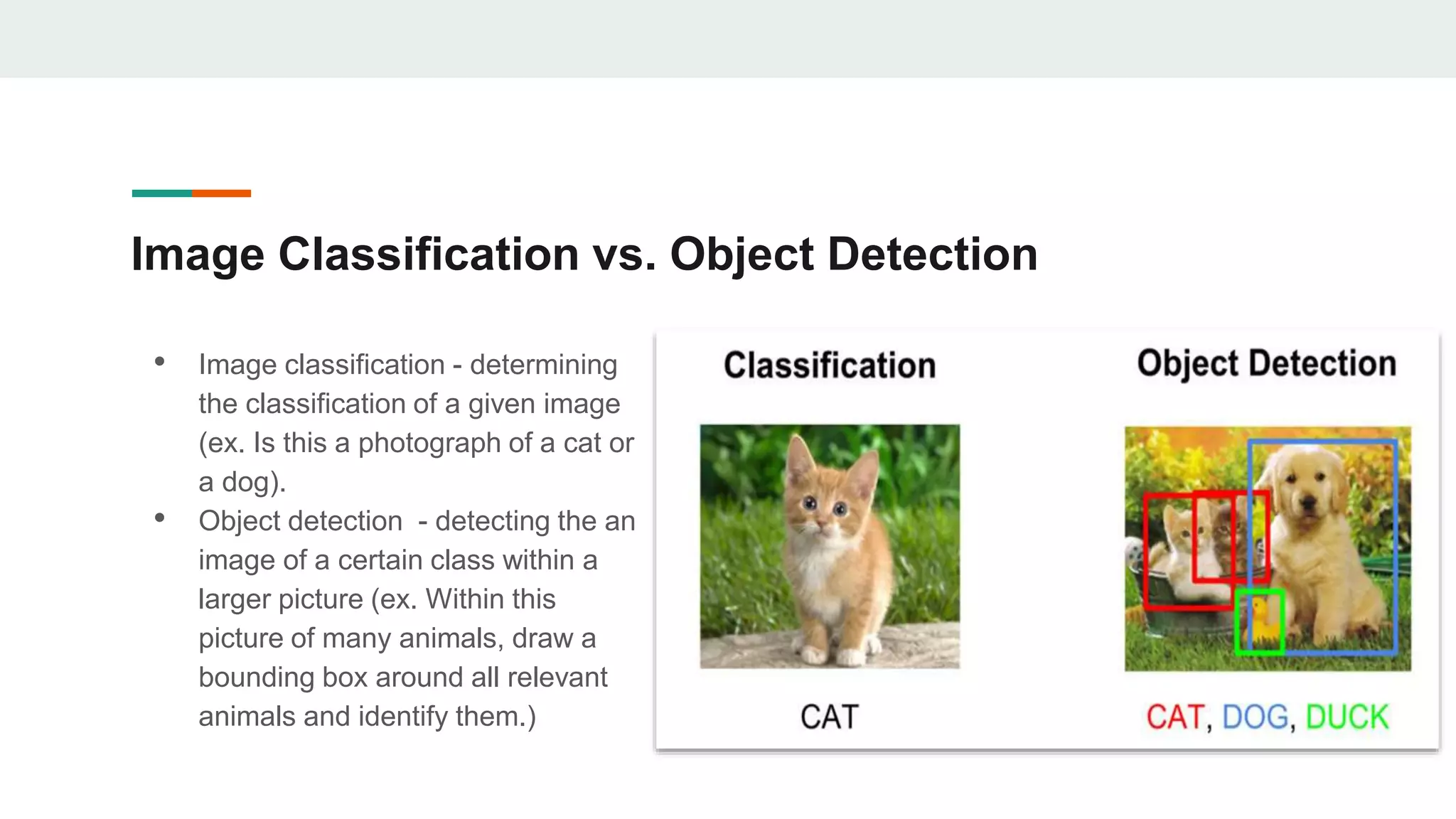

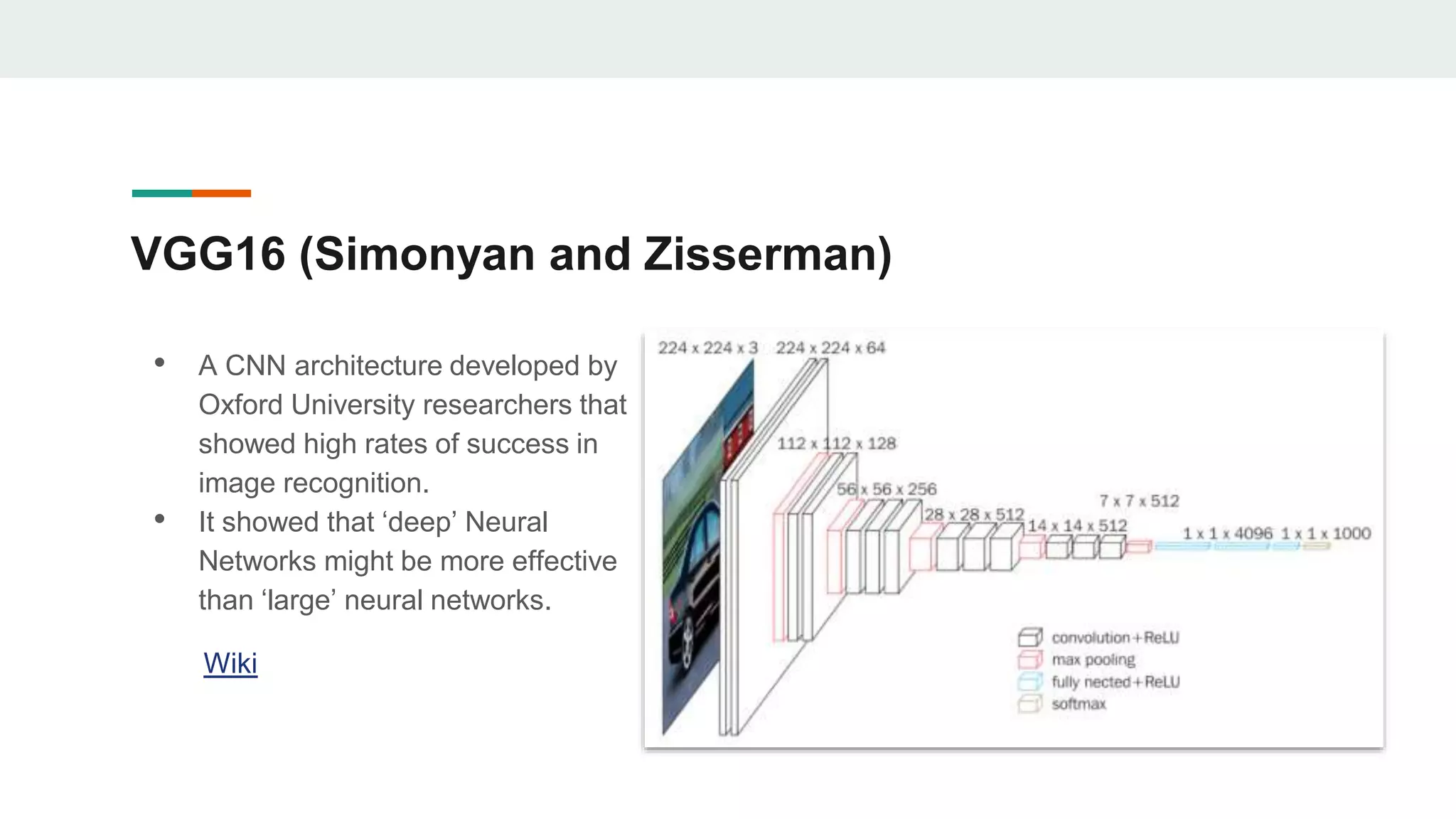

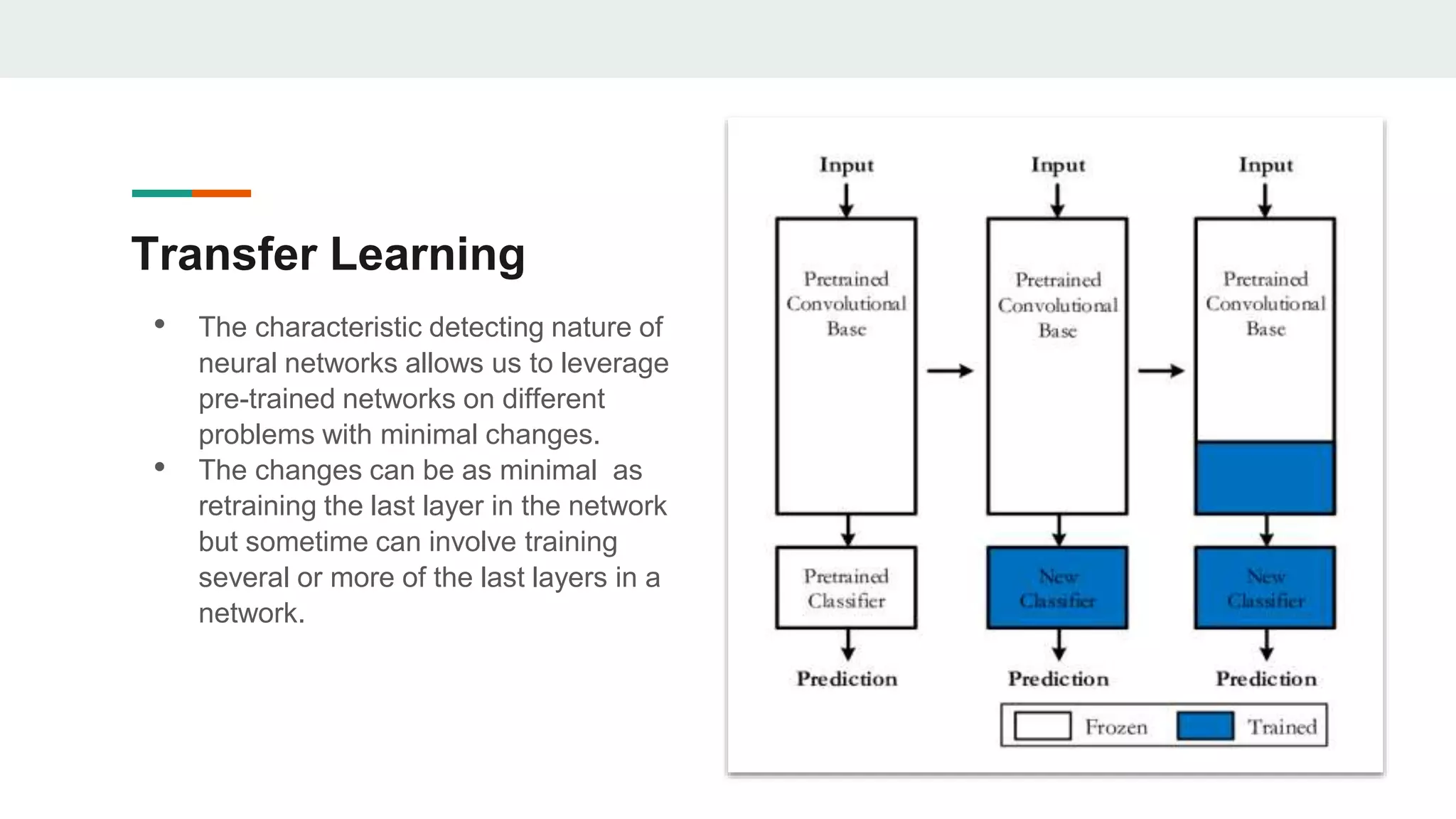

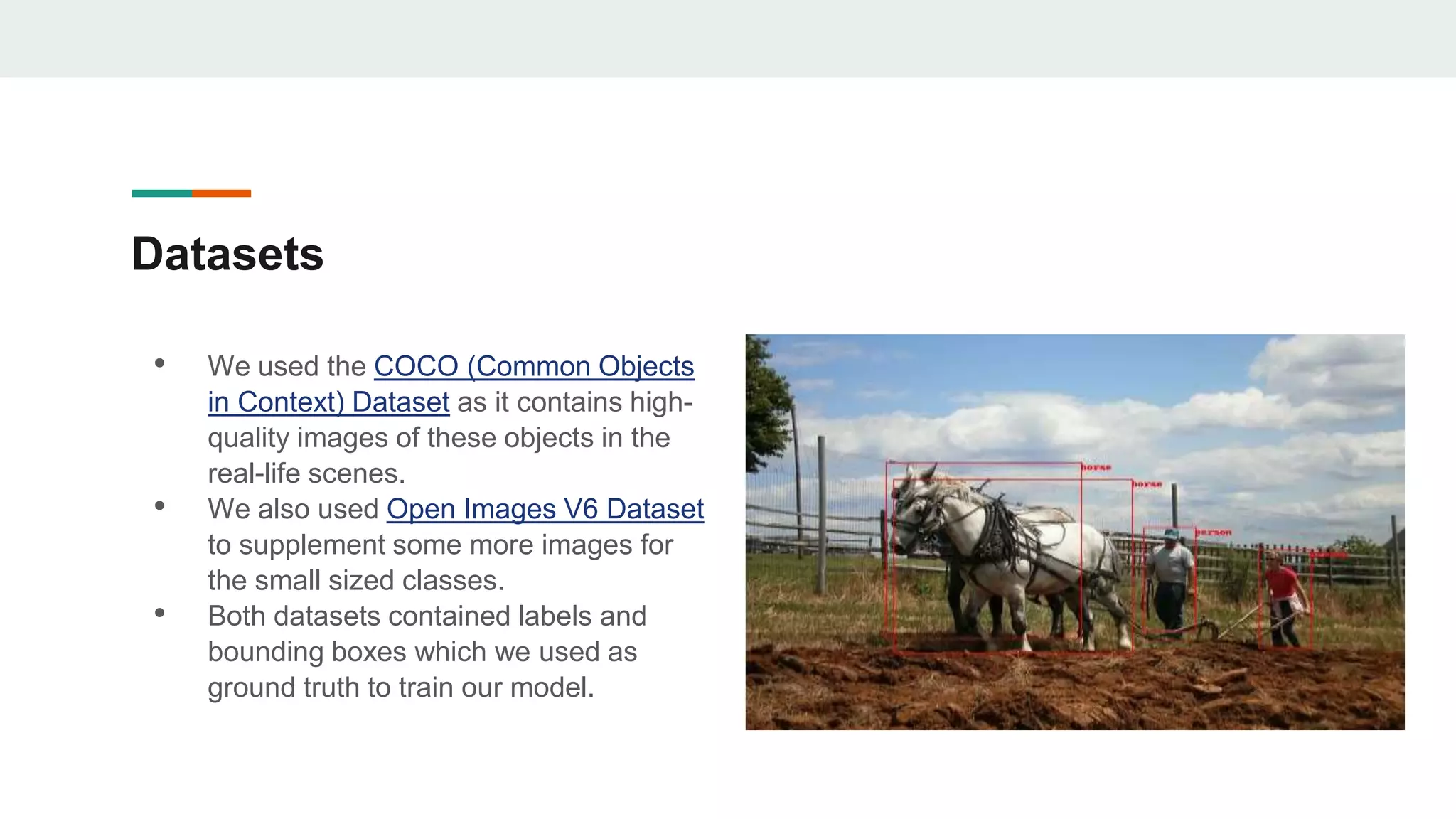

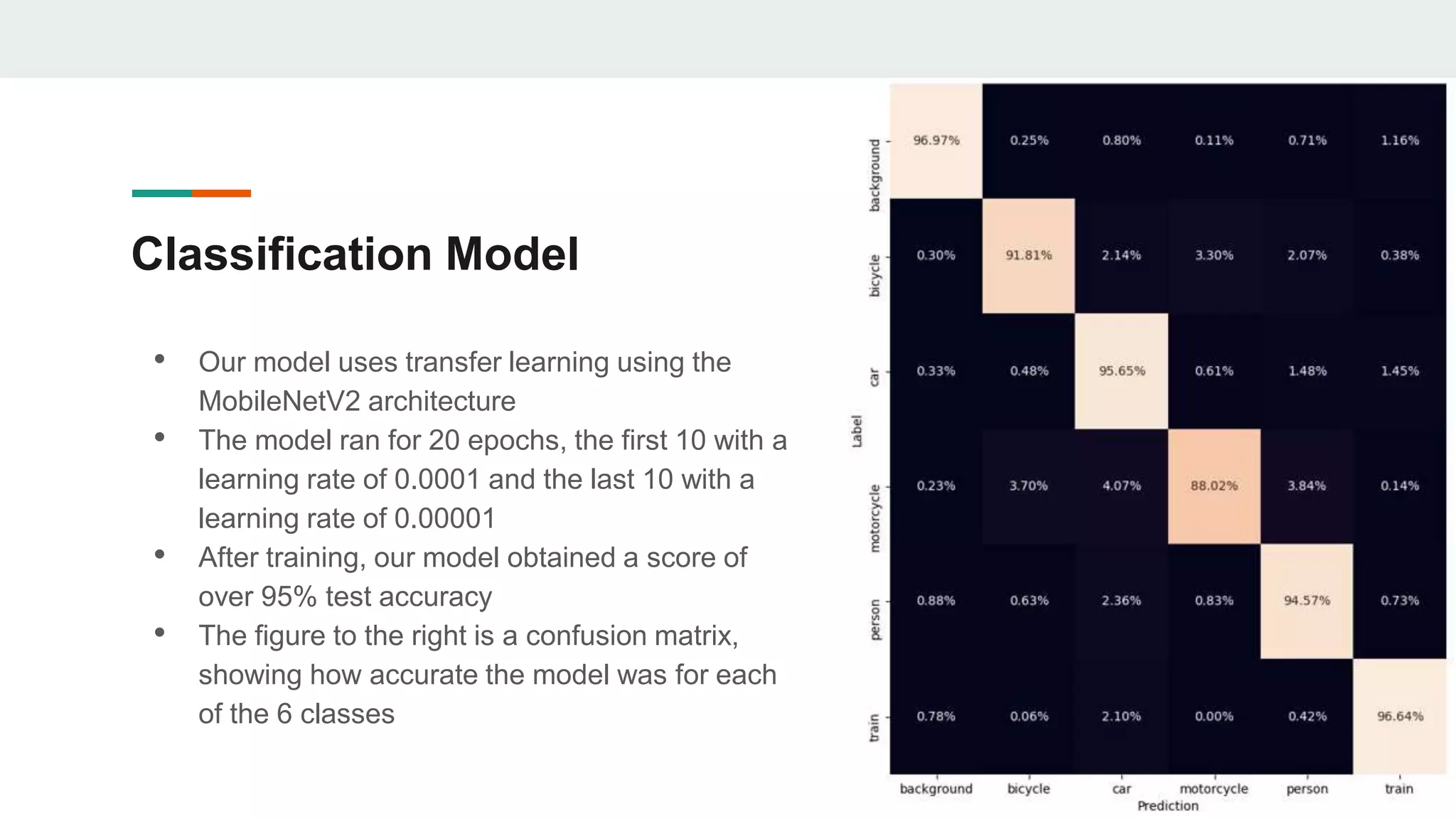

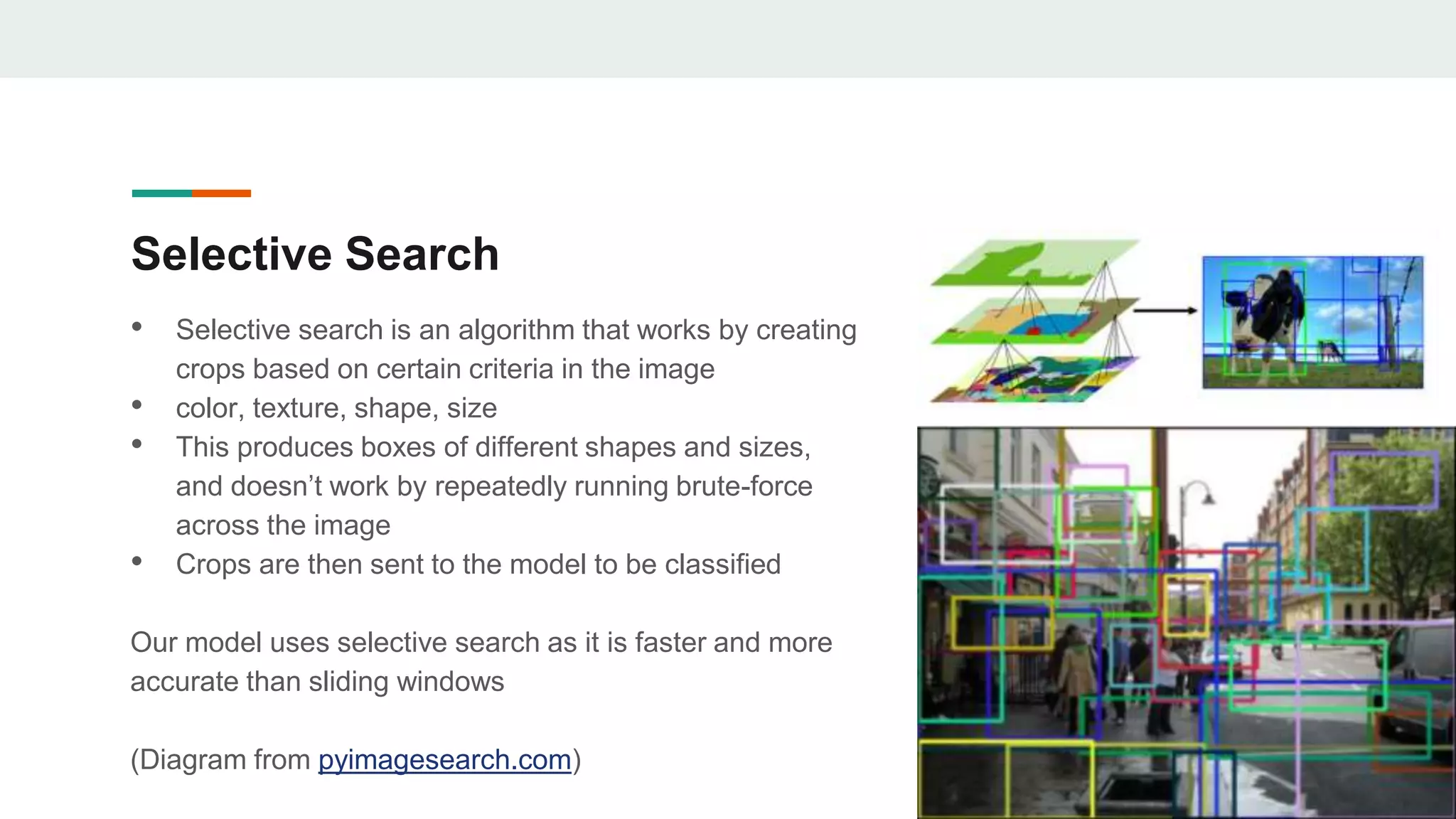

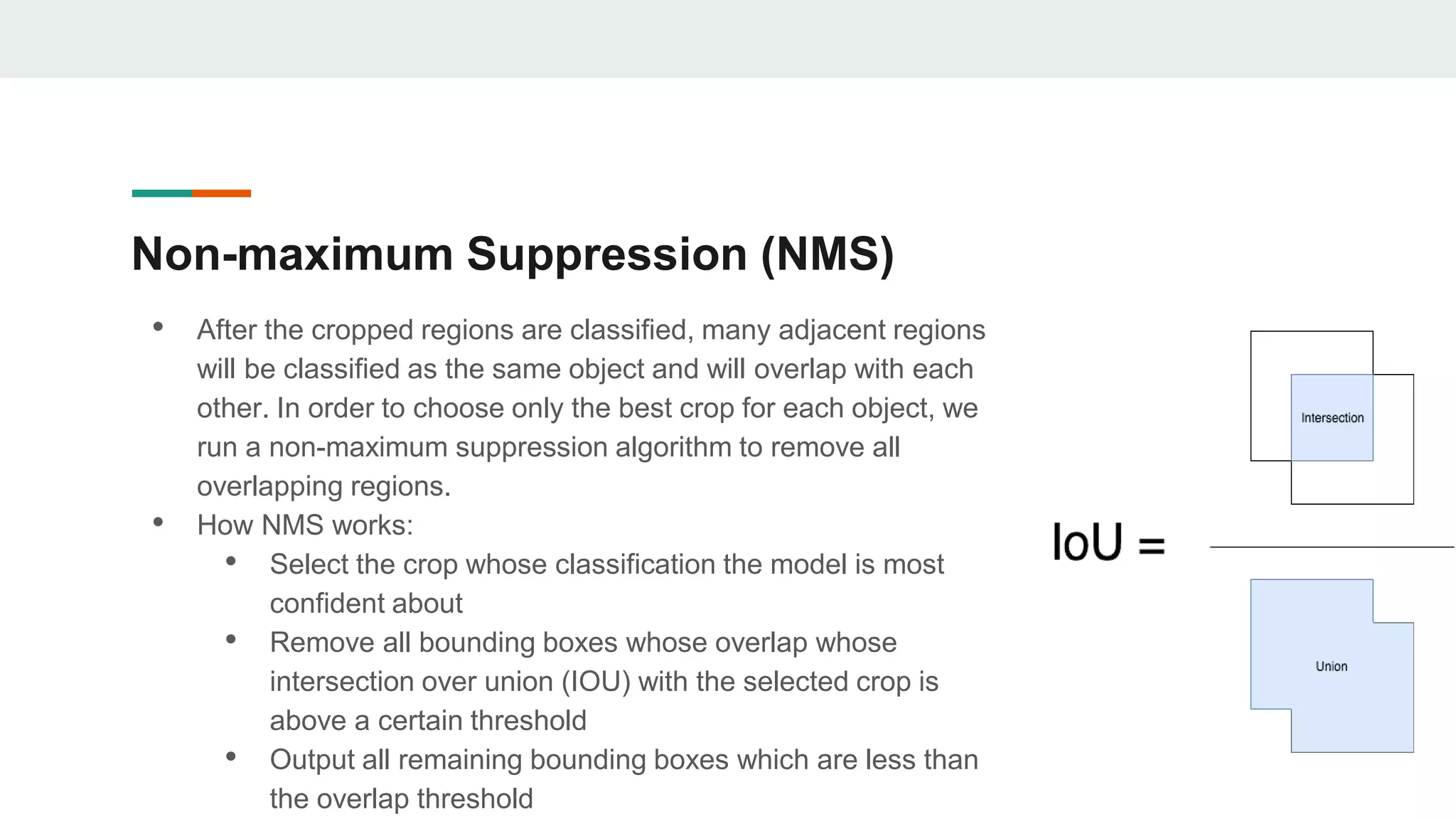

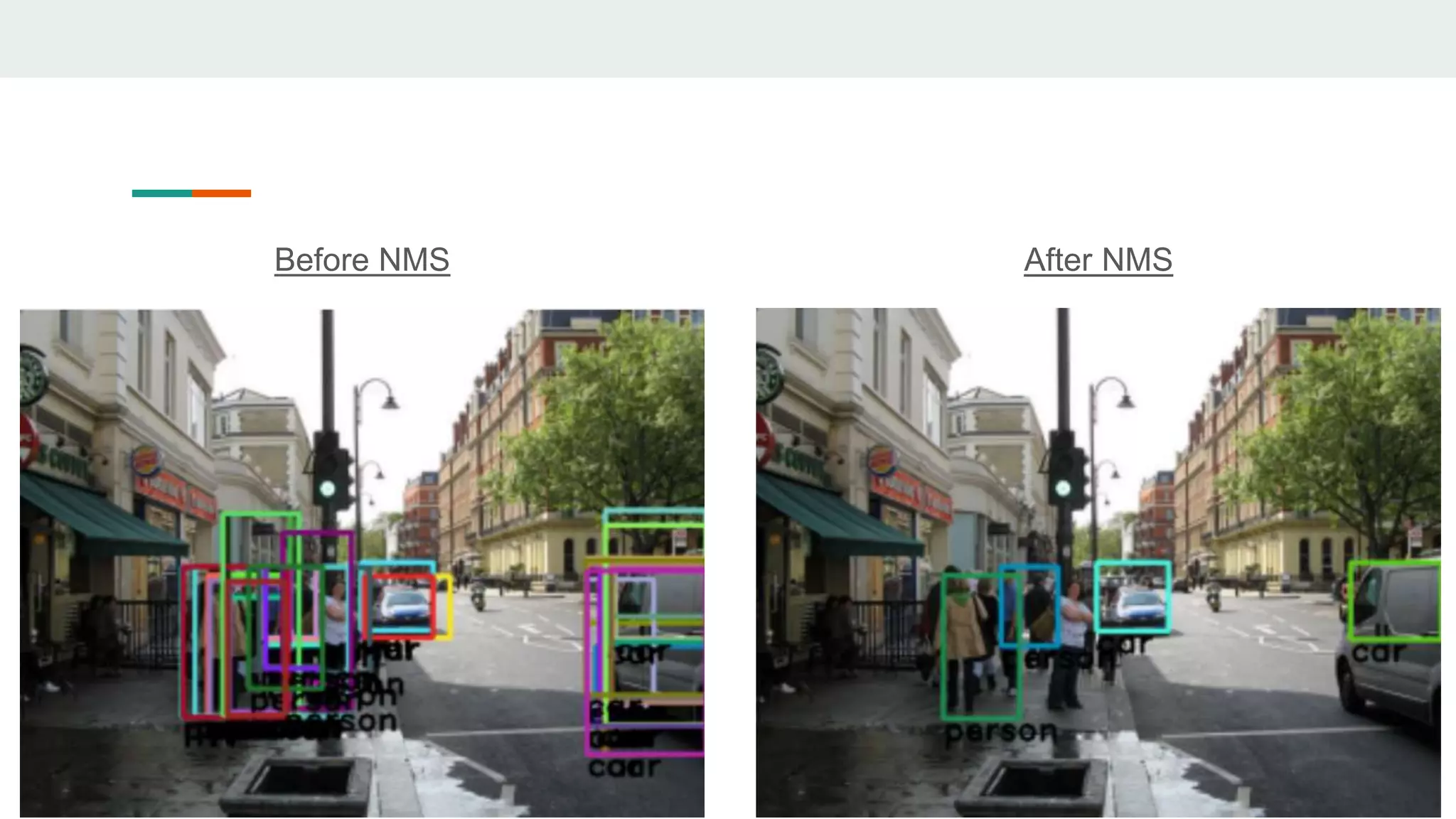

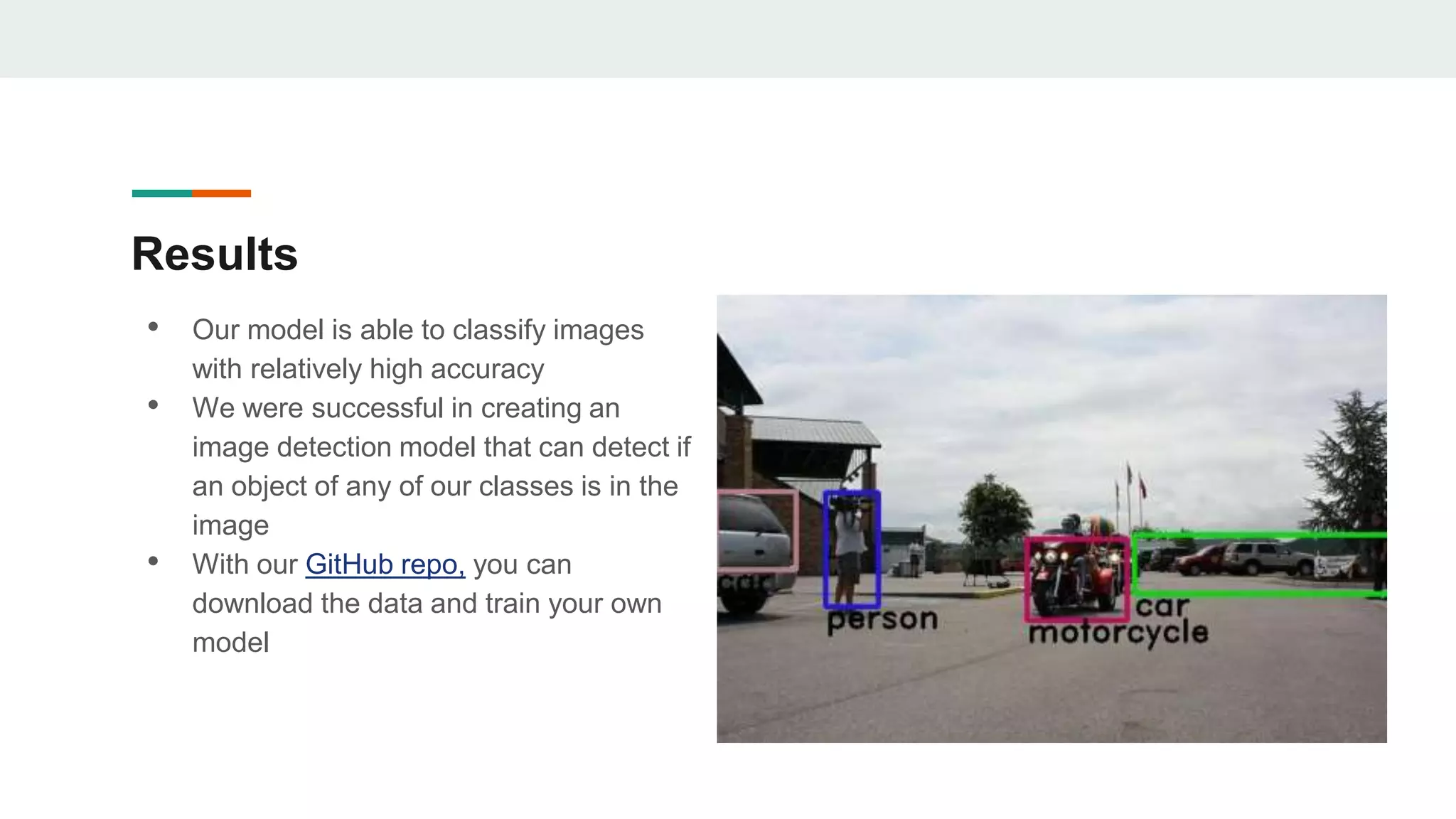

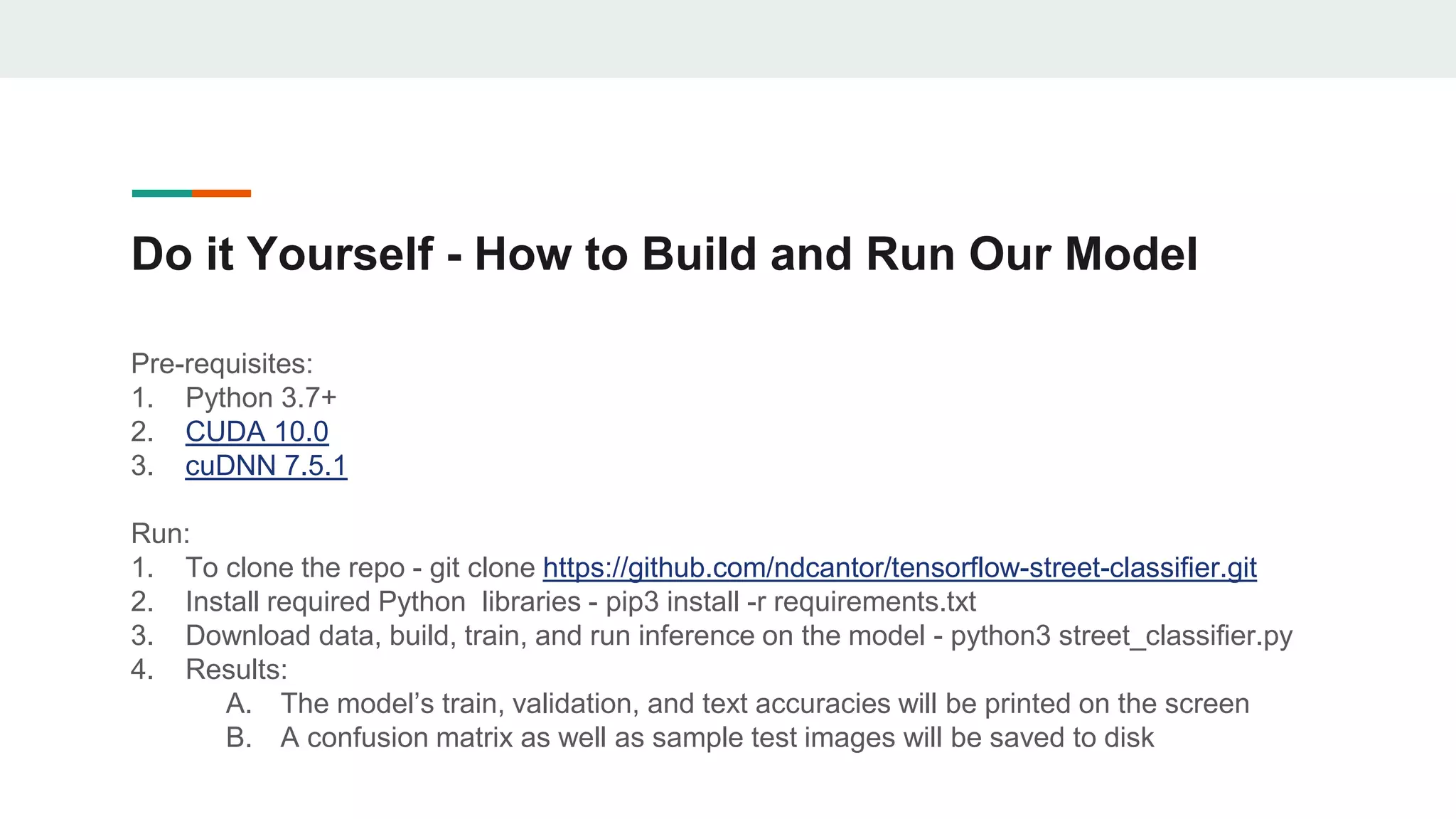

The document outlines the development of an image classification and object detection model built with TensorFlow and intended for self-driving car applications. It details the model's architecture, data sources, and training process, along with the object detection methods used, including selective search for region proposals and non-maximum suppression for removing duplicate detections. The project reports over 95% accuracy on test data, suggests further enhancements, and points to its implementation on GitHub.
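To make the non-maximum suppression step concrete, here is a minimal pure-Python sketch of the usual greedy form of the algorithm. It is not the project's code, and the source does not say how the step was implemented. The function names, the `(x1, y1, x2, y2)` box format, and the 0.5 overlap threshold are all assumptions chosen for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])
    # Width and height of the overlap are clamped at zero for disjoint boxes.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, iou_threshold=0.5):
    """Return indices of the boxes to keep, highest score first.

    A box is kept only if its IoU with every already-kept box is at or
    below iou_threshold (an assumed default, not taken from the source).
    """
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Hypothetical detections: two heavily overlapping boxes and one separate box.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30)]
scores = [0.9, 0.8, 0.7]
kept = non_max_suppression(boxes, scores)
```

In this example the second box overlaps the first with an IoU of about 0.68, so it is suppressed and only the first and third boxes remain. In a TensorFlow pipeline the built-in `tf.image.non_max_suppression` would normally be used instead of a hand-written loop.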