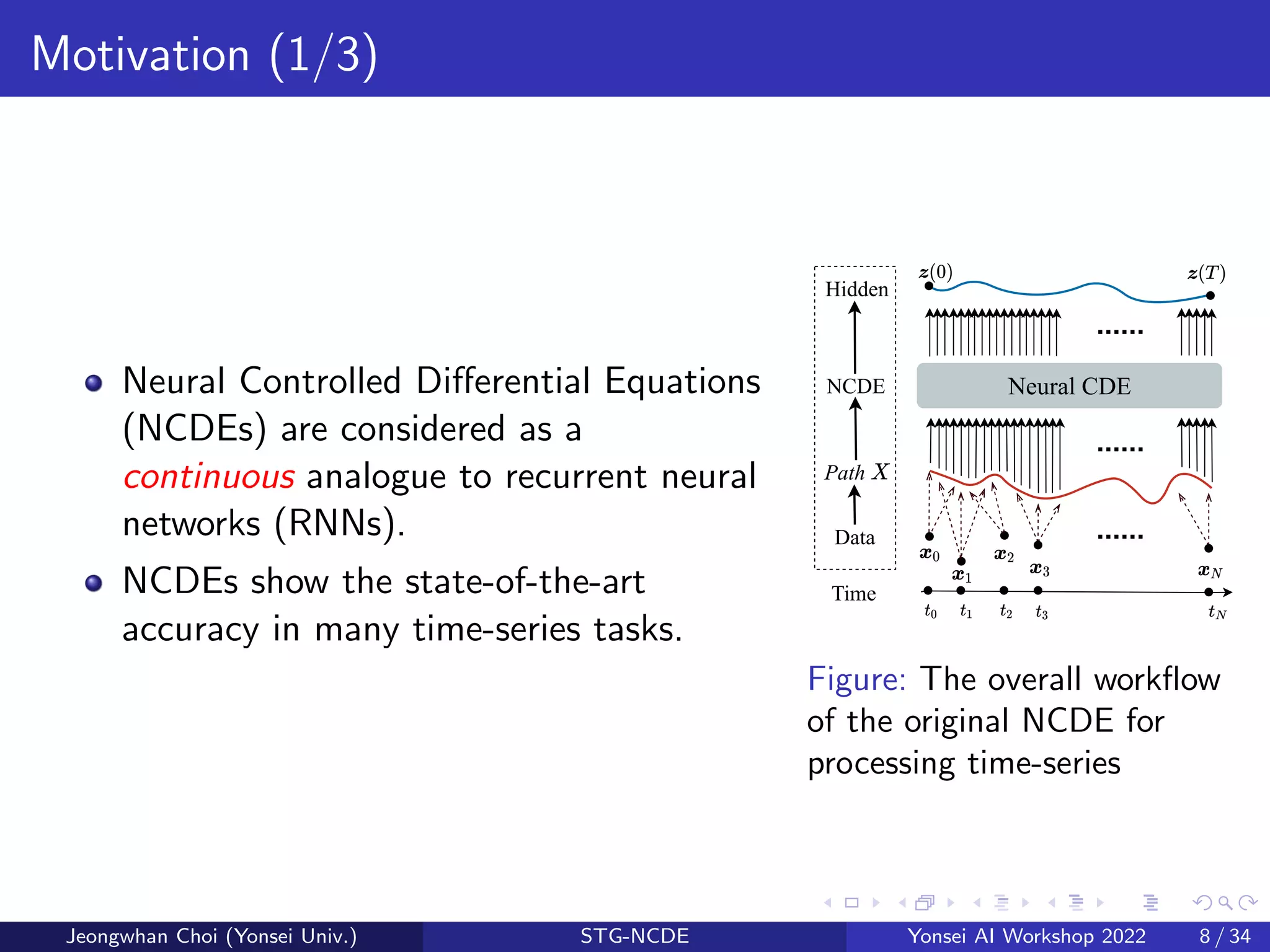

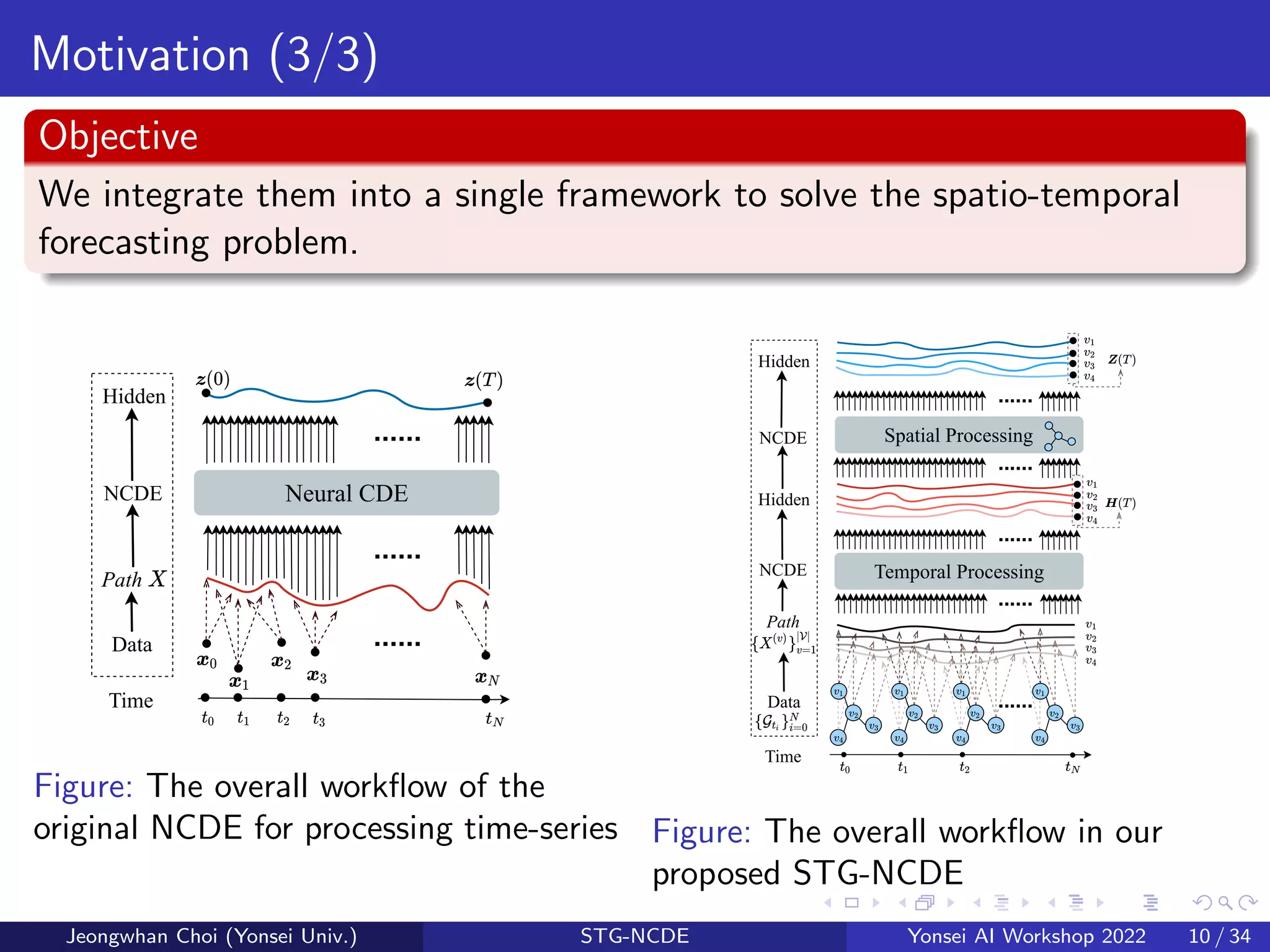

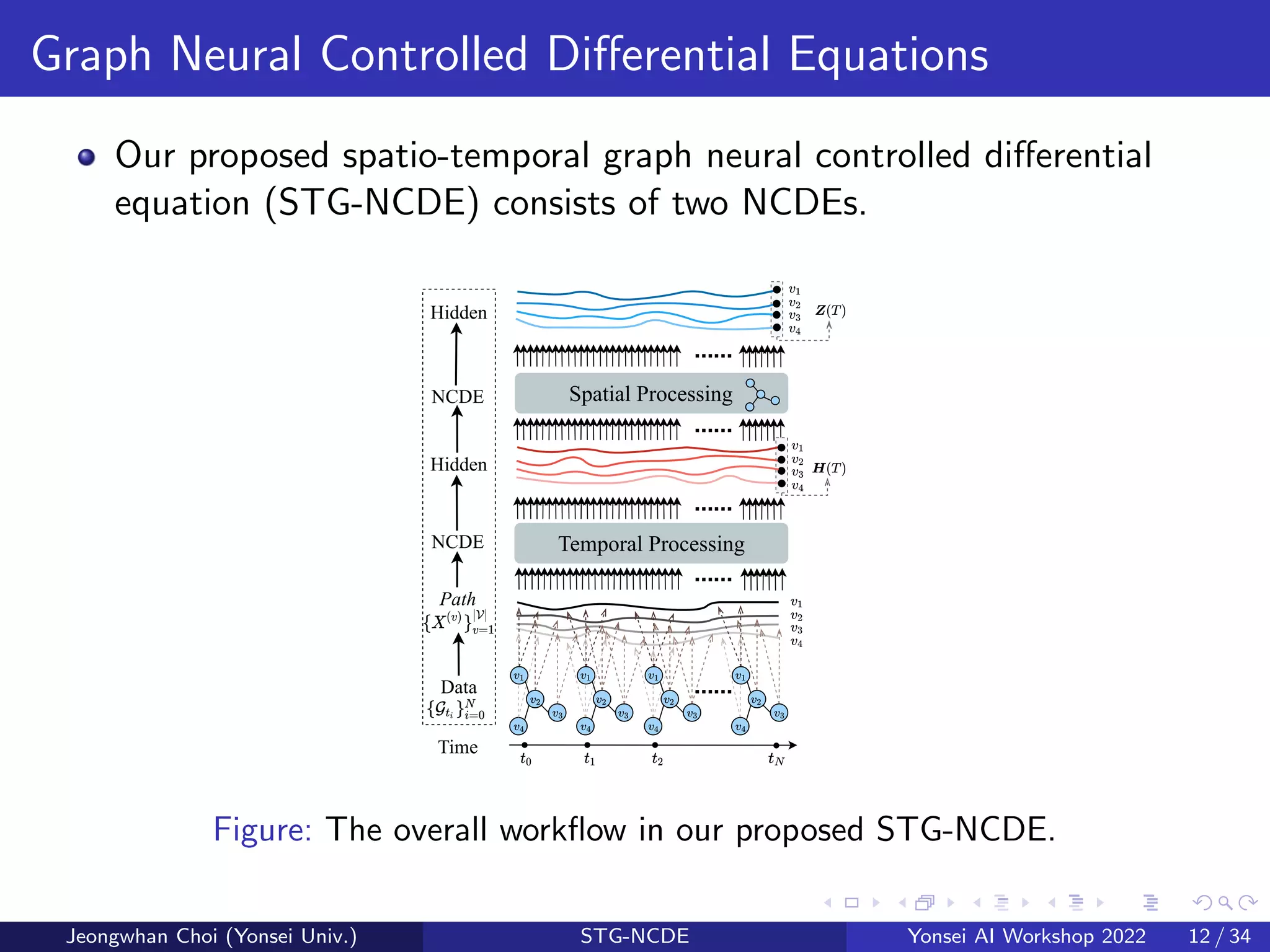

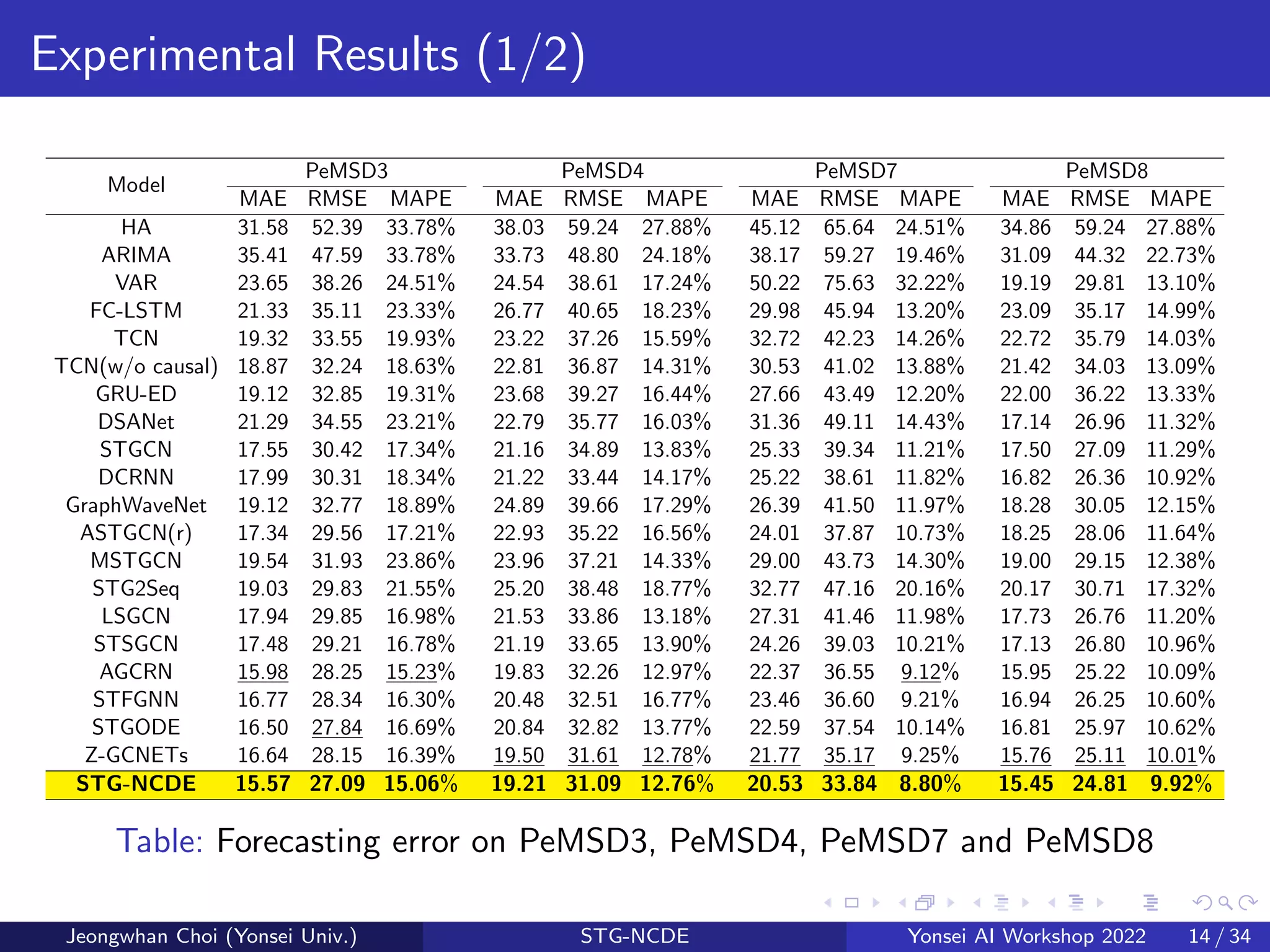

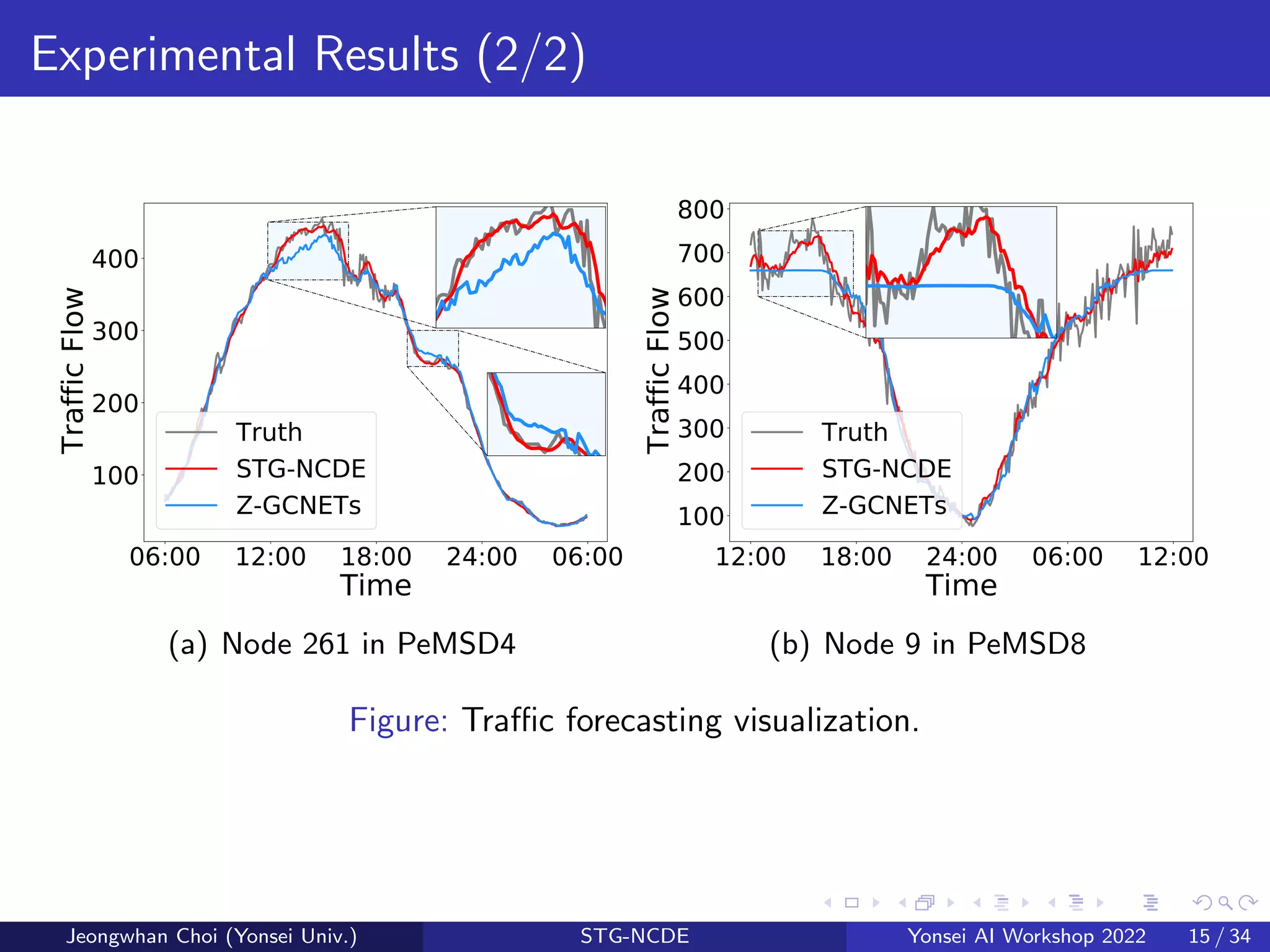

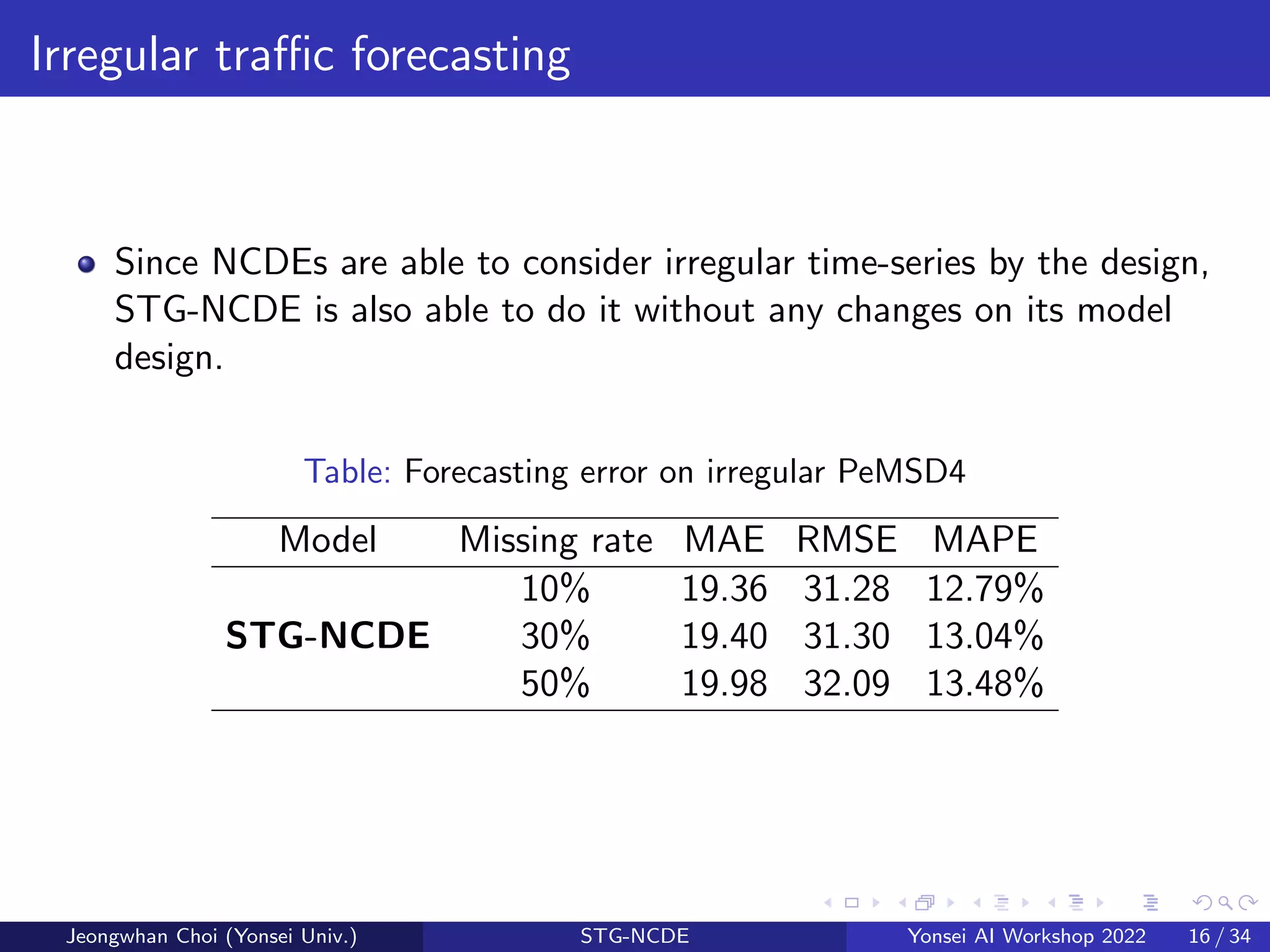

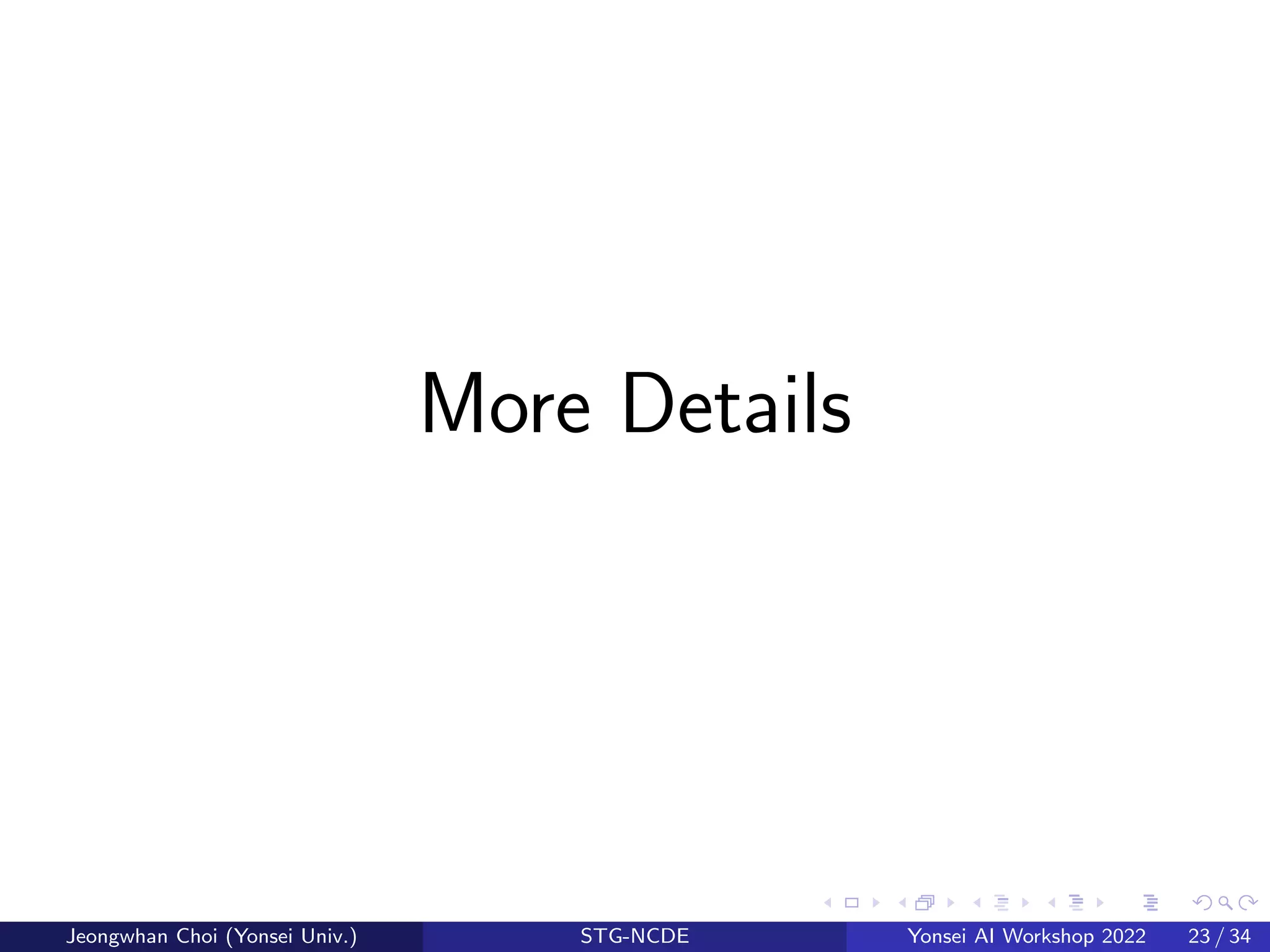

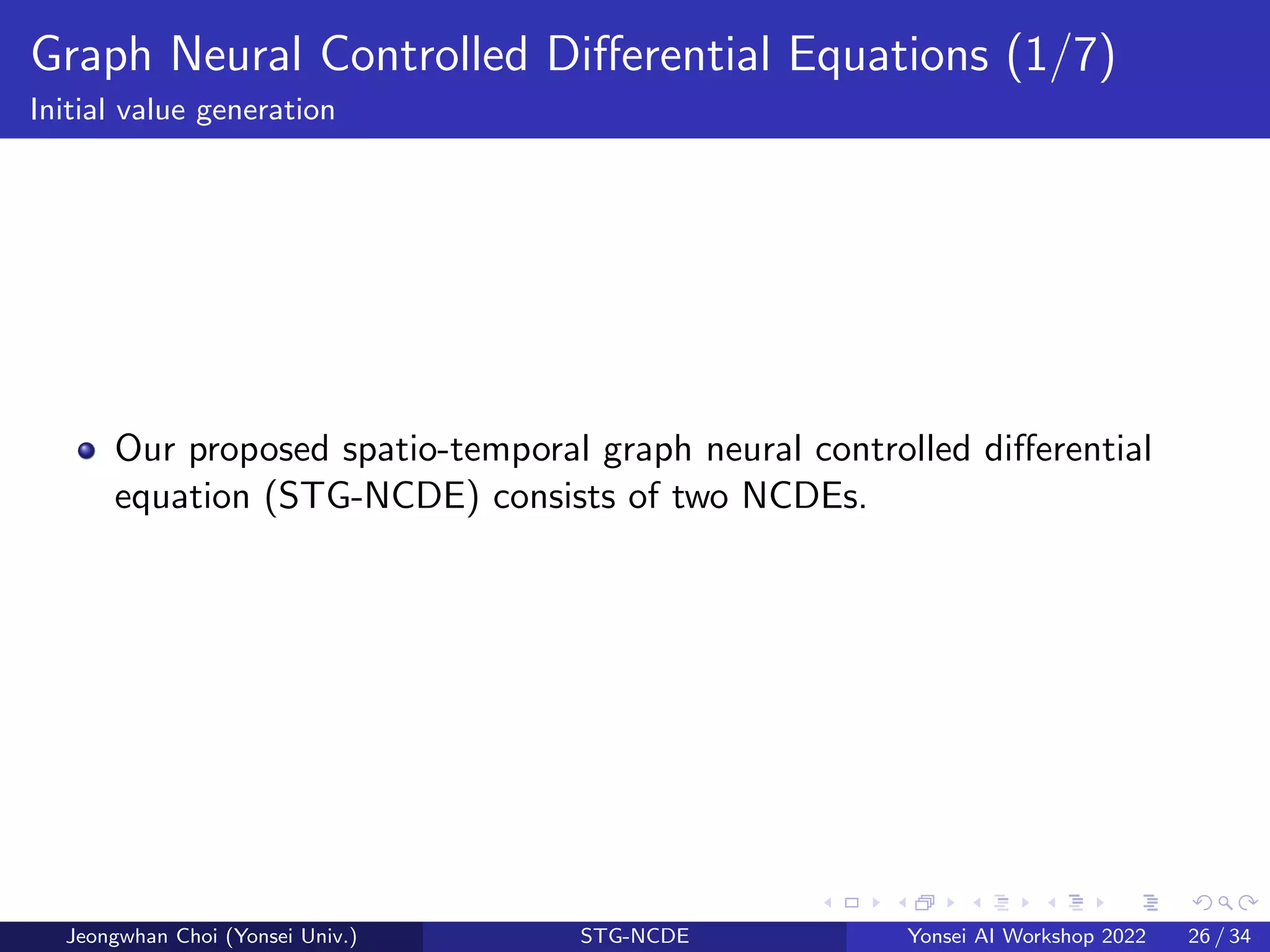

The document presents a novel spatio-temporal graph neural controlled differential equation (STG-NCDE) model that aims to improve traffic forecasting accuracy by integrating neural controlled differential equations with graph convolutional networks. In the reported experiments, STG-NCDE outperforms a range of baseline models across multiple datasets and handles irregular time-series data without any change to its architecture. The authors suggest that this combination of technologies offers promising directions for future research in spatio-temporal processing.

![Motivation (2/3)

| Model | Temporal Dependency | Spatial Dependency |
| --- | --- | --- |
| STGCN [11] | GLU | GCN |
| DCRNN [8] | LSTM | GCN (Pre-defined Graph) |
| GraphWaveNet [10] | TCN | GCN (Adaptive Graph) |
| ASTGCN [5] | 1D CNN + Attention | Attention + GCN (Pre-defined Graph) |
| STSGCN [9] | GCN (Sliding Window) | GCN (Multiple Graph) |
| AGCRN [1] | GRU | GCN (Adaptive Graph) |
| STFGNN [7] | 1D CNN | GCN (Multiple Graph) |
| STGODE [4] | TCN | ODE + GCN (Dynamic Graph) |
| STG-NCDE | NCDE | NCDE + GCN (Adaptive Graph) |

Table: Classification of spatial-temporal modeling methods

Motivation

How to combine the NCDE technology with the graph convolutional processing technology has not yet been studied.

Jeongwhan Choi (Yonsei Univ.) STG-NCDE Yonsei AI Workshop 2022 9 / 34](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-9-2048.jpg)

![Reference (1/3)

[1] Lei Bai et al. “Adaptive Graph Convolutional Recurrent Network for Traffic Forecasting”. In: NeurIPS. Vol. 33. 2020, pp. 17804–17815.

[2] Ricky T. Q. Chen et al. “Neural Ordinary Differential Equations”. In: NeurIPS. 2018.

[3] Yuzhou Chen, Ignacio Segovia-Dominguez, and Yulia R Gel. “Z-GCNETs: Time Zigzags at Graph Convolutional Networks for Time Series Forecasting”. In: ICML. 2021.

[4] Zheng Fang et al. “Spatial-Temporal Graph ODE Networks for Traffic Flow Forecasting”. In: KDD. 2021.

[5] Shengnan Guo et al. “Attention Based Spatial-Temporal Graph Convolutional Networks for Traffic Flow Forecasting”. In: AAAI. July 2019. doi: 10.1609/aaai.v33i01.3301922.

[6] Patrick Kidger et al. “Neural Controlled Differential Equations for Irregular Time Series”. In: NeurIPS. 2020.

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-19-2048.jpg)

![Reference (2/3)

[7] Mengzhang Li and Zhanxing Zhu. “Spatial-Temporal Fusion Graph Neural Networks for Traffic Flow Forecasting”. In: AAAI. May 2021.

[8] Yaguang Li et al. “Diffusion Convolutional Recurrent Neural Network: Data-Driven Traffic Forecasting”. In: ICLR. 2018.

[9] Chao Song et al. “Spatial-Temporal Synchronous Graph Convolutional Networks: A New Framework for Spatial-Temporal Network Data Forecasting”. In: AAAI. Apr. 2020. doi: 10.1609/aaai.v34i01.5438.

[10] Zonghan Wu et al. “Graph WaveNet for Deep Spatial-Temporal Graph Modeling”. In: IJCAI. July 2019, pp. 1907–1913.

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-20-2048.jpg)

![Reference (3/3)

[11] Bing Yu, Haoteng Yin, and Zhanxing Zhu. “Spatio-Temporal Graph Convolutional Networks: A Deep Learning Framework for Traffic Forecasting”. In: IJCAI. July 2018. doi: 10.24963/ijcai.2018/505. url: https://doi.org/10.24963/ijcai.2018/505.

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-21-2048.jpg)

![Neural Ordinary Differential Equations (NODEs)

NODEs [2] introduced how to model residual neural networks (ResNets) continuously with differential equations.

A NODE can be written as follows:

$$z(T) = z(0) + \int_0^T f(z(t), t; \theta_f)\,dt, \qquad (1)$$

where the neural network $f$ parameterized by $\theta_f$ approximates $\frac{dz(t)}{dt}$.

Figure: ResNet and NODE figures from [2]

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-24-2048.jpg)
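To make the link between Eq. (1) and ResNets concrete, the following is a minimal sketch that integrates a NODE with a fixed-step explicit Euler solver. The solver choice, the function names, and the linear vector field standing in for a trained network are all assumptions made for illustration; they do not come from the slides.

```python
import math


def f(z, t, theta):
    """Hypothetical vector field dz/dt = theta * z (a stand-in for a neural network)."""
    return [theta * zi for zi in z]


def odeint_euler(f, z0, t0, t1, steps, theta):
    """Approximate z(t1) = z(t0) + integral of f(z(t), t; theta) dt with explicit Euler."""
    z = list(z0)
    h = (t1 - t0) / steps
    t = t0
    for _ in range(steps):
        dz = f(z, t, theta)
        # One Euler update: z <- z + h * f(z, t). With h = 1 this is z + f(z),
        # which has the same form as a residual block.
        z = [zi + h * dzi for zi, dzi in zip(z, dz)]
        t += h
    return z


# Many small steps approach the continuous solution z(0) * exp(theta * T).
z_T = odeint_euler(f, [1.0, 2.0], 0.0, 1.0, 1000, theta=-0.5)
exact = [1.0 * math.exp(-0.5), 2.0 * math.exp(-0.5)]

# A single step of size 1 behaves like one residual layer.
z_resnet_like = odeint_euler(f, [1.0], 0.0, 1.0, 1, theta=-0.5)
```

In practice an adaptive solver would normally replace the fixed-step loop; Euler is used here only because it exposes the residual-update form directly.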

![Neural controlled differential equations (NCDEs)

NCDEs [6] generalize RNNs in a continuous manner and can be written as follows:

$$
\begin{aligned}
z(T) &= z(0) + \int_0^T f(z(t); \theta_f)\,dX(t) \qquad (2)\\
&= z(0) + \int_0^T f(z(t); \theta_f)\,\frac{dX(t)}{dt}\,dt. \qquad (3)
\end{aligned}
$$

The original CDE formulation in Eq. (2) reduces to Eq. (3), where $\frac{dz(t)}{dt}$ is approximated by $f(z(t); \theta_f)\frac{dX(t)}{dt}$.

Figure: The overall workflow of the original NCDE for processing time-series.

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-26-2048.jpg)
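The NCDE integral in Eqs. (2) and (3) can be sketched as follows. This assumes the path X is a piecewise-linear interpolation of the observations, so that over each interval the product of dX/dt and dt equals the increment of X, and it takes one explicit Euler step per interval. The function `f`, the weights `theta`, and the sample values are hypothetical placeholders for a trained network and real data.

```python
import math


def f(z, theta):
    """Hypothetical CDE function mapping z (length d_z) to a d_z x d_x matrix.

    Entry (i, j) is tanh(theta[i][j] * z[i]); a trained network would go here.
    """
    return [[math.tanh(w * zi) for w in row] for zi, row in zip(z, theta)]


def ncde_euler(f, z0, path, theta):
    """Approximate z(T) = z(0) + integral of f(z(t); theta) dX(t).

    `path` lists the observations X(t_0), ..., X(t_N); X is assumed to be
    linear between consecutive observations.
    """
    z = list(z0)
    for x_prev, x_next in zip(path, path[1:]):
        # Increment of the control path over this interval.
        dx = [b - a for a, b in zip(x_prev, x_next)]
        fz = f(z, theta)
        # Matrix-vector product f(z) @ dX drives the hidden state.
        z = [zi + sum(fij * dxj for fij, dxj in zip(row, dx))
             for zi, row in zip(z, fz)]
    return z


# Each observation is (time, value). Carrying time as a channel of X lets
# unevenly spaced samples enter through the path increments.
path = [[0.0, 1.0], [0.5, 1.2], [2.0, 0.7]]
theta = [[0.3, -0.2], [0.1, 0.4]]
z_T = ncde_euler(f, [0.0, 1.0], path, theta)
```

Because the hidden state is driven by increments of X rather than by a fixed time step, the same loop runs unchanged on irregularly sampled data.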

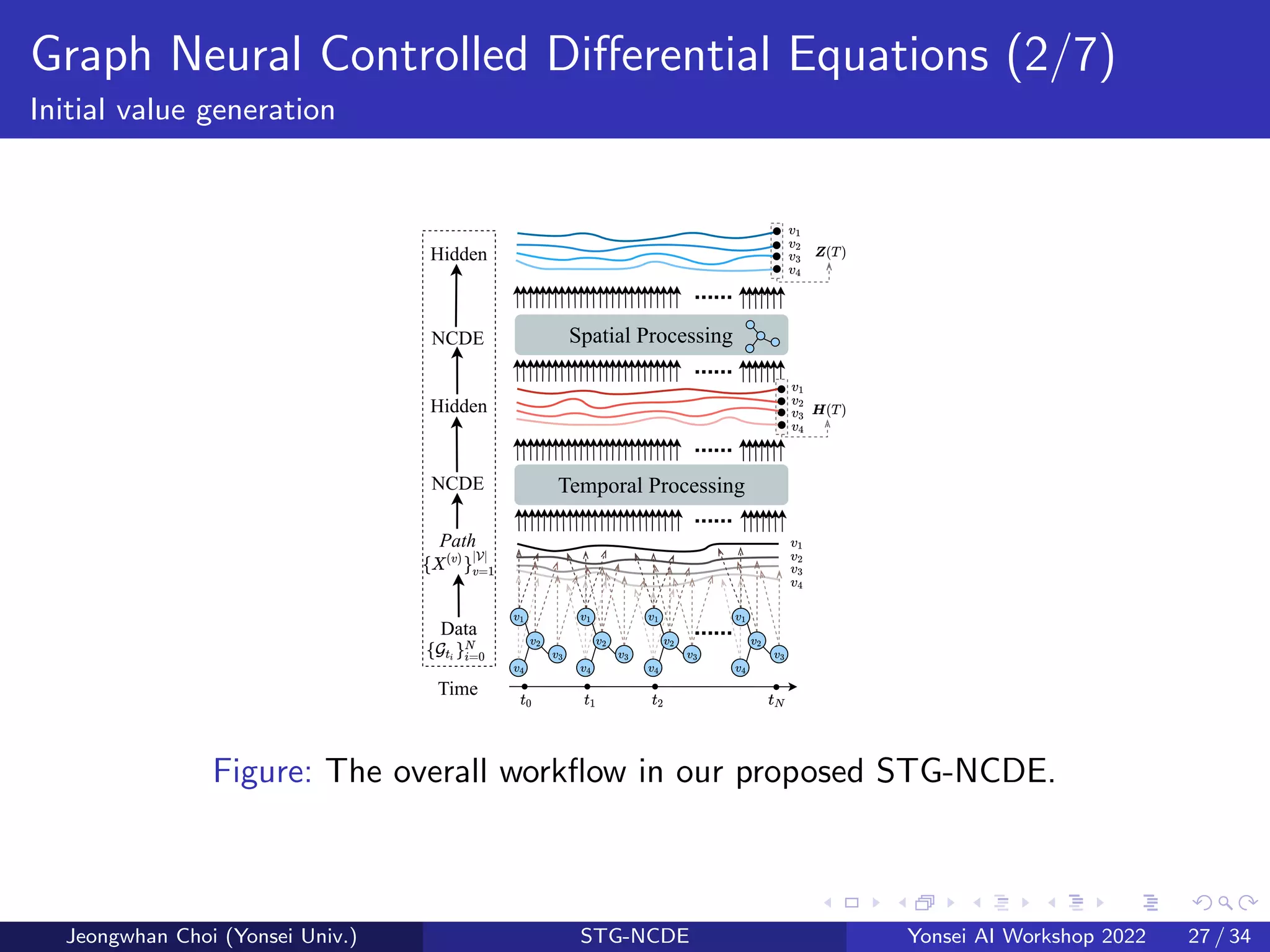

![Graph Neural Controlled Differential Equations (3/7)

Initial value generation

Figure: STG-NCDE (panels: Temporal Processing, Spatial Processing)

The first NCDE for the temporal processing can be written as follows:

$$H(T) = H(0) + \int_0^T f(H(t); \theta_f)\,\frac{dX(t)}{dt}\,dt, \qquad (4)$$

where $H^{(v)}(t)$ is a hidden trajectory (over time $t \in [0, T]$) of the temporal information of node $v$, and $X(t)$ is a matrix whose $v$-th row is $X^{(v)}$.

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-29-2048.jpg)

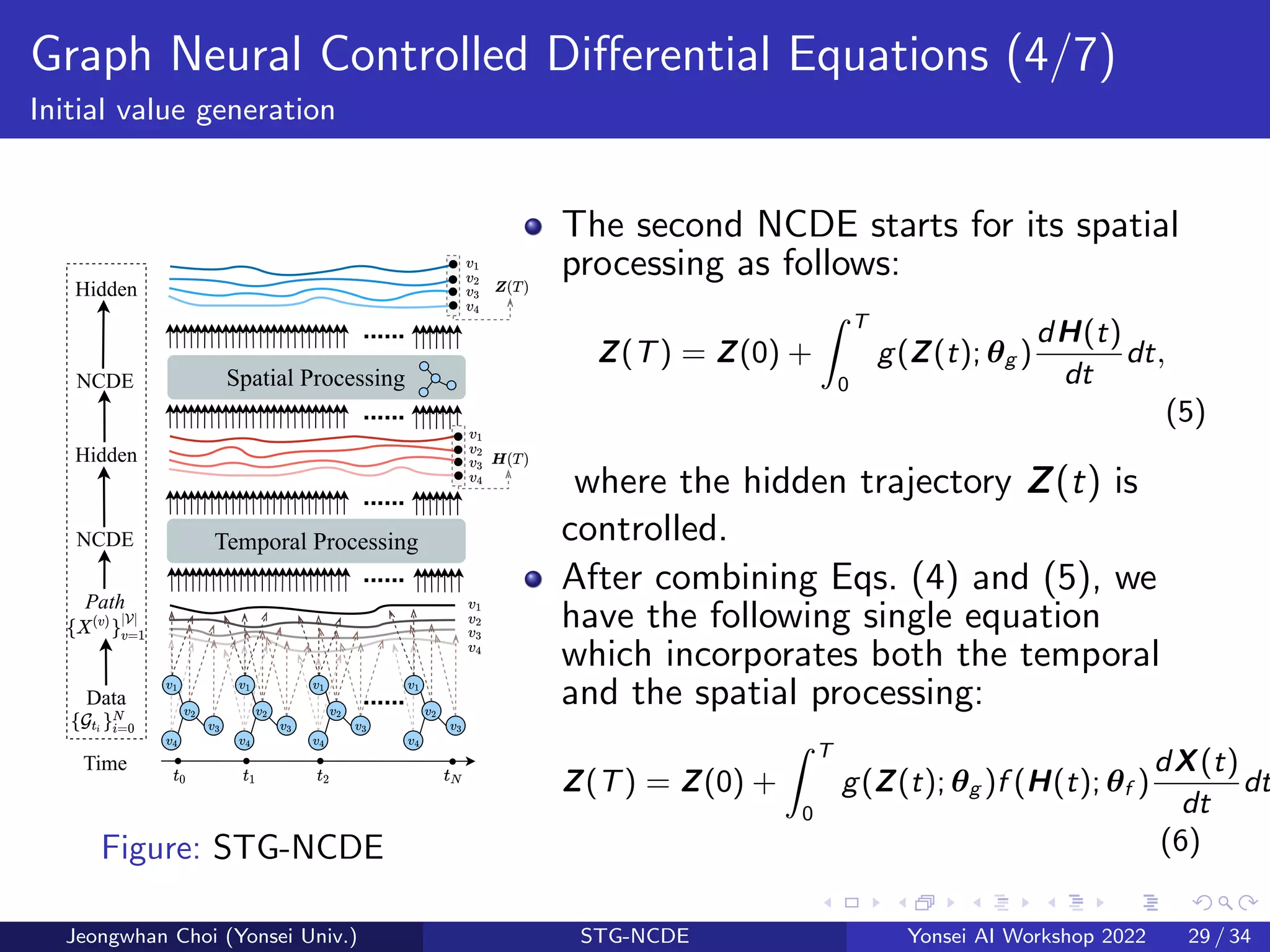

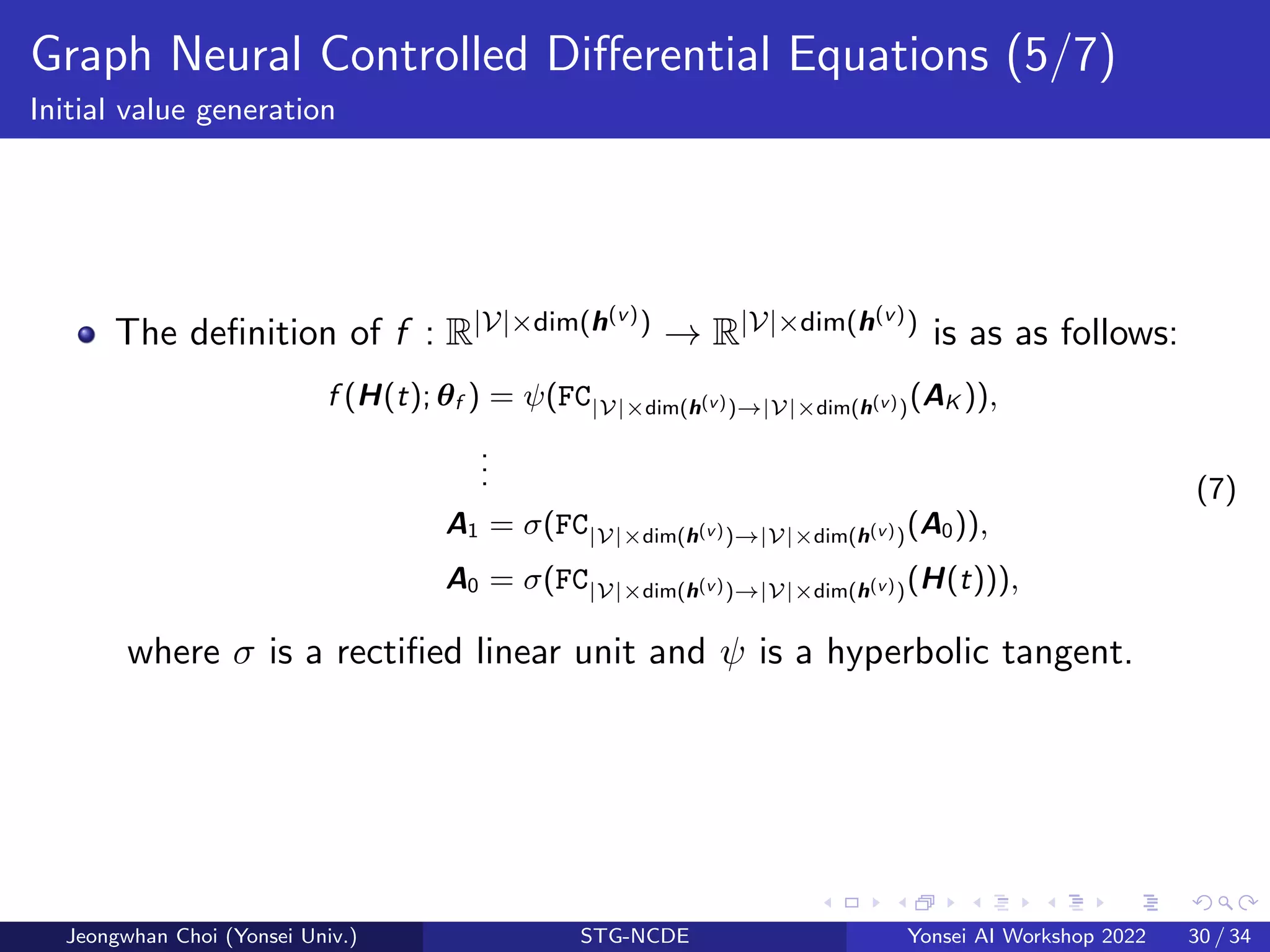

![Graph Neural Controlled Differential Equations (6/7)

Initial value generation

The definition of $g : \mathbb{R}^{|V| \times \dim(z^{(v)})} \to \mathbb{R}^{|V| \times \dim(z^{(v)})}$ is as follows:

$$
\begin{aligned}
g(Z(t); \theta_g) &= \psi\big(\mathrm{FC}_{|V| \times \dim(z^{(v)}) \to |V| \times \dim(z^{(v)})}(B_1)\big), \qquad (8)\\
B_1 &= \big(I + \phi(\sigma(E \cdot E^{\top}))\big) B_0 W_{spatial}, \qquad (9)\\
B_0 &= \sigma\big(\mathrm{FC}_{|V| \times \dim(z^{(v)}) \to |V| \times \dim(z^{(v)})}(Z(t))\big), \qquad (10)
\end{aligned}
$$

where $I$ is the $|V| \times |V|$ identity matrix, $\phi$ is a softmax activation, $E \in \mathbb{R}^{|V| \times C}$ is a trainable node-embedding matrix, and $W_{spatial}$ is a trainable weight transformation matrix.

$\phi(\sigma(E \cdot E^{\top}))$ corresponds to the normalized adjacency matrix $D^{-\frac{1}{2}} A D^{-\frac{1}{2}}$, where $A = \sigma(E \cdot E^{\top})$ and the softmax activation plays the role of normalizing the adaptive adjacency matrix [10, 1].

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-32-2048.jpg)
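The adaptive adjacency term and the mixing step of Eq. (9) can be sketched in plain Python as below. Two points are assumptions, since the slide does not state them: σ is taken to be ReLU, and the softmax ϕ is applied row-wise. The helper names and the toy matrices are hypothetical, and the fully connected layers of Eqs. (8) and (10) are omitted.

```python
import math


def softmax(row):
    """Numerically stable softmax over one row."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Plain matrix product of two lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def adaptive_adjacency(E):
    """phi(sigma(E E^T)), assuming sigma = ReLU and a row-wise softmax phi."""
    E_t = [list(col) for col in zip(*E)]
    scores = matmul(E, E_t)
    return [softmax([max(0.0, v) for v in row]) for row in scores]


def spatial_mix(E, B0, W_spatial):
    """B1 = (I + phi(sigma(E E^T))) B0 W_spatial, following Eq. (9)."""
    A = adaptive_adjacency(E)
    n = len(A)
    I_plus_A = [[A[i][j] + (1.0 if i == j else 0.0) for j in range(n)]
                for i in range(n)]
    return matmul(matmul(I_plus_A, B0), W_spatial)


# Toy example: 3 nodes, embedding size C = 2, hidden size 2.
E = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
B0 = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
W_spatial = [[1.0, 0.0], [0.0, 1.0]]
A = adaptive_adjacency(E)
B1 = spatial_mix(E, B0, W_spatial)
```

Each row of `A` sums to one, which is the sense in which the softmax normalizes the learned adjacency; no pre-defined graph is needed because `E` is the only input.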

![Datasets

We use six real-world traffic datasets that were widely used in previous studies [11, 5, 4, 3, 9].

| Dataset | Nodes ($\lvert V \rvert$) | Time Steps | Time Range | Type |
| --- | --- | --- | --- | --- |
| PeMSD3 | 358 | 26,208 | 09/2018 - 11/2018 | Volume |
| PeMSD4 | 307 | 16,992 | 01/2018 - 02/2018 | Volume |
| PeMSD7 | 883 | 28,224 | 05/2017 - 08/2017 | Volume |
| PeMSD8 | 170 | 17,856 | 07/2016 - 08/2016 | Volume |
| PeMSD7(M) | 228 | 12,672 | 05/2012 - 06/2012 | Velocity |
| PeMSD7(L) | 1,026 | 12,672 | 05/2012 - 06/2012 | Velocity |

Table: The summary of the datasets used in our work. We predict either traffic volume (i.e., # of vehicles) or velocity.

](https://image.slidesharecdn.com/dhcx9l1rc2acdkwbnqyx-aaai22-workshop-221019003243-979010ab/75/Yonsei-AI-Workshop-2022-Graph-Neural-Controlled-Differential-Equations-for-Traffic-Forecasting-34-2048.jpg)