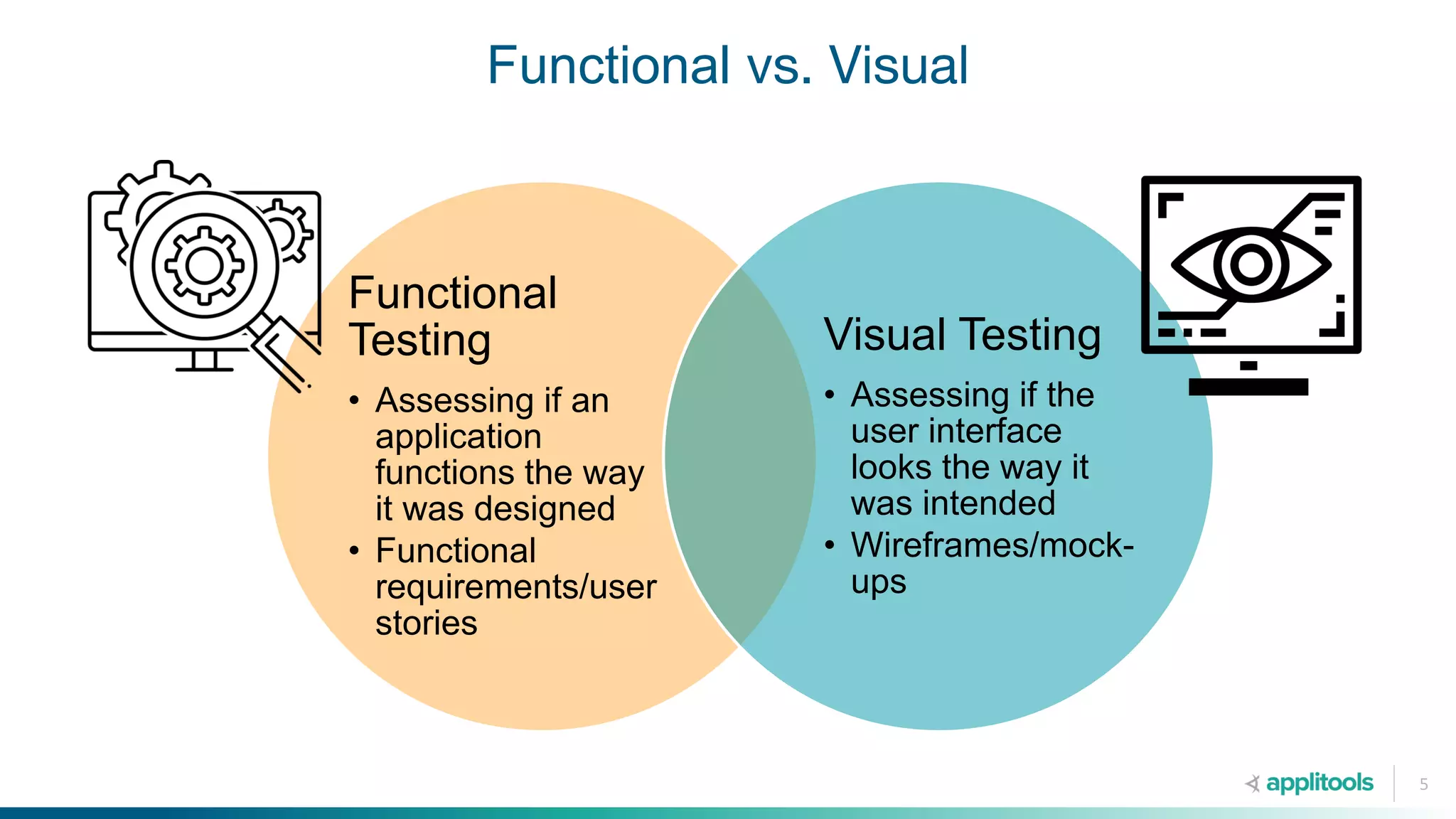

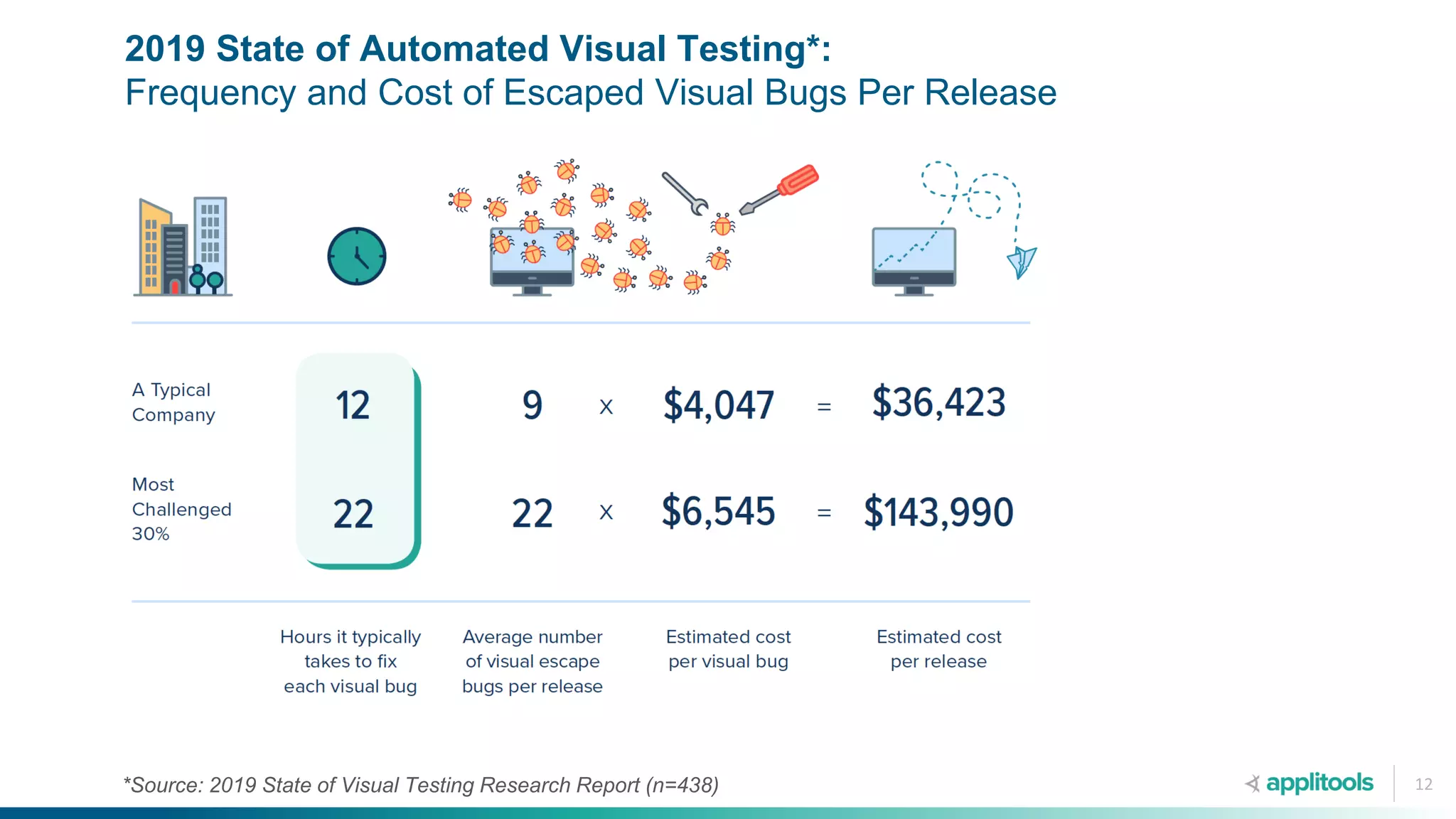

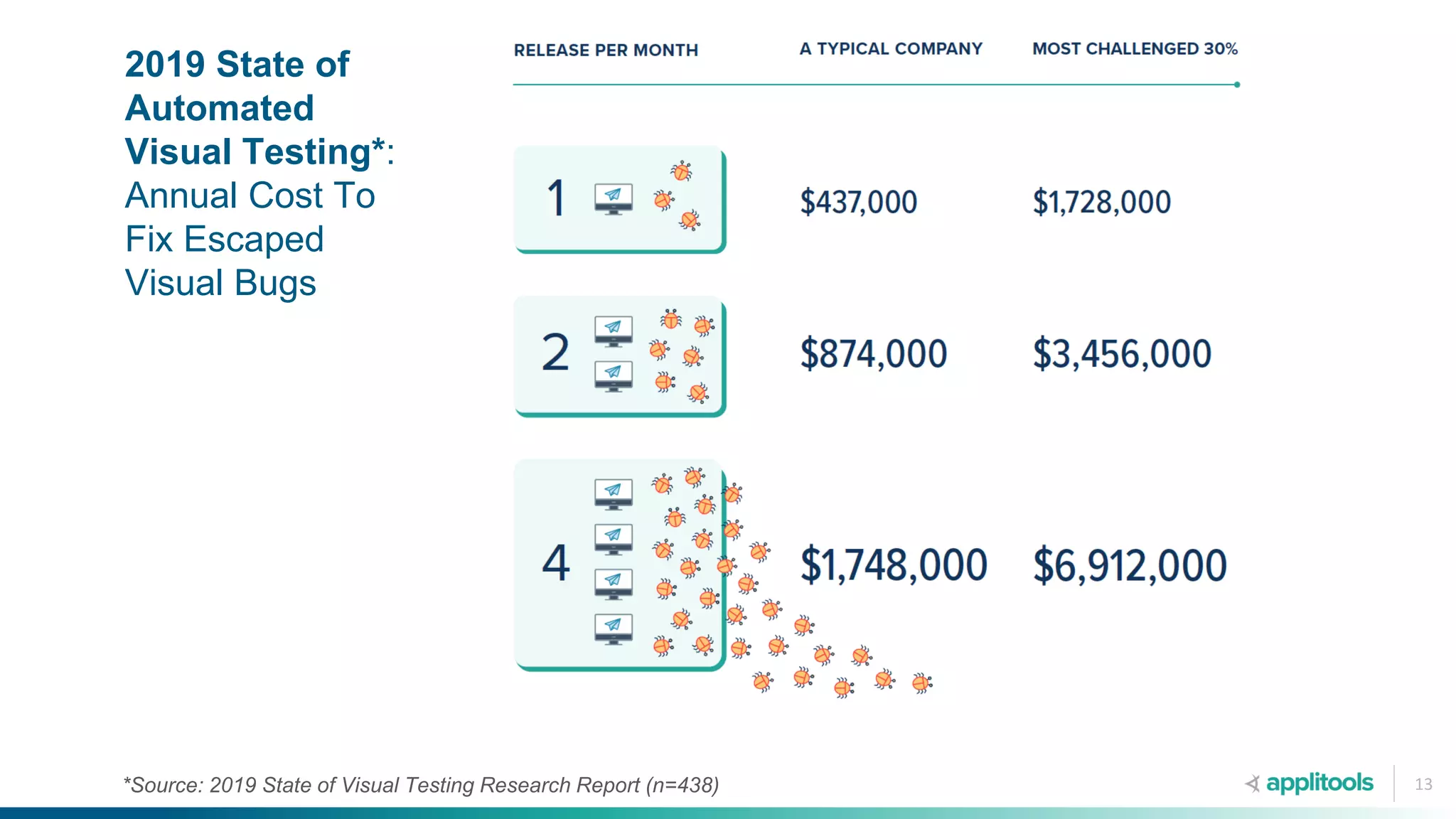

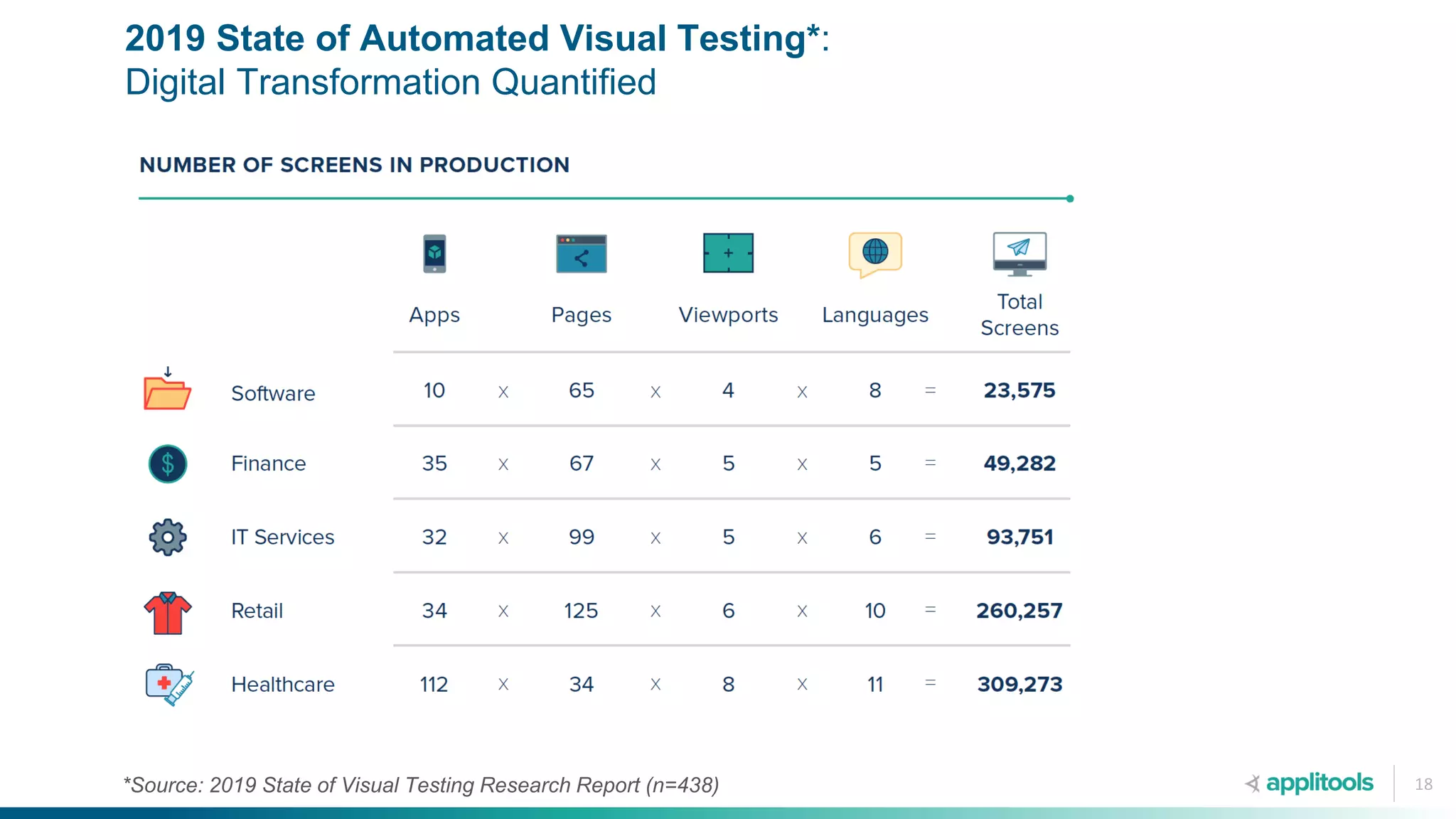

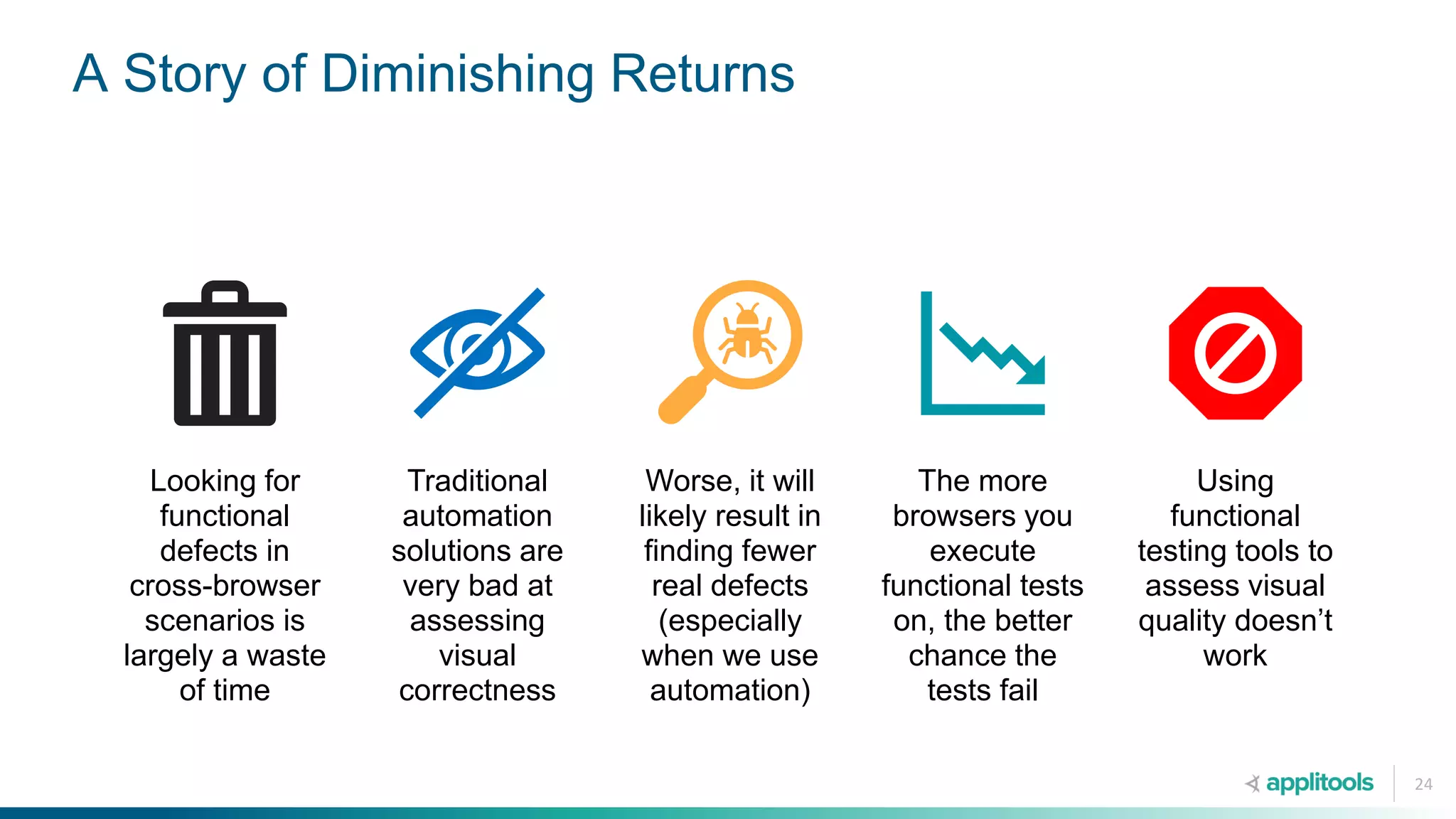

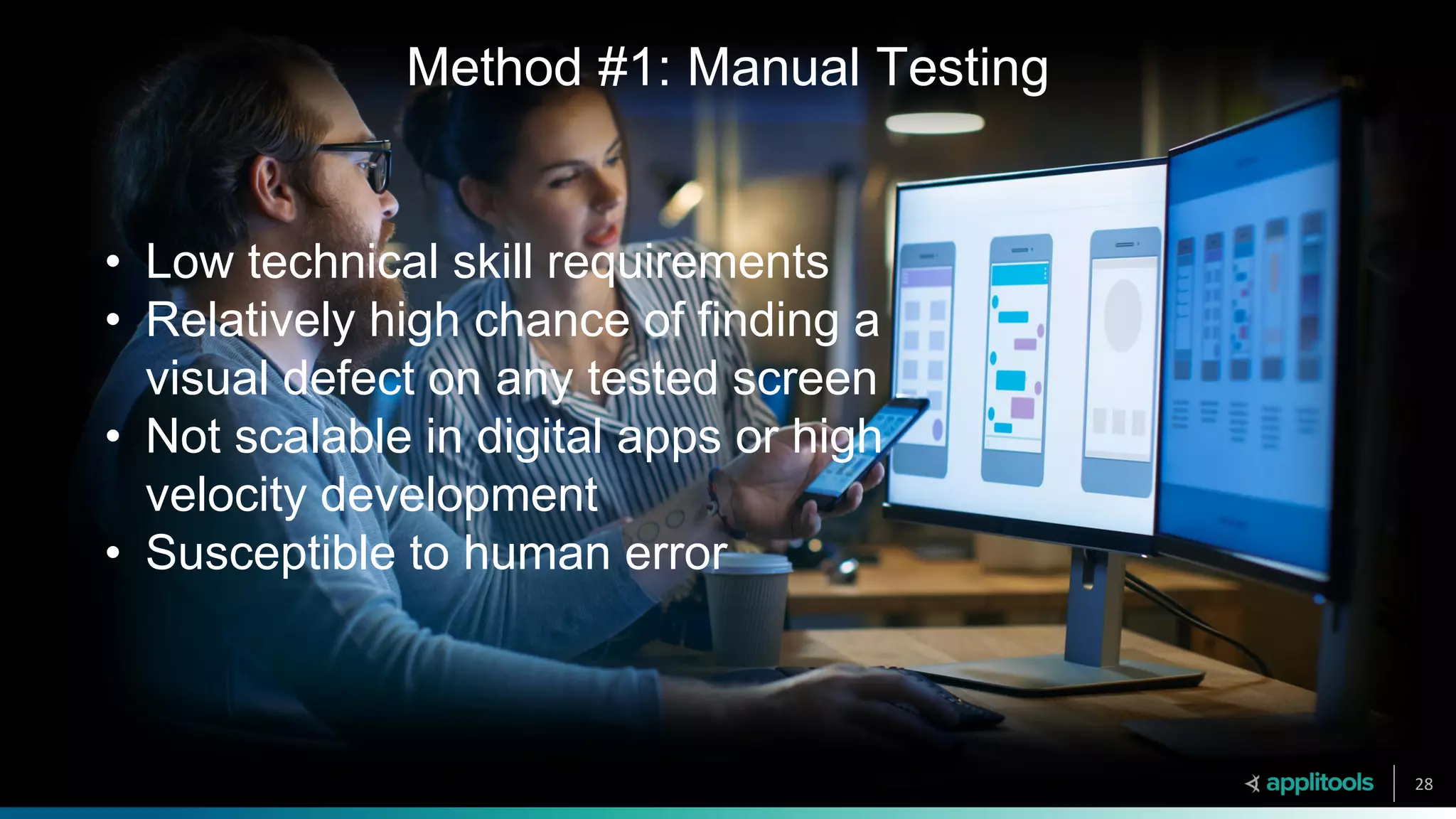

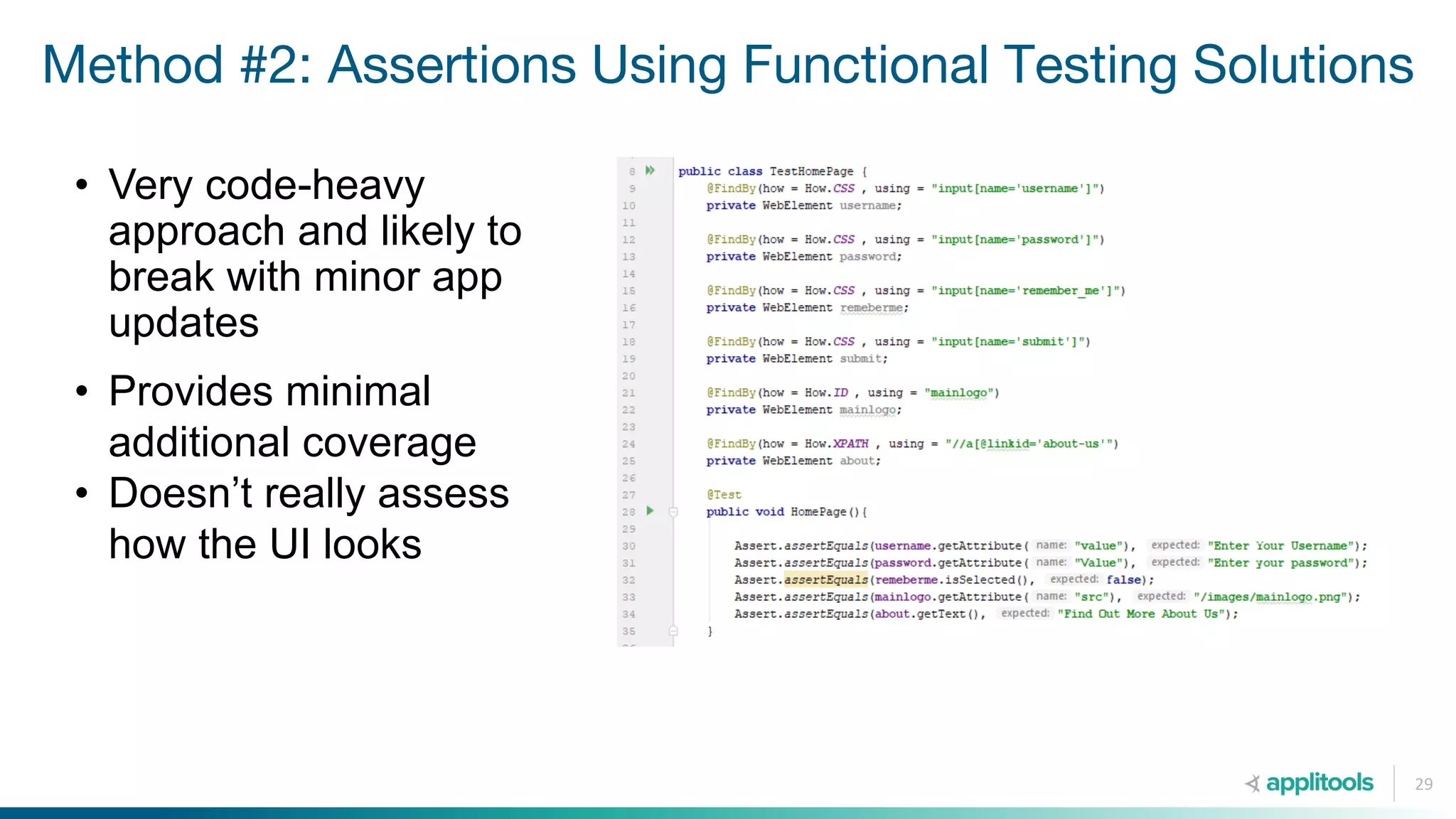

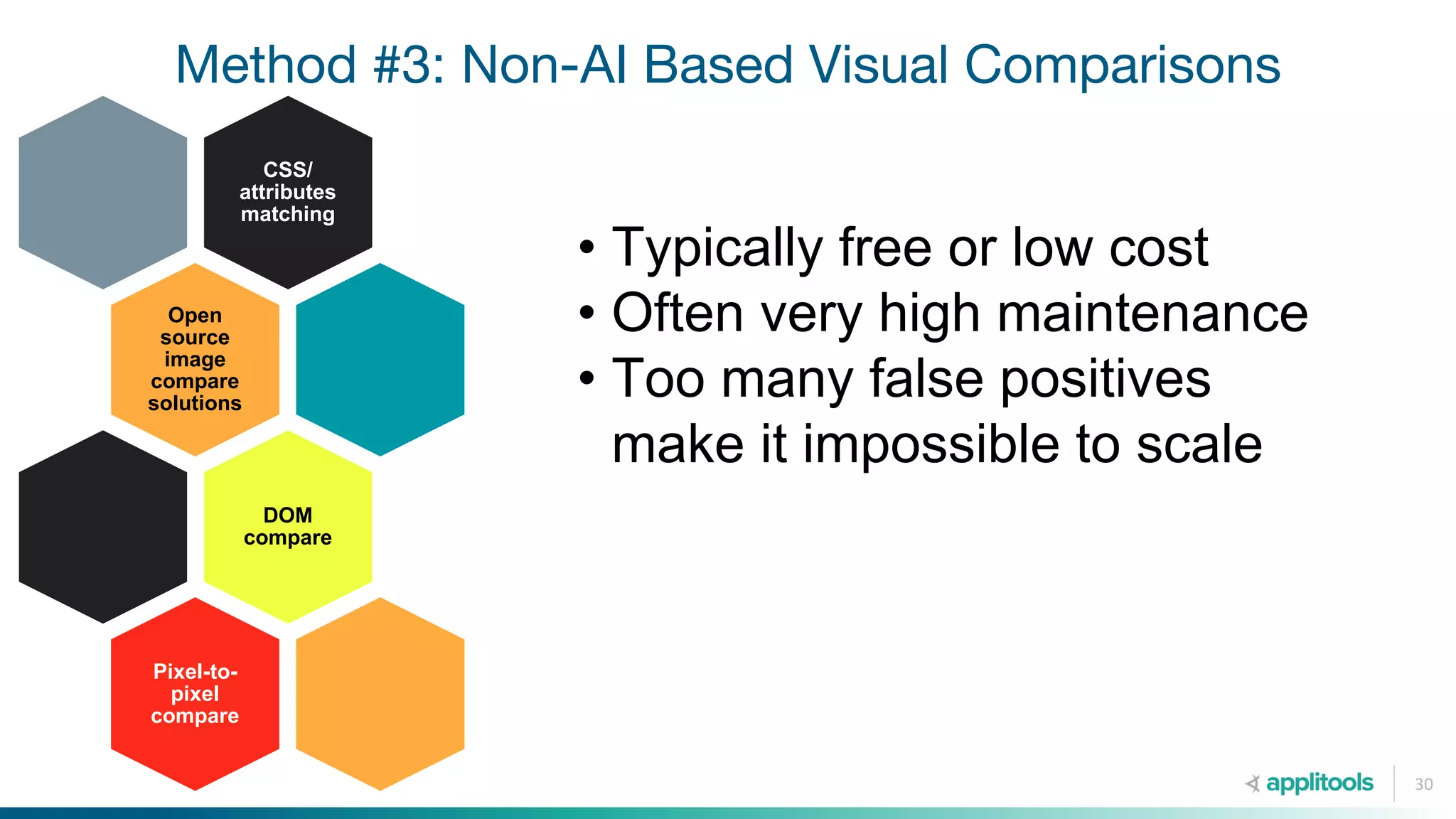

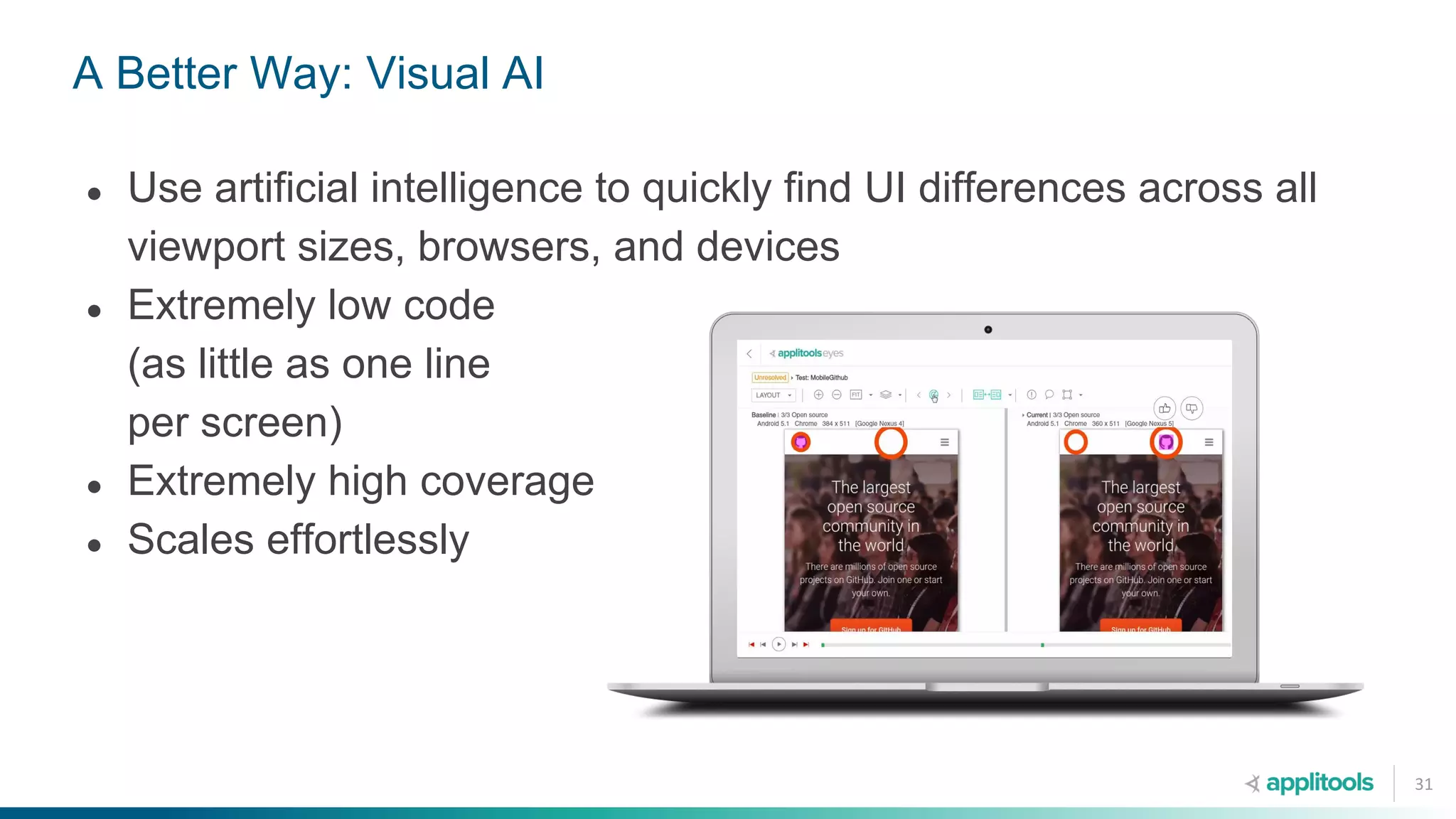

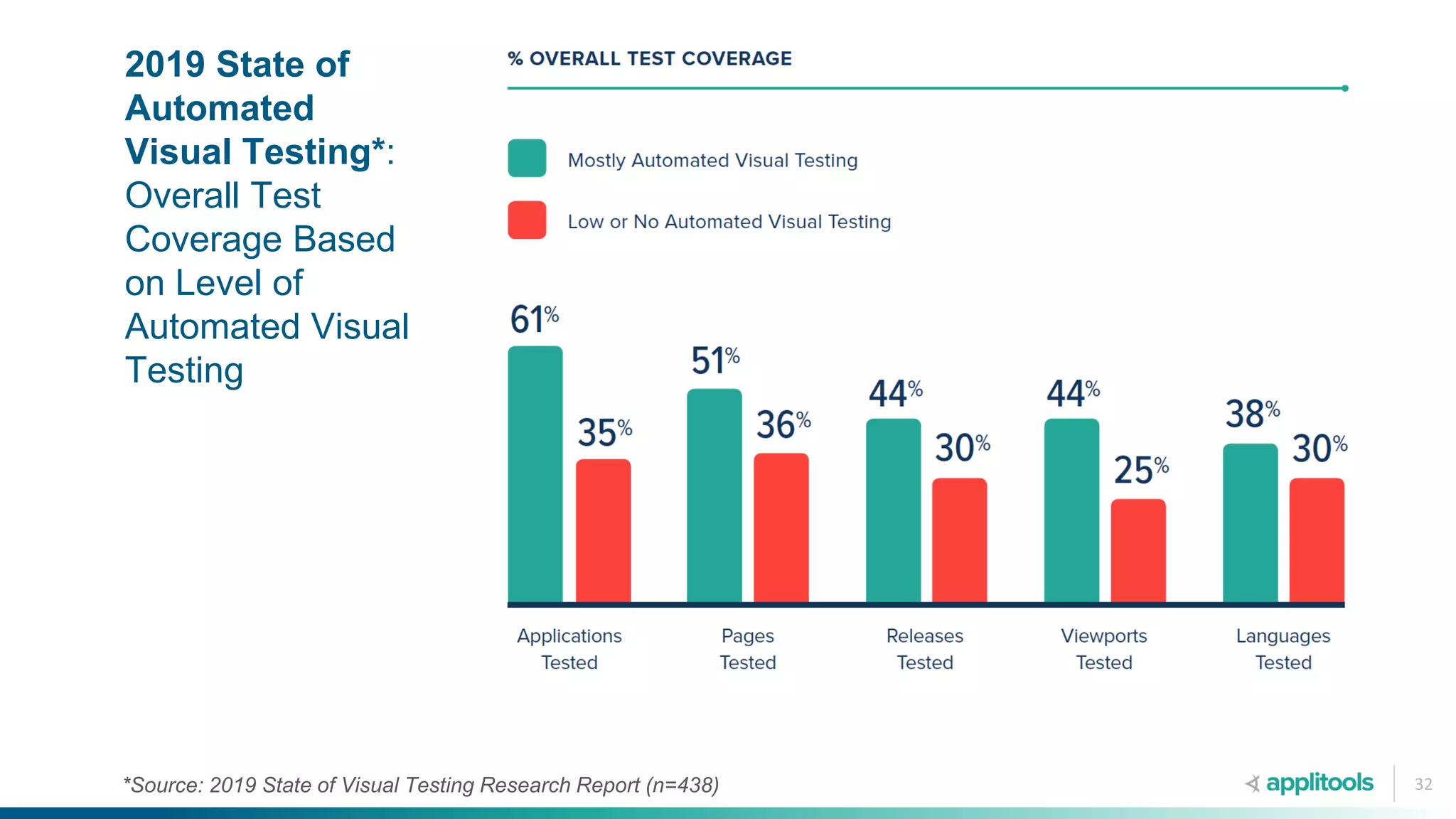

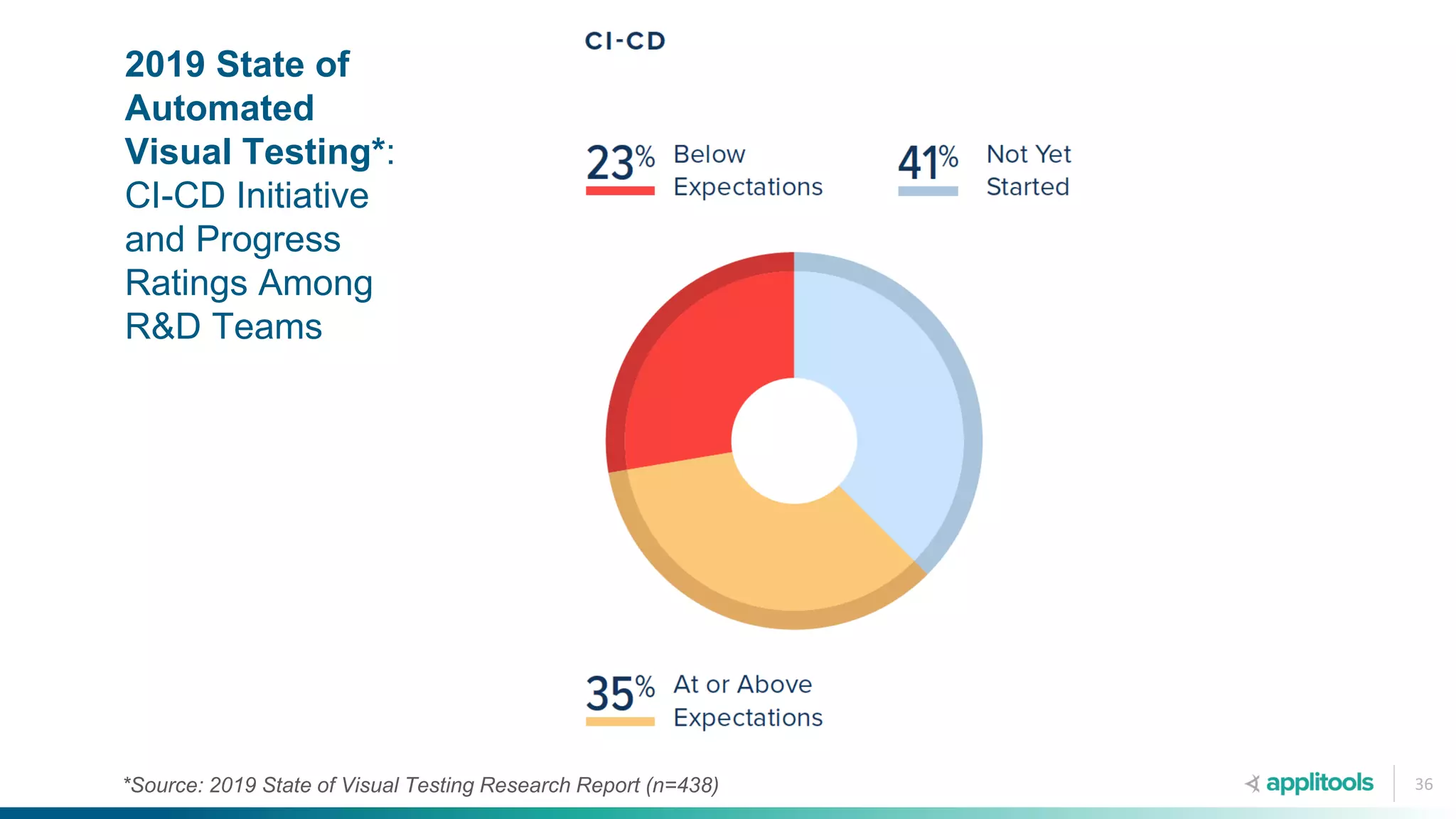

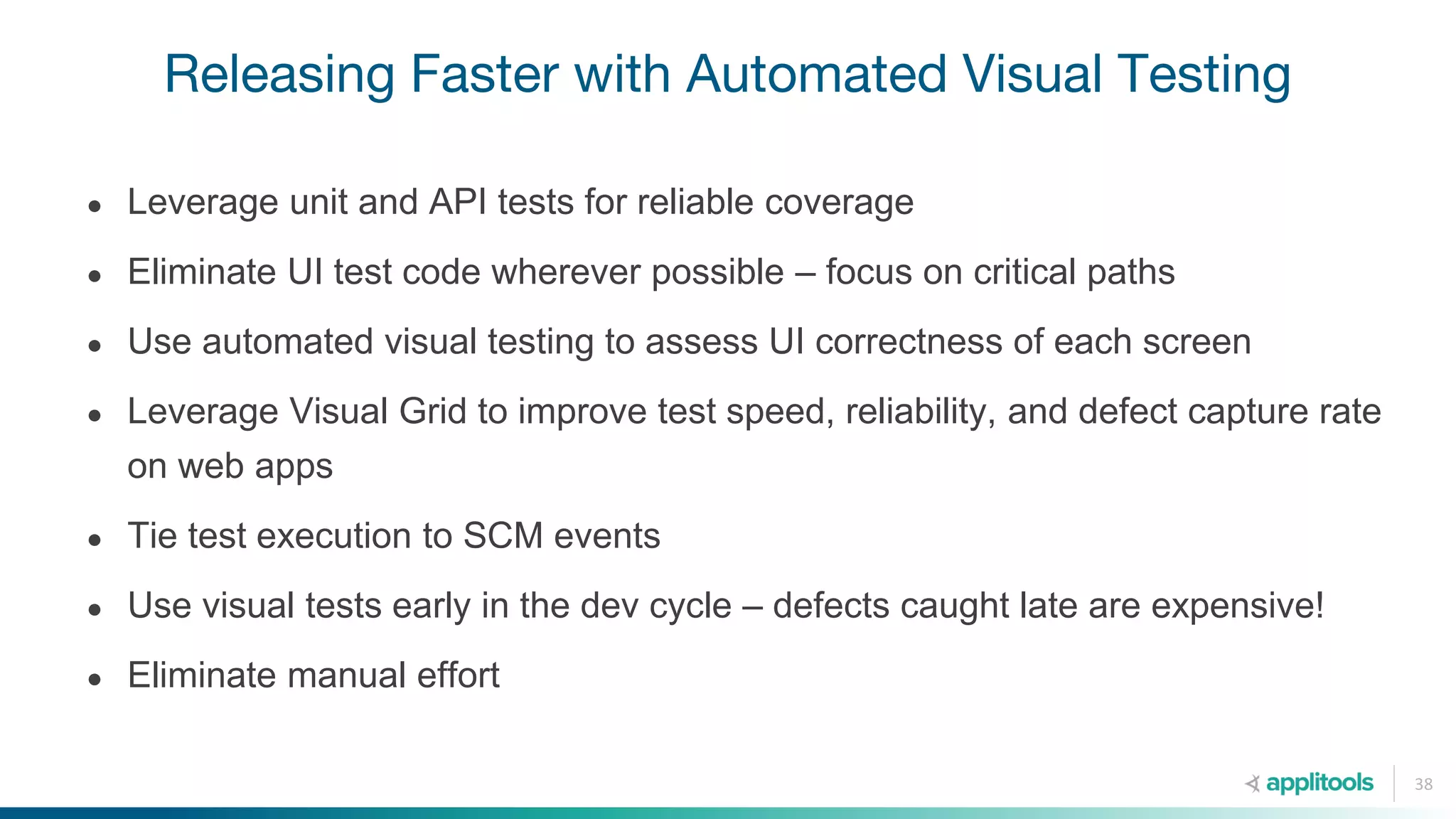

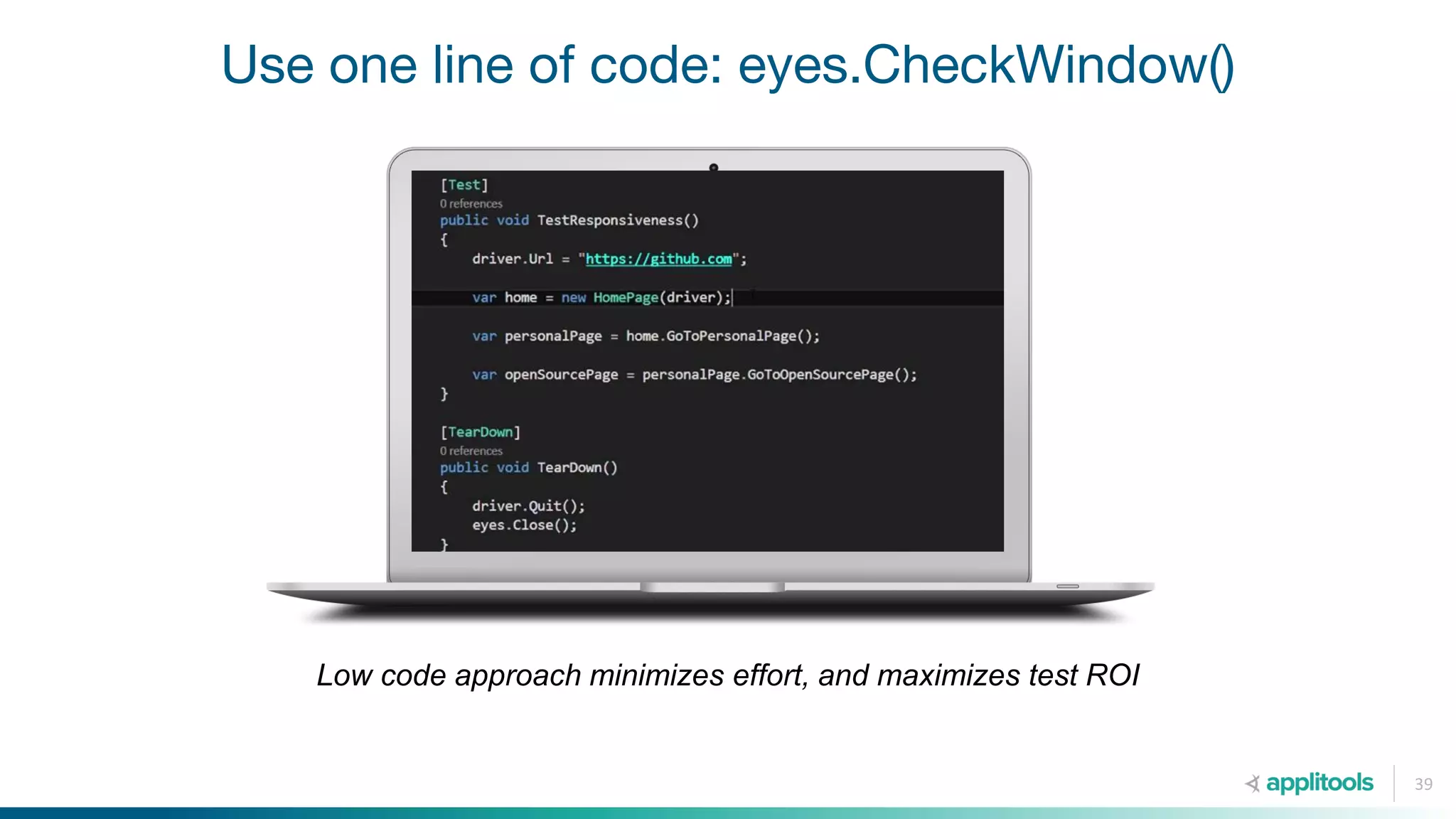

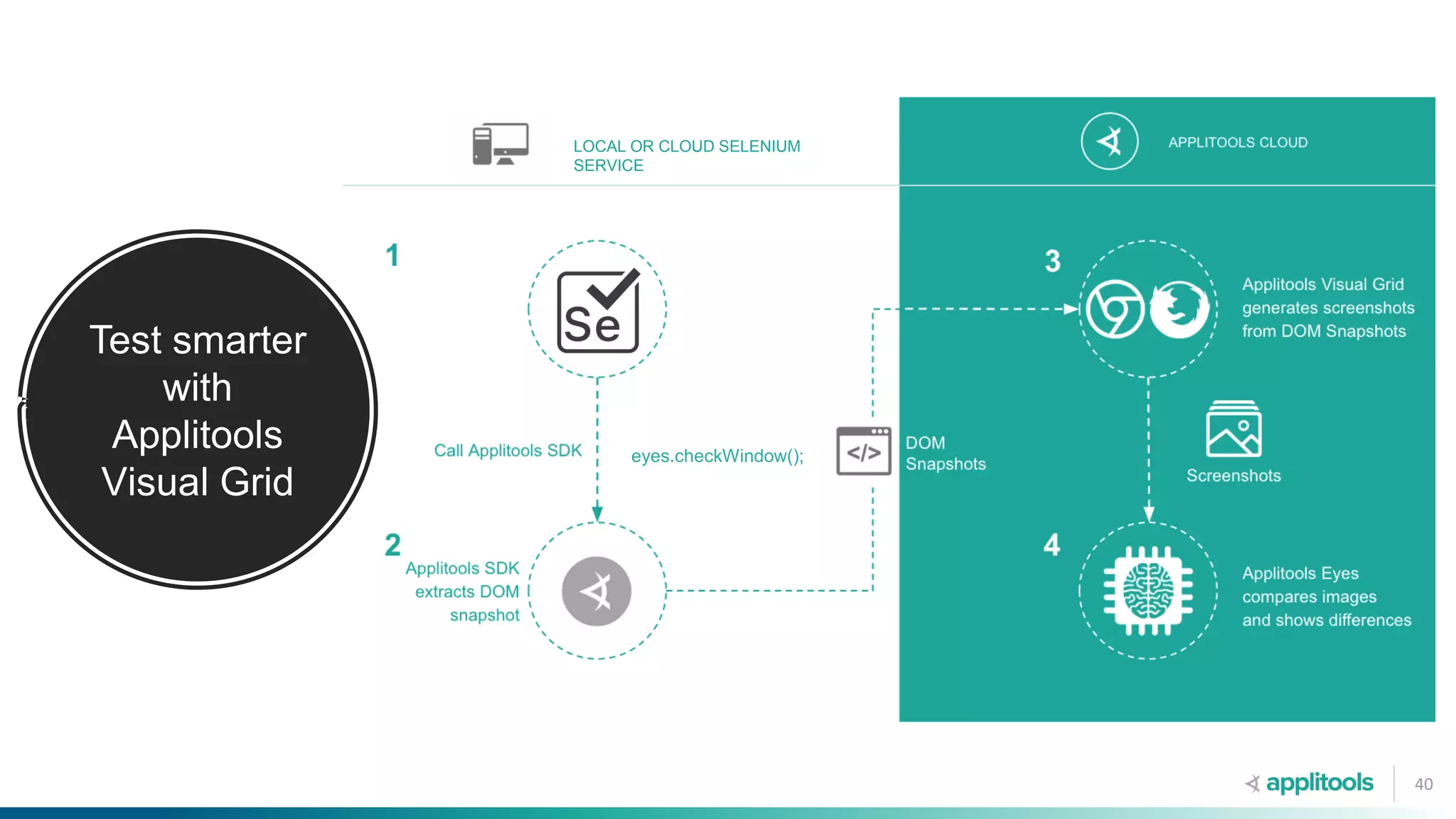

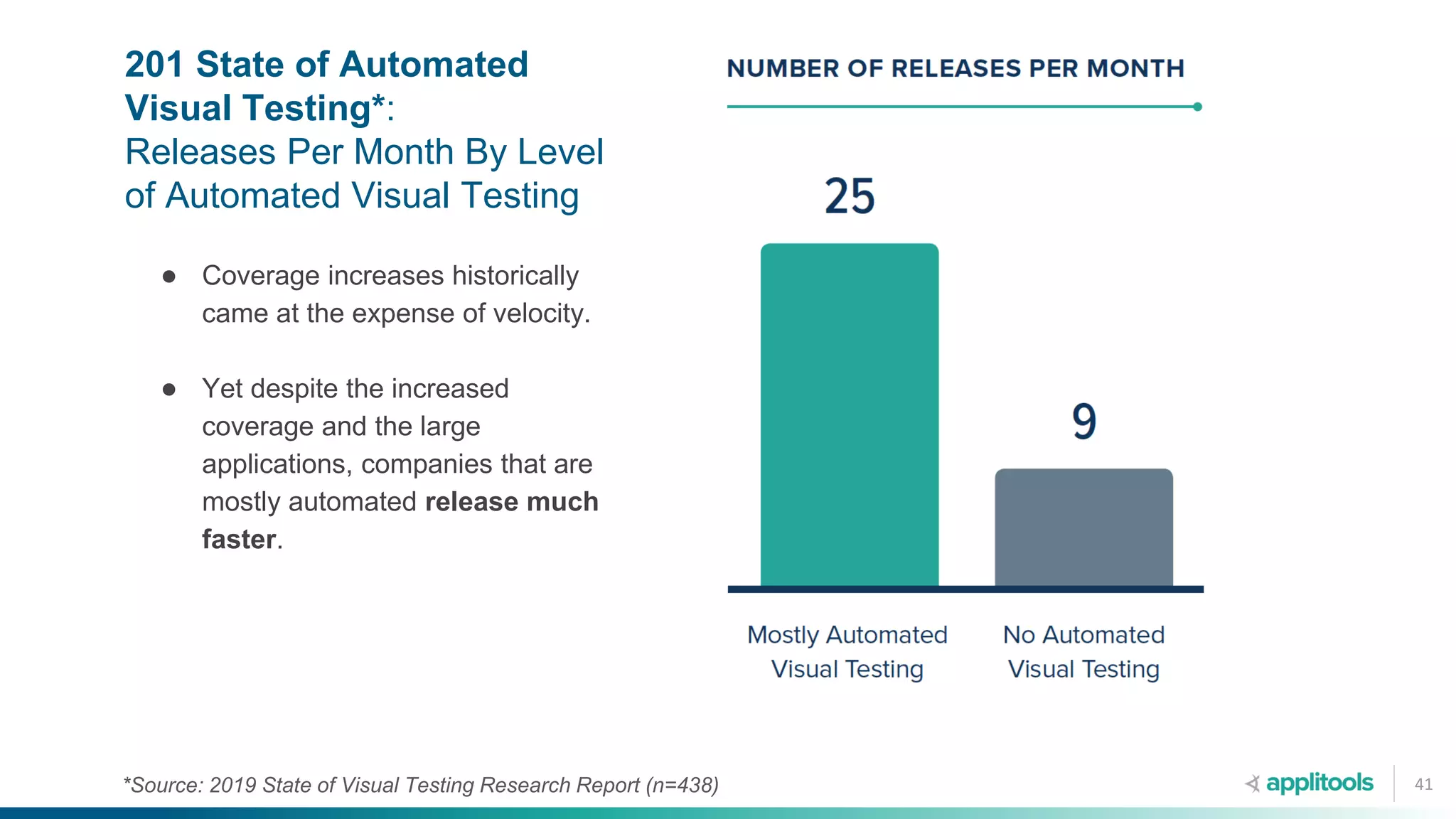

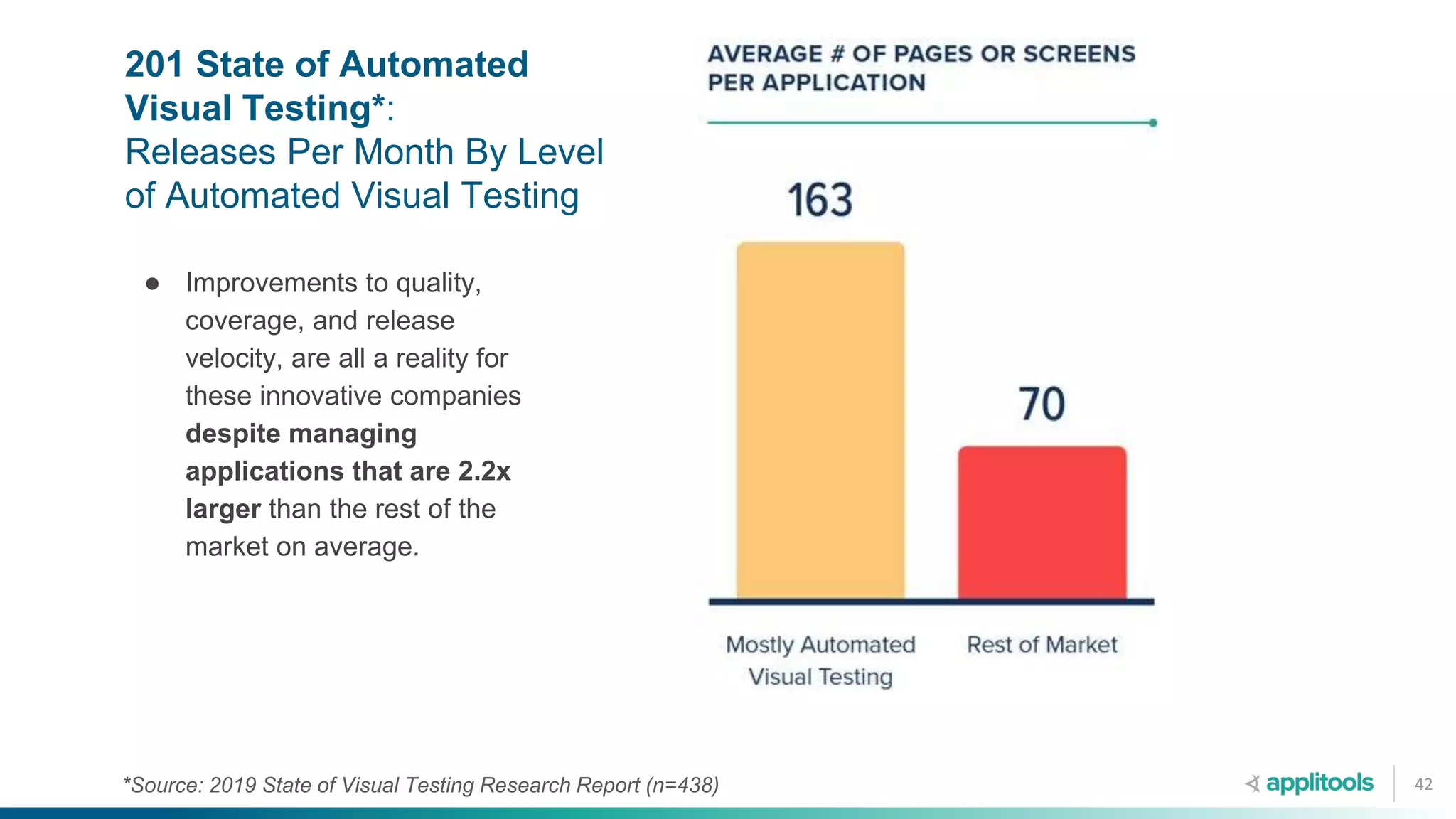

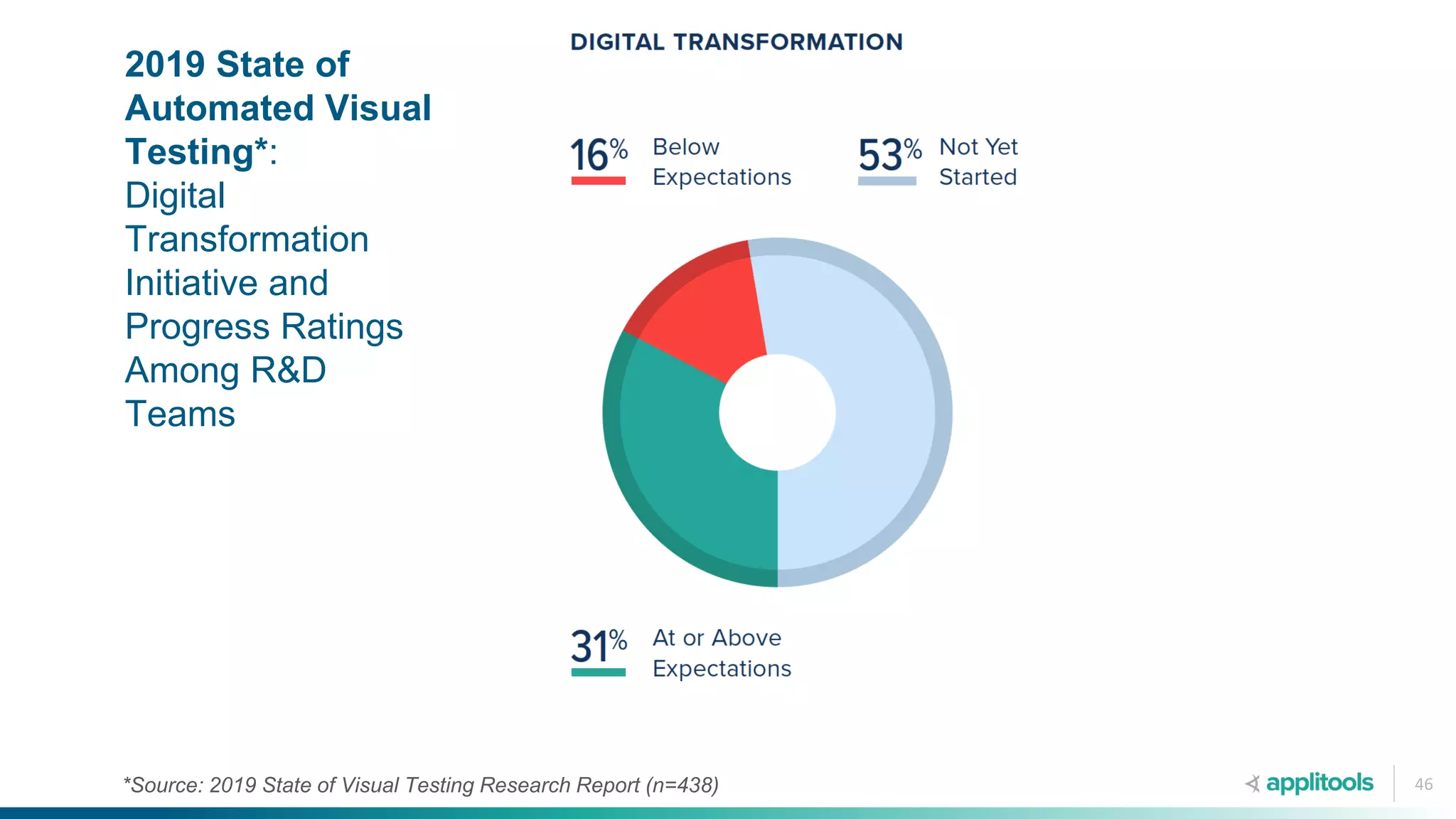

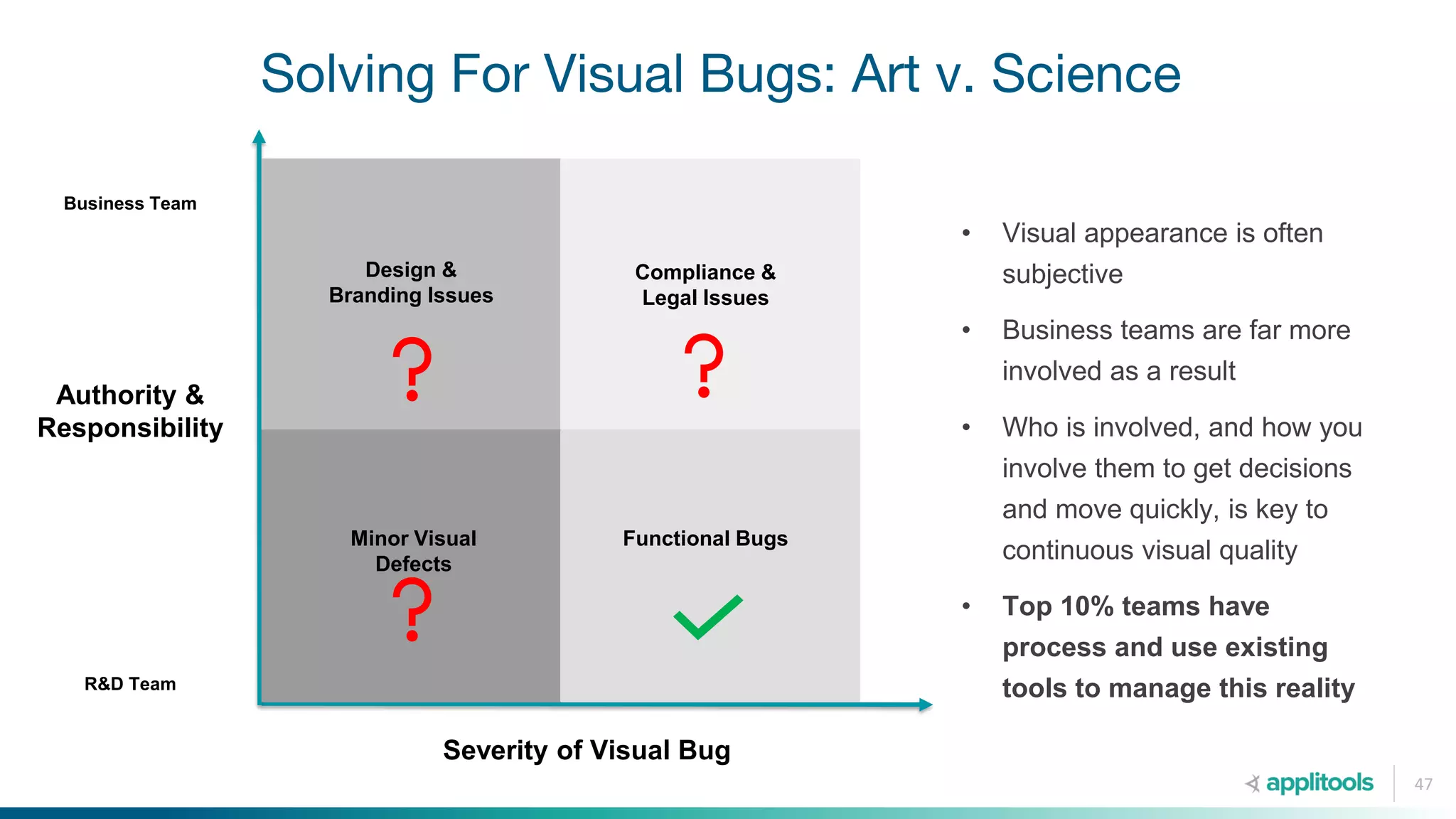

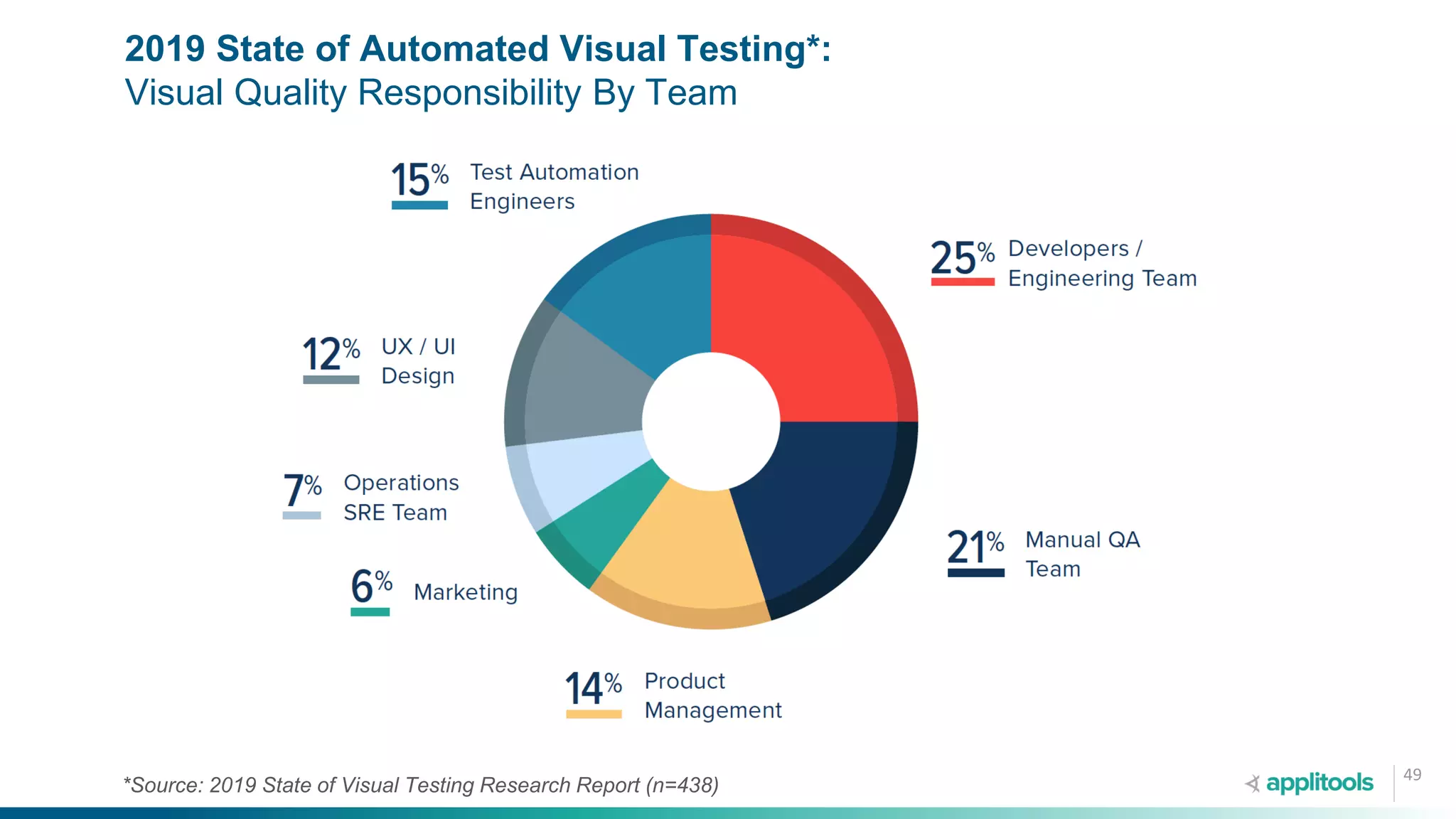

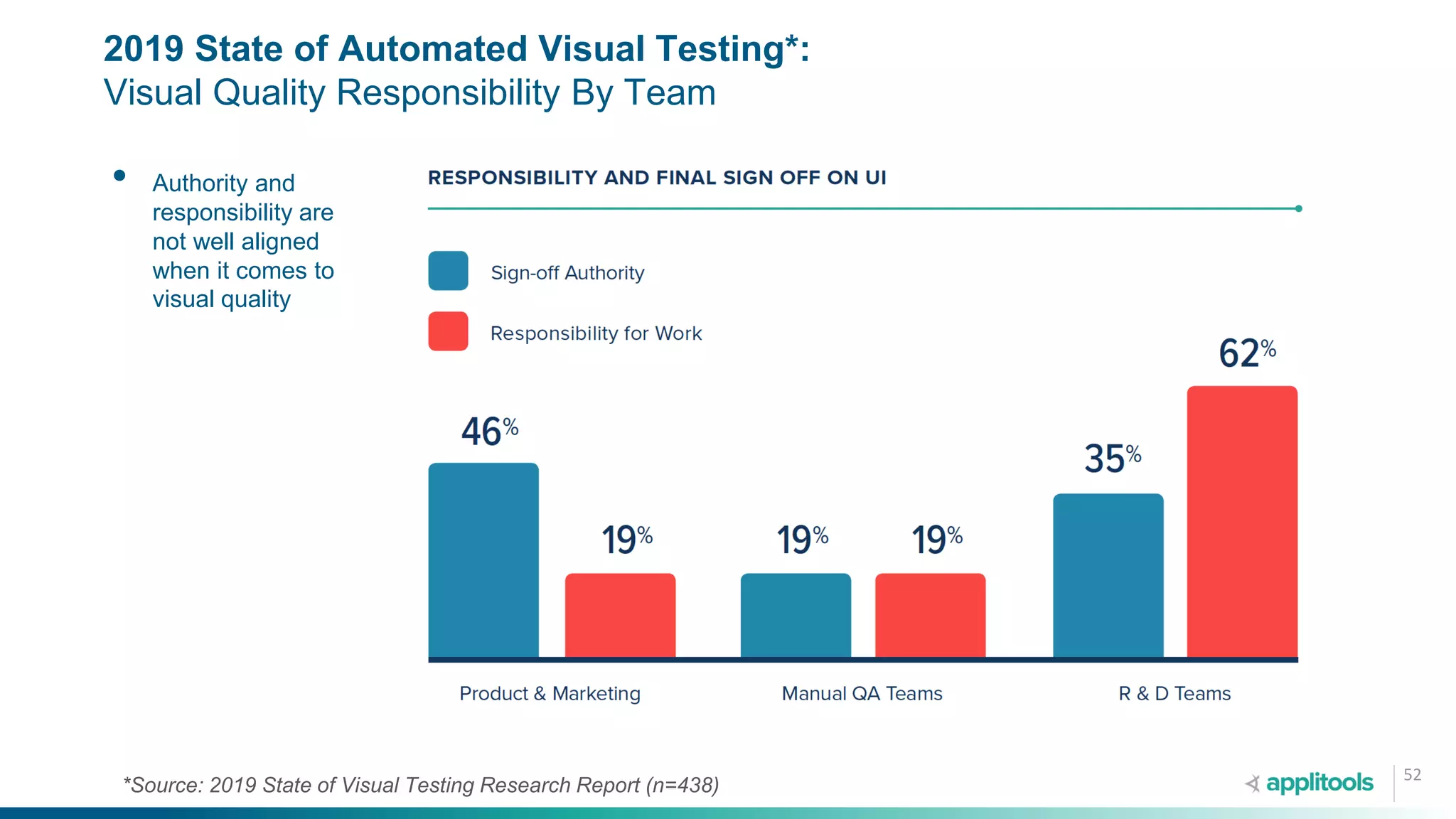

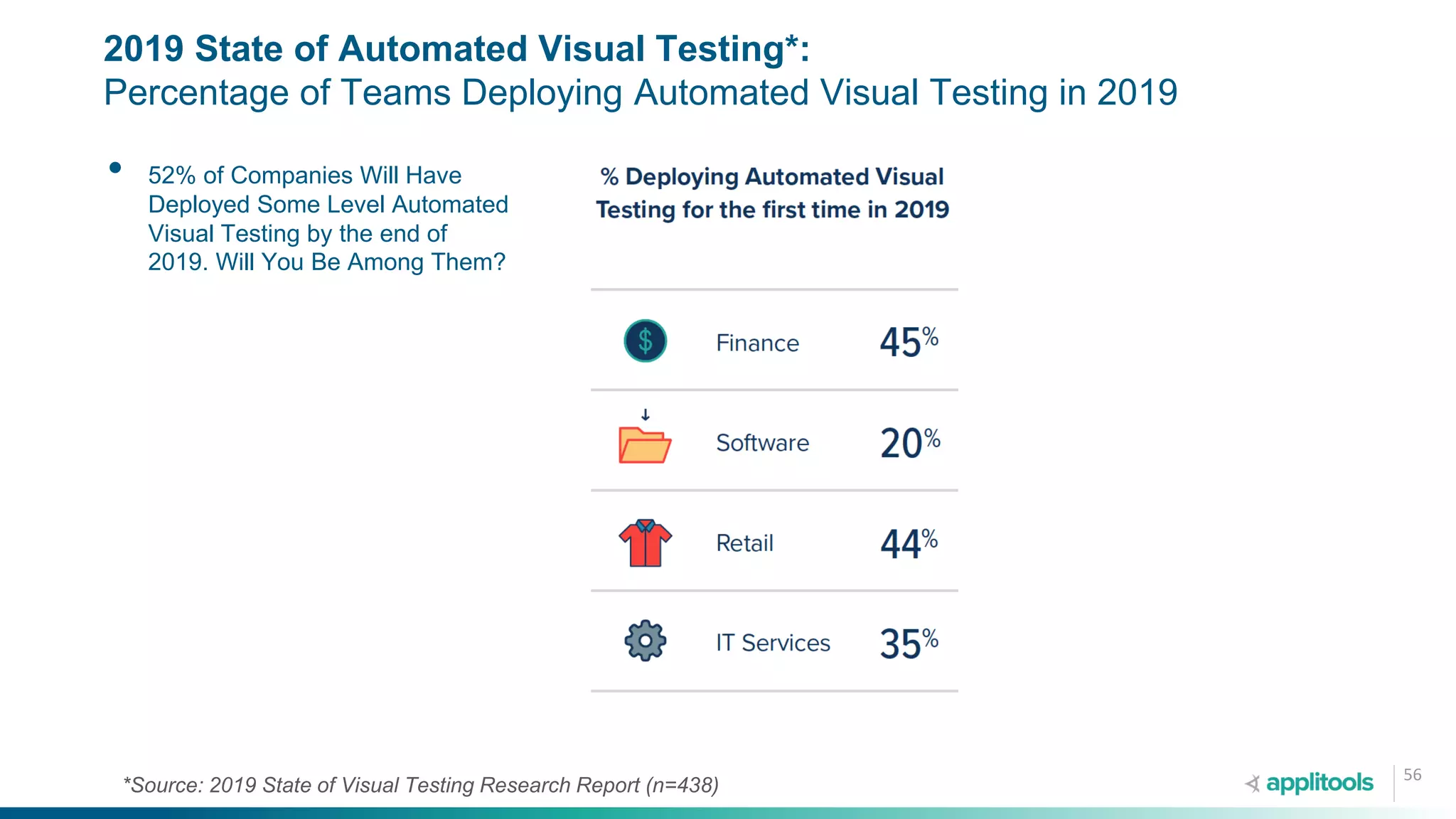

The document discusses strategies for achieving continuous visual quality in software testing, emphasizing the role of visual AI and collaboration across teams. It identifies four practices that the top 10% of testing teams follow: smarter testing methods, faster release cycles, cross-functional responsibility, and leadership of change within their organizations. The findings are supported by data from the 2019 State of Visual Testing research report.