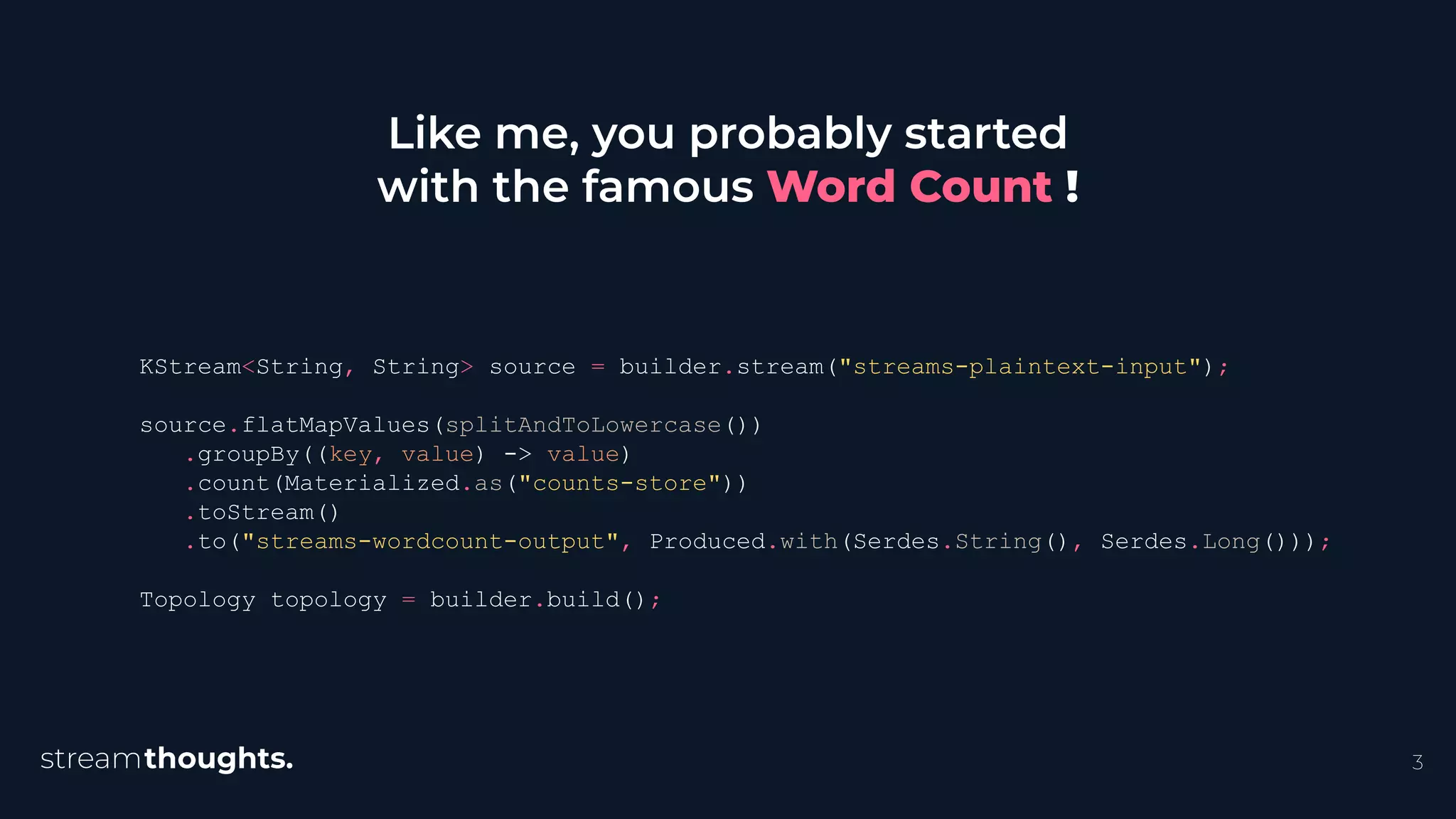

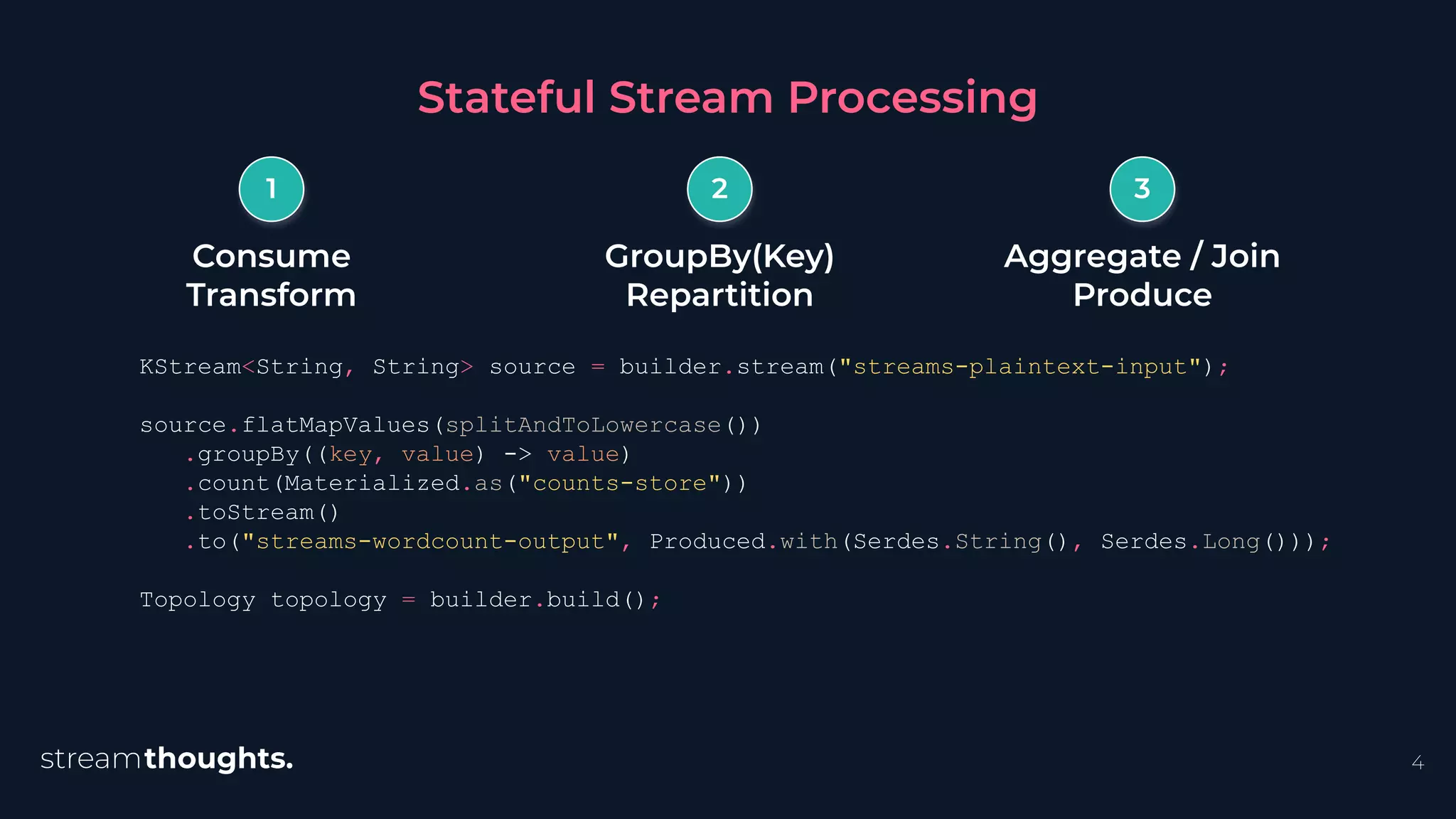

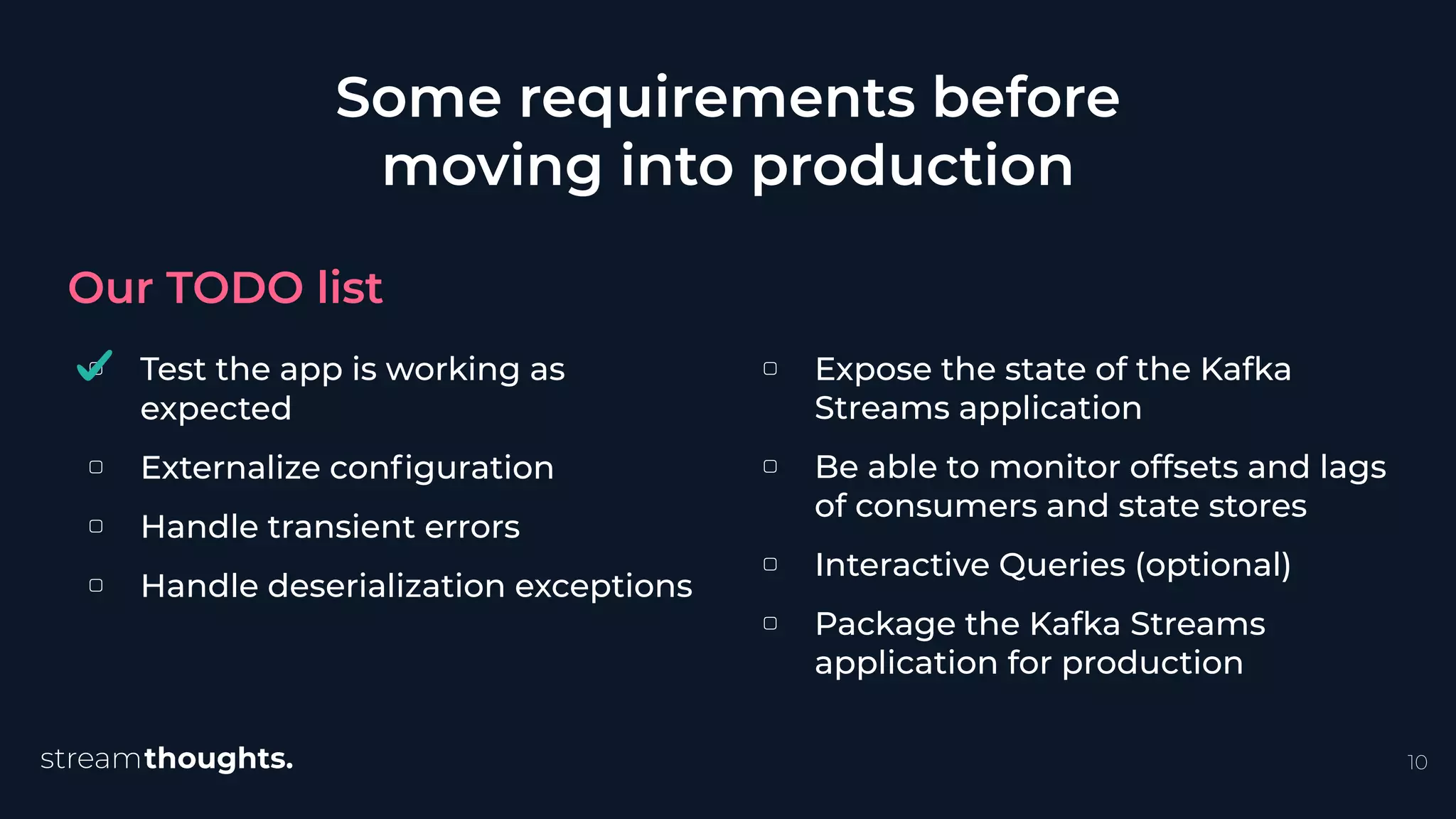

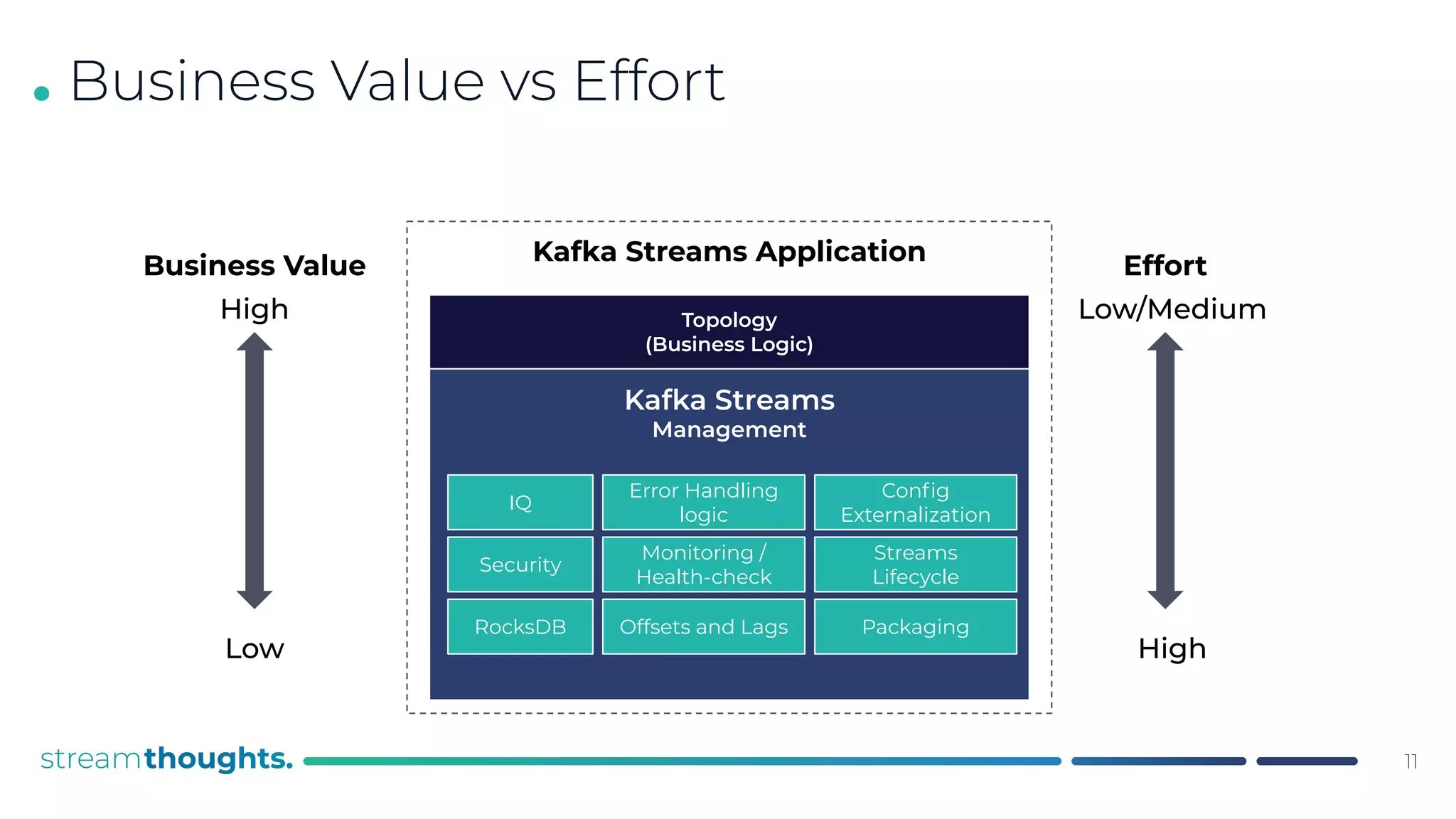

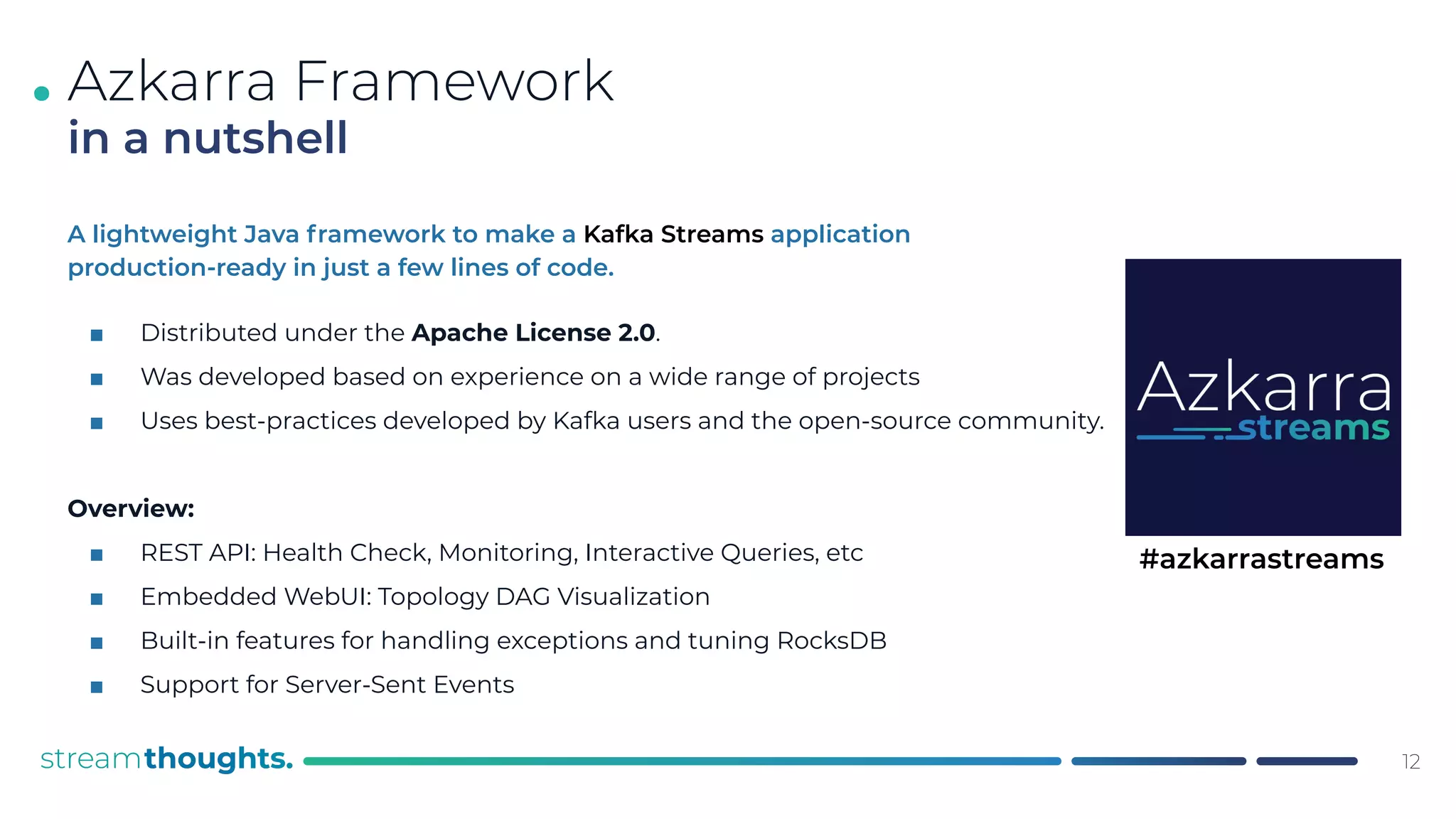

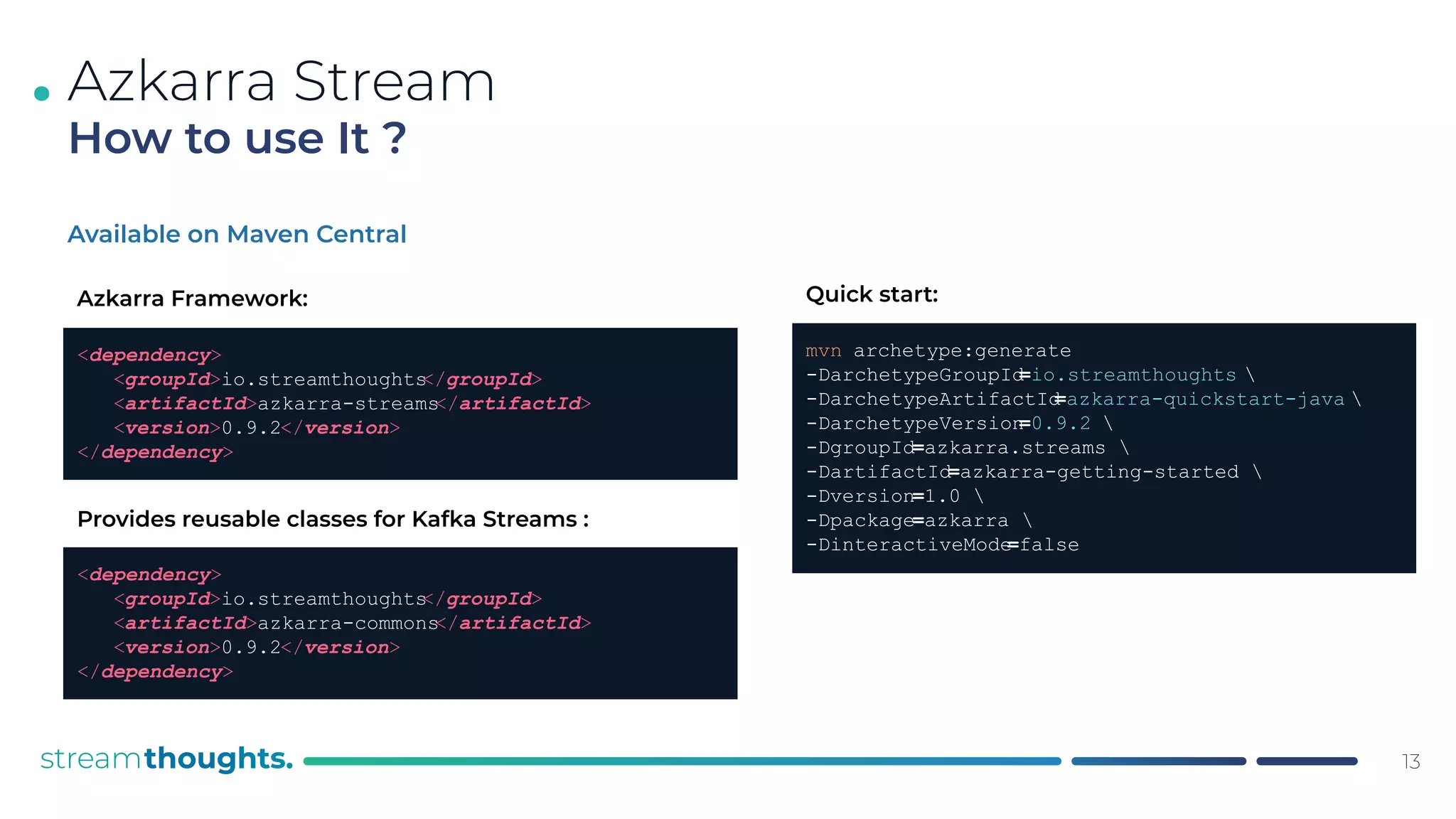

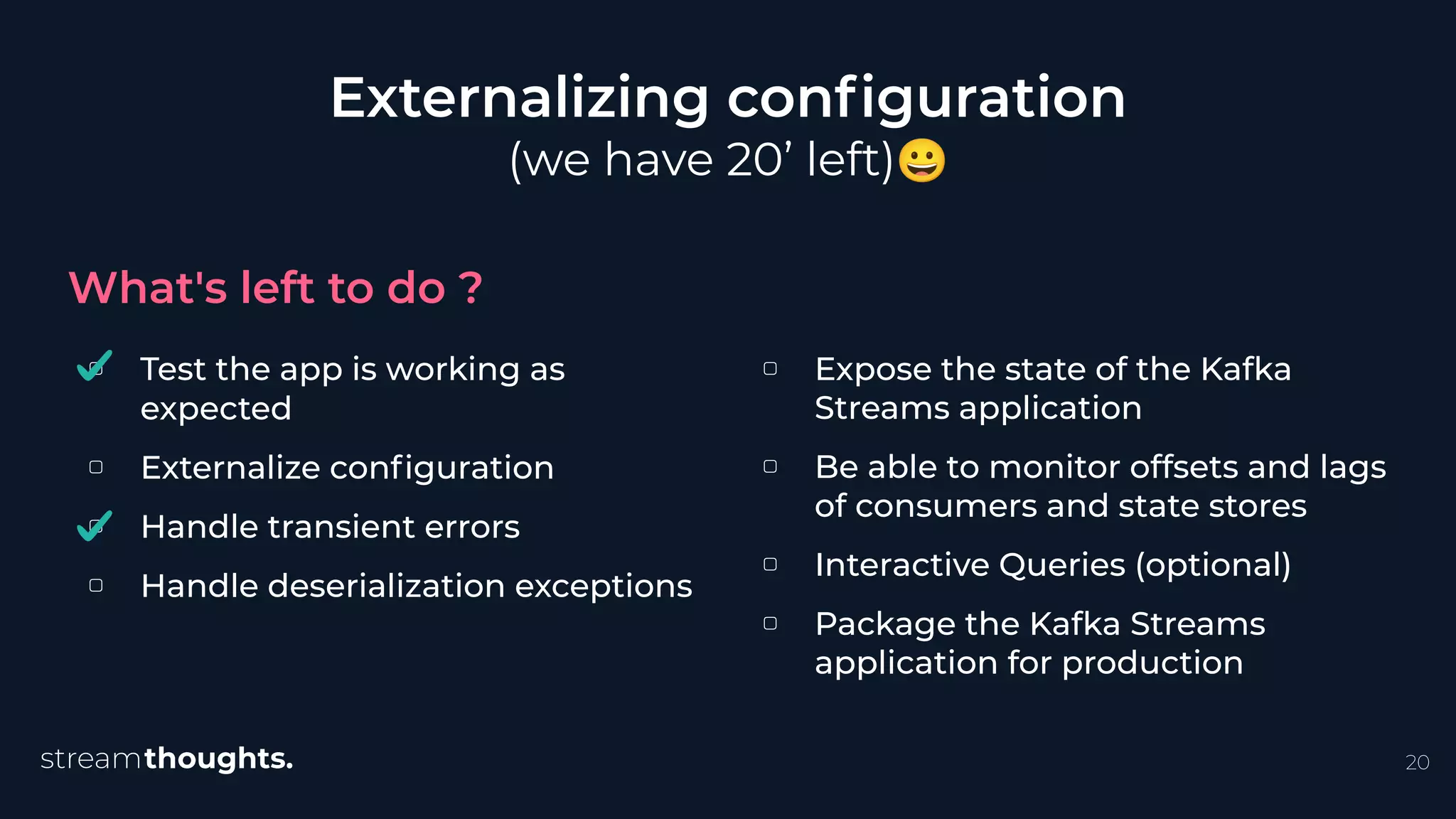

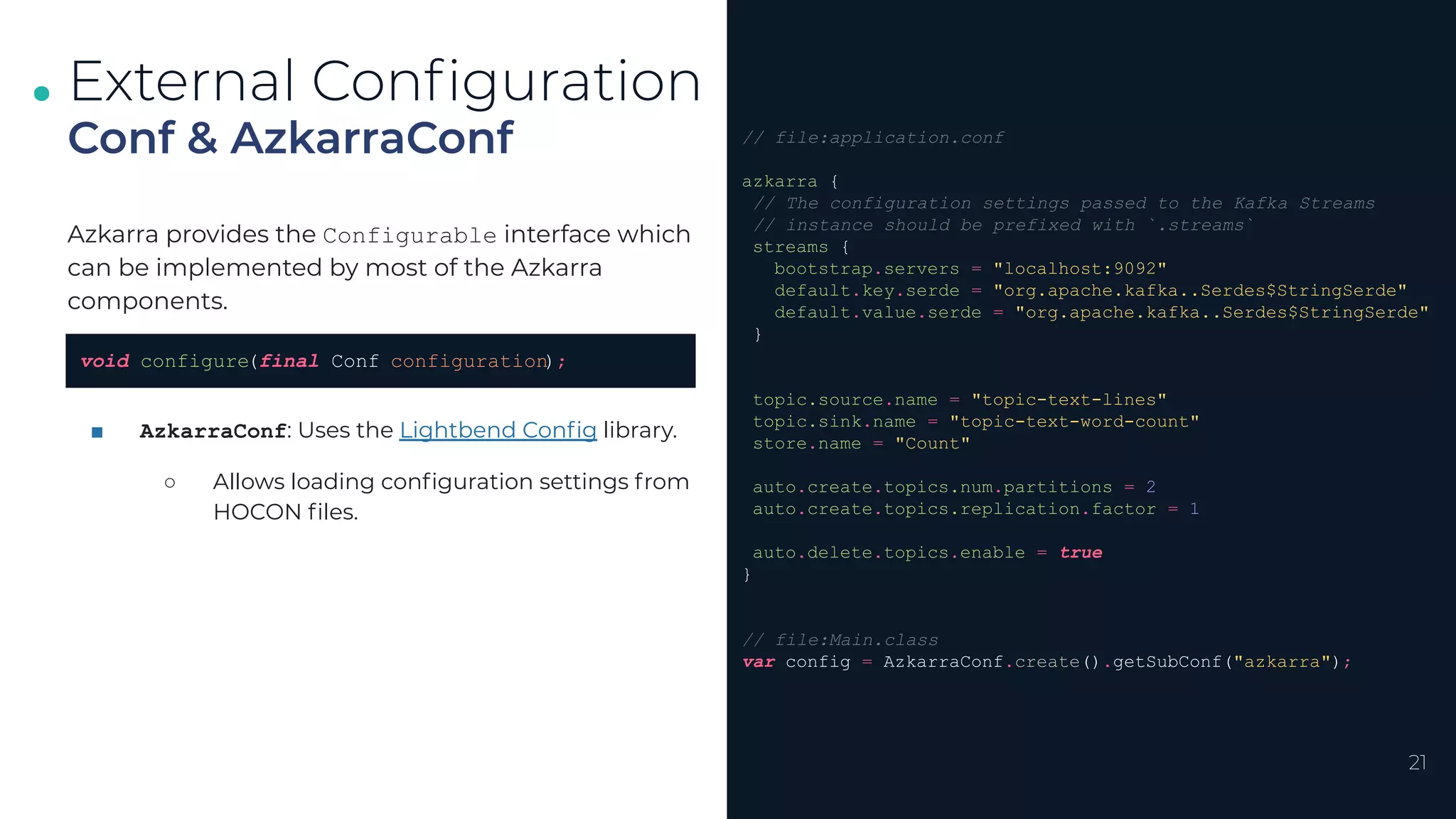

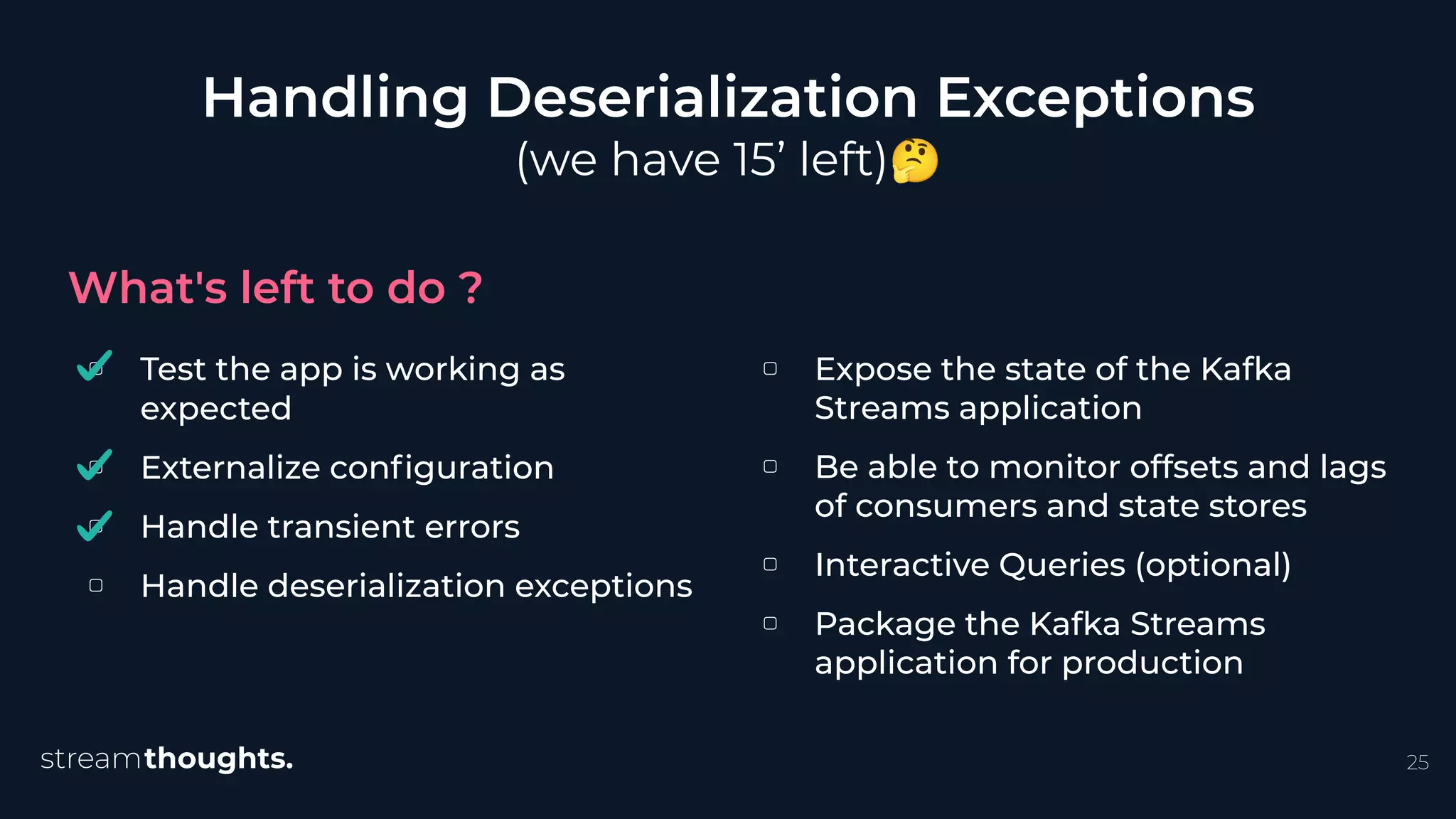

The document discusses building production-ready Kafka Streams applications quickly using the Azkarra framework, highlighting the importance of incorporating configurations, error handling, and monitoring. It provides a detailed step-by-step guide on implementing functionalities such as state management and deserialization handling, alongside the use of a lightweight Java framework that adheres to best practices. Additionally, it emphasizes the need for thorough testing and deployment strategies to ensure reliability in production environments.

![public class WordCount {

public static void main(String[] args) {

var builder = new StreamsBuilder

();

KStream<String, String> source = builder.stream("streams-plaintext-input"

);

source.flatMapValues(splitAndToLowercase

())

.groupBy((key, value) -> value)

.count(Materialized.as("counts-store"

))

.toStream()

.to("streams-wordcount-output"

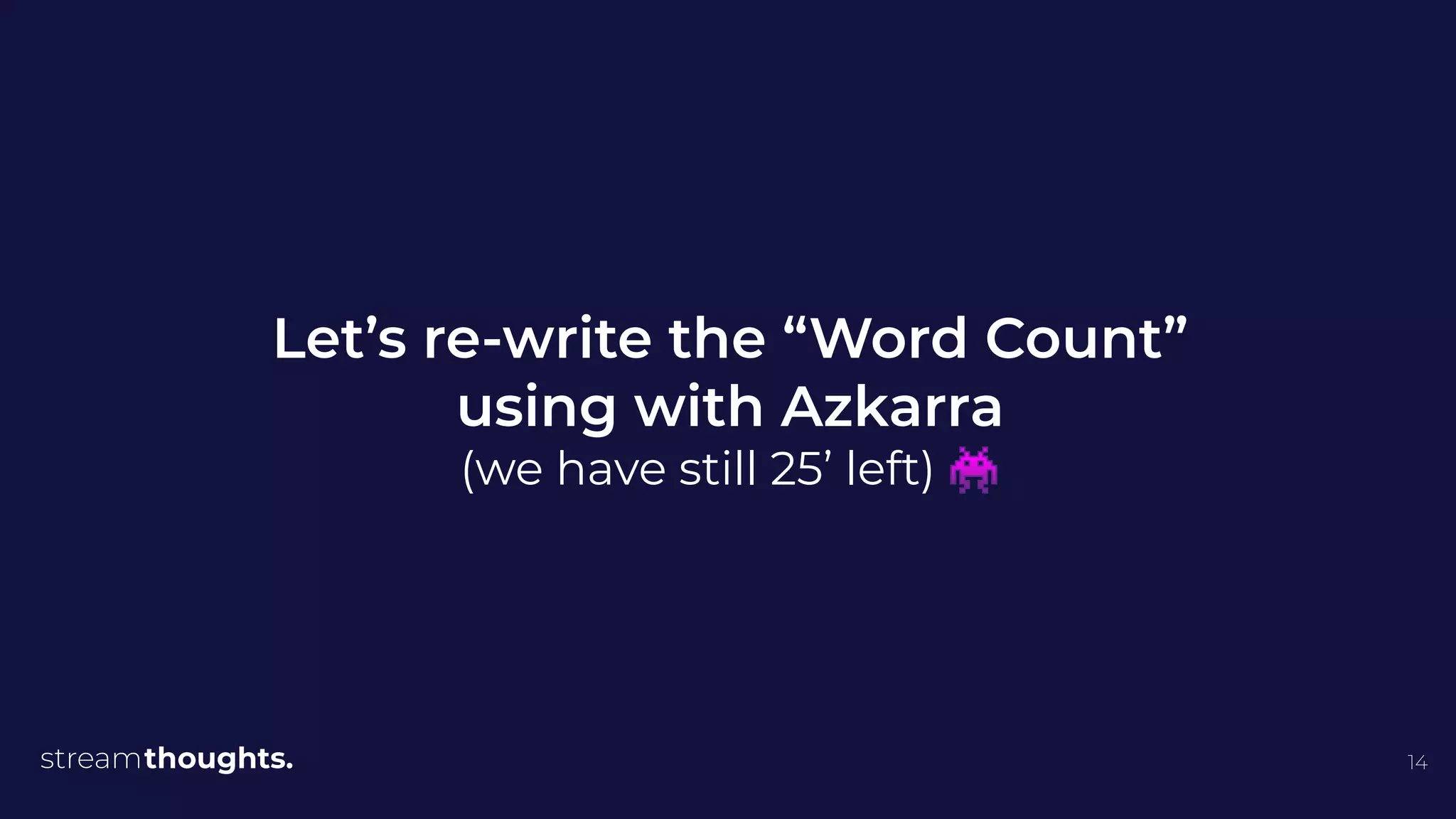

, Produced.with(Serdes.String(), Serdes.Long()));

var topology = builder.build();

Properties props = new Properties();

props.put(StreamsConfig.APPLICATION_ID_CONFIG

, "streams-wordcount"

);

props.put(StreamsConfig.BOOTSTRAP_SERVERS_CONFIG

, "localhost:9092"

);

props.put(StreamsConfig.DEFAULT_KEY_SERDE_CLASS_CONFIG

, Serdes.String().getClass());

props.put(StreamsConfig.DEFAULT_VALUE_SERDE_CLASS_CONFIG

, Serdes.String().getClass());

var streams = new KafkaStreams(topology, props);

Runtime.getRuntime().addShutdownHook

(new Thread(streams::close

));

}

}

Core Logic

Execution

5

Configuration](https://image.slidesharecdn.com/florianhussonnoisfinalfmp-210608164549/75/Writing-Blazing-Fast-and-Production-Ready-Kafka-Streams-apps-in-less-than-30-min-using-Azkarra-Florian-Hussonnois-StreamThoughts-5-2048.jpg)
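
The slide calls a `splitAndToLowercase()` helper without showing its body. As a rough illustration only, the sketch below reproduces the same split, lowercase, group, and count steps on an in-memory list with plain Java collections, so it runs without a broker. The class name `WordCountSketch`, the body of `splitAndToLowercase()`, and the choice to split on non-word characters are assumptions, not the speaker's code.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.TreeMap;
import java.util.function.Function;
import java.util.stream.Collectors;

final class WordCountSketch {

    // Hypothetical stand-in for the slide's splitAndToLowercase():
    // lower-cases a line and splits it on runs of non-word characters.
    static Function<String, List<String>> splitAndToLowercase() {
        return line -> Arrays.stream(line.toLowerCase(Locale.ROOT).split("\\W+"))
                .filter(word -> !word.isEmpty())
                .collect(Collectors.toList());
    }

    // In-memory analogue of flatMapValues -> groupBy(value) -> count.
    static Map<String, Long> countWords(List<String> lines) {
        return lines.stream()
                .flatMap(line -> splitAndToLowercase().apply(line).stream())
                .collect(Collectors.groupingBy(Function.identity(), TreeMap::new, Collectors.counting()));
    }

    public static void main(String[] args) {
        // prints {hello=2, kafka=1, streams=1}
        System.out.println(countWords(List.of("Hello Kafka", "hello streams")));
    }
}
```

In the Kafka Streams version the counts live in the `counts-store` state store and are updated record by record; here they are computed once over a fixed list, which is enough to check the splitting rule in isolation.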

![Let’s start KafkaStreams
Boom! Transient Errors
[word-count-1-0-ae1a9bf9-101d-4796-ad36-2e1130e83573-StreamThread-1] Received error code INCOMPLETE_SOURCE_TOPIC_METADATA
16:05:12.585 [word-count-1-0-ae1a9bf9-101d-4796-ad36-2e1130e83573-StreamThread-1] ERROR org.apache.kafka.clients.consumer.internals.ConsumerCoordinator - [Consumer clientId=word-count-1-0-ae1a9bf9-101d-4796-ad36-2e1130e83573-StreamThread-1-consumer, groupId=word-count-1-0] User provided listener org.apache.kafka.streams.processor.internals.StreamsRebalanceListener failed on invocation of onPartitionsAssigned for partitions []
org.apache.kafka.streams.errors.MissingSourceTopicException: One or more source topics were missing during rebalance
    at org.apache.kafka.streams.processor.internals.StreamsRebalanceListener.onPartitionsAssigned(StreamsRebalanceListener.java:57) ~[kafka-streams-2.7.0.jar:?]
    at org.apache.kafka.clients.consumer.internals.ConsumerCoordinator.invokePartitionsAssigned(ConsumerCoordinator.java:293) [kafka-clients-2.7.0.jar:?]
    at org.apache.kafka.clients.consumer.internals.ConsumerCoordinator.onJoinComplete(ConsumerCoordinator.java:430) [kafka-clients-2.7.0.jar:?]
    at org.apache.kafka.clients.consumer.internals.AbstractCoordinator.joinGroupIfNeeded(AbstractCoordinator.java:451) [kafka-clients-2.7.0.jar:?]
    at org.apache.kafka.clients.consumer.internals.AbstractCoordinator.ensureActiveGroup(AbstractCoordinator.java:367) [kafka-clients-2.7.0.jar:?]
    at org.apache.kafka.clients.consumer.internals.ConsumerCoordinator.poll(ConsumerCoordinator.java:508) [kafka-clients-2.7.0.jar:?]](https://image.slidesharecdn.com/florianhussonnoisfinalfmp-210608164549/75/Writing-Blazing-Fast-and-Production-Ready-Kafka-Streams-apps-in-less-than-30-min-using-Azkarra-Florian-Hussonnois-StreamThoughts-17-2048.jpg)
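
The stack trace shows the application failing because a source topic was missing at rebalance time, a condition that may clear once the topic exists. One generic way to cope with such transient failures is to retry the start step with a growing delay. The sketch below is plain Java and uses no Kafka or Azkarra API; `RetrySketch`, `retry`, and the backoff values are illustrative assumptions, and the slide does not say how Azkarra itself handles this case.

```java
import java.util.function.Supplier;

final class RetrySketch {

    // Runs the action up to maxAttempts times, doubling the pause after each failure.
    // Rethrows the last failure once all attempts are used up.
    static <T> T retry(Supplier<T> action, int maxAttempts, long initialBackoffMs) {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be >= 1");
        }
        long backoffMs = initialBackoffMs;
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return action.get();
            } catch (RuntimeException e) {
                last = e;
                if (attempt < maxAttempts) {
                    sleep(backoffMs);
                    backoffMs *= 2;
                }
            }
        }
        throw last;
    }

    private static void sleep(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            // Preserve the interrupt flag and stop retrying.
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while backing off", e);
        }
    }

    public static void main(String[] args) {
        int[] calls = {0};
        // Simulated start step: fails twice (as if the topic were missing), then succeeds.
        String state = retry(() -> {
            if (++calls[0] < 3) {
                throw new IllegalStateException("source topic missing");
            }
            return "RUNNING";
        }, 5, 10L);
        // prints RUNNING after 3 attempts
        System.out.println(state + " after " + calls[0] + " attempts");
    }
}
```

A bounded attempt count keeps a permanently missing topic from blocking startup forever; the caller still sees the original exception when the limit is reached.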

![Concepts: AzkarraContext
Diagram labels: AzkarraContext, StreamsExecutionEnvironment, TopologyProvider, Topology
AzkarraContext: container for dependency injection. Used to automatically configure streams execution environments.
public static void main(final String[] args) {
    // (1) Load the configuration (application.conf)
    var config = AzkarraConf.create().getSubConf("azkarra");
    // (2) Create the Azkarra Context
    var context = DefaultAzkarraContext.create(config);
    // (3) Register StreamLifecycleInterceptor as component
    context.registerComponent(ConsoleStreamsLifecycleInterceptor.class);
    // (4) Register the Topology to the default environment
    context.addTopology(WordCountTopology.class, Executed.as("word-count"));
    // (5) Start the context
    context
        .setRegisterShutdownHook(true)
        .start();
}](https://image.slidesharecdn.com/florianhussonnoisfinalfmp-210608164549/75/Writing-Blazing-Fast-and-Production-Ready-Kafka-Streams-apps-in-less-than-30-min-using-Azkarra-Florian-Hussonnois-StreamThoughts-22-2048.jpg)

![Concepts: AzkarraApplication
Diagram labels: AzkarraApplication, AzkarraContext, StreamsExecutionEnvironment, TopologyProvider, Topology
AzkarraApplication: used to bootstrap and configure an Azkarra application. Provides an embedded HTTP server. Provides component scanning.
public class WordCount {
    public static void main(final String[] args) {
        // (1) Load the configuration (application.conf)
        var config = AzkarraConf.create();
        // (2) Create the Azkarra Context
        var context = DefaultAzkarraContext.create();
        // (3) Register the Topology to the default environment
        context.addTopology(WordCountTopology.class, Executed.as("word-count"));
        // (4) Create Azkarra application
        new AzkarraApplication()
            .setContext(context)
            .setConfiguration(config)
            // (5) Enable and configure embedded HTTP server
            .setHttpServerEnable(true)
            .setHttpServerConf(ServerConfig.newBuilder()
                .setListener("localhost")
                .setPort(8080)
                .build()
            )
            // (6) Start Azkarra
            .run(args);
    }
}](https://image.slidesharecdn.com/florianhussonnoisfinalfmp-210608164549/75/Writing-Blazing-Fast-and-Production-Ready-Kafka-Streams-apps-in-less-than-30-min-using-Azkarra-Florian-Hussonnois-StreamThoughts-23-2048.jpg)

![Concepts: AzkarraApplication
Diagram labels: AzkarraApplication, AzkarraContext, StreamsExecutionEnvironment, TopologyProvider, Topology
AzkarraApplication: used to bootstrap and configure an Azkarra application. Provides an embedded HTTP server. Provides component scanning.
@AzkarraStreamsApplication
public class WordCount {
    public static void main(String[] args) {
        AzkarraApplication.run(WordCount.class, args);
    }

    @Component
    public static class WordCountTopology implements TopologyProvider, Configurable {
        private Conf conf;

        @Override
        public Topology topology() {
            var builder = new StreamsBuilder();
            // ...code omitted for clarity
            return builder.build();
        }

        @Override
        public void configure(Conf conf) {
            this.conf = conf;
        }

        @Override
        public String version() { return "1.0"; }
    }
}](https://image.slidesharecdn.com/florianhussonnoisfinalfmp-210608164549/75/Writing-Blazing-Fast-and-Production-Ready-Kafka-Streams-apps-in-less-than-30-min-using-Azkarra-Florian-Hussonnois-StreamThoughts-24-2048.jpg)

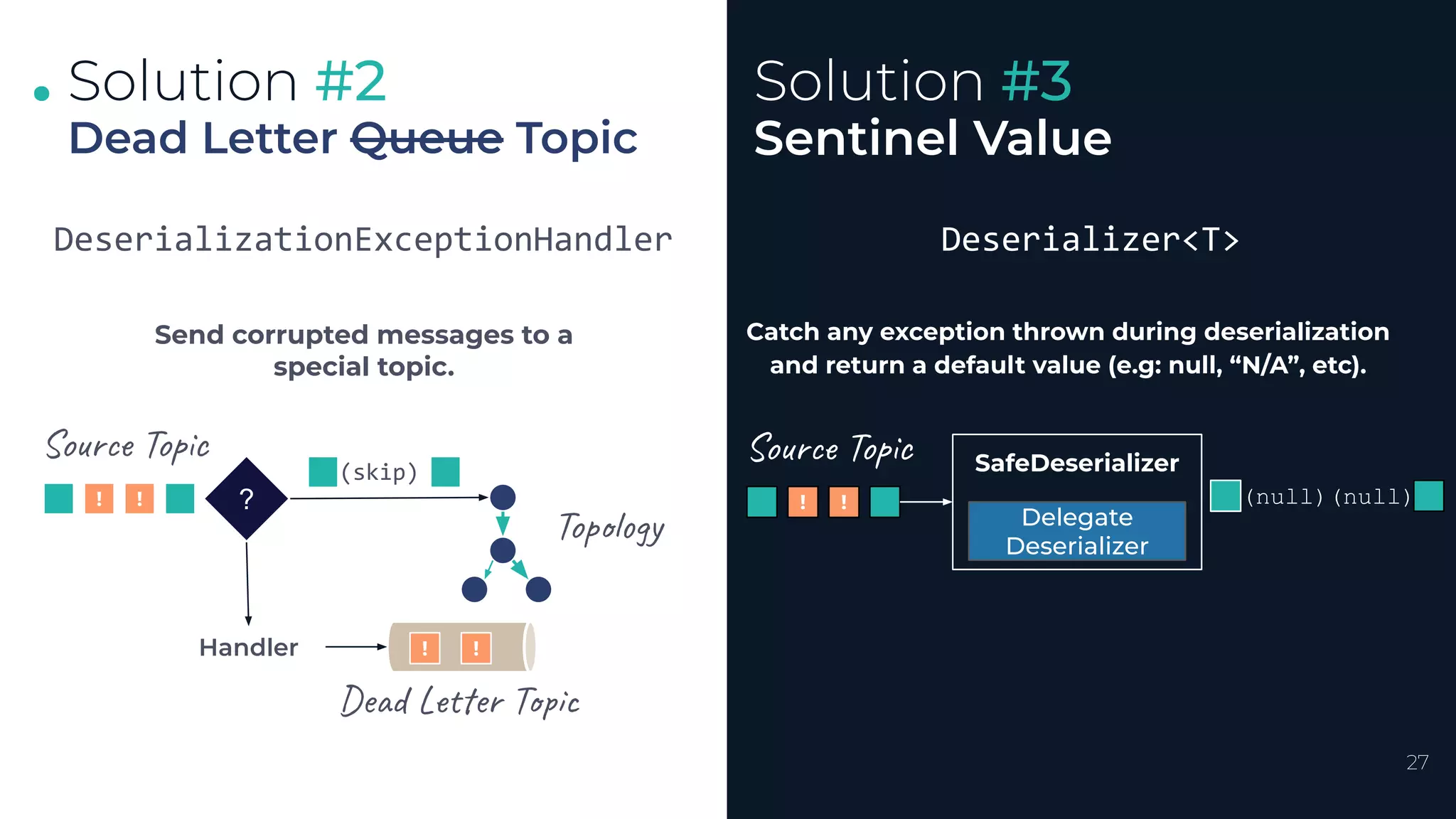

![Solution #2 and Solution #3: Using Azkarra
DeadLetterTopicExceptionHandler
■ By default, sends corrupted records to <Topic>-rejected.
■ Doesn’t change the schema/format of the corrupted message.
■ Uses Kafka headers to trace the exception cause and origin, e.g.:
○ __errors.exception.stacktrace
○ __errors.exception.message
○ __errors.exception.class.name
○ __errors.timestamp
○ __errors.application.id
○ __errors.record.[topic|partition|offset]
■ Can be configured to send records to a different Kafka cluster from the one used by Kafka Streams.
SafeSerdes
SafeSerdes.Long(-1L);
SafeSerdes.UUID(null);
SafeSerdes.serdeFrom(
    new JsonSerializer(),
    new JsonDeserializer(),
    NullNode.getInstance()
);](https://image.slidesharecdn.com/florianhussonnoisfinalfmp-210608164549/75/Writing-Blazing-Fast-and-Production-Ready-Kafka-Streams-apps-in-less-than-30-min-using-Azkarra-Florian-Hussonnois-StreamThoughts-28-2048.jpg)
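
Each `SafeSerdes` call above takes a fallback value (`-1L`, `null`, `NullNode.getInstance()`), which suggests that the wrapped deserializer returns that value instead of throwing on a corrupted record. The plain-Java sketch below illustrates that idea together with the `<Topic>-rejected` naming rule. It is not Azkarra's implementation: `SafeDeserializerSketch`, `safe`, `decodeLong`, and `rejectedTopic` are hypothetical names, and a `Function<byte[], T>` stands in for a Kafka deserializer.

```java
import java.nio.ByteBuffer;
import java.util.function.Function;

final class SafeDeserializerSketch {

    // Wraps a deserializer so that any runtime failure yields the fallback value.
    static <T> Function<byte[], T> safe(Function<byte[], T> delegate, T fallback) {
        return bytes -> {
            try {
                return delegate.apply(bytes);
            } catch (RuntimeException e) {
                return fallback;
            }
        };
    }

    // Strict 8-byte big-endian long decoder; rejects any other input length.
    static Long decodeLong(byte[] bytes) {
        if (bytes == null || bytes.length != Long.BYTES) {
            throw new IllegalArgumentException("expected 8 bytes");
        }
        return ByteBuffer.wrap(bytes).getLong();
    }

    // Default dead-letter topic name as described on the slide: <Topic>-rejected.
    static String rejectedTopic(String sourceTopic) {
        return sourceTopic + "-rejected";
    }

    public static void main(String[] args) {
        Function<byte[], Long> safeLong = safe(SafeDeserializerSketch::decodeLong, -1L);
        byte[] valid = ByteBuffer.allocate(Long.BYTES).putLong(42L).array();
        System.out.println(safeLong.apply(valid));          // prints 42
        System.out.println(safeLong.apply(new byte[] {1})); // prints -1
        System.out.println(rejectedTopic("streams-plaintext-input")); // prints streams-plaintext-input-rejected
    }
}
```

With a sentinel such as `-1L`, downstream processors can filter out bad records explicitly instead of the stream thread dying on the first malformed payload.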

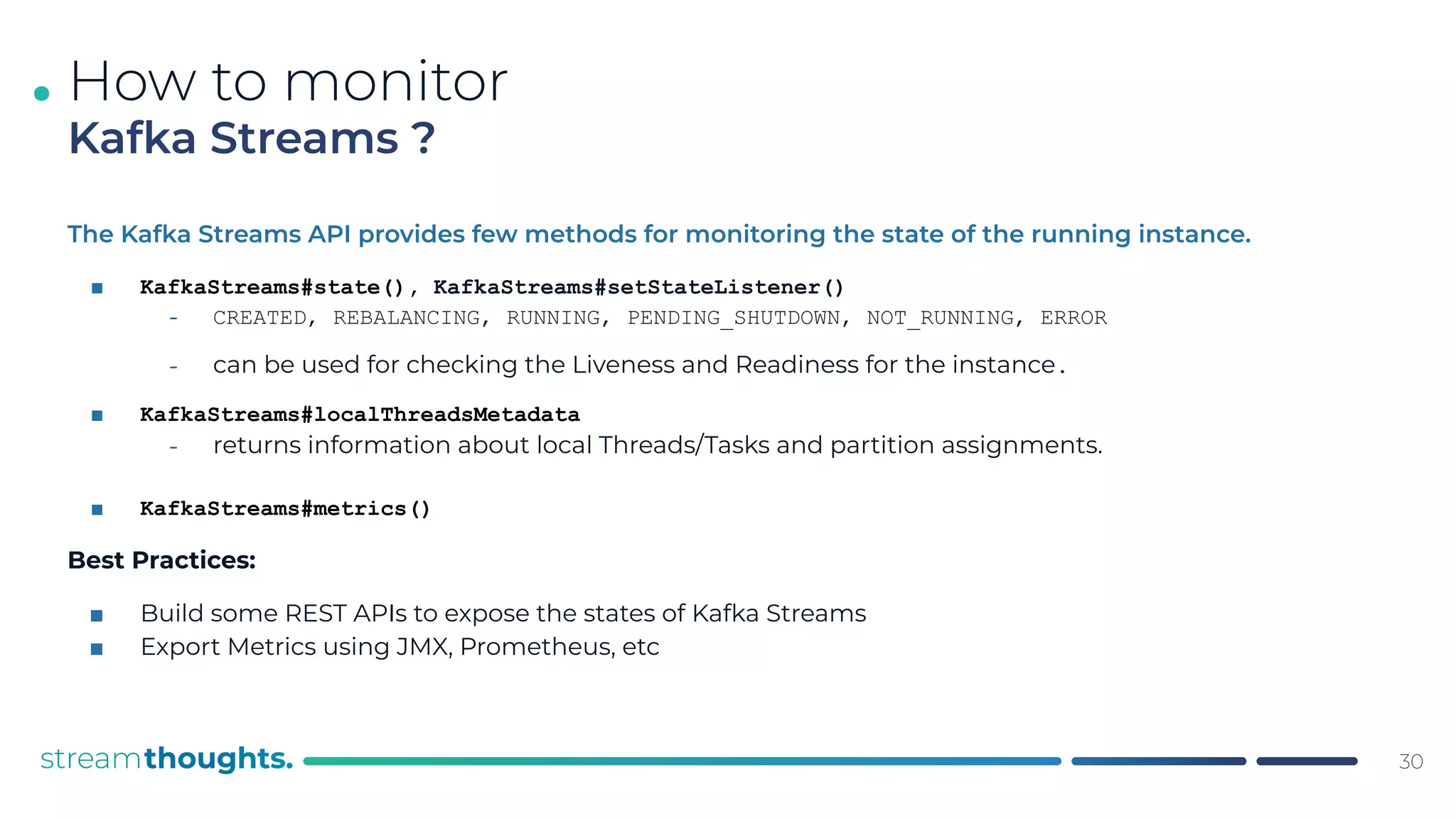

![Putting it all together: Exporting Kafka Streams States Anywhere
Azkarra can be configured to periodically report the internal state of a KafkaStreams instance.
■ Use StreamLifecycleInterceptor:
⎼ MonitoringStreamsInterceptor
■ Accepts a pluggable reporter class
⎼ Default: KafkaMonitoringReporter
⎼ Publishes events that adhere to the CloudEvents specification.
Cloud Events
{
  "id": "appid:word-count;appsrv:localhost:8080;ts:1620691200000",
  "source": "azkarra/ks/localhost:8080",
  "specversion": "1.0",
  "type": "io.streamthoughts.azkarra.streams.stateupdateevent",
  "time": "2021-05-11T00:00:00.000+0000",
  "datacontenttype": "application/json",
  "ioazkarramonitorintervalms": 10000,
  "ioazkarrastreamsappid": "word-count",
  "ioazkarraversion": "0.9.2",
  "ioazkarrastreamsappserver": "localhost:8080",
  "data": {
    "state": "RUNNING",
    "threads": [
      {
        "name": "word-count-...-93e9a84057ad-StreamThread-1",
        "state": "RUNNING",
        "active_tasks": [],
        "standby_tasks": [],
        "clients": {}
      }
    ],
    "offsets": {
      "group": "",
      "consumers": []
    },
    "stores": {
      "partitionRestoreInfos": [],
      "partitionLagInfos": []
    },
    "state_changed_time": 1620691200000
  }
}](https://image.slidesharecdn.com/florianhussonnoisfinalfmp-210608164549/75/Writing-Blazing-Fast-and-Production-Ready-Kafka-Streams-apps-in-less-than-30-min-using-Azkarra-Florian-Hussonnois-StreamThoughts-33-2048.jpg)
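
The sample event's `id` appears to combine the application id, the application server, and an epoch-millisecond timestamp, and its `time` field is the same instant rendered as text. The sketch below rebuilds both strings with the JDK only. The layout is inferred from this single sample rather than from Azkarra's source, and `StateEventFieldsSketch` and its method names are hypothetical.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

final class StateEventFieldsSketch {

    // Pattern that yields e.g. 2021-05-11T00:00:00.000+0000 for a UTC offset.
    private static final DateTimeFormatter TIME_FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd'T'HH:mm:ss.SSSZ");

    // Assumed id layout, taken from the sample: appid:<id>;appsrv:<host:port>;ts:<epoch millis>
    static String eventId(String applicationId, String applicationServer, long epochMillis) {
        return "appid:" + applicationId + ";appsrv:" + applicationServer + ";ts:" + epochMillis;
    }

    // Renders the epoch-millisecond timestamp in UTC using the format seen in the sample.
    static String eventTime(long epochMillis) {
        return Instant.ofEpochMilli(epochMillis).atOffset(ZoneOffset.UTC).format(TIME_FORMAT);
    }

    public static void main(String[] args) {
        long ts = 1620691200000L; // value of state_changed_time in the sample
        // prints appid:word-count;appsrv:localhost:8080;ts:1620691200000
        System.out.println(eventId("word-count", "localhost:8080", ts));
        // prints 2021-05-11T00:00:00.000+0000
        System.out.println(eventTime(ts));
    }
}
```

Because the id embeds the application server and the timestamp, a consumer of these events could deduplicate on `id` alone when the same state update is delivered more than once.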