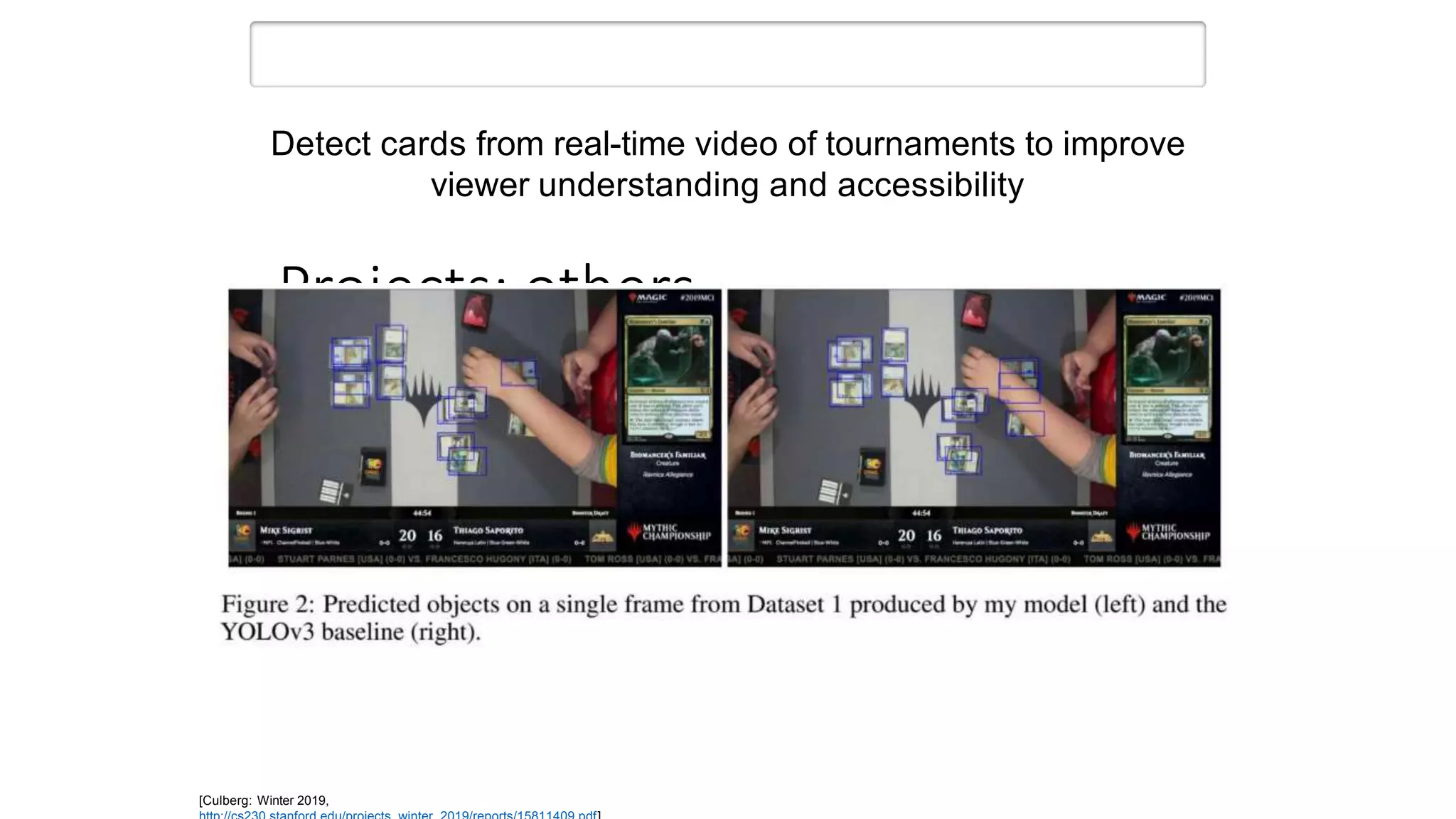

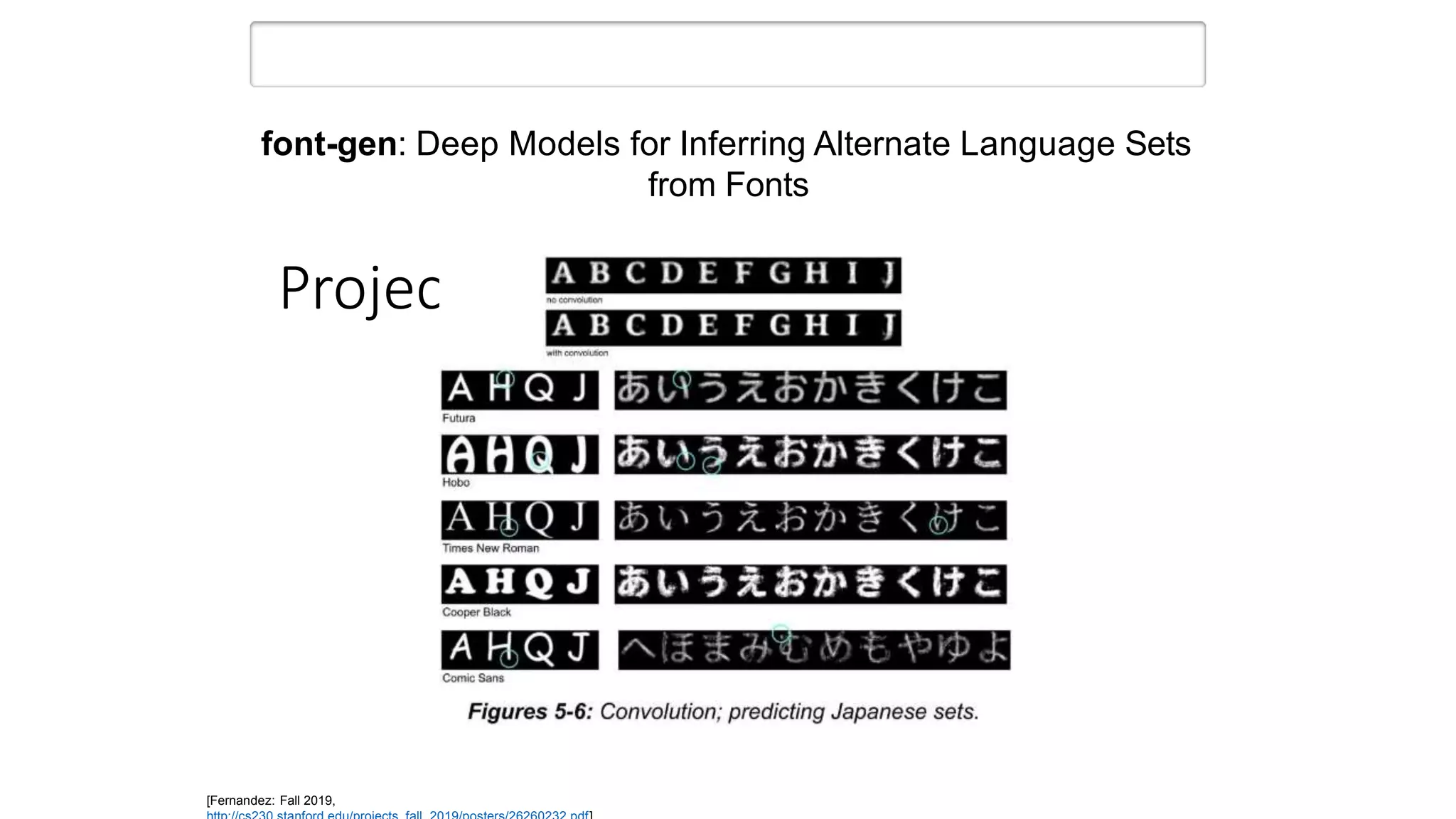

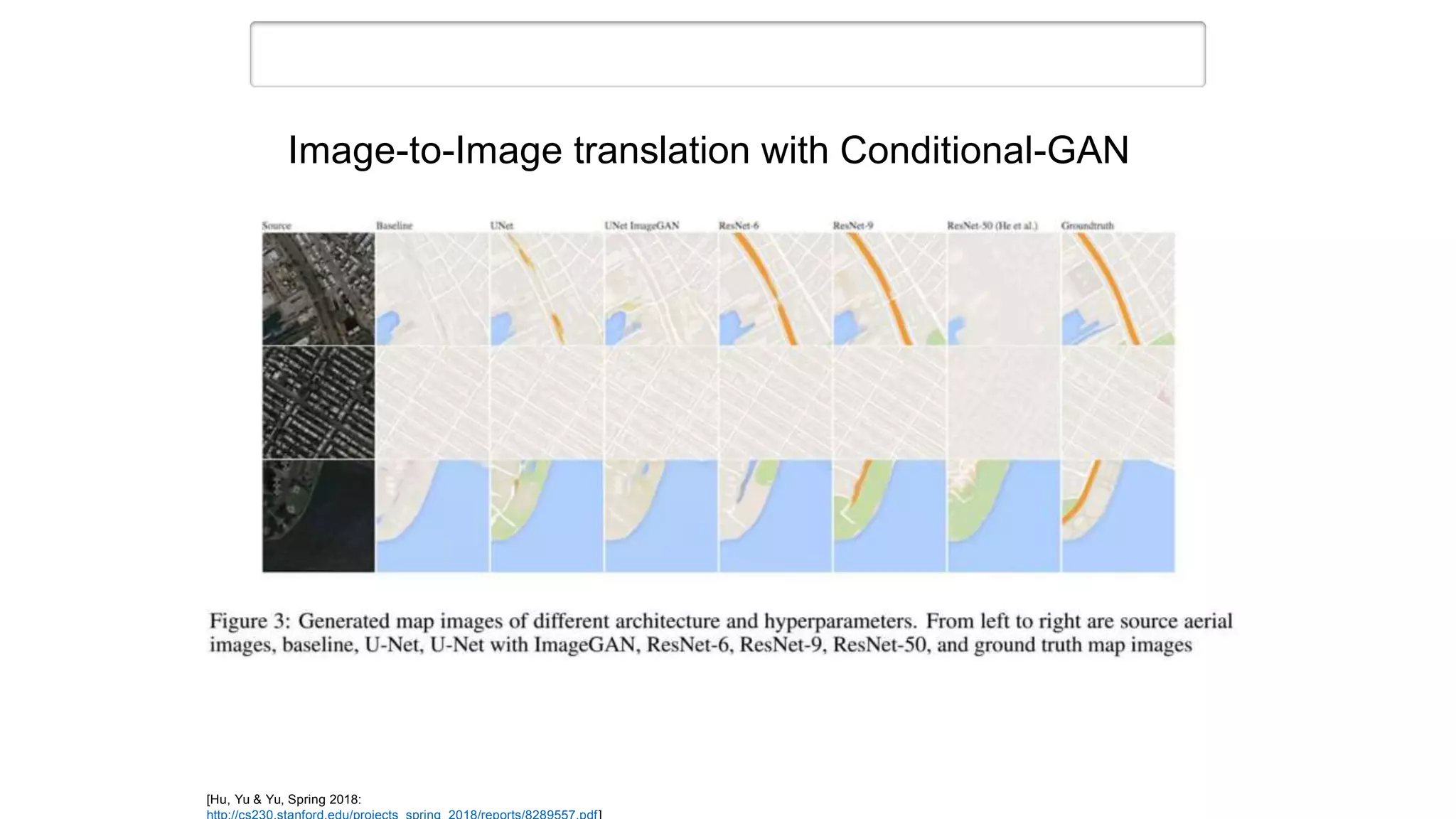

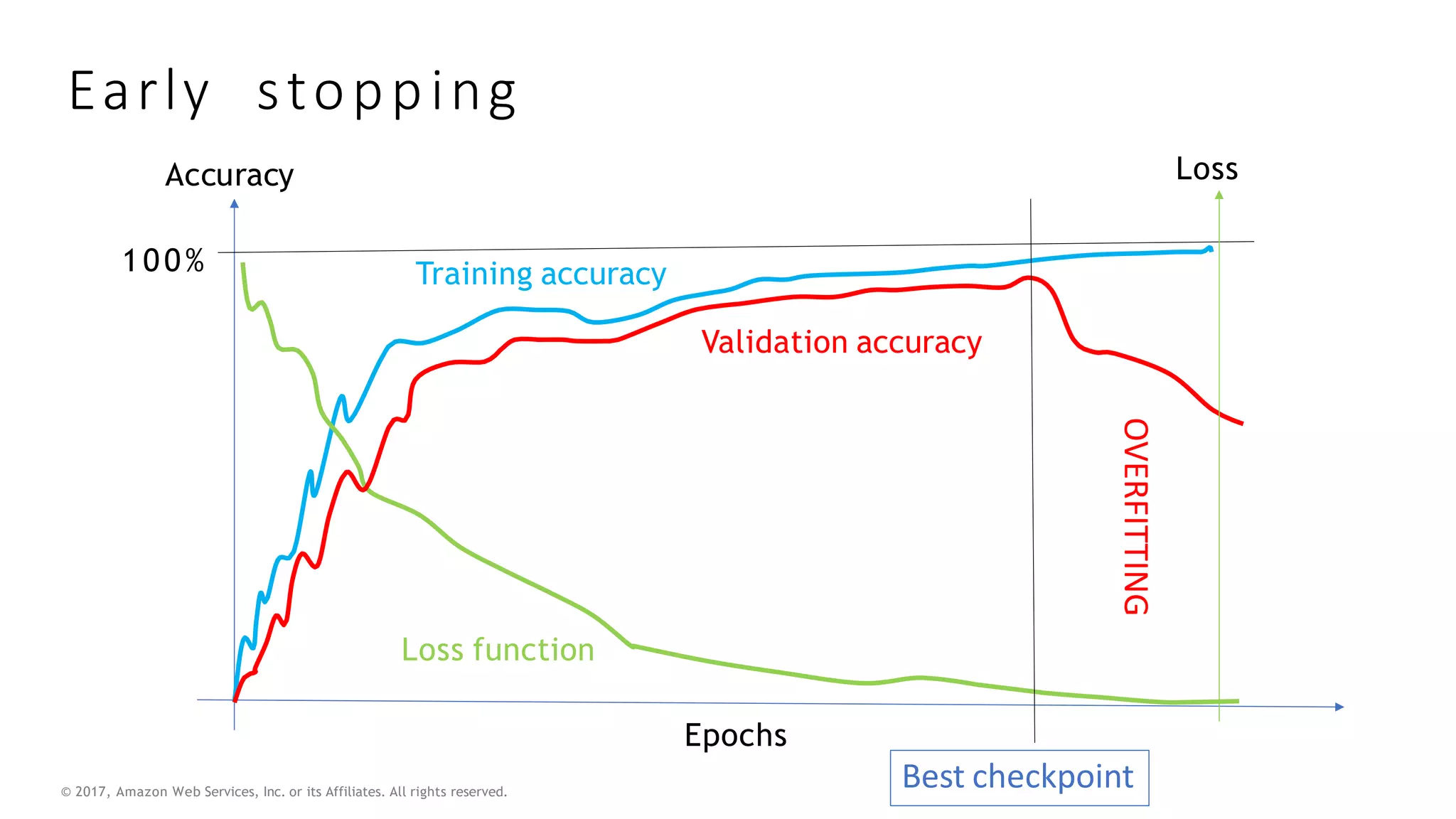

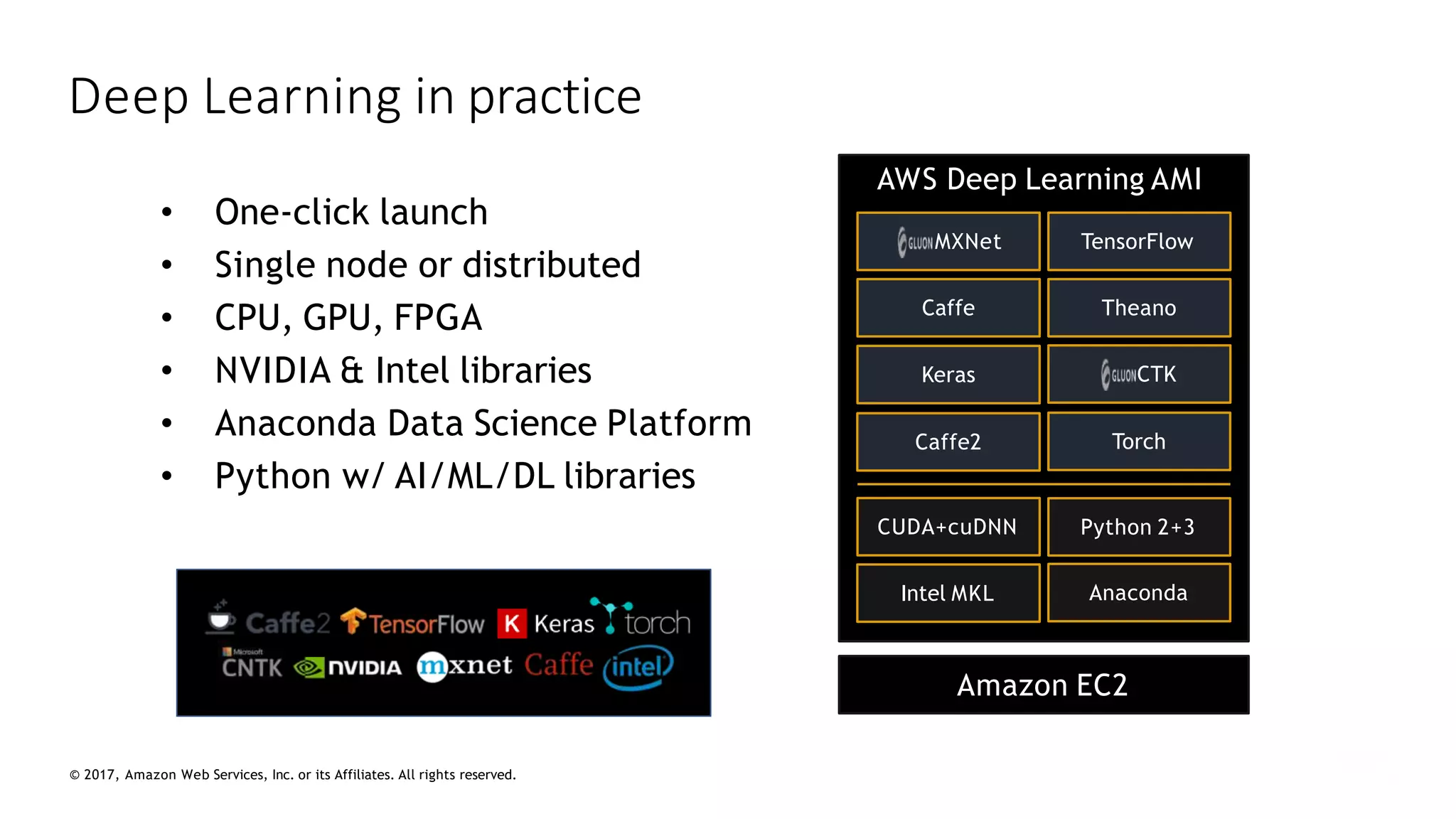

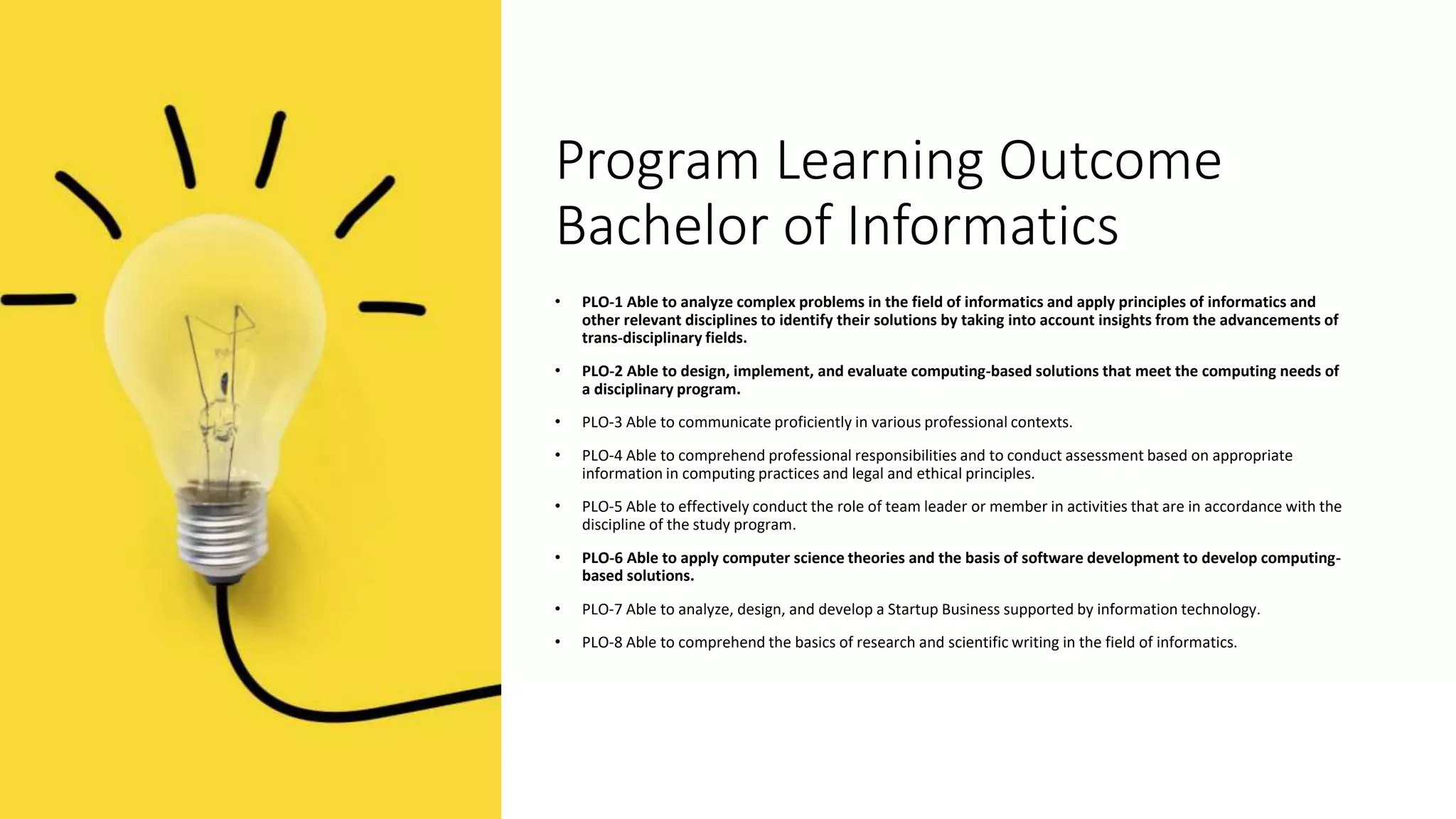

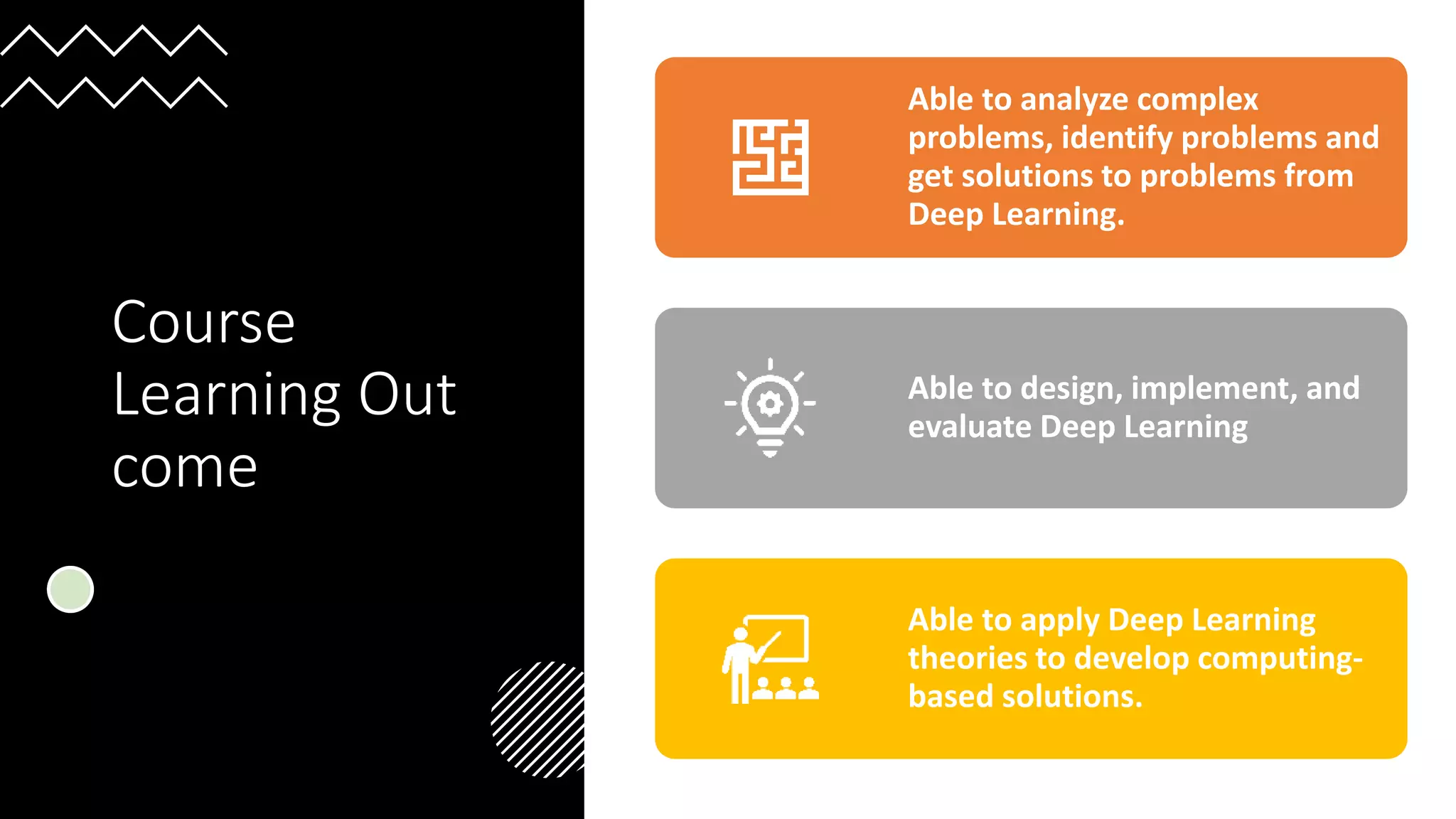

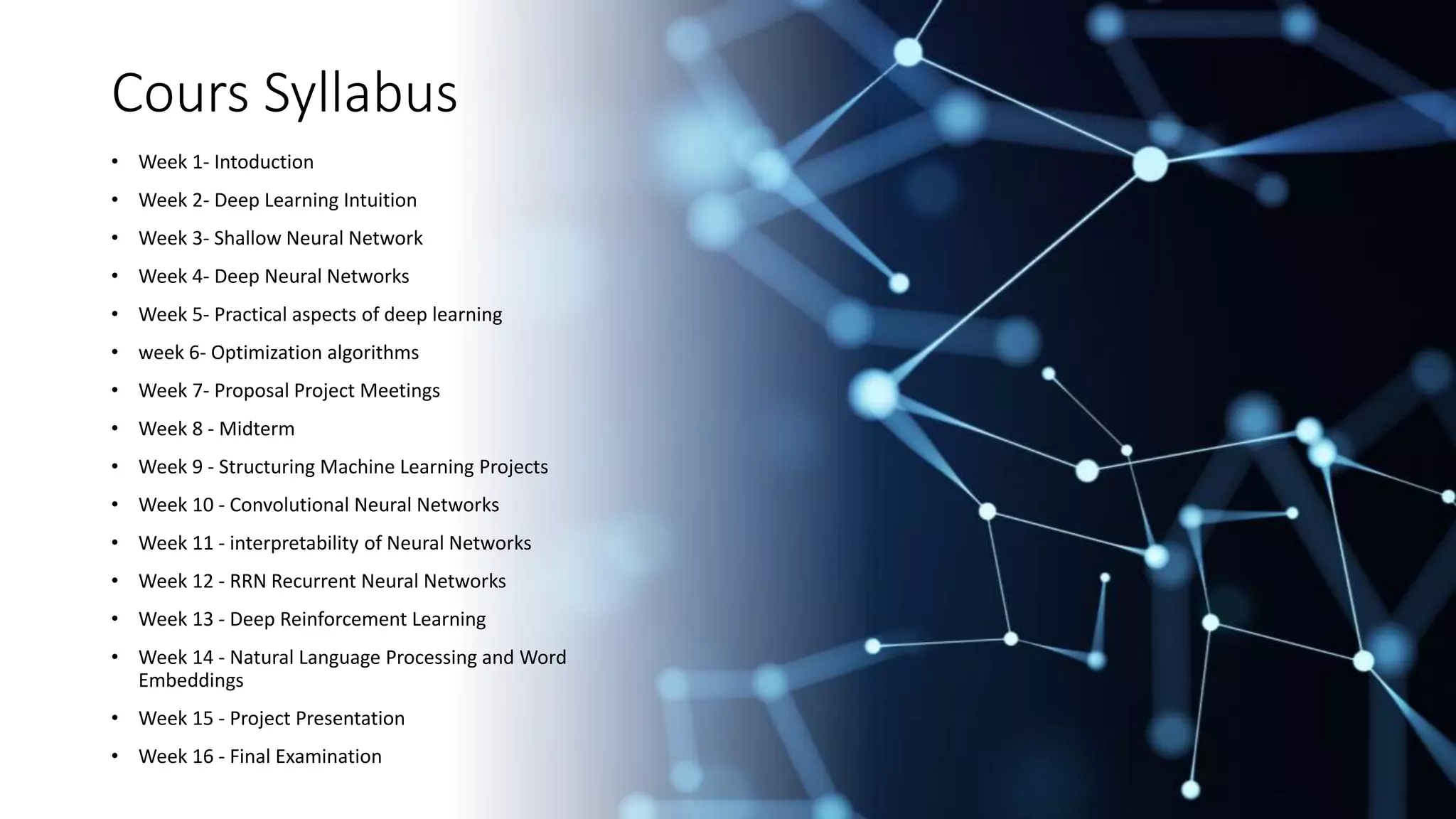

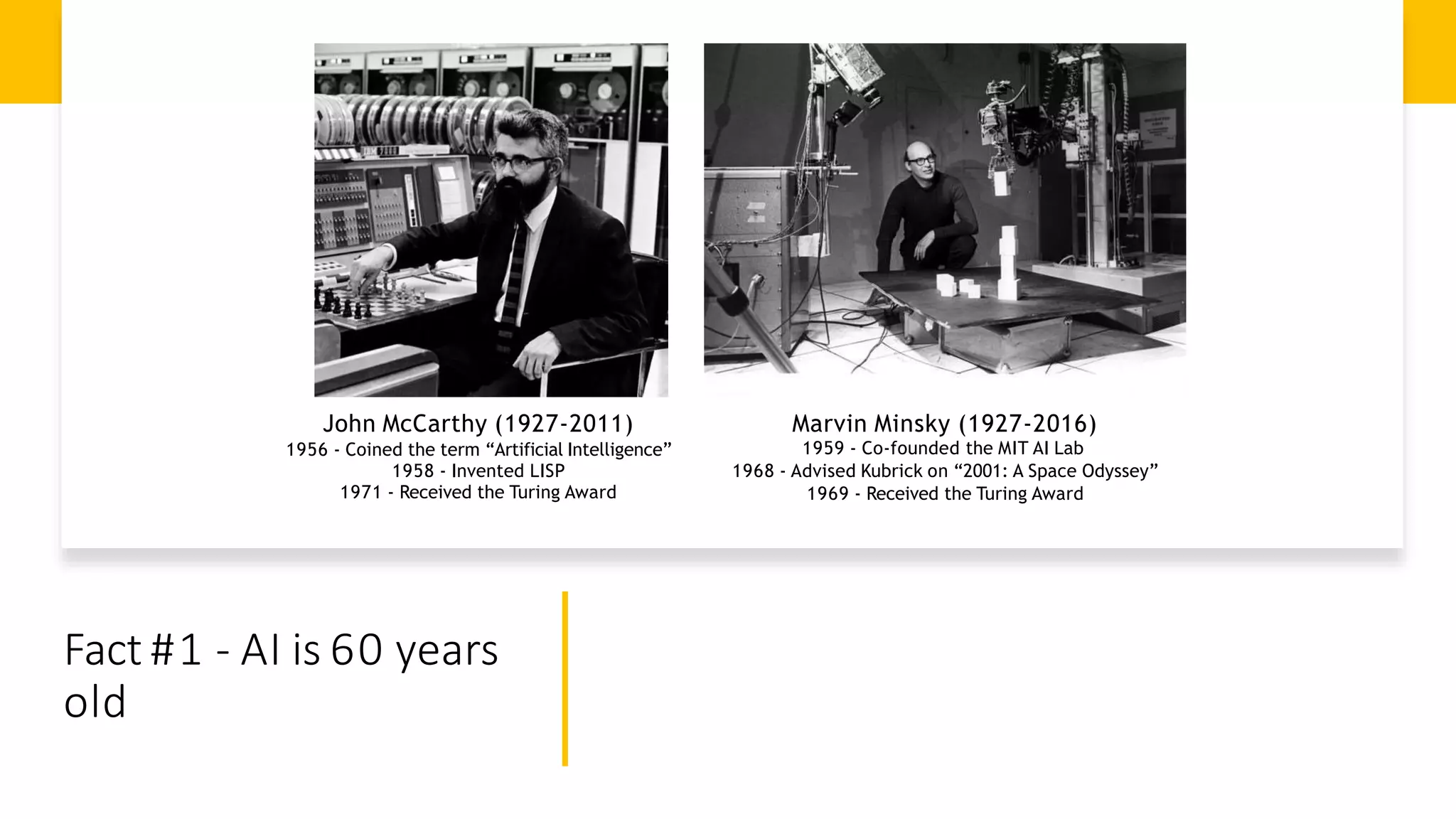

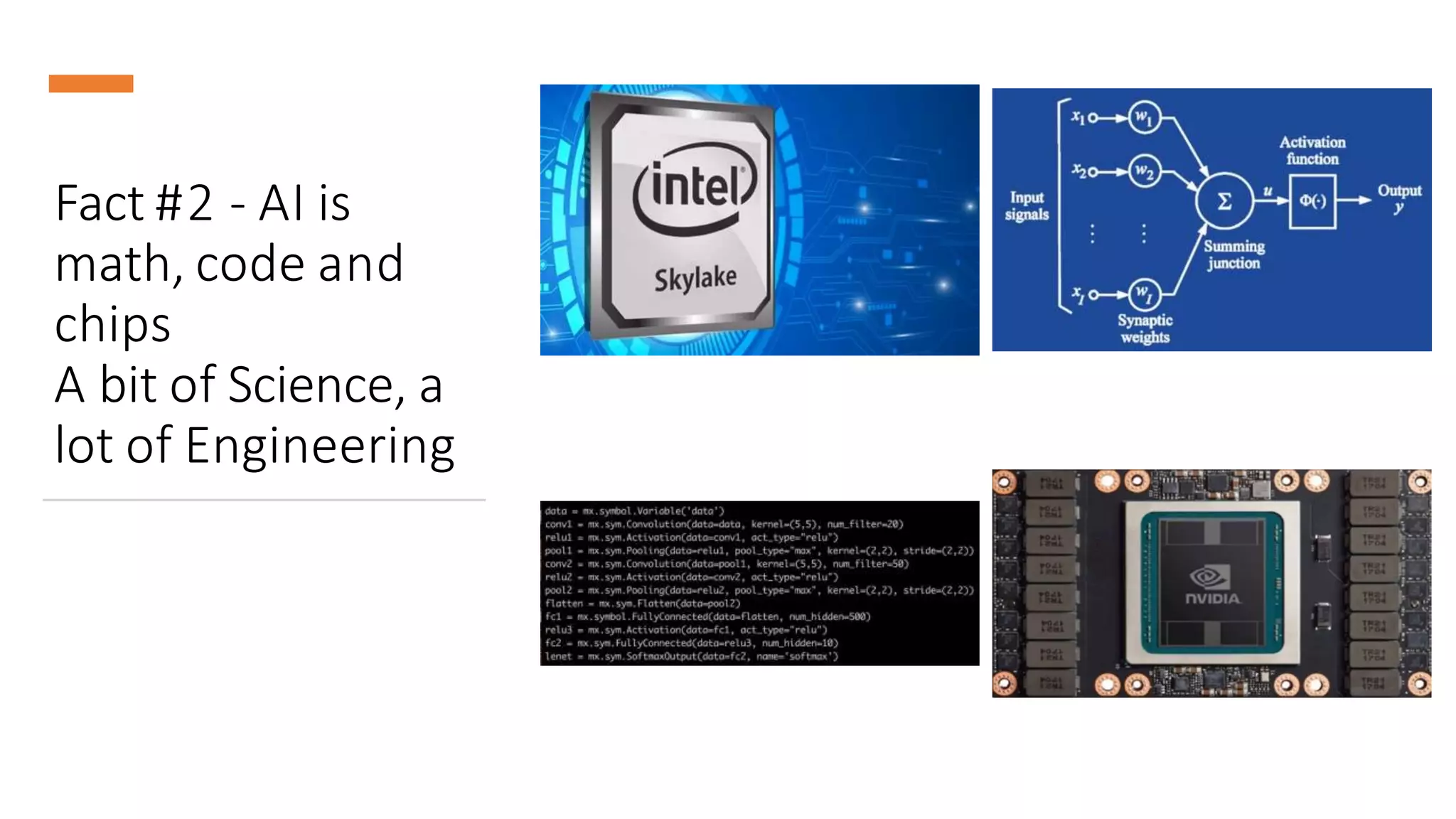

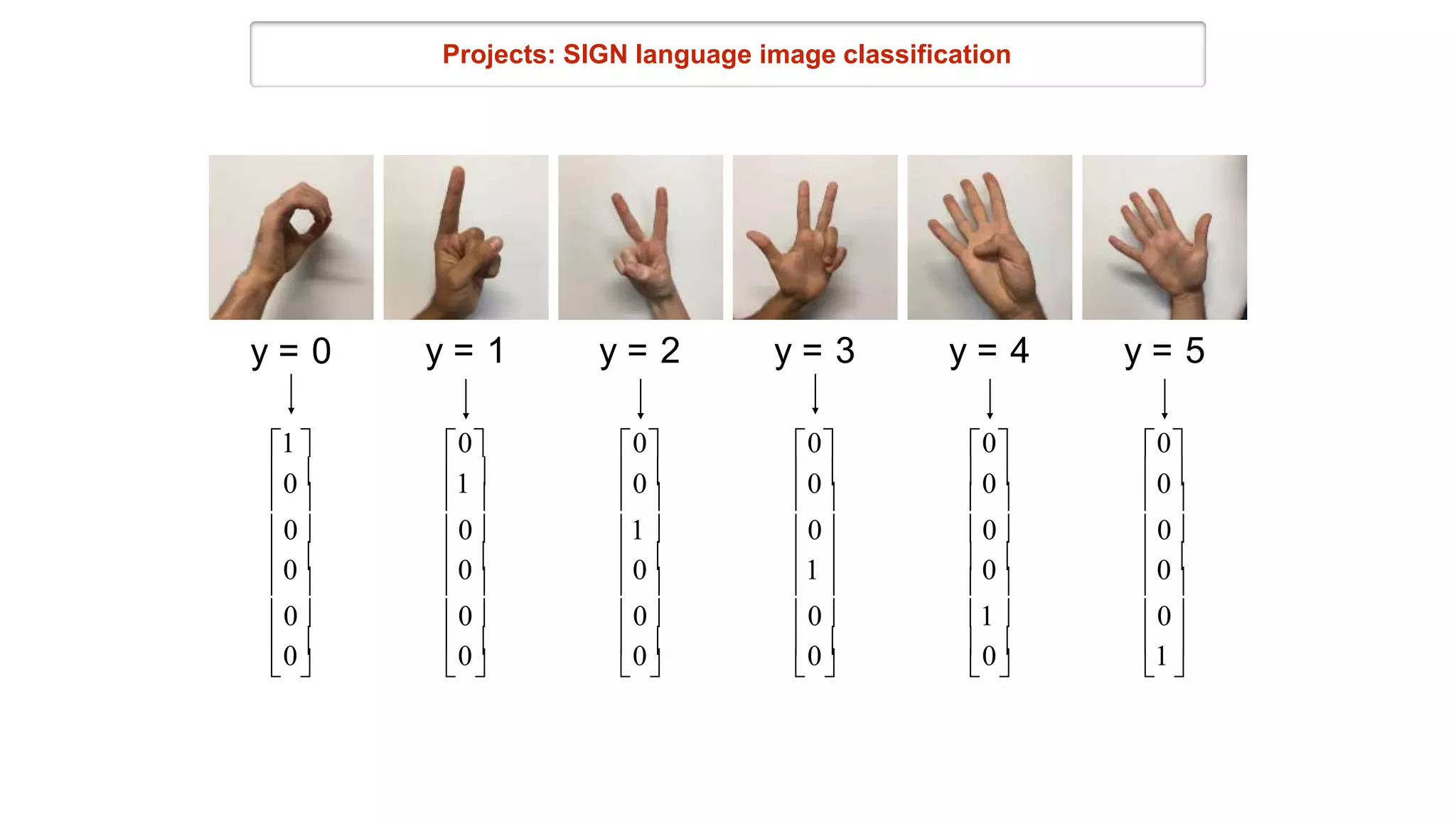

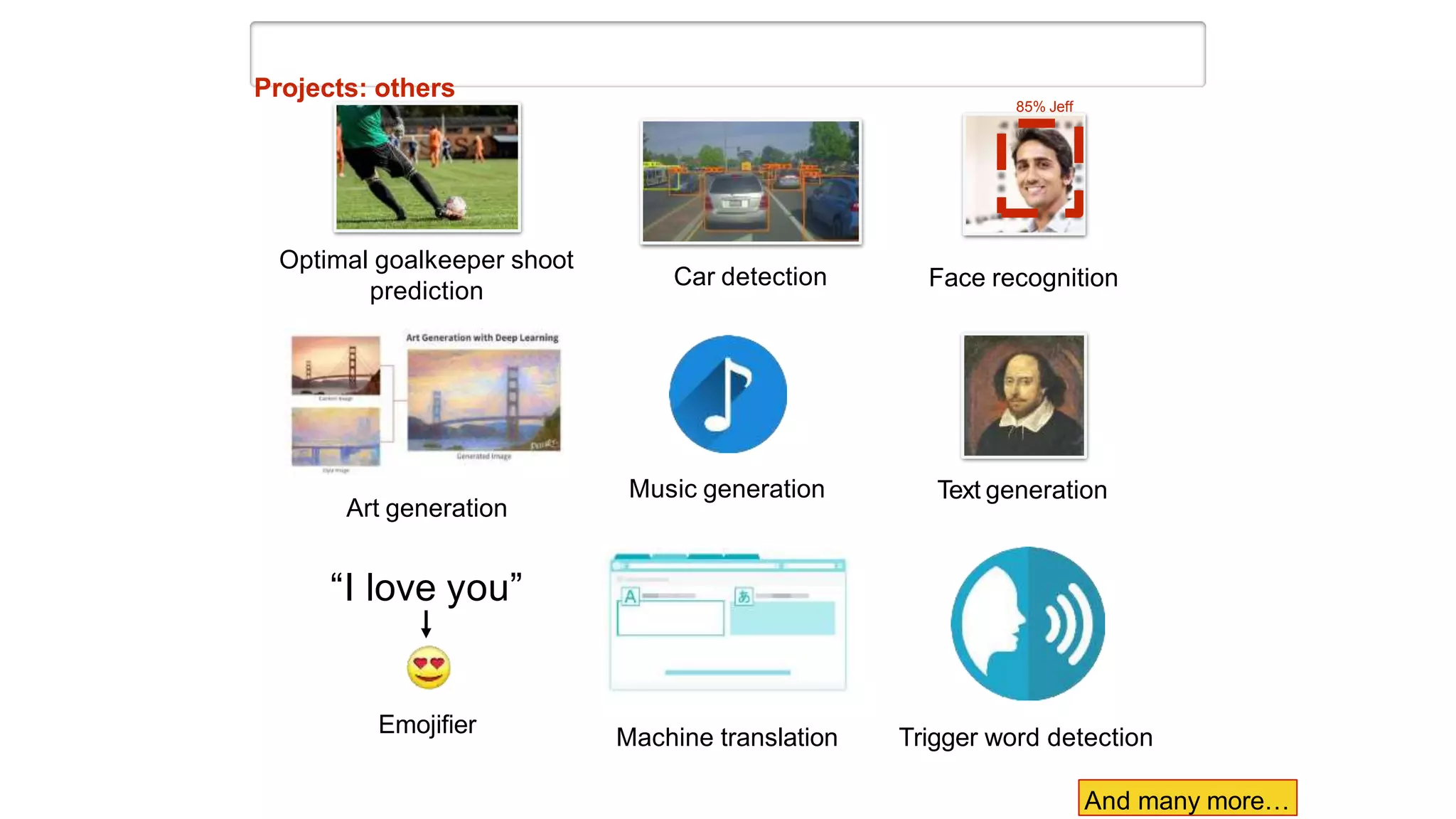

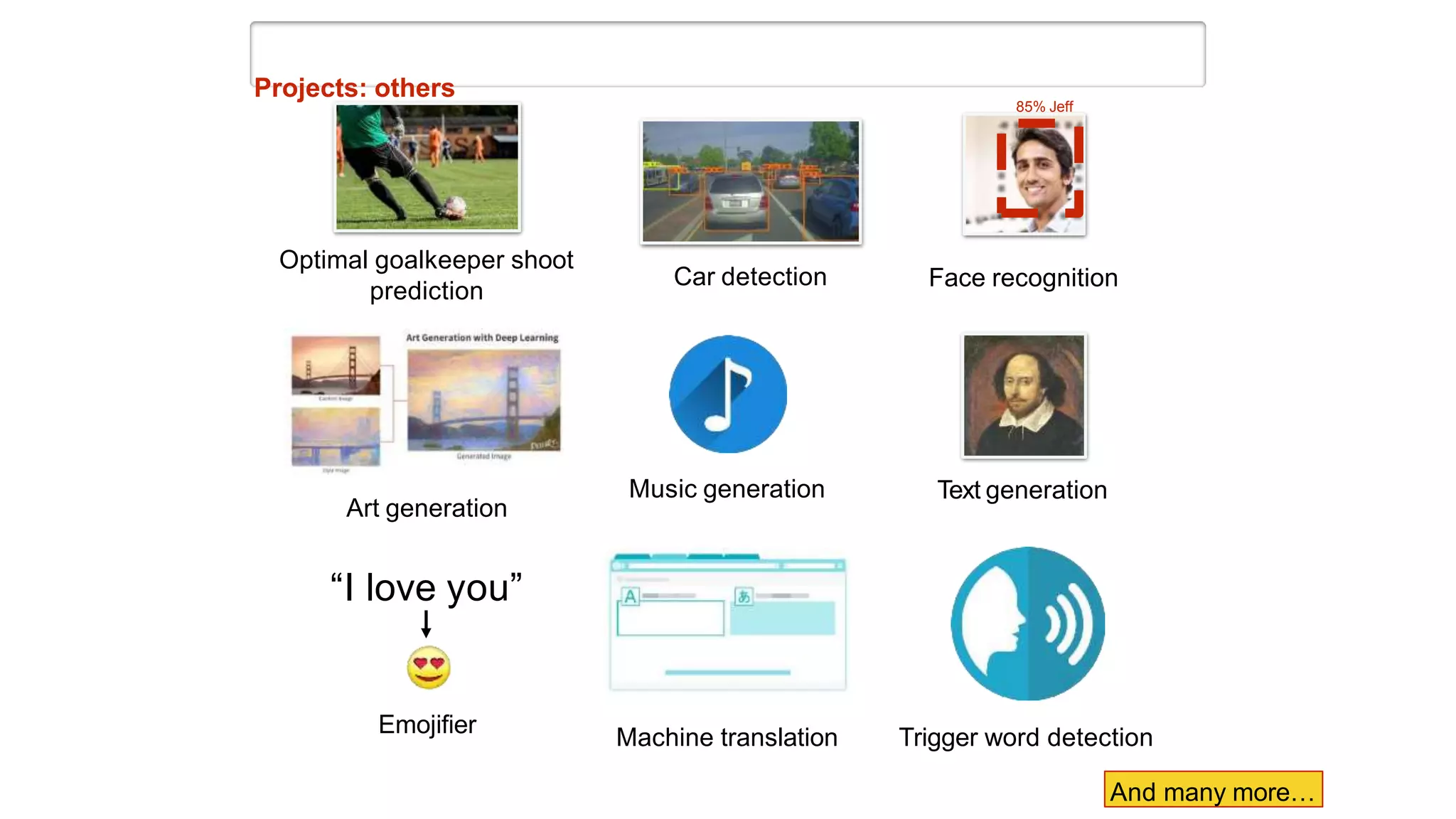

This document provides an agenda and overview for a deep learning course. The agenda covers the program and course learning outcomes, the syllabus, class management tools, and an introduction to week 1 of deep learning. The syllabus outlines 15 weekly topics on deep learning concepts and algorithms. Example student projects show applications of deep learning in areas such as computer vision, natural language processing, and games. The week 1 introduction defines artificial intelligence, machine learning, and deep learning, and gives an overview of the programming assignments and of deep learning in action.

![Assignment: Car detection for autonomous driving [Deep Learning Specialization]](https://image.slidesharecdn.com/week1-introduction-230917104514-debd3c3b/75/Week1-Introduction-pptx-23-2048.jpg)

![[L. Gatys et al.: Image Style Transfer Using Convolutional Neural Networks, 2015]](https://image.slidesharecdn.com/week1-introduction-230917104514-debd3c3b/75/Week1-Introduction-pptx-25-2048.jpg)

![[L. Gatys et al.: Image Style Transfer Using Convolutional Neural Networks, 2015]](https://image.slidesharecdn.com/week1-introduction-230917104514-debd3c3b/75/Week1-Introduction-pptx-26-2048.jpg)

![[L. Gatys et al.: Image Style Transfer Using Convolutional Neural Networks, 2015]](https://image.slidesharecdn.com/week1-introduction-230917104514-debd3c3b/75/Week1-Introduction-pptx-27-2048.jpg)

![Projects: others. LeafNet: A Deep Learning Solution to Tree Species Identification [Galbally, Rao & Pacalin: Spring 2018, http://cs230.stanford.edu/projects_spring_2018/posters/8285741.pdf] [Steven Chen: Fall 2017]. Predicting price of an object from a picture. Diagram labels: Neural Network, 300$](https://image.slidesharecdn.com/week1-introduction-230917104514-debd3c3b/75/Week1-Introduction-pptx-28-2048.jpg)