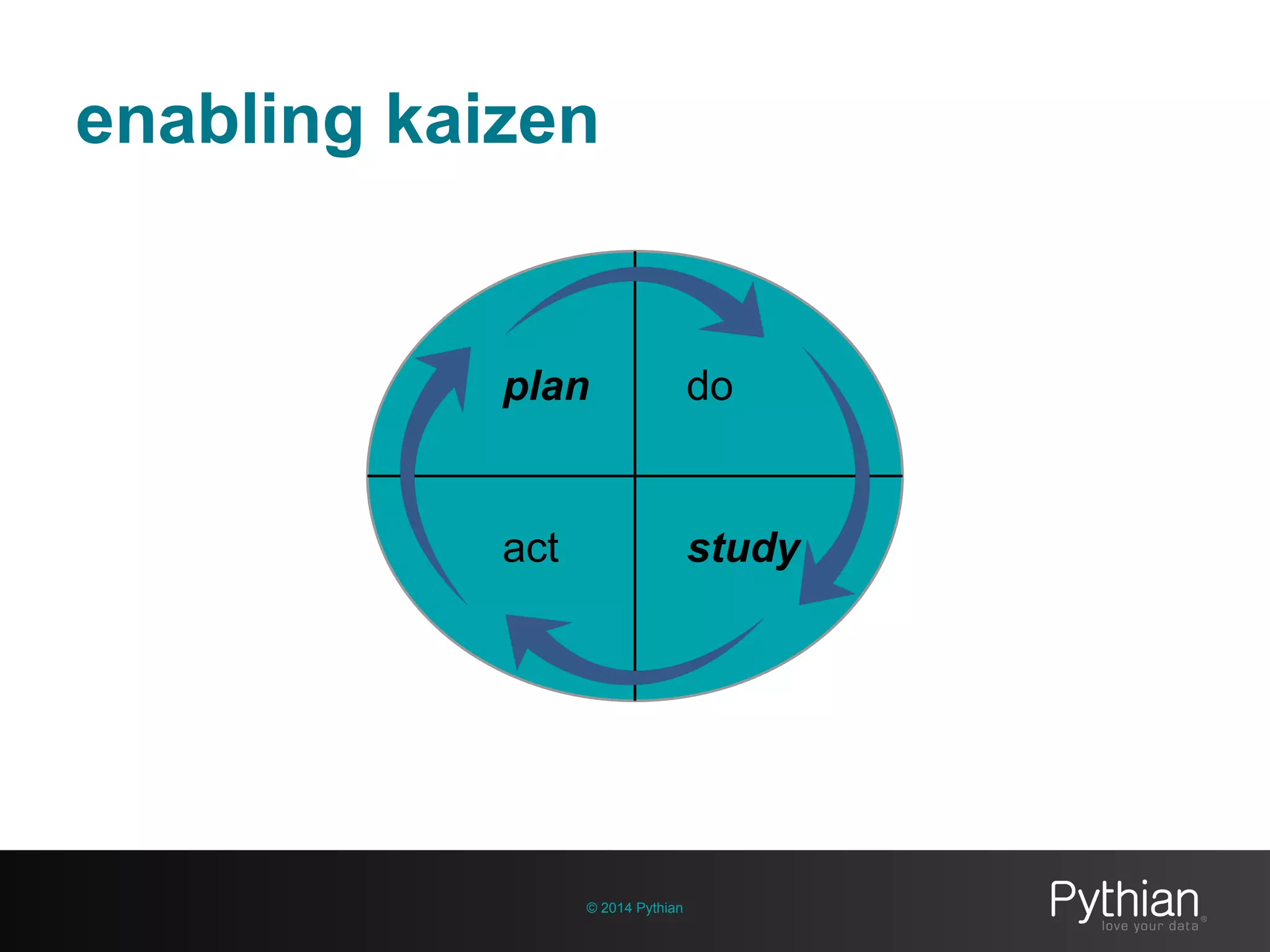

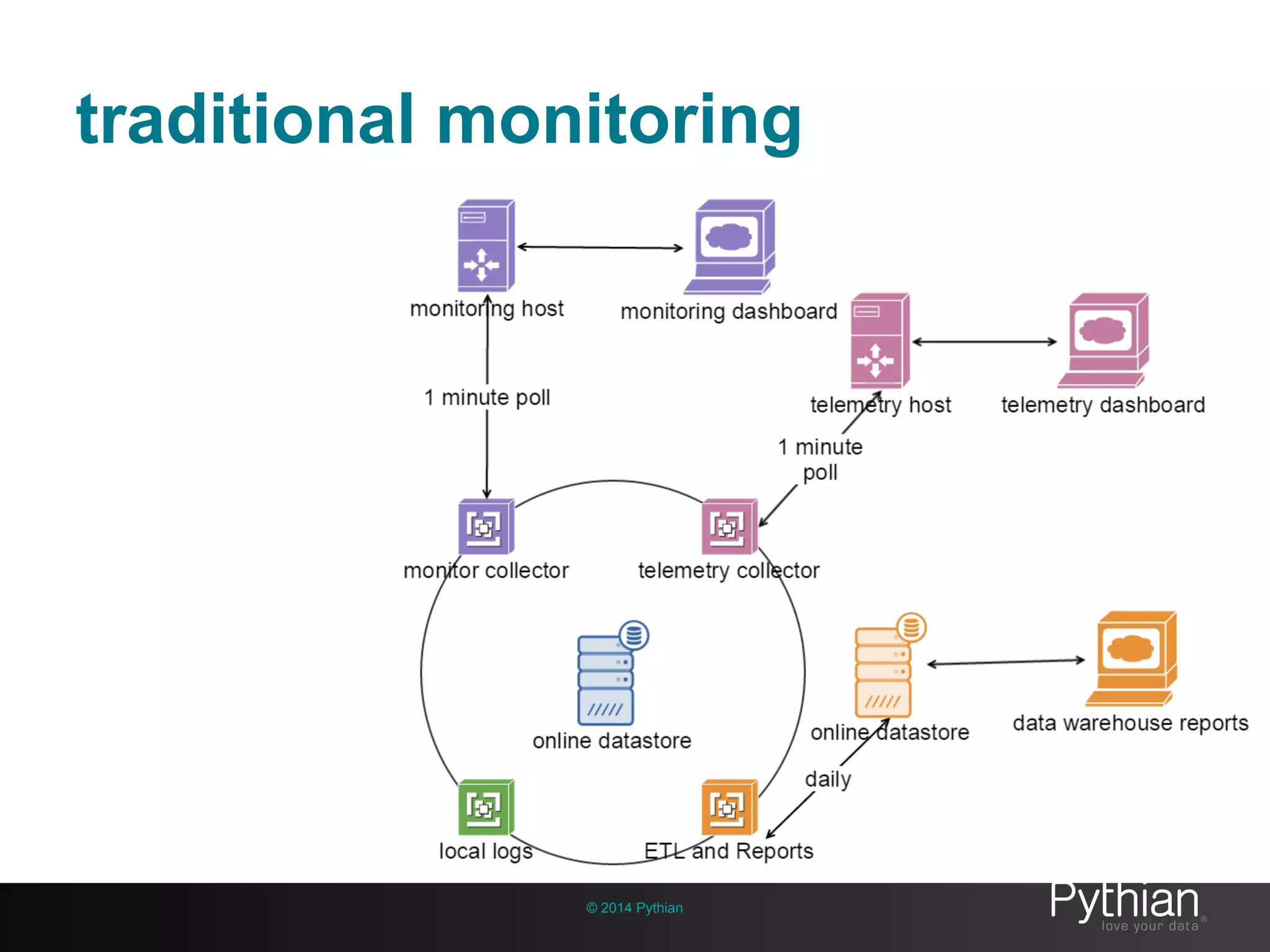

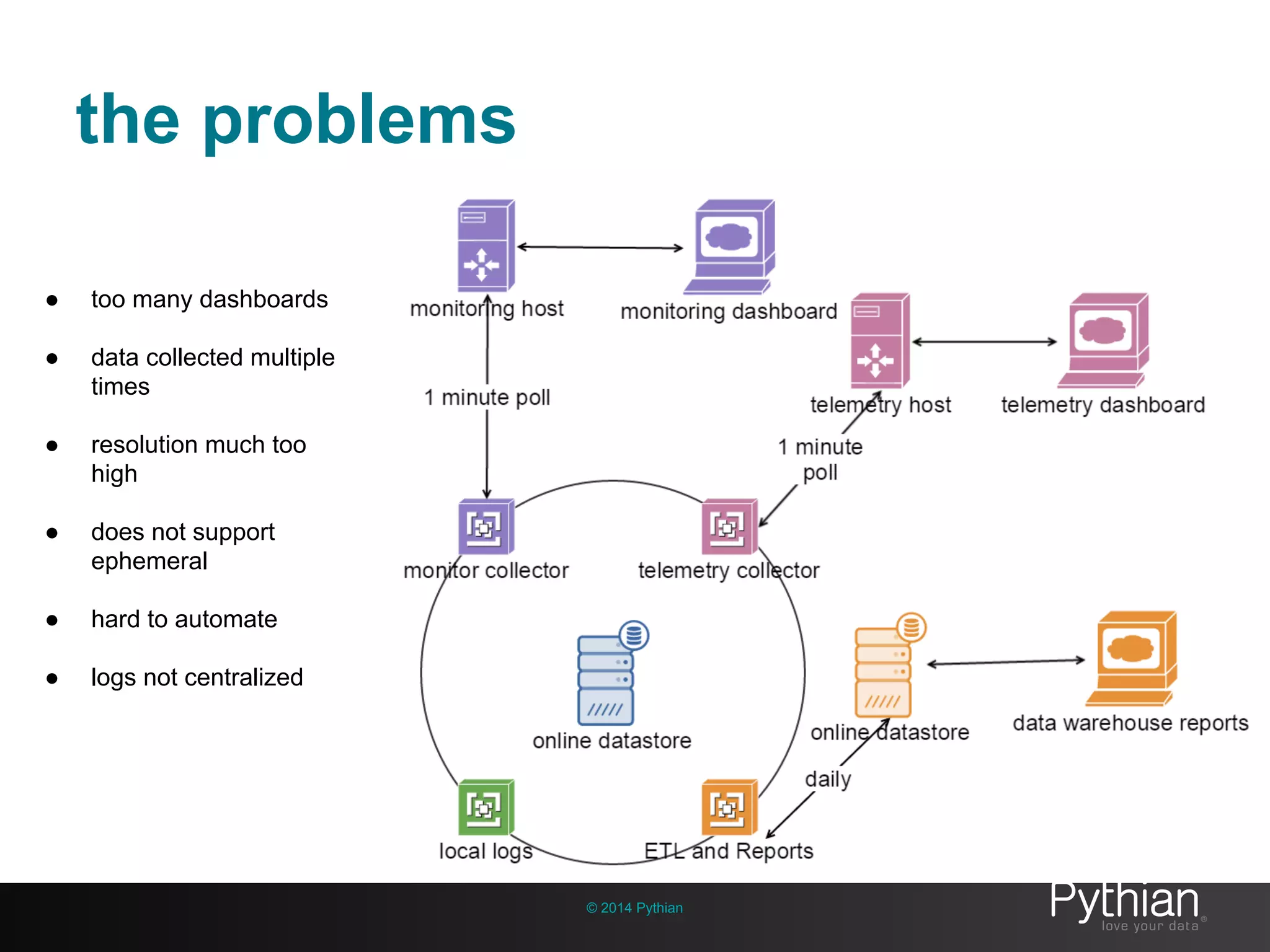

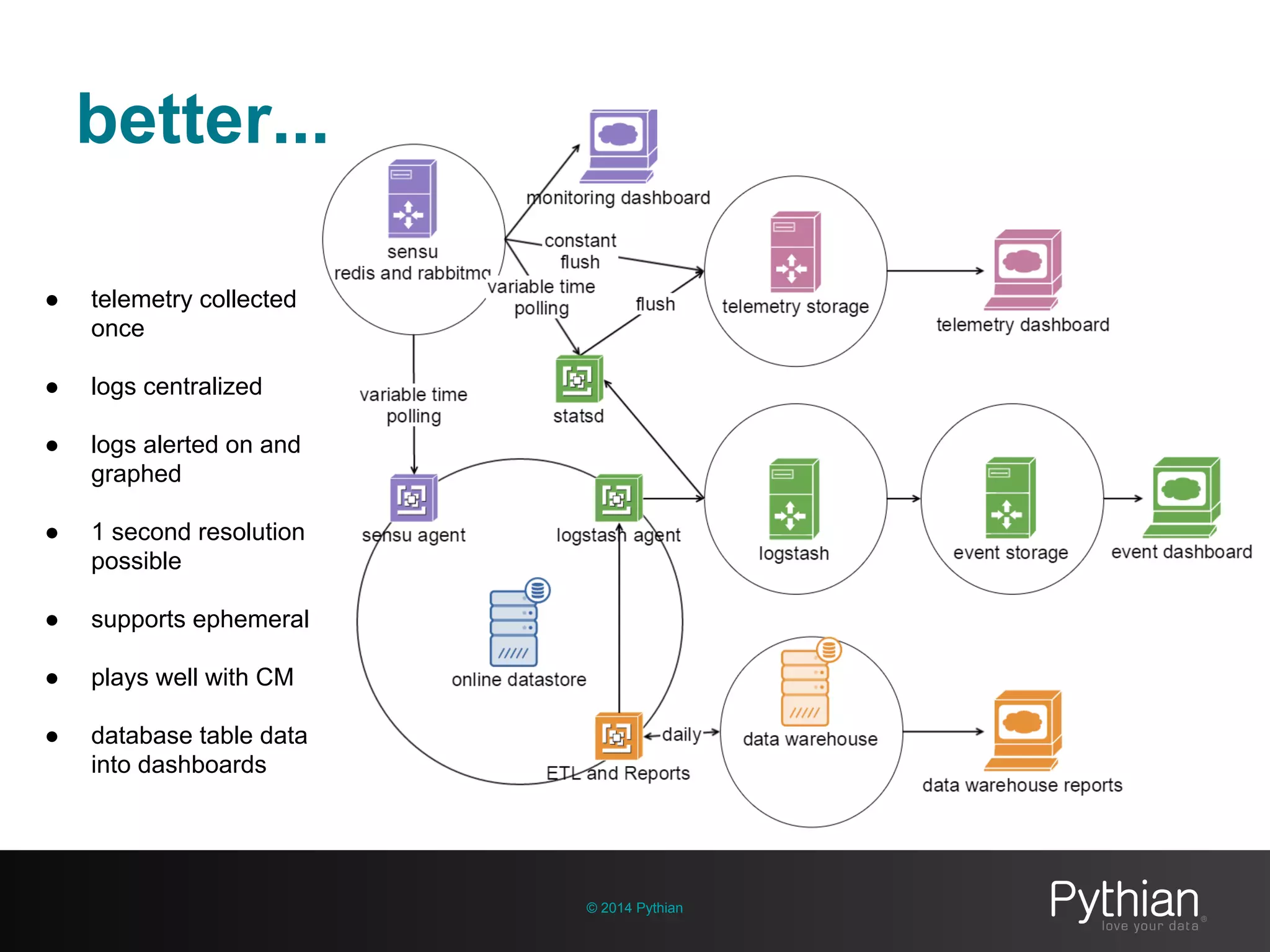

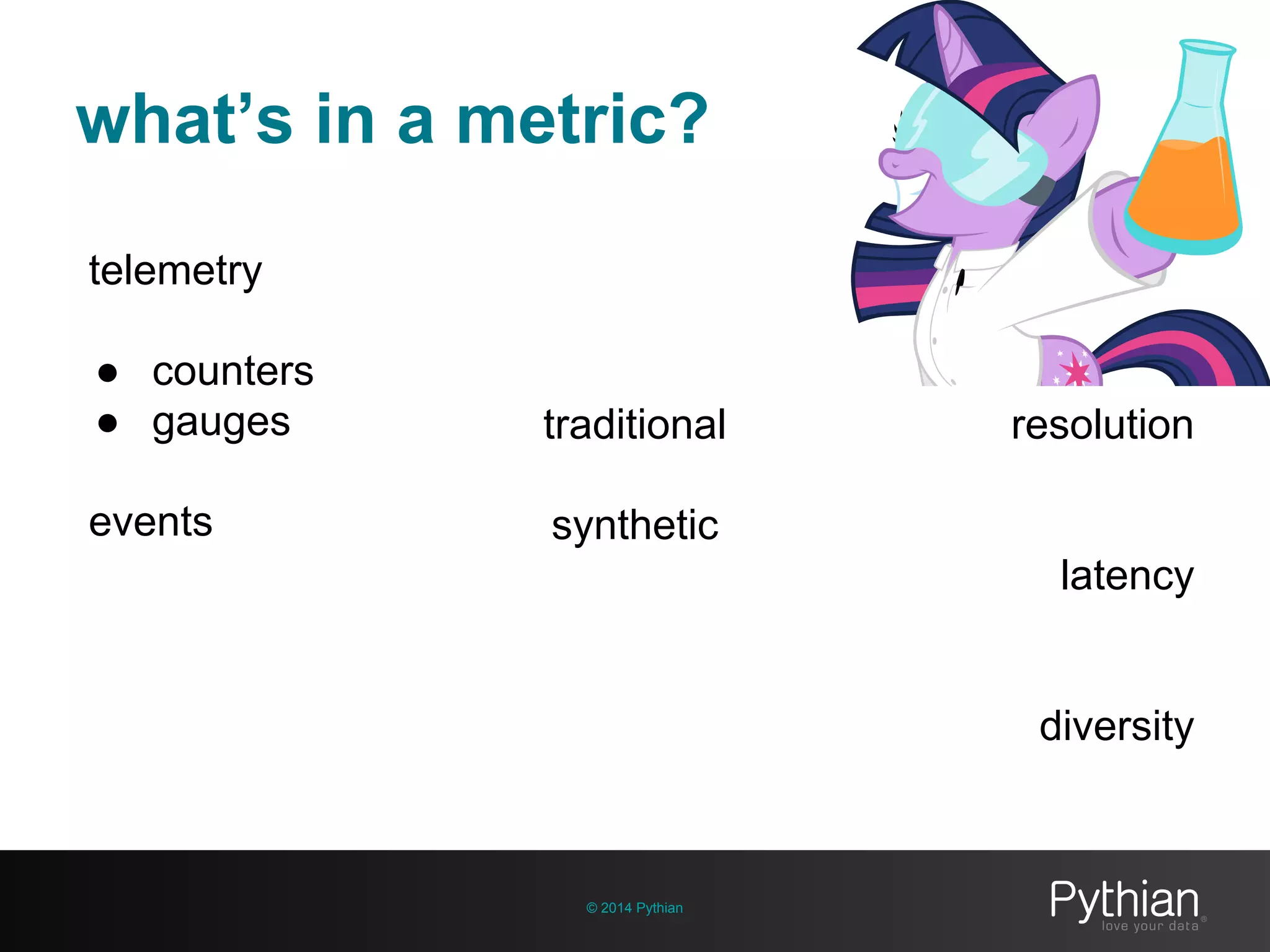

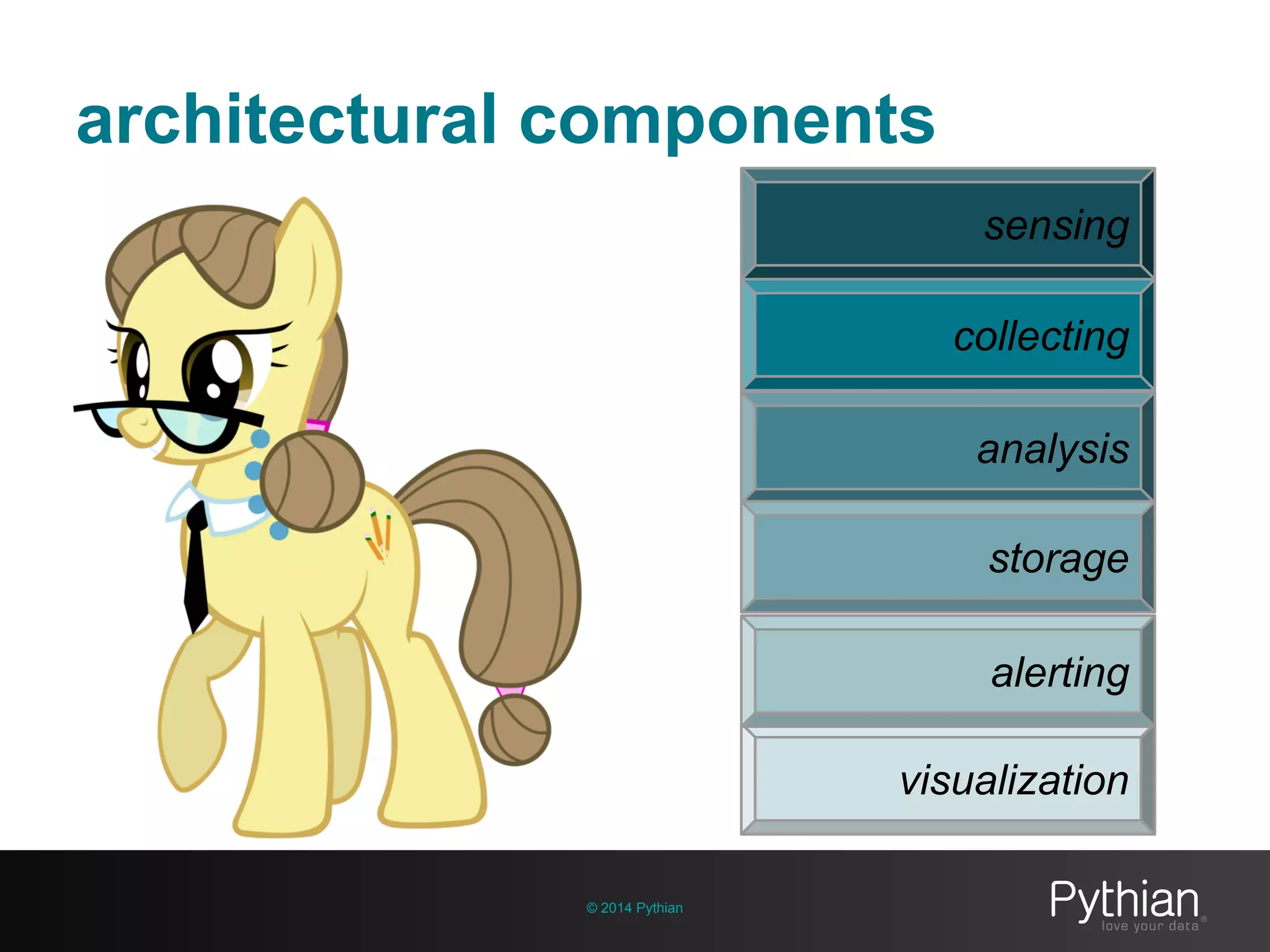

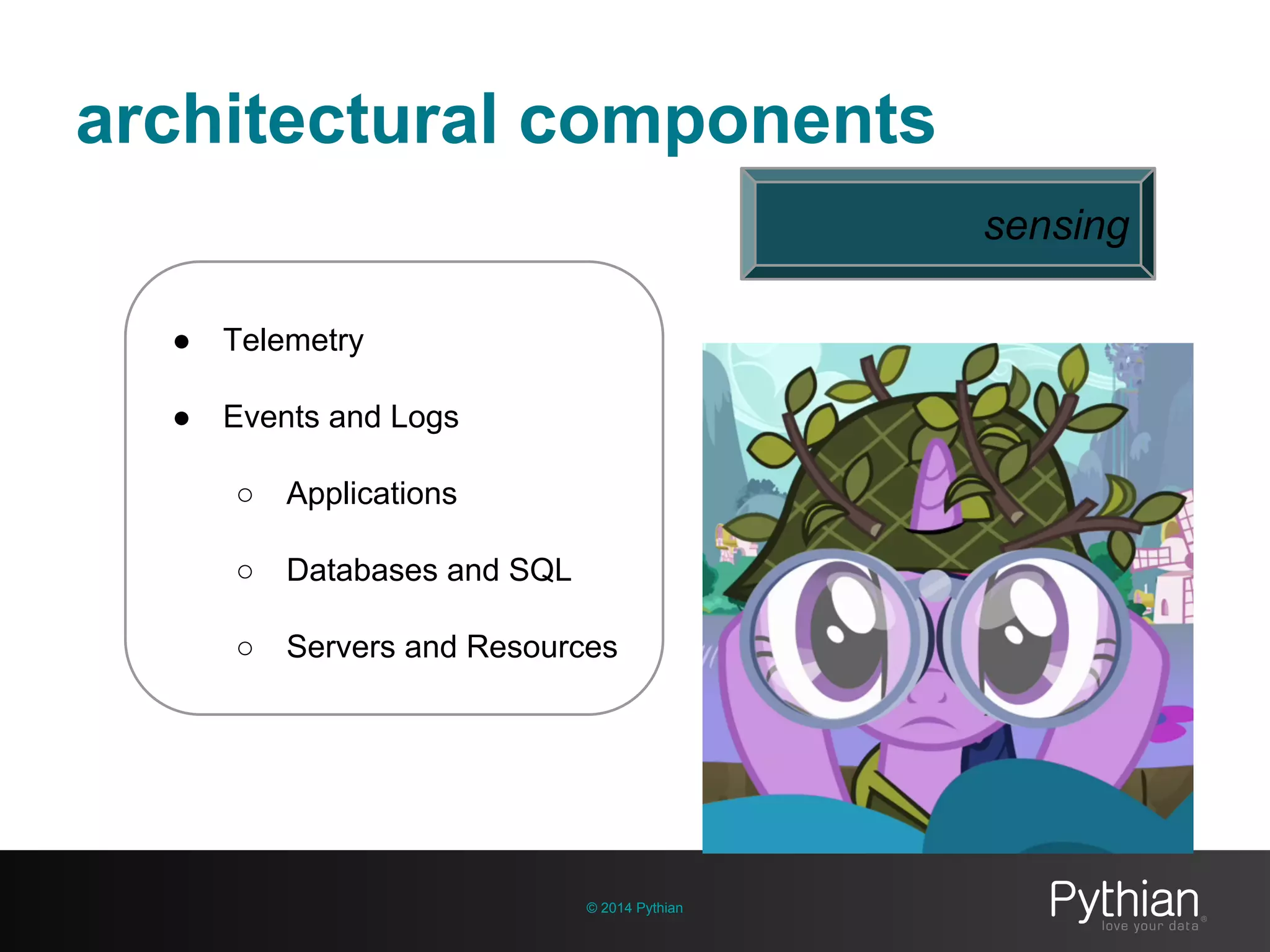

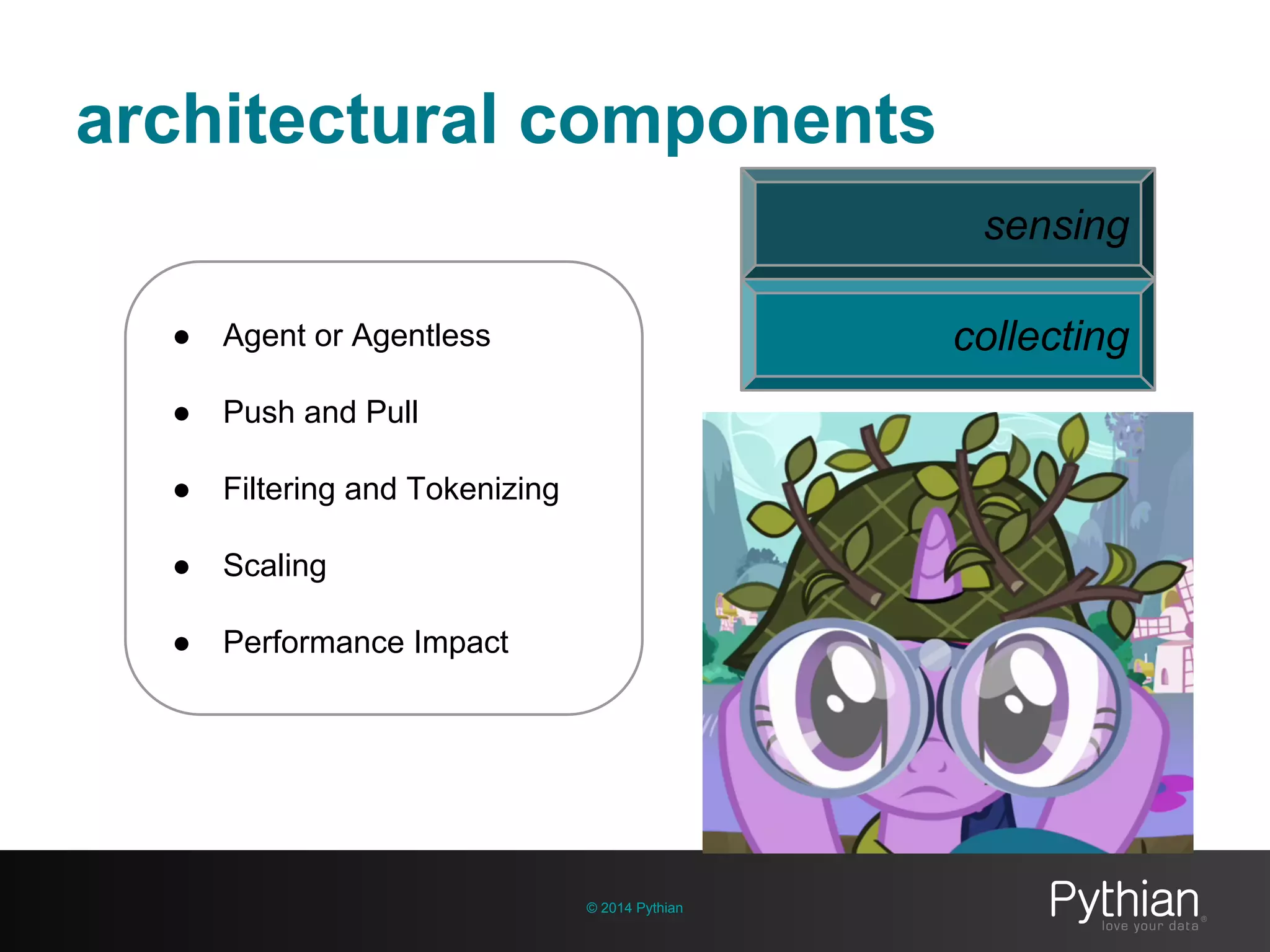

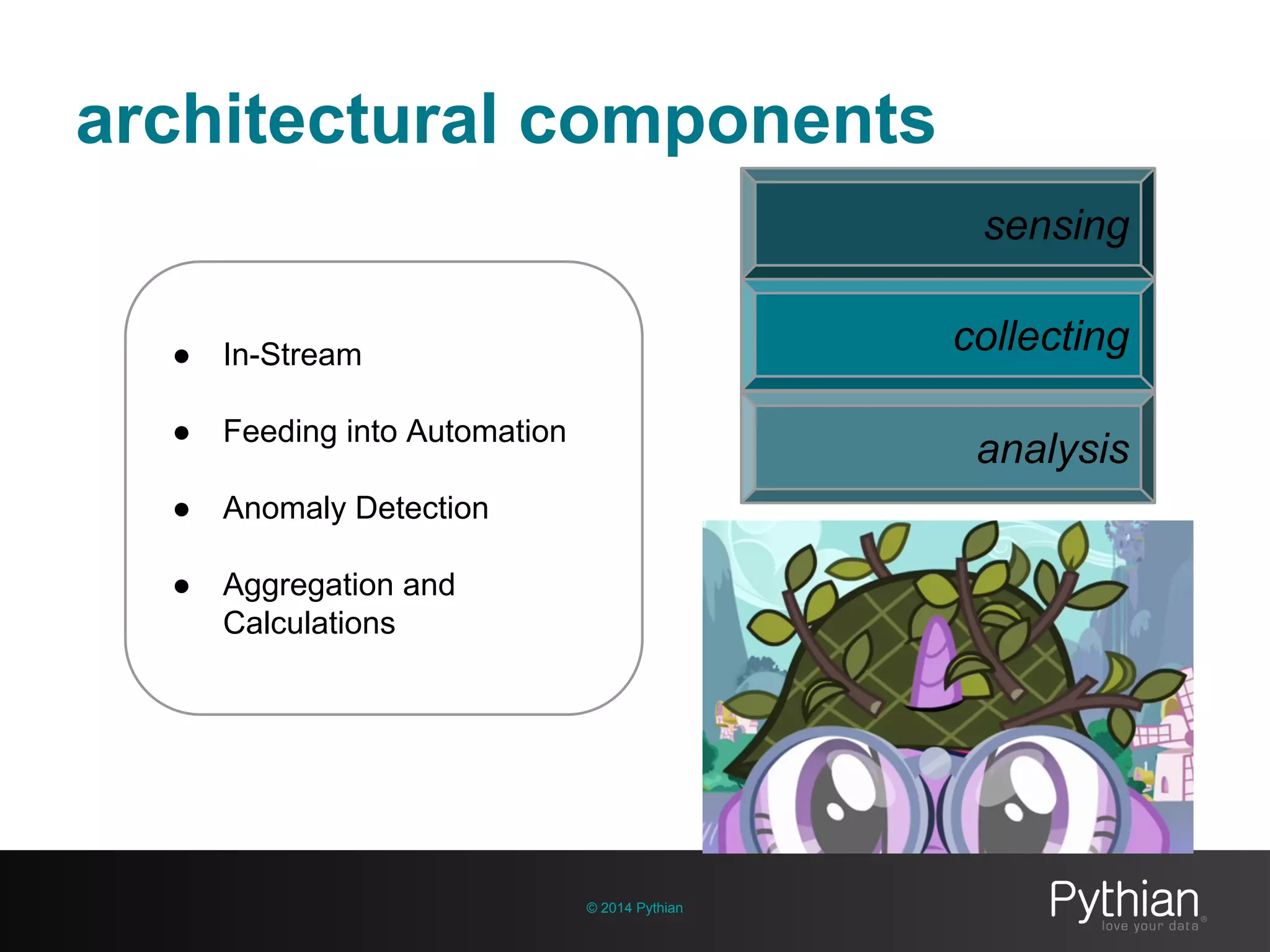

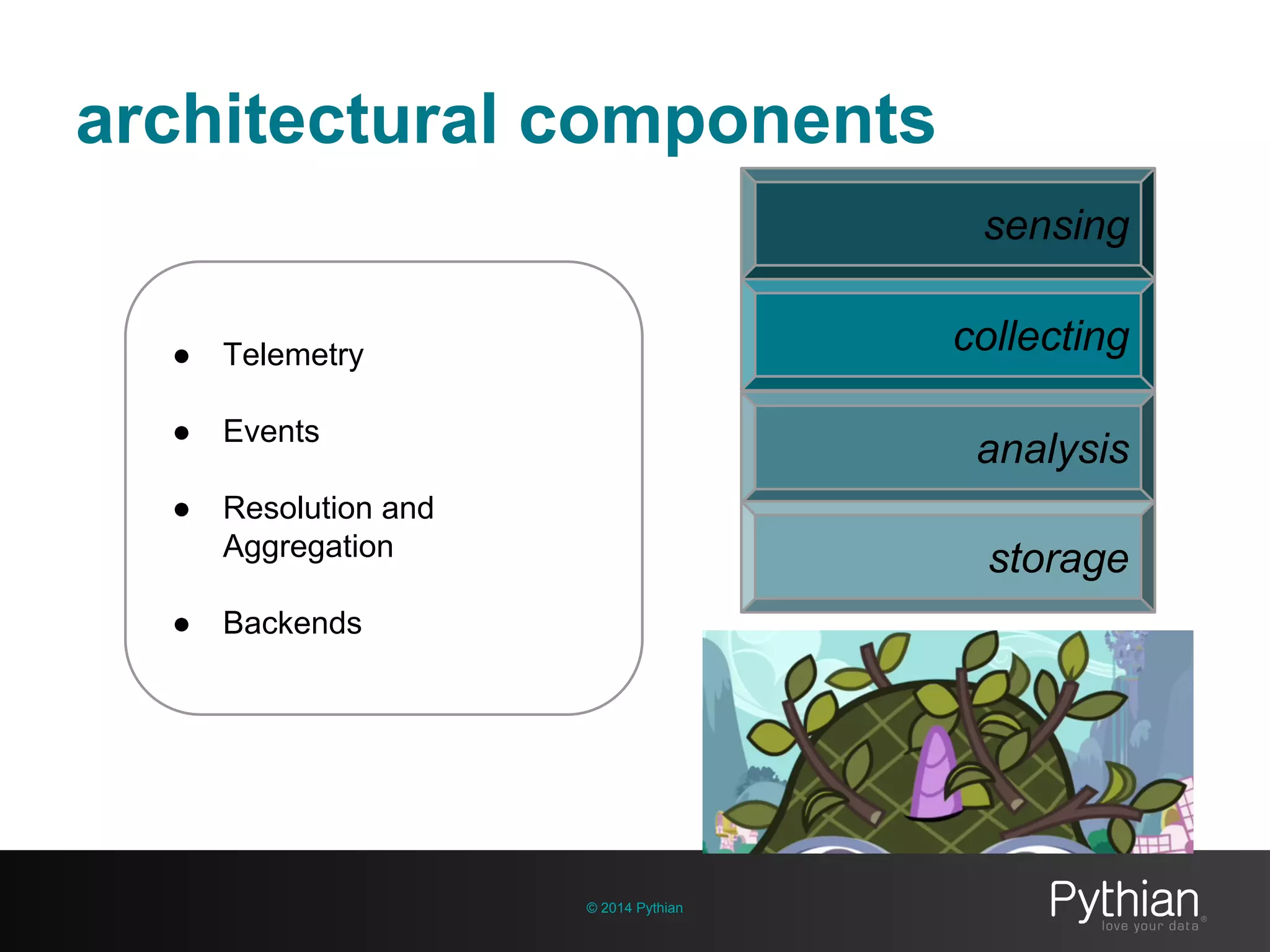

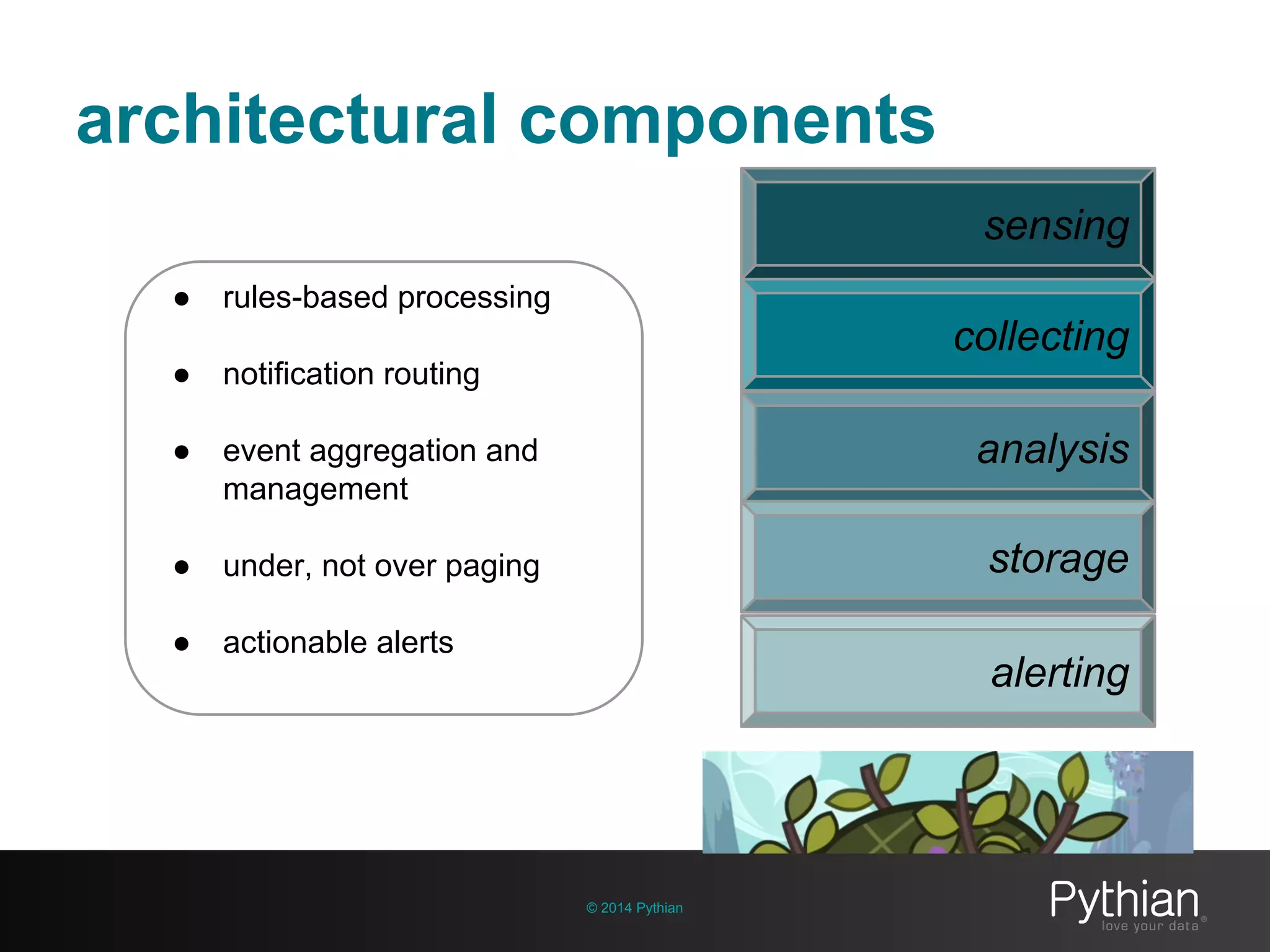

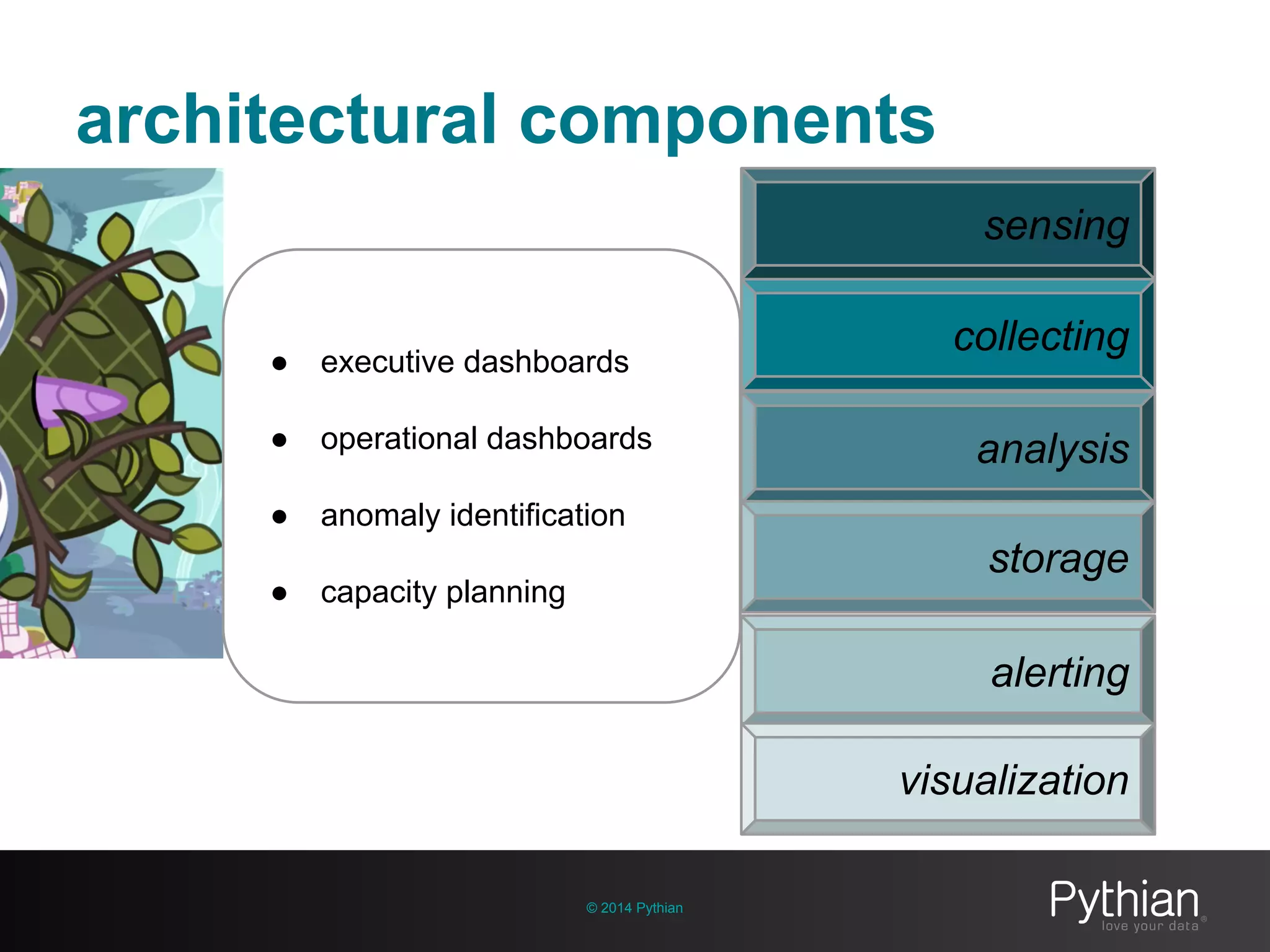

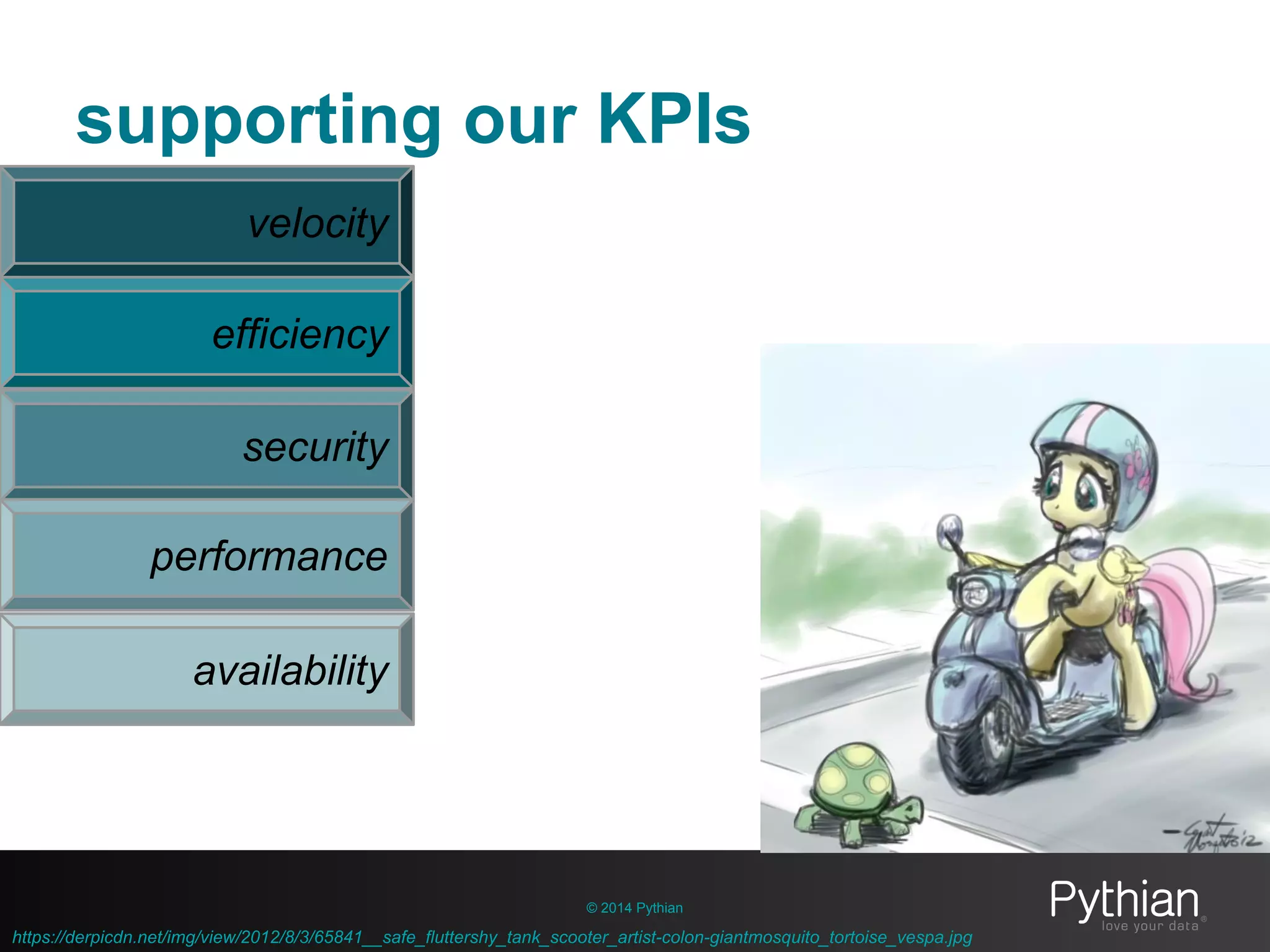

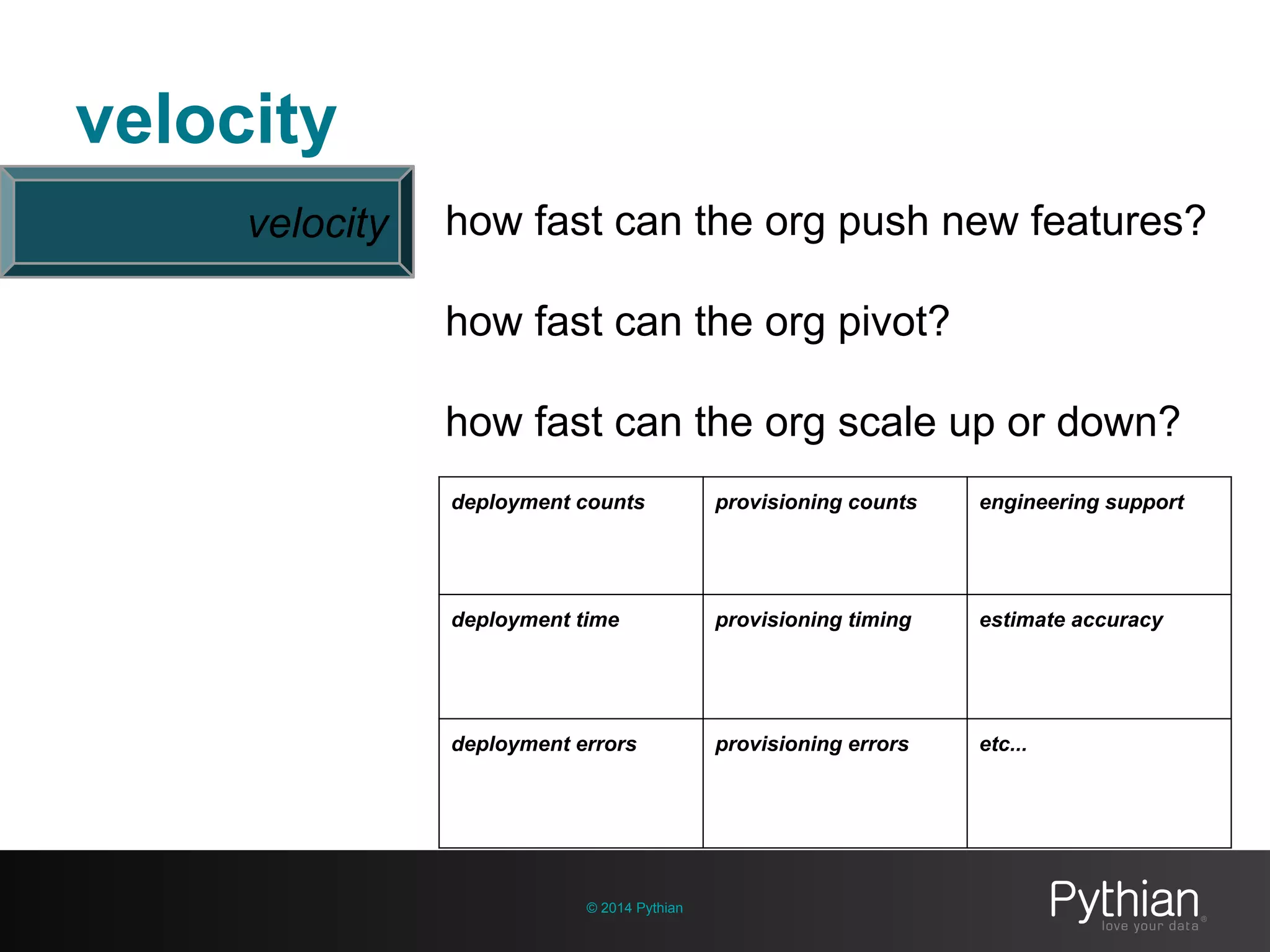

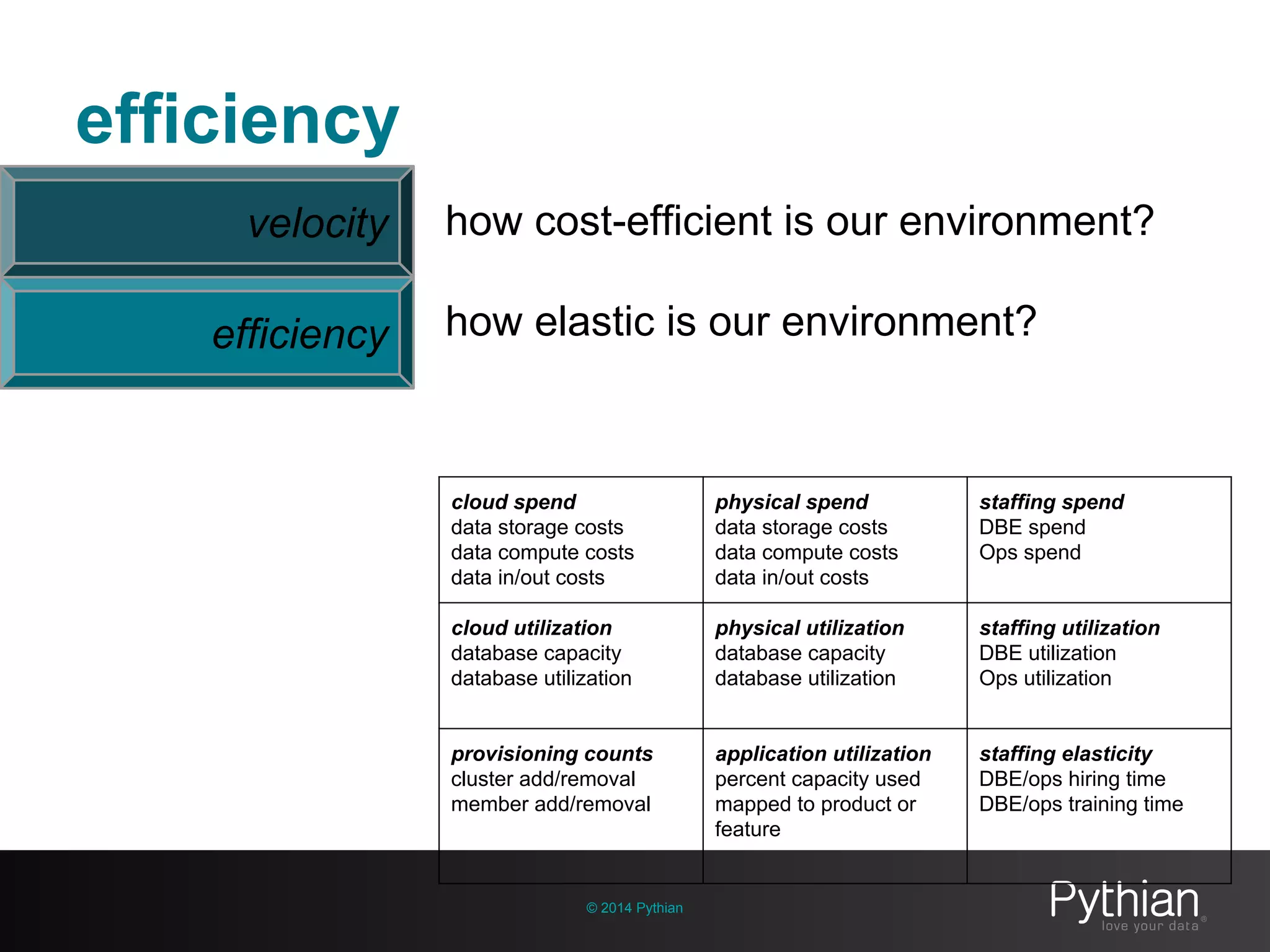

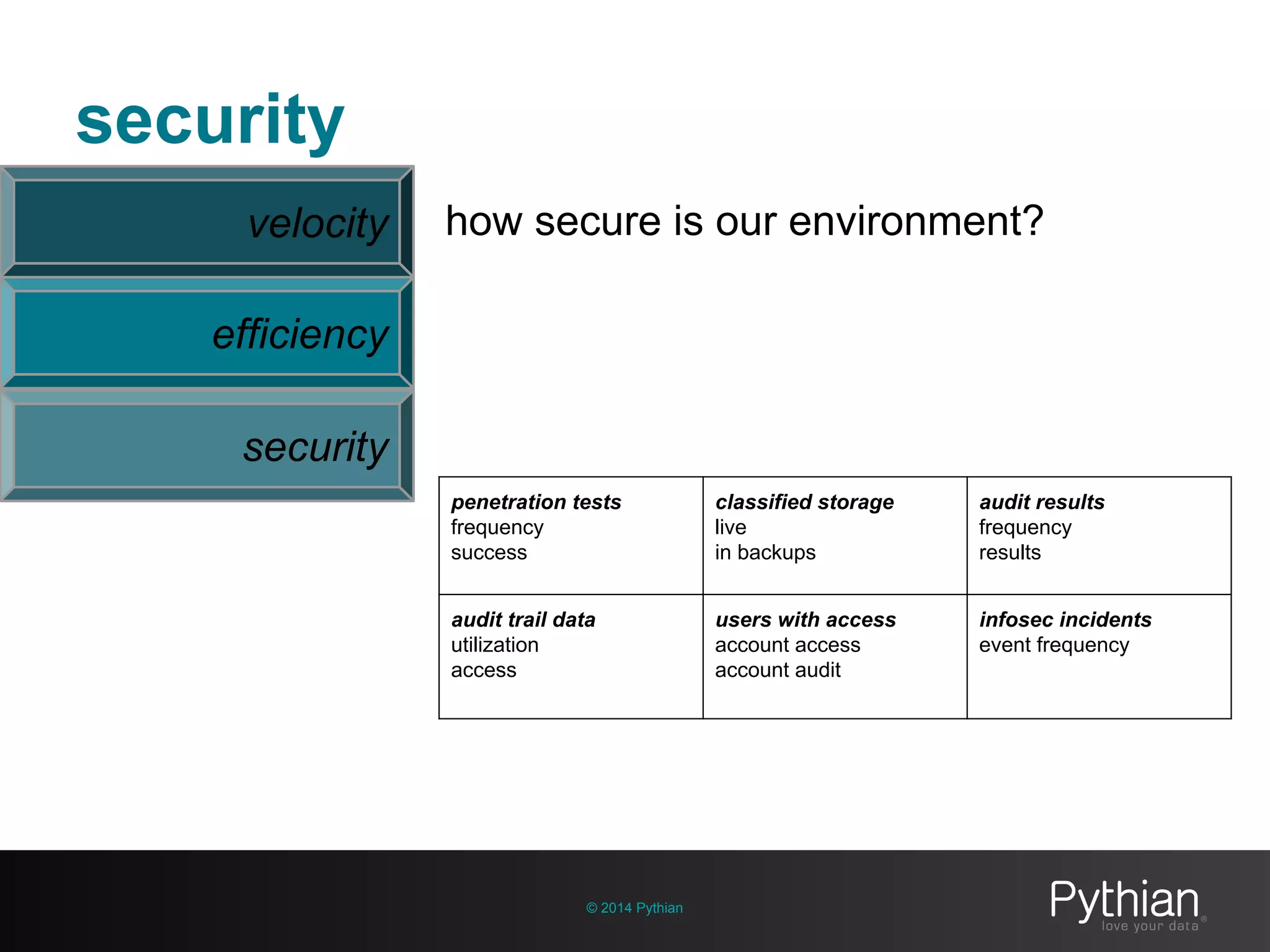

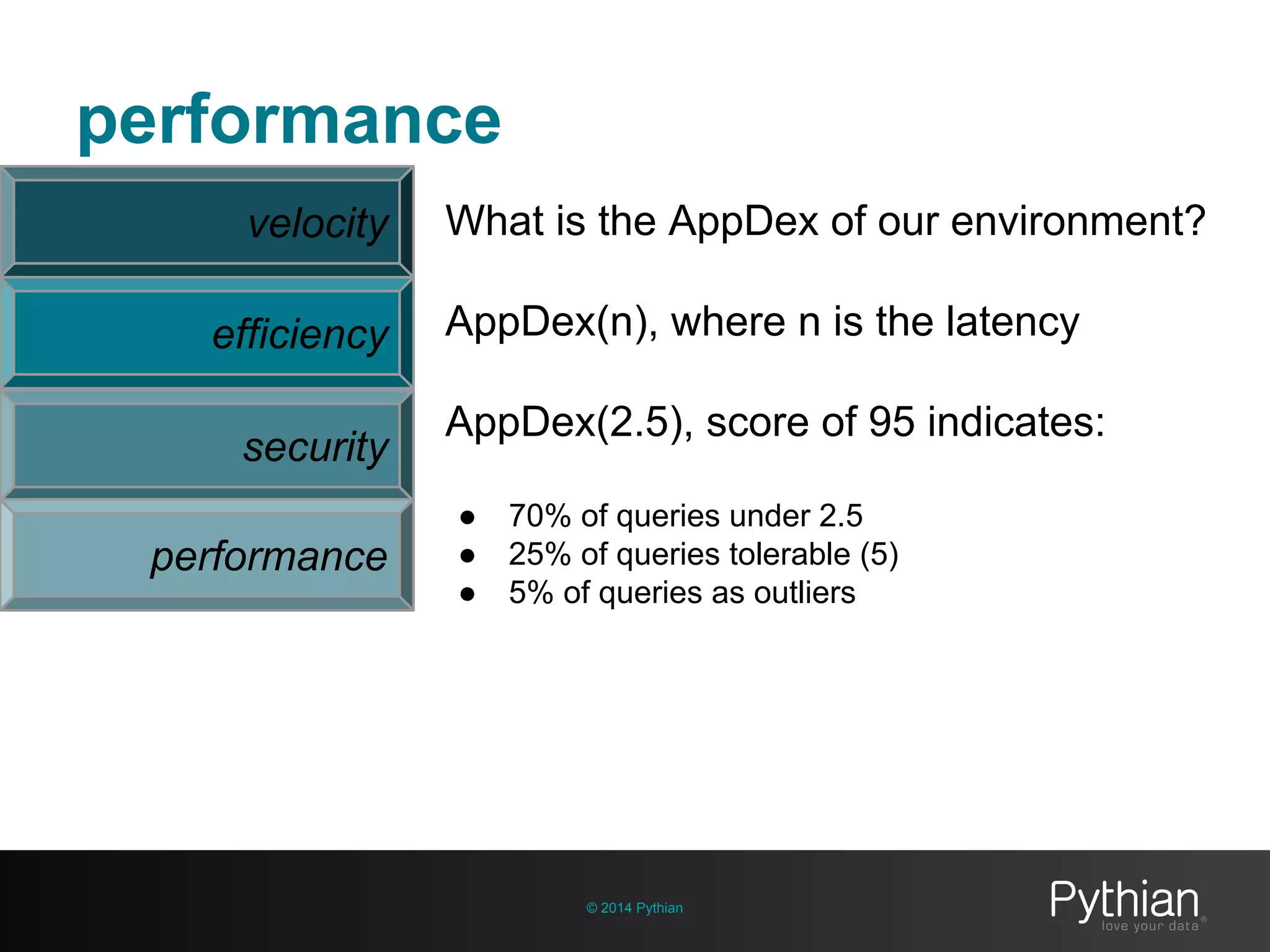

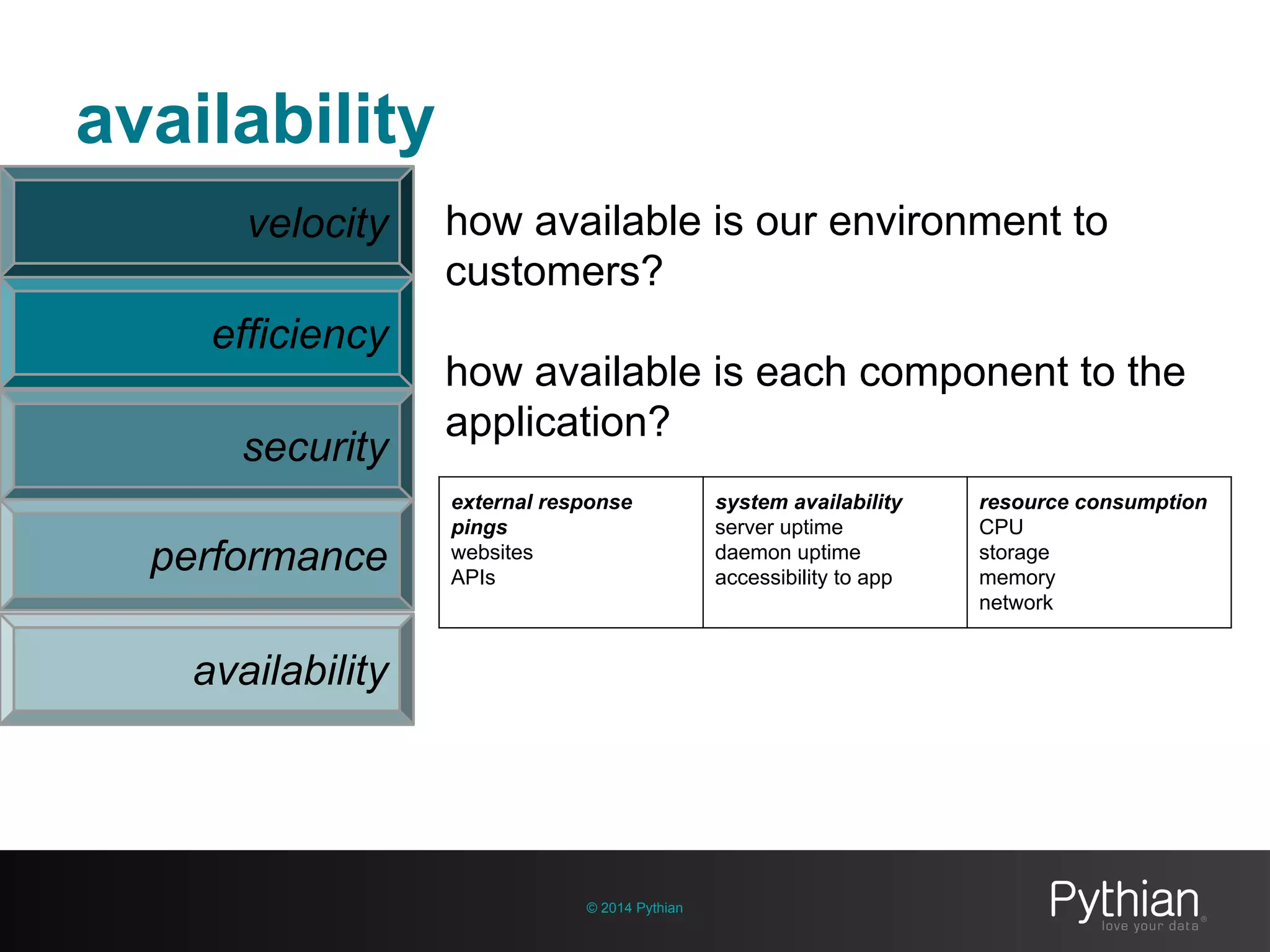

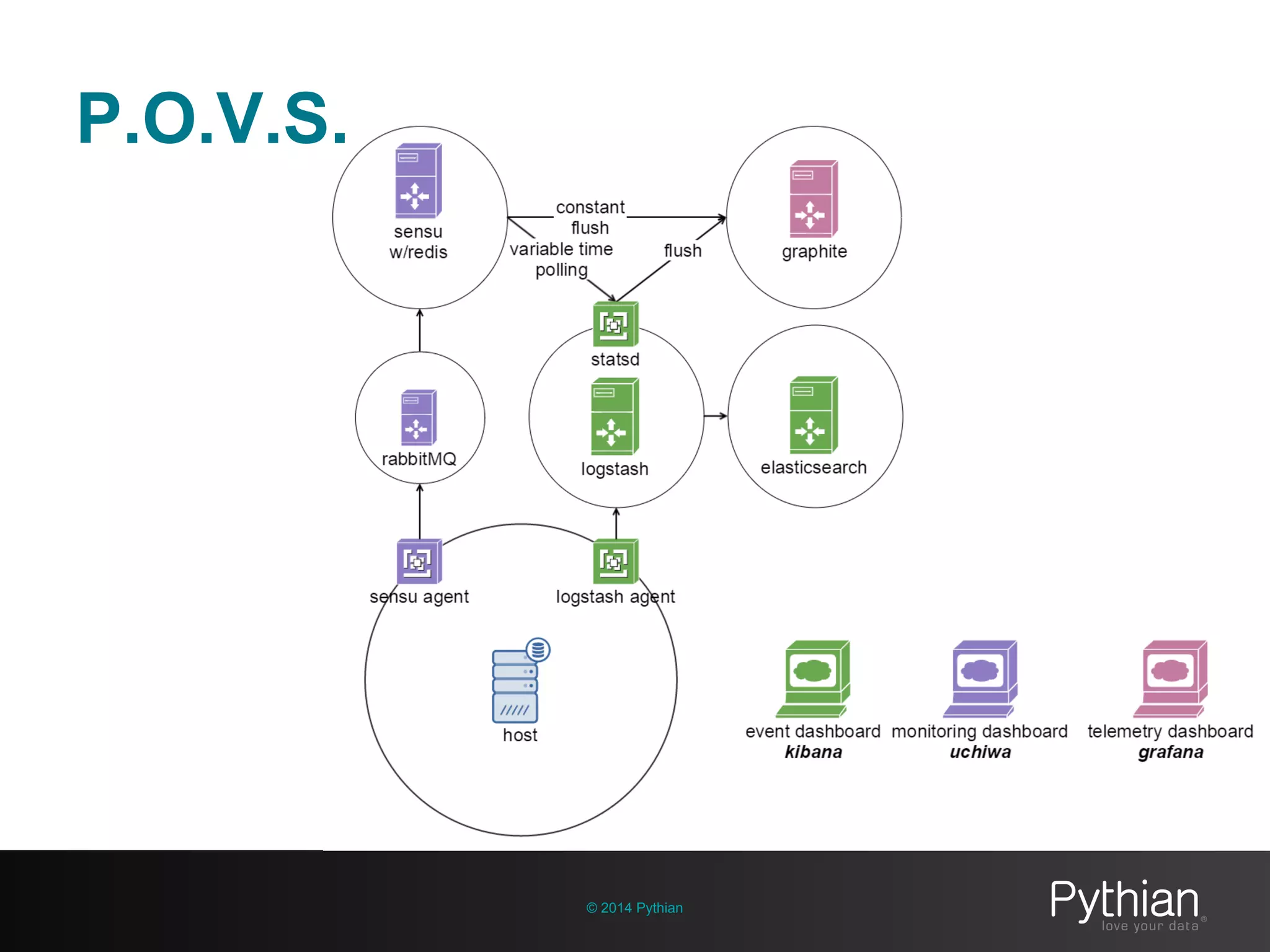

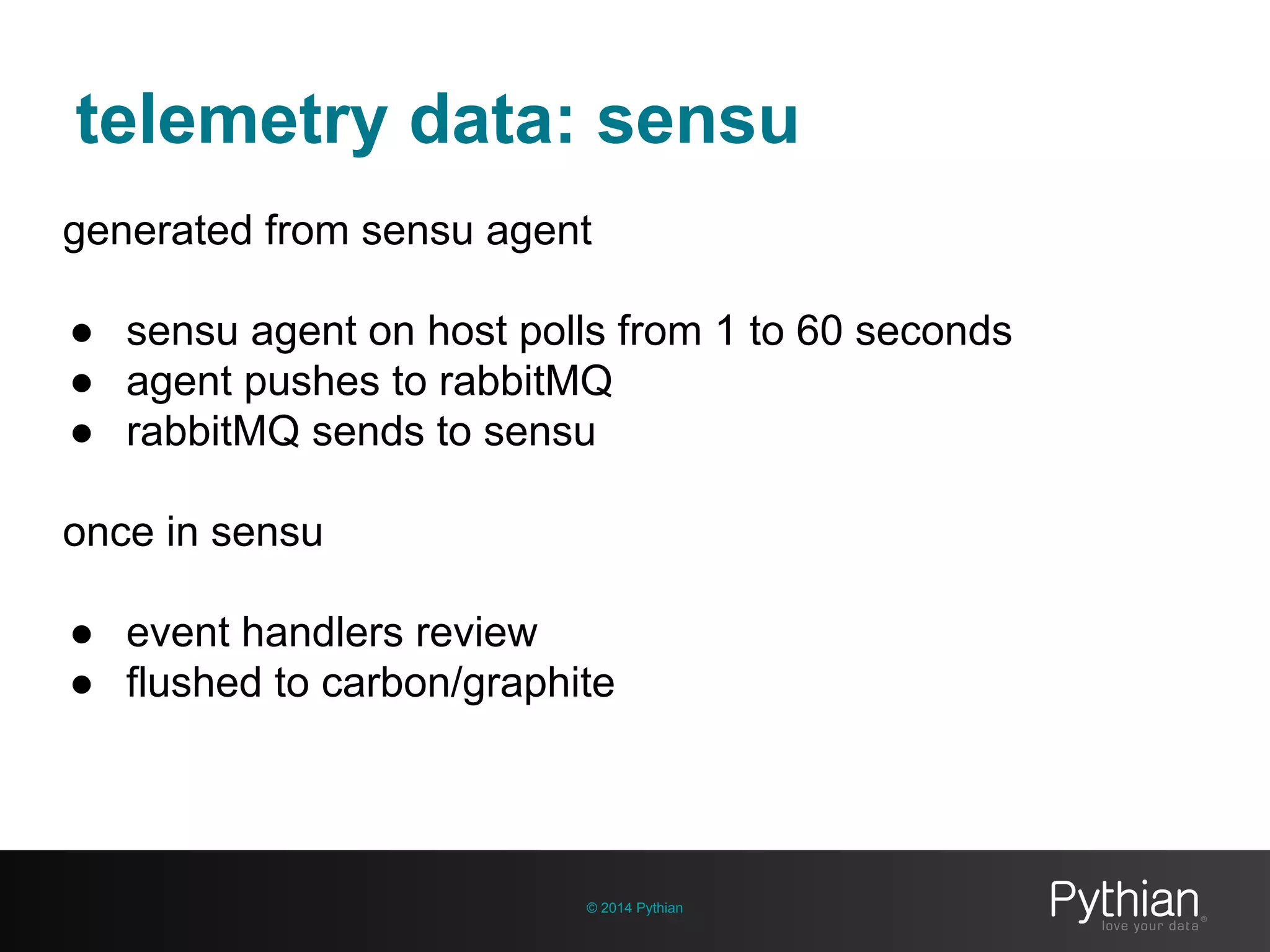

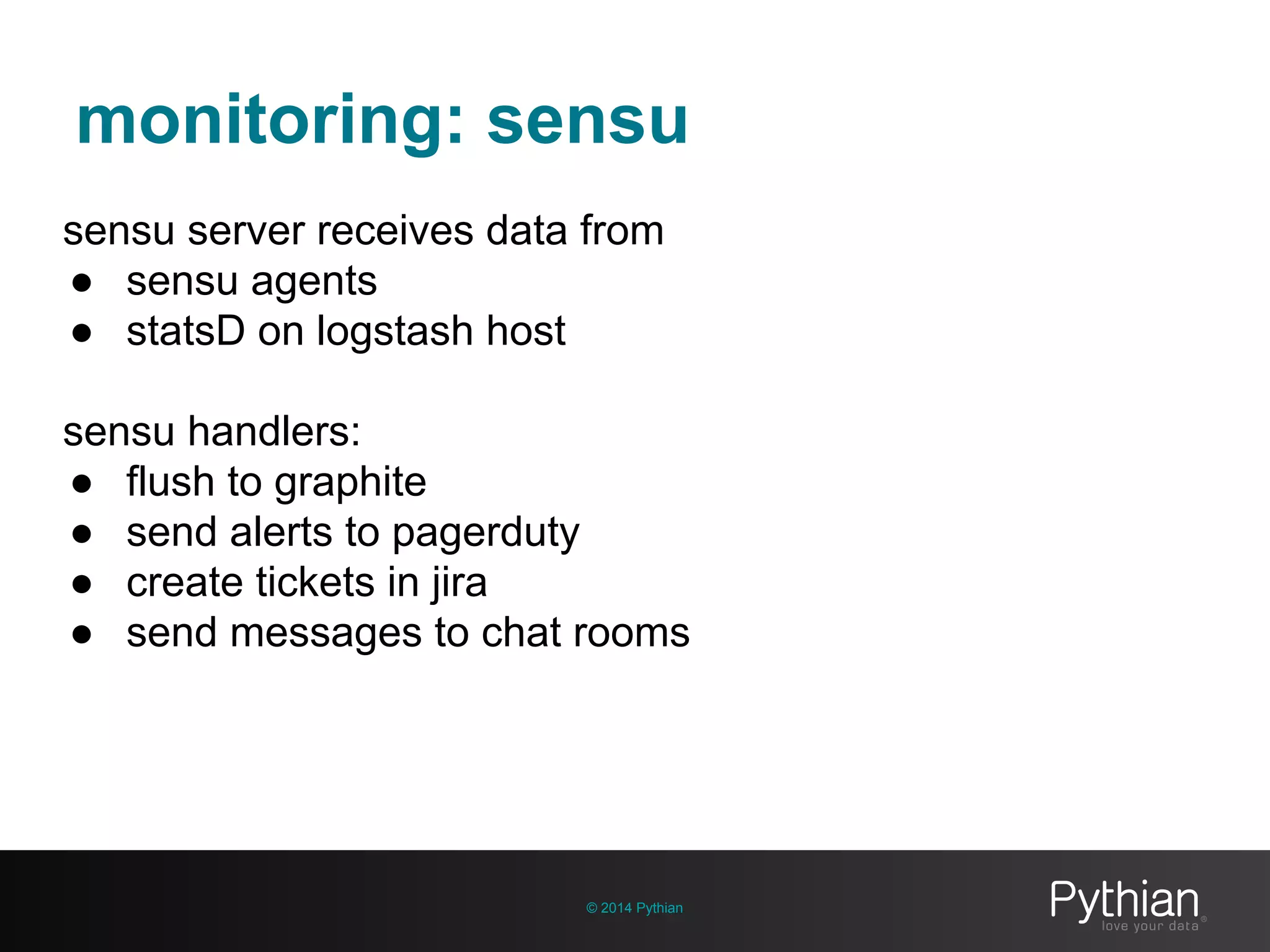

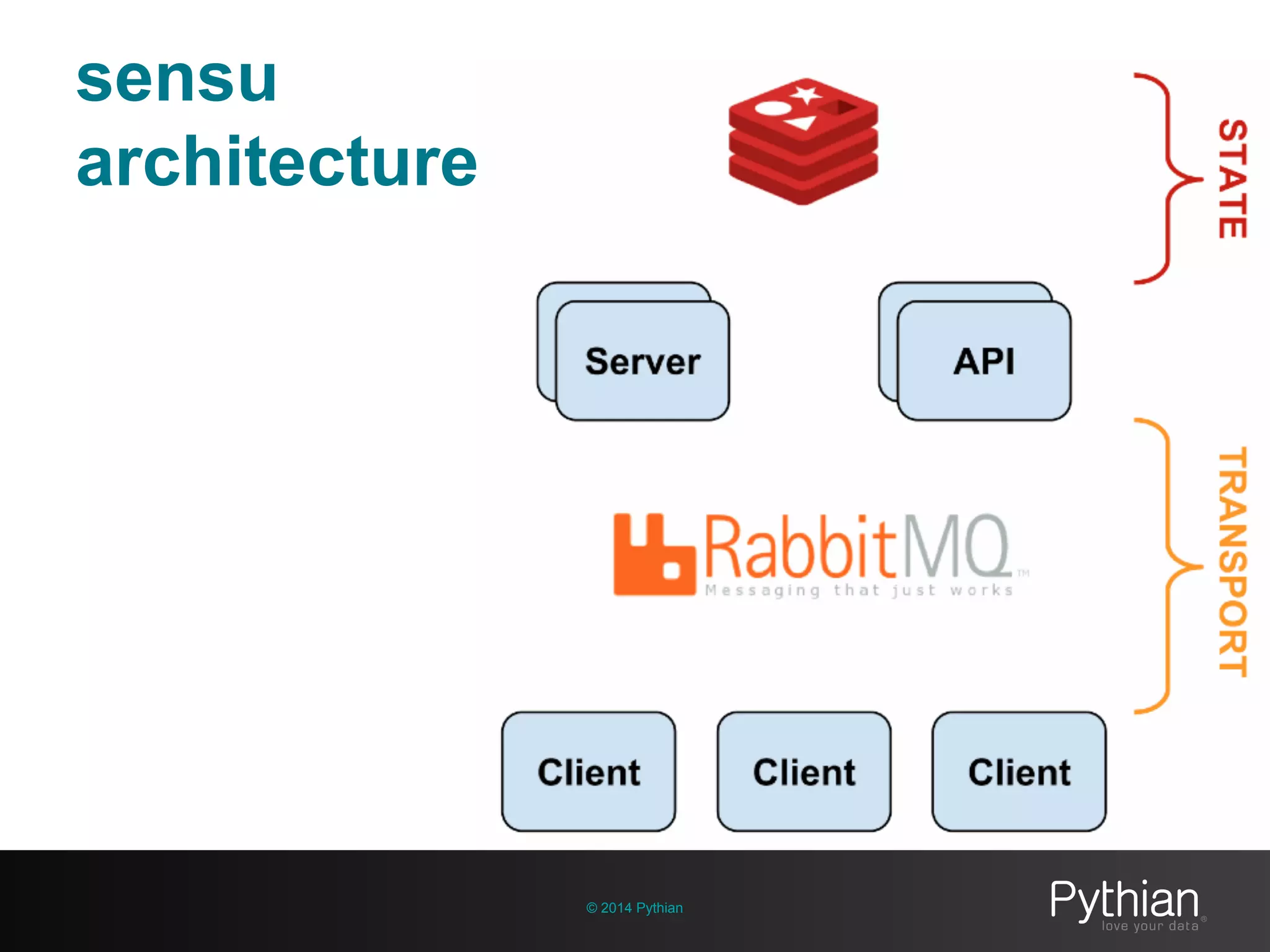

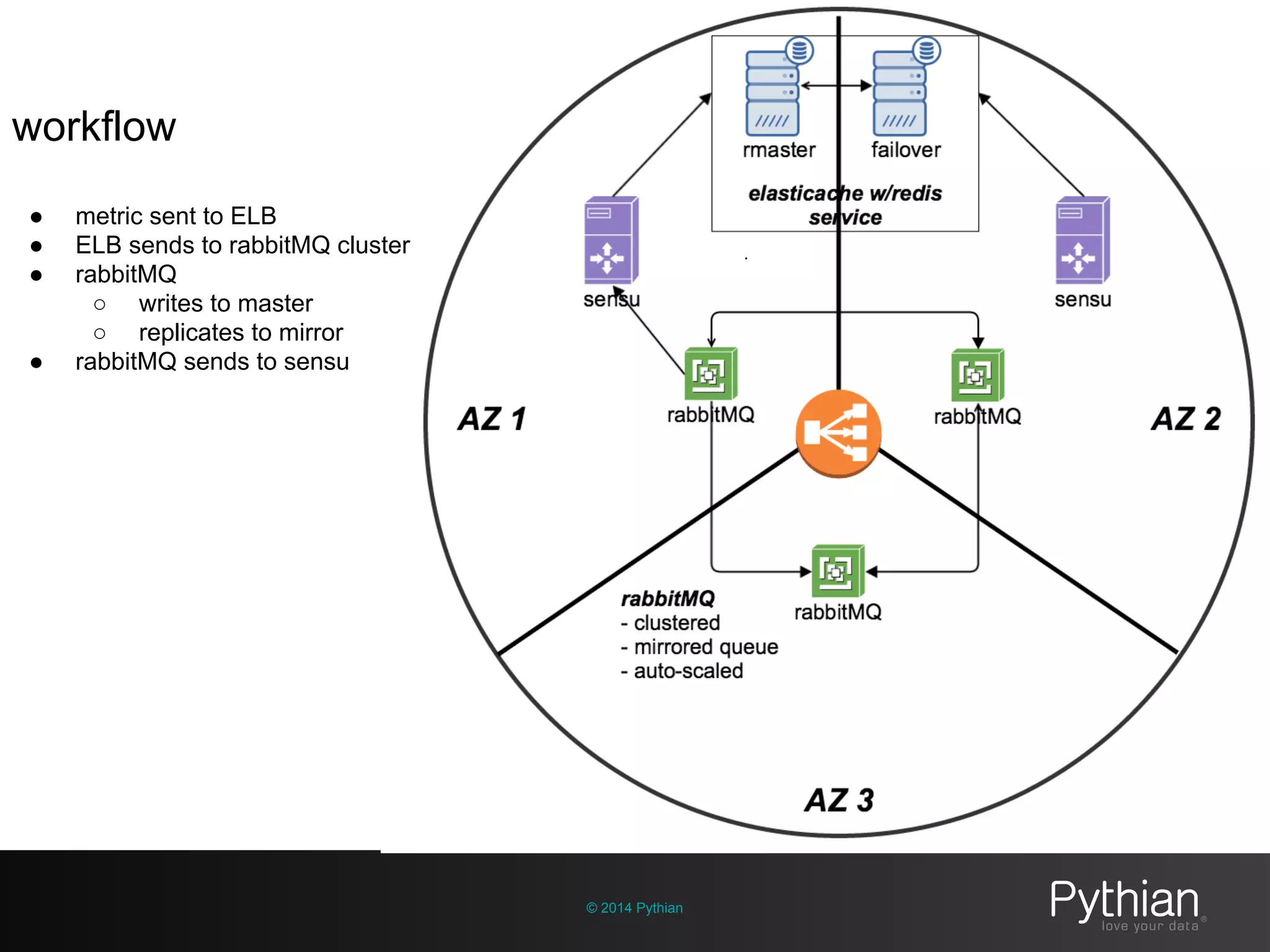

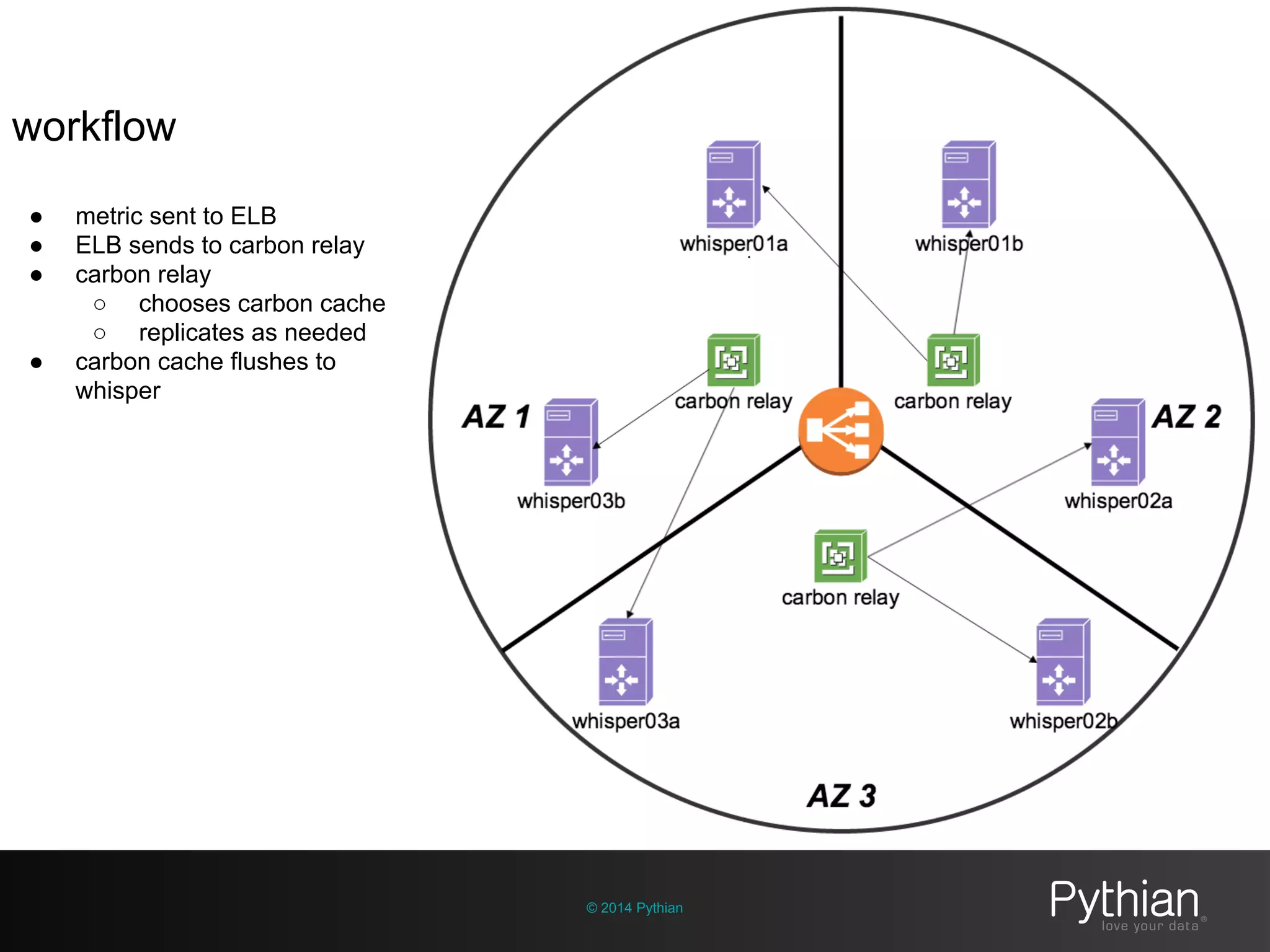

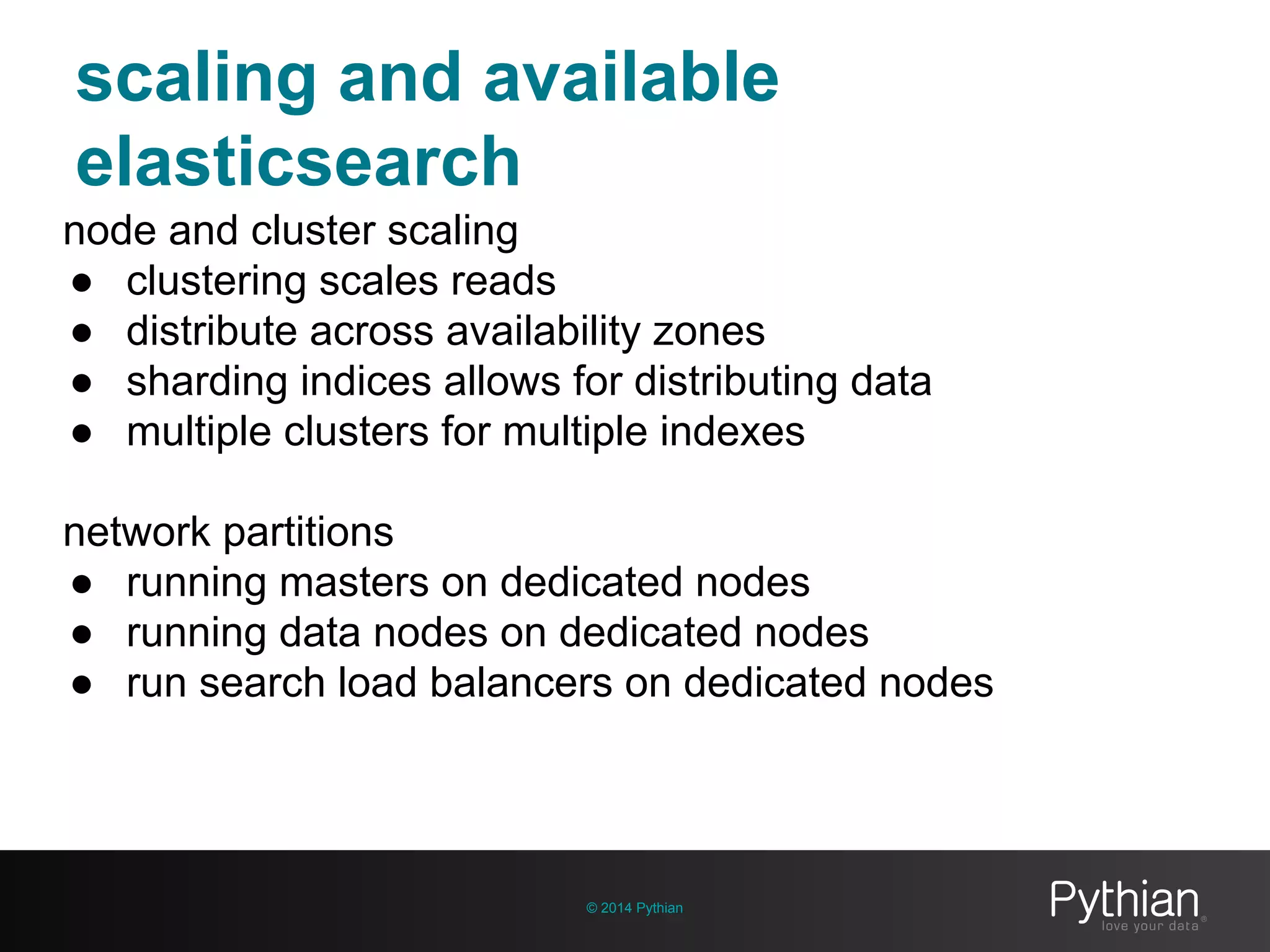

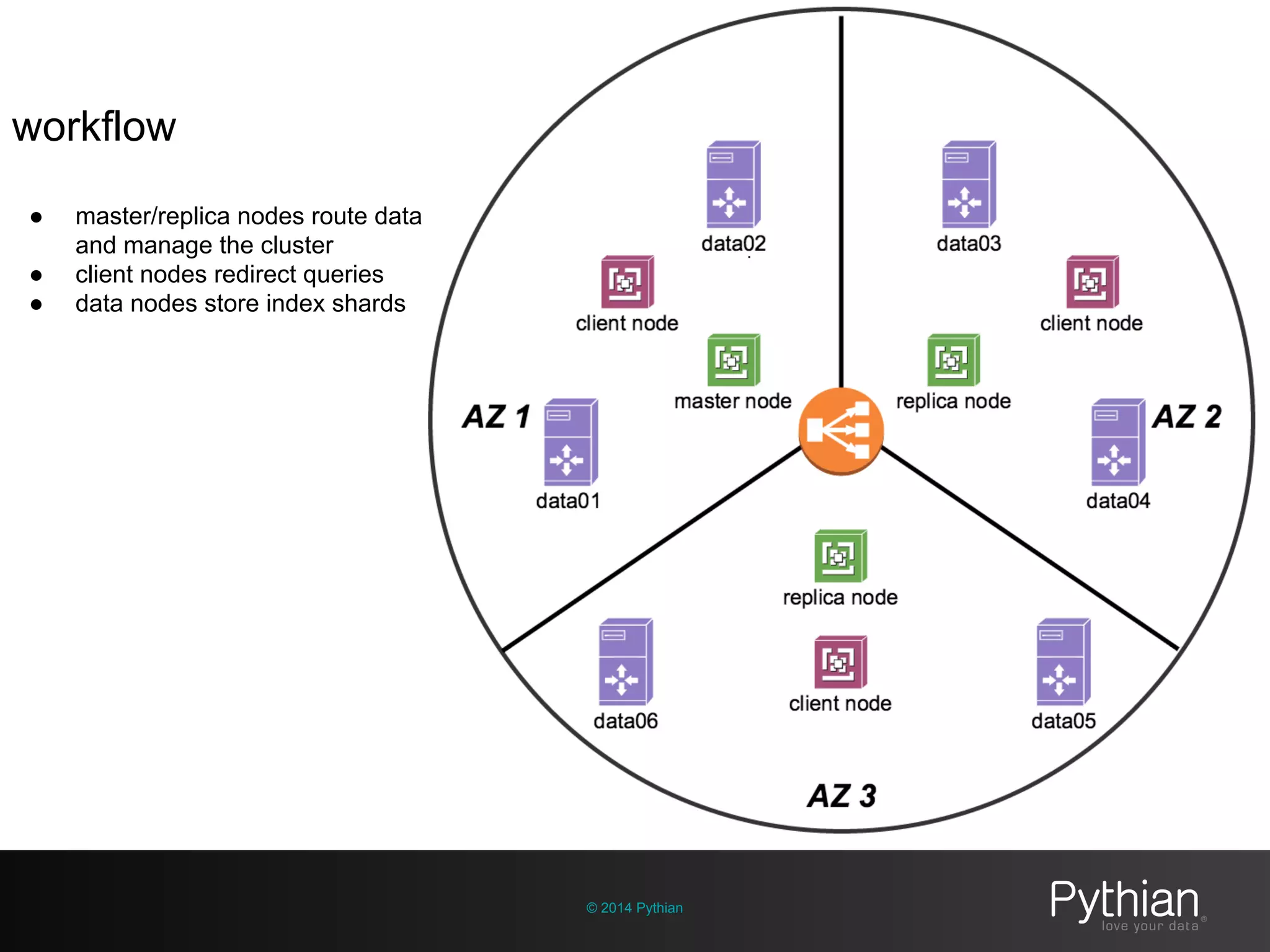

The document discusses the principles of operational visibility and observability in modern IT environments, emphasizing continuous improvement and stakeholder engagement. It outlines Pythian's approach to infrastructure management, detailing metrics for business velocity, availability, efficiency, and scalability, and identifies the limitations of traditional monitoring methods. It focuses on integrating telemetry data, improving data storage, and applying machine learning to predictive analysis and anomaly detection.