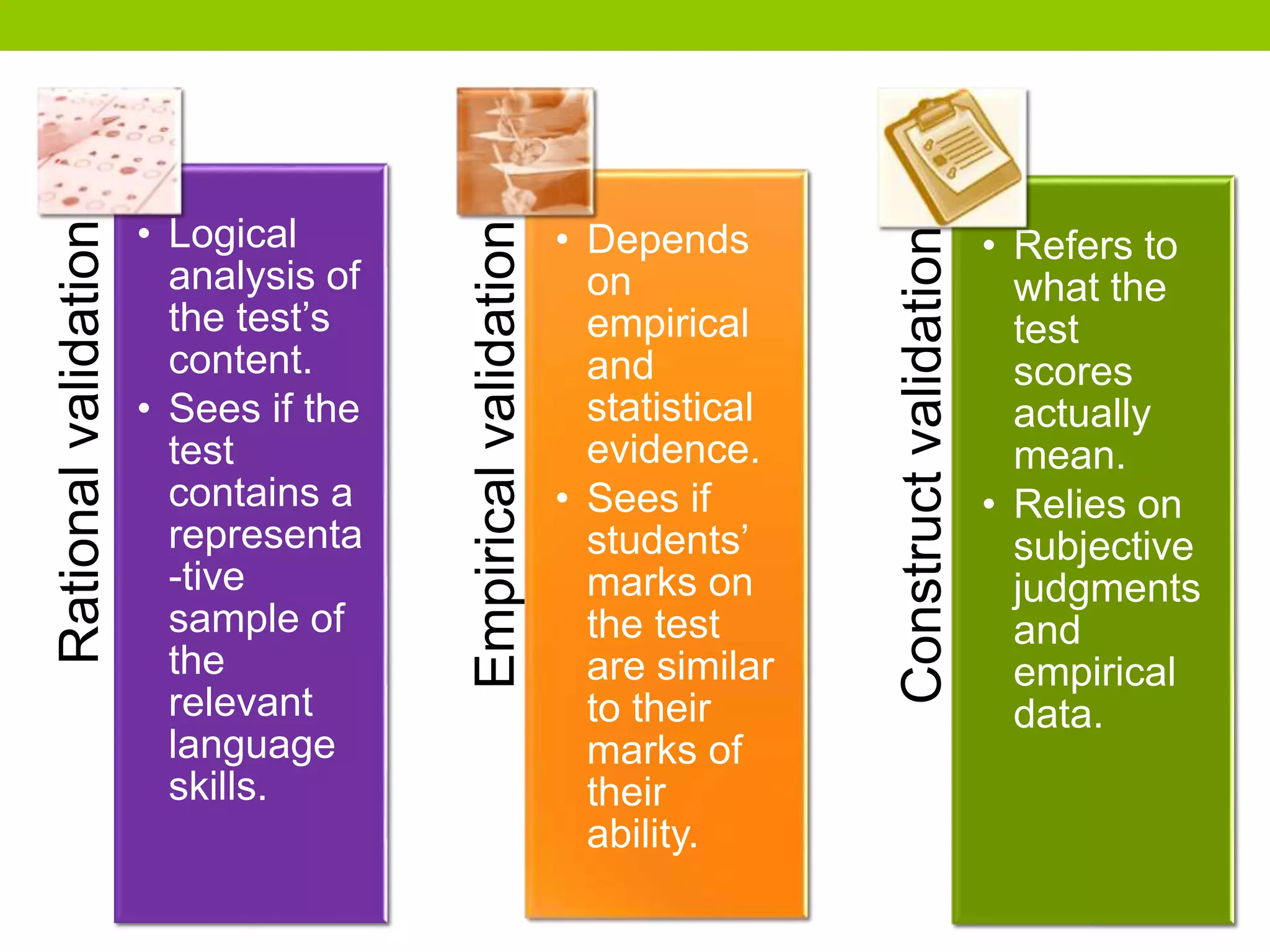

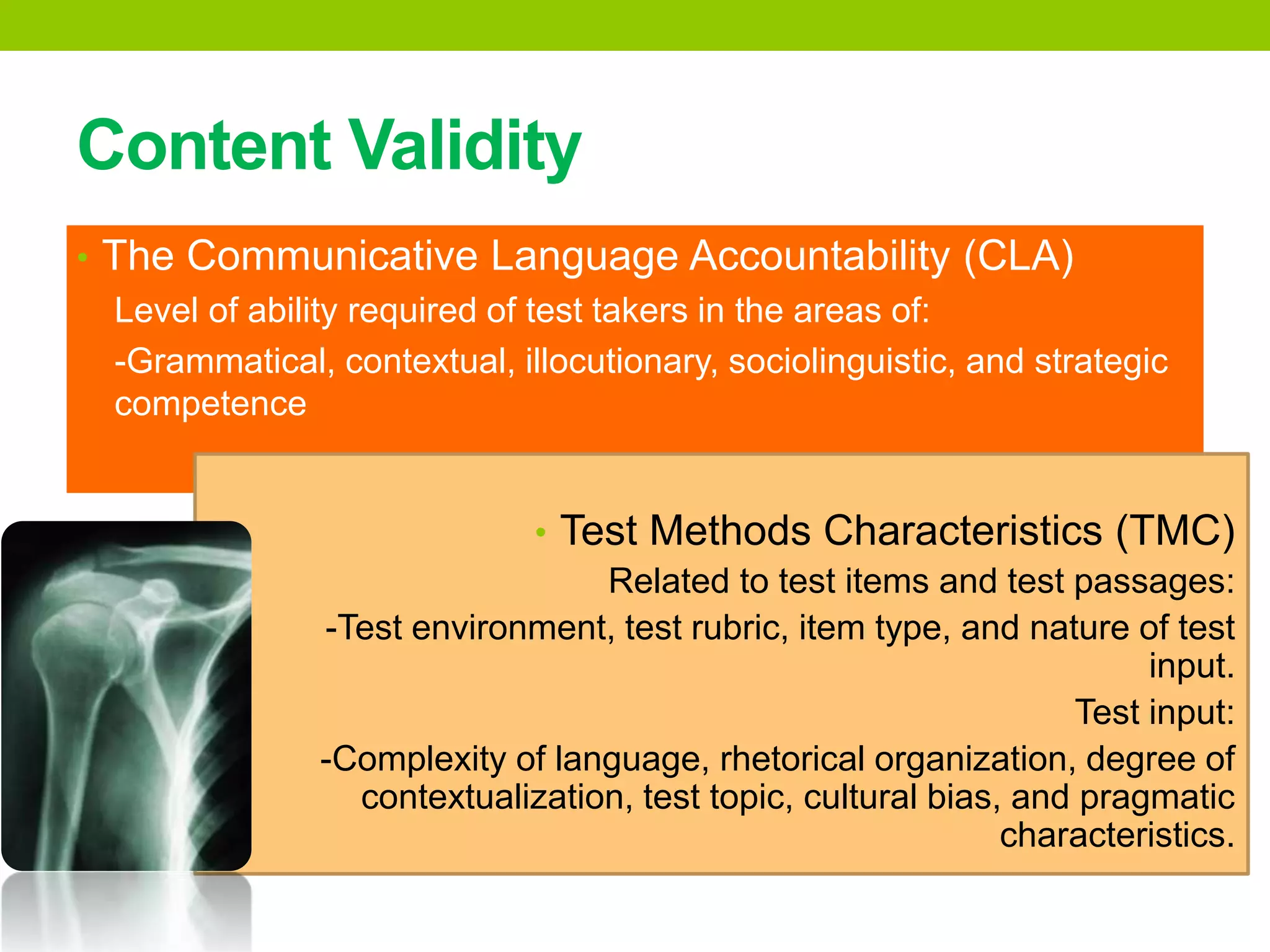

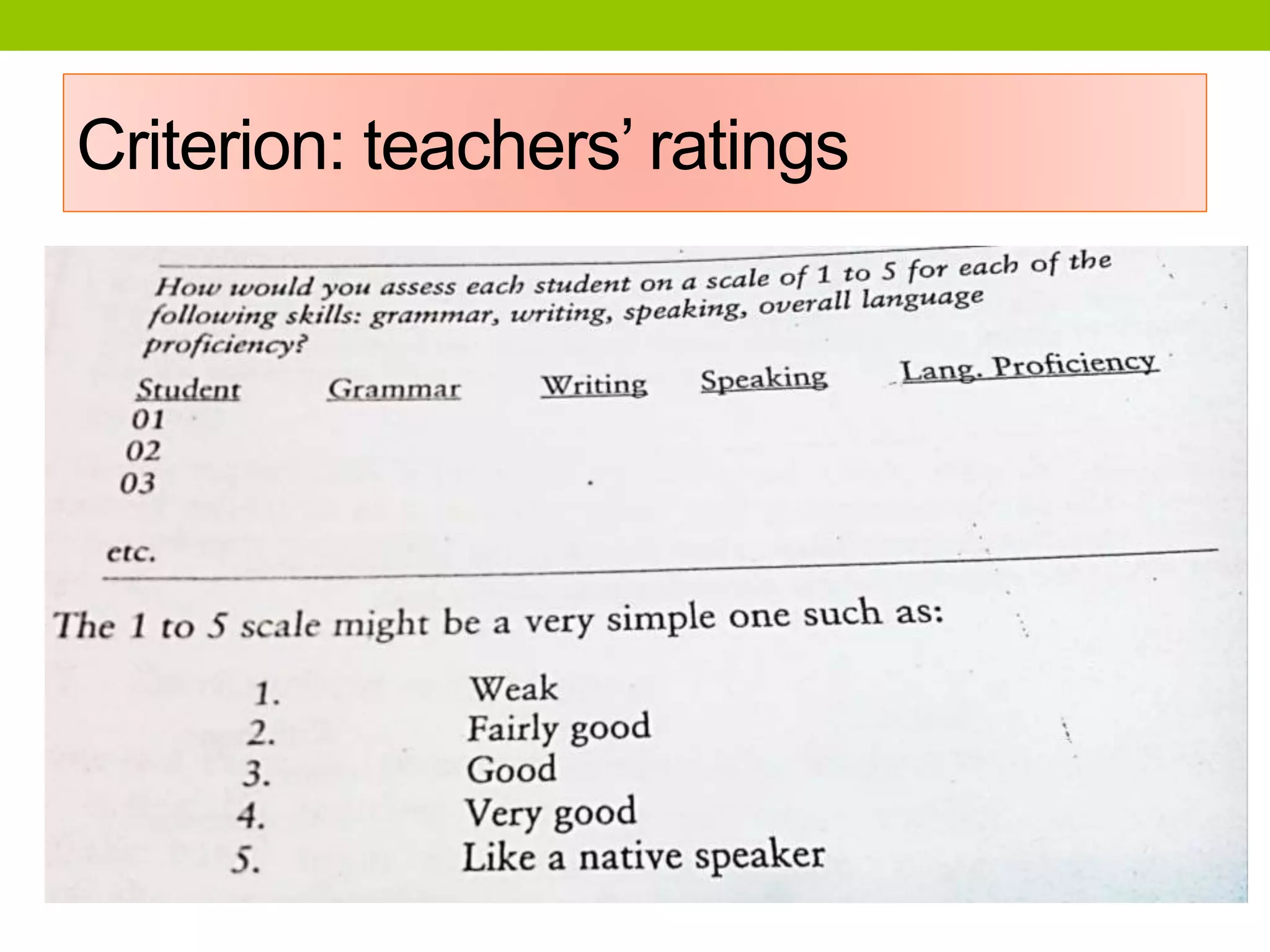

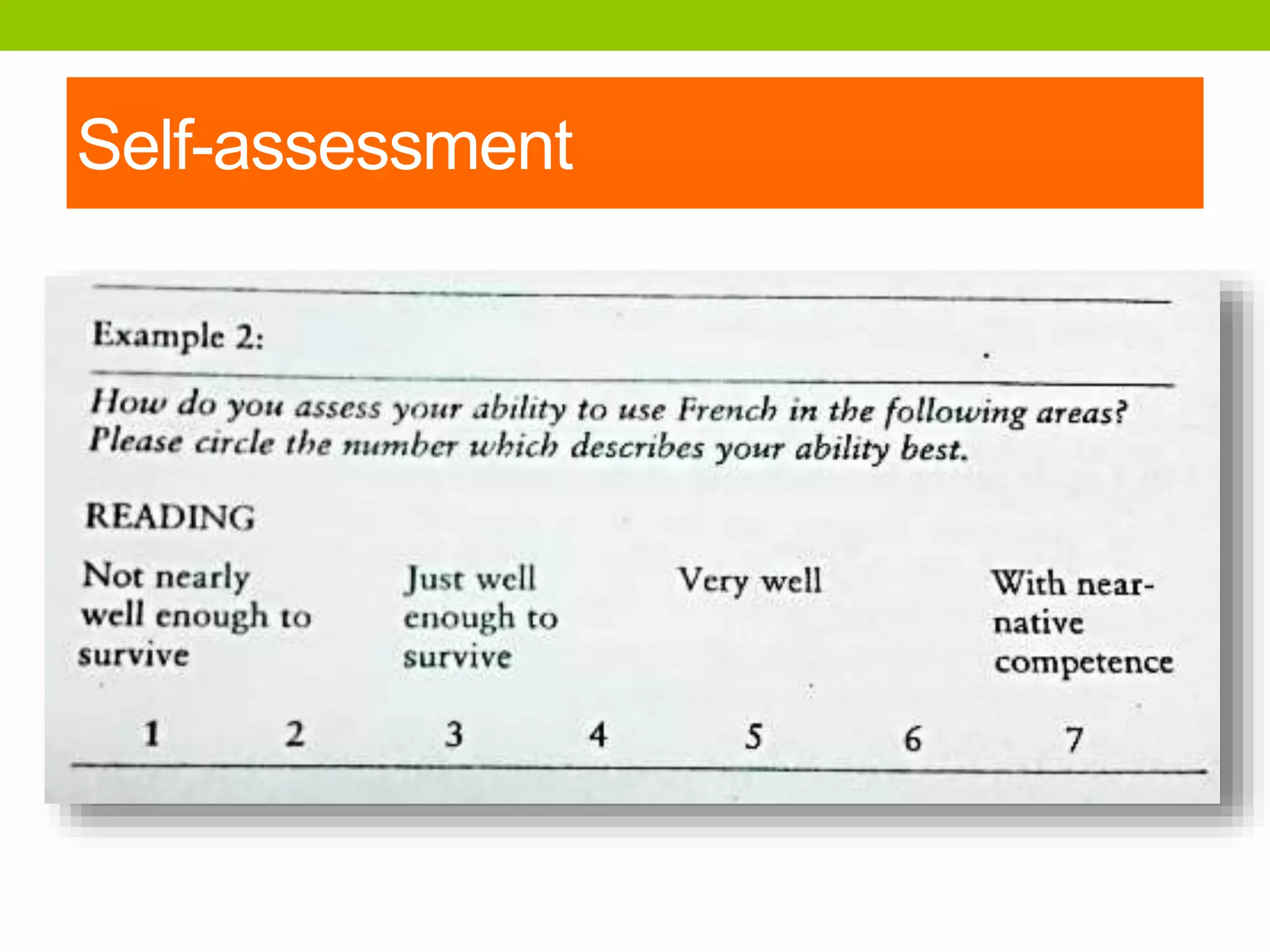

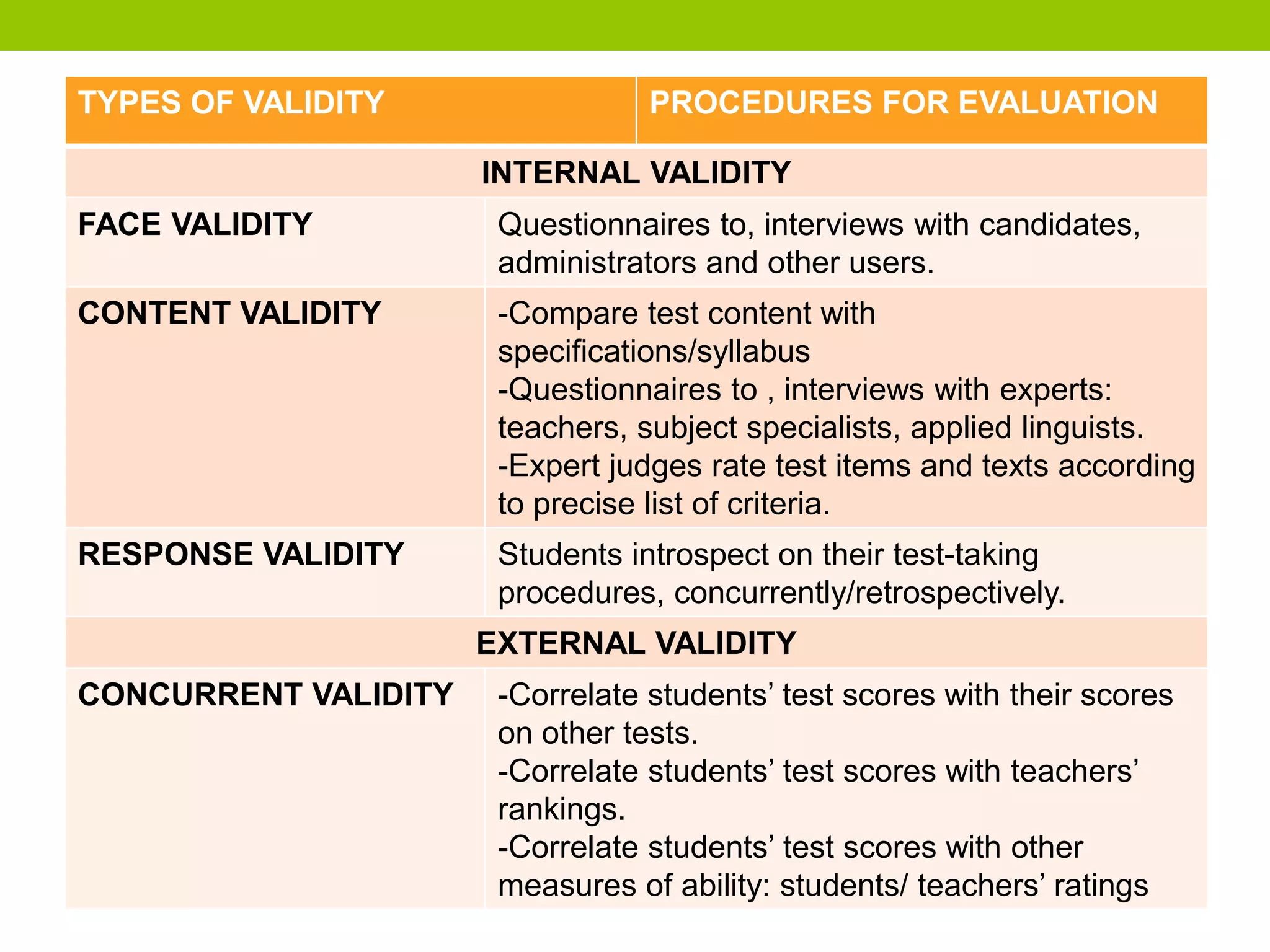

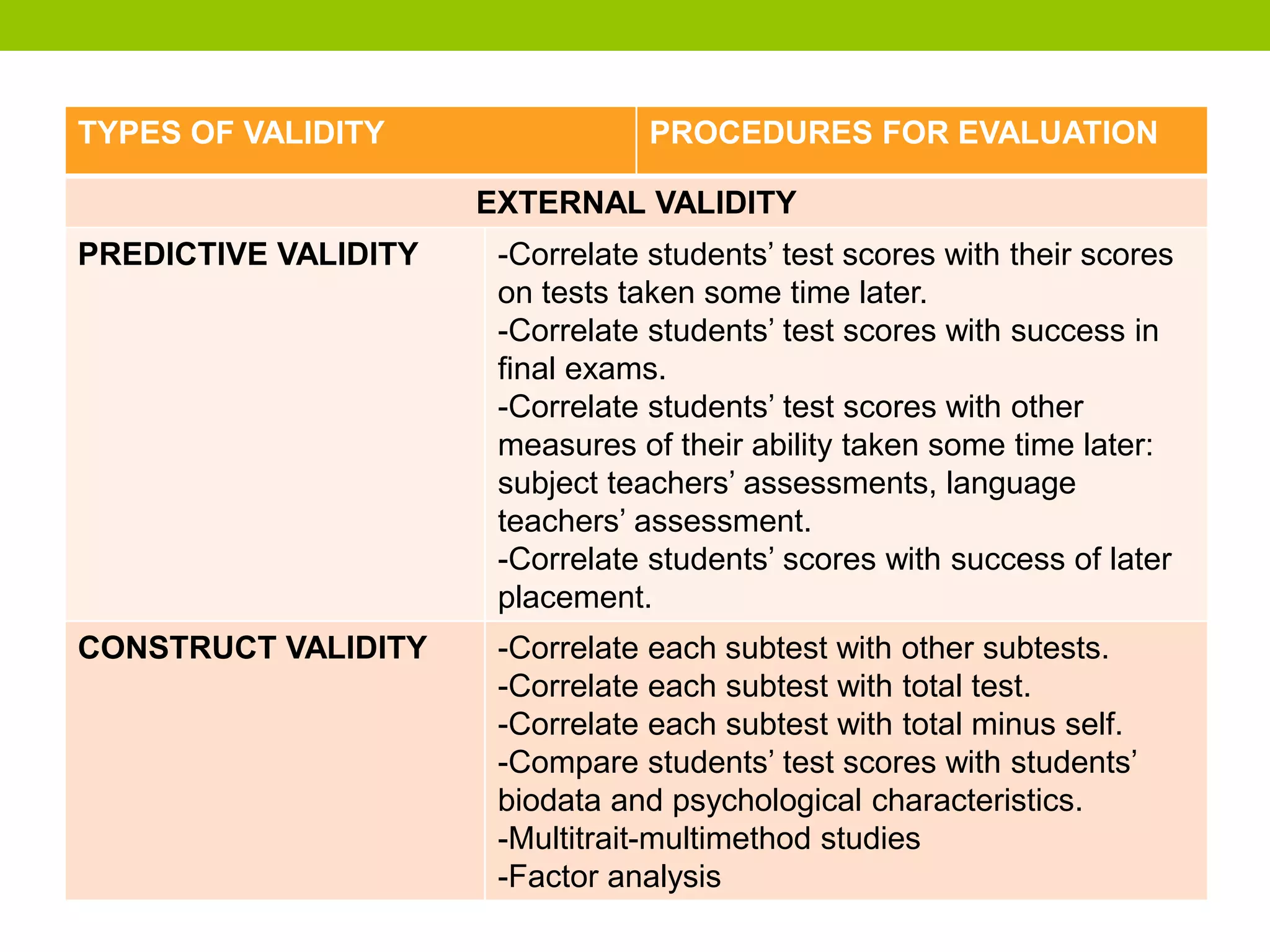

This document discusses test validity and the main types of validity evidence. It defines validity as the extent to which a test measures what it is designed to measure. Validity evidence may be rational, empirical, or construct-related. Internal validity concerns a test's content and effect, while external validity compares test scores with outside criteria. Construct validity establishes what test scores represent by comparing them with other data about the test takers. Gathering multiple forms of validity evidence supports the claim that a test accurately measures the intended construct. Reliability is necessary for validity, but a test can be reliable without being valid.
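
The distinction between reliability and validity can be illustrated with a small numerical sketch. It assumes, as one common convention not stated in the document itself, that reliability is estimated by correlating two administrations of the same test and that external validity evidence is estimated by correlating test scores with an outside criterion. All scores and variable names below are invented for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical scores for six test takers (made-up data).
first_administration = [10, 12, 14, 16, 18, 20]
second_administration = [11, 12, 15, 15, 19, 20]
# Hypothetical outside criterion the test is supposed to predict.
outside_criterion = [3, 1, 2, 3, 1, 2]

# Test-retest correlation: do the scores agree with themselves over time?
reliability = pearson(first_administration, second_administration)
# Criterion correlation: do the scores agree with the outside measure?
criterion_validity = pearson(first_administration, outside_criterion)

print(f"reliability estimate: {reliability:.2f}")
print(f"criterion validity estimate: {criterion_validity:.2f}")
```

In this made-up data the two administrations agree closely while the scores show almost no relation to the criterion, which is the pattern of a test that is reliable without being valid.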