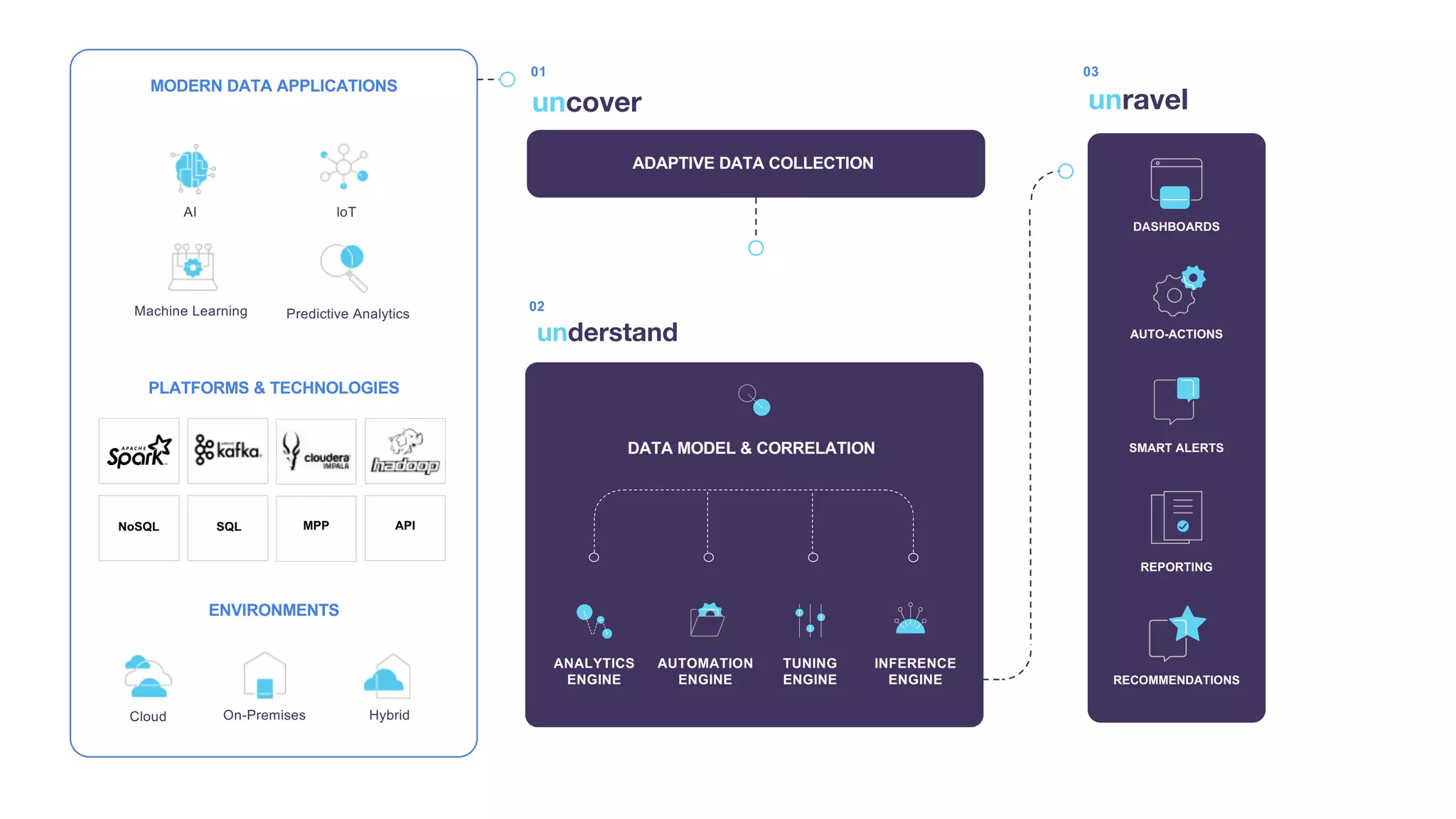

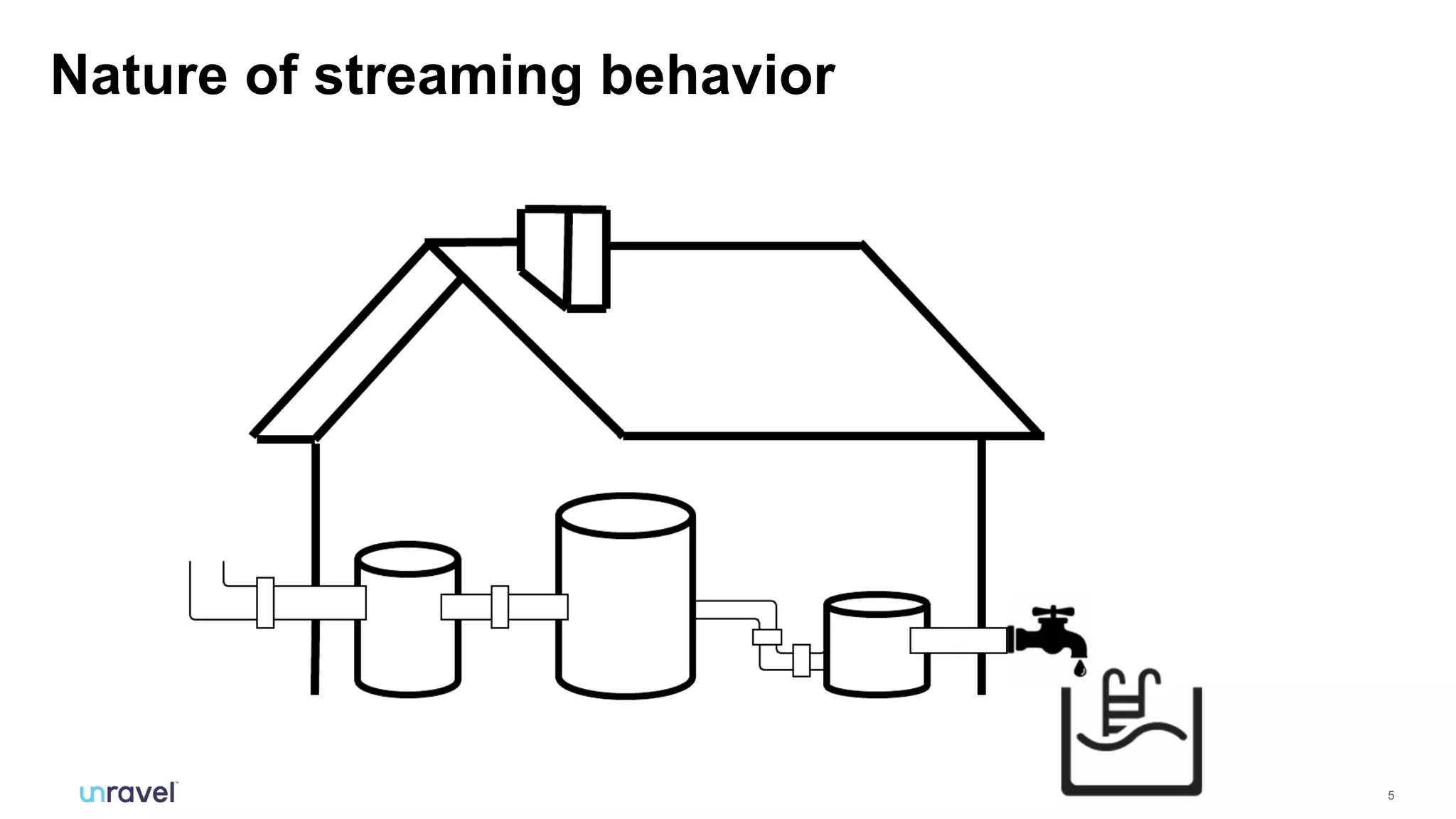

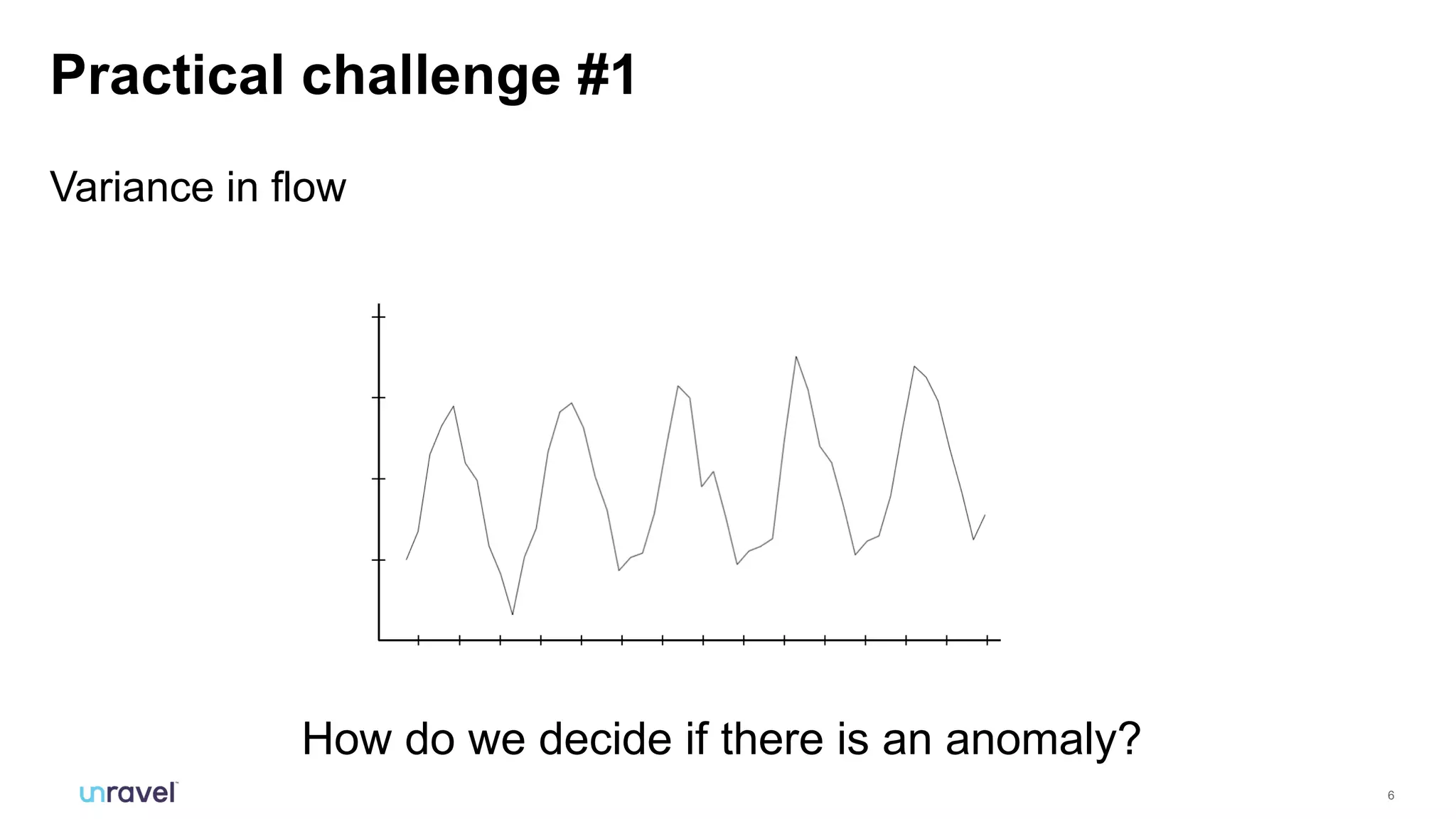

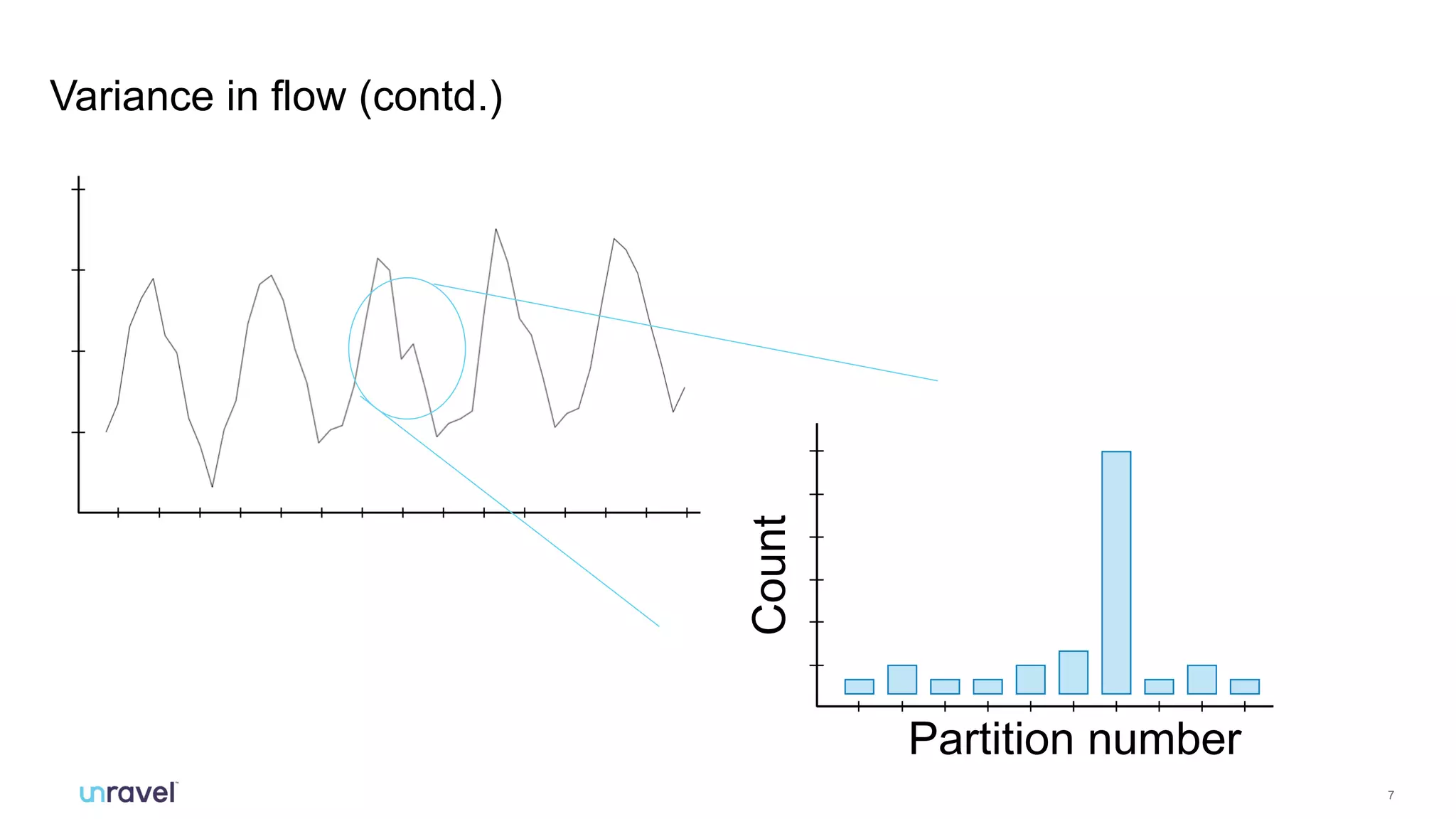

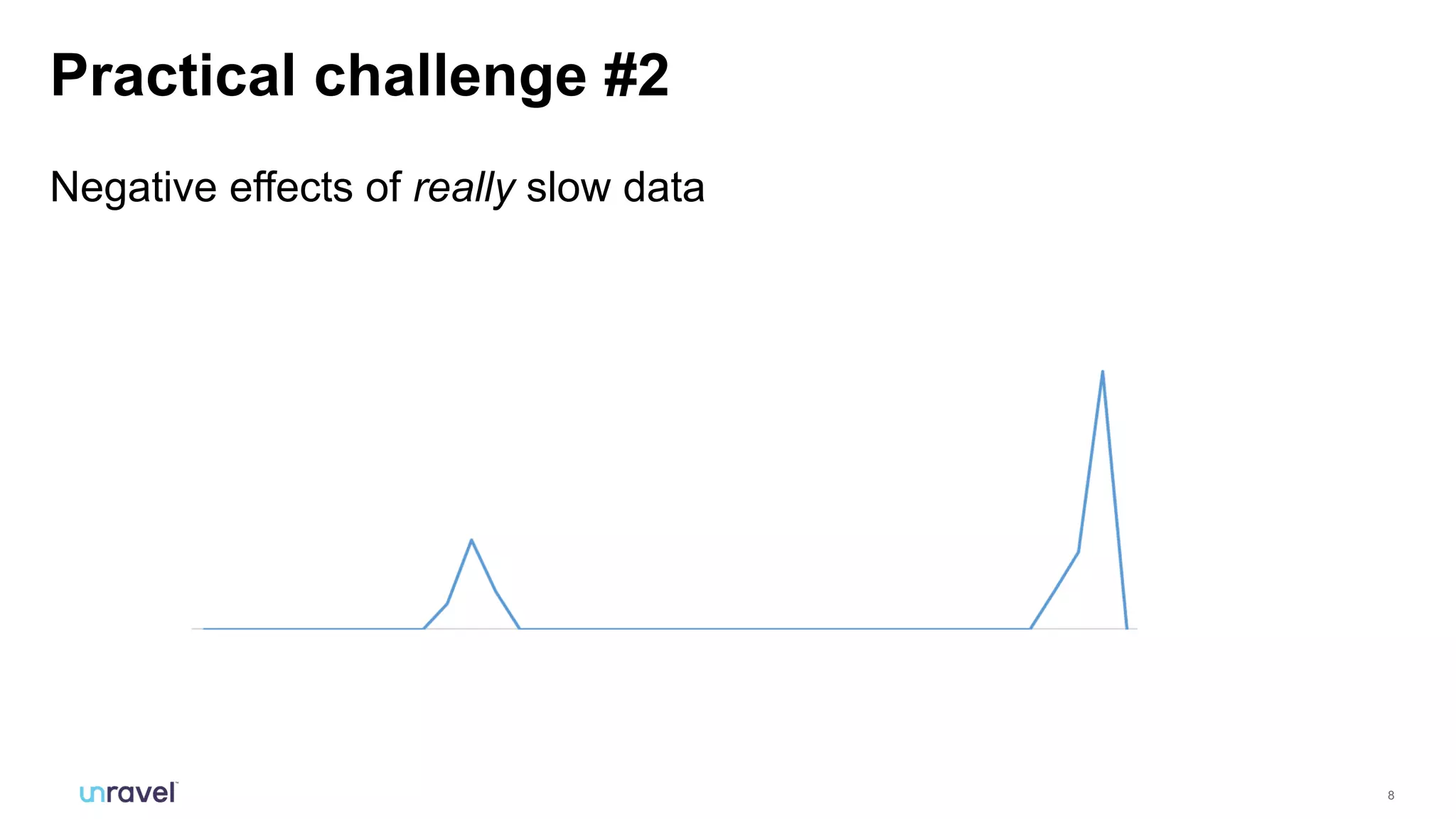

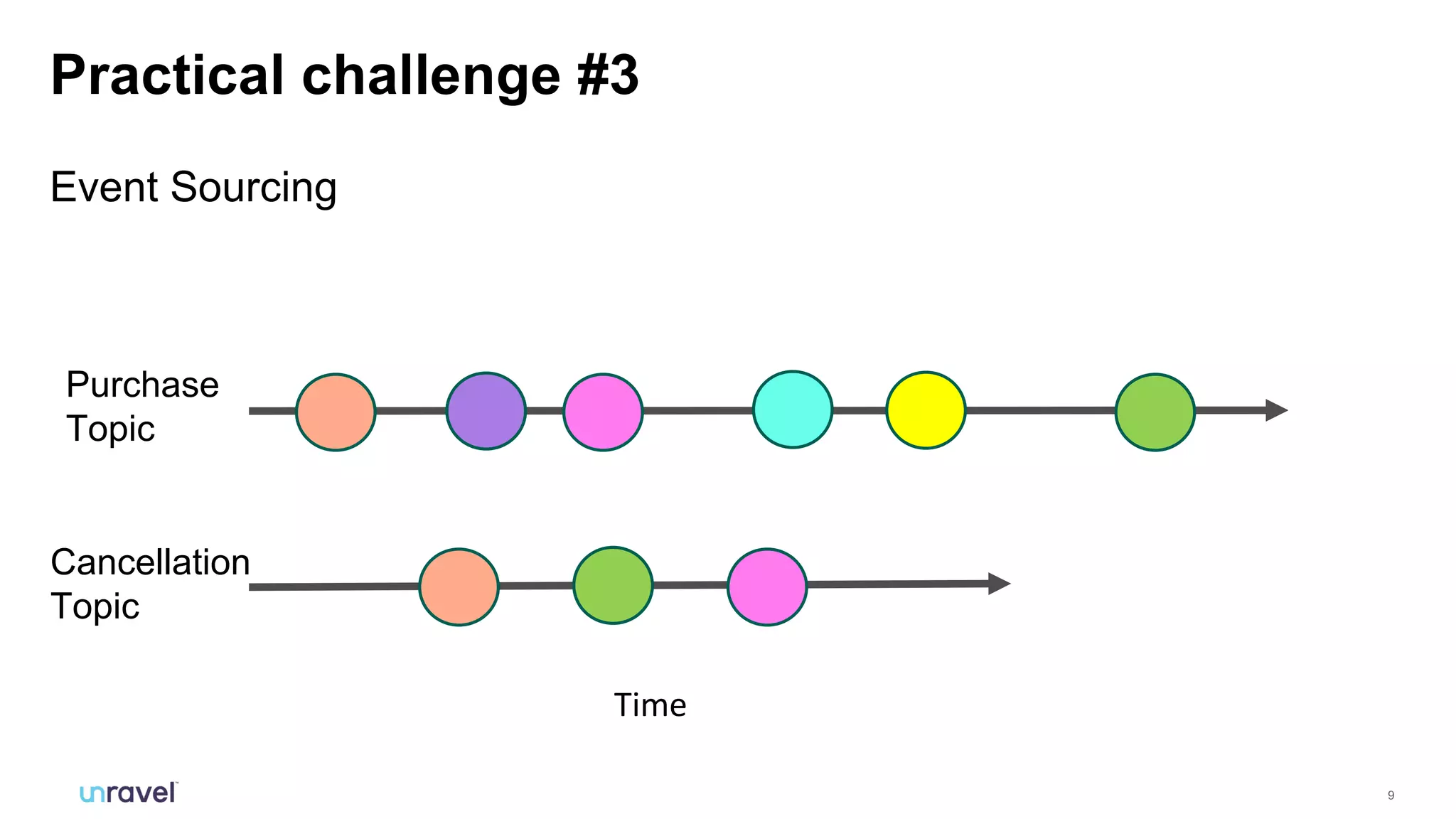

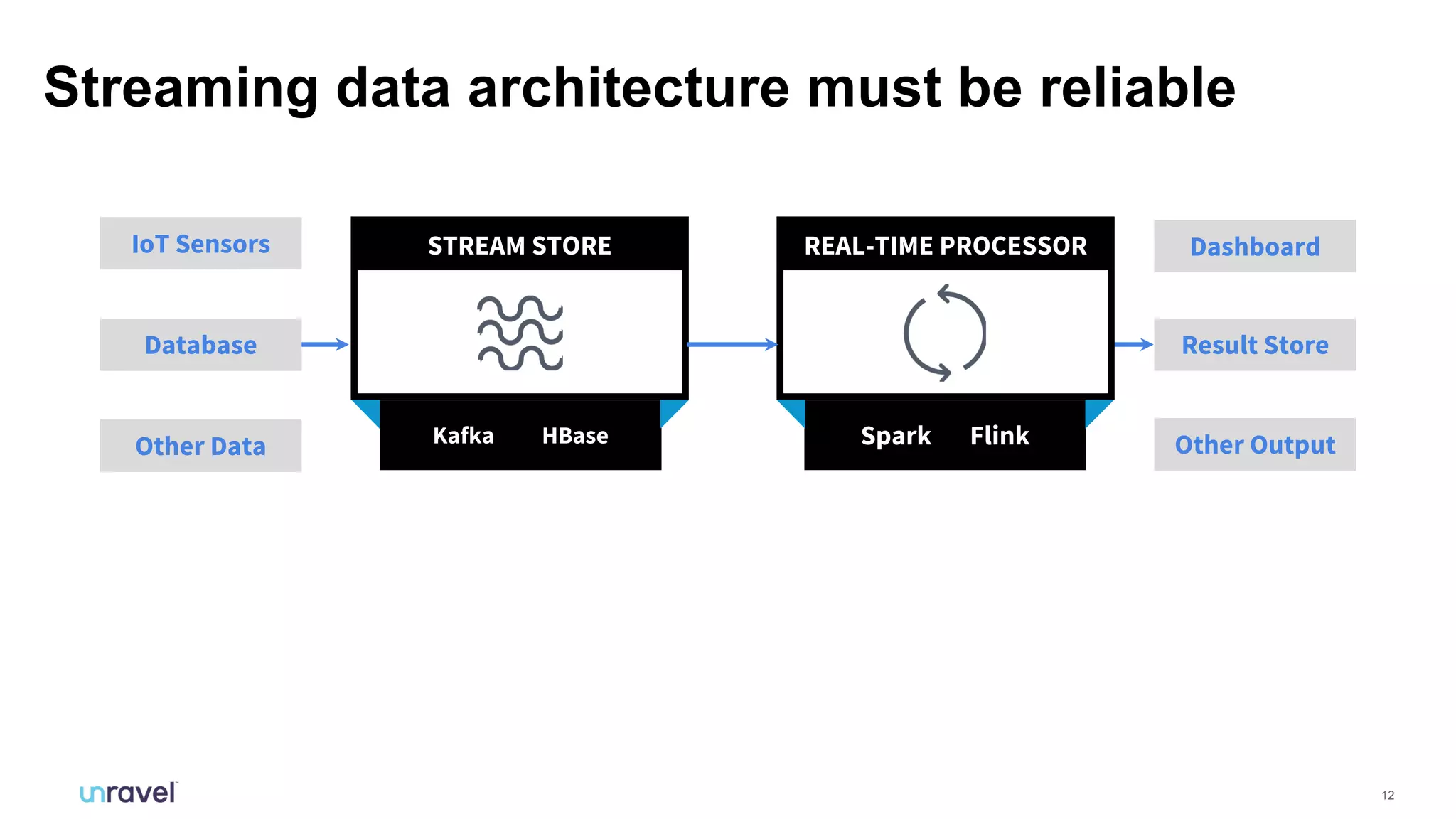

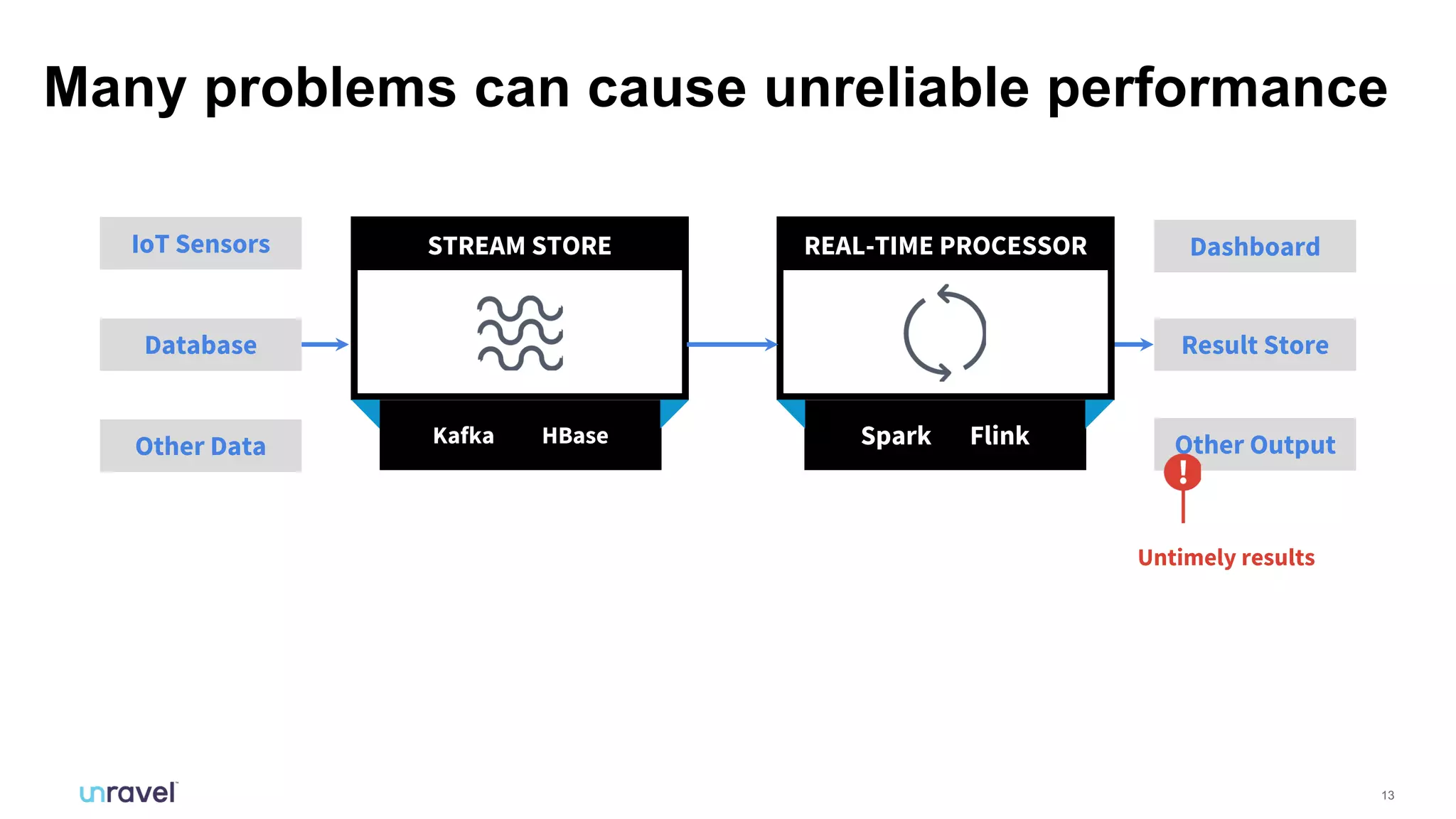

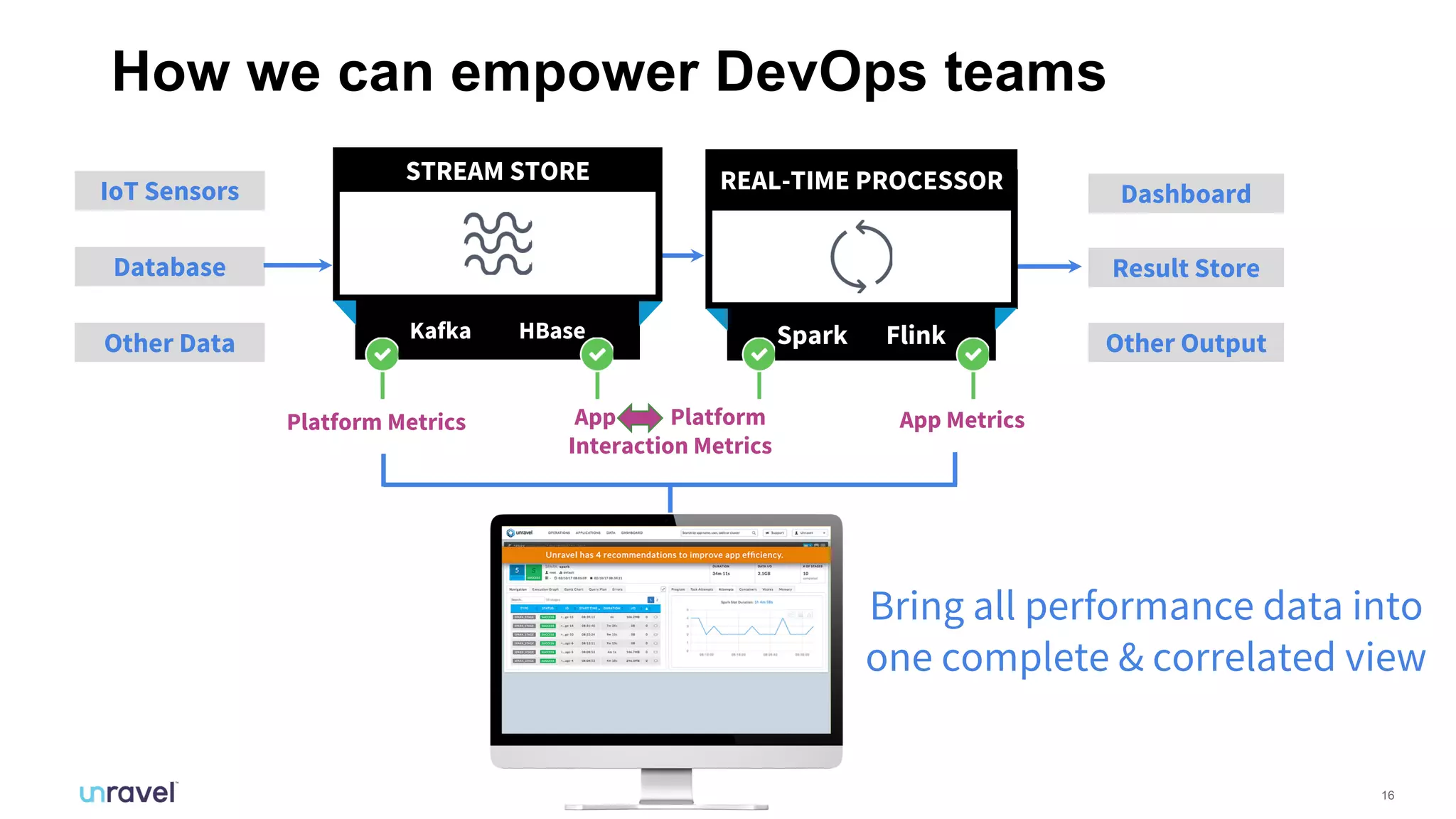

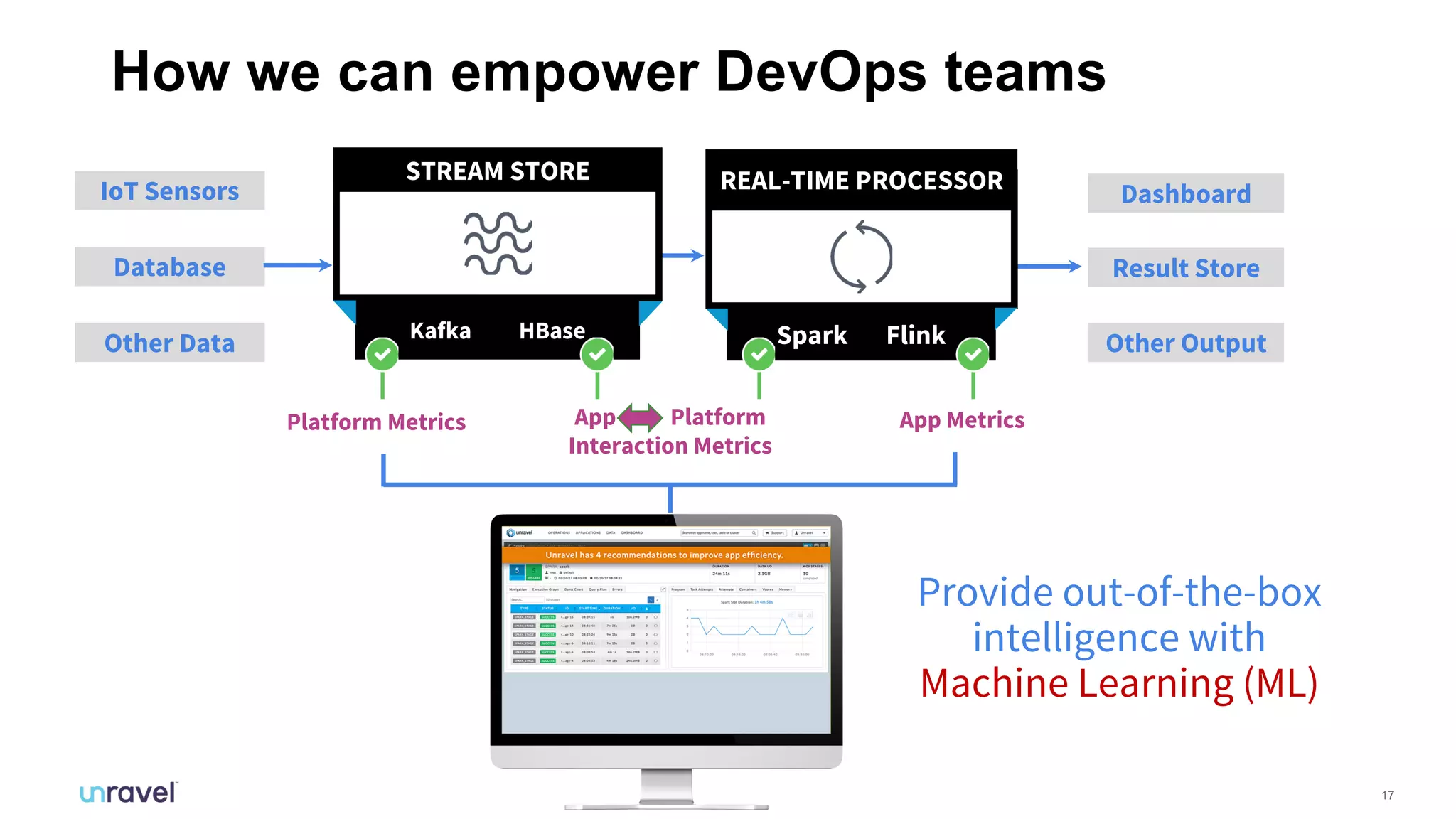

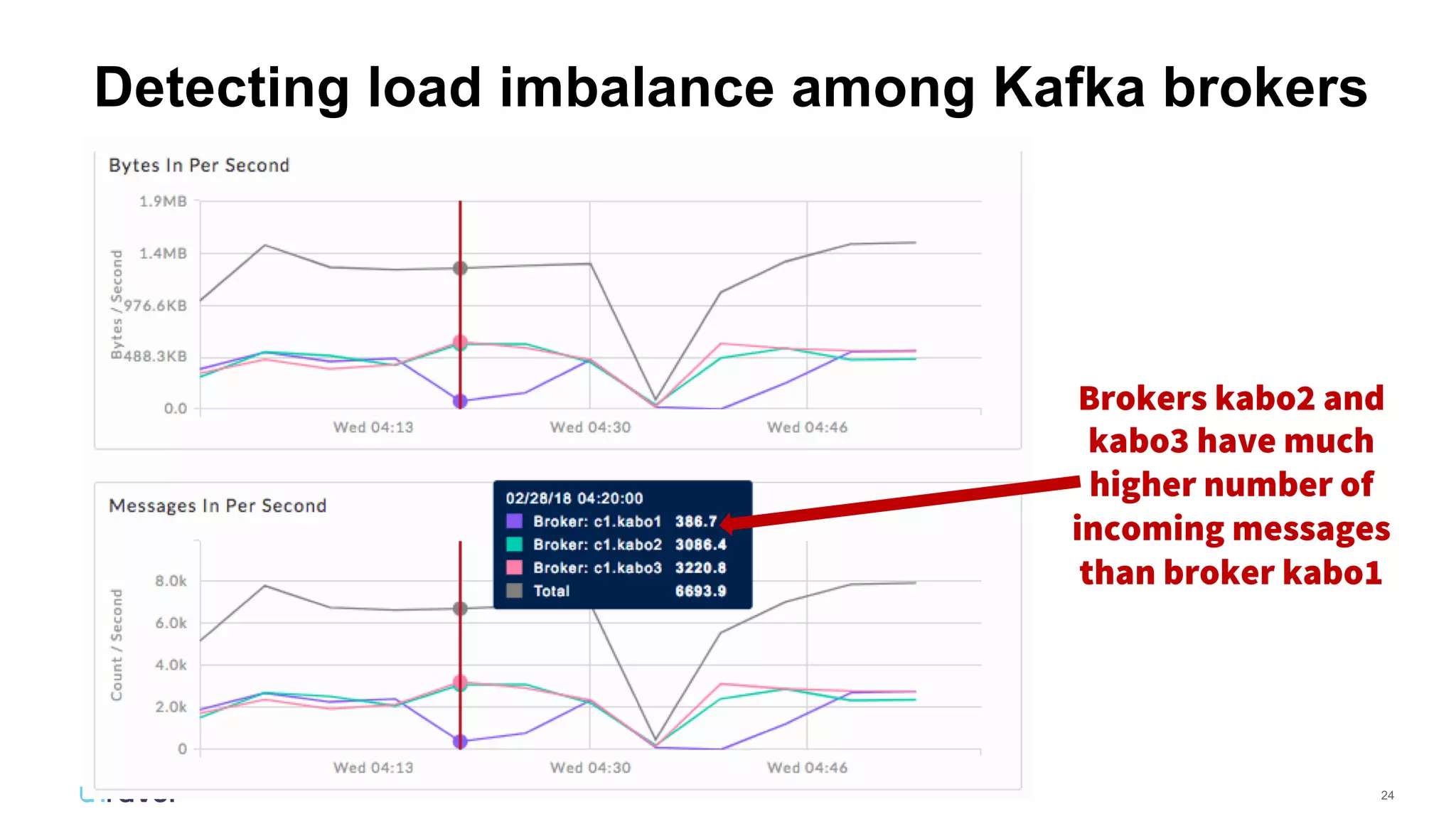

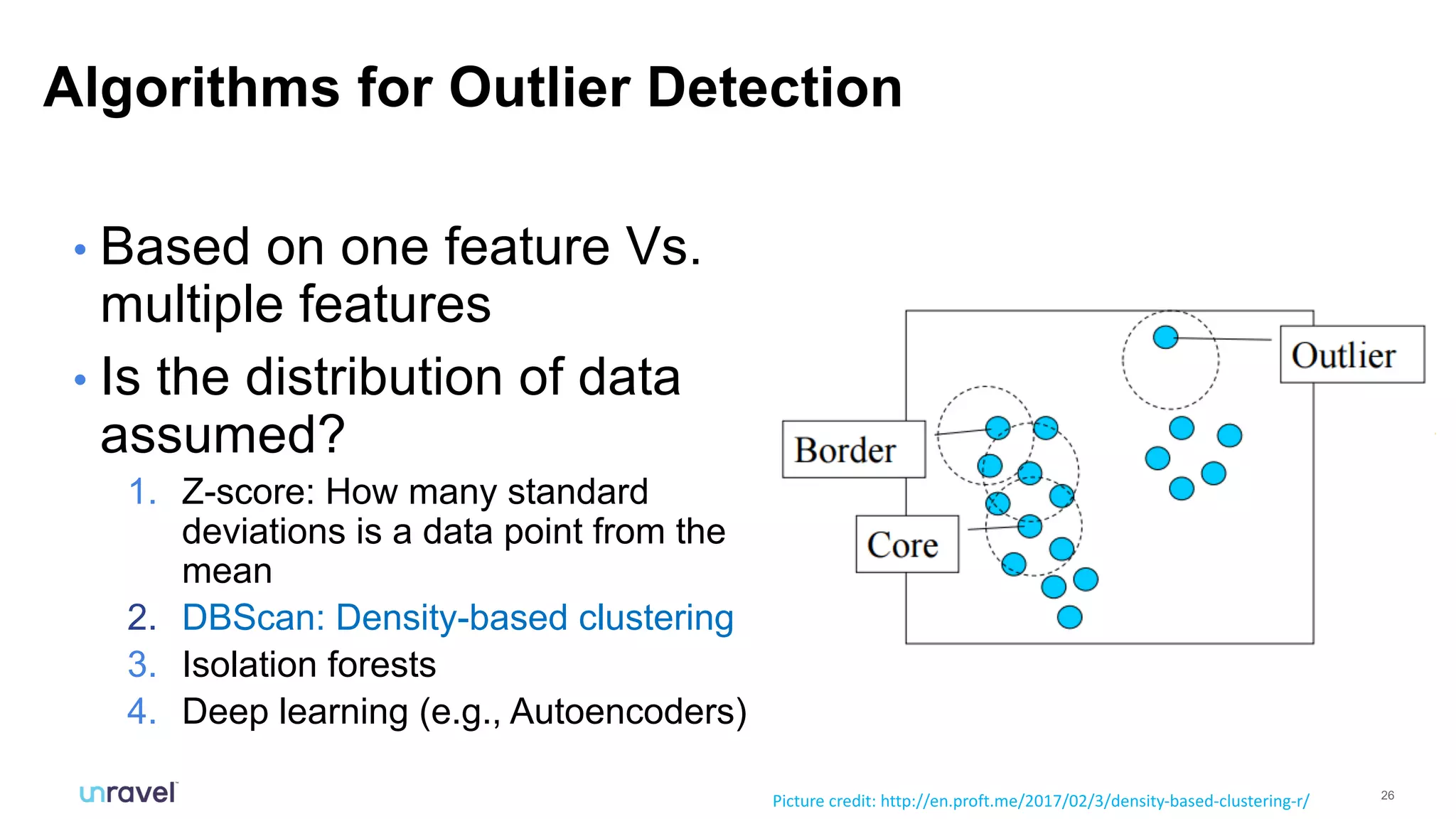

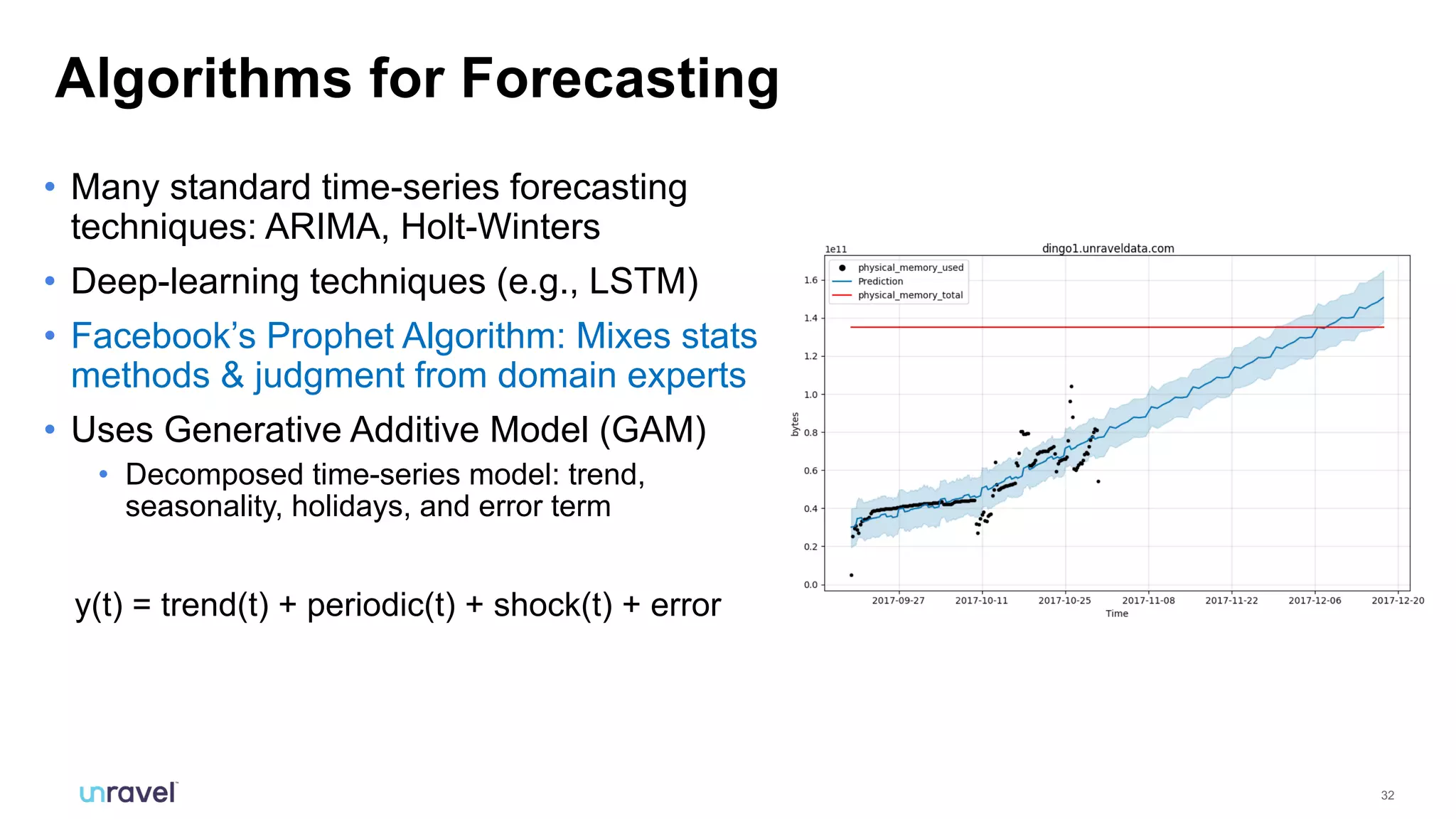

The document discusses how machine learning techniques can be applied to understand and improve the performance of Apache Kafka deployments in streaming data environments. It highlights challenges such as anomaly detection, flow variance, and resource allocation, and describes methodologies including outlier detection, forecasting, and correlation analysis. It also argues that automated intelligence solutions are needed so that DevOps teams can manage streaming applications efficiently.