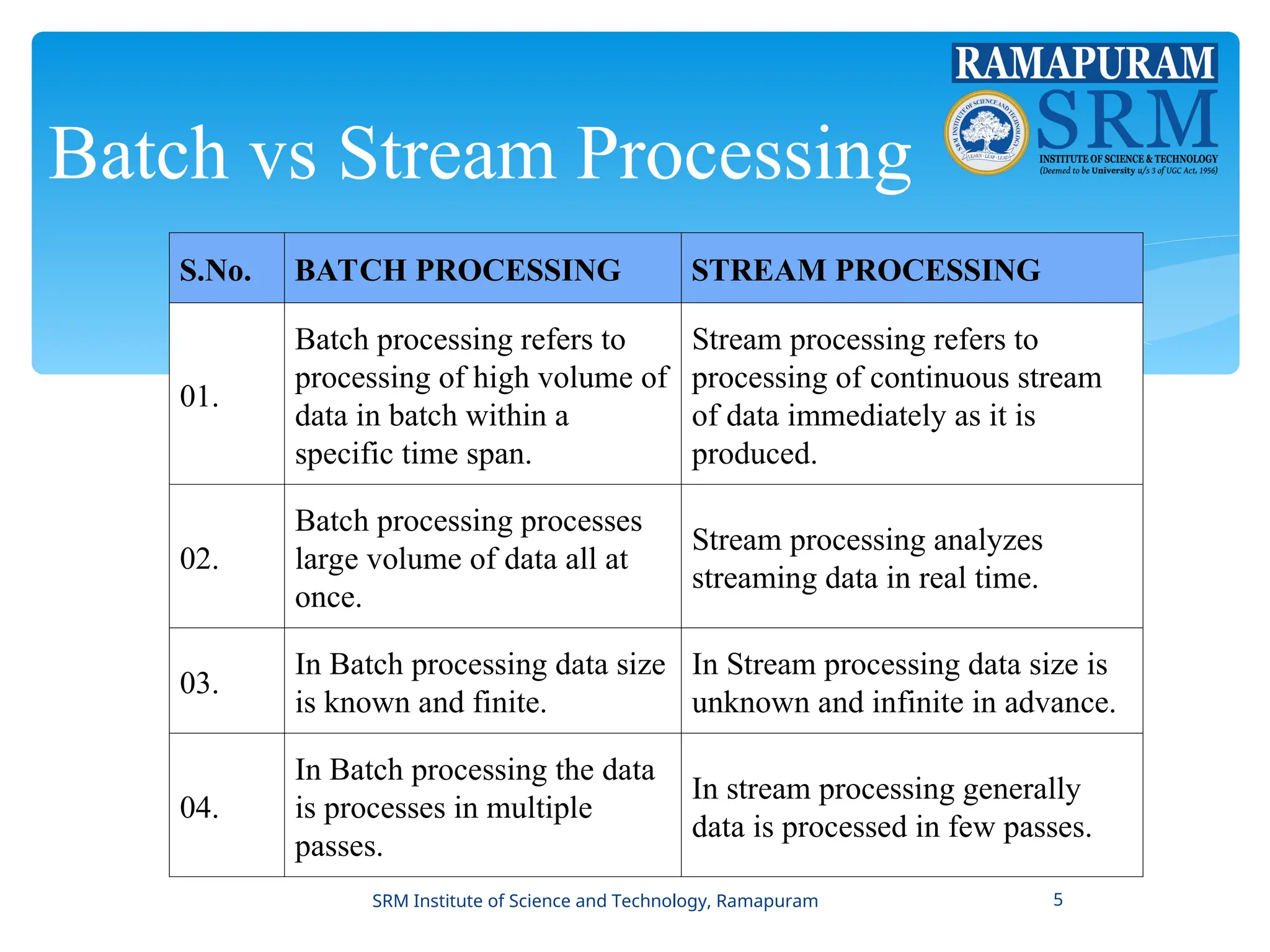

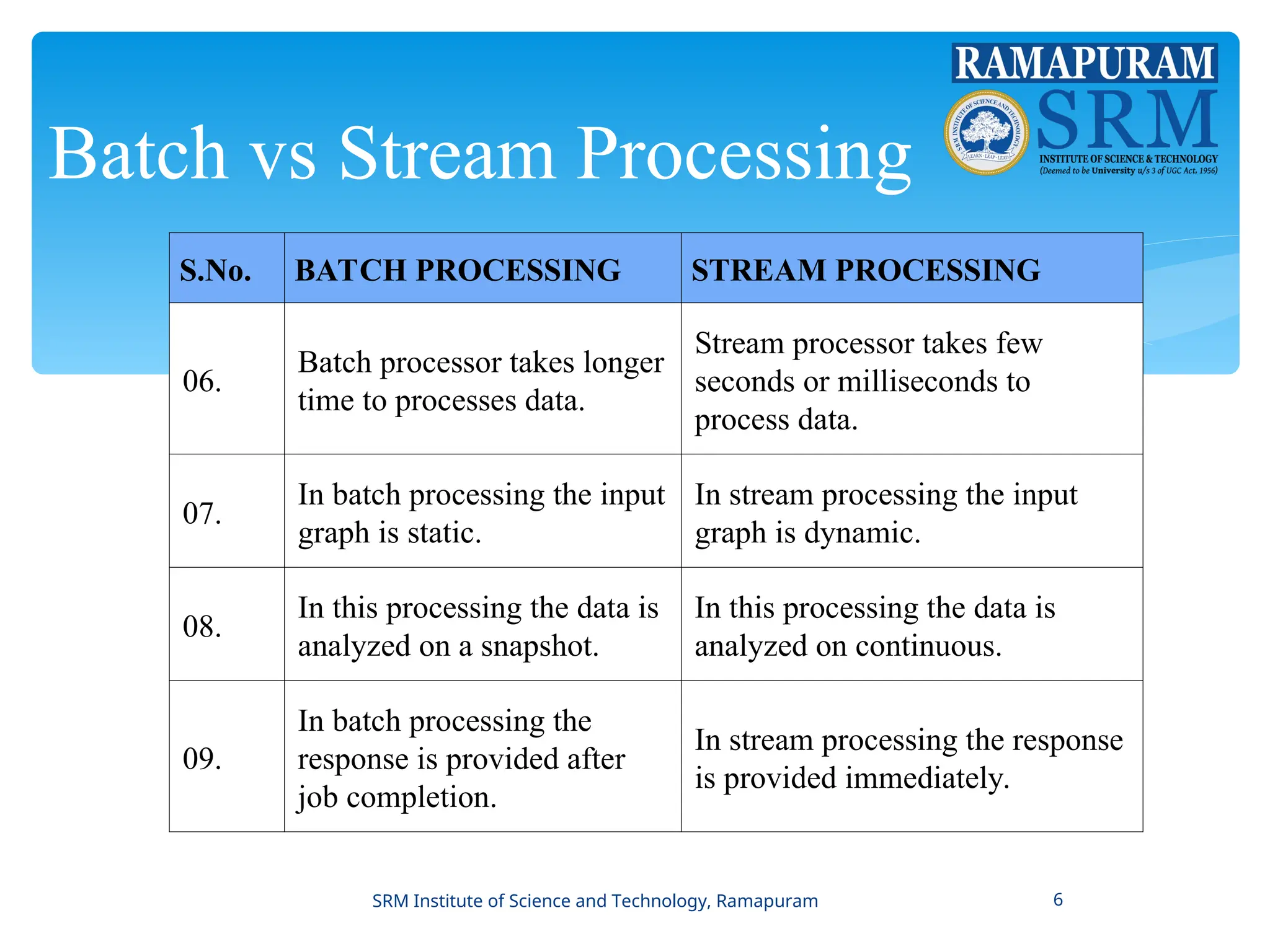

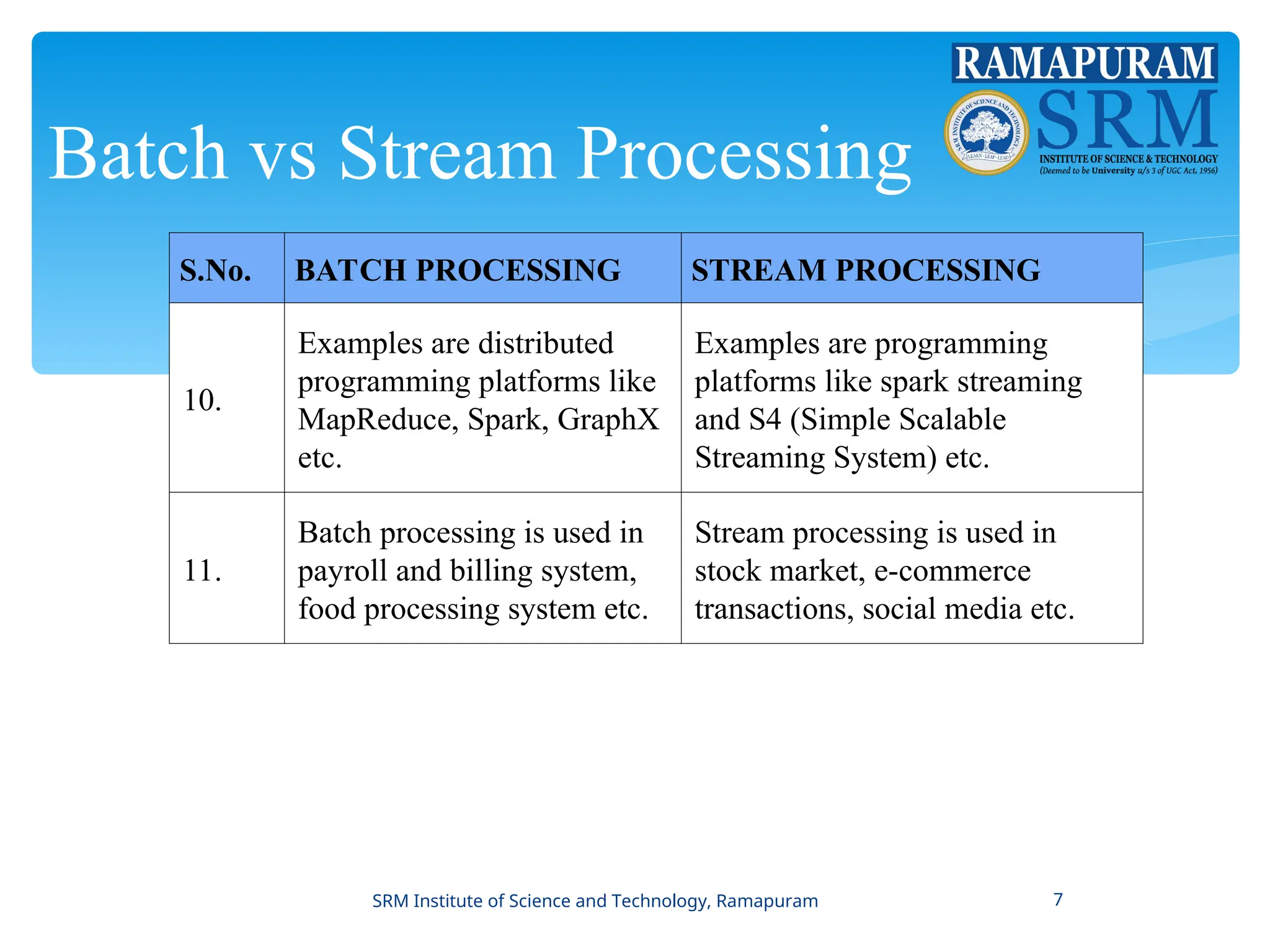

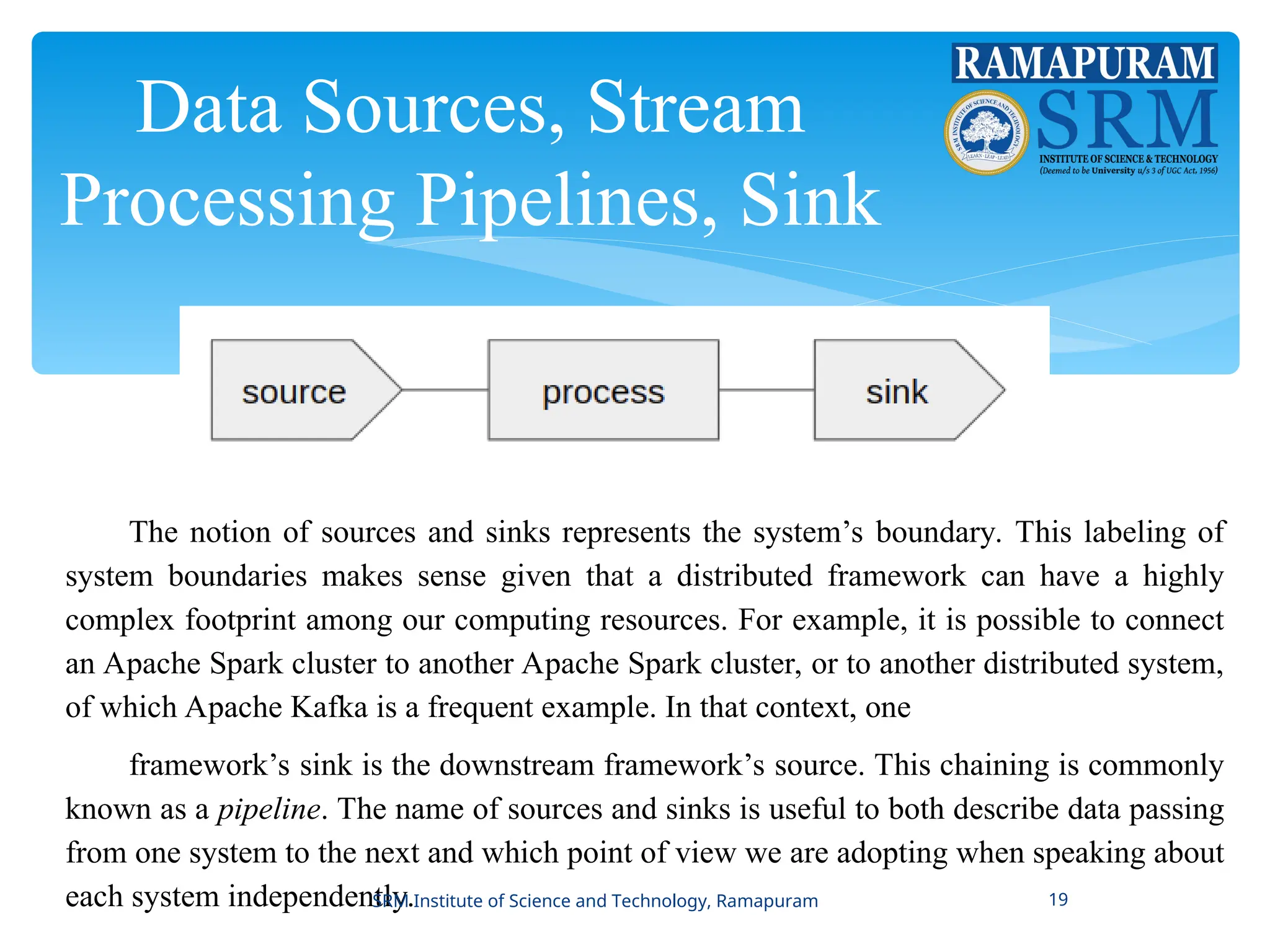

The document introduces stream processing and contrasts it with batch processing in terms of data size, processing speed, and application areas. It covers key concepts such as bounded versus unbounded data, the popularity of tools such as MapReduce, and practical applications including fault detection and real-time analytics. It also explores stateful stream processing and the core components of data streaming frameworks, such as data sources and sinks.