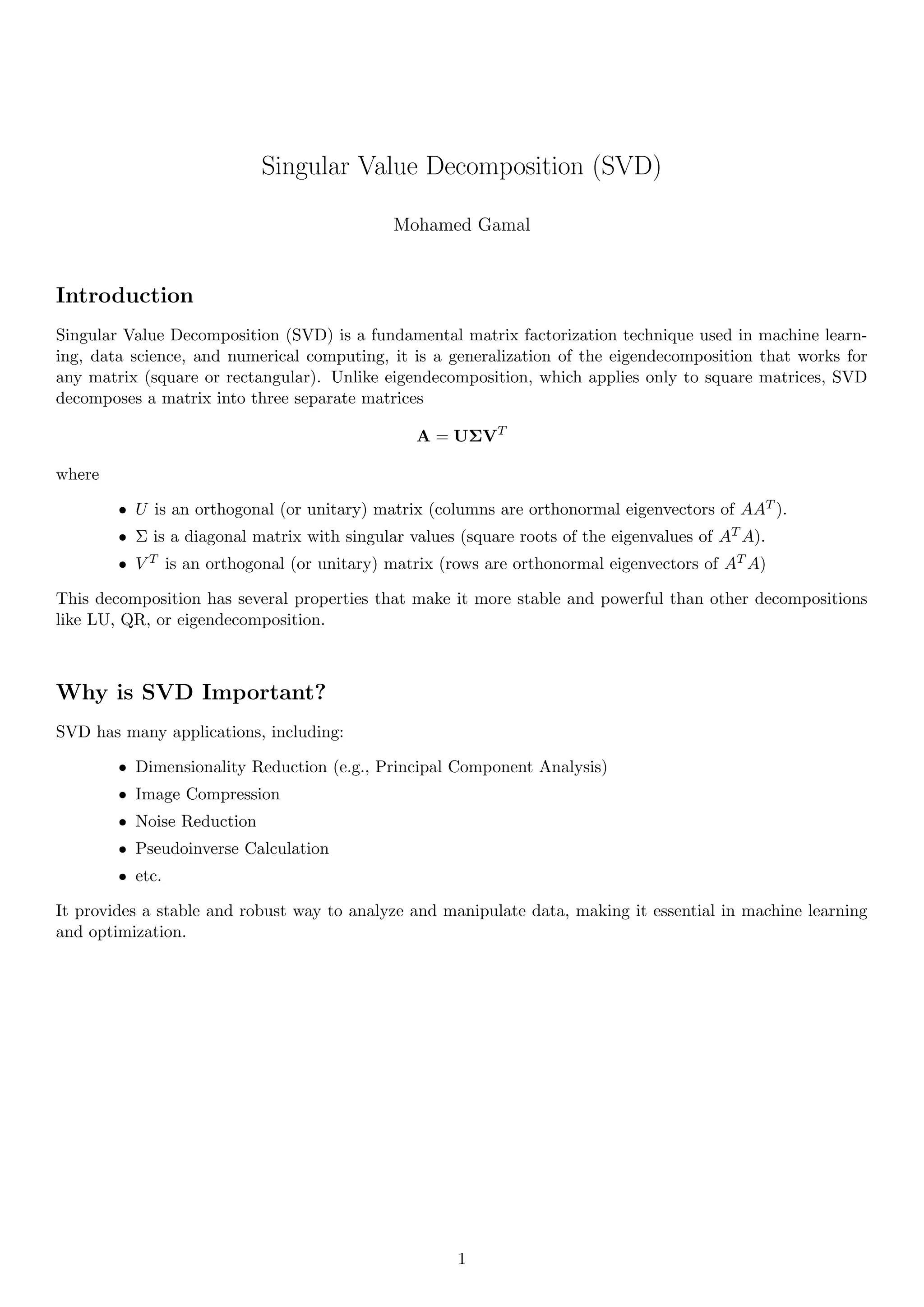

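Singular Value Decomposition (SVD) is a matrix factorization technique used throughout machine learning, data science, and AI. It factors any matrix into two sets of orthonormal singular vectors and a list of non-negative singular values, and keeping only the largest singular values is what makes it useful for dimensionality reduction, image compression, noise filtering, and more.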
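To make the compression idea concrete, here is a minimal sketch using NumPy's `np.linalg.svd`. The helper name `low_rank_approx`, the random 6x4 matrix, and the choice of rank `k = 2` are assumptions made purely for illustration; they do not come from the post.

```python
import numpy as np

def low_rank_approx(A, k):
    """Return the rank-k approximation of A built from its top k singular values.

    Hypothetical helper for illustration only.
    """
    # Reduced SVD: A = U @ diag(s) @ Vt, with s sorted in descending order.
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    # Keep only the k largest singular values and their singular vectors.
    return U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]

# Illustrative data: a small random matrix (assumption, not real data).
rng = np.random.default_rng(0)
A = rng.standard_normal((6, 4))

k = 2
A_k = low_rank_approx(A, k)

# Frobenius-norm error of the approximation.
err = np.linalg.norm(A - A_k)

# The error should equal the root-sum-of-squares of the discarded singular values.
s = np.linalg.svd(A, compute_uv=False)
expected_err = np.sqrt(np.sum(s[k:] ** 2))

print("rank of approximation:", np.linalg.matrix_rank(A_k))
print("approximation error:", err)
print("error predicted from discarded singular values:", expected_err)
```

Storing the truncated factors takes `k * (m + n + 1)` numbers instead of `m * n` for an `m` by `n` matrix, which is where the compression comes from. In noise filtering the same truncation is applied on the assumption that the small singular values mostly carry noise.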

Mastering SVD can give you an edge in handling complex data efficiently!