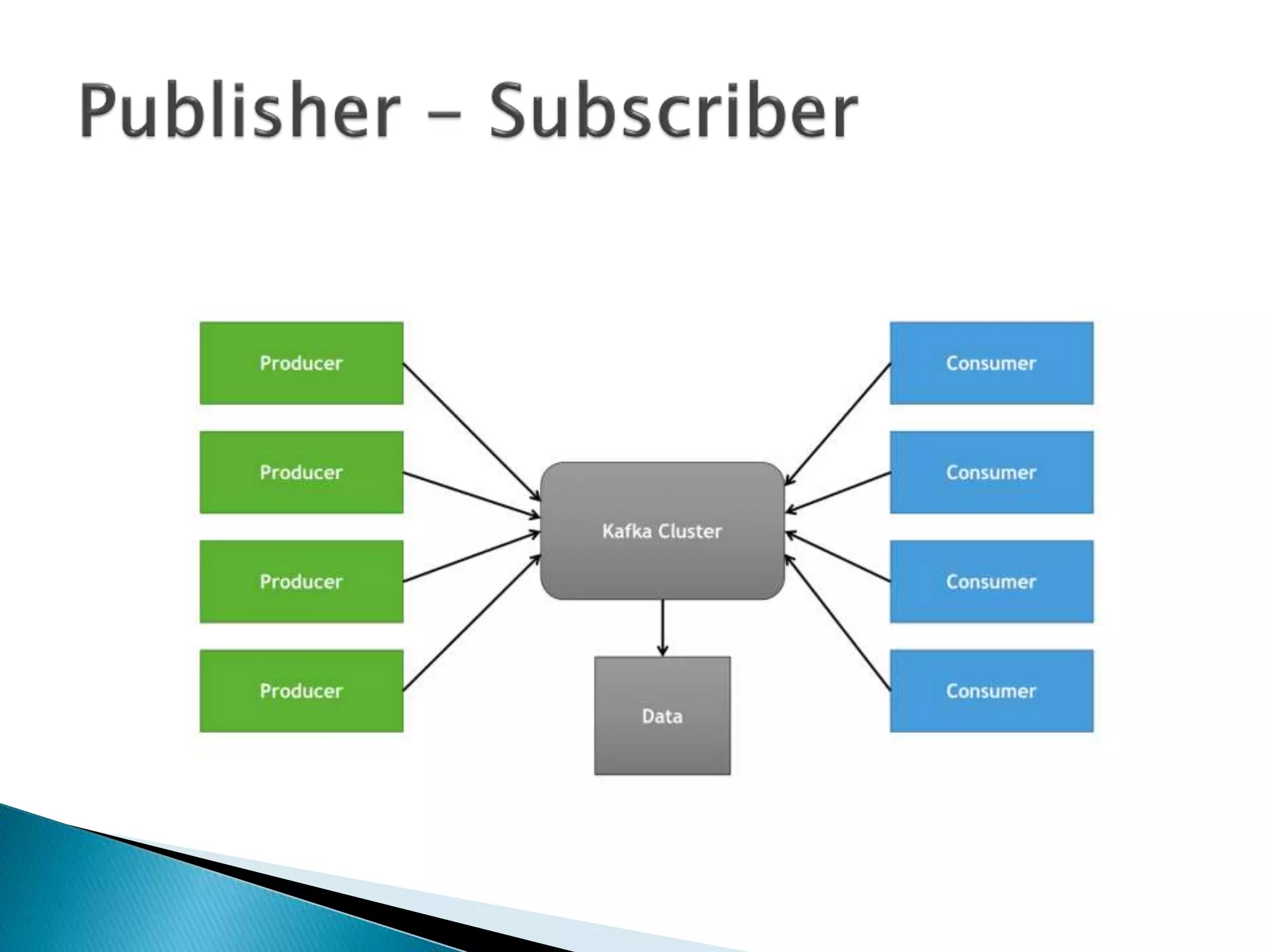

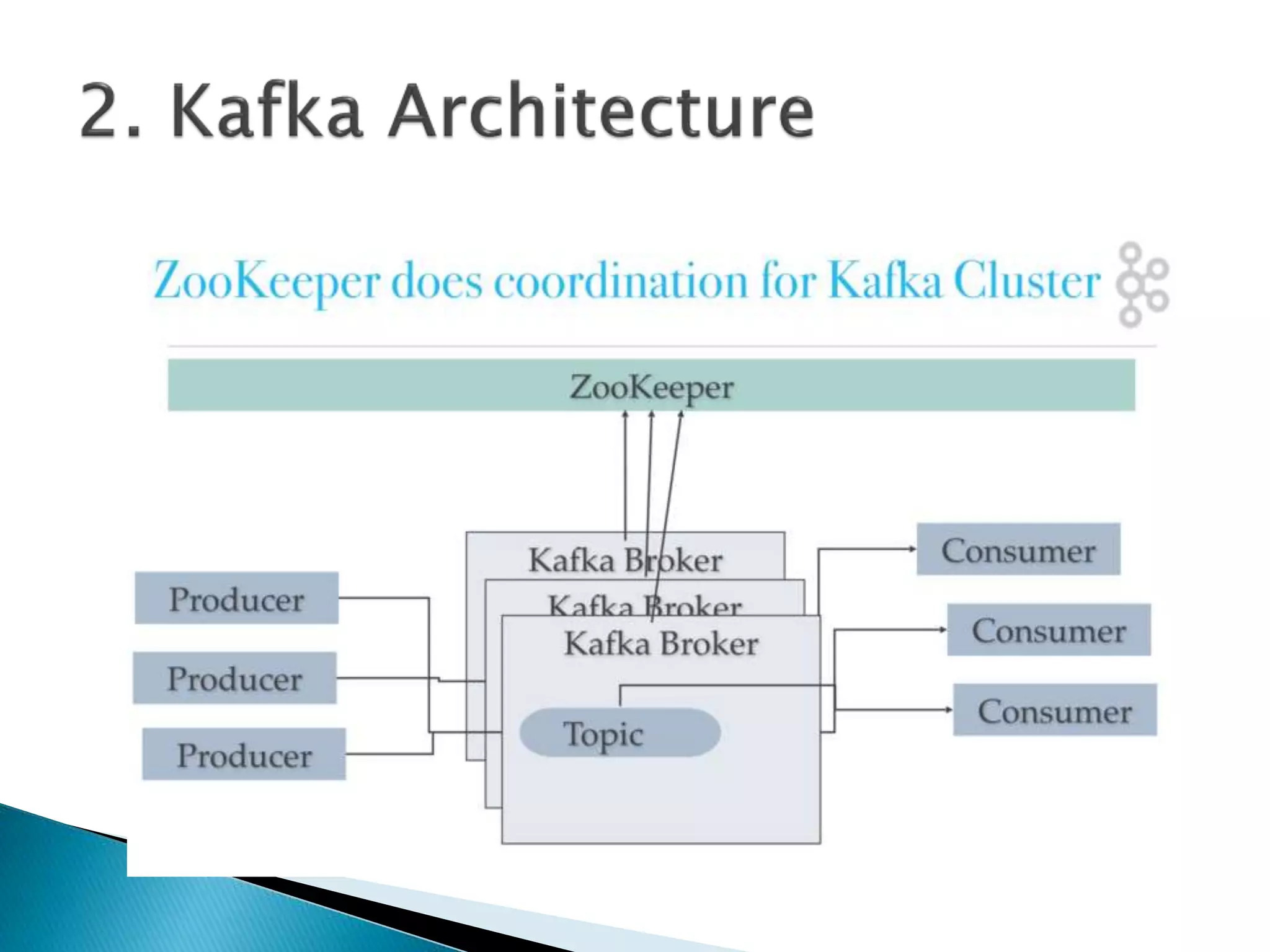

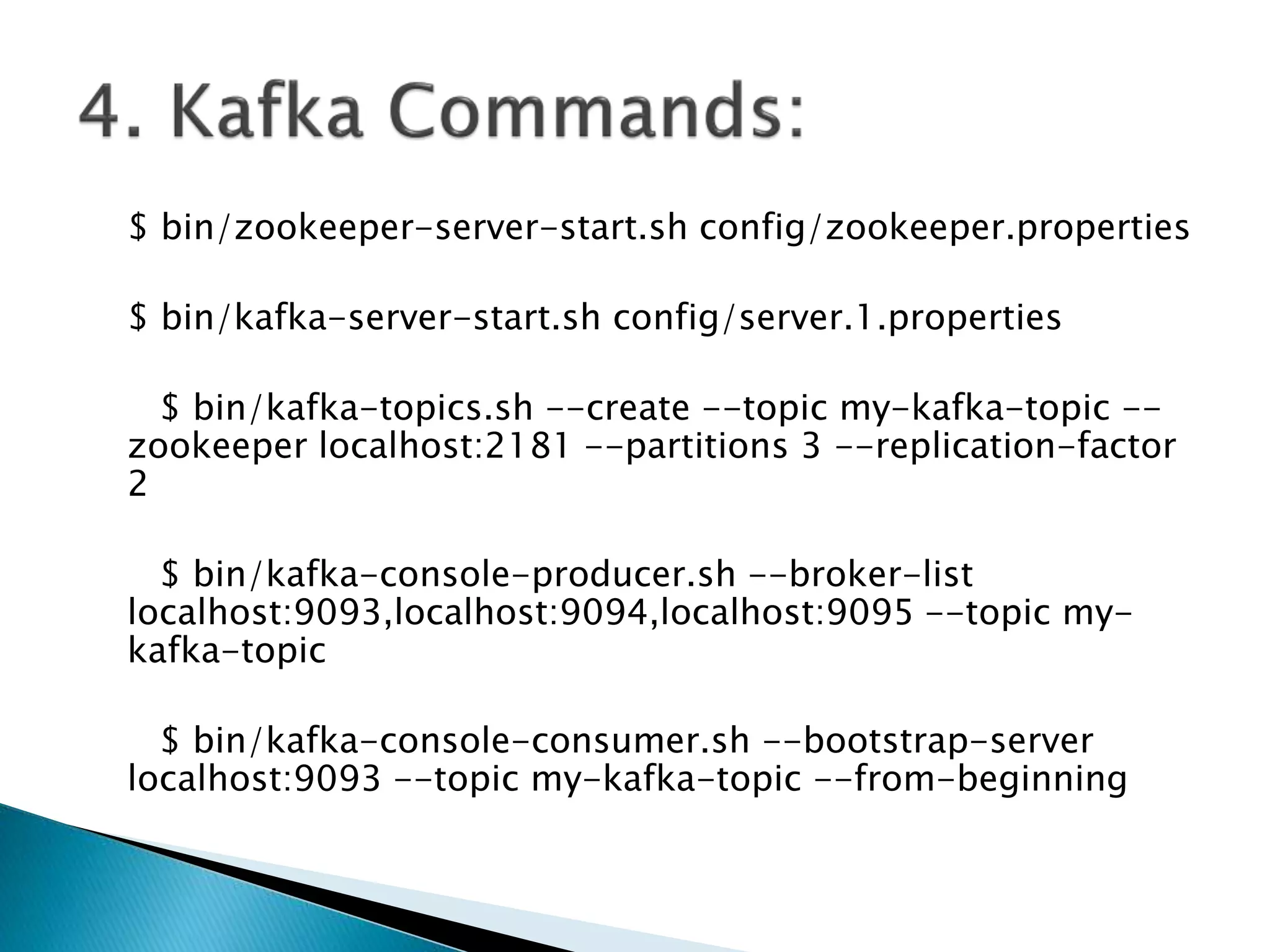

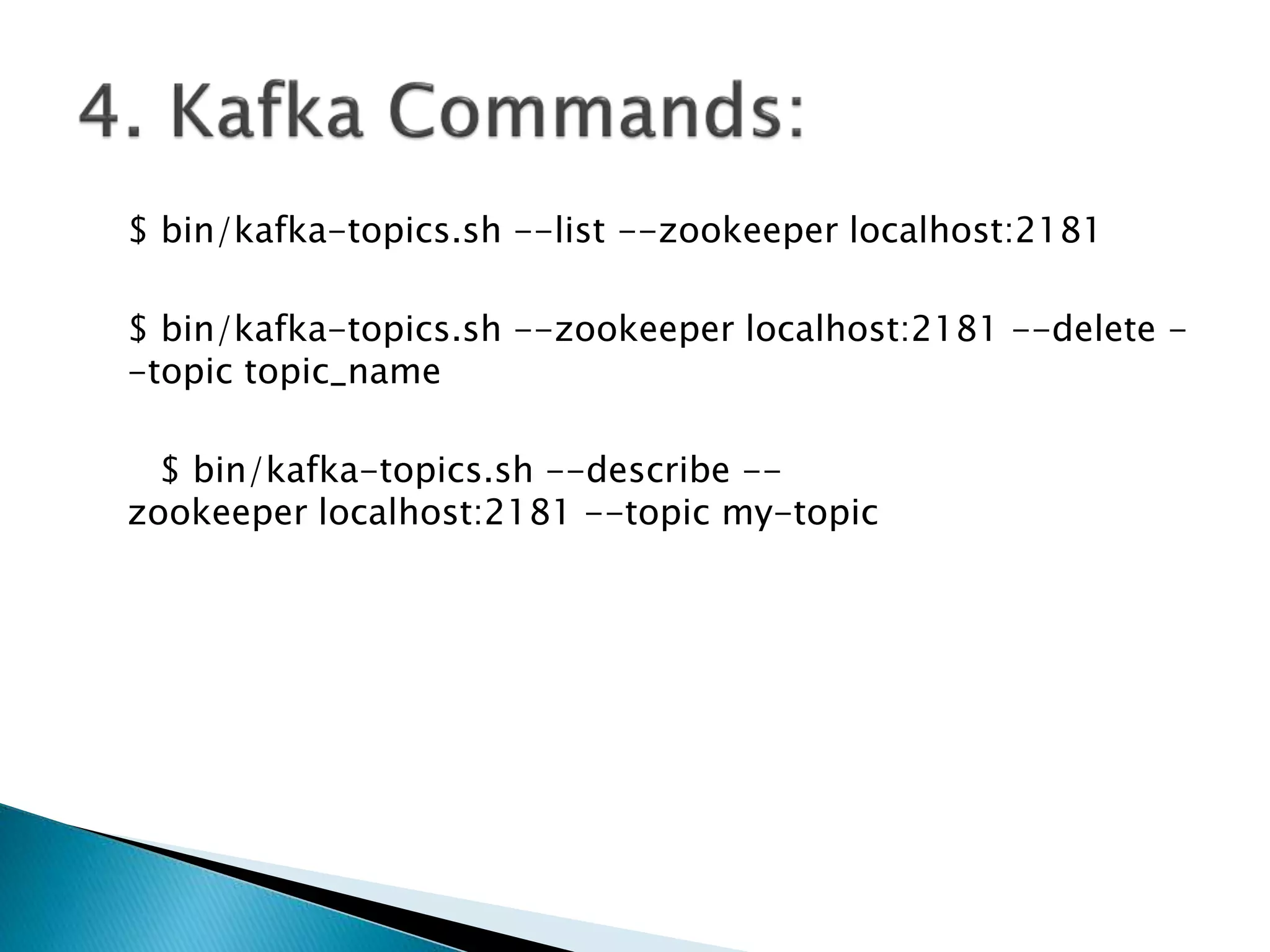

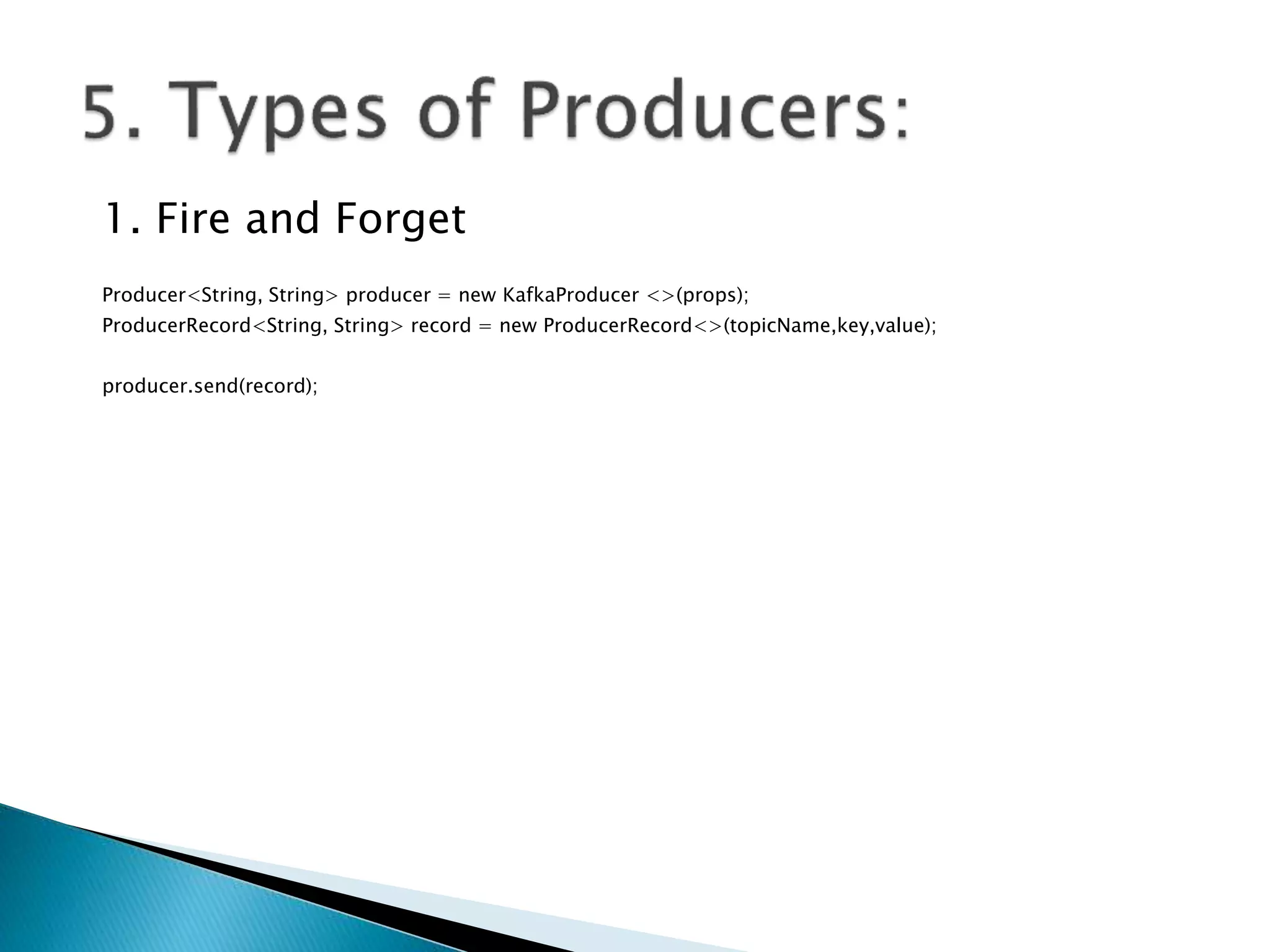

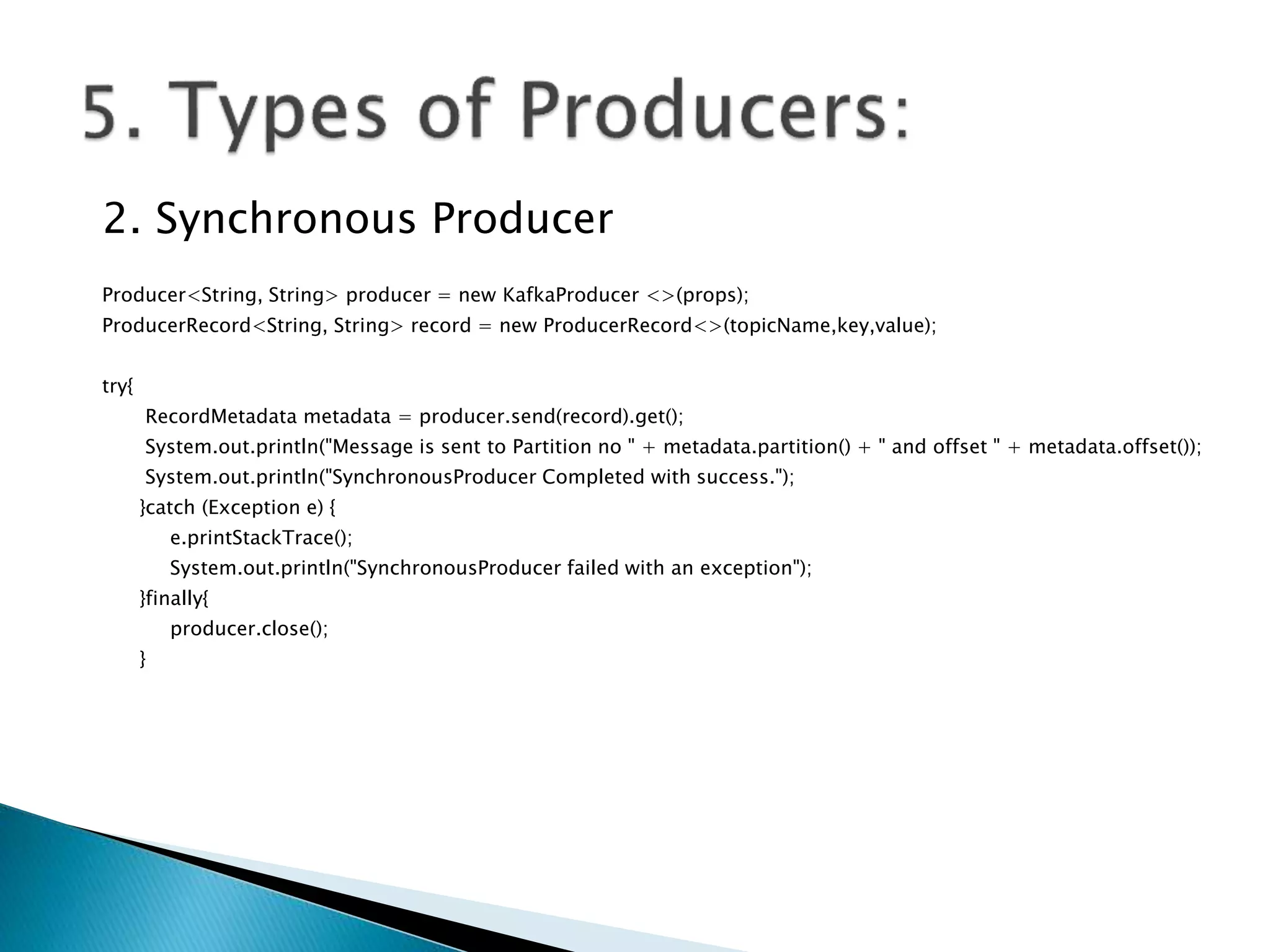

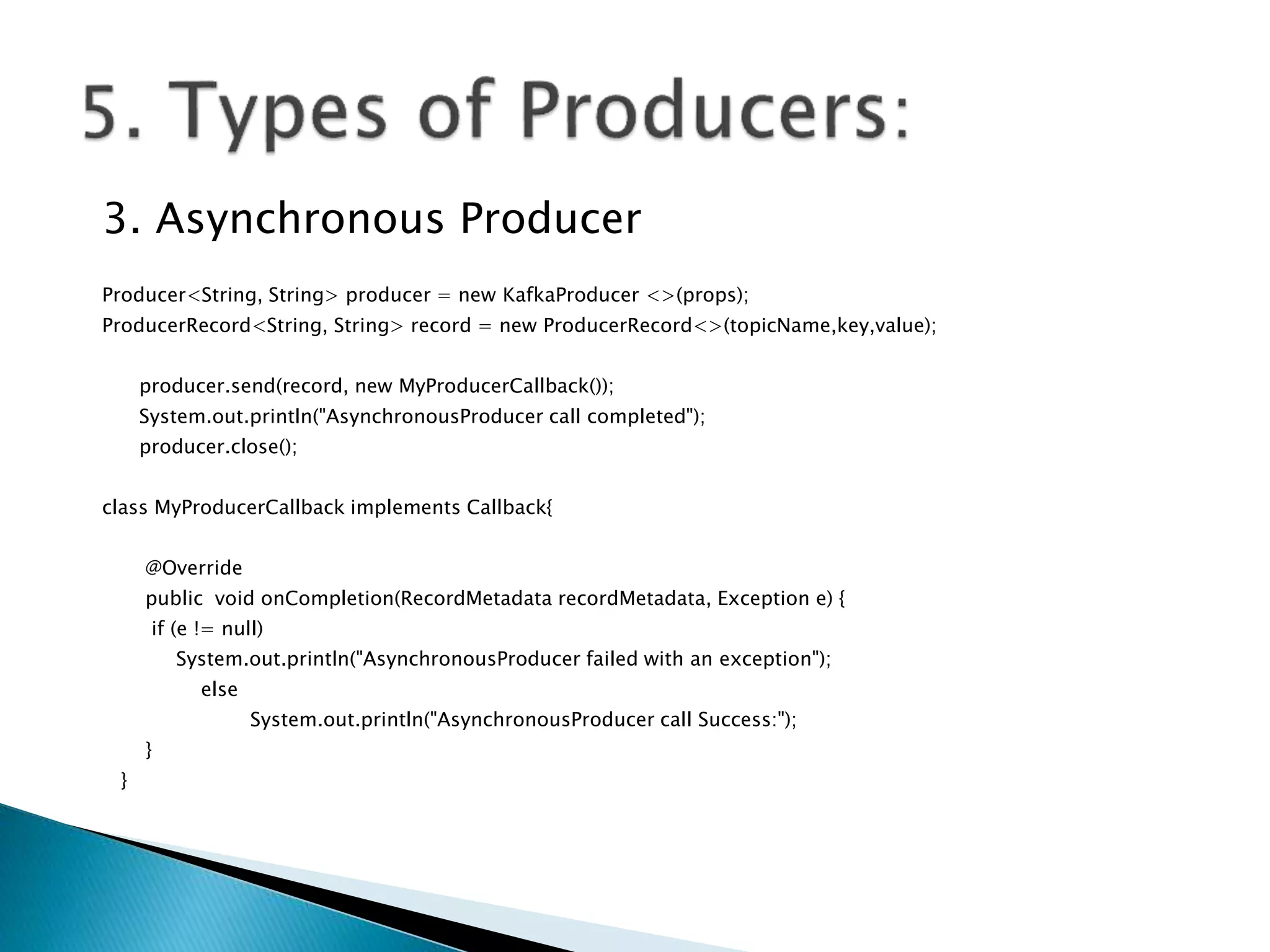

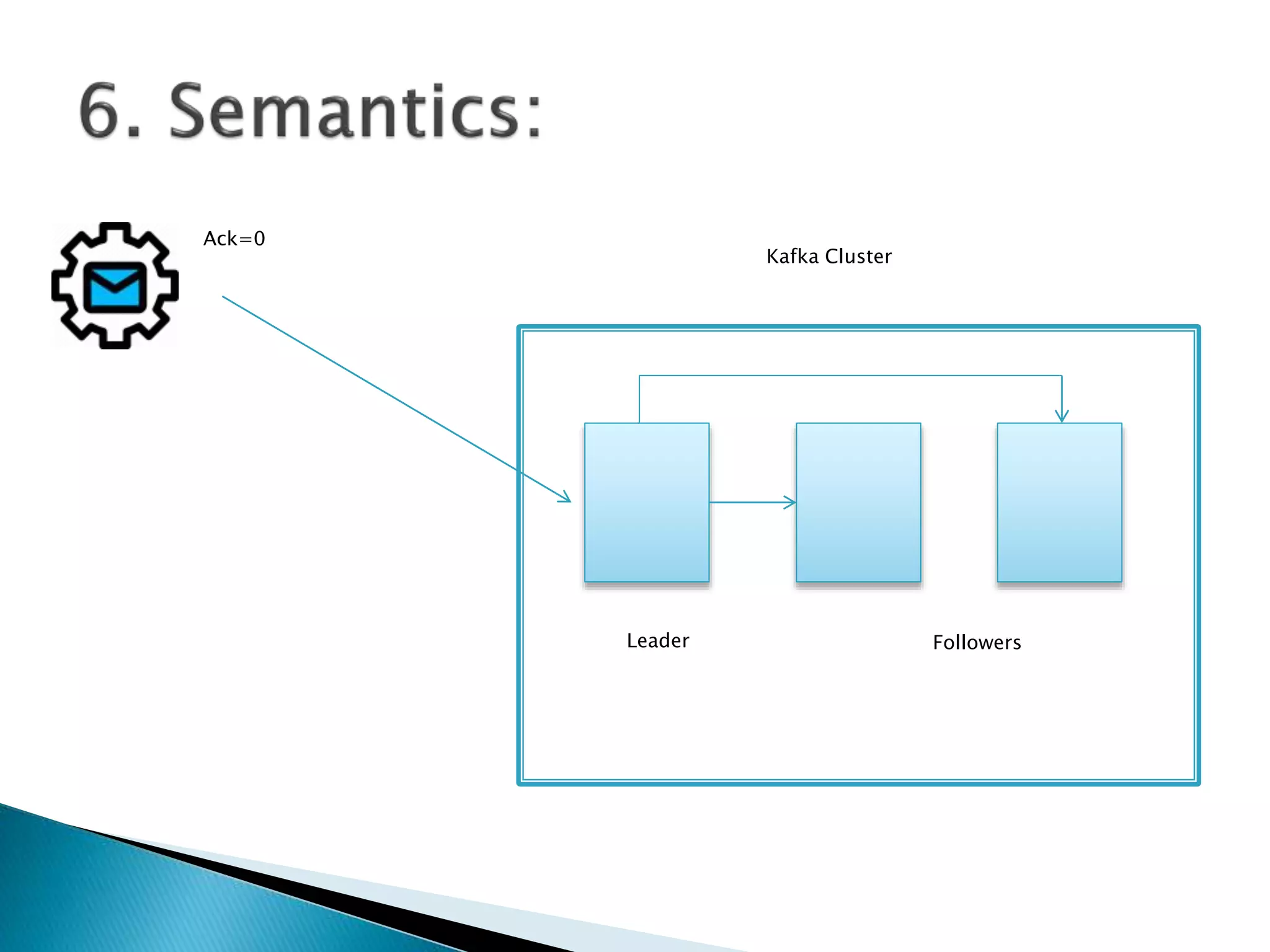

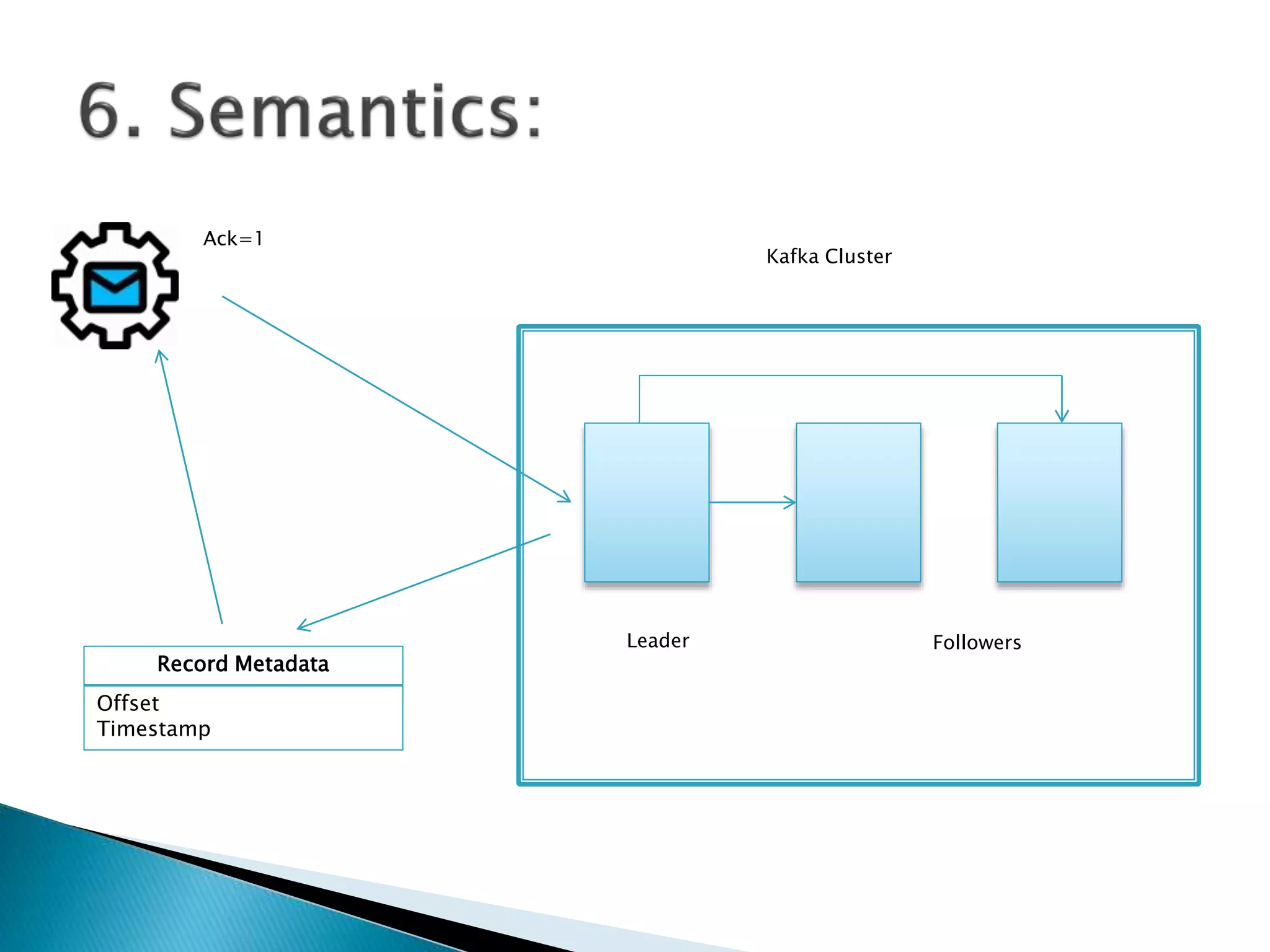

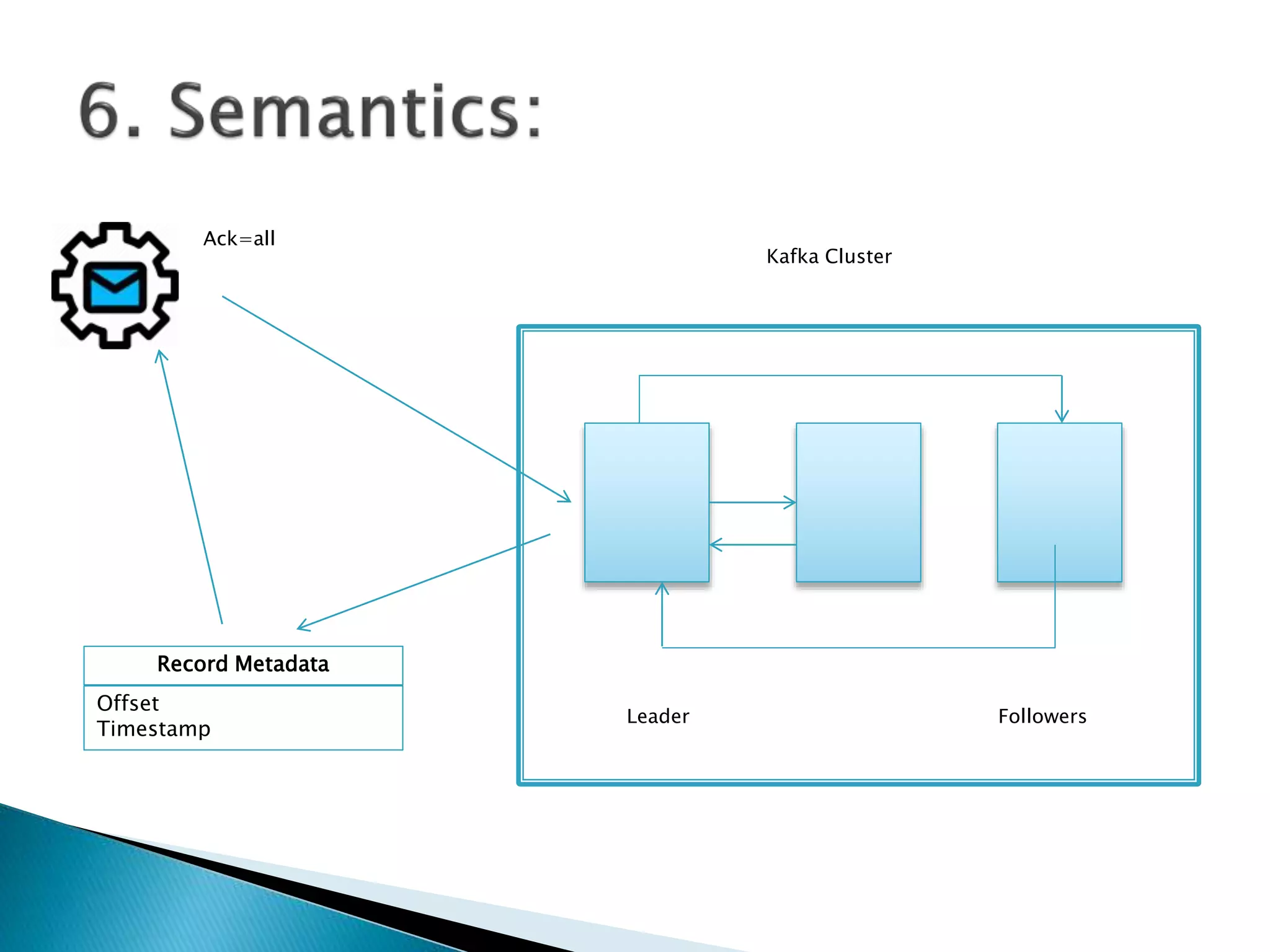

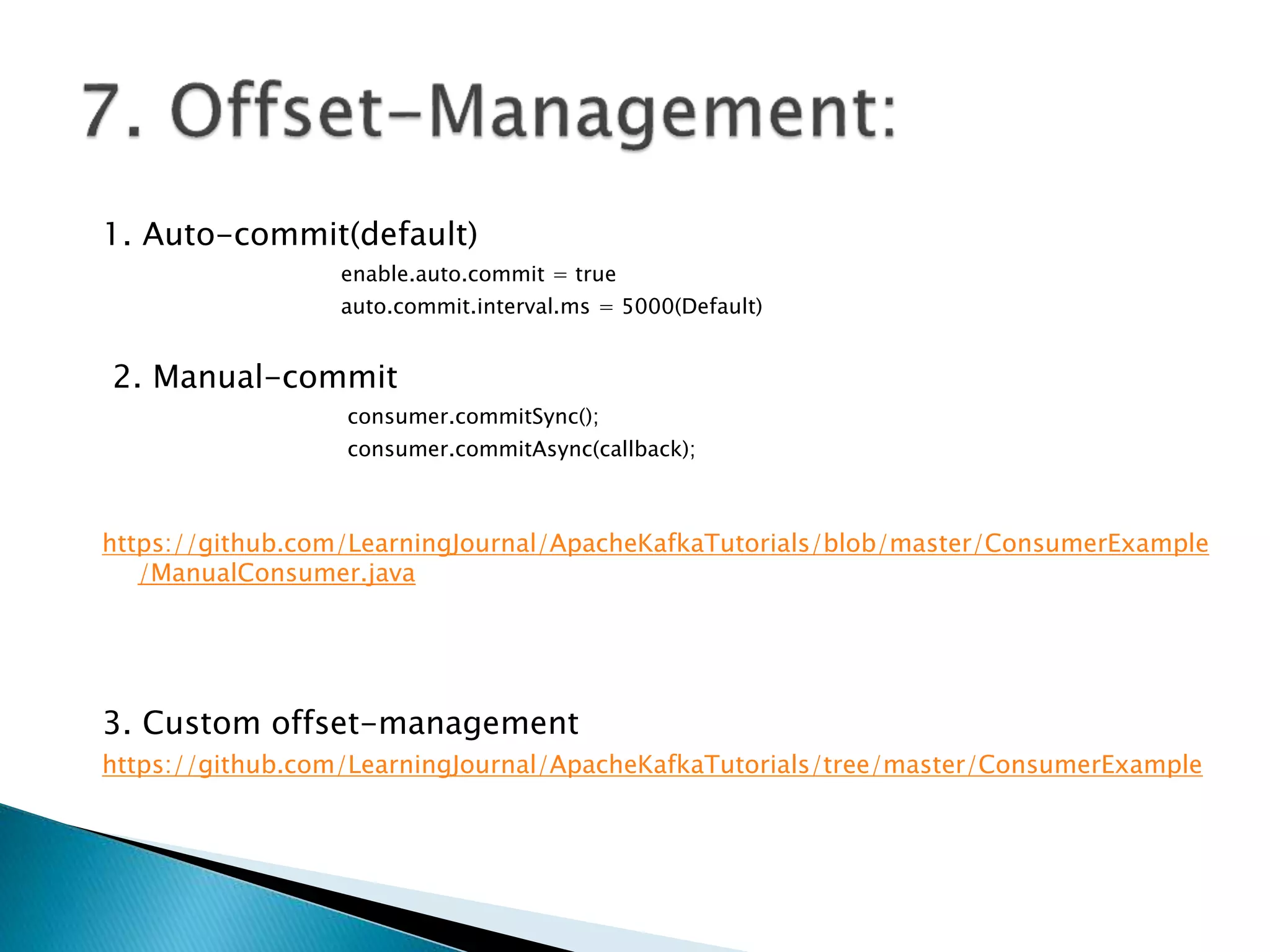

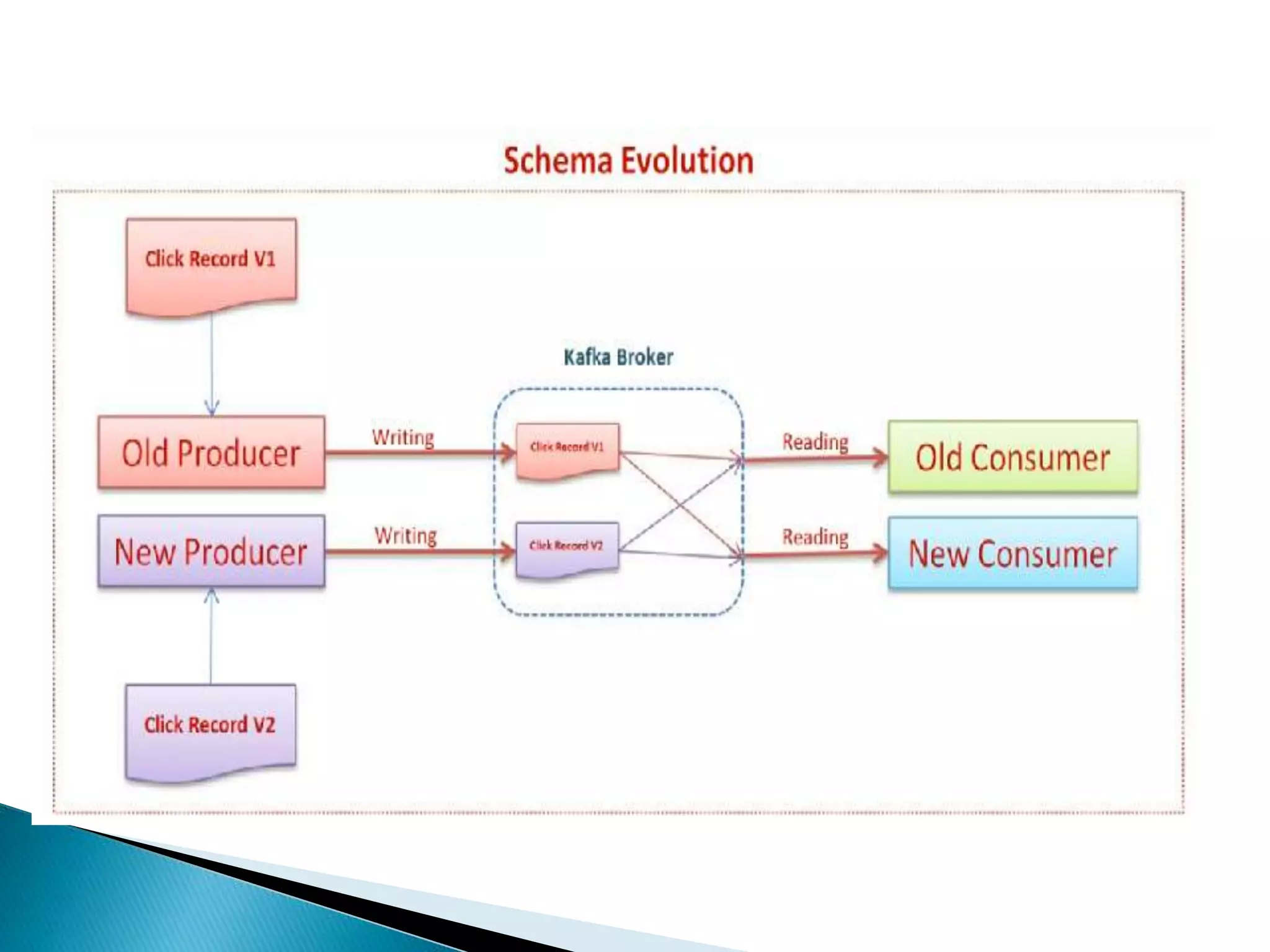

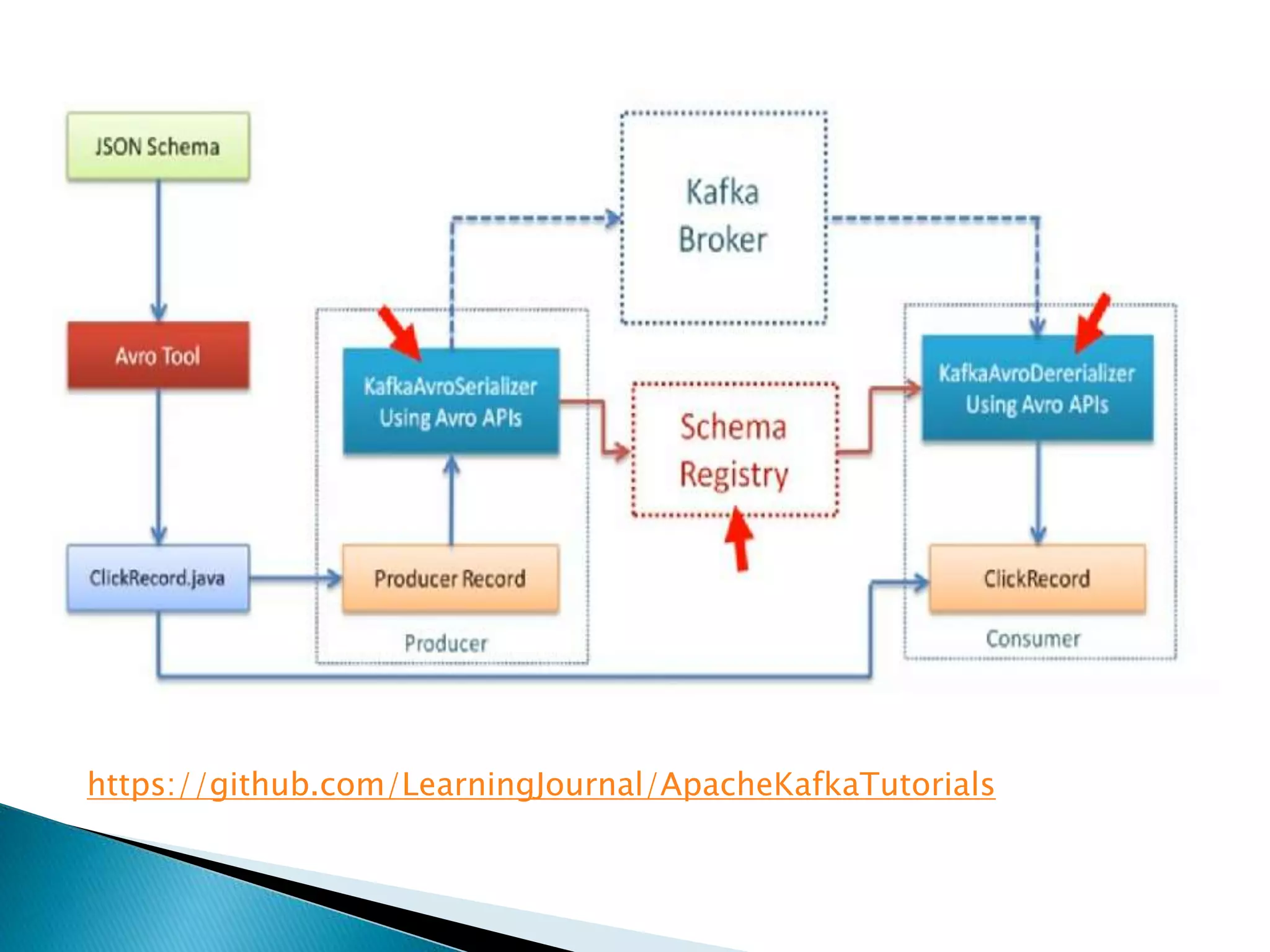

This document introduces the key concepts of Apache Kafka: producers, consumers, brokers, topics, partitions, replication, and ZooKeeper. It then shows how to start the Kafka services, create and list topics, produce and consume messages, and configure producers and consumers. It closes with brief overviews of Kafka Streams, Schema Registry, Kafka Connect, and KSQL.
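
To make the relationship between producers, topics, partitions, and consumers concrete, here is a minimal in-memory sketch. It is a toy model, not Kafka's client API: the names `ToyTopic` and `ToyConsumer` are hypothetical, and `zlib.crc32` is only a stand-in for the hash Kafka's own partitioner uses. It illustrates two ideas: records with the same key land in the same partition and keep their order, and a consumer tracks one offset per partition.

```python
import zlib
from collections import defaultdict


class ToyTopic:
    """Hypothetical in-memory topic: a fixed number of append-only partition logs."""

    def __init__(self, name, num_partitions):
        self.name = name
        self.partitions = [[] for _ in range(num_partitions)]

    def send(self, key, value):
        # Assumption: crc32 stands in for the real partitioner's hash function.
        partition = zlib.crc32(key.encode("utf-8")) % len(self.partitions)
        self.partitions[partition].append((key, value))
        offset = len(self.partitions[partition]) - 1
        return partition, offset


class ToyConsumer:
    """Hypothetical consumer that remembers the next offset to read per partition."""

    def __init__(self, topic):
        self.topic = topic
        self.offsets = defaultdict(int)

    def poll(self):
        records = []
        for partition, log in enumerate(self.topic.partitions):
            start = self.offsets[partition]
            records.extend(log[start:])          # read everything not yet seen
            self.offsets[partition] = len(log)   # advance the offset
        return records


topic = ToyTopic("orders", num_partitions=3)
p1, o1 = topic.send("customer-1", "created")
p2, o2 = topic.send("customer-1", "paid")
topic.send("customer-2", "created")

consumer = ToyConsumer(topic)
first = consumer.poll()    # all three records
second = consumer.poll()   # nothing new since the last poll
```

In this sketch both `customer-1` records share a partition, so the consumer sees `created` before `paid`; ordering across different partitions is not guaranteed, which mirrors the per-partition ordering model described in the Kafka documentation.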