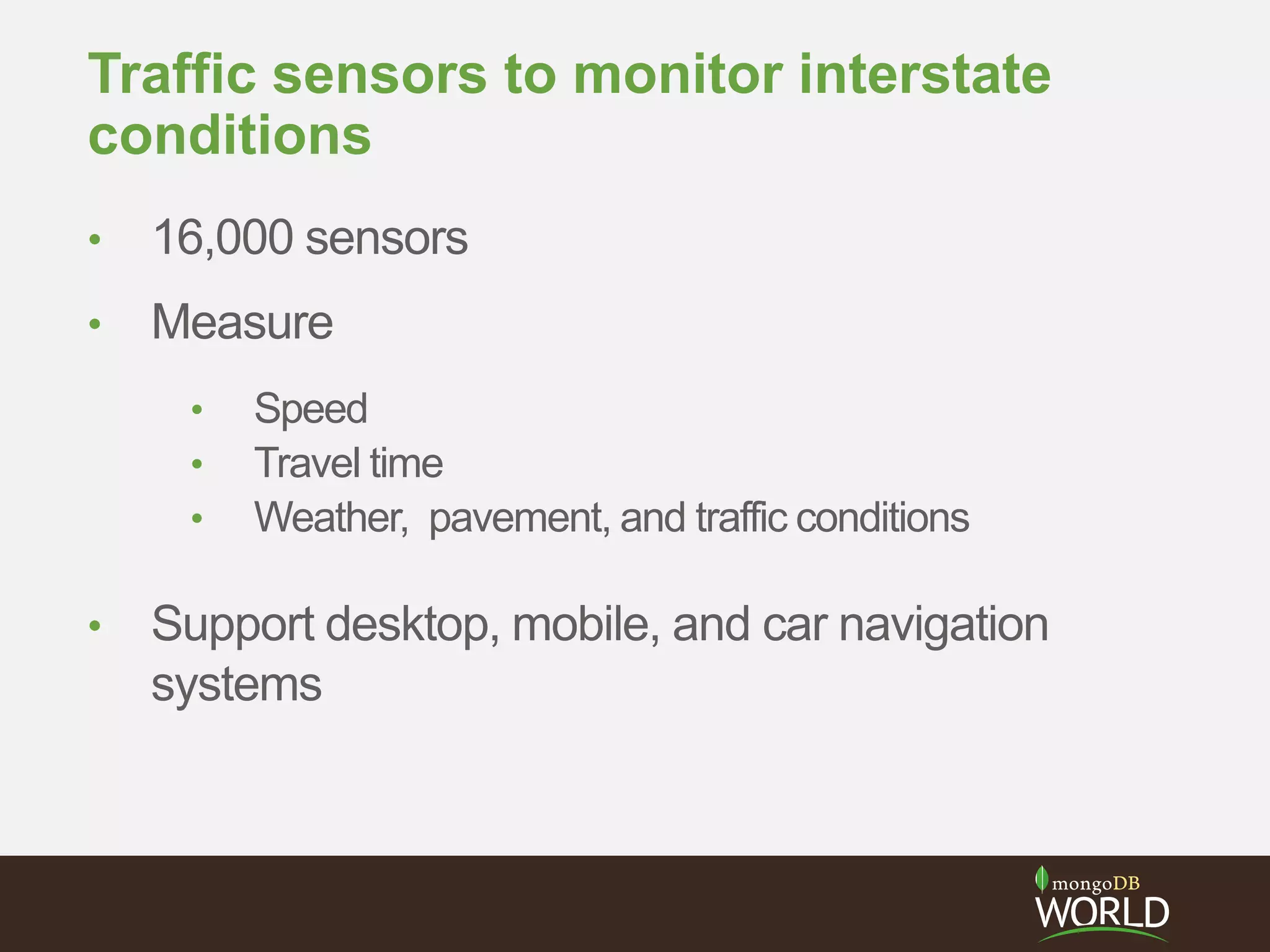

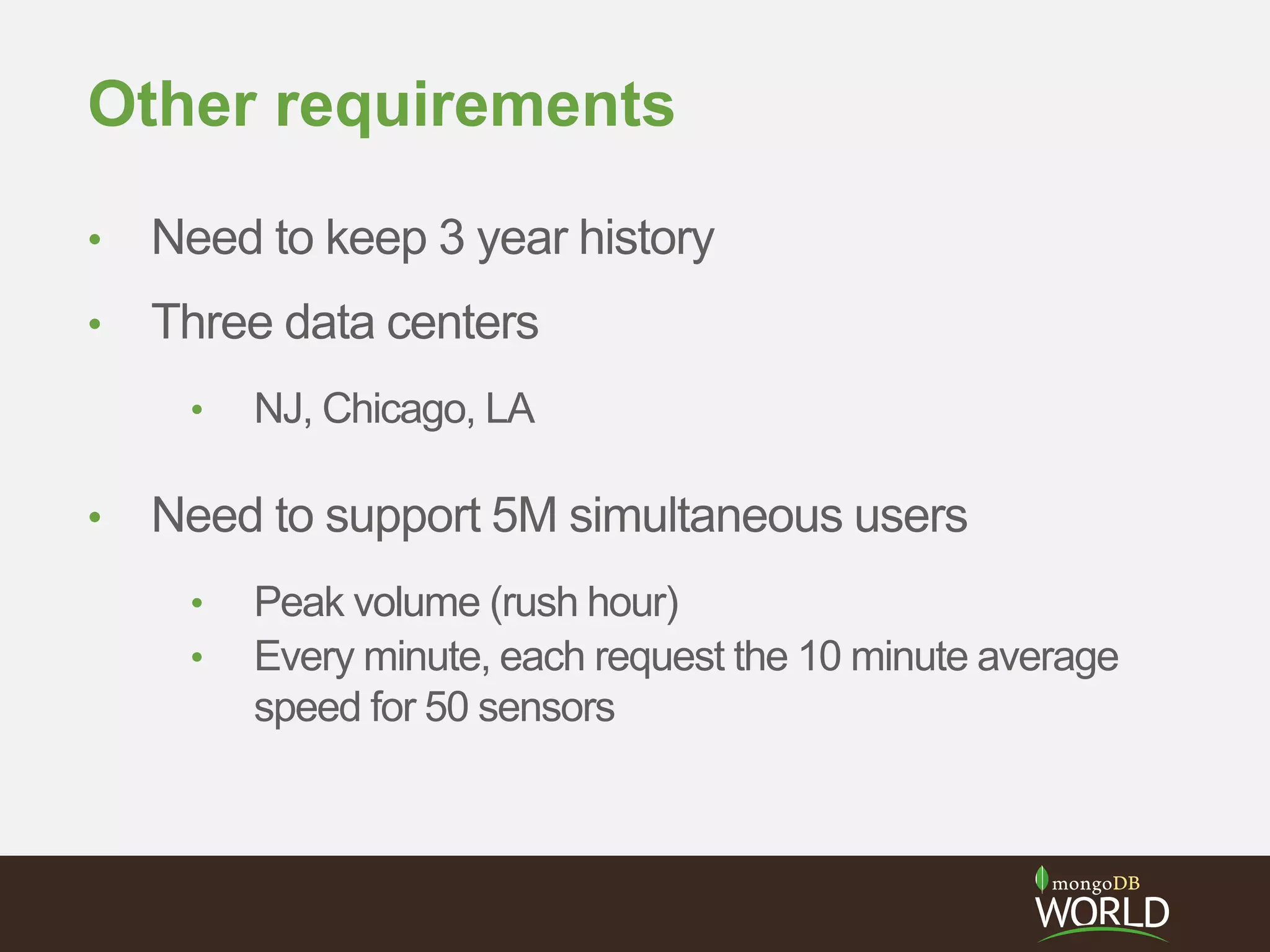

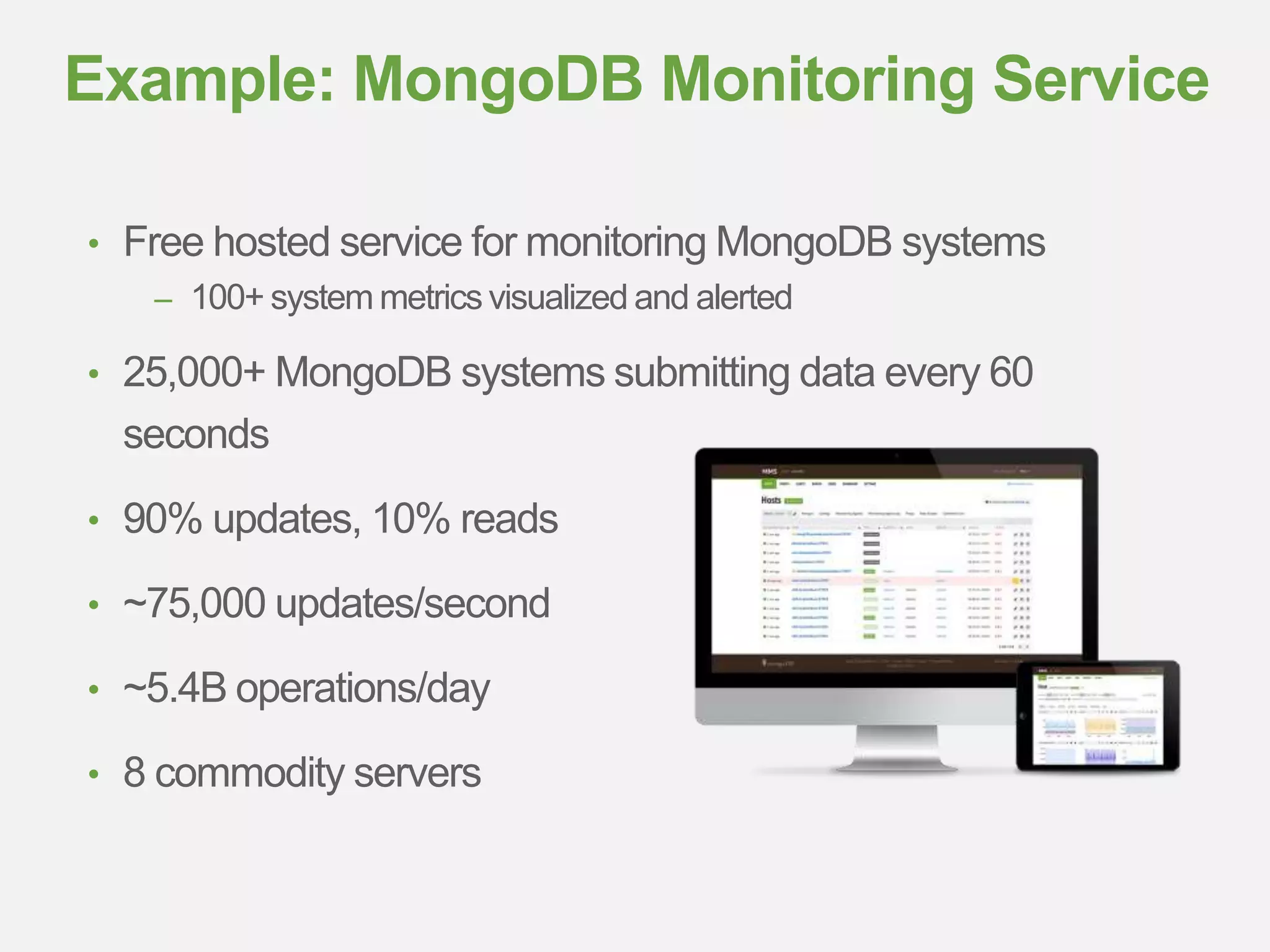

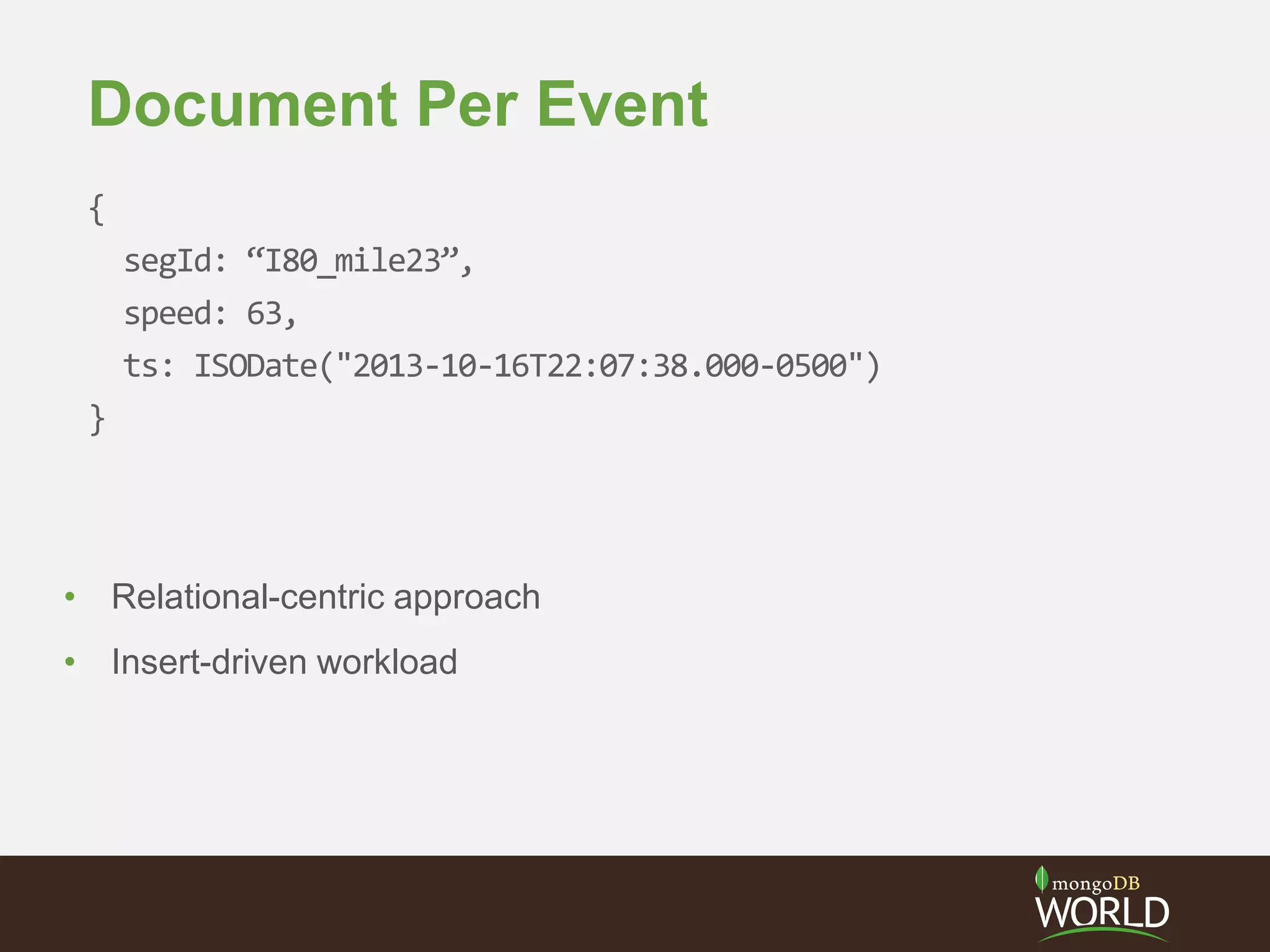

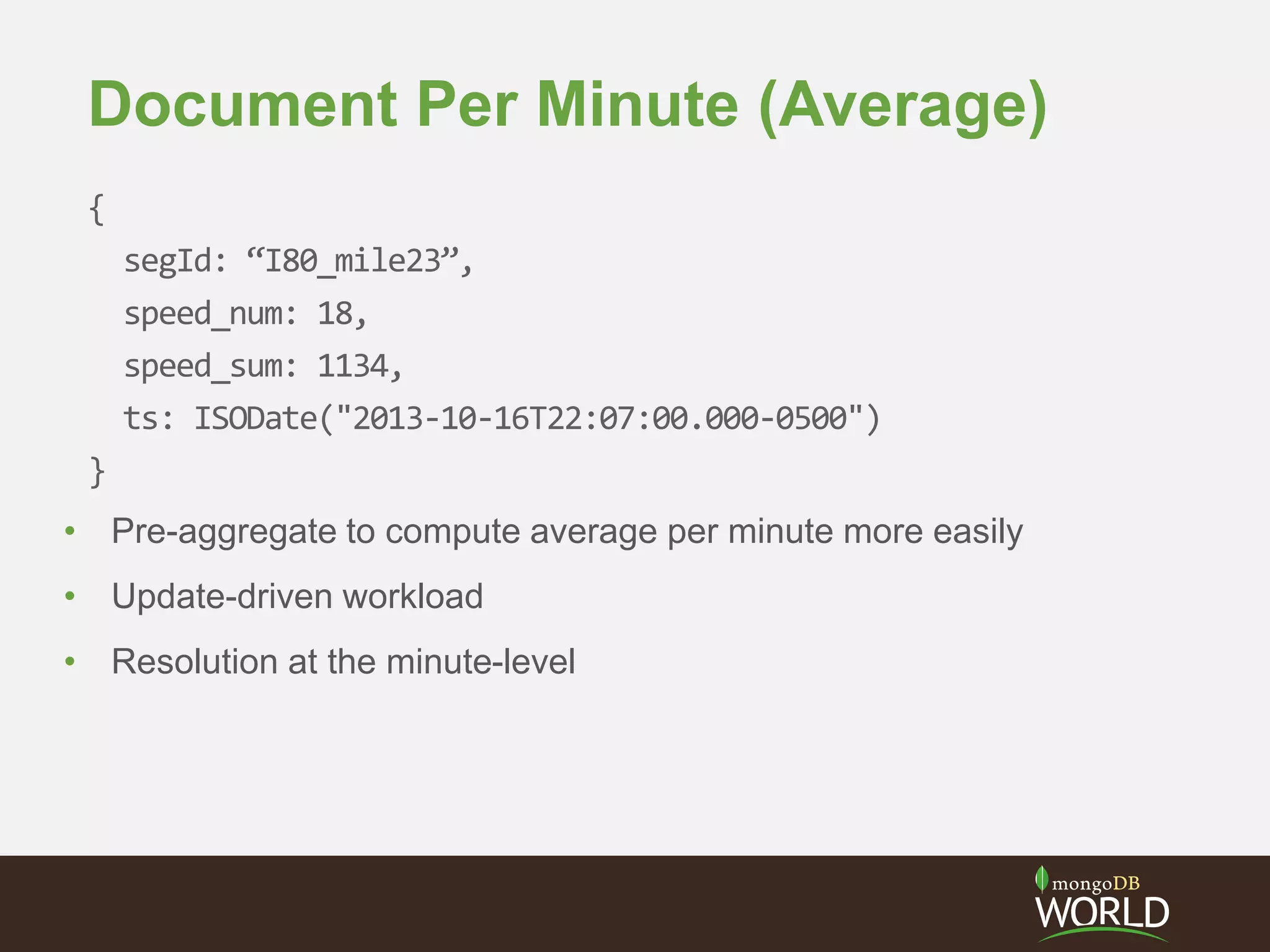

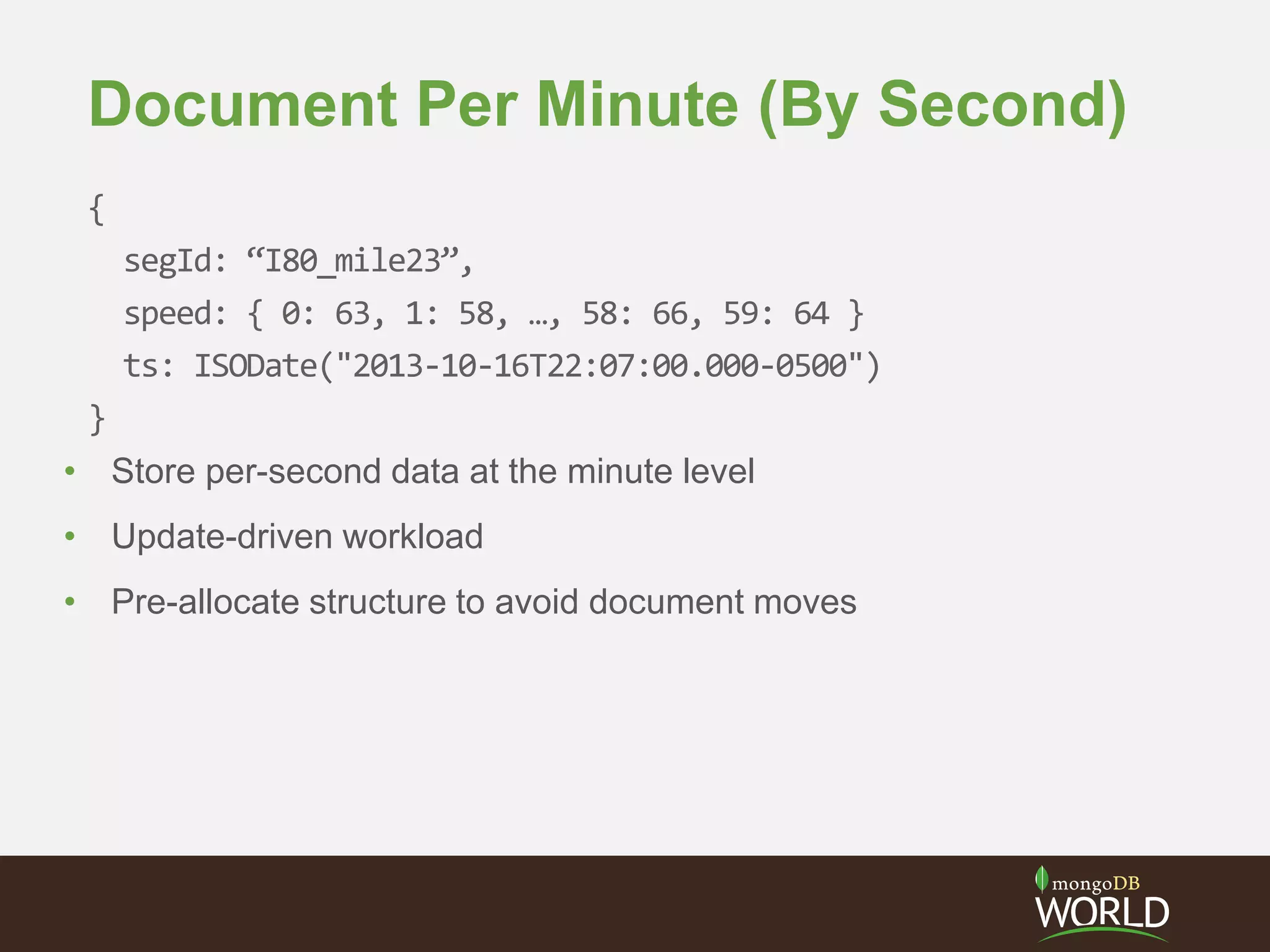

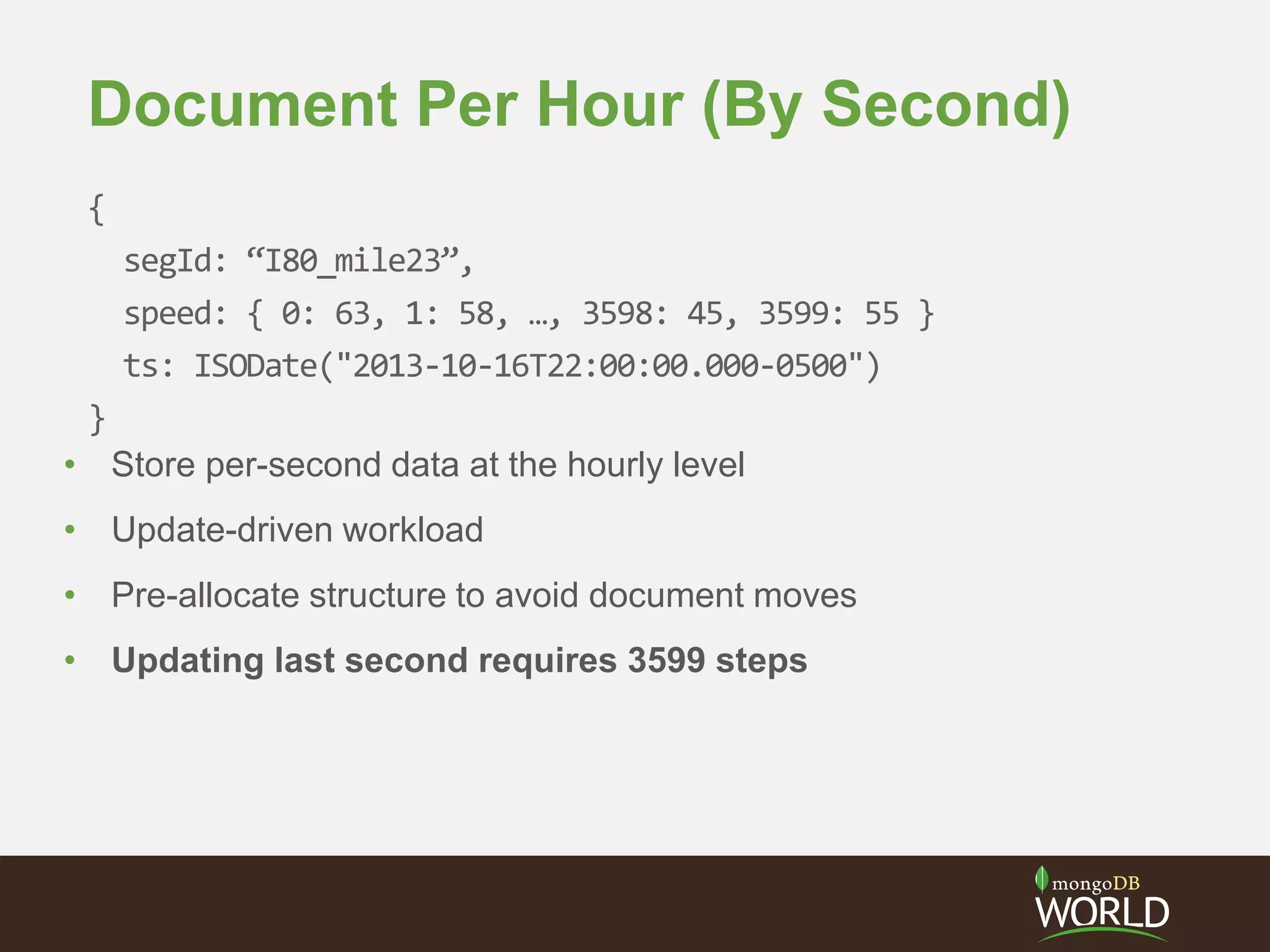

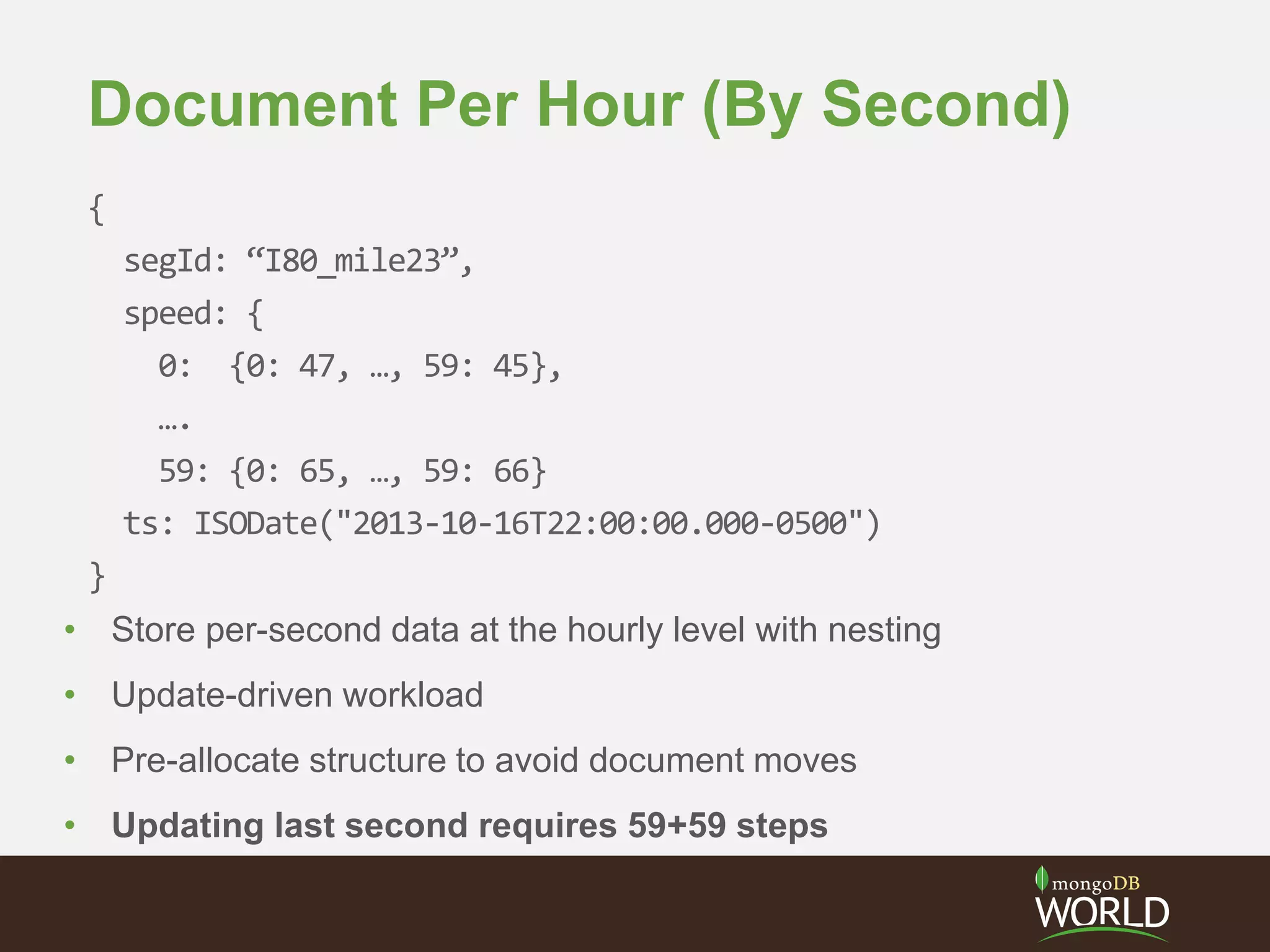

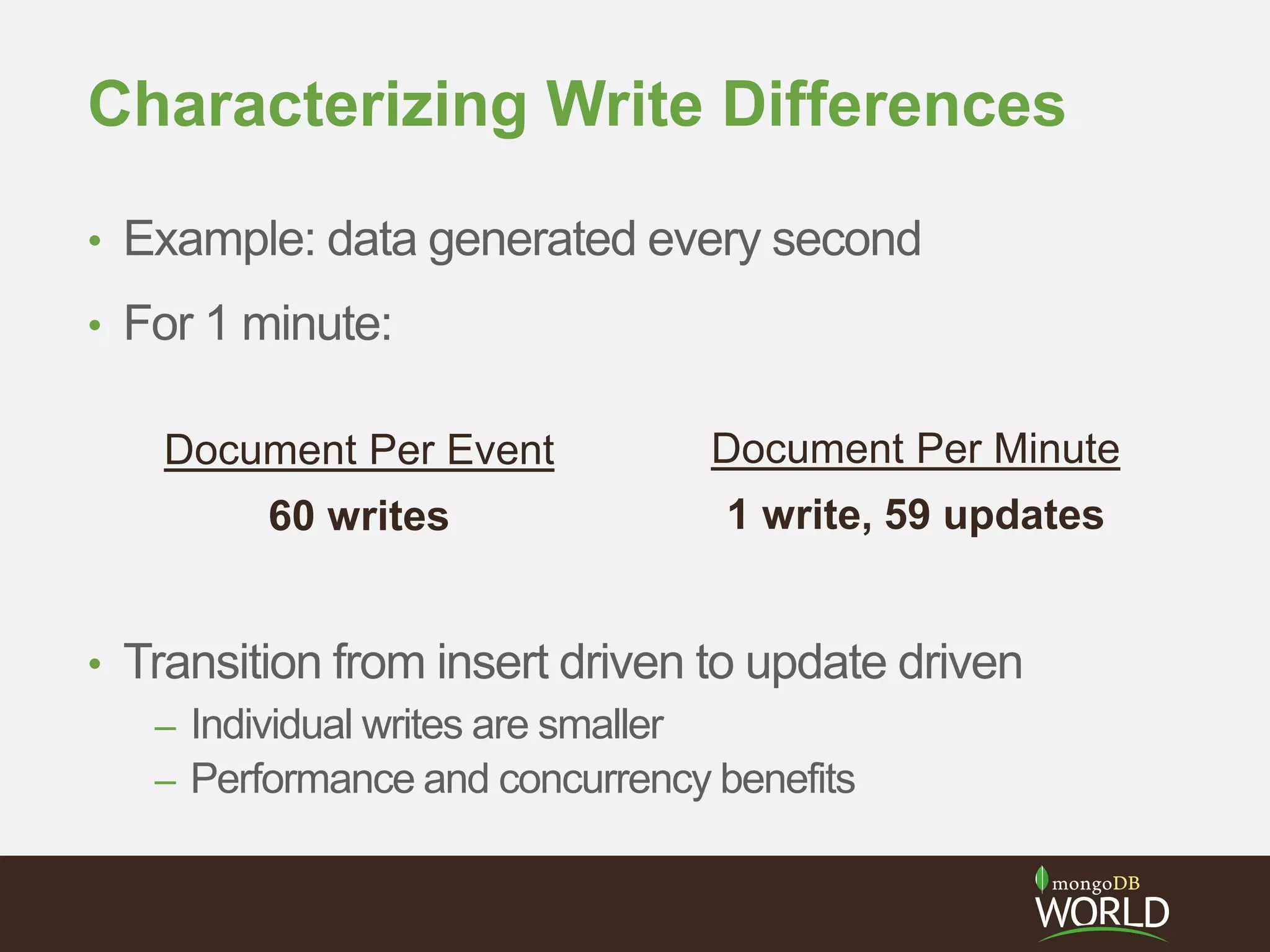

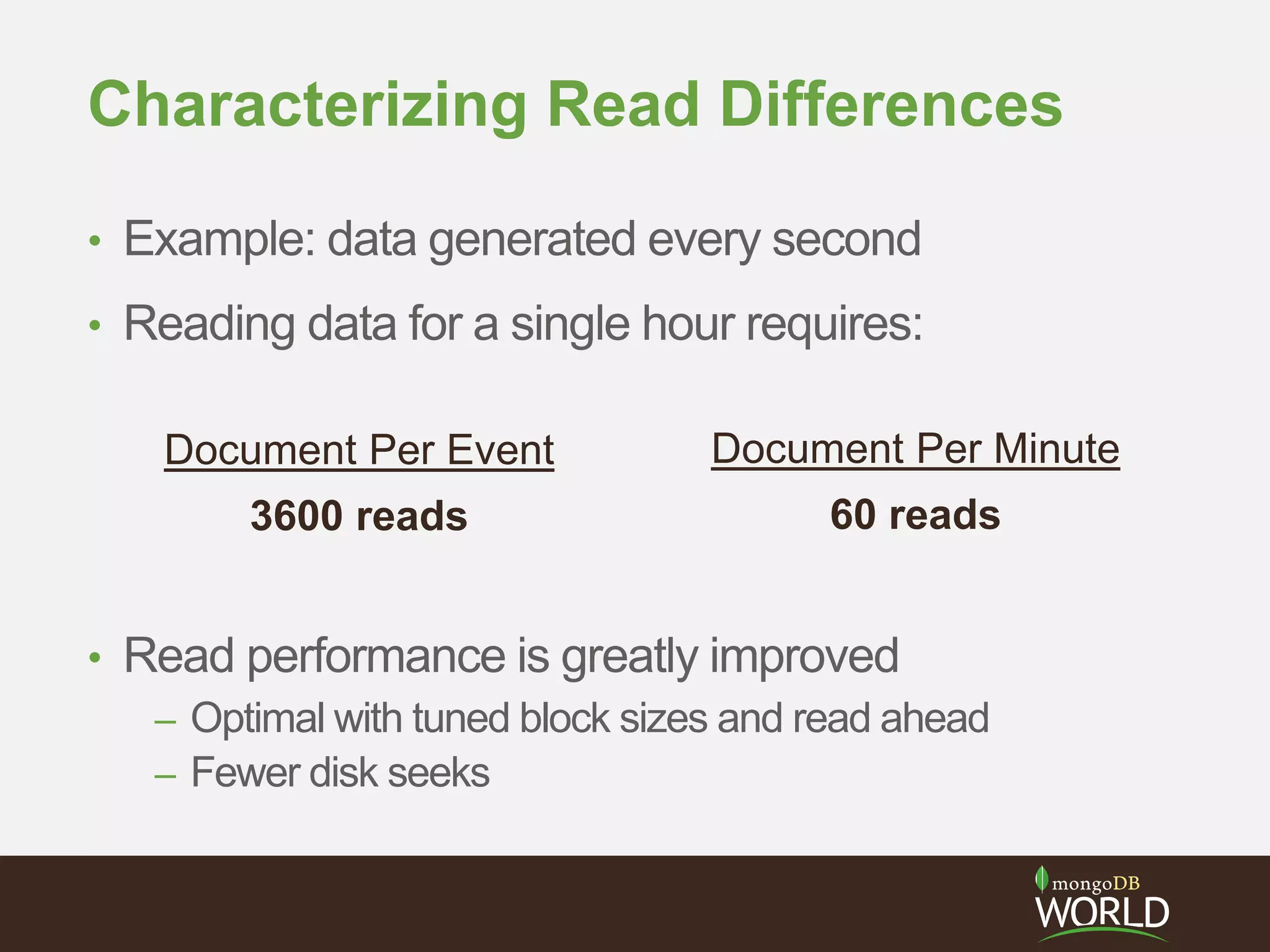

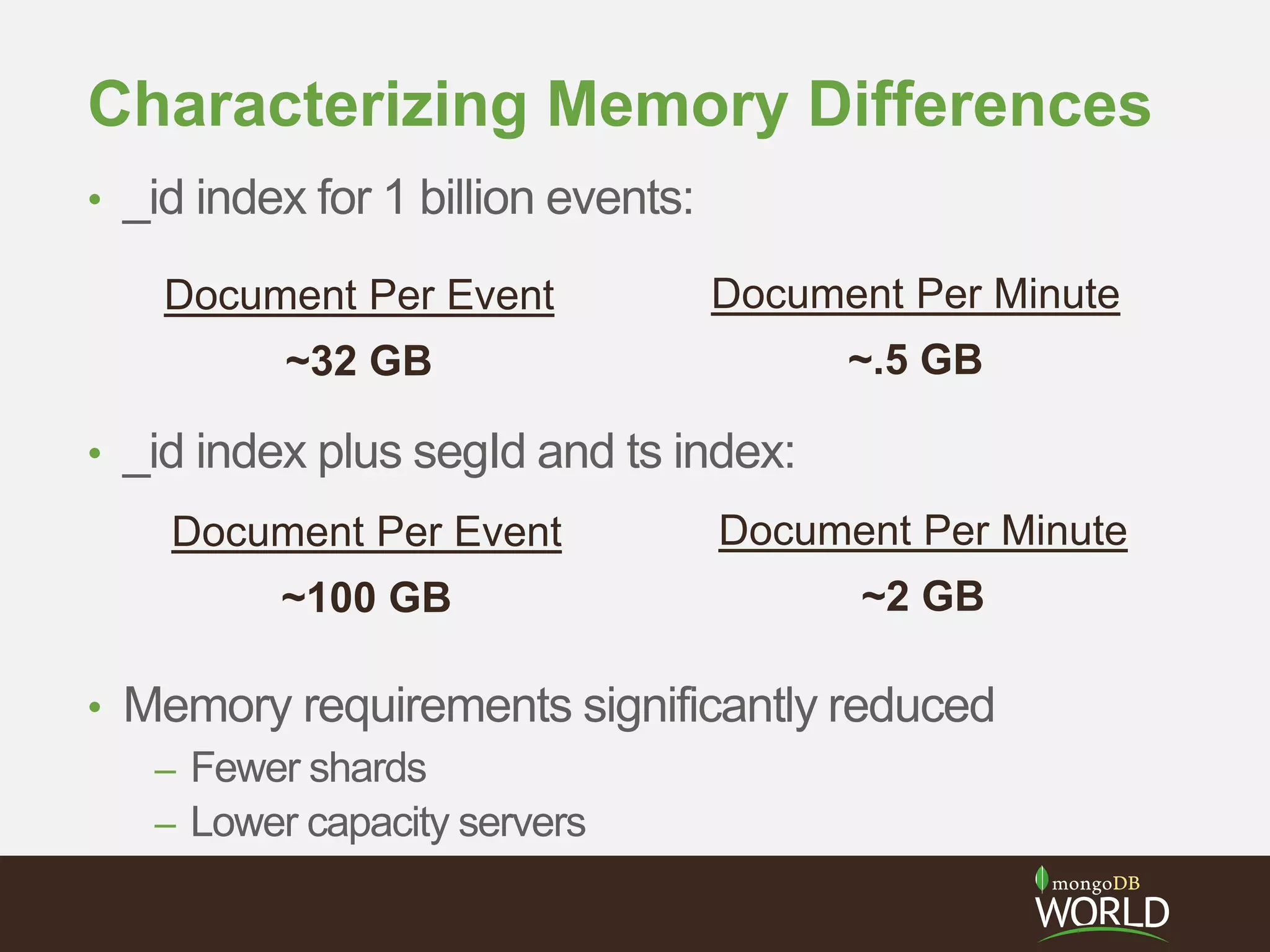

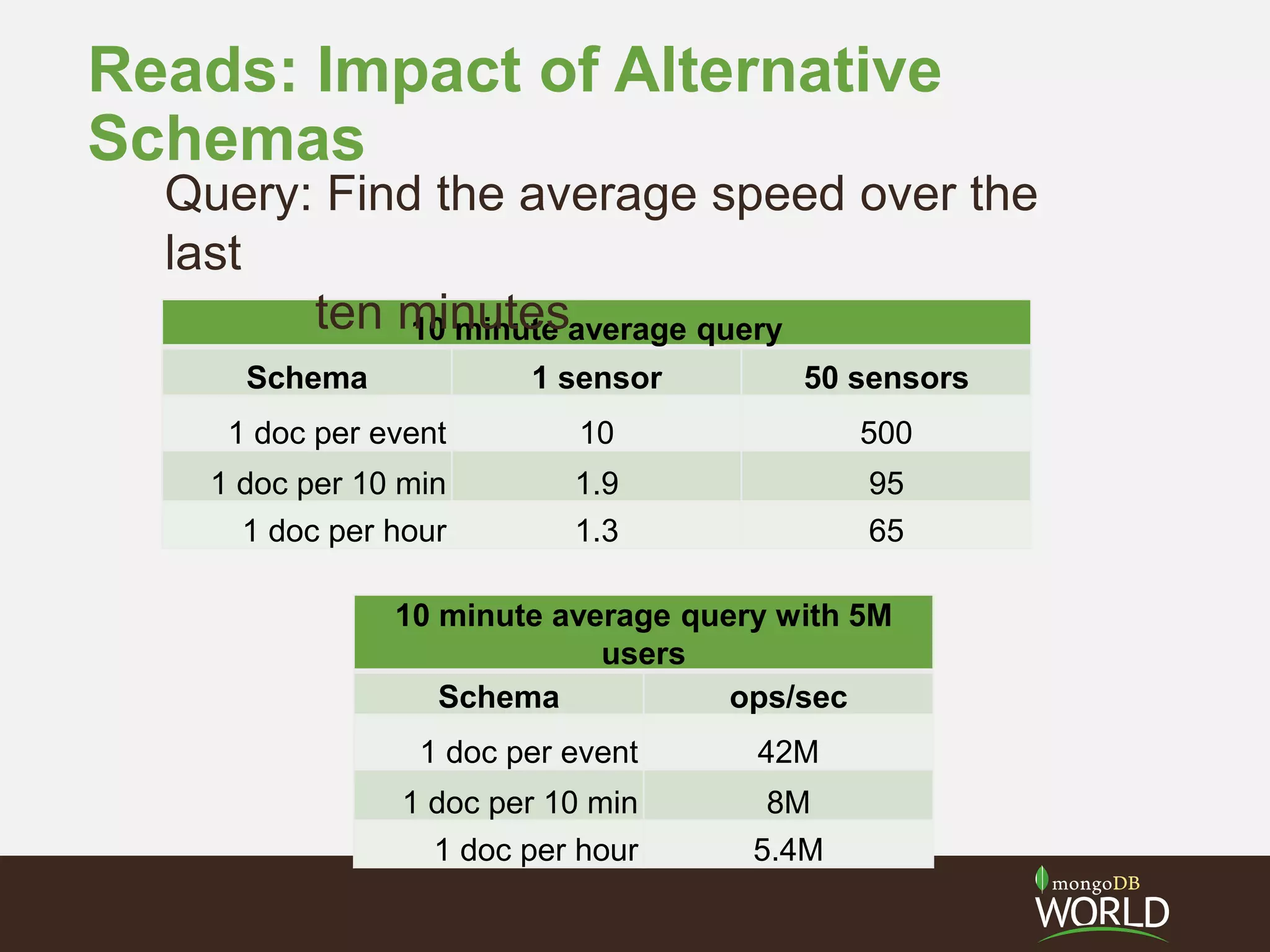

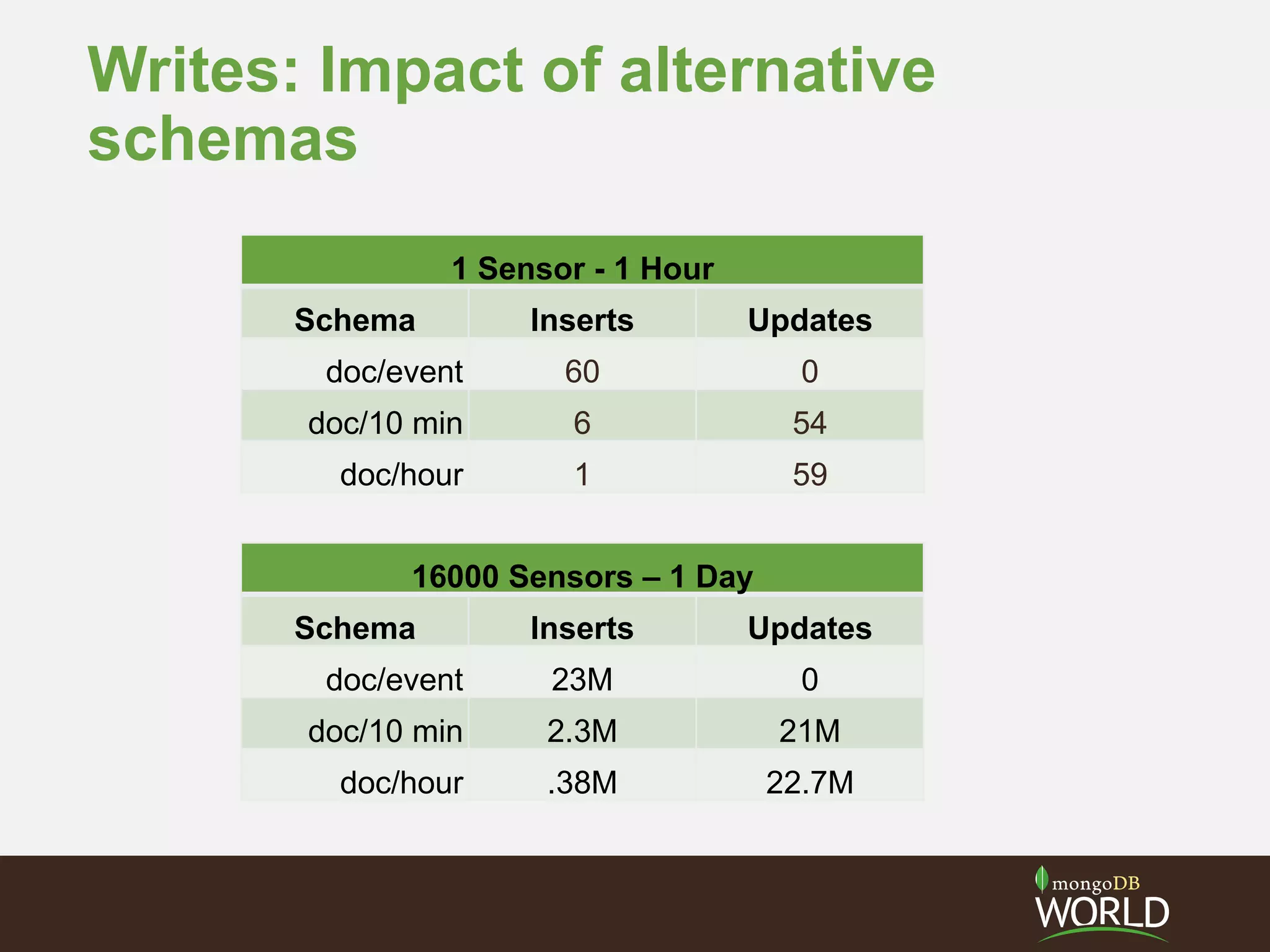

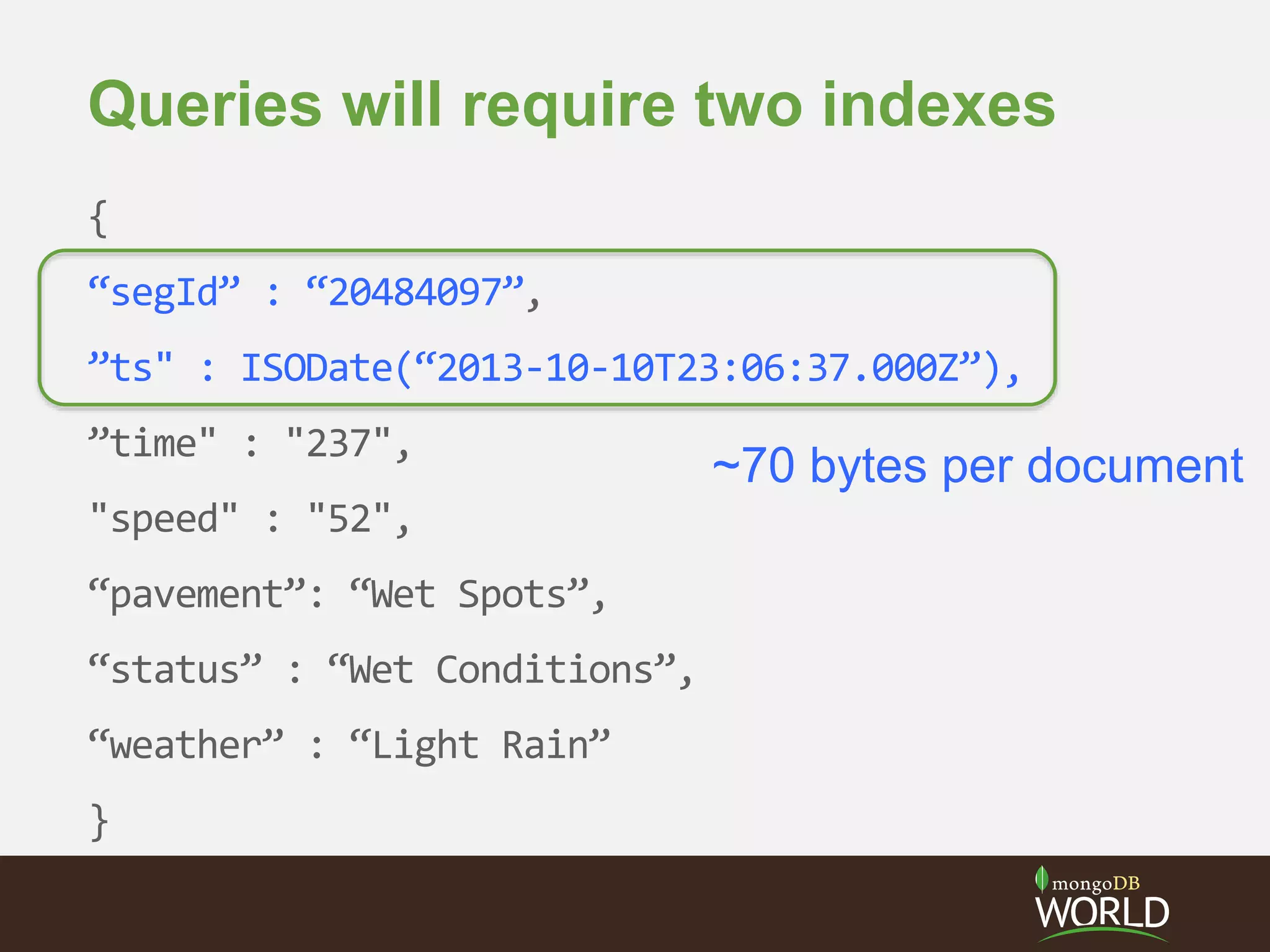

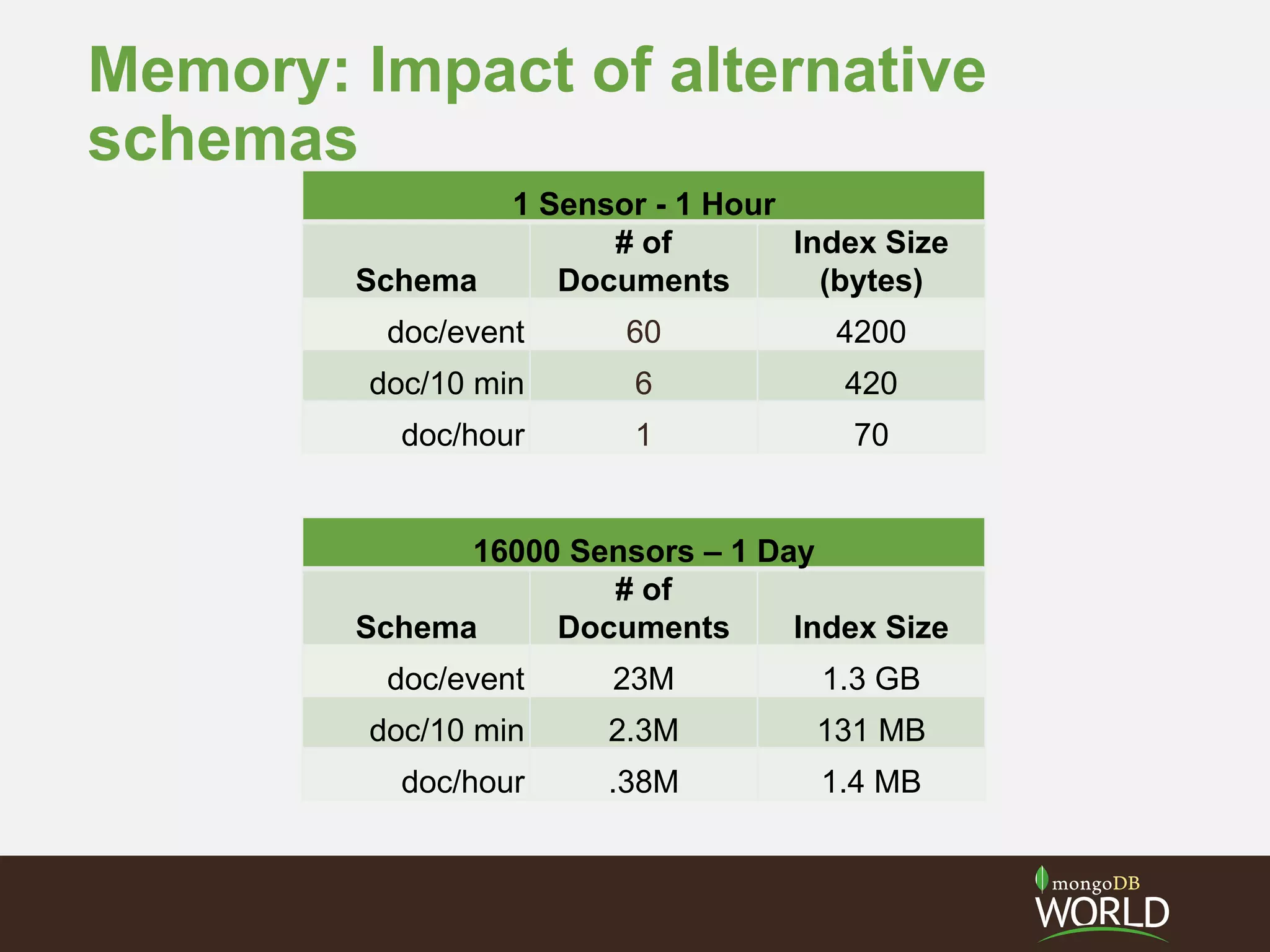

The document discusses schema design for time series traffic-monitoring data stored in MongoDB. It evaluates alternatives such as storing one document per event, per minute, or per hour. Storing aggregated data in fewer, larger documents improves write performance by turning most inserts into updates, speeds up analytic queries by reducing the number of documents read, and lowers memory requirements because fewer documents mean a smaller index. The best schema depends on the read and write workload of the specific application.
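To make the trade-off concrete, here is a minimal sketch of the document-per-hour alternative. It is an illustration under stated assumptions, not the schema from the slides. The field names (`sensor_id`, `hour`, `values`, `count`, `total_speed`) and both helper functions are hypothetical, and the code assumes one reading per second per sensor. It only builds the filter and update documents, so it runs without a database.

```python
from datetime import datetime, timezone


def hourly_bucket_update(sensor_id, ts, speed):
    """Build a (filter, update) pair for one reading in a document-per-hour schema.

    All field names are hypothetical, chosen for illustration only.
    """
    # Every reading within the same hour targets the same document.
    hour_start = ts.replace(minute=0, second=0, microsecond=0)
    query = {"sensor_id": sensor_id, "hour": hour_start}
    update = {
        # Store the raw reading under values.<minute>.<second>.
        "$set": {f"values.{ts.minute}.{ts.second}": speed},
        # Keep running aggregates so an analytic query can read one document.
        "$inc": {"count": 1, "total_speed": speed},
    }
    return query, update


def documents_per_hour(granularity):
    """Documents written per sensor per hour, assuming one reading per second."""
    return {"event": 3600, "minute": 60, "hour": 1}[granularity]


if __name__ == "__main__":
    ts = datetime(2013, 10, 10, 23, 6, 37, tzinfo=timezone.utc)
    query, update = hourly_bucket_update("sensor-1", ts, 52)
    print(query)
    print(update)
    for granularity in ("event", "minute", "hour"):
        print(granularity, documents_per_hour(granularity))
```

With a driver such as PyMongo, the pair could be passed to `collection.update_one(query, update, upsert=True)`, so the first reading of an hour creates the document and later readings update it in place. Under the one-reading-per-second assumption, the number of documents and index entries per sensor per hour drops from 3600 (per event) to 60 (per minute) to 1 (per hour).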