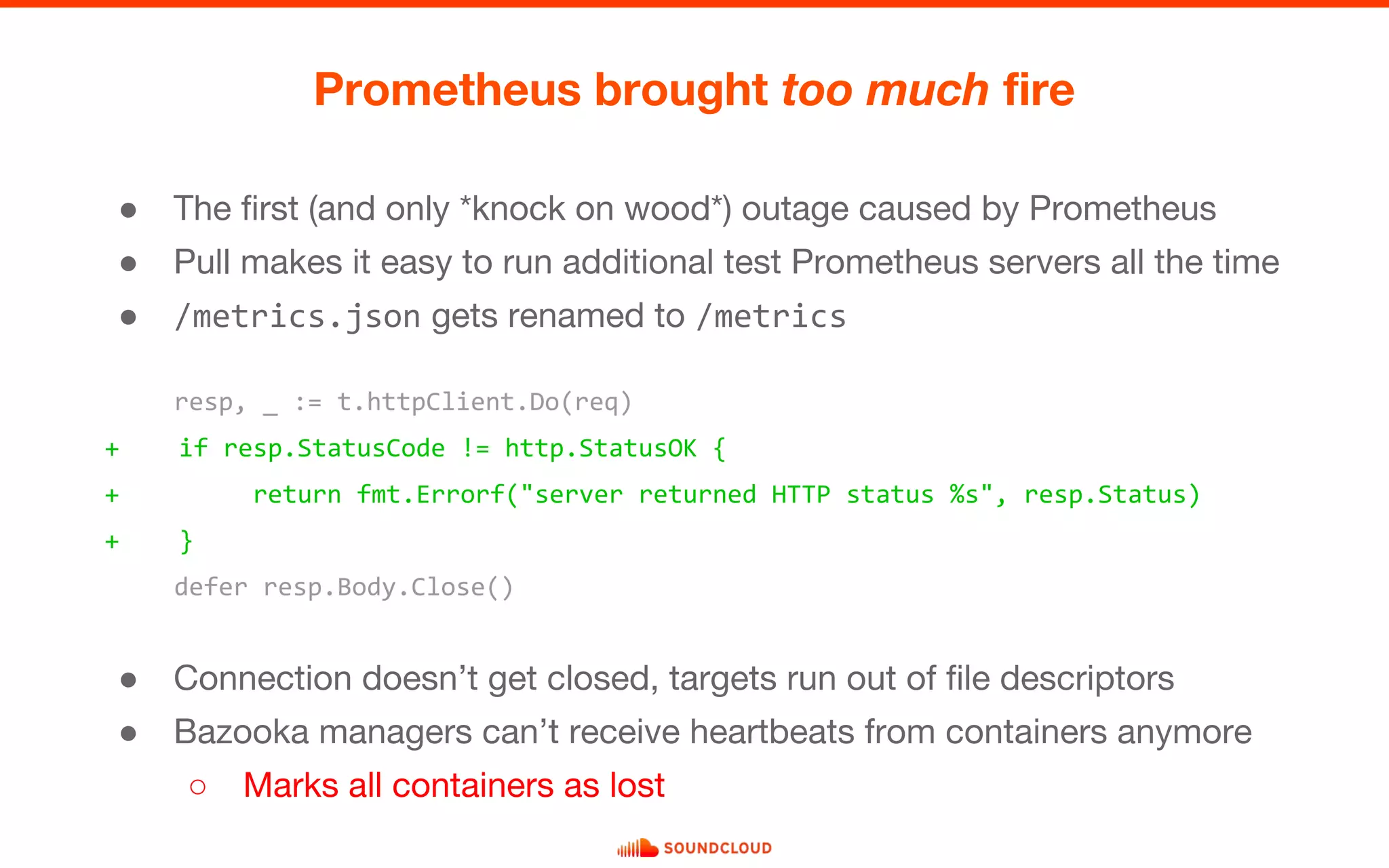

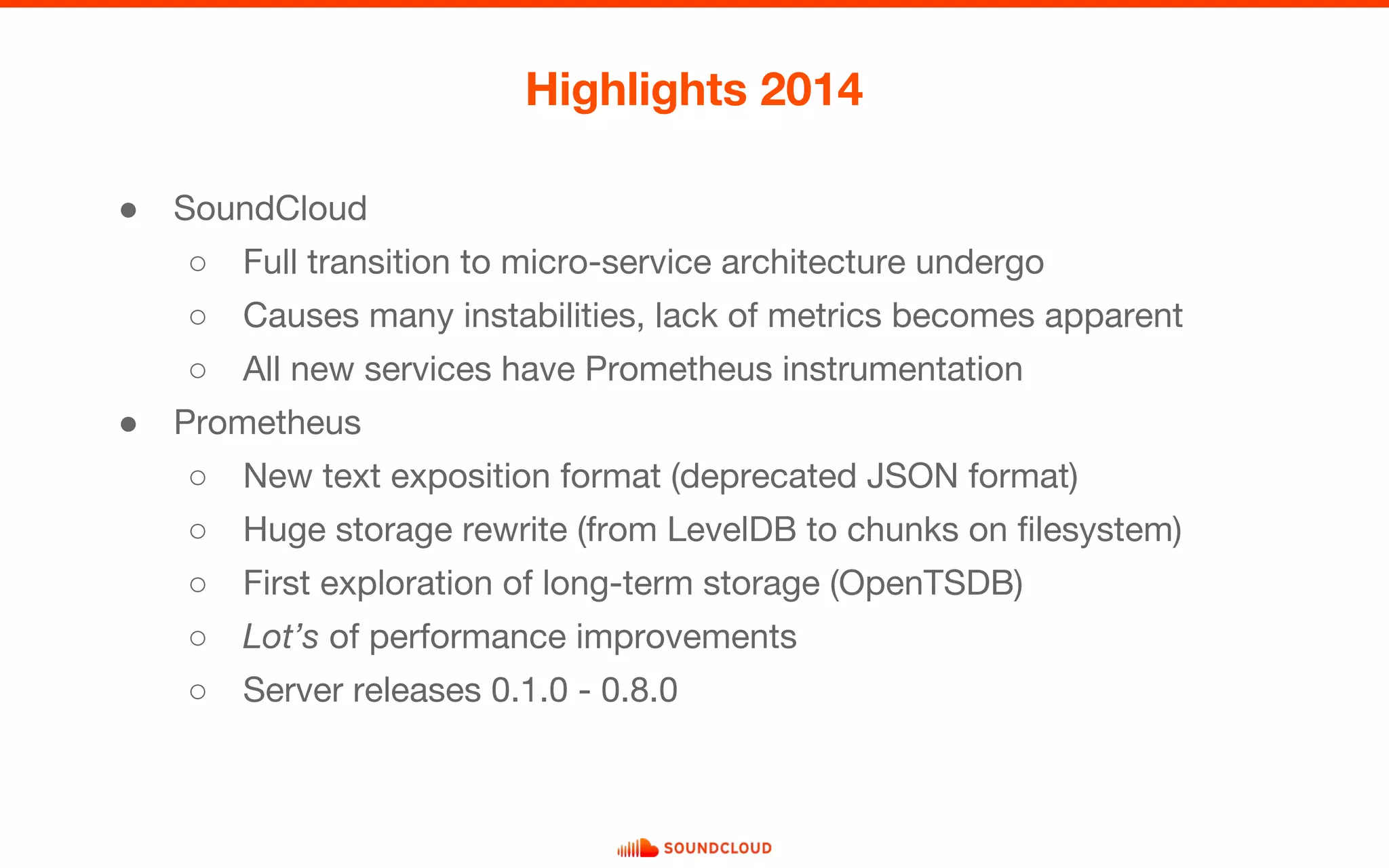

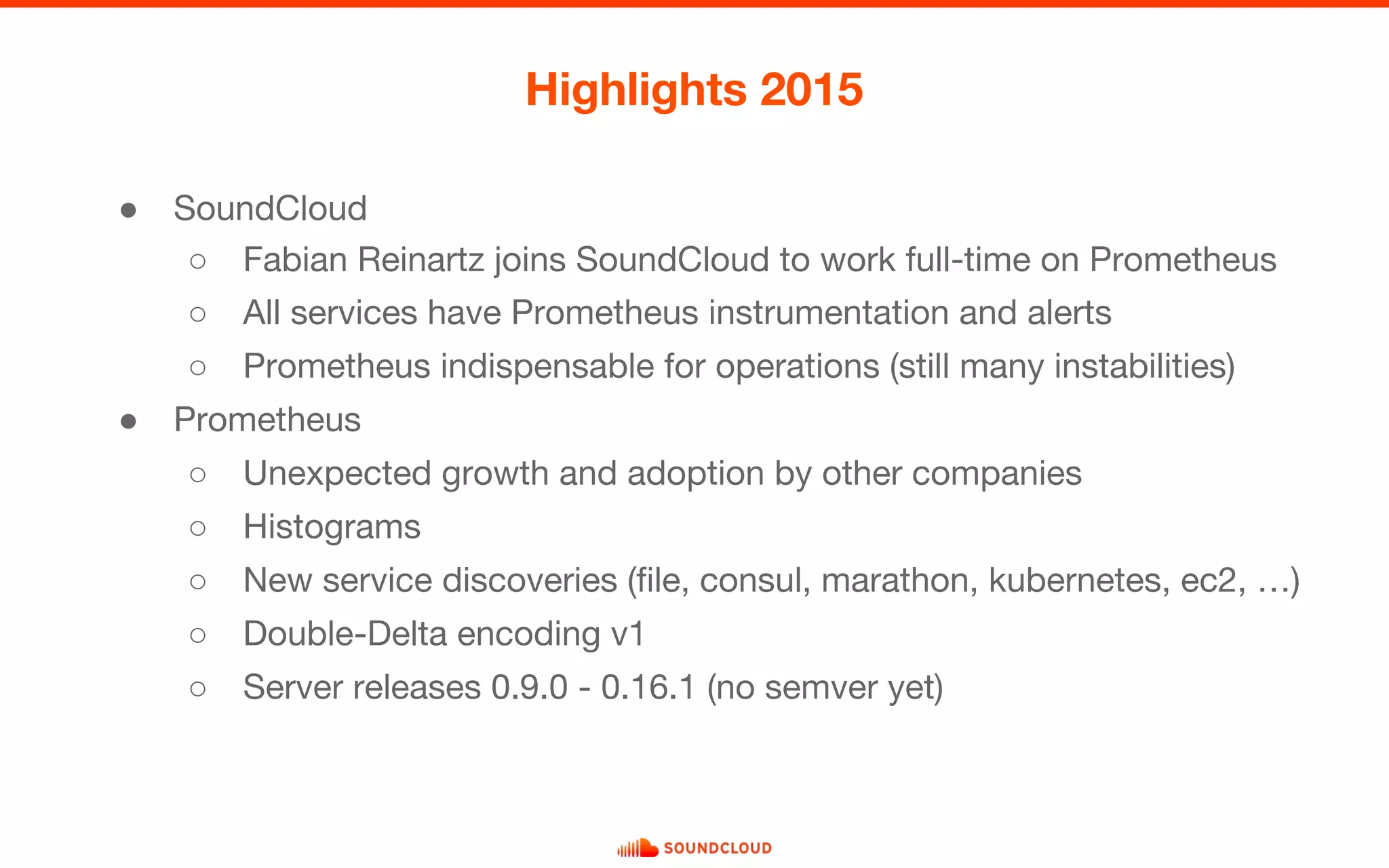

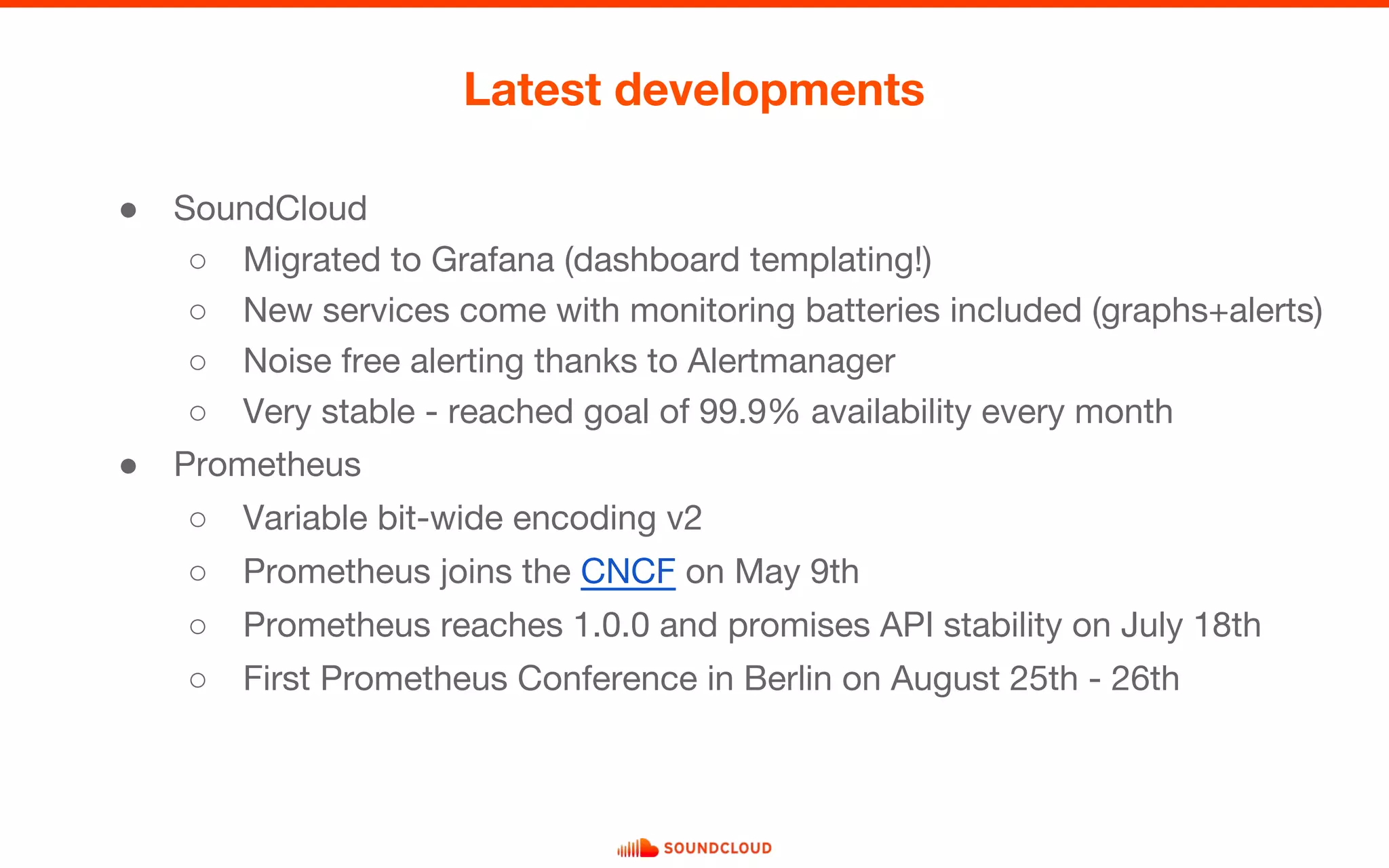

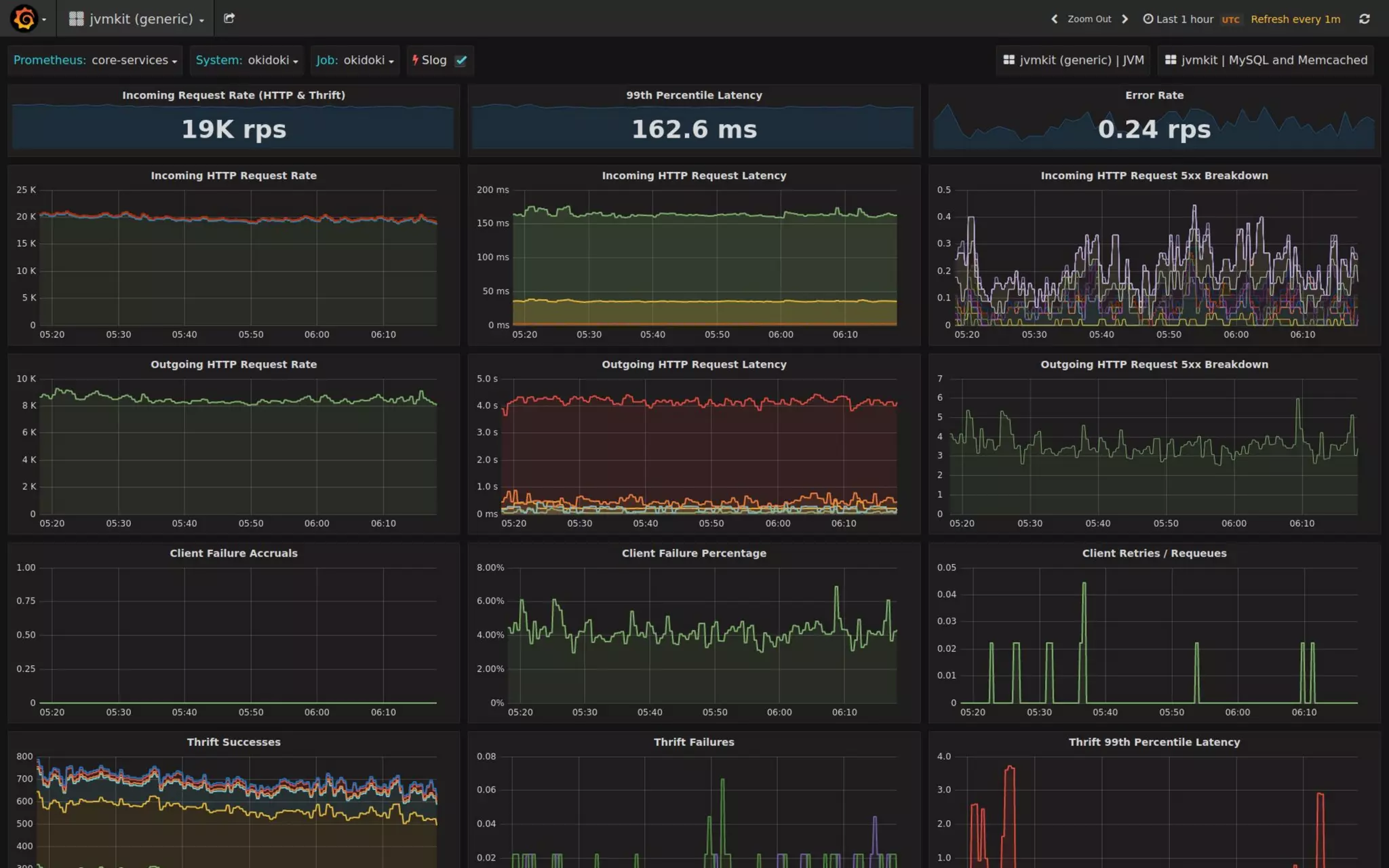

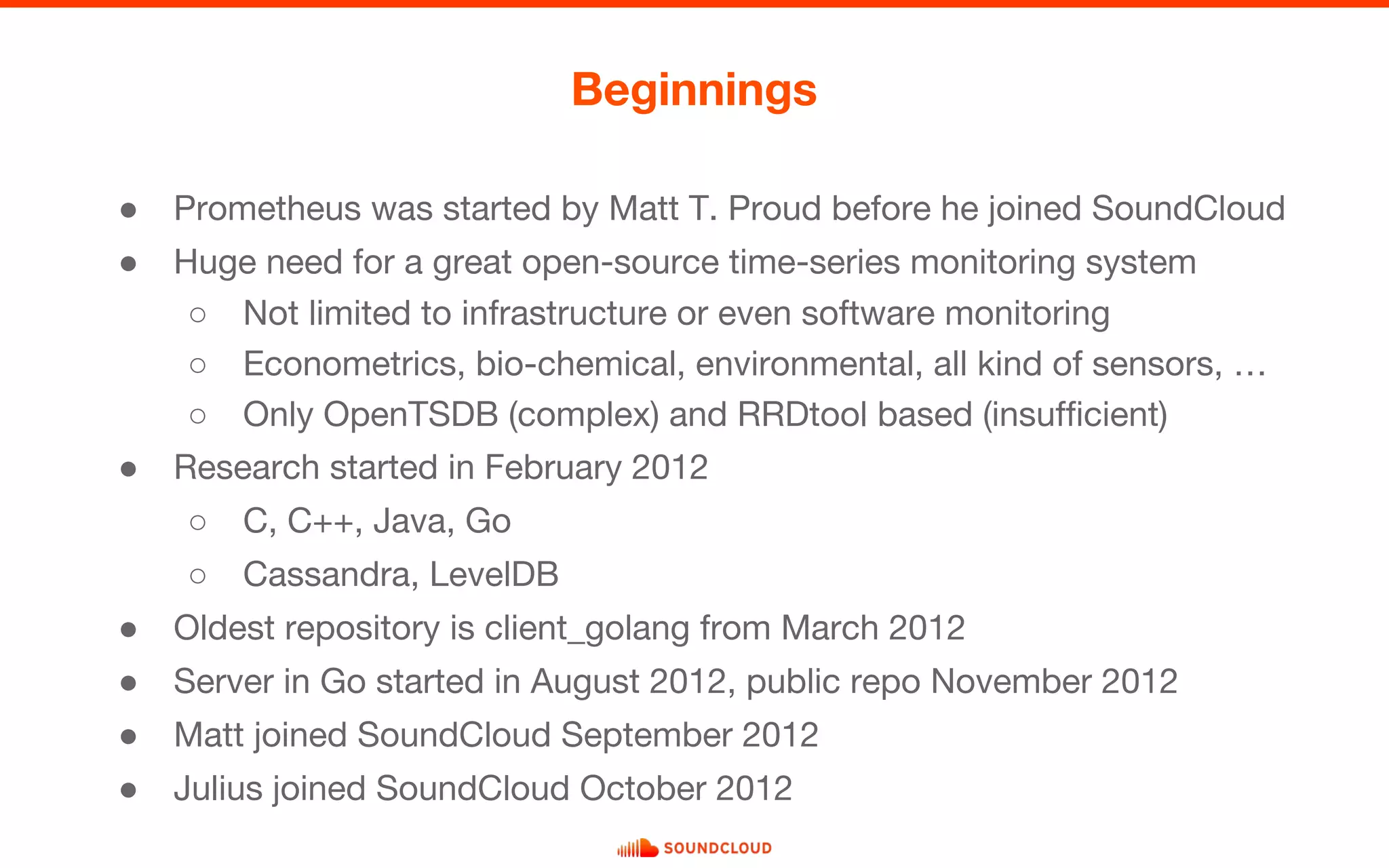

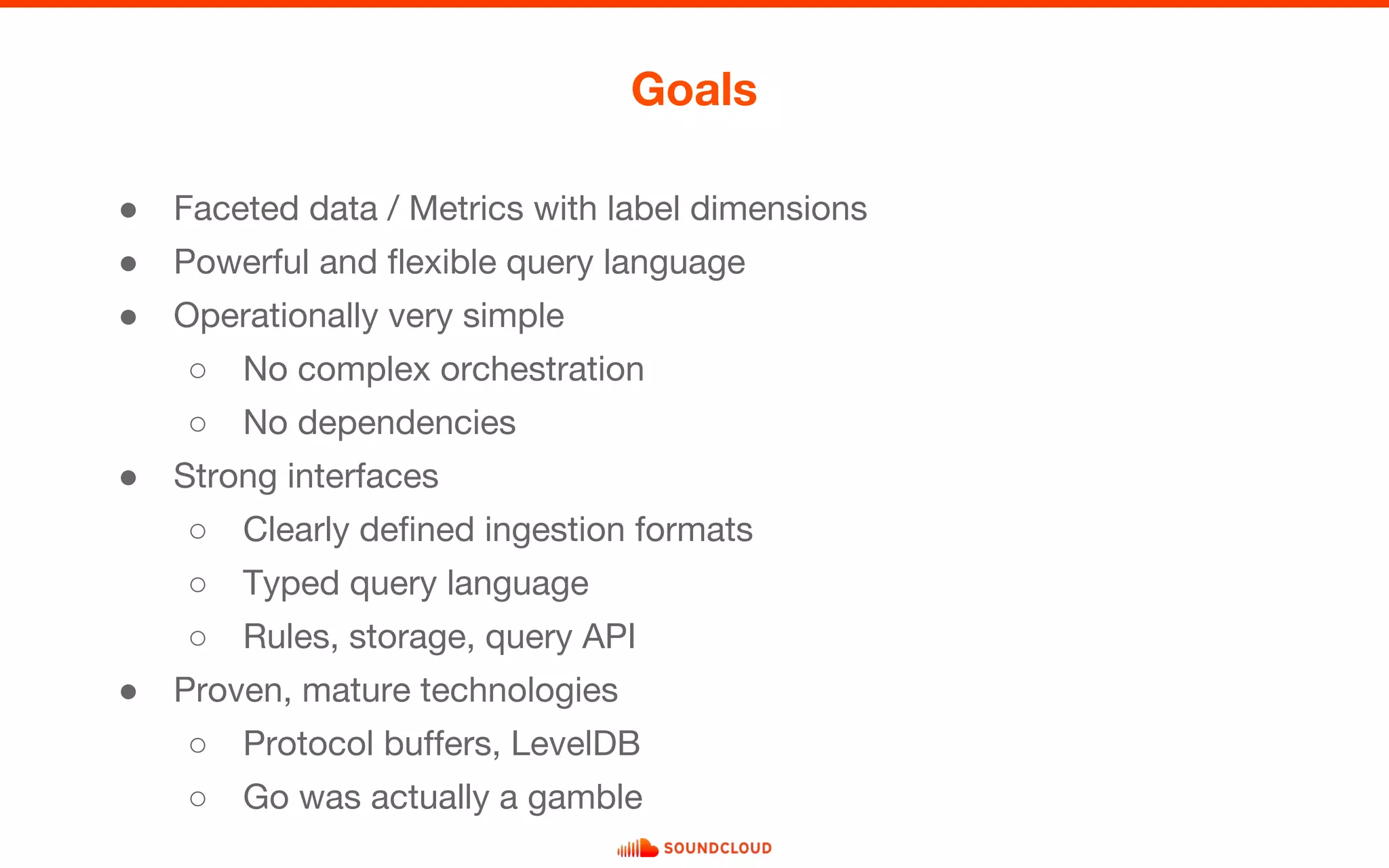

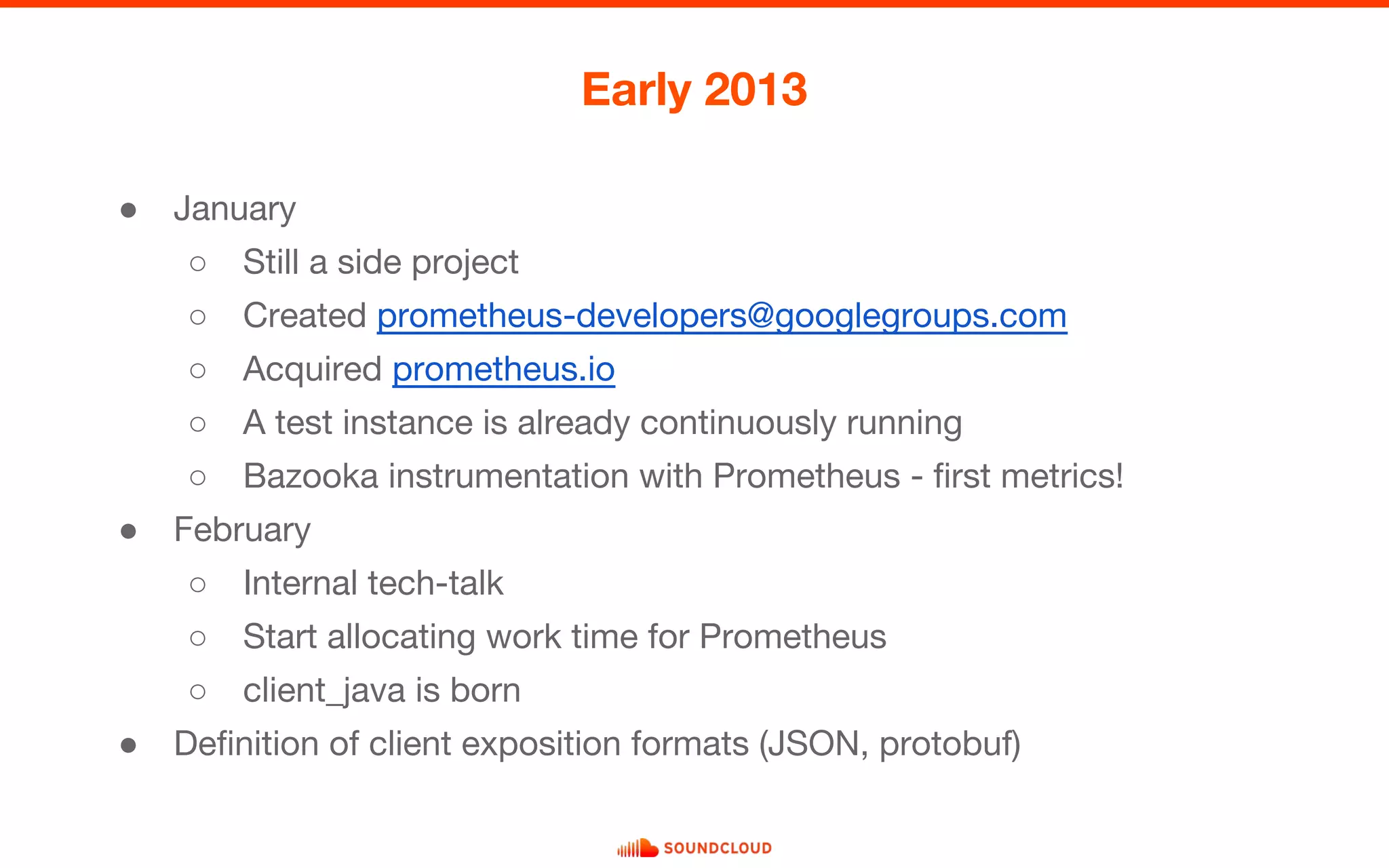

Prometheus was started at SoundCloud in 2012 by Matt T. Proud as an open-source monitoring system capable of handling diverse time-series data. Contributions from other developers followed, bringing advances such as service discovery and a more stable architecture. In 2016, Prometheus became a standalone project and reached version 1.0.0, by which point it had been widely adopted across the industry.

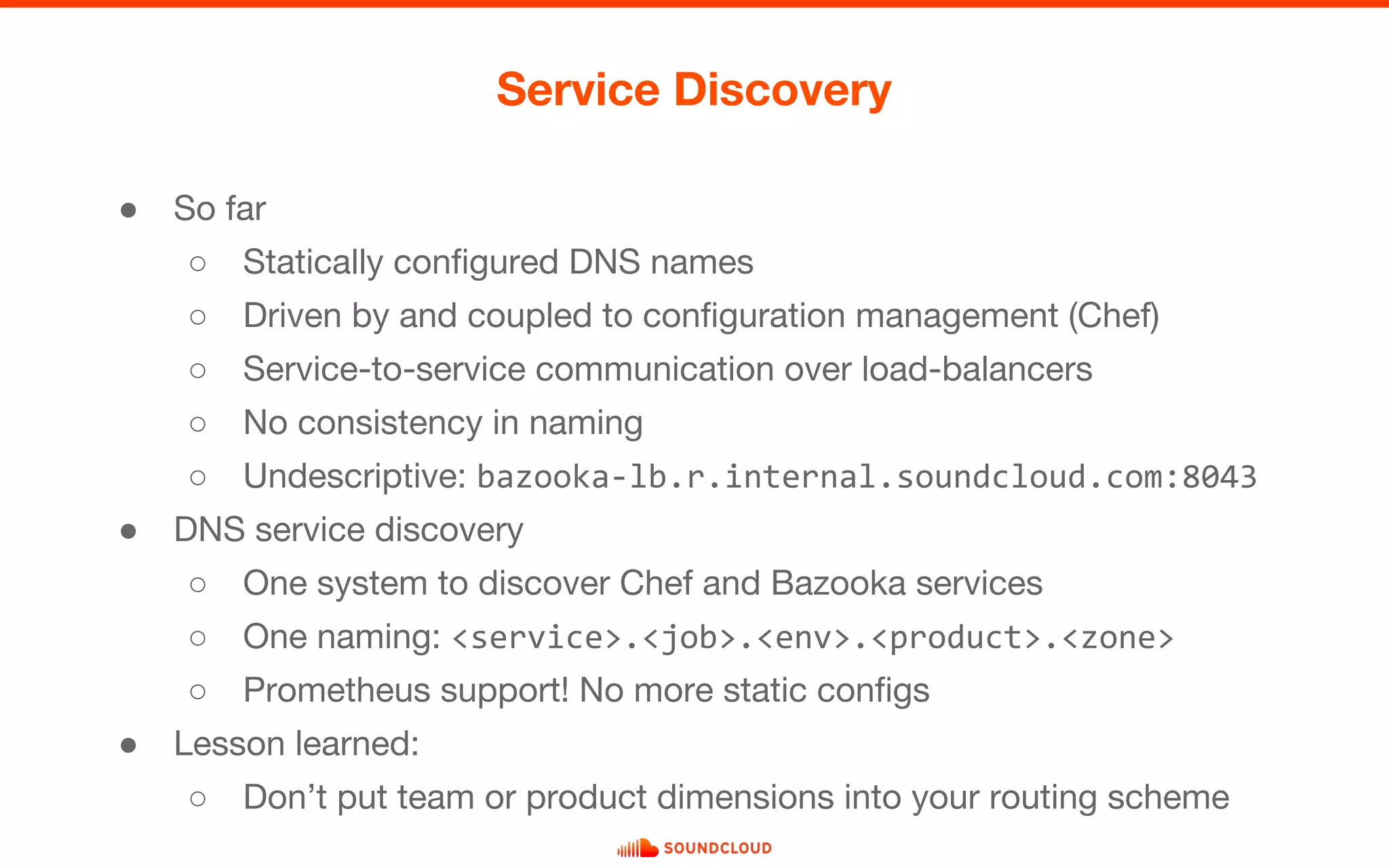

![Pushgateway](https://image.slidesharecdn.com/promcon-historyofprometheus-160829122656/75/The-history-of-Prometheus-at-SoundCloud-17-2048.jpg)

Pushgateway

- Pull vs. Push - A religious debate.
- Need to monitor ephemeral jobs (Map/Reduce on Hadoop, chef-client, ...)
- Work on Pushgateway starts in January

> “It is explicitly not an aggregator, but rather a metrics cache [...]”
>
> Bernerd Schaefer, January 2013
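
The "metrics cache, not an aggregator" distinction on this slide can be illustrated with a small toy model. This is only a sketch: `MetricsCache`, `push`, `scrape`, and the metric and job names are hypothetical and are not the Pushgateway API. It assumes that a push replaces whatever the same group pushed before, so the cache simply holds the last values an ephemeral job reported until a pull-based scraper collects them.

```python
from typing import Dict, Tuple

# Hypothetical toy model for illustration; not the real Pushgateway API.
GroupKey = Tuple[Tuple[str, str], ...]


class MetricsCache:
    """Holds the last pushed metrics per group; never sums or averages."""

    def __init__(self) -> None:
        self._groups: Dict[GroupKey, Dict[str, float]] = {}

    def push(self, labels: Dict[str, str], metrics: Dict[str, float]) -> None:
        # Identify the group by its sorted label pairs.
        key = tuple(sorted(labels.items()))
        # Replace the group's metrics outright instead of combining
        # them with earlier pushes: this is caching, not aggregation.
        self._groups[key] = dict(metrics)

    def scrape(self) -> Dict[GroupKey, Dict[str, float]]:
        # Return a copy of what a pull-based scraper would see.
        return {key: dict(values) for key, values in self._groups.items()}


cache = MetricsCache()
# Two runs of the same short-lived job push before they exit.
cache.push({"job": "nightly_backup"}, {"records_processed": 100.0})
cache.push({"job": "nightly_backup"}, {"records_processed": 40.0})
snapshot = cache.scrape()
```

After the second push, the snapshot holds 40.0 for `records_processed`, not 140.0. An aggregator would have combined the two runs; a cache only remembers the most recent one.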