The January 2017 ISSA Journal discusses key topics in cybersecurity, highlighting articles on enterprise security architecture, mobile device fragmentation, and the impact of machine learning on security practices. It addresses the ongoing need for qualified professionals in cybersecurity education and the challenges posed by ransomware attacks. The journal also reflects on significant events from 2016, emphasizing the importance of cybersecurity awareness and regulatory compliance in shaping future practices.

![The information and articles in this magazine have not been subjected to any formal testing by Information Systems Security Association, Inc. The implementation, use and/or selection of software, hardware, or procedures presented within this publication and the results obtained from such selection or implementation, is the responsibility of the reader.

Articles and information will be presented as technically correct as possible, to the best knowledge of the author and editors. If the reader intends to make use of any of the information presented in this publication, please verify and test any and all procedures selected. Technical inaccuracies may arise from printing errors, new developments in the industry, and/or changes/enhancements to hardware or software components.

The opinions expressed by the authors who contribute to the ISSA Journal are their own and do not necessarily reflect the official policy of ISSA. Articles may be submitted by members of ISSA. The articles should be within the scope of information systems security, and should be a subject of interest to the members and based on the author’s experience. Please call or write for more information. Upon publication, all letters, stories, and articles become the property of ISSA and may be distributed to, and used by, all of its members.

ISSA is a not-for-profit, independent corporation and is not owned in whole or in part by any manufacturer of software or hardware. All corporate information security professionals are welcome to join ISSA. For information on joining ISSA and for membership rates, see www.issa.org.

All product names and visual representations published in this magazine are the trademarks/registered trademarks of their respective manufacturers.

4 – ISSA Journal | January 2017

The Best Articles of 2016

Thom Barrie – Editor, the ISSA Journal

Editor: Thom Barrie, editor@issa.org

Advertising: vendor@issa.org

866 349 5818 +1 206 388 4584

Editorial Advisory Board

Phillip Griffin, Fellow

Michael Grimaila, Fellow

John Jordan, Senior Member

Mollie Krehnke, Fellow

Joe Malec, Fellow

Donn Parker, Distinguished Fellow

Kris Tanaka

Joel Weise – Chairman, Distinguished Fellow

Branden Williams, Distinguished Fellow

Services Directory (all at 866 349 5818 +1 206 388 4584)

Website: webmaster@issa.org

Chapter Relations: chapter@issa.org

Member Relations: member@issa.org

Executive Director: execdir@issa.org

Advertising and Sponsorships: vendor@issa.org

We’d like to acknowledge the passing of 2016, not by reminiscing about the breaches, malware, privacy invasions, and legislation (Andrea, Geordie, and Randy help us out with that), but by celebrating the articles the Editorial Advisory Board deemed the best of the year.

The 2016 Article of the Year

“Machine Learning: A Primer for Security” by Stephan Jou [Toronto Chapter]. Stephan lays out the workings of machine learning and artificial intelligence, painting a clear picture of this growing technology that some argue is still not ready for prime time. But the promise of combining big data and machine learning, whether for analyzing unimaginably huge amounts of data for business processes or picking up on the bad actors knocking, poking, and prodding our infrastructures, has me excited to see how 2017 plays out in this field.

The Best of 2016

“Enterprise Security Architecture: Key for Aligning Security Goals with Business Goals,” by Seetharaman Jeganathan. Seetharaman deserves an honorable mention, as his article was a very close runner-up.

“The Role of the Adjunct in Educating the Security Practitioner,” by Karen Quagliata [St. Louis Chapter].

“Fragmentation in Mobile Devices,” by Ken Smith.

“Gaining Confidence in the Cloud,” by Phillip Griffin [Raleigh Chapter] and Jeff Stapleton [Fort Worth Chapter].

“Crypto Wars II,” by Luther Martin [Silicon Valley Chapter] and Amy Vosters.

Congratulations to our best authors of the year! A number are already planning to submit further works in the upcoming year.

Readers’ Choice for 2016

So, these are the board’s choices. Do you concur? Please take a look through the year and let us know your top three or four selections. We’d love to have a Readers’ Choice. Some of my favorites not mentioned are “Impact of Social Media on Cybersecurity Employment and How to Use It to Improve Your Career,” Tim Howard [South Texas Chapter]; “Stop Delivery of Phishing Emails,” Gary Landau [Los Angeles Chapter]; “Beware the Blockchain,” Karen Martin; “The Race against Cyber Crime Is Lost without Artificial Intelligence,” Keith Moore [Capitol of Texas Chapter]; and “Why Information Security Teams Fail,” Jason Lang.

Let me know at editor@issa.org.

It’s been a great year in the ISSA Journal. Here’s looking forward to an even better year. Do you have an article to share? Bring it on.

—Thom](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-4-2048.jpg)

![Sabett’s Brief

By Randy V. Sabett – ISSA Senior Member, Northern Virginia Chapter

(Not) The Best of Cybersecurity, 2016 Version

So how many cybersecurity “Best of 2016” lists have you seen over the past few weeks? Well, this won’t be one of those lists, because as I’ve done in prior years, I’m going to cover events that I think were notable but that weren’t necessarily “best of.” And, as in past years, my wife thinks that this is a silly approach, but here goes anyway…

First off, the Internet has survived another year. Despite all of the predictions of gloom and doom that have been posited over the past decade or more, we’re still plugging away with the same basic infrastructure we’ve had for several decades. To some extent, this survival is a testament to its original design, which is adaptable to changing conditions and attacks.

Turning to a legislative event from very early in the year, the passage of the Consolidated Appropriations Act of 2016 included the Cybersecurity Information Sharing Act (CISA). CISA created a voluntary process for sharing cybersecurity information without legal barriers or threats of litigation. DHS and DOJ released additional guidance on information sharing under CISA in February and June. Based on personal experience in 2016, I find CISA has influenced a number of decisions to share information, including B2B, B2G, and G2B.

Continuing for a moment on the government side of things, in February the Administration released the Cybersecurity National Action Plan (“CNAP”). The CNAP provides a combination of near-term tactical actions and longer-term strategy components intended to “enhance cybersecurity awareness and protections, protect privacy, maintain public safety as well as economic and national security, and empower Americans to take better control of their digital security.”1 Good stuff, but proper implementation will be critical.

On the commercial side, businesses continued to be subjected to a variety of ever-evolving threats, including the incredible rise in both frequency and insidiousness of ransomware. 2016 saw ransomware evolve from phishing-based attacks on individual machines into an attack mechanism that threatened entire networks. SamSam (which exploits unpatched servers, moves laterally to any machine it finds, and then encrypts the entire network) proved especially overwhelming. Only robust patching and diligent backups offer resiliency.

In 2016, we saw cybersecurity become an integral part of the due diligence process for most M&A transactions (and personal experience bore this out). In fact, according to a recent survey, 85 percent of public company directors and officers say that an M&A transaction in which they were involved would likely or very likely be affected by “major security vulnerabilities.” In addition, 22 percent say that they wouldn’t acquire a company that had a high-profile data breach, while 52 percent say they would still go through with the transaction but only at a significantly reduced value.2

This interest in cybersecurity diligence is not just theoretical: in the midst of an October M&A transaction involving Verizon and Yahoo!, news broke of a Yahoo! breach that had occurred approximately two years earlier. This event raised speculation around what it might do to the deal. To me, the bigger question will be how the overall scope of the due diligence process will be influenced by cybersecurity in future deals.

1 https://www.whitehouse.gov/the-press-office/2016/02/09/fact-sheet-cybersecurity-national-action-plan.

2 https://www.nyse.com/publicdocs/Cybersecurity_and_the_M_and_A_Due_Diligence_Process.pdf.

To round out the year, I will end on a hopefully positive note. In December, the findings of the Commission on Enhancing National Cybersecurity were released.3 The Commission had been tasked with developing recommendations for ways to strengthen cybersecurity across both the federal government and the private sector. President Obama said in a statement that “[t]he Commission’s recommendations...make clear that there is much more to do and the next administration, Congress, the private sector, and the general public need to build on this progress.”

Amen to that: all stakeholders must meaningfully participate and address cybersecurity so that everyone benefits. Let’s hope that 2017 sees that participation increase. With that, I hope that your holiday season has been enjoyable and that your new year is off to a great start. Now I’m headed off to the refrigerator to come up with a top 10 list of leftovers for my wife. Looking forward to hearing from you in 2017!

About the Author

Randy V. Sabett, J.D., CISSP, is Vice Chair of the Privacy & Data Protection practice group at Cooley LLP, and a member of the Boards of Directors of ISSA NOVA, MissionLink, and the Georgetown Cybersecurity Law Institute. He was named the ISSA Professional of the Year for 2013, and chosen as a Best Cybersecurity Lawyer by Washingtonian Magazine for 2015-2016. He can be reached at rsabett@cooley.com.

3 https://www.nist.gov/cybercommission.

](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-5-2048.jpg)

![Enterprise Security Architecture: Key for Aligning Security Goals with Business Goals

By Seetharaman Jeganathan

In this article, the author shares his insights about why security architecture is critical for organizations and how it can be developed using a practical framework-based approach.

Abstract

Enterprise security architecture is an essential process that aims to integrate security as a part of business and technology initiatives handled by any organization. When the security goals and objectives are aligned with organizational business goals and objectives, any organization can make informed decisions about business ventures and protect organizational assets from ever-emerging security threats and risks. In this article, the author shares his insights about why security architecture is critical for organizations and how it can be developed using a practical framework-based approach.

Introduction

Enterprise security architecture (ESA) is a design process in which the current state of enterprise security is analyzed, gaps are identified based on effective risk management processes, and the identified gaps are closed by applying cost-effective security controls. It is a life-cycle process that enables any organization to protect itself from advanced security threats. Until recently, ESA was largely a technology effort in which the IT technical team owned the definition, implementation, and operation of security processes and controls. However, this model has created a vacuum with respect to business involvement and has failed to align the IT security functions with the organizational goals and objectives [11].

Security goals and objectives

Traditionally, information security functions have provided confidentiality, integrity, availability, and accountability services to information systems and infrastructure. These services are often referred to as the primary goals of information security functions. The primary objective is to secure the overall IT system and business functions as well as support growth of the underlying business. ESA is a key enabling factor in ensuring that the security goals and objectives are achieved as per the expectations of senior management [11].

Why security architecture?

• Security architecture is key to aligning security functions with the organization’s business functions

• Without a clearly defined architecture, security solutions cannot be balanced between overprotection and underprotection

• Security architecture functions enable accountability and help obtain support and commitment from senior management](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-22-2048.jpg)

![• Security architecture functions support IT functions during changes in the business processes

• Security architecture provides a snapshot of an organization’s security posture at any point in time [9]

Enterprise security architecture framework

Figure 1 shows the proposed enterprise security architecture framework discussed throughout this paper.

The framework begins with defining the security strategy, based on the risk profile of the organization. An organization’s security requirements are derived mainly from security threats and risks faced by the organization [4]. These requirements are analyzed in the framework to clearly define a security strategy for the organization. The framework leverages three major factors (people, processes, and technology) to implement the defined strategy across the organization. It is supported by other essential elements such as organizational governance, risk management, and IT governance bodies to effectively achieve total security of the organization. The author has referenced “The Business Model for Information Security” (BMIS) and designed this article with exclusive focus on the security architecture function. The BMIS model was originally created by Dr. Laree Kiely and Terry Benzel at the USC Marshall School of Business Institute for Critical Information Infrastructure Protection. Later, in 2008, ISACA adopted this model and has been promoting its concepts globally.

Figure 1 – Enterprise security architecture framework. Diagram labels: Total Security; Organizational Governance (executives, board of directors, stakeholders); Enterprise Risk Management (chief risk officer, risk management group); Enterprise IT / Security Governance (CIO, CISO, CSO, etc.); Enterprise Architecture (enterprise architects); Enterprise Security Architecture Framework; Security Strategy; Company Assets; Information Security; Corporate Security; Physical Security; Organizational Entities; IT Functions; Business Units; Business Partners; Customers.

Even though the proposed security architecture framework is a part of the enterprise architecture, it can also be rolled out separately as a new initiative for organizations whose enterprise architecture is not yet mature. In the sections below, the author shares his practical experience in implementing the proposed framework with several of his industry customers. The primary goal of the framework is to provide an organization-wide security architecture review process to ensure that security is an integral part of all business-critical systems and processes [2][7].

Note: Since this article focuses on security architecture in general rather than information security architecture specifically, it is appropriate to include corporate security, personnel security, and physical security aspects in this exercise.

People factor

This area focuses on the actors (people) who must operate together to effectively roll out the proposed framework. As an initial step, the enterprise security architecture group (ESAG) or enterprise security review board (ESRB), a governance body, must be formed if it does not already exist. The effectiveness of the framework depends on the involvement and participation of the identified team members. They must fulfill their required roles and responsibilities as effectively as possible. Because human resources are expensive assets for organizations, it is indispensable to get adequate support and commitment from senior management to effectively utilize human resources to protect the interests of the stakeholders. Senior management support can be obtained by developing a charter for the proposed ESA group that identifies the key roles and responsibilities of the group members. It is important to map the goals and objectives of this group to the overall organizational business goals and objectives and portray how this group will enable or support the growth of the underlying business functions [1].

Figure 2 depicts the proposed people factor top-down approach model to form the ESA group.

Figure 2 – People factor (top-down approach) model. Diagram labels: Senior Management; Enterprise Security Governance Board; Enterprise Security Architecture Group; Board Members; Stakeholders; Chief Risk Officer; Chief Security Officer; Corporate Security Head; Chief Information Security Officer; BU Heads; Security Architects; Information Risk Manager(s); Information Security Manager(s); Corporate Security Group Members.](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-23-2048.jpg)

![The ESA group must consist of people representing all business units of the organization such as HR, finance, R&D, IT, products, manufacturing, etc. It is important to note that the focus of this group is not only securing the information systems but also securing the organization with a holistic approach. Business insights and guidance are essential to derive a holistic “organization wide” security approach. A top-down approach will provide the necessary commitment and oversight from senior management; also, when there is a disagreement between business groups, senior management can liaise and resolve critical issues. It is extremely important for this group to cascade the architectural functions and decisions to the entire organization below and/or above them. The head of this group or its representatives must conduct regular “connect meetings” with the business units to provide security architecture oversight and guidance for all their technology and business initiatives [1].

One of the primary expectations and outcomes of this working group should be the development of security policies and standards for all organizational functions in which security is a key requirement. Security policies are directions from senior management to the organization on what is allowed and what is not allowed from the security standpoint. Security standards are guidelines developed to substantiate/support each policy and set directions for business units on how to adhere to the required policies [8].
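The relationship between policies and standards described above can be made checkable by keeping both in a small register and flagging any policy that no standard supports. The following is a minimal sketch under stated assumptions: the `Policy` and `Standard` classes, the `unsupported_policies` helper, and every register entry are hypothetical illustrations, not part of the author's framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Senior-management direction on what is and is not allowed."""
    policy_id: str
    statement: str


@dataclass(frozen=True)
class Standard:
    """Guideline telling business units how to adhere to one policy."""
    standard_id: str
    supports: str  # policy_id of the policy this standard substantiates
    guidance: str


def unsupported_policies(policies, standards):
    """Return the ids of policies that no standard substantiates."""
    supported = {s.supports for s in standards}
    return [p.policy_id for p in policies if p.policy_id not in supported]


# Hypothetical register entries, for illustration only.
POLICIES = [
    Policy("POL-01", "Customer data must be encrypted in transit."),
    Policy("POL-02", "Changes to production systems must be approved."),
]
STANDARDS = [
    Standard("STD-01", "POL-01", "Use TLS for all external web traffic."),
]

if __name__ == "__main__":
    # POL-02 has no supporting standard yet, so it is reported.
    print(unsupported_policies(POLICIES, STANDARDS))
```

A check like this could run whenever the working group revises the register, so that a new policy is not published without guidance on how to adhere to it.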

Note: The author is highly inspired by the series of books, Information Security Policies Made Easy, by Charles Cresson Wood and recommends them as reference material for any organization creating relevant security policies. However, the samples provided in the books should be used as an inspiration and must not be adopted directly without careful review. The teams working on defining the policies must also take into consideration industry regulations, country-specific laws, and compliance requirements before defining the policies.

Process factor

This area focuses on how the security architecture review process should work in practice at any given organization. The need for an organization-wide risk management process is now greater than ever because information systems and technology are widely used for business functions across the world. Information systems are subject to serious security threats. Threat agents exploit known and unknown vulnerabilities and cause damage to information systems. This impacts the confidentiality, integrity, availability, and accountability goals of security functions. Security breaches can even cause permanent damage to organizations and drive them out of business. Recent laws and compliance requirements make senior management personally accountable for any negligence in securing their customers’ personally identifiable information (PII), financial data, and, in the healthcare industry, personal health information (PHI). Therefore, it is of utmost importance that the senior management, mid-level, and lower-level employees of an organization understand their roles and responsibilities in protecting the organization’s resources effectively from security risks [1].

Enterprise risk management is focused on managing risks faced by the organization. Security risks are one among several risks faced, but they are more severe than the others. Organizations generally follow widely known risk management frameworks (NIST, ISACA, etc.) or custom-made frameworks specific to the organization based on its culture, laws, and compliance requirements. The author bases the discussion and illustrations in this article on the NIST (SP 800-39) risk management process, which suggests that risk management is carried out as a holistic, organization-wide activity that addresses risk from the strategic level to the tactical level. This enables organizations to make informed decisions about their security activities based on the outcome of the risk management process already in place [10].
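To make the multitiered view concrete, the sketch below records risks against the three SP 800-39 tiers and selects the "qualified" risks that exceed a tolerance, ordered from the strategic tier down to the tactical tier. The tier names come from SP 800-39; everything else is an assumption made for illustration: the `Risk` class, the 1-5 likelihood and impact scales, the likelihood-times-impact score, the `qualified_risks` helper, and the sample entries.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ORGANIZATION = 1          # Tier 1: strategic level
    MISSION_BUSINESS = 2      # Tier 2: mission / business processes
    INFORMATION_SYSTEMS = 3   # Tier 3: tactical level


@dataclass(frozen=True)
class Risk:
    name: str
    tier: Tier
    likelihood: int  # assumed 1-5 scale, not prescribed by SP 800-39
    impact: int      # assumed 1-5 scale, not prescribed by SP 800-39

    @property
    def score(self) -> int:
        # Simple likelihood x impact score, chosen only for illustration.
        return self.likelihood * self.impact


def qualified_risks(risks, tolerance):
    """Keep risks scoring above the tolerance, strategic tier first,
    then by descending score within each tier."""
    over = [r for r in risks if r.score > tolerance]
    return sorted(over, key=lambda r: (r.tier.value, -r.score))


# Hypothetical risk register entries.
SAMPLE_RISKS = [
    Risk("Unpatched e-commerce server", Tier.INFORMATION_SYSTEMS, 4, 5),
    Risk("No organization-wide risk strategy", Tier.ORGANIZATION, 3, 4),
    Risk("Outdated visitor log process", Tier.MISSION_BUSINESS, 2, 2),
]

if __name__ == "__main__":
    for risk in qualified_risks(SAMPLE_RISKS, tolerance=10):
        print(risk.tier.name, risk.name, risk.score)
```

The tolerance stands in for the outcome of the framing step; in practice an organization would set it, and the scoring method, through its own risk management strategy.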

Figure 3 depicts the NIST risk management process and multitiered organization-wide risk management approach.

Figure 3 – NIST risk management process. Diagram labels: Multitiered Organization-Wide Risk Management; Strategic Risk; Tactical Risk; Tier 1 (Organization); Tier 2 (Mission / Business Processes); Tier 3 (Information Systems); Risk Management Process (Frame, Assess, Respond, Monitor).

Note: As the scope of this paper is not to detail the NIST risk management process, readers are encouraged to read the NIST SP 800-39 document to understand the risk management framework.

An important discussion in SP 800-39 is that information security architecture is an integral part of an organization’s enterprise architecture. However, the author suggests from his experience that organizations that do not yet have a mature enterprise architecture must also roll out the security architecture processes in their IT program initiatives. The primary purpose of the security architecture review process is to ensure that specific security requirements are reviewed and cost-effective security solutions (management, operational, and technical) are suggested/designed for qualified risks that must be mitigated as per the risk management strategy. Organizational security requirements could also arise from other factors such as policies, standards, laws, and compliance regulations, among others. These requirements must also flow](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-24-2048.jpg)

![into the security architecture review process as depicted in figure 4 and be addressed properly [10].

Figure 4 – Enterprise security architecture process drivers. Diagram labels: Risk Management Framework; Other Drivers; Security Requirements; Enterprise Security Architecture Review Process.

Enterprise security architecture review process

An enterprise security architecture review process is primarily conducted to derive the most appropriate security solution(s) for the qualified requirements. Enterprise security architecture review board members should meet to review the security requirements and brainstorm possible cost-effective solutions, which could be management, operational, or technical controls, or a combination of these, to mitigate risks to an acceptable level. These steps are referred to as a security requirements review and security controls review. Once a cost-effective security control is identified, a security design review is conducted for functional and non-functional requirements, depending on the type of the security control(s) identified [8].

A high-level summary of the security design review process for each control type is given in table 1; this is not an exhaustive list of design review steps but a set of suggestions to kick-start the process [8].

Table 1 – Security controls design review (high-level summary)

Security control type: Management

• Conduct current-state assessment and identify gaps

• Review the associated security policies and standards

• If required, create new security policies/standards or modify the existing policies to meet the security requirements

• Document and submit the recommendations

Security control type: Operational

• Conduct current-state assessment and identify gaps

• Suggest a new operational model/process, or amendments to the existing one, to meet the security requirements

• Document and submit the recommendations

Security control type: Technical

• Conduct current-state assessment and identify gaps

• Suggest new controls or changes to the existing technical control to meet the security requirements

• Conduct cost-benefit analysis and security return on investment (SROI) analysis to prove the cost effectiveness of the solutions

• Provide functional design inputs

• Provide non-functional design inputs

• Document and submit the recommendations

After security design recommendations are submitted to the implementation team, it is vital for security architects to provide the required support throughout the implementation process and ensure that the proposed design recommendations are implemented as per the suggestions made in the design review. This process is referred to as the security implementation review. After a security control is implemented, thorough quality assurance testing must verify that the control mitigates the underlying risk(s) as per the requirements. If there is still any gap in risk mitigation, then the whole process must be repeated until the underlying risk is mitigated to the acceptable level. This way, the enterprise security review process could also be aligned with the organization’s SDLC process being followed in the information systems project implementation [8]. Figure 5 describes the proposed review process flow from beginning to end.

Figure 5 – Enterprise security architecture process flow. Diagram labels: Start; Security Requirements Review; Security Controls Review; Decision (Management, Operational, Technical); Security Design Review; Security Implementation Support; Security Post-Implementation Review; Risk Mitigation? (Yes, No); Stop.

Technology factor

This area focuses on the technology aspects of the information systems layer supporting the business functions. The](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-26-2048.jpg)

![process begins with creating a blueprint(s) of the current state (“as-is”) of the information systems layer (logical and technical) in the primary and secondary data center(s) of the organization. Figure 6 provides a high-level logical view of the primary data center.

Figure 6 – Organization data center – logical view

After a high-level blueprint is created, the team has to move on to creating more focused blueprints of areas including, but not limited to, the following:

1. Detailed blueprints of the network segments such as the DMZ layer, internal network layers (intranet), and external networks such as partner networks, Internet, VPN networks, etc.

2. Detailed blueprints of the infrastructure layer such as servers, databases, mail servers, file servers, etc.

3. Application-specific architecture diagrams, requested from the individual application teams (mission critical and all others) if not already available [4]

Figure 7 depicts a high-level logical and physical deployment sample architecture blueprint of an e-commerce application in a financial services company.

Figure 7 – Sample e-commerce application – logical/deployment architecture

After completion of the above-mentioned exercise, the architecture team would have relevant information to review and create a detailed inventory of the current state of information security controls in place at the physical, network, infrastructure, and application levels [3].

There are several possible approaches to assessing the current-state security controls of physical, network, infrastructure, and application layer components; one such approach is creating a 3x3 matrix of current security controls classified into types such as preventive, detective, and corrective controls (table 2) [8].

Table 2 – Sample controls review matrix (e-commerce application). Columns: Preventive, Detective, Corrective.

Administrative

• Acceptable usage policy

• Change management policy

• Effective change management process

Technical

• Data encryption

• SSL/TLS transport encryption

• Secure configuration

• Access control

• Vulnerability scanning

• Secure coding/review

• Effective incident response

Physical

• Application-specific control(s), if any, in each column

After completing the above exercise at all levels, the current security posture (“as-is”) of the organization will have been documented. The next critical steps are:

a) Conduct a gap analysis of current-state security

b) Conduct a risk analysis of critical information systems

An effective risk management process is key here to finding the gaps in the current state and the residual risk on the business-critical information systems. The architecture team must review the gap analysis and risk analysis reports to derive the requirements for the desired (“to-be”) state architecture to adequately protect the identified assets. The requirements must be presented to senior management, the governance board, etc., for approval, funding, and adequate support for implementation. It is extremely important to map the goals of the security architecture with business goals and](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-27-2048.jpg)

![• US Department of Defense architectural framework, and

much more

Organizations can leverage these frameworks and custom-

ize them to meet security architecture requirements. As the

scope of this paper is not to review these frameworks in detail,

the author notes that organizations must choose an architec-

ture framework that is flexible enough to support business

growth and adapt for changes in the business environment

and processes [5].

Conclusion

The enterprise security architecture process streamlines se-

curity functions of an organization to achieve effective total

security. In this article, the author proposed an enterprise se-

curity architecture framework and detailed the pillars (peo-

ple, process, and technology) of the framework. Whether

adopting this framework or an industry-recognized frame-

work is a decision to be made by the organization, depending

on the culture, business environment, and industry com-

pliance requirements. Any framework will be effective only

when adequate support is given. In order to obtain desired

outcomes of an architecture framework, it must be under-

stood and supported by the senior management. In today’s

economy-centric business environments, a security architec-

ture framework must be increasingly business oriented rather

than a technology-centric framework to obtain expected re-

turns on security investments.

References

1. Architecture Compliance. (n.d.), The Open Group. Re-

trieved November 09, 2016, from http://pubs.opengroup.

org/architecture/togaf9-doc/arch/chap48.html.

2. Breithaupt, J., & Merkow, M. S. Principle 11: People,

Process, and Technology Are All Needed to Adequately

Secure a System or Facility, Information Security Prin-

ciples of Success, Pearson IT Certification (2014, July 4).

Retrieved from http://www.pearsonitcertification.com/

articles/article.aspx?p=2218577&seqNum=12.

3. Building an e-commerce Solution Architecture, Dami-

con. Retrieved November 9, 2016, from http://www.dam-

icon.com/resources/Architecture_Practices.pdf.

objectives to garner the support from management and board

of directors [5].

As the technology environment is ever changing due to factors such as changes in business processes, the business environment, technology adoption, and automation, the security architecture team must remain an active and vigilant group in any organization so that it can adapt to the changes and support the business in functioning without disruption. It is essential to remember that IT and security functions must be business enablers rather than roadblocks for business functions.

Note: Organizations with mature information security processes have adopted one or more of the industry frameworks listed below to implement the minimum required security controls and the desired security architecture for effective information security:

• ISO 27001:2013 Information Security Standard

• NIST Cybersecurity Framework

• ISACA COBIT 5 Information Security

• SANS Critical Security Controls, etc. [5]

Incorporating security architecture in enterprise

architecture

MIT Sloan School’s Center for Information Systems Research

(CISR) defines enterprise architecture as “the organizing log-

ic for business process and IT infrastructure reflecting the

integration and standardization requirements of the firm’s

operating model. The enterprise architecture provides a long-

term view of a company’s processes, systems, and technol-

ogies so that individual projects can build capabilities—not

just fulfill the immediate needs” [12].

In simple terms, enterprise architecture provides a logical

view of the enterprise business layer (functions and process-

es), the IT infrastructure layer, the data layer, and the applica-

tions layer. As enterprise architecture is not the focus of this

article, the author would emphasize that security architecture

could be part of the enterprise architecture and provide ad-

equate security for all the architecture functions. Figure 8

depicts a security-enabled enterprise architecture view of an

organization [13].

Security architecture frameworks

There are several industry frameworks that provide architec-

tural approaches for meeting security requirements. Some of

them to be noted are:

• SABSA comprehensive framework for enterprise security

architecture and management

• Zachman framework of IBM

• The Open Group architecture framework

• The Open Security architecture group framework

• UK Ministry of Defence architecture framework

Enterprise Architecture

Business Architecture

Information

Architecture

Technology

Architecture

SECURITY

SECURITY

Figure 8 – Security enabled enterprise architecture view


Enterprise Security Architecture: Key for Aligning Security Goals with Business Goals | Seetharaman Jeganathan](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-28-2048.jpg)

![TouchWiz. Additionally, carriers such as Verizon, AT&T, and

T-Mobile are able to leverage their control of the airwaves to

drop proprietary third-party software onto many Android

devices before they hit the market. The NFL Mobile applica-

tion and Verizon’s line of in-house applications are examples

of such installations. These applications cannot normally be

removed by the user without gaining root access to the phone

or tablet, which, of course, creates additional security prob-

lems and exposures.

Microsoft controls a much smaller portion of the mobile mar-

ket; according to the International Data Corporation (IDC),

in Q2 2015, a mere 2.6 percent of the world’s mobile users

were on Windows-powered phones.³ Nevertheless, Windows mobile devices, specifically Windows phones, have historically been subject to significant device fragmentation (though it is not as big a problem as it is with Android) due in large

part to a lack of support from carrier brands. When Micro-

soft’s Denim update for Windows Phones was first released in

September 2014, it took months for T-Mobile and AT&T to

push the update to compatible phones. Verizon was quicker

to respond, but only after it had continuously refused to push

the previously available update, Cyan, to compatible devices.⁴

In response to these kinds of problems, in the spring of 2015

Microsoft announced that it would be taking over updates for

all Windows 8 devices, assuring customers with compatible

devices that they would receive an upgrade to Windows 10

in a timely manner.⁵ Unfortunately, at the time of the announcement there were still many users on Windows 7 devices, many of which would not be able to make the upgrade. In

November 2014, nearly 17 percent of Windows phones on the

market were running Windows 7, and a large portion of those

3 IDC. “Smartphone OS Market Share.” International Data Corporation. 2015. Web. 10

Feb. 2016 – http://www.idc.com/prodserv/smartphone-os-market-share.jsp.

4 Murphy, David. “Microsoft Going after Smartphone Fragmentation in Windows 10

Mobile.” PC Magazine. 16 May 2015. Web. 10 Feb. 2016 – http://www.pcmag.com/

article2/0,2817,2484312,00.asp.

5 Bott, Ed. “Microsoft Says It’s Taking Over Updates for Windows 10 Mobile Devices.”

ZDNet, 25 May 2015. Web. 10 Feb 2016 – http://www.zdnet.com/article/microsoft-

says-its-taking-over-updates-for-windows-10-mobile-devices/.

devices had already been unable to make the jump to Windows 8/8.1⁶ (figure 3). This has

subsequently left a significant portion of the

Windows Phone customer base unsupported,

necessitating an upgrade to newer hardware.

Consumer vulnerabilities

It goes without saying that device fragmentation presents a

number of serious problems for consumers. First-party ap-

plications and changes to the underlying operating system

introduced by device manufacturers can put users at serious

risk. Ryan Welton, a security researcher with NowSecure, re-

vealed at BlackHat 2015 that Samsung’s update process for its

customized version of the SwiftKey keyboard application could

be exploited to create a man-in-the-middle scenario through

which remote code execution could be achieved. According

to a 2015 report by Dan Goodin for Ars Technica:⁷

Attackers in a man-in-the-middle position can imperson-

ate the [update] server and send a response that includes

a malicious payload that’s injected into a language pack

update. Because Samsung phones grant extraordinarily

elevated privileges to the updates, the malicious payload is

able to bypass protections built into Google’s Android op-

erating system that normally limit the access third-party

apps have over the device.

Welton successfully demonstrated the attack at the confer-

ence, compromising a variety of Samsung phones attached to

Verizon, Sprint, and AT&T carrier networks.⁸

The vulnera-

bility was quickly patched by Samsung, but carrier networks

were slow to push the update to affected devices.

Oftentimes, very serious vulnerabilities are discovered, and problems arise when patches must be written, tested, modified, and verified across a variety of devices, operating system versions, and carrier or manufacturer builds. On July

27, 2015, Joshua Drake of Zimperium announced that he had

6 Kumar, Ramesh. “Microsoft Hopes 0% Windows Phone 8.x OS fragmentation;

Windows 10 Is the Future.” Inferse, 15 Nov 2014. Web. 10 Feb 2016 – http://www.

inferse.com/19397/microsoft-hopes-0-windows-phone-8-x-os-fragmentation-

windows-10-future/.

7 Goodin, Dan. “New Exploit Turns Samsung Galaxy Phones into Remote Bugging

Devices.” Ars Technica. 16 Jun 2015. Web. 10 Feb. 2016 – http://arstechnica.com/

security/2015/06/new-exploit-turns-samsung-galaxy-phones-into-remote-bugging-

devices/.

8 Welton, Ryan. “Remote Code Execution as System User on Samsung Phones.”

NowSecure Blogs. NowSecure, 15 June 2015. Web. 19 Jan. 2016 – https://www.

nowsecure.com/blog/2015/06/16/remote-code-execution-as-system-user-on-

samsung-phones/.

Figure 2 – Android Market Shares by Manufacturer (OpenSignal, 2015)

Figure 3 – Q4 2014, Windows Phone OS Distribution

(Kumar, 2014)


Fragmentation in Mobile Devices | Ken Smith](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-36-2048.jpg)

![of mobile device manufacturers. Earlier this year, a Dutch

consumer group filed a lawsuit against Samsung over what

they perceived to be the manufacturer’s slow security update

policy. According to a January 2016 Neowin report by Andy

Weir:

The [consumer group] cites a survey that it carried out,

which it says shows that “82% of the Samsung phones ex-

amined had not been provided with the latest Android

version in the two years after being introduced.” Indeed,

it’s worth noting that Samsung isn’t exactly known for

delivering updates with any great sense of urgency, as its

record with the newest major version of Android shows.¹⁵

The group ultimately wants Samsung to provide a full two

years of updates for its devices starting on the day that the de-

vice is sold (as opposed to the date of its initial release or even

manufacturing). While these demands may be overzealous,

and keeping in mind that the case has yet to be decided, it is

the first time such action has taken place: consumers break-

ing new security ground in the mobile device market.

Conclusion

This means that users are likely, at least to a small extent, to

have more positive control over the security of their devices

of choice from the very beginning. And that’s a crucial step

in the growth and maturity of the mobile device market. And

as that market continues to expand, all stakeholders, includ-

ing consumers, have a responsibility to ensure that issues like

device fragmentation do not seriously impact the security

posture of the market at large, thus ensuring that all mobile

devices are as secure as possible from initial

release to end-of-life.

About the Author

Ken Smith works for SecureState, a global

management consulting firm specializing in

information security. Ken works primarily on

wireless and physical assessments as well as

in mobile device and application security. He enjoys presenting

and regularly speaks at industry conferences. Reach Ken via

email at ksmith@securestate.com or call 216.927.8200.

15 Weir, Andy. “Samsung Sued by Dutch Consumer Group over ‘Poor Software Update

Policy for Android Phones.’” Neowin. 20 Jan. 2016. Web. 10 Feb. 2016 – http://www.

neowin.net/news/samsung-sued-by-dutch-consumer-group-over-poor-software-

update-policy-for-android-phones.

whose primary concern is device fragmentation, iOS is the

best option of the “big three.” Consumers with a preference

for Android or Windows devices should purchase the newest

technologies whenever possible and stick to the more well-

known manufacturers. These companies are the most likely

to maintain the resources that will allow them to continue

to support future versions of Android or Windows on legacy

hardware. Additionally, the devices of those manufacturers

with larger portions of the market (Google, Samsung, HTC,

and LG) typically receive security updates faster and more

frequently than the lesser-known manufacturers.

Application developers can also help alleviate the sting of de-

vice fragmentation. While the latest hardware can be allur-

ing, responsible developers should continue to support lega-

cy hardware for as long as possible. A significant portion of

the smartphone market consists of outdated hardware.¹⁴

Dropping support for those devices that still see regular use

can place large portions of the market at risk. Whenever pos-

sible, developers should seek to support at least two gener-

ations of hardware. Businesses and organizations, including

those with bring-your-own-device (BYOD) policies, should

consider the implementation of a mobile-device-manage-

ment (MDM) solution. Though not perfect, MDM solutions

like AirWatch and MobileIron offer a variety of benefits in

the mobile security space:

• Automatically update corporate-relevant applications

and services

• Flag jailbroken or rooted devices and disconnect

them from corporate resources

• Group devices together logically for ease of control

and monitoring

• Prevent vulnerable or unsupported devices from be-

ing connected to corporate networks and resources

• Push corporate mobile policies to all employee devic-

es
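The checks in the list above can be sketched as a simple access decision. This is an illustrative sketch only: the `Device` record, the `MIN_SUPPORTED` version table (including its version numbers), and the `access_decision` function are hypothetical names and values, not the API of AirWatch, MobileIron, or any other MDM product.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Device:
    owner: str
    os_name: str
    os_version: Tuple[int, int]
    rooted: bool  # True if the device is jailbroken or rooted


# Hypothetical minimum OS versions an organization might still support.
MIN_SUPPORTED = {"android": (6, 0), "ios": (9, 0), "windows": (10, 0)}


def access_decision(device: Device) -> str:
    """Return 'allow' or a 'block: ...' reason for a corporate resource request."""
    if device.rooted:
        return "block: rooted or jailbroken"
    minimum = MIN_SUPPORTED.get(device.os_name.lower())
    if minimum is None:
        return "block: unsupported platform"
    if device.os_version < minimum:
        return "block: OS below minimum supported version"
    return "allow"


fleet = [
    Device("alice", "Android", (6, 0), rooted=False),
    Device("bob", "Android", (5, 1), rooted=False),
    Device("carol", "iOS", (9, 3), rooted=True),
]
for d in fleet:
    print(d.owner, "->", access_decision(d))
```

A real MDM deployment would obtain these device attributes from an enrolled agent rather than from self-reported values.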

Finally, consumers also have a responsibility to protect them-

selves. Users should avoid jailbreaking or rooting their phones

and tablets. Doing so can significantly amplify the fallout of a

successful exploit, giving attackers complete control over the

contents of compromised devices. There may also be emerg-

ing methods through which customers can force the hands

14 Ibid.

](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-38-2048.jpg)

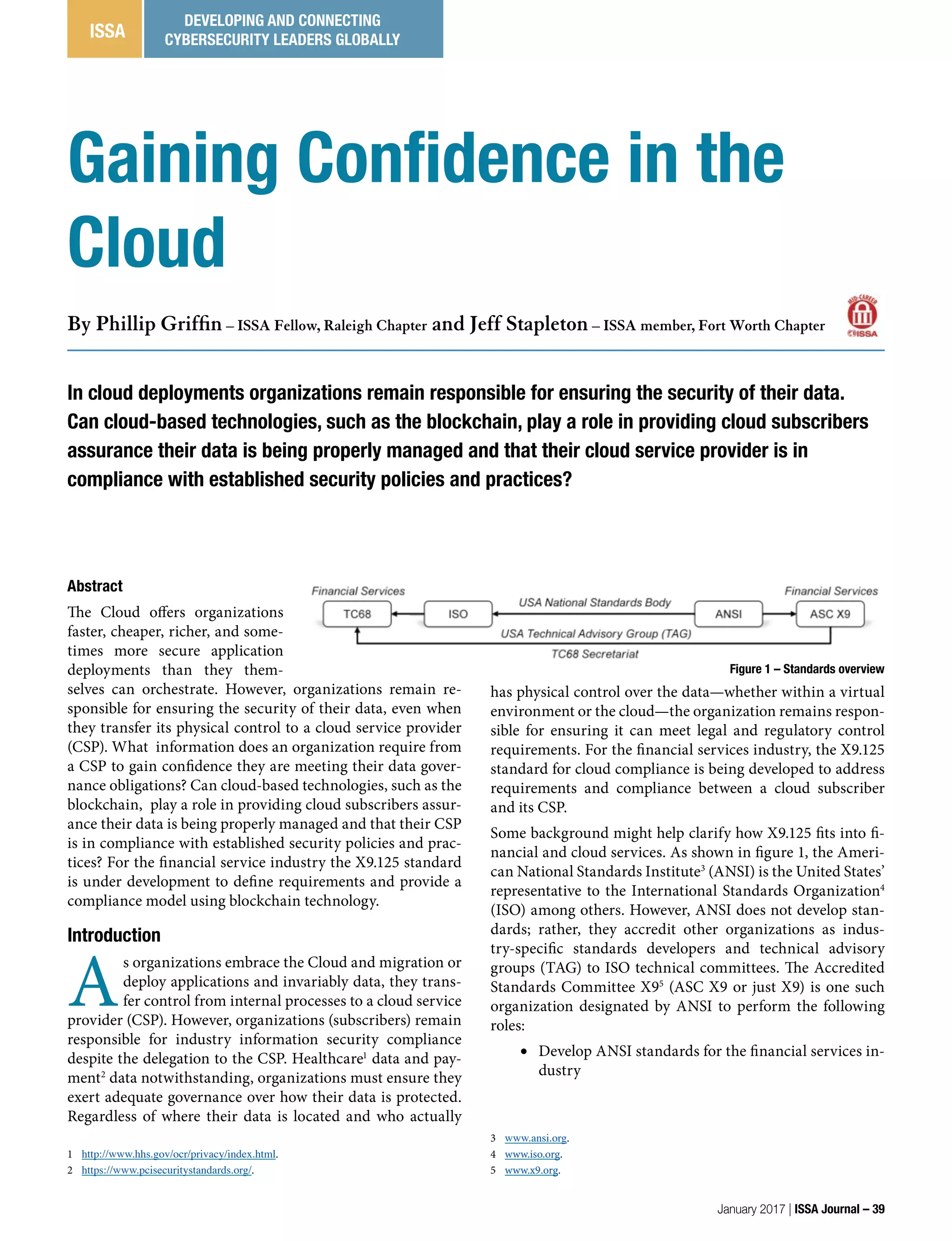

![Figure 2 – Controls overview

• Represent the United States as the

TAG to ISO technical committee 68

Financial Services (TC68)

• Manage TC68 as its official secretariat

Consequently, many X9 standards are sub-

mitted to ISO for international standardization. Further, X9

often initiates ISO work items, adopts ISO financial standards, and retires ANSI standards in favor of their ISO versions. Sometimes the US markets are distinct enough that a domestic X9 standard is needed in the absence of, or in parallel with, an ISO standard. The cloud security work item is assigned to the

X9F4 cryptographic protocols and application security work

group; Jeff Stapleton is the X9F4 chair, and Phil Griffin is the

X9.125 editor.

One of the first X9F4 actions was to review the existing body

of work including special publications from the National

Institute of Standards and Technology (NIST),⁶ Federal Financial Institutions Examination Council (FFIEC)⁷ cloud

computing recommendations, Central Intelligence Agency

(CIA) views on cloud computing, and the Cloud Security Al-

liance (CSA) research on comparable audit programs. These

materials were digested to formulate a core set of security

requirements for managing and securing information in the

cloud, whether this information is located in a private cloud

completely under control of the organization, or managed in

a hybrid or public cloud environment. Regardless of the cloud

service type or environment, these basic questions were iden-

tified:

1. What security controls does the cloud subscriber (the

consumer of the cloud services) need to protect the confi-

dentiality and integrity of its data?

2. What security controls does the cloud service provider

offer to protect the confidentiality and integrity of its sub-

scriber’s data?

3. What security controls provided by the cloud service pro-

vider can be monitored by the cloud subscriber to verify

compliance?

While the development of X9.125 is still a work in progress and has undergone several redesigns, cloud services and their adoption in the financial industry have continued to evolve.

Thus the X9.125 standard is attempting to hit a moving tar-

get. X9 standards provide requirements (“shall”) and recom-

mendations (“should”) that are practical and verifiable. Thus,

as shown in figure 2, the standard needs to address security

controls and interoperability between the cloud service provider and the cloud subscriber, in addition to the transparency of any service sub-providers.

6 http://csrc.nist.gov/publications/PubsSPs.html.

7 http://ithandbook.ffiec.gov/media/153119/06-28-12_-_external_cloud_computing_-_public_statement.pdf

Cloud service providers (CSPs), like any organization relying on information technology (IT), need to have their security controls documented in policy (why), practices (what), and procedures (who). They also need to securely manage resources, including people, places, and processes. IT controls include network, systems, and applications addressing authentication, authorization, and accountability (AAA). Data must also be managed across its life cycle, including creation, distribution, storage, and termination. When cryptography is used, the keys must be managed in a secure manner. How the controls are deployed and managed depends on the relationship between the CSP and the subscriber, as depicted in figure 2:

• The topmost solid arrow shows the case when controls are

provided solely by the CSP to the subscriber. For example,

the CSP might encrypt the subscriber’s data in storage us-

ing cryptographic keys managed solely by the CSP.

• The middle arrows show cases when controls are mutually

managed by both the CSP and the subscriber. For exam-

ple, data in transit is encrypted using a session key that is

dynamically established, based on an exchange of public

key certificates between the CSP and the subscriber.

• The bottommost dotted arrow shows the case when con-

trols are provided solely by the subscriber. For example,

the subscriber might encrypt or tokenize data before it is

sent to the CSP for storage or processing.

• The dotted arrow between the CSP and its service sub-pro-

vider shows the case when controls are provided indirect-

ly to the subscriber by the sub-provider. For example, the

sub-provider might be a tokenization service used by the

CSP to protect the subscriber’s data in storage.
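The subscriber-only case (the bottommost arrow) can be illustrated with a minimal tokenization sketch. The `TokenVault` class, its in-memory dictionaries, and the `tok_` prefix are assumptions made for illustration; a production vault would need persistent, access-controlled storage, and nothing here is taken from the X9.125 draft.

```python
import secrets


class TokenVault:
    """Subscriber-side vault: sensitive values never leave it, only tokens do."""

    def __init__(self) -> None:
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so the same value always maps to one token.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "tok_" + secrets.token_hex(16)  # random, not derived from the value
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        return self._token_to_value[token]


vault = TokenVault()
record_for_csp = {"customer": "C-1001", "account": vault.tokenize("1234567890")}
print(record_for_csp)  # the CSP stores only the token, never the account number
print(vault.detokenize(record_for_csp["account"]))
```

Because the token is random rather than derived from the value, the CSP cannot recover the original data without access to the subscriber's vault.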

While X9.125 is still a work in progress, another major aspect is to develop a reporting model such that a cloud subscriber can verify a CSP’s compliance. Compliance might be with the CSP policy and practices aligned with the subscriber or, preferably, with the security requirements being defined in the X9.125 standard adopted by both parties. Regardless, this implies that the CSP provides compliance information that is reliable and verifiable. One candidate for such a digital ledger is blockchain technology, best known today because of Bitcoin.

Blockchains

Blockchains have been around for decades. Notably Merkle

trees were addressed in a US Patent [2] issued in 1982; so

the technology is well vetted. While the Bitcoin blockchain

is used as a general ledger for Bitcoin transactions, any in-

formation can be encapsulated within a blockchain that can

provide data integrity. Incorporating timestamps within the

blockchain (as does Bitcoin) also provides a historical record


Gaining Confidence in the Cloud | Phillip Griffin and Jeff Stapleton](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-40-2048.jpg)

![of what happened when and by whom. Consider figure 3 as

an example.

The blocks are numbered sequentially: Block 0, 1…to N. There is

always an initial block conventionally numbered “0” to indi-

cate its special nature. There is always a last block (N) which is

the most current addition to the blockchain. Each block con-

tains data, in this example a cloud service provider’s policy

numbered according to its block number: P0, P1…PN and

so on. In this example, each block contains a hash (H) of its

own policy data, essentially a link to itself, so Block 0 contains

H(P0), Block 1 contains H(P1), and Block N contains H(PN).

Additionally, each block contains a hash of its predecessor, so

Block 1 contains H(P0) as a link to Block 0, and Block N con-

tains H(PN-1) as a link to Block N-1. Note that Block 0 does

not contain a previous link since Block 0 is the blockchain

origin. At this point one might think that the blockchain is

completely reliable, but it turns out that simple links based

on a hash of just the data in the previous block are unreliable.

Consider an attacker that takes some intermediary Block K

which links to Block K-1 and has Block K+1 linked to it. The

attacker makes a replica of Block K, which we will call Block J,

modifies the data so it contains PJ and no longer PK, and pub-

lishes it as the real Block K. The attacker then updates Block

K+1 to link to Block J instead of Block K. Thus, the blockchain

has been compromised but yet still appears to be valid since

all of the links are valid. Without some method of either ver-

ifying the publisher or the whole blockchain, a simple substi-

tution attack is possible. Replacing the previous link as a sim-

ple hash of the previous data with a digital signature would

prevent the substitution attack; however, this would require

the support of a public key infrastructure (PKI) with certif-

icates, private key storage, certificate authorities, revocation

lists, and the like. Alternatively, replacing the previous link

with a hash chain achieves the same anti-substitution control

without the PKI overhead.
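The substitution attack described above can be reproduced in a few lines. This is a minimal sketch under stated assumptions: SHA-256 stands in for the hash function H, and the block layout (`data`, `self_hash`, `prev_hash`) and helper names are hypothetical, chosen only to mirror the description in the text.

```python
import hashlib
from typing import List, Optional


def h(data: bytes) -> str:
    """SHA-256 stands in for the hash function H in the text."""
    return hashlib.sha256(data).hexdigest()


def make_block(policy: bytes, prev_policy: Optional[bytes]) -> dict:
    # Each block stores its data, H(own data), and H(previous block's data).
    return {
        "data": policy,
        "self_hash": h(policy),
        "prev_hash": None if prev_policy is None else h(prev_policy),
    }


def links_valid(blocks: List[dict]) -> bool:
    # Check every self link and every link to the preceding block.
    for i, block in enumerate(blocks):
        if block["self_hash"] != h(block["data"]):
            return False
        if i > 0 and block["prev_hash"] != blocks[i - 1]["self_hash"]:
            return False
    return True


policies = [b"P0", b"P1", b"P2"]
chain = [make_block(p, policies[i - 1] if i else None) for i, p in enumerate(policies)]

# Substitution attack: replace Block 1 with a forged Block J and relink Block 2.
block_j = make_block(b"PJ", b"P0")
chain[1] = block_j
chain[2]["prev_hash"] = block_j["self_hash"]

print(links_valid(chain))  # the forged chain still passes the link check
```

Note that the forged chain leaves H(P2) in the last block unchanged, so a verifier who remembers only that value has no way to notice the substitution.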

Referring back to figure 3, we have provided another chain

field where each block contains a chain numbered by its block

number: C0, C1…CN. Each chain is a link to all of the pre-

vious blocks, which is a hash of two elements: the previous

chain and a hash of its own data. Thus, Block N contains a

hash of CN-1 and a hash of its own data H(PN), that is H(CN-1, H(PN)). Likewise, Block 1 contains a hash of C0 and a hash of its own data H(P1), namely H(C0, H(P1)). Block 0 only contains a hash of a hash of its own data H(H(P0)) because there is no previous chain. In this manner an attacker cannot replace any of the published blocks without updating the whole chain, which is the basis of the Bitcoin blockchain security. The presumption is that it is cheaper to be honest than dishonest.

Figure 3 – Simple blockchain
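The chain field described above can be sketched as follows. The assumptions here are that H is SHA-256 and that the two-input hash H(C, H(P)) is computed over the concatenation of its two fixed-length inputs; the text does not specify either choice, and `chain_values` is a hypothetical helper name.

```python
import hashlib
from typing import List


def h(data: bytes) -> bytes:
    """SHA-256 stands in for the hash function H in the text."""
    return hashlib.sha256(data).digest()


def chain_values(policies: List[bytes]) -> List[bytes]:
    """Compute C0 = H(H(P0)) and Cn = H(Cn-1 || H(Pn)) for n >= 1."""
    chains = []
    for i, policy in enumerate(policies):
        if i == 0:
            chains.append(h(h(policy)))
        else:
            chains.append(h(chains[i - 1] + h(policy)))
    return chains


honest = chain_values([b"P0", b"P1", b"P2", b"P3"])
forged = chain_values([b"P0", b"PJ", b"P2", b"P3"])  # Block 1 substituted

# Every chain value from the substituted block onward changes.
changed = [i for i, (a, b) in enumerate(zip(honest, forged)) if a != b]
print(changed)
```

A verifier who holds only the most recent chain value can therefore detect a substitution anywhere earlier in the chain, which the simple previous-data link does not allow.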

If a majority of CPU power is controlled by honest nodes,

the honest chain will grow the fastest and outpace any

competing chains. To modify a past block, an attacker

would have to redo the proof-of-work of the block and all

blocks after it and then catch up with and surpass the work

of the honest nodes. We will show later that the probability

of a slower attacker catching up diminishes exponentially

as subsequent blocks are added. [3]
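The exponential decline mentioned in the quotation can be made concrete. The sketch below follows the calculation given in the cited Bitcoin paper [3] as we understand it: the attacker's progress is modeled as a Poisson variable with mean z·q/p, where q is the attacker's share of CPU power, p = 1 − q is the honest share, and z is the number of blocks added after the targeted one. The function name is our own.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q ever catches up
    from z blocks behind, under the Poisson model described in [3]."""
    p = 1.0 - q
    if q >= p:
        return 1.0  # an attacker with at least half the power always catches up
    lam = z * (q / p)
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam ** k / math.factorial(k)
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


for z in (0, 1, 2, 5, 10):
    print(z, attacker_success_probability(0.1, z))
```

With a 10 percent attacker, the probability falls from certainty at z = 0 to roughly 20 percent after one block and continues to shrink rapidly from there.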

Much of the media discussion around Bitcoin has focused

on its role as a cryptocurrency. Bitcoin provides a means

for achieving efficient, anonymous financial transactions. In

this context, Bitcoin is sometimes described as a disruptive

technology, one that facilitates the activities of drug deal-

ers and terrorists, one that threatens to disintermediate and

undermine the existing financial services industry, or one

that presents banks who serve Bitcoin industry players with

heightened “Bank Secrecy Act (BSA)/Anti-Money Launder-

ing (AML) Act compliance risks” [1].

On the other hand, Bitcoin has seen adoption by e-commerce

stalwarts such as PayPal, Overstock, Dish Network, and Dell

Computers, as well as “many community-driven organiza-

tions” that “allow anonymous donations using Bitcoin” [6].

Despite any negative aspects associated with Bitcoin, “there

remain many legitimate uses for Bitcoin and businesses that

facilitate these legitimate transactions” [7]. There is also

growing interest in leveraging the blockchain technology

that underpins Bitcoin to both reduce transaction costs and

strengthen financial services security. To this end, more gen-

eral purpose applications of the blockchain that are far re-

moved from the use of Bitcoin to facilitate financial services

transactions are being considered. For example, blockchains

might be used to evaluate, monitor, assess, or even audit a

cloud services provider:

• The CSP might publish its information security policy and

practices in a blockchain providing an historical record of

versions and changes. In this manner, new subscribers can

evaluate the CSP, existing subscribers can monitor chang-

es, internal audit can assess the CSP, and professionals can

perform independent audits of the CSP.


Gaining Confidence in the Cloud | Phillip Griffin and Jeff Stapleton](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-41-2048.jpg)

![• The CSP might distribute information security news in a

blockchain, providing notifications or alerts to its subscribers about incidents, events, or new vulnerabilities in a reliable manner. Today, this information is typically provided via emails or blogs.

• The CSP might issue information security details in a

blockchain providing real-time data about its controls.

In this manner, existing subscribers can monitor the CSP

for its dependability, consistency, and overall trustworthi-

ness. Another name for this would be compliance.

Hence, the concept of using blockchains to record and ver-

ify CSP compliance data is not as farfetched as might have

been initially considered. For cloud subscribers to gain such

assurance, and to exercise due diligence in the conduct of

their governance and risk management responsibilities, they

need some insight into what goes on under the covers at their

CSP. Cloud subscribers need the same types of operational

evidence of compliance from their CSP that they would ex-

pect their internal IT departments to provide. Whether an

organization’s data is inside its firewall or floating around in

the cloud, informed information security management prac-

tices still depend on access to the basics: vulnerability scan

results, penetration test results, system logs, application logs,

analytical results, security alerts, and summarized informa-

tion. Compliance evidence must have origin authenticity,

data integrity, and often confidentiality safeguards that pro-

hibit access by attackers and other unauthorized individuals.
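As one illustration of origin authenticity and data integrity for a compliance record, the sketch below tags an evidence record with an HMAC. It assumes the CSP and the subscriber already share a secret key, which is a simplification: a digital signature would be needed if third parties must verify the origin. The record fields and function names are hypothetical, and confidentiality is not addressed.

```python
import hashlib
import hmac
import json


def _canonical(record: dict) -> bytes:
    # Serialize deterministically so both parties hash identical bytes.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")


def tag_evidence(key: bytes, record: dict) -> str:
    """CSP side: compute an HMAC-SHA256 tag over the evidence record."""
    return hmac.new(key, _canonical(record), hashlib.sha256).hexdigest()


def verify_evidence(key: bytes, record: dict, tag: str) -> bool:
    """Subscriber side: recompute the tag and compare in constant time."""
    return hmac.compare_digest(tag_evidence(key, record), tag)


shared_key = b"example-key-agreed-out-of-band"  # placeholder, not a real key
evidence = {"type": "vulnerability_scan", "date": "2017-01-15", "critical_findings": 0}
tag = tag_evidence(shared_key, evidence)

print(verify_evidence(shared_key, evidence, tag))
tampered = dict(evidence, critical_findings=3)
print(verify_evidence(shared_key, tampered, tag))
```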

The attractiveness of the Bitcoin blockchain includes its de-

centralization. Bitcoin spenders submit their transactions

(signature, inputs, outputs) to multiple Bitcoin nodes such

that the transaction gets published in the next block that is

originated by the next miner to solve the hash solution. The

idea is that the amount of work to perpetrate fraud far ex-

ceeds the work factor for mining. Sometimes a race condition

creates a bifurcated blockchain generated by two different

Bitcoin nodes; however, consensus processing will eventually

prune the blockchain to only one authentic version. There is

no central authority that provides a processing choke point, a

single point of failure, or a single point of attack.

However, blockchain management is not without its prob-

lems. There are orphaned blocks, which are valid but did not

make it into the main Bitcoin chain. There are always uncon-

firmed transactions waiting for the next block, which might

get lost during the bifurcation and pruning process. There are

double spends, transactions where the same Bitcoin fractions

get spent by the same entity to two different receivers. There

are strange transactions, where the syntax or semantics are

invalid. And there are outright rejected transactions dropped

by Bitcoin nodes that never get included in the chain. Some

of these might be processing errors due to software bugs, Bit-

coin versions, or rules issues. Alternatively, some transactions

might be fraudulent in nature. Bitcoin fraud management is

relatively nascent, and without a central authority there are

no arbitration or adjudication programs available.

Bitcoin information is publicly accessible by definition. Hash

algorithms provide the links between blocks and transac-

tions, and digital signatures provide transaction integrity

and authentication. Non-repudiation is not feasible as Bitcoin

identifiers support anonymity, and the lack of arbitration does

not meet legal needs discussed in the Digital Signature Guide-

lines [4] and the PKI Assessment Guideline [5]. Further, the

Bitcoin blockchain does not offer data confidentiality. Some

of the cloud service provider’s information security management data is sensitive, such that it might need to be encrypted and accessible only by authorized clients or regulatory bodies.

Thus, key management schemes need to be considered.
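
To make the role of the hash links concrete, here is a minimal sketch under stated assumptions: blocks are plain dictionaries, each block stores the SHA-256 hash of a JSON encoding of its predecessor, and the helper names `block_hash`, `build_chain`, and `verify_chain` are hypothetical. This is not Bitcoin's block format, and it covers integrity only, not signatures or confidentiality.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_LINK = "0" * 64  # placeholder link for the first block


def block_hash(block: Dict) -> str:
    """SHA-256 over a canonical JSON encoding of the block."""
    encoded = json.dumps(block, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def build_chain(payloads: List[str]) -> List[Dict]:
    """Link each block to its predecessor by storing the predecessor's hash."""
    chain: List[Dict] = []
    prev = GENESIS_LINK
    for payload in payloads:
        block = {"prev_hash": prev, "payload": payload}
        chain.append(block)
        prev = block_hash(block)
    return chain


def verify_chain(chain: List[Dict]) -> bool:
    """Recompute every link; an edited block breaks the link that follows it."""
    prev = GENESIS_LINK
    for block in chain:
        if block["prev_hash"] != prev:
            return False
        prev = block_hash(block)
    return True


chain = build_chain(["tx batch 1", "tx batch 2", "tx batch 3"])
intact = verify_chain(chain)          # links match on the untouched chain
chain[1]["payload"] = "tampered"      # alter a block in the middle
still_intact = verify_chain(chain)    # the next block's link no longer matches
```

Note that the payloads in this sketch are stored in the clear, which mirrors the point above: the links give integrity, while confidentiality would require separate encryption and key management.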

There is also growing interest in cloud data confidentiali-

ty and user anonymity. In a paper presented at the Security

Standardization Research (SSR) 2015⁸

conference held re-

cently in Tokyo, Japan, researchers McCorry, Shahandashti,

Clarke, and Hao proposed a new category of Authenticated

Key Exchange (AKE) protocols. These new protocols, which

“bootstrap trust entirely from the blockchain,” are identi-

fied by the authors as “Bitcoin-based AKE” [6]. The SSR 2015

paper describes two new protocols, one of which guarantees

forward secrecy, and offers proof-of-concept prototypes with

experimental results to demonstrate their practical feasibil-

ity. Both protocols provide greater anonymity than can be

achieved using digital certificate- or password-based AKE.

Following the guidance of international security standards

can help ensure that the same information security policies

used to manage risk when information systems reside in

traditional non-cloud environments are also applied in the

cloud. Recently, the three major international standardization

bodies (ISO, IEC, and ITU-T) published Recommendation ITU-T X.1631 |

ISO/IEC 27017 Code of practice for information security controls

based on ISO/IEC 27002 for cloud services.⁹ This standard

builds on selected parts of the familiar ISO/IEC 27002 Code

of practice for information security management¹⁰ but adds

cloud-specific recommendations and guidance.

Although ITU-T X.1631 | ISO/IEC 27017 provides import-

ant recommendations and guidance, it contains no actual

requirements. Conversely, the draft X9.125 standard hardens

the ISO, IEC, and ITU-T recommendations and guidance

into a set of specific information security management re-

quirements. Where ITU-T X.1631 | ISO/IEC 27017 relies on

clauses 5 through 18 of the ISO/IEC 27002 Code of Practice,

X9.125 defines requirements based on comparable clauses in

the ISO/IEC 27001 Information security management systems

– Requirements.¹¹

Conclusions

Blockchain, a decades-old cryptographic technology, has

become a creature of the cloud. Its adoption and use carry

many of the same security concerns as other cloud-based ap-

8 http://www.ssr2015.com/.

9 http://www.iso.org/iso/home/store/catalogue_tc/catalogue_detail.htm?csnumber=43757.

10 http://www.iso.org/iso/catalogue_detail?csnumber=54533.

11 https://en.wikipedia.org/wiki/ISO/IEC_27001:2013.

Gaining Confidence in the Cloud | Phillip Griffin and Jeff Stapleton](https://image.slidesharecdn.com/60ce2b4ffb4f79fd698a5a8ced68982025467dc2-170202082843/75/The-Best-Articles-of-2016-DEVELOPING-AND-CONNECTING-CYBERSECURITY-LEADERS-GLOBALLY-42-2048.jpg)