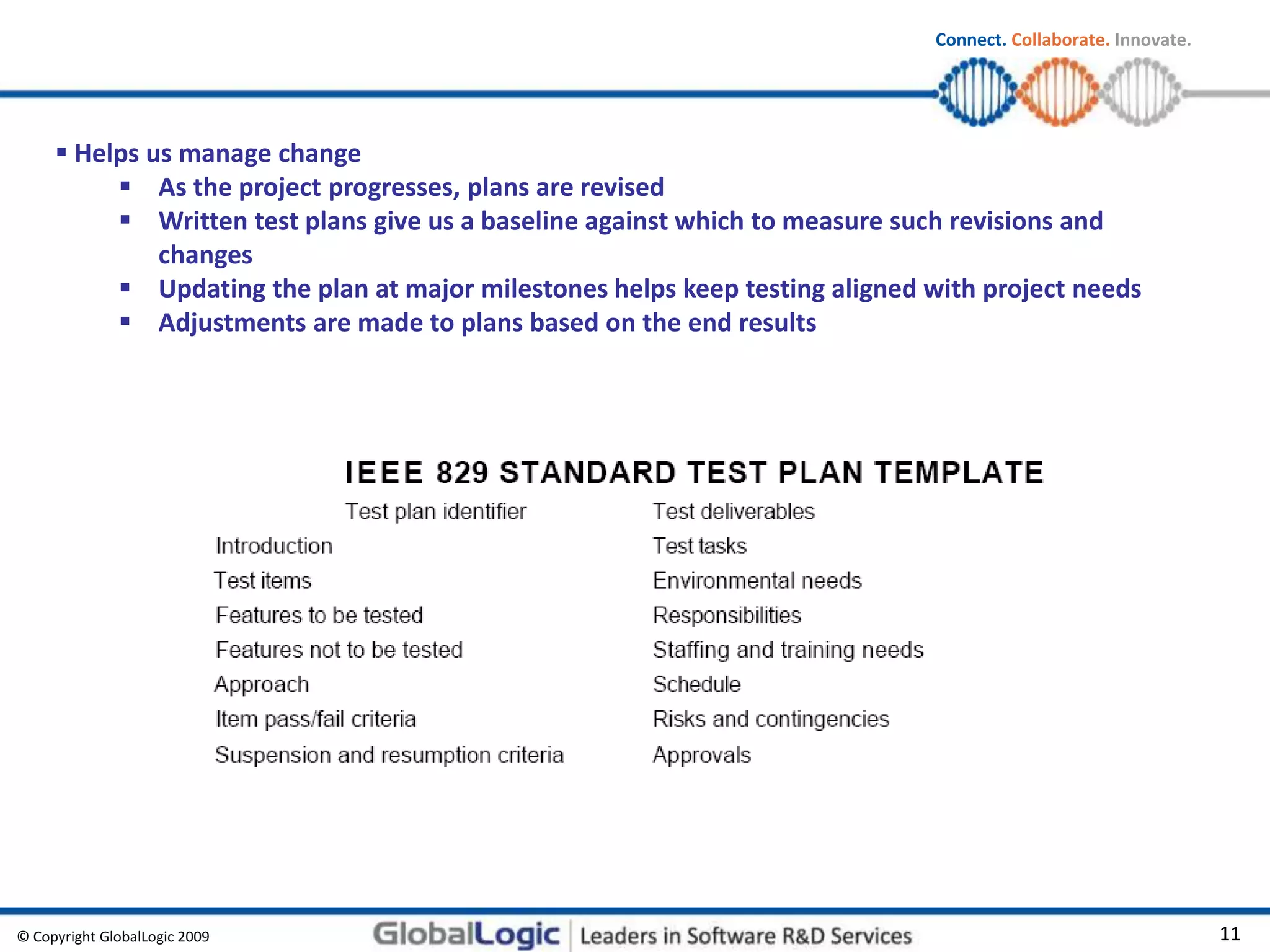

This document covers test management as described in the ISTQB (International Software Testing Qualifications Board) syllabus. It discusses the importance of independent testing, test planning, estimation techniques, test strategies, test progress monitoring, configuration management, risk management, and test status reporting. Key aspects include how to organize independent versus integrated test teams, the factors to consider in test planning, and the roles and responsibilities of test leaders and testers.