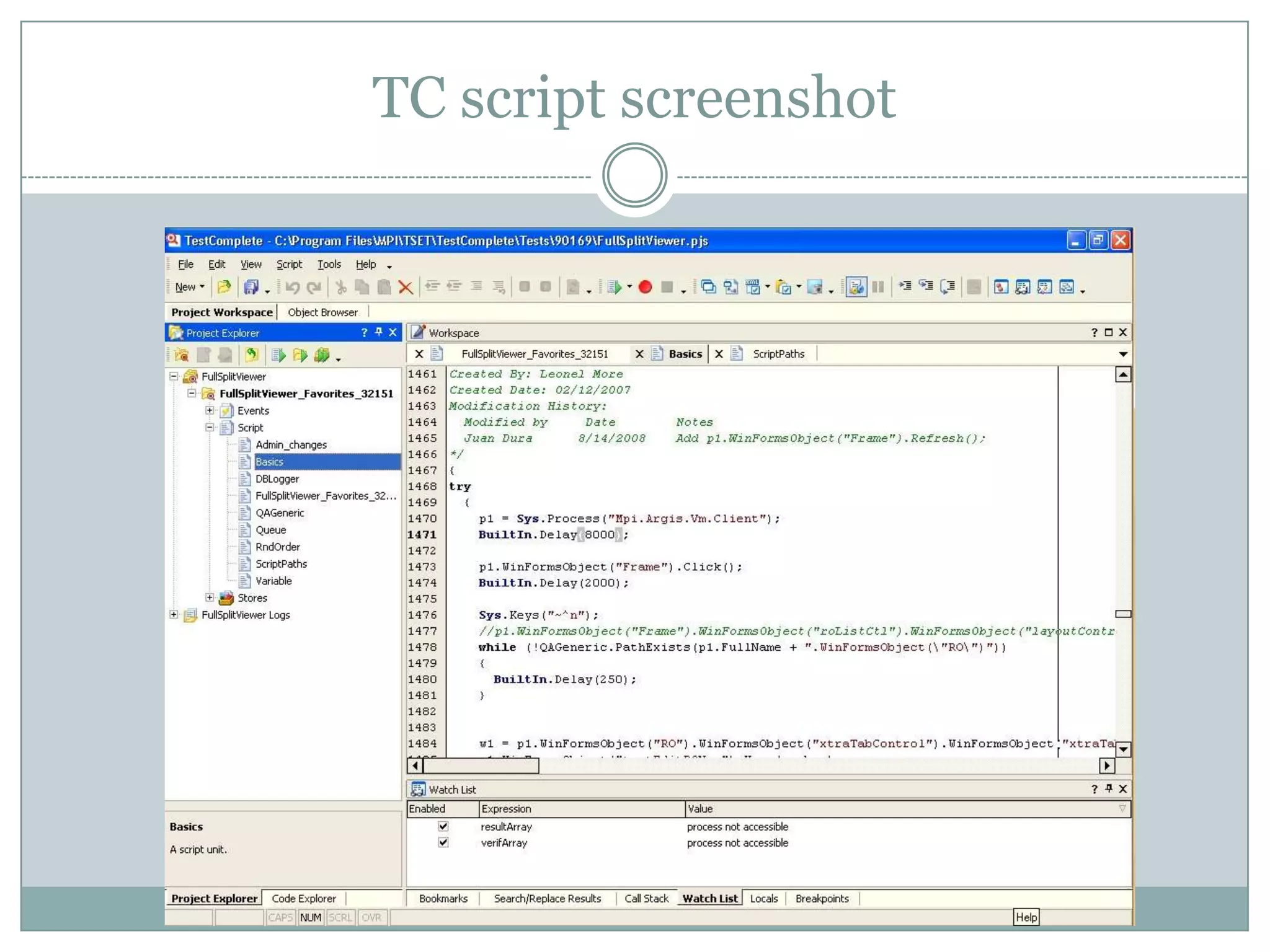

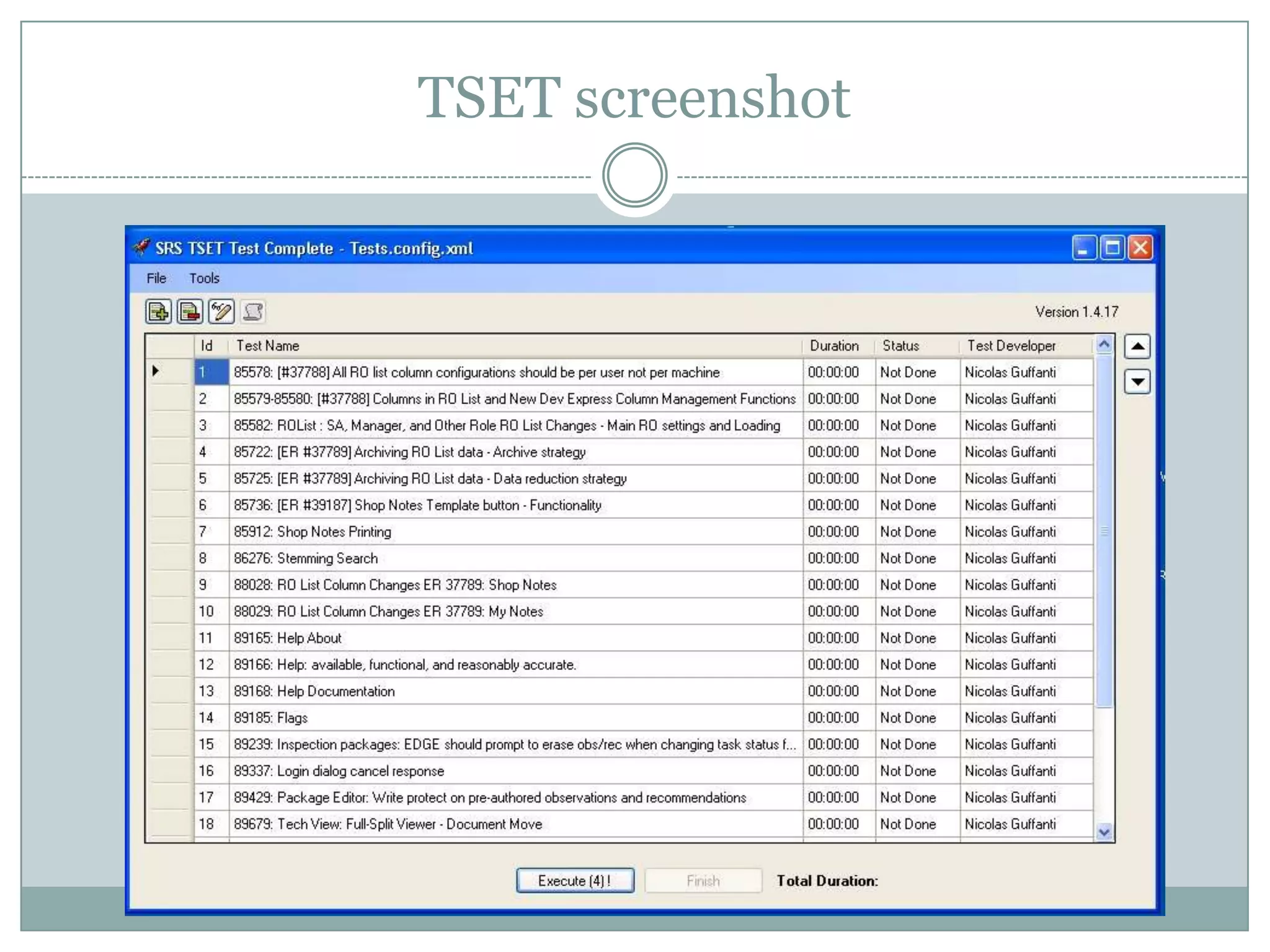

- SRS has used TestComplete, an automated testing tool, for more than five years, building over 50 test scripts and an automated execution tool called TSET.

- TestComplete is still used to run some edge-case and daily-check scripts, but it has several limitations: slow script execution, scripts that are difficult to maintain over time, and no support for newer technologies such as mobile and Silverlight.

- To get more out of TestComplete, SRS should address issues such as false test failures, develop a script naming standard, create guidelines for building testable applications, and apply best practices to reduce maintenance overhead.