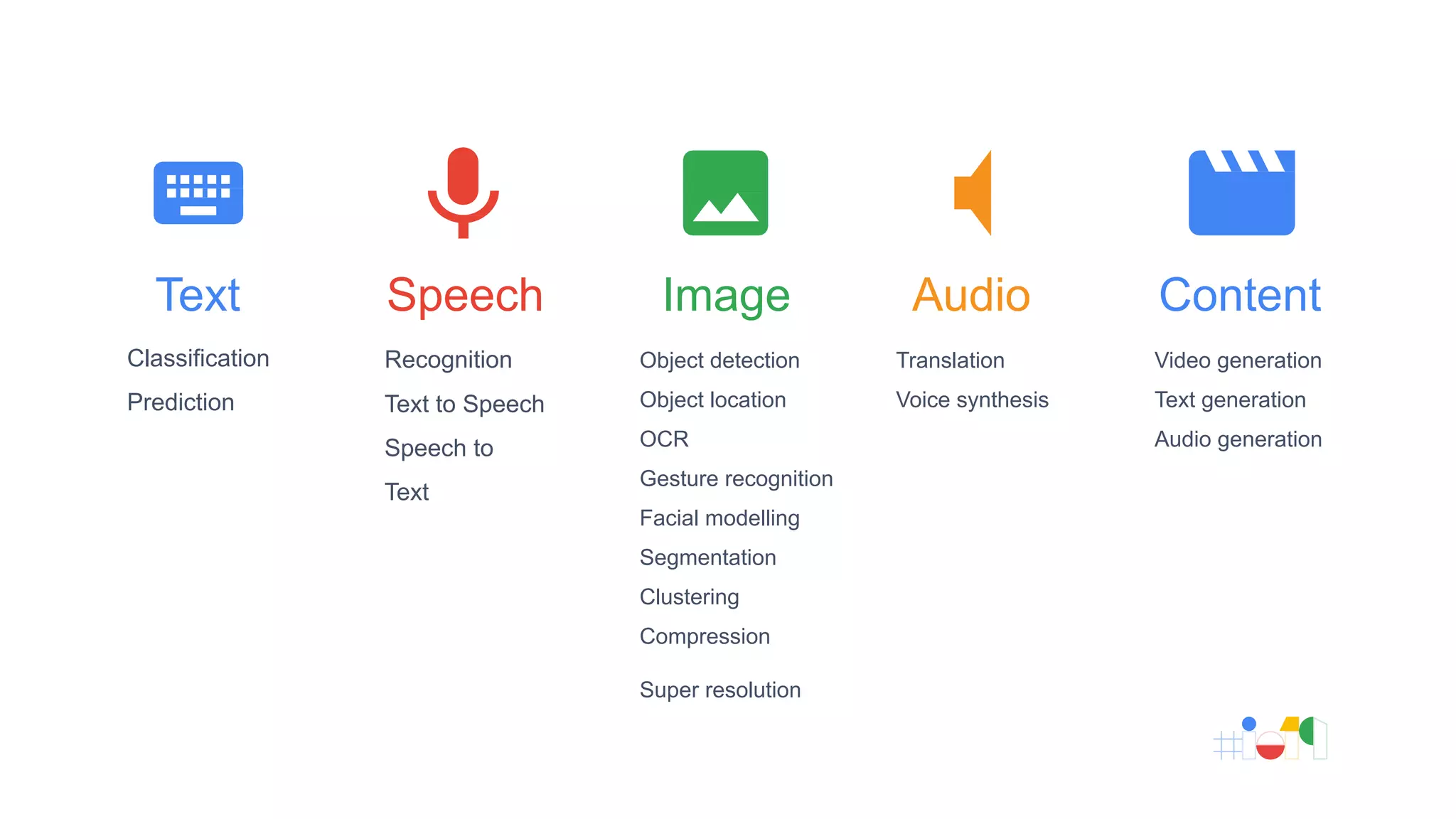

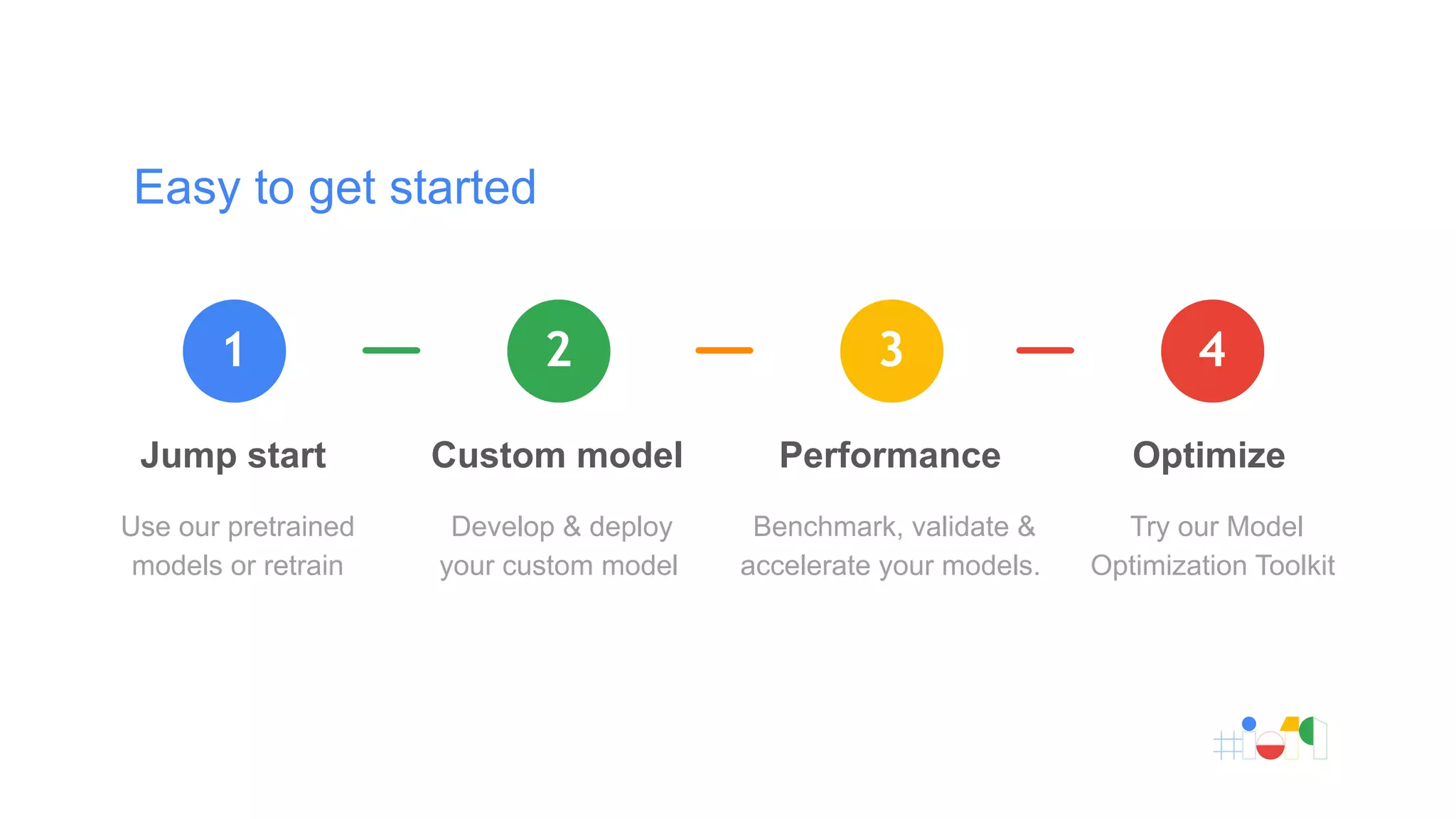

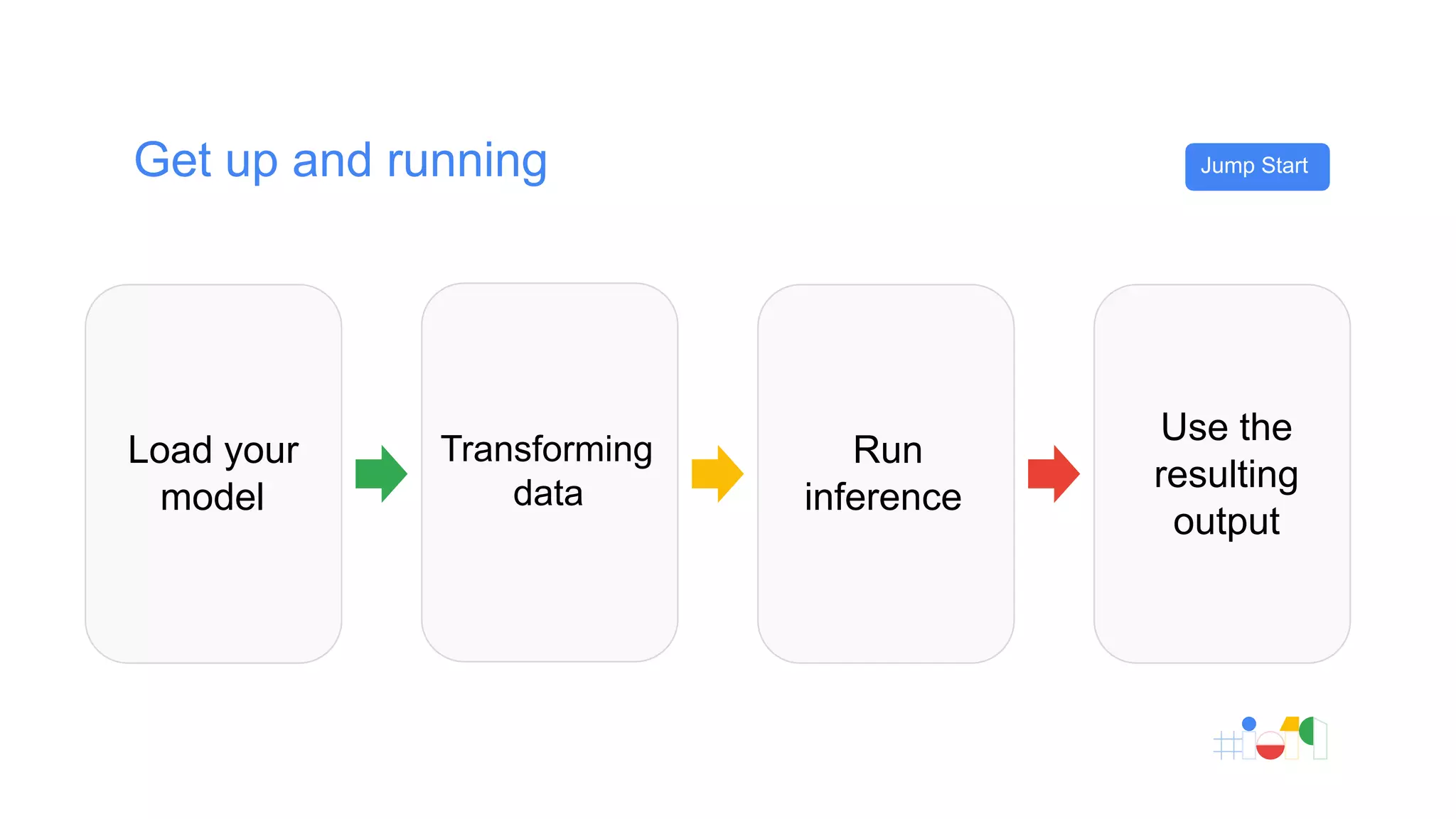

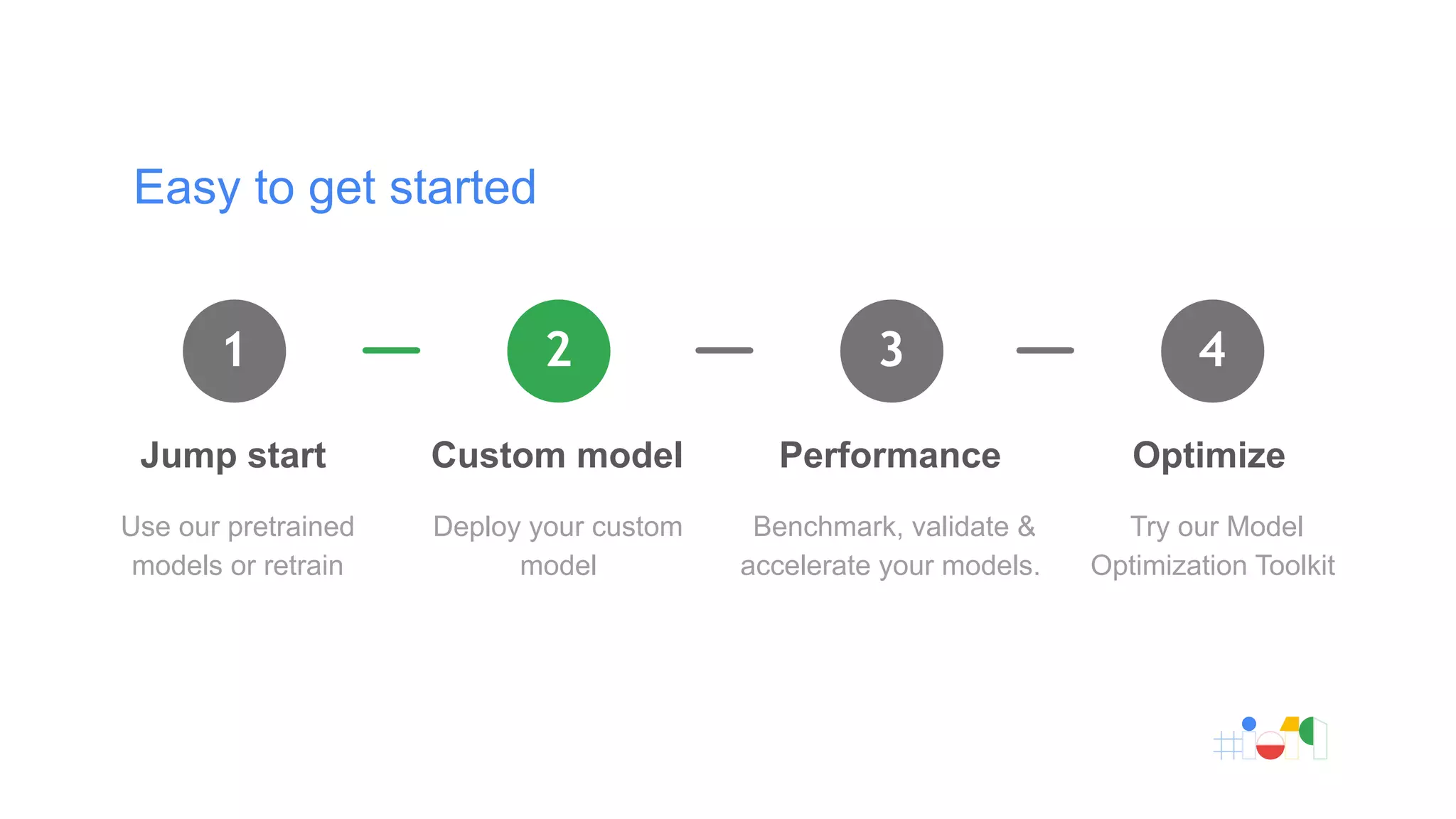

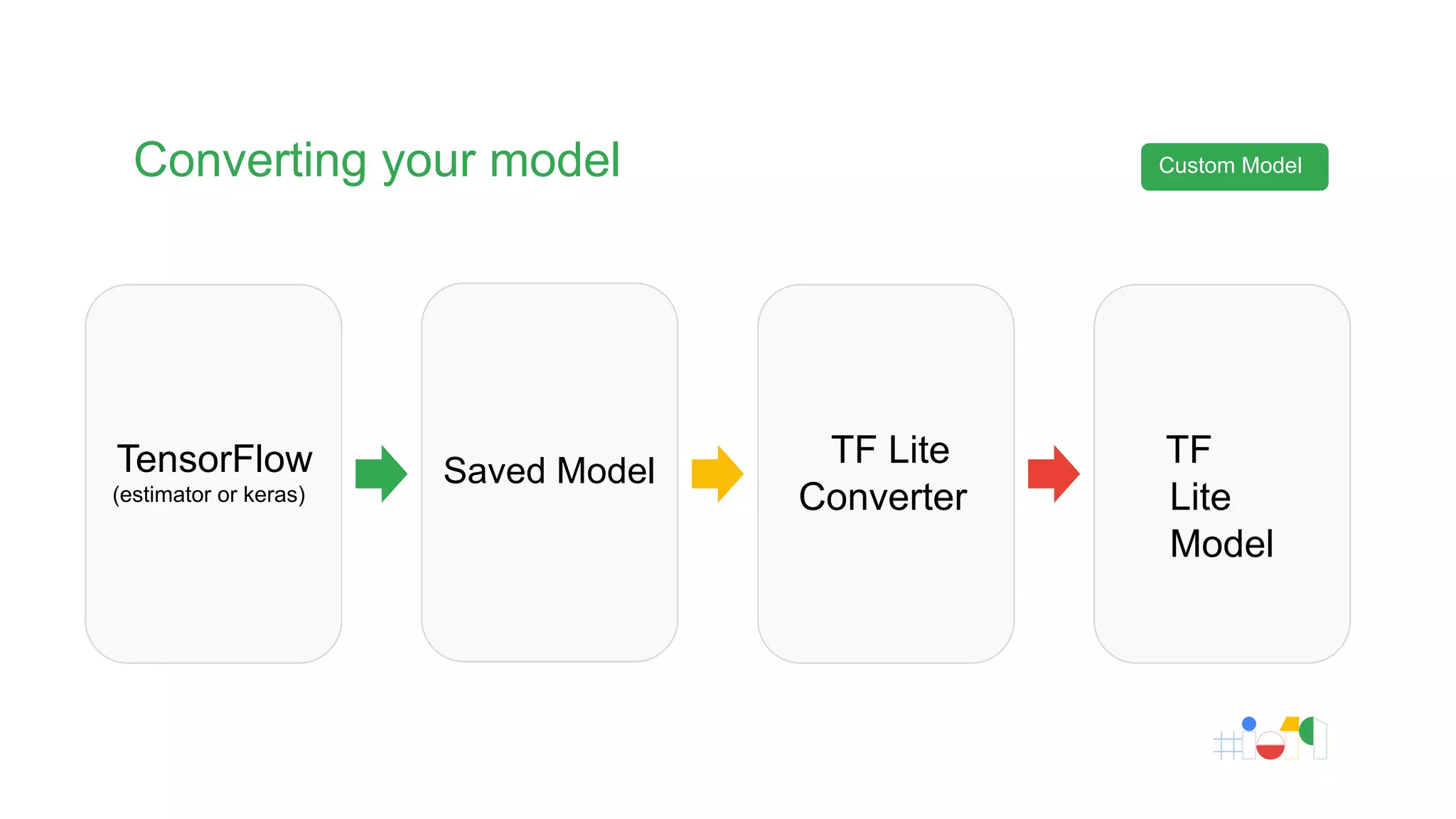

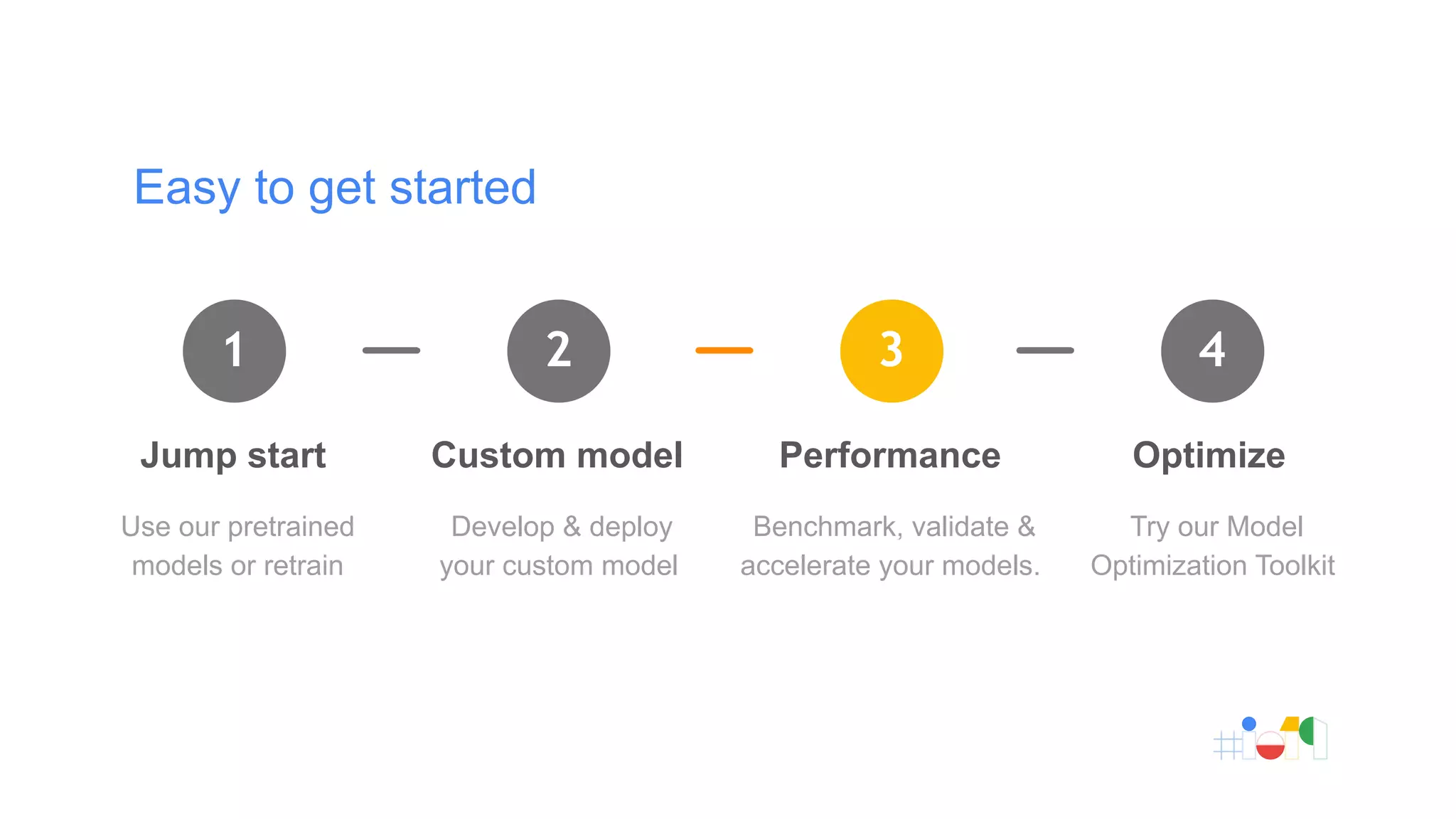

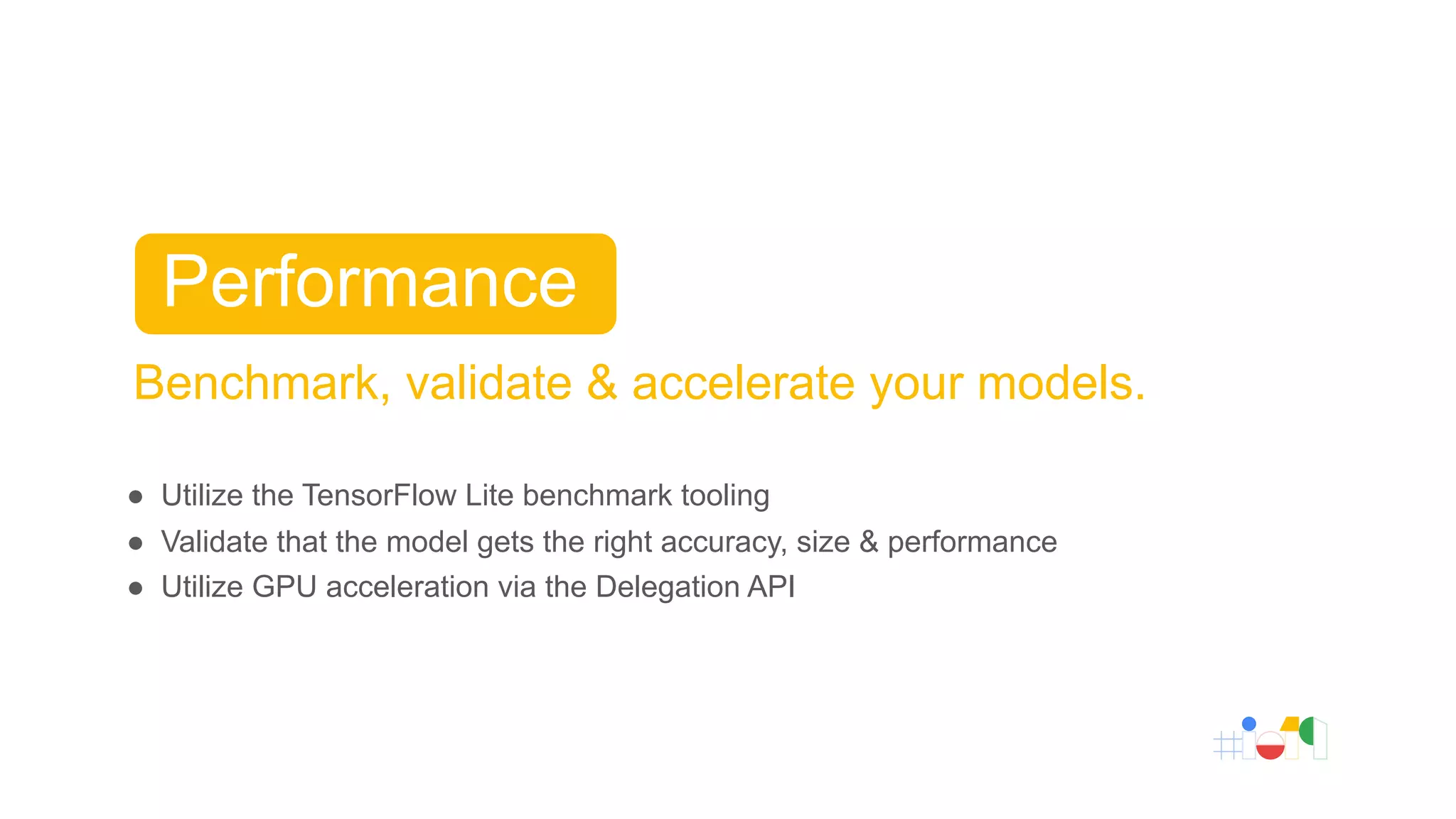

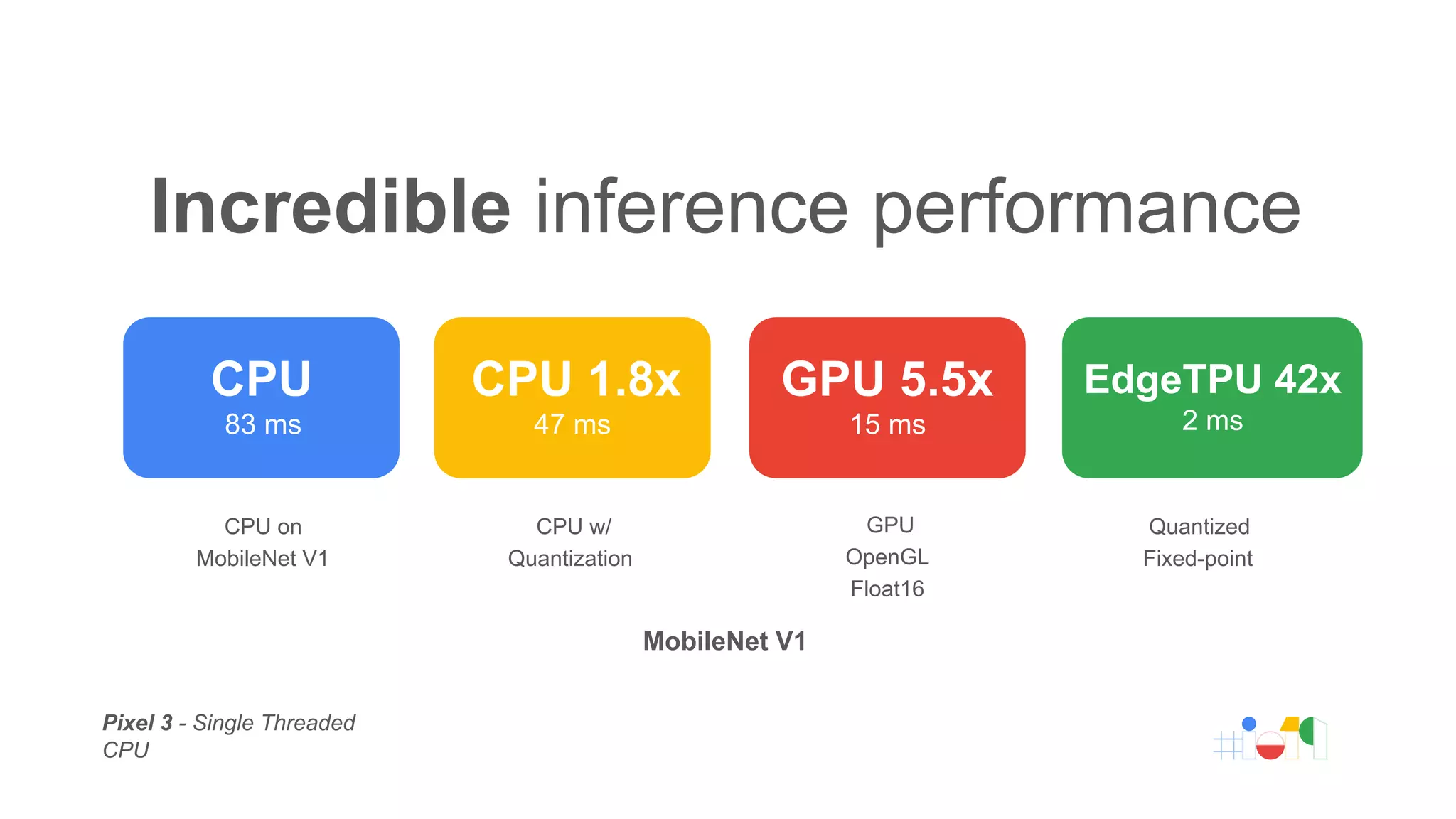

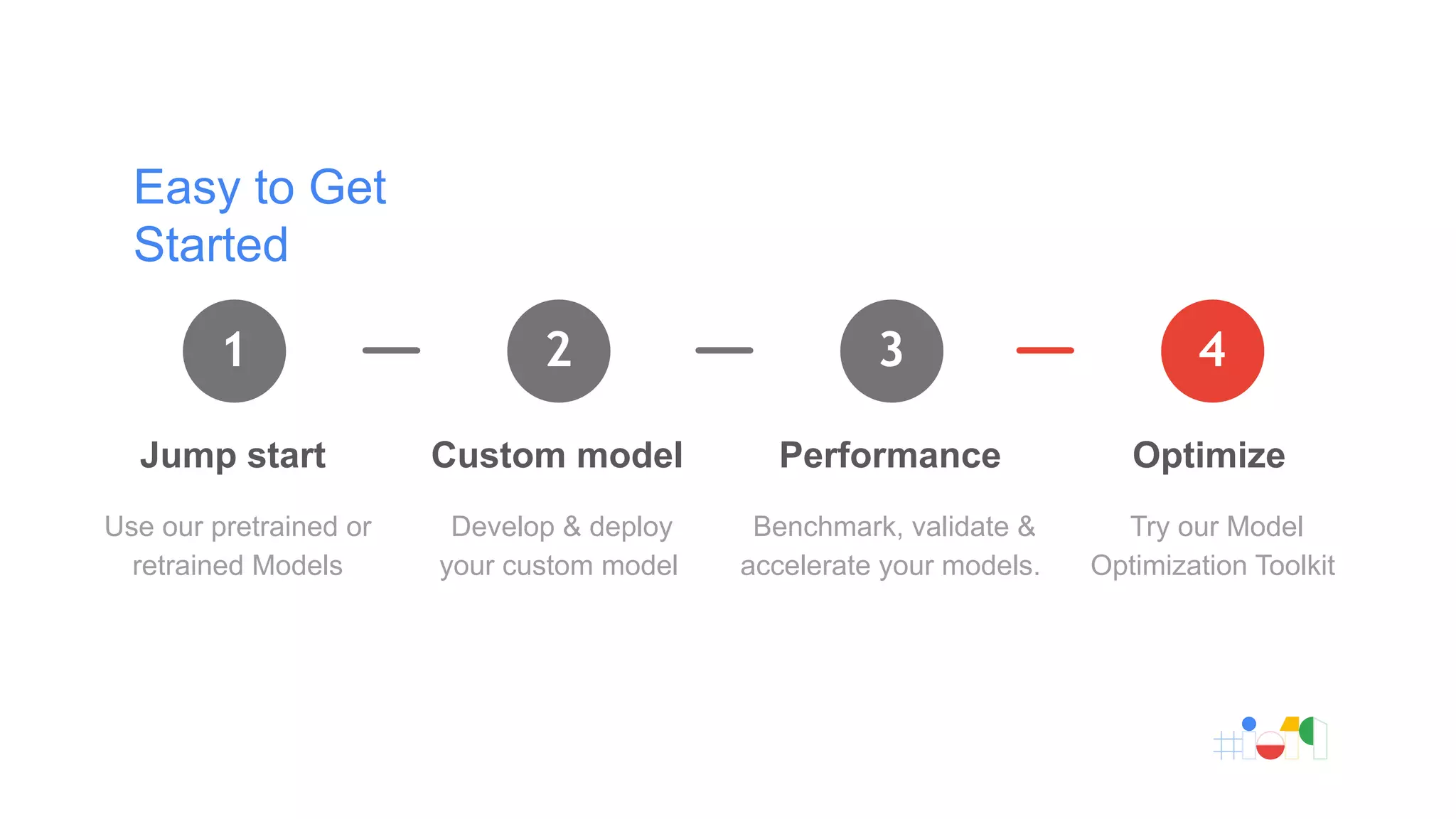

TensorFlow Lite is a machine learning framework for deploying models on mobile and IoT devices. Running inference on the device itself (edge computing) lowers latency and keeps resource consumption low. It supports applications such as image recognition and voice synthesis, and is used on more than 2 billion devices worldwide. The document outlines how to get started with TensorFlow Lite: using pretrained models, developing custom models, and optimizing performance.
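
The summary does not say which optimizations are meant. One common option in TensorFlow Lite is post-training quantization, which stores weights as 8-bit integers instead of 32-bit floats. The sketch below is a plain-Python illustration of the underlying affine mapping, `real = scale * (q - zero_point)`. It is not the TensorFlow Lite implementation: the helper names (`quantize_params`, `quantize`, `dequantize`) and the sample weights are hypothetical and chosen only for this example.

```python
def quantize_params(min_val, max_val, qmin=-128, qmax=127):
    """Compute (scale, zero_point) for an affine int8 mapping (illustrative helper)."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    min_val = min(min_val, 0.0)
    max_val = max(max_val, 0.0)
    scale = (max_val - min_val) / (qmax - qmin)
    if scale == 0.0:
        # All values are zero; any positive scale works.
        return 1.0, 0
    zero_point = int(round(qmin - min_val / scale))
    # Keep the zero point inside the integer range.
    zero_point = max(qmin, min(qmax, zero_point))
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers in [qmin, qmax]."""
    return [
        max(qmin, min(qmax, int(round(v / scale)) + zero_point))
        for v in values
    ]


def dequantize(qvalues, scale, zero_point):
    """Map integers back to approximate floats: real = scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in qvalues]


# Hypothetical weights, used only to exercise the functions.
weights = [-0.5, -0.1, 0.0, 0.7, 1.5]
scale, zero_point = quantize_params(min(weights), max(weights))
q_weights = quantize(weights, scale, zero_point)
restored = dequantize(q_weights, scale, zero_point)

# Each restored value differs from the original by a small rounding error.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q_weights, max_error)
```

Each weight now fits in one byte instead of four, at the cost of a rounding error of roughly half a quantization step per value. Whether that trade-off is acceptable depends on the model and should be checked against its accuracy on real data.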