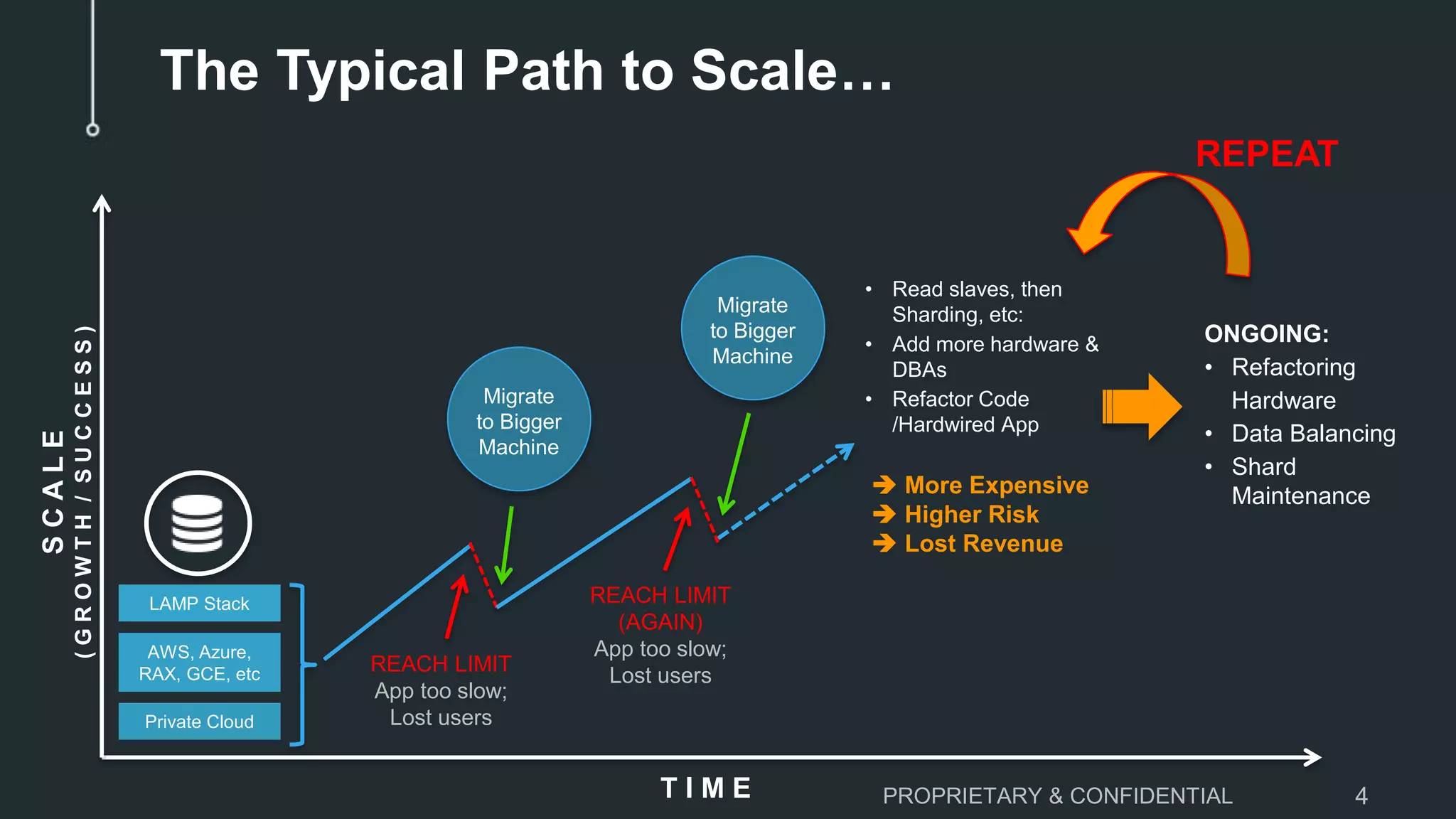

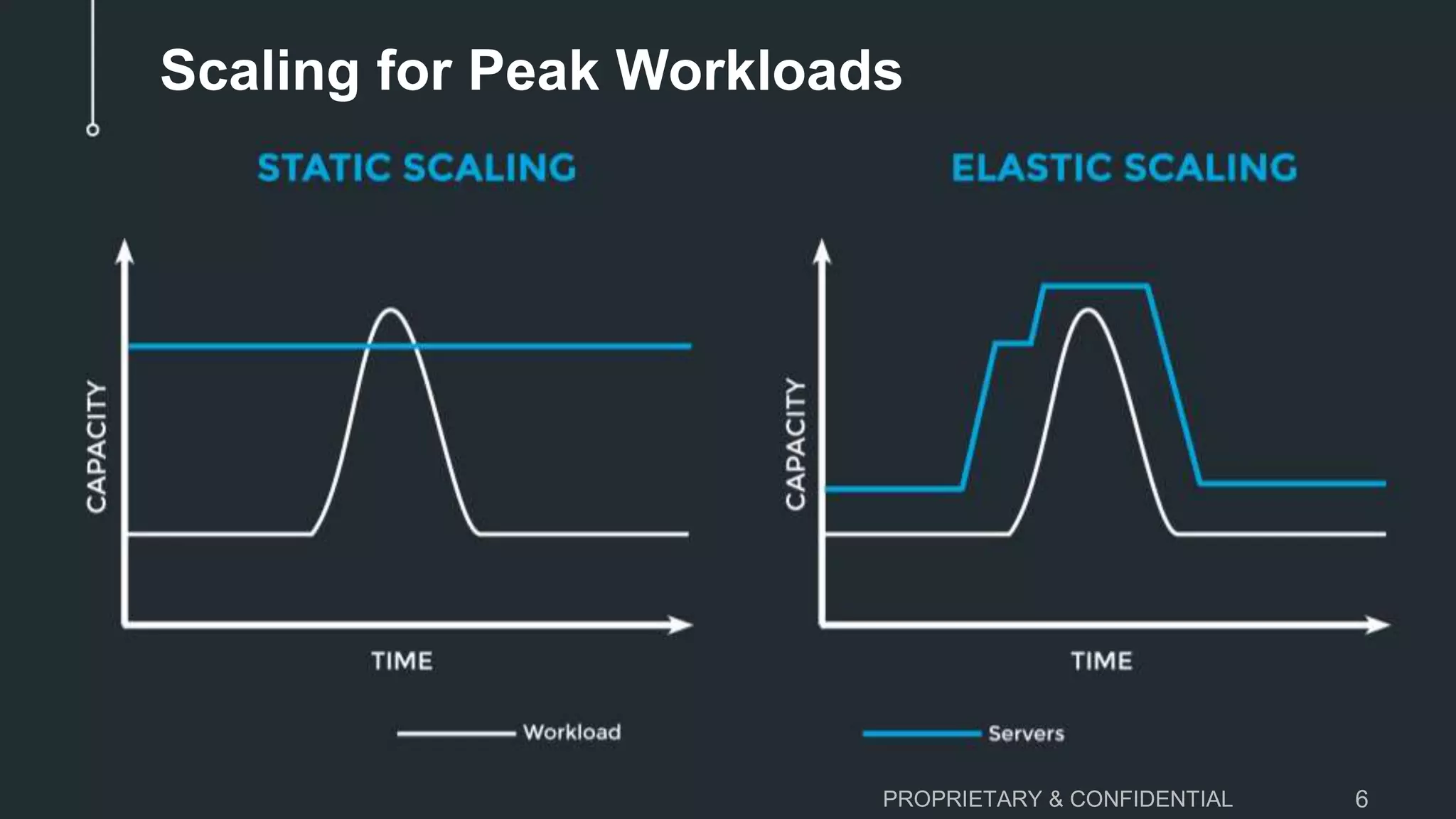

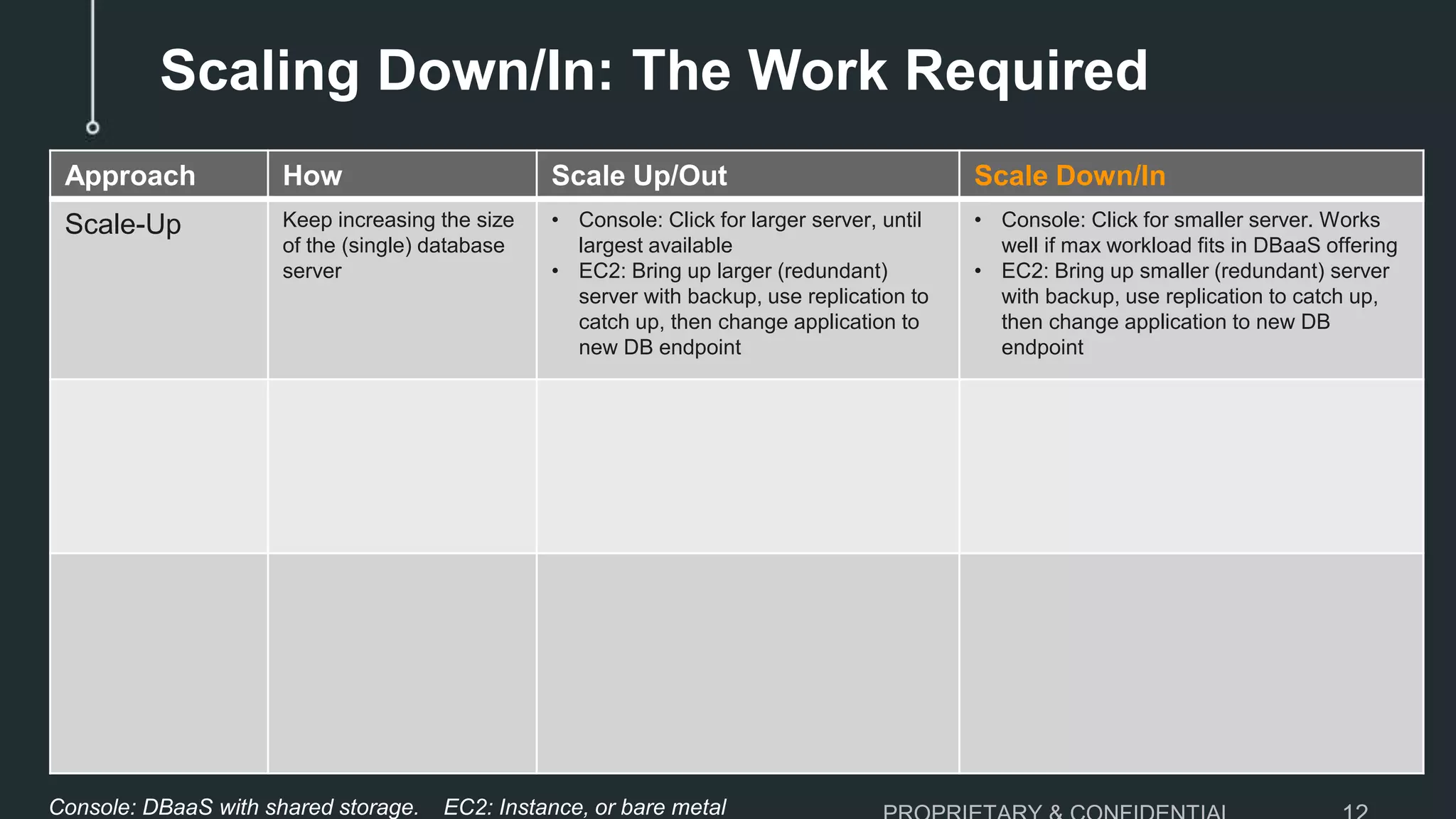

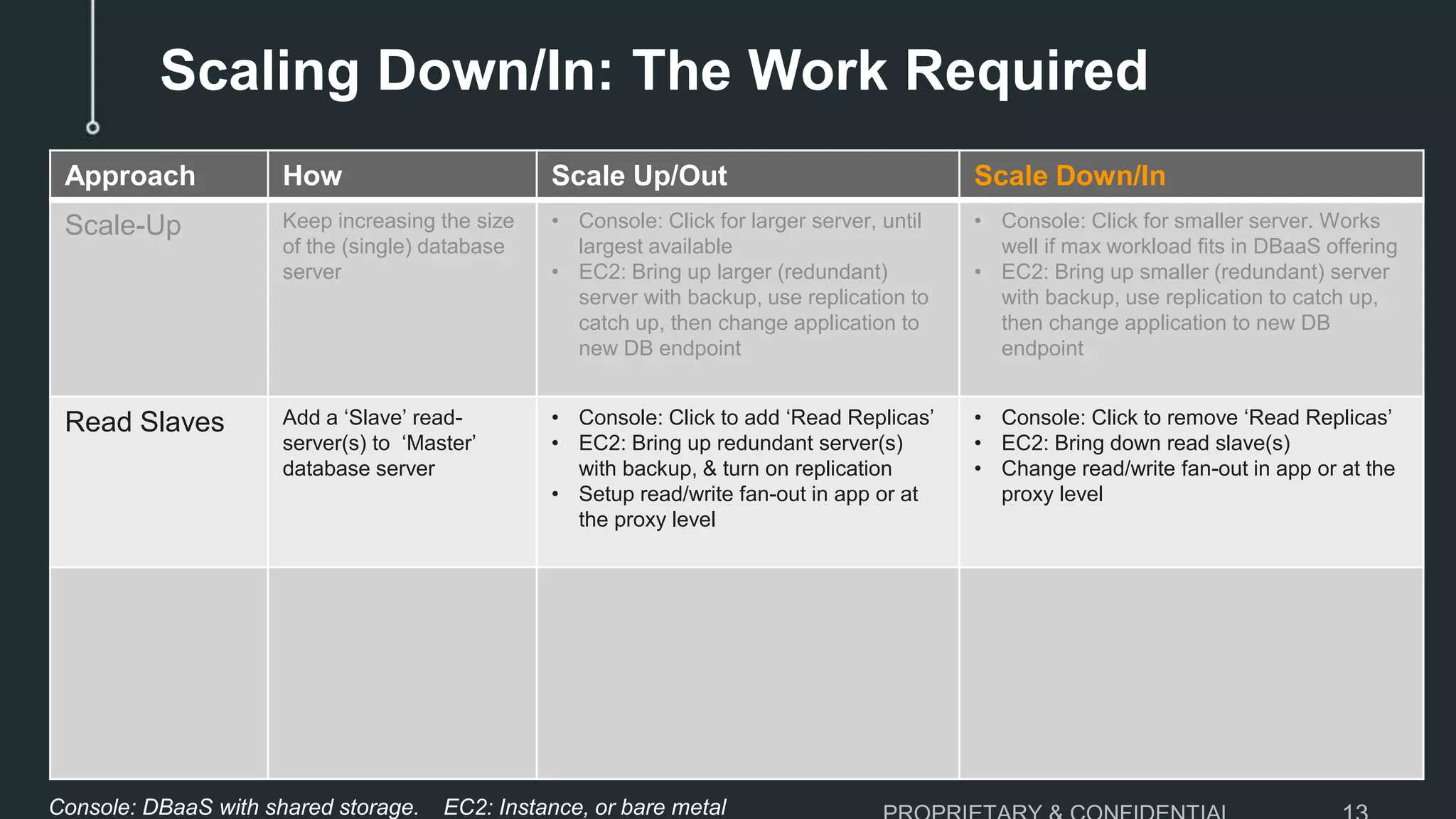

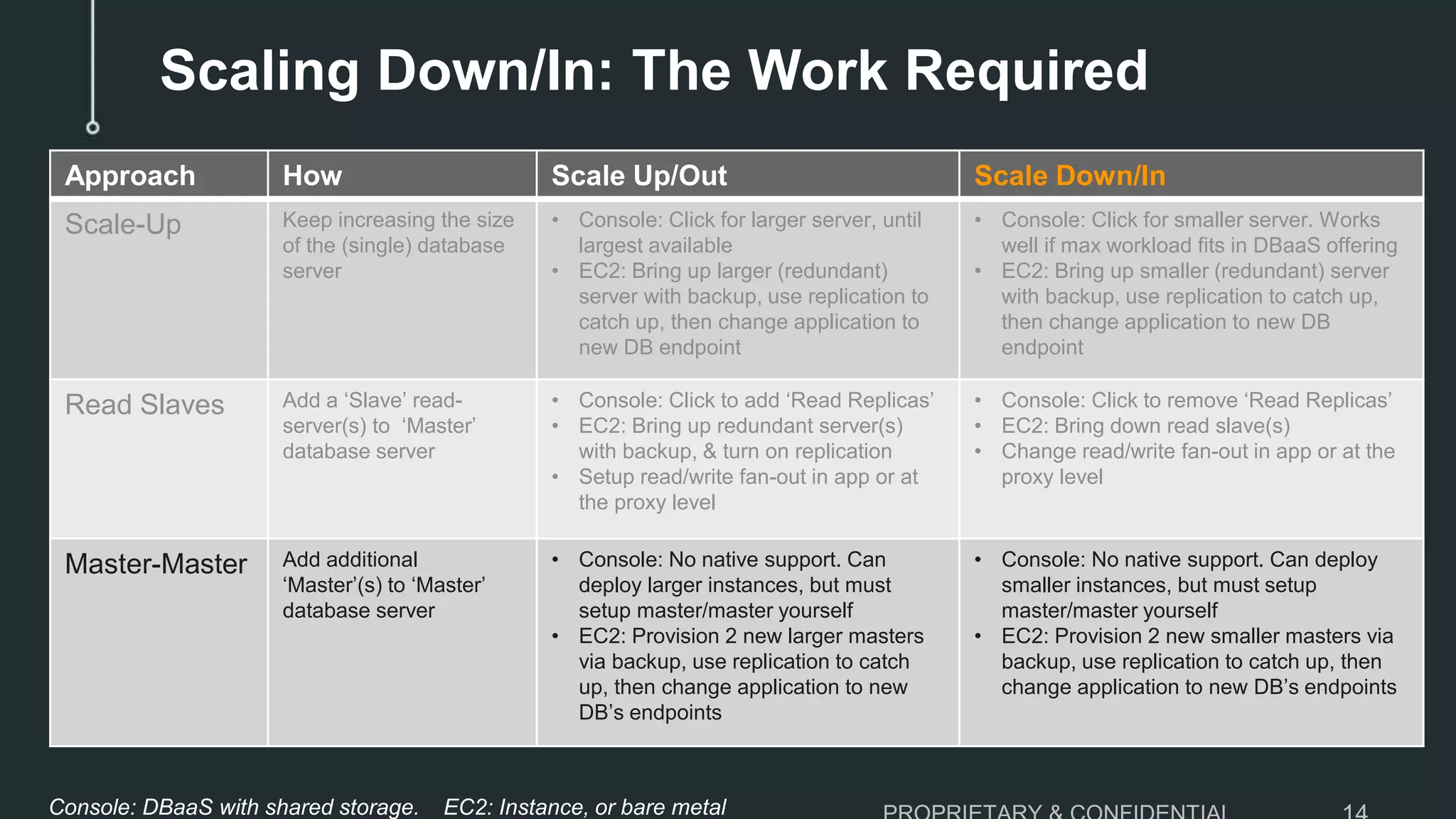

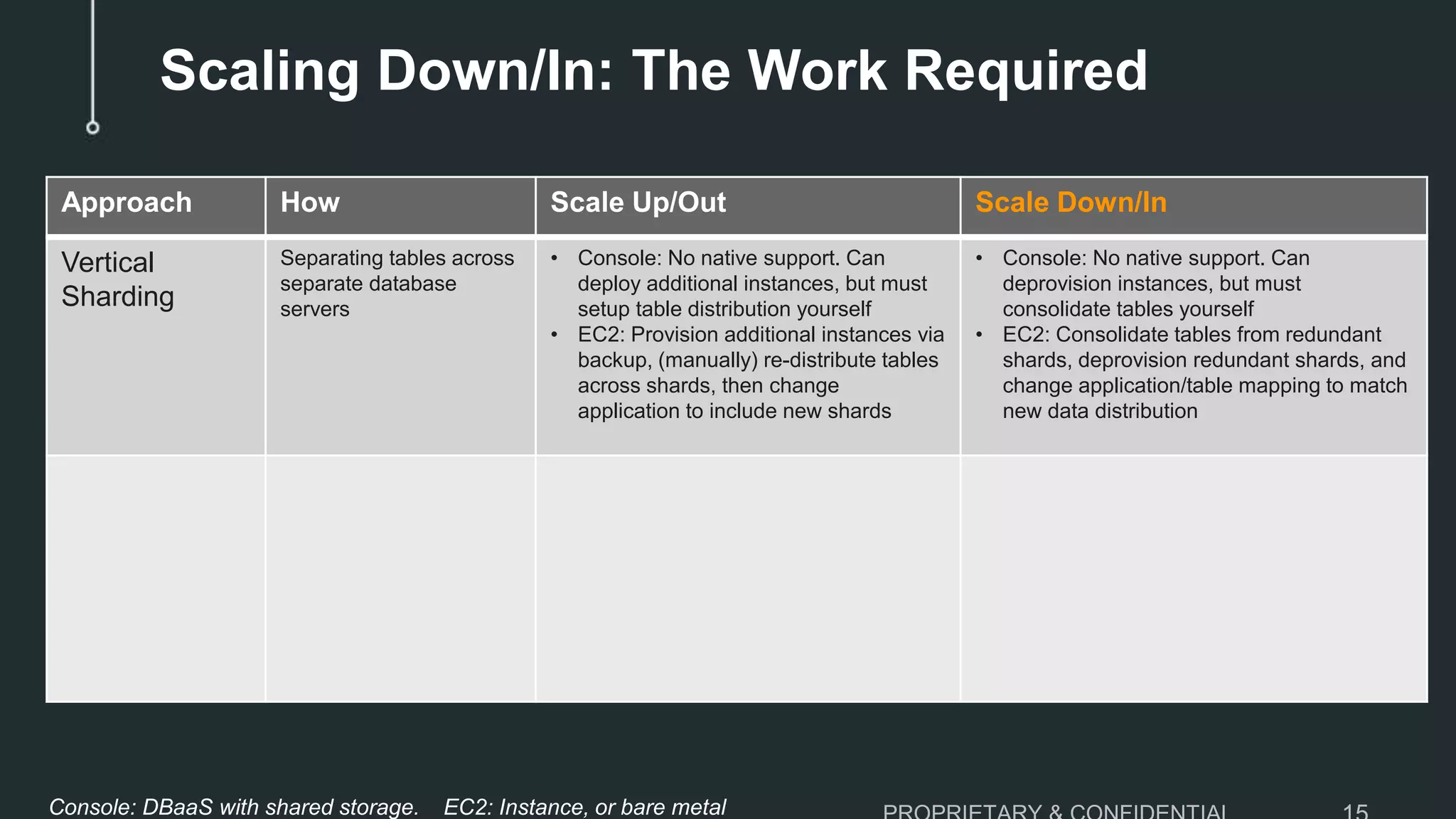

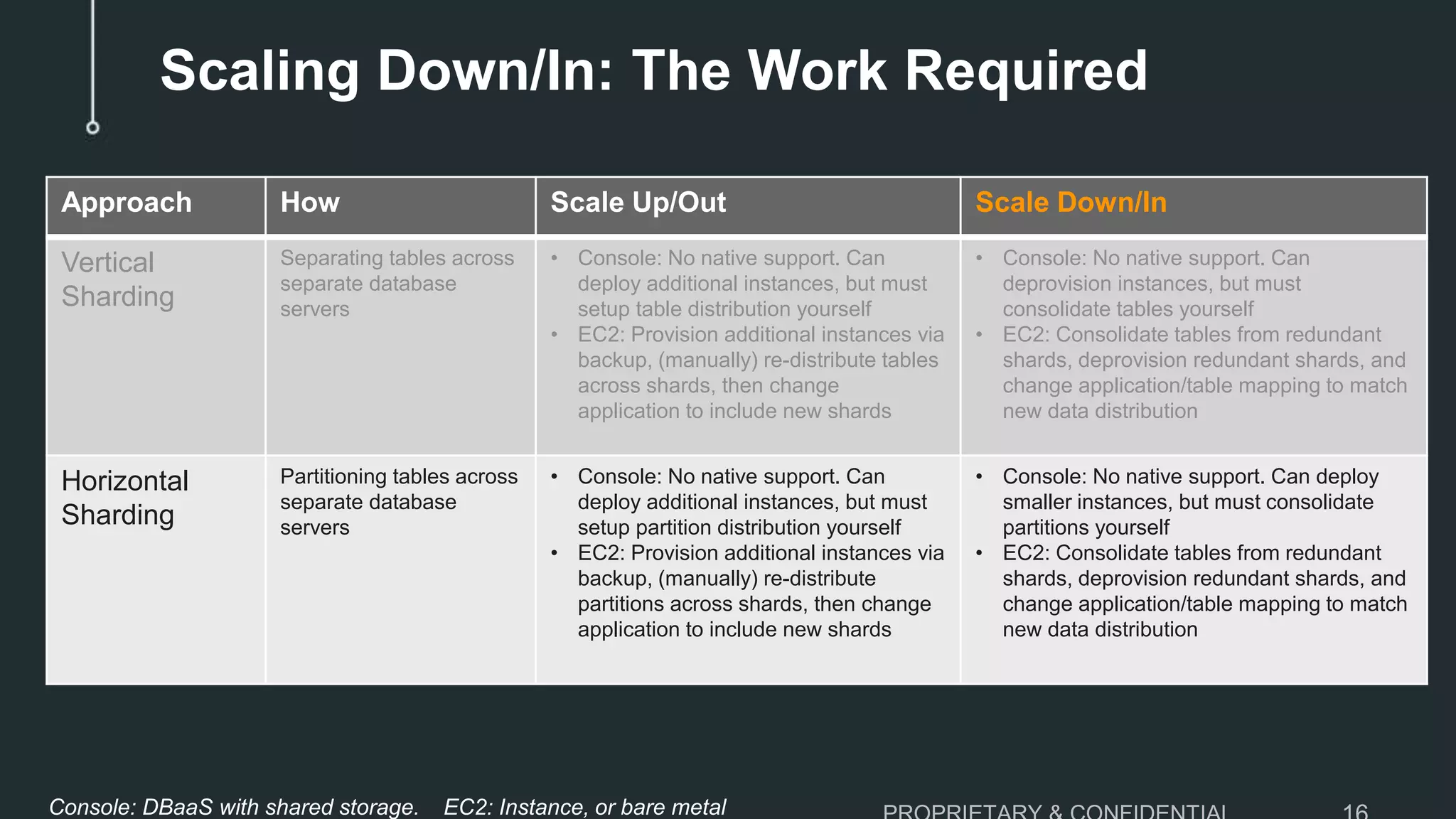

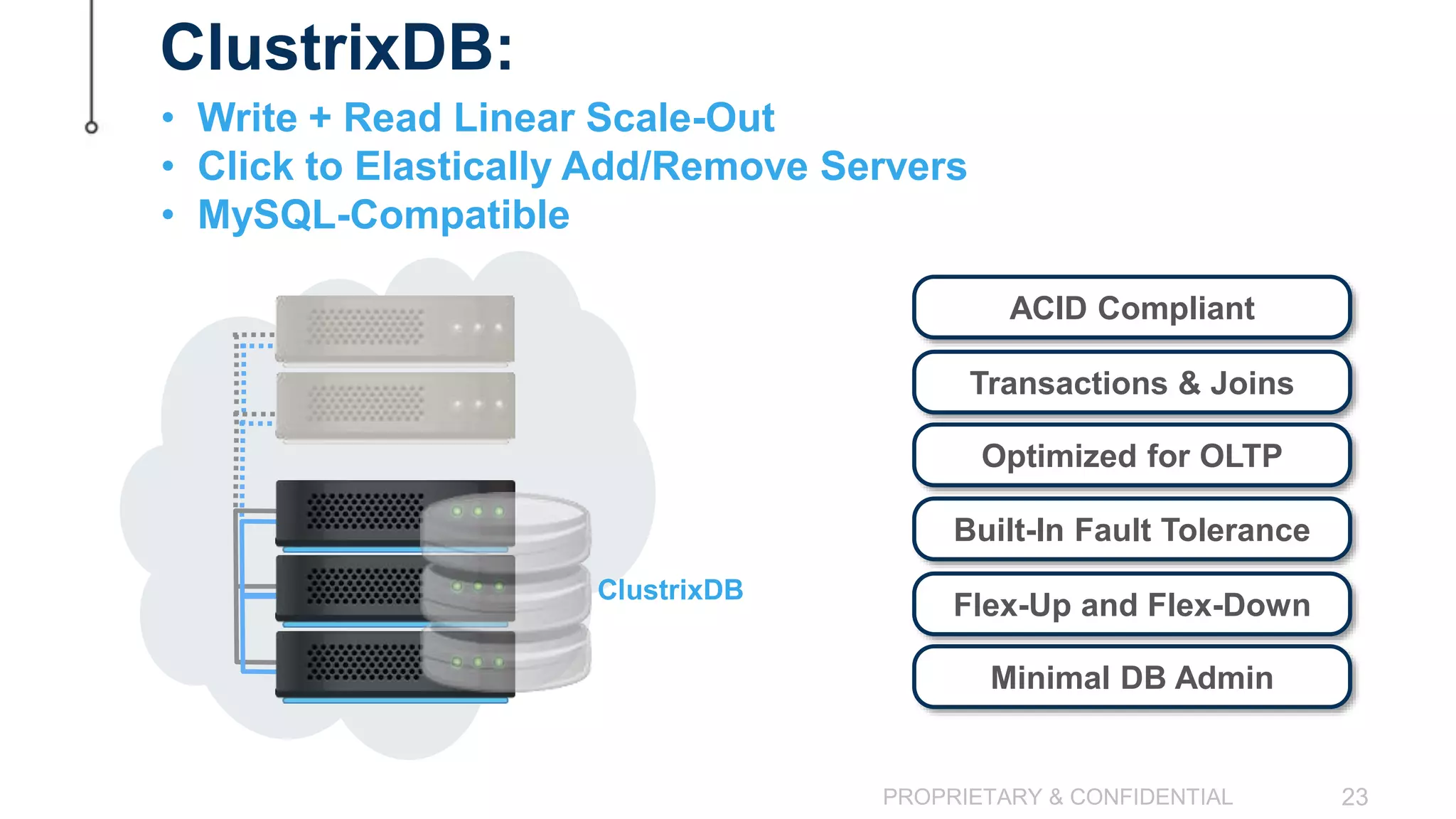

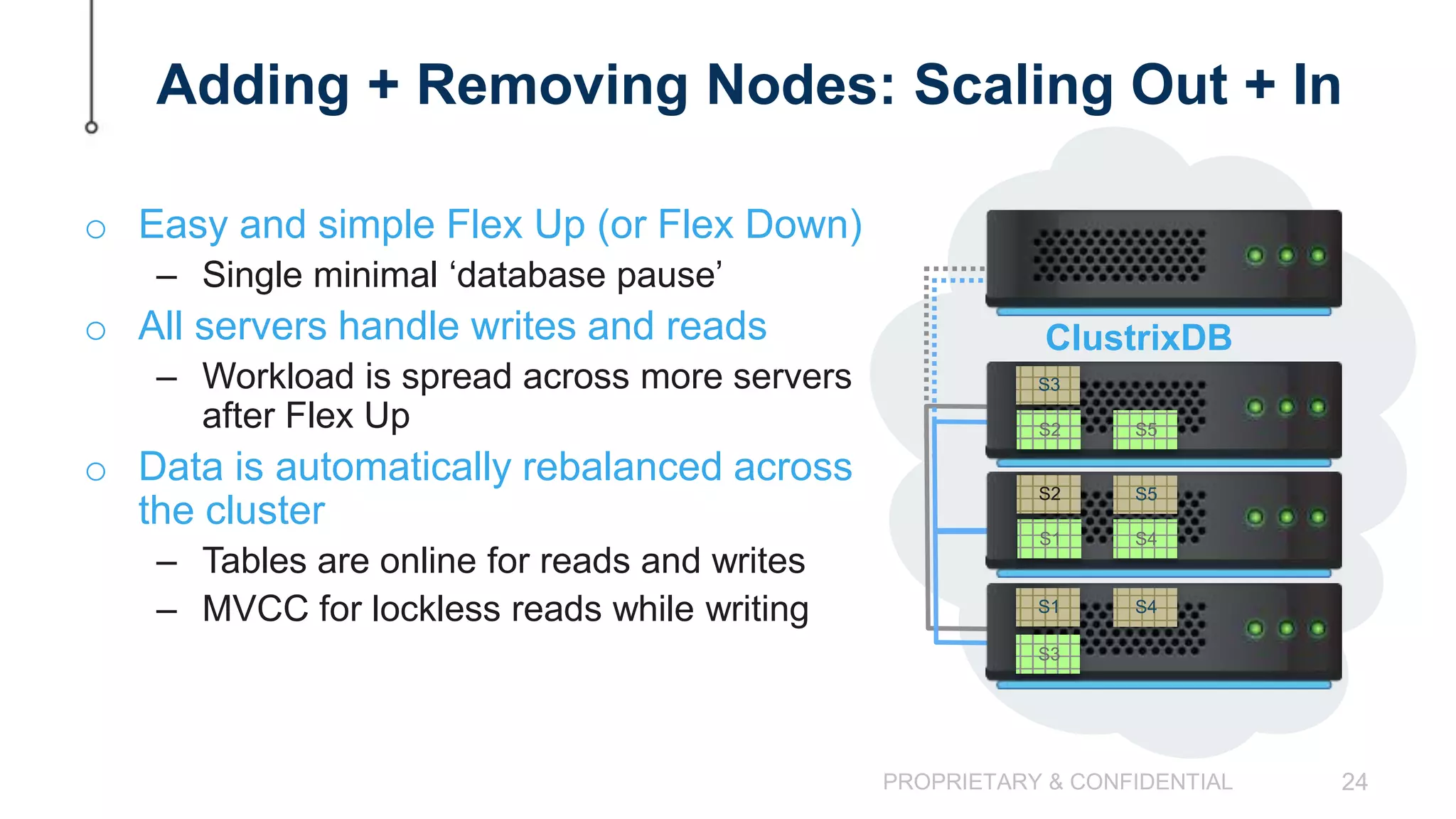

The document discusses the challenges of scaling down MySQL databases. It emphasizes managing resources efficiently so that an organization does not keep paying for capacity it needs only during peak workloads. It outlines several scaling strategies and describes how overprovisioning affects budgets and operational efficiency. Finally, it highlights the advantages of ClustrixDB over traditional MySQL solutions for flexible scaling, particularly when workloads and scaling requirements change over time.