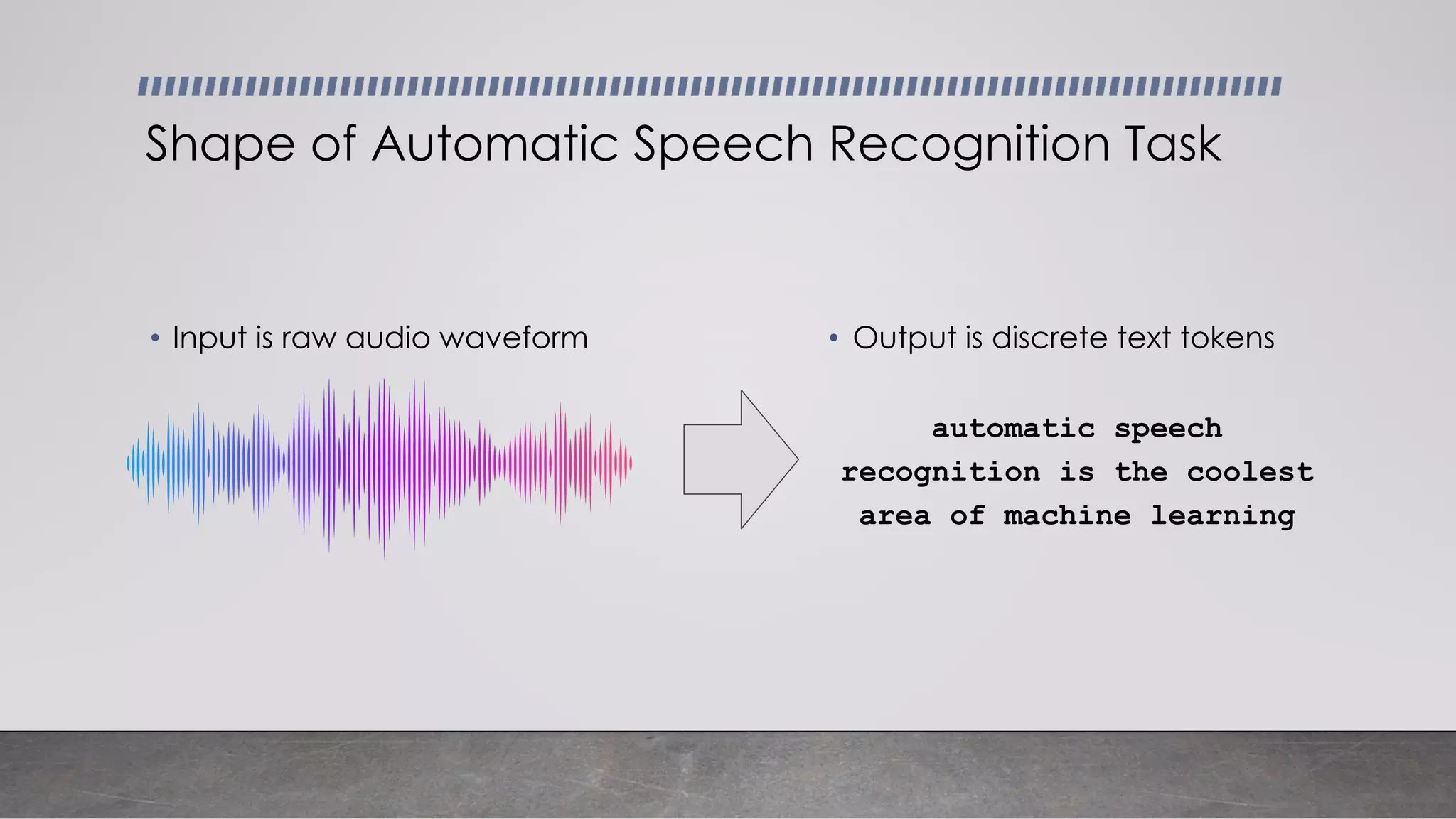

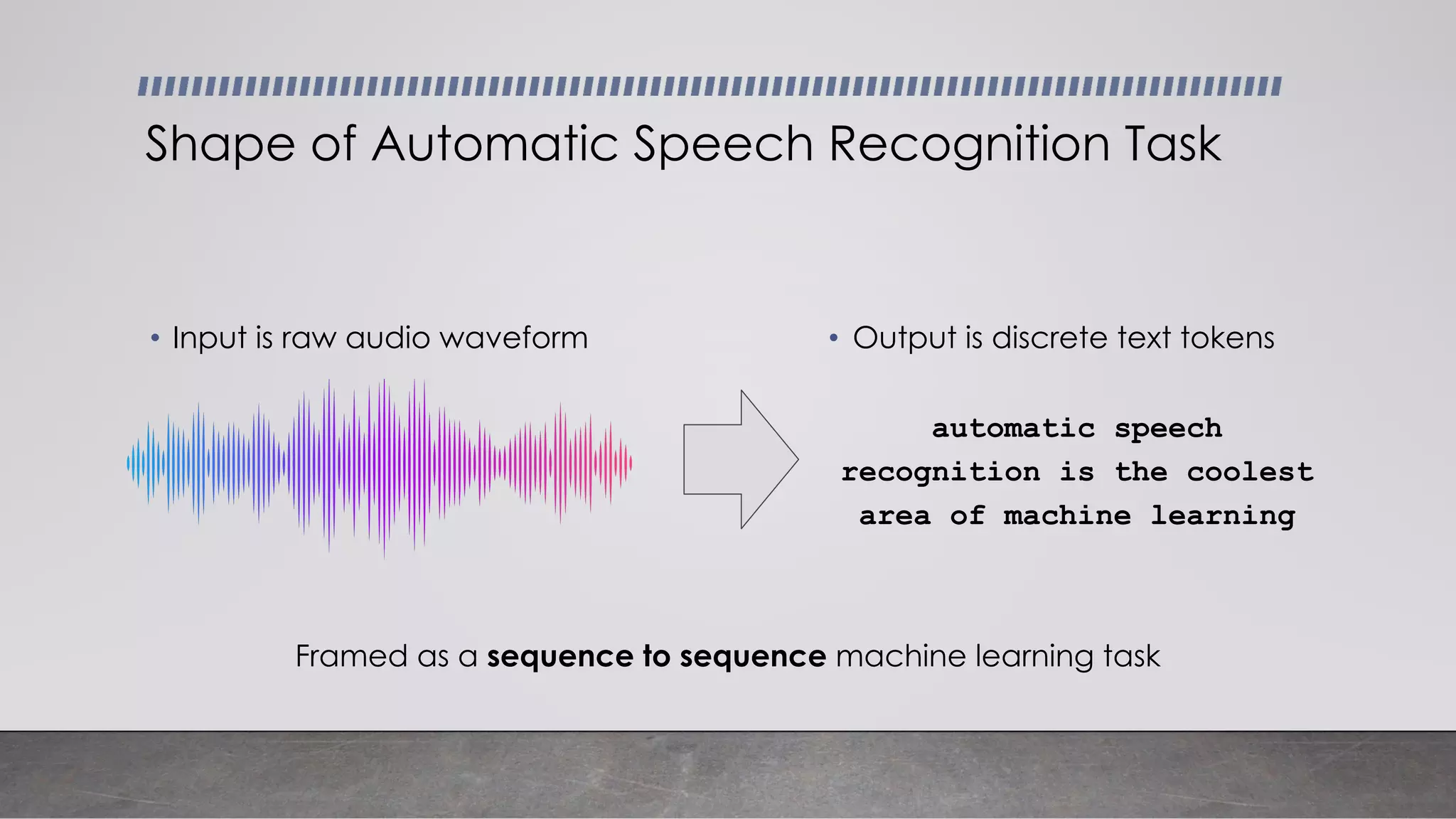

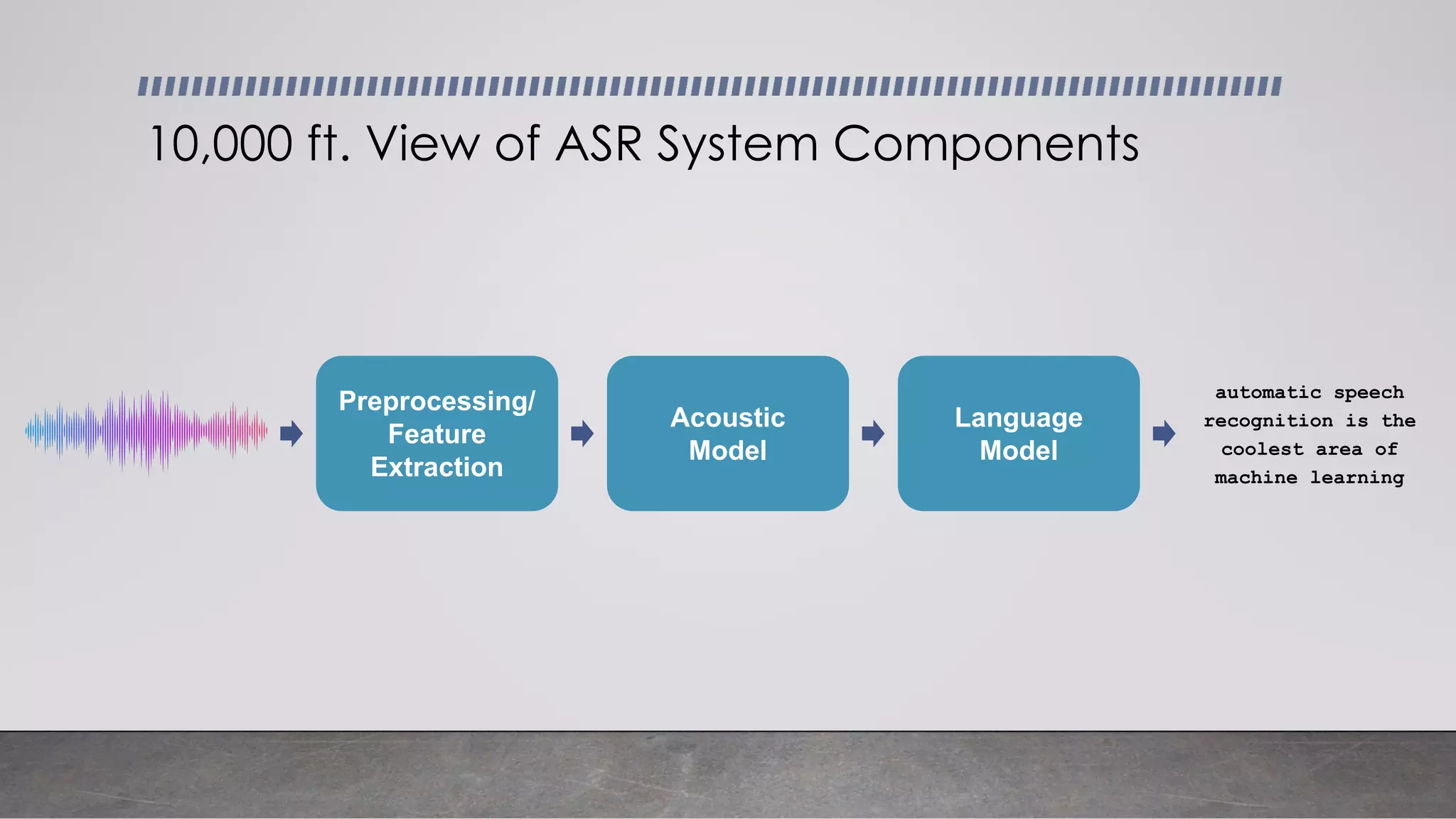

This document provides an overview of automatic speech recognition systems and their components. It discusses:

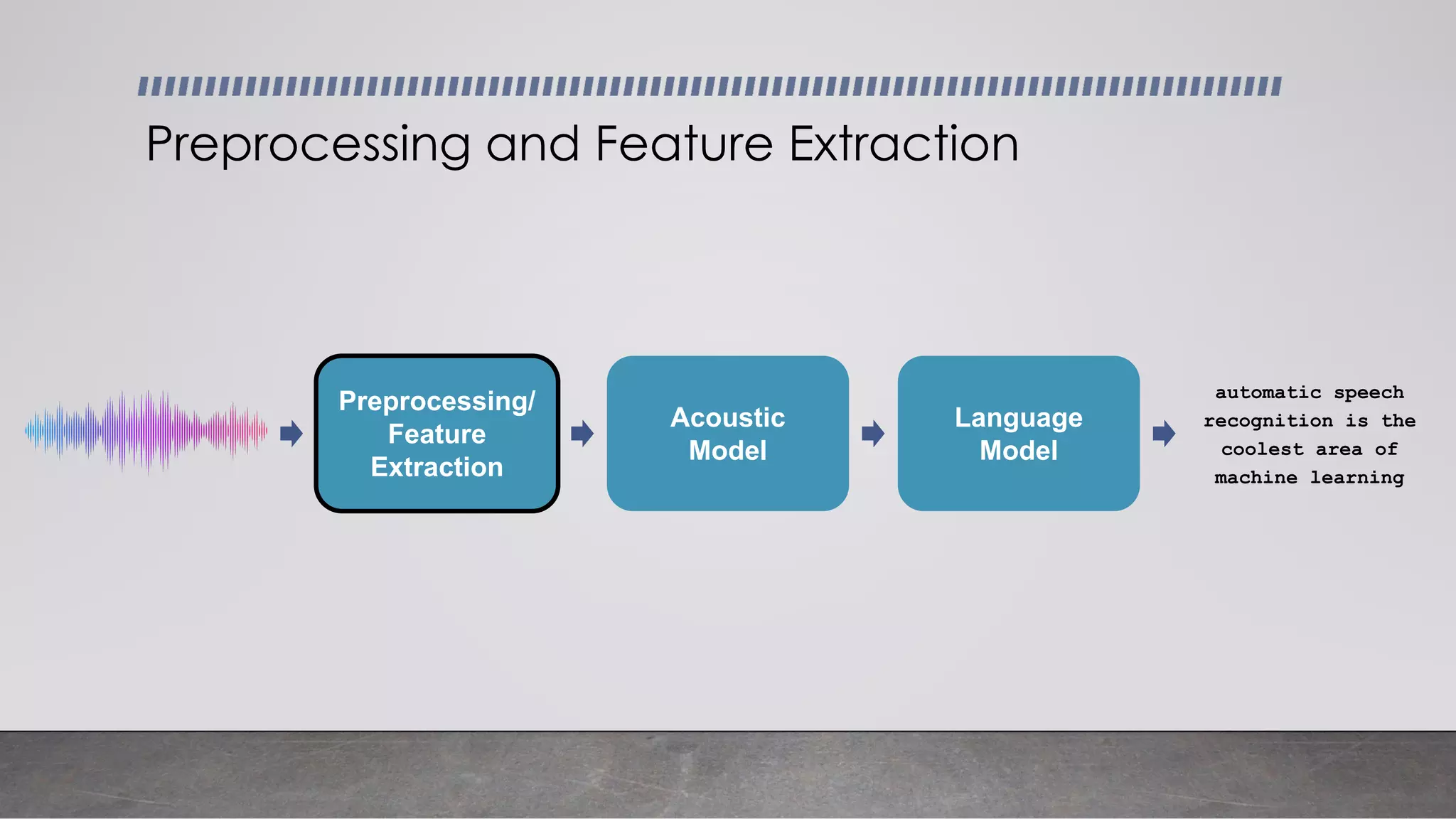

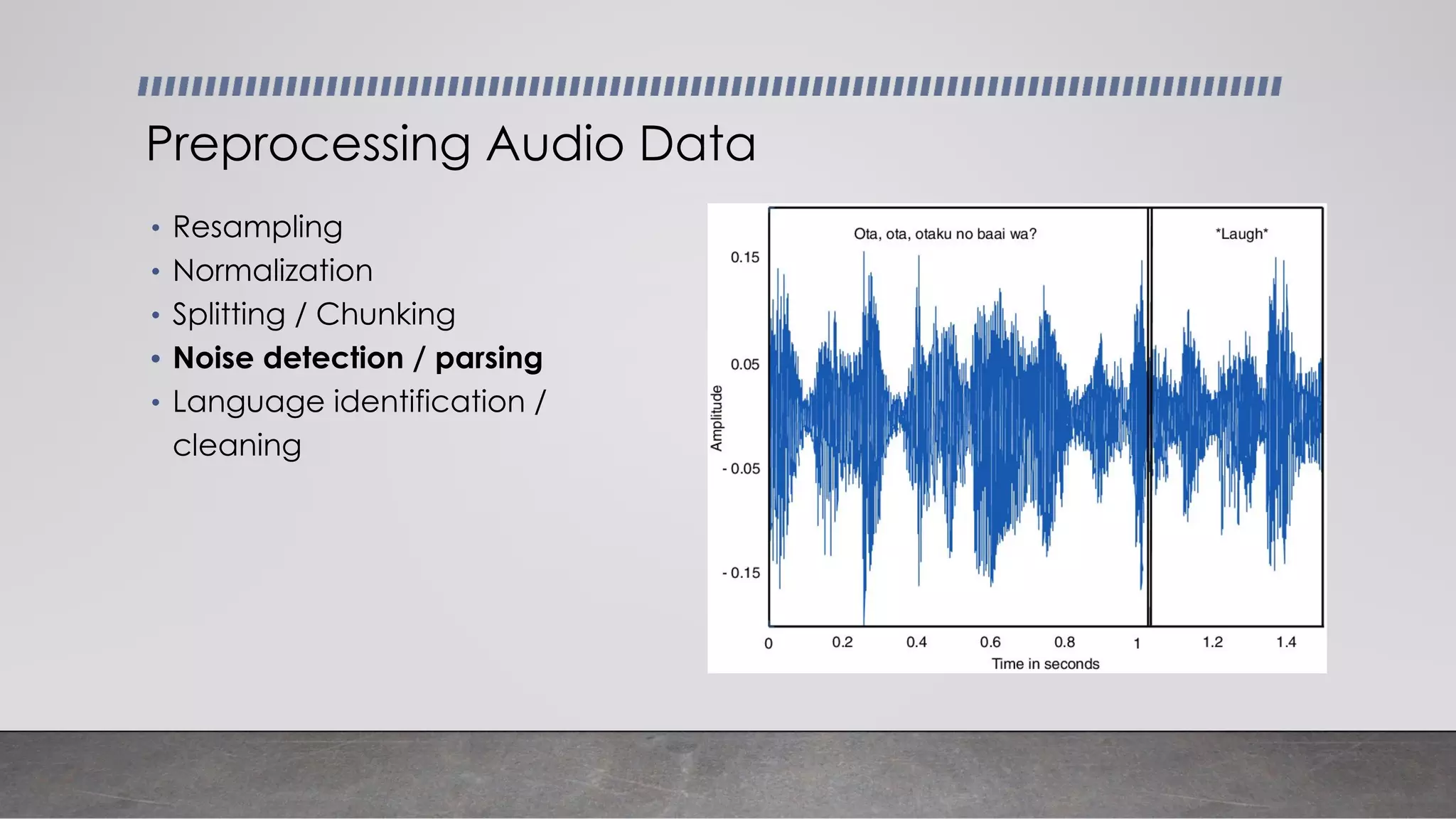

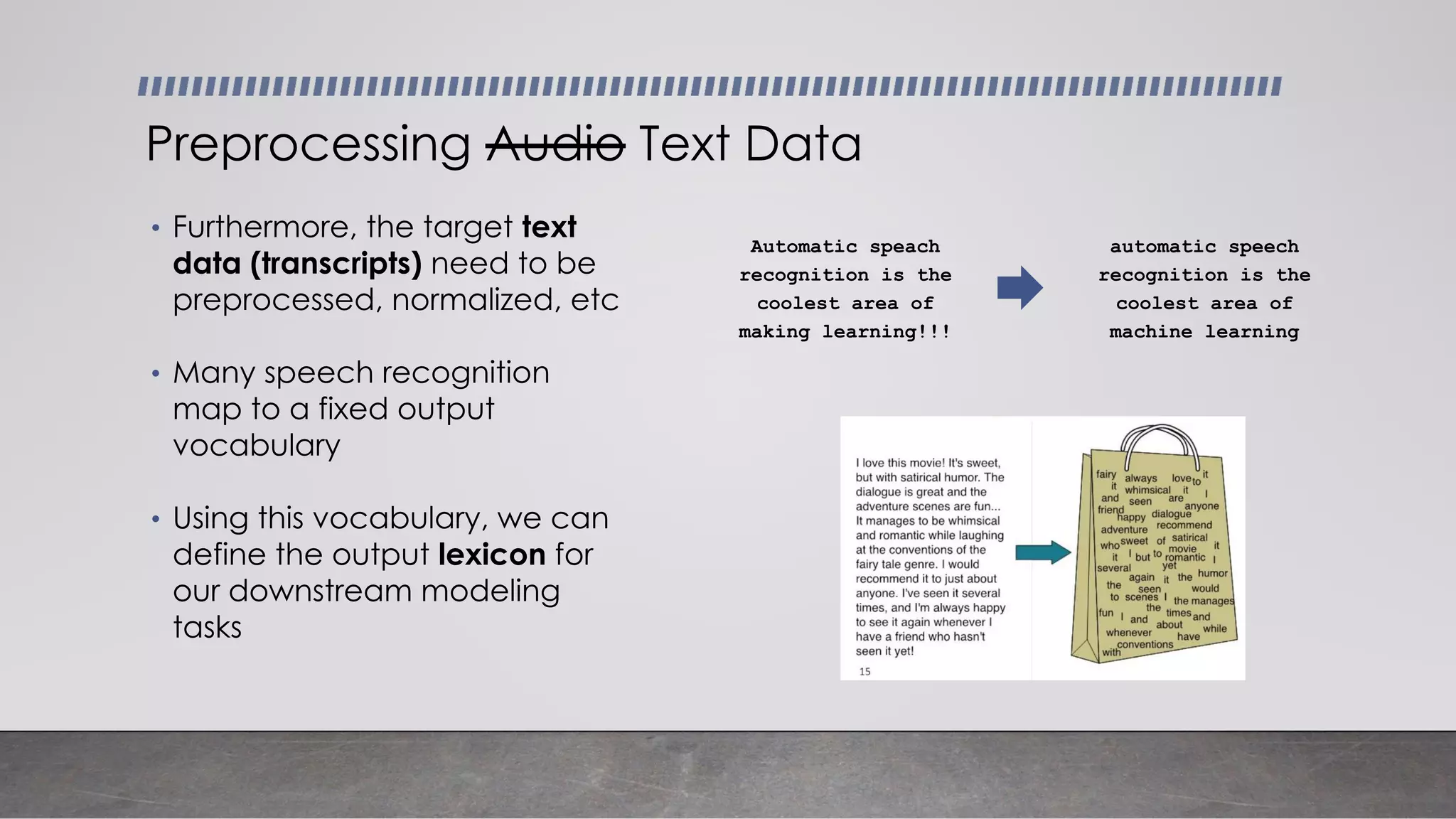

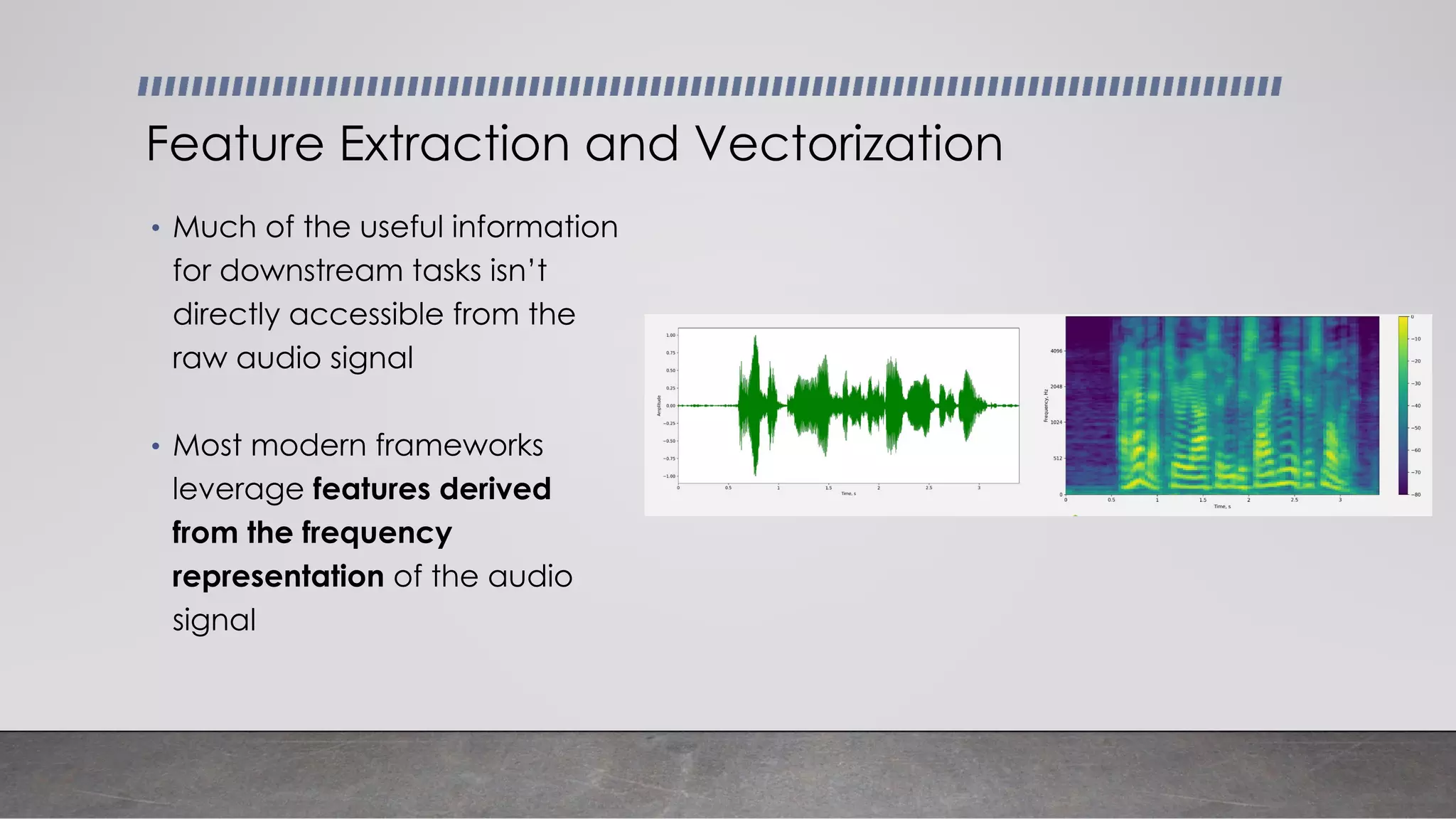

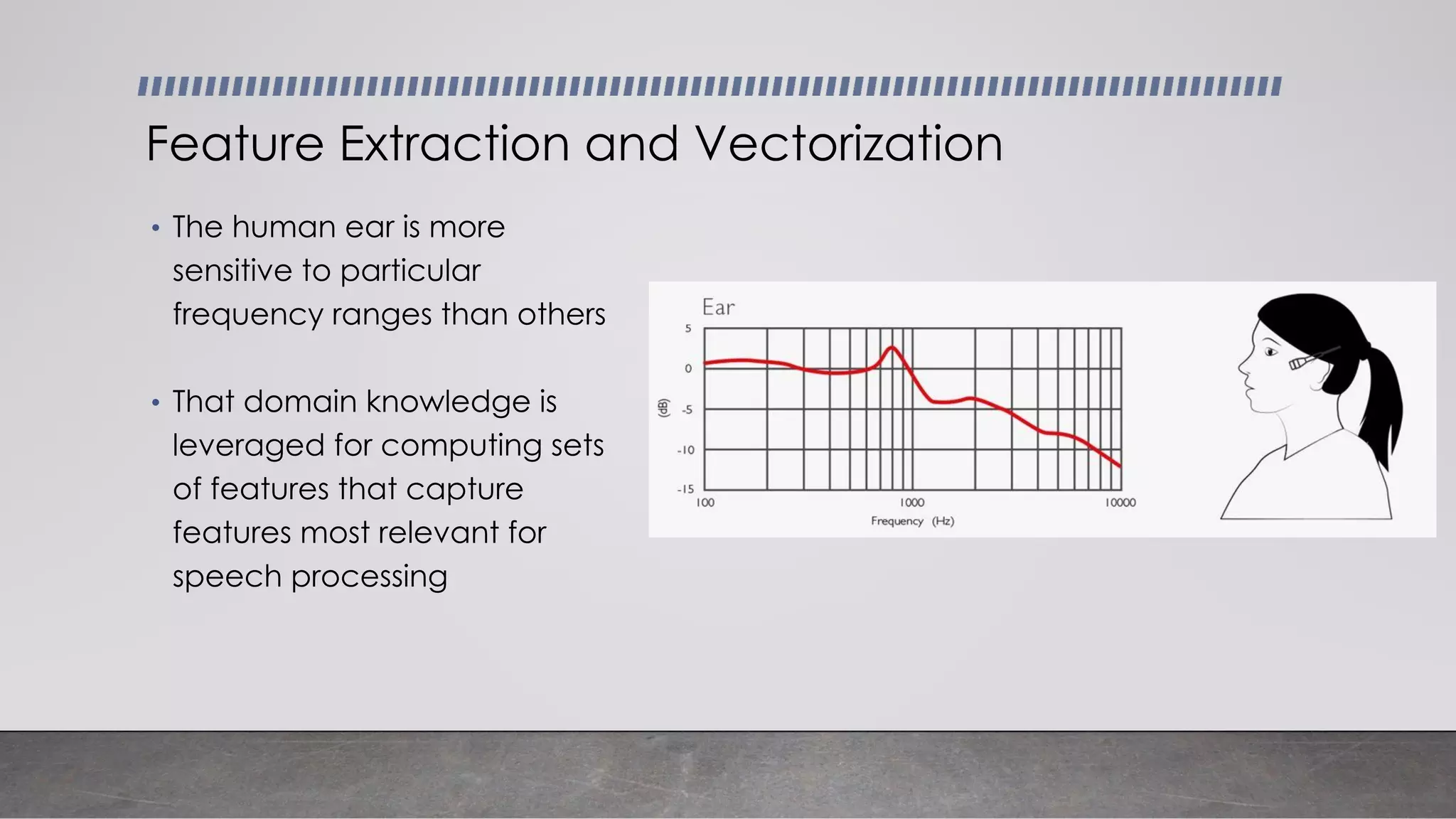

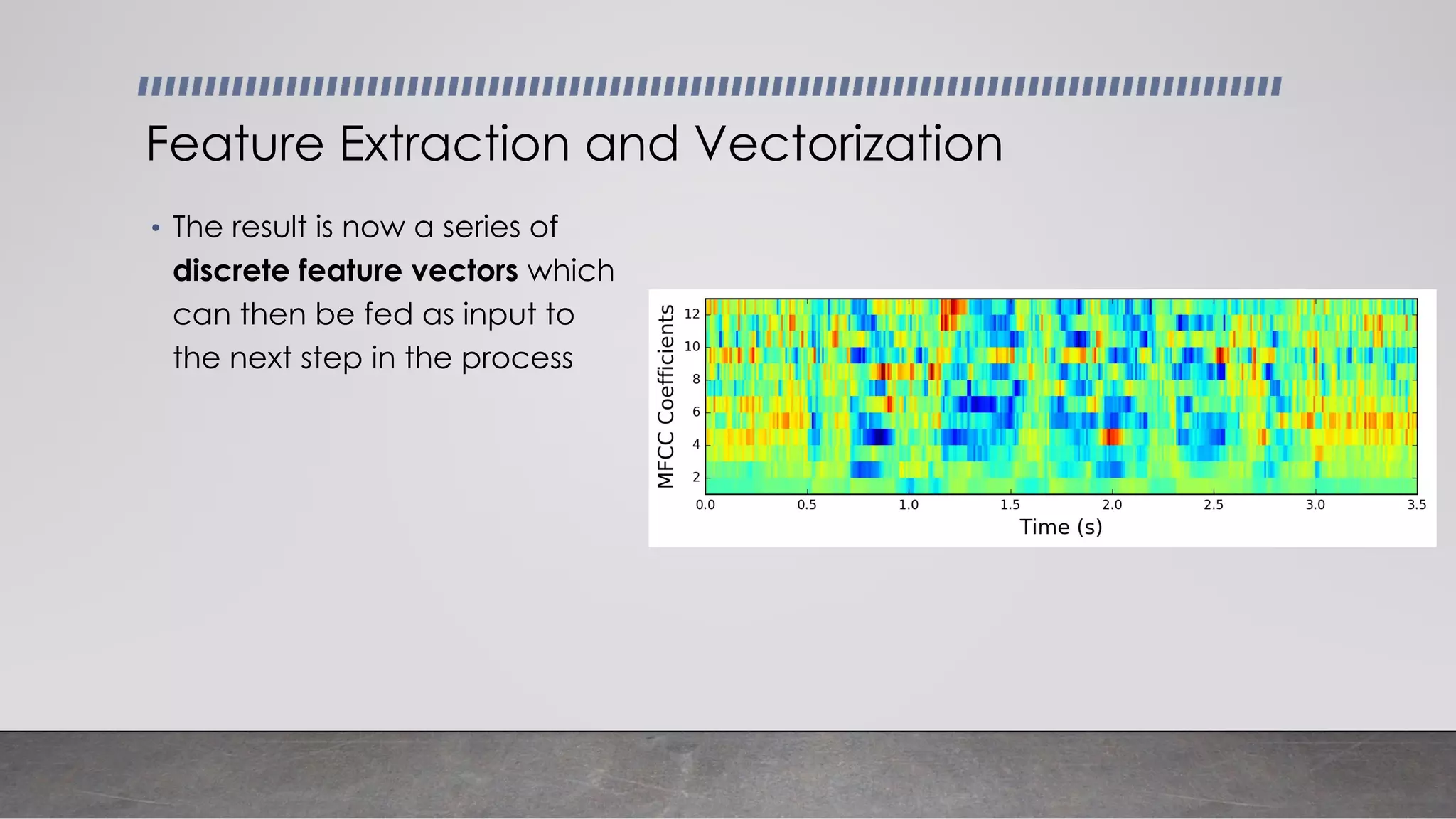

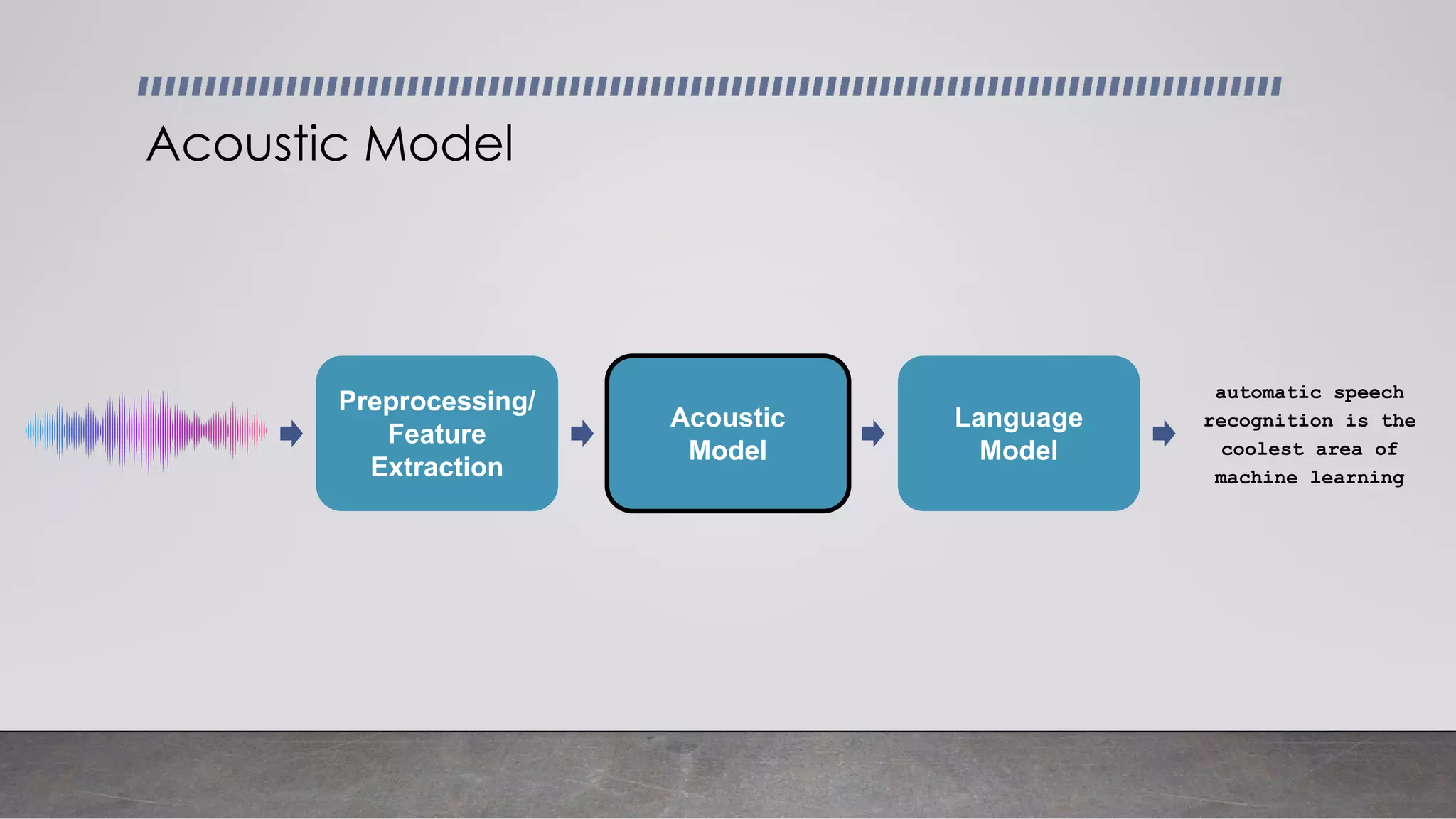

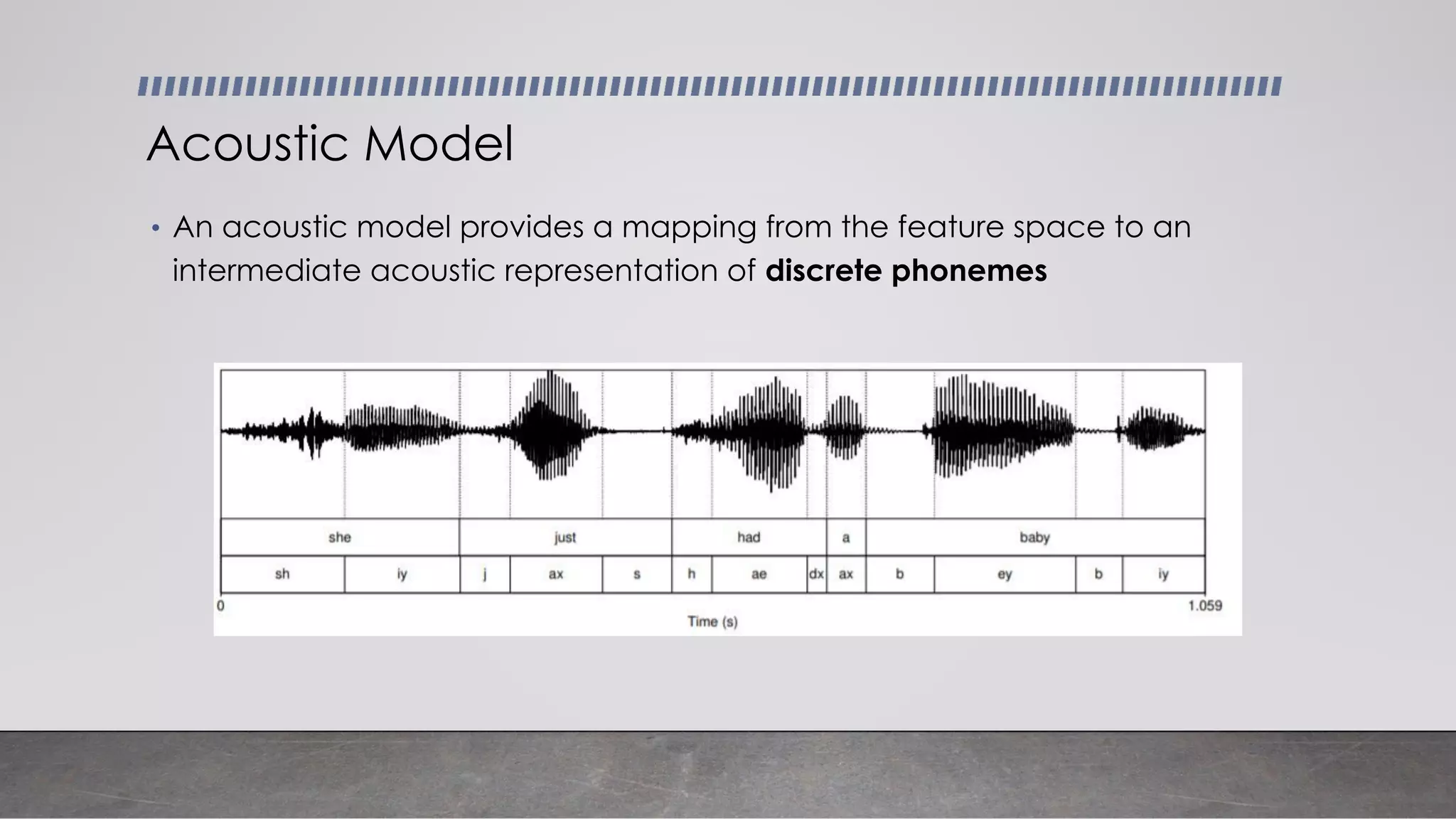

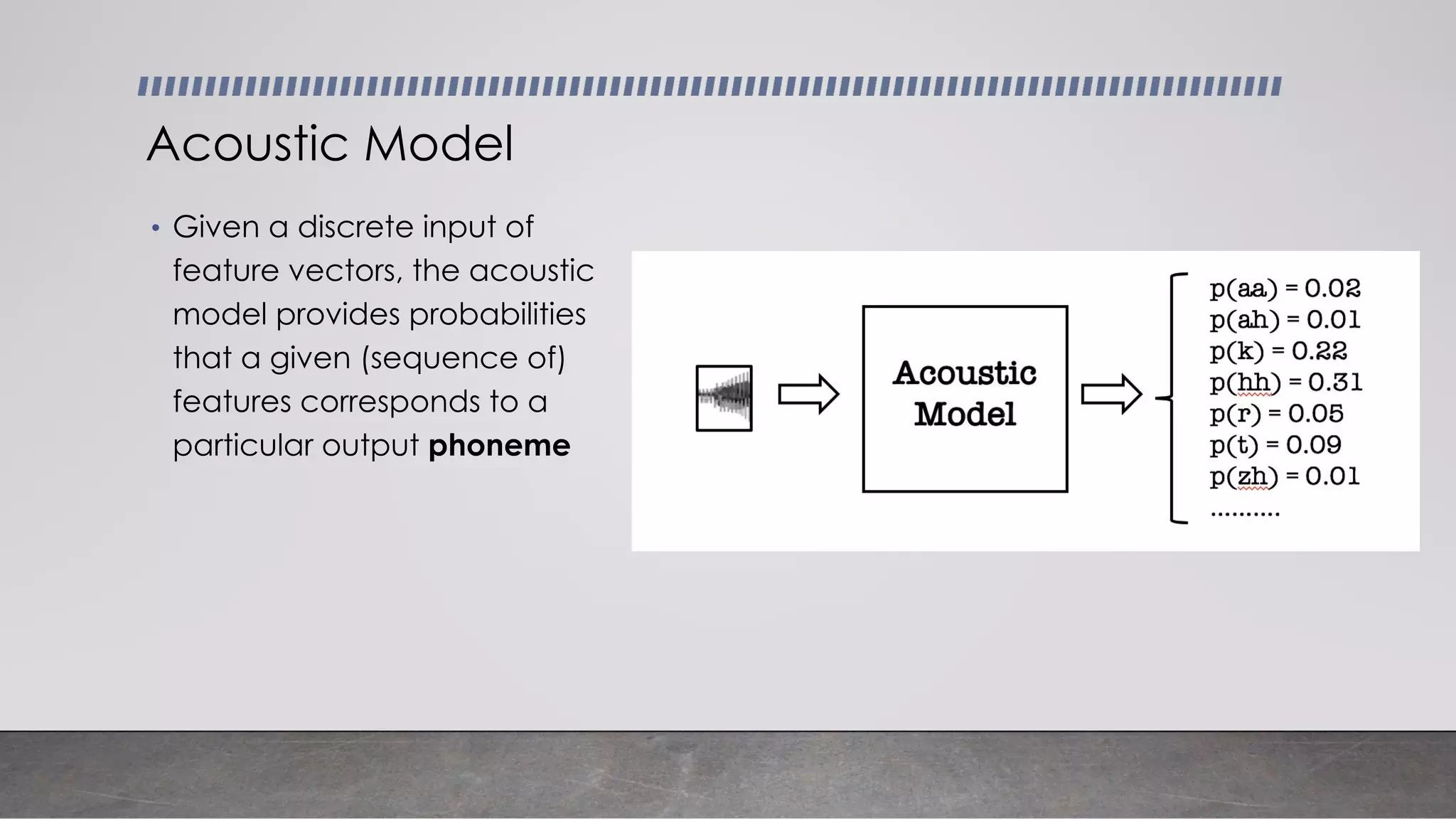

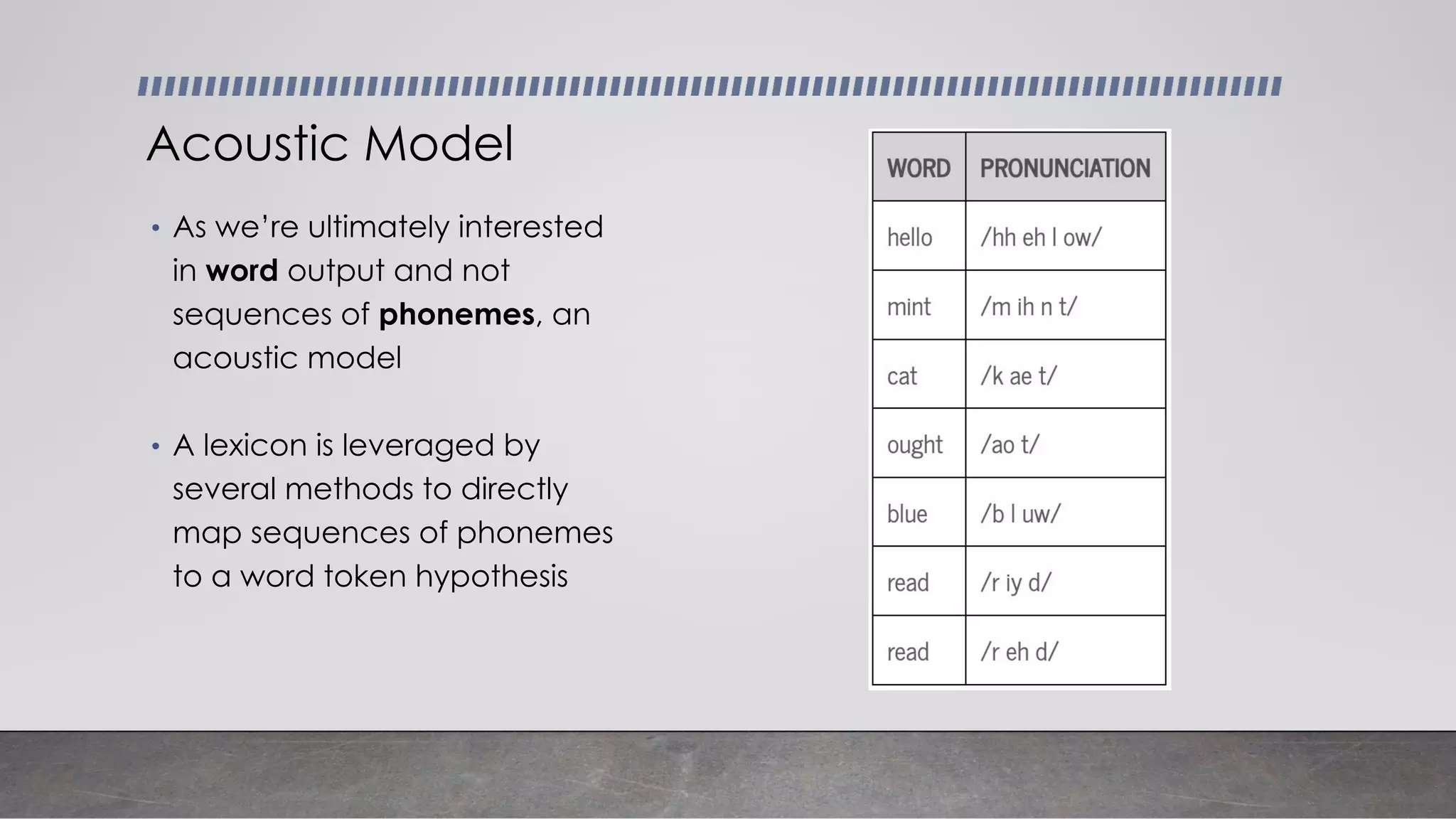

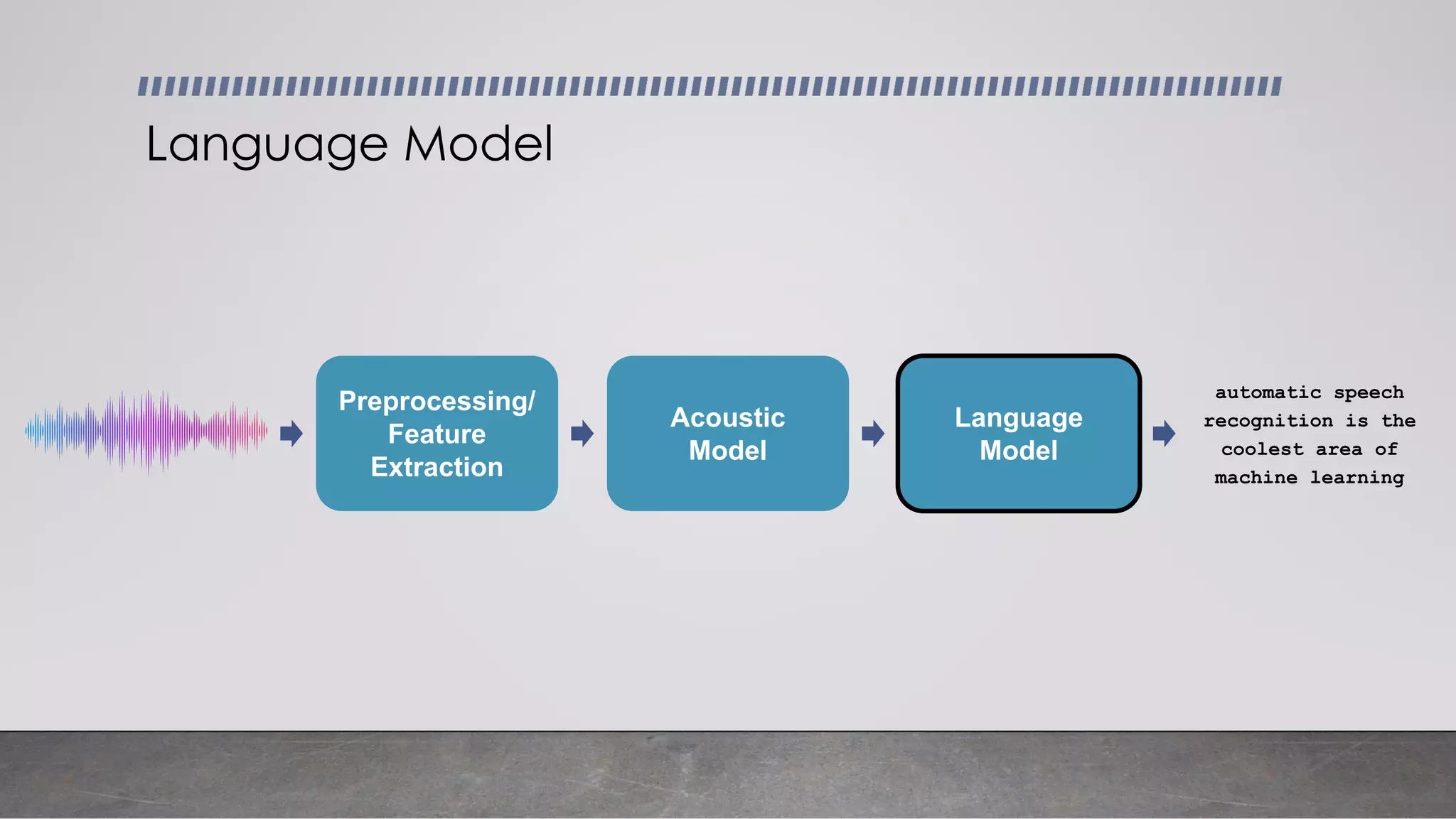

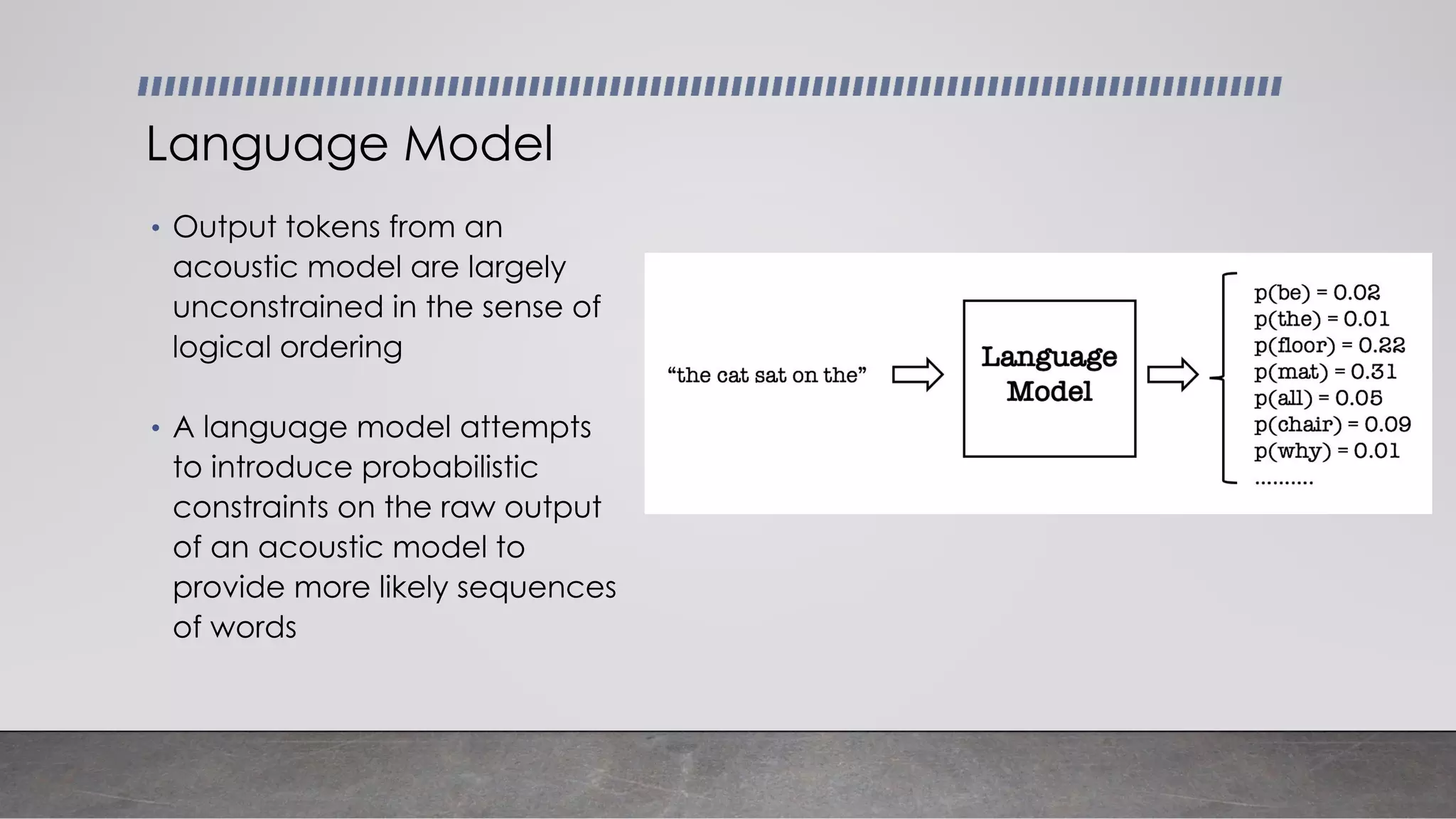

- The main components of ASR systems including preprocessing/feature extraction, acoustic models, and language models.

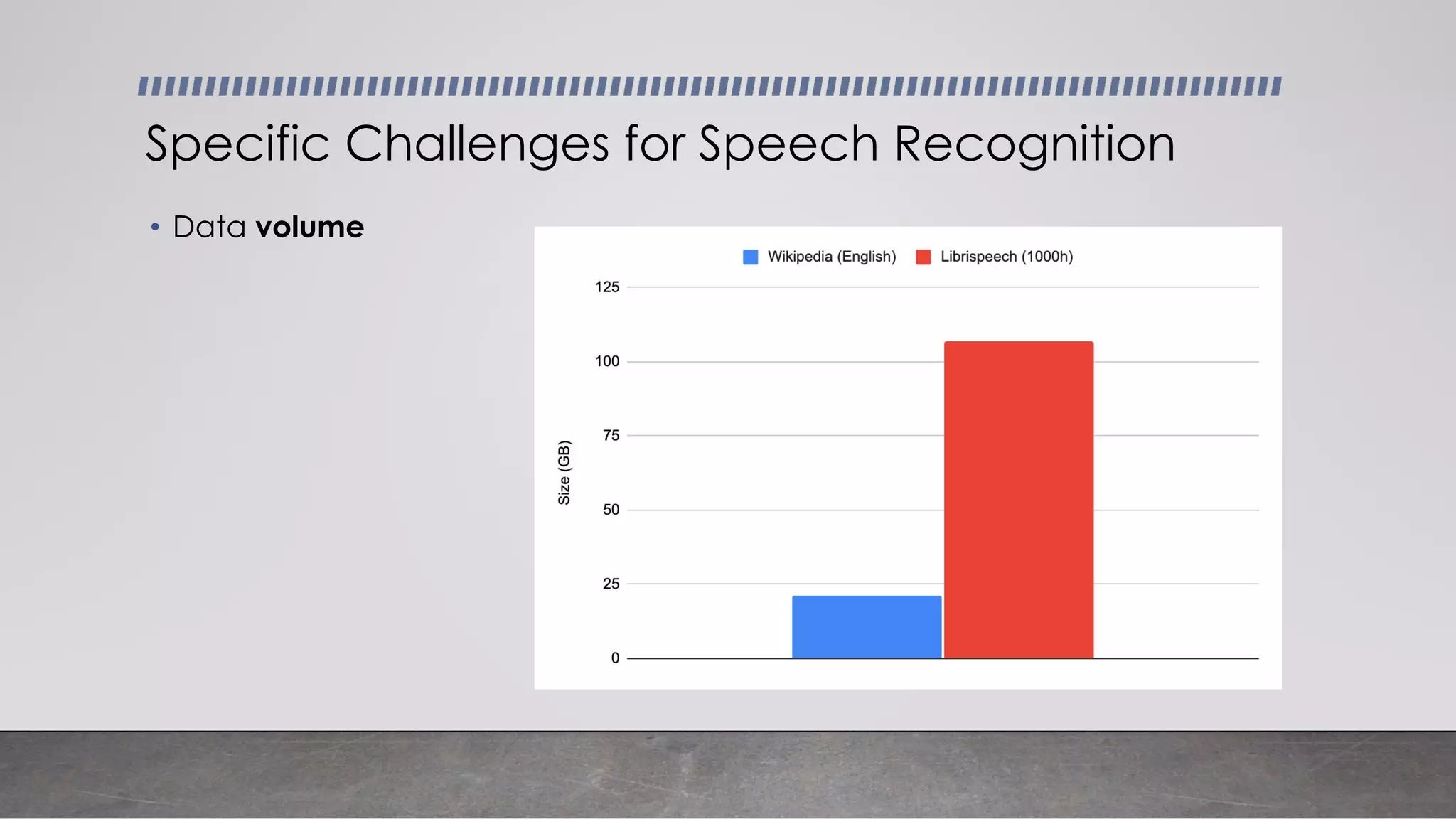

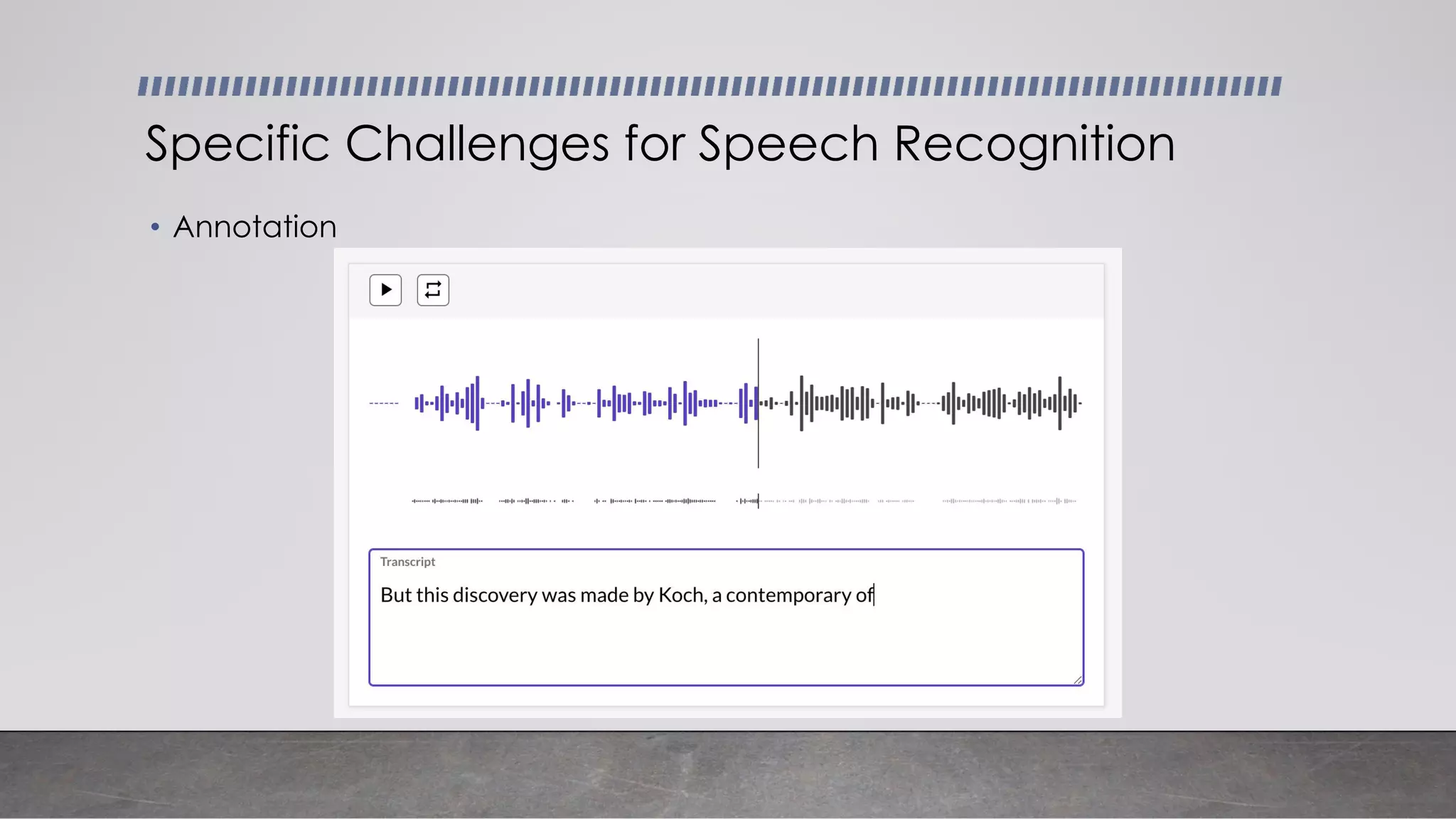

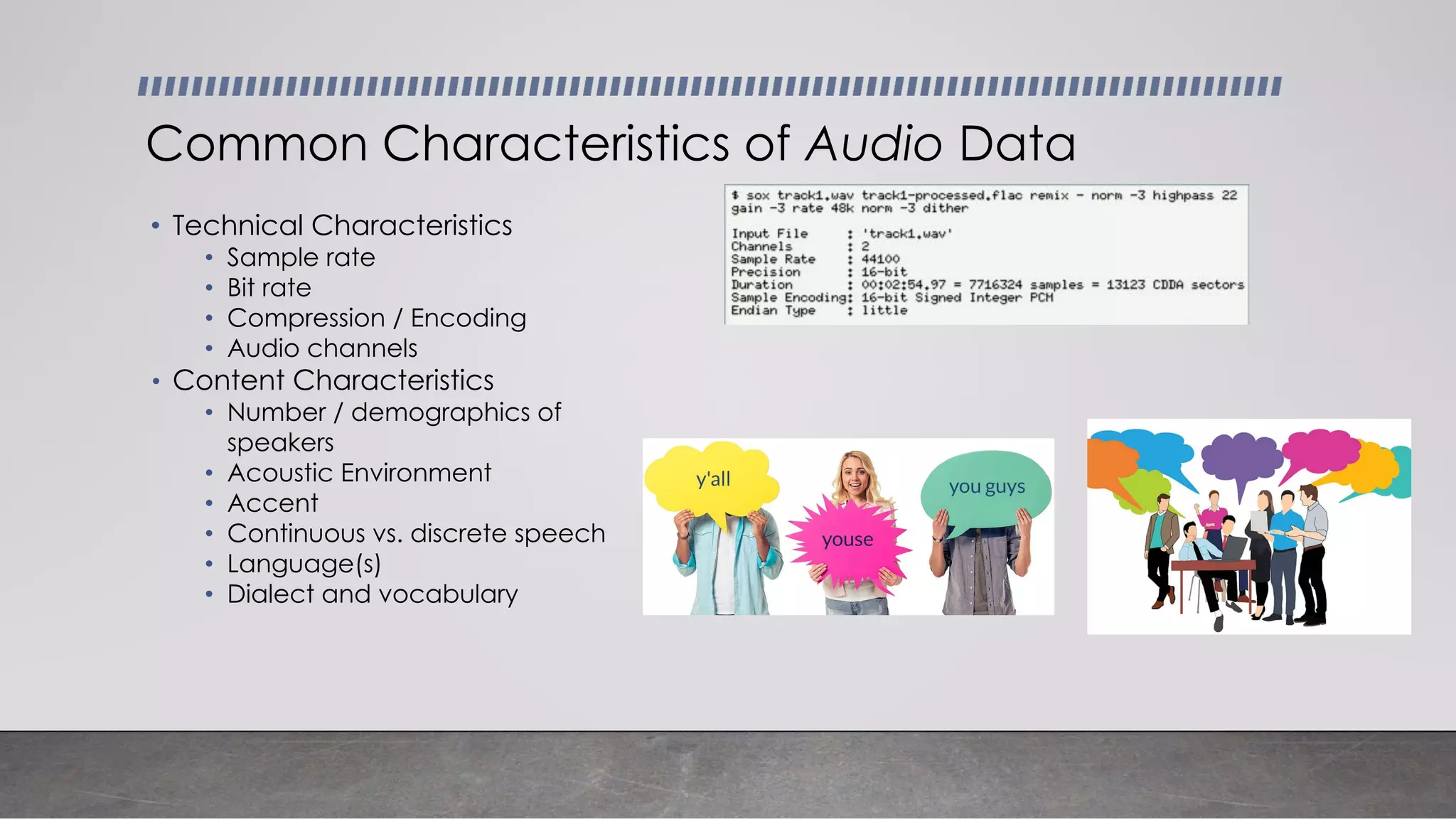

- Unique challenges of working with speech data like data volume, quality, and annotation.

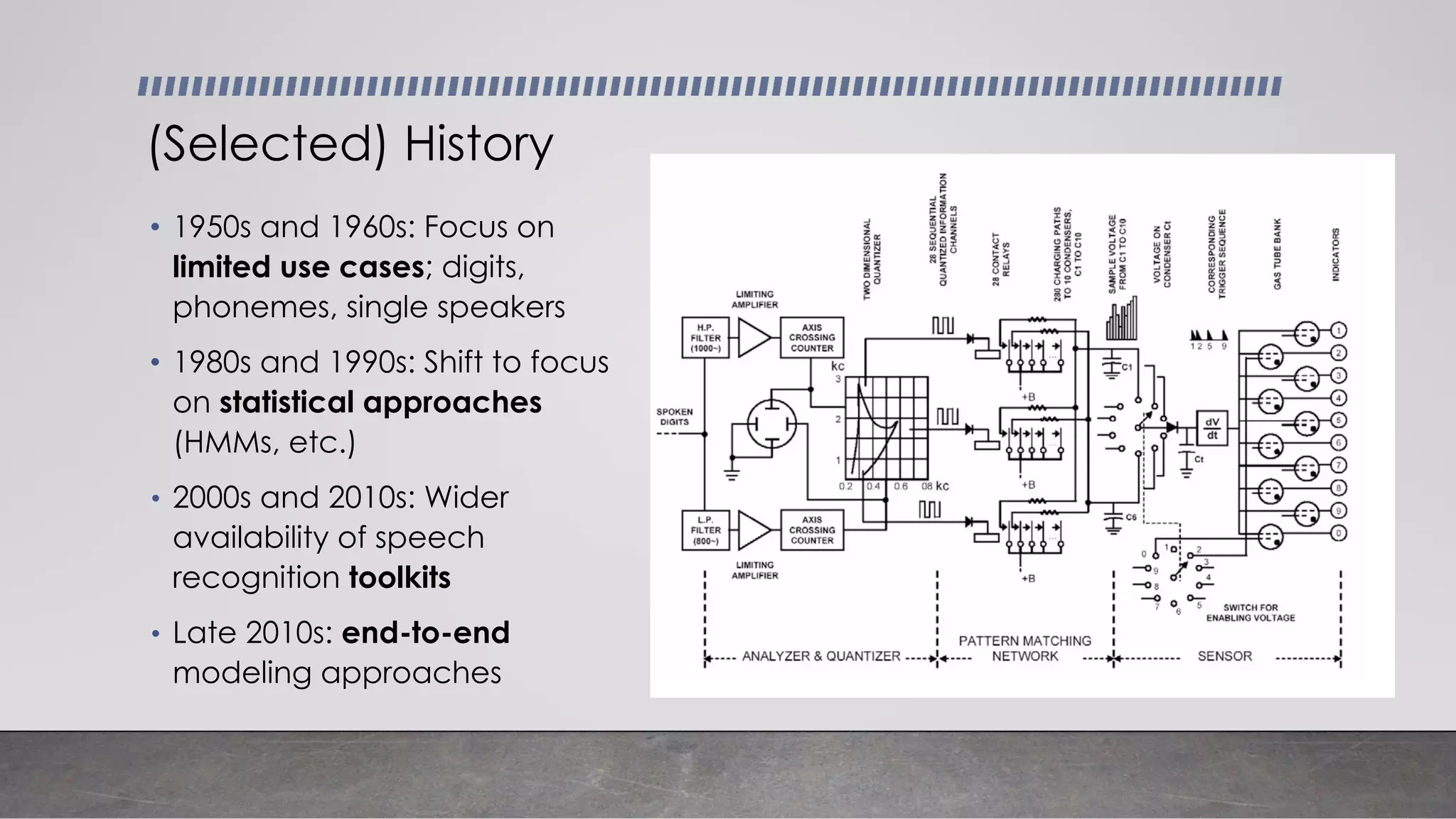

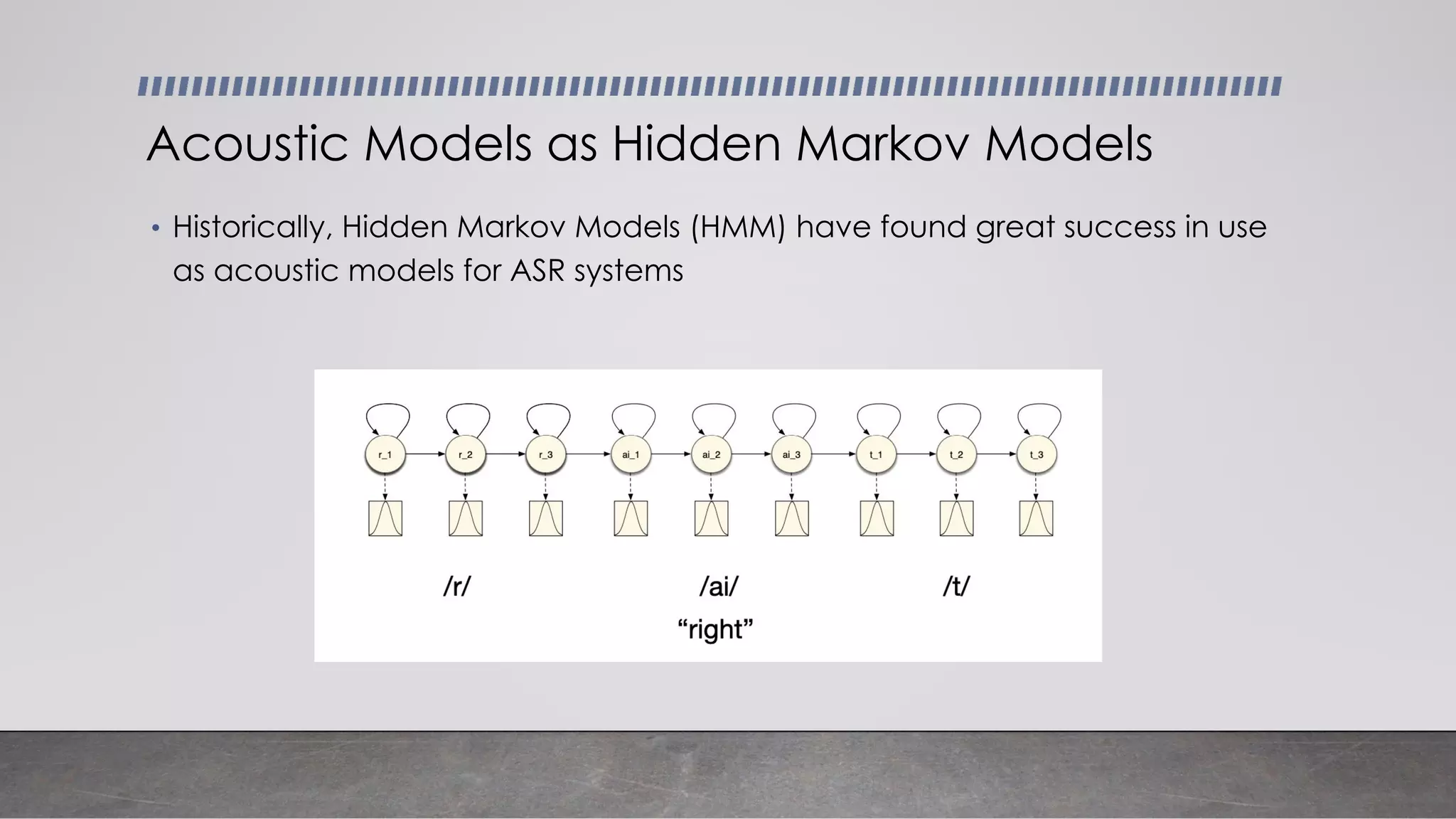

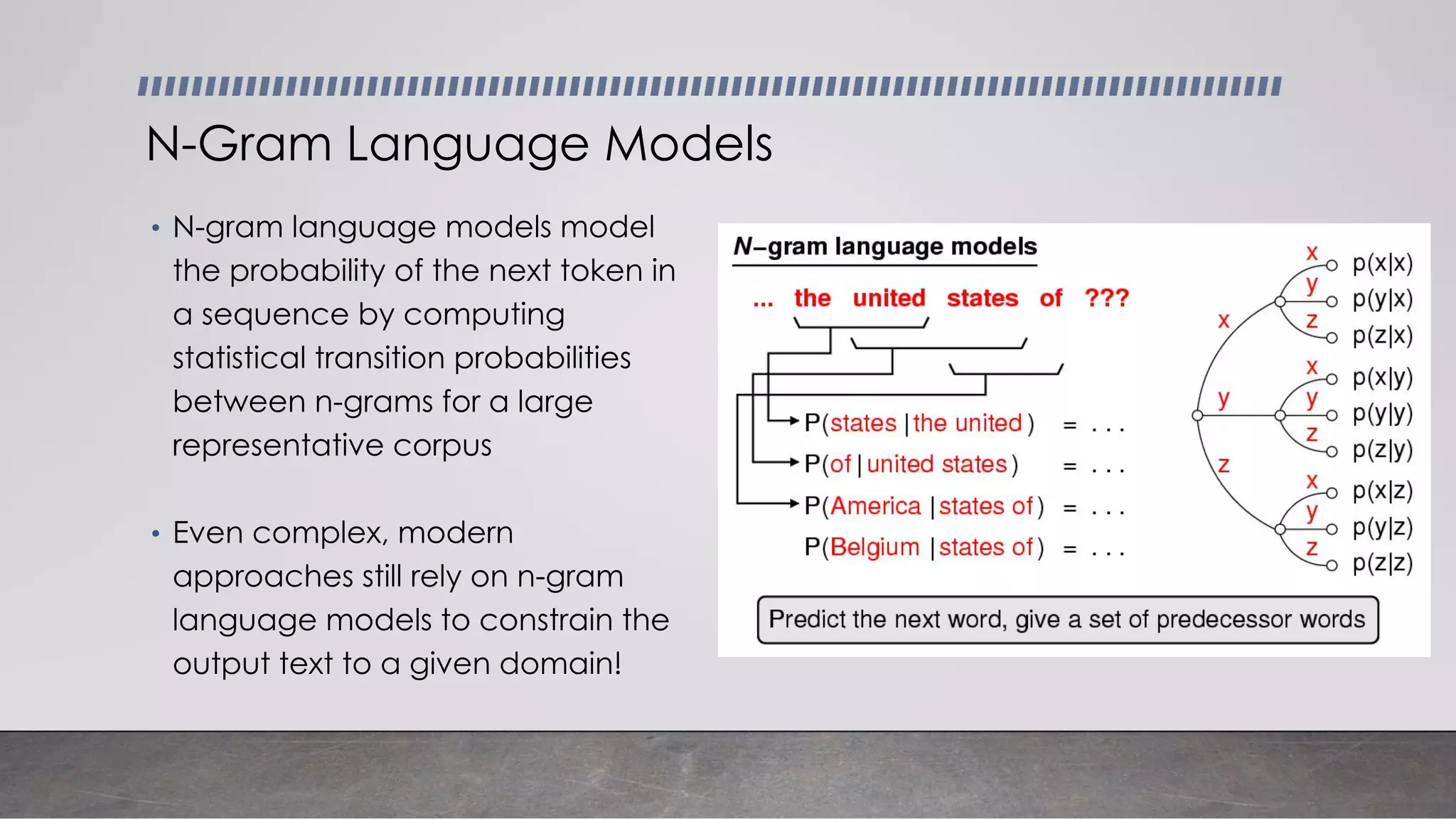

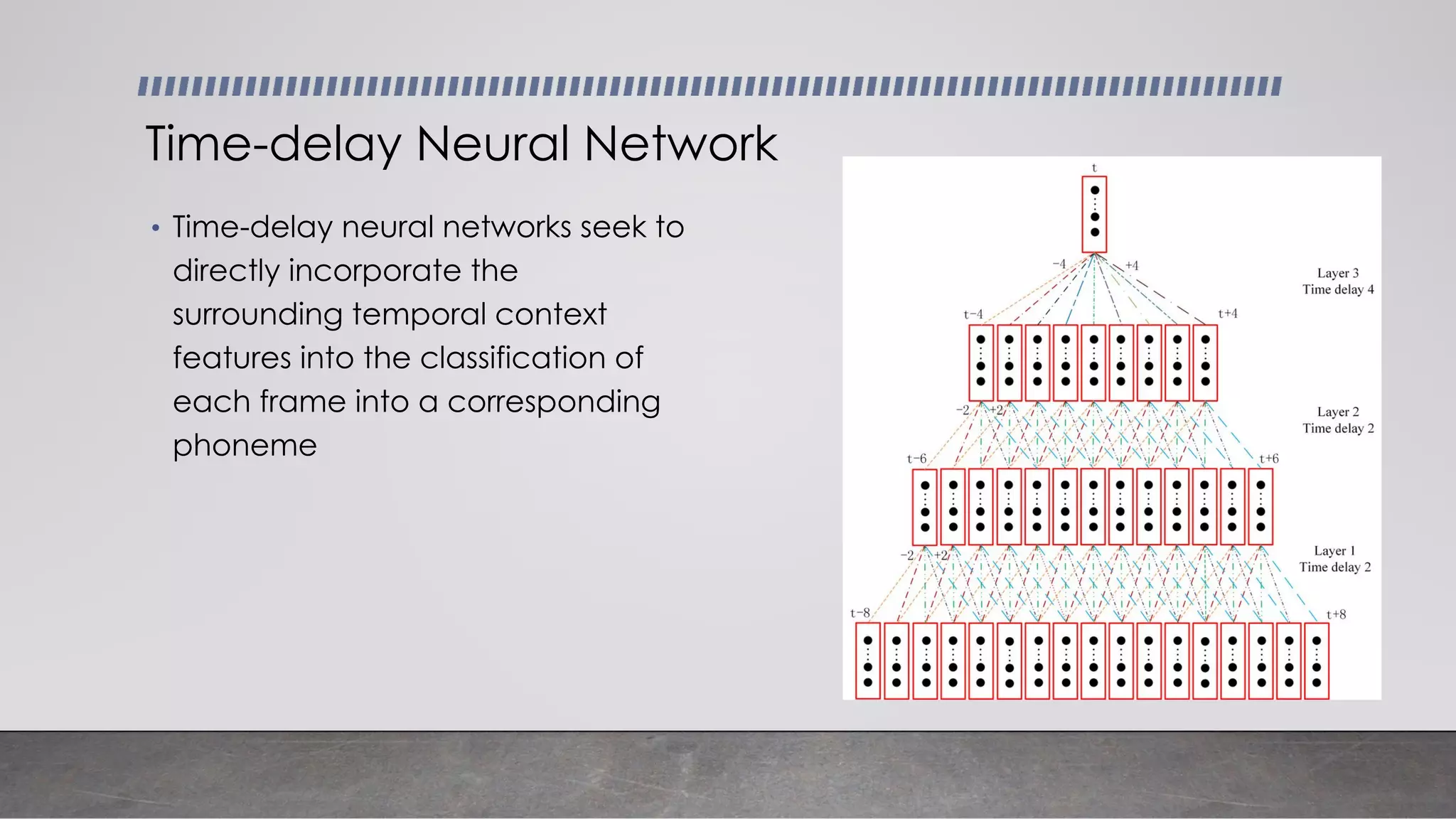

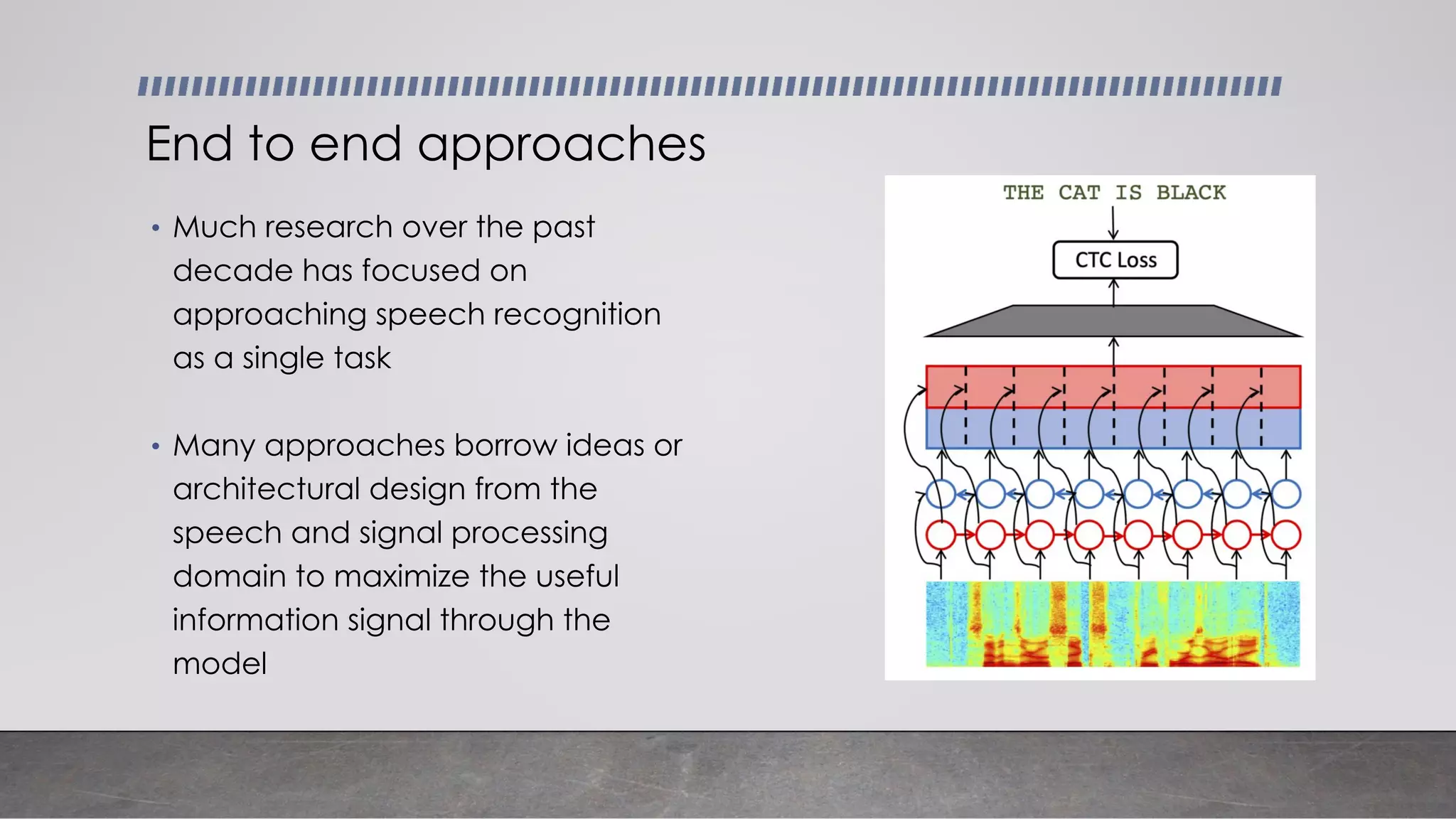

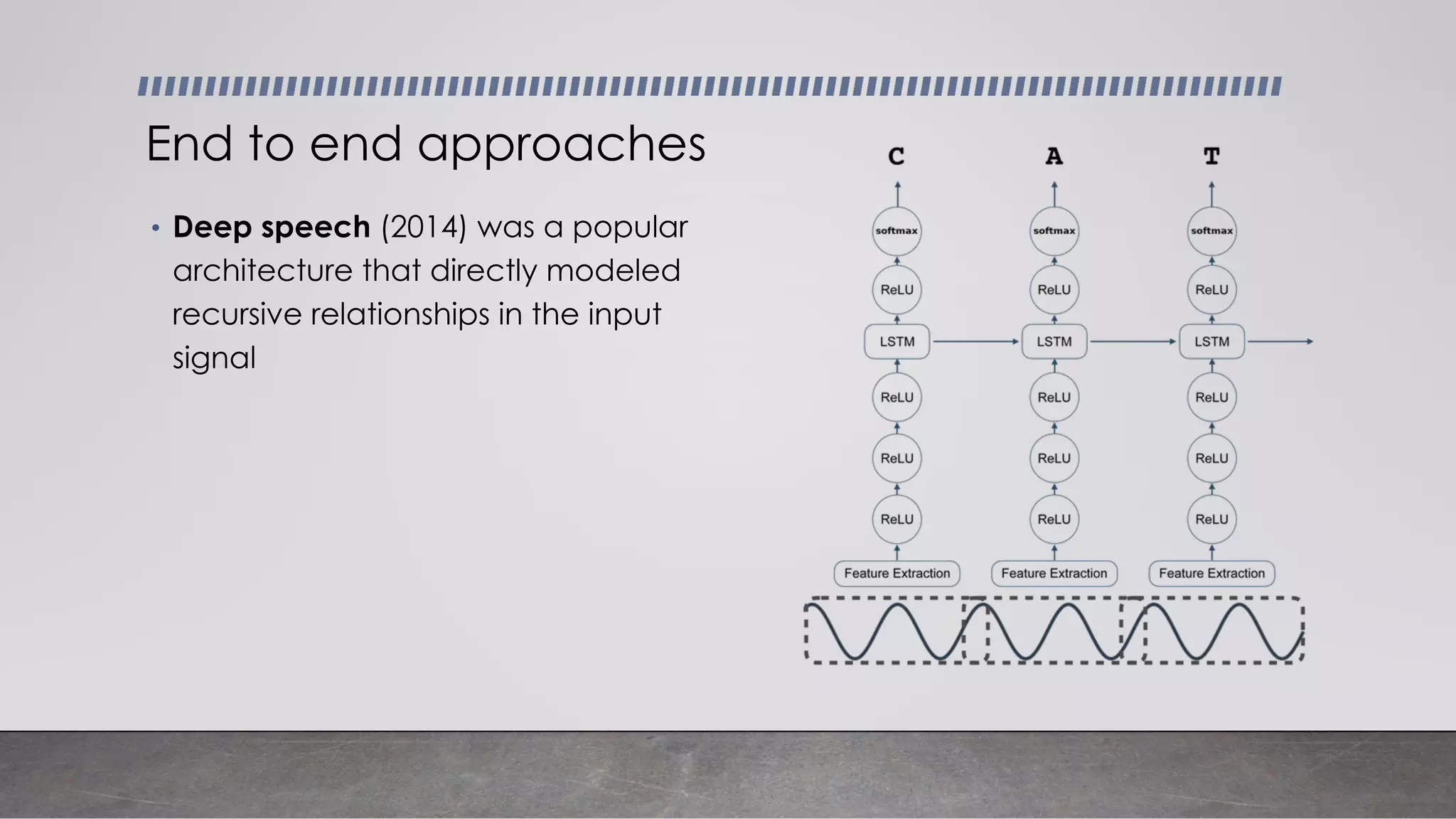

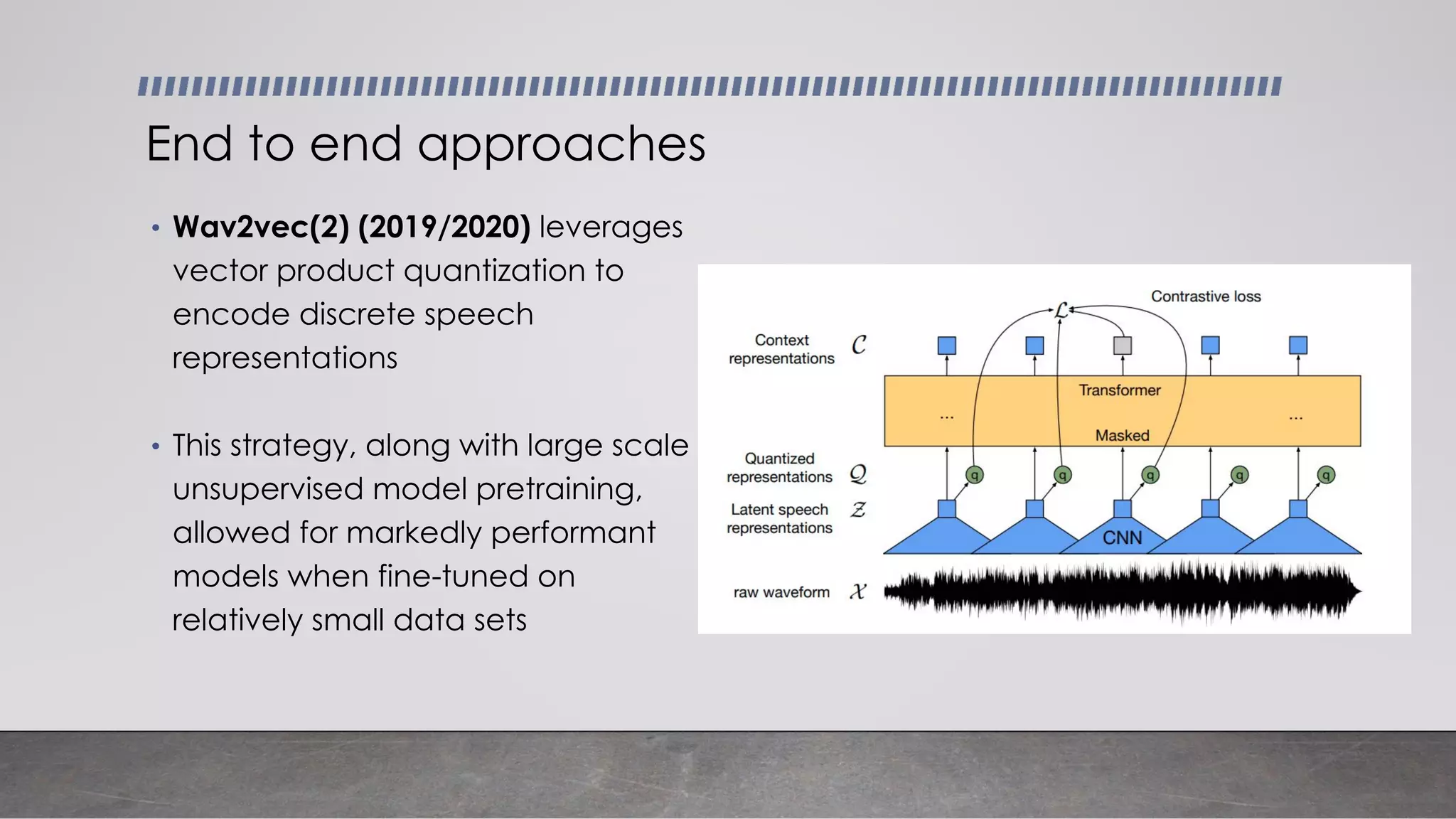

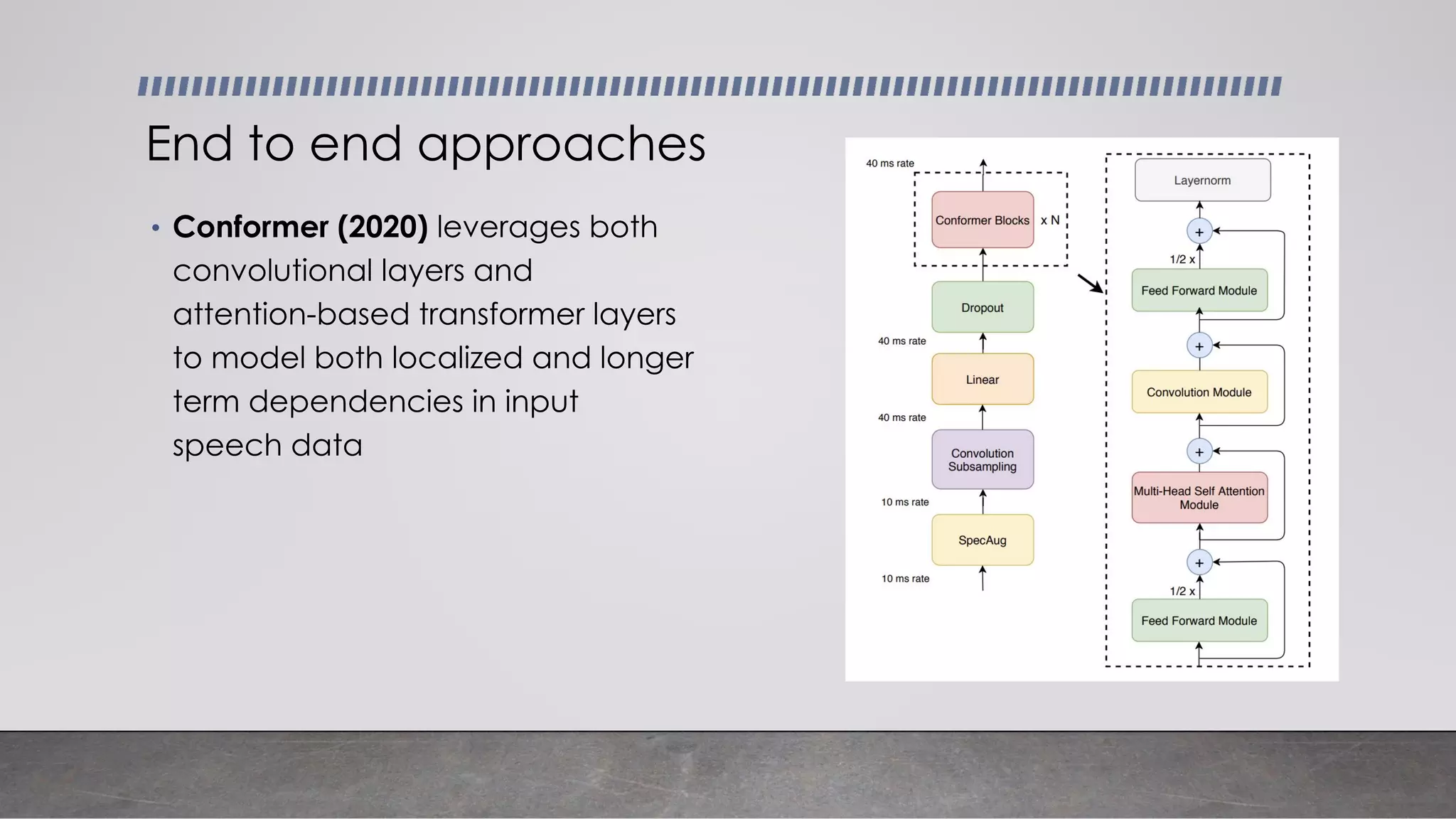

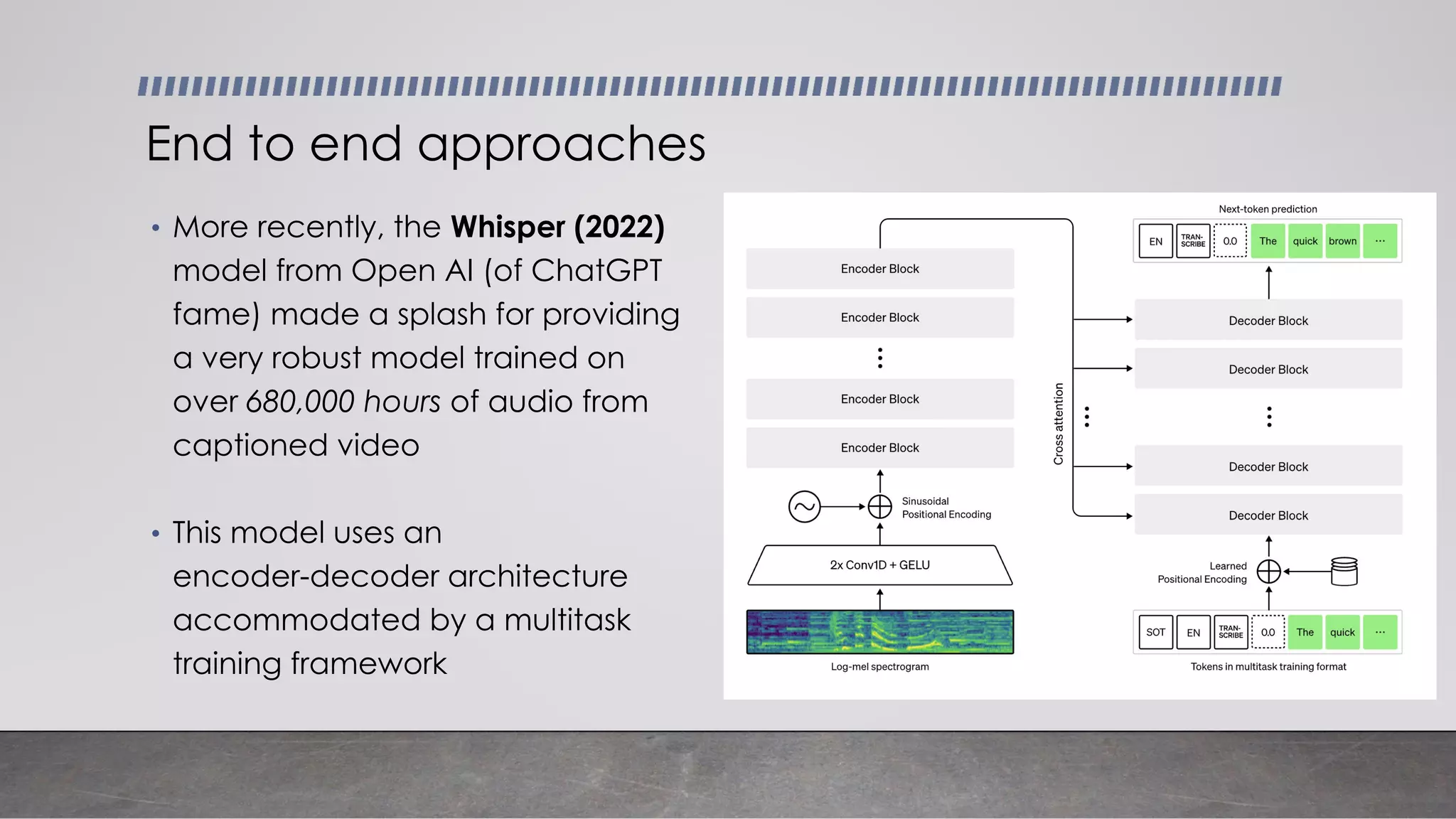

- Common modeling approaches used in ASR systems have historically included hidden Markov models and n-gram language models, but more recent approaches use end-to-end neural networks like deep speech, wav2vec, conformer and whisper models.
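The preprocessing/feature-extraction component of an ASR system converts a raw waveform into a compact frame-by-frame representation that the acoustic model consumes. Below is a minimal NumPy sketch of log-mel filterbank features, one common choice. The function names are hypothetical, and the parameter values are assumptions rather than settings from this document: 16 kHz audio, 25 ms frames with a 10 ms hop, a 512-point FFT, 40 mel bands, and a 0.97 pre-emphasis coefficient. MFCC features would add a discrete cosine transform on top of these log energies.

```python
import numpy as np


def hz_to_mel(f):
    # HTK-style mel scale
    return 2595.0 * np.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_filterbank(n_mels, n_fft, sample_rate):
    """Triangular filters evenly spaced on the mel scale, shape (n_mels, n_fft // 2 + 1)."""
    mel_points = np.linspace(hz_to_mel(0.0), hz_to_mel(sample_rate / 2), n_mels + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mel_points) / sample_rate).astype(int)
    fbank = np.zeros((n_mels, n_fft // 2 + 1))
    for i in range(1, n_mels + 1):
        left, center, right = bins[i - 1], bins[i], bins[i + 1]
        for k in range(left, center):  # rising edge of the triangle
            fbank[i - 1, k] = (k - left) / max(center - left, 1)
        for k in range(center, right):  # falling edge of the triangle
            fbank[i - 1, k] = (right - k) / max(right - center, 1)
    return fbank


def log_mel_features(signal, sample_rate=16000, frame_ms=25, hop_ms=10, n_fft=512, n_mels=40):
    """Return an array of shape (n_frames, n_mels) of log mel-band energies."""
    frame_len = int(sample_rate * frame_ms / 1000)  # 400 samples at 16 kHz
    hop = int(sample_rate * hop_ms / 1000)  # 160 samples at 16 kHz
    signal = np.asarray(signal, dtype=float)
    # Pre-emphasis boosts high frequencies, which are typically weaker in speech.
    emphasized = np.append(signal[0], signal[1:] - 0.97 * signal[:-1])
    if len(emphasized) < frame_len:  # pad very short inputs to one full frame
        emphasized = np.pad(emphasized, (0, frame_len - len(emphasized)))
    n_frames = 1 + (len(emphasized) - frame_len) // hop
    # Build overlapping frames via index arithmetic, then taper each with a Hamming window.
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = emphasized[idx] * np.hamming(frame_len)
    power = np.abs(np.fft.rfft(frames, n=n_fft)) ** 2 / n_fft  # per-frame power spectrum
    mel_energies = power @ mel_filterbank(n_mels, n_fft, sample_rate).T
    return np.log(mel_energies + 1e-10)  # small floor avoids log(0)
```

For one second of a 440 Hz sine tone sampled at 16 kHz, `log_mel_features` returns a 98 × 40 array: one 40-dimensional vector every 10 ms.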
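An n-gram language model scores word sequences so that the recognizer can prefer plausible text among acoustically similar hypotheses. The following is a minimal bigram sketch with add-one (Laplace) smoothing, assuming whitespace-tokenized training sentences and `<s>`/`</s>` boundary markers. The class name and the tiny corpus are hypothetical illustrations, and production systems use larger n and better smoothing methods.

```python
import math
from collections import Counter


class BigramLM:
    """Toy bigram language model with add-one (Laplace) smoothing."""

    def __init__(self, sentences):
        self.history = Counter()  # c(prev): how often each token is followed by another
        self.bigrams = Counter()  # c(prev, word)
        self.vocab = {"</s>"}
        for sentence in sentences:
            tokens = ["<s>"] + sentence.split() + ["</s>"]
            self.vocab.update(tokens[1:-1])
            self.history.update(tokens[:-1])
            self.bigrams.update(zip(tokens[:-1], tokens[1:]))

    def prob(self, prev, word):
        # P(word | prev) = (c(prev, word) + 1) / (c(prev) + |V|)
        # Unseen words are not added to |V|, so this is only a rough score for them.
        return (self.bigrams[(prev, word)] + 1) / (self.history[prev] + len(self.vocab))

    def log_prob(self, sentence):
        """Sum of log bigram probabilities over the sentence, including </s>."""
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        return sum(math.log(self.prob(p, w)) for p, w in zip(tokens[:-1], tokens[1:]))
```

Trained on `["recognize speech", "we recognize speech", "speech recognition is hard"]`, this model assigns "recognize speech" a probability of 0.02, far above that of the similar-sounding "wreck a nice beach". This is how a language model helps choose between competing transcriptions.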
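In HMM-based recognizers, decoding finds the most likely sequence of hidden states (for example, sub-phone states) given the observed acoustic frames, typically with the Viterbi algorithm. The sketch below runs log-space Viterbi on a toy discrete HMM. It assumes that quantized symbols `"a"`, `"b"`, `"c"` stand in for acoustic feature vectors, whereas real systems use continuous emission models such as GMMs or neural networks. The three-state left-to-right model and all of its probabilities are hypothetical.

```python
import math


def _log(p):
    # Map log(0) to -inf instead of raising, so forbidden transitions are never chosen.
    return math.log(p) if p > 0 else float("-inf")


def viterbi(observations, states, start_p, trans_p, emit_p):
    """Return (best_log_prob, best_state_path) for a discrete-observation HMM."""
    # best[t][s]: log-probability of the best path that ends in state s at time t
    best = [{s: _log(start_p[s]) + _log(emit_p[s][observations[0]]) for s in states}]
    back = [{}]  # back[t][s]: predecessor of s on that best path
    for t in range(1, len(observations)):
        best.append({})
        back.append({})
        for s in states:
            prev, score = max(
                ((r, best[t - 1][r] + _log(trans_p[r][s])) for r in states),
                key=lambda pair: pair[1],
            )
            best[t][s] = score + _log(emit_p[s][observations[t]])
            back[t][s] = prev
    # Trace the backpointers from the best final state.
    last = max(states, key=lambda s: best[-1][s])
    path = [last]
    for t in range(len(observations) - 1, 0, -1):
        path.append(back[t][path[-1]])
    return best[-1][last], path[::-1]


# Hypothetical 3-state left-to-right model, as commonly used for a single phone.
states = ["s1", "s2", "s3"]
start = {"s1": 1.0, "s2": 0.0, "s3": 0.0}
trans = {
    "s1": {"s1": 0.6, "s2": 0.4, "s3": 0.0},
    "s2": {"s1": 0.0, "s2": 0.6, "s3": 0.4},
    "s3": {"s1": 0.0, "s2": 0.0, "s3": 1.0},
}
emit = {
    "s1": {"a": 0.8, "b": 0.1, "c": 0.1},
    "s2": {"a": 0.1, "b": 0.8, "c": 0.1},
    "s3": {"a": 0.1, "b": 0.1, "c": 0.8},
}
```

Calling `viterbi(["a", "a", "b", "c", "c"], states, start, trans, emit)` yields the path `["s1", "s1", "s2", "s3", "s3"]` with probability 0.8^5 × 0.6 × 0.4 × 0.4 ≈ 0.0315. Working in log space avoids the numerical underflow that multiplying many small probabilities causes on real utterances with hundreds of frames.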