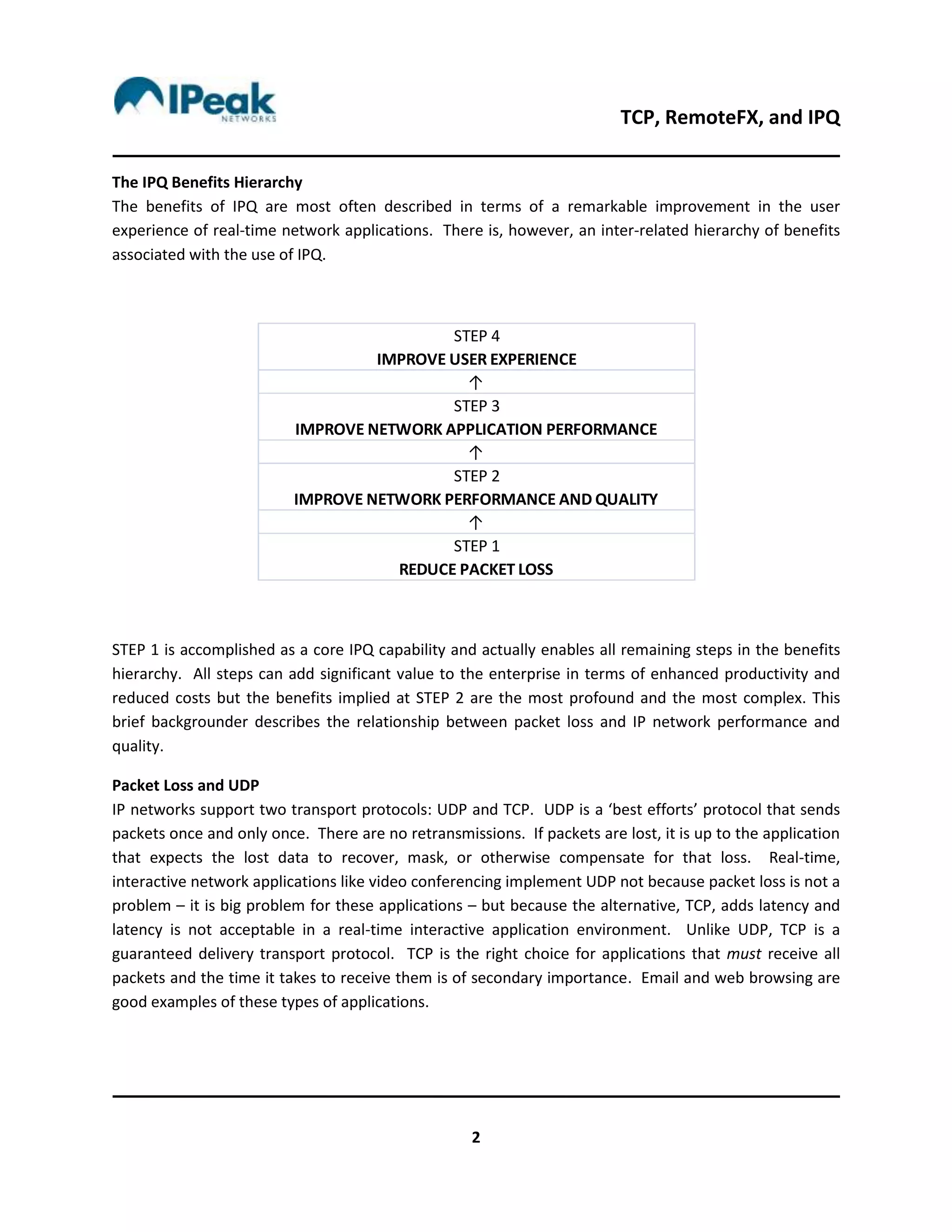

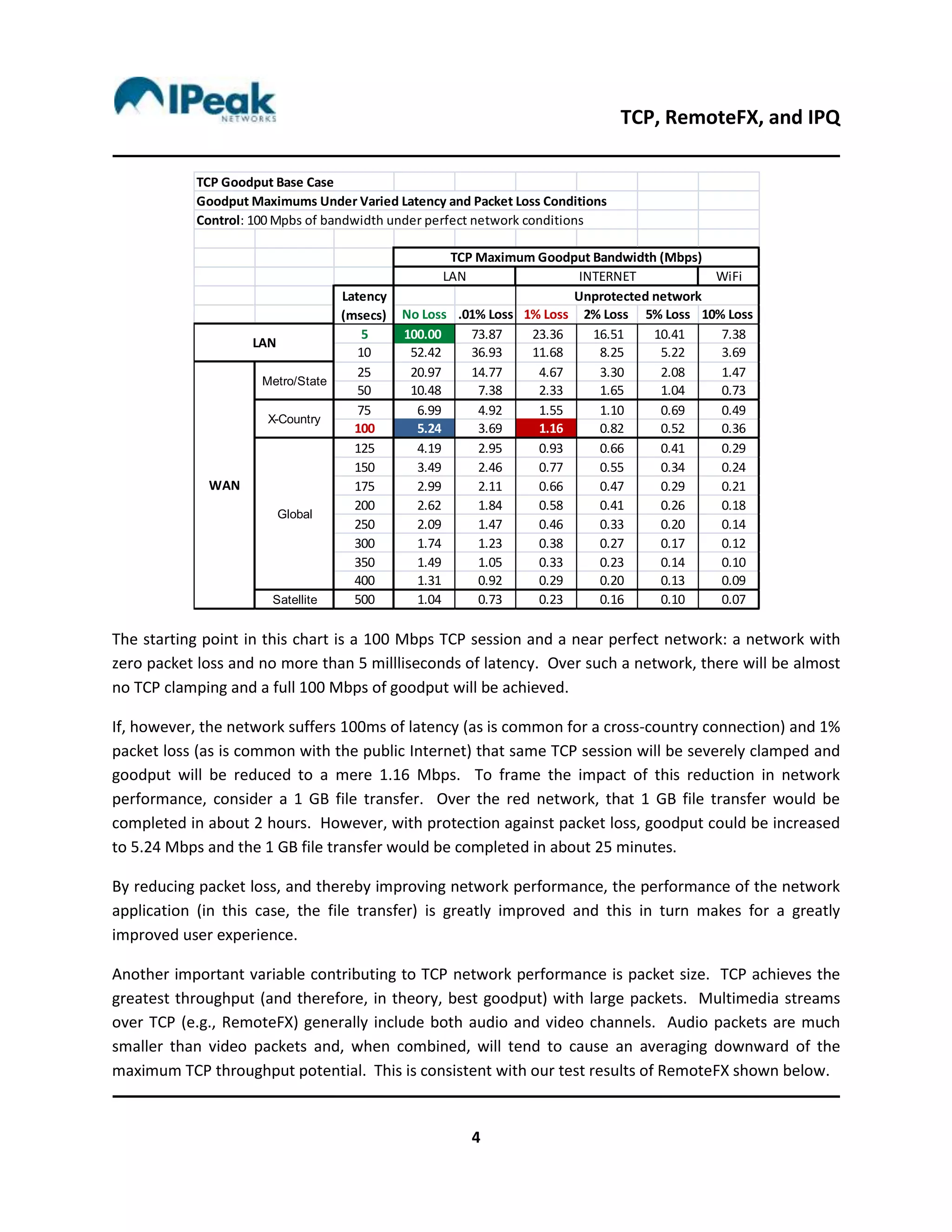

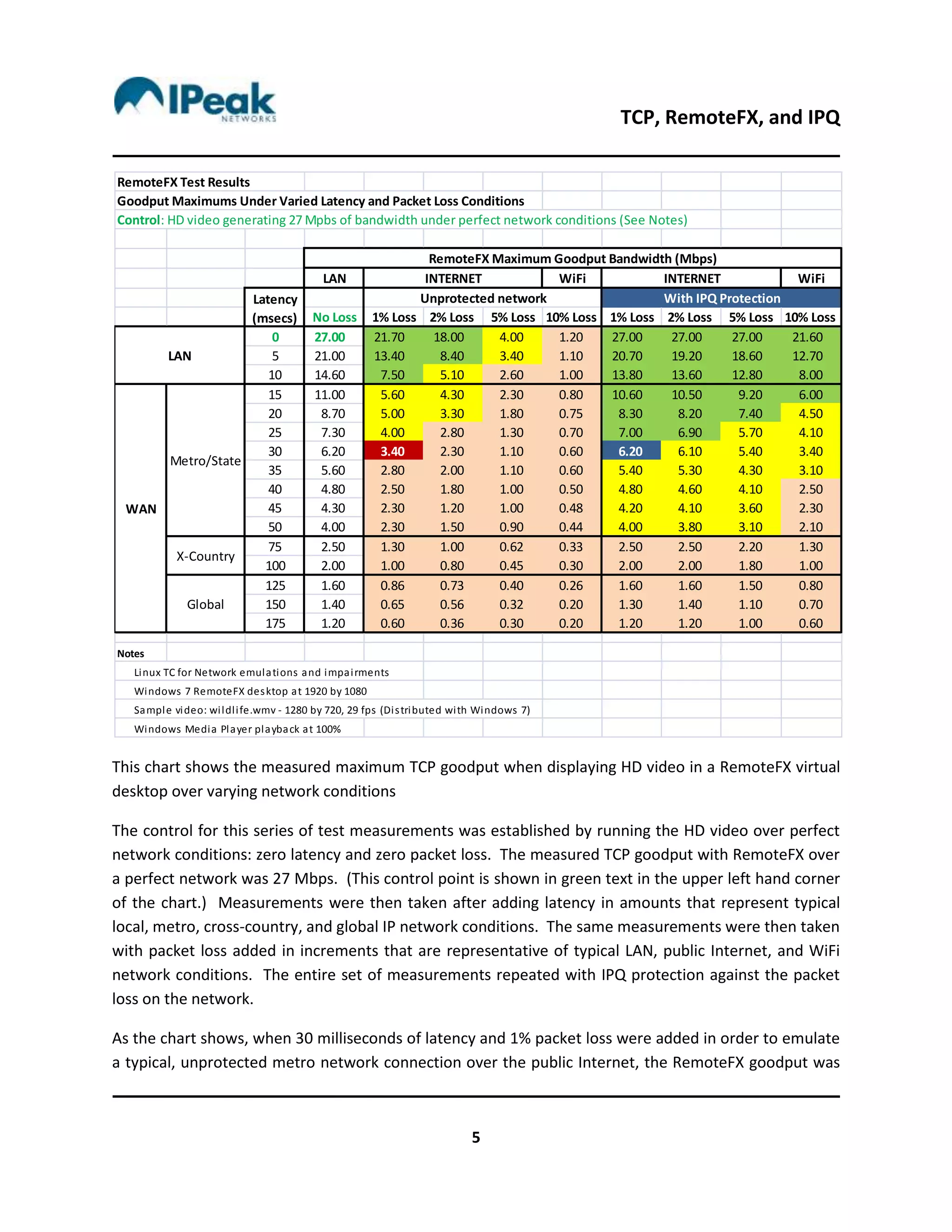

The document discusses how latency and packet loss reduce TCP throughput, focusing on how IPQ (Intelligent Packet Queue) improves the user experience for real-time applications such as video conferencing. It explains that because TCP must acknowledge received data and retransmit anything that is lost, goodput falls as latency and packet loss increase. It presents tests showing that IPQ can effectively double goodput even under challenging conditions. It concludes that IPQ enables RemoteFX to operate efficiently over the public internet, expanding its potential market beyond high-quality MPLS networks.
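To make the relationship between latency, loss, and TCP throughput concrete, the following is a minimal sketch rather than anything taken from the document itself. It assumes two well-known approximations: the window limit (at most one window of data per round trip) and the Mathis et al. steady-state model for loss-limited TCP. The function names and the constant `C = sqrt(3/2)` are choices made for this illustration, and the sketch does not model IPQ or reproduce the document's test results.

```python
import math


def window_limited_bps(window_bytes: int, rtt_s: float) -> float:
    """Upper bound when the sender can have at most one window in flight per RTT."""
    return window_bytes * 8 / rtt_s


def mathis_bps(mss_bytes: int, rtt_s: float, loss_rate: float) -> float:
    """Mathis et al. approximation: rate ~ (MSS / RTT) * (C / sqrt(p)).

    C = sqrt(3/2) is the constant commonly quoted for this model; it is an
    assumption of this sketch, not a value from the document.
    """
    c = math.sqrt(3 / 2)
    return (mss_bytes * 8 / rtt_s) * (c / math.sqrt(loss_rate))


def tcp_ceiling_bps(window_bytes: int, mss_bytes: int,
                    rtt_s: float, loss_rate: float) -> float:
    """Approximate ceiling: the smaller of the window limit and the loss limit."""
    window_limit = window_limited_bps(window_bytes, rtt_s)
    if loss_rate <= 0:
        # With no loss, only the window and the round-trip time constrain the rate.
        return window_limit
    return min(window_limit, mathis_bps(mss_bytes, rtt_s, loss_rate))


if __name__ == "__main__":
    # Hypothetical link conditions chosen only for illustration.
    for rtt_ms, loss in [(10, 0.0), (100, 0.0), (100, 0.001), (100, 0.01)]:
        bps = tcp_ceiling_bps(65535, 1460, rtt_ms / 1000, loss)
        print(f"RTT {rtt_ms:>3} ms, loss {loss:.3%}: {bps / 1e6:.2f} Mbit/s")
```

Under these assumptions, doubling the round-trip time halves the ceiling, and quadrupling the loss rate halves the loss-limited rate. That is the qualitative behaviour the paragraph above describes.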