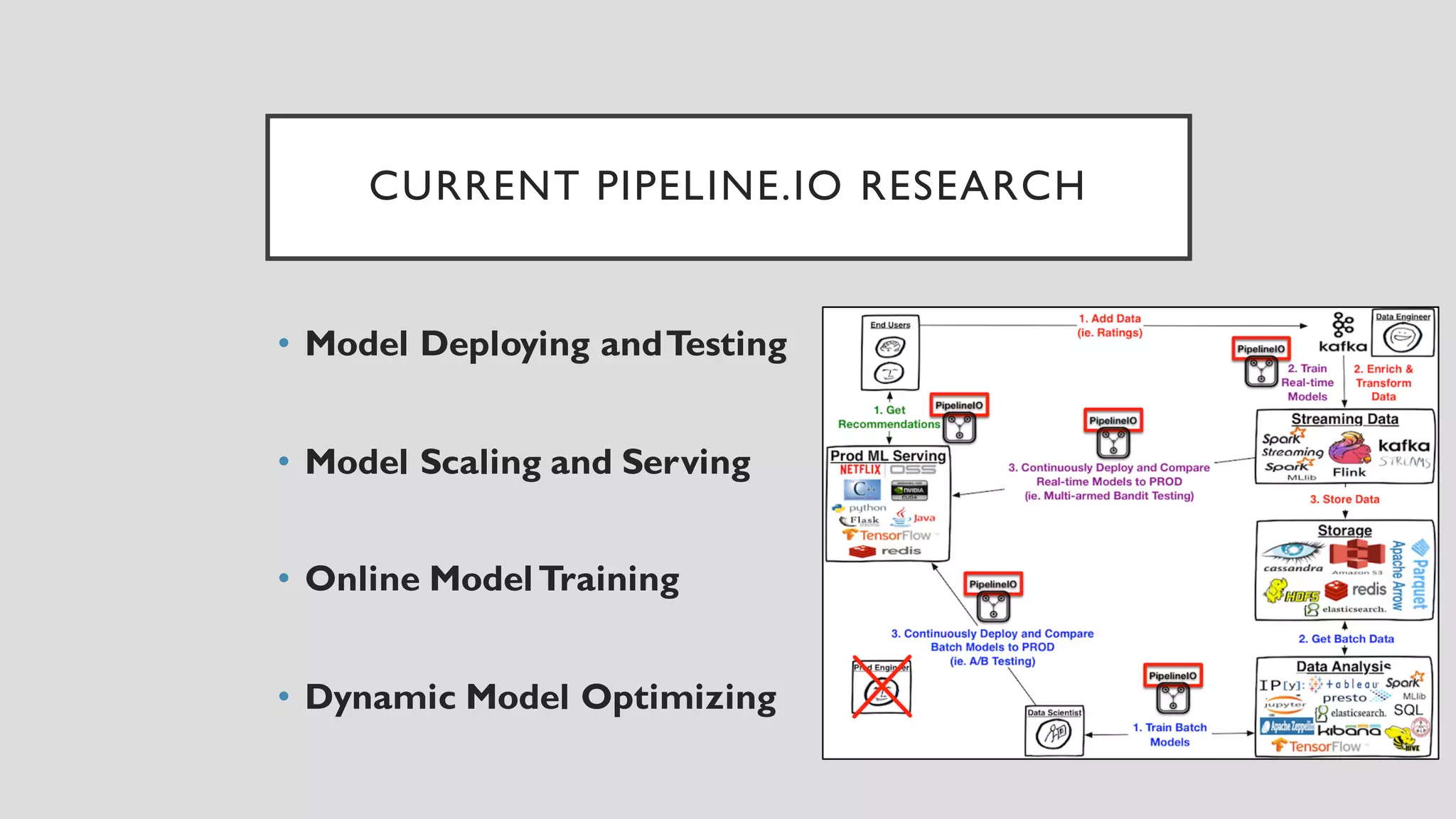

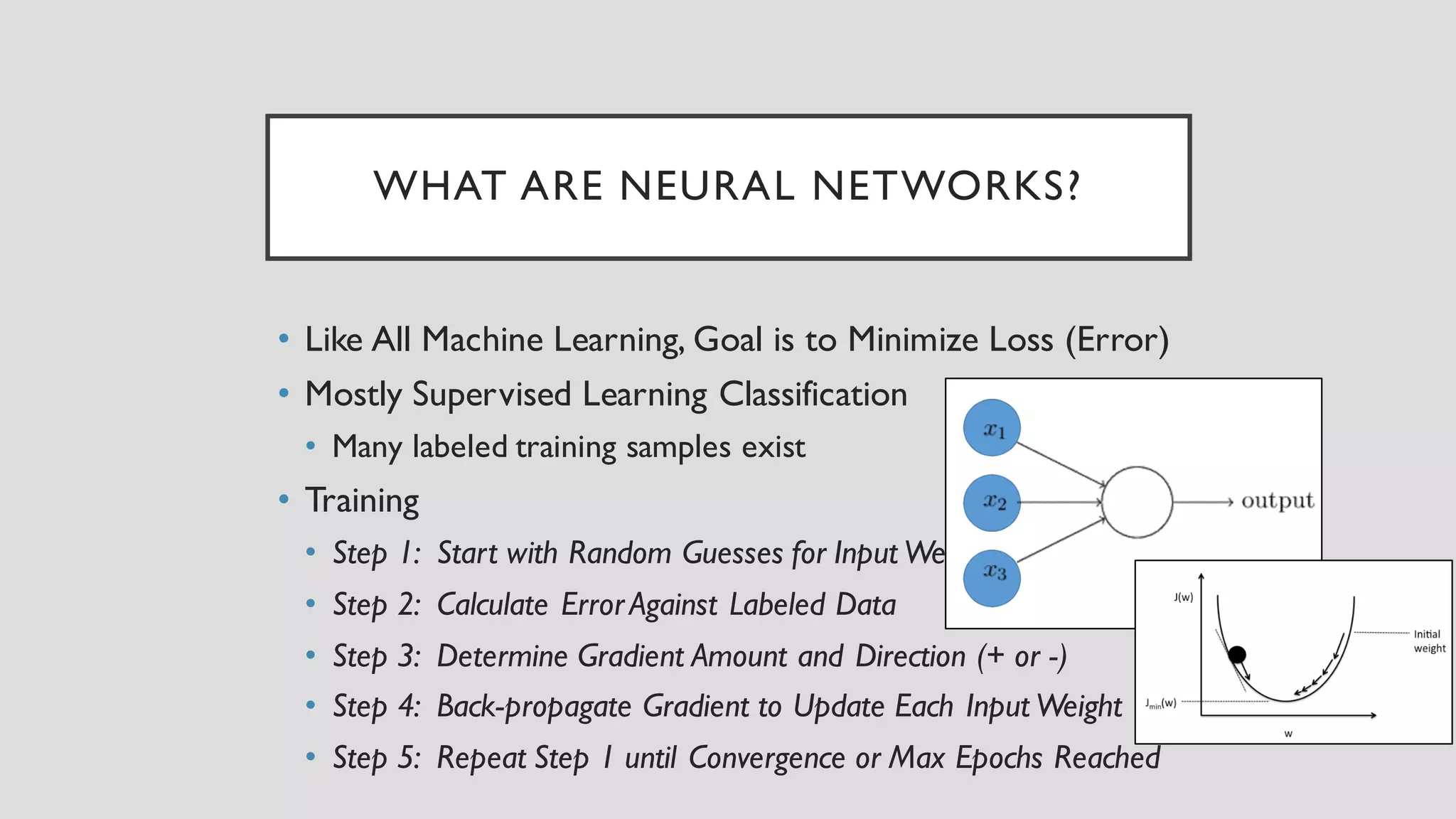

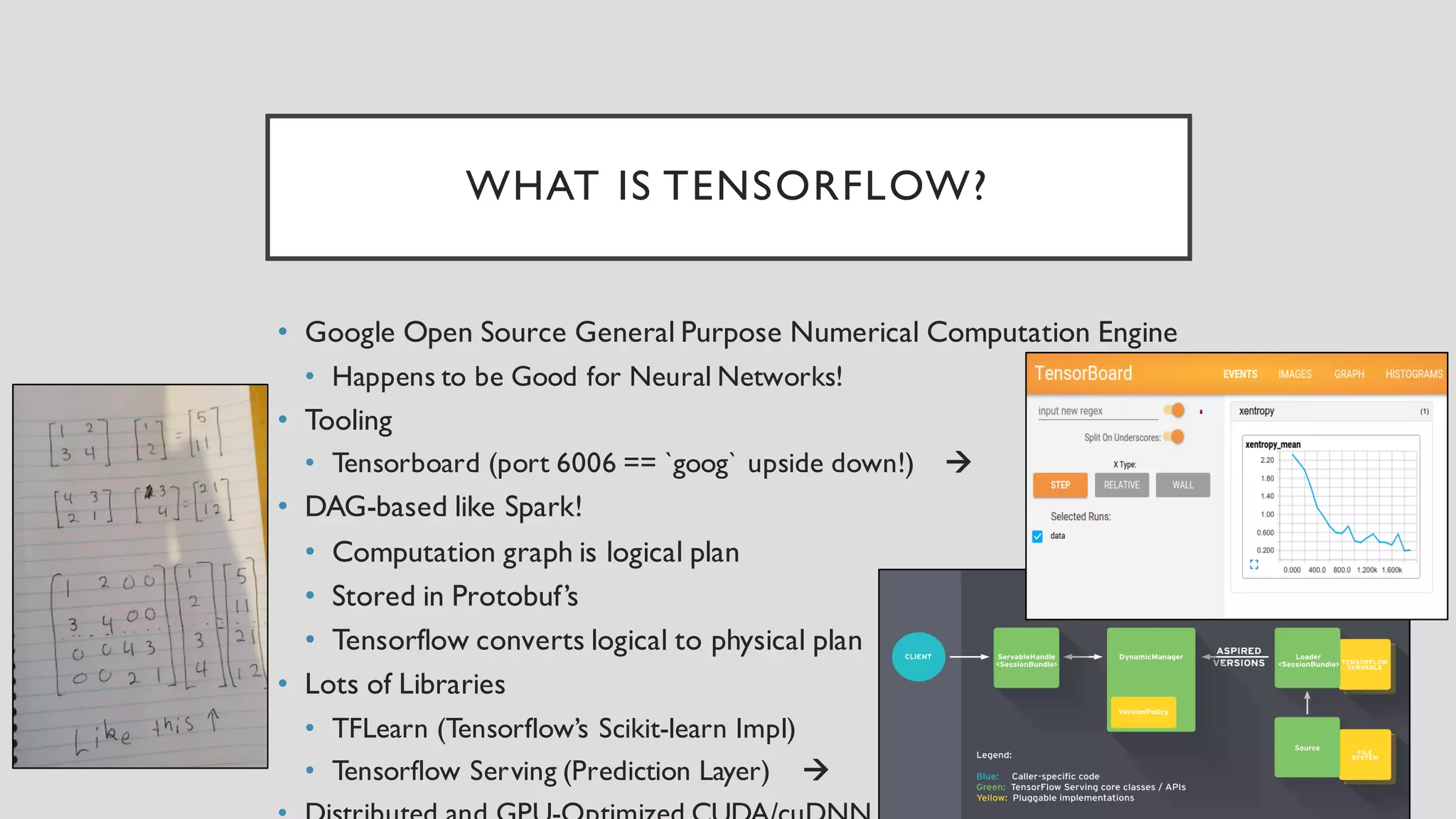

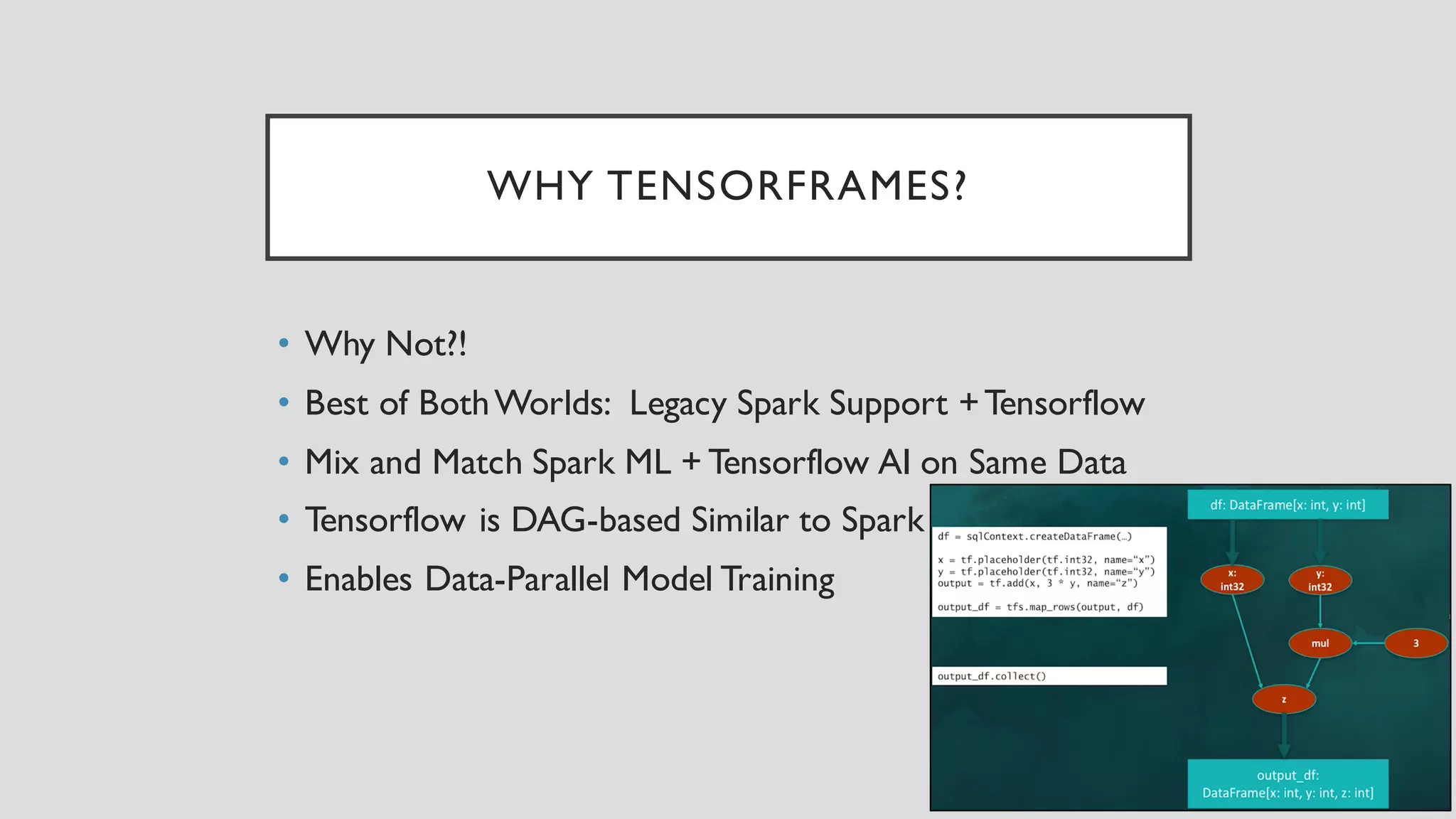

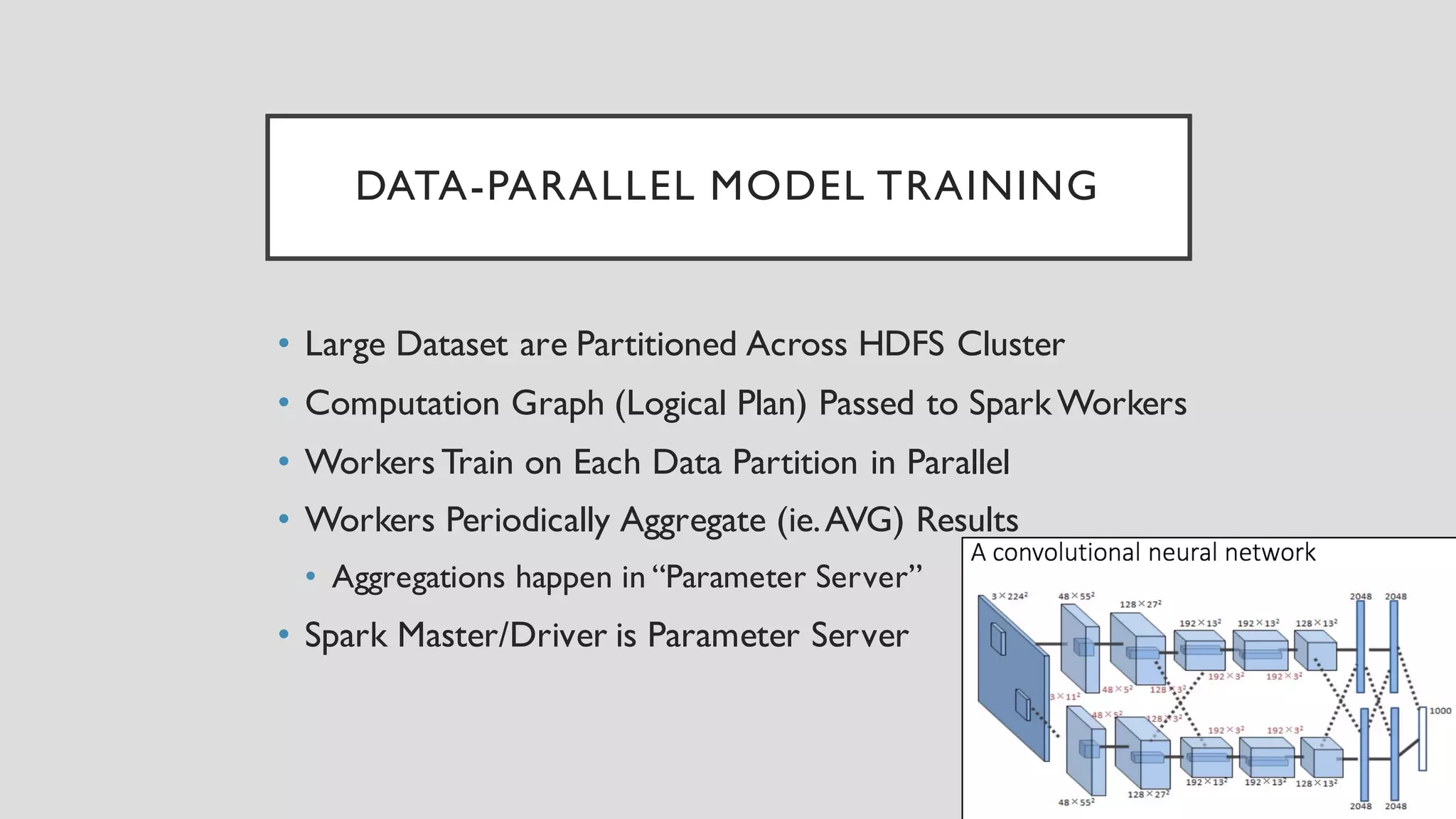

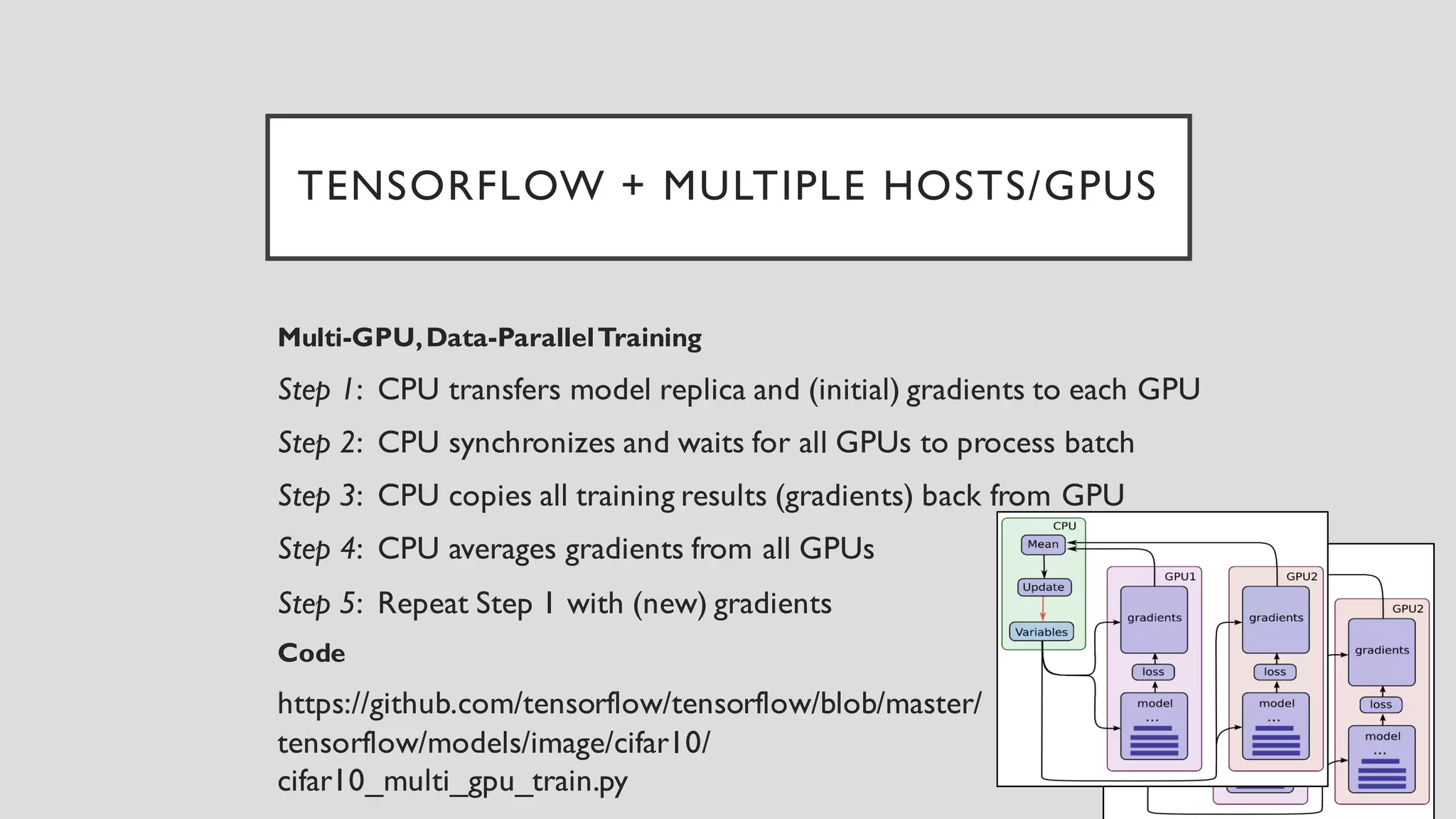

This document introduces TensorFrames, a library that bridges Spark and TensorFlow to enable data-parallel model training. With TensorFrames, Spark datasets serve as input to TensorFlow models, and training is distributed across Spark workers: each worker trains on its own partition of the data in parallel, and the workers periodically aggregate their results. This combines Spark's distributed data processing with TensorFlow's support for neural networks and other machine learning models. The document includes a demo that uses TensorFrames from Python and Scala to run distributed deep learning on Spark clusters.
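The partition, train, and aggregate pattern described above can be illustrated with a minimal sketch. It is a plain-Python simulation and does not use Spark, TensorFlow, or the TensorFrames API. The function names (`partition`, `local_gradient`, `train`), the toy linear model, and the choice to aggregate on every step rather than periodically are all assumptions made for illustration.

```python
# Illustrative simulation only: plain Python lists stand in for Spark
# partitions, and a hand-written gradient stands in for TensorFlow.
# All names here are hypothetical; none come from TensorFrames.

def partition(rows, num_workers):
    """Split rows into num_workers chunks, round-robin."""
    return [rows[i::num_workers] for i in range(num_workers)]

def local_gradient(w, b, rows):
    """Mean-squared-error gradient of y ~ w*x + b over one partition."""
    n = len(rows)
    gw = sum(2 * (w * x + b - y) * x for x, y in rows) / n
    gb = sum(2 * (w * x + b - y) for x, y in rows) / n
    return gw, gb, n

def train(rows, num_workers=4, steps=2000, lr=0.05):
    parts = partition(rows, num_workers)
    w, b = 0.0, 0.0
    for _ in range(steps):
        # "Worker" step: each partition computes its gradient independently.
        grads = [local_gradient(w, b, p) for p in parts if p]
        # "Aggregate" step: average the gradients, weighted by partition size.
        total = sum(n for _, _, n in grads)
        gw = sum(g * n for g, _, n in grads) / total
        gb = sum(g * n for _, g, n in grads) / total
        # Update the shared parameters that every worker sees next step.
        w -= lr * gw
        b -= lr * gb
    return w, b

# Toy data generated from y = 3x + 1.
data = [(x / 10.0, 3.0 * (x / 10.0) + 1.0) for x in range(20)]
w, b = train(data)
```

Because the partition gradients are weighted by partition size, the aggregated gradient equals the gradient over the full dataset, so splitting the data changes where the work happens but not the result. On a real cluster the list comprehension over `parts` would run on separate workers, and aggregating less often would trade some accuracy per step for less communication.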