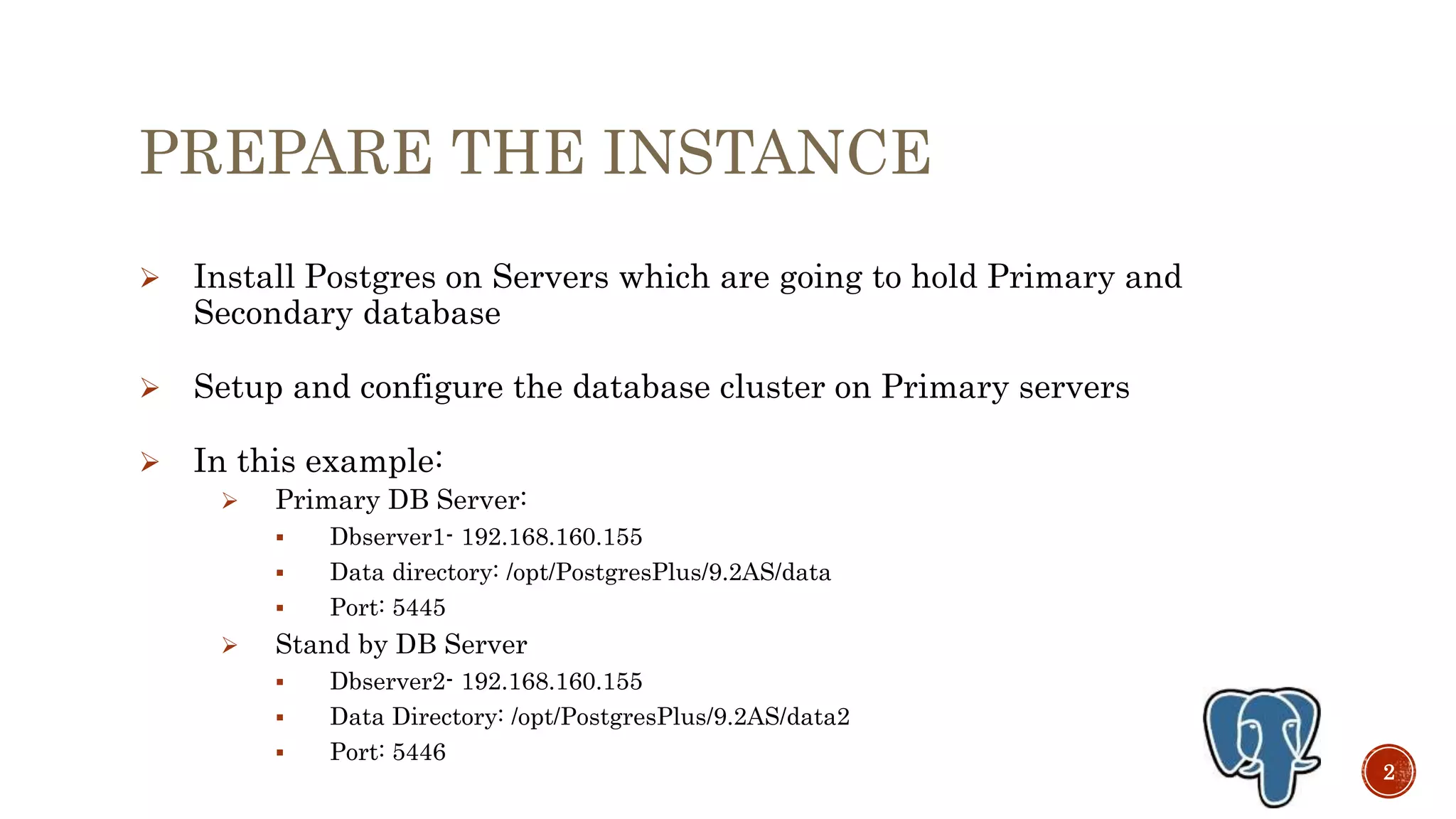

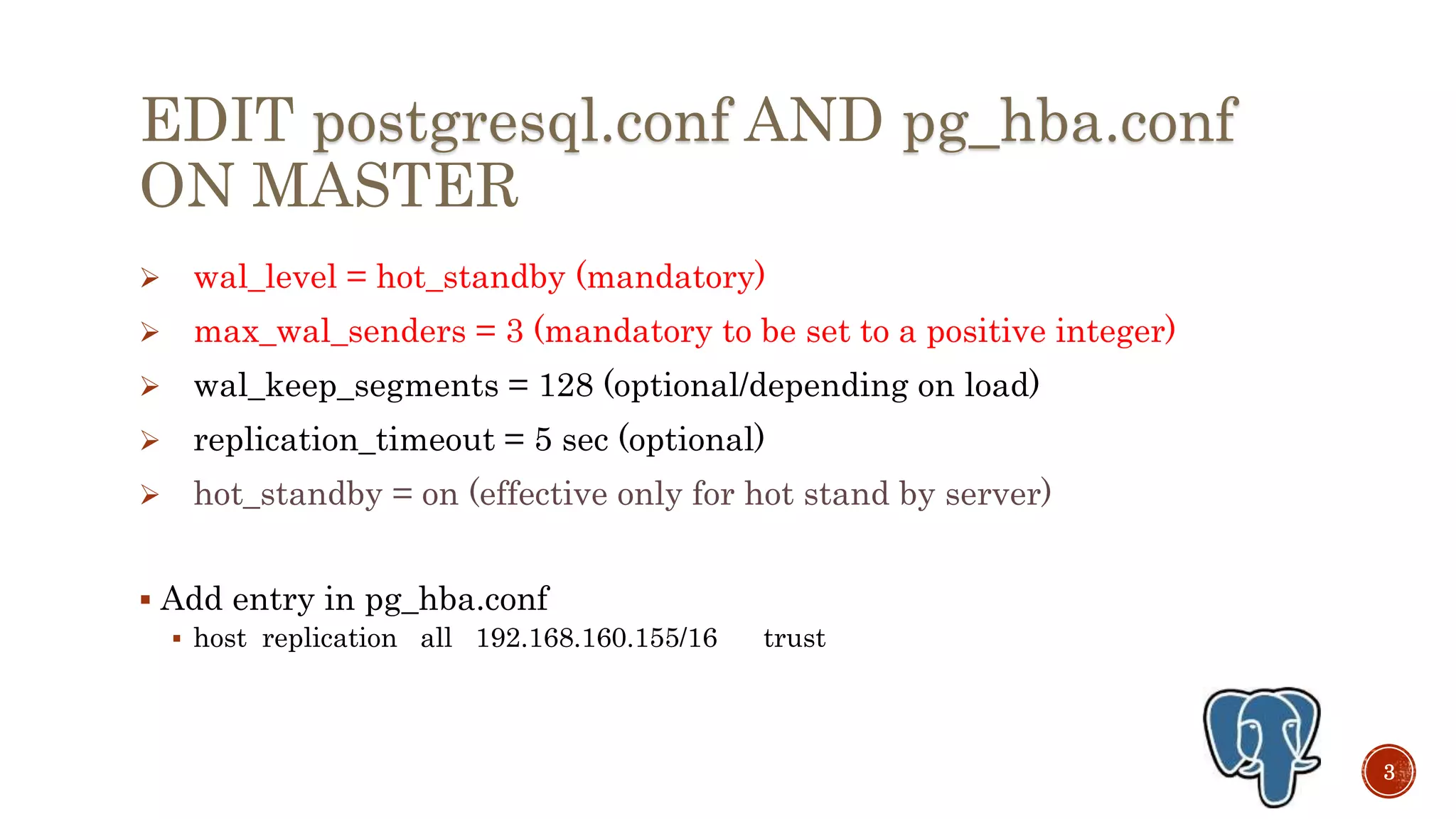

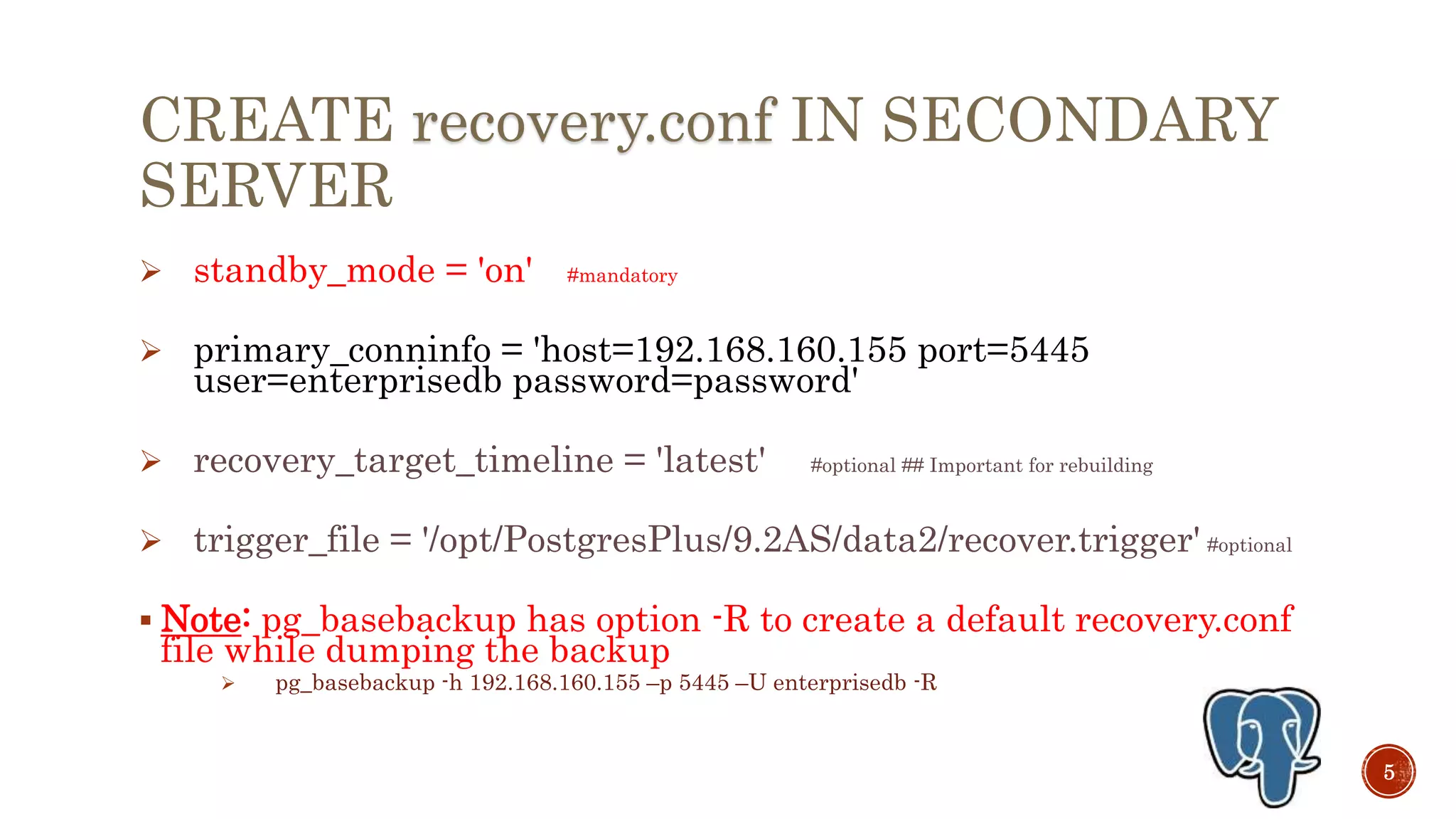

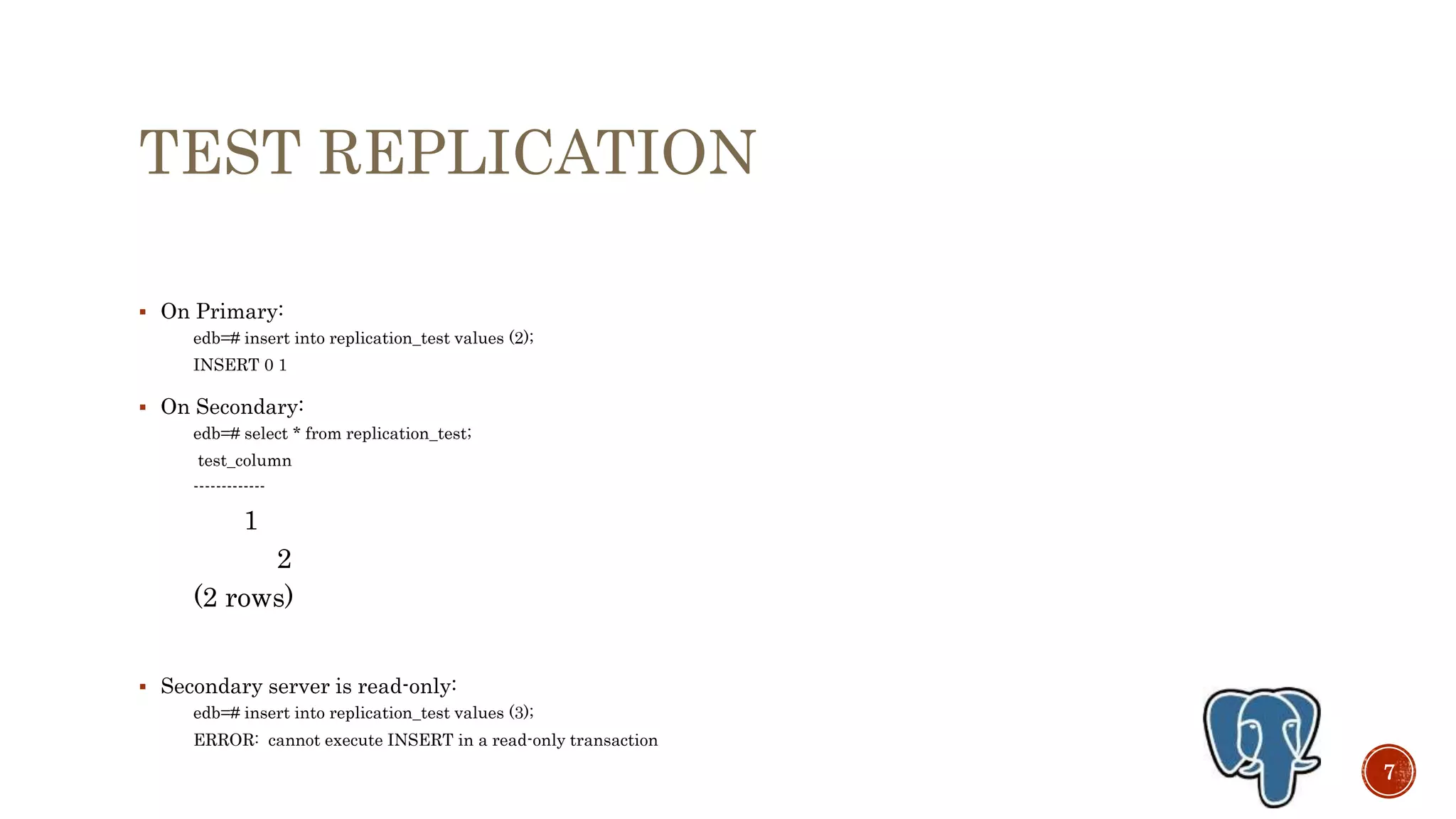

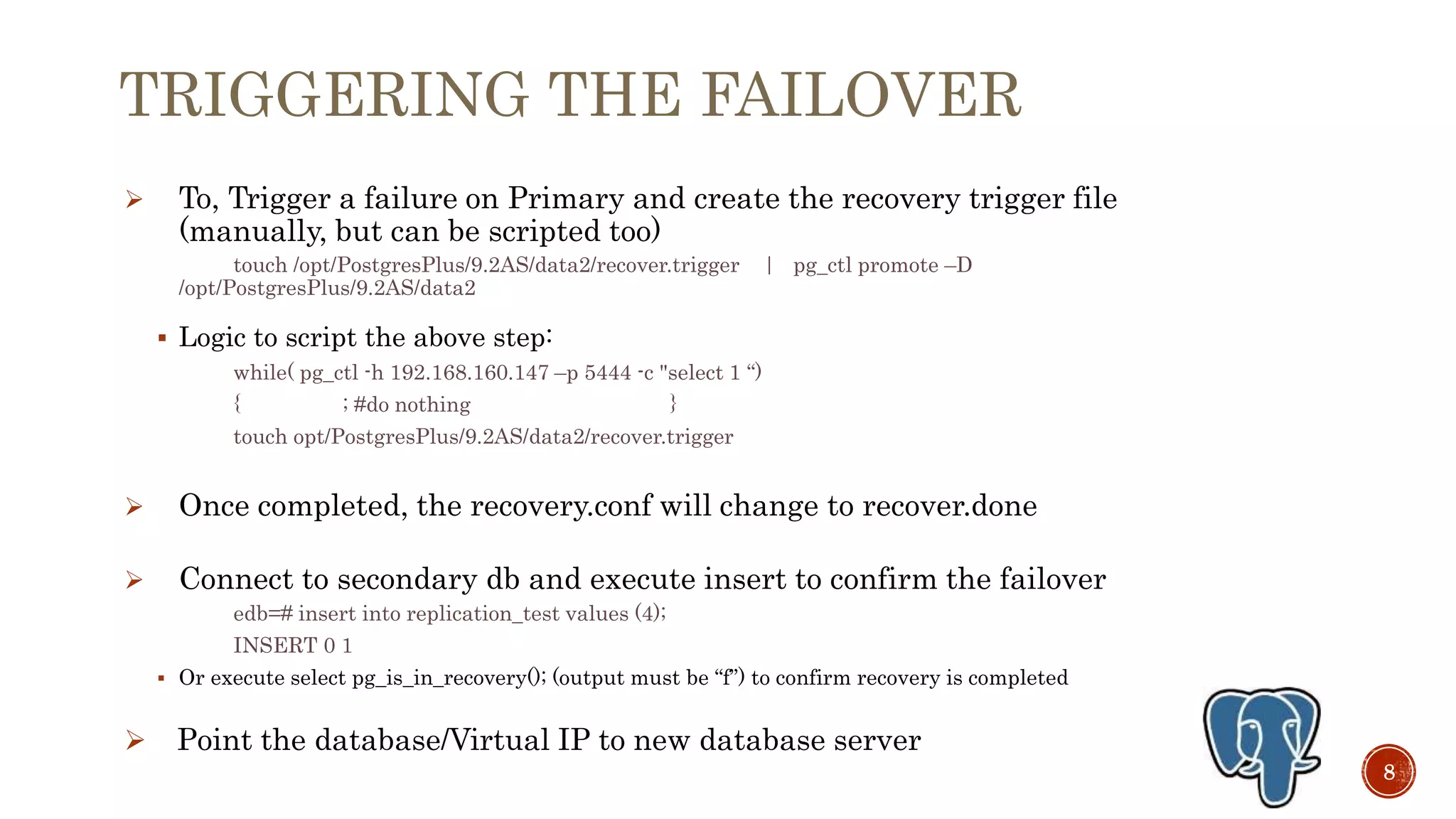

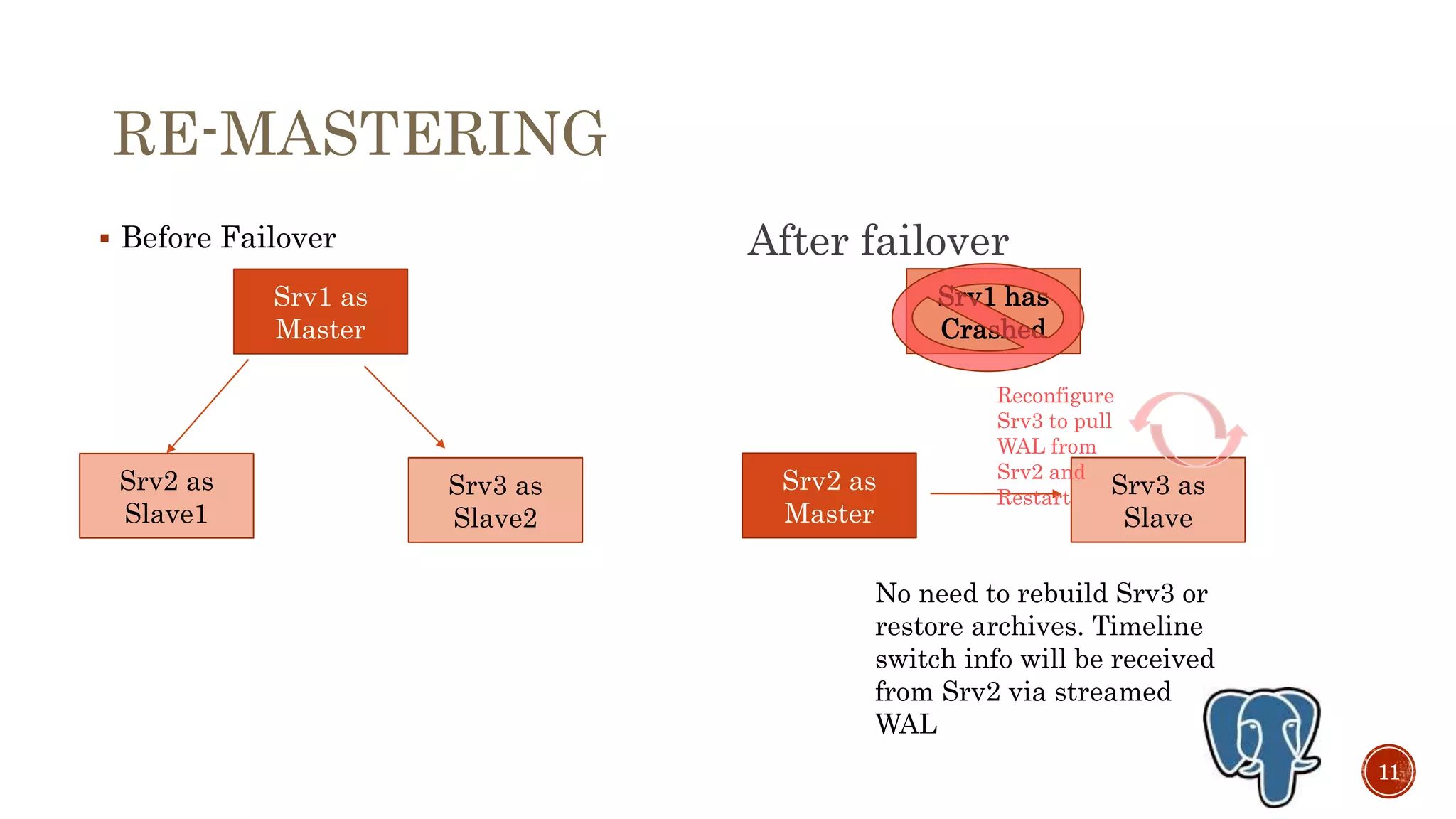

This document describes how to set up streaming replication in PostgreSQL 9.3 for high availability. It covers preparing the primary and standby servers, setting wal_level and max_wal_senders on the primary, taking a base backup and restoring it on the standby, creating the standby's recovery.conf file, and starting both servers to test replication. It then walks through failover: promoting the standby, keeping additional replicas in service without rebuilding them, and rebuilding the original primary as a new standby. Finally, it covers monitoring replication status with views such as pg_stat_replication.
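As a rough illustration of the configuration and monitoring steps summarized above, the following Python sketch renders the primary's settings and a standby's recovery.conf, and computes replication lag from two WAL locations of the kind reported by pg_stat_replication. The parameter names (wal_level, max_wal_senders, standby_mode, primary_conninfo, trigger_file, recovery_target_timeline) are real PostgreSQL 9.3 settings, but the values, host name, user name, and trigger path are assumptions chosen for the example, not taken from the document, and the helper functions are hypothetical.

```python
# Sketch only: every concrete value below (3 WAL senders, host, user,
# trigger path) is a hypothetical placeholder, not from the document.

PRIMARY_SETTINGS = {
    "wal_level": "hot_standby",  # lets the standby also serve read-only queries
    "max_wal_senders": "3",      # must be greater than 0; 3 is an arbitrary choice
}


def render_primary_settings(settings=PRIMARY_SETTINGS):
    """Return postgresql.conf lines for the primary server."""
    return "\n".join(f"{name} = {value}" for name, value in settings.items())


def render_recovery_conf(host, user, trigger_file, port=5432):
    """Return recovery.conf contents for a 9.3 streaming standby."""
    conninfo = f"host={host} port={port} user={user}"
    lines = [
        "standby_mode = 'on'",
        f"primary_conninfo = '{conninfo}'",
        # Creating this file on the standby triggers promotion.
        f"trigger_file = '{trigger_file}'",
        # Follow the new timeline after a failover elsewhere in the cluster.
        "recovery_target_timeline = 'latest'",
    ]
    return "\n".join(lines) + "\n"


def lsn_to_bytes(lsn):
    """Convert a WAL location such as '0/3000148' to a byte position."""
    high, low = lsn.split("/")
    return (int(high, 16) << 32) | int(low, 16)


def replay_lag_bytes(sent_location, replay_location):
    """Bytes of WAL sent by the primary but not yet replayed by the standby."""
    return lsn_to_bytes(sent_location) - lsn_to_bytes(replay_location)


if __name__ == "__main__":
    print(render_primary_settings())
    print(render_recovery_conf("primary.example.com", "replicator",
                               "/tmp/postgresql.trigger"))
    print(replay_lag_bytes("0/3000148", "0/3000060"))
```

On a live 9.3 primary, the same lag figure can be obtained in SQL with pg_xlog_location_diff(sent_location, replay_location) against pg_stat_replication; the Python helper only mirrors that arithmetic for offline inspection.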