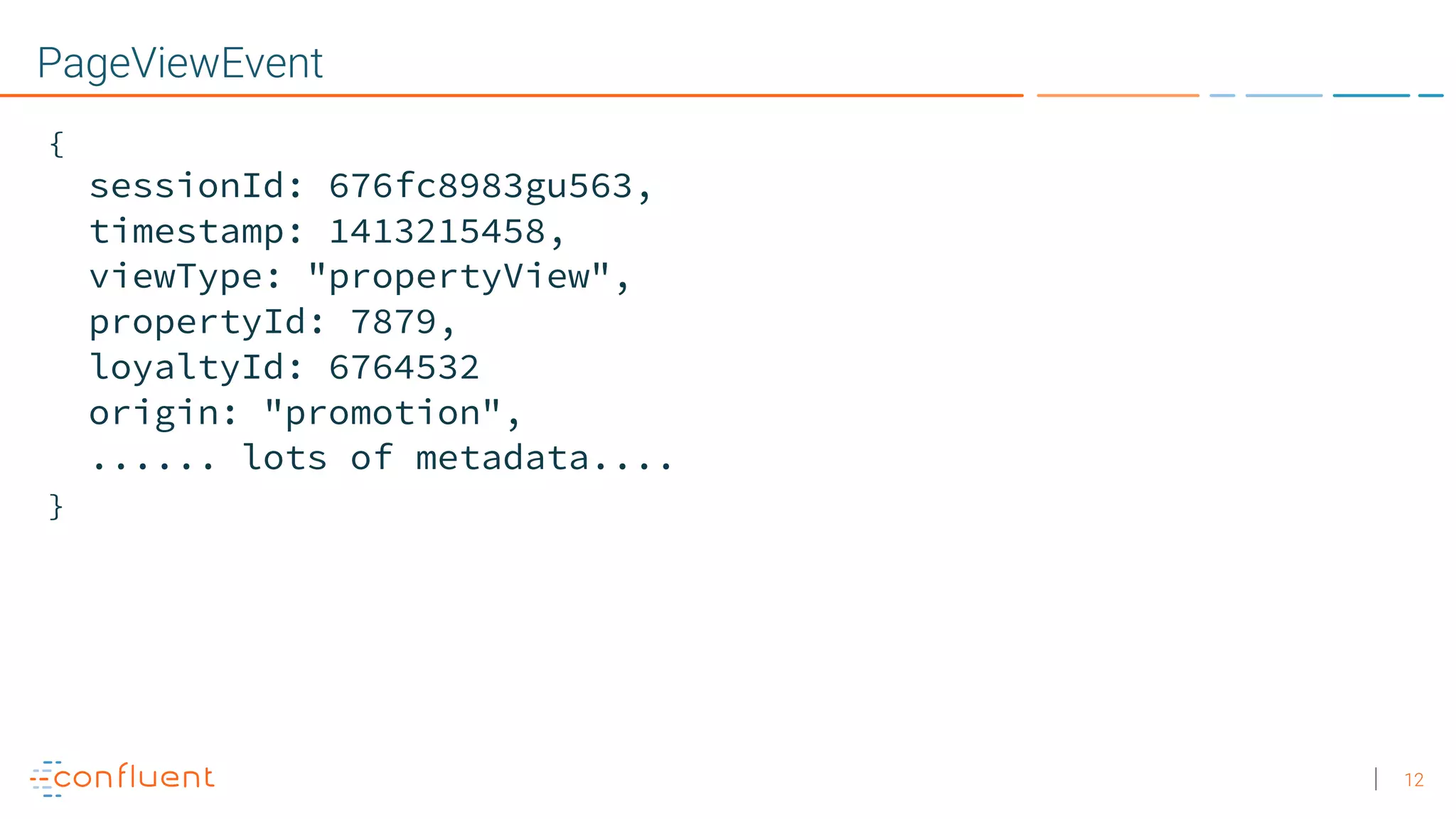

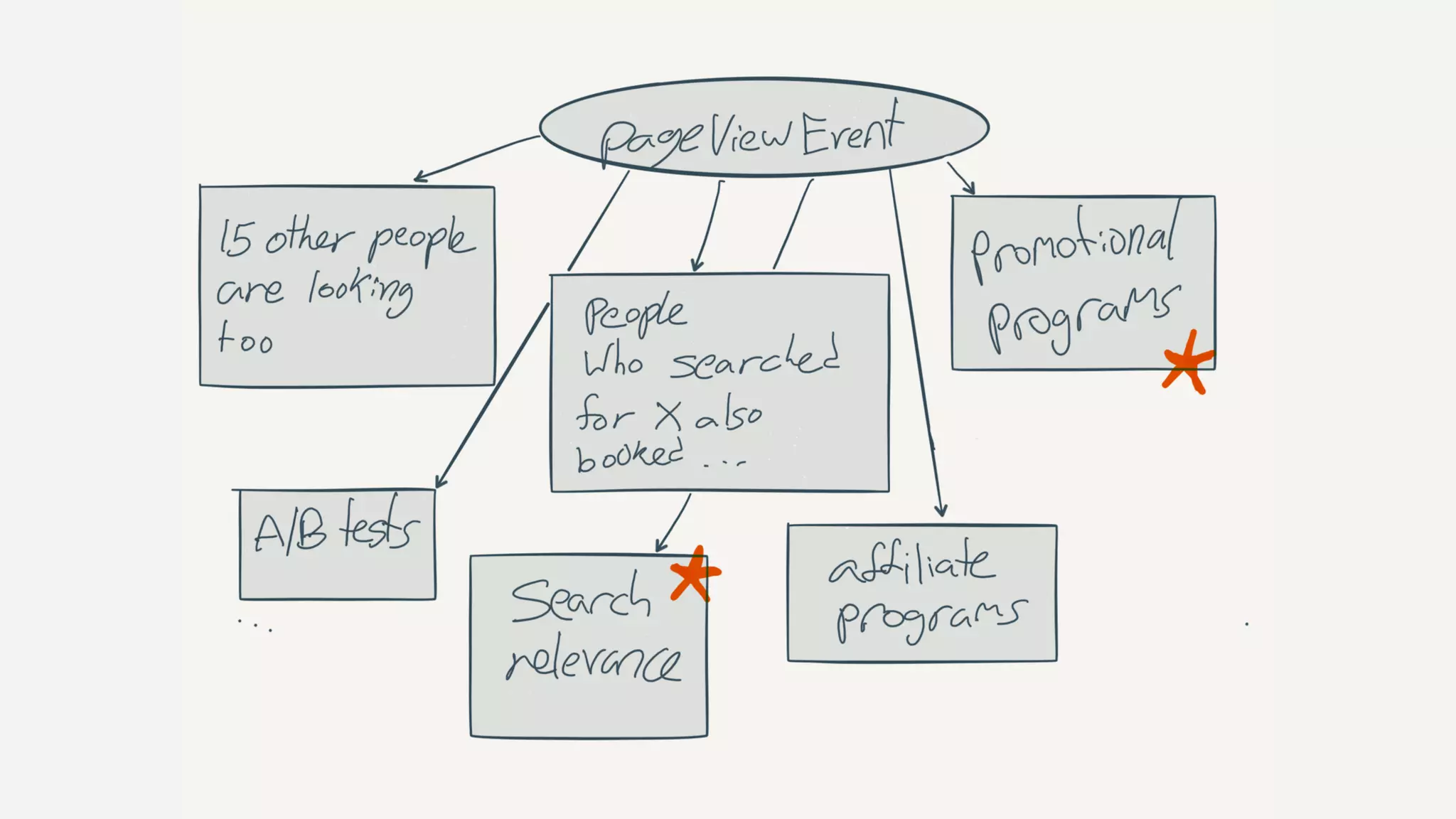

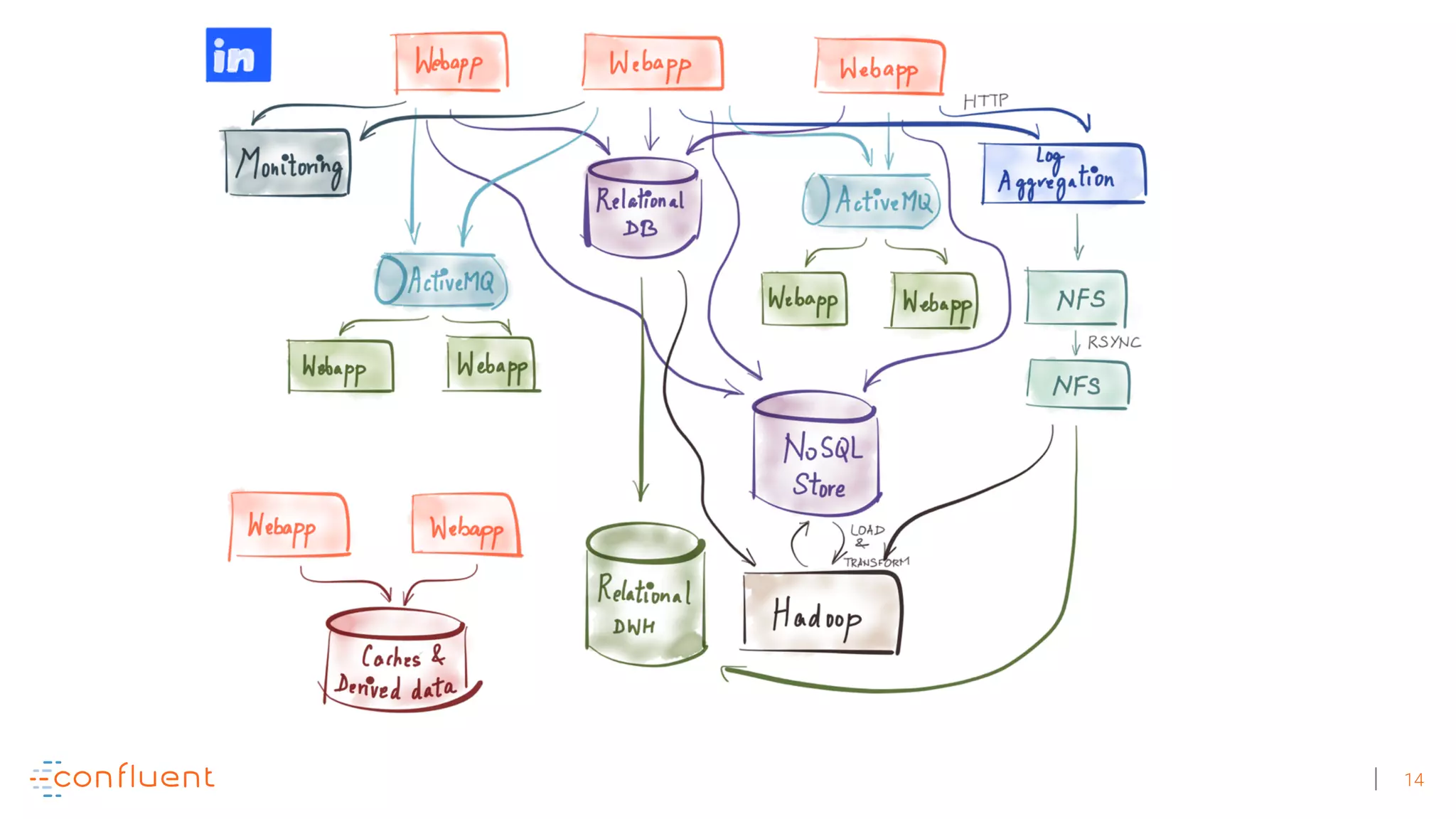

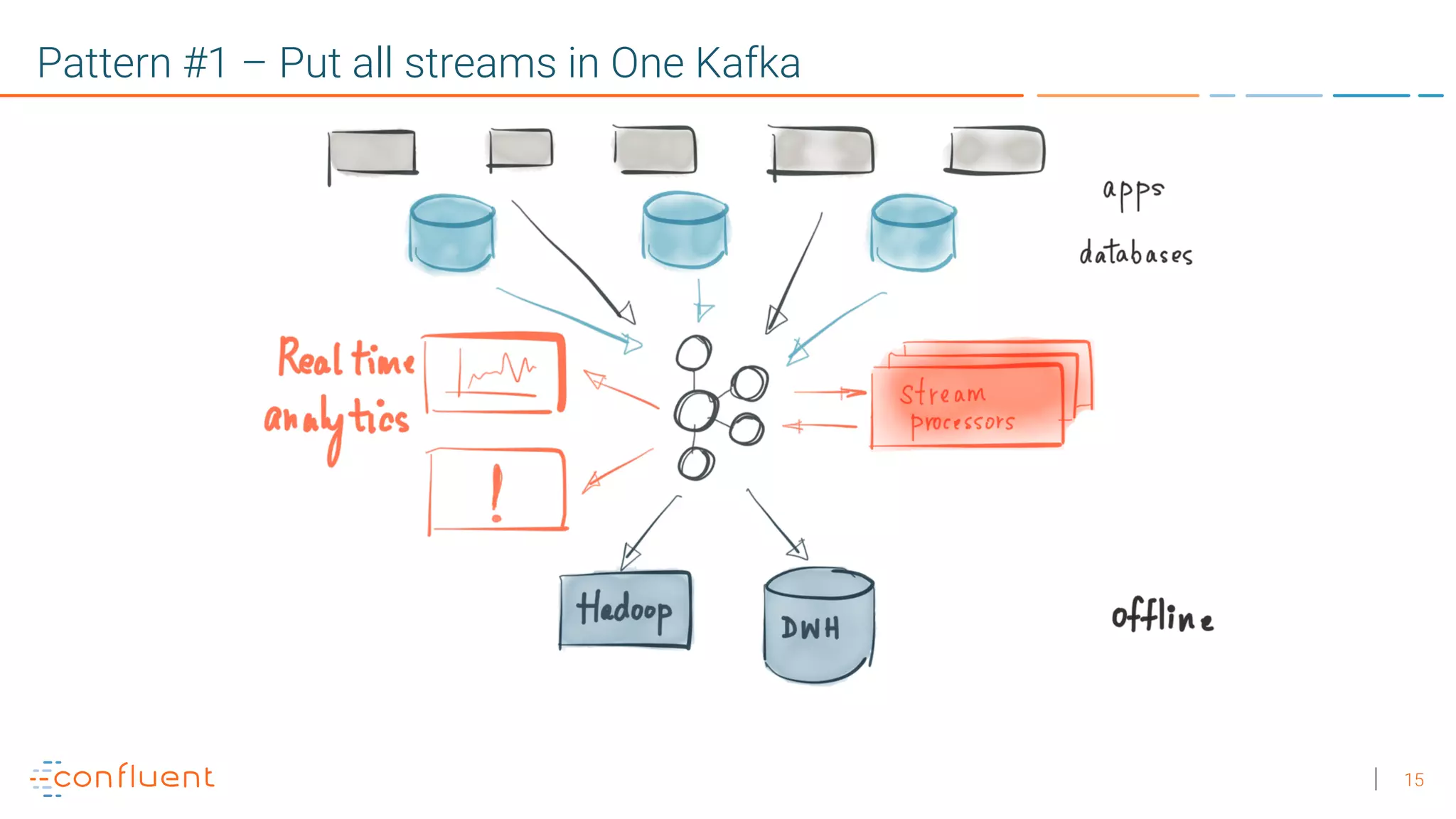

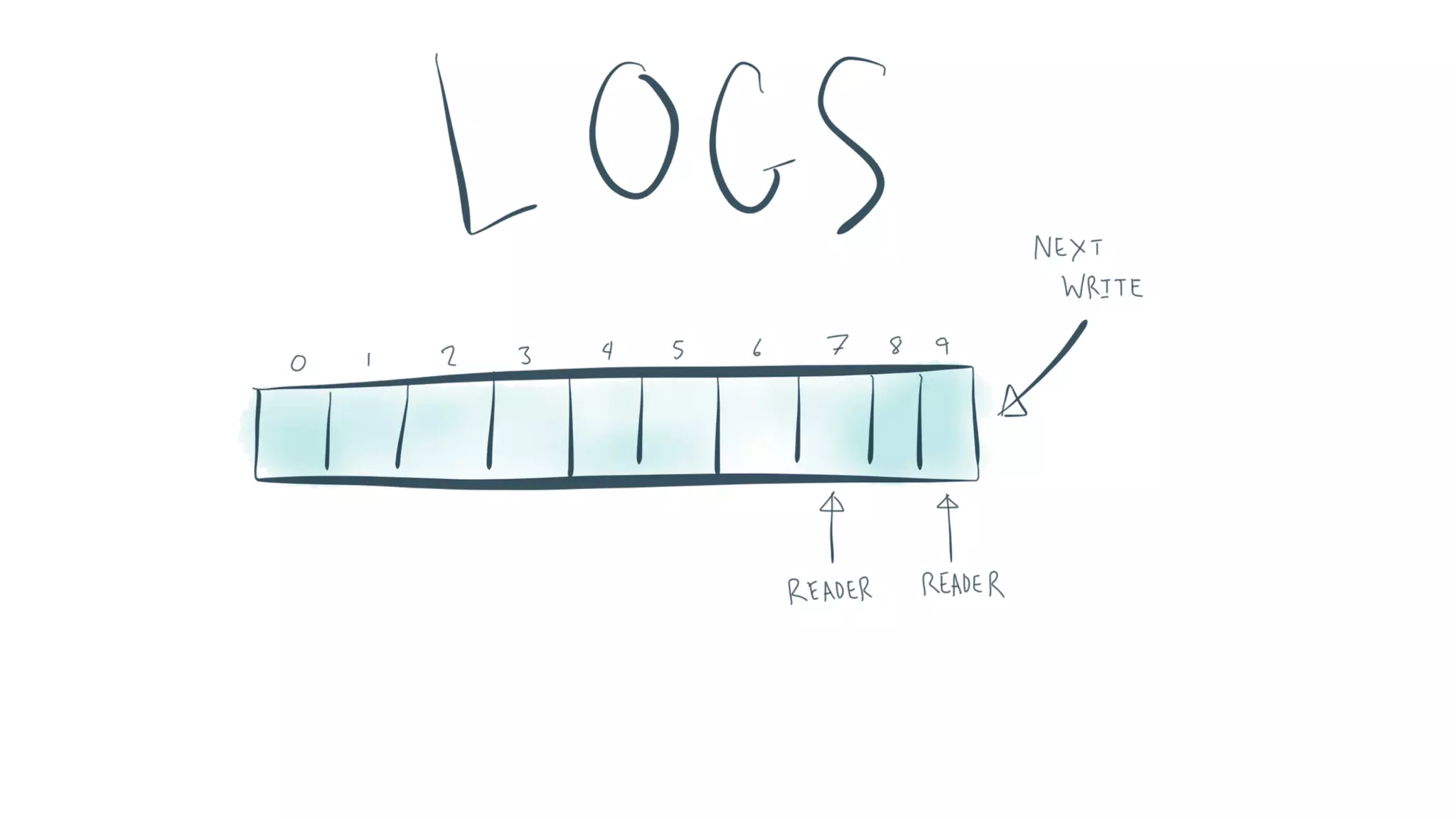

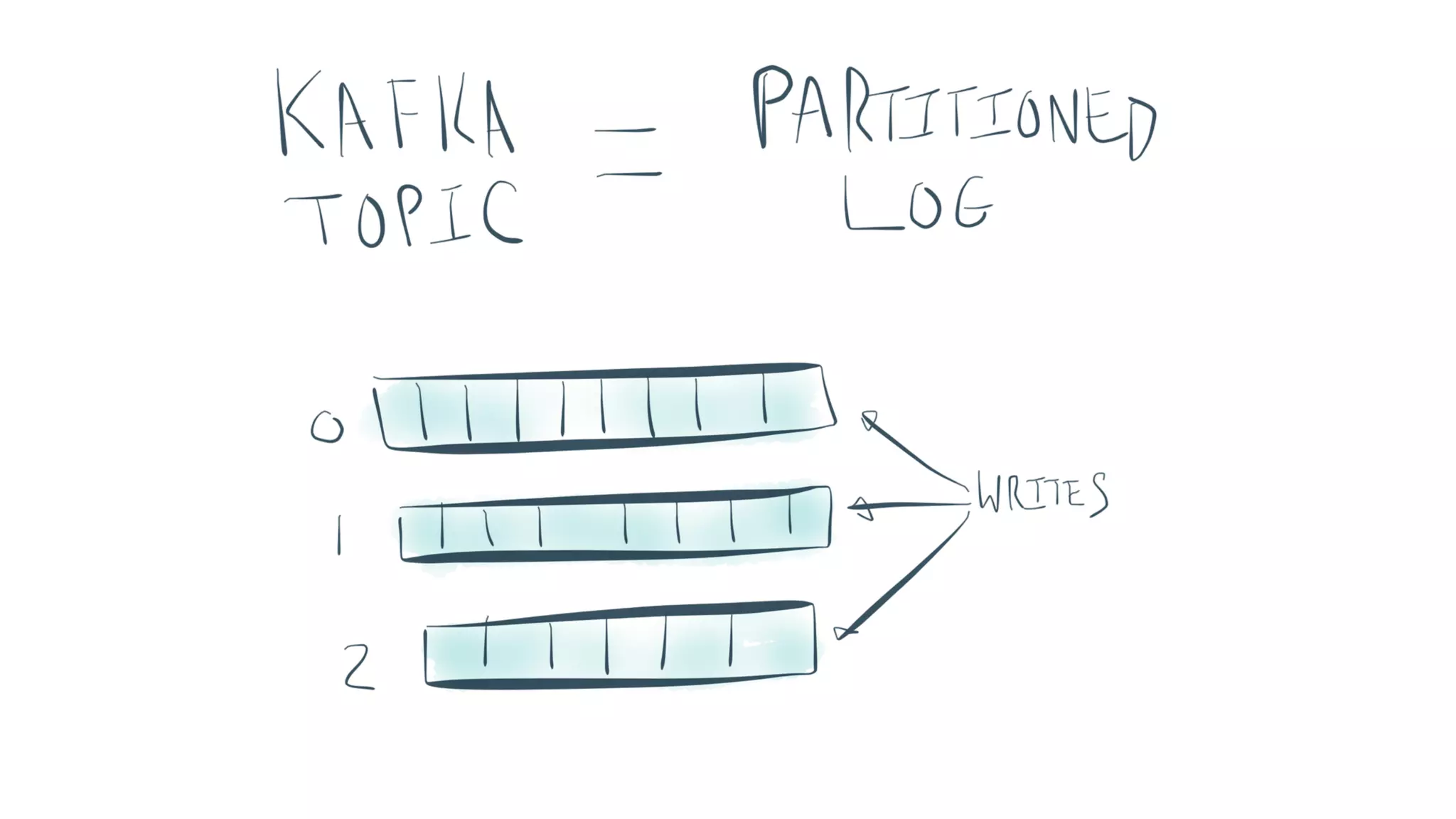

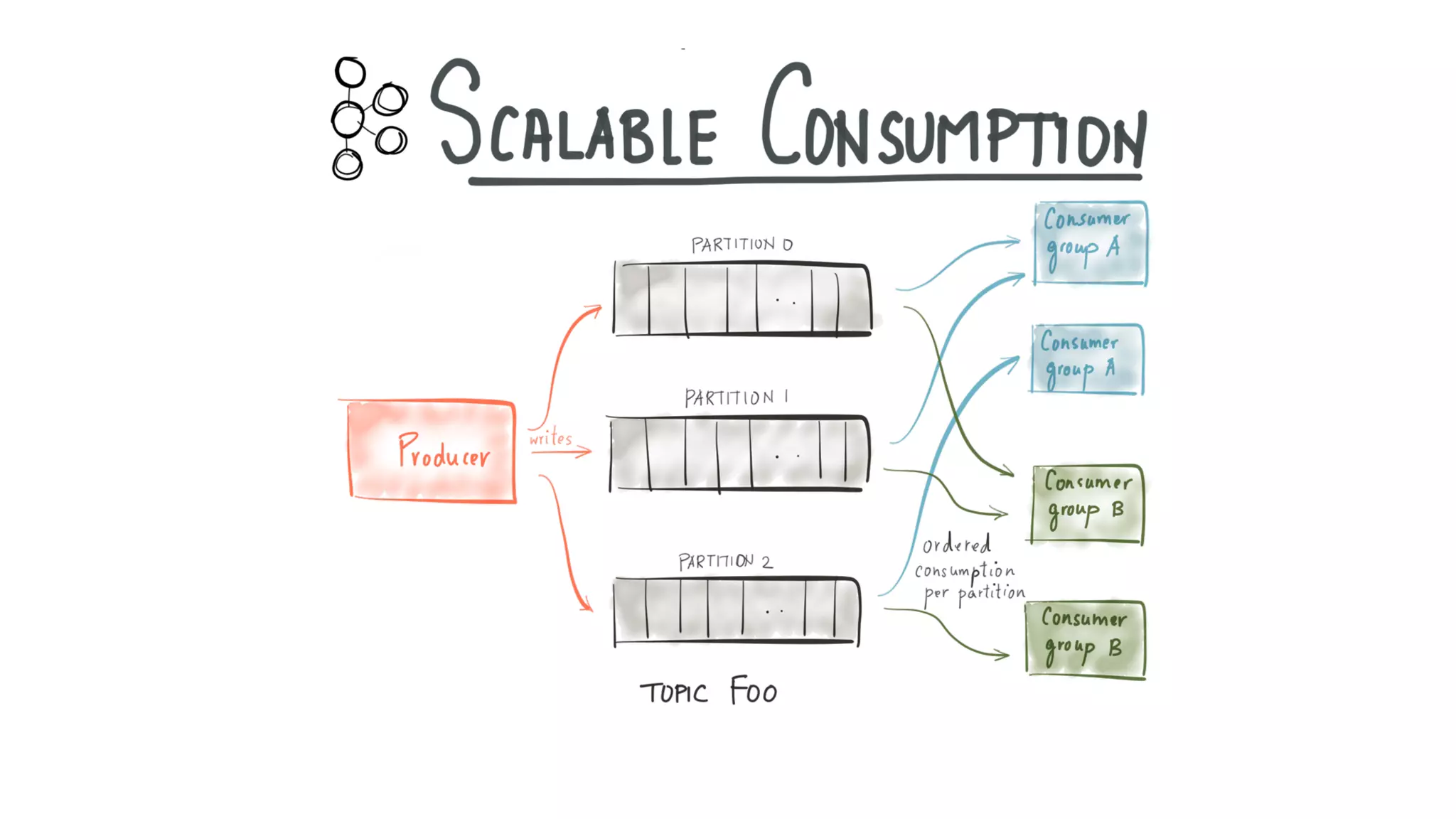

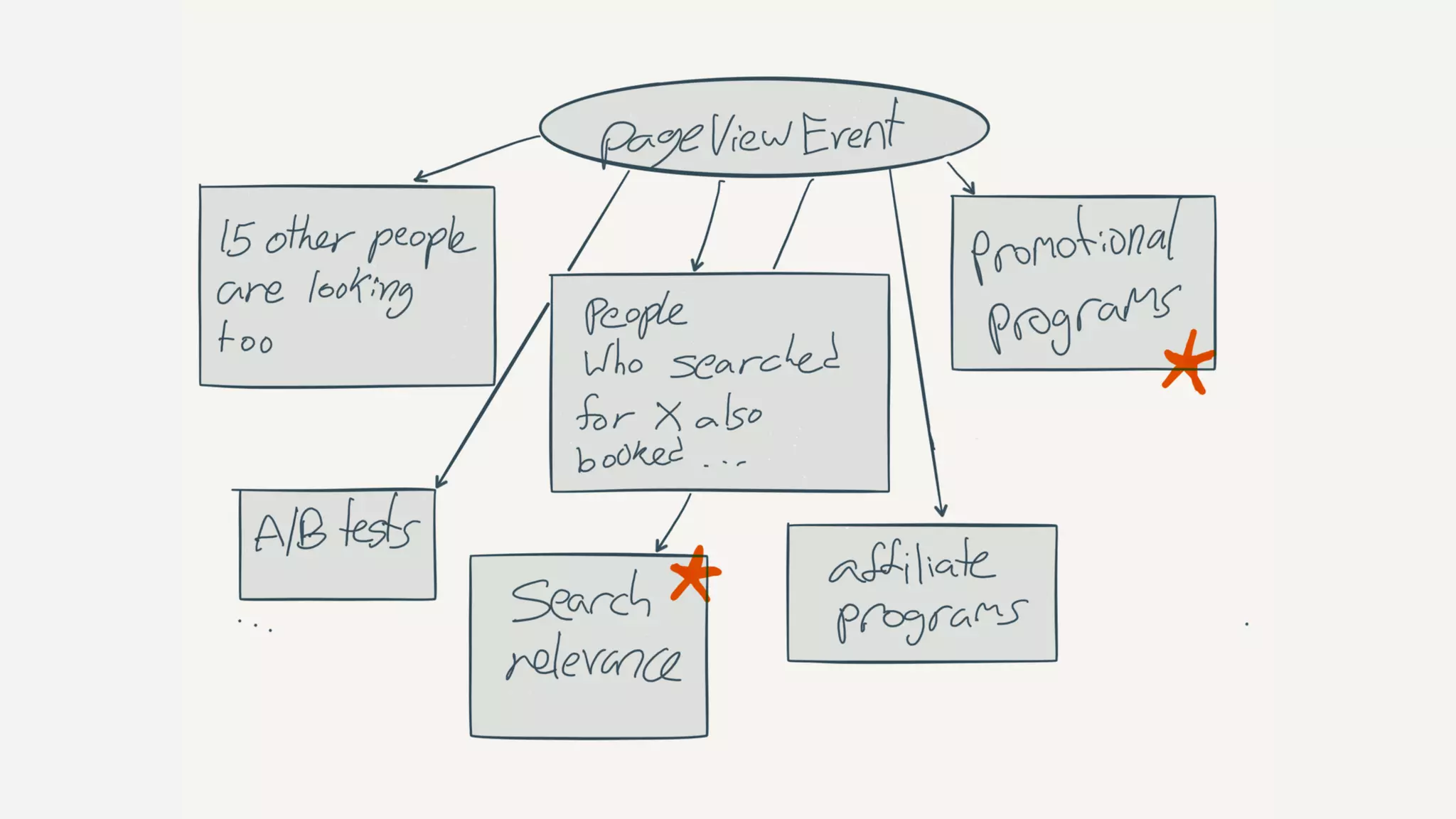

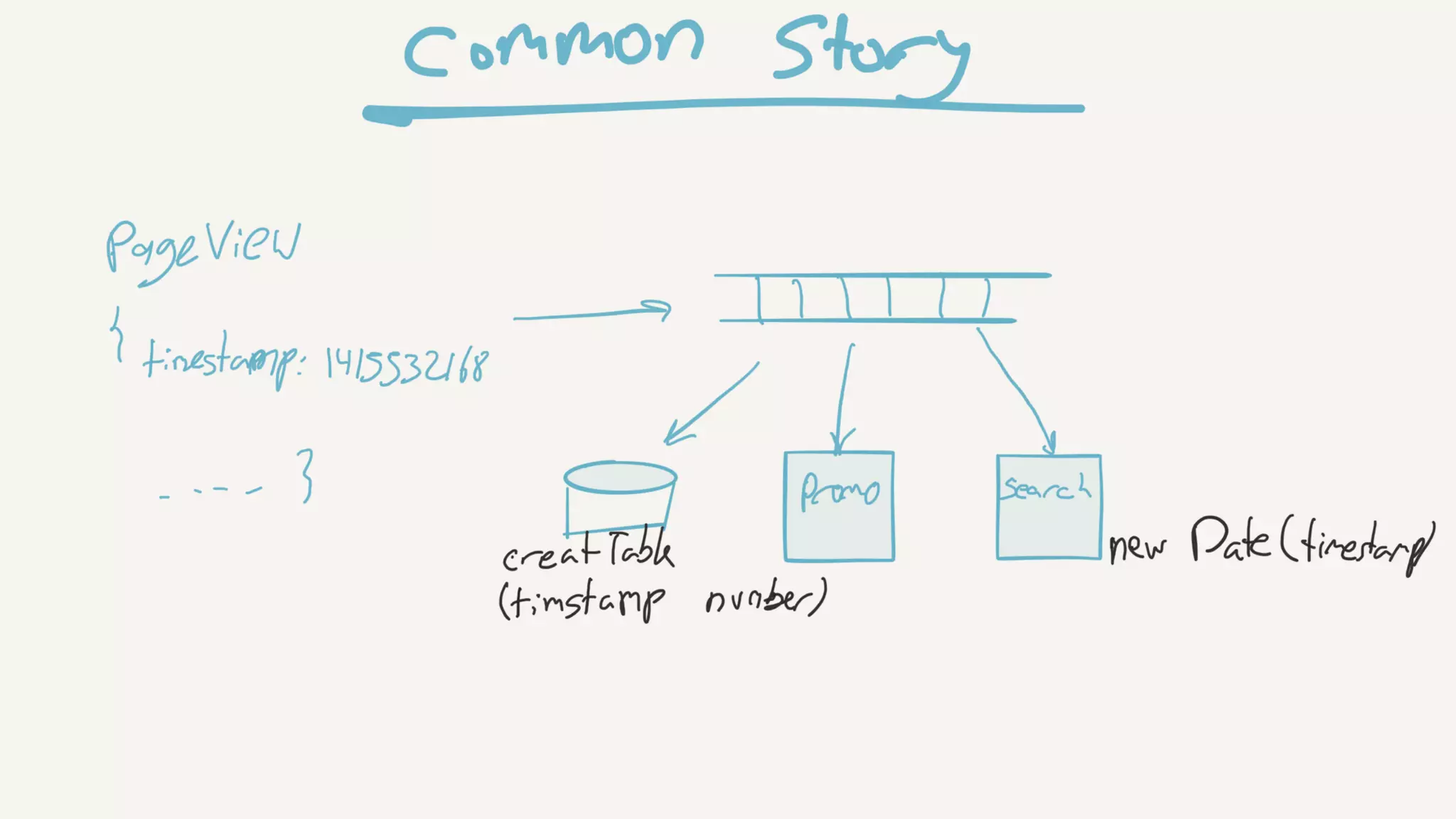

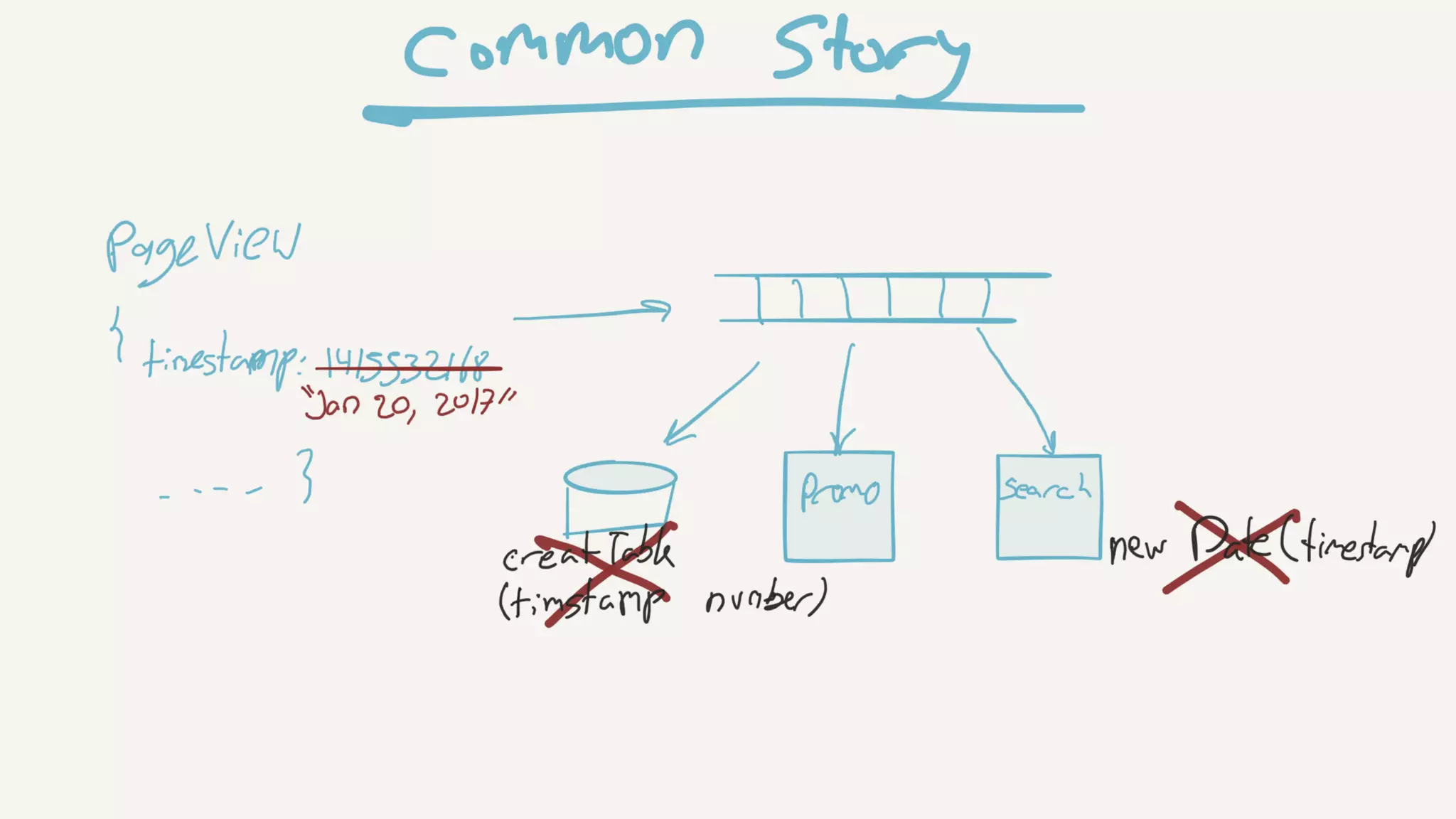

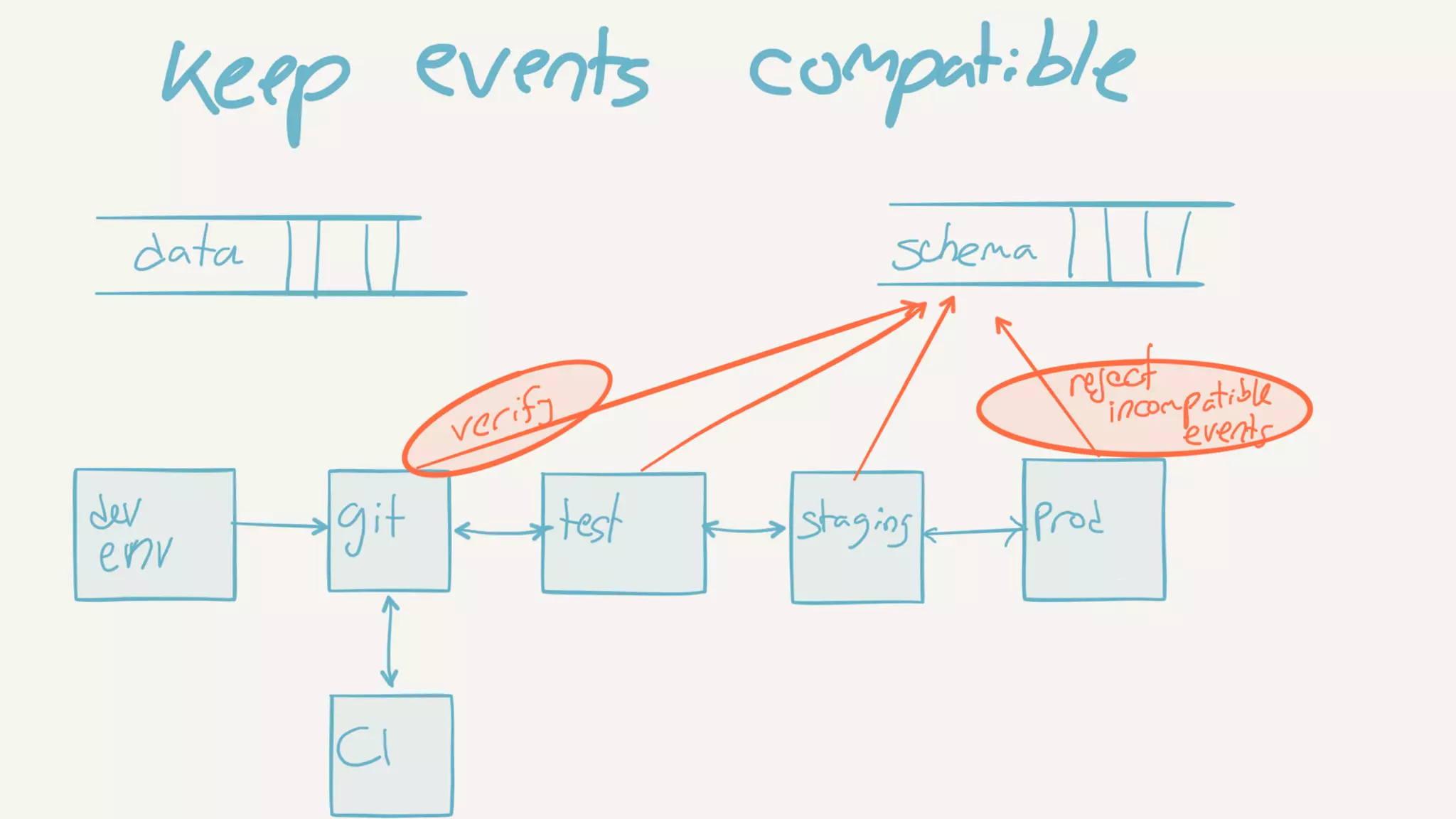

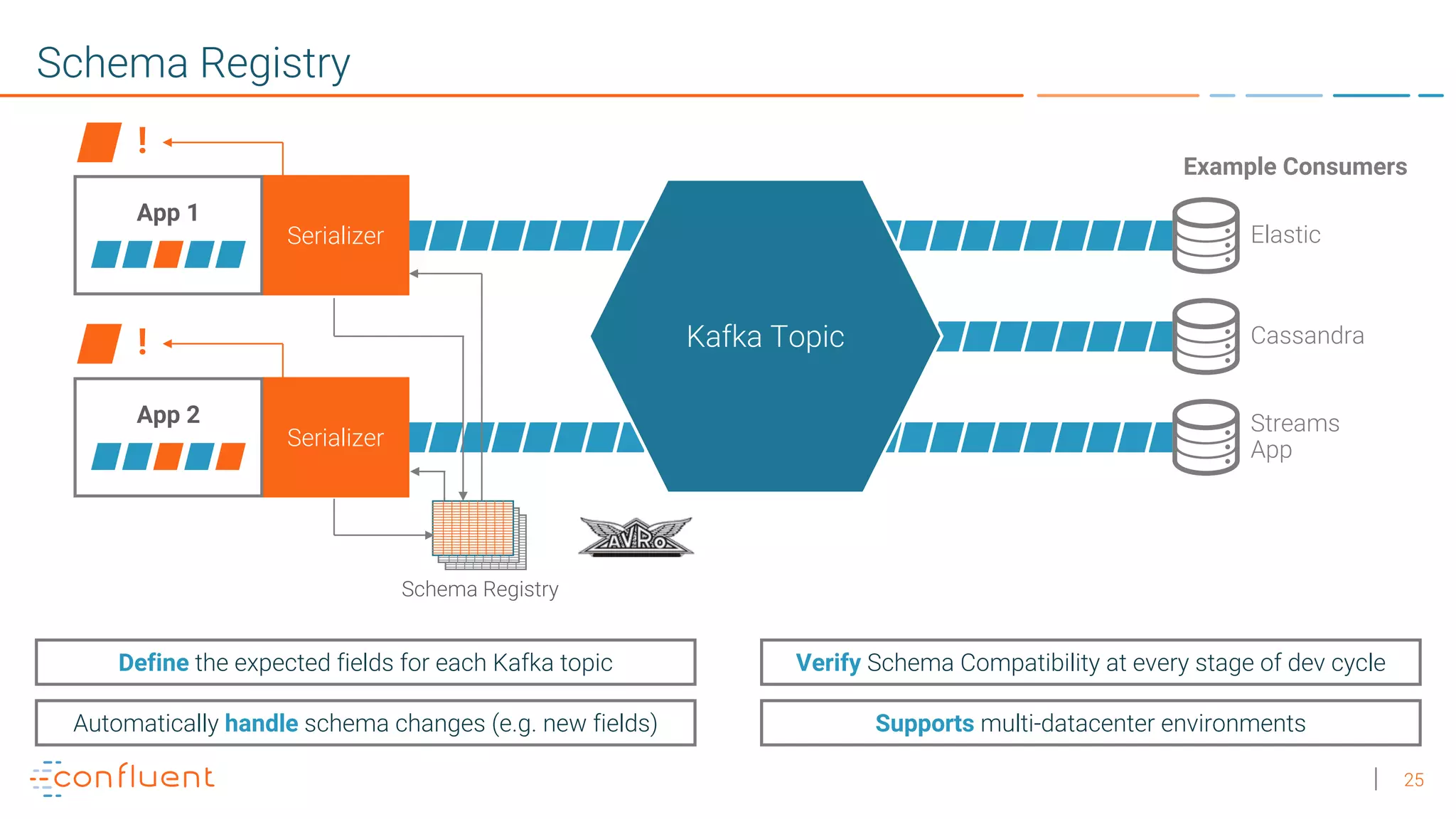

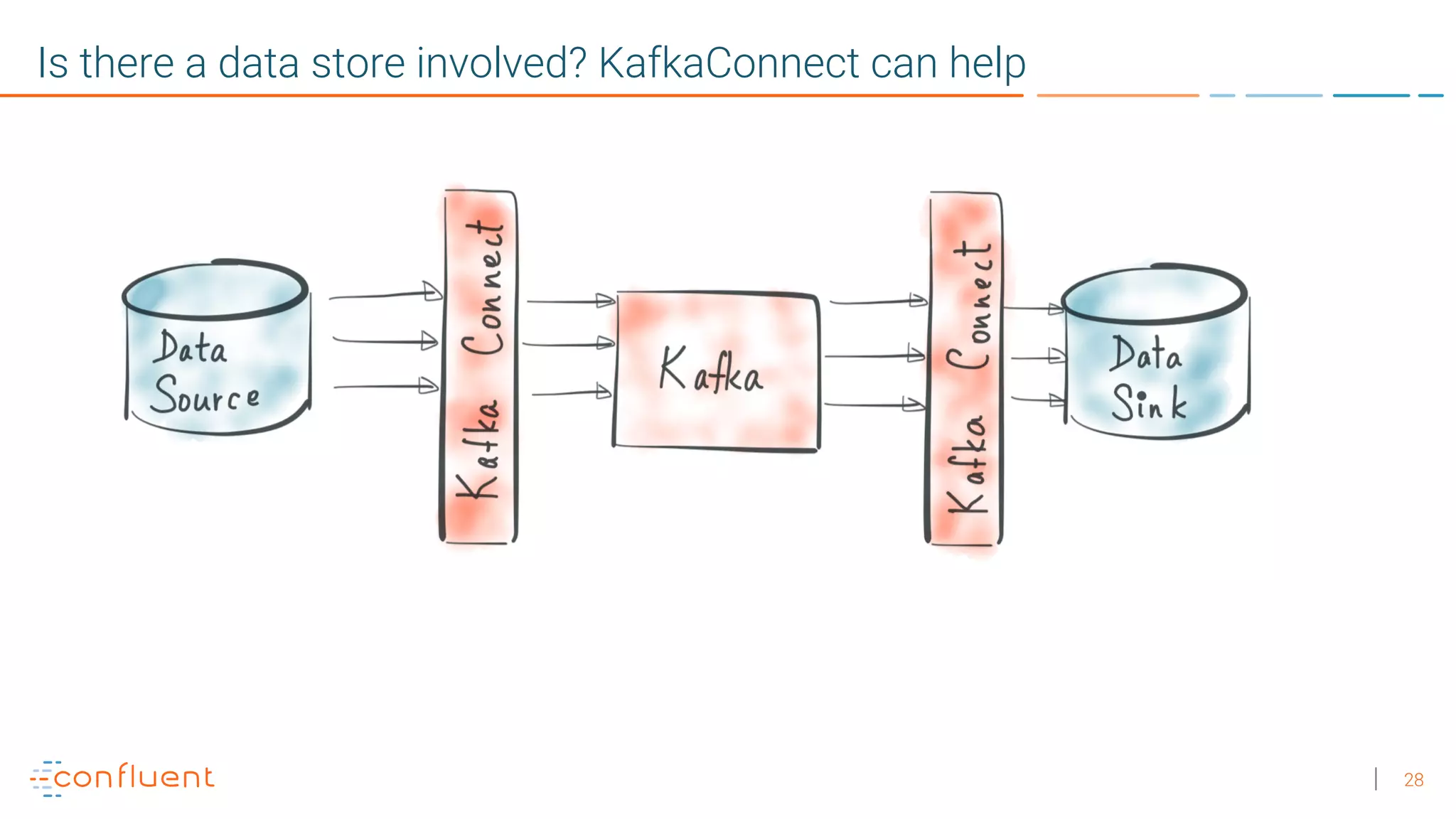

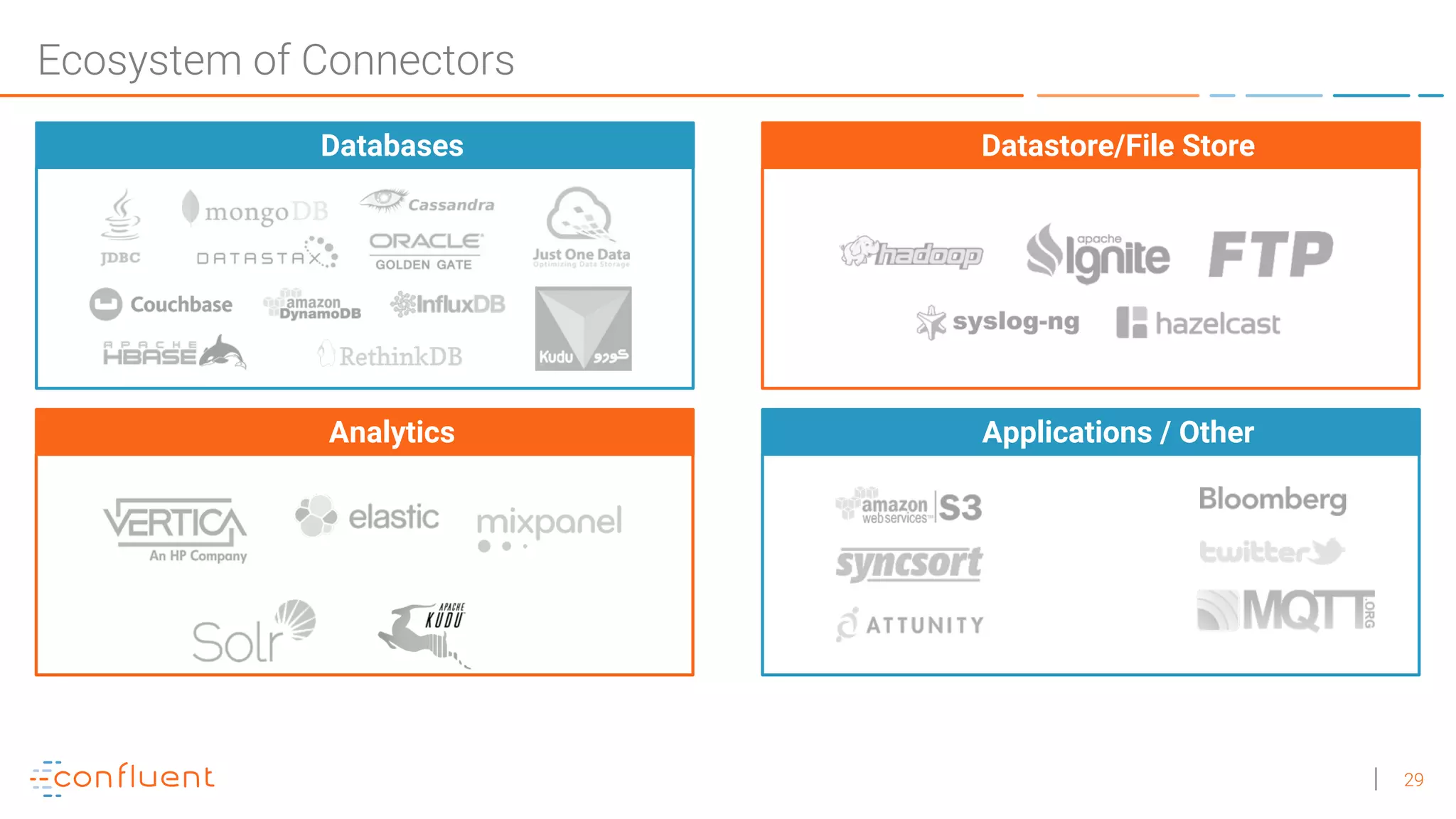

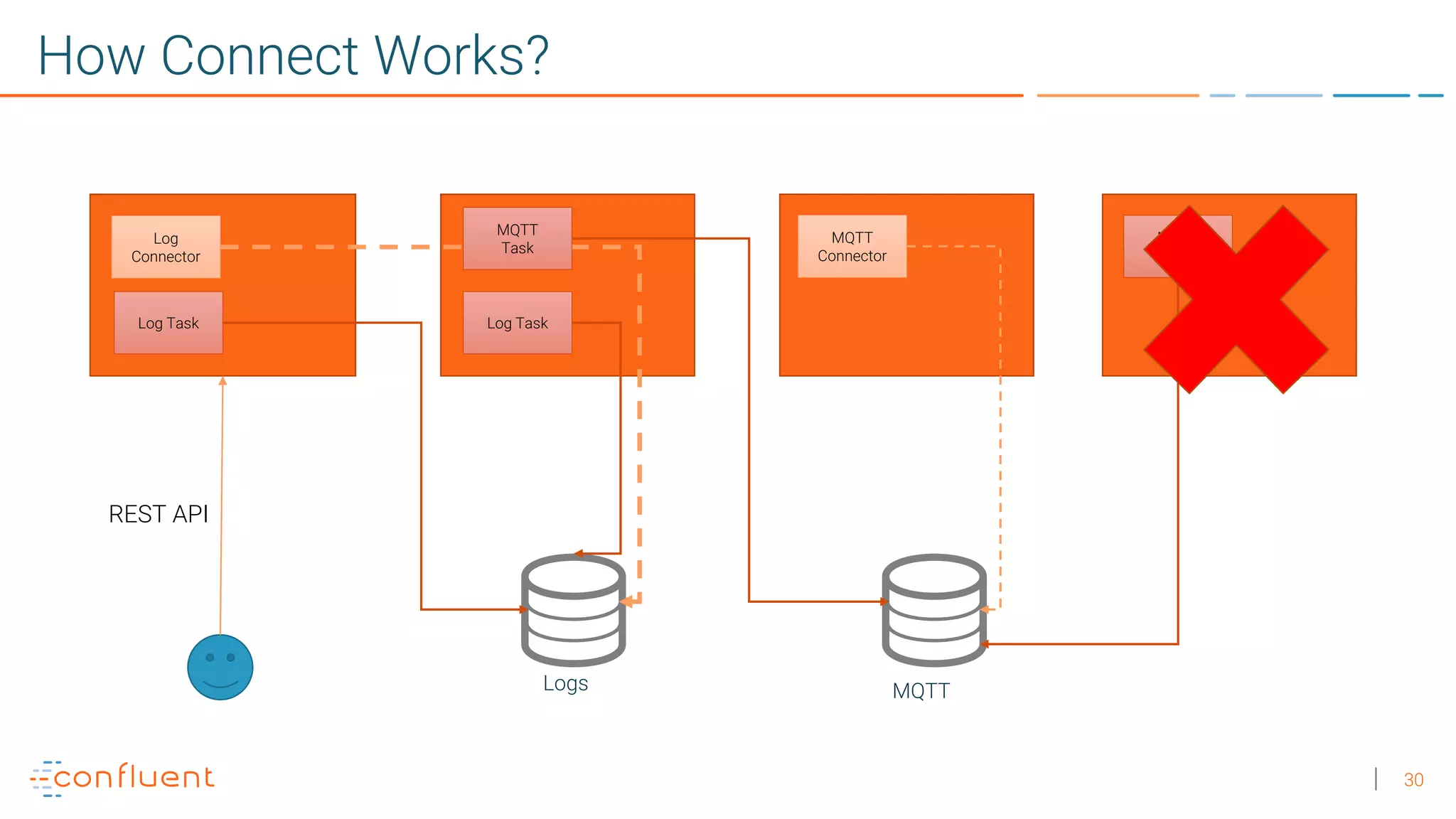

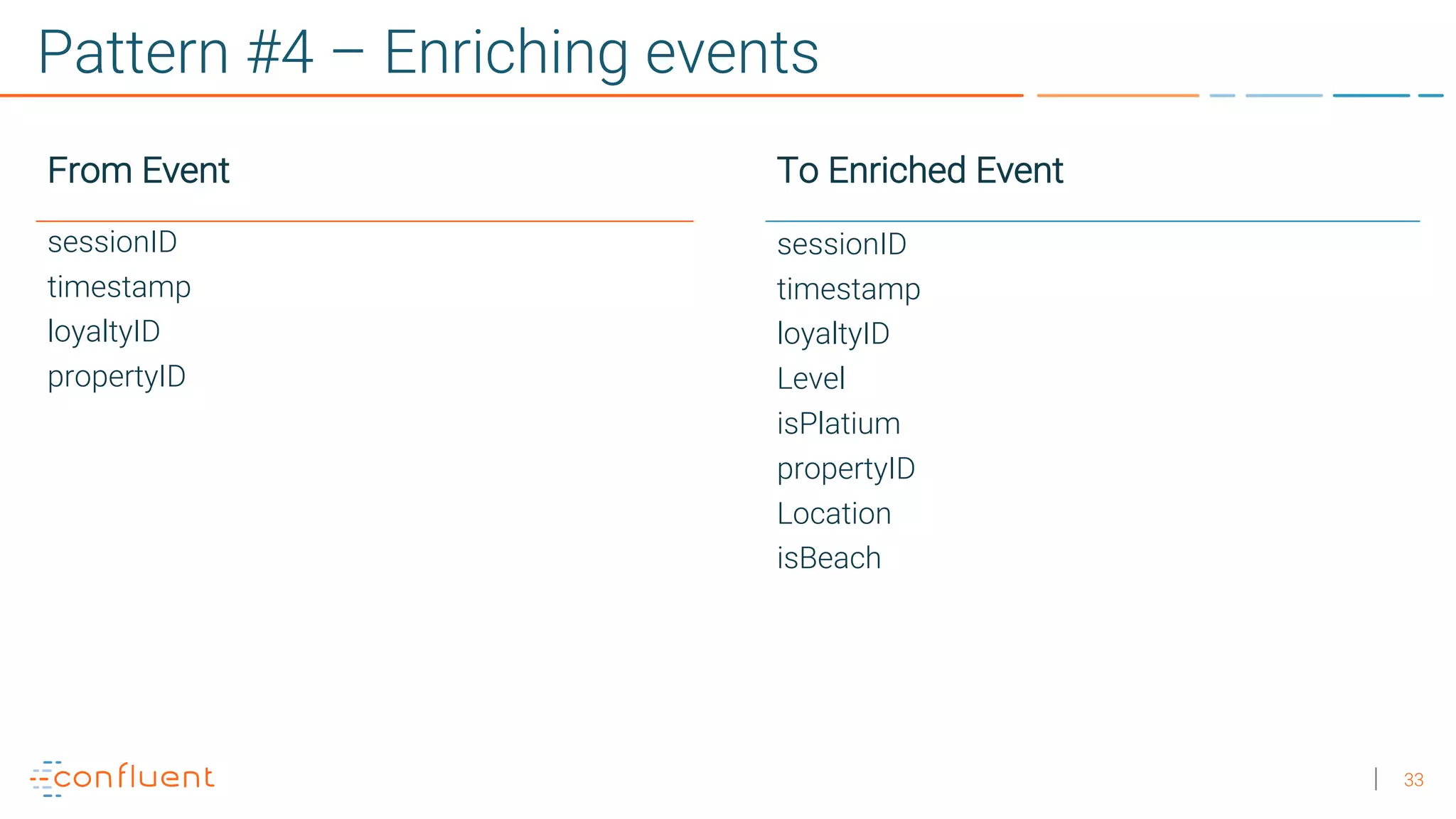

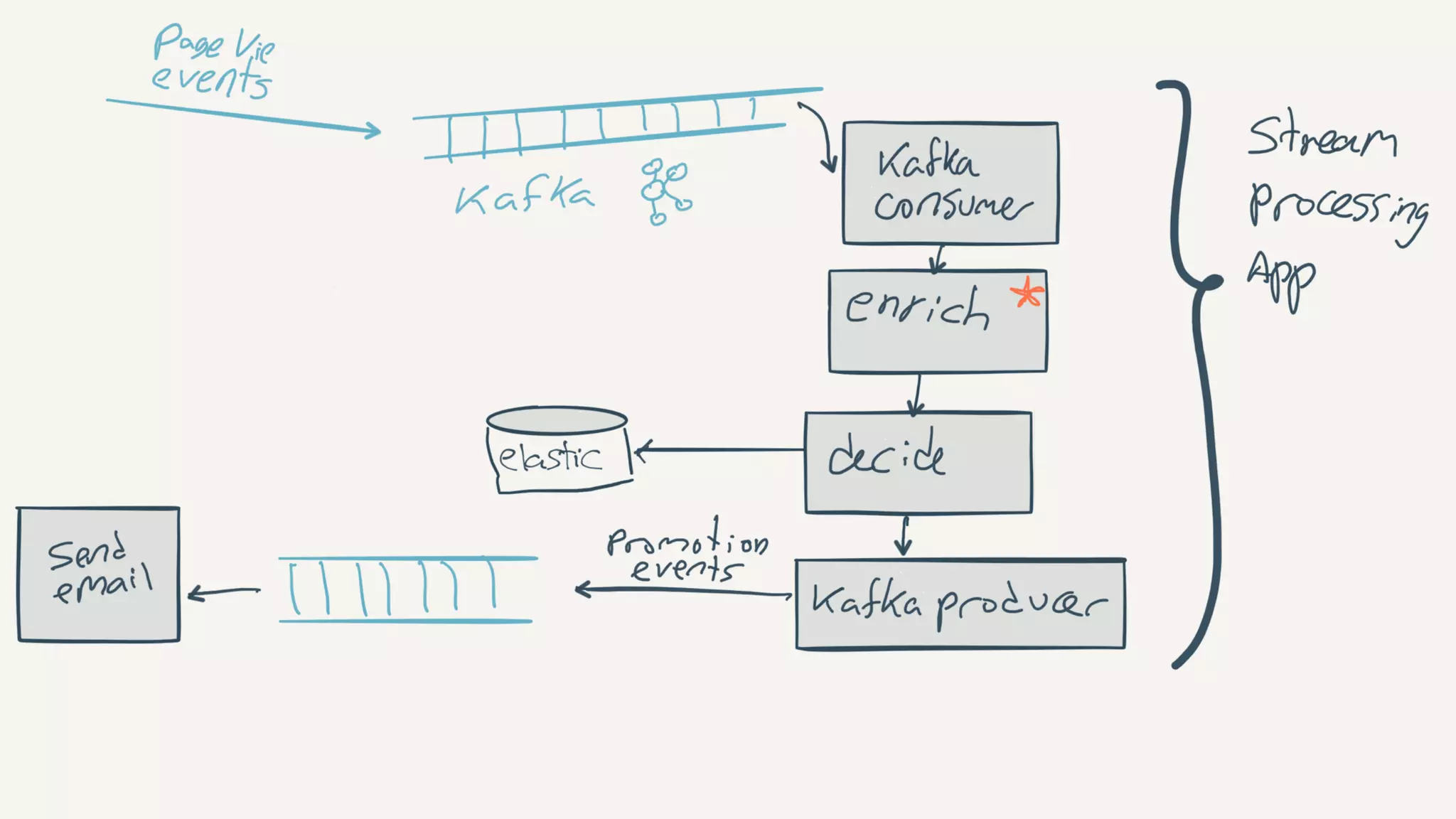

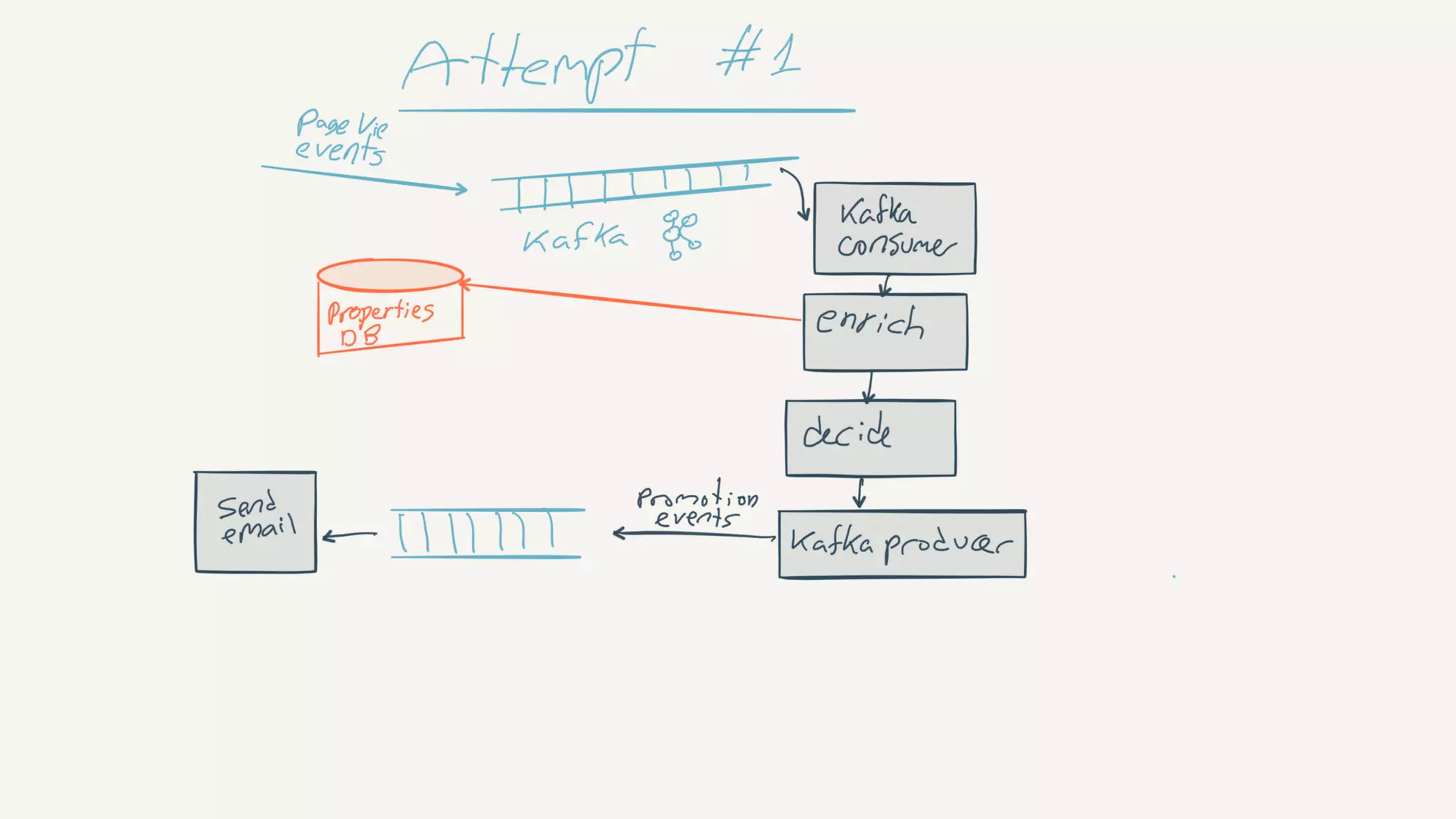

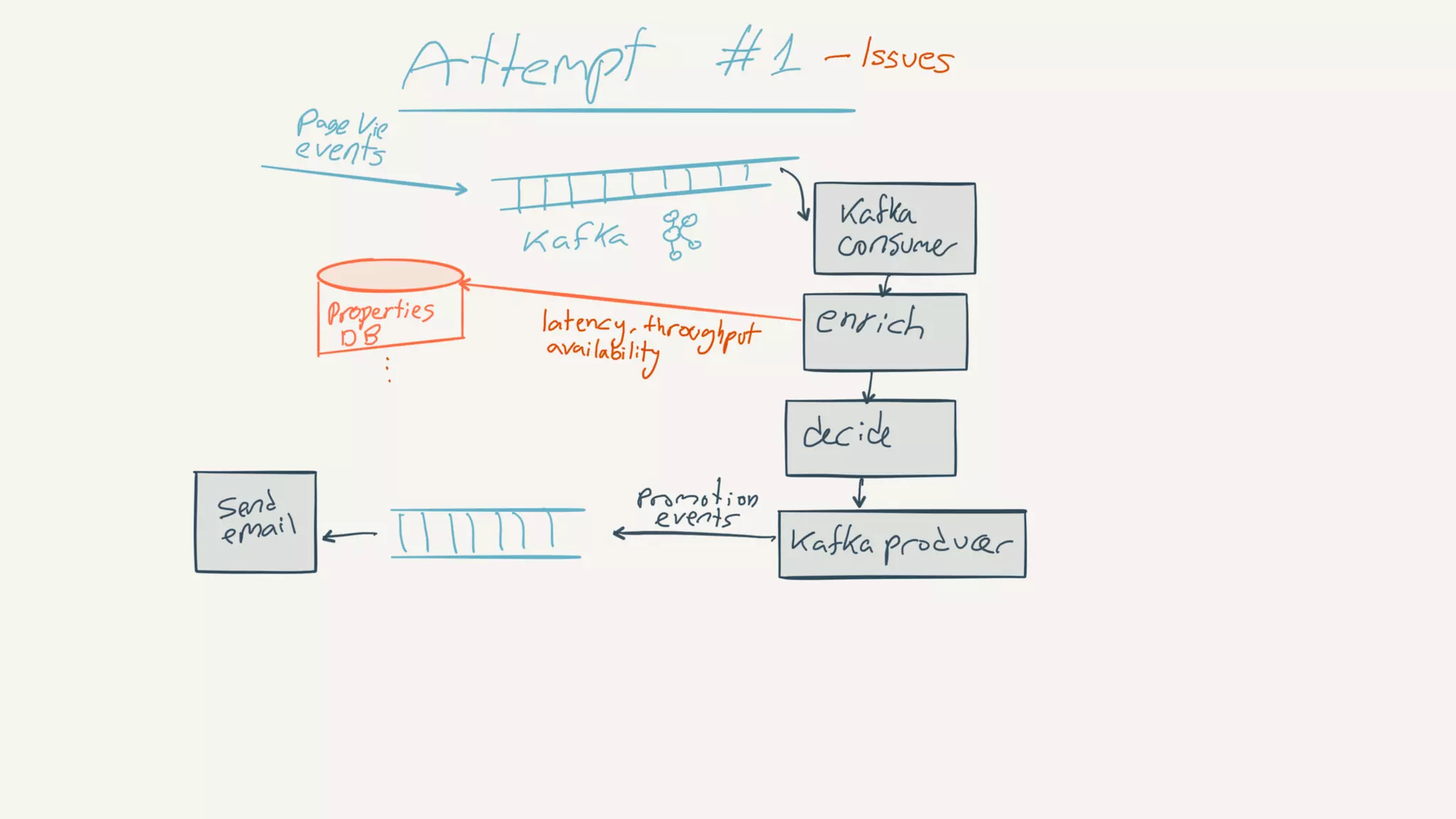

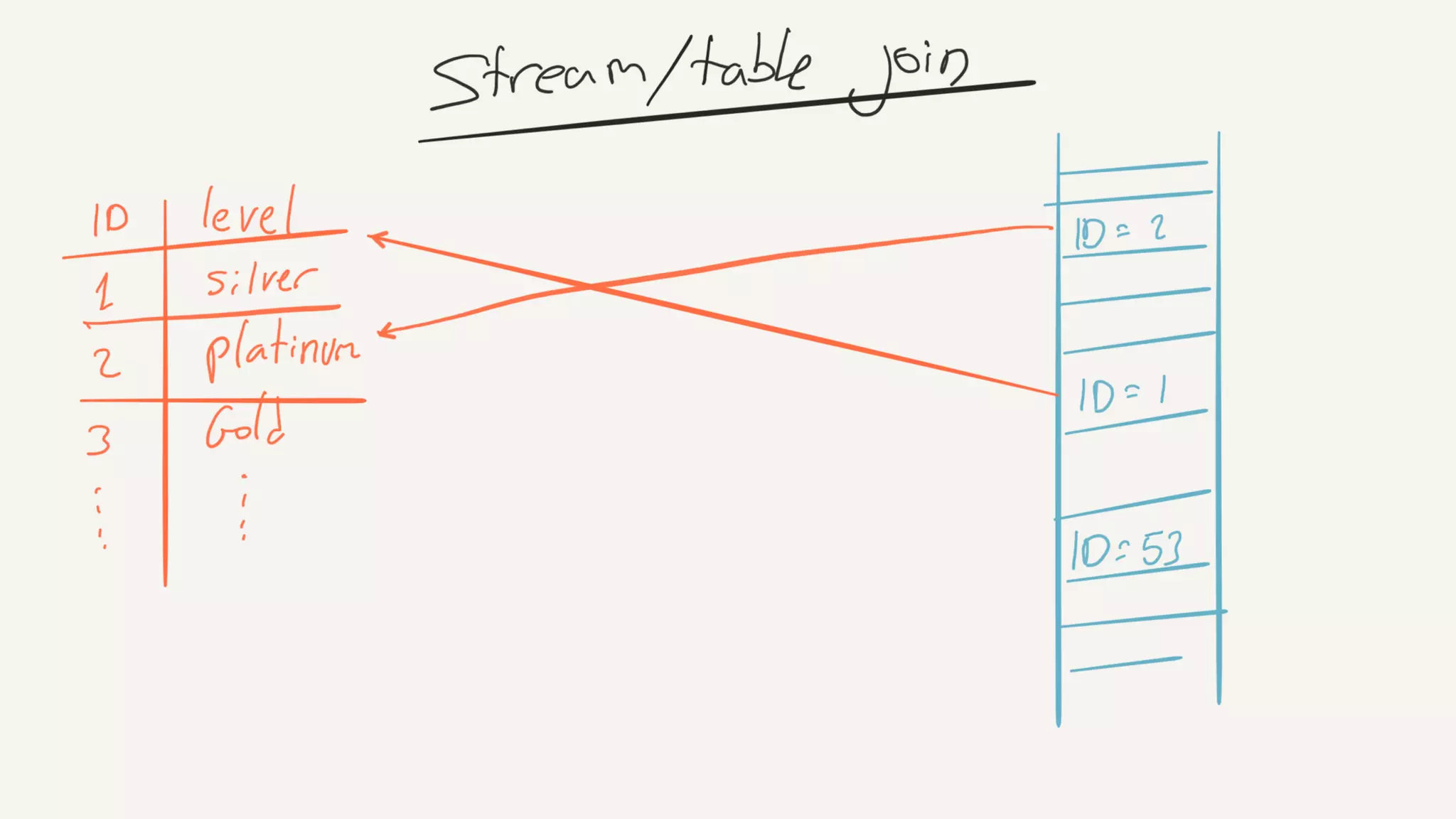

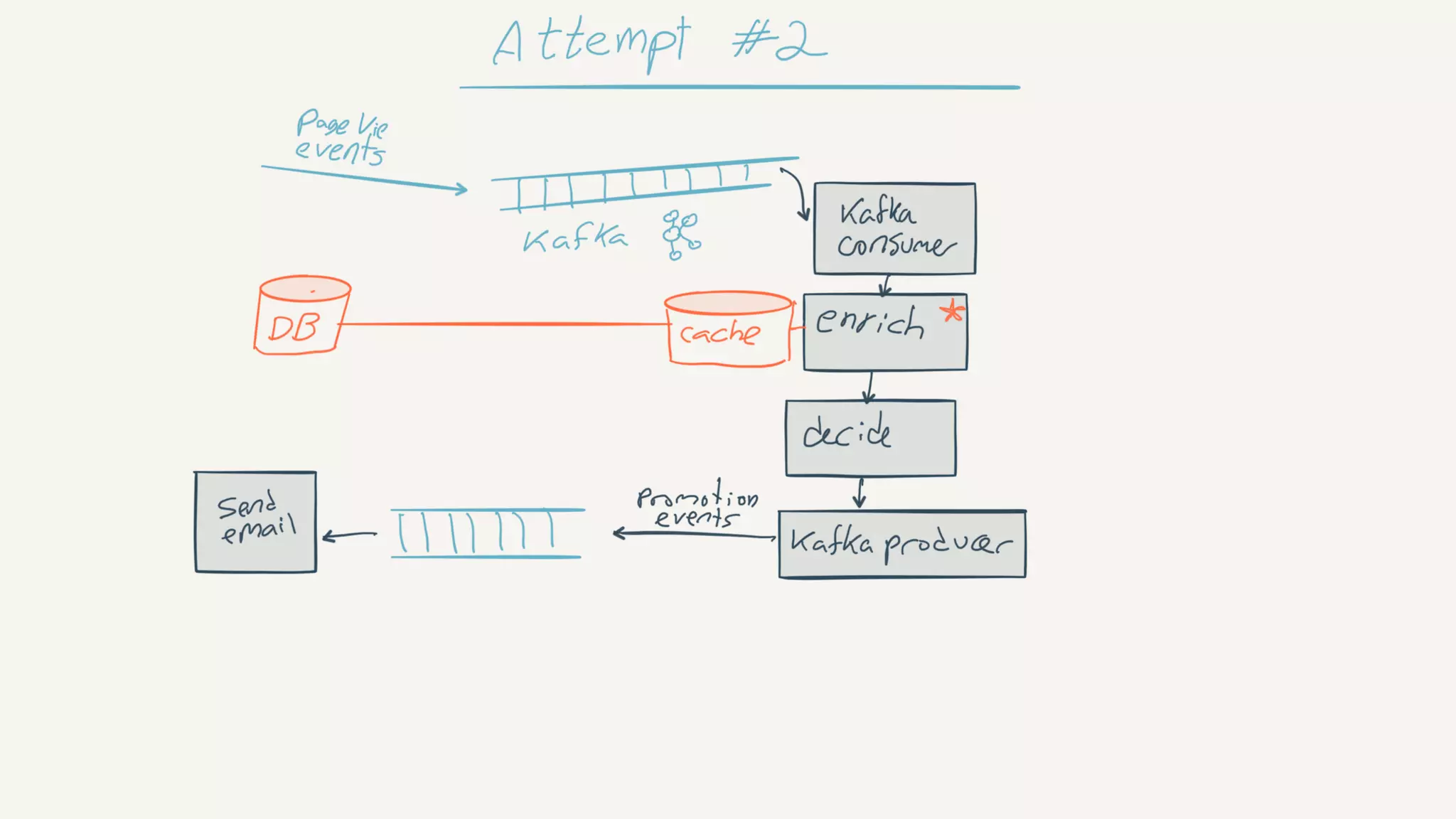

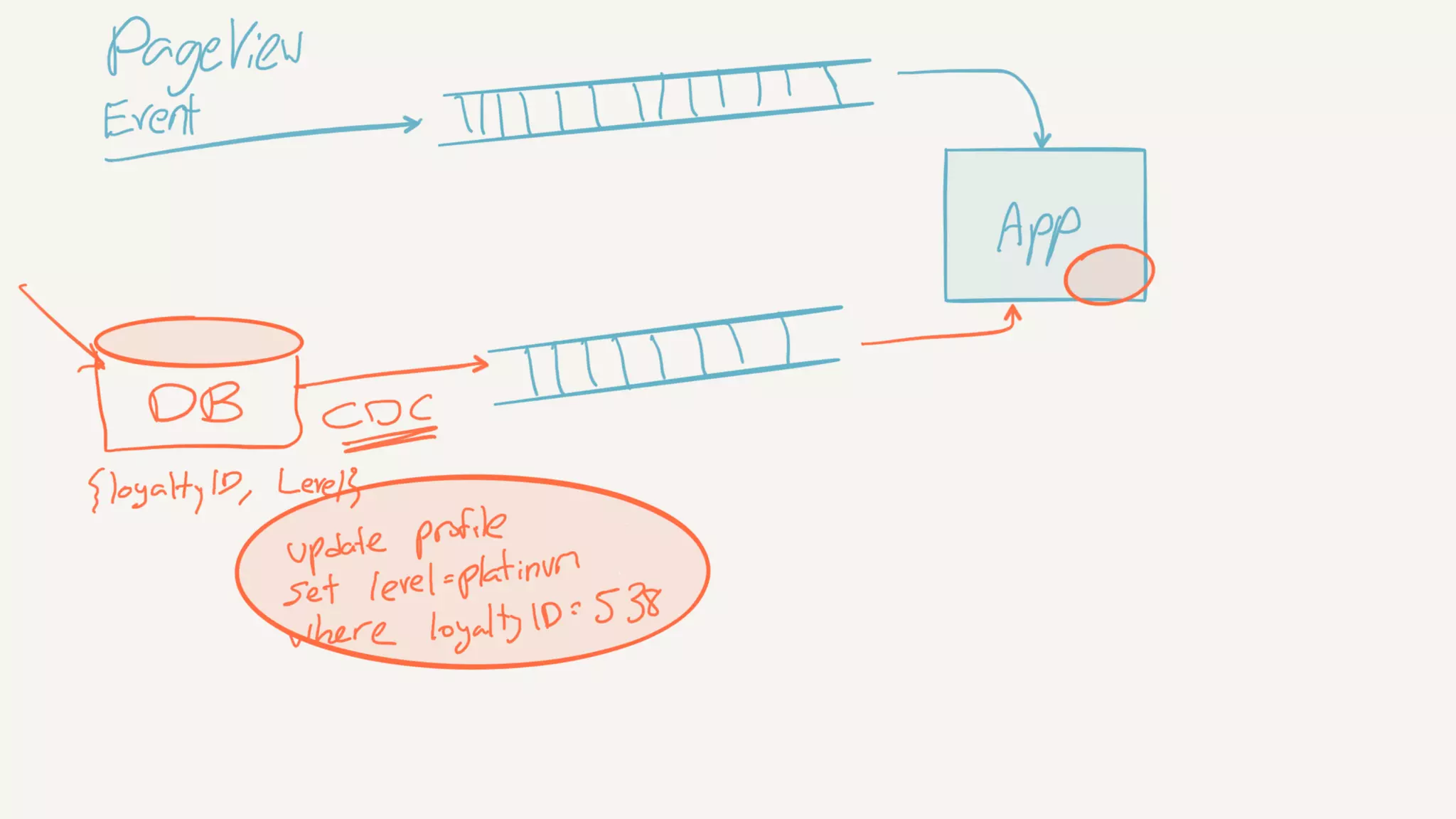

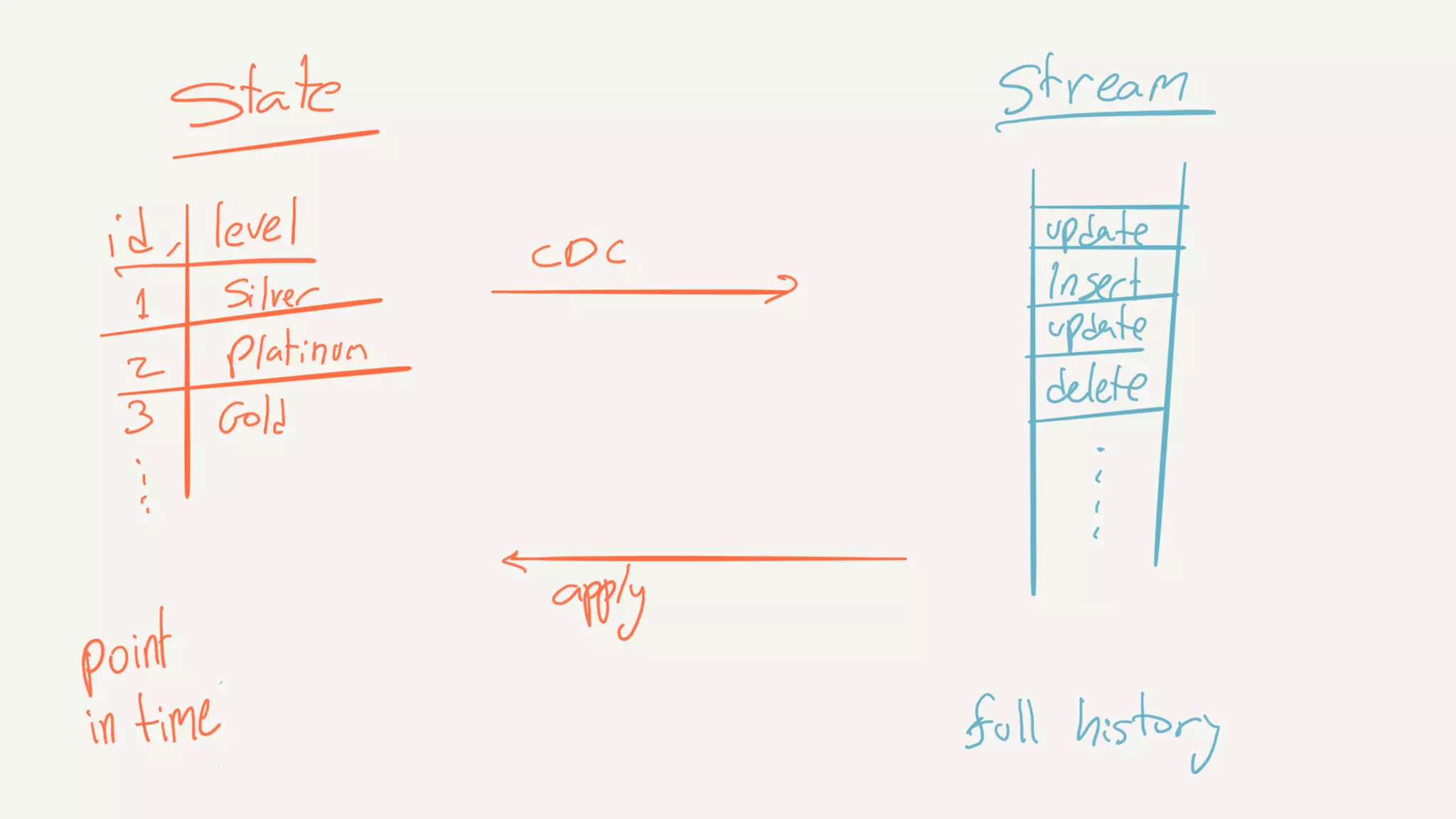

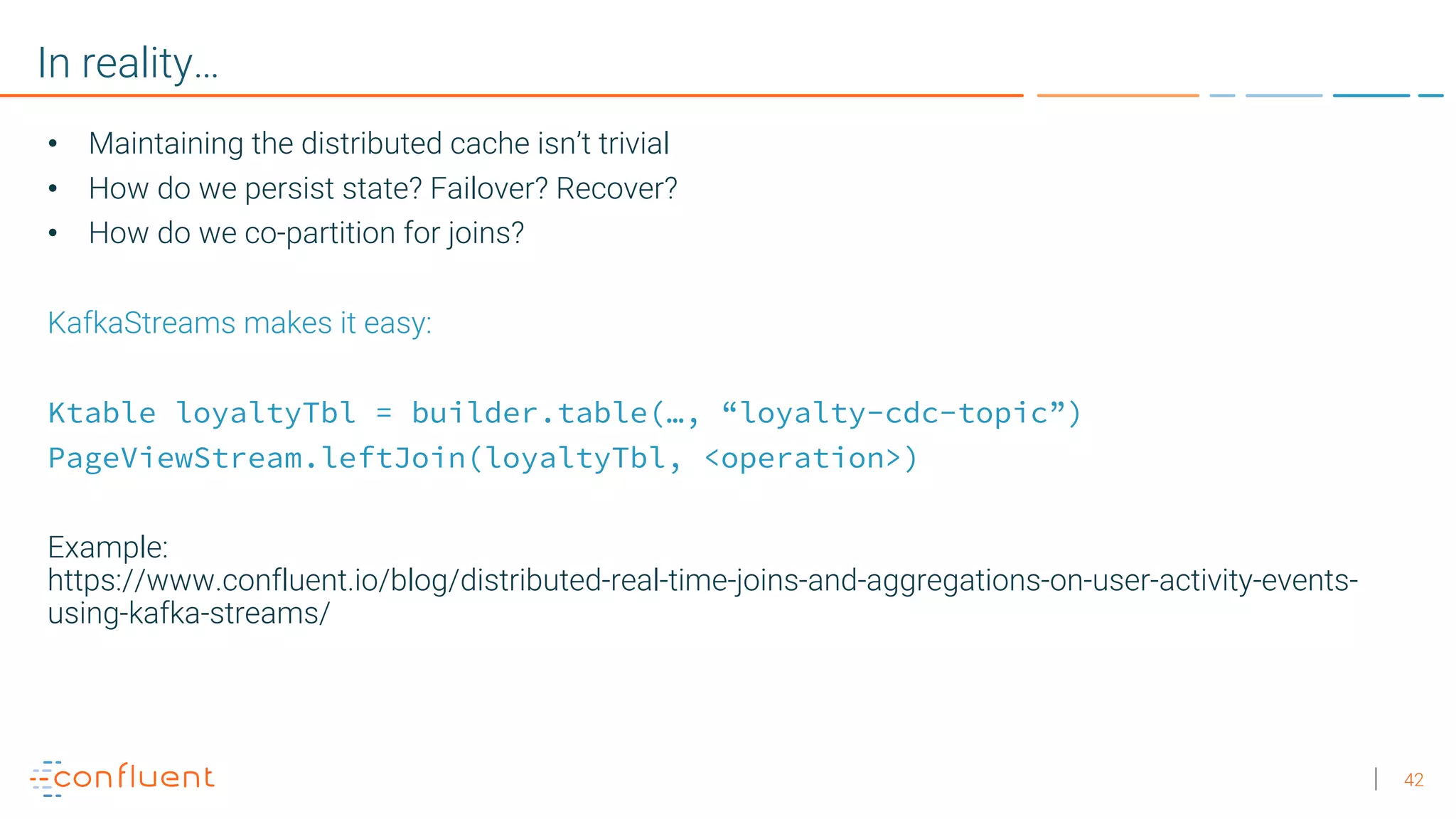

This document discusses patterns for modern data integration with streaming data. It traces an evolution from data warehouses to data lakes to streaming data, then describes four key patterns: 1) stream all things (data) in one place, 2) keep schemas compatible and process data on, 3) enable ridiculously parallel single message transformations, and 4) perform streaming data enrichment to add context to events. The examples use Apache Kafka and Kafka Connect to implement these patterns for a large hotel chain that integrates various data sources and runs real-time analytics on customer events.
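Patterns 3 and 4 can be illustrated without a running Kafka cluster. The sketch below is a plain-Python approximation, not the deck's implementation: the event fields (`customer_id`, `email`, `hotel_id`), the `HOTELS` lookup table, and all function names are hypothetical. It shows a transformation that depends only on the single event it receives (which is what makes it trivially parallel across partitions) followed by an enrichment step that joins each event against reference data.

```python
from typing import Dict, Iterable, Iterator, Optional

Event = Dict[str, object]


def mask_email(event: Event) -> Event:
    """Single message transformation: uses no state beyond the one event."""
    out = dict(event)  # copy so the input event is not mutated
    if "email" in out:
        out["email"] = "***"
    return out


def enrich_with_hotel(event: Event, hotels: Dict[str, Event]) -> Event:
    """Enrichment: add context from a lookup table keyed by hotel_id."""
    out = dict(event)
    hotel: Optional[Event] = hotels.get(str(event.get("hotel_id")))
    out["hotel_city"] = hotel["city"] if hotel else None
    return out


def process(events: Iterable[Event], hotels: Dict[str, Event]) -> Iterator[Event]:
    """Apply the transformation, then the enrichment, one event at a time."""
    for event in events:
        yield enrich_with_hotel(mask_email(event), hotels)


# Hypothetical reference data and customer events for a hotel chain.
HOTELS: Dict[str, Event] = {"h1": {"city": "Berlin"}}
EVENTS = [
    {"customer_id": 7, "email": "a@example.com", "hotel_id": "h1", "type": "check_in"},
    {"customer_id": 8, "hotel_id": "h9", "type": "booking"},
]

RESULT = list(process(EVENTS, HOTELS))
```

In a Kafka deployment, the first step would typically be configured as a single message transform on a Kafka Connect connector, and the second as a join between an event stream and a table of reference data; the in-memory dictionary here only stands in for that table.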