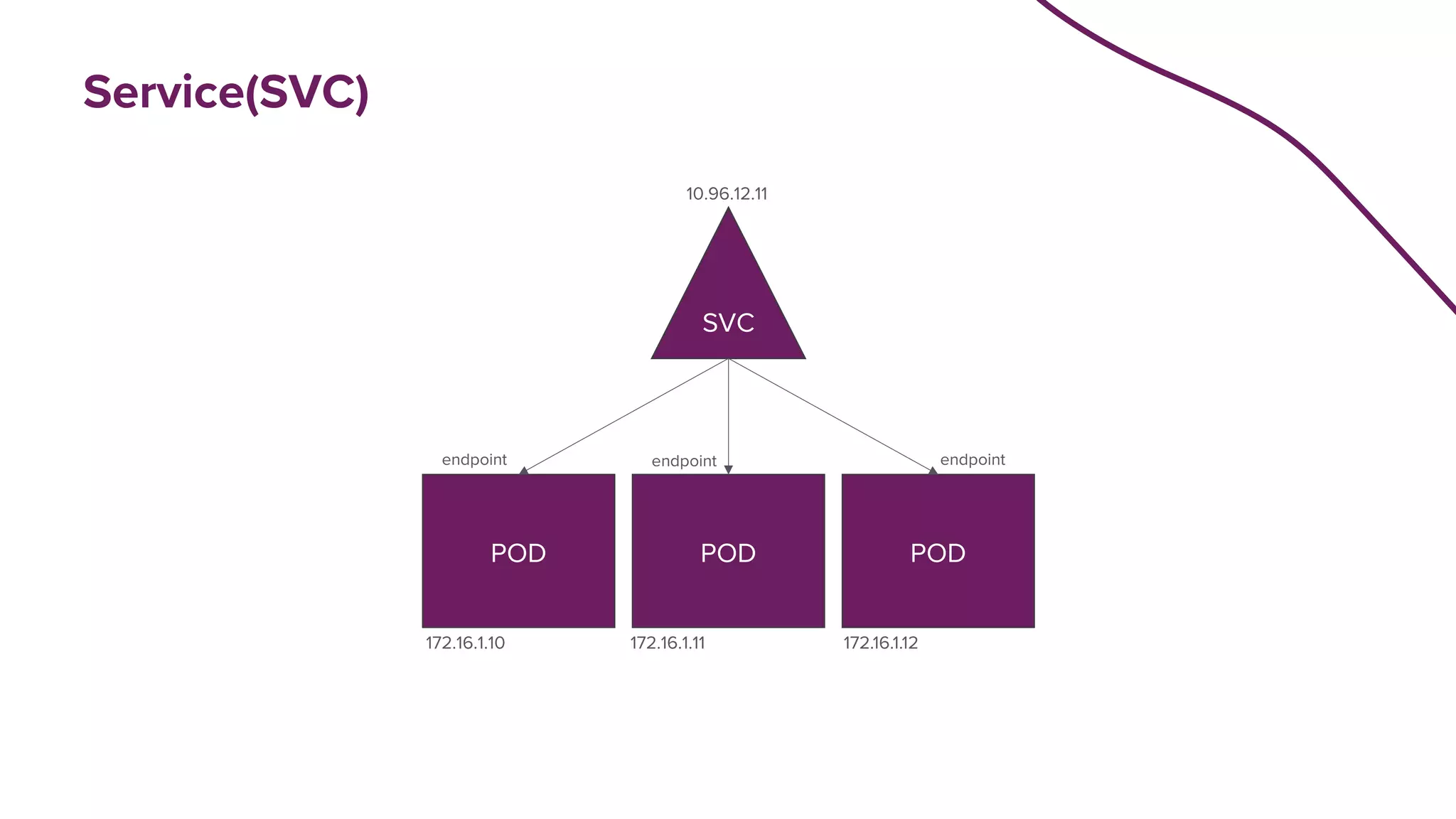

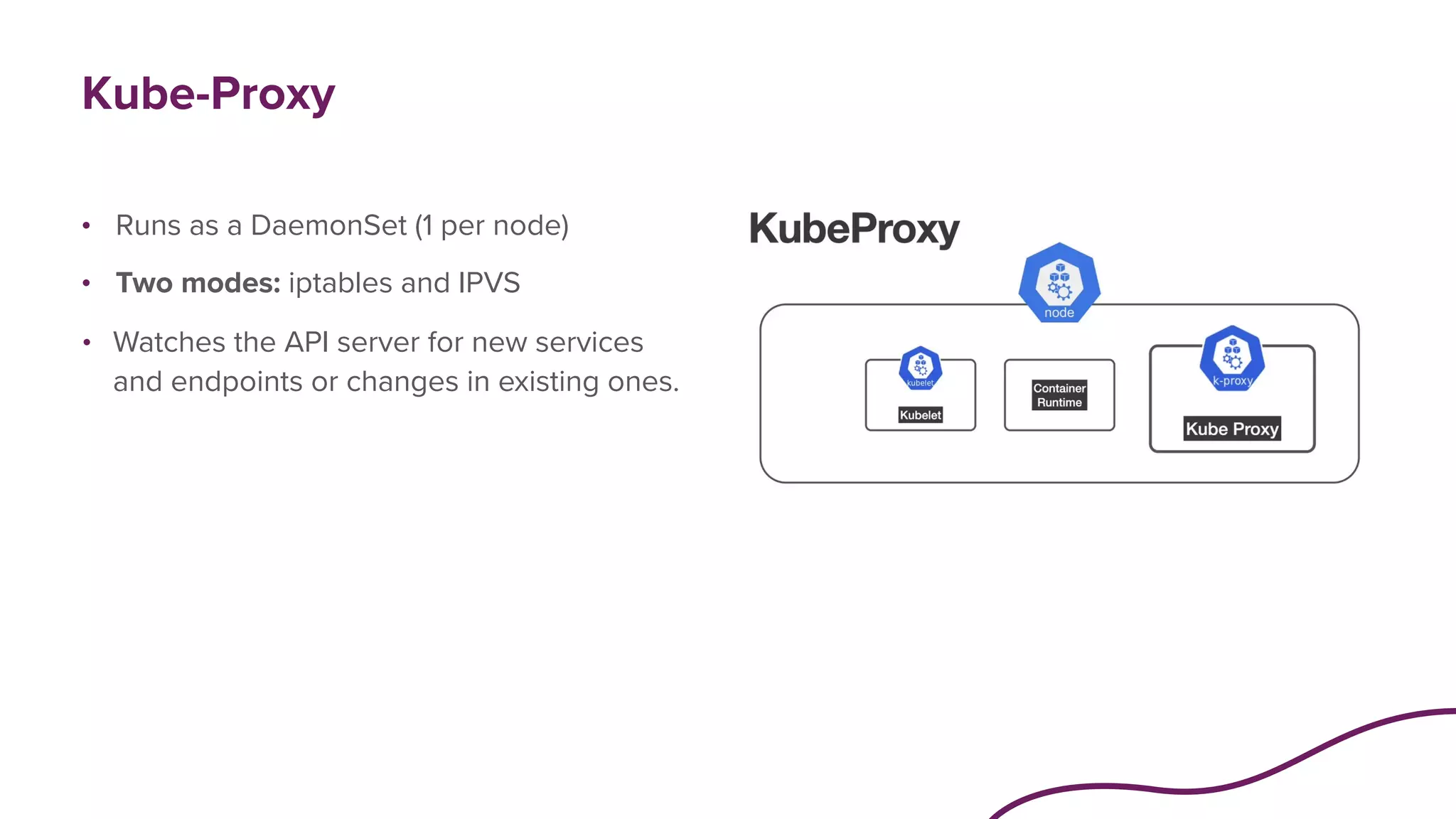

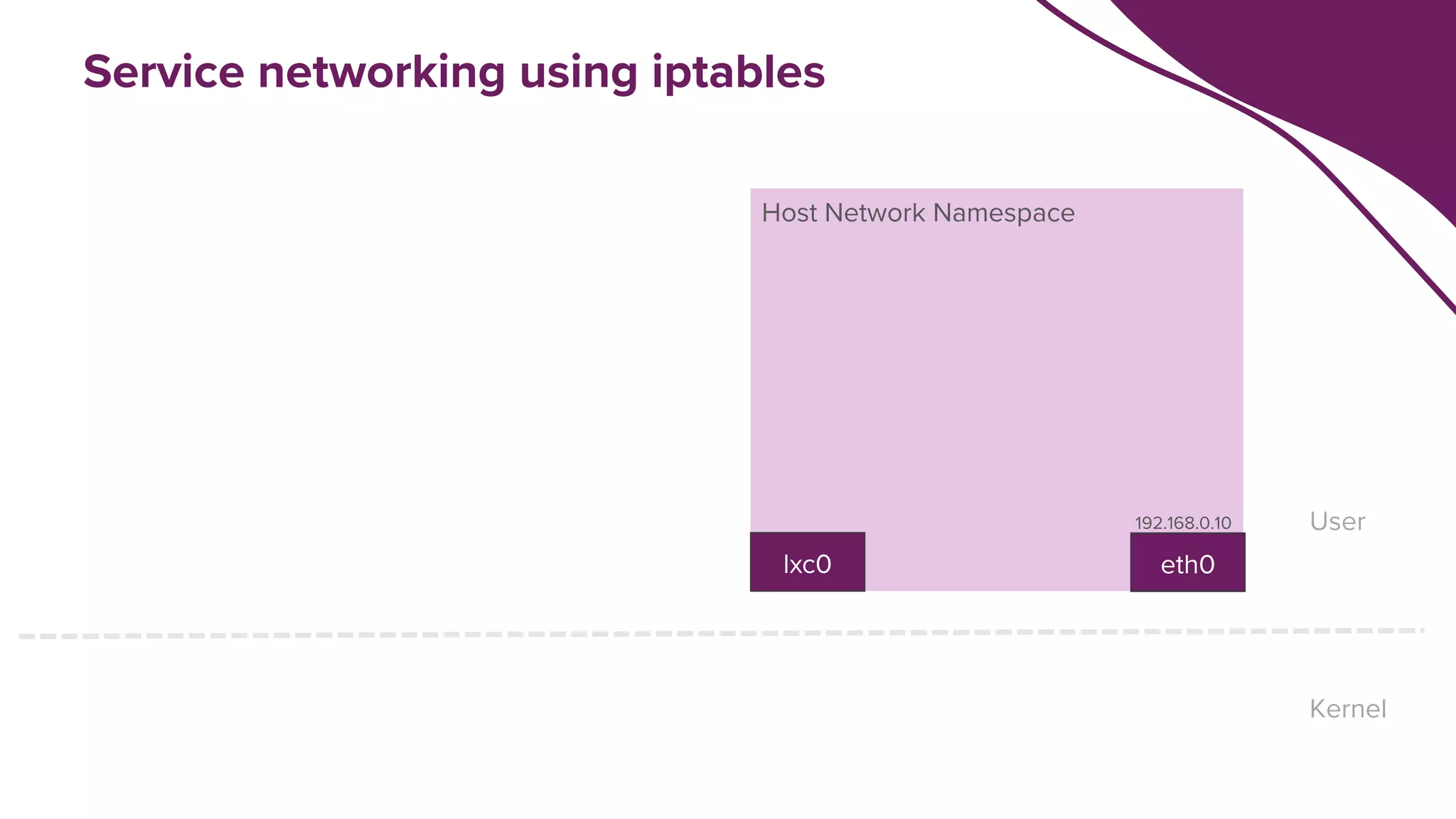

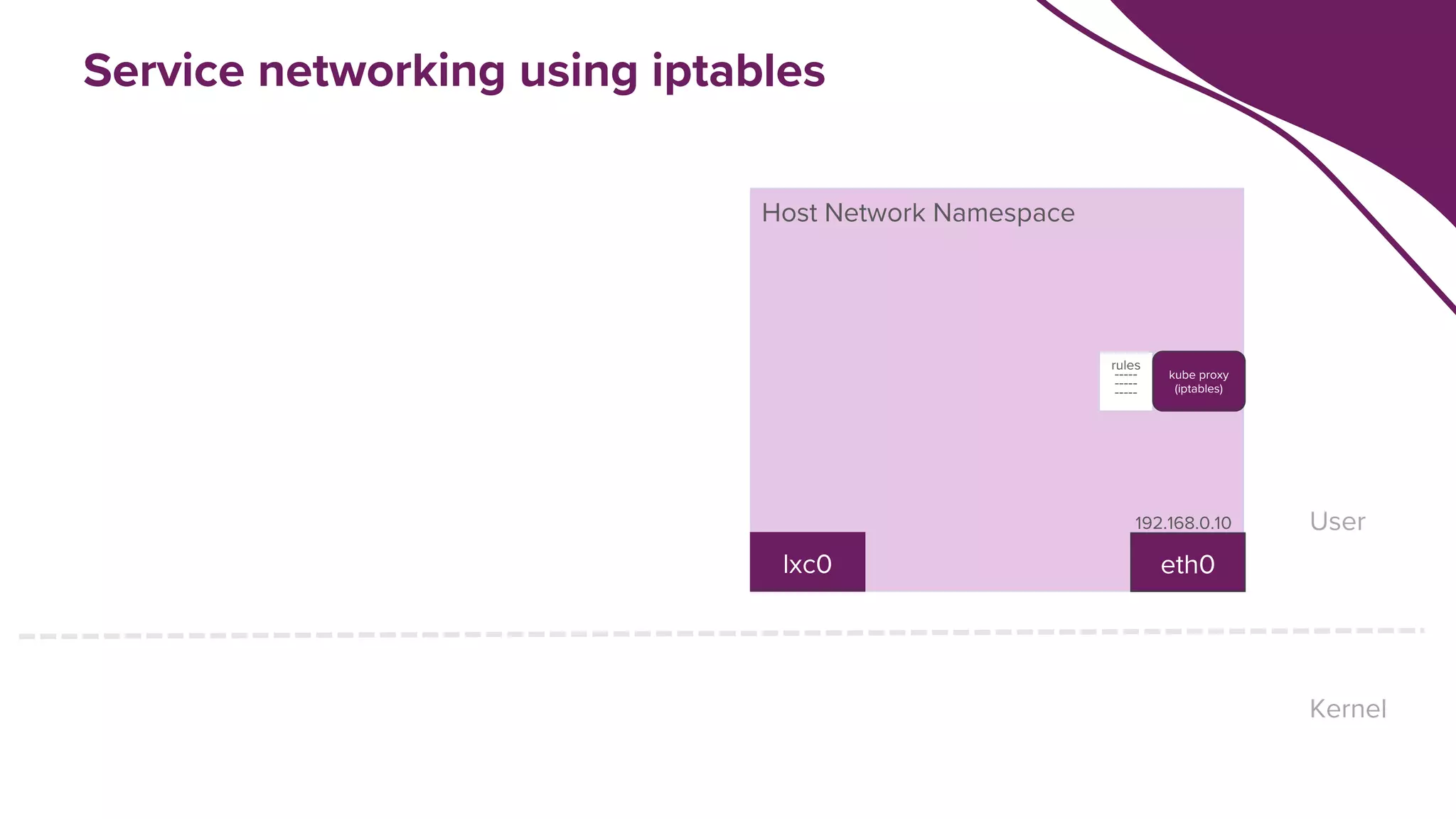

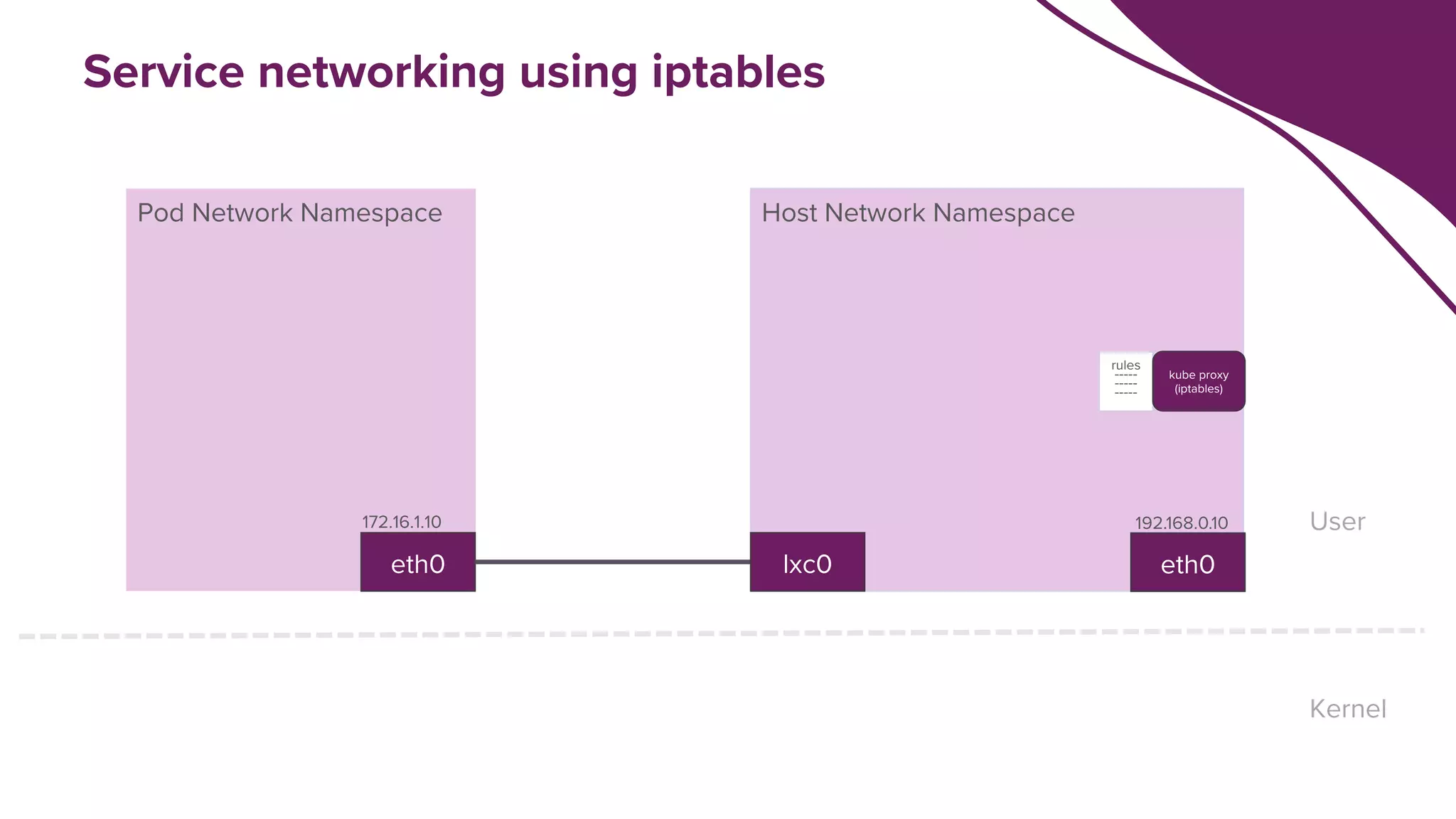

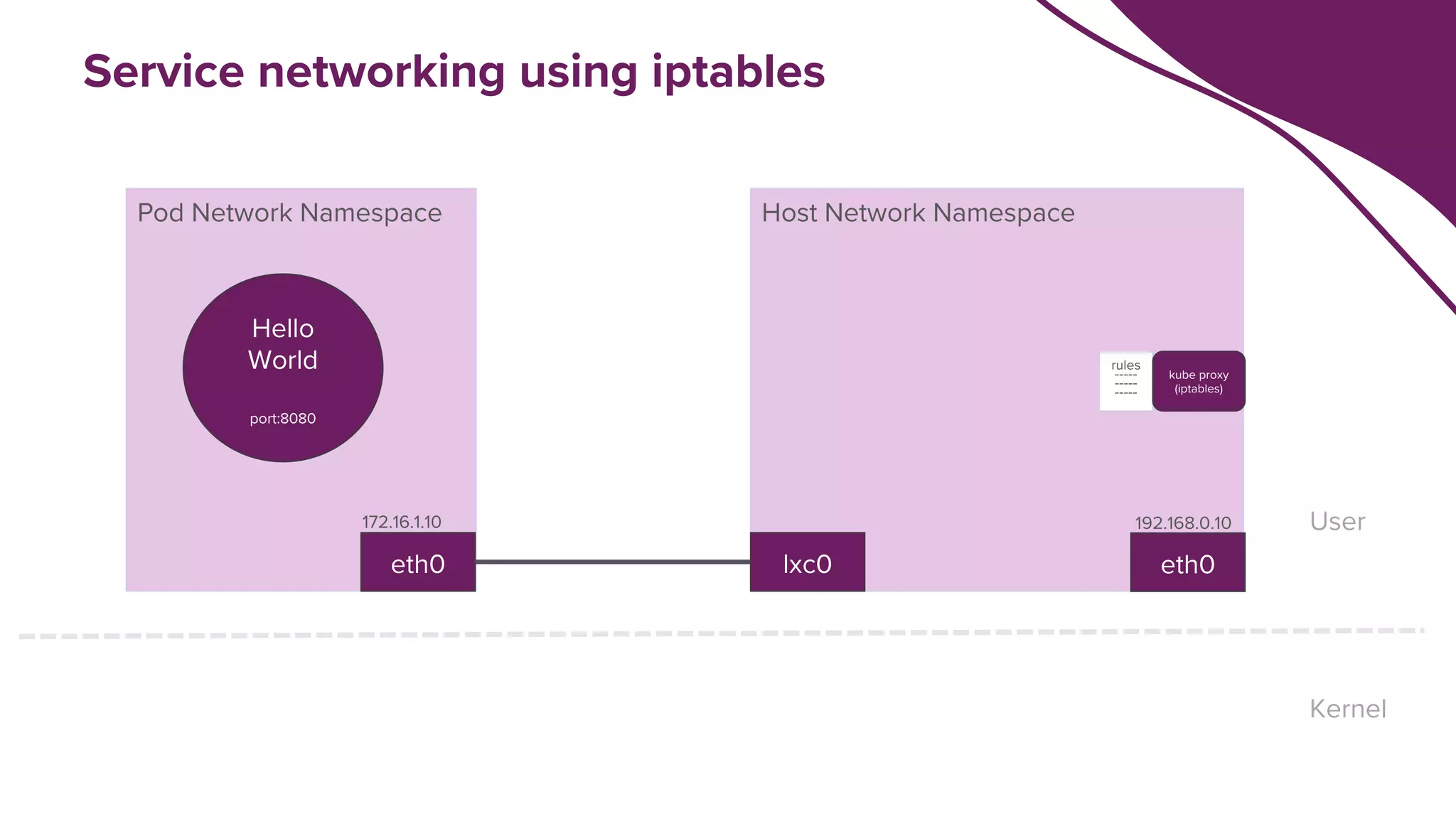

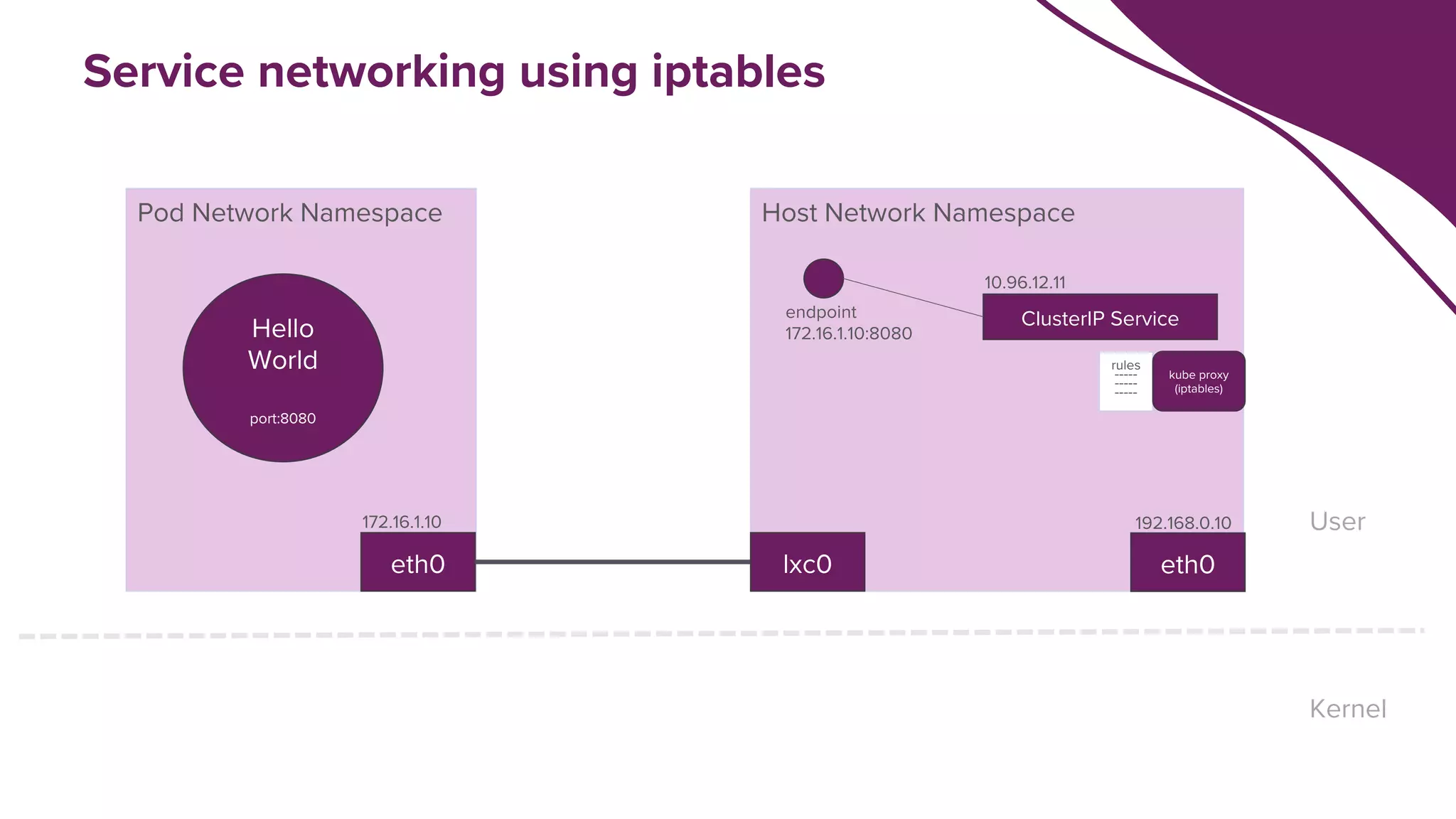

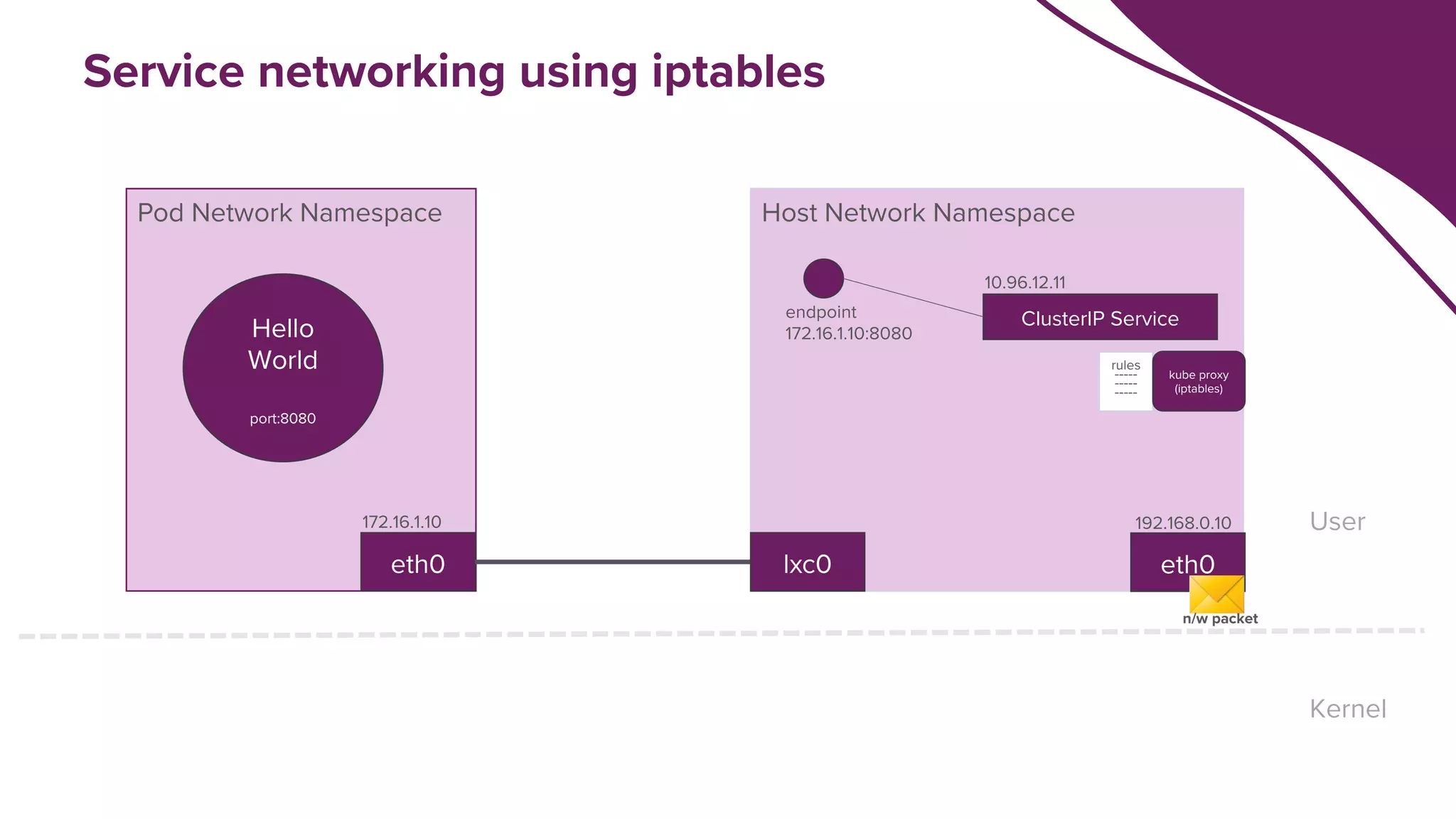

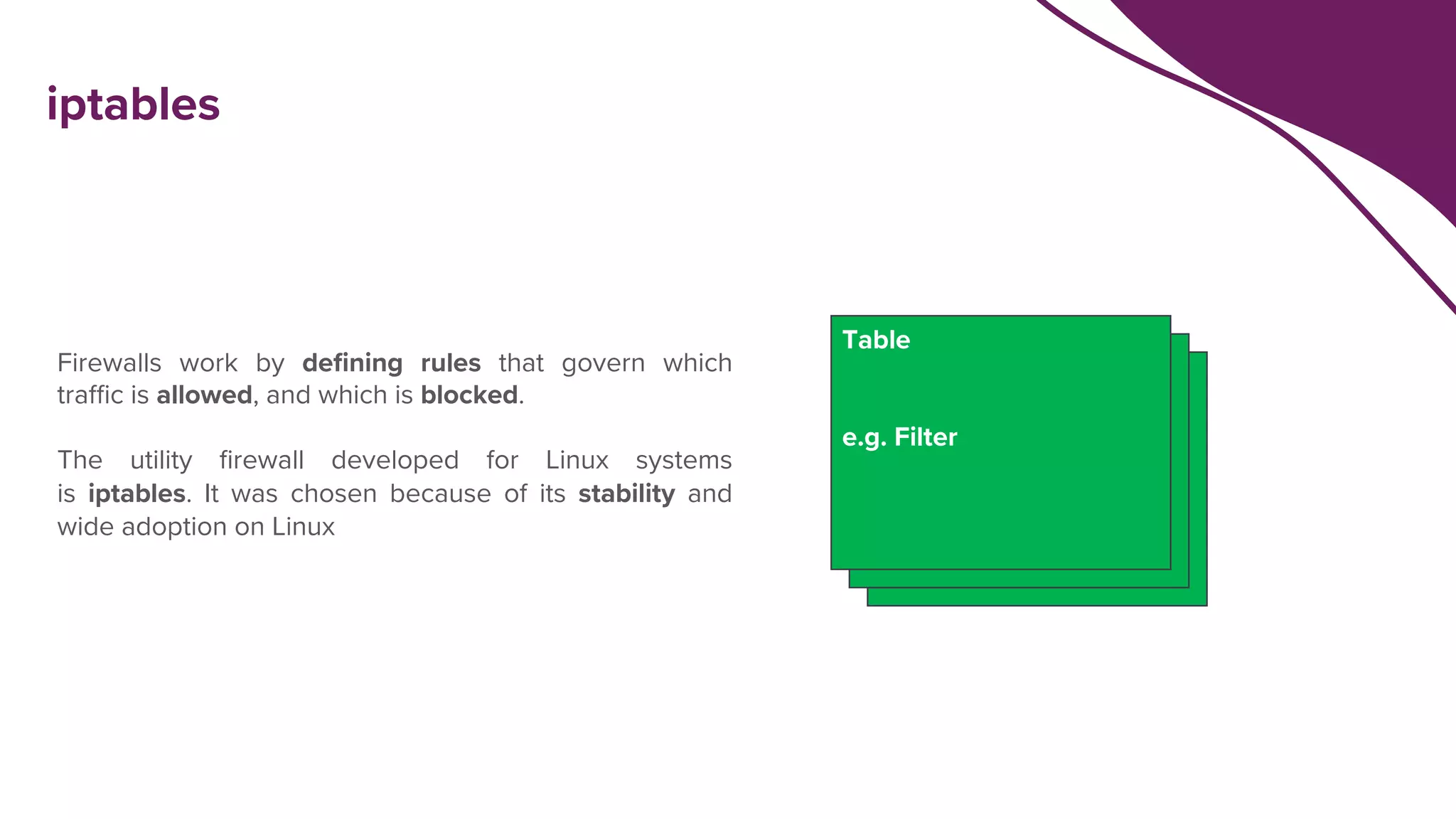

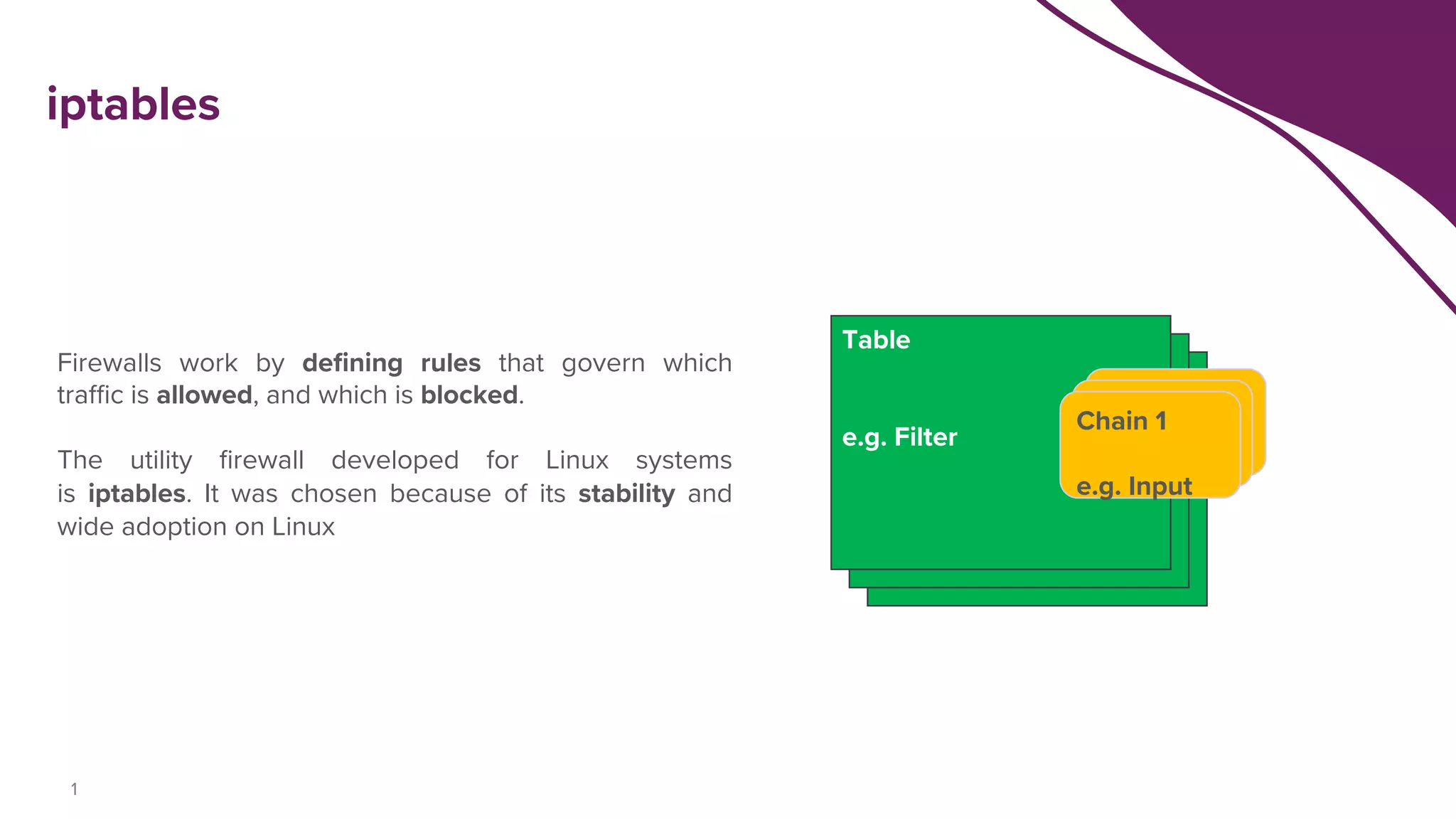

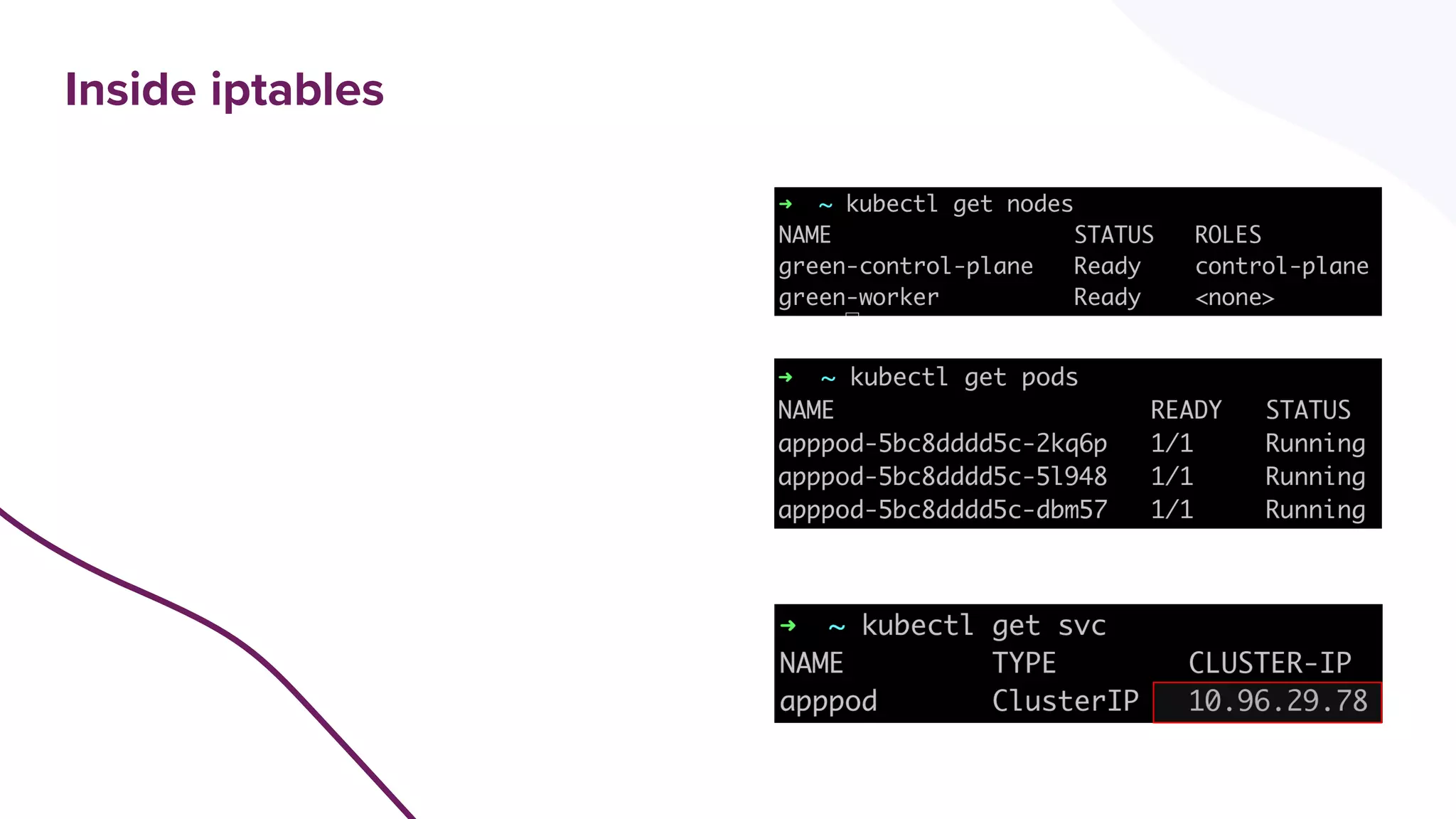

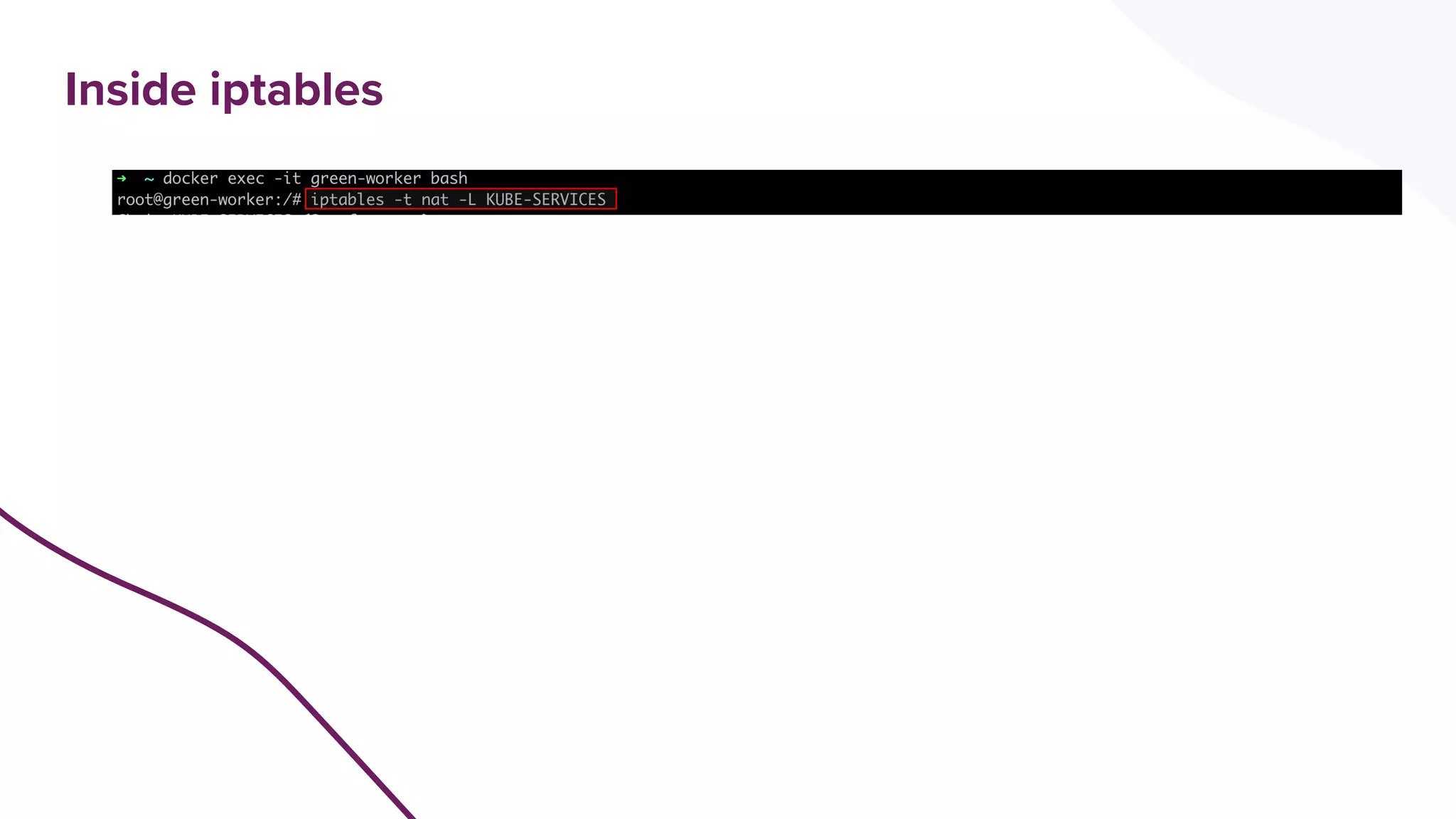

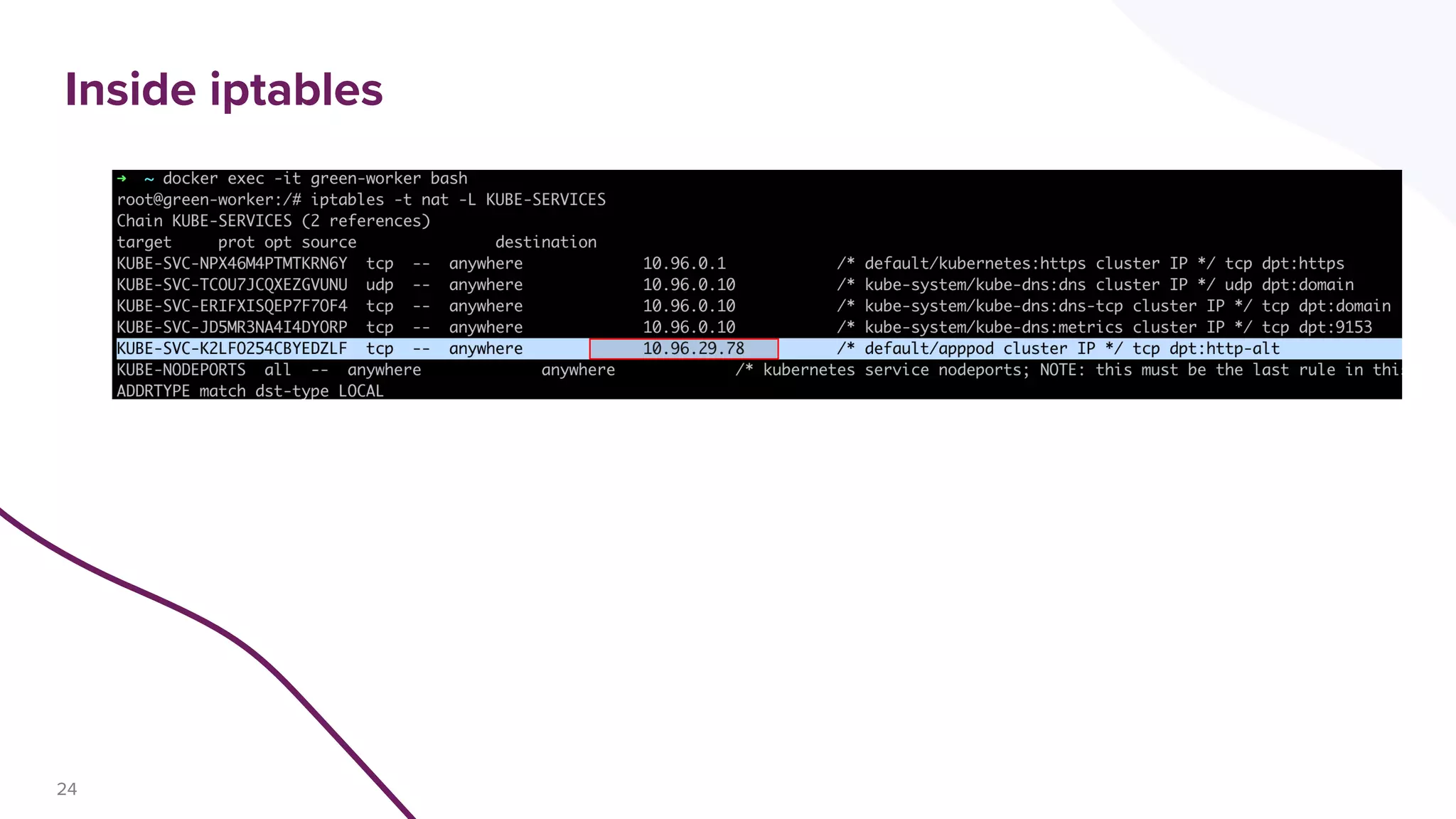

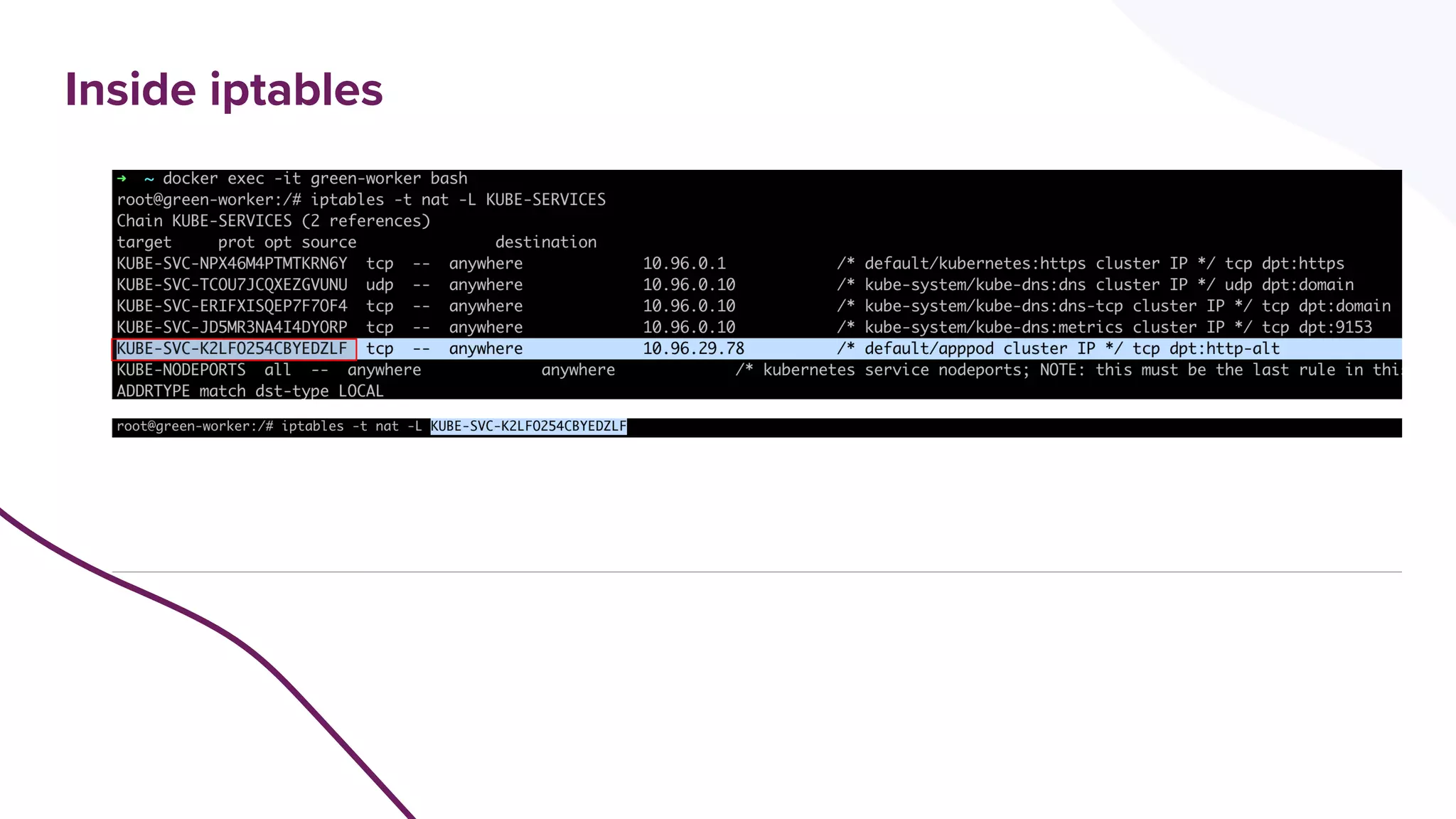

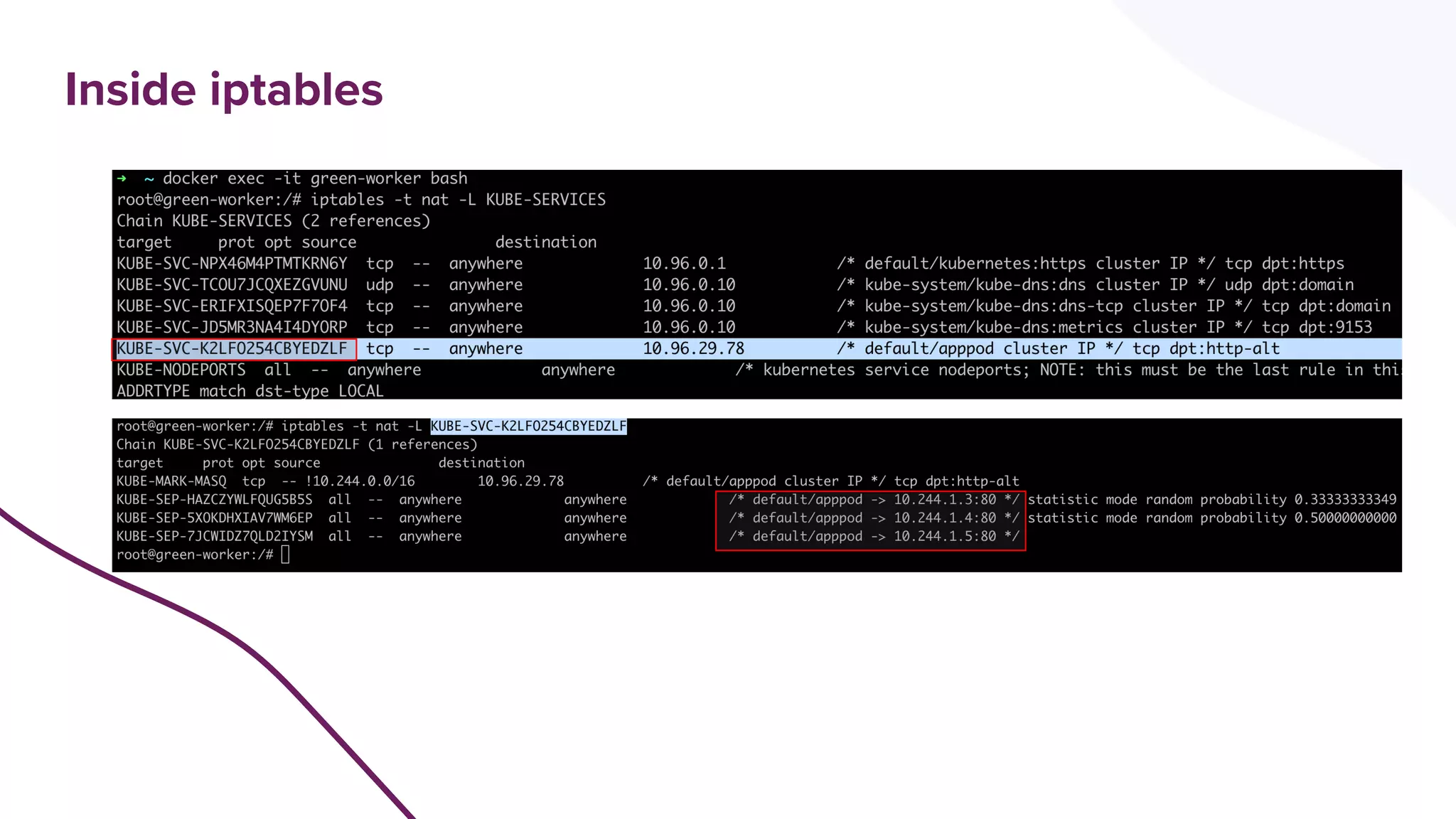

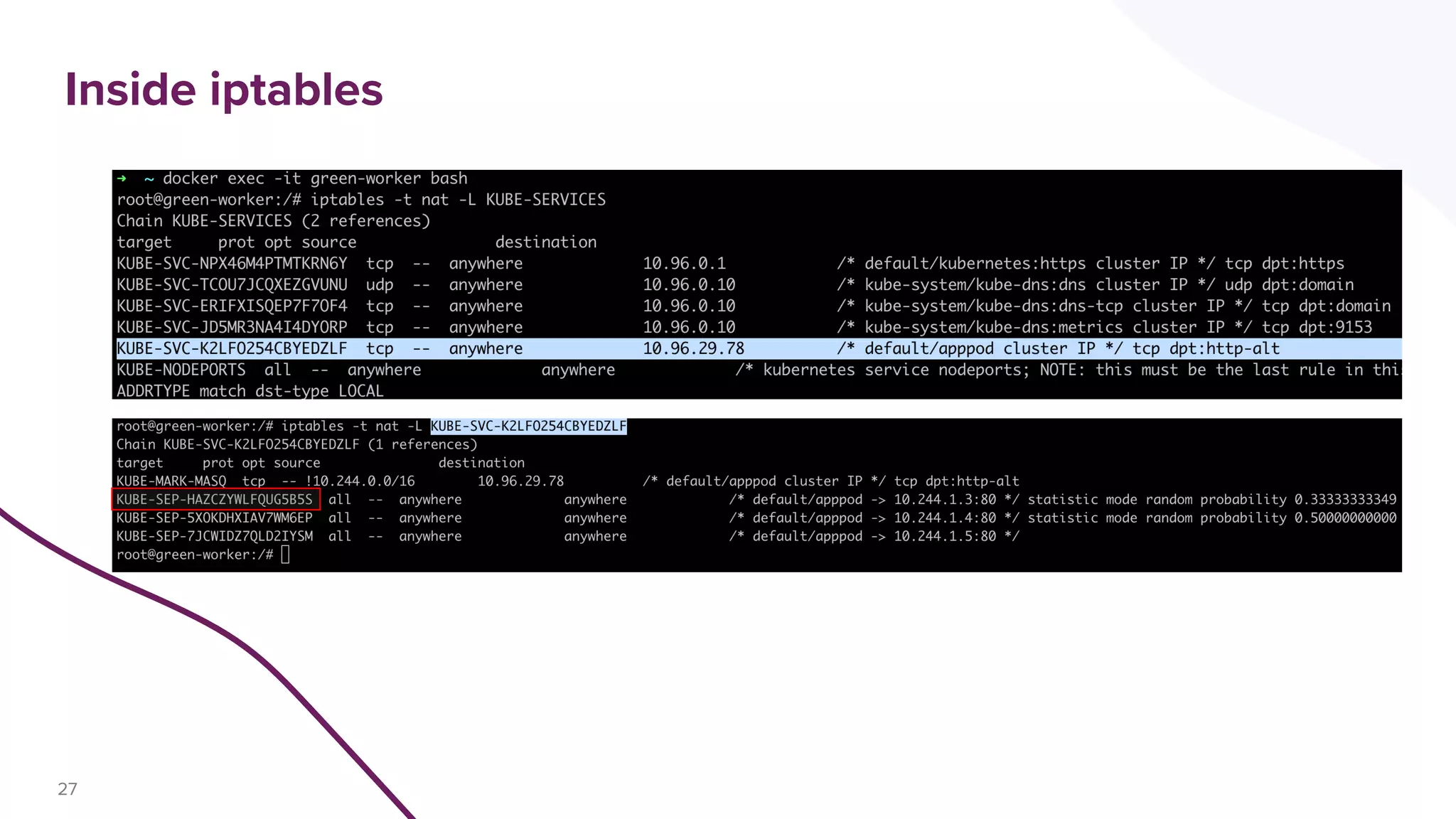

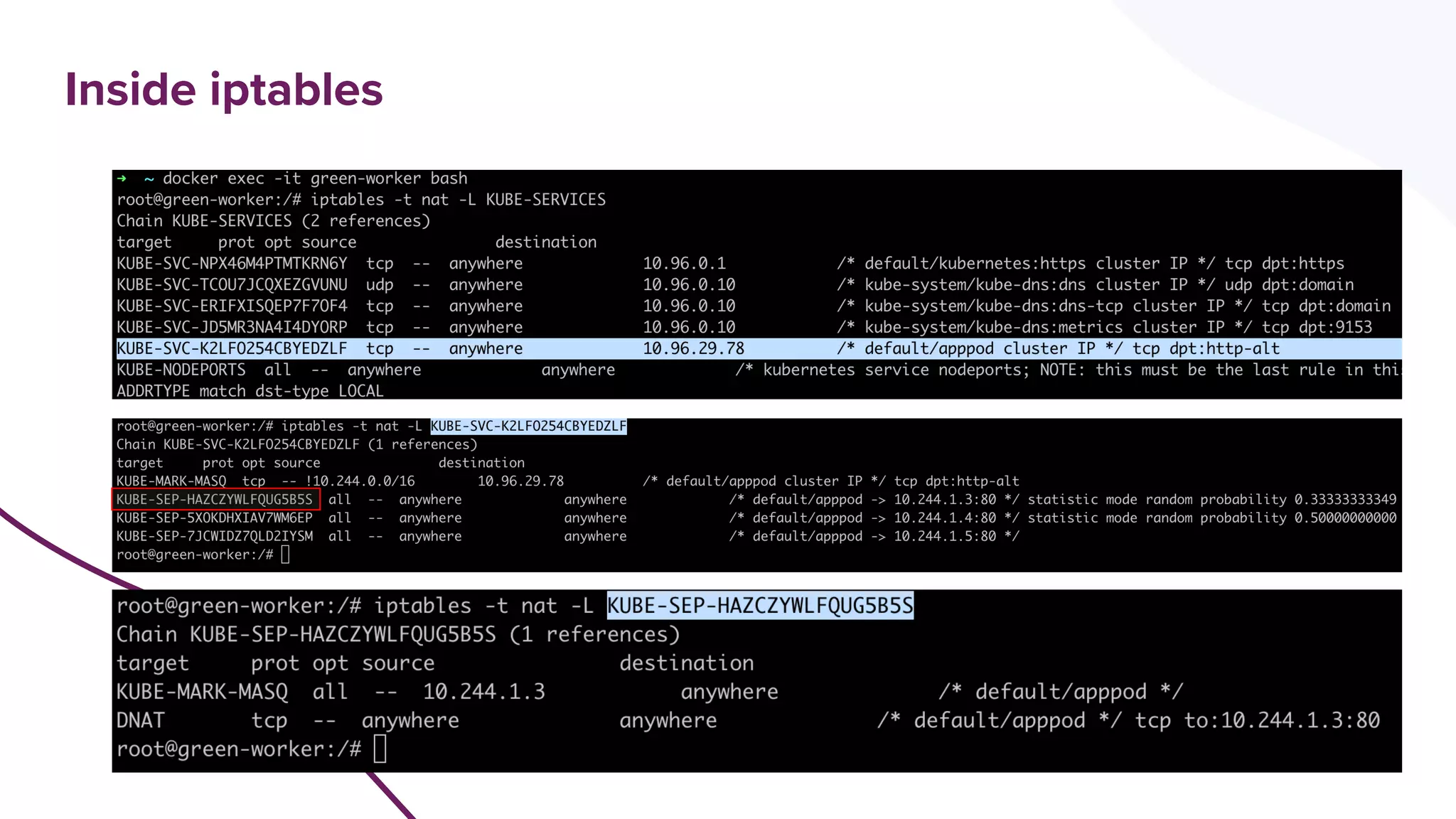

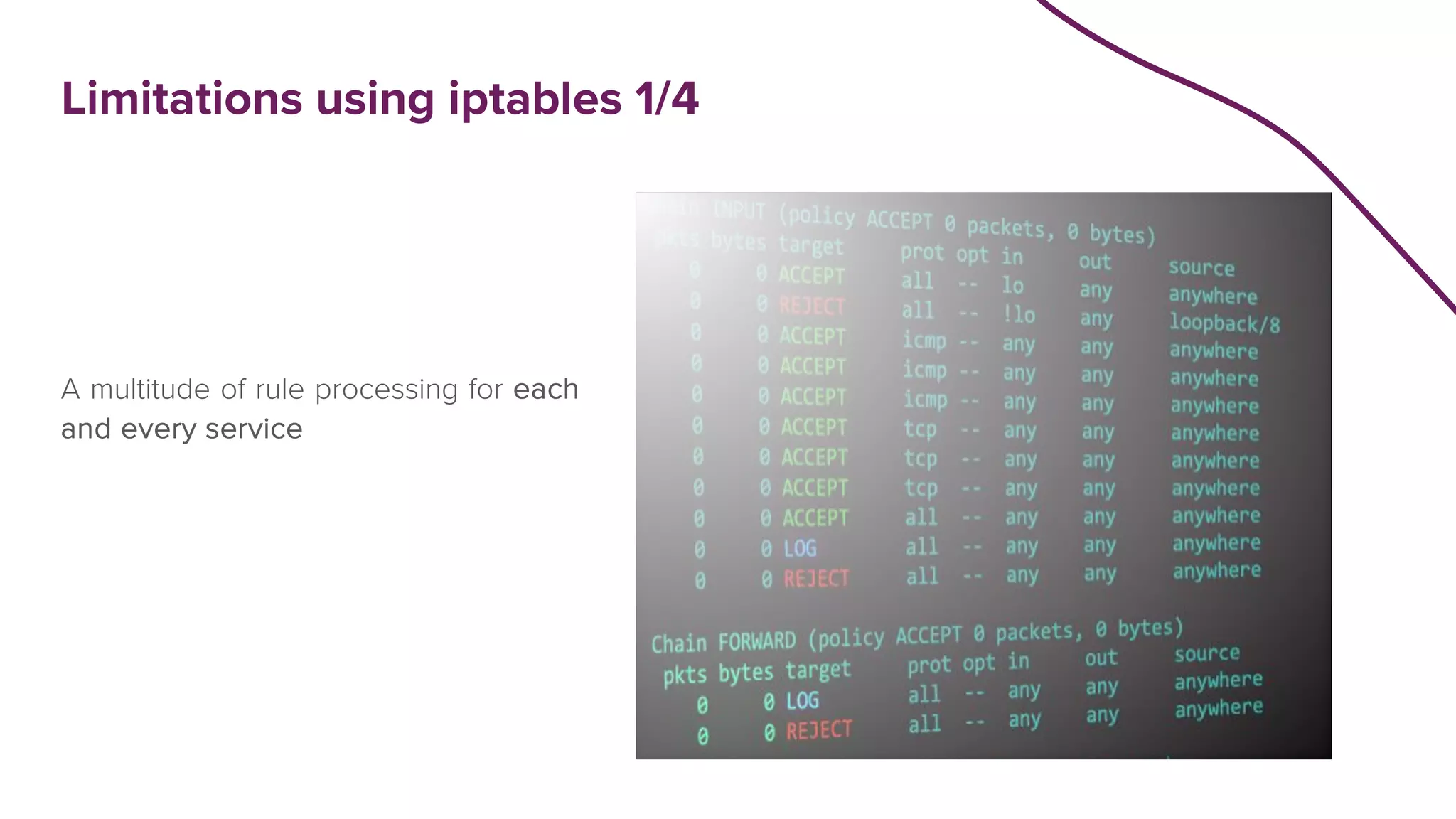

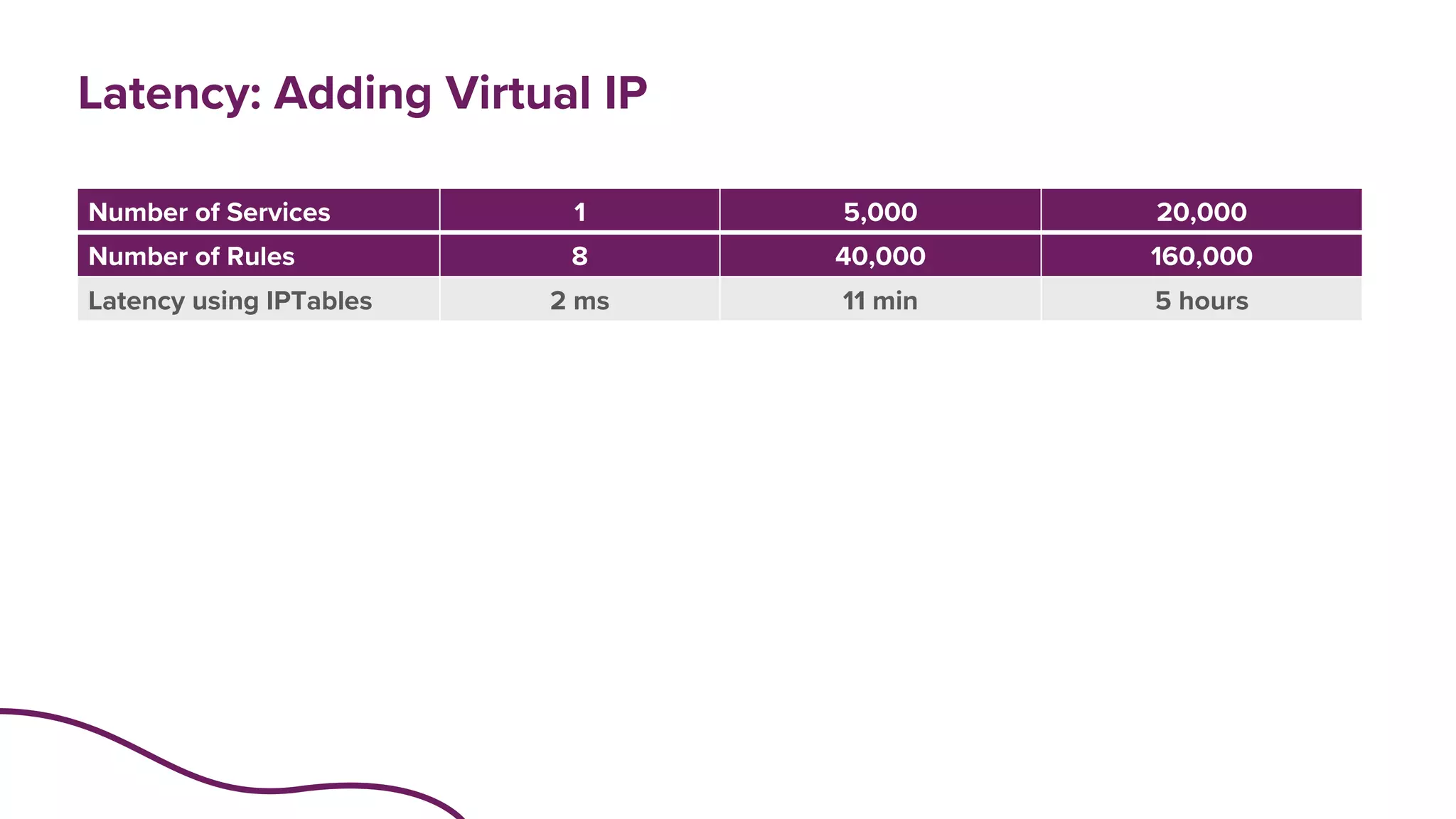

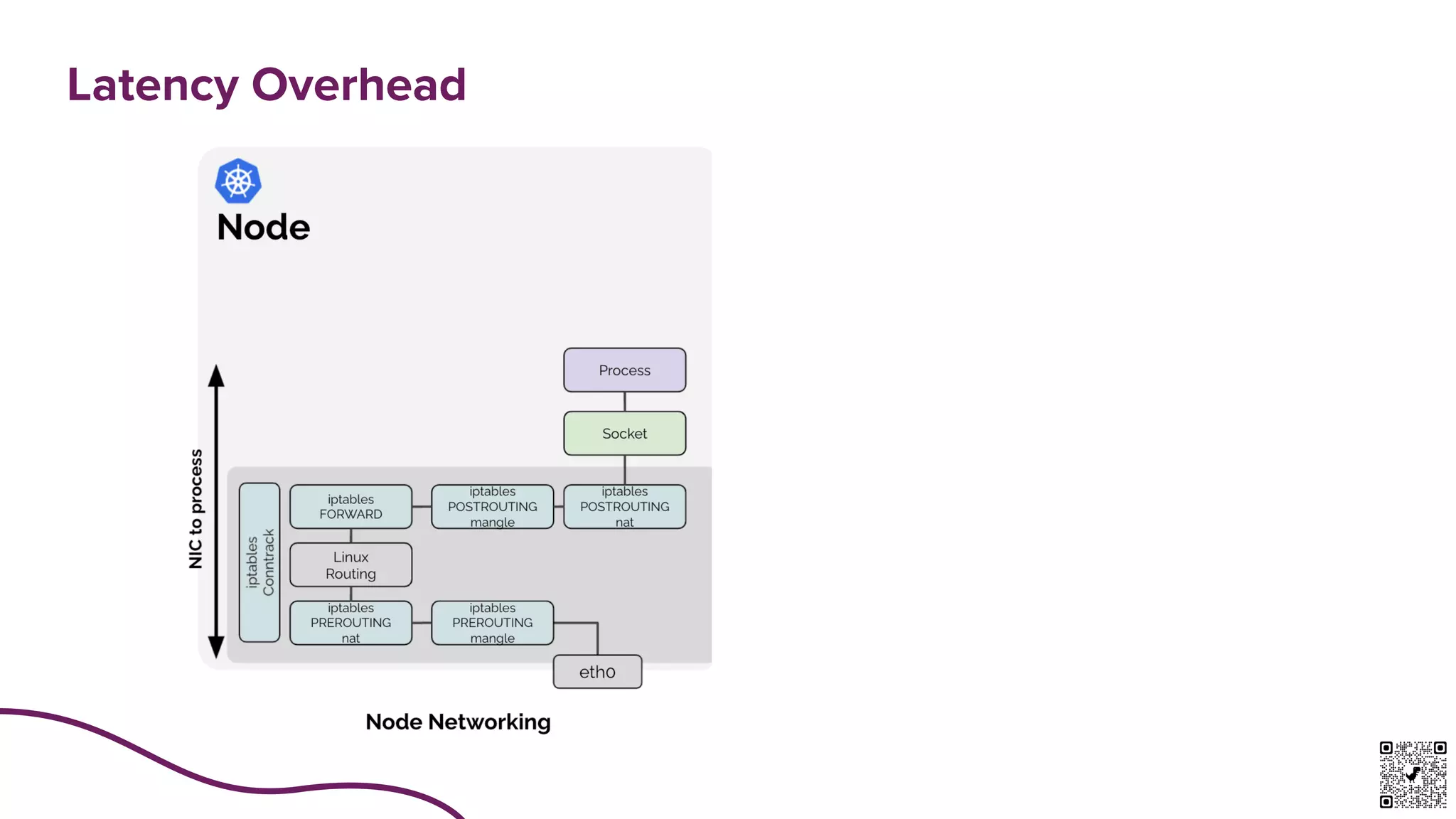

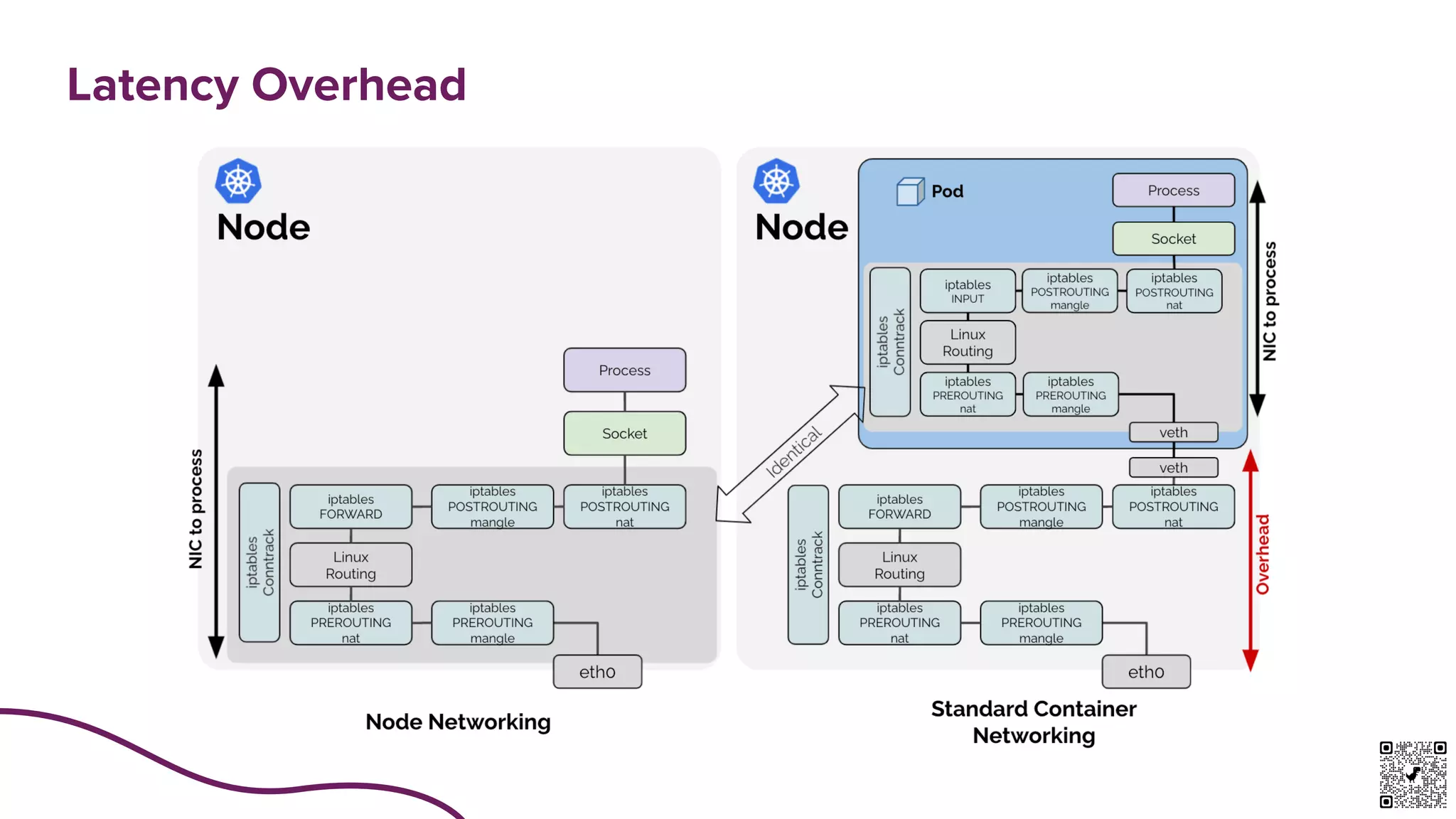

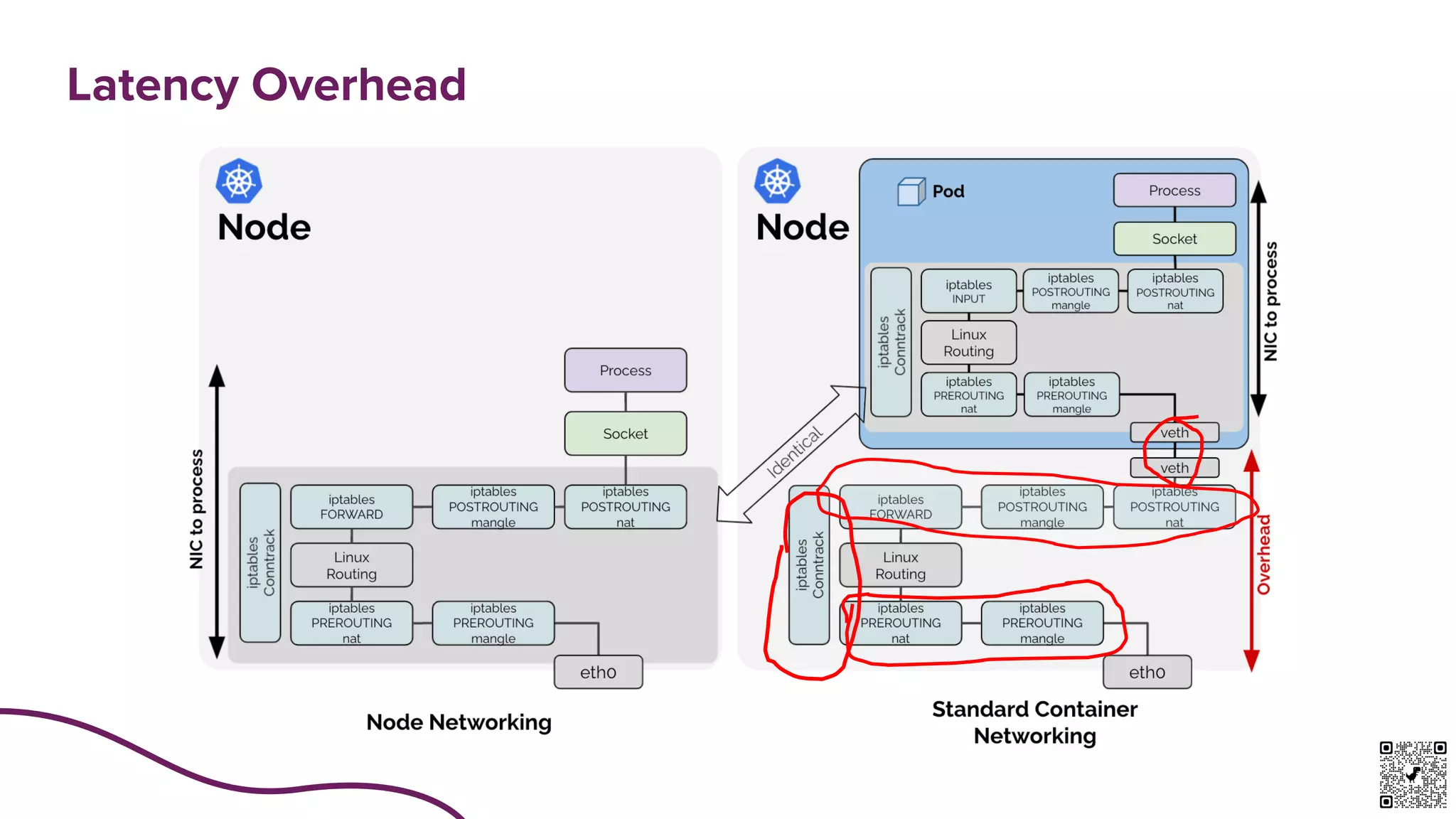

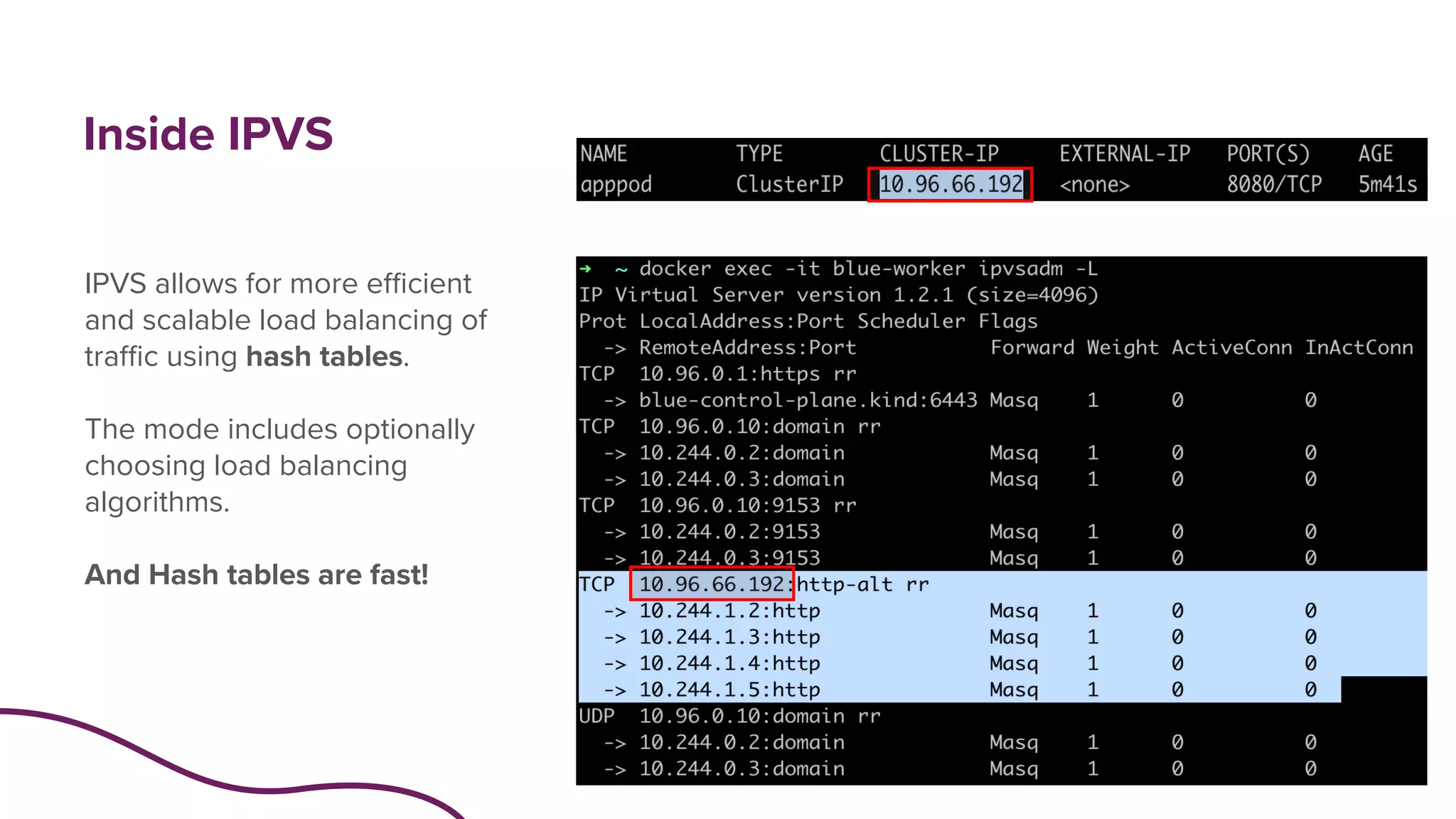

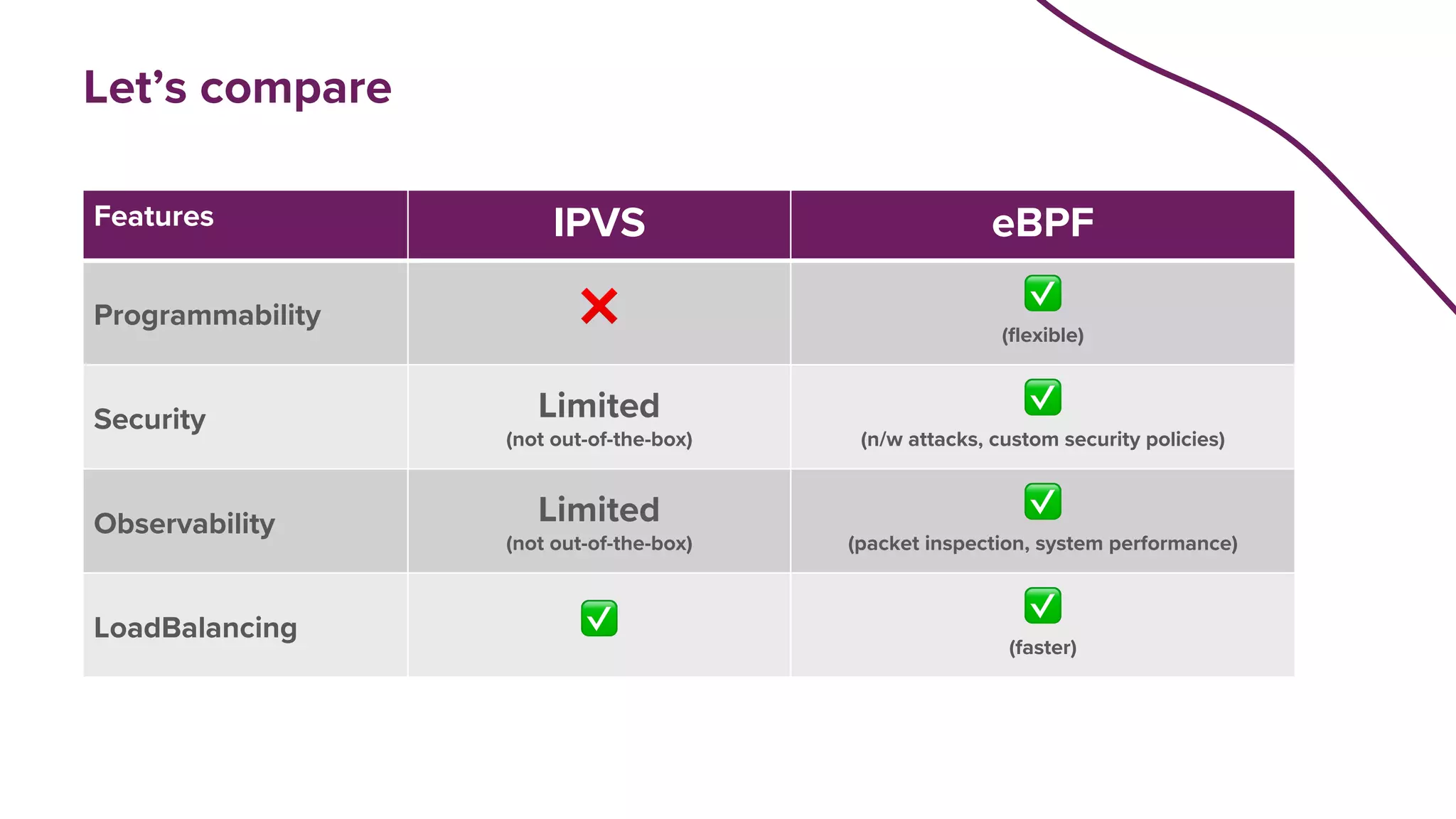

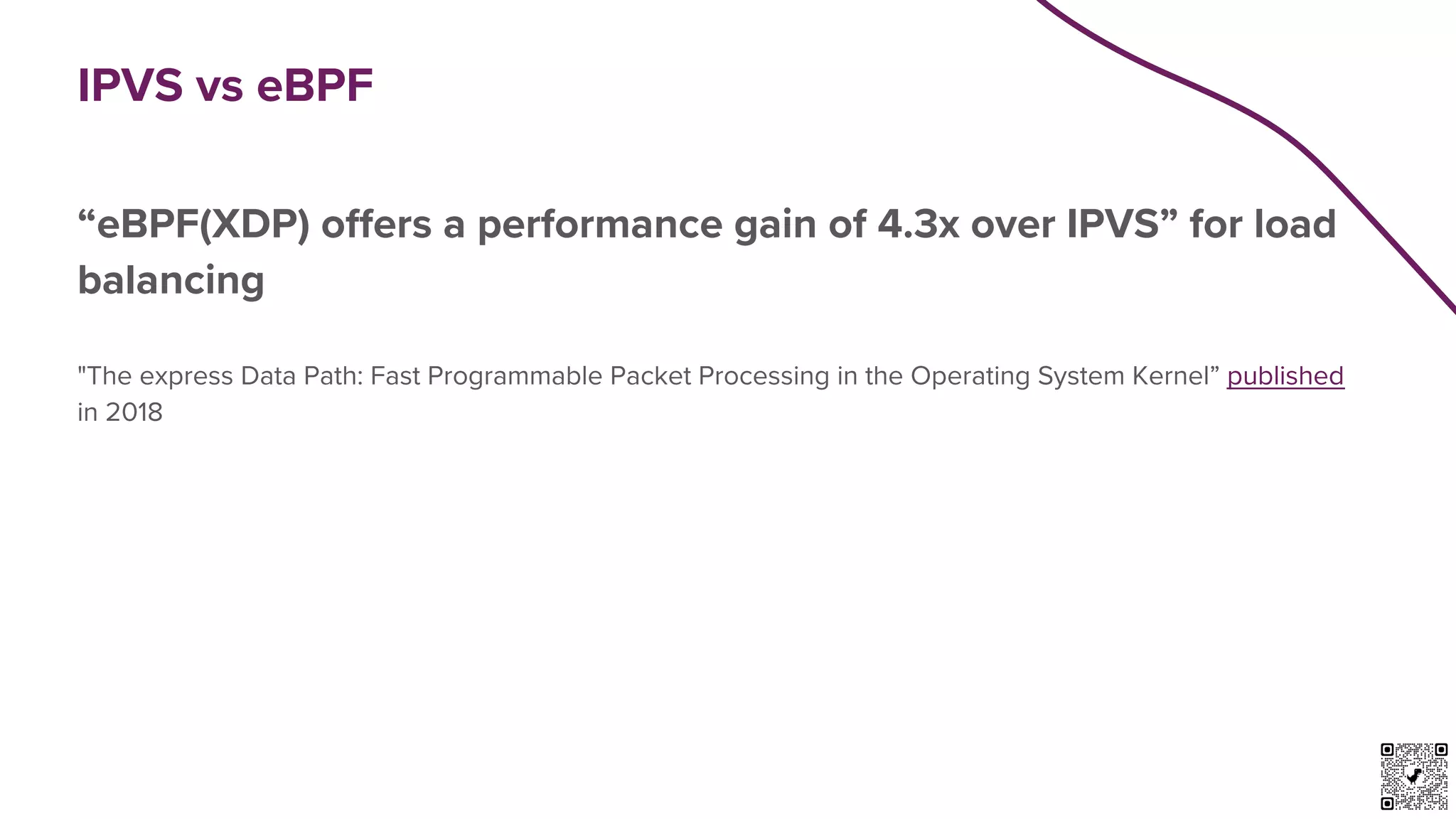

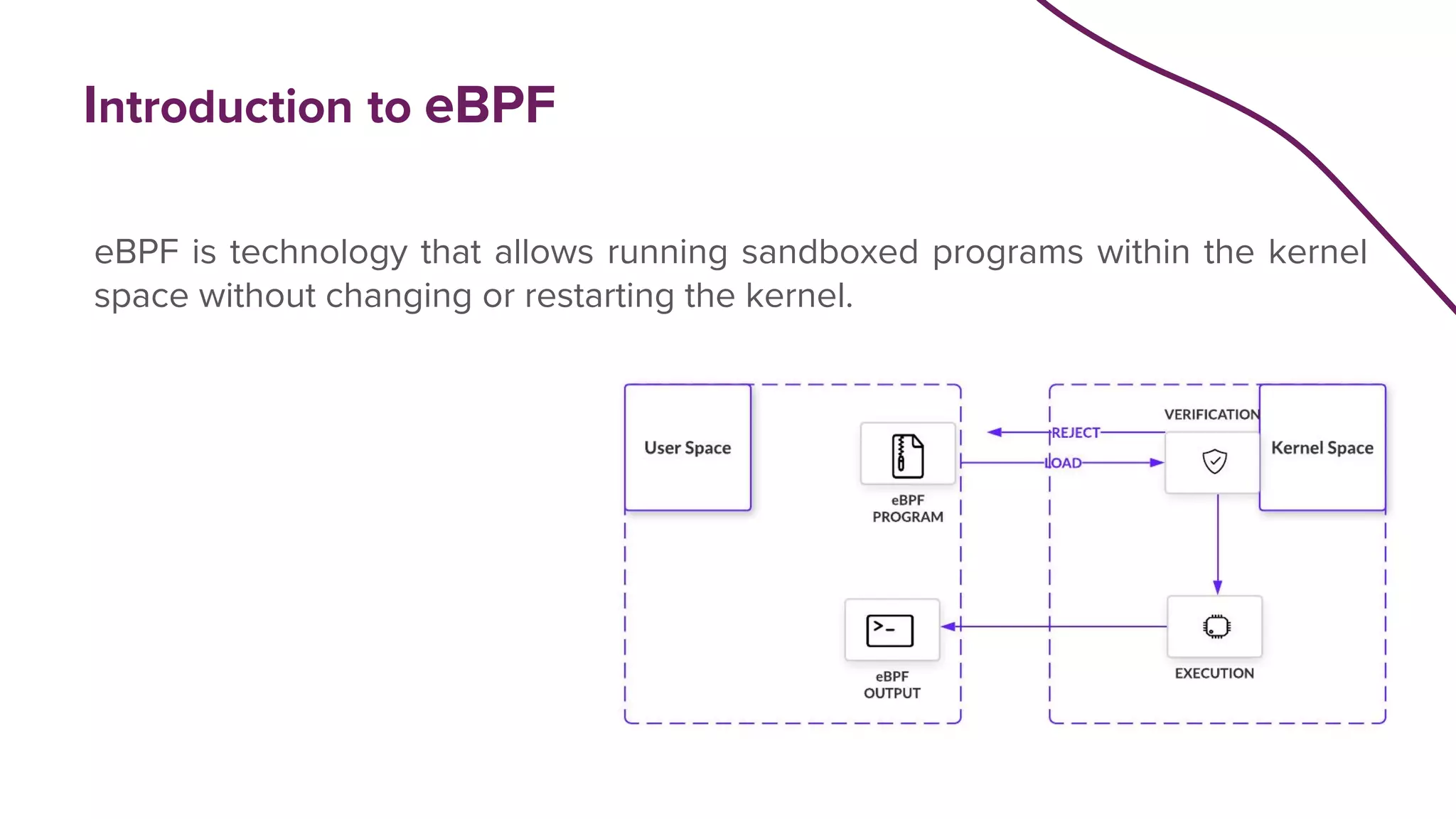

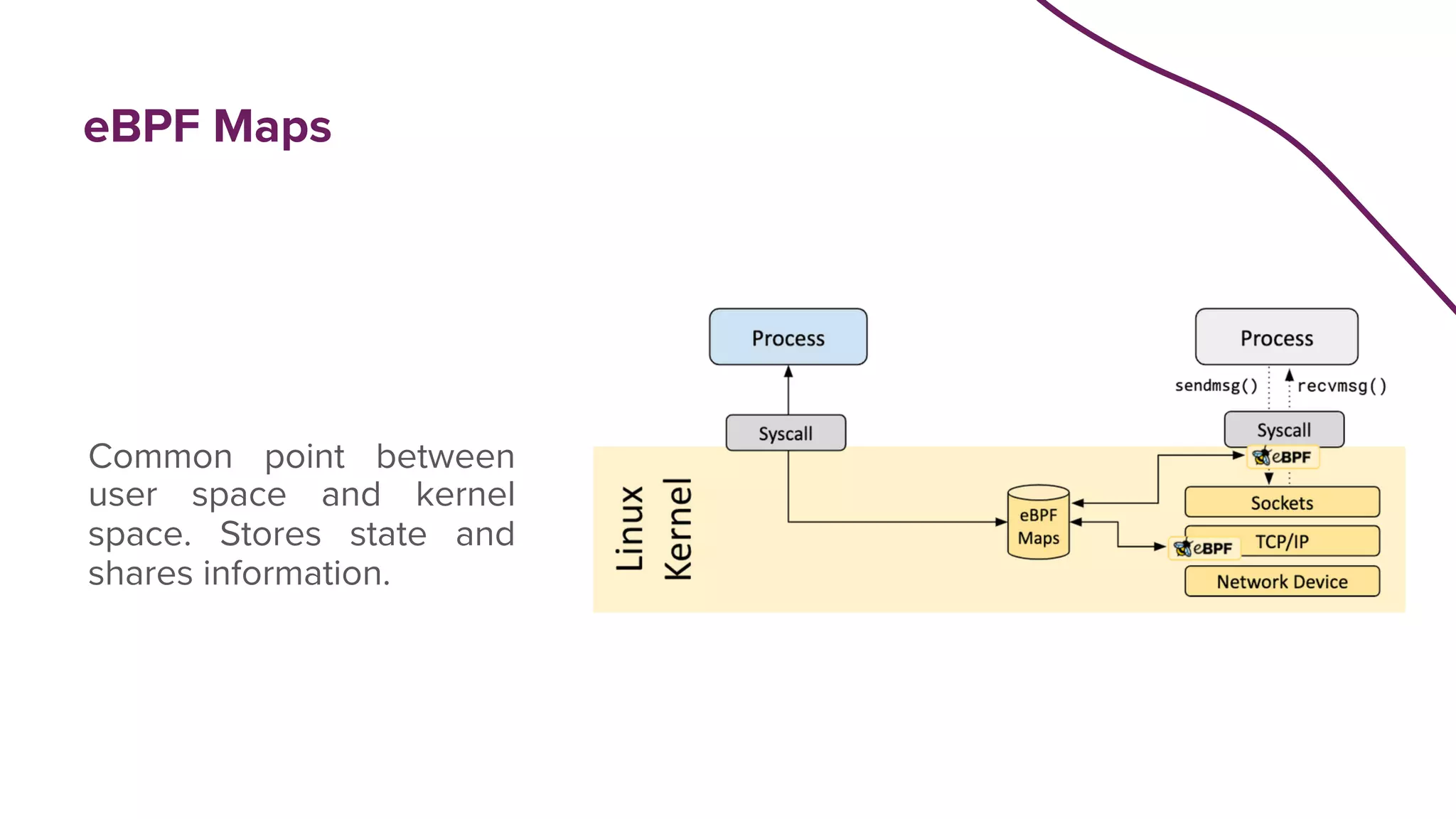

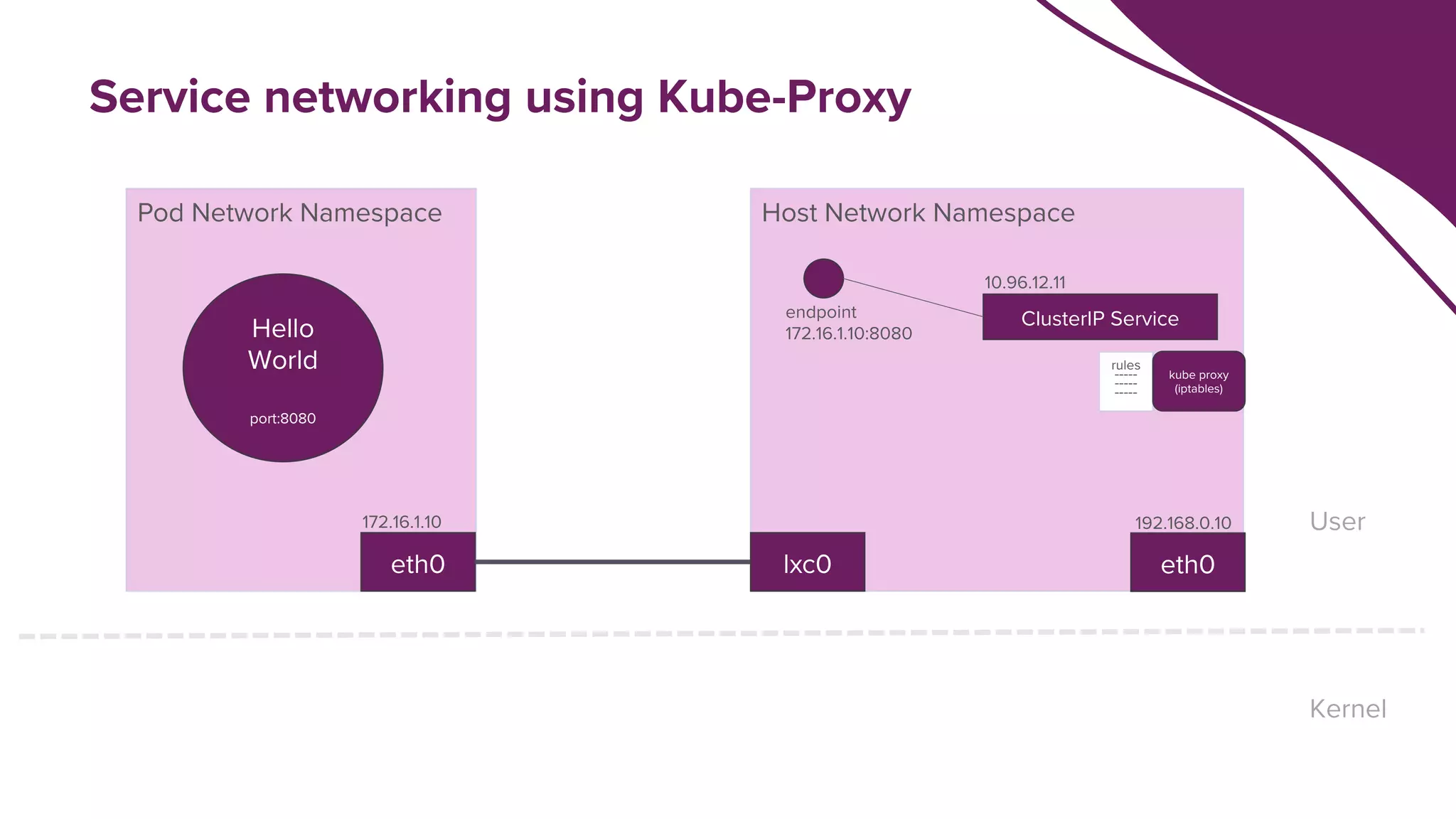

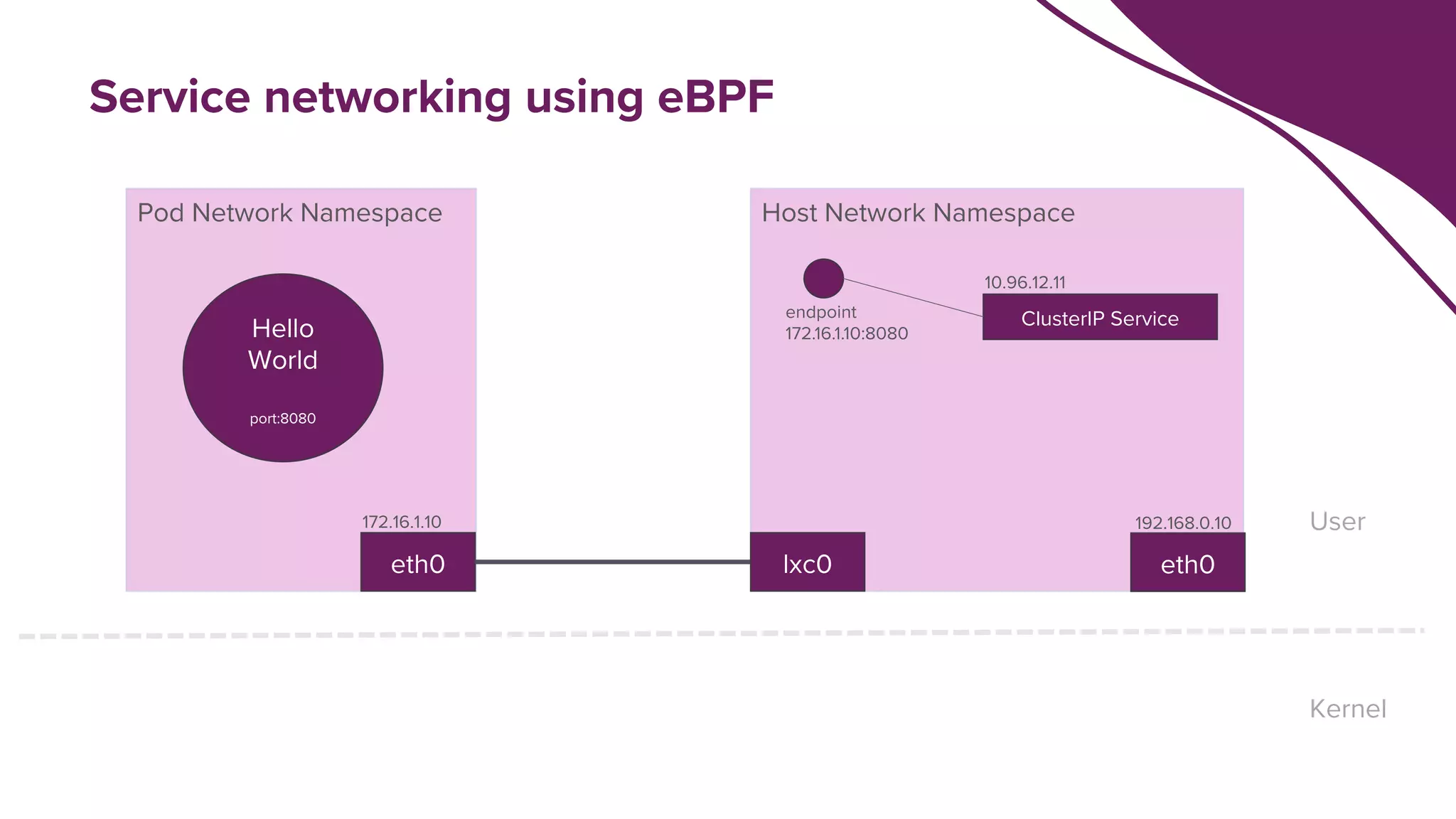

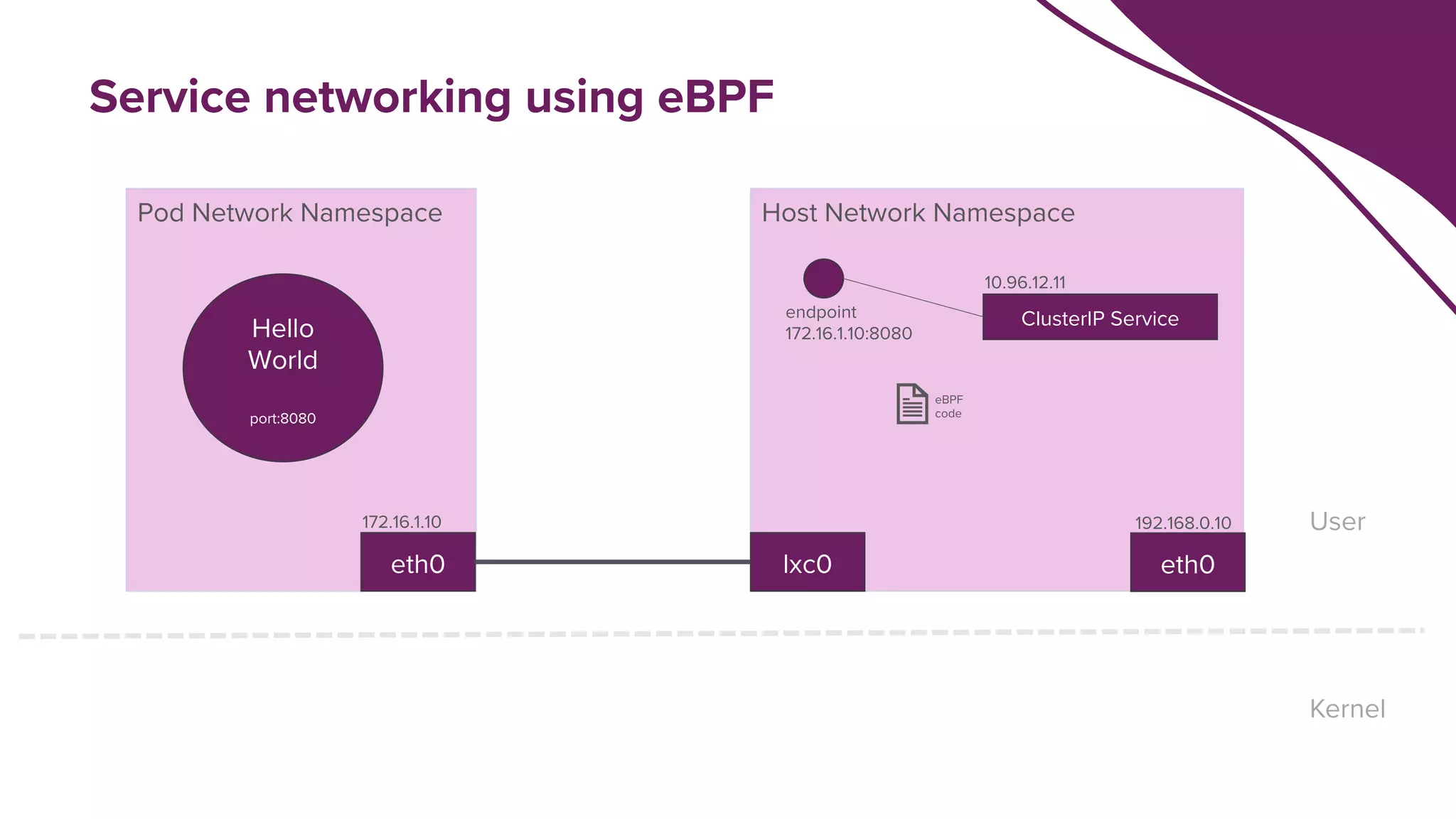

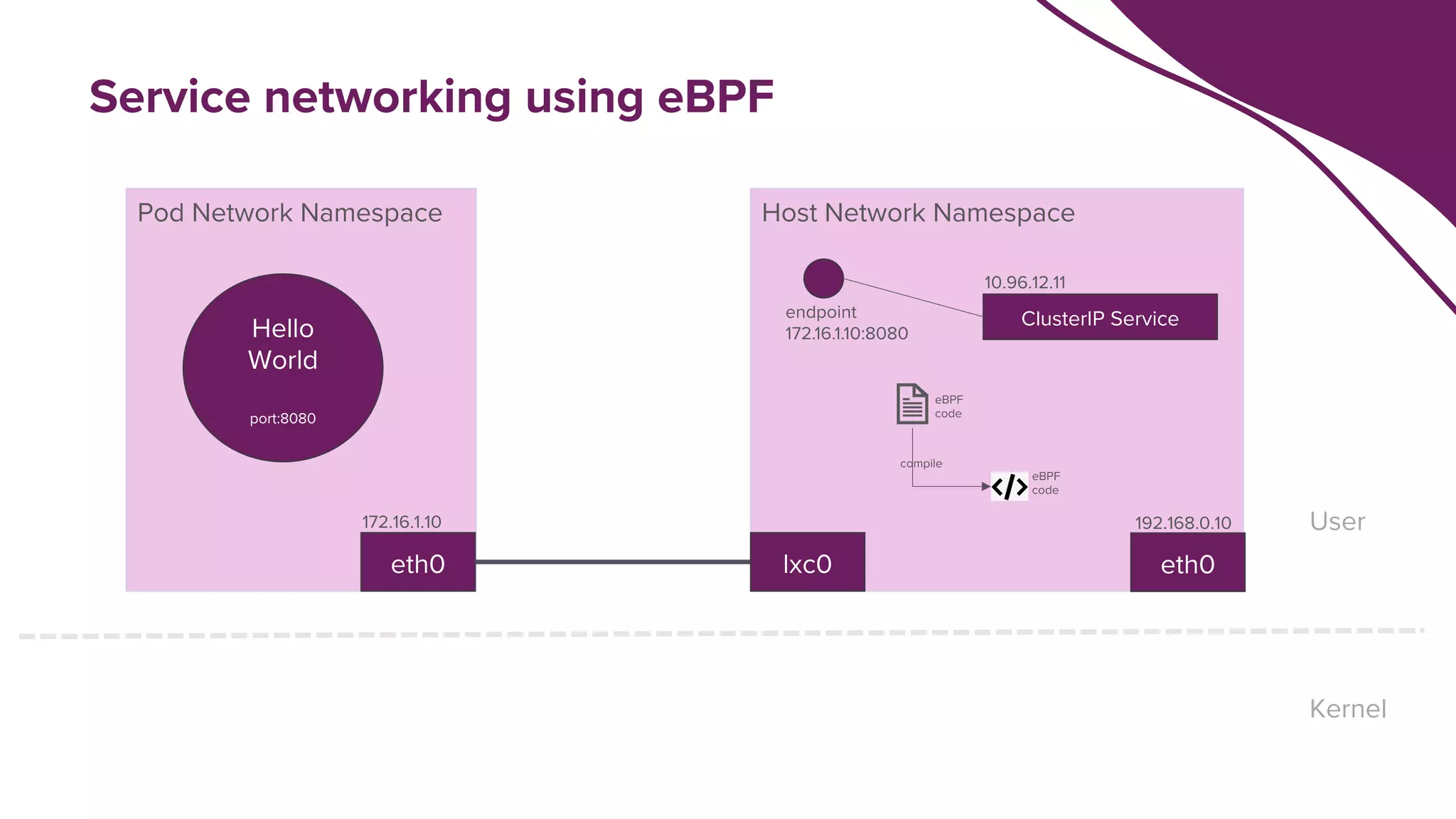

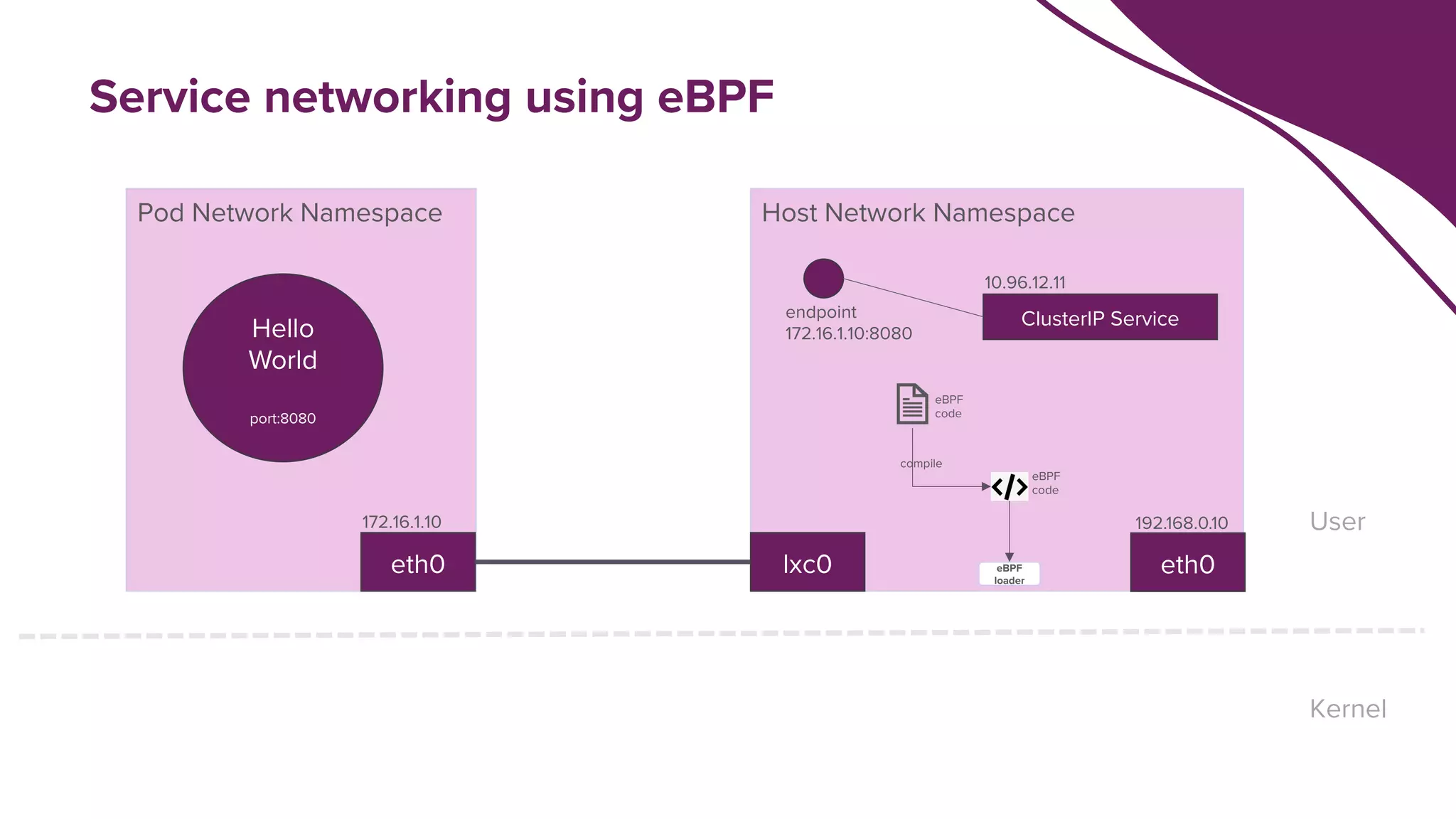

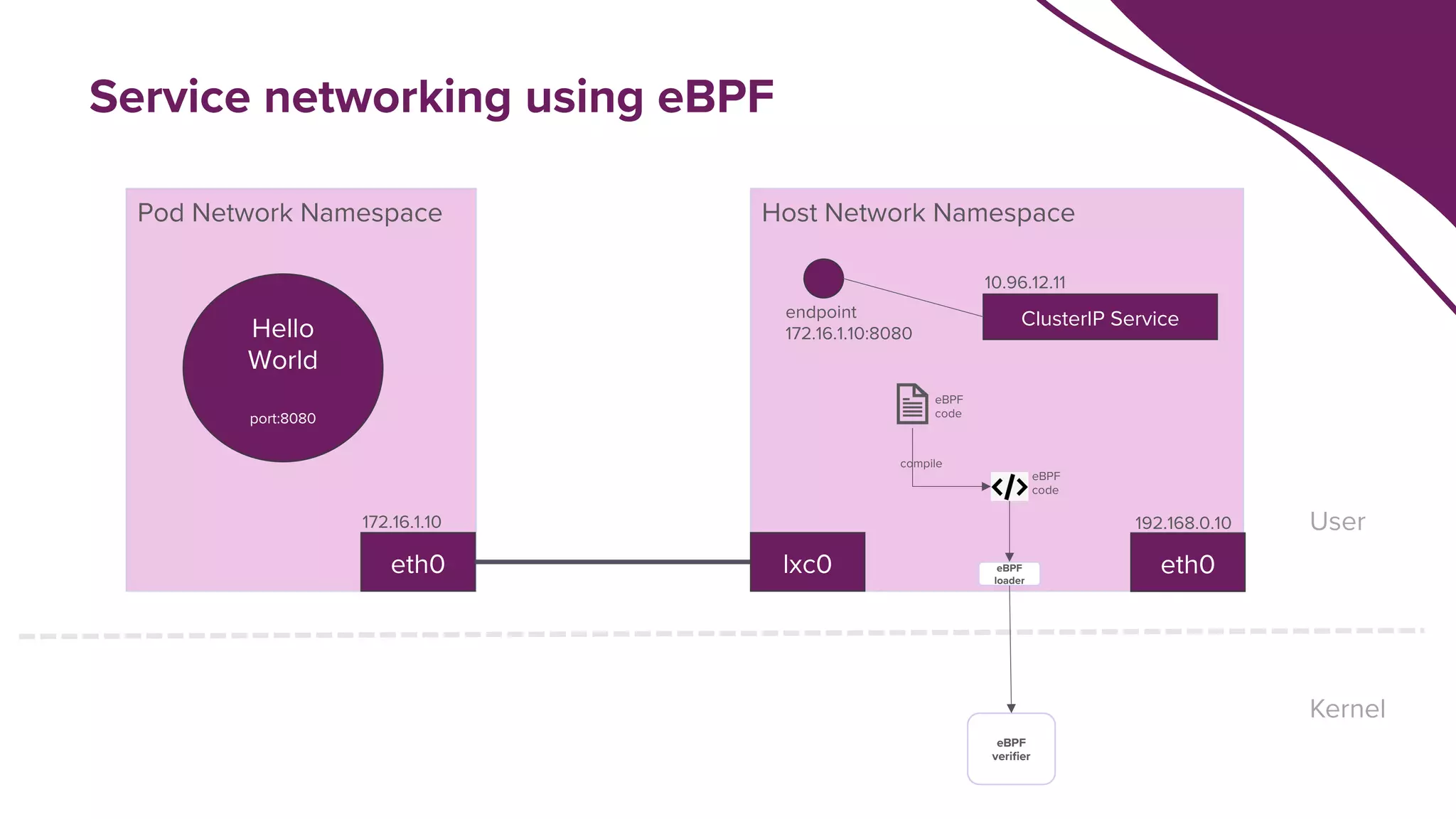

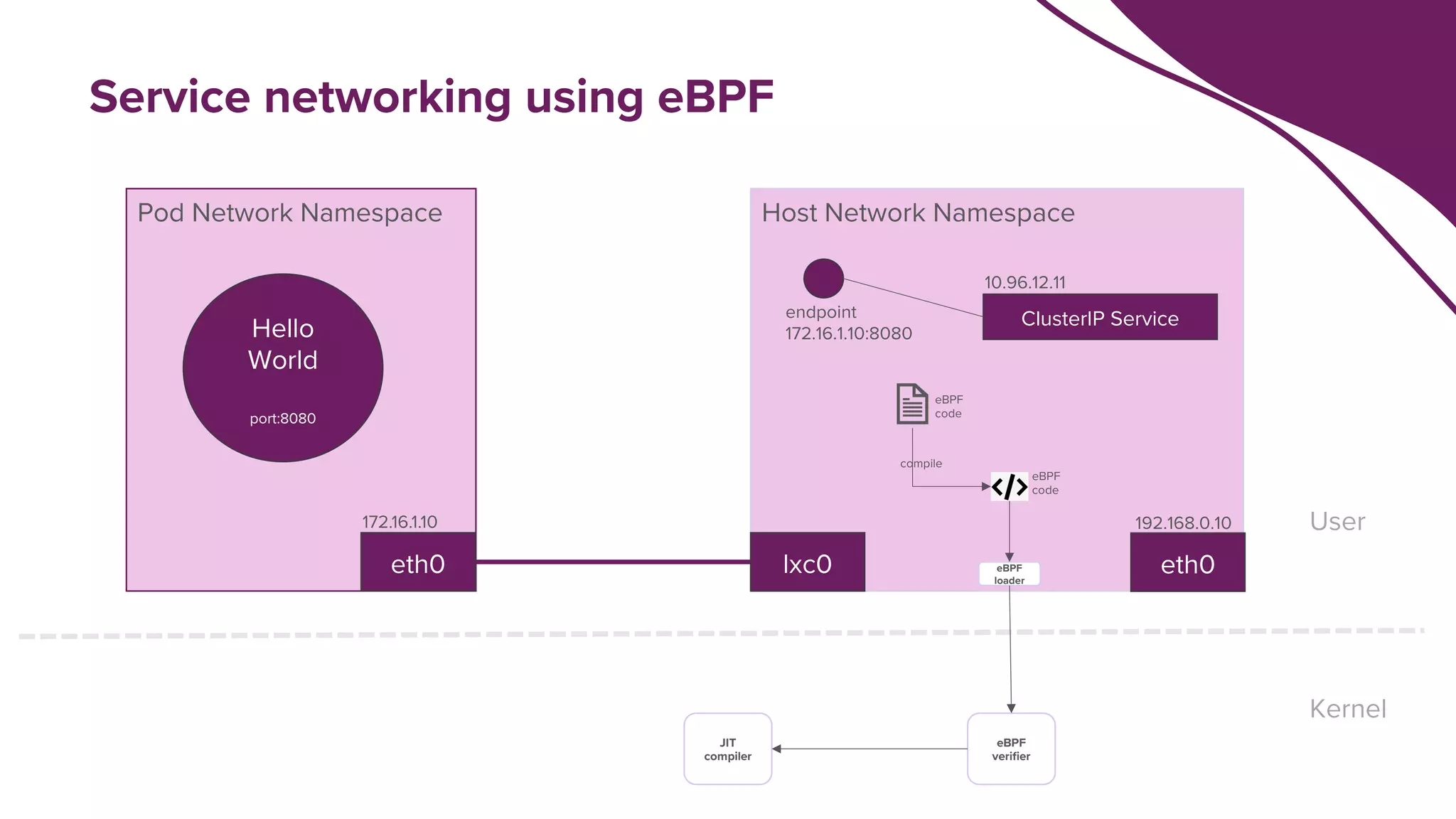

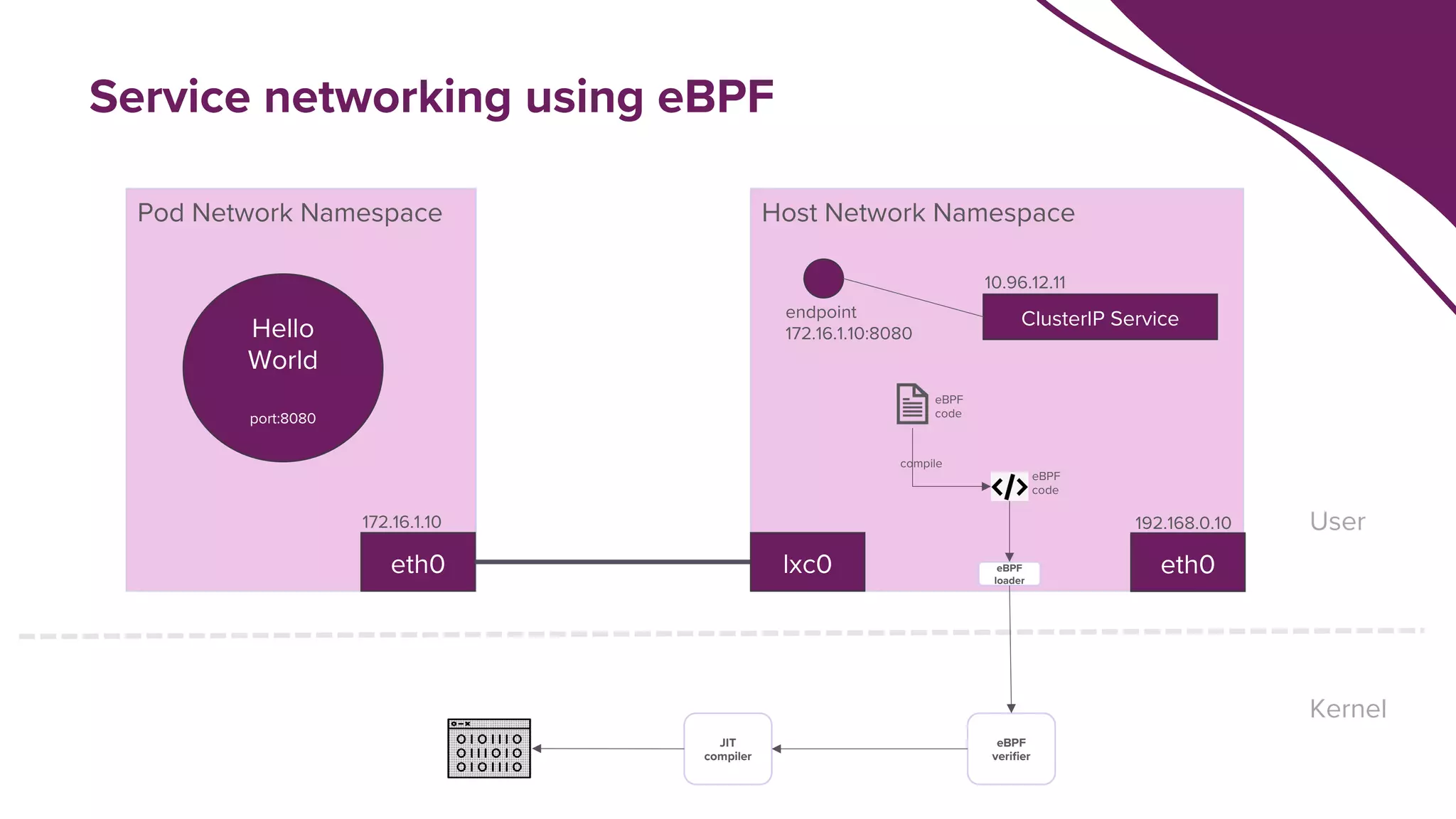

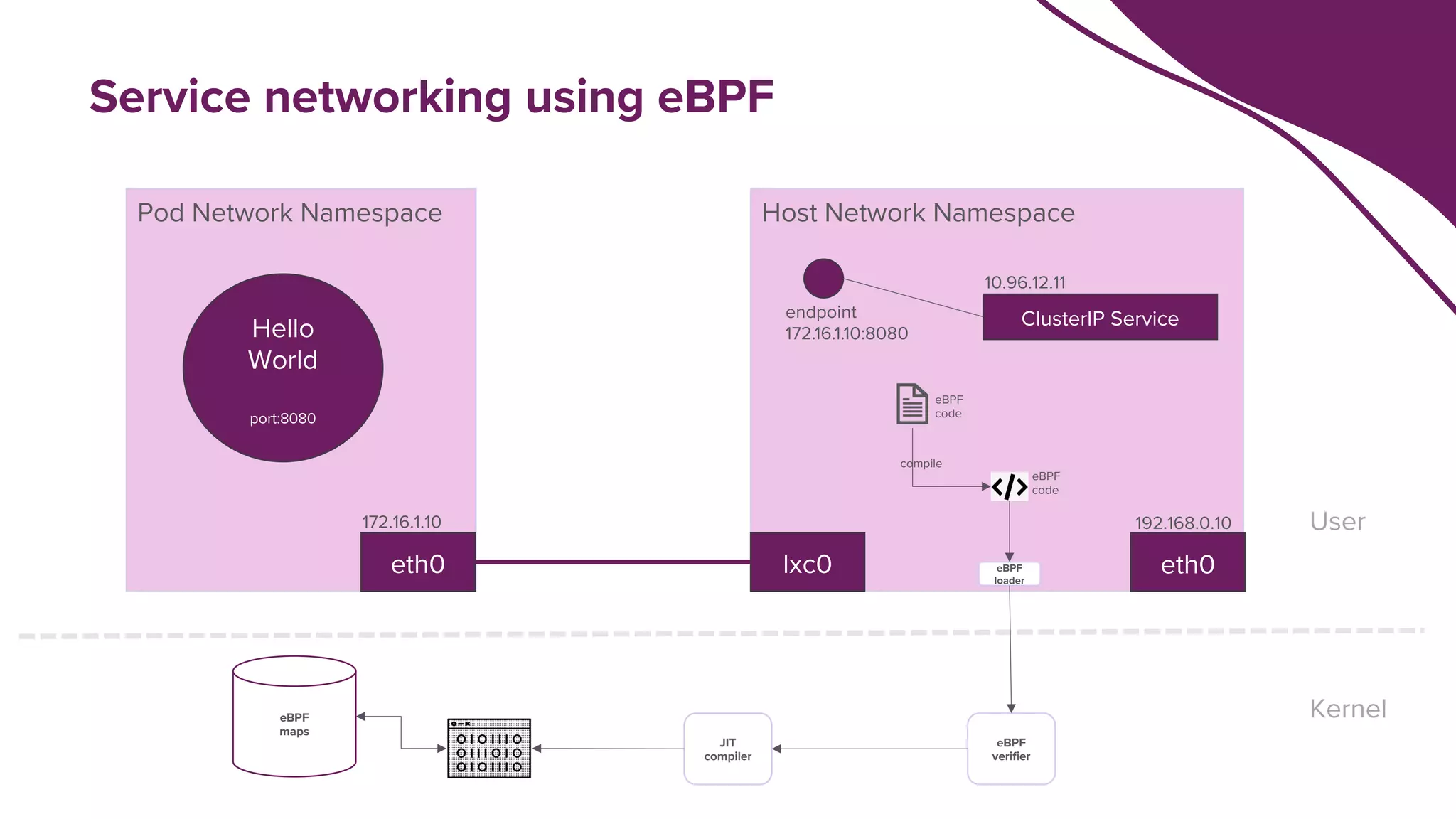

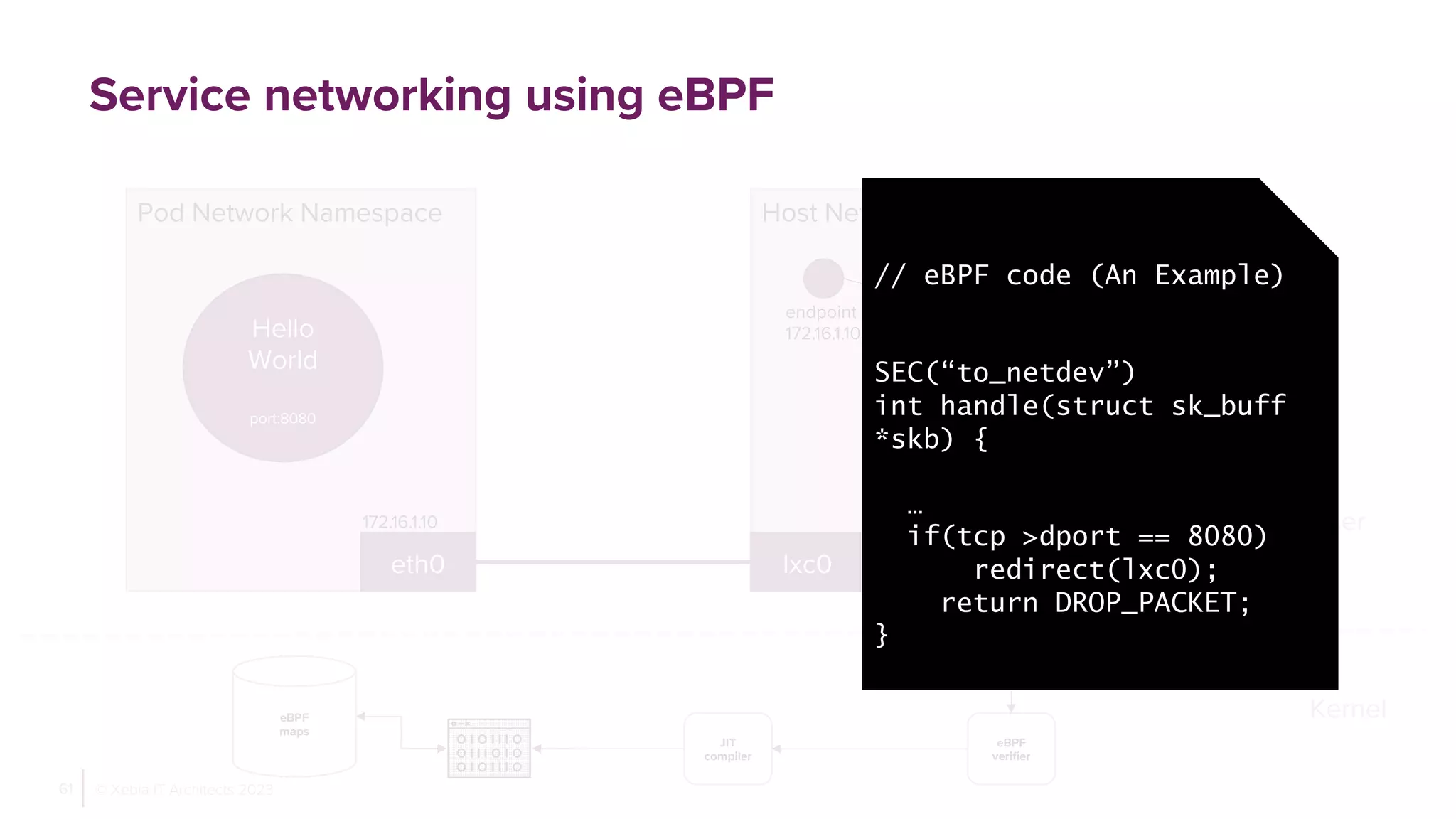

This presentation discusses how eBPF improves Kubernetes service networking performance, comparing it with the traditional iptables and IPVS approaches. It highlights the limitations of iptables, such as high latency and inefficient rule processing, and contrasts them with eBPF's advantages in programmability, security, and observability. It also walks through a specific use case with Cilium and emphasizes the significant load-balancing performance improvements achieved with eBPF.
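
To make the "inefficient rule processing" point concrete, here is a minimal toy model in Python. It is not real iptables or eBPF code. It assumes the usual explanation of the gap: an iptables-style chain is matched rule by rule, so the cost of finding a service grows with the number of services, while an eBPF datapath can keep services in a hash map and find one in roughly constant time. The service addresses, backend names, and function names below are all hypothetical and are not taken from the slides.

```python
from typing import Dict, List, Optional, Tuple

# A service is identified by (virtual IP, port). All values are made up.
Service = Tuple[str, int]


def build_services(n: int) -> List[Tuple[Service, str]]:
    """Create n hypothetical services, each mapped to one backend name."""
    return [((f"10.0.{i // 256}.{i % 256}", 80), f"backend-{i}") for i in range(n)]


def lookup_sequential(
    rules: List[Tuple[Service, str]], key: Service
) -> Tuple[Optional[str], int]:
    """Scan the rules in order, the way a rule chain is evaluated.

    Returns the matching backend (or None) and the number of comparisons made.
    """
    comparisons = 0
    for match, backend in rules:
        comparisons += 1
        if match == key:
            return backend, comparisons
    return None, comparisons


def lookup_hashed(table: Dict[Service, str], key: Service) -> Optional[str]:
    """Single hash-table lookup, standing in for an eBPF hash map lookup."""
    return table.get(key)


if __name__ == "__main__":
    rules = build_services(1000)
    table = dict(rules)
    last_key = rules[-1][0]  # worst case for the sequential scan

    backend, comparisons = lookup_sequential(rules, last_key)
    print(f"sequential: {backend} after {comparisons} comparisons")
    print(f"hashed:     {lookup_hashed(table, last_key)} after one lookup")
```

In this sketch the sequential scan needs as many comparisons as there are services in the worst case, while the hashed lookup does not grow with the service count. Real datapaths are more involved (connection tracking, backend selection, NAT), so this illustrates only the scaling argument and is not a benchmark.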