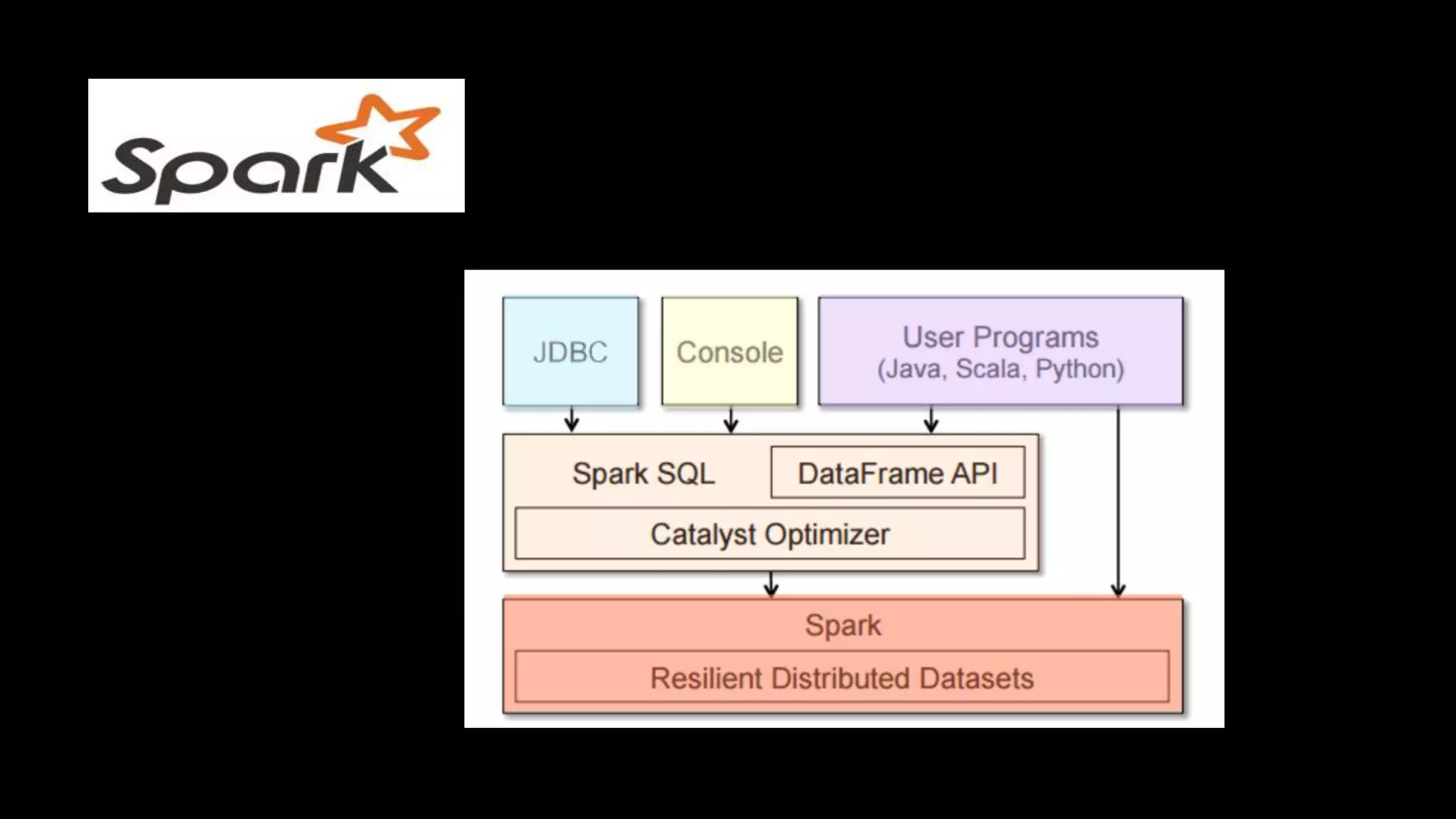

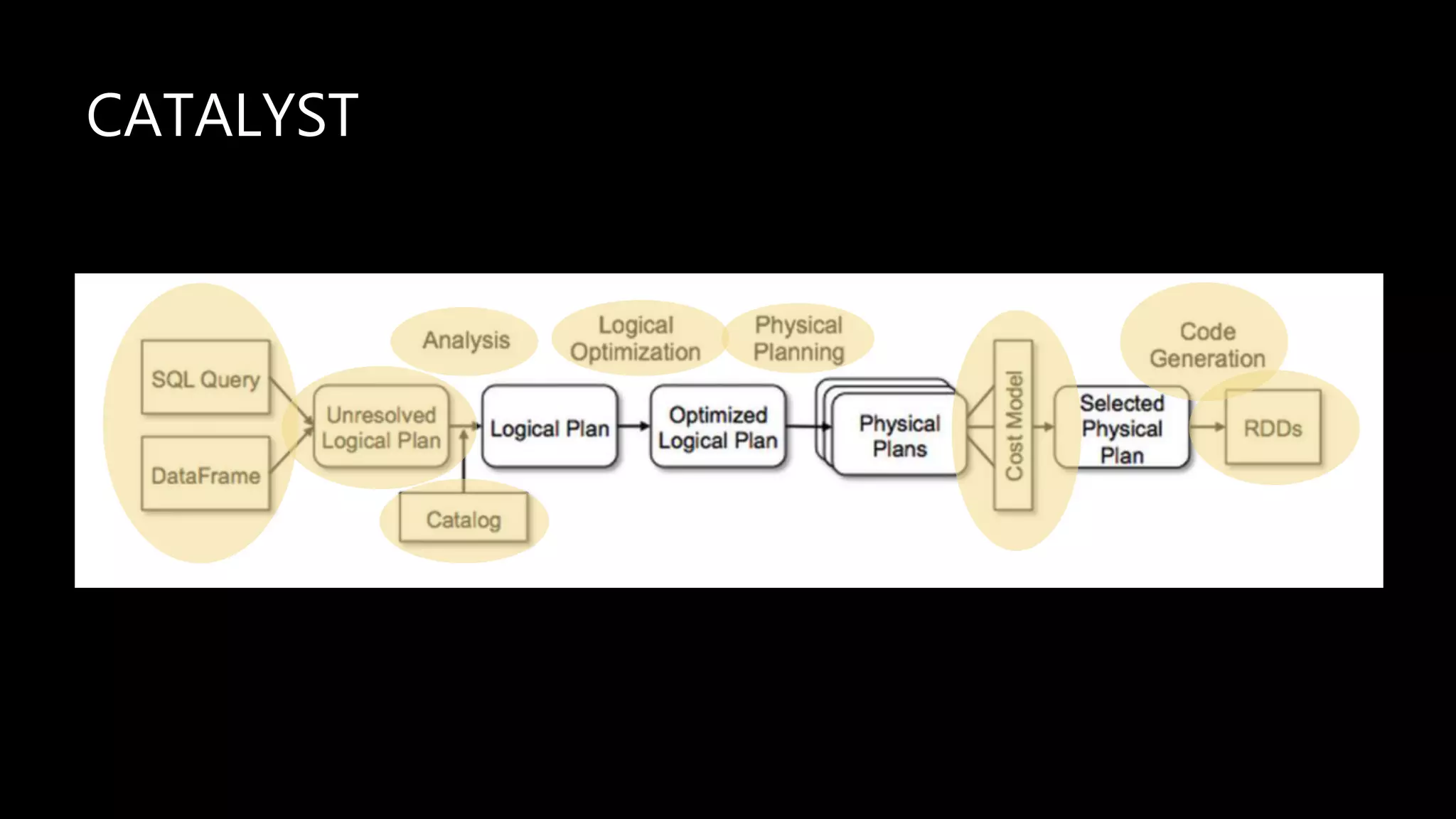

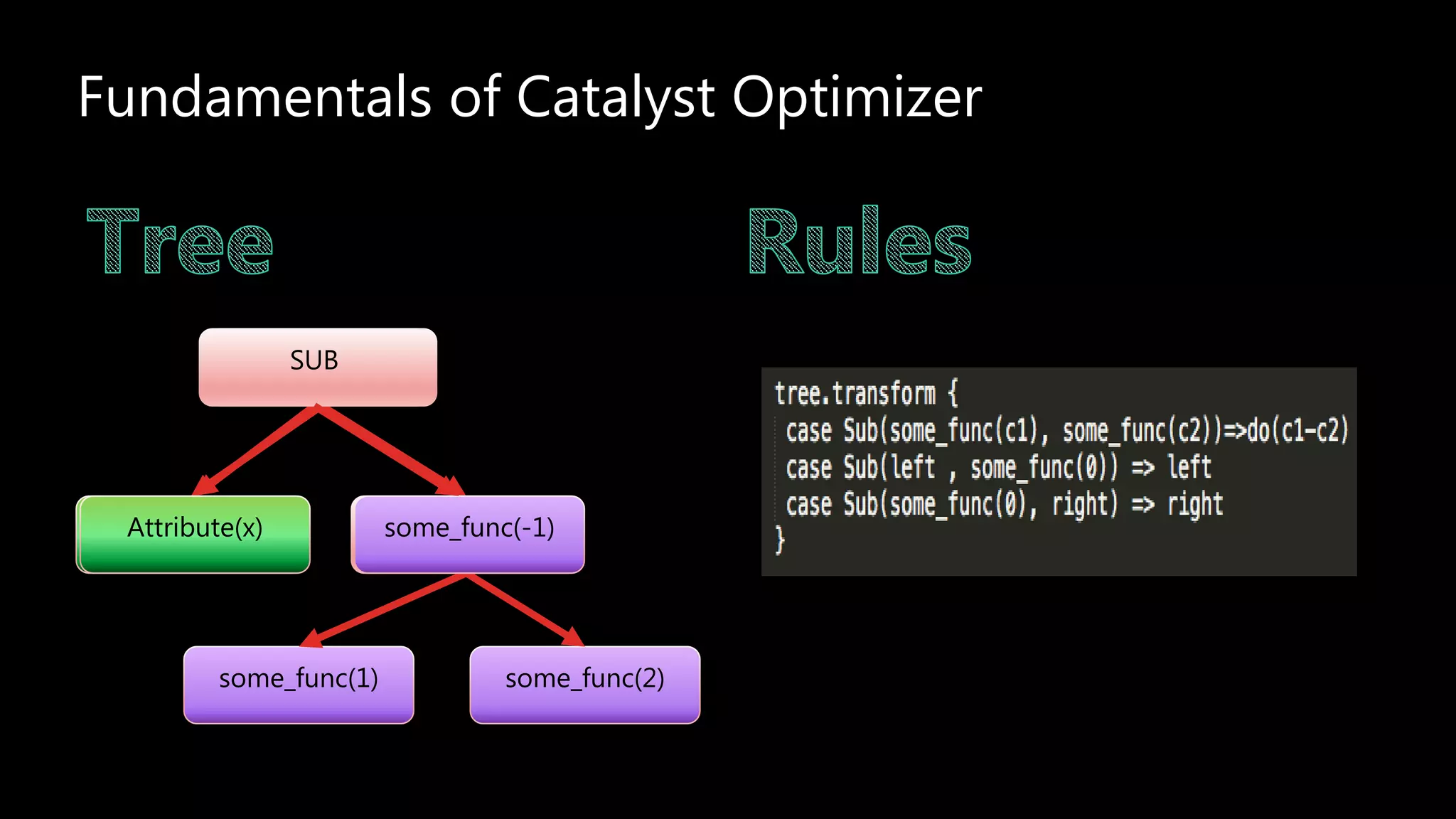

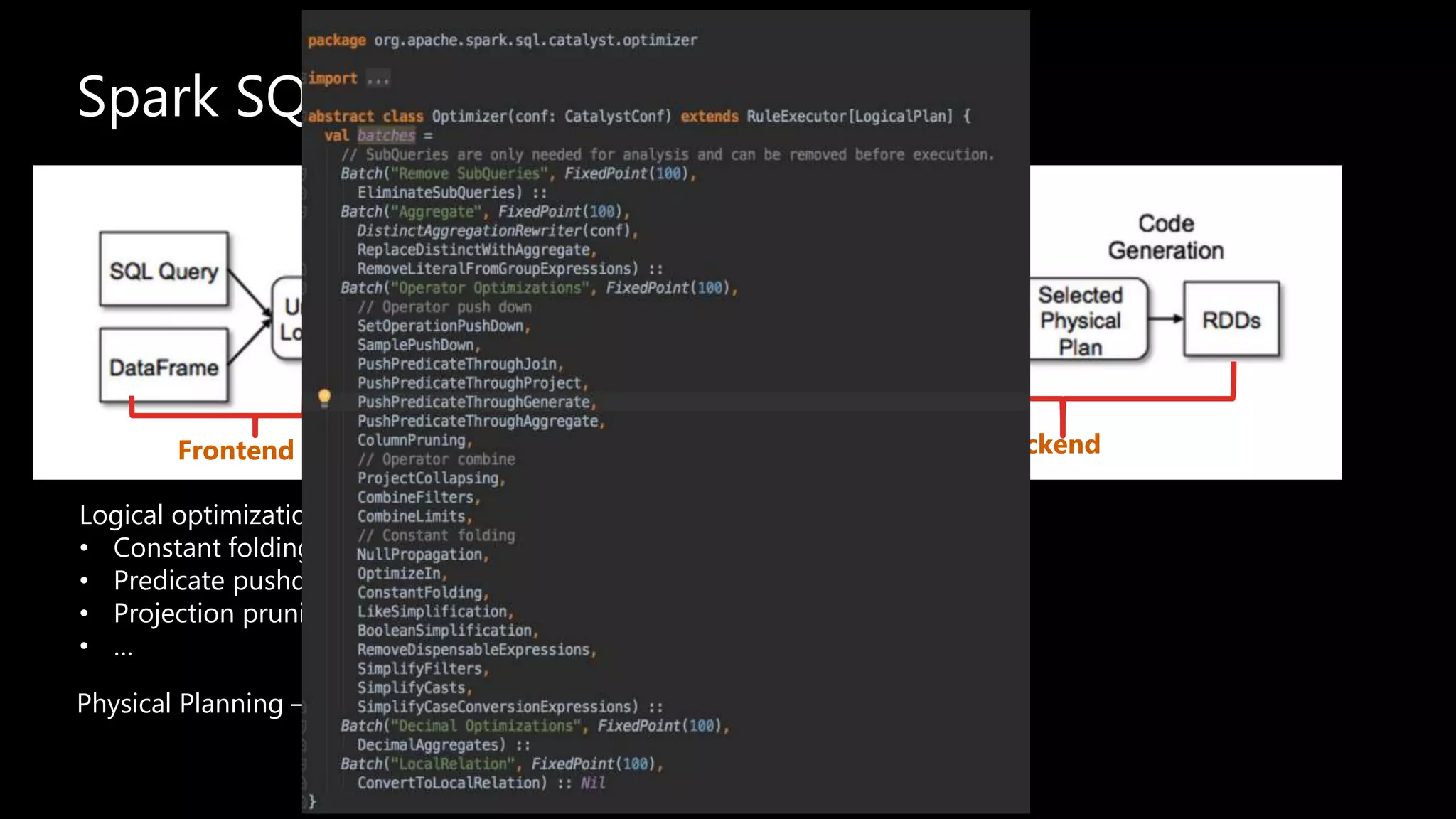

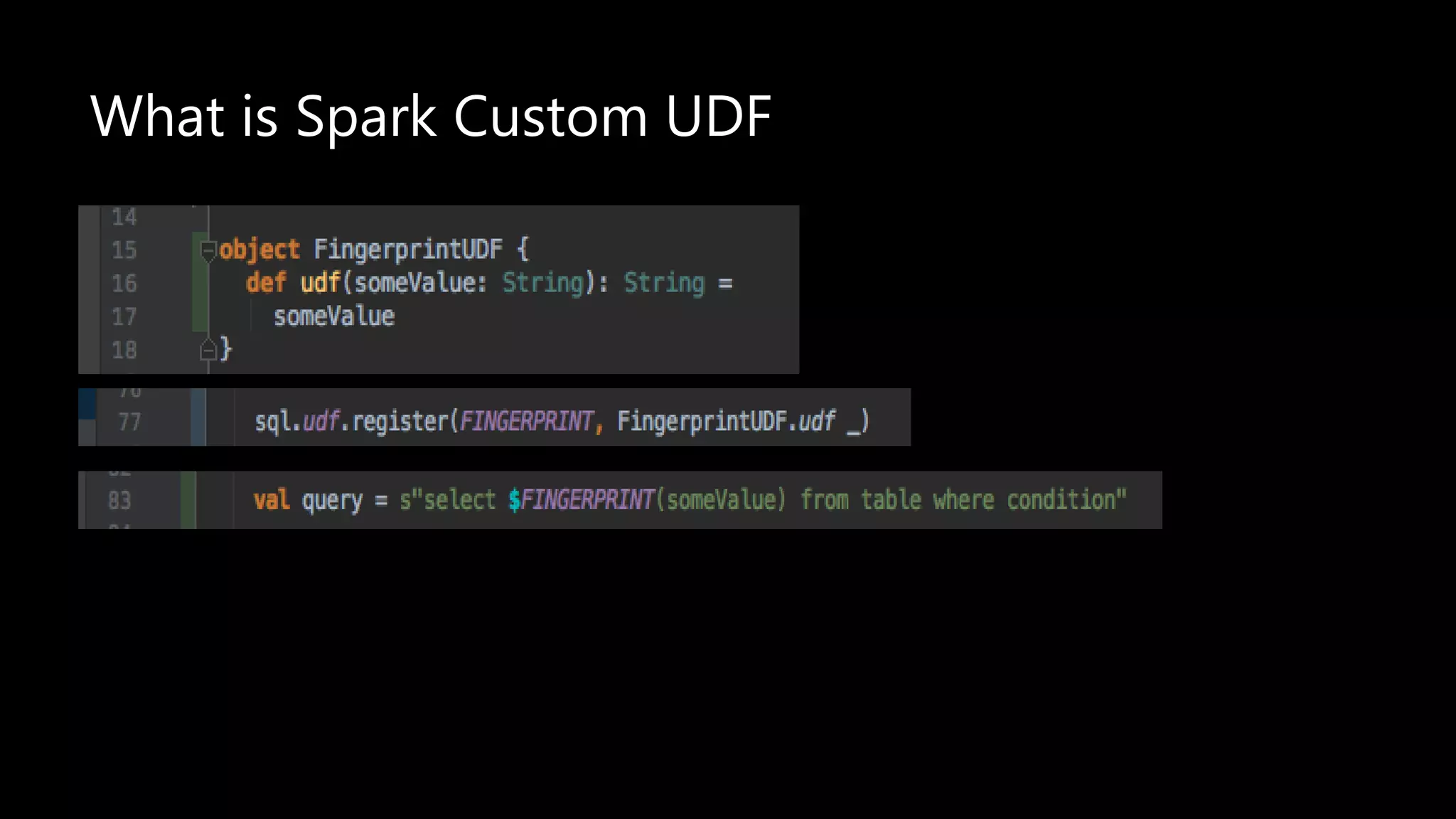

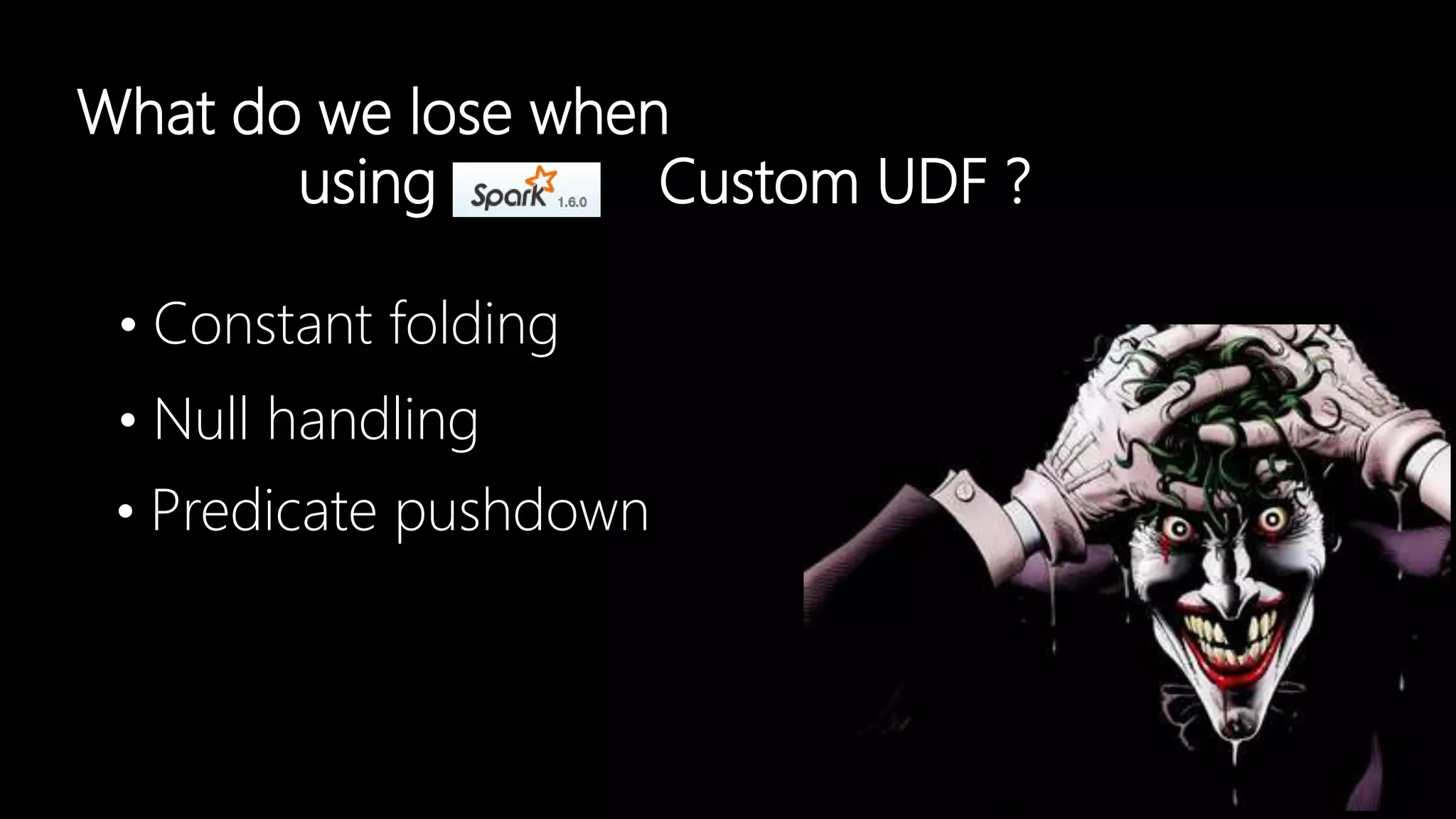

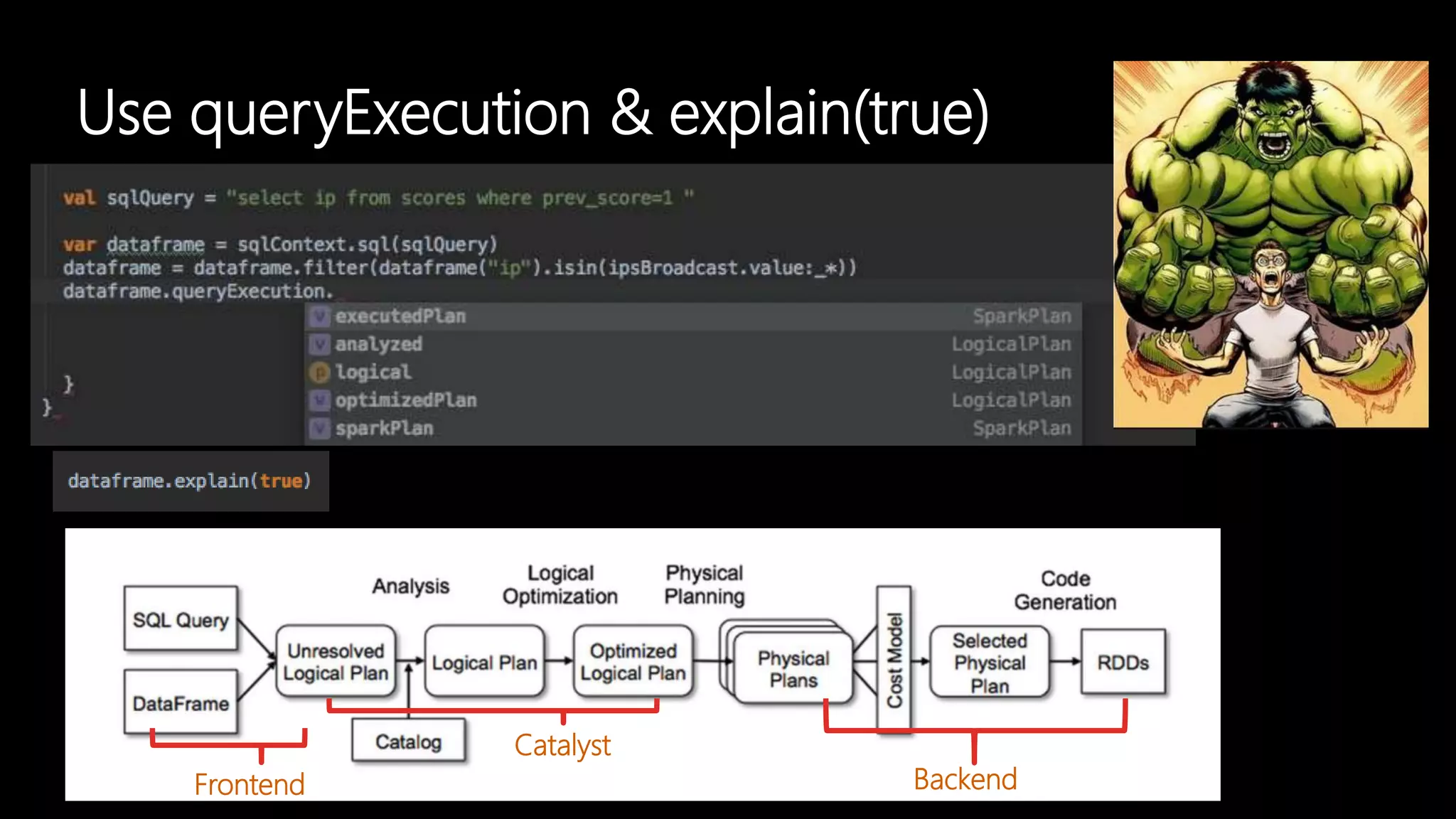

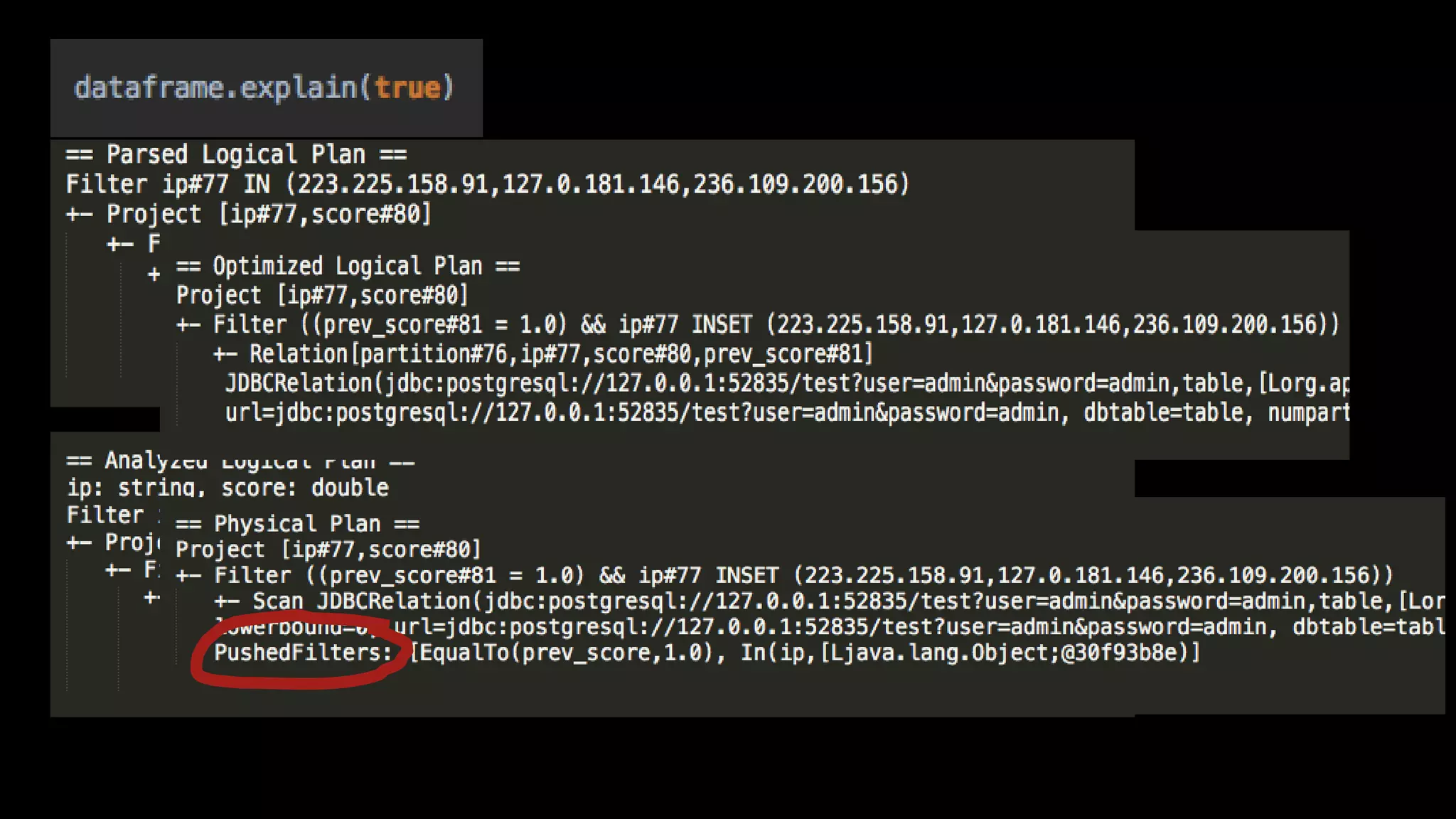

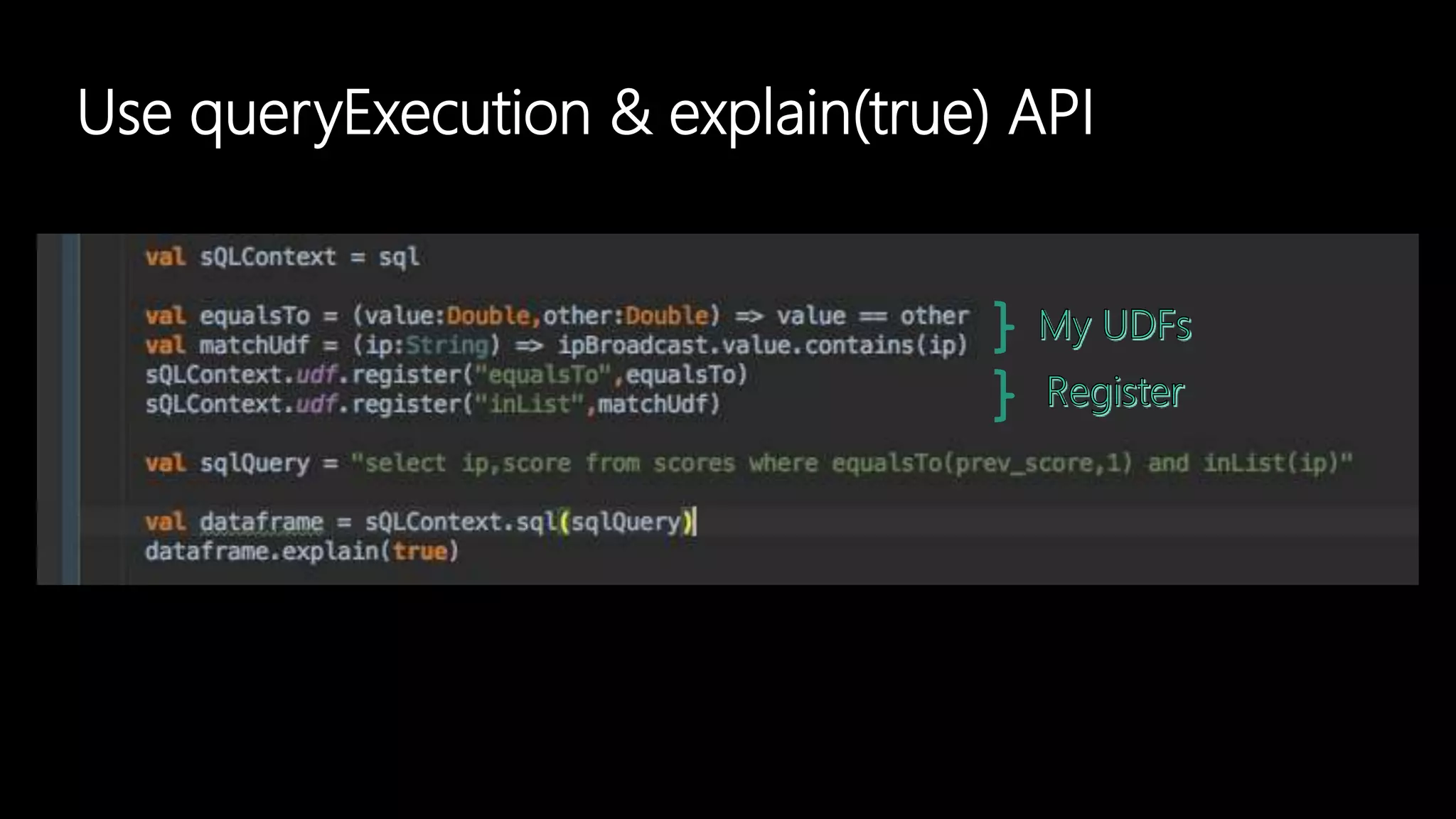

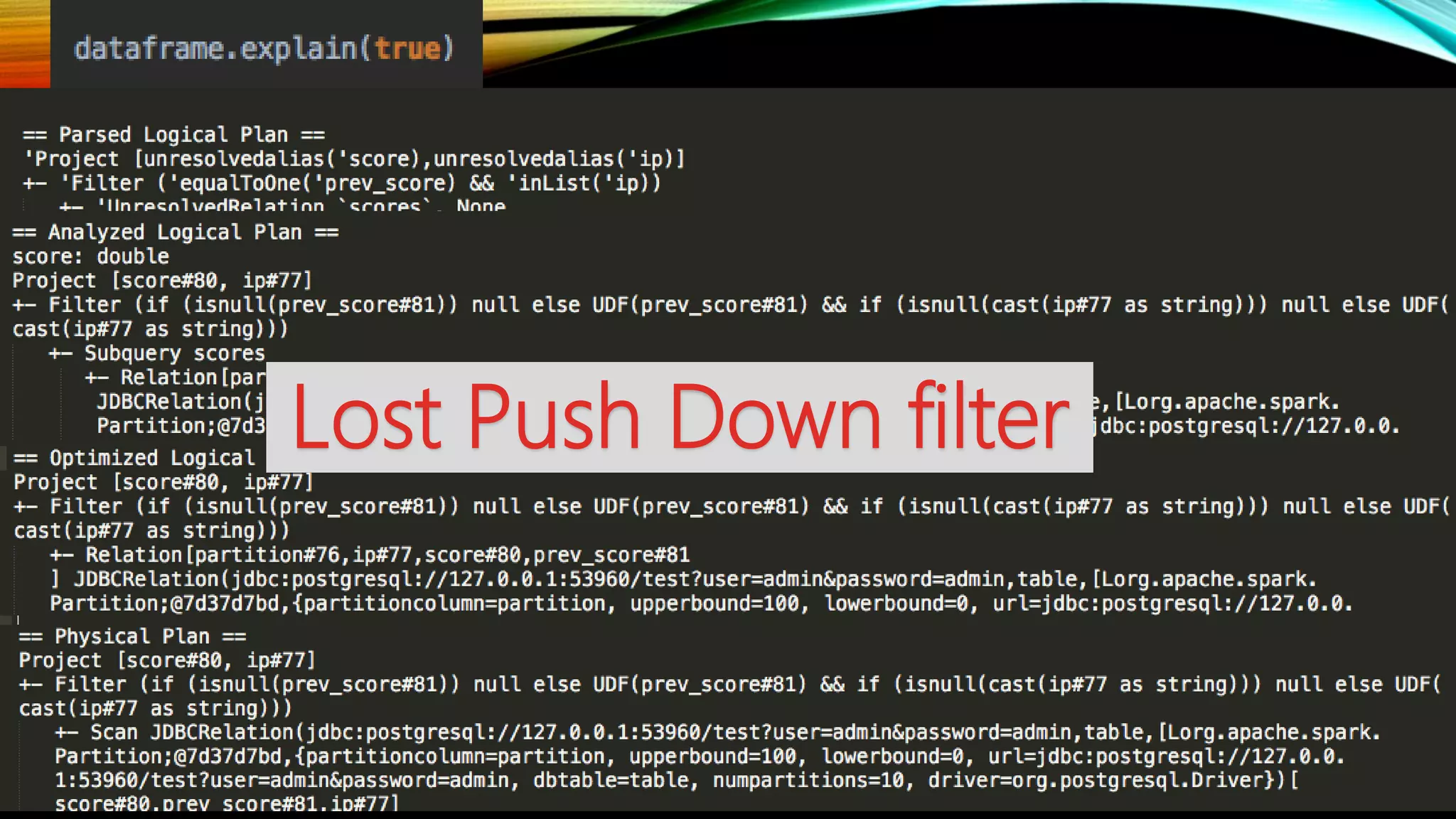

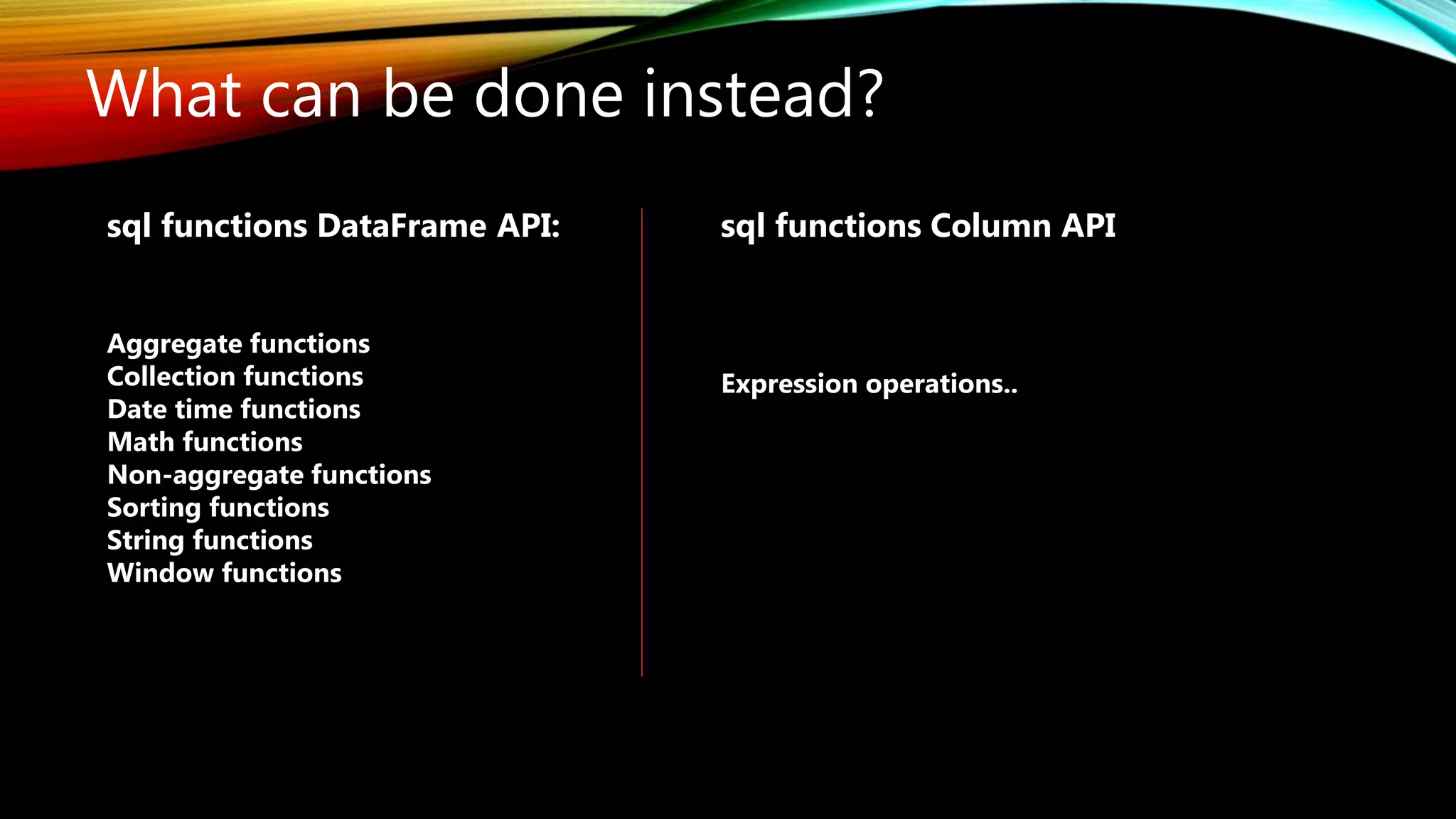

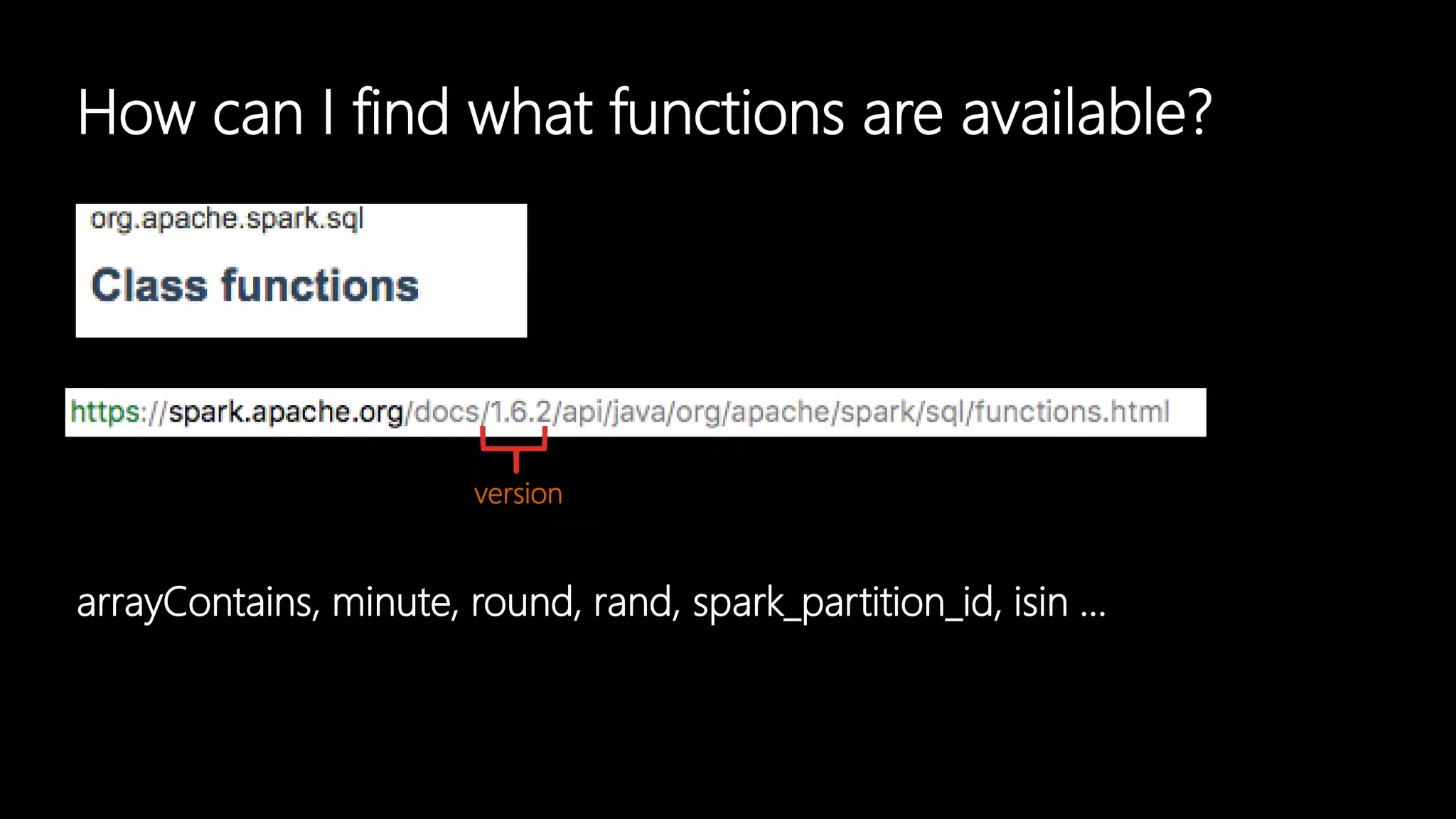

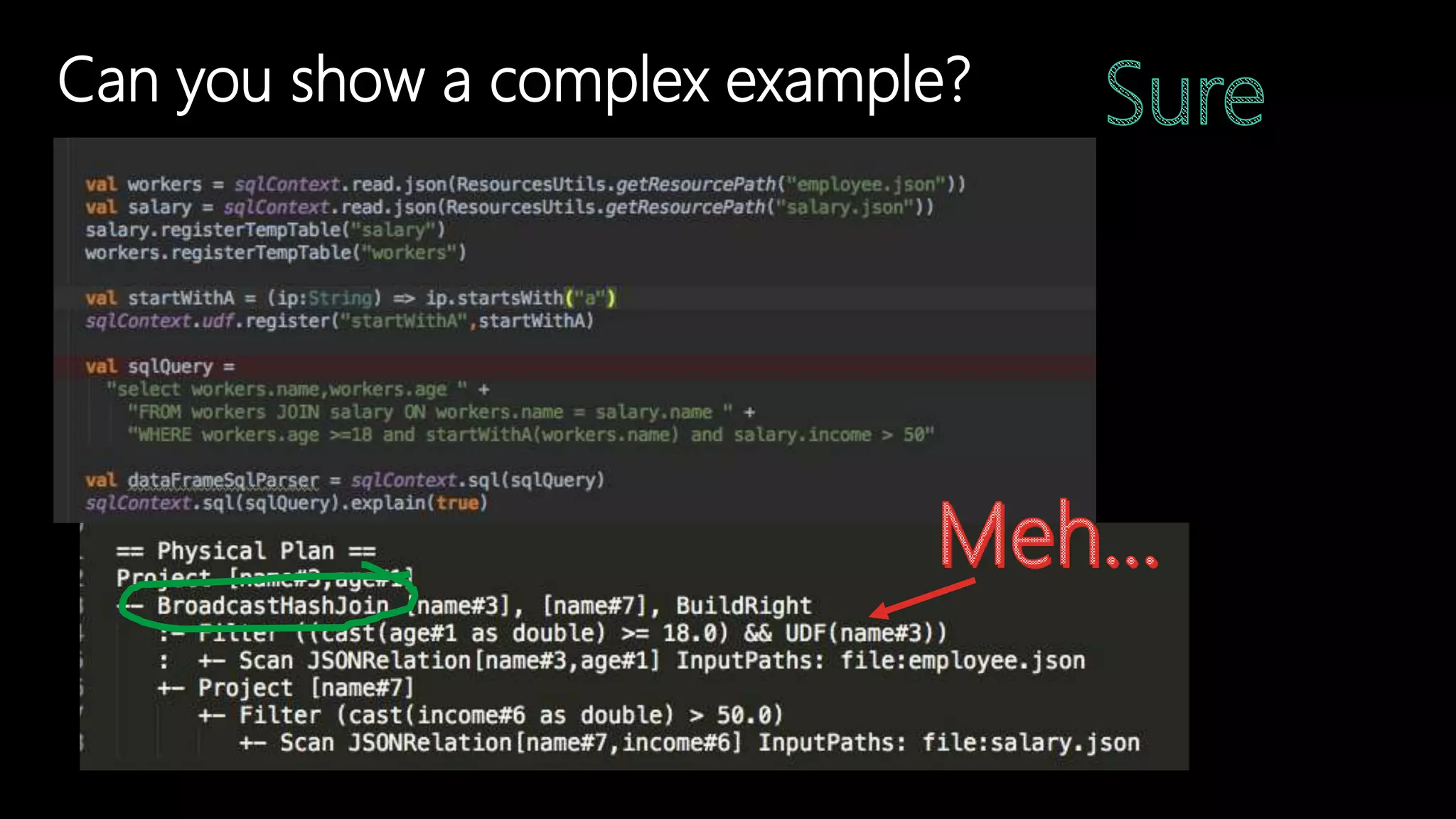

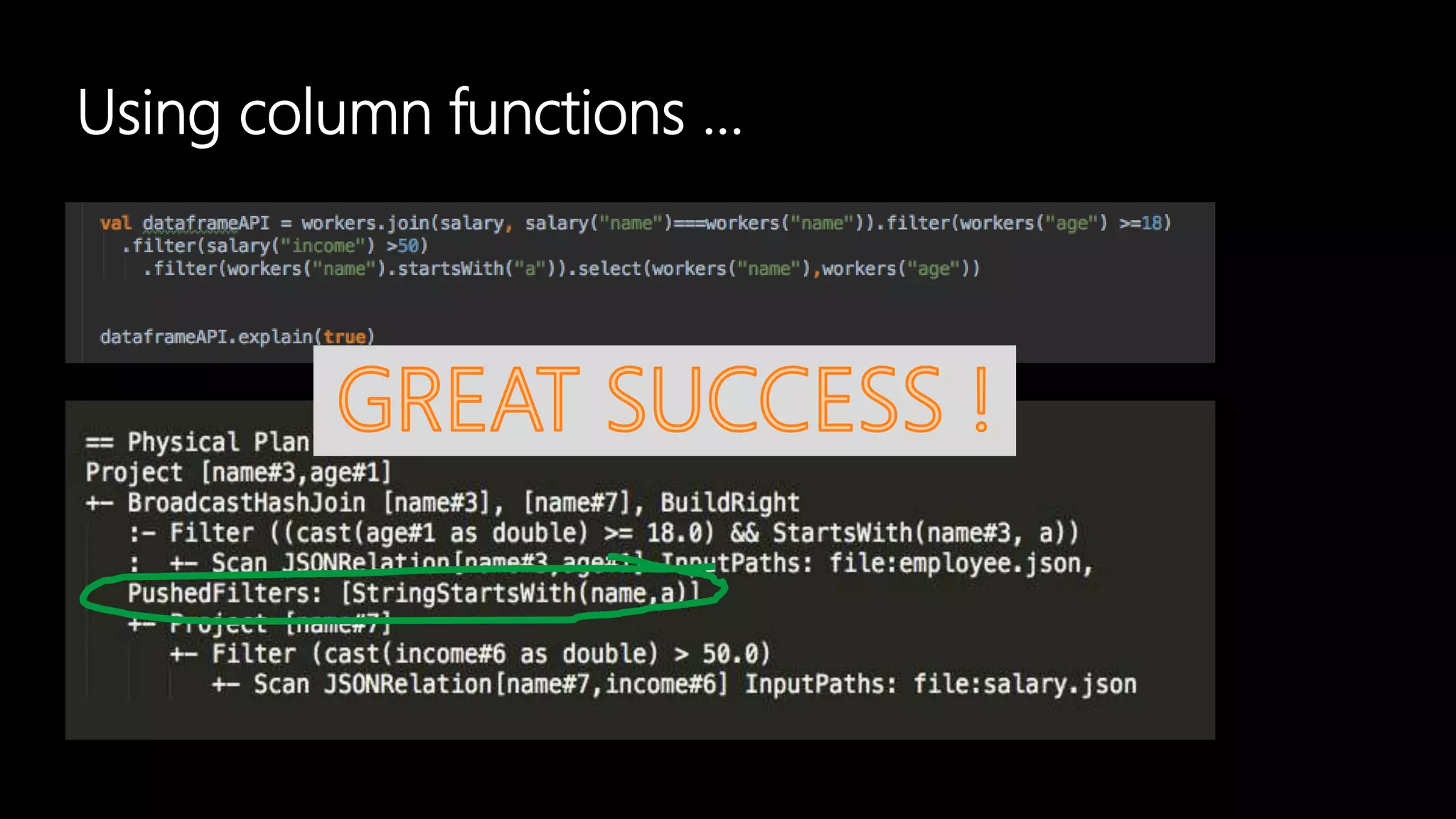

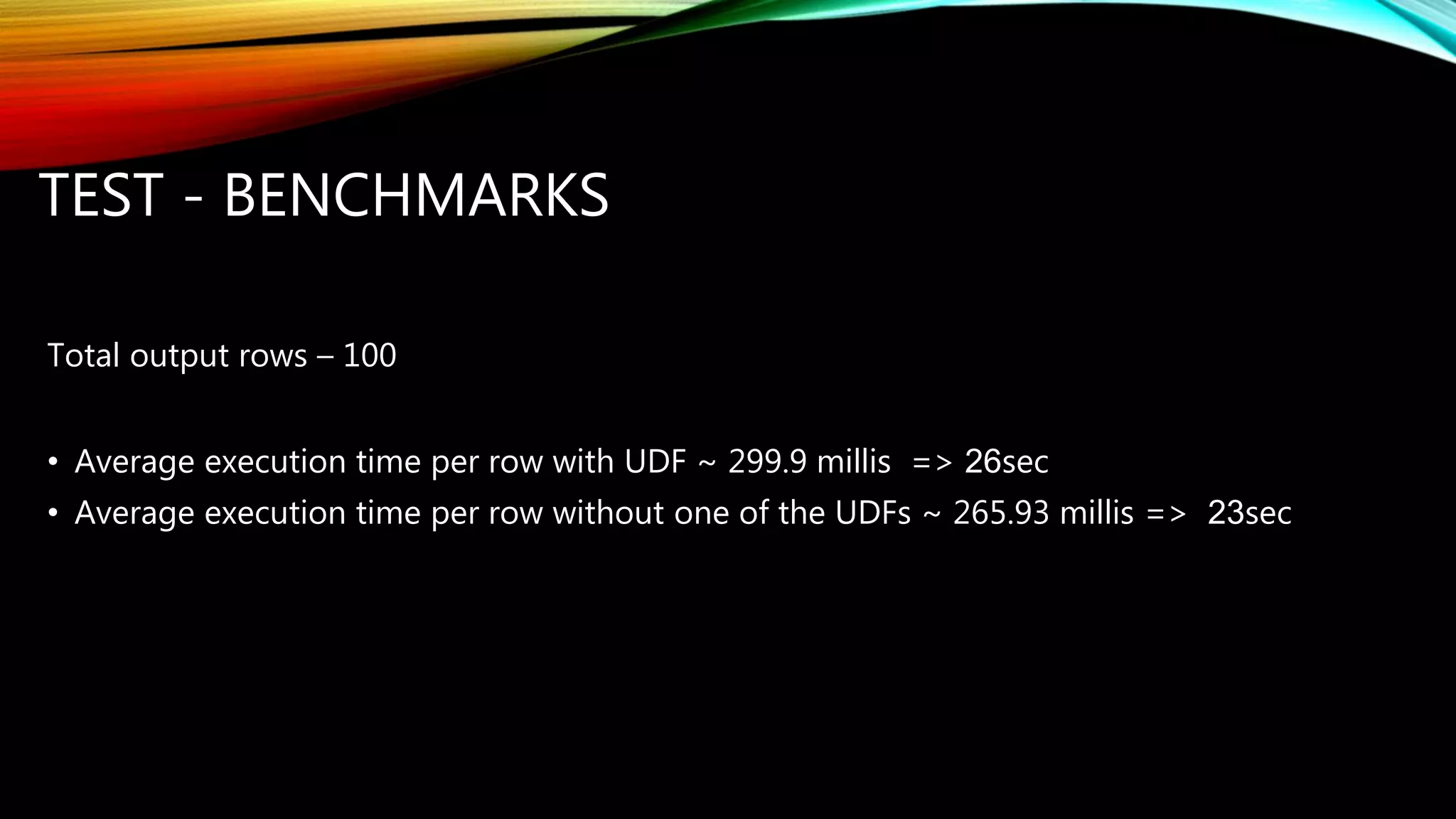

Adi Polak, a Senior Software Engineer at Akamai, discusses the drawbacks of custom User Defined Functions (UDFs) in Apache Spark and advocates using the higher-level standard column functions wherever possible, so that Spark can optimize the query. The document highlights the importance of understanding Spark's Catalyst optimization framework and gives two recommendations for preserving performance: prefer built-in functions, and analyze execution plans. The key conclusions urge developers to treat UDFs as a last resort and to verify their performance impact with the `explain` method.