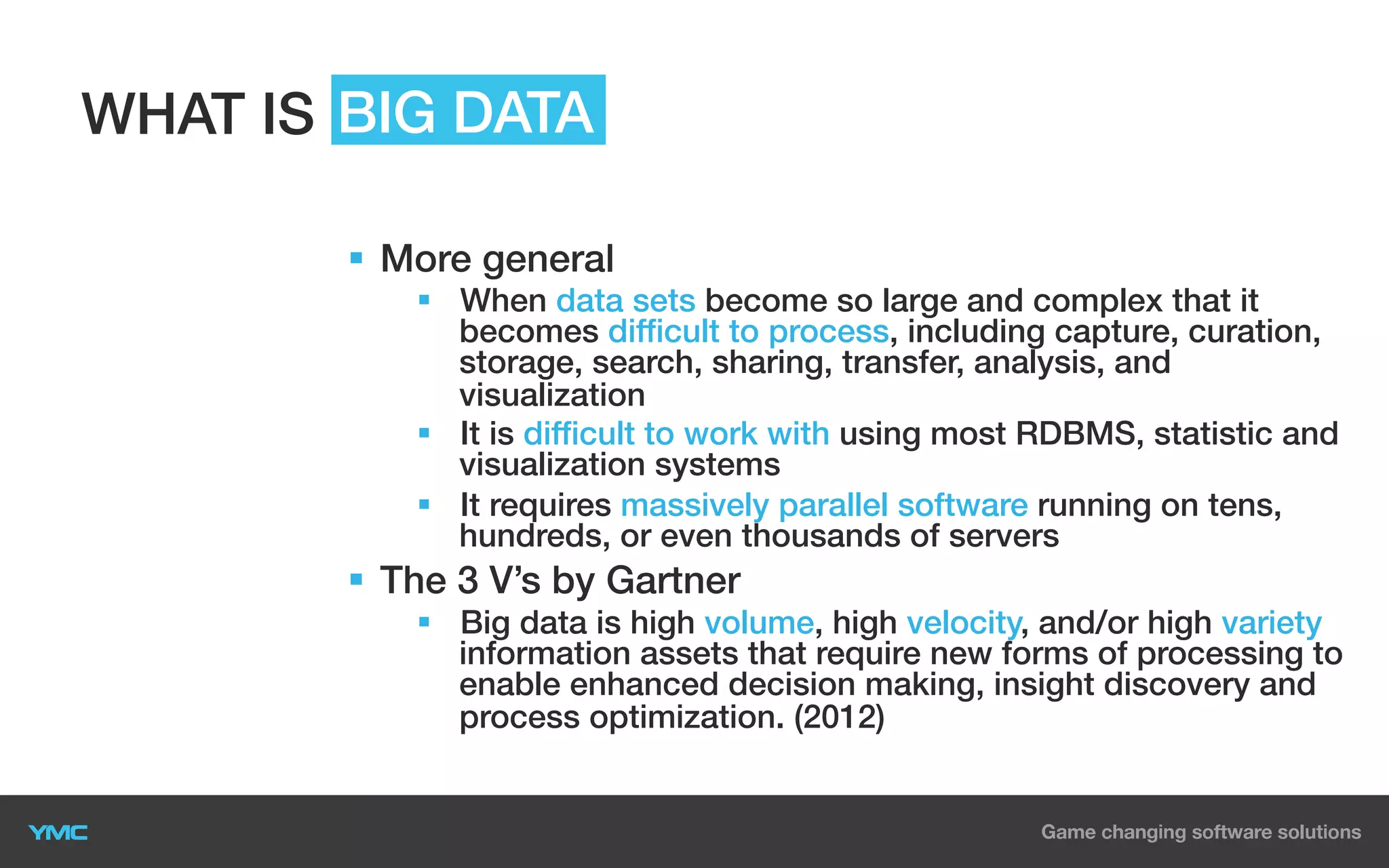

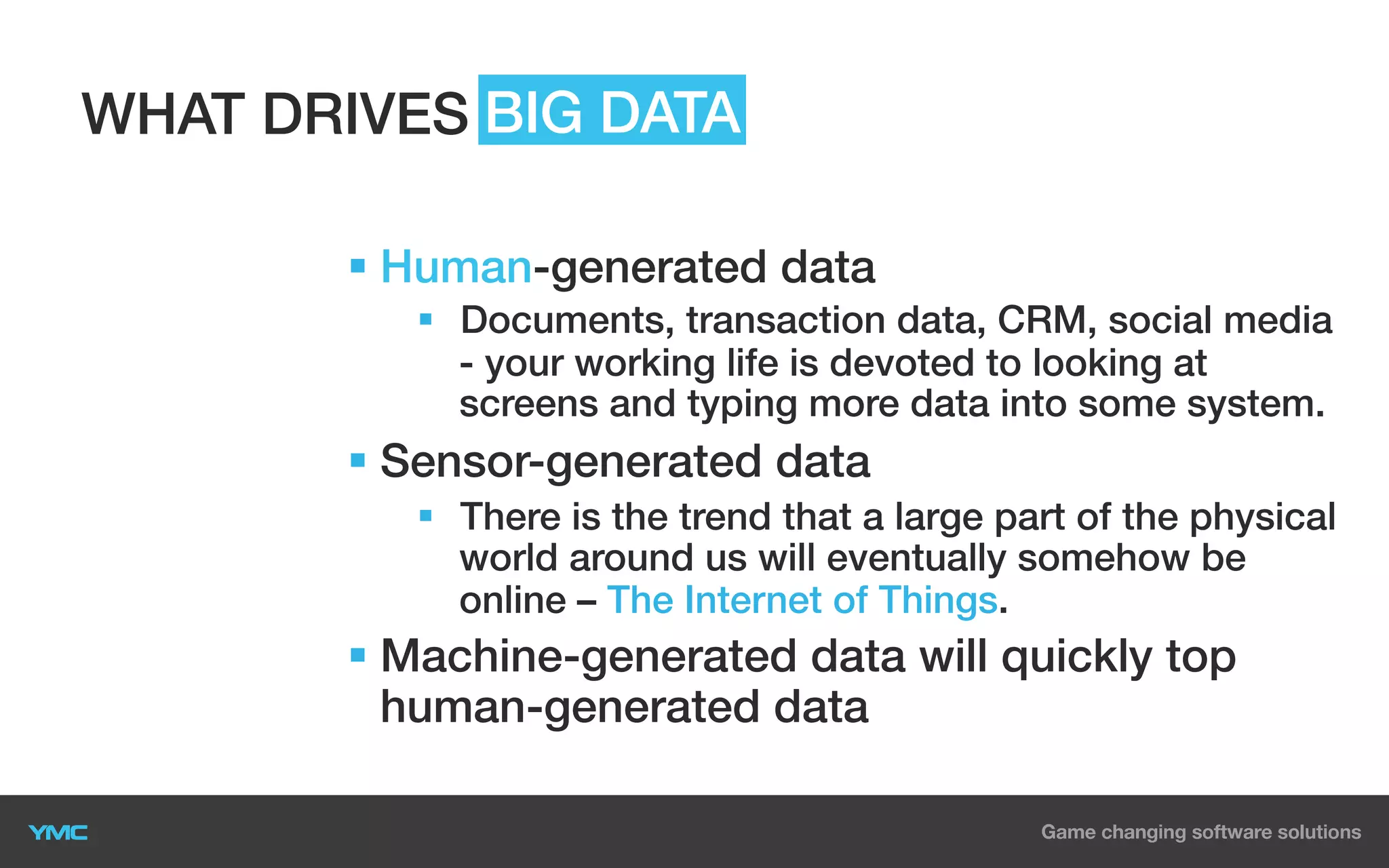

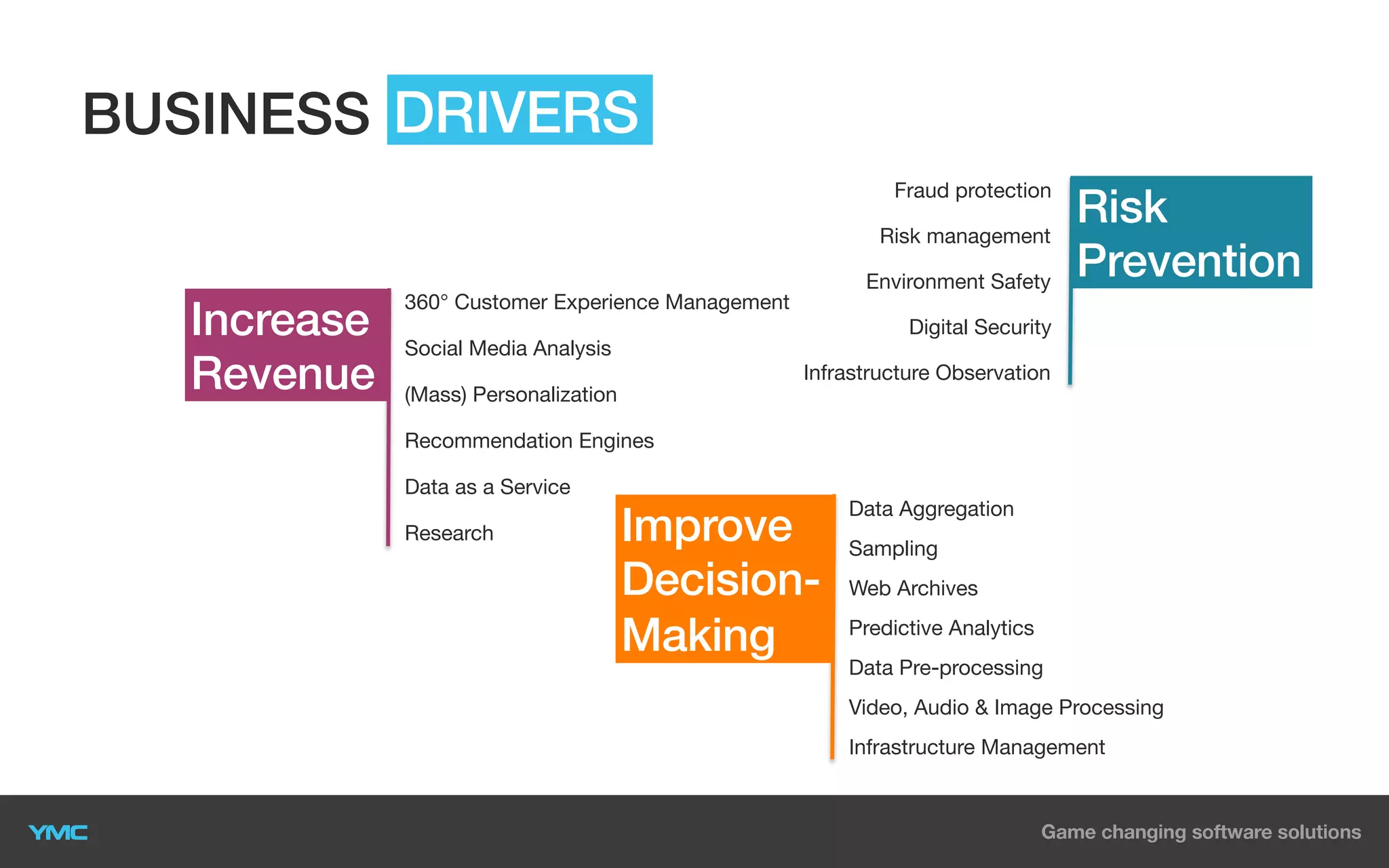

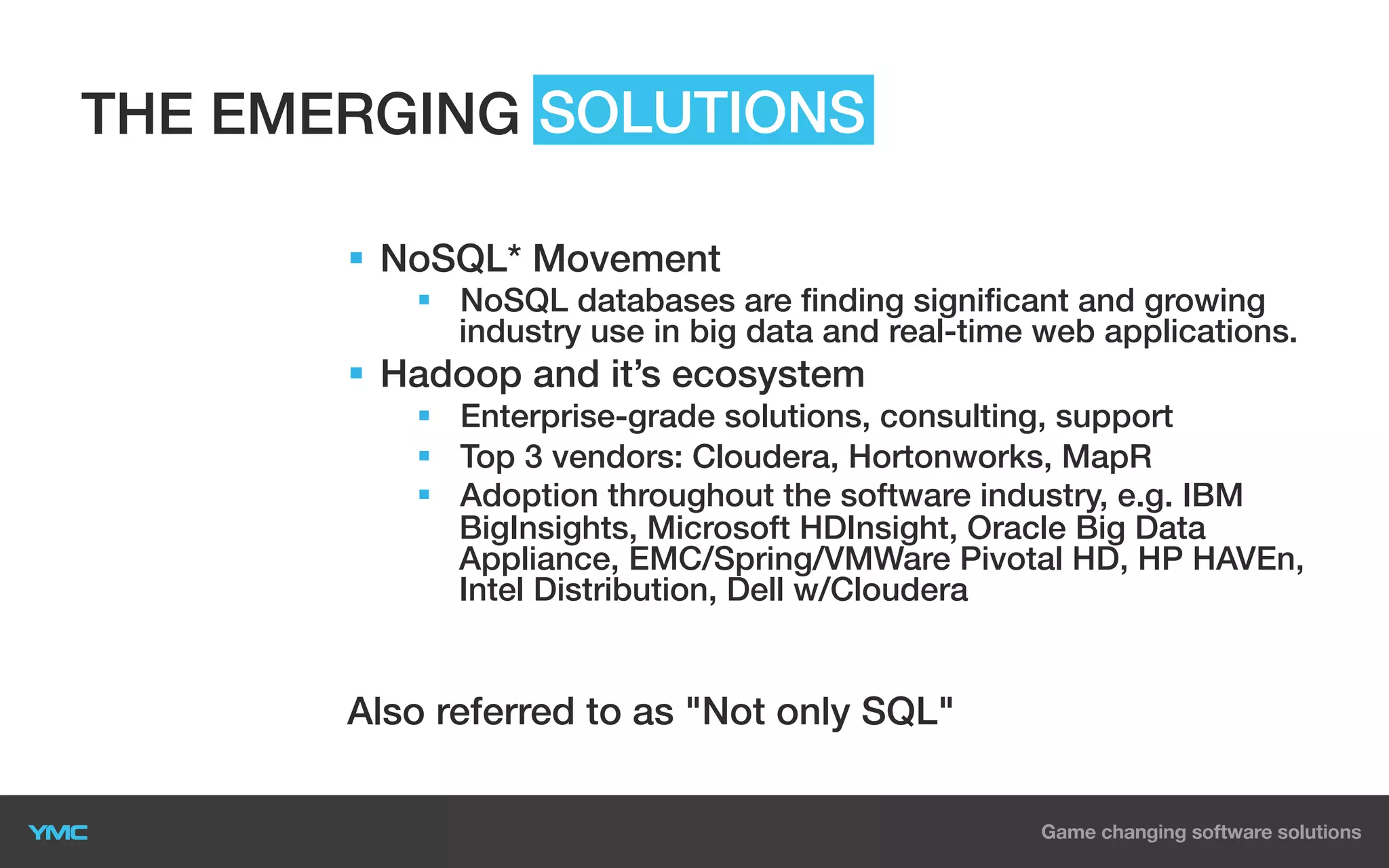

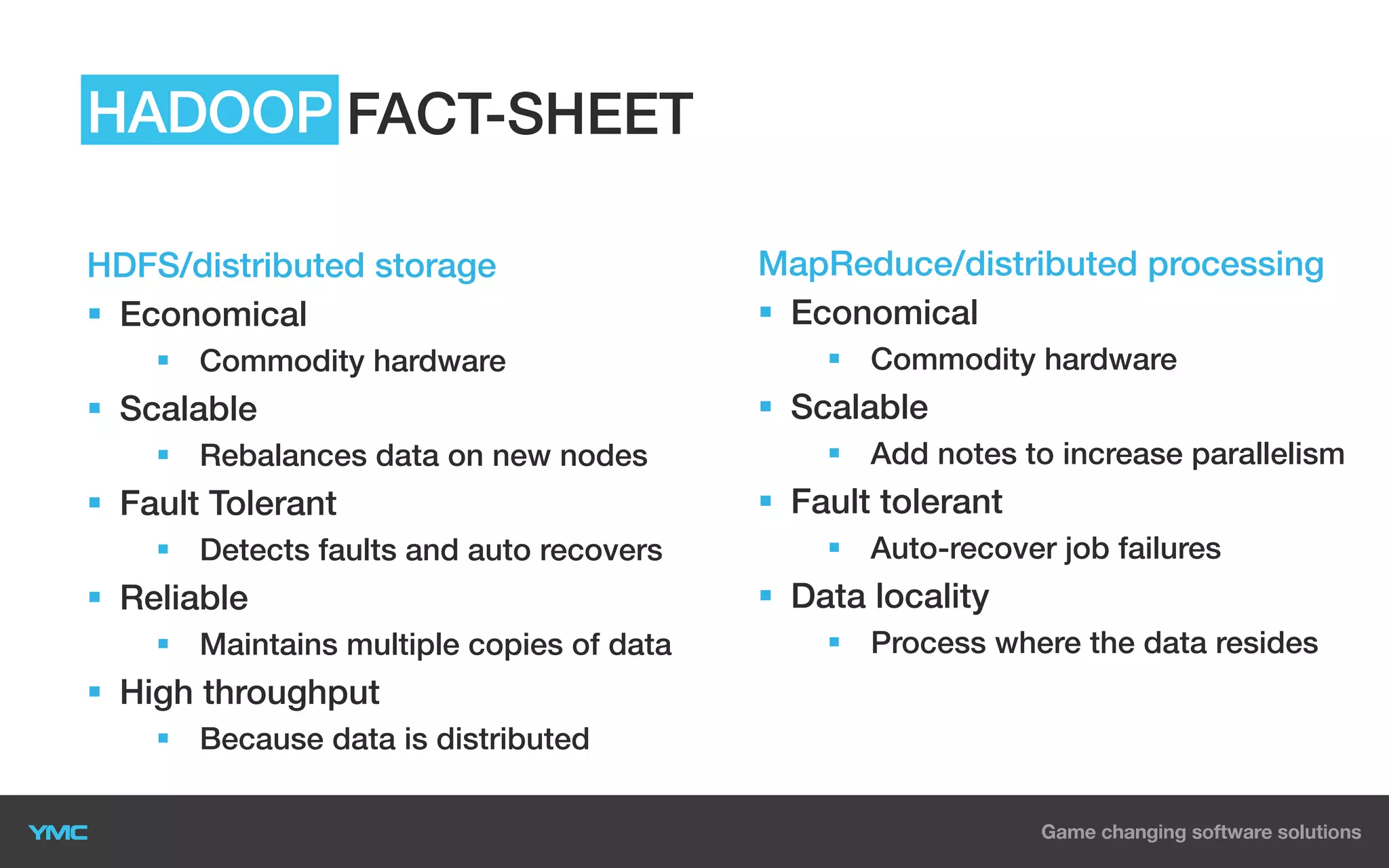

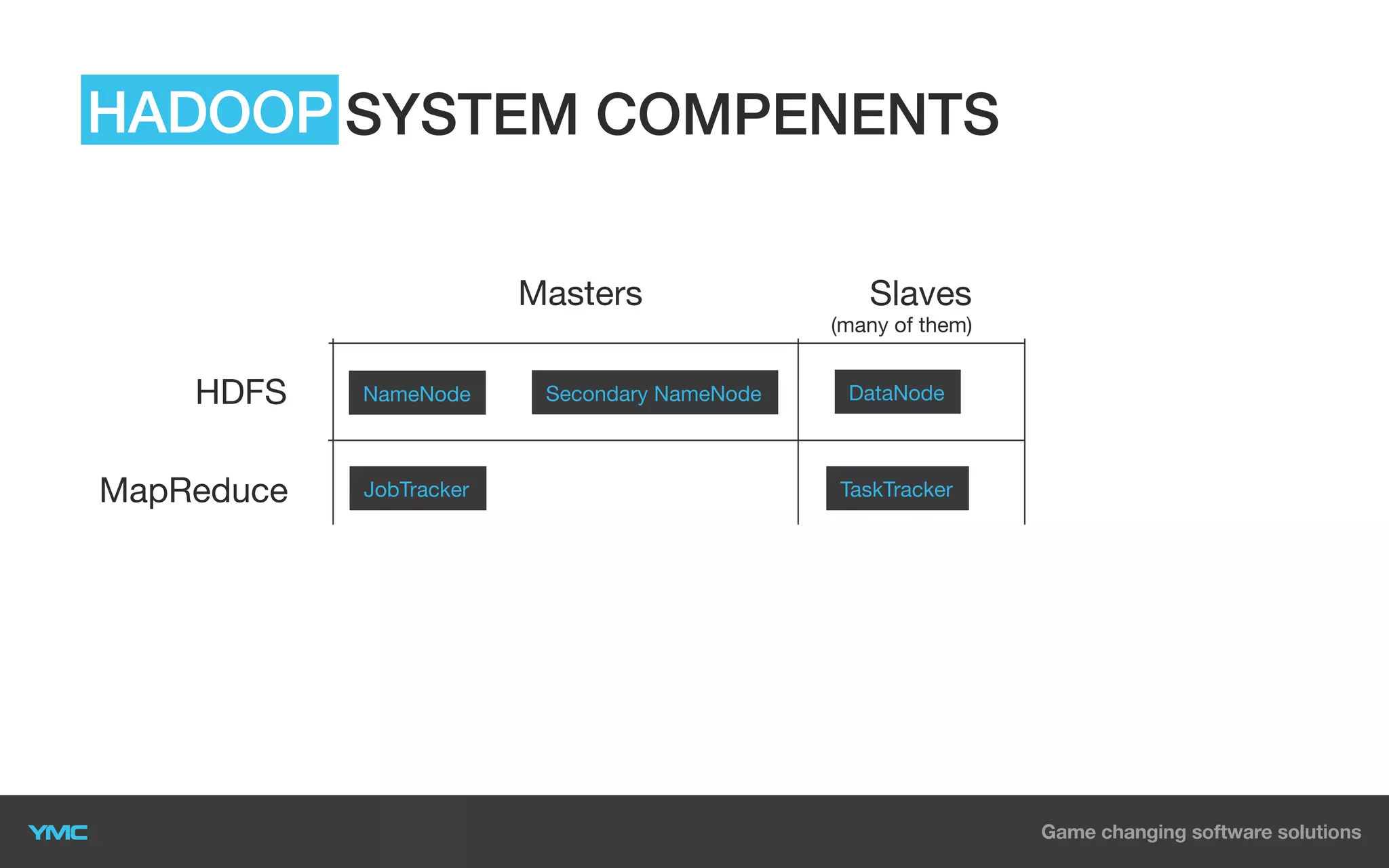

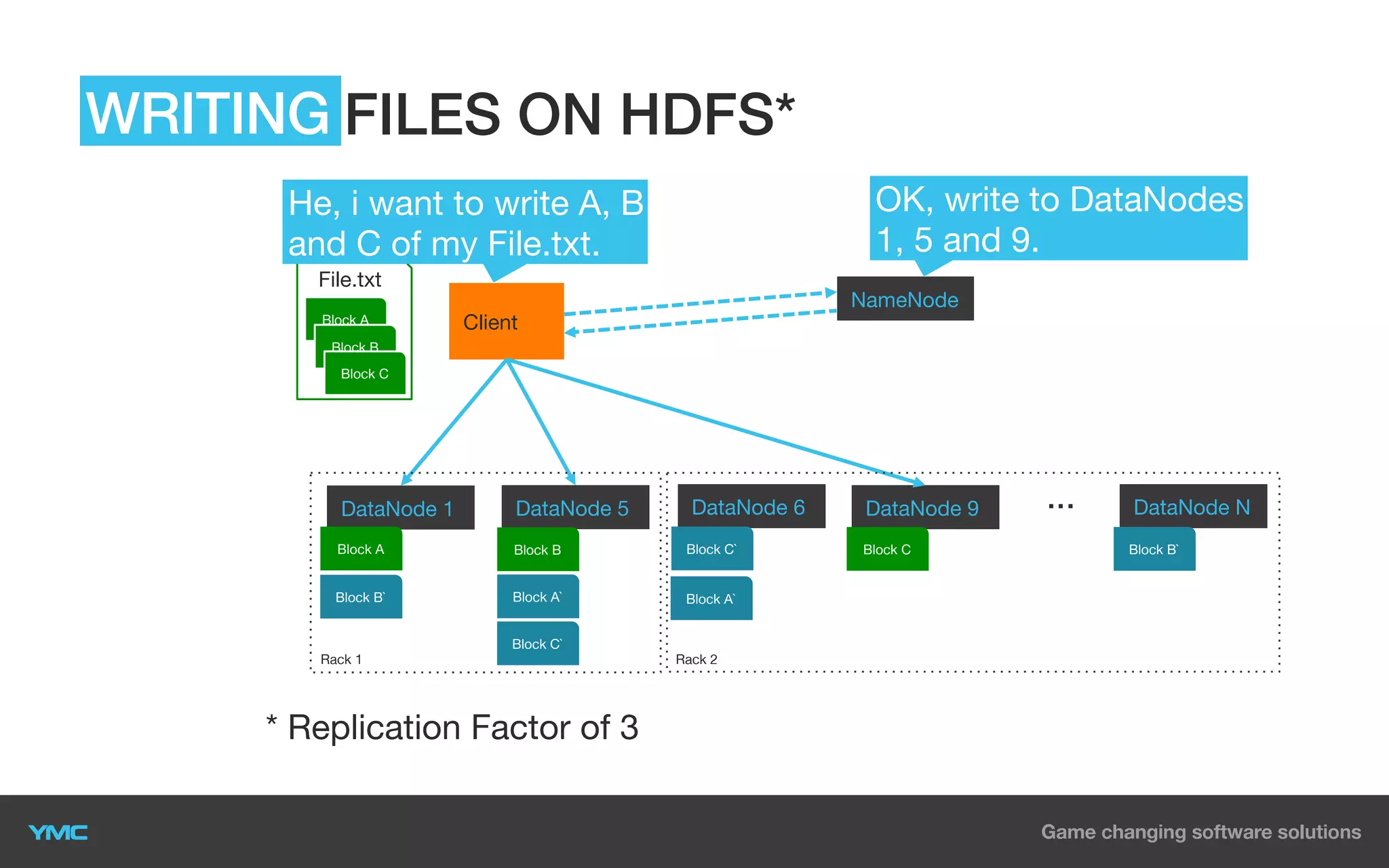

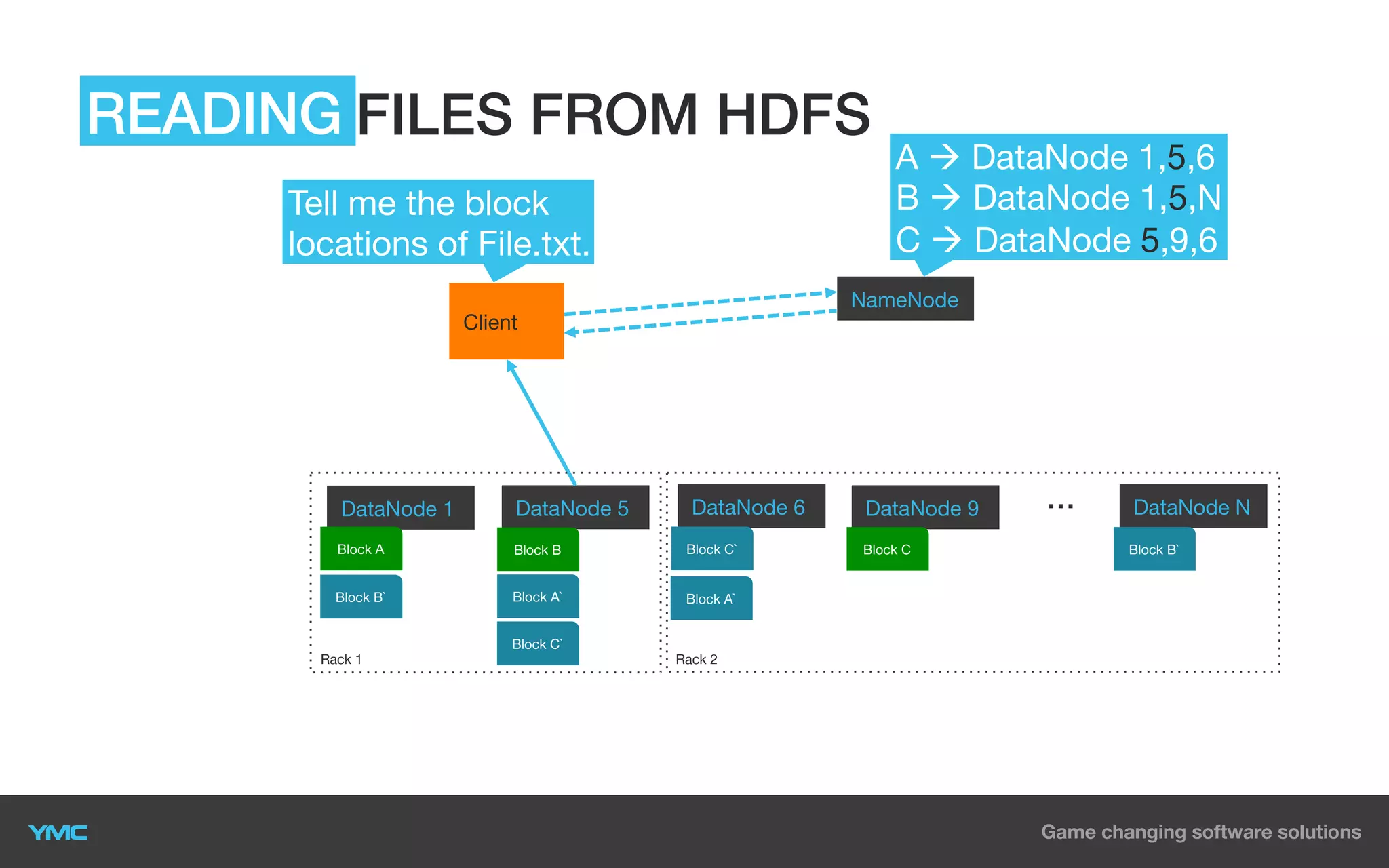

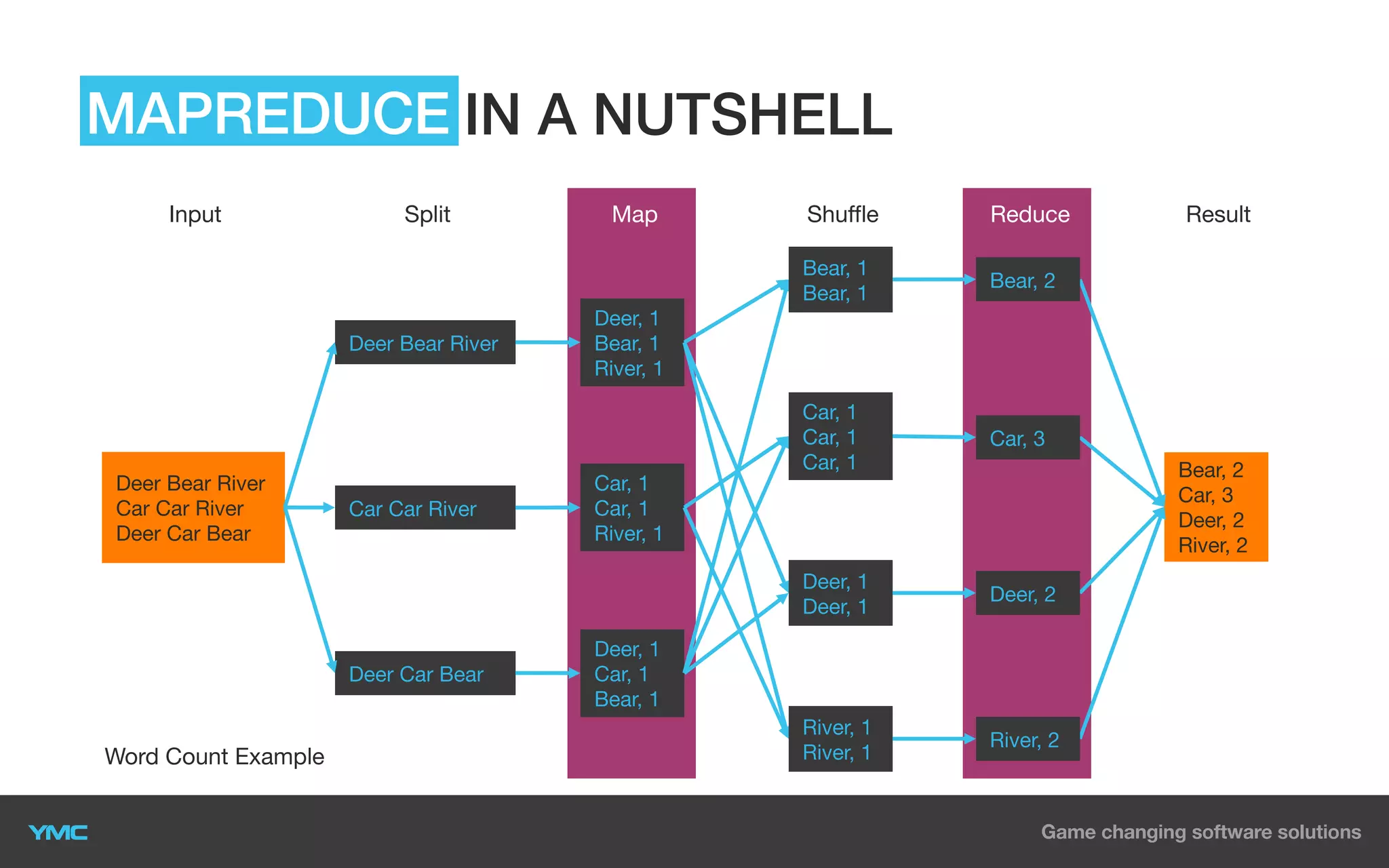

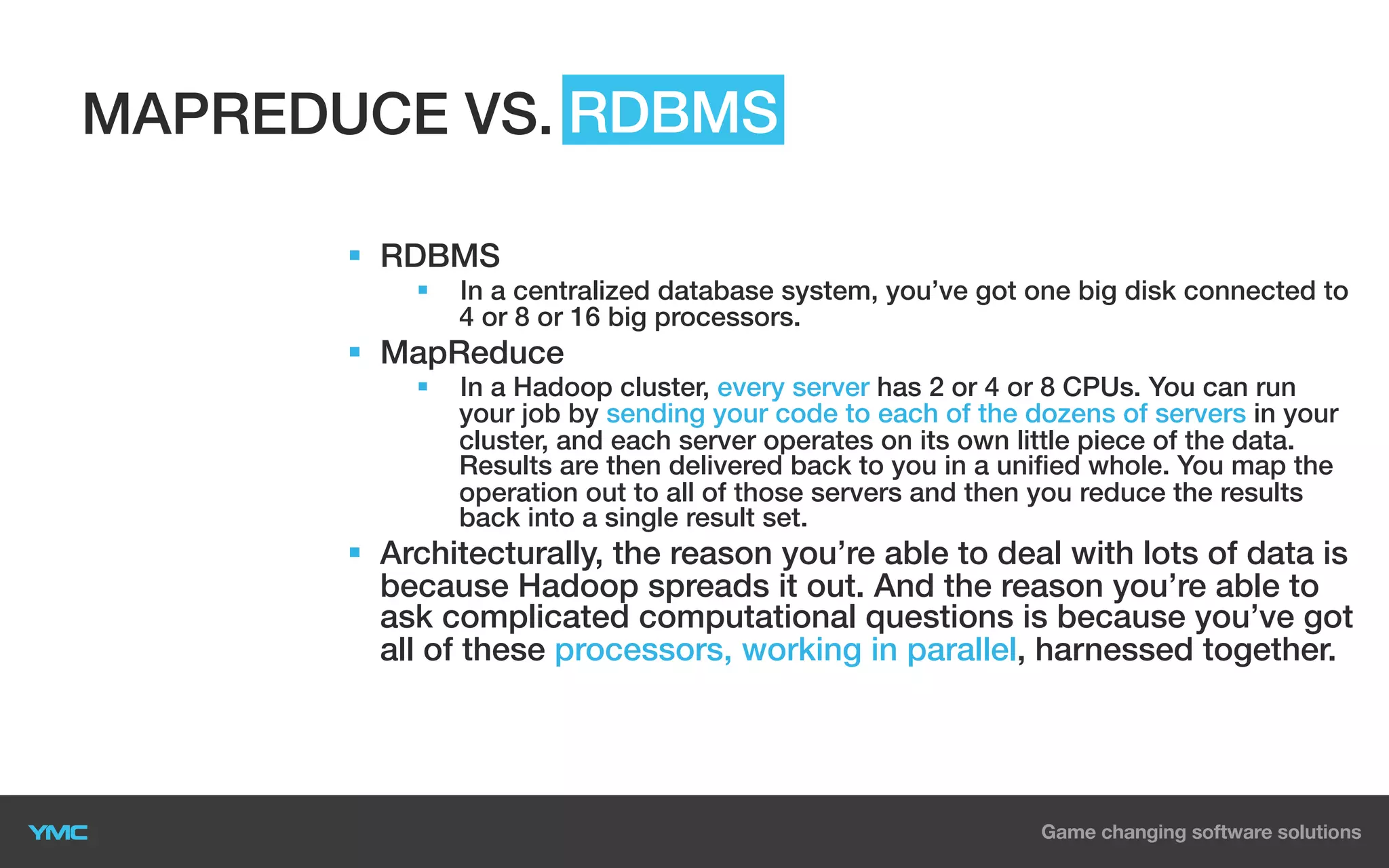

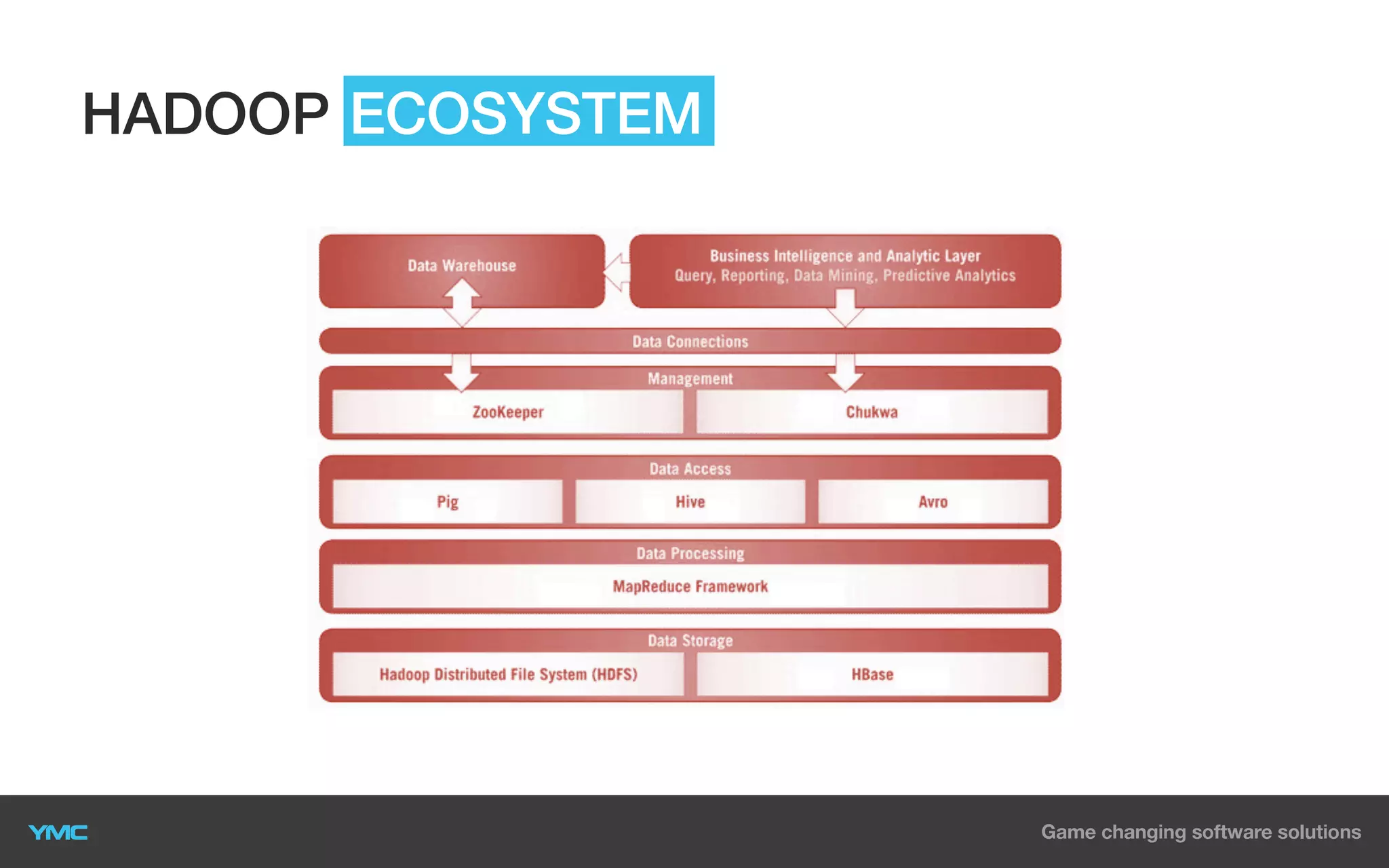

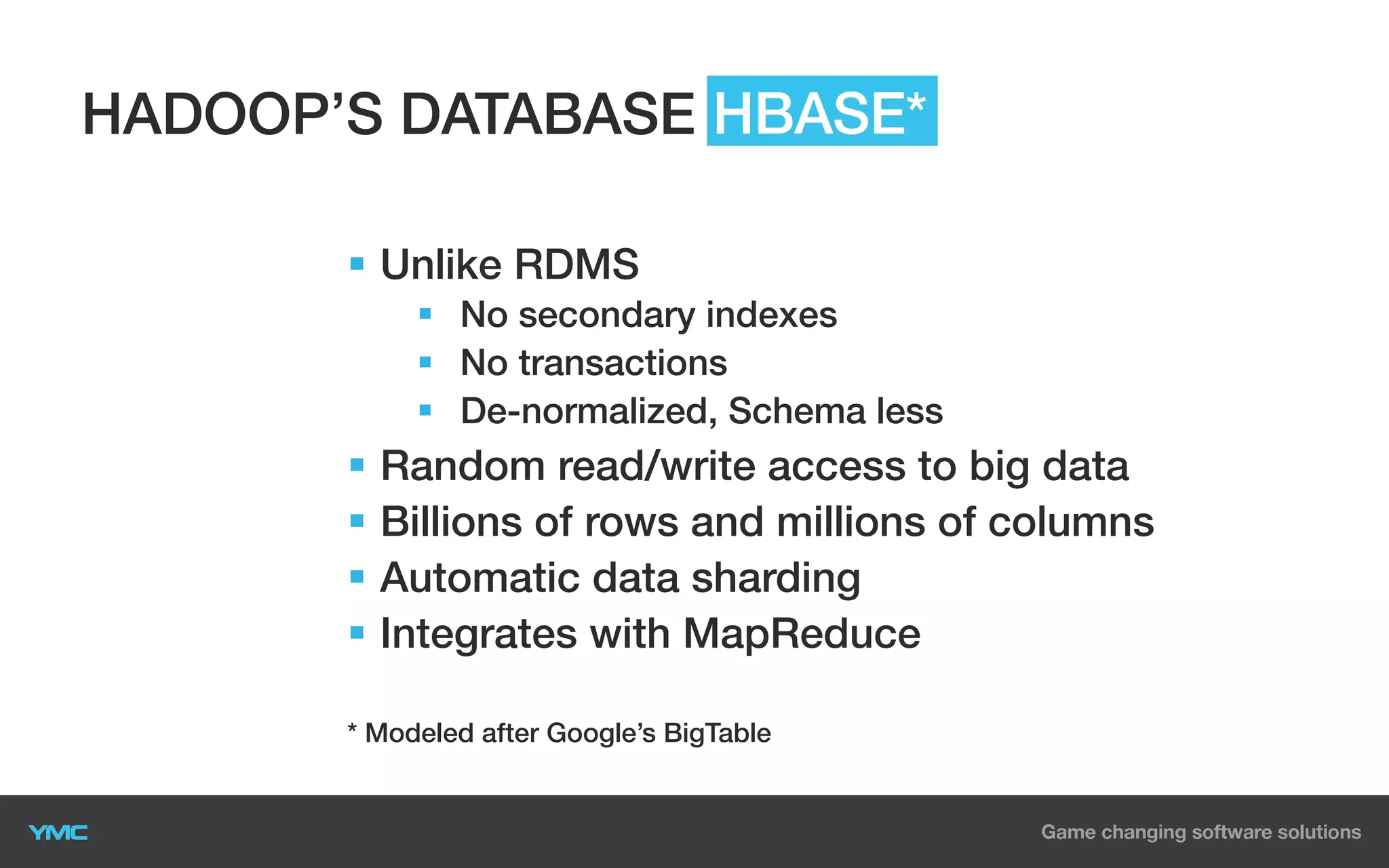

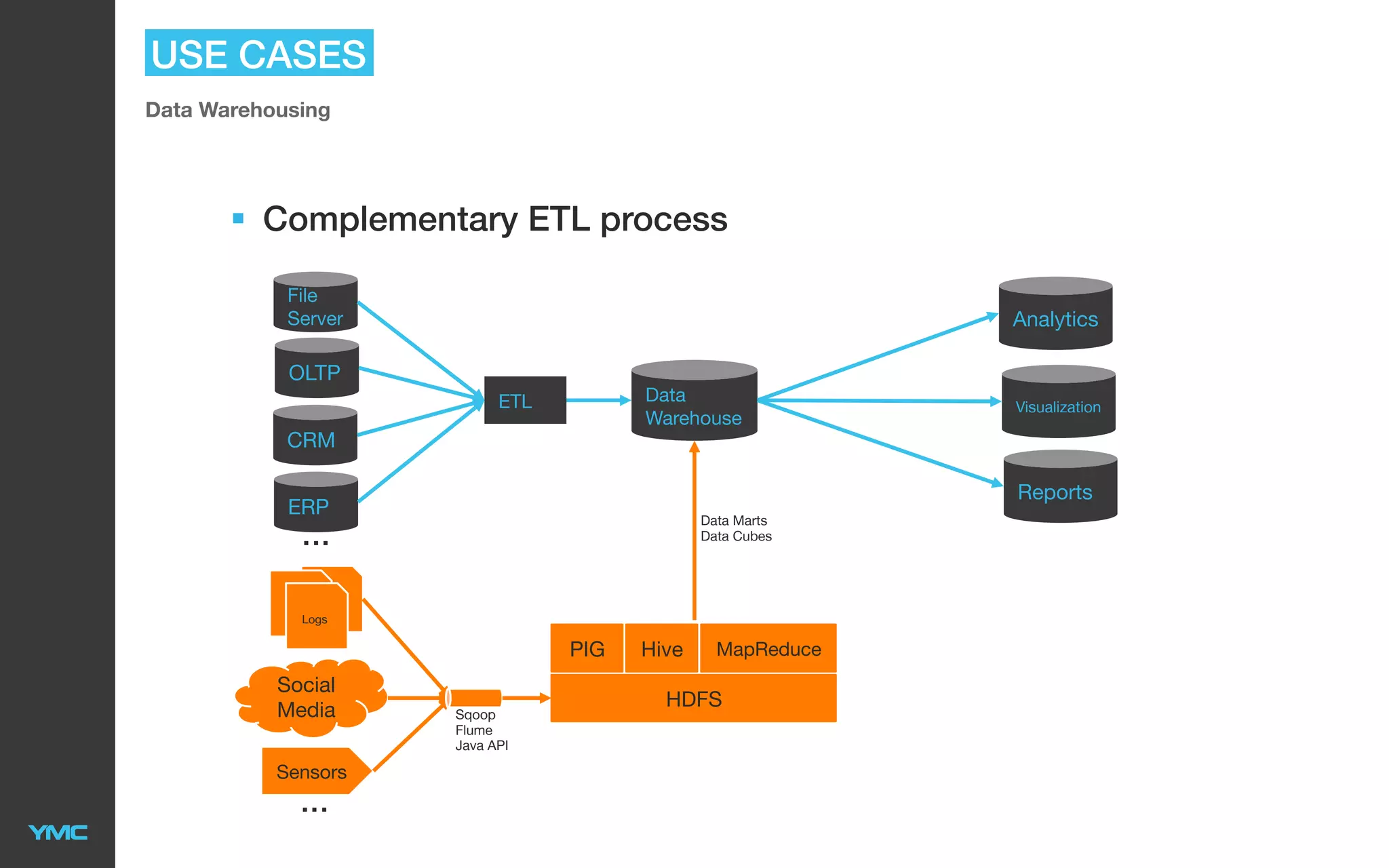

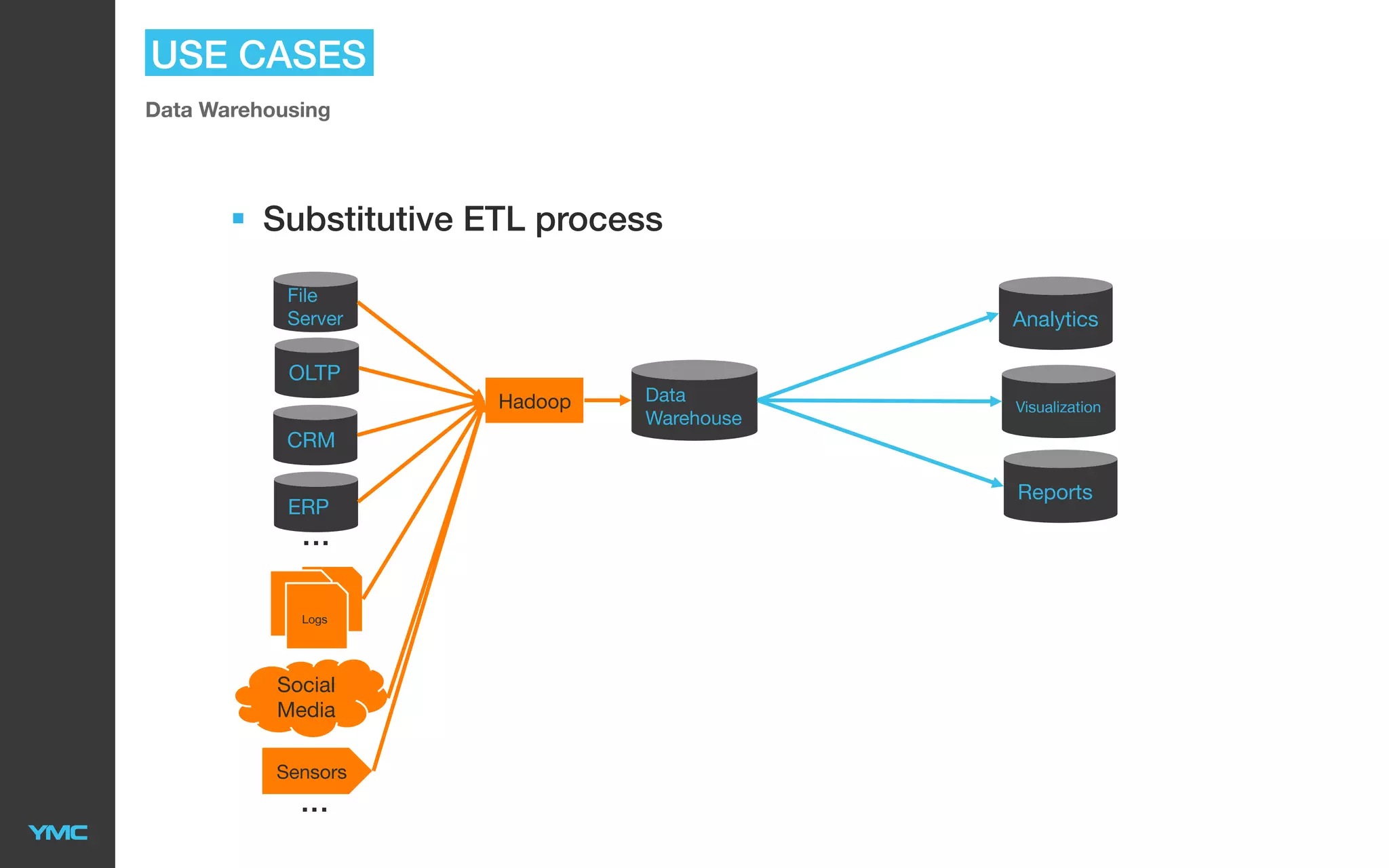

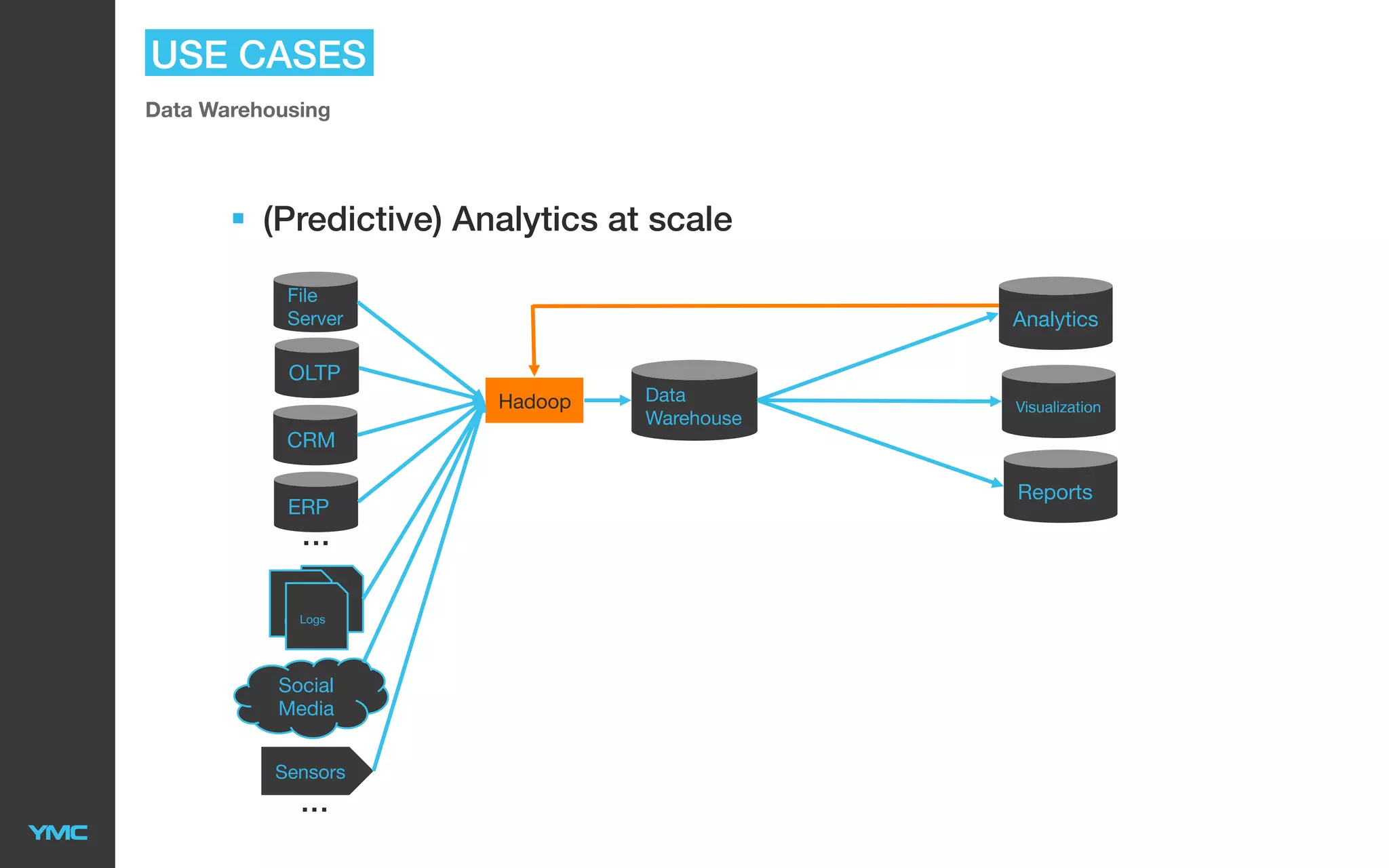

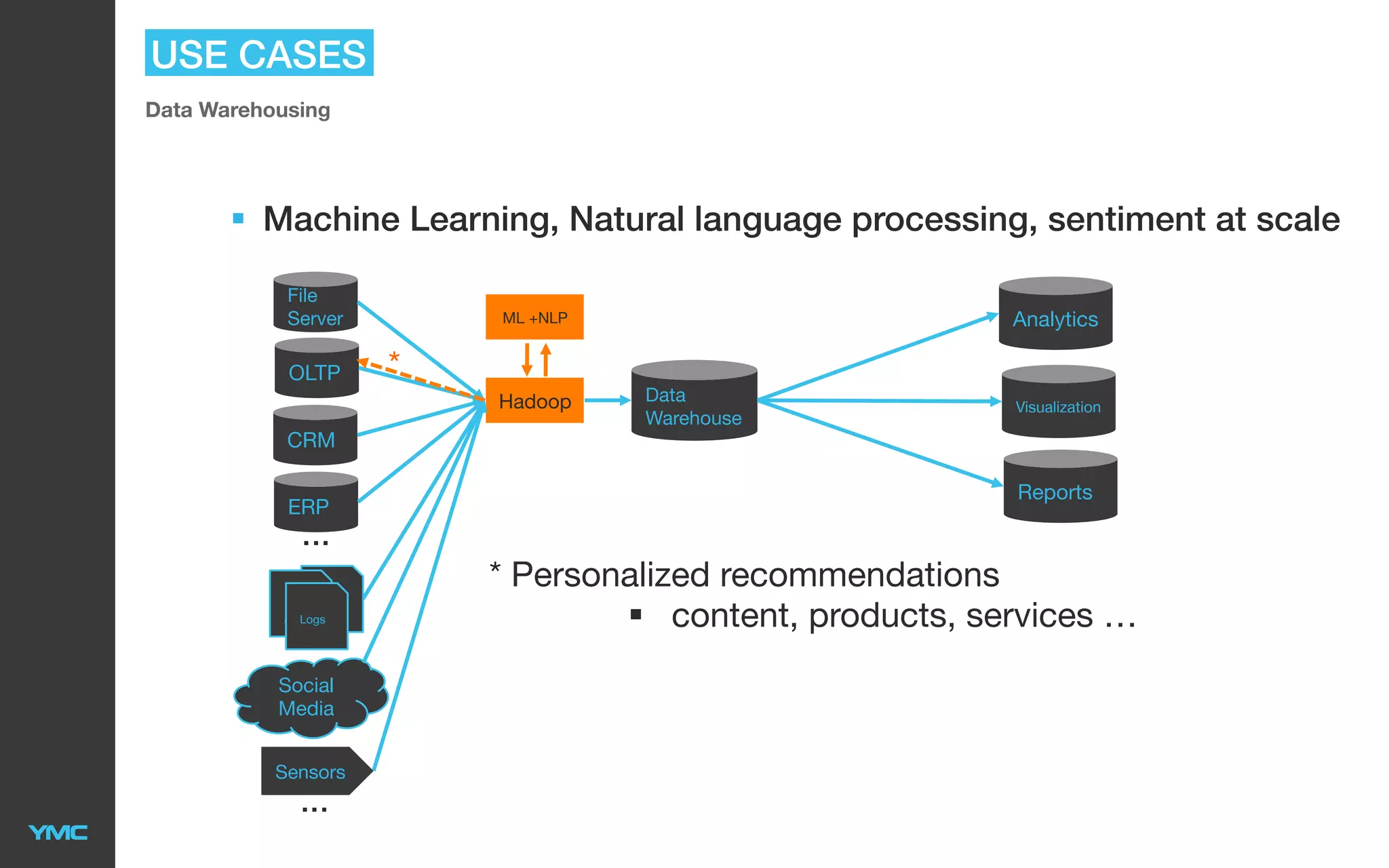

This document provides an overview of Big Data and Hadoop. It defines Big Data as datasets too large and complex to process with traditional databases and systems, and describes the three V's of Big Data: volume, velocity, and variety. Hadoop is introduced as an open-source software framework for distributed storage and processing of large datasets across clusters of commodity servers. The key components of Hadoop, including HDFS for storage and MapReduce for distributed processing, are summarized, and example use cases for Hadoop in data warehousing and analytics are outlined.
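
To make the MapReduce processing model mentioned above more concrete, here is a minimal single-process sketch of a word count in plain Python. It does not use Hadoop or any Hadoop API; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the sample input are hypothetical, chosen only for illustration. On a real cluster the map and reduce steps would run in parallel on many nodes, with input read from HDFS.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Map step: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle step: group all emitted values by key.

    In Hadoop the framework does this between map and reduce; here it is
    simulated with an in-memory dictionary (an assumption of this sketch).
    """
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reduce step: collapse all counts for one word into a single total."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce in sequence over the input lines."""
    mapped = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


if __name__ == "__main__":
    # Hypothetical sample input standing in for lines of a file in HDFS.
    sample = ["big data needs hadoop", "hadoop stores big data in hdfs"]
    print(word_count(sample))
```

The three functions are kept separate to mirror the phases of the model: because each map call looks at only one line and each reduce call looks at only one key, the work can in principle be split across many machines without coordination beyond the shuffle.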