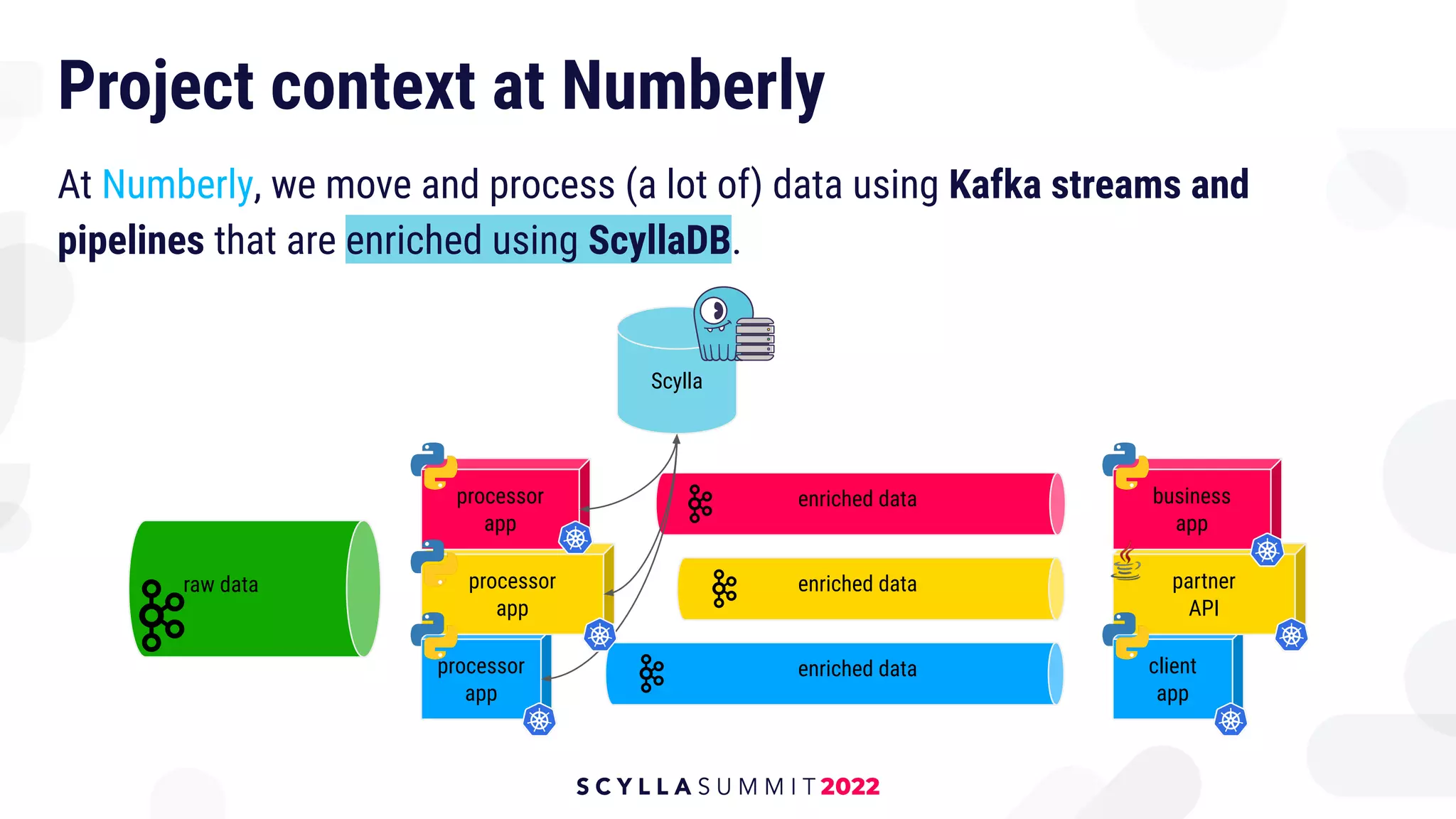

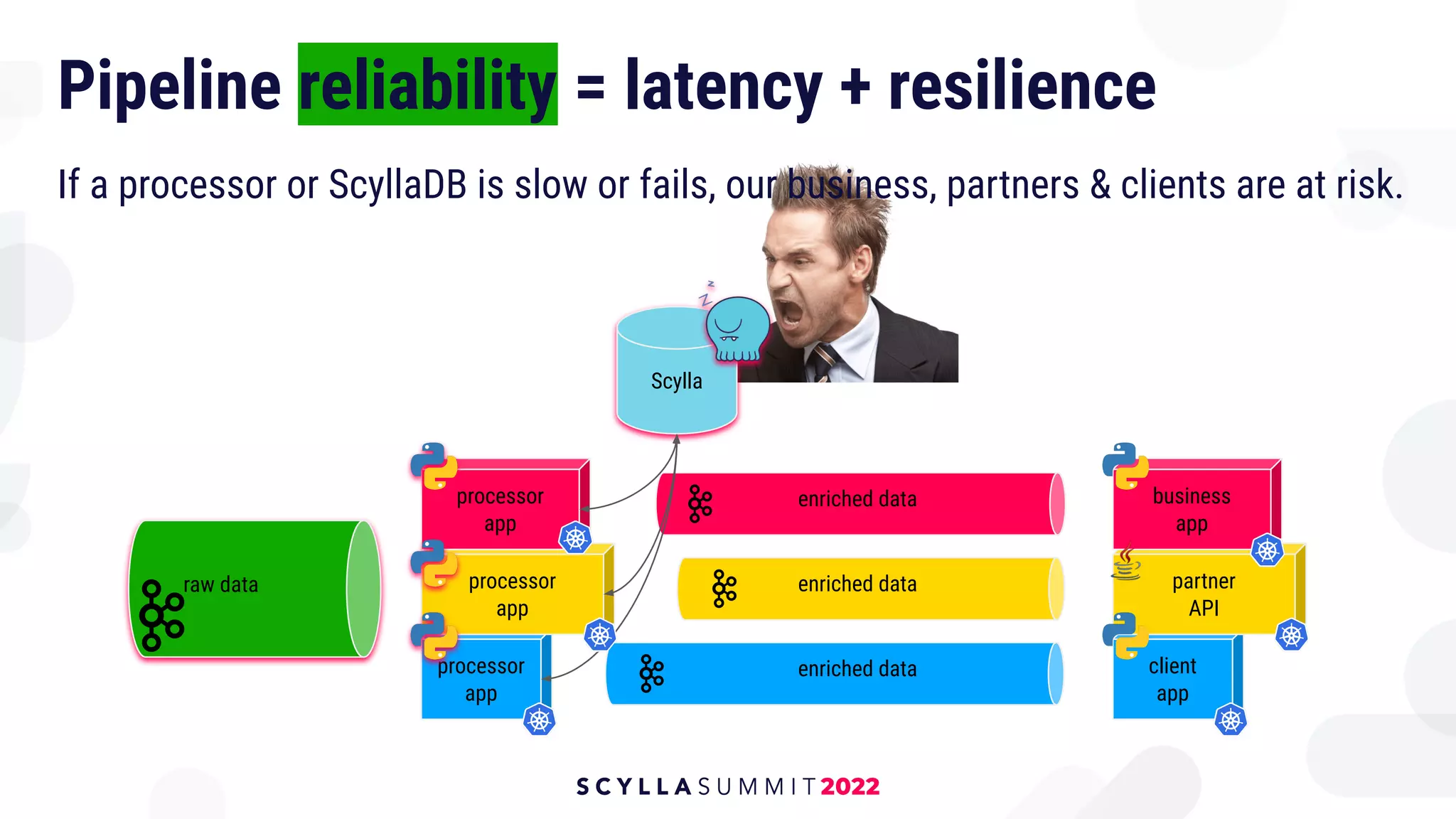

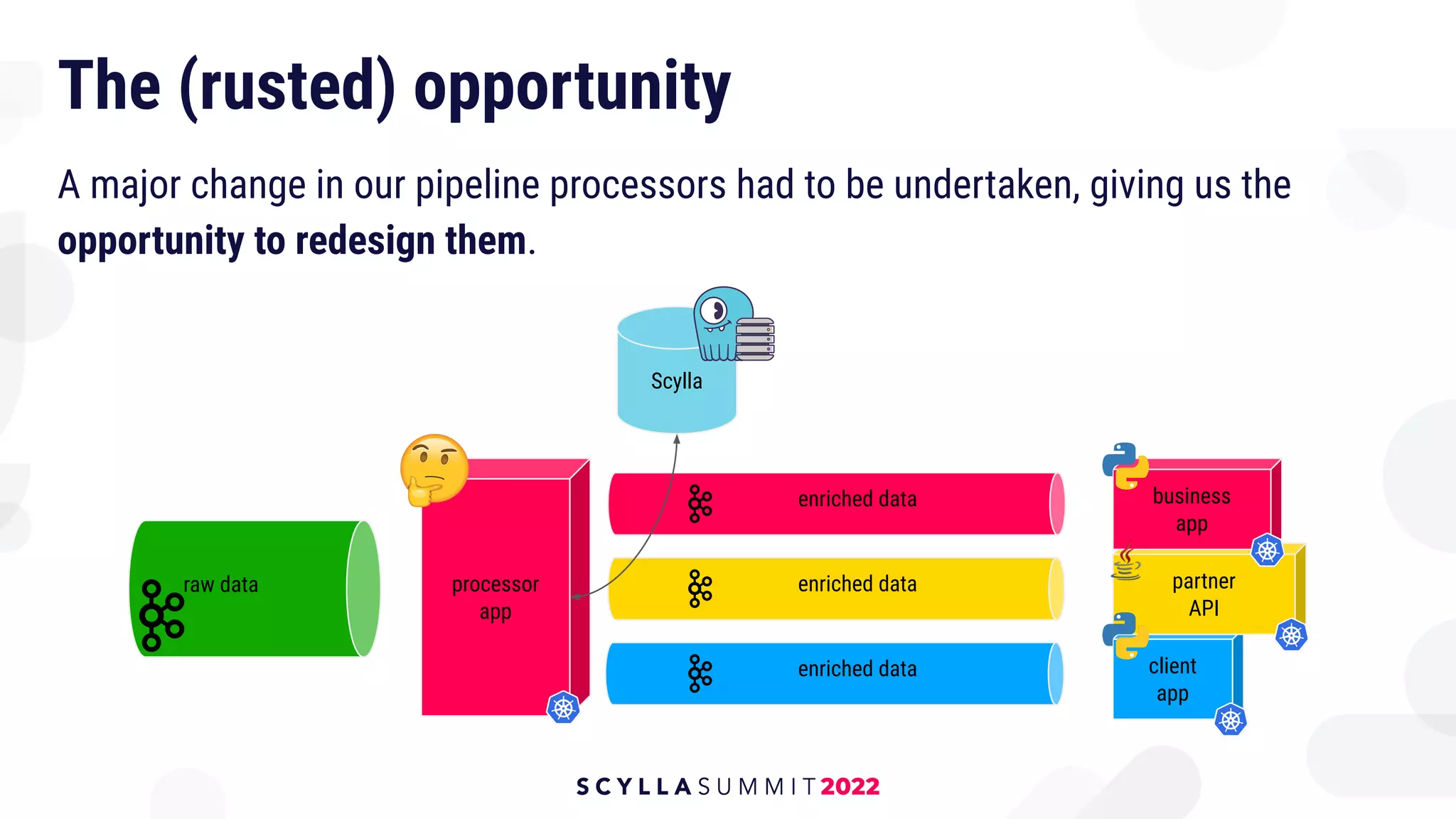

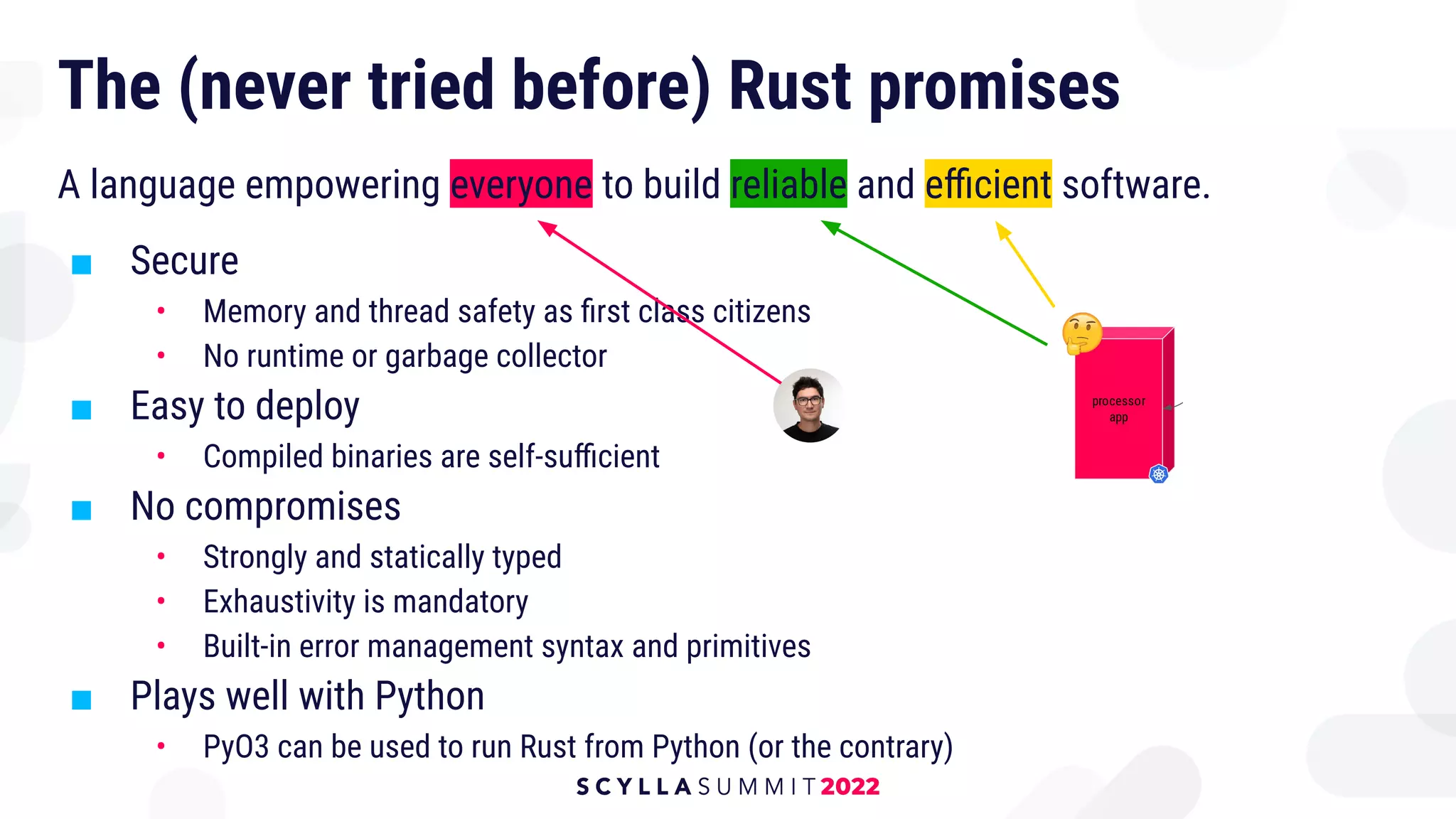

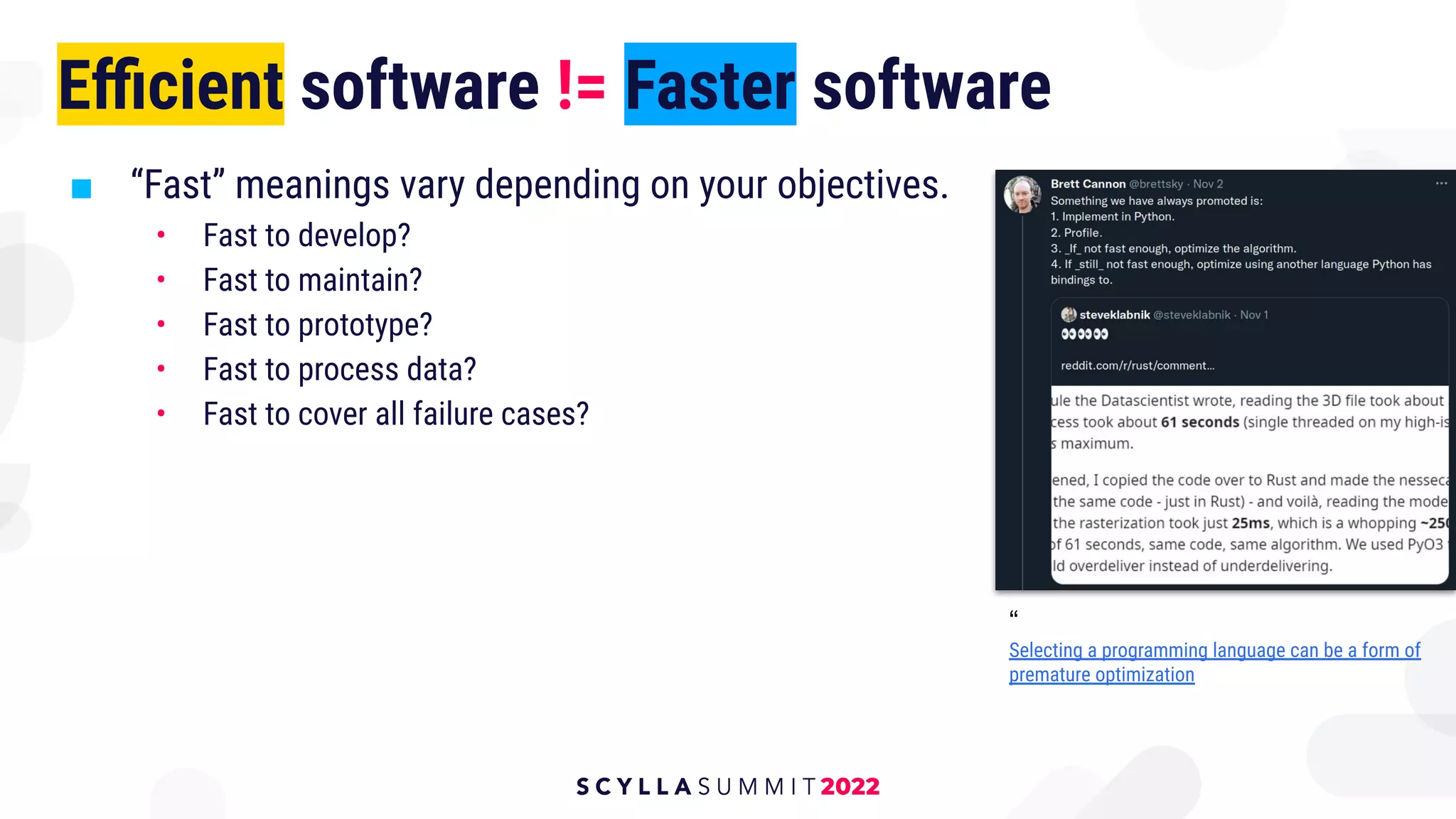

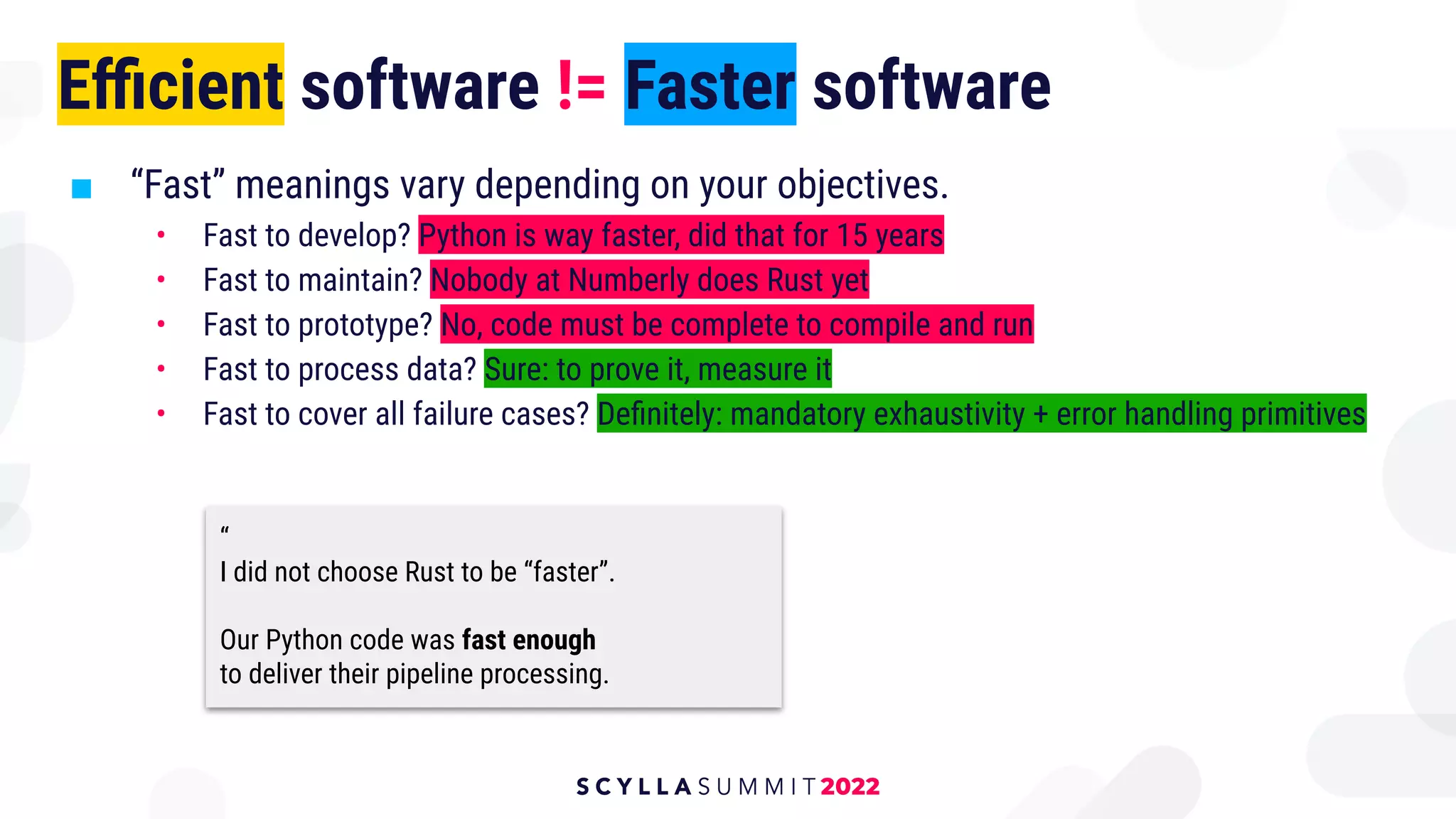

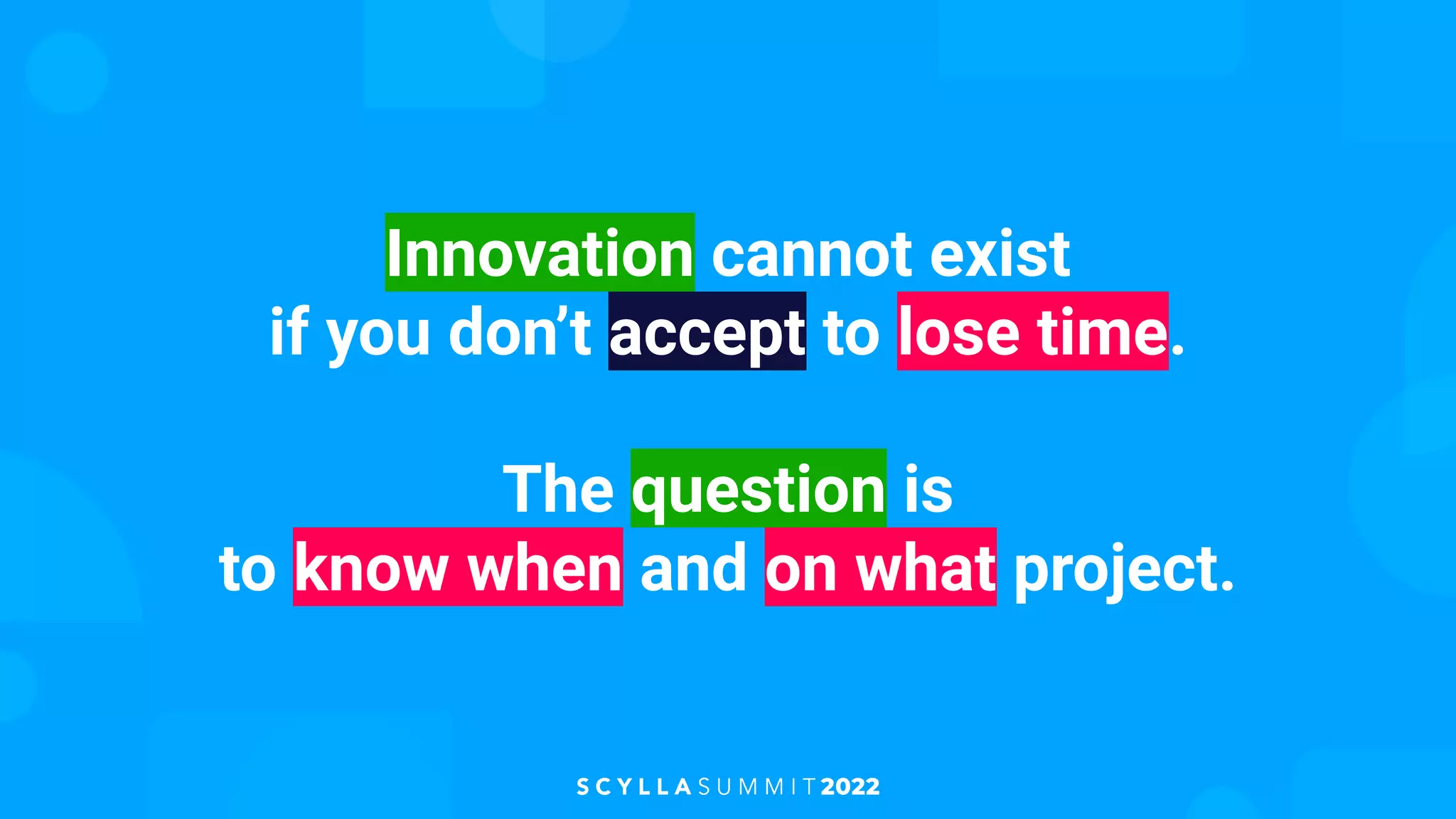

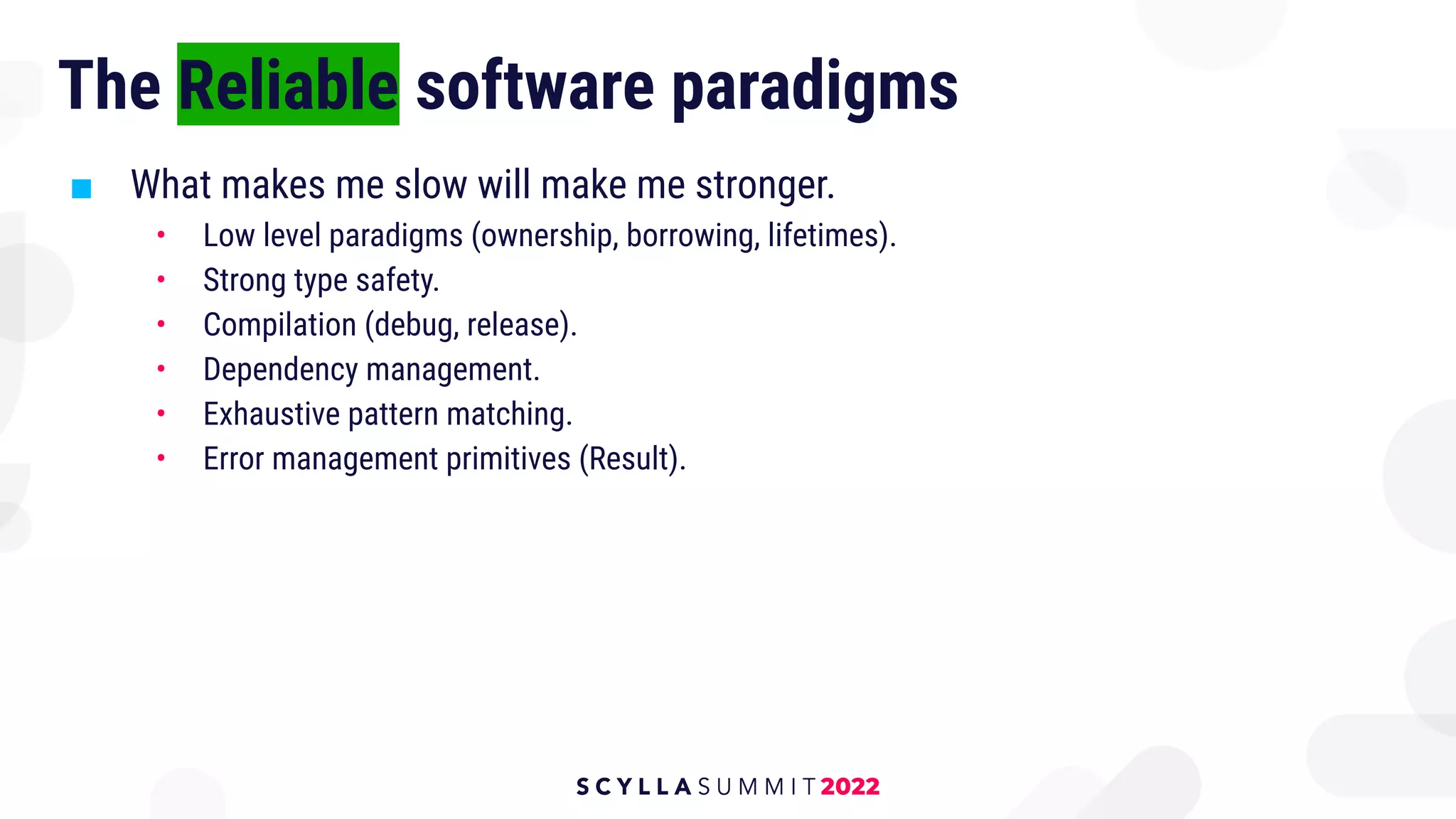

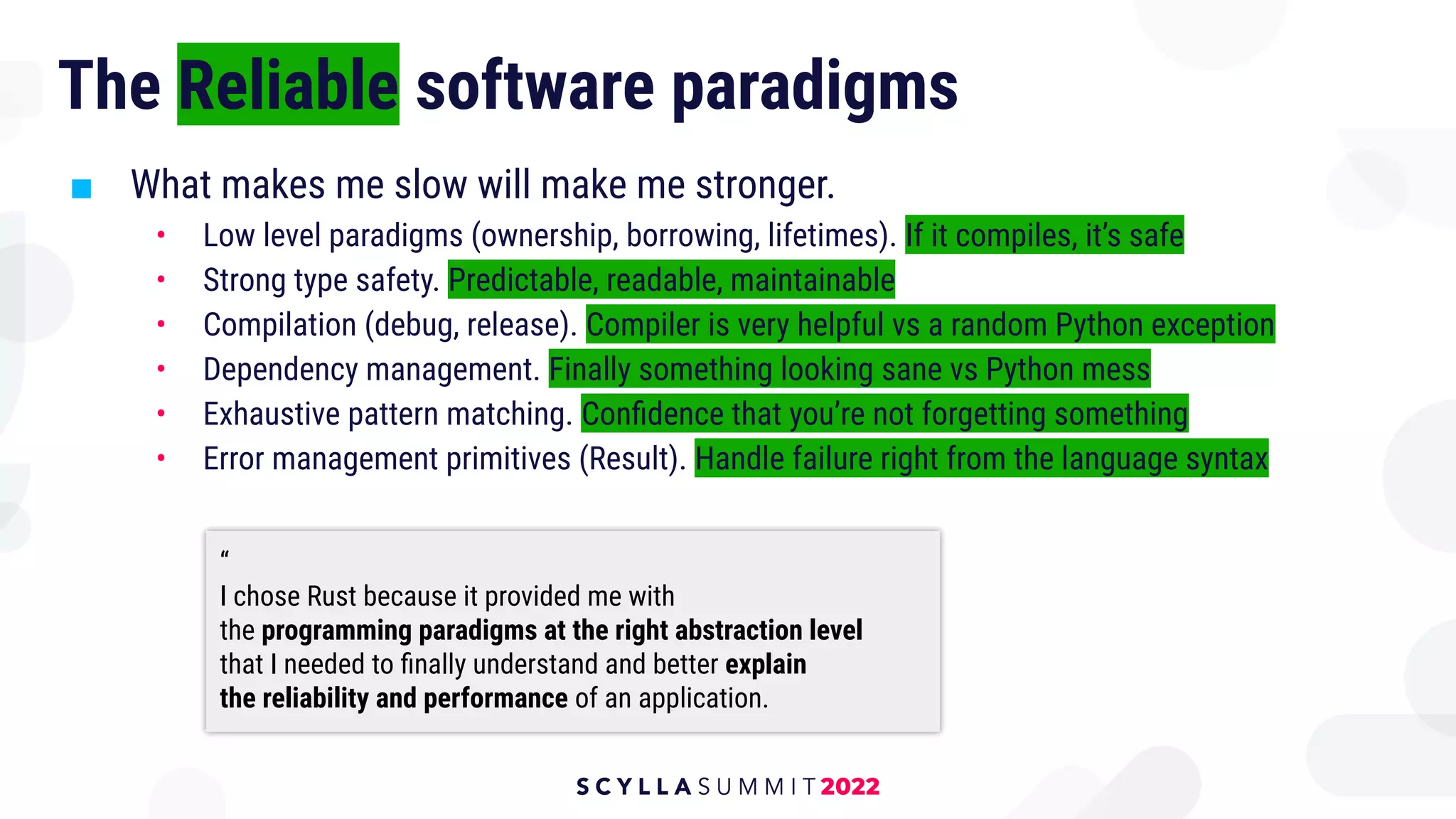

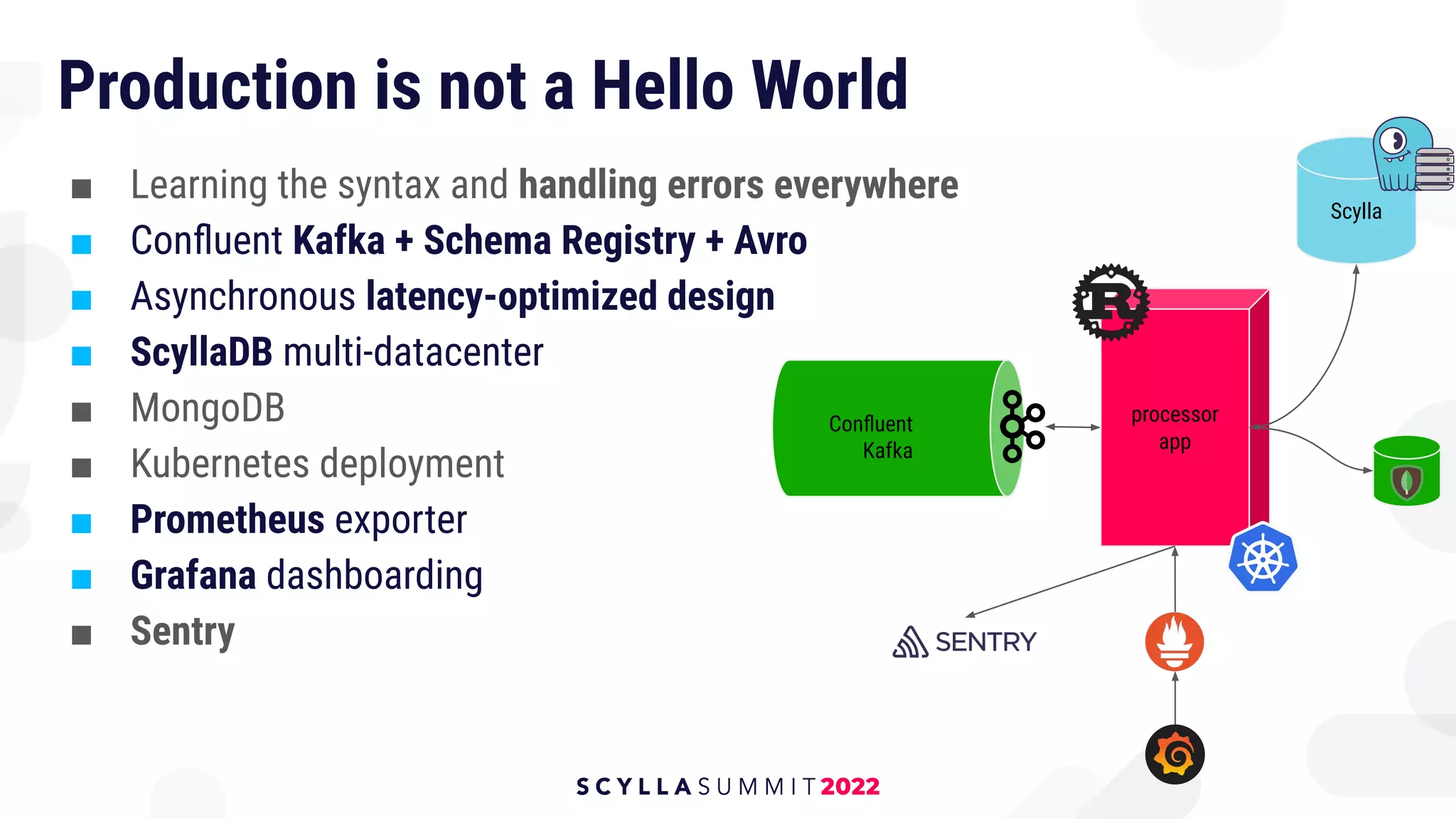

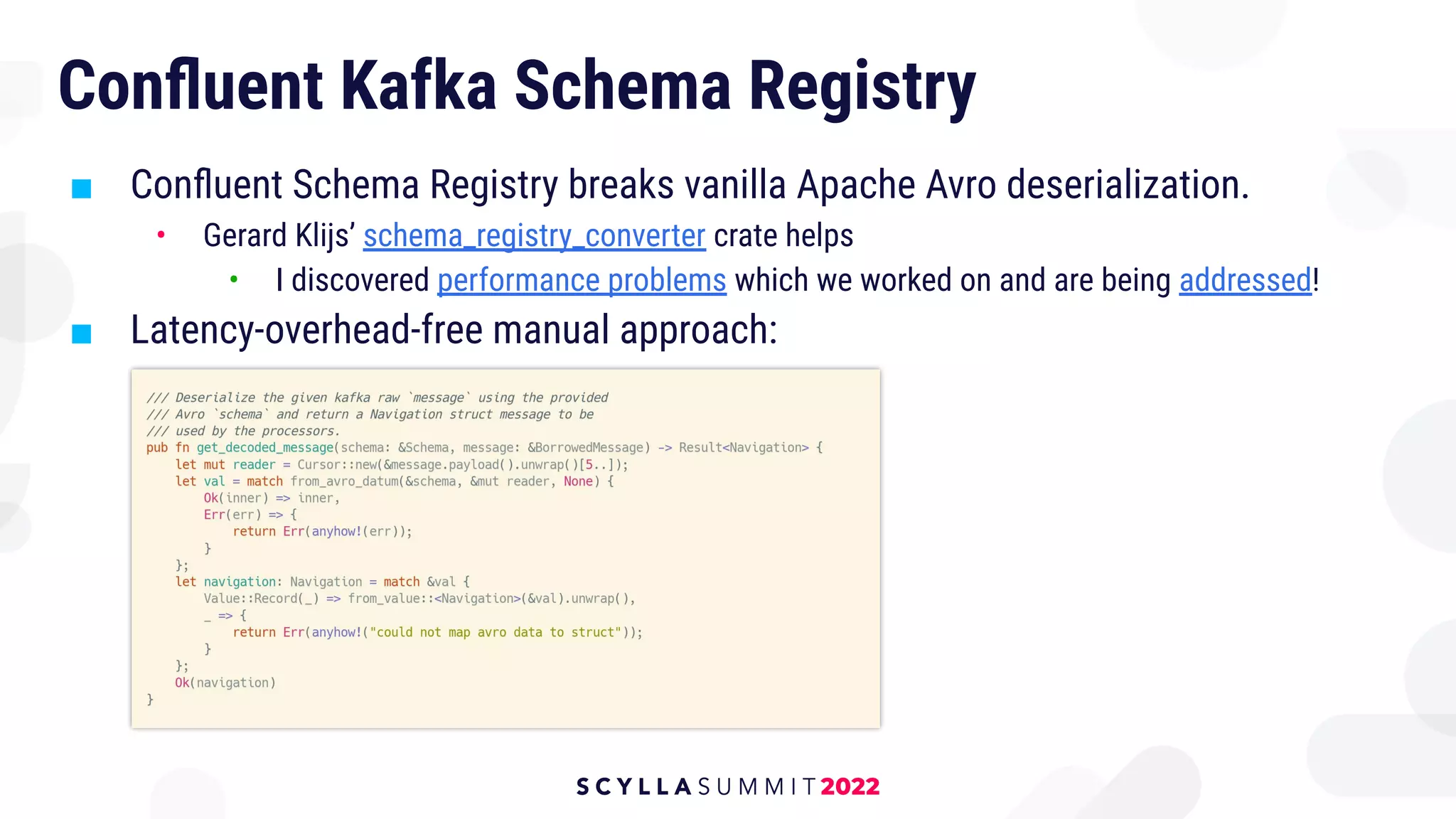

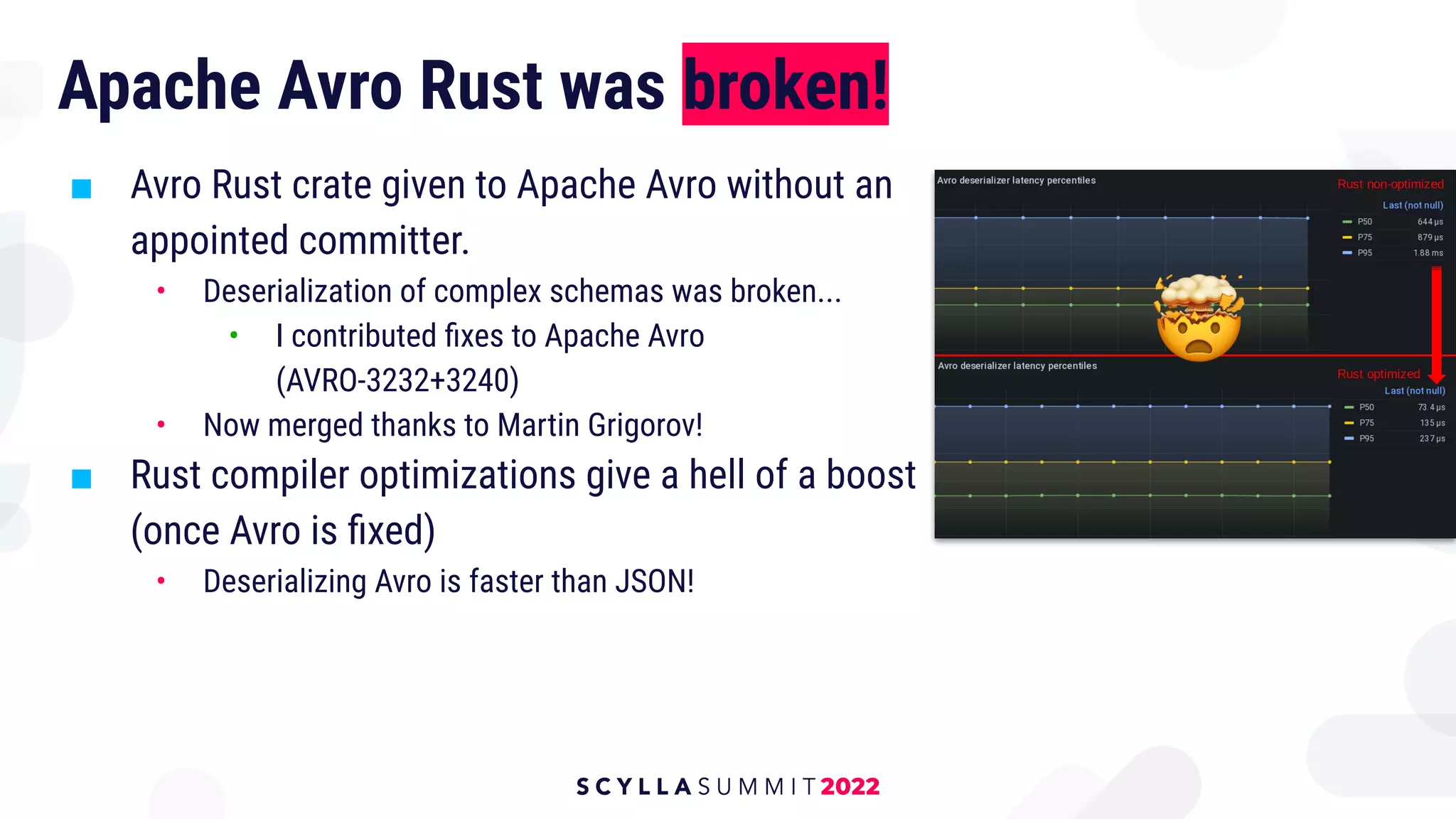

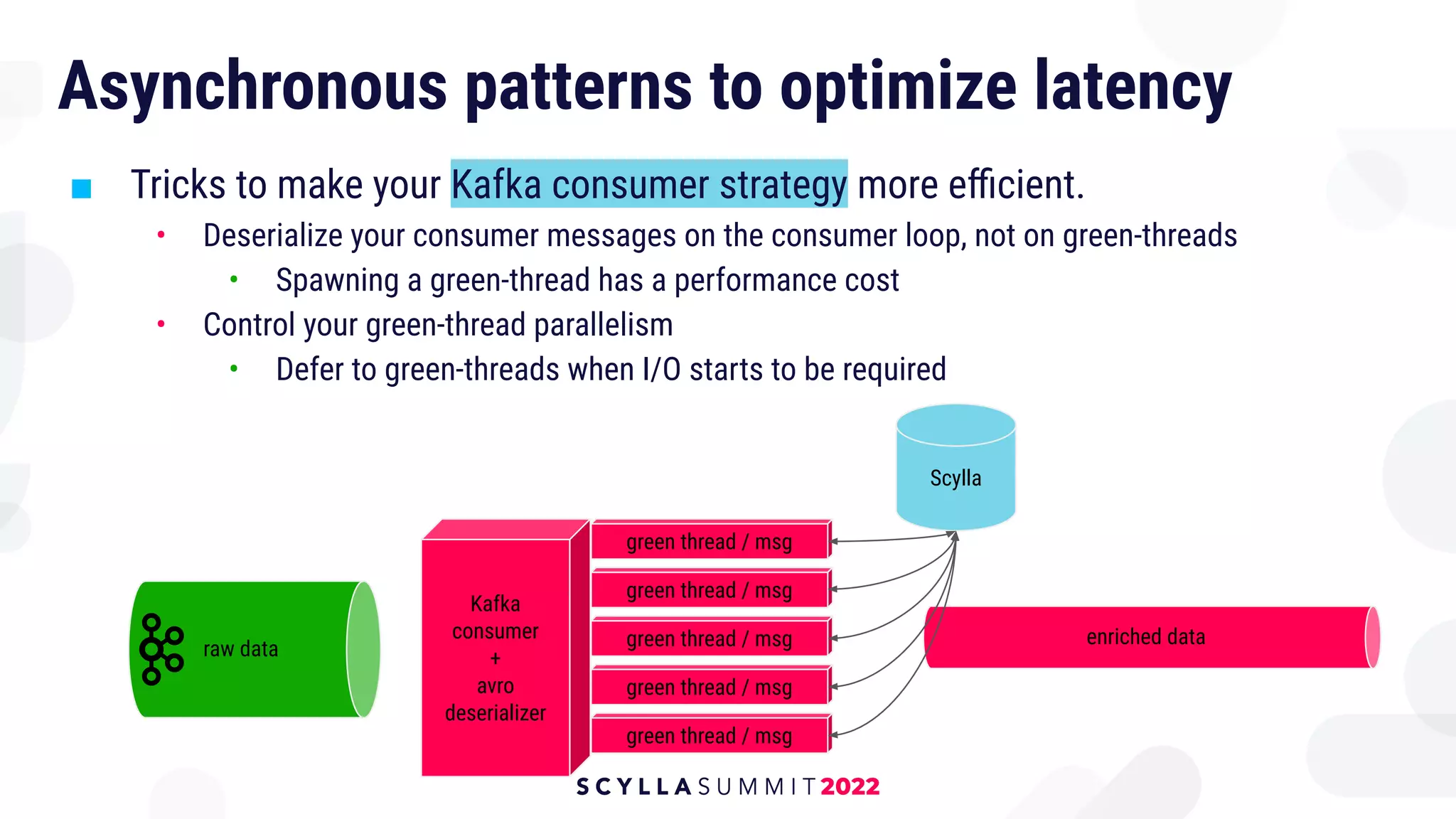

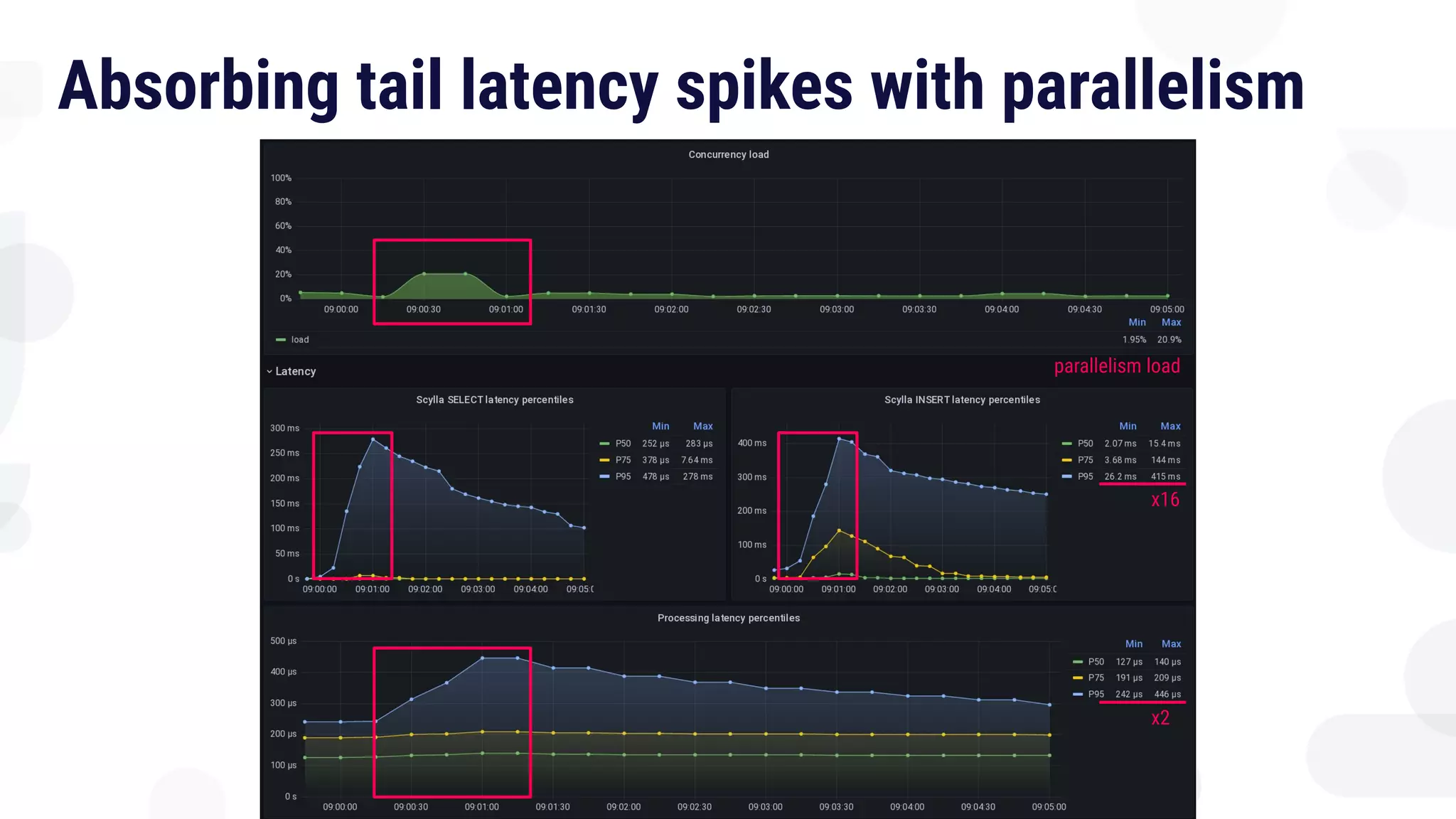

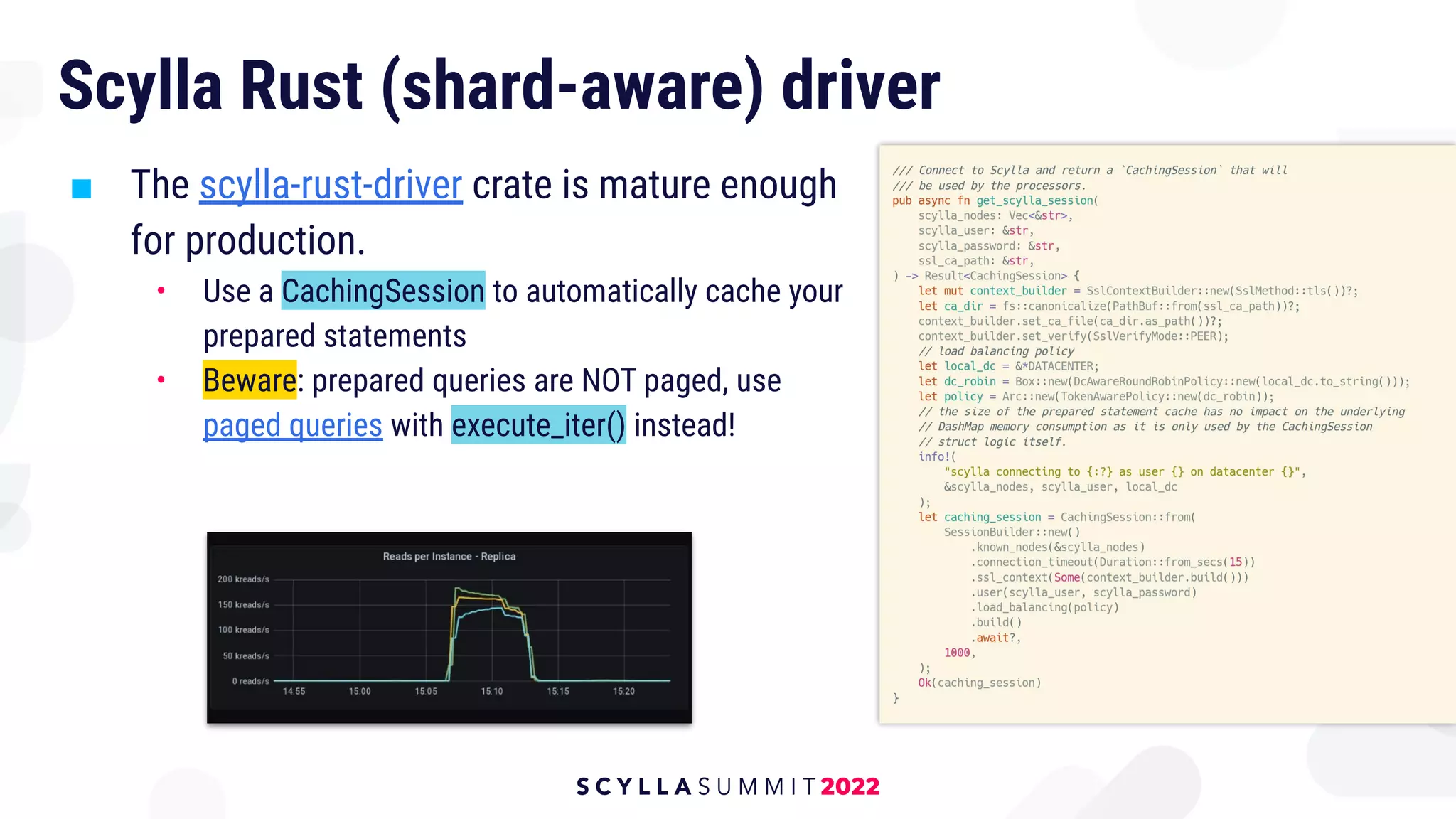

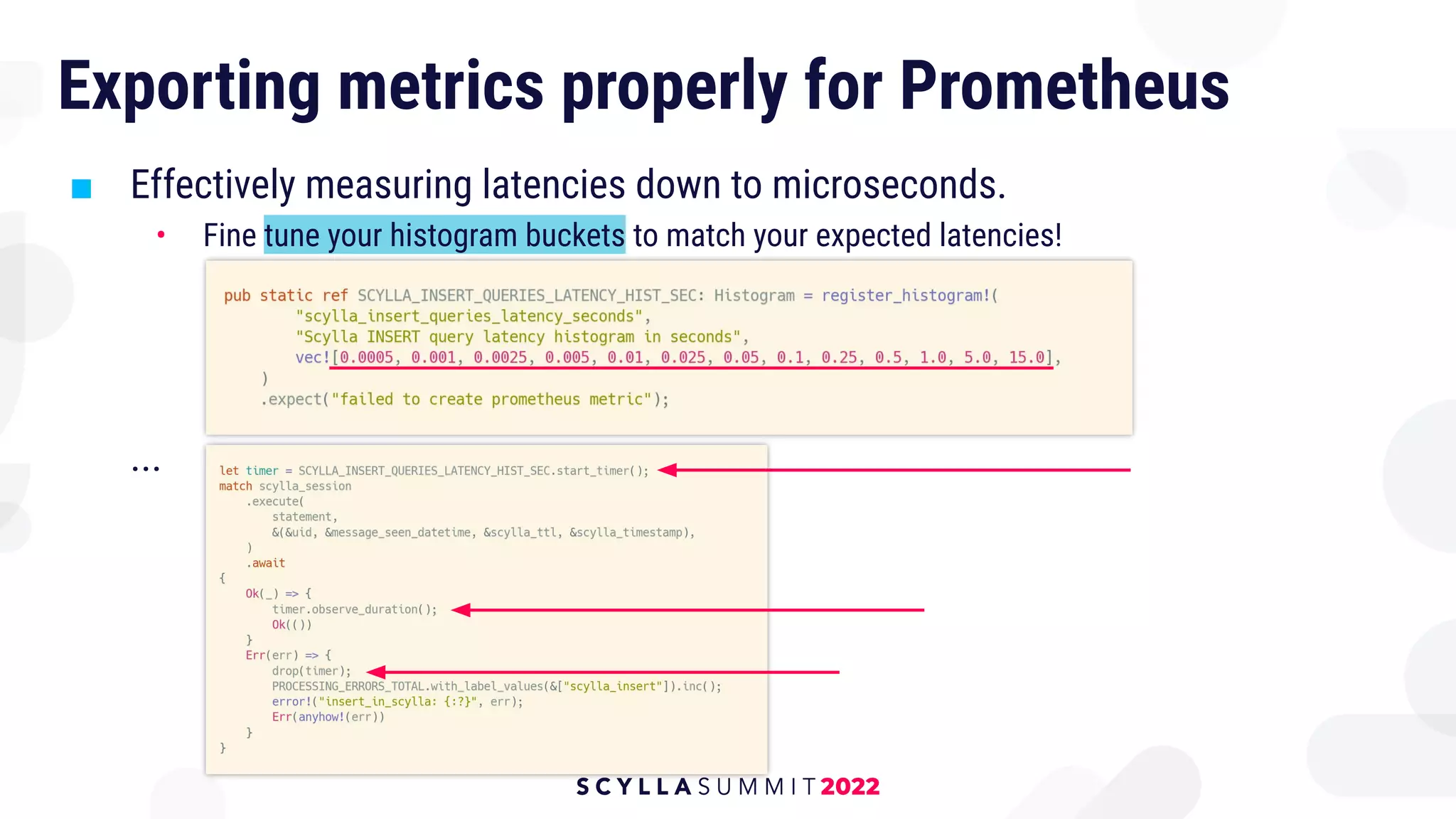

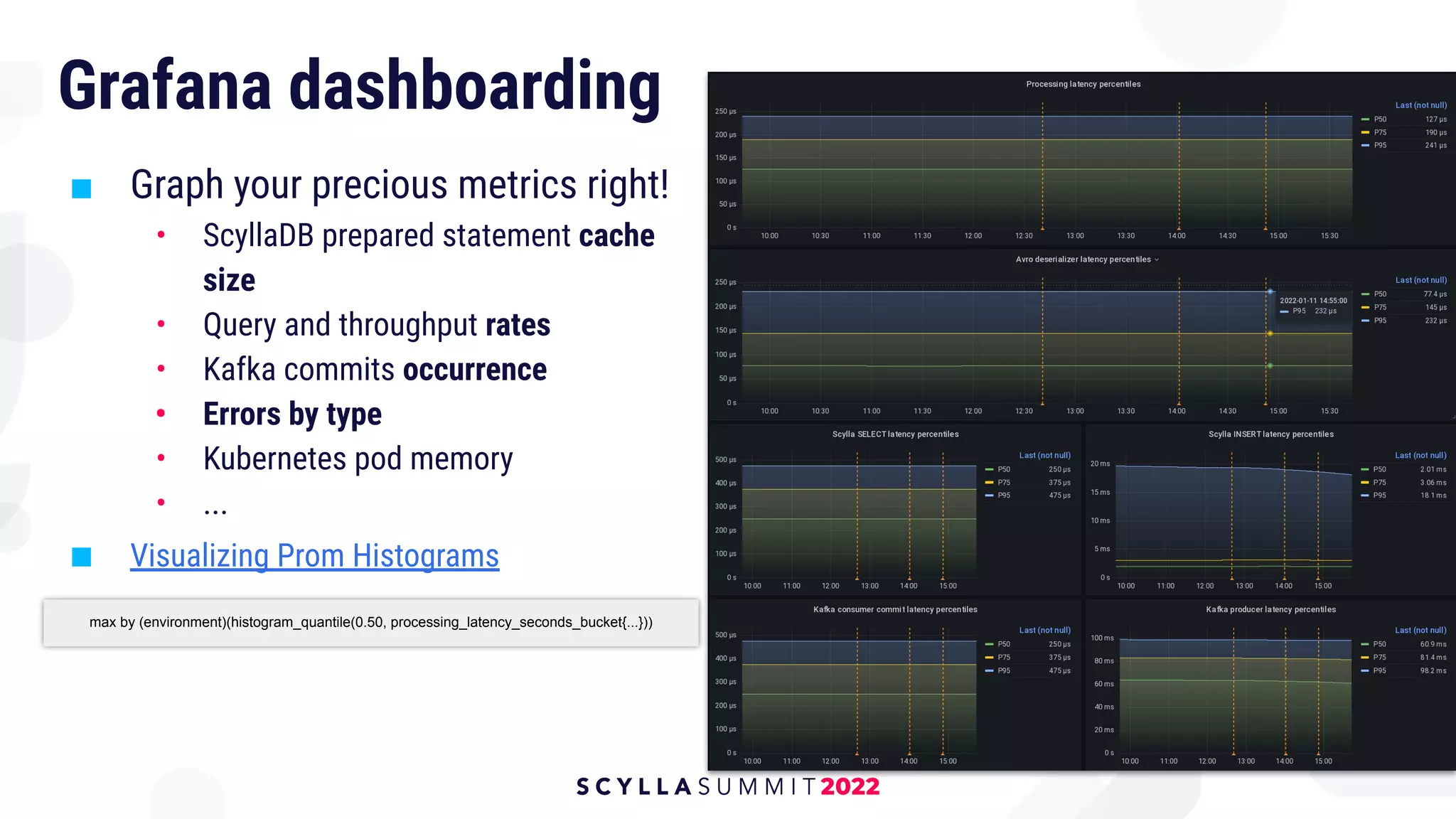

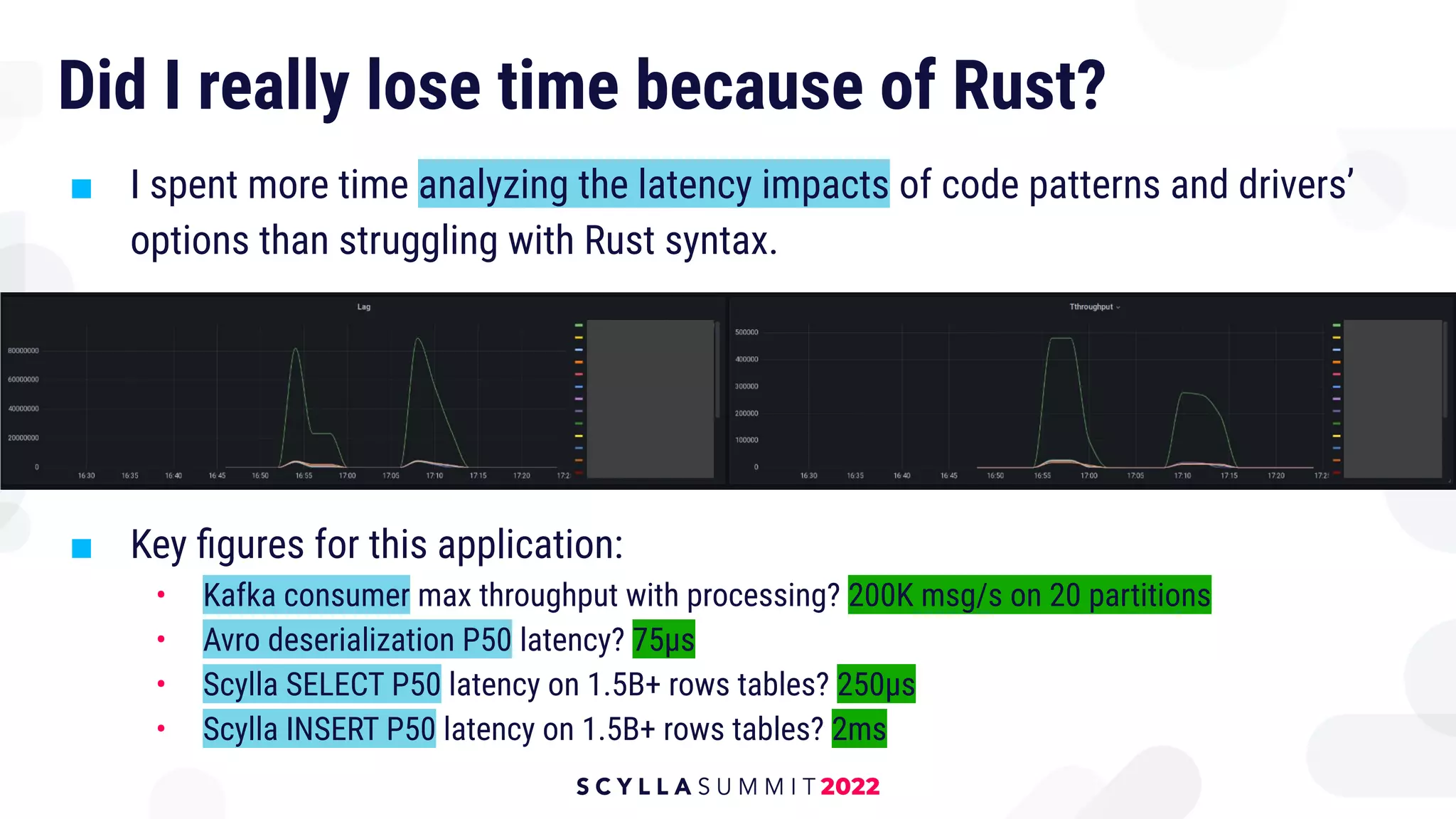

This document describes how Numberly moved a scalable Kafka and ScyllaDB pipeline from Python to Rust, covering the advantages and challenges of Rust in terms of efficiency, reliability, and performance. Alexys Jacob highlights the Rust paradigms that support reliable software development and shares practical insights on optimizing latency and on handling data serialization with Confluent Kafka and Avro. Rewriting the Python applications as a single Rust application significantly reduced the number of application pods and improved latency metrics.
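
To make the Confluent Kafka and Avro serialization point concrete, here is a minimal sketch of the framing step a consumer performs before Avro decoding. It assumes messages use the standard Confluent wire format: one magic byte (`0`), a 4-byte big-endian schema ID, then the Avro-encoded body. The names `split_confluent_frame` and `FramingError` are hypothetical and are not taken from the presentation; fetching the schema from the registry and decoding the body are left out.

```rust
/// Errors that can occur while reading the Confluent framing header.
/// (Hypothetical type, introduced for this illustration only.)
#[derive(Debug, PartialEq)]
pub enum FramingError {
    /// The payload is shorter than the 5-byte header.
    TooShort,
    /// The first byte is not the expected magic byte 0.
    BadMagic(u8),
}

/// Splits a Kafka message payload into (schema ID, Avro-encoded body).
/// Assumes the Confluent wire format: [0x00][schema ID: u32, big-endian][body].
pub fn split_confluent_frame(payload: &[u8]) -> Result<(u32, &[u8]), FramingError> {
    // The header is 1 magic byte plus 4 schema ID bytes.
    if payload.len() < 5 {
        return Err(FramingError::TooShort);
    }
    // Reject anything that does not start with the magic byte.
    if payload[0] != 0 {
        return Err(FramingError::BadMagic(payload[0]));
    }
    // The schema ID is stored in network (big-endian) byte order.
    let schema_id = u32::from_be_bytes([payload[1], payload[2], payload[3], payload[4]]);
    // Everything after the header is the Avro body, borrowed without copying.
    Ok((schema_id, &payload[5..]))
}
```

A consumer would call `split_confluent_frame` on each message payload, look up the writer schema for the returned ID (typically cached after the first registry lookup), and pass the remaining slice to an Avro decoder. Returning a borrowed slice keeps this step free of allocations.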