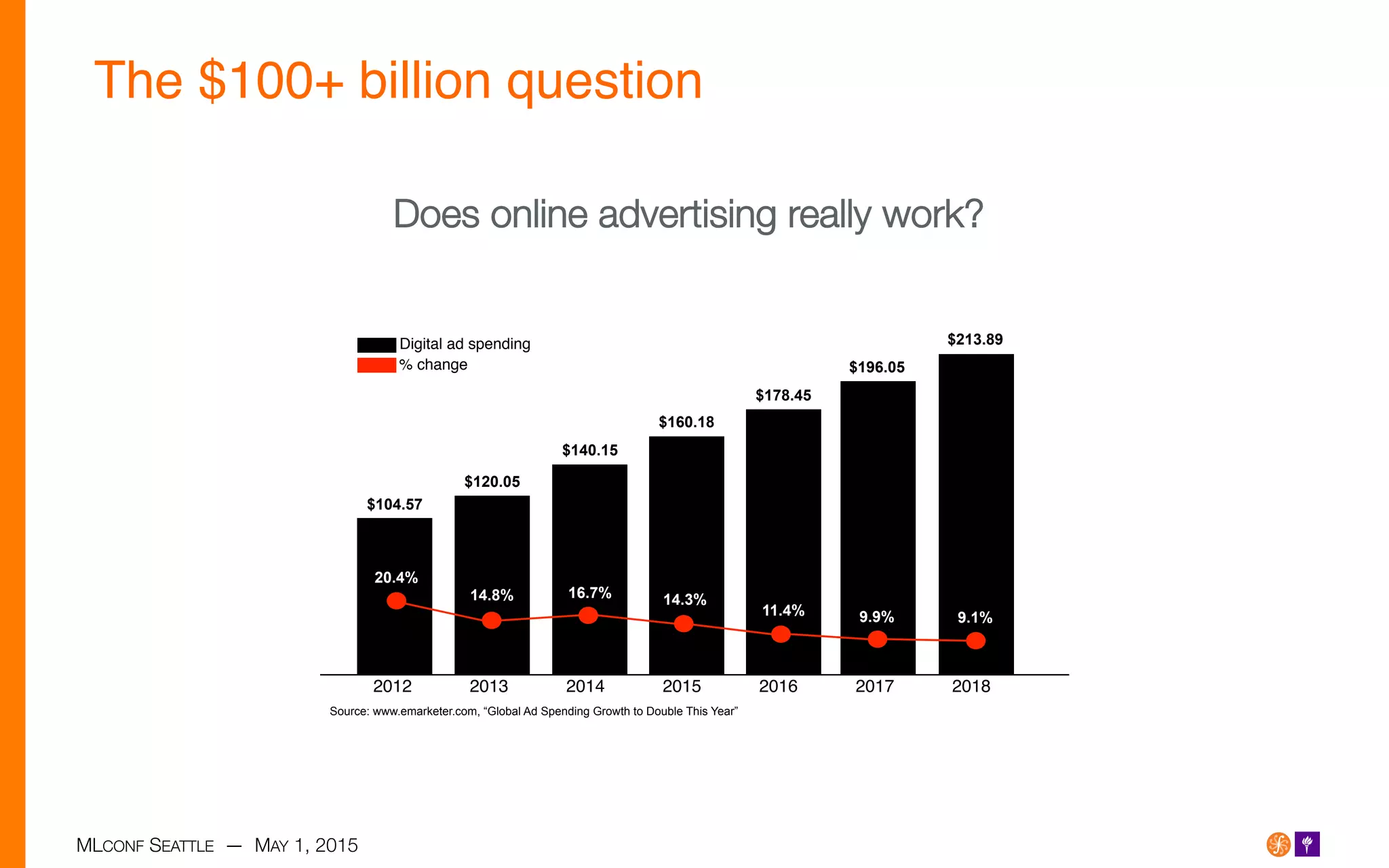

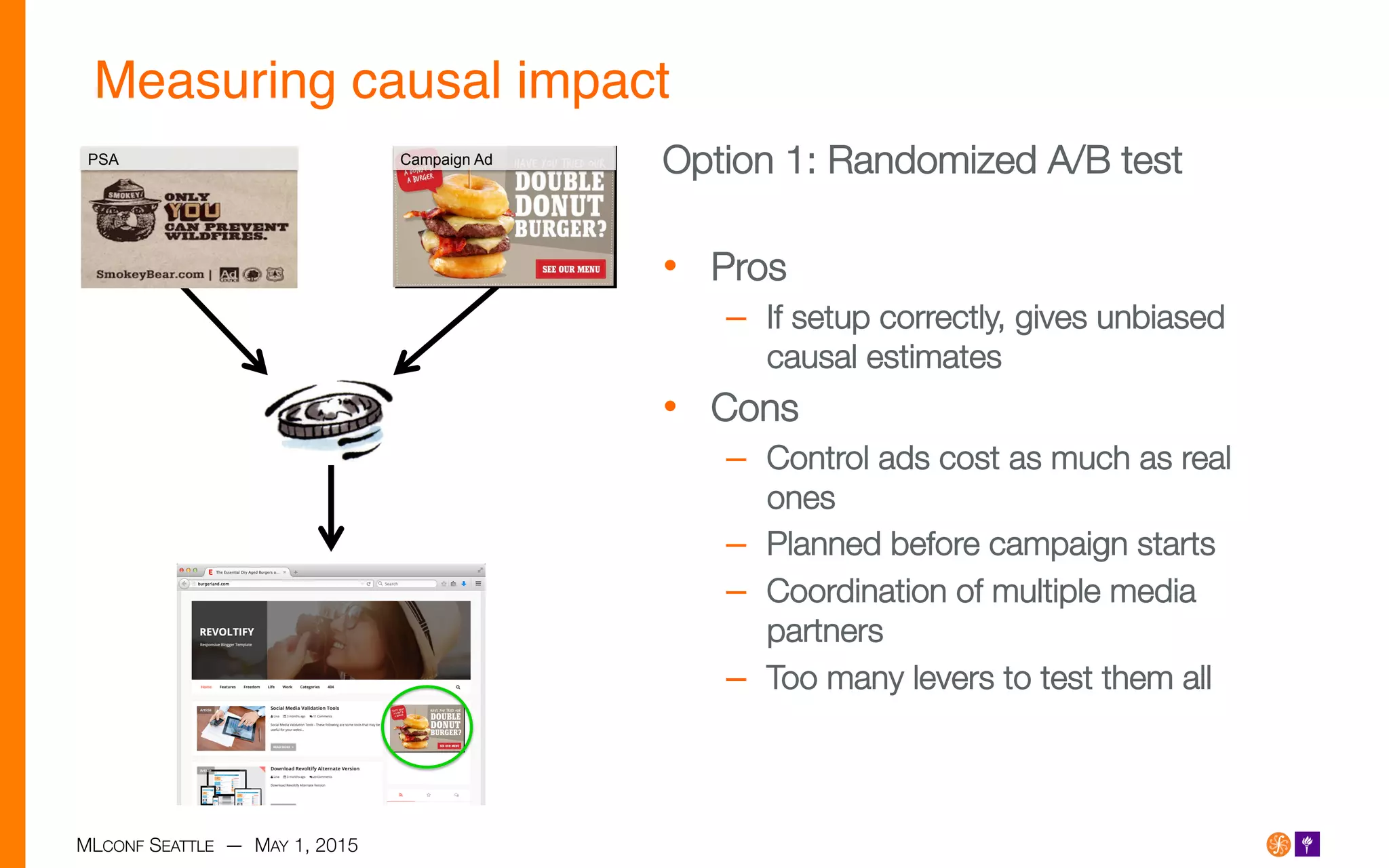

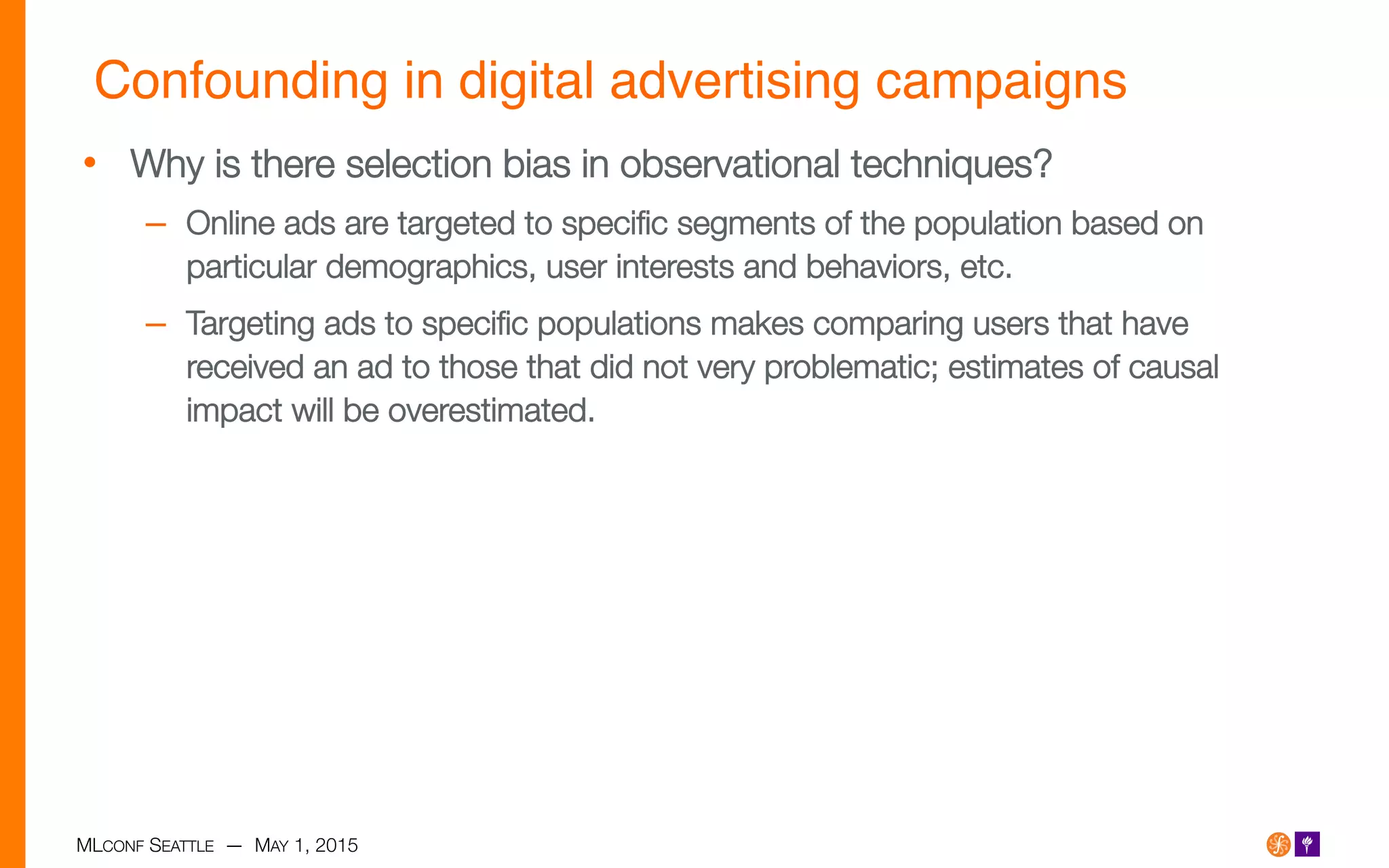

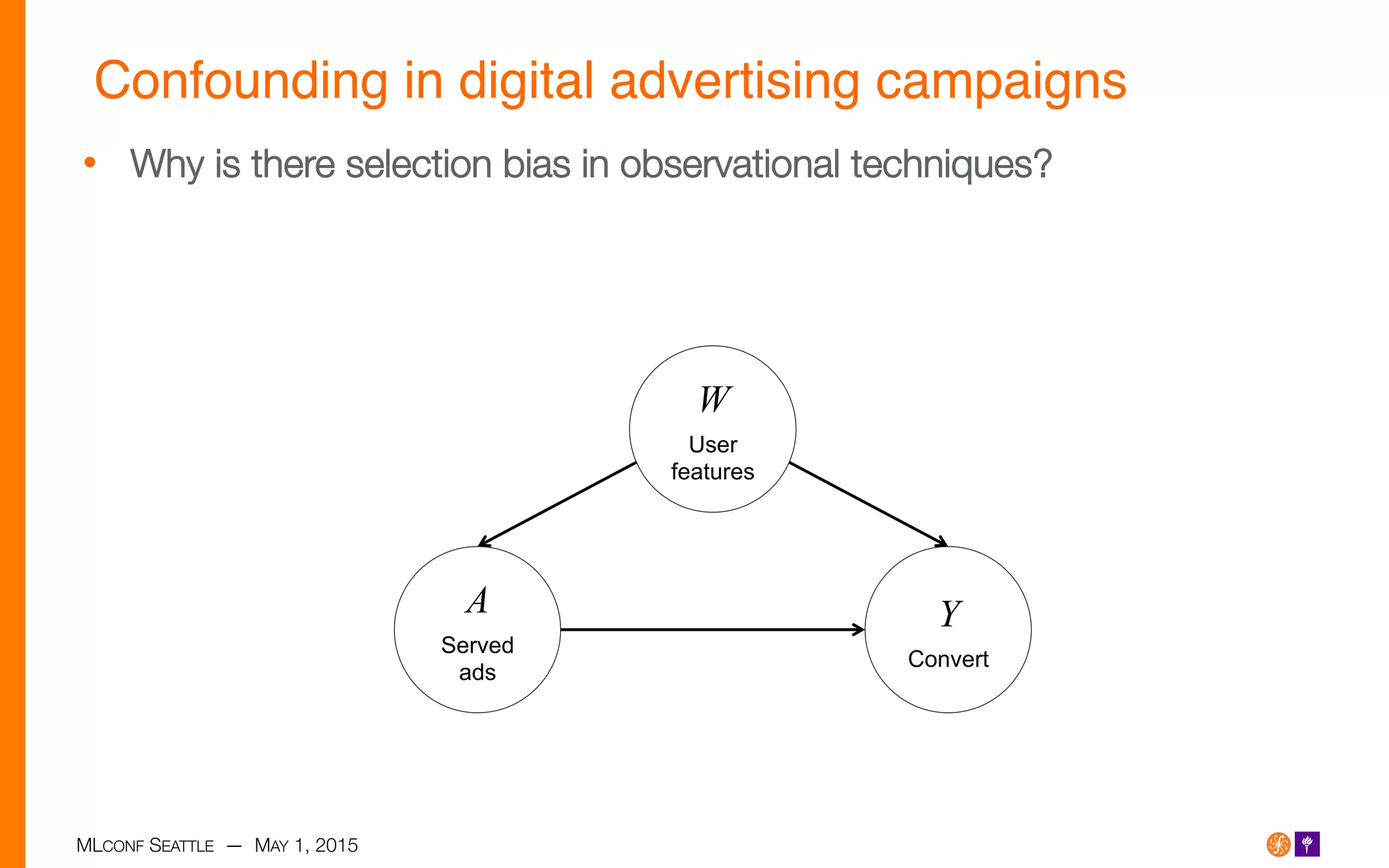

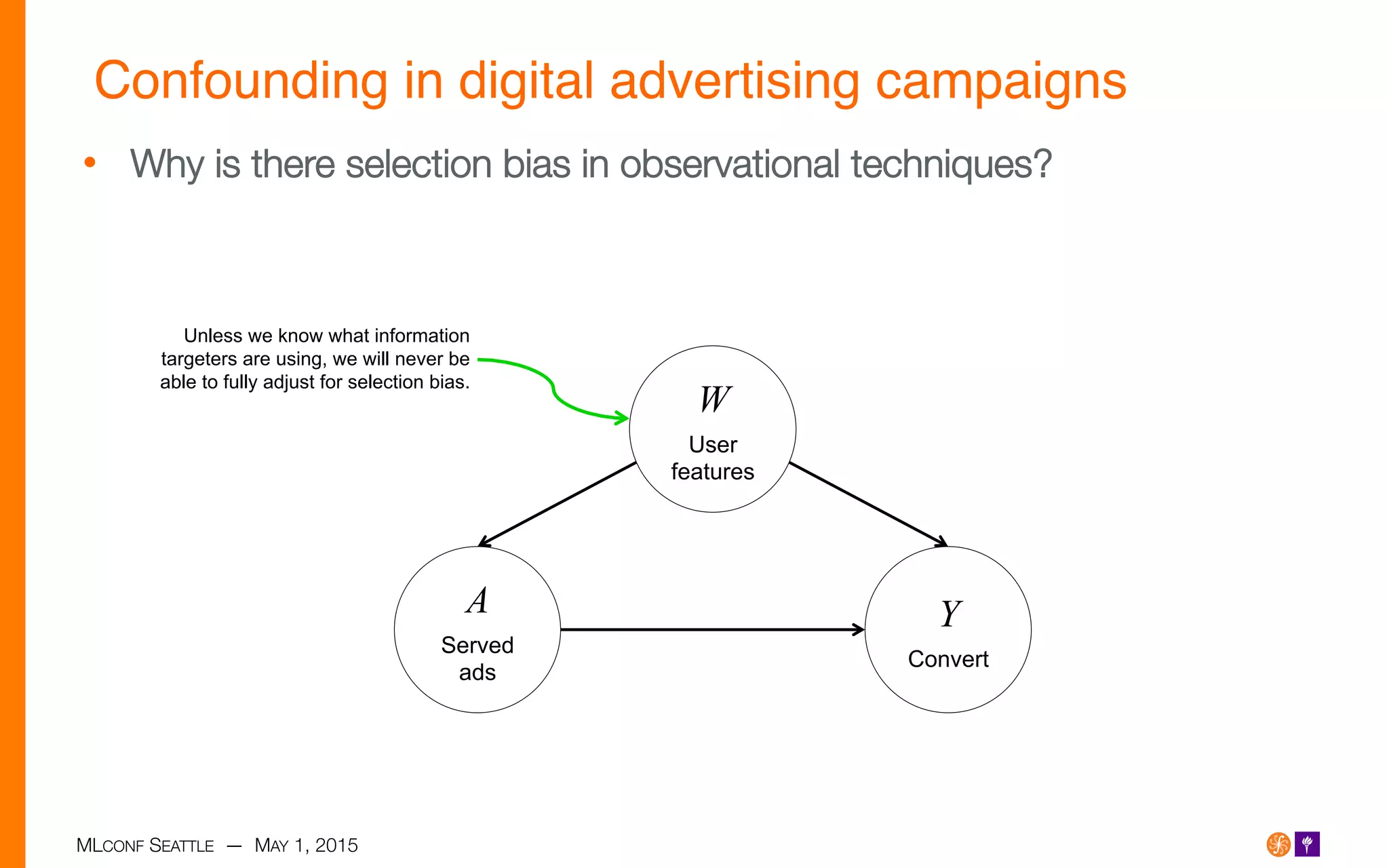

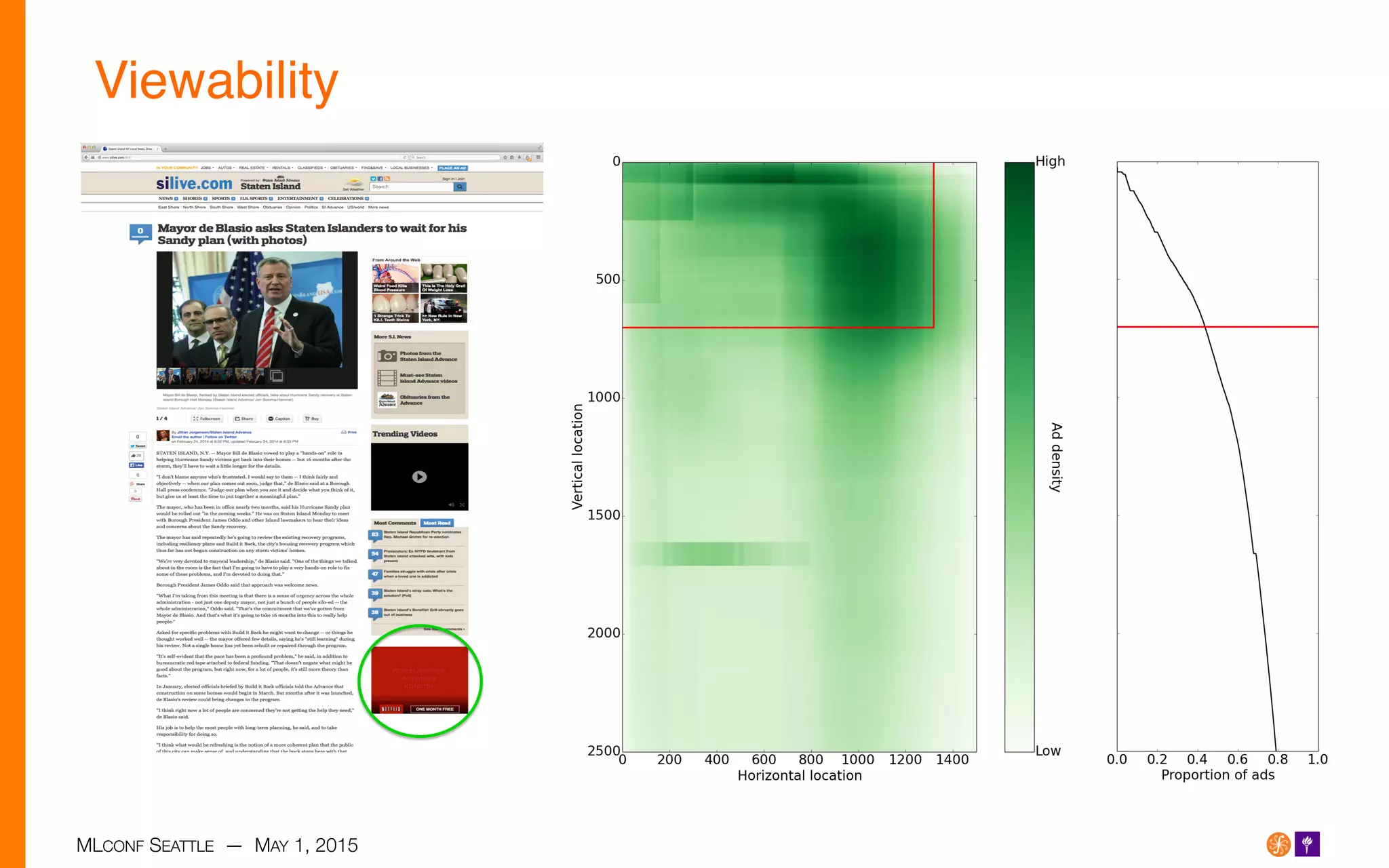

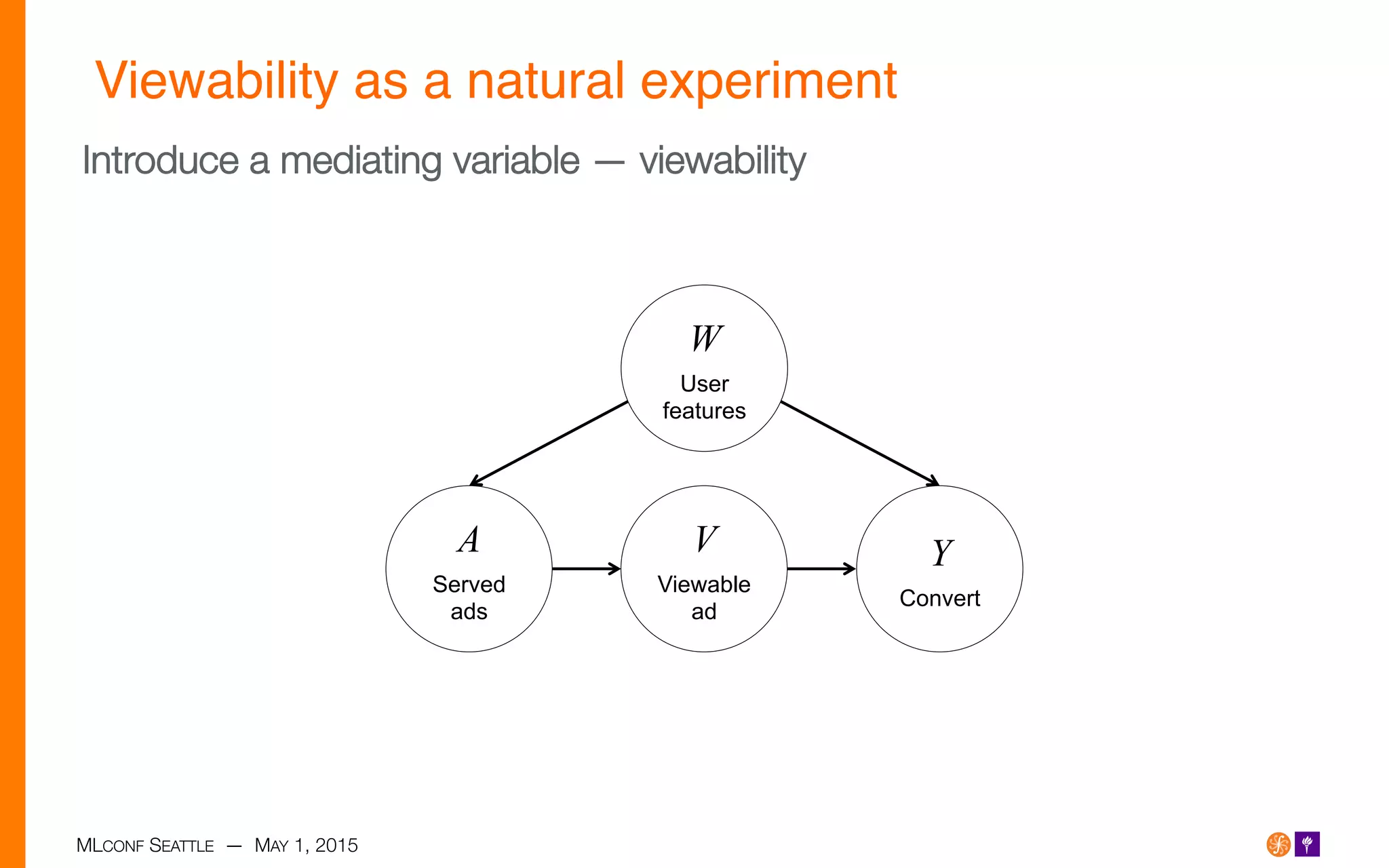

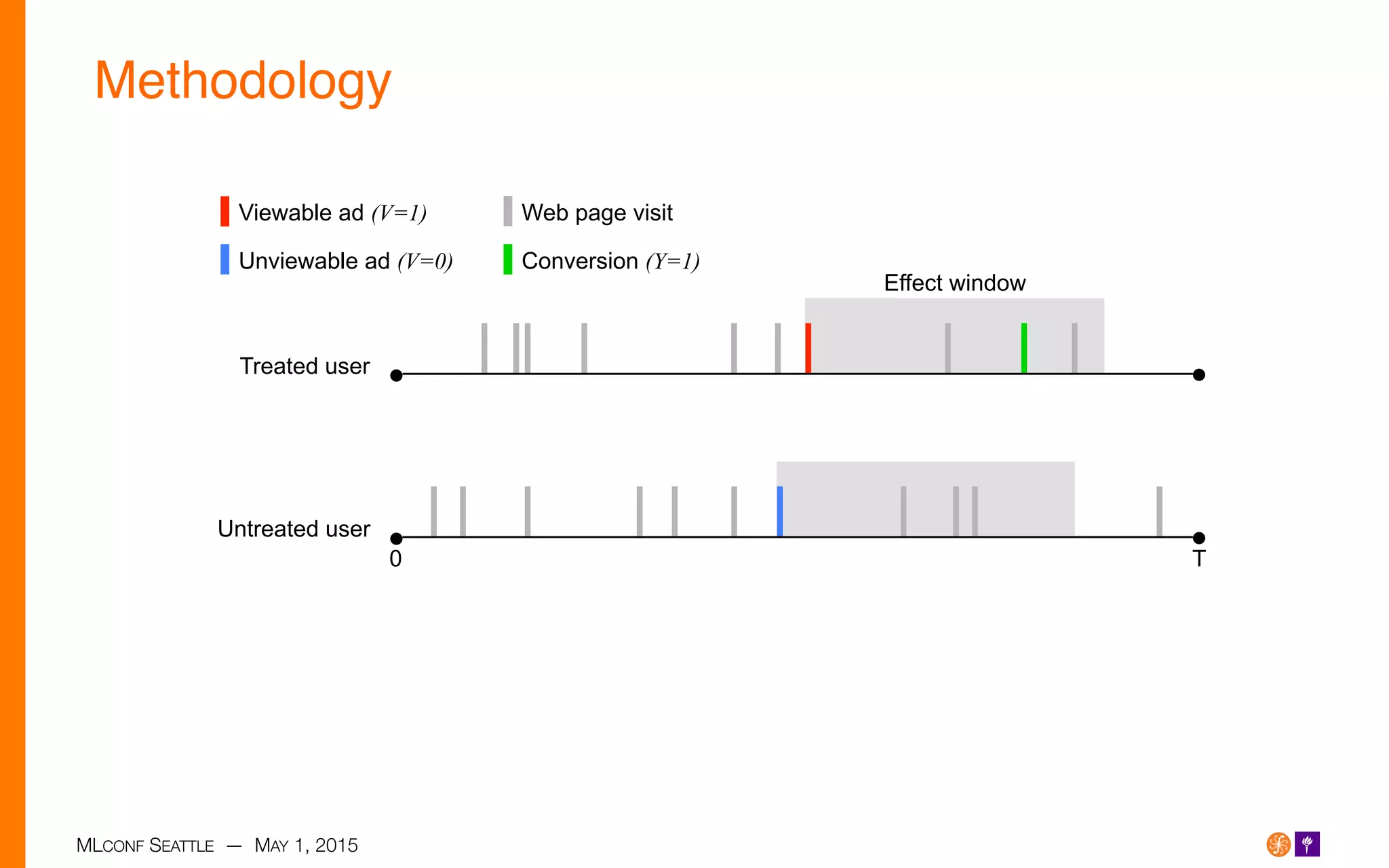

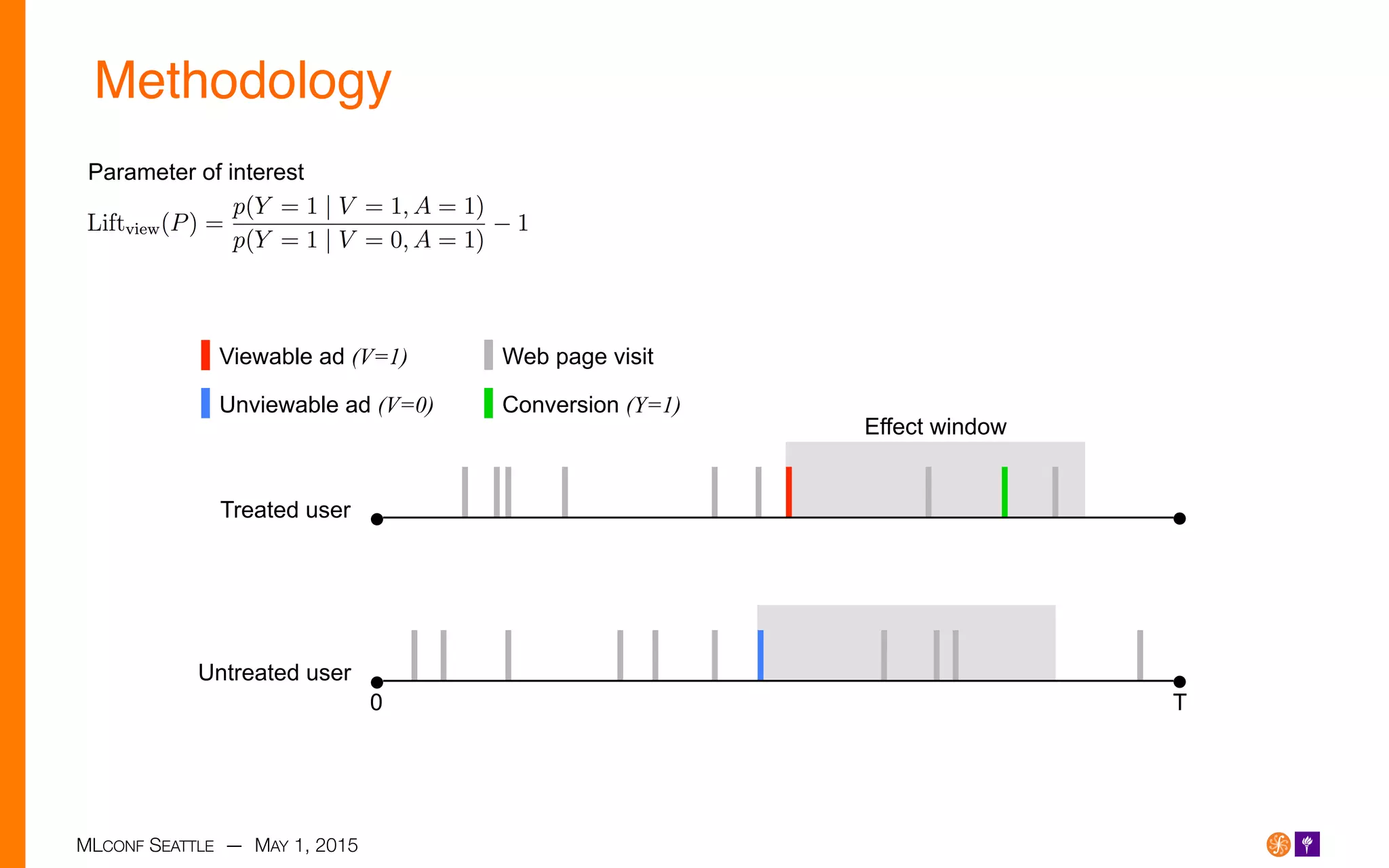

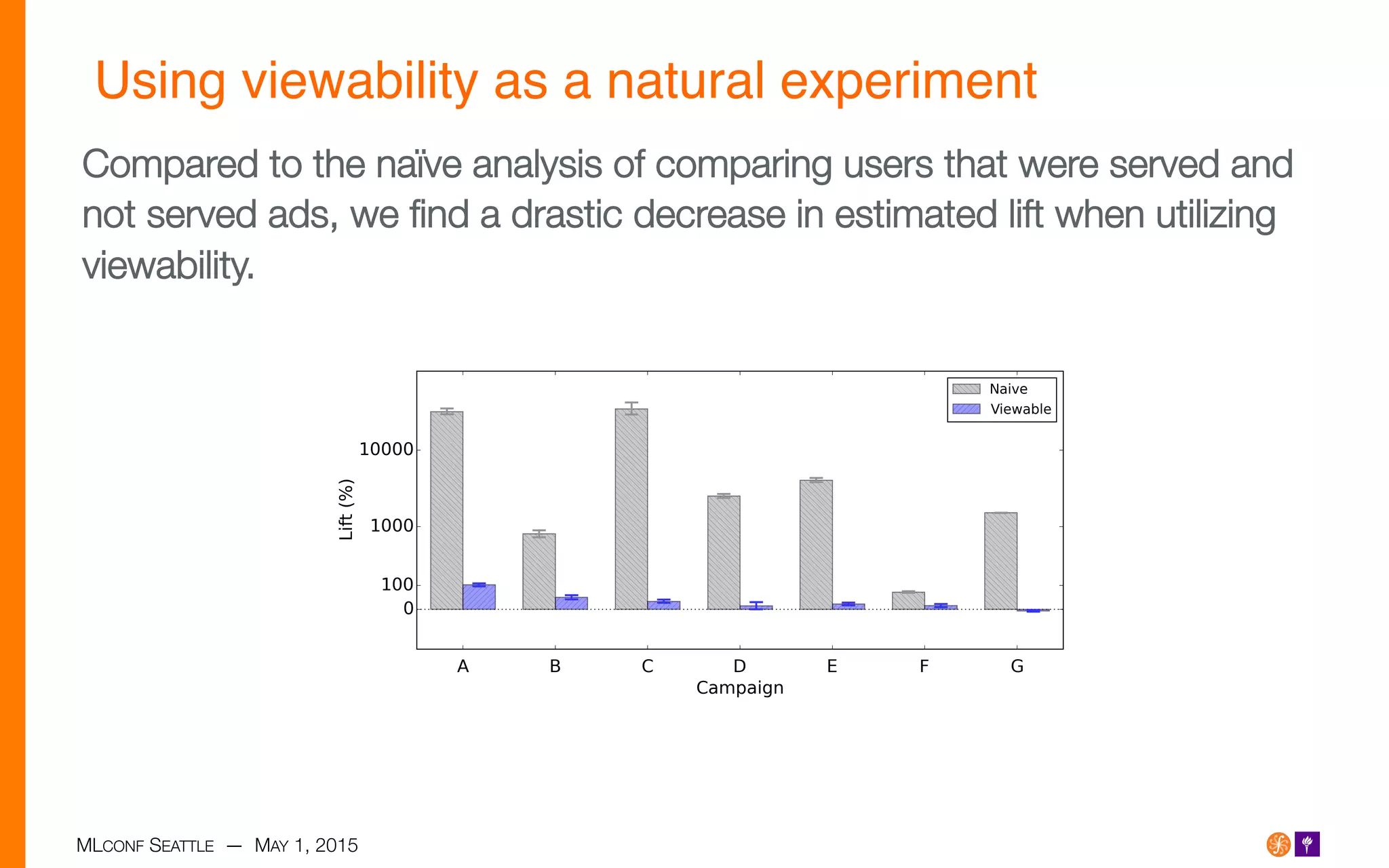

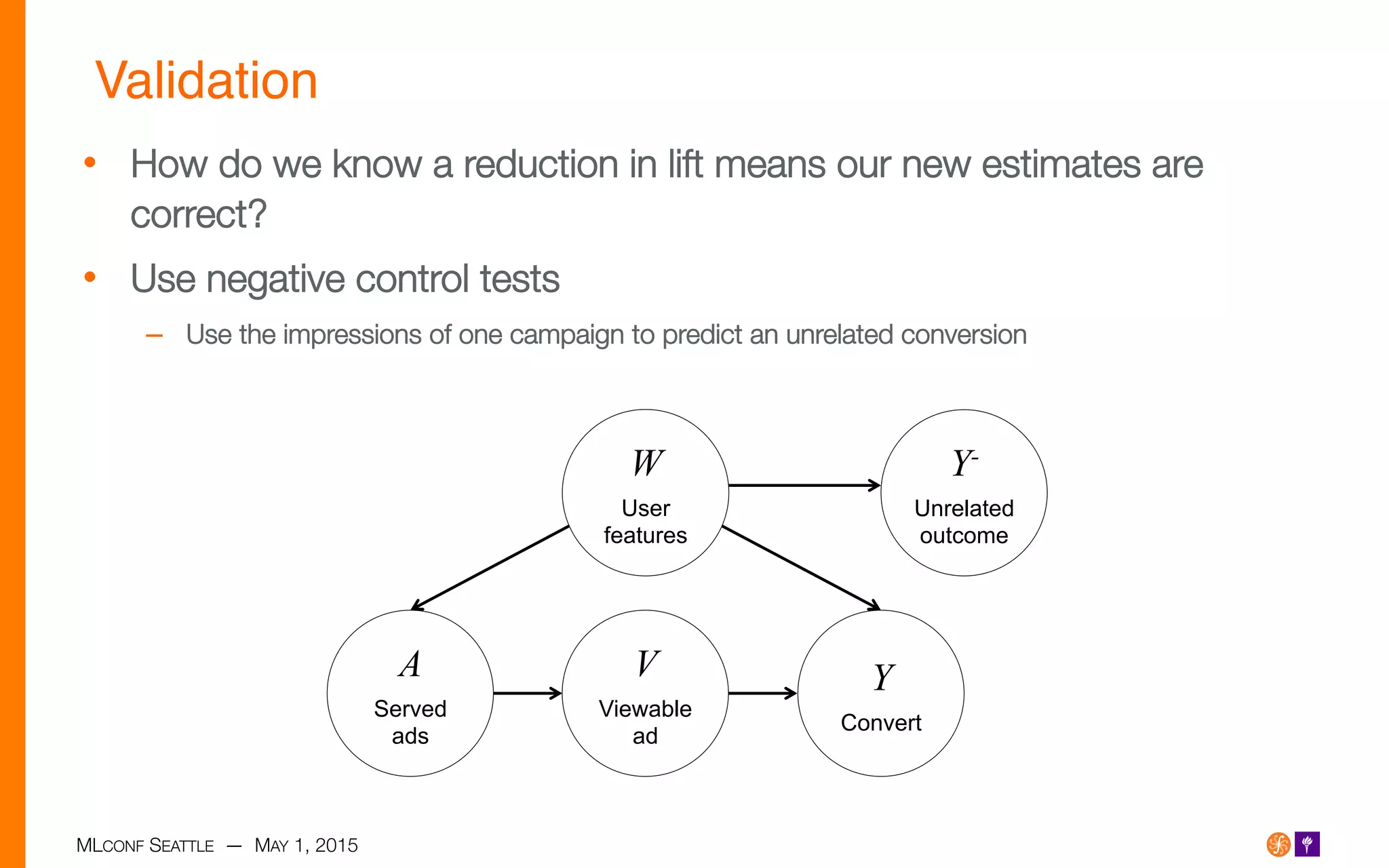

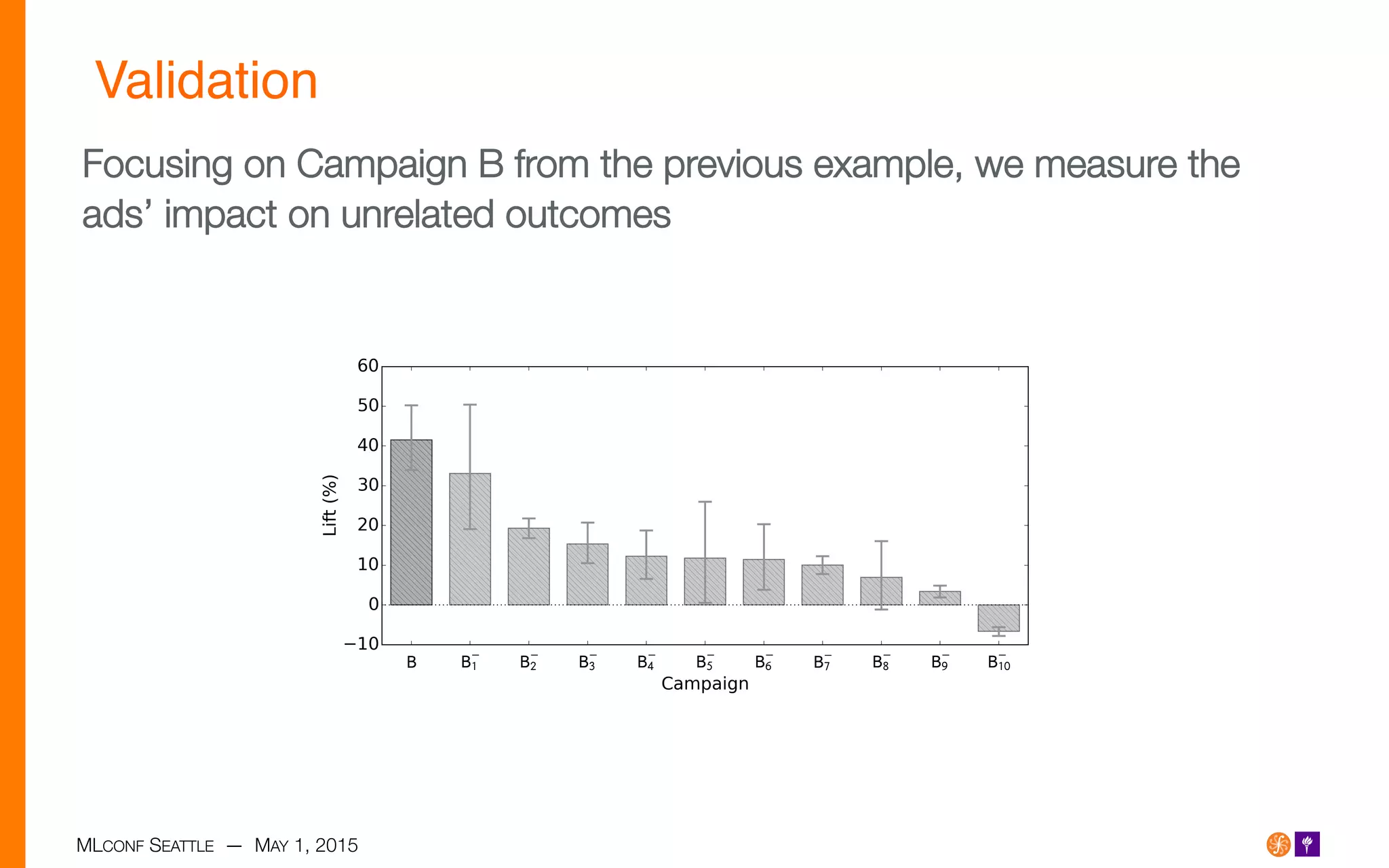

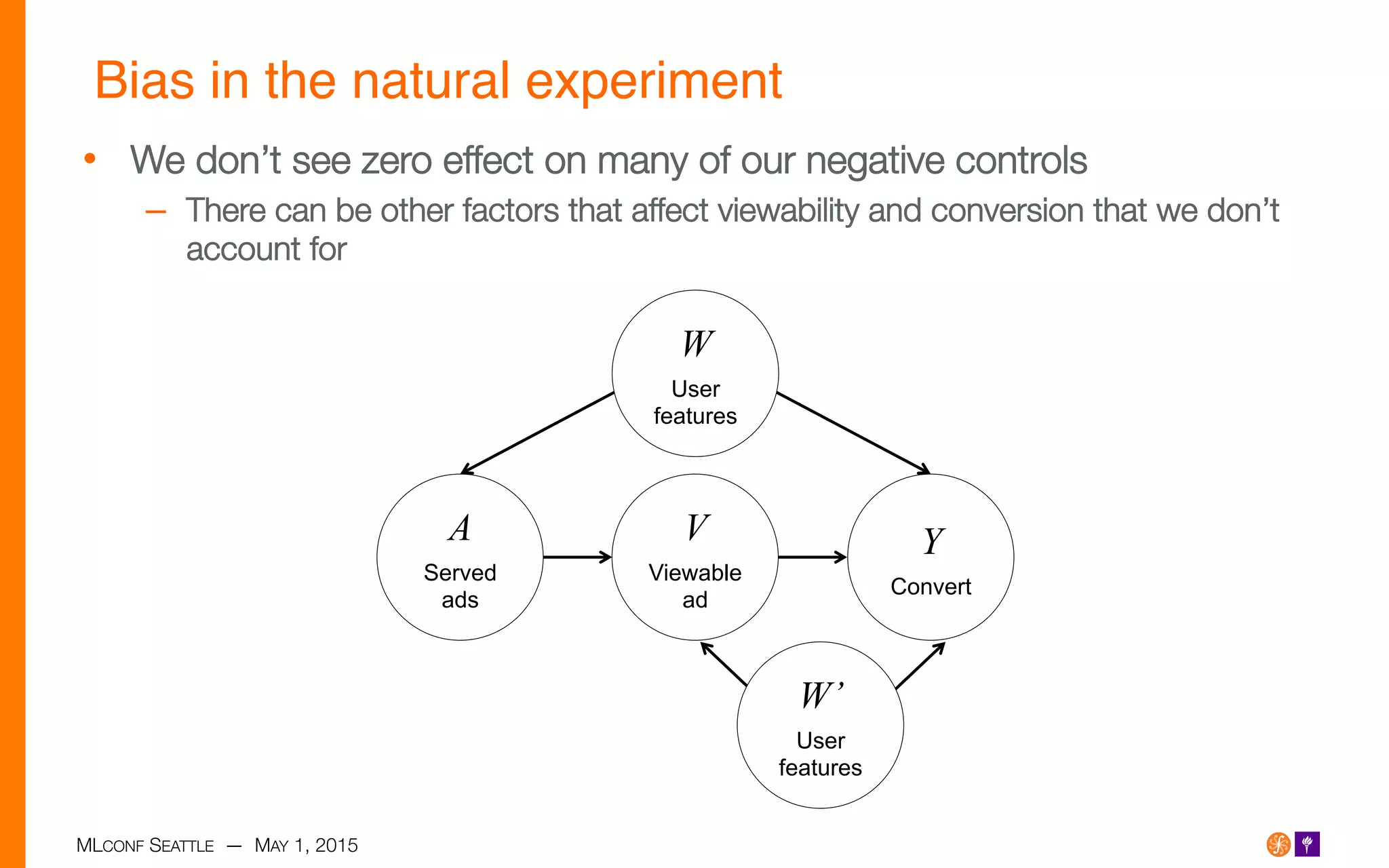

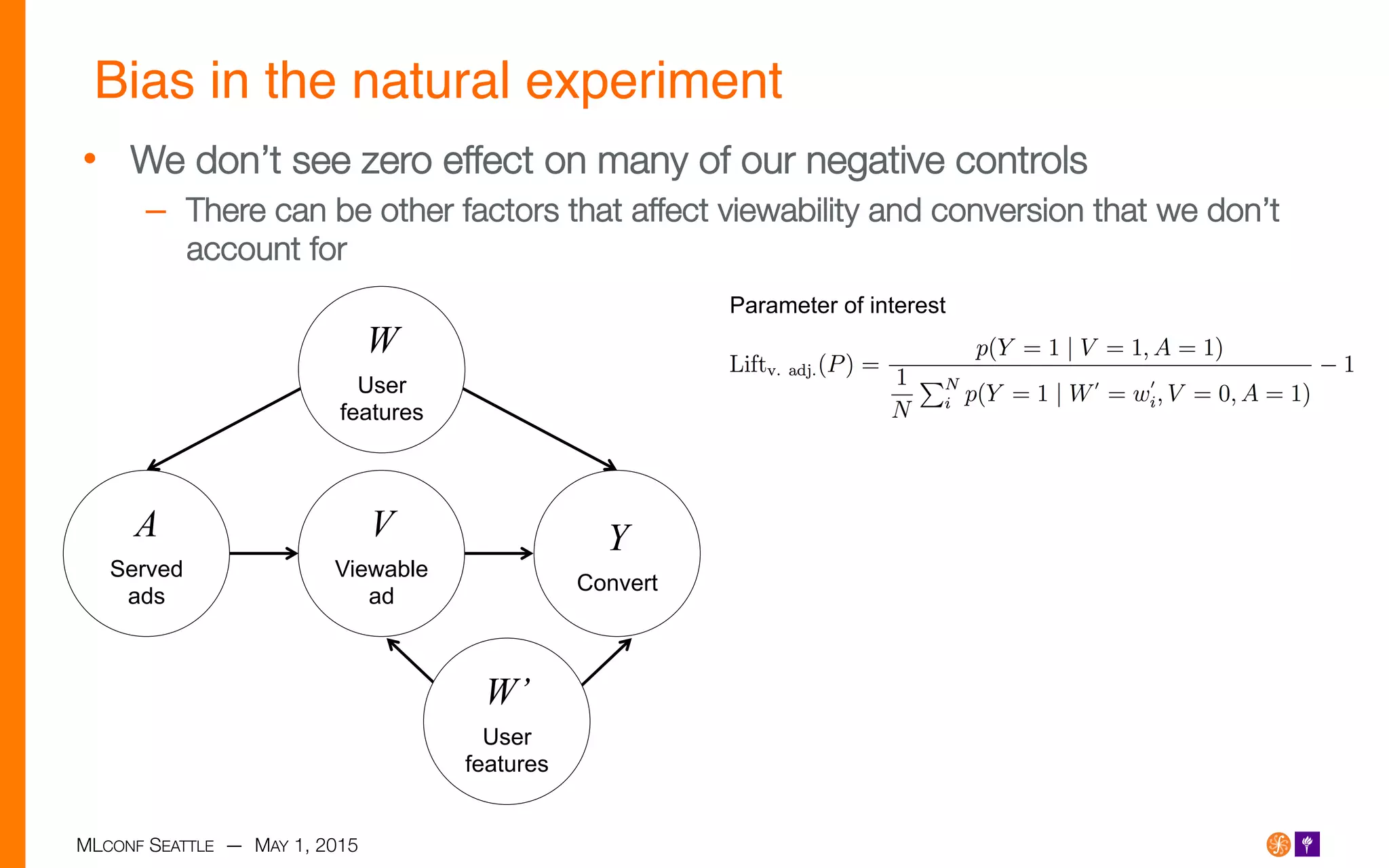

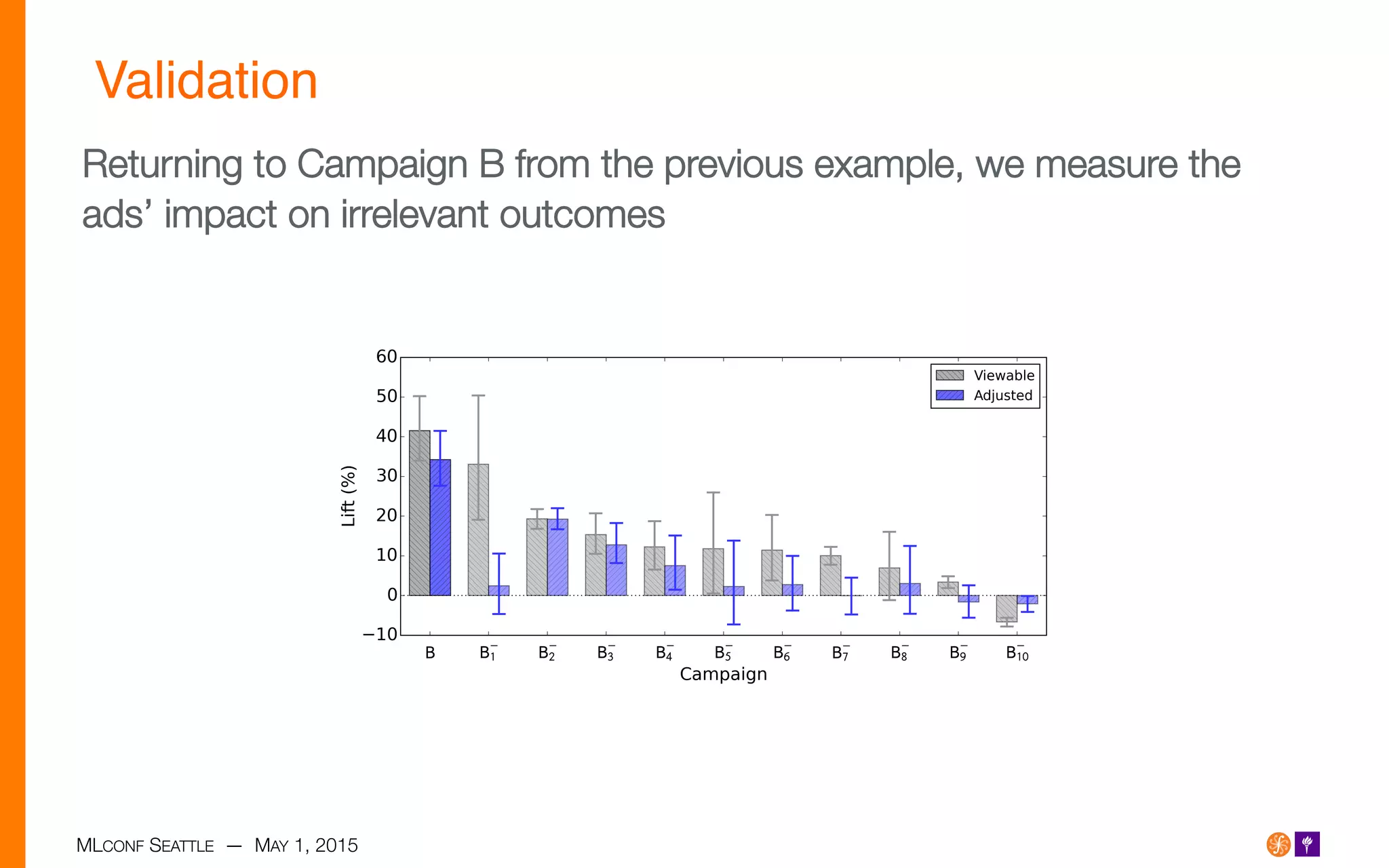

The document presents research on measuring the causal impact of online display advertising. It focuses on selection bias in observational studies and on the use of viewability as a mediating variable. Traditional observational analysis tends to overestimate ad effectiveness because campaigns target specific demographics and behaviors, so the users who are served ads differ from those who are not even before any ad is shown. By treating viewability as a natural experiment, the research aims to reduce this bias, and it validates the results with negative controls across various advertising campaigns.
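
The contrast between the two comparisons can be illustrated with a small sketch. This is not the researchers' implementation: the `User` record, the function names, and every count in the toy data are hypothetical, chosen only to show how a naive served-versus-unserved comparison can differ from a viewable-versus-non-viewable comparison among served users, and how a negative-control outcome (one the ad cannot plausibly affect) can flag remaining bias.

```python
from dataclasses import dataclass


@dataclass
class User:
    served: bool     # an ad impression was delivered to this user
    viewable: bool   # the impression actually rendered in view
    converted: bool  # outcome the campaign is trying to move
    control: bool    # negative-control outcome the ad should not affect


def make_group(n, served, viewable, n_converted, n_control):
    """Build n users; the first n_converted convert, the first n_control hit the control outcome."""
    return [
        User(served, viewable, i < n_converted, i < n_control)
        for i in range(n)
    ]


def rate(users, outcome):
    """Share of users for whom the named boolean outcome is true."""
    return sum(getattr(u, outcome) for u in users) / len(users)


def naive_lift(users, outcome="converted"):
    """Served vs. unserved: exposed to selection bias from ad targeting."""
    served = [u for u in users if u.served]
    unserved = [u for u in users if not u.served]
    return rate(served, outcome) - rate(unserved, outcome)


def viewability_lift(users, outcome="converted"):
    """Viewable vs. non-viewable, among served users only."""
    served = [u for u in users if u.served]
    seen = [u for u in served if u.viewable]
    unseen = [u for u in served if not u.viewable]
    return rate(seen, outcome) - rate(unseen, outcome)


# Hypothetical toy data (illustrative only, not from the research):
# targeted (served) users have higher baseline rates on BOTH outcomes.
users = (
    make_group(100, served=False, viewable=False, n_converted=2, n_control=10)
    + make_group(50, served=True, viewable=False, n_converted=5, n_control=10)
    + make_group(50, served=True, viewable=True, n_converted=6, n_control=10)
)

print(f"naive lift (conversion):       {naive_lift(users):.2f}")
print(f"viewability lift (conversion): {viewability_lift(users):.2f}")
print(f"naive lift (negative control): {naive_lift(users, 'control'):.2f}")
print(f"viewability lift (neg. ctrl):  {viewability_lift(users, 'control'):.2f}")
```

In this toy data the naive comparison reports a sizeable lift even on the negative-control outcome, which signals bias rather than ad effect, while the viewability comparison shows no lift on the negative control and a much smaller lift on conversions. Real campaigns would also need uncertainty estimates, which this sketch omits.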